What is an Optimistic Rollup?

Learn what an optimistic rollup is, how fraud proofs and challenge periods work, and why offchain execution can still inherit L1 security.

Introduction

Optimistic rollups are a way to scale blockchains by moving most transaction execution off the base chain while still using the base chain as the final judge of what counts as valid. That design matters because blockchains become expensive precisely where they are strongest: every node repeats the same computation, stores the same data, and checks the same result. If you want lower fees and higher throughput without giving up too much security, you need a way to stop doing all of that work everywhere.

The puzzle is that this sounds contradictory. If transactions are executed somewhere else, why should the base chain trust the result? And if the base chain still has to re-check everything, where does the scaling come from? An optimistic rollup exists to split those responsibilities carefully: do computation away from the base chain, but keep enough data and enough dispute machinery on the base chain that bad execution can be caught and reversed.

That is why the word optimistic is doing real work here. The system does not prove every batch correct before accepting it. It assumes batches are correct unless challenged, then falls back to a dispute process called a fraud proof when someone claims a batch is wrong. This sounds weaker than proving correctness up front, and in one sense it is. But it can be much cheaper, and that cost difference is the entire reason optimistic rollups exist.

How do optimistic rollups move execution offchain while preserving L1 judgment?

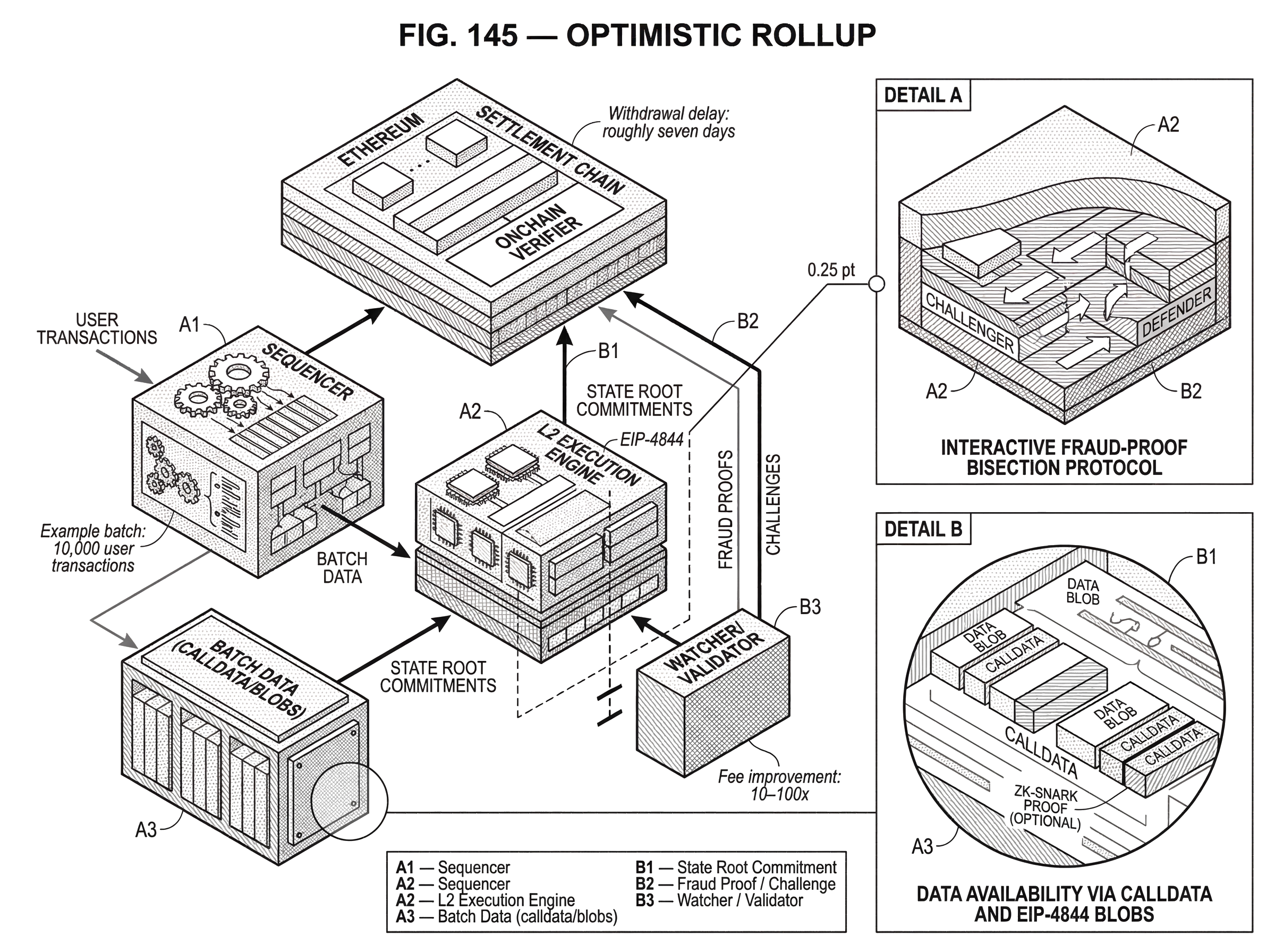

A normal monolithic blockchain does three expensive things in one place. It orders transactions, makes transaction data available, and executes the state transition that those transactions imply. Optimistic rollups try to unbundle this. They usually perform ordering and execution in an L2 environment, then post compressed transaction data and state commitments back to a settlement chain such as Ethereum.

The important distinction is between recomputing every step and having the ability to recompute if needed. An optimistic rollup aims for the second. Ethereum does not execute every L2 transaction as it happens. Instead, it receives the data needed to reconstruct what happened and a claim about the resulting state. If that claim goes unchallenged during a fixed window, the system treats it as final.

This is the central mechanism. The rollup gets scale because the expensive path is no longer the default path. The expensive path becomes the exception: it is only used when someone disputes a batch. If most batches are honest, the system avoids paying L1 execution cost for normal operation while preserving an L1-based path for adjudicating fraud.
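The accept-unless-challenged rule can be sketched in a few lines. This is a minimal illustration, not any rollup's actual contract logic: the `Assertion` type, the `is_final` helper, and the one-week constant are assumptions chosen for the example.

```python
from dataclasses import dataclass

# Assumed window length for illustration; real systems set their own value.
CHALLENGE_WINDOW_SECONDS = 7 * 24 * 60 * 60


@dataclass
class Assertion:
    """A hypothetical state claim posted to L1."""
    state_root: str
    posted_at: int            # L1 timestamp (seconds) when the claim was posted
    challenged: bool = False  # set once someone opens a dispute


def is_final(assertion: Assertion, now: int) -> bool:
    """Cheap path: the claim is final once the window has elapsed unchallenged."""
    window_over = now >= assertion.posted_at + CHALLENGE_WINDOW_SECONDS
    return window_over and not assertion.challenged
```

Note that nothing in `is_final` executes a transaction. The base chain only tracks time and whether a dispute was opened, which is where the cost saving comes from.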

That makes an optimistic rollup less like “trust me, I computed this already” and more like “here is the data, here is my claimed result, and anyone can force a check if I am lying.” The security story comes from that “anyone can force a check” property, not from blind trust in the operator.

What data do optimistic rollups publish on L1 and why is it important?

| Data option | Onchain footprint | Cost | Challenger access | Security impact |
|---|---|---|---|---|
| Calldata | Full transaction data on L1 | Higher per-byte cost | Immediate onchain access | Strong L1-backed availability |
| Blobs (EIP-4844) | Versioned blob commitments on L1 | Lower data fees | Blob sidecars provide data | Cheaper DA with commitments |
| No onchain data | Only hashes or offchain proofs | Lowest L1 cost | Depends on external DA | Weaker L1 guarantees |

An optimistic rollup does not usually post all of its execution steps to the base chain. What it posts is the information needed to anchor the rollup to L1 and to make disputes possible. In Ethereum-based rollups, this transaction data may be posted as calldata or, increasingly, in blobs introduced by EIP-4844.

This detail is easy to underestimate. Data availability is not a side concern; it is part of the security model. If challengers cannot access the rollup’s transaction data, they cannot reconstruct execution and cannot produce a fraud proof. In that case, a malicious operator could publish an invalid state commitment and escape correction simply because nobody has the evidence needed to challenge it.

So optimistic rollups rely on a sharp division of labor. They save money by not proving execution up front, but they do not save money by hiding the underlying transaction data from the settlement chain. The data has to be available somewhere challengers can reliably access. On Ethereum, that is why rollup batch data is published onchain, whether in calldata or blob space.

Blobs matter because they change the economics of this publication. EIP-4844 introduced a blob-carrying transaction format with a separate fee market for this kind of data, letting rollups publish large amounts of data more cheaply than permanent calldata. The EVM does not directly read blob contents during ordinary execution; instead, commitments to blobs are available onchain, while the blob data itself is propagated separately. For rollups, the consequence is simple: publishing batch data can become significantly cheaper, which reduces user fees without changing the basic optimistic-rollup security model.
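To make "commitments to blobs are available onchain" concrete, the sketch below follows the `kzg_to_versioned_hash` helper as described in the EIP-4844 specification: the onchain reference is a 32-byte versioned hash derived from the blob's 48-byte KZG commitment. The all-zero commitment in the usage line is a placeholder for illustration only, not a commitment to any real blob.

```python
import hashlib

# Version byte for KZG-based blob commitments, per EIP-4844.
VERSIONED_HASH_VERSION_KZG = b"\x01"


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Derive the 32-byte versioned hash that a blob transaction carries onchain."""
    if len(commitment) != 48:
        raise ValueError("a KZG commitment is expected to be 48 bytes")
    # Replace the first byte of the SHA-256 digest with the version byte.
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


# Placeholder bytes standing in for a real commitment (assumption for the demo).
versioned_hash = kzg_to_versioned_hash(bytes(48))
```

A challenger who obtains the blob can check it against this commitment, which is why the data does not need to be readable by the EVM to be useful in a dispute.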

Why are fraud proofs usually rare in optimistic rollups (worked example)?

Imagine a rollup sequencer collects 10,000 user transactions. It orders them, runs them in the rollup’s execution environment, and computes a new L2 state root. Then it posts the batch data to Ethereum along with a commitment saying, in effect, “if you replay these transactions from the previous state, you will end at this new state.”

Ethereum does not immediately replay those 10,000 transactions. Instead, it opens a challenge window. During that period, any watcher, validator, or independent node can download the posted data, execute the same batch offchain, and compare the claimed result to the one they compute locally.

If the sequencer was honest, all honest watchers obtain the same post-state and do nothing. The batch eventually passes the challenge period and becomes finalized for L1 purposes. Notice what happened: the system got scale precisely because the base chain did not pay to execute the batch, but the batch was still checkable.

Now change one fact. Suppose the sequencer slipped in an invalid transition that credits itself extra tokens. A watcher replaying the batch notices that the claimed state root does not match the correct execution result. That watcher can challenge the assertion on Ethereum. The system then enters its expensive mode: a dispute process that narrows down exactly where the incorrect computation occurred and lets the base chain decide the winner.

The optimistic design works if the first story is common and the second story is available. If fraud is frequent, the system loses much of its efficiency. If fraud is impossible to challenge, the system loses its security. The design lives in that middle ground: rare disputes, but credible disputes.
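The watcher's job in both stories can be sketched as a replay-and-compare loop. This is a toy model under stated assumptions: state is a dictionary of balances, a transaction is a `(sender, recipient, amount)` tuple, and the "state root" is a SHA-256 hash of sorted JSON rather than a real Merkle root. All function names are hypothetical.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Toy commitment: hash of the canonically ordered balances."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_batch(balances: dict[str, int], batch: list[tuple[str, str, int]]) -> dict[str, int]:
    """Replay transfers in order; the toy rule skips transfers the sender cannot afford."""
    state = dict(balances)
    for sender, recipient, amount in batch:
        if state.get(sender, 0) < amount:
            continue
        state[sender] -= amount
        state[recipient] = state.get(recipient, 0) + amount
    return state


def should_challenge(pre_state: dict[str, int], batch: list[tuple[str, str, int]], claimed_root: str) -> bool:
    """A watcher challenges only when its own replay disagrees with the claim."""
    return state_root(apply_batch(pre_state, batch)) != claimed_root
```

In the honest story `should_challenge` returns `False` and the watcher does nothing. In the dishonest one, a claimed root that includes an extra balance for the sequencer no longer matches the replay, and the watcher has grounds to open a dispute.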

How do interactive fraud proofs reduce onchain costs compared with full re-execution?

| Approach | L1 work | Cost | Granularity | Typical usage |
|---|---|---|---|---|
| Full replay | Re-execute entire batch | Very high gas cost | Whole-batch decision | Rare for large batches |
| Interactive bisection | Logarithmic onchain checks | Low to moderate cost | Narrows to single instruction | Common in modern ORUs |
| One-step onchain | L1 evaluates one VM step | Minimal onchain work | Atomic decisive check | Final arbiter at dispute end |

The naive fraud-proof design would be to re-execute the entire disputed batch on L1. That is simple to understand, but it defeats much of the point. If a disputed batch contains thousands of transactions, replaying all of them on Ethereum can be too expensive.

Modern optimistic rollups therefore usually use interactive fraud proofs. The idea is to reduce the amount of work Ethereum must do. Instead of asking L1 to re-run an entire execution trace, the asserter and challenger engage in a back-and-forth protocol that bisects the disputed computation until they isolate a single disputed step.

Here is the mechanism. The asserter says, effectively, “the execution from state A to state Z is correct.” The challenger says it is not. Rather than replaying the whole path from A to Z, they split the trace in half. The asserter commits to an intermediate state M, claiming the computation from A to M and from M to Z is consistent. The challenger then identifies which half contains the error. This repeats, cutting the disputed segment smaller and smaller.

Eventually the disagreement is reduced to one instruction, one VM step, or one tiny state transition. At that point the L1 contract can directly evaluate that step. If the step is invalid, the challenger wins and the false assertion is rejected. If the step is valid, the asserter wins and the challenge fails.

This is the same reason a binary search is efficient: the chain does not need to inspect the entire history, only enough checkpoints to localize the disagreement. In Optimism’s fault dispute game, for example, a root claim can be bisected over output roots and then over execution traces until a one-step proof is checked onchain. Arbitrum’s dispute systems follow the same general direction, though implementations differ in details and incentives.
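The bisection idea can be sketched as a binary search over two execution traces. This is a simplified model, not the Optimism or Arbitrum protocol: it assumes both parties hold a full list of per-step state commitments of equal length, and it ignores bonds, timers, and the onchain encoding of moves. The function name and return shape are hypothetical.

```python
def bisect_dispute(asserter_trace: list, challenger_trace: list) -> tuple[int, int]:
    """Return (last_agreed_index, rounds) for a disputed trace.

    Invariant: the parties agree at index `lo` and disagree at index `hi`.
    The loop ends when hi == lo + 1, leaving one disputed step lo -> hi.
    """
    if len(asserter_trace) != len(challenger_trace):
        raise ValueError("traces must cover the same number of steps")
    lo, hi = 0, len(asserter_trace) - 1
    if asserter_trace[lo] != challenger_trace[lo]:
        raise ValueError("parties must agree on the starting state")
    if asserter_trace[hi] == challenger_trace[hi]:
        raise ValueError("parties agree on the final state; nothing to dispute")

    rounds = 0
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if asserter_trace[mid] == challenger_trace[mid]:
            lo = mid   # agreement at the midpoint: the error is in the second half
        else:
            hi = mid   # disagreement at the midpoint: the error is in the first half
        rounds += 1
    return lo, rounds
```

For a trace of 1,024 steps, this loop takes 10 rounds to isolate the single step that the base chain must then check, instead of re-running all 1,024.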

The analogy to a courtroom is helpful up to a point. The rollup operator makes a claim; a challenger contests it; the base chain acts as judge. What the analogy explains well is the separation between ordinary operation and exceptional adjudication. Where it fails is that blockchains need deterministic, machine-checkable evidence, not witness testimony or human interpretation.

Why must rollup execution be deterministic and how does the VM affect disputes?

For a dispute to be resolvable onchain, the underlying execution must be deterministic. Starting from the same pre-state and the same transaction data, honest parties must compute the same post-state. Otherwise the L1 contract would have no objective way to pick a winner.

This is why optimistic-rollup dispute systems are built around a defined state transition function. In plain language, that means there is an exact rule for how one state becomes the next after each operation. A dispute game does not ask whether an outcome seems reasonable. It asks whether the claimed transition follows from the agreed rules.

In more concrete terms, the disputed object is usually not “the batch” in the abstract. It is a claim about either an output root or the state of a fault-proof virtual machine at some point in an execution trace. In the OP Stack specification, for instance, the fault dispute game narrows a disagreement down until an onchain step function evaluates a single instruction against a committed pre-state and supporting proof data.
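A one-step check of this kind can be illustrated with a deliberately tiny machine. The sketch below is an assumption-laden toy: state is a single integer, there are two instructions, and no proof data is involved. Real fault-proof VMs commit to full machine state and verify supporting proofs. The names `step` and `resolve_one_step` are hypothetical.

```python
def step(state: int, instruction: tuple[str, int]) -> int:
    """Deterministic state transition function of the toy VM."""
    op, arg = instruction
    if op == "add":
        return state + arg
    if op == "mul":
        return state * arg
    raise ValueError(f"unknown instruction: {op}")


def resolve_one_step(pre_state: int, instruction: tuple[str, int], claimed_post: int) -> str:
    """Evaluate exactly one step and name the winner of the dispute."""
    actual_post = step(pre_state, instruction)
    return "asserter" if actual_post == claimed_post else "challenger"
```

Because `step` has no randomness and reads nothing outside its arguments, every honest party evaluating it gets the same answer, which is what lets a contract pick a winner mechanically.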

This is one reason execution equivalence is hard engineering, not branding. Many optimistic rollups aim to support the EVM or near-EVM semantics so existing contracts can deploy easily. But if the rollup’s execution engine and its dispute VM diverge in edge cases, the security model weakens. The system depends on the rulebook used in disputes matching the rulebook used in normal execution closely enough that “honest re-execution” is a meaningful concept.

Why do optimistic rollups delay finality and how does that affect withdrawals?

| Rollup type | Proofs before finality | Withdrawal latency | Cost profile | User experience |
|---|---|---|---|---|
| Optimistic rollup | No validity proofs upfront | ≈7 days challenge window | Low common-case onchain cost | Fast L2 responses, slow L1 settlement |
| ZK rollup | Validity proof included | Minutes to hours | High proof generation cost | Fast canonical settlement |
| Liquidity-provider fronting | No extra protocol proofs | Perceived instant withdrawal | LP fee + counterparty risk | Improves UX, adds trust dependency |

The challenge period is not an inconvenience added after the fact. It is part of how optimistic rollups buy cheaper execution. Because the system does not prove correctness immediately, it must leave time for someone to contest an incorrect result.

That creates two kinds of finality. Inside the rollup, transactions often appear fast because the sequencer orders them quickly and users can treat them as practically confirmed. But for settlement on L1, finality is slower. An L2-to-L1 withdrawal usually cannot be considered secure until the relevant state commitment survives the challenge window.

On Ethereum, this withdrawal delay is typically on the order of a week. That is why withdrawing directly from an optimistic rollup to L1 often takes roughly seven days. The system is waiting not for more computation, but for the right to say “nobody successfully proved this wrong.”

This tradeoff is one of the clearest differences between optimistic rollups and ZK rollups. A ZK rollup pays to produce a validity proof up front and can usually finalize withdrawals faster once that proof is accepted. An optimistic rollup avoids that proving cost in the common case, but pays in withdrawal latency. Neither choice is free; each moves cost and complexity to a different part of the system.

Because users dislike waiting a week, many ecosystems rely on liquidity providers or bridge services that front the user funds immediately and later collect the canonical withdrawal after the challenge period ends. That improves user experience, but it does not remove the underlying protocol delay. It just puts a liquidity market on top of it.
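The two withdrawal paths can be compared with a small calculation. This is a sketch under assumptions: the seven-day default, the fee expressed in basis points, and both function names are illustrative and not taken from any specific bridge.

```python
from datetime import datetime, timedelta, timezone


def canonical_withdrawal_ready(
    commitment_posted: datetime,
    challenge_window: timedelta = timedelta(days=7),  # assumed window length
) -> datetime:
    """Earliest time the protocol-level withdrawal can be completed on L1."""
    return commitment_posted + challenge_window


def fronted_payout(amount: int, lp_fee_bps: int) -> int:
    """Amount a user receives immediately from a liquidity provider.

    Integer math in the token's smallest unit; the fee is in basis points.
    """
    return amount - amount * lp_fee_bps // 10_000


posted = datetime(2024, 1, 1, tzinfo=timezone.utc)
ready = canonical_withdrawal_ready(posted)   # protocol path: wait out the window
payout = fronted_payout(1_000_000, 10)       # fronted path: paid now, minus a 0.10% fee
```

The provider in the second path still waits for `ready` to collect the canonical withdrawal, so the fee is the price of someone else carrying the delay and the risk.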

What is the honest-watcher assumption and how does it affect rollup security?

The security promise of an optimistic rollup is sometimes stated too broadly. It is not simply “secured by Ethereum” in the same way as a protocol that proves every state transition on Ethereum. It is more precise to say the rollup is settled on Ethereum and can inherit strong security if the conditions for challenging fraud are met.

The most important of those conditions is that at least one honest participant must be able and willing to monitor the rollup and challenge bad assertions. This is often described as a 1-of-N honest assumption. Out of all possible watchers, provers, or validators, you need at least one to catch fraud in time.

That assumption has real consequences. If no honest node is checking execution, a malicious operator could post an invalid state transition, let the challenge window expire, and potentially steal escrowed funds or corrupt state. The base chain would not magically correct the error on its own, because the system was designed not to re-execute everything by default.

This is the deepest conceptual difference between optimistic security and proof-based validity security. In an optimistic system, safety is conditional on available and active challengers. In a validity-proof system, safety is more tightly bound to the proof verifier itself. That does not mean optimistic rollups are insecure; it means their trust model is more operational. Monitoring matters.

Can a sequencer censor transactions and what can L1 do to help?

Most optimistic rollups today use a sequencer to order transactions and produce batches efficiently. In many deployed systems, this sequencer is still relatively centralized. That creates a practical tension: a rollup can be trust-minimized in settlement while still being operationally centralized in transaction ordering.

The immediate risk is censorship or downtime. A sequencer can delay certain users, reorder transactions in self-serving ways, or simply stop producing blocks. The Arbitrum sequencer outage during a traffic spike is a good reminder that scalability systems inherit operational bottlenecks, not just cryptographic ones. When demand for posting data to L1 surged, the batch-posting path itself became a chokepoint.

Optimistic rollups mitigate this imperfectly rather than magically. If transaction data and state commitments are forced onto L1, users can still reconstruct state and eventually exit, and another party may be able to resume production depending on the design. Many systems also provide an L1 path for submitting transactions directly if the sequencer is unavailable, though this is less convenient.

So the invariant is not “the sequencer can never fail.” It is closer to “a sequencer failure should not let the operator permanently trap user funds or make the chain unverifiable.” Posting data to L1 is what makes that possible. L1 cannot prevent every censorship episode in real time, but it can provide an escape hatch and a source of truth.

How does moving data availability off L1 change a rollup’s security model?

The classic optimistic-rollup model keeps data availability on the settlement chain. That is why it is usually called a rollup rather than a validium: the transaction data is published onchain. This choice gives challengers strong access guarantees, but it also makes L1 data costs the main bottleneck.

Some systems experiment with separate data-availability layers to push throughput further. Mantle’s use of EigenDA is one example of this modular direction. The appeal is obvious: a specialized DA layer may offer far more bandwidth and lower cost than Ethereum blob space or calldata.

But the tradeoff is equally important. Once the rollup no longer relies purely on L1 for data availability, the security model changes. Challengers now depend not only on the settlement chain but also on the separate DA mechanism being available and trustworthy enough for disputes to function. That may be a reasonable engineering choice, but it is not the same guarantee as an Ethereum-data-backed rollup.

This is why “rollup” and “validium” are not marketing flavors of the same thing. The location of data availability changes what users must trust. If data is on L1, challengers can use L1 as the shared source of evidence. If data is elsewhere, the fraud-proof story inherits the assumptions of that elsewhere.

Why do dispute incentives (bonds, rewards) matter for optimistic rollups?

A dispute game only protects the chain if honest challengers are motivated to participate. That usually means proposers or asserters post bonds, challengers can win rewards for successful disputes, and false or losing parties are penalized.
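A bare-bones settlement rule shows how these pieces fit together. This is an illustrative assumption, not the payout rule of any deployed system: both sides are taken to have staked the same bond, the loser forfeits it, and the winner receives a configurable share while the rest is burned. The function name and the 50% default are hypothetical.

```python
def settle_dispute(bond: int, challenger_won: bool, reward_bps: int = 5_000) -> dict[str, int]:
    """Return balance changes after a dispute, in the token's smallest unit.

    The loser forfeits the full bond; the winner receives `reward_bps` basis
    points of it as a reward, and the remainder is burned.
    """
    if not 0 <= reward_bps <= 10_000:
        raise ValueError("reward_bps must be between 0 and 10000")
    reward = bond * reward_bps // 10_000
    burned = bond - reward
    winner, loser = ("challenger", "asserter") if challenger_won else ("asserter", "challenger")
    return {winner: reward, loser: -bond, "burned": burned}
```

Even in this toy, the tuning problem is visible: set the reward share too low and honest watching may not pay for itself, while paying out the whole bond can make it cheap for an attacker to challenge their own claim and recover the stake.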

This sounds straightforward, but research shows the details are subtle. If rewards are poorly designed, a challenger can win “correctly” while still not being properly compensated after accounting for competition, auctions, or strategic behavior by malicious proposers. In other words, the existence of a fraud-proof protocol is not enough; the economics around it must make honest monitoring sustainable.

That is why modern dispute systems spend so much effort on timing rules, inherited clocks, bond accounting, and edge cases. Optimism’s fault dispute game uses chess-clock-like timers to limit delay and give parties bounded time to respond. Arbitrum’s BOLD protocol emphasizes permissionless, all-vs-all challenges with a bounded confirmation delay. These are not implementation footnotes. They are attempts to turn the abstract honest-watcher assumption into something that can survive adversarial behavior in practice.

When should you use an optimistic rollup and what benefits do they provide?

People use optimistic rollups for the same reason they use any scaling system: they want lower fees and more throughput without leaving the ecosystem they already care about. On Ethereum, that often means running familiar smart contracts, wallets, and token standards in an environment that feels close to mainnet but costs much less to use.

The economic win comes from amortization. Instead of every user paying for separate L1 execution, many users share the cost of a single posted batch. Compression and batching spread the fixed cost over many transactions. Official Ethereum documentation describes optimistic rollups as offering large fee and throughput improvements, sometimes on the order of 10–100x depending on workload and implementation.
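The amortization argument is simple arithmetic. In the sketch below, the cost figures are made-up gas-like units chosen only to show the shape of the curve, and `l1_cost_per_tx` is a hypothetical helper, not a fee formula from any rollup.

```python
def l1_cost_per_tx(fixed_batch_cost: float, per_tx_data_cost: float, txs_in_batch: int) -> float:
    """Each transaction pays its own data cost plus an equal share of the fixed batch cost."""
    if txs_in_batch <= 0:
        raise ValueError("a batch must contain at least one transaction")
    return fixed_batch_cost / txs_in_batch + per_tx_data_cost


# Illustrative numbers only (assumptions, not measurements).
alone = l1_cost_per_tx(fixed_batch_cost=200_000, per_tx_data_cost=300, txs_in_batch=1)
shared = l1_cost_per_tx(fixed_batch_cost=200_000, per_tx_data_cost=300, txs_in_batch=1_000)
```

With these numbers, a transaction posted alone bears 200,300 units, while one of 1,000 in a batch bears 500. The per-transaction data cost then becomes the floor, which is why compression and cheaper blob space matter so much.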

That is enough to make entire classes of applications more usable: trading, gaming, social apps, payments, and general smart-contract activity that would be uneconomical if every interaction had to settle directly as a full L1 execution. The reason this matters is not just “more TPS.” It is that the application design space changes when ordinary interactions become cheap enough to happen often.

Conclusion

An optimistic rollup works by changing the default question from “can we prove every step now?” to “can we prove fraud if needed?” That one shift explains both its strengths and its compromises.

The strength is scalability: execution moves off the base chain, users share the cost of published data, and the expensive verification path is used only when there is a dispute. The compromise is that security depends on posted data, a real challenge window, sound dispute incentives, and at least one honest watcher who is paying attention.

If you remember one thing, remember this: an optimistic rollup is not secured by hiding work from L1, but by making L1 the court of appeal while hoping court is rarely needed.

How do optimistic rollups affect funding, trading, and withdrawals?

Optimistic rollups trade lower transaction costs for a delayed onchain finality window and rely on posted batch data and active watchers to catch fraud. Before you fund or trade assets on a rollup, check its data-availability model, challenge-window length, and sequencer/operator setup so you understand withdrawal timing and censorship risk; then use Cube to fund, trade, and withdraw while accounting for those constraints.

- Deposit fiat or a supported crypto into your Cube account (choose the network matching the rollup asset you plan to use).

- Verify the rollup’s challenge window and data-availability model (e.g., L1 calldata, EIP-4844 blob, or external DA) before initiating withdrawals or bridging funds.

- Open the relevant market on Cube and place a limit order for predictable execution or a market order if you need immediate fill.

- If you plan to withdraw to L1, factor in the rollup’s withdrawal delay (commonly ~7 days for optimistic rollups) and consider using Cube’s on-exchange liquidity options if you need faster access.

- Review fees, destination chain details, and the sequencer/bridge notes on Cube before submitting the transfer or withdrawal.

Frequently Asked Questions

The challenge window gives third parties time to download the posted batch data, re-execute the transactions offchain, and submit a fraud proof if the sequencer’s claimed state is wrong; it exists because optimistic rollups accept results provisionally rather than proving them up front. On Ethereum this settlement delay is typically on the order of a week (roughly seven days) for withdrawals to be considered secure.

If no honest watcher downloads the posted data and challenges a bad assertion, a malicious operator could publish an invalid state commitment and escape correction after the challenge window, so optimistic rollup safety is conditional on at least one active honest participant monitoring and acting.

Interactive fraud proofs avoid replaying an entire disputed batch on L1 by having the asserter and challenger bisect the execution trace repeatedly until the disagreement is narrowed to a single VM step that the base chain can check directly, substantially reducing the onchain computation required to resolve disputes.

Posting transaction data on L1 gives challengers a shared, highly available source of evidence so fraud can be reconstructed and proven onchain; moving data availability to a separate DA layer can lower costs and increase bandwidth but changes the security assumptions because challengers must now trust and rely on that external DA layer instead of L1.

A centralized sequencer can censor, delay, or reorder transactions in practice, and L1 cannot stop real-time censorship, but posting batch data and commitments to L1 provides an escape hatch: users can reconstruct state and exit or another actor may resume block production depending on the design, so L1 limits permanent loss but not transient censorship.

Deterministic execution is required so independent watchers starting from the same pre-state and transaction data compute the same post-state; if the rollup’s normal execution VM and the dispute VM differ in edge cases, onchain dispute resolution becomes ambiguous and the security model weakens.

Dispute games rely on economic incentives: proposers post bonds, challengers can earn rewards, and losing parties are penalized; if these incentives are set poorly (or freeloader claims remain uncountered) monitoring can become unprofitable or unstable, undermining the honest-watcher assumption and the rollup’s security in practice.

Optimistic rollups trade lower common-case cost for delayed finality: they avoid paying to prove execution up front so withdrawals require surviving the challenge window, whereas ZK rollups pay the cost to generate a validity proof up front and can usually finalize withdrawals much faster once the proof is verified.

Disputes should be rare for the optimistic model to be efficient; if fraud is frequent the system spends more time and money on expensive onchain adjudication and loses most of its scaling advantage, whereas if fraud is hard to challenge (e.g., due to unavailable data) the system also loses security; both outcomes degrade the design’s intended benefit.
