What Is Finality?

Learn what blockchain finality means, how probabilistic and deterministic finality work, and why confirmations, checkpoints, and slashing matter.

Introduction

Finality is the property that tells you when a blockchain history should be treated as settled. That sounds simple, but it hides the central tension in blockchain design: many machines are trying to agree on one ledger while messages arrive late, validators fail, miners compete, and adversaries may actively try to create conflicting histories. If a system can never confidently say “this transaction is now part of the past,” then it is hard to use for payments, exchanges, bridges, or any application that must act on a result and move on.

The puzzle is that blockchains are often described as immutable, yet many of them can and do revise recent history. A Bitcoin transaction with one confirmation is not final in the same sense that a Tendermint-committed block is final, and an Ethereum checkpoint that has been finalized under Gasper is different again. So the useful question is not whether a blockchain is immutable in the abstract. The useful question is: what mechanism makes reversals hard, how hard, and under what assumptions?

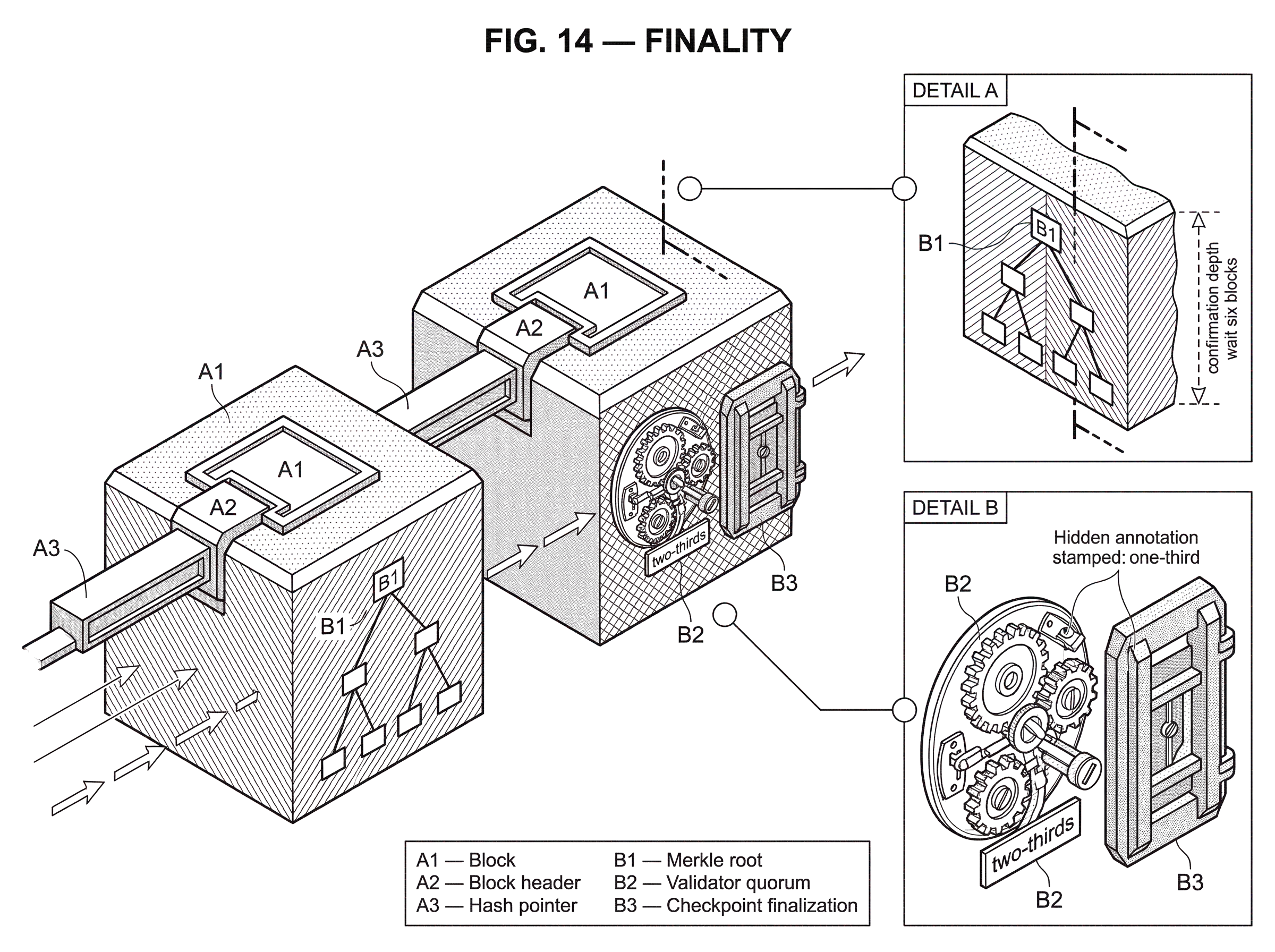

That is what finality measures. In some systems, finality is probabilistic: a block becomes less and less likely to be reversed as more blocks build on top of it. In others, finality is deterministic or economic: once enough validators vote and cross certain thresholds, a reversal would require either breaking the protocol assumptions or destroying large amounts of stake. And in distributed-systems theory, there are even weaker notions, such as deterministic eventual finality, where the guarantee is not that every recent block is permanently safe, but that honest participants’ chosen main chains eventually share a growing common prefix.

The core idea is this: finality is not mainly about blocks being old; it is about the system having accumulated enough evidence, work, votes, or penalties behind a history that competing histories stop being credible. Once that clicks, the different blockchain designs look like different ways of buying the same thing.

Why do blockchains need finality to be operationally useful?

A blockchain is trying to solve a very specific problem: many parties who do not fully trust one another need a shared order of events. If Alice pays Bob and also tries to pay Charlie with the same funds, the system must converge on one ordering and reject the conflicting alternative. That is already difficult in a distributed network where messages can be delayed. It becomes harder when participants are allowed to behave maliciously.

Without finality, a blockchain can keep producing blocks yet still leave an important question open: should an application treat what it sees now as durable? A wallet might show incoming funds, an exchange might credit a deposit, or a bridge might mint wrapped assets on another chain. If the source-chain history later reorganizes and removes the original transaction, those downstream actions become wrong. In that sense, finality is the point where consensus becomes operationally useful.

This is also why finality is closely connected to chain reorganizations, usually called reorgs. A reorg means the network stops treating one recent branch as canonical and switches to another. Finality is the thing that either makes such switches increasingly unlikely, or forbids them beyond a protocol-defined point. If reorgs are the symptom of temporary disagreement, finality is the condition under which that disagreement stops mattering for some prefix of the ledger.
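A reorg can be measured directly. The following is a minimal sketch, assuming a node's view of the chain is just a list of block hashes ordered from genesis to tip; the function name and the short string hashes are hypothetical and chosen only for illustration.

```python
def reorg_depth(old_chain: list[str], new_chain: list[str]) -> int:
    """Return how many blocks of old_chain are abandoned when switching to new_chain."""
    common = 0
    # Walk both views from genesis until they first disagree.
    for old_hash, new_hash in zip(old_chain, new_chain):
        if old_hash != new_hash:
            break
        common += 1
    # Everything in the old view past the common prefix was reorganized away.
    return len(old_chain) - common


old_view = ["g", "a1", "a2", "a3"]
new_view = ["g", "a1", "b2", "b3", "b4"]
print(reorg_depth(old_view, new_view))  # 2: blocks a2 and a3 were abandoned
```

A depth of zero means the new view simply extends the old one. Any application that acted on a block inside the abandoned suffix acted on history that no longer exists.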

Notice what is fundamental here and what is convention. It is fundamental that a distributed system needs some rule for converging on a shared history. It is a design choice whether that rule is “follow the chain with the most accumulated proof-of-work,” “follow the heaviest attested subtree,” or “accept blocks once more than two-thirds of validators commit them.” Different rules produce different kinds of finality.

How does the 'moving frontier' mental model explain finality?

A good way to picture a blockchain is as a ledger with a moving frontier. Near the frontier, the history is still contestable: new information can cause the network to prefer a different branch. Deeper behind the frontier, the history becomes harder to contest. Finality is the rule that tells you where the contestable region ends.

In Bitcoin-like systems, the frontier moves because miners continuously extend whichever chain has the greatest accumulated proof-of-work. A recent block may be displaced if another branch catches up and surpasses it. But every additional block above your transaction means an attacker would need to redo more work to replace that history, so the chance of successful reversal falls. Here, depth is a proxy for security.

In Byzantine fault tolerant proof-of-stake systems, the frontier moves differently. Blocks may be proposed frequently, but the key event is not merely that a block exists. The key event is that a supermajority of validators has voted in a way that commits the chain to that block or its descendants. Here, depth alone is not the main signal. The main signal is quorum.

This distinction explains a common misunderstanding. People often say “wait six blocks” or “wait two epochs” as if finality were just a clock. It is not. Time and depth matter only because they let the protocol gather the thing that actually secures history: accumulated work, accumulated attestations, or repeated agreement through some voting process. If the mechanism changes, the meaning of waiting changes with it.

How do Bitcoin confirmations reduce the risk of reorgs?

| Confirmations | Security signal | Typical use | Risk if early | Notes |
|---|---|---|---|---|
| 1 confirmation | Block included at tip | Fast retail or UX | High reorg risk | No protocol guarantee |
| 6 confirmations | Deeper accumulated work | Medium-value exchange deposits | Low residual probability | Common industry heuristic |
| 100+ confirmations | Very deep work accumulation | Large treasury transfers | Negligible under honest majority | Assumes no majority attacker |

Bitcoin is the canonical example of probabilistic eventual finality. The network timestamps transactions into a chain of hash-based proof-of-work. Nodes treat the chain with the greatest accumulated proof-of-work as the correct history and continue extending it. This means the protocol never says, in an absolute sense, “this block can no longer be replaced.” Instead, it says something subtler: replacing deeper blocks becomes increasingly expensive and increasingly unlikely if honest miners control the majority of CPU power.

The mechanism is straightforward. Suppose your transaction appears in block B. If B is the tip, another miner could produce a competing tip and the network might temporarily split. If five more blocks are added after B, an attacker who wants to remove your transaction must build an alternative branch not just for B, but for B and all of its descendants, and then overtake the honest chain. The Bitcoin whitepaper explicitly frames this as a race: if the attacker controls less than half the hash power, the probability of catching up falls exponentially as the confirmation depth z increases.
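The race described above can be put into numbers. The sketch below ports the calculation from the Bitcoin whitepaper's "Calculations" section to Python; `q` is the attacker's assumed share of hash power and `z` is the confirmation depth. The function name is our own, and the model inherits the whitepaper's assumptions, in particular that the attacker's progress while the honest chain mines `z` blocks follows a Poisson distribution.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever overtakes
    the honest chain after the target transaction is z blocks deep."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker eventually catches up
    lam = z * (q / p)  # expected attacker blocks while honest miners find z
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        # Subtract the cases where the attacker, having mined k blocks, never catches up.
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


for z in (0, 1, 2, 6):
    print(z, round(attacker_success_probability(0.10, z), 7))
```

For `q = 0.10`, the whitepaper's own table lists roughly 0.2046 at `z = 1` and 0.0002 at `z = 6`, which is the exponential fall-off the text refers to.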

That is why users talk about confirmations. A confirmation is not a magical state transition in the protocol. It is a depth count: your transaction is in one block, then z more blocks are linked after it. The deeper it sits, the lower the reorg risk under the model. Finality in Bitcoin is therefore not binary. It is a confidence curve.

A concrete example makes this clearer. Imagine an exchange receives a Bitcoin deposit and must decide when to credit the user. If it credits immediately when the transaction first appears in a block, it is trusting that the block will remain in the winning proof-of-work branch. If it waits for several more blocks, it is requiring that much more work to accumulate on top of the deposit. The exchange is not receiving an absolute guarantee. It is choosing a risk threshold. Small retail payments might tolerate a small residual probability of reversal; a large treasury transfer probably will not.
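One way to make "choosing a risk threshold" concrete is to tie the wait to the size of the deposit. The sketch below is an illustration, not an industry formula: it uses the simple catch-up bound (q/p)^z from the whitepaper rather than the full Poisson-weighted calculation, and the attacker share, the tolerated expected loss, and the function name are all hypothetical inputs an operator would have to choose.

```python
def confirmations_for_deposit(value: float, max_expected_loss: float,
                              attacker_share: float, max_wait: int = 1000) -> int:
    """Smallest depth z such that value * (q/p)**z <= max_expected_loss.

    Assumption: reversal probability is approximated by the catch-up bound (q/p)**z.
    """
    q = attacker_share
    p = 1.0 - q
    if q >= p:
        raise ValueError("no depth is safe against a majority attacker")
    for z in range(max_wait + 1):
        if value * (q / p) ** z <= max_expected_loss:
            return z
    raise ValueError("threshold not reachable within max_wait blocks")


# Same tolerated loss, very different deposit sizes (hypothetical numbers).
print(confirmations_for_deposit(100, 1, attacker_share=0.10))
print(confirmations_for_deposit(1_000_000, 1, attacker_share=0.10))
```

The larger deposit requires a deeper wait for the same tolerated loss, which is the asymmetry between retail payments and treasury transfers in numeric form.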

This also explains why simplified clients, such as SPV-style light clients, inherit the same finality model. They can verify inclusion in a block and see proof-of-work in block headers, but they still depend on the honest-majority assumption. If an attacker can overpower the network, the apparent confirmation depth stops being reliable.

The strength of this approach is that it keeps the system highly available and open-ended. The cost is that there is no protocol event after which recent history is literally unrevisable. That is not a bug in the explanation; it is the design.

What is immediate (deterministic) finality and how does it prevent reverts?

| Finality type | Mechanism | Safety guarantee | Liveness tradeoff | Best for |
|---|---|---|---|---|
| Probabilistic | Accumulated work or sampling | Reversal probability decreases with depth | High availability under partitions | Open permissionless systems |
| Deterministic | Quorum voting and commit | Committed blocks cannot conflict | May stall under partitions | Validator-based networks |
| Economic (hybrid) | Checkpoint votes plus slashing | Final unless catastrophic slashing | Can stall; has recovery mechanisms | Large PoS with accountability |

Some blockchain families make a different tradeoff. Instead of saying “reversal becomes unlikely,” they try to say “once the protocol commits this block, honest nodes will never commit a conflicting one.” This is the style of finality associated with classical Byzantine fault tolerant state machine replication, including systems such as Tendermint.

The underlying mechanism is different from longest-chain competition. Validators participate in explicit rounds of voting. A block is proposed, validators exchange votes, and if the required threshold is reached under the protocol’s fault assumptions, the block is committed. The point of the protocol is to maintain a safety invariant: no two conflicting blocks can both be committed by honest participants.
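The safety invariant comes from quorum arithmetic. As a minimal sketch, assume the common BFT rule that a commit needs strictly more than two-thirds of total voting power (the exact threshold is protocol-specific, and both function names are hypothetical). Any two such quorums must then overlap in more than one-third of the voting power, so two conflicting commits require that much power to have voted both ways.

```python
def has_quorum(voting_power_for: int, total_voting_power: int) -> bool:
    """True when the votes strictly exceed two-thirds of total power.
    Integer arithmetic avoids floating-point rounding at the boundary."""
    return 3 * voting_power_for > 2 * total_voting_power


def min_quorum_overlap(total_voting_power: int) -> int:
    """Smallest possible overlap between any two quorums."""
    smallest_quorum = (2 * total_voting_power) // 3 + 1
    # Two sets of this size inside the same total must share at least this much.
    return 2 * smallest_quorum - total_voting_power


print(has_quorum(66, 100), has_quorum(67, 100))  # False True
print(min_quorum_overlap(100))                   # 34, more than one-third of 100
```

If fewer than one-third of the voting power is faulty, that overlap must contain honest voters, and an honest voter does not sign two conflicting blocks in the same round.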

This is often called immediate finality, though “immediate” does not mean zero latency. It means that once the commit condition is reached, there is no ordinary notion of waiting for more depth to make the block safer. The protocol has already crossed the safety threshold. Additional blocks extend finalized history; they do not make the earlier commitment more final.

Why not design every blockchain this way? Because stronger consistency usually comes with stronger assumptions or tighter coordination requirements. Validators need to communicate enough to form quorums. Long network delays, partitions, or churn can stall progress. The gain is clear settlement. The price is that liveness can depend more directly on synchrony and on the validator set behaving within defined fault bounds.

This is the first major tradeoff behind finality: probabilistic chain growth buys availability and openness; deterministic commit rules buy stronger settlement at the cost of more synchronization.

How does Ethereum’s checkpoint finality (Gasper) make blocks final?

Ethereum proof-of-stake is a useful middle case because it separates two jobs that many readers initially lump together. One job is choosing the head of the chain right now. The other is declaring some older part of the chain settled. In Gasper, those jobs are handled by different but connected mechanisms: LMD-GHOST for fork choice and Casper FFG for finality.

This separation matters. A node may see several recent branches and use LMD-GHOST to decide which branch currently has the greatest attestation weight. That answers the question “what should I build on now?” But it does not by itself answer “what part of history is now safe enough that everyone should treat it as settled?” Casper FFG adds that second answer.
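The fork-choice half can be sketched in a few lines. This is a simplified illustration of the LMD-GHOST idea, not the Ethereum specification: it assumes a block tree given as child-to-parent pointers, one latest vote per validator, and a lexicographic tie-break, and every name in it is hypothetical.

```python
from collections import defaultdict


def lmd_ghost_head(parent: dict[str, str], latest_vote: dict[str, str],
                   stake: dict[str, int], root: str) -> str:
    """Walk from root, always descending into the child whose subtree
    carries the most stake from validators' latest votes."""
    children = defaultdict(list)
    for block, par in parent.items():
        children[par].append(block)

    # A vote for a block also supports every ancestor of that block.
    weight = defaultdict(int)
    for validator, block in latest_vote.items():
        node = block
        while node is not None:
            weight[node] += stake[validator]
            node = parent.get(node)  # the root has no parent entry

    head = root
    while children[head]:
        head = max(children[head], key=lambda b: (weight[b], b))
    return head


# Branch A is longer, but branch B carries more attestation weight.
parent = {"A": "G", "A1": "A", "A2": "A1", "B": "G", "B1": "B"}
latest_vote = {"v1": "A2", "v2": "B1", "v3": "B"}
stake = {"v1": 32, "v2": 32, "v3": 32}
print(lmd_ghost_head(parent, latest_vote, stake, root="G"))  # B1
```

The result can change whenever new votes arrive, which is why the head alone says nothing about what is settled.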

Ethereum finalizes only certain blocks, called checkpoints, which occur at epoch boundaries. A checkpoint first becomes justified when validators holding at least two-thirds of the total staked ether have voted for it in the required way. Then, when a later checkpoint is justified on top of that justified checkpoint, the earlier one becomes finalized. The important mechanism is the supermajority link between checkpoints. Finality is not assigned to every slot’s block independently. It is assigned to the checkpoint structure, and ordinary blocks inherit security from the finalized checkpoints around them.
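The justify-then-finalize rule can be sketched as a small state machine. This is a deliberately simplified model, not client code: checkpoints are identified only by epoch number (so competing checkpoints in the same epoch are ignored), and it covers only the simplest case, in which the later checkpoint is the direct child epoch of the one being finalized. The class and method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FfgState:
    total_stake: int
    justified: set = field(default_factory=lambda: {0})  # genesis assumed justified
    finalized: set = field(default_factory=lambda: {0})

    def process_link(self, source: int, target: int, voting_stake: int) -> None:
        """Apply one checkpoint link (source epoch -> target epoch)."""
        supermajority = 3 * voting_stake >= 2 * self.total_stake
        if not supermajority or source not in self.justified or target <= source:
            return  # links need enough stake and a justified source
        self.justified.add(target)
        if target == source + 1:
            # A justified direct child finalizes its justified source.
            self.finalized.add(source)


state = FfgState(total_stake=300)
state.process_link(0, 1, voting_stake=210)  # justifies epoch 1
state.process_link(1, 2, voting_stake=150)  # below two-thirds: ignored
state.process_link(1, 2, voting_stake=200)  # justifies epoch 2, finalizes epoch 1
print(sorted(state.justified), sorted(state.finalized))  # [0, 1, 2] [0, 1]
```

Note that epoch 2 is justified but not yet finalized at the end: finality always trails justification by at least one further supermajority link.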

The reason this is credible is not only that validators voted. It is that the protocol makes certain conflicting votes slashable. A validator that equivocates or votes inconsistently can lose stake and be removed. According to Ethereum’s documentation, reverting a finalized checkpoint would require either control of two-thirds of the stake or the destruction of at least one-third of the total staked ether in a critical consensus failure. That is why Ethereum finality is often described as economic finality: the protocol ties conflicting finalizations to severe, provable financial penalties.
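What counts as a "conflicting vote" can be stated precisely. The sketch below encodes the two Casper FFG slashing conditions as commonly described: a double vote (two different votes for the same target epoch) and a surround vote (one vote's source-to-target span strictly contains the other's). The `Vote` type and its field names are hypothetical simplifications of real attestation data.

```python
from typing import NamedTuple


class Vote(NamedTuple):
    validator: str
    source_epoch: int
    target_epoch: int
    target_root: str


def surrounds(outer: Vote, inner: Vote) -> bool:
    """True if outer's span strictly contains inner's span."""
    return (outer.source_epoch < inner.source_epoch
            and inner.target_epoch < outer.target_epoch)


def is_slashable(a: Vote, b: Vote) -> bool:
    """True if one validator signed two votes that violate a slashing condition."""
    if a.validator != b.validator or a == b:
        return False
    double_vote = a.target_epoch == b.target_epoch  # distinct votes, same target epoch
    return double_vote or surrounds(a, b) or surrounds(b, a)


print(is_slashable(Vote("v", 1, 2, "X"), Vote("v", 1, 2, "Y")))  # True: double vote
print(is_slashable(Vote("v", 1, 5, "X"), Vote("v", 2, 3, "Y")))  # True: surround vote
print(is_slashable(Vote("v", 1, 2, "X"), Vote("v", 2, 3, "Y")))  # False: consistent
```

Because both votes are signed, the pair itself is the evidence. That is what makes the penalty provable rather than a matter of accusation.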

A worked example helps. Suppose a user deposits ETH into an application that will release assets elsewhere only after strong settlement. The application may watch the head of the chain for speed, but it should not rely on the head alone. Once the relevant checkpoint is justified and then finalized by a later justified checkpoint, the application has crossed a different threshold. At that point, a conflicting finalized history would not just require “a stronger branch.” It would require a catastrophic break of the stake-voting assumptions, with slashable evidence. The application is no longer relying merely on recent chain preference; it is relying on accountable quorum commitment.

Ethereum also reveals an important subtlety: finality and liveness can come apart. If enough validators go offline or the network is badly disrupted, finalization can stall even while some chain activity continues. Gasper’s inactivity leak is designed to restore plausible liveness by gradually reducing the weight of inactive validators until an active supermajority can finalize again. That mechanism shows what finality is really built from: not just votes, but a system for recovering quorum after disruption.
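The recovery logic can be illustrated with a toy model. The sketch below is not the real inactivity leak, whose penalty schedule is defined by the consensus specification; it assumes a fixed fractional loss per epoch for inactive stake, purely to show how shrinking inactive weight lets the active validators regain a two-thirds supermajority. The function name and the 1% rate are hypothetical.

```python
def epochs_until_finality(active_stake: float, inactive_stake: float,
                          leak_per_epoch: float = 0.01,
                          max_epochs: int = 10_000) -> int:
    """Count epochs until active stake is at least two-thirds of the total,
    shrinking inactive stake by a fixed fraction each epoch (toy rule)."""
    for epoch in range(max_epochs + 1):
        if 3 * active_stake >= 2 * (active_stake + inactive_stake):
            return epoch
        inactive_stake *= 1.0 - leak_per_epoch
    raise RuntimeError("supermajority not restored within max_epochs")


# 60% online, 40% offline: finality is stalled until the offline weight decays.
print(epochs_until_finality(active_stake=60, inactive_stake=40))
# 70% online: a supermajority already exists, so no leak is needed.
print(epochs_until_finality(active_stake=70, inactive_stake=30))
```

The design choice visible even in the toy version is that the protocol prefers to penalize absent validators rather than lower the supermajority threshold.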

How should you compare different finality guarantees across chains?

Theoretical work on blockchain finality makes explicit something practitioners often learn informally: there is no single, universal finality property. There are multiple guarantees with different strengths.

At one end is the consensus-style notion where a committed block is never revoked. At another is the familiar probabilistic model, where blocks may be revoked but become safer with depth. Between them are weaker deterministic notions. One important example is deterministic eventual finality, which guarantees that the main chains selected by honest processes eventually share a common increasing prefix. This is weaker than immediate finality because it does not say that newly appended blocks are permanent at once. It only says that honest views converge on a growing shared past.

That distinction matters because some guarantees that sound intuitively reasonable turn out to be impossible in very weak network models. The formal literature shows that in a fully asynchronous setting with Byzantine failures, you can achieve weaker eventual agreement properties, but stronger guarantees such as a fixed bound on how many recently seen blocks may later be revoked (called bounded displacement) are impossible in that general model. Informally: if the network gives you too little timing structure, the protocol cannot promise that “at most the last k blocks are unsafe” for some universal bound without solving a stronger consensus problem.

This is one of the clearest places where distributed computing illuminates blockchain design. Users often want a simple sentence like “after k blocks, you are safe.” Whether a protocol can honestly say that depends not just on engineering quality but on deep limits of what agreement is possible under the assumed failure model.

It also explains why finality discussions can become confused when people compare different chains without comparing assumptions. A protocol proven safe under synchrony or partial synchrony is not directly comparable to one designed for open-ended probabilistic convergence under continual competition. The same word, finality, is being used for different guarantees.

Finality gadgets and modular design

A recurring design pattern is the finality gadget. Instead of making block production itself fully final, a protocol allows blocks to be produced optimistically and then runs a separate mechanism that finalizes some prefix later. Casper FFG and GRANDPA are both examples of this broader idea.

Why split the problem this way? Because block production and finalization pull in different directions. Fast block production favors responsiveness: keep the chain moving, choose a head, let users see progress. Finalization favors caution: gather stronger evidence, confirm that honest participants converge, and only then settle history. A gadget lets the system do both jobs with different tools.

GRANDPA makes this especially explicit. It is designed as a finality gadget that runs alongside block production and finalizes blocks in a partially synchronous setting as long as fewer than one-third of voters are Byzantine. The important consequence is that the chain can continue growing while finalization lags. Clients can optimistically follow the head for speed, but they know that only finalized blocks carry the stronger guarantee. If conflicting blocks were somehow finalized, the protocol provides accountable safety: enough Byzantine voters can be identified for punishment.

This modular view also helps explain why “fork choice” and “finality” are related but not identical topics. Fork choice tells nodes which visible branch to prefer now. Finality tells them which prefix should no longer be contestable. A protocol may use one rule for the moving frontier and another for the settled past.

Which non‑PoW protocols use probabilistic finality and how do they work?

It would be a mistake to think probabilistic finality belongs only to proof-of-work. Avalanche-family protocols, for example, reach decisions through repeated random subsampling and a metastable convergence process. Nodes repeatedly query small subsets of peers; if enough samples support a value, confidence grows, and the system tends to lock into one outcome. The guarantee is again probabilistic: conflicting outcomes become extremely unlikely, not logically impossible.
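The sampling loop can be sketched for a single node. This is a simplified illustration in the spirit of the Snowflake building block from the Avalanche family, not the production protocol: it samples from a static snapshot of peer preferences (real peers update their own preferences concurrently), and the parameter names `k`, `alpha`, and `beta` as well as the function name are assumptions made for the example.

```python
import random
from collections import Counter


def snowflake_decide(initial: str, peer_preferences: list[str], k: int = 10,
                     alpha: int = 8, beta: int = 5, seed: int = 0,
                     max_rounds: int = 10_000):
    """Query k random peers per round; decide once the same value wins
    at least alpha of k samples for beta consecutive rounds."""
    rng = random.Random(seed)
    preference, streak = initial, 0
    for _ in range(max_rounds):
        sample = rng.sample(peer_preferences, k)
        value, count = Counter(sample).most_common(1)[0]
        if count >= alpha:
            if value == preference:
                streak += 1
            else:
                preference, streak = value, 1  # flip to the sampled majority
        else:
            streak = 0  # an inconclusive round resets confidence
        if streak >= beta:
            return preference
    return None  # no decision within max_rounds


# A node that starts out preferring "B" in a network that prefers "A".
print(snowflake_decide("B", ["A"] * 100))  # A
```

Raising `beta` lowers the probability of deciding on a value the rest of the network abandons, at the cost of more query rounds. That is the probabilistic dial, analogous to waiting for more confirmations.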

What changes is the mechanism. In Bitcoin, safety grows from accumulated work and the difficulty of catching up. In Avalanche-style designs, safety grows from repeated sampling dynamics that drive the network toward one stable preference. But the user-facing meaning is similar: after enough consistent support, a transaction is treated as final because reversal probability is very low under the model.

This is a good example of where analogy helps but also fails. You can think of probabilistic finality as “the system building confidence.” That explains the common shape across PoW and Avalanche. But it fails if it makes confidence sound subjective. The confidence here is not merely a user feeling. It is a property induced by the protocol’s security analysis: under stated assumptions, the probability of successful contradiction falls to a chosen threshold.

How do finality assumptions determine bridge security and acceptance policies?

| Source chain | Acceptance signal | Typical wait | Primary risk | Trust boundary |
|---|---|---|---|---|
| Bitcoin | z confirmations (block depth) | Minutes to hours (z blocks) | Deep reorg or hashpower attack | Majority hashpower assumption |
| Ethereum (Gasper) | Checkpoint justification then finalization | Epoch-bound delays (minutes) | Validator collusion or slashing failure | Validator quorum plus slashing |
| BFT chains (Tendermint) | Quorum commit votes | Seconds to minutes | Validator equivocation or censorship | Validator set behaviour and synchrony |

Finality becomes most concrete when one system must act on another system’s history. Bridges are the sharpest case. A bridge that sees a deposit on chain A and mints assets on chain B must decide when the deposit on A is settled enough to trust. If it acts too early and chain A later reorgs, the bridge can become undercollateralized or outright exploited.

This is why bridge design is inseparable from source-chain finality. A bridge integrating with Bitcoin must decide how many confirmations it requires. A bridge integrating with Ethereum may wait for checkpoint finalization. A bridge integrating with a BFT chain may rely on deterministic commit proofs. The right threshold is not cosmetic; it is the bridge’s core risk control.
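An acceptance policy of this kind might be sketched as follows. Everything here is hypothetical: the `SourceEvidence` fields stand in for whatever proofs a real bridge verifies, the chain labels are arbitrary strings, and the six-confirmation threshold is a placeholder policy value rather than a recommendation.

```python
from dataclasses import dataclass

REQUIRED_BTC_CONFIRMATIONS = 6  # hypothetical policy value


@dataclass(frozen=True)
class SourceEvidence:
    chain: str                           # "bitcoin", "ethereum", or "bft"
    confirmations: int = 0               # block depth on a proof-of-work chain
    checkpoint_finalized: bool = False   # Ethereum: covering checkpoint finalized
    commit_proof_verified: bool = False  # BFT chain: quorum commit proof checked


def deposit_is_settled(evidence: SourceEvidence) -> bool:
    """Apply the finality rule that matches the source chain's mechanism."""
    if evidence.chain == "bitcoin":
        return evidence.confirmations >= REQUIRED_BTC_CONFIRMATIONS
    if evidence.chain == "ethereum":
        return evidence.checkpoint_finalized
    if evidence.chain == "bft":
        return evidence.commit_proof_verified
    return False  # unknown chains are rejected by default


print(deposit_is_settled(SourceEvidence("bitcoin", confirmations=2)))            # False
print(deposit_is_settled(SourceEvidence("ethereum", checkpoint_finalized=True)))  # True
```

The point of the structure is that each chain gets the signal its mechanism actually produces. A block count on Ethereum or a "finalized" flag on Bitcoin would be the wrong kind of evidence.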

There is a second layer of risk too. Even if the source chain has strong finality, the bridge’s own validator or guardian system must not be easier to corrupt than the source chain’s settlement process. The Ronin breach is a reminder that attestation systems can fail at the quorum layer itself: if an attacker controls enough bridge validators, they can forge the bridge’s view of what is final regardless of what happened on the source chain. So bridge safety depends on both when the bridge accepts source-chain finality and who is authorized to attest to it.

For the same reason, finality matters to exchanges, lending protocols, treasury systems, and rollup exits. Any workflow that triggers an irreversible action based on observed chain state is secretly a finality consumer.

What are common misconceptions about blockchain finality?

The most common mistake is to treat finality as a simple yes-or-no label attached to a chain. In reality, finality is always relative to an assumption set: synchrony conditions, honest-majority thresholds, validator behavior, economic penalties, and sometimes client policy. Saying “chain X has finality” is incomplete. The meaningful statement is “chain X achieves this kind of finality through this mechanism if these assumptions hold.”

A second mistake is to equate finality with immutability. Immutability is the broad aspiration that history should not change. Finality is the operational rule that tells you when a specific part of history has enough protection to be treated as settled. A chain may aspire to immutability and still expose recent blocks to reorg risk.

A third mistake is to confuse fork choice with finality. Fork choice answers “which branch is best right now?” Finality answers “which prefix is beyond ordinary dispute?” In some protocols the same machinery influences both, but conceptually they are different jobs.

A fourth mistake is to think stronger finality is always better in every environment. Stronger finality usually means stronger coordination requirements or stronger assumptions. In a highly adversarial, globally distributed, permissionless setting, a protocol may rationally choose probabilistic settlement rather than stall too easily under partitions.

When and how can finality fail in real networks?

Finality is never free of assumptions, and it breaks exactly where those assumptions break.

In proof-of-work systems, probabilistic finality weakens if an attacker gains enough hash power, if mining is highly concentrated, or if network conditions allow effective partitioning. The chain may still look normal until a deep reorg proves otherwise.

In proof-of-stake BFT-style systems, finalized history depends on validator thresholds, slashing rules, and network conditions sufficient to gather votes. If too many validators go offline, liveness can stall. If enough validators equivocate or collude, safety can fail, though ideally with slashable evidence and visible accountability.

In protocols with formal persistence guarantees, the bounds depend on explicit assumptions such as synchrony, honest-stake majority, or corruption delays. Change the assumptions and the theorem changes with them.

And in cross-chain systems, the weakest finality assumption in the path often dominates. A bridge is only as safe as the source-chain settlement it waits for and the bridge-quorum process that attests to it.

Conclusion

Finality is the answer to a simple but unforgiving question: when can a blockchain stop revising the past, at least for practical purposes? Different systems answer with different resources. Bitcoin uses accumulated proof-of-work and gives you rising confidence with depth. Tendermint-style systems use quorum commits and aim for deterministic settlement. Ethereum combines fork choice with checkpoint finalization and backs its claims with slashable stake. Other systems use probabilistic sampling or finality gadgets to reach similar ends through different mechanisms.

The part worth remembering tomorrow is this: a transaction is not final because it is old; it is final because the protocol has made conflicting history no longer credible under its rules and assumptions. Once you see that, confirmation counts, validator quorums, checkpoints, slashing, and reorg risk all become pieces of the same idea.

What should I understand about finality before depositing, withdrawing, or trading?

Understand the chain‑specific finality model and pick a concrete wait rule before you deposit, withdraw, or trade. On Cube Exchange, fund your account and use the normal deposit or withdrawal flow while applying those on‑chain checks to reduce reorg and bridge risk.

- Fund your Cube account with fiat or a supported crypto transfer and choose the exact asset + network (e.g., BTC on Bitcoin mainnet, ETH on Ethereum).

- Check the required settlement threshold for the chain: for Bitcoin-style PoW, confirm the transaction has the confirmation depth you require (commonly 6 or more for exchange deposits, and more for large transfers); for Ethereum, wait for the relevant checkpoint to be finalized before treating the deposit as settled.

- If you plan a cross‑chain transfer or bridge action, verify the bridge’s stated finality policy and wait until the on‑chain evidence (confirmations or finalized checkpoint) meets that policy before initiating the mint/withdraw step.

- When executing on Cube, choose the order or withdrawal parameters that match your risk profile (use limit orders for price control, confirm destination network and fees on withdrawal), then submit and monitor the on‑chain confirmations until your chosen finality threshold is met.

Frequently Asked Questions

Probabilistic finality (e.g., Bitcoin, Avalanche) makes reversals increasingly unlikely as more work or repeated sampling accumulates, trading absolute guarantees for high availability and open-ended growth; deterministic or quorum-based finality (e.g., Tendermint-style BFT, checkpoint finality in Ethereum) gives much stronger settlement once a threshold is reached but requires more coordination, synchrony assumptions, or liveness tradeoffs.

A finalized Ethereum checkpoint is intended to be irreversible under normal assumptions: reverting it would either require controlling more than two‑thirds of total stake or producing catastrophic equivocation that destroys (or is compensated by) at least one‑third of stake via slashing, so in practice a reversion implies either overwhelming stake control or an extreme, slashable consensus failure.

No. In the fully asynchronous, Byzantine model you cannot universally bound how many recent blocks might later be revoked (bounded displacement is impossible) without adding stronger timing or trust assumptions; achieving a fixed ‘wait k blocks and you’re safe’ bound requires stronger assumptions than that weak model permits.

Bridges should pick a finality threshold that matches the source chain’s settlement mechanism (e.g., X confirmations for Bitcoin, a finalized checkpoint for Ethereum, or a BFT commit for a Tendermint chain) and also ensure the bridge’s attestation/quorum process is at least as hard to compromise as the source; accepting deposits too early or relying on a weaker attestor set are the common failure modes.

Light clients (SPV clients) do not change the underlying finality model: they inherit the same probabilistic guarantees as the chain they follow and remain vulnerable if the chain’s security assumptions (for Bitcoin, an honest majority of hash power) are violated, because SPV verifies headers and confirmation depth rather than full state.

A finality gadget is a separate mechanism that runs alongside optimistic block production to later finalize prefixes (examples: Casper FFG, GRANDPA); it lets a system be fast and responsive for immediate head selection while still providing stronger, often accountable, settlement for an agreed prefix.

Network partitions affect designs differently: proof‑of‑work systems risk deeper or surprise reorgs if adversaries can concentrate hash power or isolate miners, while BFT-style PoS systems typically risk stalled finalization (loss of liveness) if too many validators are offline or messages are delayed, prompting recovery mechanisms like Ethereum’s inactivity leak or other unstaking/weight‑adjustment policies.

No. “Wait k blocks” is only meaningful relative to the protocol’s security mechanism. In PoW, depth is a proxy for accumulated work; in quorum‑based protocols, waiting is meaningful only insofar as it lets the required votes or checkpoints be collected. The correct waiting rule therefore depends on which resource (work, votes, attestations) actually secures history and on the protocol’s assumptions.
