What is Proto-Danksharding?

Learn what proto-danksharding is, how EIP-4844 blobs work, why rollups use them, and how separate blob fees make data availability cheaper.

Introduction

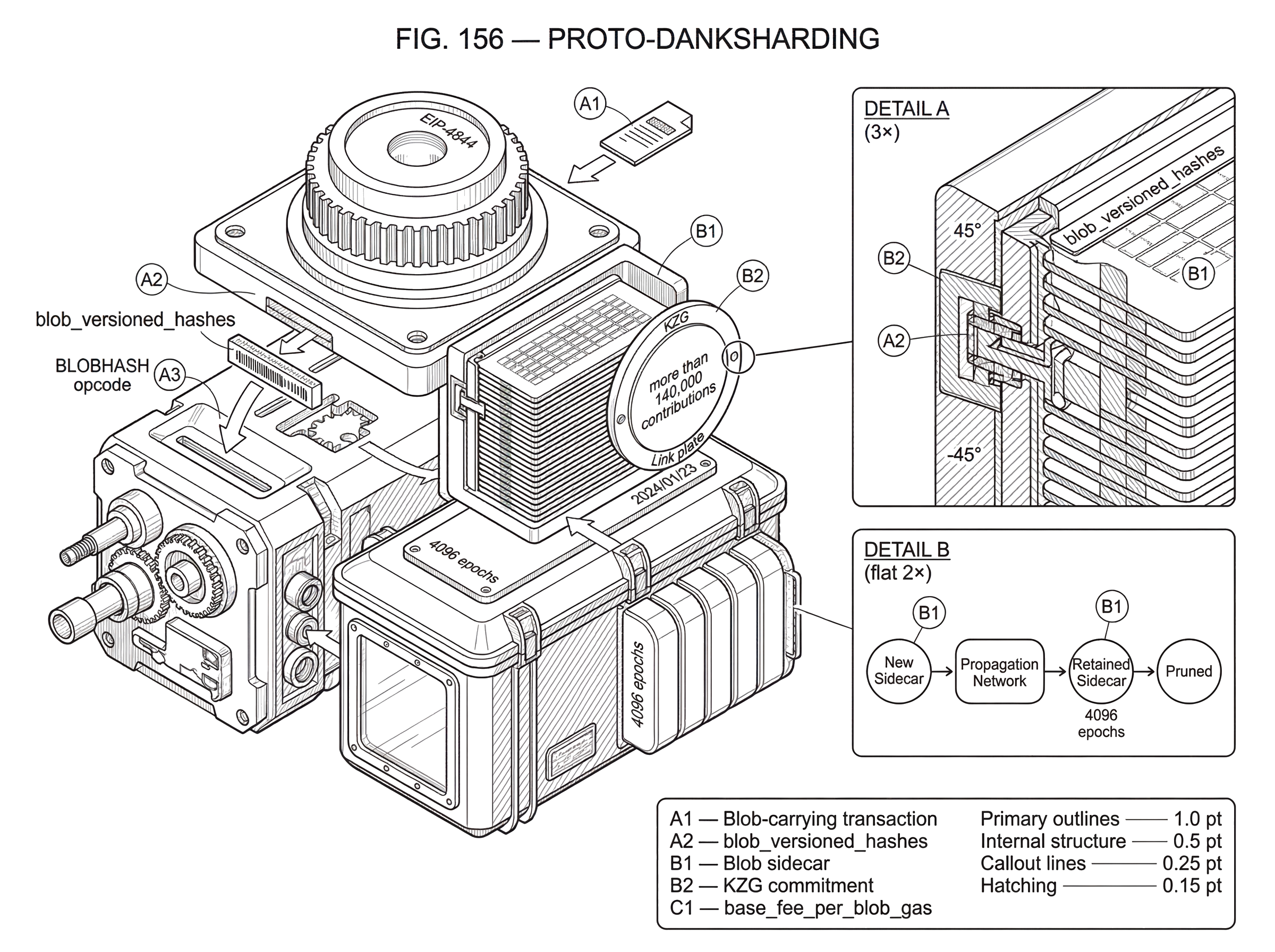

Proto-danksharding is a blockchain scaling design, implemented on Ethereum as EIP-4844, that adds a new kind of block data called a blob so rollups can publish transaction data more cheaply than they could with ordinary calldata. The stakes are practical: for many rollups, the dominant cost is not executing transactions on the L2 itself, but paying L1 to make their data available in a way anyone can verify. Proto-danksharding attacks that bottleneck directly.

The puzzle it solves is simple. Rollups already move execution off the base chain, so why were their fees still so often bounded by Ethereum’s costs? The answer is that rollups still need to post data back to L1 so users, verifiers, and challengers can reconstruct state transitions and detect fraud or validate proofs. Historically, much of that data went into calldata, which is expensive because it is processed by Ethereum nodes and stored onchain permanently, even though rollups usually only need the underlying bytes to remain available for a limited window.

Proto-danksharding changes that tradeoff. Instead of forcing rollup data into the same lane as ordinary execution inputs, it creates a separate lane for bulk data availability. That lane is cheaper because the data is not exposed to EVM execution and not kept forever in the same way calldata is. What remains on the execution side is a compact cryptographic reference to the data, while the data itself is carried and retained by the consensus/network layer for a bounded period.

That is the core idea to keep in mind: proto-danksharding is not mainly about making execution cheaper; it is about making data availability cheaper. Once that clicks, the rest of the design (blobs, commitments, sidecars, temporary retention, and a separate fee market) follows naturally.

Why is data availability the main bottleneck for rollups?

A rollup compresses many user transactions into a smaller summary and posts enough information to L1 so the rollup remains verifiable. In an optimistic rollup, that posted data lets challengers reconstruct what happened during the dispute window. In a zero-knowledge rollup, the proof establishes validity, but users and infrastructure still often need the underlying transaction data to reconstruct state, serve wallets, index activity, or exit safely. Either way, the system only works if the needed data is actually available.

This requirement creates a subtle constraint. A base chain does not just need to agree that some transaction happened; it needs to make the relevant bytes accessible to the network long enough that independent parties can check them. If posting those bytes is expensive, then rollup fees stay elevated no matter how efficient the rollup’s own execution engine becomes.

Using calldata for this job was functional but mismatched. Calldata is part of the execution-layer transaction payload. Every full node processes it as part of normal block execution, and it becomes part of permanent chain history. That permanence is useful for some applications, but it is overkill for rollup data whose main requirement is usually availability for a challenge or proving window, not indefinite direct access through the EVM forever.

Proto-danksharding separates these concerns. It says, in effect: if the blockchain’s job here is to guarantee recent availability rather than permanent execution access, then the protocol should expose a cheaper object specialized for that purpose.

What are blobs and why can’t the EVM read them?

The specialized object is the blob. In EIP-4844, a blob transaction is a new transaction type that can carry one or more blobs alongside ordinary transaction fields. The important constraint is that blob contents are not accessible to EVM execution. Smart contracts cannot simply read blob bytes the way they can inspect calldata.

At first glance that sounds restrictive, but it is exactly what makes the design work. If blob contents were fully available inside the EVM, they would behave much more like ordinary execution inputs, and the cost structure would need to reflect that. By denying direct EVM access, the protocol can treat blobs as a cheaper form of data whose purpose is publication and verifiability, not in-contract computation.

What the EVM can access is the blob’s commitment-derived reference. Blob transactions include blob_versioned_hashes, and the BLOBHASH opcode lets contracts read those versioned hashes from the current transaction. This means contracts can refer to which blob is being committed to without reading the blob itself. In addition, EIP-4844 includes a point-evaluation precompile that verifies KZG proofs, allowing a contract to check that a claimed value is consistent with the committed blob at a particular evaluation point.
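The relationship between a commitment and the reference the EVM sees can be sketched in a few lines. This is a minimal illustration, assuming the derivation given in EIP-4844 (a version byte of `0x01` followed by the last 31 bytes of the SHA-256 hash of the 48-byte commitment) and the 192-byte input layout of the point-evaluation precompile. The helper `point_evaluation_input` and the placeholder commitment are illustrative names, not part of any library, and no KZG math is performed here.

```python
import hashlib

VERSIONED_HASH_VERSION_KZG = b"\x01"  # version byte, as given in EIP-4844


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Derive the 32-byte versioned hash from a 48-byte KZG commitment."""
    if len(commitment) != 48:
        raise ValueError("a KZG commitment is expected to be 48 bytes")
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


def point_evaluation_input(versioned_hash: bytes, z: bytes, y: bytes,
                           commitment: bytes, proof: bytes) -> bytes:
    """Pack the precompile input: hash (32) | z (32) | y (32) | commitment (48) | proof (48).

    Only the layout and the hash/commitment consistency check are shown;
    the actual KZG proof verification happens inside the precompile.
    """
    if kzg_to_versioned_hash(commitment) != versioned_hash:
        raise ValueError("versioned hash does not match the commitment")
    packed = versioned_hash + z + y + commitment + proof
    if len(packed) != 192:
        raise ValueError("point-evaluation input must be exactly 192 bytes")
    return packed


# Placeholder bytes, not derived from a real blob; used only to show the shapes.
placeholder_commitment = bytes([0xC0]) + bytes(47)
versioned_hash = kzg_to_versioned_hash(placeholder_commitment)
packed_input = point_evaluation_input(
    versioned_hash, bytes(32), bytes(32), placeholder_commitment, bytes(48)
)
```

A contract never receives the blob itself. It reads `versioned_hash` via BLOBHASH and, if it needs to check a claim about the blob, passes an input shaped like `packed_input` to the precompile.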

That division of labor is deliberate. The raw data stays outside normal EVM execution, but the chain still exposes enough cryptographic hooks for protocols to bind onchain logic to off-EVM data.

A useful analogy is a warehouse receipt. The contract does not receive the warehouse’s full contents; it receives a cryptographic receipt that lets it verify claims about what is stored. The analogy helps explain the separation between data possession and data reference, but it fails in one important way: a warehouse receipt depends on an institution enforcing storage rules, while here the guarantees come from protocol rules, consensus validation, and cryptographic proofs.

Why do blobs require KZG commitments?

| Scheme | Commitment size | Trusted setup | Proof size | Onchain check | Best for |
|---|---|---|---|---|---|
| KZG | Constant (short) | Requires trusted setup | Small evaluation proof | Point-eval / KZG precompile | DA with compact handles |
| Merkle tree | Root + log-size proofs | No trusted setup | Logarithmic inclusion proof | Merkle inclusion checks in-contract | Archive-style availability |
| No commitment (calldata) | Full bytes onchain | None | No separate proof (raw data) | Contracts read raw calldata | When permanent EVM access needed |

Once blob contents live outside direct execution, the protocol needs a way to bind those bytes to something small and durable. Otherwise a rollup could claim to have posted one dataset while later serving a different one. The mechanism used here is a KZG commitment, a constant-size polynomial commitment.

The intuition is that the blob is interpreted as data defining a polynomial over a finite field. A commitment compresses that polynomial into a short cryptographic object. Later, someone can prove that a particular evaluation of the polynomial is correct without revealing the entire polynomial. In the proto-danksharding design, the execution layer deals mainly with a versioned hash derived from the KZG commitment, while the consensus/network side handles the actual blob and proof material needed for validation.
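To make "data defining a polynomial over a finite field" concrete, here is a rough sketch of how arbitrary bytes could be laid out as blob field elements. It assumes the EIP-4844 parameters (4096 field elements of 32 bytes each, each element required to be smaller than the BLS12-381 scalar field modulus, big-endian encoding). Using only 31 payload bytes per element with a leading zero byte is one simple convention that guarantees the bound; real rollups choose their own encodings, so treat `pack_into_blob` as hypothetical.

```python
# Parameters as given in EIP-4844; the packing convention below is an assumption.
BLS_MODULUS = 0x73EDA753299D7D483339D80809A1D80553BDA402FFFE5BFEFFFFFFFF00000001
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
BLOB_SIZE = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT  # 131072 bytes
USABLE_BYTES_PER_ELEMENT = 31  # leave the top byte zero so every element < modulus


def pack_into_blob(payload: bytes) -> bytes:
    """Zero-pad the payload and spread it over 4096 32-byte field elements."""
    capacity = FIELD_ELEMENTS_PER_BLOB * USABLE_BYTES_PER_ELEMENT
    if len(payload) > capacity:
        raise ValueError(f"payload exceeds {capacity} usable bytes")
    padded = payload.ljust(capacity, b"\x00")
    elements = [
        b"\x00" + padded[i:i + USABLE_BYTES_PER_ELEMENT]
        for i in range(0, capacity, USABLE_BYTES_PER_ELEMENT)
    ]
    return b"".join(elements)


def field_elements(blob: bytes) -> list:
    """Read the blob back as integers; these are the values the polynomial takes."""
    return [
        int.from_bytes(blob[i:i + BYTES_PER_FIELD_ELEMENT], "big")
        for i in range(0, len(blob), BYTES_PER_FIELD_ELEMENT)
    ]


blob = pack_into_blob(b"compressed rollup batch bytes")
elements = field_elements(blob)
```

The KZG commitment is then computed over those 4096 values by a dedicated library; that step is omitted here because it needs the trusted-setup parameters.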

You do not need the algebra to understand the role it plays. The protocol wants three things at once: a large data object, a small onchain handle to it, and the ability to verify openings against that handle. KZG commitments are the tool chosen to get that combination.

There is a real assumption underneath this choice. KZG commitments require a trusted setup, which is why Ethereum ran a large public KZG ceremony to generate the needed cryptographic parameters. Ethereum Foundation material describes more than 140,000 contributions to that ceremony. The trust model is not “trust Ethereum developers forever”; it is closer to “the setup remains secure as long as at least one contributor generated their secret honestly and then discarded it.” That is a meaningful assumption, but still an assumption.

This is also one reason proto-danksharding is worth distinguishing from full danksharding. The current design uses commitments and sidecars to make a near-term improvement deployable. The later roadmap adds broader machinery such as data availability sampling, where not every node needs to download all data to gain confidence it is available.

How does a rollup publish a batch using a blob (worked example)?

Imagine a rollup operator has collected thousands of L2 transactions. It compresses them into a batch and needs to publish the batch data to Ethereum so anyone can reconstruct the rollup state transition if needed. Before proto-danksharding, the operator might put that data into calldata. The data becomes part of the execution payload, permanent chain history grows, and the operator pays normal L1 pricing for those bytes.

With proto-danksharding, the operator instead creates a blob-carrying transaction. The transaction still has familiar fields such as chain_id, nonce, gas parameters, to, value, and data, but it also includes blob-related fields such as max_fee_per_blob_gas and blob_versioned_hashes. The actual blob bytes are not embedded in the normal execution payload in the same way ordinary transaction inputs are. Instead, they travel through the consensus/network machinery as associated blob data.
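The field list above can be pictured as a small data structure. The sketch below is illustrative only: it mirrors the field names from EIP-4844, omits the access list and signature fields for brevity, and the `validate`, `blob_gas`, and `max_blob_fee` helpers are hypothetical conveniences rather than any client's API. It assumes each blob consumes a fixed 2**17 units of blob gas, as specified in the EIP.

```python
from dataclasses import dataclass
from typing import List

GAS_PER_BLOB = 2**17  # blob gas consumed per blob, per EIP-4844


@dataclass
class BlobTransaction:
    chain_id: int
    nonce: int
    max_priority_fee_per_gas: int
    max_fee_per_gas: int
    gas_limit: int
    to: bytes                      # 20-byte address; blob transactions cannot create contracts
    value: int
    data: bytes
    max_fee_per_blob_gas: int
    blob_versioned_hashes: List[bytes]

    def validate(self) -> None:
        """Structural checks only; signatures and fee sufficiency are out of scope."""
        if len(self.to) != 20:
            raise ValueError("'to' must be a 20-byte address")
        if not self.blob_versioned_hashes:
            raise ValueError("a blob transaction must reference at least one blob")
        for h in self.blob_versioned_hashes:
            if len(h) != 32 or h[0] != 0x01:
                raise ValueError("each versioned hash is 32 bytes with version byte 0x01")

    def blob_gas(self) -> int:
        return GAS_PER_BLOB * len(self.blob_versioned_hashes)

    def max_blob_fee(self) -> int:
        """Upper bound, in wei, that the sender agrees to pay for blob space."""
        return self.blob_gas() * self.max_fee_per_blob_gas


tx = BlobTransaction(
    chain_id=1, nonce=0, max_priority_fee_per_gas=1, max_fee_per_gas=30,
    gas_limit=100_000, to=bytes(20), value=0, data=b"",
    max_fee_per_blob_gas=5,
    blob_versioned_hashes=[b"\x01" + bytes(31), b"\x01" + bytes(31)],
)
tx.validate()
```

Note that the blob bytes themselves do not appear anywhere in this structure; only their versioned hashes do.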

When the transaction is included, the execution layer sees the transaction and the blob references. Contracts can inspect the versioned hashes with BLOBHASH if they need to bind some state transition or commitment logic to the published blob. Meanwhile, the consensus layer validates and persists the blob data itself for the required availability window. The blob is retained for about 4096 epochs, roughly 18 days in current parameters, and then pruned.

Why is that enough? Because many rollups only need L1 to guarantee availability across a dispute or proving window, not forever. Ethereum’s own Dencun FAQ notes that optimistic rollups typically have a 7-day withdrawal period, so an 18-day availability window leaves buffer beyond that. The result is that the rollup buys the security property it actually needs (recent availability) without paying as much for an unnecessary permanence property.
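The "4096 epochs is roughly 18 days" figure, and the comparison with a 7-day window, follow from simple arithmetic. The sketch below assumes the mainnet timing of 12-second slots and 32 slots per epoch; `window_fits` is an illustrative helper name.

```python
SECONDS_PER_SLOT = 12          # mainnet slot time (assumed)
SLOTS_PER_EPOCH = 32           # mainnet epoch length (assumed)
BLOB_RETENTION_EPOCHS = 4096   # retention period cited in the text

retention_seconds = BLOB_RETENTION_EPOCHS * SLOTS_PER_EPOCH * SECONDS_PER_SLOT
retention_days = retention_seconds / 86_400


def window_fits(dispute_window_days: float) -> bool:
    """True if a rollup's dispute or proving window ends before blobs are pruned."""
    return dispute_window_days < retention_days


print(f"retention: {retention_seconds} s = {retention_days:.1f} days")
print("7-day window fits:", window_fits(7))
```

A rollup whose window were longer than the retention period would need its own archival arrangement for the remainder.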

The consequence for users is indirect but important. If the rollup’s dominant L1 publishing cost falls, the rollup can often lower the fees it charges to users.

Why do blobs use a separate fee market (blob gas)?

| Resource priced | Congestion coupling | Pricing mechanism | Main effect | Best for |
|---|---|---|---|---|
| Blob gas | Decoupled from compute | EIP-1559-like base_fee_per_blob_gas | Keeps bulk-data cheap | Rollups posting bulk data |
| Regular gas | Couples compute and calldata | EIP-1559 base fee | Prices execution and calldata | General transactions and contracts |

If blobs were priced inside ordinary gas, they would compete directly with computation and calldata in a way that would make the market noisy and hard to tune. Proto-danksharding instead introduces blob gas as a distinct resource with its own EIP-1559-style pricing rule. The protocol tracks base_fee_per_blob_gas separately from ordinary base fee.

This is one of the cleanest parts of the design. Execution gas measures demand for computation and state-access-related work. Blob gas measures demand for temporary bulk data availability. These are different burdens on the network, so the protocol prices them separately.

EIP-4844 sets conservative initial throughput parameters: a target corresponding to 3 blobs per block and a maximum corresponding to 6 blobs per block. In byte terms, the cited values correspond to about 0.375 MB target and 0.75 MB max per block. Those limits are not meant to be the final destination. They are a cautious starting point chosen to deliver real scaling gains without immediately pushing network bandwidth and propagation assumptions too far.
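The pricing rule can be sketched directly from the EIP's pseudocode. The constants below are the values from the original EIP-4844 specification (target 3 blobs, max 6 blobs, minimum fee of 1 wei, update fraction 3338477); later upgrades may change them, so treat the numbers as a snapshot rather than current mainnet values. The loop at the end is an illustrative scenario, not real chain data.

```python
MIN_BASE_FEE_PER_BLOB_GAS = 1            # wei
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB   # 393216
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB      # 786432


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator) via a Taylor series."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def calc_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Excess accumulates when blocks use more than the target and drains when they use less."""
    return max(0, parent_excess + parent_blob_gas_used - TARGET_BLOB_GAS_PER_BLOCK)


def base_fee_per_blob_gas(excess_blob_gas: int) -> int:
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )


# Illustrative scenario: several consecutive blocks each carrying the maximum 6 blobs.
excess = 0
fees = []
for _ in range(6):
    excess = calc_excess_blob_gas(excess, MAX_BLOB_GAS_PER_BLOCK)
    fees.append(base_fee_per_blob_gas(excess))
```

The key property is that the blob base fee depends only on accumulated blob usage relative to the target. Execution gas demand does not enter the formula at all, which is what "separate fee market" means in practice.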

This separation also explains why proto-danksharding mostly reduces L2 fees, not necessarily ordinary L1 execution fees. The new cheap lane is specifically for rollup data publication. Some spillover effects on L1 fee dynamics are possible if rollup demand moves out of calldata, but the main design goal is cheaper rollup posting.

There is also an economic subtlety here. Blob space is bought in whole-blob units: a transaction pays blob gas for every blob it carries, whether or not the rollup has enough data to fill it. Research on EIP-4844 economics suggests a rollup’s decision depends not only on posting cost but also on delay cost: if a rollup has low transaction volume, waiting to fill a blob may impose too much latency, making ordinary blockspace more attractive in some periods. So blobs are not automatically optimal for every rollup at every time.
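A back-of-the-envelope comparison shows why a small batch can favor calldata. This is a simplified sketch under stated assumptions: calldata is charged at 16 gas per byte (the worst case of all non-zero bytes), blob space is charged per whole blob at 2**17 blob gas each, and intrinsic transaction gas, priority fees, and any later repricing are ignored. The fee levels in the example are made up for illustration.

```python
GAS_PER_BLOB = 2**17                 # blob gas per blob
BLOB_SIZE_BYTES = 131_072            # raw blob size; usable capacity is somewhat lower
CALLDATA_GAS_PER_BYTE = 16           # worst case: every byte non-zero


def blob_cost_wei(payload_bytes: int, blob_base_fee: int) -> int:
    """Cost of the whole blobs needed to carry the payload."""
    blobs_needed = -(-payload_bytes // BLOB_SIZE_BYTES)  # ceiling division
    return blobs_needed * GAS_PER_BLOB * blob_base_fee


def calldata_cost_wei(payload_bytes: int, base_fee: int) -> int:
    """Cost of posting the same payload as calldata."""
    return payload_bytes * CALLDATA_GAS_PER_BYTE * base_fee


def cheaper_channel(payload_bytes: int, base_fee: int, blob_base_fee: int) -> str:
    blob = blob_cost_wei(payload_bytes, blob_base_fee)
    calldata = calldata_cost_wei(payload_bytes, base_fee)
    return "blob" if blob < calldata else "calldata"


GWEI = 10**9
# Hypothetical fee levels chosen only to expose the break-even point.
small_batch = cheaper_channel(2_000, base_fee=10 * GWEI, blob_base_fee=10 * GWEI)
large_batch = cheaper_channel(100_000, base_fee=10 * GWEI, blob_base_fee=10 * GWEI)
```

With equal fee levels, the break-even under these assumptions sits at 131072 / 16 = 8192 bytes. A low-volume rollup either posts a mostly empty blob, waits longer to fill one, or uses calldata for that batch.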

Why are blobs propagated as sidecars rather than embedded in the block body?

A natural question is: if blobs are part of block-related data, why not simply place the full blob contents directly inside the beacon block body? The answer is forward compatibility.

In the consensus design, blobs are referenced in beacon blocks but propagated separately as blob sidecars. A BlobSidecar contains the blob, its KZG commitment, proof, the signed block header, and an inclusion proof connecting the commitment to the block. The sidecar design means the block structure can remain relatively stable while the protocol’s method of checking data availability can evolve.
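The inclusion proof mentioned above is a Merkle branch. The check below follows the shape of the `is_valid_merkle_branch` helper from the consensus specs, demonstrated on a toy four-leaf tree rather than a real beacon block body. The leaves here are arbitrary hashes standing in for the commitment and its sibling nodes.

```python
import hashlib
from typing import List


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def is_valid_merkle_branch(leaf: bytes, branch: List[bytes], depth: int,
                           index: int, root: bytes) -> bool:
    """Walk from the leaf to the root, hashing with one sibling per level."""
    value = leaf
    for i in range(depth):
        if (index >> i) & 1:
            value = sha256(branch[i] + value)   # current node is a right child
        else:
            value = sha256(value + branch[i])   # current node is a left child
    return value == root


# Toy tree with four leaves; in a sidecar the leaf would be derived from the commitment.
leaves = [sha256(bytes([i])) for i in range(4)]
node_01 = sha256(leaves[0] + leaves[1])
node_23 = sha256(leaves[2] + leaves[3])
root = sha256(node_01 + node_23)

# Proof that leaves[2] sits at index 2: its sibling, then the sibling subtree.
branch_for_leaf_2 = [leaves[3], node_01]
proof_ok = is_valid_merkle_branch(leaves[2], branch_for_leaf_2, 2, 2, root)
```

Because the sidecar carries the signed block header along with this branch, a node can tie a blob to a specific block without having the full block body in hand.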

Here is the mechanism. Today, nodes still download the relevant blob data and validate it. But by keeping full blob contents outside the main beacon block container, the protocol leaves room for a future in which is_data_available() is implemented through data availability sampling rather than universal download. The consensus specs state this motivation explicitly: sidecars “black-box” the data-availability check so the internals can change later without redefining the whole block format.

This is where proto-danksharding earns the “proto” in its name. It is not the final danksharding system. It is an intermediate architecture that already introduces the key user-facing primitive (cheap blob data for rollups) while arranging the network and consensus layers so future upgrades can scale much further.

The networking rules reflect that blobs are large and therefore dangerous if propagated carelessly. EIP-4844 explicitly says nodes must not automatically broadcast full blob transactions to peers. They should announce hashes, and peers can then request the full transaction or blob data if desired. This reduces bandwidth-based denial-of-service risk in the mempool. On the consensus side, dedicated gossip topics and request/response protocols handle blob sidecars, with explicit validation rules for inclusion proofs, KZG proofs, proposer signatures, subnet mapping, and serving windows.
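The announce-then-request pattern can be illustrated with a toy in-memory model. Nothing below corresponds to real client code or wire messages: `Peer`, `announce`, and `serve` are hypothetical names, and SHA-256 stands in for the transaction hash. The point is only that a peer pulls the large payload once, however many times it hears the hash.

```python
import hashlib
from typing import Dict, List


class Peer:
    """Toy mempool participant that gossips hashes and pulls payloads on demand."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.pool: Dict[bytes, bytes] = {}
        self.bytes_received = 0

    def add_local(self, payload: bytes) -> bytes:
        tx_hash = hashlib.sha256(payload).digest()  # stand-in for the real tx hash
        self.pool[tx_hash] = payload
        return tx_hash

    def announce(self) -> List[bytes]:
        """Share only the hashes of known transactions, never the blobs."""
        return list(self.pool)

    def serve(self, tx_hash: bytes) -> bytes:
        return self.pool[tx_hash]

    def on_announcement(self, sender: "Peer", hashes: List[bytes]) -> None:
        """Request the full payload only for hashes not already in the pool."""
        for tx_hash in hashes:
            if tx_hash not in self.pool:
                payload = sender.serve(tx_hash)
                self.bytes_received += len(payload)
                self.pool[tx_hash] = payload


alice, bob = Peer("alice"), Peer("bob")
tx_hash = alice.add_local(bytes(131_072))      # one blob-sized payload
bob.on_announcement(alice, alice.announce())   # first announcement: bob pulls it
bob.on_announcement(alice, alice.announce())   # repeat announcement: nothing to pull
```

With push-style broadcast, every redundant delivery would cost a full blob of bandwidth; with announcements, redundancy costs only 32 bytes per hash.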

In other words, proto-danksharding is not just a new transaction type. It is also a new networking discipline for large, semi-separate data objects.

Are temporary blobs safe; why does proto-danksharding prune data?

| Option | Retention | EVM access | Typical cost | Who archives | Best when |
|---|---|---|---|---|---|
| Blobs | Temporary (18 days) | Not readable by EVM | Lower per-byte | Rollups / indexers may archive | Recent availability suffices |
| Calldata | Permanent onchain | Readable by EVM | Higher per-byte | Protocol maintains history | Need permanent EVM access |
| External archive | Indefinite if stored | Offchain only | Variable (third-party) | Third parties / storage providers | Historical retrieval needed |

Many readers first encounter blobs and immediately ask whether pruning them after about 18 days makes the system less secure. The answer is: it depends on what security property you think the data is supposed to provide.

If you expect the base chain to be a permanent archive of all rollup batch bytes, then yes, pruning removes that property. But proto-danksharding is not trying to preserve that property for blob contents. It is trying to provide a cheaper guarantee: the data is available to the network long enough for the rollup’s security model to work.

For many rollups, that is enough. The chain guarantees recent availability, while historical archiving can be handled by rollups themselves, users, indexers, or external storage systems. Ethereum’s Dencun FAQ is explicit that historical blob data may need to be preserved by third parties if needed after pruning.

This design keeps chain growth under better control. Permanent storage is expensive not because disks are expensive in isolation, but because permanently increasing the required historical footprint of the network changes who can run nodes and how costly sync and verification become. Temporary blobs reduce those long-run burdens.
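The effect of pruning on node storage can be estimated with rough arithmetic. The sketch assumes 128 KiB blobs, 12-second slots, 32-slot epochs, a blob-carrying block in every slot, and counts raw blob bytes only (no sidecar overhead). It compares the bounded footprint under 4096-epoch retention with the yearly growth that would result if the same bytes were kept forever.

```python
BLOB_SIZE_BYTES = 131_072
SLOTS_PER_EPOCH = 32
RETENTION_EPOCHS = 4096
SLOTS_PER_DAY = 86_400 // 12   # 7200 slots at 12 seconds each
GIB = 2**30


def retained_gib(blobs_per_block: int) -> float:
    """Steady-state blob storage when data older than the retention window is pruned."""
    retained_slots = RETENTION_EPOCHS * SLOTS_PER_EPOCH
    return blobs_per_block * BLOB_SIZE_BYTES * retained_slots / GIB


def yearly_growth_gib_if_permanent(blobs_per_block: int) -> float:
    """Hypothetical growth per year if the same blob bytes were never pruned."""
    return blobs_per_block * BLOB_SIZE_BYTES * SLOTS_PER_DAY * 365 / GIB


at_target = retained_gib(3)
at_max = retained_gib(6)
permanent_per_year = yearly_growth_gib_if_permanent(3)
print(f"pruned footprint: {at_target:.0f} GiB at target, {at_max:.0f} GiB at max")
print(f"unpruned growth at target: about {permanent_per_year:.0f} GiB per year")
```

Under these assumptions the pruned footprint stays flat, while the unpruned alternative grows without bound, which is the node-cost concern the paragraph above describes.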

The tradeoff is operational rather than mysterious. If an application truly needs indefinite raw-byte retrievability from the base protocol itself, blobs are the wrong tool. If it needs broad recent availability with cryptographic binding, blobs are often a better fit.

Proto-danksharding vs full danksharding: what’s the difference?

Proto-danksharding is often used almost interchangeably with EIP-4844, and in practice that shorthand is usually fine. But there is a useful distinction. Proto-danksharding is the scaling idea and intermediate architecture; EIP-4844 is the concrete Ethereum implementation that introduced blob-carrying transactions, blob gas, KZG commitments, and sidecar handling.

It is also not the same as full danksharding. Official Ethereum documentation describes full danksharding as the later stage where the number of blobs grows much further (often framed as moving from 6 to 64 blobs) and where additional machinery such as data availability sampling and proposer-builder-related improvements come into play. Proto-danksharding gives the ecosystem an immediately useful subset of that future: the cheap data lane arrives now, while the more ambitious scaling assumptions arrive later.

This distinction matters because some people hear “danksharding” and imagine that Ethereum already reached a state where every node samples tiny pieces instead of downloading all data. That is not what proto-danksharding delivers. It delivers the transaction and fee-market foundation on which that later system can be built.

What assumptions does proto-danksharding rely on?

The first assumption is cryptographic. KZG commitments are efficient and compact, but they rely on a trusted setup. Ethereum mitigated this through a very large public ceremony, yet the design still inherits the setup assumption.

The second assumption is network-level. Cheap blob space is only useful if the network can reliably propagate and serve blobs within the intended retention window. That is why EIP-4844 and the Deneb consensus specs are careful about sidecar gossip, request ranges, and anti-DoS rules. The protocol parameters start conservatively because bandwidth and propagation are real bottlenecks.

The third assumption is application-level. Proto-danksharding is especially valuable when applications need recent data availability but not permanent EVM-readable storage. Rollups fit this pattern well, but not every use case does.

And there is a roadmap assumption. Proto-danksharding is designed to compose with future data availability sampling. Research on DAS formalizes why this next step is powerful: a sufficiently large set of verifiers can gain confidence that block data is available without each verifier downloading everything. But proto-danksharding itself does not yet provide that final property. It prepares the protocol structure for it.
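The intuition behind sampling can be shown with a simplified probability bound. This is a textbook-style sketch, not a description of any deployed protocol: it assumes the data is erasure-coded so that an attacker must withhold at least half of the extended chunks to make it unrecoverable, and that each verifier draws chunks independently and uniformly at random. Both function names are illustrative.

```python
import math


def max_fooling_probability(samples: int, withheld_fraction: float = 0.5) -> float:
    """Upper bound on the chance that every sample happens to hit an available chunk."""
    return (1.0 - withheld_fraction) ** samples


def samples_needed(target_probability: float, withheld_fraction: float = 0.5) -> int:
    """Smallest number of samples that pushes the bound below the target."""
    return math.ceil(math.log(target_probability) / math.log(1.0 - withheld_fraction))


bound_after_30 = max_fooling_probability(30)
needed_for_one_in_a_billion = samples_needed(1e-9)
```

The bound shrinks exponentially in the number of samples, which is why a verifier can gain strong confidence from a small number of downloads. Proto-danksharding does not use this mechanism; nodes still download full blobs.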

This broader point is not Ethereum-specific. Other modular systems also center scaling around data availability rather than only execution throughput. Celestia, for example, organizes its architecture around data publication and availability services, though with different protocol choices and a stronger native focus on modular DA. The shared lesson is that once execution moves off the base chain, availability of compressed transaction data becomes the new scarce resource.

Conclusion

Proto-danksharding is best understood as a redesign of what the base chain sells. Instead of forcing rollups to buy permanent, execution-visible calldata when they mostly need temporary, verifiable publication of bytes, it introduces blobs: cheaper data objects with cryptographic commitments, separate pricing, and bounded retention.

That single shift explains nearly everything else: why blobs are off-EVM, why there is a blob fee market, why sidecars exist, why data is pruned, and why the upgrade helps rollups first. Proto-danksharding makes data availability a first-class protocol resource. That is why it lowers rollup costs today, and why it also serves as the bridge toward fuller danksharding later.

How does proto-danksharding affect real-world usage?

Proto-danksharding (EIP-4844) matters because it changes how rollups publish data: blobs are temporary, off‑EVM data objects with KZG commitments and a separate blob-gas market that can lower L2 publishing costs but introduce new availability and archival considerations. Before you fund or trade assets tied to a rollup, follow this Cube checklist to translate proto-danksharding’s design into concrete checks on fees, data retention, and provider support.

- Check whether the rollup or L2 you plan to use publishes batches as blobs (EIP-4844) or still uses calldata by reading the rollup’s docs, release notes, or operator announcements.

- Inspect blob-gas metrics (base_fee_per_blob_gas) and recent L2 posting fees on a block explorer or analytics dashboard to estimate likely fee behavior after blob adoption.

- Verify the rollup’s dispute/withdrawal window against blob retention (≈4096 epochs ≈ 18 days); if the window exceeds retention, confirm an archival plan from the rollup, indexer, or third-party storage.

- Confirm your wallet or RPC/provider supports blob-sidecar retrieval and blob-aware transaction flows; switch to a provider that documents blob support if needed.

- Fund your Cube account and size trades to tolerate short-term fee volatility while you monitor blob utilization and L2 posting patterns before increasing exposure.

Frequently Asked Questions

Proto-danksharding (EIP-4844) adds a new on-chain data type called a blob that the consensus and network layers carry cheaply for a bounded time so rollups can publish large batches of transaction bytes without paying permanent calldata costs; the EVM only sees a small cryptographic reference to the blob, not its raw contents.

Blobs are intentionally not readable by the EVM so the protocol can treat them as a cheaper, availability-focused resource rather than execution inputs; contracts can still refer to blobs via versioned hashes (BLOBHASH) and verify openings with the KZG verification precompile.

Blob data is retained for about 4096 epochs (roughly 18 days) and then pruned; if an application needs indefinite raw-byte archival, that long-term storage must be provided by rollups, indexers, or third-party archival services.

Proto-danksharding uses KZG polynomial commitments to bind large off‑EVM blobs to compact on‑chain handles; KZG requires a trusted setup, and Ethereum mitigated this by running a large public KZG ceremony whose security rests on at least one honest contributor.

Blobs live in a separate blob-gas market (tracked by base_fee_per_blob_gas) so data-availability demand doesn’t compete with execution gas; EIP-4844 sets conservative initial limits (target ≈ 3 blobs/block, max ≈ 6 blobs/block, about 0.375 MB target and 0.75 MB max per block), and because of delay/latency tradeoffs some rollups with low arrival rates may still prefer posting via ordinary calldata.

Blob sidecars are the separate network objects that carry full blob bytes, KZG proofs, and inclusion proofs outside the beacon block body so the block format can remain stable and later migrate to data availability sampling (DAS) or other DA checks without redefining blocks.

Proto-danksharding (EIP-4844) is not full danksharding. It is an intermediate step that provides cheap, temporary DA via blobs; full danksharding is a later stage that expects many more blobs per block plus data availability sampling, proposer-builder separation, and other machinery that proto-danksharding prepares the protocol to adopt but does not itself implement.

If blob capacity becomes saturated, rollups may need to fall back to posting data as permanent calldata (or otherwise use non-blob channels), which would raise L1 costs and therefore increase L2 publishing fees until blob capacity or network provisioning is adjusted.

Proto-danksharding’s effectiveness relies on multiple assumptions: the cryptographic assumption from the KZG trusted setup, network-level assumptions about reliable propagation and serving of large blobs within the retention window, and an application-level assumption that recent availability (not permanent on-chain bytes) satisfies the rollup’s security model.
