What is Danksharding?

Learn what danksharding is, why Ethereum uses blobs and data availability sampling, and how it scales rollups beyond EIP-4844.

Introduction

Danksharding is Ethereum’s design for scaling by making data availability cheap and abundant, rather than by asking every node to execute far more computation. That sounds like a subtle shift, but it is the key idea that makes the whole design click: if rollups do most execution off-chain, then the main chain’s bottleneck is no longer “compute every transaction,” but “publish enough data so anyone can check the rollup honestly.” Danksharding is the architecture built around that observation.

The name can mislead readers who expect a classic sharded blockchain with many shard chains running separate execution. That is not what Ethereum is pursuing here. The current roadmap explicitly moved away from traditional shard chains. Instead, Ethereum uses blobs, polynomial commitments, and eventually data availability sampling so the network can support much more rollup data without forcing every validator and full node to download and keep all of it forever.

The simplest way to frame the problem is this: a rollup can compute transactions cheaply off-chain, but users still need a guarantee that the underlying transaction data was actually published somewhere. If an operator posts only a state root and withholds the raw data, then users cannot reconstruct the rollup’s state, verify fraud proofs, or exit safely. So the scarce resource is not only execution. It is verifiable publication of data. Danksharding exists to expand that resource by orders of magnitude.

What scaling problem does danksharding solve?

A blockchain that verifies everything itself hits a familiar limit: every increase in throughput raises the burden on every validating participant. Bigger blocks mean more bandwidth, more storage, and more time to verify. That pushes the system toward centralization because only stronger machines and better-connected operators can keep up.

Rollups change that tradeoff by moving execution elsewhere. They batch many user transactions, execute them off-chain, and then post compressed results to Ethereum. But that only works if the transaction data behind those batches is available. For a rollup, publishing data is not an optional detail. It is the condition that makes the rollup auditable.

Here is the mechanism. A rollup sequencer can compute a new state root and claim, “this is the correct result of processing these user transactions.” If the transaction data is public, anyone can replay the computation or at least inspect it enough to challenge bad behavior. If the data is hidden, then the state claim becomes much harder to contest. In other words, execution can move away from the base chain, but data availability cannot become a private promise.

That is why calldata became a bottleneck. Before blob transactions, rollups often used normal Ethereum calldata to publish batch data. Calldata is durable, visible to the EVM, and expensive because it competes directly with normal execution resources. Danksharding’s central insight is that rollup data does not need all of calldata’s properties. It needs to be available to the network for a period long enough to verify and recover from it, but it does not need to live forever inside the execution payload or be read directly by smart contracts byte-for-byte.

So Ethereum split the problem. Keep execution data and state transitions in one lane. Add a second lane for large, temporary, verifiably committed data. That second lane is what proto-danksharding introduced and full danksharding expands.

Why doesn't danksharding create multiple shard chains?

Traditional sharding usually means dividing the blockchain into multiple sub-chains, each processing its own transactions. The goal is to spread execution across many committees so the whole system processes more work in parallel. That approach raises hard questions about cross-shard communication, security assignment, and state composability.

Danksharding keeps a different invariant: there is still a single proposer view of the block, and execution is not split into dozens of separate user-facing chains at the base layer. What scales is the network’s ability to carry and attest to data for rollups. That is why official Ethereum material says proto-danksharding and danksharding do not follow the traditional shard-chain model, but instead use distributed data sampling.

This distinction matters because it explains both the strength and the limitation of the design. Danksharding is excellent at making rollup data cheap. It is not a plan for making the base EVM itself execute 100,000 transactions per second. The architecture assumes that high-throughput execution happens mainly in Layer 2 systems, while Ethereum specializes in ordering, settlement, and data availability.

You can see the same principle in other parts of crypto infrastructure. Systems scale better when the base layer focuses on the narrow thing that must be globally agreed, and pushes everything else outward. In settlement systems that use threshold signing, for example, the goal is often not “make every participant hold and use the full secret,” but “distribute trust while preserving a single verifiable outcome.” Cube Exchange uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. Danksharding has a different mechanism, but a similar architectural flavor: keep the trust-critical function on the base layer, and make the heavy operational work happen in a more scalable form around it.

What is proto-danksharding (EIP-4844) and what is live now?

| Channel | On-chain visibility | Retention | Cost driver | Suitable for |
|---|---|---|---|---|
| Calldata | Raw bytes readable by EVM | Permanent in execution payload | Competes with execution gas | Contract storage and calls |
| Blobs (EIP-4844) | Only cryptographic commitment | Ephemeral (4096 epochs ≈ 18 days) | Dedicated blob gas market | Temporary rollup batch data |

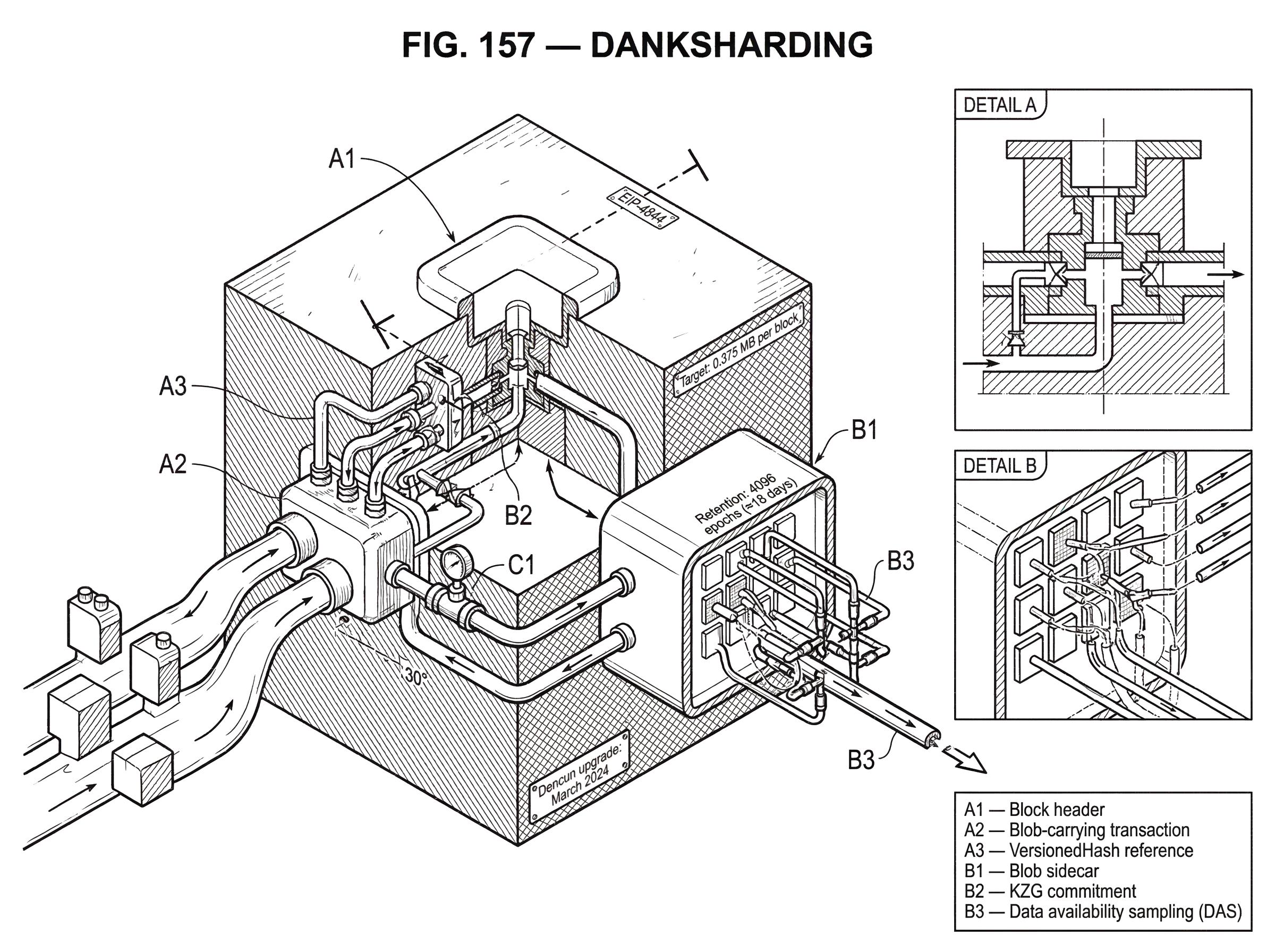

The term danksharding often refers to the full design, but the mechanism users encounter today is proto-danksharding, standardized in EIP-4844 and activated on Ethereum mainnet in the Dencun upgrade in March 2024. Proto-danksharding is the bridge between old calldata-based rollup posting and the future full system.

EIP-4844 introduced a new transaction type: the blob-carrying transaction. A blob is a large fixed-size data payload attached to a transaction. The crucial design choice is that the blob’s raw bytes are not accessible to EVM execution. The EVM can access only a compact cryptographic reference to the blob, not the entire contents. This is why blobs can be priced separately and more cheaply than calldata: they do not impose the same execution-layer burden.

The specification describes proto-danksharding as a stop-gap on the path to full data sharding. Its initial throughput is deliberately modest: a target of roughly 0.375 MB of blob data per block and a hard limit around 0.75 MB. That is enough to lower rollup costs materially without yet requiring the full networking and sampling machinery needed for much larger data volumes.
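The two throughput figures can be reproduced from the blob size. The sketch below assumes the EIP-4844 constants of 4096 field elements per blob and 32 bytes per field element, plus the Dencun-era target of 3 and maximum of 6 blobs per block. The names `TARGET_BLOBS_PER_BLOCK` and `MAX_BLOBS_PER_BLOCK` are illustrative, and later upgrades can change the counts.

```python
# Blob capacity arithmetic, assuming the parameters in place when EIP-4844 activated.
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
TARGET_BLOBS_PER_BLOCK = 3  # illustrative name; Dencun-era value
MAX_BLOBS_PER_BLOCK = 6     # illustrative name; Dencun-era value

BLOB_SIZE_BYTES = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT  # 131072 bytes


def blob_capacity_mib(blob_count: int) -> float:
    """Return the raw blob payload carried by `blob_count` blobs, in MiB."""
    return blob_count * BLOB_SIZE_BYTES / (1024 * 1024)


print(blob_capacity_mib(TARGET_BLOBS_PER_BLOCK))  # 0.375
print(blob_capacity_mib(MAX_BLOBS_PER_BLOCK))     # 0.75
```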

Blob data is also ephemeral. Consensus nodes are expected to keep blob sidecars available for a minimum retention window of 4096 epochs, about 18 days. After that, the network does not promise to serve the blob forever. This is a feature, not a bug. Rollups need the data during the challenge, proving, and synchronization window. They do not need every batch to become permanent execution-layer baggage.
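The "about 18 days" figure follows from consensus-layer timing. This minimal sketch assumes mainnet values of 32 slots per epoch and 12 seconds per slot. The helper `fits_in_retention` is a hypothetical convenience for comparing a rollup's challenge window with the retention window, not something defined by the specification.

```python
# Retention-window arithmetic, assuming mainnet timing parameters.
MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 4096
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12
SECONDS_PER_DAY = 86_400


def retention_days(epochs: int = MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS) -> float:
    """Convert a number of epochs into days."""
    return epochs * SLOTS_PER_EPOCH * SECONDS_PER_SLOT / SECONDS_PER_DAY


def fits_in_retention(challenge_window_days: float) -> bool:
    """Hypothetical helper: does a challenge window end before blobs may be pruned?"""
    return challenge_window_days <= retention_days()


print(round(retention_days(), 1))  # 18.2
print(fits_in_retention(7))        # True
print(fits_in_retention(30))       # False
```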

Here is the practical consequence: if you are thinking in terms of smart contracts, blobs are not a cheaper version of contract storage. They are a cheaper publication channel for off-chain systems that need temporary but reliable visibility. That is why rollups benefit so directly from them.

How do blob-carrying transactions and KZG commitments work?

A blob transaction carries three distinct things that are easy to conflate if you are new to the design.

First, there is the normal execution transaction: sender, recipient, gas terms, and so on. This is the part the execution layer understands in the usual way.

Second, there is the blob data itself: the large payload a rollup wants the network to make available.

Third, there is a cryptographic commitment to that blob. Ethereum uses KZG commitments for this. A KZG commitment turns a large structured piece of data into a short object that can later be used with proofs showing that the data matches what was committed.

The reason commitments matter is that the chain does not want to drag the full blob through every interface forever. It wants a compact commitment on-chain and a way to verify that off-chain propagated blob data really corresponds to that commitment. EIP-4844 defines a VersionedHash derived from the KZG commitment, and the transaction includes these blob versioned hashes. Smart contracts and proofs can work with the commitment reference even though they cannot read the full blob contents directly.
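As a rough illustration of how a versioned hash is derived, the sketch below follows the EIP-4844 rule: a one-byte version prefix (0x01 for KZG) followed by the last 31 bytes of the SHA-256 hash of the 48-byte commitment. The placeholder commitment is not a valid KZG commitment; it only shows the byte layout.

```python
import hashlib

VERSIONED_HASH_VERSION_KZG = b"\x01"
COMMITMENT_LENGTH = 48  # a KZG commitment is a 48-byte compressed curve point


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Derive the 32-byte versioned hash that a blob transaction carries."""
    if len(commitment) != COMMITMENT_LENGTH:
        raise ValueError("a KZG commitment must be 48 bytes")
    # Replace the first byte of the SHA-256 digest with the version byte.
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


# Placeholder bytes only: NOT a real commitment, used to show the output shape.
placeholder_commitment = bytes(COMMITMENT_LENGTH)
versioned_hash = kzg_to_versioned_hash(placeholder_commitment)
print(len(versioned_hash), versioned_hash[:1].hex())  # 32 01
```

The version byte leaves room to swap in a different commitment scheme later without changing the 32-byte format that contracts see.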

There is also a point-evaluation precompile. Intuitively, this gives contracts and proof systems a standard way to check claims of the form “the polynomial represented by this committed blob evaluates to this value at this point.” The full cryptographic details are specialized, but the high-level purpose is simple: compact verification that a claimed piece of blob data is consistent with the commitment.
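The algebra behind such an evaluation claim can be shown without any elliptic-curve machinery. A polynomial p satisfies p(z) = y exactly when (x - z) divides p(x) - y with no remainder, and a KZG opening proof is a commitment to that quotient. The toy sketch below works over the BLS12-381 scalar field with a small polynomial in coefficient form. That is a simplification: real blobs are stored in evaluation form, and the precompile verifies the claim with a pairing check rather than by dividing polynomials.

```python
# Scalar field modulus of BLS12-381, the field blob elements live in.
BLS_MODULUS = 52435875175126190479447740508185965837690552500527637822603658699938581184513


def evaluate(coeffs: list[int], z: int) -> int:
    """Evaluate a polynomial (lowest-degree coefficient first) at z using Horner's rule."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * z + c) % BLS_MODULUS
    return acc


def divide_by_linear(coeffs: list[int], z: int) -> tuple[list[int], int]:
    """Synthetic division by (x - z). Returns (quotient coefficients, remainder)."""
    quotient = [0] * (len(coeffs) - 1)
    carry = 0
    for i in range(len(coeffs) - 1, 0, -1):
        carry = (coeffs[i] + carry * z) % BLS_MODULUS
        quotient[i - 1] = carry
    remainder = (coeffs[0] + carry * z) % BLS_MODULUS
    return quotient, remainder


coeffs = [5, 3, 2]        # toy polynomial p(x) = 5 + 3x + 2x^2
z = 7
y = evaluate(coeffs, z)   # the honest claim: p(7) = 124

# Honest claim: p(x) - y is divisible by (x - z), so the remainder is zero.
shifted = [(coeffs[0] - y) % BLS_MODULUS] + coeffs[1:]
quotient, remainder = divide_by_linear(shifted, z)

# False claim (y + 1): the division leaves a nonzero remainder, so no valid quotient exists.
bad_shifted = [(coeffs[0] - (y + 1)) % BLS_MODULUS] + coeffs[1:]
_, bad_remainder = divide_by_linear(bad_shifted, z)

print(y, remainder, bad_remainder != 0)  # 124 0 True
```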

A worked example helps. Imagine a rollup sequencer has collected thousands of user transfers. It compresses the batch into blob data and creates a blob transaction. The transaction enters Ethereum with references to one or more blobs and fee terms for blob gas. On the consensus side, the actual blob bytes are propagated separately from the compact block body, as sidecars. Validators and nodes check that the sidecar data matches the KZG commitments referenced by the transaction. The EVM never sees the raw blob as ordinary calldata, so execution stays lighter. But the rollup and anyone monitoring it can retrieve the blob during the retention window, reconstruct the batch, and verify the rollup’s claims. The mechanism works because the commitment anchors the data cryptographically while sidecar propagation carries the bytes efficiently.

Why does proto-danksharding use a separate blob gas fee market?

| Market | Scarce resource | Pricing rule | Node burden | Best for |
|---|---|---|---|---|
| Execution gas | Computation and state | EIP-1559 base fee | High CPU and state storage | Smart-contract execution |
| Blob gas | Short-term data publication | Separate EIP-1559-style base fee | Bandwidth during retention window | Rollup batch posting |

If blobs had to compete directly with ordinary execution gas, they would inherit the same congestion dynamics and lose much of their advantage. EIP-4844 therefore introduced blob gas as a separate accounting system with its own pricing rule.

This is not just a cosmetic distinction. Blob demand and execution demand stress different parts of the protocol. Execution gas reflects computation and state-access burden in the EVM. Blob gas reflects short-term data publication burden. Combining them into one fee market would mix two different scarcity sources and likely overprice rollup data whenever normal execution was busy.

So blob gas has its own target and its own EIP-1559-style base fee adjustment. When recent blob usage exceeds target, the blob base fee rises; when usage is below target, it falls. The specification tracks a running total of blob gas consumed above target (the excess blob gas) and sets the blob base fee to an integer approximation of an exponential function of that total. The consequence is exactly what the design wants: rollups get a dedicated market for data publication, and ordinary execution does not have to bid against that market directly.
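The pricing rule can be sketched in a few lines. The code below mirrors the helper functions in EIP-4844 and uses the constants from its original (Dencun) parameter set; later upgrades may change the target, maximum, and update fraction. The function signatures are simplified to take plain integers instead of block headers, which is an assumption made for brevity.

```python
# Blob base fee sketch using the original EIP-4844 constants.
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator) via a Taylor series."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def calc_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Carry forward blob gas used above target; never drop below zero."""
    total = parent_excess + parent_blob_gas_used
    return max(0, total - TARGET_BLOB_GAS_PER_BLOCK)


def blob_base_fee(excess_blob_gas: int) -> int:
    """Blob base fee in wei per unit of blob gas for a given excess."""
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )


# Simulate a run of completely full blocks and watch the fee climb.
excess = 0
for block in range(1, 101):
    excess = calc_excess_blob_gas(excess, MAX_BLOB_GAS_PER_BLOCK)
    if block % 25 == 0:
        print(block, blob_base_fee(excess))
```

Because each full block adds one target's worth of excess, the fee grows by a roughly constant factor per block under sustained demand and decays the same way when blocks come in under target.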

This separation is one reason proto-danksharding can reduce Layer 2 fees even without changing the EVM itself. The user-visible improvement comes from cheaper batch posting, not from making every Layer 1 computation cheaper.

Why are blob sidecars used instead of embedding blobs in blocks?

A compact block body and a large data payload pull in opposite directions. If you stuff every blob directly into the canonical block body, you make consensus messages large and awkward immediately. If you keep blobs entirely off to the side with no cryptographic link, you lose verifiability.

The sidecar design is the compromise. On the consensus layer, beacon blocks reference blobs, but do not fully encode them in the block body. Instead, the full blobs are propagated separately as blob sidecars. This keeps the main block representation compact while still making the data available to nodes that need it.

This design also preserves the upgrade path. The spec explicitly notes that the current is_data_available() style check can later be replaced with data availability sampling in full sharding. In other words, sidecars are not an accidental implementation detail. They are part of the bridge from “everyone relevant downloads the blobs” to “the network can probabilistically verify availability by sampling pieces.”

There is a tradeoff here. Blob transactions increase bandwidth and mempool pressure because large data still has to move through the network. The EIP includes mitigations such as announcement-only gossip rather than automatic full broadcast, but it also acknowledges that DoS and mempool risks remain. This is an important theme throughout danksharding: the cryptography is only half the problem. The networking must also scale.

What does full danksharding add beyond proto-danksharding?

Proto-danksharding proves the transaction format, the cryptographic commitment path, the fee market, and the sidecar model. Full danksharding pushes the data capacity much further. Official roadmap material describes moving from the small proto-danksharding blob count to around 64 blobs per block in the full design, with Ethereum’s broader scaling vision targeting rollup throughput above 100,000 transactions per second.

The obstacle is straightforward. At that scale, it is no longer reasonable to expect every validating participant to download all blob data. If everyone had to fetch every byte of a very large block, the network would again centralize around high-bandwidth operators. So the system needs a way for nodes to be confident that data was published without each node possessing all of it.

That is what data availability sampling, or DAS, is for.

How does data availability sampling (DAS) enable full danksharding?

| Validation model | Data needed | Node bandwidth | Security guarantee | Scalability |
|---|---|---|---|---|
| Full download | Entire block blobs | Very high per node | Deterministic availability | Poor at very large blocks |
| Data availability sampling | Random authenticated pieces | Low per node (samples) | Probabilistic detection of withholding | Enables much larger throughput |

Data availability sampling starts from a simple observation. To catch a block producer who withholds data, a node does not necessarily need the whole block. It needs random enough checks that withholding becomes very likely to be detected.

The usual picture is that blob data is encoded with redundancy using erasure coding and arranged into a larger structure. Then nodes sample random pieces. If the producer withheld too much data, many random samples become unavailable, and the block is rejected with high probability. If enough of the encoded data is available, then the full data can in principle be reconstructed from the available pieces.
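A toy version of the erasure-coding step makes the reconstruction claim concrete. The sketch below treats k data values as evaluations of a polynomial of degree below k, extends them to 2k evaluations, and rebuilds the original from any k surviving pieces by Lagrange interpolation. The small prime field and the function names are illustrative assumptions; Ethereum's actual encoding works over the BLS12-381 scalar field with far larger parameters.

```python
P = 65537  # small prime field, chosen only to keep the toy example readable


def lagrange_eval(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through `points`."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def extend(data: list[int]) -> list[int]:
    """2x extension: original values sit at x = 0..k-1, redundancy at x = k..2k-1."""
    k = len(data)
    points = list(enumerate(data))
    return data + [lagrange_eval(points, x) for x in range(k, 2 * k)]


def recover(known: dict[int, int], k: int) -> list[int]:
    """Rebuild the k original values from any k known (index, value) pieces."""
    if len(known) < k:
        raise ValueError("not enough pieces to reconstruct")
    points = list(known.items())[:k]
    return [lagrange_eval(points, x) for x in range(k)]


data = [10, 20, 30, 40]
extended = extend(data)

# Half of the extended pieces are withheld, yet the original is still recoverable.
surviving = {i: extended[i] for i in (1, 4, 6, 7)}
print(recover(surviving, len(data)) == data)  # True
```

This is why a producer has to withhold a large fraction of the extended data to make anything unrecoverable, and a large missing fraction is exactly what random sampling is good at noticing.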

This is why official descriptions say full danksharding relies on distributed data sampling rather than classic shard chains. The scale-up comes from changing the validation rule from “download everything” to “sample enough random authenticated pieces that large-scale withholding is overwhelmingly likely to be noticed.”

Here the KZG commitments matter again. Sampling is only useful if a sampled piece can be authenticated against the commitment. Otherwise an attacker could answer sampling requests with fake fragments. The combination of commitments, proofs, and erasure-coded structure is what turns random sampling into a meaningful security check.

A subtle but important point is that sampling gives a probabilistic guarantee, not an absolute one. The guarantee improves as more independent nodes sample, as the sample count increases, and as network assumptions hold. This is normal for scalable distributed systems, but it is also where many misunderstandings begin. Danksharding does not magically remove the need for data to exist. It changes how the network convinces itself the data exists.
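The probabilistic nature of the guarantee can be quantified under a deliberately simplified model. Assume a node draws independent, uniformly random samples, and that making the data unrecoverable forces the producer to leave at most a fraction `available_fraction` of the encoded pieces retrievable. The 0.5 used below matches a simple 2x extension; the right value depends on the coding scheme. The model ignores network-level attacks, so it illustrates the trend rather than a real security parameter.

```python
import math


def miss_probability(available_fraction: float, samples: int) -> float:
    """Chance that every one of `samples` independent samples lands on an available piece."""
    return available_fraction ** samples


def samples_needed(available_fraction: float, target_miss: float) -> int:
    """Smallest sample count that pushes the miss probability to `target_miss` or below."""
    return math.ceil(math.log(target_miss) / math.log(available_fraction))


for s in (5, 10, 20, 30):
    print(s, miss_probability(0.5, s))

print(samples_needed(0.5, 1e-9))  # 30
```

Each additional sample cuts a single node's chance of being fooled by a constant factor, and many independent nodes sampling different pieces shrink it further.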

What is PeerDAS and how does it address the networking challenge for DAS?

Ethereum’s proposed concrete DAS networking design is PeerDAS, specified in EIP-7594. PeerDAS lets nodes perform data availability sampling while downloading only a subset of total blob data. It combines gossip, peer discovery, and request-response retrieval so nodes can check availability efficiently.

The organizing principle is that the protocol must do two jobs at once. It must disperse data into the network so enough peers hold it, and it must let individual nodes sample small authenticated pieces quickly. Those are different problems. A network path that is good for broad dispersal is not always the best path for low-latency sampling.

PeerDAS extends EIP-4844 blobs with erasure coding and partitions the resulting data into smaller authenticated units called cells, grouped across rows into columns. Nodes sample columns rather than full blobs. With current parameters, the spec describes nodes downloading about 1/8 of the total data for sampling, with room to reduce this fraction later through parameter changes.
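The row and column layout can be pictured with a toy grid. In the sketch below each extended blob is one row of cells, and column j collects cell j from every blob in the block. The sizes `CELLS_PER_ROW` and `SAMPLES_PER_NODE` are small illustrative values, not the EIP-7594 parameters, and the string labels stand in for real cell data and proofs.

```python
import random

CELLS_PER_ROW = 8     # toy value for illustration, not the EIP-7594 parameter
SAMPLES_PER_NODE = 2  # toy value for illustration


def build_rows(blob_count: int) -> list[list[str]]:
    """One row per extended blob; each entry is a label standing in for a cell."""
    return [
        [f"blob{b}-cell{c}" for c in range(CELLS_PER_ROW)] for b in range(blob_count)
    ]


def to_columns(rows: list[list[str]]) -> list[list[str]]:
    """Column j holds cell j of every blob in the block."""
    return [[row[c] for row in rows] for c in range(CELLS_PER_ROW)]


def pick_sample_columns(rng: random.Random) -> list[int]:
    """Choose distinct column indices for one node to download and verify."""
    return sorted(rng.sample(range(CELLS_PER_ROW), SAMPLES_PER_NODE))


rows = build_rows(blob_count=3)
columns = to_columns(rows)
chosen = pick_sample_columns(random.Random(0))

downloaded_cells = sum(len(columns[c]) for c in chosen)
total_cells = CELLS_PER_ROW * len(rows)
print(chosen, downloaded_cells / total_cells)
```

Sampling whole columns means a node's download grows with the number of columns it picks rather than with the number of blobs it would otherwise fetch in full.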

The protocol also shifts some expensive work outward. Computing many cell KZG proofs is costly, while verifying them is relatively cheap, so PeerDAS proposes requiring blob transaction senders to compute and include these proofs in the transaction wrapper. That is a very characteristic engineering move: move heavy one-time preparation to senders so block producers and validators can perform cheaper repeated verification.

This part of the design remains under active development. Consensus-spec release notes and roadmap documents make clear that full danksharding and PeerDAS are future work, not something already deployed on Ethereum mainnet. Proto-danksharding is live; full DAS-based scaling is still ahead.

What are the main risks and assumptions behind danksharding?

The most common misunderstanding is to think danksharding is “just cheaper calldata.” That misses the design boundary. Blobs are cheaper because they are weaker than calldata in specific ways: they are not accessible to the EVM as raw bytes, and they are not retained forever.

A second misunderstanding is to think the hard part is only cryptographic. In reality, networking is a major bottleneck. Research and implementation work repeatedly emphasize that DAS introduces stringent latency and bandwidth requirements. If samples cannot be retrieved fast enough, or if dispersal fails under adversarial conditions, the theoretical scaling gains do not materialize safely.

There are also recoverability nuances. Research on 2D data availability sampling shows that having enough total available data can imply recoverability, but recoverability is not identical to instant recovery. Some patterns of missing data can create latency and coordination challenges even when reconstruction is eventually possible. So when people summarize DAS as “nodes only need a few random samples,” that is directionally right but incomplete. The actual security story depends on coding design, sampling parameters, and network behavior.

The KZG commitment scheme introduces another assumption: a trusted setup. Ethereum addressed this with the public KZG Ceremony, which drew over 140,000 contributions and produced the parameter transcript used for EIP-4844. The trust model is that a single honest contribution is enough to secure the setup, and the ceremony was designed to widen confidence in that assumption. Still, this is a real assumption, not something to hand-wave away.

Finally, implementation details matter. Real systems fail at edges, not just in whitepapers. Blob transactions enlarge the mempool and create new code paths. Client teams had to add blob pools, fetchers, sidecar handling, KZG verification libraries, and new validation rules. There have already been implementation-level bugs, including a reported Erigon issue where handling of a malformed blob transaction with a nil “to” field could trigger a nil-pointer dereference panic. That kind of incident does not invalidate danksharding’s design, but it is a reminder that scaling features widen the surface area clients must get right.

How does danksharding affect end-user fees and experience?

For most users, danksharding is not something they will “use” directly the way they use a wallet feature. They will feel it through cheaper Layer 2 transactions, because rollups spend less to publish their batch data to Ethereum.

That is the economic chain of cause and effect. Rollups need to buy data availability on Ethereum. If that resource becomes cheaper and more abundant, the per-user cost of a rollup batch falls. When competition and product design pass that saving through, users see lower fees. This is why official documentation frames proto-danksharding and danksharding primarily as ways to make Layer 2 transactions as cheap as possible.

It also clarifies what Ethereum is optimizing for as a platform. The base layer is not trying to personally execute every future retail payment, game action, and social post. It is trying to be the settlement and data availability core that many execution environments can rely on.

Conclusion

Danksharding is Ethereum’s answer to a specific scaling reality: once rollups handle execution, the scarce base-layer resource is verifiable data availability. Proto-danksharding, live today through EIP-4844, creates blob transactions and a separate fee market so rollups can publish data more cheaply. Full danksharding extends that path with data availability sampling, letting the network support far more data without requiring every node to download all of it.

The idea worth remembering tomorrow is simple: danksharding does not scale Ethereum by splitting execution into many shard chains; it scales Ethereum by making rollup data cheap, temporary, and verifiably available at much larger volume.

How does danksharding affect real-world usage and what checks should I run before funding or trading?

Danksharding matters because it changes what the base layer sells: verifiable, temporary data availability rather than permanent on-chain execution. On Cube Exchange that matters for how you evaluate and act on assets tied to rollups or L2 throughput; you should check the data-availability and dispute assumptions before you fund or trade, then use Cube’s normal deposit and market workflows to execute.

- Review the rollup or L2 project docs to confirm whether it posts batches using EIP-4844 blobs (proto-danksharding) or still uses calldata.

- Check the rollup’s dispute/challenge window and confirm it fits within proto-danksharding’s retention window (4096 epochs, about 18 days) or that the project offers archival fallbacks.

- Monitor on-chain metrics for the blob gas market (recent blob base fee and block blob usage versus the ~0.375 MB target) to see if data-publishing costs are rising.

- Fund your Cube account (fiat on-ramp or crypto deposit), open the relevant market for the asset you want, choose an order type (use a limit order for price control), review estimated fees and blob-gas related risk, then submit.

Frequently Asked Questions

What are blobs, and why are they cheaper than calldata?

Blobs are large data payloads attached to a transaction whose raw bytes are not accessible to the EVM; instead the chain records a short cryptographic commitment (a KZG-derived VersionedHash) that anchors the blob. Because the execution layer never has to process blob bytes directly and blobs are retained only temporarily, they can be priced separately and much more cheaply than ordinary calldata.

How long is blob data kept, and what happens after the retention window?

Proto-danksharding keeps blob bytes only for a limited retention window (the spec recommends 4096 epochs, ≈18 days), because the base chain's role is to provide short-term verifiable availability for rollups’ challenge and sync periods. The article and EIP-4844 note the chain does not promise permanent storage, so long-term archival or history beyond the retention window is outside proto-danksharding’s guarantees and must be handled off-chain or by separate infrastructure (the article does not prescribe a single archival strategy).

How does data availability sampling work, and why is its guarantee probabilistic?

Data availability sampling (DAS) encodes blob data with redundancy (erasure coding), commits to it cryptographically (KZG), and lets light nodes sample random authenticated pieces; if a proposer withholds too much data, random samples will likely be missing and the block is considered unavailable with high probability. The guarantee is probabilistic because sampling only checks random subsets rather than forcing every node to download every byte, so security improves with more independent samples, stronger parameters, and honest-sampling assumptions.

What is PeerDAS, and who computes the cell proofs?

PeerDAS is the concrete DAS networking design referenced for full danksharding; it partitions erasure-coded blob data into smaller authenticated units (cells/columns) and has nodes sample columns rather than whole blobs. Because computing many cell KZG proofs is expensive, the spec proposes shifting that CPU work to blob transaction senders by requiring them to compute and include those proofs in the transaction wrapper.

What networking challenges do blobs and DAS introduce?

The networking challenges include much higher bandwidth and latency demands, larger mempool pressure from big transactions, and new DoS vectors from propagating large blobs; the EIP and research surveys propose mitigations such as announcement-only gossip but emphasize that networking remains a key open engineering risk. These concerns are repeatedly highlighted in the article and in DAS/PeerDAS research as factors that could limit real-world safety and scalability if not addressed.

Is danksharding the same as traditional sharding?

Danksharding is not the old-style shard-chain design that spreads base-layer execution across many sub-chains; instead it keeps a single proposer view of the block and scales the network’s ability to carry verifiable rollup data. It therefore aims to make rollups cheap to run (by lowering data costs) rather than to make the base EVM itself process 100,000 TPS of on-chain execution.

Does danksharding depend on a trusted setup?

KZG commitments require a trusted-setup parameter set; Ethereum ran a public KZG ceremony (with over 140,000 contributions) to generate those parameters, and the security model assumes at least one honest ceremony contribution. This is an explicit trust assumption noted in the article and ceremony materials, not an assumption that was silently removed.

When will full danksharding be live?

Full danksharding (with large blob counts and DAS) is described as future work and “several years away” in roadmap material; it depends on additional pieces (e.g., full DAS/PeerDAS networking, proposer/builder separation, parameter choices) and the article and spec notes make clear there is no concrete mainnet activation date yet.
