What is an Interoperability Protocol?

Learn what an interoperability protocol is, how cross-chain verification works, and why trust models determine bridge security and design.

Introduction

An interoperability protocol is a protocol that lets separate blockchains exchange messages, assets, or commands in a way that applications can rely on. That sounds simple until you ask the question that actually matters: if Chain B is going to mint tokens, execute a contract call, or update state because of something that happened on Chain A, why should Chain B believe it?

That is the whole problem. Blockchains are designed to be self-contained systems that reach their own consensus and protect their own state. Interoperability tries to connect those isolated systems without casually importing new trust assumptions, new failure modes, or new ways to lose money. This is why cross-chain design has produced both some of the most useful infrastructure in crypto and some of its largest failures.

At a high level, an interoperability protocol gives chains a shared way to express cross-chain events and verify them. In practice, that can mean secure data transfer, token transfers, arbitrary message passing, remote contract execution, interchain accounts, or query mechanisms. But those features are consequences. The core mechanism is always the same: a destination chain needs a rule for deciding whether a claimed event on a source chain is real, final, and authorized to trigger some action.

A useful way to think about the space is this: an interoperability protocol is not primarily a transport network. It is a verification system for cross-chain claims. Moving bytes is easy. Trusting those bytes is the hard part.

Why do blockchains need interoperability protocols?

If blockchains could directly read and verify each other's state at low cost, there would be much less need for special interoperability machinery. But most chains cannot do that. They have different consensus rules, different finality models, different state formats, different virtual machines, and different cryptographic assumptions. Even when two chains are both programmable, they do not automatically share a common notion of truth.

That creates a specific coordination problem. Suppose a user locks tokens on one chain and expects usable tokens on another. Or suppose a lending app on one chain wants to react to a liquidation event on another. Or suppose a DAO wants governance decisions on one chain to control contracts on a second chain. In each case, something on the destination chain must react to an event that happened elsewhere. Without an interoperability protocol, the destination chain has no native reason to accept that foreign event as valid.

So the protocol has to supply three things. It needs a way to identify the event, a way to prove the event happened under the source chain's rules, and a way to ensure the event is safe to act on now rather than later. That last point matters because chains differ in finality. Some have probabilistic finality and can reorganize. Others have faster deterministic finality. A cross-chain protocol that ignores finality is not really verifying source-chain truth; it is gambling on it.
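
The finality requirement can be made concrete with a small check. This is a minimal sketch, not any protocol's actual rule: the `FinalityPolicy` type, the `is_safe_to_act` function, and the confirmation depth used in the example are all hypothetical, chosen only to show how a destination might treat probabilistic and deterministic finality differently.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FinalityPolicy:
    """Hypothetical per-chain rule for when a source event is safe to act on."""
    deterministic: bool          # True if the chain exposes an explicit finalized height
    min_confirmations: int = 0   # depth required on probabilistic-finality chains


def is_safe_to_act(event_height: int, head_height: int,
                   policy: FinalityPolicy,
                   finalized_height: Optional[int] = None) -> bool:
    """Return True only if the event satisfies the stated finality policy."""
    if policy.deterministic:
        # Deterministic finality: the event must sit at or below the finalized height.
        return finalized_height is not None and event_height <= finalized_height
    # Probabilistic finality: require enough blocks built on top of the event.
    confirmations = head_height - event_height + 1
    return confirmations >= policy.min_confirmations


# Example with an illustrative (assumed) depth of 12 confirmations.
pow_like = FinalityPolicy(deterministic=False, min_confirmations=12)
print(is_safe_to_act(event_height=100, head_height=105, policy=pow_like))
print(is_safe_to_act(event_height=100, head_height=111, policy=pow_like))
```

The design point is that "safe to act on now" is a per-source-chain policy that the destination must encode explicitly, rather than a property the message carries with it.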

This is also why interoperability is broader than bridging in the narrow token-transfer sense. Token movement is only one application. Official IBC materials describe the protocol as allowing chains to exchange any type of data encoded as bytes, which is the right abstraction. Once a chain can trust a foreign message, token transfer becomes one application among many.

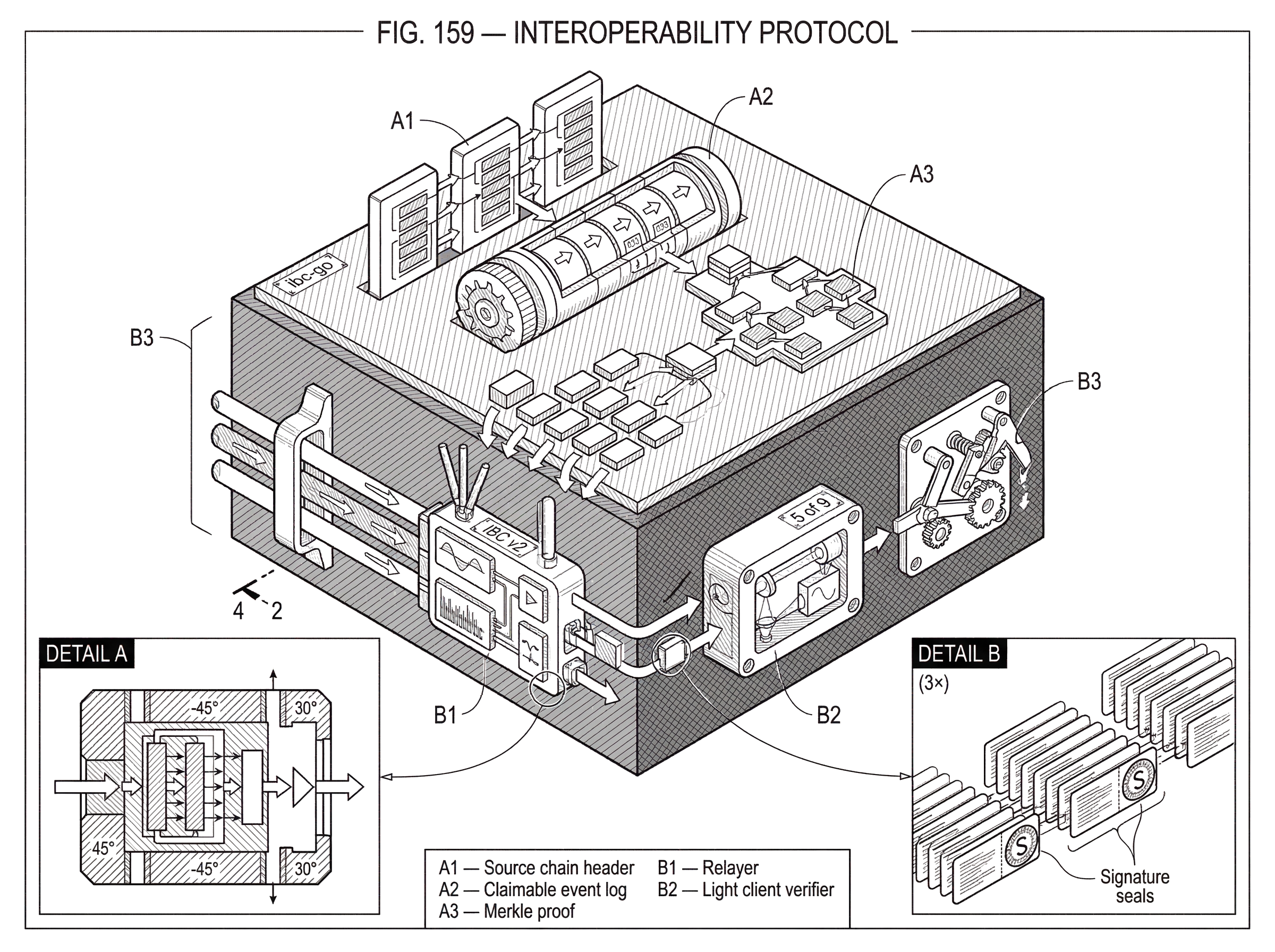

How does a cross-chain claim get proved and turned into an on-chain effect?

Every interoperability protocol, no matter how it brands itself, has to implement the same causal chain.

First, something happens on a source chain. A user deposits tokens, a contract emits an event, a governance proposal passes, or an application writes some packet data. This produces a claimable fact: “this state transition occurred.”

Second, that fact has to be carried to the destination chain. Sometimes an off-chain relayer does this. Sometimes an oracle network does. Sometimes users do it themselves. The transport step is operationally important, but it is not where security fundamentally comes from.

Third, the destination chain must verify enough evidence to decide whether to accept the claim. Depending on the protocol, that evidence might be a light-client proof, validator signatures, oracle attestations, threshold signatures, or some local verification scheme.

Fourth, if verification succeeds, the destination chain performs an effect. It may mint a wrapped asset, release escrowed funds, execute a contract call, create a packet receipt, update remote state, or record that a query result is authentic.

That four-part structure explains most of the design space. Different interoperability protocols differ mainly in who is trusted to produce the proof and how the destination chain checks it.
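
The four steps above can be sketched in a few lines. This is a toy model under stated assumptions: the `Claim` and `DestinationChain` types, the `"recipient:amount"` payload format, and the hash-commitment verifier are all hypothetical stand-ins, and the sketch leaves out replay protection and finality entirely. Its only purpose is to show that the verification rule is a pluggable component and that the effect happens only after it passes.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass(frozen=True)
class Claim:
    source_chain: str
    payload: bytes    # step 1: the fact produced on the source chain
    evidence: bytes   # step 2: whatever the carrier delivers alongside it


class DestinationChain:
    def __init__(self, verify: Callable[[Claim], bool]):
        self.verify = verify                 # step 3: protocol-specific verification rule
        self.balances: Dict[str, int] = {}

    def receive(self, claim: Claim) -> bool:
        if not self.verify(claim):
            return False                     # rejected claims cause no state change
        # Step 4: the effect. The "recipient:amount" format is an assumption.
        recipient, amount = claim.payload.decode().split(":")
        self.balances[recipient] = self.balances.get(recipient, 0) + int(amount)
        return True


def make_toy_verifier(trusted_commitments: Set[bytes]) -> Callable[[Claim], bool]:
    """Toy rule: accept only payloads whose hash the destination already trusts.
    A real protocol would derive that trust from a light client or an attestor set."""
    def verify(claim: Claim) -> bool:
        digest = hashlib.sha256(claim.payload).digest()
        return claim.evidence == digest and digest in trusted_commitments
    return verify


payload = b"alice:50"
commitment = hashlib.sha256(payload).digest()
chain_b = DestinationChain(make_toy_verifier({commitment}))
print(chain_b.receive(Claim("chain-a", payload, commitment)), chain_b.balances)
```

Swapping `make_toy_verifier` for a different rule changes the trust model without touching the delivery or effect code, which is the sense in which protocols differ mainly in who produces the proof and how it is checked.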

Why is verification more important than relayer delivery in cross‑chain systems?

A common misunderstanding is to imagine interoperability as a messaging pipeline where the main job is to move information from one place to another. But if a relayer or bridge server simply tells Chain B that something happened on Chain A, Chain B still faces the real problem: should it act on that statement?

This is why protocols separate delivery from verification. Cosmos IBC makes this distinction especially clear. Its architecture separates a transport layer, often described as TAO for Transport, Authentication, and Ordering, from the application layer that defines what packet bytes mean. Relayers are important in IBC, but they are not supposed to be trusted judges of truth. They are more like couriers. They scan chain state, construct the required datagrams, and submit them, while each chain verifies incoming claims against a light client of the counterparty chain.

That design choice captures the central insight. The relayer may be untrusted because the verification path is trusted. If a relayer lies, submits stale data, or goes offline, the protocol should not silently accept false state. It may stall, but stalling is a different failure from corrupt verification.
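
The difference between stalling and corrupt verification can be shown with three hypothetical relayers. This is a sketch under simplifying assumptions: the set `authenticated` stands in for commitments the destination has already verified against the source chain (for example through a light client), and the relayer functions and outcome labels are invented for illustration.

```python
import hashlib
from typing import Callable, Optional, Set

# A relayer takes a source packet and returns what it delivers (or nothing).
Relayer = Callable[[bytes], Optional[bytes]]


def commitment(packet: bytes) -> bytes:
    return hashlib.sha256(packet).digest()


def honest_relayer(packet: bytes) -> Optional[bytes]:
    return packet


def lying_relayer(packet: bytes) -> Optional[bytes]:
    return packet.replace(b"alice", b"mallory")   # tampers with the recipient


def offline_relayer(packet: bytes) -> Optional[bytes]:
    return None                                   # never delivers


def destination_outcome(relayer: Relayer, source_packet: bytes,
                        authenticated: Set[bytes]) -> str:
    delivered = relayer(source_packet)
    if delivered is None:
        return "stalled"      # liveness failure: nothing happens
    if commitment(delivered) in authenticated:
        return "accepted"     # matches state the destination already verified
    return "rejected"         # the relayer's claim is not backed by verified state


packet = b"transfer:alice:50"
authenticated = {commitment(packet)}
for relayer in (honest_relayer, lying_relayer, offline_relayer):
    print(relayer.__name__, destination_outcome(relayer, packet, authenticated))
```

A lying relayer ends in `rejected` and an offline one in `stalled`; neither path produces a false acceptance, because acceptance depends on the commitment check rather than on who delivered the bytes.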

This is also where interoperability protocols divide into fundamentally different trust models. Some make the destination chain verify source-chain state natively or via a light client. Others delegate truth to an external committee, oracle network, guardian set, multisig, or validator federation. Both are real interoperability protocols. But they solve the verification problem in different ways, and the risk profile changes accordingly.

What are the main trust models for cross‑chain verification?

| Model | Proof source | Trust assumption | Best for | Main risk |
|---|---|---|---|---|
| Native verification | Light client of source | No third party | High-assurance integrations | On-chain client complexity |
| External verification | Oracle/validator committee | Attestor quorum trusted | Fast, wide connectivity | Key governance compromise |
| Local / user verification | User-supplied proofs | Receiver or user checks | Niche, low-trust apps | Poor UX; developer burden |

The cleanest organizing principle is not branding or product category but where cross-chain truth comes from.

One family uses native state verification. Here the destination chain verifies source-chain claims against some representation of the source chain's own consensus output, often through a light client. This is the model emphasized by IBC. Official IBC documentation describes a light client–based model intended to remove the need for a trusted third party. Mechanically, the destination chain tracks enough of the source chain's headers and proofs to validate that a packet commitment, state root inclusion, or other event really exists under the source chain's rules.
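
The core of native verification is checking that a claimed item is included under a state root the destination already trusts. The sketch below uses a toy binary Merkle tree over SHA-256 to illustrate that idea. It is not IBC's actual proof format, and the function names, the leaf and node prefixes, and the odd-level duplication rule are assumptions made for the example.

```python
import hashlib
from typing import List, Tuple

Proof = List[Tuple[bytes, bool]]   # (sibling_hash, sibling_is_on_the_left)


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves: List[bytes], index: int) -> Tuple[bytes, Proof]:
    """Build the root over a non-empty list of leaves and a proof for leaves[index]."""
    level = [h(b"\x00" + leaf) for leaf in leaves]    # prefix separates leaves from nodes
    proof: Proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])                   # toy rule: duplicate the last node
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(leaf: bytes, proof: Proof, trusted_root: bytes) -> bool:
    """Recompute the path from the leaf upward and compare with the trusted root."""
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        if sibling_is_left:
            node = h(b"\x01" + sibling + node)
        else:
            node = h(b"\x01" + node + sibling)
    return node == trusted_root


packets = [b"packet-0", b"packet-1", b"packet-2"]
root, proof = merkle_root_and_proof(packets, 2)
print(verify_inclusion(b"packet-2", proof, root))
print(verify_inclusion(b"packet-9", proof, root))
```

In a light-client design, `trusted_root` would come from a source-chain header that the destination has itself validated, which is why the party supplying `proof` does not need to be trusted.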

Another family uses external verification. In this model, an outside set of actors attests to source-chain events, and the destination chain trusts that attestation if enough of the actors agree. Chainlink CCIP, for example, presents itself as using a decentralized architecture with multiple oracle networks and defense-in-depth controls. Wormhole's public documentation highlights components such as Guardians and VAAs. Many bridge designs surveyed in research fall into this broader pattern: external validators, oracle networks, or committees stand between the source event and the destination effect.
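
A quorum check of this kind can be sketched as follows. The example is deliberately simplified and is not how any named system implements attestation: it uses HMAC from the Python standard library as a stand-in for public-key signatures so that it runs without third-party packages, and the attestor names, keys, and threshold are hypothetical.

```python
import hashlib
import hmac
from typing import Dict


def attest(key: bytes, message: bytes) -> bytes:
    """Stand-in for a signature. Real systems use public-key signatures, not HMAC."""
    return hmac.new(key, message, hashlib.sha256).digest()


def quorum_reached(message: bytes, attestations: Dict[str, bytes],
                   attestor_keys: Dict[str, bytes], threshold: int) -> bool:
    """Accept the message only if at least `threshold` known attestors vouch for it.
    Keying attestations by attestor name means each attestor counts at most once."""
    valid = 0
    for name, tag in attestations.items():
        key = attestor_keys.get(name)          # unknown attestors are ignored
        if key is not None and hmac.compare_digest(tag, attest(key, message)):
            valid += 1
    return valid >= threshold


keys = {f"attestor-{i}": bytes([i]) * 32 for i in range(5)}
message = b"lock:alice:50"
signed = {name: attest(keys[name], message) for name in list(keys)[:3]}
print(quorum_reached(message, signed, keys, threshold=3))
```

Everything the destination believes here reduces to `attestor_keys` and `threshold`, which is why key management and the governance of that set dominate the risk profile of externally verified designs.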

A third family uses local or user-mediated verification, where users or applications themselves participate more directly in checking foreign state. This matters conceptually, though it is less common in mainstream, convenience-oriented systems.

The important point is not that one family is always superior in every dimension. Native verification often reduces added trust assumptions but can be expensive and technically demanding. External verification often expands connectivity and simplifies integration, but it introduces new key-management and governance risks. That trade-off appears repeatedly in both official docs and bridge-security research.

How does a lock‑and‑mint cross‑chain token transfer work?

| Method | Mechanism | Proof checked by | Asset custody | Typical risk |
|---|---|---|---|---|
| Lock-and-mint | Lock on source; mint wrapped | Light client or oracle | Custodian on source | Custodian compromise |
| Burn-and-release | Burn wrapped; release original | Proof of burn | Destination holds wrapped | Replay or forged proof |
| Atomic swap | Coordinated on-chain swap | Both chains' confirmations | No custodian | Higher latency, UX friction |

Consider a simple case. A user wants to move value from Chain A to Chain B.

In a lock-and-mint style design, the user sends tokens into a contract or module on Chain A. Those tokens are now locked, and the source chain records that fact. At this point, nothing has yet happened on Chain B. The system only has a source-chain event that might justify minting or releasing value elsewhere.

Now a relayer or oracle network observes that lock event and carries evidence of it toward Chain B. If the protocol is light-client based, Chain B checks a proof that the lock event or packet commitment is included in Chain A's authenticated state and is final enough to rely on. If the protocol is externally verified, Chain B checks whether the required validator or oracle signatures are present and valid under that protocol's rules.

Once the evidence passes verification, Chain B creates the corresponding effect. That might mean minting a wrapped representation of the source asset, or it might mean releasing inventory already held on the destination side, depending on the bridge design. The user experiences this as “my tokens arrived,” but mechanically what happened is subtler: Chain B accepted a verified claim about Chain A and changed its own state in response.

The reverse trip exposes the same structure. If the user later burns the wrapped asset on Chain B, that burn event becomes the new source-chain fact. Evidence of the burn is sent back to Chain A, and Chain A releases the original locked tokens only if it accepts the proof.
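
The round trip can be modeled as simple accounting. The sketch below is a toy under stated assumptions: a single hypothetical `ToyBridge` object holds both chains' balances, and the whole verification step is collapsed into a `proof_ok` flag supplied by the caller. It exists to make one invariant visible, namely that wrapped supply on Chain B should never exceed what is locked on Chain A.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ToyBridge:
    locked_on_a: int = 0
    wrapped_on_b: Dict[str, int] = field(default_factory=dict)

    def lock_on_a(self, amount: int) -> None:
        self.locked_on_a += amount                     # source-chain event

    def mint_on_b(self, user: str, amount: int, proof_ok: bool) -> None:
        if not proof_ok:
            raise ValueError("lock proof rejected")
        self.wrapped_on_b[user] = self.wrapped_on_b.get(user, 0) + amount

    def burn_on_b(self, user: str, amount: int) -> None:
        if self.wrapped_on_b.get(user, 0) < amount:
            raise ValueError("insufficient wrapped balance")
        self.wrapped_on_b[user] -= amount              # new source-chain fact for the return trip

    def release_on_a(self, amount: int, proof_ok: bool) -> None:
        if not proof_ok:
            raise ValueError("burn proof rejected")
        if amount > self.locked_on_a:
            raise ValueError("nothing left to release")
        self.locked_on_a -= amount

    def fully_backed(self) -> bool:
        return sum(self.wrapped_on_b.values()) <= self.locked_on_a


bridge = ToyBridge()
bridge.lock_on_a(100)
bridge.mint_on_b("alice", 100, proof_ok=True)
bridge.burn_on_b("alice", 40)
bridge.release_on_a(40, proof_ok=True)
print(bridge.locked_on_a, bridge.wrapped_on_b, bridge.fully_backed())
```

If `mint_on_b` is ever called with `proof_ok=True` for a lock that never happened, `fully_backed()` turns false. That is the accounting signature of a destination chain accepting a false claim.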

This example also shows why interoperability protocols are not only about assets. Replace “lock tokens” with “emit a governance packet,” “open a remote account,” or “send an arbitrary payload,” and the same verification logic still applies.

Why is IBC the canonical example of secure interoperability?

IBC, the Inter-Blockchain Communication Protocol, is useful as a reference point because it makes the protocol layers and trust assumptions unusually explicit. Official materials describe IBC as a protocol that allows blockchains to talk to each other, exchange arbitrary byte-encoded data, and do so through a light client–based model rather than a trusted intermediary. The Cosmos documentation also frames IBC as a set of data structures, abstractions, and semantics that different ledgers can implement if they satisfy certain requirements.

That framing matters. It means IBC is not merely a bridge product. It is closer to a standard for authenticated cross-chain messaging. Its transport layer handles secure connections and packet authentication, while application-layer standards define how particular kinds of payloads are interpreted. That separation is why IBC can support fungible token transfer, but also interchain accounts, interchain queries, and more specialized application protocols.

The repository structure of the canonical IBC spec reflects this modularity, with specification materials organized into core, client, app, and related directories. Even without diving into every ICS document, the structure itself tells you something important: interoperability is not one monolithic trick. It is a stack of agreements about clients, channels, packets, ordering, and application semantics.

IBC also illustrates an important operational truth: even a protocol designed to minimize trust in transport still depends on off-chain infrastructure. Relayers are required to move messages forward. So “trustless” in this context does not mean “no off-chain actors exist.” It means the correctness of state transitions should not depend on trusting those actors to tell the truth.

How is cross‑chain message passing different from token bridges?

People often meet interoperability through bridges because token transfer is easy to see. But the more fundamental primitive is message passing.

A message is just structured bytes plus protocol rules about authenticity, replay protection, ordering, timeout behavior, and application interpretation. Once a destination chain can verify that a message genuinely corresponds to a source-chain event, it can do almost anything its local execution environment allows. That may be transferring assets, but it can also be instructing a contract, opening a remote account, updating a registry, synchronizing governance, or proving a query result.
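
Several of these rules can be sketched with a small ordered receiver. This is a simplified illustration rather than any protocol's actual channel logic: the `Packet` fields, the outcome strings, and the choice to leave the sequence counter unchanged on timeout are assumptions, and the sketch presumes the packet's authenticity has already been verified.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Packet:
    sequence: int         # position in the ordered stream
    timeout_height: int   # destination height at or after which delivery is refused
    data: bytes


class OrderedReceiver:
    def __init__(self) -> None:
        self.next_sequence = 1
        self.delivered: List[bytes] = []

    def receive(self, packet: Packet, current_height: int) -> str:
        if packet.sequence < self.next_sequence:
            return "replay"          # already processed: no duplicate effect
        if packet.sequence > self.next_sequence:
            return "out_of_order"    # must wait for the earlier packet
        if current_height >= packet.timeout_height:
            return "timed_out"       # too late: the sender's side handles the refund
        self.delivered.append(packet.data)
        self.next_sequence += 1
        return "delivered"


rx = OrderedReceiver()
first = Packet(sequence=1, timeout_height=100, data=b"open-account")
print(rx.receive(first, current_height=10))
print(rx.receive(first, current_height=11))
```

Replay protection here comes from the sequence counter kept in destination state, not from the transport: delivering the same bytes twice is harmless because the second delivery is recognized and ignored.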

This is why IBC documents highlight asynchronous communication, middleware customization, interchain accounts, and interchain queries. It is also why CCIP describes support for arbitrary messaging, token transfer, and programmable token transfer. Different ecosystems package the capability differently, but the underlying pattern is the same: verified foreign messages become local inputs.

The phrase “arbitrary data encoded as bytes” is doing real work here. It means the protocol itself does not need to understand the economic meaning of the payload. It only needs to authenticate the payload, preserve the right delivery semantics, and let the receiving application interpret it safely.
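
One way to picture this separation is a router that hands opaque bytes to whichever application registered for them. The sketch is hypothetical throughout: the `Router` class, the port names, and the two payload encodings are invented for illustration, and authentication is assumed to have happened before `deliver` is called.

```python
import json
from typing import Callable, Dict


class Router:
    """Transport-side dispatcher: it never inspects the meaning of the payload."""

    def __init__(self) -> None:
        self.handlers: Dict[str, Callable[[bytes], object]] = {}

    def register(self, port: str, handler: Callable[[bytes], object]) -> None:
        self.handlers[port] = handler

    def deliver(self, port: str, payload: bytes) -> object:
        # Raises KeyError if no application is bound to the port.
        return self.handlers[port](payload)


def handle_transfer(payload: bytes) -> int:
    """Application A interprets the bytes as a JSON transfer and returns the amount."""
    return int(json.loads(payload)["amount"])


def handle_governance(payload: bytes) -> str:
    """Application B interprets the bytes as a UTF-8 command name."""
    return payload.decode("utf-8").upper()


router = Router()
router.register("transfer", handle_transfer)
router.register("governance", handle_governance)
print(router.deliver("transfer", b'{"amount": 5}'))
print(router.deliver("governance", b"pause"))
```

The router works identically for both payloads because it treats them as bytes; only the registered handler knows whether those bytes describe tokens or a governance command.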

It is tempting to compare this to internet packet routing, and it is worth naming where that analogy fails. Interoperability protocols are not the same as the internet's packet routing layer. Internet routers do not need to decide whether a packet represents a valid consensus event from a foreign sovereign system. Cross-chain protocols do. So the resemblance to networking is useful for intuition about modularity and transport, but it breaks at the point where consensus verification enters.

What are common failure modes for interoperability protocols?

The simplest way to understand interoperability risk is to ask: what if the destination chain accepts a false claim? If that happens, assets can be minted without backing, escrow can be released improperly, arbitrary calls can be executed, or application state can diverge across chains.

This is why so many major failures cluster around verification and permissions rather than mere message delivery. Research surveyed in recent papers describes bridge hacks totaling billions of dollars and finds that a large share of losses came from systems secured by intermediary permissioned networks or weak key-management practices. Another survey of public bridge attacks found permission issues to be the most common root-cause category.

The Ronin incident is a clear example of the external-verifier risk. According to the project's postmortem, the attacker gained control of five of nine validator keys and used that quorum to forge withdrawals. The mechanism is straightforward: if the bridge's security reduces to “enough trusted signers say this withdrawal is valid,” then compromising enough signers collapses the bridge's truth model.
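
The arithmetic behind that collapse is short enough to write down. The sketch below assumes a plain k-of-n signer scheme in which signer approval is the only check on a withdrawal; the function names are hypothetical, and the 5-of-9 figures in the example are the ones quoted above.

```python
def can_forge(threshold: int, compromised: int) -> bool:
    """An attacker holding at least `threshold` keys can approve anything."""
    return compromised >= threshold


def keys_needed_to_halt(total_signers: int, threshold: int) -> int:
    """If this many signers refuse to sign, no quorum can ever form."""
    return total_signers - threshold + 1


print(can_forge(threshold=5, compromised=5))
print(can_forge(threshold=5, compromised=4))
print(keys_needed_to_halt(total_signers=9, threshold=5))
```

Under this model the security of the bridge is bounded by the cost of obtaining `threshold` keys, regardless of how secure the two connected chains are.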

The Wormhole exploit illustrates a different failure mode: even if a protocol has a credible attestation model on paper, an implementation bug in verification logic can nullify it. CertiK's analysis describes how an attacker bypassed signature verification on Solana by exploiting improper sysvar validation, then posted a malicious message and minted unbacked wrapped ETH. The deeper lesson is not just “bugs are bad.” It is that verification code is the protocol's security boundary. A flaw there is equivalent to letting the destination chain believe fiction.

IBC-style protocols are not immune to problems, but their failure modes differ. If the light client is correct and proofs are checked correctly, a malicious relayer should not be able to fabricate source-chain events. The hard parts shift toward client correctness, proof verification, timeout handling, counterparty upgrades, and the operational liveness of relayers. The system may stall or require governance action in some edge cases, but stalling is still better than accepting forged state.

How do interoperability protocols trade off security, cost, and connectivity?

| Approach | Security | Cost | Connectivity | Best when |
|---|---|---|---|---|
| Light client / native | High | High | Limited per-chain | Security-first integrations |
| External oracle / committee | Moderate | Low | Broad multi-chain | Rapid cross-chain access |
| Hybrid / delegated | Moderate-high | Moderate | Moderate | Balance security and reach |

Interoperability protocol design is mostly an exercise in constrained trade-offs.

If you want the destination chain to verify source-chain truth as directly as possible, you usually need some form of light client or native verification. That tends to preserve stronger security alignment with the source chain, but it can be expensive to maintain across many heterogeneous chains. Different consensus systems imply different proof systems, update paths, and on-chain verification costs.

If you want to connect many chains quickly with a unified developer experience, external committees or oracle-style systems are often easier to scale operationally. They can provide broad connectivity and simpler abstractions. But that convenience comes from a different place: users are now trusting the external verifier set, its key management, its governance, its upgrade controls, and its operational integrity.

This is why official Ethereum guidance says there are no perfect bridge designs, only trade-offs among security, convenience, connectivity, data richness, and cost. It is also why the distinction between “trusted” and “trustless” bridges is helpful but incomplete. Real systems live on a spectrum. Even a light-client system may depend on governance to upgrade client code. Even an oracle-based system may use strong decentralization and layered controls. What matters is to state clearly who can cause false acceptance, who can halt the system, and what assumptions are outside the base chains themselves.

How do interoperability standards and implementations differ and evolve?

An interoperability protocol is usually both an abstract specification and a family of implementations. That distinction matters because users often interact with products, while security comes from the protocol rules and the implementation quality underneath.

IBC shows this separation cleanly. There is a public specification repository containing organized spec materials, and there are implementations such as ibc-go. Cosmos documentation also notes that recent releases include both IBC Classic and IBC v2 as separate protocol versions, with a given connection using one or the other. That tells you interoperability protocols are not static. They evolve as ecosystems discover better abstractions, performance improvements, and safer operational models.

By contrast, some other systems are experienced less as open standards and more as platform protocols tied to a particular network of operators, contracts, and tooling. Chainlink CCIP, for instance, provides official APIs, SDKs, and CLI tools for integration and emphasizes its defense-in-depth architecture. LayerZero and Wormhole similarly expose developer platforms, operational infrastructure, and ecosystem tooling around their messaging layers. For a builder, that may feel simpler. For a risk analyst, it means the protocol cannot be understood only at the message API level; you also need to understand who runs the off-chain machinery and how upgrades or attestations are governed.

What capabilities does an interoperability protocol give a blockchain?

At bottom, an interoperability protocol buys a chain the ability to treat some external facts as locally actionable facts.

That sounds abstract, but it is the key. Without interoperability, a blockchain only acts on its own history. With interoperability, it can act on a constrained, verified subset of another chain's history. That is what makes cross-chain assets, cross-chain governance, remote execution, and multichain applications possible.

The cost of that power is that every protocol must answer a severe question with precision: when a foreign chain claims something happened, who proves it, how is it checked, when is it final, and who can break or override that path? If the answers are weak, the bridge becomes a mint for counterfeit claims. If the answers are strong, the protocol becomes part of the trust fabric of a multichain system.

Conclusion

An interoperability protocol is best understood as a system for verifying cross-chain claims, not merely a way to shuttle tokens around. Its job is to let one blockchain safely cause effects on another by defining how foreign events are represented, transported, proved, and accepted.

Everything else follows from that. Message passing, token bridges, interchain accounts, and programmable transfers are applications of the same core idea. The real differences between protocols are differences in verification model and trust assumptions, and those differences determine both what the protocol enables and how it can fail.

How do you move crypto safely between accounts or networks?

Move crypto safely by verifying the exact asset, destination network, fees, and finality rules before you send. On Cube Exchange, start by funding your account, then use the withdrawal or transfer flow to move assets after you complete the checks below.

- Fund your Cube account with fiat or a supported crypto deposit.

- Verify the asset ticker, the receiving address, and the destination chain ID or token contract address (copy and paste the contract address to avoid typos).

- Open Cube’s withdrawal/transfer flow for that asset and select the exact network that matches the destination (e.g., Ethereum Mainnet vs Polygon). Review the fee and gas token shown.

- When sending to an exchange, contract, or new address, send a small test amount first and confirm it arrives and is credited as expected.

- Wait for the destination chain’s safe confirmation threshold before treating the transfer as final (for example, wait ~12 confirmations on probabilistic‑finality chains or the receiver’s stated finality checkpoint), then continue with trading or further transfers.

Frequently Asked Questions

Why is delivering a message between blockchains not enough on its own?

Because the hard problem is not moving bytes but proving that a claimed source‑chain event is real, final, and authorized under the source chain’s rules; blockchains differ in consensus, state formats, and finality models, so delivery alone does not make a destination chain safe to act.

What are the main families of cross‑chain verification?

There are three common families: native state verification (destination chains run or check a light client of the source chain), external verification (an oracle/committee/guardian set attests to events), and local or user‑mediated verification where users or apps help validate foreign state.

How does IBC differ from oracle‑based interoperability systems?

IBC emphasizes native light‑client verification so relayers act as untrusted couriers while the destination verifies proofs; oracle‑based systems instead rely on external attestations (e.g., validator signatures, DONs, guardians) so the destination’s truth depends on that attestor set’s security and governance.

What causes most high‑value bridge failures?

Most high‑value bridge failures trace to weak verification or permissioning rather than mere delivery problems; examples include Ronin (compromised validator keys allowed forged withdrawals) and Wormhole (a verification implementation bug allowed forged attestations), illustrating both governance/key‑management and code‑level verification risks.

Do relayers need to be trusted?

Relayers are typically not trusted for correctness in secure designs; protocols separate transport from verification so a malicious or offline relayer can stall message delivery but should not be able to make the destination accept false state if verification is done correctly.

What is finality, and why does it matter for cross‑chain protocols?

Finality refers to how and when a chain’s state is considered irreversible; because chains have different finality models (probabilistic vs deterministic), a cross‑chain protocol must account for finality to avoid acting on reorganizable or reversible history.

When should you use light clients rather than an external oracle or committee?

Use light clients when you want the strongest alignment with the source chain’s consensus and can bear the implementation and verification cost; choose external oracle/committee models when you need fast, broad connectivity and are willing to accept additional key‑management and governance trust assumptions.

How do interoperability protocols prevent replayed or duplicated messages?

Message formats in interoperability protocols include rules for authenticity, replay protection, ordering, timeouts, and application semantics, so replay and duplicate effects are mitigated by those protocol‑level protections rather than by transport alone.

What role does off‑chain infrastructure play?

Off‑chain infrastructure like relayers, decentralized oracle networks (DONs), and guardian sets move proofs and attestations between chains and are operationally required; they improve liveness and connectivity but introduce operational, key‑management, and governance risks separate from the on‑chain verification model.

Is there a single canonical IBC specification file or version?

There is no single monolithic IBC file; the ecosystem and implementations expose multiple spec versions (for example, ibc‑go v10 supports IBC Classic and IBC v2 and a given connection uses one or the other), and the canonical spec, versioning, and governance around upgrades are handled at the repo and project level rather than by a single definitive file.
