What is Throughput (TPS)?

Learn what blockchain throughput (TPS) really measures, how it works, why limits exist, and why TPS claims vary across Bitcoin, Ethereum, Solana, and more.

Introduction

Throughput (TPS) in blockchain is the rate at which transactions are confirmed or added to the chain, usually expressed as transactions per second. That sounds like a simple performance metric, but it hides the central engineering problem of blockchain design: a blockchain is not just processing transactions, it is asking many independent machines to agree on the same ordered history while each of them checks the work for itself. The harder that agreement problem is, the harder it becomes to raise TPS.

This is why two systems can both be called blockchains and yet live in completely different throughput regimes. A network that aims for open participation, global verification, and strong resistance to censorship will usually accept tighter throughput limits at the base layer. A system that narrows the trust model, changes how consensus works, or moves execution off the base chain can often push TPS much higher. So TPS is not just a speed figure. It is a compact summary of architectural choices.

The key idea is this: blockchain throughput is bounded by the slowest shared step in the path from "user submitted a transaction" to "the network has accepted and can sustain it." Depending on the chain, that bottleneck may be block size, block interval, bandwidth, signature verification, state access, consensus messaging, data availability, or contention on the same piece of state. Once that clicks, most throughput debates become easier to reason about.

Is TPS a capacity budget rather than a simple per-second speed?

When people first hear TPS, they often imagine a clean benchmark: how many transactions did the chain handle in one second? But a blockchain does not work like a single server answering requests in isolation. Transactions are collected, ordered, validated, propagated, and finalized under shared rules. So TPS is better understood as a capacity budget over time.

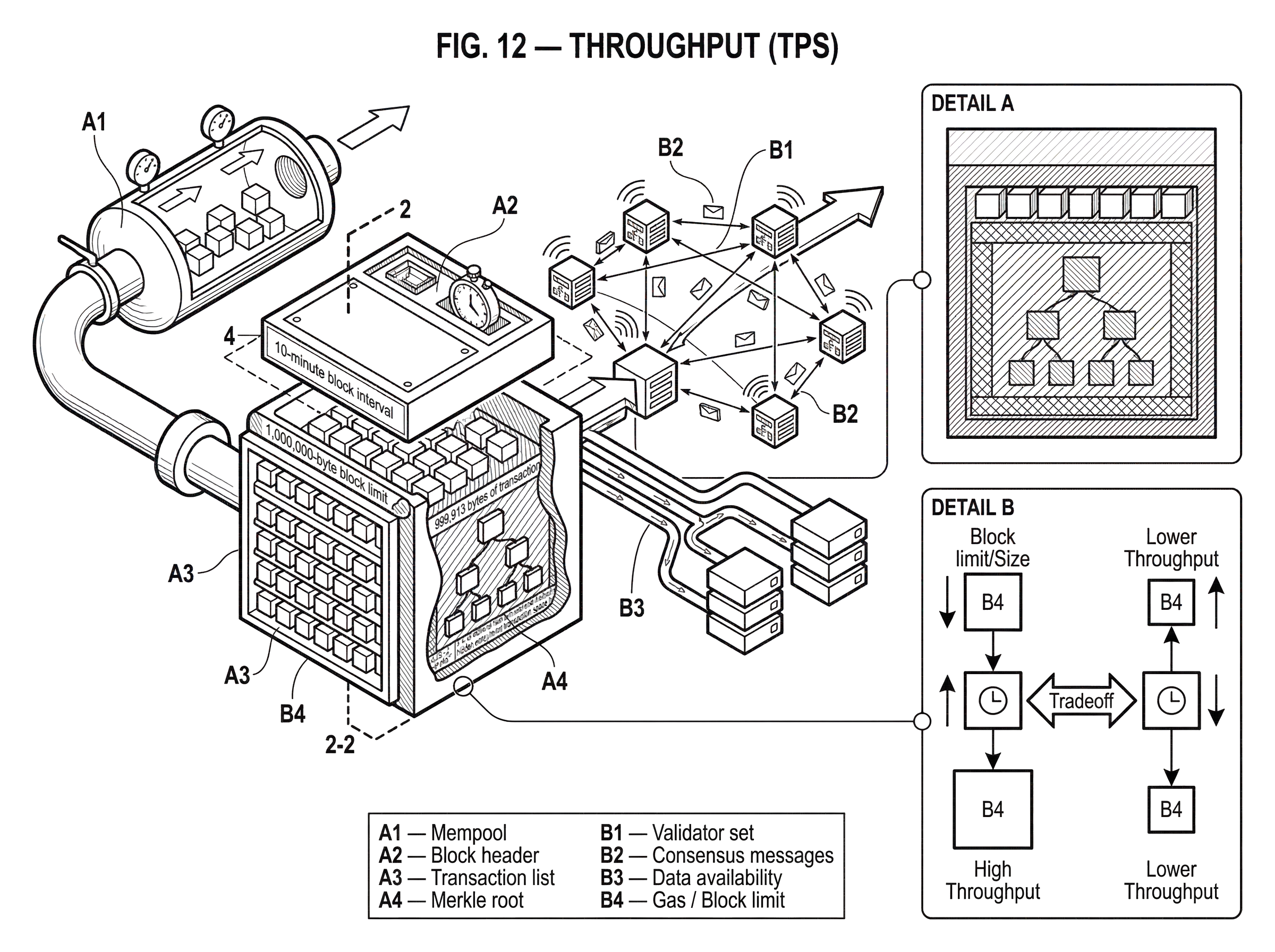

A block-based chain makes this visible. Nodes gather transactions into blocks, and the protocol determines how often blocks are produced. If each block can carry only a certain amount of transaction data, then the chain's baseline throughput is roughly "transaction capacity per block" divided by "time between blocks." Bitcoin's original design illustrates the structure clearly: nodes collect transactions into blocks, and proof-of-work pacing sets an average block cadence. The whitepaper uses a 10-minute block interval as the working cadence, which immediately tells you that throughput will be bursty at the block level and only smooth out as an average over many blocks.
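
Here is a minimal sketch of that baseline formula. It assumes a fixed block byte budget and a uniform average transaction size. The 250-byte average used below is a hypothetical figure for illustration, not a measured Bitcoin statistic; real averages depend on the transaction mix.

```python
def baseline_tps(block_bytes: int, avg_tx_bytes: int, block_interval_s: float) -> float:
    """Rough base-layer throughput: transactions per block divided by time between blocks."""
    # Only whole transactions fit, so round the per-block count down.
    txs_per_block = block_bytes // avg_tx_bytes
    return txs_per_block / block_interval_s


# Assumed inputs: 1,000,000-byte blocks every 600 s, hypothetical 250-byte average transaction.
print(round(baseline_tps(1_000_000, 250, 600), 2))
```

Halving the interval or the average transaction size roughly doubles the result. That is why both levers come up in scaling debates.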

That already reveals a common misunderstanding. TPS is usually quoted as a per-second number, but many blockchains do not actually confirm transactions continuously every second. They confirm in batches. The average can be 5 TPS or 50 TPS, while the user experience still depends heavily on when the next block arrives and how many blocks must follow before the transaction is considered final enough. This is why throughput and latency are related but not identical. A chain can have respectable TPS and still make users wait.
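
To see why throughput and latency diverge, the sketch below compares two hypothetical chains that have the same average TPS but batch very differently. The latency model is deliberately crude and is an assumption, not a protocol rule: it treats waiting for k confirmations as k full block intervals and ignores mempool queueing and block-time variance. The 6-confirmation threshold is also only an illustrative choice.

```python
from dataclasses import dataclass


@dataclass
class Chain:
    name: str
    txs_per_block: int
    block_interval_s: float

    def tps(self) -> float:
        # Average throughput: one block's batch spread over the block interval.
        return self.txs_per_block / self.block_interval_s

    def expected_wait_s(self, confirmations: int) -> float:
        # Simplified latency: `confirmations` full block intervals
        # (ignores mempool queueing and block-time variance).
        return confirmations * self.block_interval_s


# Hypothetical chains with identical average TPS but very different batching.
slow_batches = Chain("slow-batches", txs_per_block=16_000, block_interval_s=600)
fast_batches = Chain("fast-batches", txs_per_block=160, block_interval_s=6)

for chain in (slow_batches, fast_batches):
    print(chain.name, round(chain.tps(), 2), chain.expected_wait_s(confirmations=6))
```

Both chains report the same TPS (about 26.67). However, the first makes a user wait an hour for six confirmations, while the second takes well under a minute.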

The same logic extends beyond simple payment chains. On smart-contract systems, a "transaction" is not a uniform unit of work. Some transactions are cheap transfers; others trigger many reads, writes, and cryptographic checks. In that setting, TPS becomes a rough surface metric, because the real scarce resource is not transaction count alone but execution resources per block.

How do block size and block interval determine TPS?

The cleanest first-principles model has two moving parts: how much fits in a block, and how often blocks appear. Increase either, and the protocol can in principle confirm more transactions per unit time. But both levers have consequences.

Bitcoin offers a useful worked example because the arithmetic is concrete. A protocol-level analysis for legacy Bitcoin under a 1,000,000-byte block limit and 10-minute average block interval asks a narrow question: if you ignore propagation delays, economics, and mempool behavior, what is the exact upper bound imposed by the protocol rules themselves? The analysis subtracts block overhead from the 1,000,000-byte block budget and finds 999,913 bytes of transaction space. It then packs the block with the smallest possible transactions under its assumptions: one 65-byte coinbase transaction and as many 61-byte non-coinbase transactions as fit. That produces an upper bound of 27 transactions per second.
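
The arithmetic can be checked directly from the figures above. This is a simplified reproduction, not the analysis itself. It treats the 999,913 bytes of transaction space as freely divisible among transactions and assumes nothing else consumes block space.

```python
BLOCK_TX_SPACE_BYTES = 999_913  # transaction space left after block overhead
COINBASE_TX_BYTES = 65          # smallest coinbase transaction under the analysis' assumptions
MIN_TX_BYTES = 61               # smallest non-coinbase transaction under the same assumptions
BLOCK_INTERVAL_S = 600          # 10-minute average block interval


def max_txs_per_block() -> int:
    # One coinbase transaction, then as many minimal transactions as still fit.
    remaining = BLOCK_TX_SPACE_BYTES - COINBASE_TX_BYTES
    return 1 + remaining // MIN_TX_BYTES


def max_tps() -> float:
    return max_txs_per_block() / BLOCK_INTERVAL_S


print(max_txs_per_block())
print(round(max_tps(), 2))
```

Under these simplifications the count comes to 16,391 transactions per block, or about 27.3 TPS. That result is consistent with the 27 TPS ceiling.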

That number is interesting not because it describes normal Bitcoin usage, but because it shows what TPS really means at the protocol level. The bound comes from bytes and time. If blocks arrive every 600 seconds on average, and each block can only carry so many transactions, then the best-case TPS is just arithmetic after you define what counts as a transaction and how efficiently you can pack one.

The caveat matters just as much as the number. That 27 TPS figure is a theoretical upper bound under specific assumptions: pre-SegWit Bitcoin, 1 MB blocks, minimal transaction forms, and no allowance for real-world network effects. The same analysis explicitly excludes propagation delay, chain growth dynamics, mempool behavior, and economic incentives. So it is not an operational guarantee. It is a ceiling from the protocol's byte budget.

This distinction explains why headline TPS claims are often slippery. There is no single TPS number floating in the air for a blockchain. There is only TPS under a stated workload, protocol version, and operating environment. Change the transaction format, the average transaction size, the block weight rules, or the fraction of block space used efficiently, and the number changes.

What are the trade-offs of increasing block size or decreasing block time?

At first glance, throughput seems easy to increase. Make blocks bigger, or make them come more often. If a chain can include more transactions per block or produce blocks twice as fast, should TPS not simply rise?

Mechanically, yes. Systemically, not for free.

Bigger blocks take longer to transmit and validate. Faster blocks leave less time for a block to reach the network before another producer creates a competing block. For proof-of-work systems, the Bitcoin whitepaper already hints at this tradeoff: if two nodes produce different next blocks at nearly the same time, the network temporarily splits on which one it saw first, and the tie is later resolved by whichever branch gains more proof-of-work. This implies that higher block frequency, relative to network delay, tends to increase temporary forks and wasted work.
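
The fork effect can be sketched with a deliberately simple model. The following assumptions do not come from the whitepaper: block discovery is a Poisson process with the chain's mean interval, and a block's propagation delay is a fixed latency plus the time needed to transmit its bytes over one link. The bandwidth and latency values are hypothetical, and real networks use relay optimizations that this model ignores.

```python
import math


def propagation_delay_s(block_bytes: int, bandwidth_bps: float, latency_s: float) -> float:
    # Fixed network latency plus the time to push the block's bits over one link.
    return latency_s + (block_bytes * 8) / bandwidth_bps


def stale_probability(delay_s: float, block_interval_s: float) -> float:
    # Chance that a competing block is found while ours is still propagating,
    # assuming block discovery is Poisson with the given mean interval.
    return 1.0 - math.exp(-delay_s / block_interval_s)


# Hypothetical network: 20 Mbit/s effective bandwidth, 2 s baseline latency.
for block_bytes, interval_s in [(1_000_000, 600), (8_000_000, 600), (1_000_000, 60)]:
    delay = propagation_delay_s(block_bytes, bandwidth_bps=20e6, latency_s=2.0)
    print(block_bytes, interval_s, round(delay, 2), round(stale_probability(delay, interval_s), 4))
```

Larger blocks lengthen the delay, and shorter intervals shrink the window. Either change raises the stale probability, which is the wasted work the paragraph describes.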

This is why scaling discussions repeatedly come back to propagation and validation limits. A block is not useful just because one producer created it. The rest of the network has to receive it, verify it, and build on it. If blocks become too large or too frequent for ordinary nodes to keep up, two things happen. First, effective throughput may rise less than expected because more work is discarded in forks or delayed by congestion. Second, the system may become more centralized because only better-connected or better-provisioned operators can participate comfortably.

The tradeoff is not unique to proof-of-work. In Byzantine fault tolerant and proof-of-stake systems, the bottleneck often shifts from pure block propagation to consensus communication, voting rounds, and validator coordination. The same principle holds: if more throughput means more data or more messages per unit time, some shared bottleneck will start to dominate.

Why is gas a better metric than raw TPS on smart-contract platforms?

| Measure | Captures | Best for | Main risk |
|---|---|---|---|
| Transaction count | Number of transactions only | Simple throughput headlines | Hides variable work per tx |
| Gas | Computation and I/O cost | Ethereum-style capacity planning | Depends on opcode pricing |
| Execution units | Actual CPU / I/O use | Low-level performance tuning | Requires instrumentation |

For a smart-contract chain, counting transactions alone can mislead because transactions vary widely in computational cost. A token transfer and a transaction that touches many storage slots are both "one transaction," but they do not consume the same resources.

Ethereum makes this explicit with gas. A block does not primarily cap the number of transactions; it caps the total gas that those transactions can consume. That is a more honest reflection of the underlying problem: the network must bound how much computation and state access validators are expected to process before the next block.
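
The sketch below shows why gas, not transaction count, is the binding limit: the same block gas budget yields very different transaction counts depending on per-transaction cost. The 21,000 gas figure is the base cost of a plain ETH transfer. The 30,000,000 block gas limit, the 12-second block time, and the 300,000-gas contract call are assumptions for illustration, since the real gas limit is adjusted over time.

```python
def txs_per_block(block_gas_limit: int, gas_per_tx: int) -> int:
    # The block caps total gas, so the count depends on how heavy each transaction is.
    return block_gas_limit // gas_per_tx


def gas_bounded_tps(block_gas_limit: int, gas_per_tx: int, block_time_s: float) -> float:
    return txs_per_block(block_gas_limit, gas_per_tx) / block_time_s


GAS_LIMIT = 30_000_000  # assumed block gas limit (the real value changes over time)
BLOCK_TIME_S = 12       # assumed block time

for label, gas in [("plain ETH transfer", 21_000), ("hypothetical heavy contract call", 300_000)]:
    print(label, txs_per_block(GAS_LIMIT, gas), round(gas_bounded_tps(GAS_LIMIT, gas, BLOCK_TIME_S), 1))
```

With the same block and the same rules, the headline TPS moves by more than an order of magnitude purely because of the workload.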

This is also why opcode pricing affects throughput. EIP-150 was a response to Ethereum denial-of-service attacks in which some state-reading operations turned out to be far too cheap relative to the actual work they forced nodes to do. Because underpriced state reads had become an easy way to spam the network and degrade performance, EIP-150 repriced IO-heavy opcodes such as SLOAD, BALANCE, and the CALL family upward. The proposal also recommended raising the gas limit target to preserve the de facto transactions-per-second capacity for average contracts after those repricings.
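
The repricing logic can be made concrete. EIP-150 raised SLOAD from 50 to 200 gas, so under a fixed gas budget the number of storage reads an attacker can force into one block falls to a quarter. The gas limit below is hypothetical. The bound also ignores the per-transaction base cost and the other opcodes a real loop would need.

```python
def max_ops_per_block(block_gas_limit: int, gas_per_op: int) -> int:
    # Upper bound on how often one opcode can run if a block is filled with it
    # (ignores per-transaction base cost and the surrounding loop's own opcodes).
    return block_gas_limit // gas_per_op


GAS_LIMIT = 4_700_000                 # hypothetical block gas limit for illustration
SLOAD_BEFORE, SLOAD_AFTER = 50, 200   # SLOAD gas cost before and after EIP-150

before = max_ops_per_block(GAS_LIMIT, SLOAD_BEFORE)
after = max_ops_per_block(GAS_LIMIT, SLOAD_AFTER)
print(before, after, before / after)
```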

The mechanism here is worth seeing clearly. Throughput is not only about letting more legitimate work in; it is also about pricing scarce resources so abusive work cannot crowd out everything else too cheaply. If the gas schedule underestimates the real cost of disk reads, trie access, or nested execution, then the chain can look like it has high nominal TPS while actually being fragile under adversarial load. Good throughput design includes resistance to this kind of distortion.

So on chains with rich execution, a statement like "this chain does 100 TPS" is often less informative than it sounds unless you also know what kinds of transactions those were, how much gas they used, and whether they stressed compute, storage, calldata, or specific hot accounts.

Which architecture components become TPS bottlenecks across different chain designs?

| Architecture | Dominant bottleneck | Primary scaling lever | Typical tradeoff |
|---|---|---|---|
| UTXO payment chain | Block space & propagation | Bigger/packed blocks | Forks and centralization risk |
| Smart-contract chain | State access and execution | Gas pricing / calldata limits | Higher per-tx cost or complexity |
| BFT-style / PoS validators | Validator communication | Linearized protocols / sharding | Validator-set scalability limits |
| Permissioned / enterprise | Ordering and endorsement | Execute-order-validate model | Narrower trust assumptions |

Once you stop treating TPS as a magic badge and start treating it as a bottleneck question, the variation across blockchain designs becomes easier to interpret.

In a simple UTXO payment chain, the dominant limits may be block space, signature verification, and propagation. In a general-purpose smart-contract chain, state access and execution often dominate. In a BFT-style system, validator communication can become the scaling wall as the validator set grows, because many protocols require many validators to exchange and verify votes. Research on BFT protocols such as HotStuff focuses exactly on this problem: reducing communication overhead so a correct leader can make progress with communication that scales linearly in the number of replicas, rather than suffering heavier coordination costs during leader changes.

That message-complexity story matters because throughput is not just "how fast can one machine run transactions?" It is "how fast can all required participants coordinate on them?" A protocol that demands too many messages between too many validators will hit a wall as the validator set expands, even if execution itself is cheap.
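
A rough count shows why message complexity bites as validator sets grow. This sketch compares two idealized patterns for one voting phase. It is not HotStuff's actual protocol. It only shows the shape of the difference between all-to-all voting and a leader that collects votes and rebroadcasts one aggregated result.

```python
def all_to_all_messages(n: int) -> int:
    # Every validator sends its vote to every other validator in one phase.
    return n * (n - 1)


def leader_based_messages(n: int) -> int:
    # Validators send votes to a leader, which broadcasts one aggregated result back.
    return 2 * (n - 1)


for n in (4, 100, 1_000):
    print(n, all_to_all_messages(n), leader_based_messages(n))
```

At 1,000 validators the all-to-all pattern needs 999,000 messages per phase, compared with 1,998 for the leader-based pattern.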

Other designs try to reduce consensus overhead in different ways. Polkadot separates block production and finality through BABE and GRANDPA. BABE can produce blocks rapidly in slots of around six seconds, while GRANDPA finalizes chains, often in batches. This separation can improve overall responsiveness, but it also means throughput has to be understood together with fork behavior and finality rules. A faster production layer is only part of the story if finality and shared relay-chain resources impose their own limits.

Permissioned systems expose a different side of the problem. Hyperledger Fabric does not need to optimize for the same open, adversarial participation model as a permissionless public chain. Its execute-order-validate design allows transactions to be executed and endorsed before ordering, and only a subset of peers may need to endorse a given transaction according to policy. That architecture increases concurrency and can deliver much higher practical throughput in enterprise settings, precisely because the system is solving a narrower trust problem.

This is why raw TPS comparisons across architectures are often apples-to-oranges. A permissionless public chain, a rollup, a validium, and a permissioned consortium ledger can all quote TPS, but they may be making very different promises about who can participate, what must be verified on-chain, where data lives, and what happens when operators misbehave.

How do rollups, validium, and sharding increase TPS by moving work off the base layer?

A useful rule of thumb is that very large TPS gains usually do not come from merely "optimizing the same architecture a little." They come from changing where work happens, who does it, or what exactly must be agreed on globally.

Rollups are a clean example. Ethereum's scaling approach treats the base layer as a place for consensus, data availability, and settlement, while transaction execution can happen outside layer 1. Rollups execute transactions off-chain and then post transaction data to layer 1, allowing them to inherit Ethereum's security model for settlement while increasing effective throughput. The gain comes from reducing how much execution the base chain has to perform directly.

Data availability is the crucial piece here. If a rollup publishes enough data to layer 1, users and validators can reconstruct and verify the rollup state transitions, or at least ensure the chain remains auditable. Ethereum's rollup-centric roadmap leans into this by improving data publication economics. The move toward blob-based data availability, introduced by EIP-4844 (proto-danksharding) as a step toward full Danksharding, is meant to make rollup data cheaper to post while keeping it efficient to verify. That does not make layer 1 itself process every rollup transaction. Instead, it lets layer 1 support more off-chain execution safely.
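
When rollup transactions must publish their data to layer 1, the L1 data budget becomes the cap, not L1 execution. The sketch below uses hypothetical inputs: roughly 384 KiB of data space per L1 block, 100 bytes per compressed rollup transaction, and a 12-second L1 block time. None of these are quoted protocol parameters.

```python
def data_bound_rollup_tps(l1_data_bytes_per_block: int,
                          bytes_per_rollup_tx: int,
                          l1_block_time_s: float) -> float:
    # If every rollup transaction must publish its (compressed) data to L1,
    # the L1 data budget caps rollup throughput.
    txs_per_l1_block = l1_data_bytes_per_block // bytes_per_rollup_tx
    return txs_per_l1_block / l1_block_time_s


# All inputs are hypothetical.
print(round(data_bound_rollup_tps(393_216, 100, 12), 1))
```

Better compression helps directly: halving the bytes per transaction doubles the bound. Every rollup posting to the same layer 1 also shares this budget.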

Validium pushes the idea further. It can achieve very high throughput by using validity proofs while keeping transaction data off the main chain. The trade is straightforward: less on-chain data availability can mean more throughput and lower cost, but it changes what users must trust or what failure modes they face if data is withheld. Here TPS rises because the base layer is carrying even less data per transaction.
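
The validium trade can be expressed as on-chain bytes per transaction. In this sketch both designs post one validity proof per batch, and that cost is amortized across the batch. The rollup also publishes each transaction's data, while the validium keeps it off-chain. Batch size, proof size, and per-transaction data size are hypothetical, and state-root updates are ignored.

```python
def l1_bytes_per_tx(batch_size: int, proof_bytes: int, data_bytes_per_tx: int) -> float:
    # The proof is posted once per batch, so its cost is shared by every transaction;
    # transaction data, if published on L1, is paid for on every transaction.
    return proof_bytes / batch_size + data_bytes_per_tx


BATCH, PROOF = 1_000, 1_000  # hypothetical batch size and proof size in bytes

rollup = l1_bytes_per_tx(BATCH, PROOF, data_bytes_per_tx=100)  # data published on L1
validium = l1_bytes_per_tx(BATCH, PROOF, data_bytes_per_tx=0)  # data kept off-chain
print(rollup, validium)
```

In this example the validium saves a factor of about a hundred. That saving is exactly the data users can no longer recover from layer 1 if the operator withholds it.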

Sharded or multi-chain designs follow the same logic in another form. Cosmos positions modular chains as able to reach high throughput partly because execution is distributed across many sovereign chains rather than concentrated in one global state machine. Polkadot's parachain model similarly aims for aggregate throughput by letting multiple chains execute in parallel while sharing security at the relay layer. Again, the increase comes from dividing work, not from making one monolithic chain infinitely fast.
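
The sketch below is a simplified model of aggregate throughput in parallel-chain designs. It assumes that each chain's capacity simply adds up until a single shared limit (for example, a relay or settlement layer) is reached. The per-chain rate and the shared cap are hypothetical, and real shared layers have several distinct limits rather than one number.

```python
def aggregate_tps(per_chain_tps: list[float], shared_cap_tps: float) -> float:
    # Chains execute in parallel, so their throughput adds up, but only until
    # an assumed shared-layer capacity saturates.
    return min(sum(per_chain_tps), shared_cap_tps)


# Hypothetical: each chain sustains 100 TPS; the shared layer tops out at 800 TPS.
print(aggregate_tps([100.0] * 5, 800.0))   # below the cap: work divides cleanly
print(aggregate_tps([100.0] * 10, 800.0))  # above the cap: the shared layer binds
```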

Why do Bitcoin and Solana report such different TPS numbers?

| Protocol | Primary bound | Key assumptions | Headline figure | Stress risk |
|---|---|---|---|---|
| Bitcoin (legacy) | Block bytes ÷ block interval | 1 MB blocks, 10-min cadence | Protocol upper bound ≈ 27 TPS | Propagation delays, mempool effects |
| Solana (whitepaper) | Network link capacity | 1 Gbit/s links, 176-byte tx packets | Theoretical up to 710,000 TPS | Ingress overload, forks, memory |

Consider the difference between a protocol-level Bitcoin upper bound of 27 TPS under narrow assumptions and a Solana whitepaper claim that throughput up to 710,000 TPS is possible with current hardware. These numbers are not merely far apart. They are describing different machines.

In the Bitcoin derivation, throughput is constrained by a small block space budget and a long average block interval. The chain is deliberately conservative about how much data enters the ledger and how quickly blocks are expected to arrive. The network's design priorities make that understandable.

In Solana's design, the throughput story starts from a different premise: if ordering overhead can be reduced, execution can be parallelized, and the network path is engineered for high bandwidth, the bottleneck may become link capacity rather than small-block packing. The Solana whitepaper explicitly derives its 710,000 TPS figure from a 1 Gbit/s network connection and a 176-byte transaction packet size, noting some expected loss from Ethernet framing. That is a network-bound estimate, not a statement that every real deployment will sustain that rate under every workload.
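
The whitepaper's network-bound figure is simple arithmetic: link capacity in bits per second divided by the size of one transaction packet in bits.

```python
LINK_BITS_PER_S = 1_000_000_000  # 1 Gbit/s network connection
TX_PACKET_BYTES = 176            # transaction packet size used in the whitepaper estimate

# Packets per second the link can carry, ignoring framing overhead.
tps = LINK_BITS_PER_S / (TX_PACKET_BYTES * 8)
print(int(tps))
```

The raw result, about 710,227, lines up with the quoted 710,000 TPS before framing losses are subtracted. It is a ceiling set by the wire, not by execution or consensus.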

The architecture behind the claim matters. Solana uses Proof of History as a timing primitive to reduce messaging overhead, a leader-based pipeline for sequencing, and aggressive use of parallelism and hardware acceleration. That can produce much higher throughput if the operating assumptions hold. But the assumptions are doing real work: hardware capacity, bandwidth, validator behavior, and contention patterns all matter.

This is exactly why outage reports are so informative. In one Solana Mainnet Beta incident, an inbound flood of roughly 6 million transactions per second produced more than 100 Gbps of traffic at individual nodes. Validators ran out of memory, votes did not land quickly enough to finalize earlier blocks and clean up abandoned forks, and consensus stalled. The lesson is not simply that "high TPS is bad" or "the chain failed." The lesson is that effective throughput depends on overload behavior. A system may advertise extraordinary theoretical throughput, yet still reveal bottlenecks in ingress control, fork cleanup, memory management, or fee prioritization under adversarial or economically extreme conditions.

That is why the mitigations from that incident are directly about throughput mechanics: replacing raw ingestion paths with QUIC for flow control, introducing stake-weighted quality of service for network ingress, and adding fee-based execution priority to handle contention. Throughput is not just how fast a happy-path benchmark runs. It is how the network allocates scarce capacity when demand becomes pathological.
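
The two ideas can be sketched in a few lines. This is a hypothetical illustration, not Solana's implementation: real stake-weighted QoS operates on QUIC connections and streams, and real fee prioritization has its own rules. Here, a fixed packet budget is split in proportion to stake. Under contention, transactions are admitted by fee per compute unit until a compute budget is used up.

```python
from dataclasses import dataclass


def stake_weighted_budget(stakes: dict[str, int], packets_per_s: int) -> dict[str, int]:
    # Split a fixed ingress budget in proportion to each peer's stake, so a flood
    # from low-stake senders cannot consume the whole pipe (hypothetical policy).
    total_stake = sum(stakes.values())
    if total_stake == 0:
        return {peer: 0 for peer in stakes}
    return {peer: packets_per_s * stake // total_stake for peer, stake in stakes.items()}


@dataclass
class Tx:
    tx_id: str
    fee: int            # total priority fee offered (hypothetical units)
    compute_units: int  # execution cost the transaction requests


def prioritize(txs: list[Tx], compute_budget: int) -> list[str]:
    # Greedily admit the highest fee-per-compute-unit transactions first,
    # skipping any that would overflow the remaining compute budget.
    chosen: list[str] = []
    used = 0
    for tx in sorted(txs, key=lambda t: t.fee / t.compute_units, reverse=True):
        if used + tx.compute_units <= compute_budget:
            chosen.append(tx.tx_id)
            used += tx.compute_units
    return chosen


print(stake_weighted_budget({"a": 600, "b": 300, "c": 100}, 10_000))
print(prioritize([Tx("x", 100, 10), Tx("y", 500, 100), Tx("z", 90, 5)], compute_budget=20))
```

In the example, the transaction offering the largest total fee is left out. Its fee per compute unit is the lowest, and it would not fit in the remaining budget.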

What do people commonly misunderstand about TPS?

The first common mistake is treating TPS as a complete measure of performance. It is not. If a chain confirms many transactions per second but takes a long time to finalize them, or often reorders them under congestion, the user experience and risk profile may still be poor.

The second is assuming all transactions are comparable units. They are not. Transaction count ignores differences in size, computation, state access, and data availability cost. This is especially misleading across smart-contract platforms and across layer 1 versus layer 2 systems.

The third is forgetting that sustained throughput matters more than burst throughput. A system may briefly accept huge transaction volumes into an ingress queue or mempool, but the real question is what volume it can continue to process, propagate, verify, and finalize without degrading into instability.
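
A toy queue makes the distinction between bursts and sustained load concrete. It assumes a fixed processing capacity per second, which is a simplification because real capacity varies with workload. Any second in which arrivals exceed capacity adds to a backlog, and that backlog only drains once demand falls below capacity.

```python
def simulate_backlog(arrivals_per_s: list[int], capacity_per_s: int) -> list[int]:
    # Each second, new transactions join the queue and at most `capacity_per_s`
    # are processed; whatever is left over carries into the next second.
    backlog, history = 0, []
    for arriving in arrivals_per_s:
        backlog = max(0, backlog + arriving - capacity_per_s)
        history.append(backlog)
    return history


# Hypothetical load: a 5-second burst at twice capacity, then demand below capacity.
load = [2_000] * 5 + [500] * 5
print(simulate_backlog(load, capacity_per_s=1_000))
```

The burst is accepted, but the network is still working through it five seconds after demand drops. If arrivals stayed above capacity, the backlog would grow without bound.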

The fourth is comparing theoretical maxima with observed production numbers as though they are the same kind of fact. They are not. A theoretical bound tells you what the protocol permits under idealized assumptions. A benchmark tells you what a specific implementation achieved on a specific setup. A production metric tells you what happened under live demand, real operators, and adversarial conditions. All three are useful, but they answer different questions.

How should I use TPS when evaluating a blockchain?

TPS is still useful. It gives a rough measure of whether a blockchain can plausibly serve a given class of applications on its base layer. It also helps explain why some systems need secondary scaling layers, batching, app-specific chains, or narrower trust assumptions.

But the right way to use TPS is as an entry point into deeper questions. What is the scarce resource being measured? What assumptions make the number possible? What happens as the validator set grows? What happens when many users touch the same state? How much data must every validator see and store? What degrades first under stress: networking, execution, consensus, or data availability?

Those questions reveal the mechanism. And mechanism is what makes throughput claims meaningful.

Conclusion

Throughput (TPS) is the rate at which a blockchain can confirm transactions, but the deeper reality is simpler and more important: TPS is a measure of how much shared work a network can safely sustain. It comes from capacity limits in block space, execution, networking, consensus, and data availability, not from a single speed dial.

The number matters, but the mechanism behind the number matters more. If you remember one thing, remember this: a blockchain's TPS is never just about counting transactions; it is about what the whole system must do, and agree on, for each of them.

What should I check about throughput before trading or transferring?

Before trading or moving funds, understand how throughput limits affect confirmation speed, fee pressure, and finality so you can choose the right network and execution strategy. Cube Exchange lets you act on that understanding by funding your account and executing trades or transfers with network-aware settings.

- Fund your Cube account with fiat or a supported crypto deposit and select the network you plan to use (check that Cube supports the chain and asset).

- Check the chain's confirmation/finality rule for the asset (for example, number of confirmations or finality time) and estimate required fees or gas with Cube's fee estimator.

- Open the relevant market or withdraw flow on Cube; choose a limit order for price control or a market order for immediate execution, and set gas/fee preferences if available.

- After submitting, monitor confirmations and only consider the transfer settled once the chain-specific confirmation threshold or finality rule you checked before submitting is met.

Frequently Asked Questions

There is no single universal TPS number for a given blockchain. TPS is defined only relative to a workload, transaction format, protocol rules, and operating environment, and different chains pack, validate, and finalize transactions very differently.

Throughput at the base layer is roughly block capacity (how many bytes or how much gas fit in a block) divided by the block interval, or equivalently multiplied by block frequency. Simply increasing block size or making blocks faster is not a free win: bigger blocks take longer to propagate and validate, faster blocks leave less time for that, and both increase fork and wasted-work risk and can centralize participation.

The commonly cited 27 TPS for legacy Bitcoin is a theoretical upper bound derived from a 1,000,000‑byte block limit, a 10‑minute average block interval, and an assumed packing of minimal transactions; it excludes propagation delay, mempool dynamics, incentives, and modern protocol changes like SegWit, so it is a ceiling under narrow assumptions, not an operational guarantee.

On smart‑contract chains like Ethereum, gas limits the total computation and state access per block, so gas (and opcode pricing) is a more honest way to reason about capacity than raw transaction count. EIP-150 is an example: repricing IO‑heavy opcodes closed cheap spam vectors, while a recommended gas limit increase preserved practical capacity for average contracts.

Rollups and similar layer‑2 designs raise effective throughput by changing what the base layer must do: they execute transactions off‑chain and only post data or proofs to L1 so the base chain handles consensus and data availability rather than all execution, trading on‑chain execution cost for off‑chain work while inheriting settlement security when data availability is preserved.

High headline claims (for example Solana’s theoretical 710,000 TPS) come from very different architectural and hardware assumptions - such as 1 Gbit/s links and small packet sizes - and are network‑ or hardware‑bound theoretical estimates; real-world incidents (e.g., the Solana overload) show that ingress control, memory, fork cleanup, and fee/priority mechanisms determine whether high throughput is sustainable.

Bottlenecks depend on architecture: UTXO payment chains are typically limited by block space, signature verification, and propagation; general‑purpose smart‑contract chains are often limited by state access and execution costs; BFT systems with large validator sets are often limited by message complexity and validator coordination.

Use TPS as an entry point: ask which scarce resource it measures, what transaction types and assumptions underlie the number, how validator set and data‑availability scale, and how the system behaves under sustained or adversarial load - those mechanism questions matter more than bare TPS.
