What is a Fraud Proof?

Learn what a fraud proof is, how optimistic rollups use dispute games and single-step verification, and where the model depends on data and incentives.

Introduction

A fraud proof is the mechanism that lets an optimistic rollup say, in effect, "accept this state update for now, unless someone can prove it is wrong." That sounds weaker than proving correctness up front, but it solves a very specific scaling problem: fully verifying every L2 computation on L1 is expensive enough to erase much of the benefit of moving execution offchain. A fraud-proof system keeps the common case cheap by assuming proposed state transitions are valid, then reserves expensive verification for the rare case of a dispute.

That design creates an immediate puzzle. If the chain is not checking every step as it happens, why should anyone trust the result? The answer is not “because the proposer is trusted.” The answer is that the protocol is built so a false claim can be challenged, narrowed down, and eventually tested at a point small enough for the settlement layer to verify directly. The optimistic assumption is not the security model. It is a performance shortcut wrapped around an adversarial checking mechanism.

This is why fraud proofs matter to scaling. They are the enforcement layer for optimistic systems. Without them, “optimistic rollup” would mean little more than “someone posts results and asks you to believe them.” With them, the system becomes: results are accepted provisionally, but any incorrect result can be economically and mechanically exposed. That is the core idea to hold onto through the rest of the article.

Why do optimistic rollups use fraud proofs?

A rollup wants two things that pull in opposite directions. It wants execution to happen away from the base chain so users get lower fees and higher throughput. But it also wants the base chain to remain the place where correctness is settled. If L1 re-executes everything, the rollup loses much of its cost advantage. If L1 does not retain a way to check correctness, the rollup stops inheriting L1 security in any meaningful sense.

Fraud proofs are the compromise. They make verification lazy. Instead of paying to prove every computation, the system posts a claim about the result of computation (usually some commitment to L2 state, such as an output root) and gives the world a challenge window. If nobody objects, the claim stands. If someone does object, the protocol runs a dispute process that isolates exactly where the disagreement lies.

The invariant that matters is simple: for a deterministic state transition, there is only one correct result for a given prior state and a given set of inputs. Honest parties may disagree about where an error occurred at first, or how to package evidence, but they cannot both be right about the final post-state. Fraud proofs exploit that determinism. They do not ask the chain to judge broad narratives like “this block seems suspicious.” They ask it to adjudicate a sharply reduced question: given this pre-state and this step, what is the correct next state?

That reduction is the whole trick. A large computation is too expensive to verify monolithically onchain. A single machine step is usually cheap enough.

How does an interactive dispute game narrow a full execution to a single step?

The easiest way to understand a fraud proof is to imagine two parties looking at the same long computation trace. One party says, “the final answer is A.” Another says, “no, the final answer is B.” If they had to replay the entire computation onchain to settle that argument, the system would be far too expensive. So instead they play a narrowing game.

First they identify a midpoint. Did they already disagree halfway through the trace, or only afterward? Once they know which half contains the disagreement, they ignore the other half. Then they split again. And again. Eventually the dispute shrinks from “the whole block transition is wrong” to “this exact instruction executed from this exact machine state produced the wrong next state.” At that point, the settlement chain no longer needs to reason about the whole block. It only needs to verify one step.

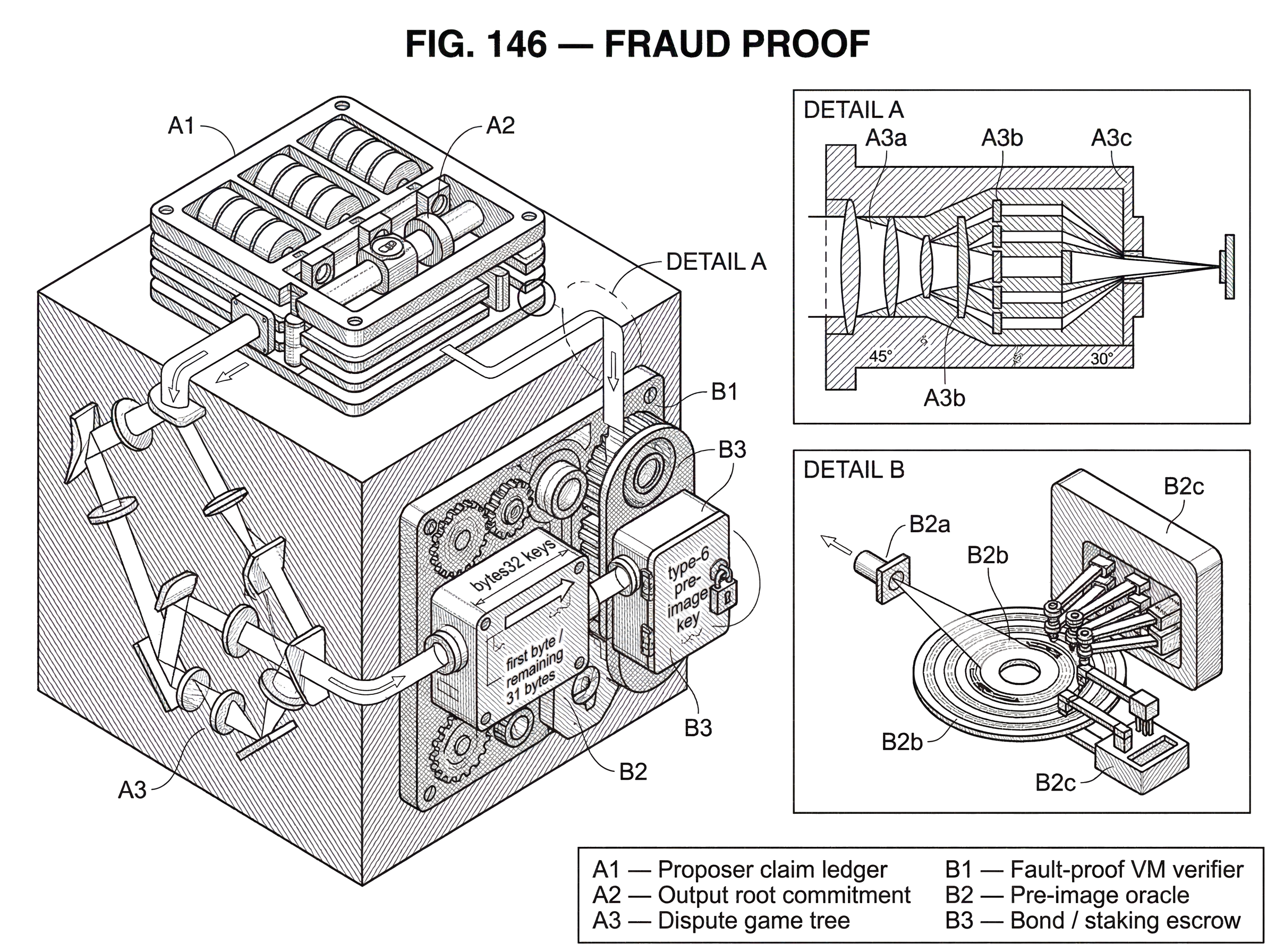
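The narrowing game can be sketched as a binary search over a trace. This is a minimal illustration, not any rollup's actual implementation: `find_first_divergence`, `honest_state`, and `claimed_state` are hypothetical names, and the "states" are plain integers standing in for state commitments. The only assumption is that the two parties agree at the start of the trace and disagree at the end.

```python
from typing import Callable, Tuple


def find_first_divergence(
    n_steps: int,
    honest_state: Callable[[int], int],
    claimed_state: Callable[[int], int],
) -> Tuple[int, int]:
    """Bisect a trace of n_steps steps.

    Returns (i, rounds) where the parties agree on the state at index i,
    disagree on the state at index i + 1, and `rounds` is the number of
    bisection rounds that were needed.
    """
    lo, hi = 0, n_steps  # invariant: agree at lo, disagree at hi
    if honest_state(lo) != claimed_state(lo):
        raise ValueError("parties must agree on the starting state")
    if honest_state(hi) == claimed_state(hi):
        raise ValueError("nothing to dispute: final states match")

    rounds = 0
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if honest_state(mid) == claimed_state(mid):
            lo = mid  # disagreement lies in the second half
        else:
            hi = mid  # disagreement lies in the first half
        rounds += 1
    return lo, rounds


# Toy trace: the claimed trace goes wrong from index 700 onward.
honest = lambda i: 3 * i
claimed = lambda i: 3 * i if i < 700 else 3 * i + 1

step_index, rounds = find_first_divergence(1024, honest, claimed)
print(step_index, rounds)
```

For a trace of 1024 steps the search needs 10 rounds, which is why the number of onchain moves grows with the logarithm of the trace length rather than with the length itself.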

This process is usually called an interactive dispute game or fault dispute game. In the OP Stack specification, a fault proof is described as having three components: a Program, a VM, and an Interactive Dispute Game. That decomposition is useful because it separates roles that are easy to blur together.

The Program is the deterministic logic that reproduces the disputed rollup computation from agreed inputs. The VM is the machine model in which that program is executed and, crucially, the thing that can verify a single disputed step onchain. The dispute game is the protocol that moves parties from a broad disagreement to that single step. The system works because these parts fit together: a deterministic program, a verifiable machine, and a challenge protocol that makes verification affordable.

What does a fraud proof actually disprove onchain?

In an optimistic rollup, what gets posted to L1 is usually not the entire L2 state in raw form, but a compact commitment to it. In OP Stack terminology, disputes begin around an L2 output root and later, deeper in the game, shift to commitments to fault-proof VM states at specific instruction points. The point of a fraud proof is not to reveal every byte of the state immediately. It is to show that some previously posted commitment could not have come from correct execution.

That distinction matters. A fraud proof is not usually a standalone artifact that says “here is the invalid transaction.” It is a protocol for falsifying a claim about a state transition. The claim might be “block n transitions to output root R,” and the challenge is to show that correct execution from the agreed predecessor state does not lead there.

This is why commitments and Merkle-style state representations are so common around fraud-proof designs. The system needs a compact way to commit to large states, memory contents, or traces while later revealing only the small parts needed for a dispute. Asterisc, for example, describes producing commitments to memory, registers, CSR state, and overall VM state, then re-emulating the disputed step inside the EVM to determine the correct result. That is a concrete example of the general pattern: commit broadly, reveal locally.
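"Commit broadly, reveal locally" can be illustrated with a small Merkle tree. This is a generic sketch, not the layout used by Asterisc or any specific VM: the hash function (SHA-256), the domain-separation prefixes, and the helper names are all assumptions chosen for the example. A single 32-byte root commits to the whole memory, and a short list of sibling hashes is enough to prove one word.

```python
import hashlib
from typing import List


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaf_hashes(leaves: List[bytes]) -> List[bytes]:
    n = len(leaves)
    if n == 0 or (n & (n - 1)) != 0:
        raise ValueError("this sketch needs a power-of-two number of leaves")
    # Prefix 0x00 for leaves and 0x01 for inner nodes (illustrative choice).
    return [_h(b"\x00" + leaf) for leaf in leaves]


def merkle_root(leaves: List[bytes]) -> bytes:
    level = _leaf_hashes(leaves)
    while len(level) > 1:
        level = [_h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(leaves: List[bytes], index: int) -> List[bytes]:
    """Collect the sibling hash at every level, from leaf up to the root."""
    level = _leaf_hashes(leaves)
    proof = []
    while len(level) > 1:
        proof.append(level[index ^ 1])
        level = [_h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify(root: bytes, leaf: bytes, index: int, proof: List[bytes]) -> bool:
    """Recompute the root from one leaf and its sibling path."""
    node = _h(b"\x00" + leaf)
    for sibling in proof:
        if index % 2 == 0:
            node = _h(b"\x01" + node + sibling)
        else:
            node = _h(b"\x01" + sibling + node)
        index //= 2
    return node == root


# Commit to eight memory words, then reveal only word 5.
memory = [i.to_bytes(4, "big") for i in range(8)]
root = merkle_root(memory)
proof = merkle_proof(memory, 5)
print(len(proof), verify(root, memory[5], 5, proof))
```

The verifier never sees the other seven words. It only needs the root it already holds, the revealed word, and three sibling hashes.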

How does a fraud-proof dispute proceed step-by-step?

| Path | Who acts | Onchain cost | Timeframe | Outcome |
|---|---|---|---|---|
| Uncontested claim | Proposer only (posts the claim) | Minimal gas | Challenge window duration | Claim finalizes when the window closes |
| Interactive dispute | Challenger opens game | Moderate gas per move | Days to weeks typical | Trace bisected toward fault |
| Max-depth onchain step | Settlement chain executes step | High single-step gas | Bounded single-step time | Definitive accept or reject |

The high-level mechanism has a consistent shape across optimistic systems, even though implementation details differ.

A proposer posts a claim about L2 execution to the settlement layer. That claim is accepted provisionally, not finally. During a dispute window, another participant can challenge it by staking a bond and opening a dispute game. The game then walks down a tree of increasingly specific claims. At higher levels, the claims may refer to coarse checkpoints such as output roots over portions of execution. At lower levels, they refer to machine states in the execution trace. At the bottom, the dispute becomes a question about a single VM transition.

The OP Stack’s Fault Dispute Game makes this explicit. It bisects first over output roots and then, below a split depth, over the execution trace of the fault-proof VM, until the game reaches its maximum depth. At that maximum depth, the protocol resolves the dispute by performing an onchain VM step through a fault-proof processor. The provided pre-state and proof data are used to compute the post-state, and if that post-state does not match the challenged claim, the challenge succeeds.
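The two-phase bisection can be pictured as a simple rule on tree depth. The sketch below is an illustration only: the function names and the depth values are hypothetical, and treating the split depth itself as the last output-root level is an assumption about where the boundary sits.

```python
def claim_kind(depth: int, split_depth: int, max_depth: int) -> str:
    """Classify what a claim at a given depth commits to."""
    if not 0 <= depth <= max_depth:
        raise ValueError("depth outside the game tree")
    if depth <= split_depth:
        return "output_root"  # coarse checkpoints over L2 execution
    return "vm_state"         # commitments to fault-proof VM states


def resolved_by_step(depth: int, max_depth: int) -> bool:
    """Only claims at the maximum depth are settled by an onchain VM step."""
    return depth == max_depth


# Hypothetical small game: output roots down to depth 3, trace down to depth 8.
SPLIT_DEPTH, MAX_DEPTH = 3, 8
for d in (0, 3, 4, 8):
    print(d, claim_kind(d, SPLIT_DEPTH, MAX_DEPTH), resolved_by_step(d, MAX_DEPTH))
```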

That sounds abstract, so it helps to walk through a worked example.

Imagine a sequencer claims that after processing a batch of L2 transactions, the rollup reaches state commitment R_final. A challenger recomputes the batch and gets a different result. They open a dispute game. At first, neither side needs to put the whole computation onchain. Instead, they commit to intermediate checkpoints in the trace. Suppose the disagreement is localized to the second half of execution. The first half is now irrelevant. They bisect the second half and discover the error lies in its first quarter. Then they bisect again, narrowing from a quarter of the trace to an eighth, then to a handful of steps, and eventually to exactly one instruction.

Now the argument is no longer “the batch was invalid.” It is “from machine state S_i, executing the next instruction should produce S_i+1_true, but the claim committed to S_i+1_false.” The onchain verifier receives the pre-state, the proof material needed for that step, and the disputed post-state claim. It executes the one-step transition function. If the result matches the challenger’s position rather than the proposer’s claim, the fraudulent claim is defeated. The entire broad accusation collapses to one falsified step.
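The final check can be sketched with a toy machine. Everything here is hypothetical: the two-instruction VM, the `State` fields, and the SHA-256 commitment are stand-ins chosen to keep the example short, and real fault-proof VMs have far richer state. The shape of the check is the point: confirm the pre-state matches the agreed commitment, run exactly one step, and compare the result with the disputed claim.

```python
import hashlib
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class State:
    pc: int   # index of the next instruction
    acc: int  # single accumulator register

    def commit(self) -> bytes:
        return hashlib.sha256(f"{self.pc}:{self.acc}".encode()).digest()


Program = Tuple[Tuple[str, int], ...]


def step(state: State, program: Program) -> State:
    """Deterministic one-step transition function of the toy VM."""
    op, arg = program[state.pc]
    if op == "ADD":
        acc = state.acc + arg
    elif op == "MUL":
        acc = state.acc * arg
    else:
        raise ValueError(f"unknown instruction {op}")
    return State(pc=state.pc + 1, acc=acc)


def challenge_succeeds(
    pre: State,
    agreed_pre_commit: bytes,
    claimed_post_commit: bytes,
    program: Program,
) -> bool:
    """True if executing one step from the agreed pre-state contradicts the claim."""
    if pre.commit() != agreed_pre_commit:
        raise ValueError("supplied pre-state does not match the agreed commitment")
    return step(pre, program).commit() != claimed_post_commit


program: Program = (("ADD", 2), ("MUL", 3), ("ADD", 1))
pre = State(pc=1, acc=2)              # both sides agree on this state
true_post = State(pc=2, acc=6)        # 2 * 3
false_post = State(pc=2, acc=7)       # what the faulty claim committed to

print(challenge_succeeds(pre, pre.commit(), false_post.commit(), program))
print(challenge_succeeds(pre, pre.commit(), true_post.commit(), program))
```

A false claim is defeated by the first call, while a correct claim survives the second. The verifier never executes more than one instruction.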

This is the deep reason fraud proofs scale: the expensive part of execution stays offchain unless contested, and even when contested the onchain cost is forced toward a tiny base case rather than full re-execution.

Why do fraud proofs require a single-step verifiable VM?

| VM type | Determinism | Onchain verifier cost | Tooling maturity | Best fit |
|---|---|---|---|---|
| EVM-mirror | Deterministic | Moderate to high | Mature Solidity/Yul tooling | EVM-native rollups and compatibility |
| RISC-V (Asterisc) | Deterministic | Low to medium per step | Growing (rvgo + rvsol) | General-purpose proofs, small verifier |
| Custom FPVM (Cannon) | Deterministic | Optimized low cost | Specialized tooling | Maximize onchain verification efficiency |

A common misunderstanding is to think of the VM as just an implementation detail. It is more central than that. Fraud proofs require a machine model whose transitions are deterministic, replayable, and cheap enough to verify one step at a time on the settlement layer.

In the OP Stack spec, a fault-proof VM must provide three things: a smart contract that verifies a single execution-trace step, a CLI tool that generates a proof for a single step, and a CLI tool that computes VM state roots at arbitrary points in the trace. Those requirements reveal the true job of the VM. It is not merely “the computer that runs the rollup.” It is the bridge between a large offchain computation and a small onchain adjudication.

This is also why different fraud-proof VMs can coexist. Cannon and Asterisc are examples of different VM implementations in the OP ecosystem. Asterisc uses a RISC-V design and mirrors its slow-mode step in Solidity/Yul for onchain replay. That diversity is not cosmetic. It reflects a broader design goal: the dispute protocol can remain conceptually stable while the execution environment used for proofs evolves.

The analogy here is a courtroom that does not rehear an entire company’s operations, but demands a very specific ledger entry and the exact rule used to compute it. The analogy helps explain why a single-step verifier is enough. Where it fails is that real courts rely on human interpretation, while the fraud-proof VM relies on strict deterministic execution.

How does a pre-image oracle supply data for fraud-proof verification?

A fraud-proof VM cannot verify even a single step if it lacks access to the data that step depends on. But simply letting the program read arbitrary external state would make verification ambiguous and hard to audit. That is why OP Stack’s design routes Program–VM communication through a single narrow interface: the pre-image oracle.

The spec is explicit that the pre-image oracle is the only form of communication between the Program and the VM. Data is requested by typed bytes32 keys whose first byte identifies the key type and whose remaining 31 bytes identify the requested pre-image. The key-type scheme distinguishes, among other things, local keys, global Keccak-256 keys, SHA-256 keys, EIP-4844 point-evaluation keys, and precompile-result keys.
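The key layout can be sketched for one key type. This example handles only SHA-256 keys because Python's standard library has no Keccak-256. The type-byte value `4` and the rule of overwriting the first digest byte with the type are my reading of the OP Stack spec and should be treated as assumptions to verify against it. The `PreimageOracle` class is a hypothetical in-memory stand-in, not the real oracle contract or host.

```python
import hashlib
from typing import Dict

SHA256_KEY_TYPE = 4  # assumed type byte for SHA-256 pre-image keys


def sha256_preimage_key(preimage: bytes) -> bytes:
    """Build a 32-byte key: 1 type byte followed by the last 31 digest bytes."""
    digest = hashlib.sha256(preimage).digest()
    return bytes([SHA256_KEY_TYPE]) + digest[1:]


class PreimageOracle:
    """Hypothetical in-memory oracle: data can only be fetched by typed key."""

    def __init__(self) -> None:
        self._store: Dict[bytes, bytes] = {}

    def load(self, preimage: bytes) -> bytes:
        key = sha256_preimage_key(preimage)
        self._store[key] = preimage
        return key

    def read(self, key: bytes) -> bytes:
        if len(key) != 32:
            raise ValueError("keys are exactly 32 bytes")
        if key[0] != SHA256_KEY_TYPE:
            raise ValueError("this sketch only supports SHA-256 keys")
        return self._store[key]  # KeyError if the pre-image was never loaded


oracle = PreimageOracle()
key = oracle.load(b"batch data posted to L1")
print(key[0], len(key), oracle.read(key))
```

Because the key is derived from the data's hash, a reader can check that whatever the oracle returns really is the pre-image it asked for.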

This is an important architectural choice because it turns “whatever data the proving program might need” into a constrained retrieval model. The program does not get ambient access to the world. It asks for specific pre-images corresponding to previously committed data. That makes the proof environment more stateless and easier to reason about.

The fault-proof program itself is described as reproducing L2 state by applying L1 data, retrieved through this oracle, to prior agreed L2 history. Its structure has a prologue, main content, and epilogue: load the minimal bootstrapping inputs, process the disputed transition, and verify the resulting claim. The essential point is that the correctness check is framed as a pure function of committed inputs, not as an appeal to hidden offchain context.

This narrow input surface also enables acceleration tricks. The OP spec describes precompile accelerators for cases where directly executing expensive precompiles in the fault-proof VM would be impractical. In that design, the program hints the host and later retrieves the precompile result through a type-6 pre-image key, which includes whether the precompile reverted. The mechanism preserves the proof framework while avoiding prohibitively costly emulation of certain operations.

How do timing rules and clocks affect fraud-proof disputes and finality?

Another common misunderstanding is to imagine a fraud proof as a single packet of evidence posted once. In many modern optimistic systems, especially interactive ones, the proof is better understood as a timed protocol. The dispute unfolds over moves, and those moves are constrained by clocks and bonds.

In the OP Fault Dispute Game, claims are arranged in a game tree. Players make attack and defend moves, each adding a new claim at a deeper position in the tree. Every claim has a clock. The protocol uses per-claim chess-clock logic with a maximum duration and extension rules, so one side cannot stall forever and honest challengers are protected from being timed out unfairly near the end of a turn.
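Chess-clock accounting can be sketched as follows. This is a simplified model under stated assumptions: a new claim inherits the time already used by its grandparent (the previous claim from the same side) and is additionally charged for the time elapsed since its parent was posted. The `MAX_CLOCK` value and all names are hypothetical, and the extension rules are omitted entirely.

```python
from dataclasses import dataclass
from typing import Optional

MAX_CLOCK = 100  # hypothetical per-side time budget, in seconds


@dataclass
class Claim:
    parent: Optional["Claim"]
    duration: int   # total time used so far by the side that made this claim
    timestamp: int  # when this claim was posted


def make_move(parent: Claim, now: int) -> Claim:
    """Post a counter-claim against `parent`, charging the mover's clock."""
    grandparent = parent.parent
    inherited = grandparent.duration if grandparent is not None else 0
    used = inherited + (now - parent.timestamp)
    if used > MAX_CLOCK:
        raise TimeoutError("the mover's clock has run out")
    return Claim(parent=parent, duration=used, timestamp=now)


root = Claim(parent=None, duration=0, timestamp=0)  # proposer's root claim
a = make_move(root, now=10)  # challenger waited 10s
b = make_move(a, now=30)     # proposer waited 20s
c = make_move(b, now=35)     # challenger: 10s inherited + 5s
print(a.duration, b.duration, c.duration)
```

Each side's time accumulates only while it is that side's turn to respond, so a slow opponent burns its own budget rather than the honest party's.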

These timing rules are not peripheral. They are part of the security model. Fraud proofs only protect the rollup if there is enough time for an honest participant to notice bad claims, gather the relevant L1 data, and respond. But they must also terminate. A dispute protocol with unbounded delay is vulnerable to griefing even if its final correctness is sound in principle.

This is one reason newer dispute designs emphasize bounded resolution. Offchain Labs’ BOLD protocol for Arbitrum is framed around exactly this point: permissionless validation requires not just correctness in the limit, but a fixed upper bound on confirmation delays and resistance to denial-of-service by malicious disputers. The important takeaway is not that all fraud-proof systems use the same game, because they do not. It is that once you rely on post hoc challenges, liveness becomes part of the proving problem.

How do bonds and incentives ensure challenges happen in fraud-proof systems?

Fraud proofs are sometimes explained as if logic alone secures the rollup. In reality, there is also an economic layer. Someone must be willing to monitor claims, challenge invalid ones, and spend gas participating in disputes. If honest participation is too expensive or insufficiently rewarded, the fraud-proof mechanism may exist on paper but fail in practice.

This is why dispute games use bonds. In OP Stack’s design, participation in the Fault Dispute Game requires bonded claims. Bonds serve two roles at once. They deter frivolous or dishonest claims by forcing participants to post collateral, and they create a pool from which successful challengers can be rewarded. At maximum game depth, where the single-step VM adjudication happens, the relevant bond can go to the account that successfully called step().
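The two roles of a bond can be illustrated with a deliberately simplified payout rule. The rule used here is an assumption made for the example, not the spec's resolution algorithm: a countered claim's bond goes to whoever countered it, and an uncountered claim's bond returns to its claimant. Names and amounts are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class BondedClaim:
    claimant: str
    bond: int
    countered_by: Optional[str] = None  # account whose counter was upheld


def distribute_bonds(claims: List[BondedClaim]) -> Dict[str, int]:
    """Pay each bond to the counterer if the claim lost, else back to the claimant."""
    payouts: Dict[str, int] = defaultdict(int)
    for claim in claims:
        recipient = claim.countered_by if claim.countered_by is not None else claim.claimant
        payouts[recipient] += claim.bond
    return dict(payouts)


claims = [
    BondedClaim("proposer", bond=8, countered_by="challenger"),
    BondedClaim("challenger", bond=10),                       # never countered
    BondedClaim("proposer", bond=12, countered_by="stepper"), # lost at the step
]
payouts = distribute_bonds(claims)
print(payouts)
```

Under this rule total collateral is conserved: the dishonest side's bonds fund the rewards, and honest participants get their own collateral back.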

But bond design is subtle. Optimism’s writing on incentives emphasizes two goals: it must be worthwhile to act honestly, and worthwhile to participate at all. Those are not identical. A protocol can be logically correct yet economically brittle if gas costs, bond sizes, or payout mechanics make honest defense unattractive.

This is not merely theoretical. The OP materials note a collateralization problem: if bond requirements and gas volatility are not calibrated carefully, dishonest actors who can outspend honest ones may leave bad claims uncontested. Research has also highlighted broader incentive pathologies in dispute games, including cases where winning challengers may still fail to earn an appropriate profit. So the underlying mechanism of fraud proofs depends on two layers of assumptions: deterministic computation, and enough honest, economically motivated participation to trigger the mechanism when needed.

What are the main failure modes and assumptions of fraud proofs?

The strongest statement fraud proofs can support is conditional: if the system’s assumptions hold, then invalid state transitions can be rejected. The assumptions matter.

The first assumption is determinism. The disputed computation must have a uniquely correct result from the agreed inputs. If execution depends on ambiguous external state, inconsistent software behavior, or nondeterministic effects, interactive dispute resolution stops being crisp.

The second is data availability. Challengers need the relevant inputs and state witnesses to reconstruct the disputed transition. In the OP design, the pre-image oracle and related mechanisms formalize how needed data is served. If critical data is unavailable, an honest challenger may know a claim is wrong in principle but still fail to produce the required onchain response.

The third is timely honest participation. Fraud proofs are reactive. A bad claim that nobody challenges on time may still finalize. This is the defining trade-off against validity-proof systems, which prove correctness before acceptance rather than relying on watchers to object afterward.

The fourth is economic robustness. Incentive failures do not necessarily make the computation model unsound, but they can make the practical defense of the system unreliable. The FDG spec itself notes edge cases such as freeloader claims, which do not change overall global resolution if ignored but can distort who receives bond payouts unless honest challengers counter them. That kind of issue illustrates a broader point: the game can be logically correct while still having incentive wrinkles that matter in production.

Fraud proofs vs validity proofs: trade-offs for rollup finality

| Approach | When correctness established | Upfront cost | Watcher requirement | Finality speed | Best for |
|---|---|---|---|---|---|
| Fraud proof | Post-acceptance (challenge window) | Low in uncontested cases | Necessary (watchers/challengers) | Slower (waiting window) | High-throughput, low-cost common path |
| Validity proof | Pre-acceptance (cryptographic proof) | High (proof generation) | Not required | Faster once verified | Strong finality, watcher-light deployments |

Fraud proofs and validity proofs both answer the same scaling question: how can L1 trust an L2 result without re-executing everything itself? They differ in when and how correctness is established.

A validity proof says, “here is cryptographic evidence that this state transition is correct before you accept it.” A fraud proof says, “accept the claim provisionally, but leave a window during which anyone can force a precise check if it is wrong.” The first pays more effort upfront to remove the need for a challenge game. The second keeps the common path lighter but introduces challenge windows, watcher requirements, and dispute machinery.

Neither approach is “just better” in every dimension. Fraud proofs can be simpler in some execution settings, easier to align with existing software stacks, and cheaper in the uncontested case. Validity proofs reduce reliance on active challengers and typically support faster finality once verified, but they impose a different proving burden. The right comparison is not moral but mechanical: one is proactive verification, the other reactive falsification.

A short conclusion

A fraud proof is best understood not as a magic proof object, but as a system for turning a big disputed computation into a tiny verifiable contradiction. Optimistic rollups rely on that system because verifying everything on L1 would cost too much, while trusting offchain execution blindly would give up too much security.

The memorable version is this: **optimistic rollups are fast because they assume claims are valid, and safe because fraud proofs give anyone a way to force the system to check the exact point where a false claim breaks.** Everything else (dispute trees, single-step VMs, pre-image oracles, clocks, bonds) exists to make that sentence true in practice.

How does this part of the crypto stack affect real-world usage?

Fraud proofs change how and when an L2 state becomes final; they let chains accept updates quickly but require watchers and dispute windows to catch mistakes. Before you fund or trade assets on a rollup that uses fraud proofs, run a short operational checklist and then use Cube Exchange to research markets and manage positions while you monitor those rollup parameters.

- Look up the rollup's dispute window and withdrawal delay (expressed in blocks or hours) and record both values.

- Verify the rollup's data-availability method (on-chain calldata, separate DA layer, or compressed commitments) and confirm you can access the historical calldata or output roots needed to reconstruct disputes.

- Check whether the rollup publishes single-step VM verifiers or follows a known spec (e.g., OP Stack Fault Dispute Game) so onchain adjudication is deterministic.

- Review economic parameters: challenger/proposer bond sizes, bond slashing rules, and reward mechanics; note whether bonds are large enough to deter spam but not so large they discourage honest challengers.

- If moving meaningful funds, stagger transfers and set alerts (block explorers, RPC subscribe, or third-party watchers) for output-root updates and dispute events before completing large deposits or withdrawals.

Frequently Asked Questions

How does a dispute game reduce a disputed execution to a single step?

The dispute game bisects the execution trace: challengers and proposers repeatedly narrow which half contains the disagreement until it isolates a single machine instruction from a known pre-state, and the settlement chain then verifies that one-step transition onchain.

What is the pre-image oracle and why does it matter?

The pre-image oracle is the narrow, typed interface the Program uses to fetch any external inputs needed to reconstruct a disputed step, turning arbitrary ambient state access into constrained, auditable lookups so the onchain verifier can deterministically re-evaluate the step.

Why do dispute games have clocks?

Dispute protocols are timed games: each claim has a clock and chess-clock rules so neither side can stall indefinitely, and those timing windows are part of the liveness/security model because honest watchers must have enough time to observe, fetch data, and respond.

Do fraud proofs guarantee that an invalid claim can never finalize?

No. Fraud proofs are reactive and require timely, economically motivated watchers to challenge bad claims; if nobody monitors or challenges within the dispute window, an invalid claim can finalize despite being refutable in principle.

How do fraud proofs differ from validity proofs?

Fraud proofs accept state updates provisionally and rely on post hoc challenges to catch errors, while validity proofs attach cryptographic evidence of correctness up front; fraud proofs are cheaper in the uncontested path but introduce challenge windows and watcher/incentive requirements, whereas validity proofs reduce reliance on active challengers at the cost of higher upfront proving work.

What assumptions does the fraud-proof model depend on?

The model requires four key assumptions: deterministic execution, sufficient data availability to reconstruct disputed steps, timely honest participation to raise challenges, and economic incentive robustness; violations of any of these (e.g., unavailable inputs or undercollateralized bonds) weaken practical security.

Can different VMs be used with the same dispute protocol?

Yes. The dispute protocol can be VM-agnostic in design and different implementations can plug into the same game; the article cites Cannon and Asterisc as example VMs used within the OP ecosystem to implement the required single-step verification model.

What role do bonds play, and where can they fall short?

Bonds make dishonest or frivolous claims costly and create reward pools for successful challengers, but designs like 'Big Bonds™' trade capital efficiency for robustness and the spec warns of a collateralization problem where poorly calibrated bonds or volatile gas can leave invalid claims uncontested.

What data has to be revealed onchain during a dispute?

Disputes start from compact commitments (e.g., output roots or state roots) and only require revealing small witnesses: the pre-state, the minimal proof material for the disputed instruction (registers, memory slices, etc.), and the claimed post-state so the onchain step verifier can recompute the correct next state without revealing the entire trace.
