What Is a Risk Engine in Derivatives Trading?

Learn what a risk engine is in derivatives trading, how it calculates margin and liquidations, and why it matters for market stability.

Introduction

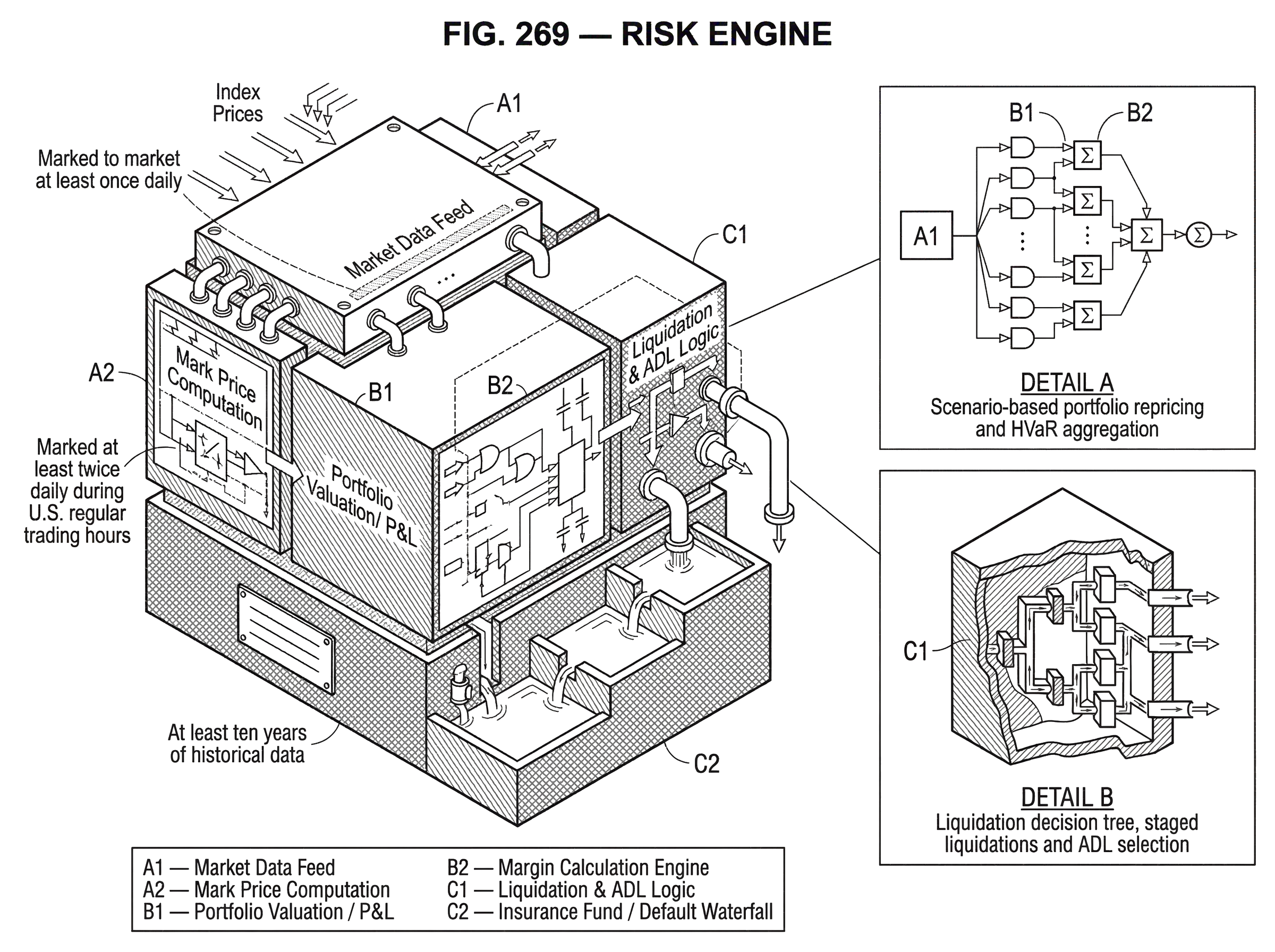

A risk engine is the system a derivatives venue or clearinghouse uses to measure portfolio risk and turn that measurement into action. In ordinary markets, people often notice the visible parts first: leverage limits, margin requirements, liquidation warnings, insurance funds. But those are outputs. The thing producing them is the risk engine.

Why does this matter? Because a derivatives market makes promises about future cash flows. If prices move sharply, one side of those promises loses money faster than the other side can be paid. A market therefore needs a disciplined way to answer a hard question continuously: *given the positions traders hold right now, under plausible price moves, how much collateral is enough, and what happens if it is not enough?* A risk engine is the mechanism for answering that question in real time or near real time.

At first glance, this can sound like a calculator with a few thresholds. It is not. A production risk engine sits at the intersection of pricing, market data, margin methodology, liquidation logic, collateral management, and default handling. At a futures clearinghouse, that might mean a portfolio margin model such as CME SPAN, which CME describes as a market-simulation-based Value at Risk system for portfolio risk assessment. In uncleared OTC derivatives, it may mean a standardized initial margin engine such as ISDA SIMM, which uses prescribed sensitivities, weights, correlations, and concentration rules. On crypto derivatives venues, the same basic function appears in a different operational form: mark prices, maintenance margin checks, staged liquidations, insurance fund usage, and, if losses outrun those defenses, auto-deleveraging.

The unifying idea is simple: a risk engine is the institution’s way of converting uncertain future losses into present collateral and control decisions. Everything else follows from that.

How does a risk engine prevent leverage from becoming runaway credit?

A derivatives trade creates exposure before final settlement. If a trader posts too little collateral relative to the risk of the position, the venue or clearinghouse is effectively extending credit. That credit can become dangerous quickly in leveraged products because losses grow with position size while posted margin is only a fraction of notional exposure. So the first job of a risk engine is not abstract risk reporting. It is loss containment.

Here is the mechanism. The engine takes a portfolio, asks how its value changes under adverse moves, compares those losses with available collateral, and then decides whether the account remains safe, needs more margin, or must be reduced. If this sounds close to Value at Risk, that is because many risk engines are built around VaR-like ideas. CME’s SPAN is explicitly a market-simulation-based VaR framework at the portfolio level. CME’s newer SPAN 2 is built on a historical VaR framework and then adds stress, liquidity, and concentration components. The common structure is not accidental: a single position is rarely the right unit of risk. What matters is the portfolio, because gains in one place may offset losses in another, while concentrated or illiquid exposures may be much more dangerous than their headline size suggests.

This explains an important tension in risk-engine design. If the engine is too conservative, it demands excessive collateral and makes trading unnecessarily expensive. If it is too permissive, the market becomes fragile and losses are pushed into insurance funds, mutualized default resources, or other traders. CME’s summary of SPAN states this tradeoff directly: risk-based margining aims to provide effective coverage while preserving efficient use of capital. That balance (safety versus capital efficiency) is not a side issue. It is the core engineering constraint.

How do risk engines turn loss estimates into margin calls and liquidations?

The easiest way to understand a risk engine is as a loop with three stages.

First, it marks positions to a reference price and computes current unrealized profit and loss. This matters because collateral adequacy depends on where the market is now, not where a trader entered. In crypto derivatives, the choice of reference price is especially important. Binance’s clearing rules define Mark Price as a computed price that considers inputs such as last trade, top-of-book bid and ask, Funding Rates, and an index price, with contract-specific details in the specifications. That is not cosmetic. If liquidation triggers were based only on the last traded price, a thin or manipulated print could force needless liquidations. A mark-price construction tries to produce a more stable reference for risk decisions.
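A minimal sketch of why a composite reference helps, assuming a hypothetical median-of-three construction. This is not Binance's published formula: the function name, the choice of inputs, and the omission of funding adjustments are all illustrative assumptions.

```python
from statistics import median


def mark_price(last_trade: float, best_bid: float, best_ask: float, index_price: float) -> float:
    """Hypothetical mark: the median of last trade, book mid, and index price."""
    book_mid = (best_bid + best_ask) / 2
    return median([last_trade, book_mid, index_price])


# Book and index sit near 100; in the second case one thin print trades at 90.
calm = mark_price(last_trade=100.1, best_bid=99.9, best_ask=100.1, index_price=100.0)
spiked = mark_price(last_trade=90.0, best_bid=99.9, best_ask=100.1, index_price=100.0)
print(calm, spiked)  # the outlier print does not drag the mark down to 90
```

Because a median ignores a single extreme input, one distorted print cannot move the reference on its own. A real venue would specify its inputs and weights per contract.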

Second, it projects adverse outcomes. Different engines do this differently. Some shock underlying prices and implied volatilities across predefined scenarios, as SPAN does through scan risk arrays and related spread treatments. Some replay historical market moves and scale them for current volatility and correlations, as SPAN 2’s HVaR framework does. Some do not simulate full repricing scenarios at all, but instead aggregate standardized sensitivities, as SIMM does for delta, vega, curvature, basis, and concentration risk. These look different on the surface, but the purpose is the same: estimate how much the portfolio could lose over the risk horizon the institution cares about.

Third, it compares estimated loss to available resources and applies rules. Those resources may include posted collateral, variation margin already settled, excess account equity, insurance funds, guaranty funds, or mutualized default resources, depending on market structure. If resources cover the modeled loss with the required buffer, the account remains open. If not, the engine escalates: margin call, trading restriction, partial liquidation, full liquidation, or transfer into default management procedures.

That is the whole machine in abstract form. Most complexity comes from making each stage robust enough for real markets.
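The three stages can be sketched for a single linear position. Everything here is a simplifying assumption made for illustration: the `Account` fields, the single proportional shock, and the two thresholds are hypothetical and not any venue's actual rules.

```python
from dataclasses import dataclass


@dataclass
class Account:
    collateral: float   # posted collateral, in quote currency
    quantity: float     # signed position size (+ long, - short)
    entry_price: float


def evaluate(account: Account, mark: float, shock: float, maintenance_ratio: float) -> str:
    """One pass of the loop: mark, project an adverse move, compare, decide."""
    # Stage 1: mark the position to the reference price.
    unrealized = account.quantity * (mark - account.entry_price)
    equity = account.collateral + unrealized
    # Stage 2: project the loss from an adverse relative move of size `shock`.
    projected_loss = abs(account.quantity) * mark * shock
    # Stage 3: compare with resources and apply illustrative escalation rules.
    maintenance = abs(account.quantity) * mark * maintenance_ratio
    if equity < maintenance:
        return "liquidate"
    if equity < projected_loss:
        return "margin_call"
    return "ok"


acct = Account(collateral=1000.0, quantity=1.0, entry_price=10000.0)
for mark in (10000.0, 9400.0, 9050.0):
    print(mark, evaluate(acct, mark, shock=0.05, maintenance_ratio=0.01))
```

As the mark falls, the same account moves from safe, to under-margined against the projected loss, to below maintenance.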

Why do risk engines evaluate portfolio risk instead of checking each position independently?

A smart beginner often assumes risk is just leverage on each position. That is too crude. A trader long one oil future and short another related oil future is not exposed like someone outright long both. An options portfolio may look dangerous contract by contract while being much less risky once delta and volatility exposures net out. Conversely, two positions that appear diversified may become tightly correlated in stress.

This is why exchange-grade and clearing-grade risk engines are usually portfolio engines, not position limit checkers. CME describes SPAN as assessing risk on an overall portfolio basis and across combinations of futures, options, physicals, equities, and other instruments. The engine is trying to capture the fact that risk lives in the pattern of offsets and concentrations across the whole book.

But netting is only half the story. The other half is deciding which offsets deserve credit. SPAN’s design includes intra-commodity spreads, inter-commodity spreads, and other offset treatments because some positions genuinely hedge each other under plausible scenarios. Yet not every apparent hedge should receive full margin relief. If the historical or structural relationship can break, the engine has to limit how much offset it recognizes. The engine therefore embeds a view about which dependencies are robust enough to trust under stress.
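A rough illustration of limited offset credit, assuming a hypothetical `offset_credit` parameter between 0 and 1. This is not SPAN's spread-credit algorithm; it only shows how the recognized share of an offset changes the margin number.

```python
def standalone_margin(positions, scan_range):
    """Sum of per-position margins, ignoring all offsets.

    positions: list of (signed_quantity, price) pairs.
    """
    return sum(abs(qty) * price * scan_range for qty, price in positions)


def portfolio_margin(positions, scan_range, offset_credit):
    """Margin after granting partial relief on offsetting long and short risk."""
    long_risk = sum(qty * price * scan_range for qty, price in positions if qty > 0)
    short_risk = sum(-qty * price * scan_range for qty, price in positions if qty < 0)
    offset = min(long_risk, short_risk)
    # Each unit of recognized offset removes risk from both the long and short leg.
    return long_risk + short_risk - 2 * offset * offset_credit


# Long one oil future at 80, short a related one at 82, with a 10% scan range.
spread = [(1, 80.0), (-1, 82.0)]
print(standalone_margin(spread, 0.10))
print(portfolio_margin(spread, 0.10, offset_credit=0.75))
print(portfolio_margin(spread, 0.10, offset_credit=1.0))
```

Lowering `offset_credit` is the sketch's stand-in for distrusting a relationship that could break under stress.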

This is also where concentration enters. SPAN 2 adds a concentration charge for large positions above thresholds calibrated from recent average daily volume. The idea is straightforward. A position can be directionally hedged in theory and still be costly to close in practice if it is large relative to market depth. The engine is not only estimating mark-to-model loss. It is estimating the loss and friction of unwinding a real book in a real market.
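One way to picture a concentration add-on is as a charge on the part of a position that exceeds a multiple of average daily volume. The threshold multiple and charge rate below are hypothetical parameters, not CME's calibration.

```python
def concentration_charge(position: float, price: float, adv: float,
                         threshold_multiple: float, charge_rate: float) -> float:
    """Charge only the size above `threshold_multiple * adv` (all inputs assumed)."""
    excess = max(0.0, abs(position) - threshold_multiple * adv)
    return excess * price * charge_rate


# 500 contracts against an ADV of 1,000 and a threshold of 25% of ADV.
print(concentration_charge(500, price=80.0, adv=1000.0, threshold_multiple=0.25, charge_rate=0.02))
# A 100-contract position sits below the threshold and attracts no charge.
print(concentration_charge(100, price=80.0, adv=1000.0, threshold_multiple=0.25, charge_rate=0.02))
```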

How do margin engines calculate required collateral (SPAN, HVaR, SIMM)?

| Method | Inputs | Nonlinearity | Strength | Weakness | Best for |
|---|---|---|---|---|---|
| Scenario repricing | Full portfolio prices in shock scenarios | Yes, captures options and spreads | Intuitive for complex payoff profiles | Sensitive to chosen scenarios | Exchange/clearinghouse portfolios |
| Historical VaR + overlays | Long historical returns + stress scenarios | Partly, via scaled scenarios | Grounded in data; includes stress/liquidity | Depends on lookback and judgement | Products needing calibrated tail cover |
| Sensitivity (SIMM) | Standardized deltas/vegas/curvature | Limited for nonlinear payoffs | Interoperable and auditable | Less tailored to unique books | Bilateral uncleared OTC margining |

There are three broad design patterns visible in the supplied sources, and each reflects a different answer to the same problem.

The first is scenario-based portfolio repricing. SPAN is the clearest example. The portfolio is repriced across a family of market scenarios (scan risk arrays, extreme scenarios, and composite delta scenarios), and margin is based on the worst or otherwise prescribed loss after applying spread and offset rules.

This approach is intuitive because it asks a concrete question: if markets moved like this, what would the portfolio lose? Its strength is that it naturally captures nonlinear payoffs such as options. Its weakness is that scenario design matters enormously; if the chosen shocks miss a relevant risk, the output can look precise while being incomplete.
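A toy version of scenario repricing, assuming a portfolio of one future and one call option valued with a zero-rate Black-Scholes formula. The shock grid and the function names are illustrative assumptions and far simpler than SPAN's actual risk arrays.

```python
import math


def bs_call(spot: float, strike: float, vol: float, t: float) -> float:
    """Black-Scholes call value with zero interest rate and no dividends."""
    if t <= 0 or vol <= 0:
        return max(spot - strike, 0.0)
    sd = vol * math.sqrt(t)
    d1 = (math.log(spot / strike) + 0.5 * vol * vol * t) / sd
    d2 = d1 - sd
    cdf = lambda x: 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return spot * cdf(d1) - strike * cdf(d2)


def scenario_margin(futures_qty, call_qty, spot, strike, vol, t, price_shocks, vol_shocks):
    """Worst loss across the grid of relative price and volatility shocks."""
    base = futures_qty * spot + call_qty * bs_call(spot, strike, vol, t)
    worst = 0.0
    for dp in price_shocks:
        for dv in vol_shocks:
            shocked_spot, shocked_vol = spot * (1 + dp), vol * (1 + dv)
            value = futures_qty * shocked_spot + call_qty * bs_call(shocked_spot, strike, shocked_vol, t)
            worst = max(worst, base - value)
    return worst


prices = [-0.10, -0.05, 0.0, 0.05, 0.10]
vols = [-0.20, 0.0, 0.20]
print(scenario_margin(0, -1, 100.0, 100.0, 0.30, 0.25, prices, vols))  # short call
print(scenario_margin(0, 1, 100.0, 100.0, 0.30, 0.25, prices, vols))   # long call
```

The short call needs more margin than the long call under the same grid, which is the nonlinearity a linear leverage check would miss.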

The second is historical VaR plus overlays. SPAN 2 uses at least ten years of historical data to generate gain-and-loss scenarios, with volatility and correlation scaling, explicit treatment of seasonal effects, and an implied-volatility surface including skew for options. Then it combines historical risk with stress risk, liquidity, and concentration. CME summarizes the total portfolio margin formula as x × Historical Risk + (1 - x) × Stress Risk + Liquidity + Concentration, where x is a weight chosen by the methodology. This structure is revealing. Historical data provides a grounded base; stress scenarios broaden the model beyond the exact past; liquidity and concentration acknowledge that losses in default are not just mark-to-market losses but also include execution costs and market impact.
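The weighted structure can be written out directly. In this sketch the historical component is a plain empirical quantile of scenario losses, with none of the volatility or correlation scaling SPAN 2 applies; the inputs and the weight `x` are made-up numbers.

```python
import math


def historical_var(pnl_scenarios, confidence: float) -> float:
    """Empirical loss quantile of scenario P&L (unscaled, for illustration)."""
    losses = sorted(-pnl for pnl in pnl_scenarios)
    index = min(len(losses) - 1, math.ceil(confidence * len(losses)) - 1)
    return max(losses[index], 0.0)


def total_margin(historical: float, stress: float, liquidity: float,
                 concentration: float, x: float) -> float:
    """x * Historical Risk + (1 - x) * Stress Risk + Liquidity + Concentration."""
    return x * historical + (1 - x) * stress + liquidity + concentration


pnl = [-5.0, -3.0, -1.0, 0.0, 2.0, 4.0, 1.0, -2.0, 3.0, -4.0]
hist = historical_var(pnl, confidence=0.99)
print(hist, total_margin(hist, stress=8.0, liquidity=1.0, concentration=0.5, x=0.75))
```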

The third is sensitivity-based standardization. ISDA SIMM does not ask each firm to run its own unconstrained portfolio VaR for uncleared OTC initial margin. Instead, it defines the required inputs and aggregation rules. Sensitivities such as PV01 for interest rates, CS01 for credit spreads, and analogous delta, vega, and curvature measures are grouped by risk class and bucket, weighted, correlated, and aggregated according to a published specification. This gives market participants a common and auditable method. The tradeoff is that standardization gains interoperability and comparability by giving up some freedom to tailor the model to every portfolio’s nuances.
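The weight, correlate, and aggregate step within one bucket has the general form of a square root of a correlation-weighted sum. The sketch below uses a single assumed correlation for every pair and invented risk weights, and it leaves out SIMM's concentration factors and cross-bucket aggregation.

```python
import math


def bucket_margin(sensitivities, risk_weights, correlation: float) -> float:
    """sqrt(sum_i sum_j rho_ij * WS_i * WS_j), with WS_i = risk_weight_i * sensitivity_i."""
    weighted = [s * w for s, w in zip(sensitivities, risk_weights)]
    total = 0.0
    for i, wi in enumerate(weighted):
        for j, wj in enumerate(weighted):
            rho = 1.0 if i == j else correlation
            total += rho * wi * wj
    return math.sqrt(max(total, 0.0))


# Two opposite sensitivities: the higher the assumed correlation, the more they offset.
for rho in (0.0, 0.5, 1.0):
    print(rho, bucket_margin([100.0, -100.0], [1.0, 1.0], rho))
```

The correlation parameter decides how much credit an offsetting pair receives, which is why a published, shared set of parameters matters for comparability between firms.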

The underlying principle across all three is the same: turn a complicated portfolio into a loss number that is conservative enough to survive stress but structured enough to avoid charging collateral for risks that genuinely offset.

How does a sharp price move lead to staged liquidation or ADL on crypto venues?

| Path | Trigger condition | Engine action | Market impact | Typical use |
|---|---|---|---|---|
| Immediate liquidation | Maintenance margin breached | Close positions immediately | High instantaneous impact | Simple retail venues |
| Staged liquidation | Escalating shortfall detected | Partial reductions in steps | Lower peak impact, longer unwind | Venue with execution controls |
| Insurance fund + ADL | Losses exceed collateral + fund | Use fund; then auto-delever opposing accounts | Loss mutualisation; counterparty risk shift | Crypto venues with mutualised pools |

Imagine a trader holds a leveraged perpetual futures position on a crypto venue. The account has collateral, unrealized P&L, open orders, and one large directional position. The trader is not thinking about the risk engine, but the engine is constantly evaluating the account.

The market drops sharply. The venue does not immediately look only at the last traded price, because a single print may be noisy. Instead, it computes a mark price from a basket of inputs such as book quotes, index price, and funding-related adjustments, depending on the venue’s specification. That mark price reduces the account’s unrealized P&L. Under Binance’s clearing-rule definitions, Margin Balance is the value of margin in the account plus unrealized P&L, and Liquidation Price is the mark price at which margin balance falls below the margin requirement.
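Those two definitions can be turned into arithmetic for a single isolated linear position. The sketch assumes a flat maintenance ratio applied to notional at the mark price and ignores fees, funding, and tiered margin schedules, so it will not match any venue's displayed liquidation price exactly.

```python
def margin_balance(wallet: float, quantity: float, entry: float, mark: float) -> float:
    """Margin in the account plus unrealized P&L at the mark price."""
    return wallet + quantity * (mark - entry)


def liquidation_price(wallet: float, quantity: float, entry: float, maintenance_ratio: float) -> float:
    """Mark price at which margin balance equals maintenance_ratio * |quantity| * mark.

    Assumes a non-zero quantity; a non-positive result means the simplified
    model finds no reachable liquidation price.
    """
    return (quantity * entry - wallet) / (quantity - maintenance_ratio * abs(quantity))


long_liq = liquidation_price(wallet=10.0, quantity=1.0, entry=100.0, maintenance_ratio=0.05)
short_liq = liquidation_price(wallet=10.0, quantity=-1.0, entry=100.0, maintenance_ratio=0.05)
print(round(long_liq, 2), round(short_liq, 2))
```

The long position liquidates below entry and the short above it, and in both cases the margin balance at that price equals the maintenance requirement.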

Once the mark price moves far enough, the account is no longer adequately collateralized. The engine now has to decide what to do. On a simpler retail venue, that may mean direct liquidation once maintenance margin is breached. On a more layered system, it may mean staged liquidation. Binance’s rules explicitly define Stage 1 and Stage 2 liquidation. The exact algorithms live in the detailed rules and procedures, but the conceptual point is clear: liquidation itself is not always a single atomic event. A risk engine may reduce risk progressively to avoid unnecessary market impact.
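A hedged sketch of progressive reduction follows. It is not Binance's Stage 1 or Stage 2 procedure: the fixed step fraction, the assumption that each slice closes at the mark with no slippage, and the stopping rule are all illustrative.

```python
def staged_liquidation(equity: float, quantity: float, mark: float,
                       maintenance_ratio: float, step_fraction: float = 0.25):
    """Close fixed slices until equity covers maintenance on what remains.

    Closing at the mark leaves equity unchanged in this simplified model,
    so each step only lowers the maintenance requirement.
    """
    step = abs(quantity) * step_fraction
    remaining = abs(quantity)
    steps = []
    while remaining > 1e-12 and equity < remaining * mark * maintenance_ratio:
        closed = min(step, remaining)
        remaining -= closed
        steps.append(closed)
    return remaining, steps


# Equity of 30 against a 10-unit position at 100 with a 5% maintenance ratio.
print(staged_liquidation(equity=30.0, quantity=10.0, mark=100.0, maintenance_ratio=0.05))
```

Here the account keeps half its position instead of being closed out entirely, which is the point of staging.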

Suppose market conditions are disorderly and the liquidated account’s remaining losses exceed its own collateral. Then the next defense is typically an insurance fund. Bybit describes its insurance fund as a reserve used to cover losses beyond a liquidated position’s margin. If that fund is insufficient, the venue may invoke auto-deleveraging, selecting opposing traders’ positions to reduce system exposure. Bybit’s documentation says this selection is driven by an ADL ranking based on leveraged return, with the highest-ranked positions chosen first. In other words, the risk engine has moved from account-level risk control to system-level loss allocation.
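The escalation from fund to ADL can be sketched in two small steps. The ranking score used here, unrealized return multiplied by leverage, is a simplified reading of "leveraged return" and should be treated as an assumption, as should the tuple layout and trader names.

```python
def cover_loss(loss: float, insurance_fund: float):
    """Return (amount paid by the fund, loss still uncovered)."""
    fund_used = min(loss, insurance_fund)
    return fund_used, loss - fund_used


def adl_queue(opposing, size_to_close: float):
    """Pick opposing positions to reduce, highest assumed ranking score first.

    opposing: list of (trader, size, unrealized_return, leverage).
    """
    ranked = sorted(opposing, key=lambda p: p[2] * p[3], reverse=True)
    plan, remaining = [], size_to_close
    for trader, size, _, _ in ranked:
        if remaining <= 0:
            break
        closed = min(size, remaining)
        plan.append((trader, closed))
        remaining -= closed
    return plan


fund_used, uncovered = cover_loss(loss=50.0, insurance_fund=10.0)
book = [("a", 2.0, 0.5, 10.0), ("b", 5.0, 0.2, 5.0), ("c", 1.0, 0.9, 20.0)]
if uncovered > 0:
    print(fund_used, uncovered, adl_queue(book, size_to_close=3.0))
```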

This example shows why the risk engine cannot be separated from liquidation and ADL. Margin calculation is only the front half of the process. The back half is the decision tree for what happens when modeled protection is not enough.

Why do mark prices and settlement cadence change liquidation and credit exposure?

| Mark cadence | Exposure window | Operational demand | Effect on margin calls |
|---|---|---|---|
| Continuous / real‑time | Seconds to minutes | Very high | Fewer surprise calls; prompt settlement |
| Intraday (twice daily) | Hours | Moderate | Reduces buildup; needs intraday liquidity |
| Daily | One day | Lower | Higher unsecured intraday exposure |

A risk engine is not only a model. It is also a clock.

Two accounts with identical portfolios can face different outcomes depending on how often positions are marked and when funds actually move. CME’s PFMI disclosure states that positions are marked to market at least once daily for all products and at least twice daily for exchange-traded derivatives during U.S. regular trading hours, with continuous exposure monitoring around the clock. This matters because unsettled losses are credit exposure. Frequent variation settlement shrinks that exposure by moving cash from losers to winners sooner.

The mechanism is important. If losses are recognized but not settled quickly, the clearinghouse or venue is temporarily carrying more unsecured exposure. If settlement is frequent, the system needs more operational robustness and participant liquidity, but less hidden build-up of risk. So the cadence of marking and settlement is part of the risk engine’s design, not merely back-office plumbing.
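The effect of cadence can be seen by replaying one price path under different settlement intervals. The path and position below are invented, and the sketch assumes every settlement is paid in full and on time.

```python
def peak_unsettled_loss(prices, quantity: float, settle_every: int) -> float:
    """Largest loss accrued since the last settlement along the price path."""
    last_settled = prices[0]
    peak = 0.0
    for step, price in enumerate(prices[1:], start=1):
        unsettled = max(0.0, -quantity * (price - last_settled))
        peak = max(peak, unsettled)
        if step % settle_every == 0:
            last_settled = price  # variation margin moves; exposure resets
    return peak


path = [100.0, 98.0, 96.0, 94.0, 92.0]
for interval in (1, 2, 4):
    print(interval, peak_unsettled_loss(path, quantity=1.0, settle_every=interval))
```

The same long position on the same path carries a peak unsettled loss of 2, 4, or 8 depending only on how often cash moves.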

The same is true on-chain, though the failure modes differ. The dYdX chain incident review describes a case where an order-of-operations bug in collateral-pool transfer logic communicated a negative balance even though the relevant insurance fund had enough capital. That false signal triggered a protocol-level failsafe and halted the chain, which in turn paused liquidations and oracle updates. This is a striking example of a first-principles truth: risk logic is state-transition logic. If the sequencing of updates is wrong, the engine can make the wrong decision even when the economic resources are sufficient.
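A toy illustration of how sequencing alone can change the outcome is given below. It is not dYdX's code and does not reproduce the actual bug; the pool, the fund, and the `check_before_topup` flag are hypothetical stand-ins for the order in which a solvency check and a top-up are applied.

```python
def settle_loss(pool: float, fund: float, loss: float, check_before_topup: bool) -> str:
    """Apply a loss to a collateral pool, topping up from an insurance fund.

    With check_before_topup=True the solvency check reads the intermediate
    state, before the fund's transfer has been applied.
    """
    pool -= loss
    if check_before_topup and pool < 0:
        return "halt"  # false alarm if the fund could have covered the gap
    topup = min(fund, max(0.0, -pool))
    pool += topup
    fund -= topup
    return "halt" if pool < 0 else "ok"


# Same balances, same loss, different ordering of check and top-up.
print(settle_loss(pool=20.0, fund=100.0, loss=50.0, check_before_topup=True))
print(settle_loss(pool=20.0, fund=100.0, loss=50.0, check_before_topup=False))
```

The economic resources are identical in both calls; only the moment at which the state is inspected differs.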

What protections and add‑ons sit beyond initial margin (stress, liquidity, concentration, default waterfalls)?

It is tempting to think of a risk engine as “the thing that sets initial margin.” That is too narrow. Mature systems treat initial margin as one layer in a broader defense stack.

SPAN 2 makes this explicit by separating historical risk, stress risk, liquidity charge, and concentration charge. Each term exists because a different assumption can fail. Historical VaR can understate structural breaks or rare tail events, so stress scenarios are added. Mark-to-market loss can understate actual close-out cost, so liquidity charges are added. A portfolio can look diversified in percentages but still be too large for the market to absorb cheaply, so concentration charges are added.

Clearinghouses then add another layer: default waterfalls. LCH SA’s default management material describes a sequence in which the defaulter’s own variation and initial margin is used first, then the defaulter’s default fund contribution, then the clearinghouse’s own dedicated contribution, then non-defaulting members’ resources, and potentially further assessments. CME similarly describes independent financial safeguards waterfalls for different product families. These structures are not separate from the risk engine. They are what the engine is protecting. Margin models, liquidation logic, and stress testing are designed to make resort to deeper layers as rare as possible.
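The ordering of a waterfall is easy to express as a loop over layers. The layer names follow the sequence described above, while the amounts are invented for illustration.

```python
def apply_waterfall(loss: float, layers):
    """Consume ordered (name, amount) layers until the loss is absorbed.

    Returns the amount taken from each layer and any loss left uncovered.
    """
    used, remaining = [], loss
    for name, amount in layers:
        take = min(remaining, amount)
        used.append((name, take))
        remaining -= take
    return used, remaining


layers = [
    ("defaulter margin", 80.0),
    ("defaulter default fund contribution", 10.0),
    ("clearinghouse contribution", 20.0),
    ("non-defaulting members", 500.0),
]
for name, take in apply_waterfall(120.0, layers)[0]:
    print(f"{name}: {take}")
```

Larger margin at the top of the list means the mutualized layers at the bottom are touched less often.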

At the exchange level, crypto venues often replace formal mutualized default waterfalls with insurance funds and ADL. Economically, the role is similar: create a sequence of loss absorbers before the platform itself becomes impaired. The vocabulary differs, but the problem is the same.

Why do risk engines need governance and human judgment in addition to models?

A risk engine always contains judgment, even when it looks highly quantitative.

This is obvious in SPAN 2’s stress framework, which explicitly allows expert judgment in managing seen and unforeseen risks. It is also obvious in SIMM, where the standardized methodology still leaves some implementation mapping choices, such as how to map certain non-standard sub-curves. And it is obvious operationally in default management, where LCH SA’s process relies on decision bodies such as its Default Crisis Management Team and Default Management Group to determine hedging and liquidation strategy.

Why does this matter? Because no finite model fully specifies market behavior under stress. Someone has to decide whether a correlation assumption still deserves trust, whether liquidity has deteriorated enough to warrant a bigger add-on, whether a hedge should precede an auction, or whether recovery tools should be activated. BIS and IOSCO guidance on recovery planning and CCP default management auctions exists precisely because the quantitative model cannot be the whole story. Institutions need documented, testable governance for when the world departs from the model.

This is also why validation matters. CME’s PFMI disclosure describes model risk governance including committee review, sensitivity analysis, backtesting, and independent validation. A risk engine is only credible if its outputs are challenged against realized outcomes and its assumptions are revisited when markets change.
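Backtesting in its simplest form counts how often realized losses exceeded the margin that was held. The sketch below assumes one margin figure and one realized P&L per period and compares empirical coverage with a target; real validation programs use far longer samples and formal statistical tests.

```python
def backtest(margins, realized_pnl, target_coverage: float):
    """Return (breaches, empirical coverage, whether coverage meets the target)."""
    breaches = sum(1 for margin, pnl in zip(margins, realized_pnl) if -pnl > margin)
    coverage = 1 - breaches / len(margins)
    return breaches, coverage, coverage >= target_coverage


margins = [10.0] * 5
pnl = [3.0, -12.0, -4.0, 5.0, -9.0]
print(backtest(margins, pnl, target_coverage=0.99))
```

One breach in five periods falls well short of a 99% target, which would prompt a review of the model's assumptions.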

Common misconceptions about what risk engines are trying to do

The most common misunderstanding is to think the engine is trying to predict the future exactly. It is not. A risk engine is making a control decision under uncertainty. The relevant question is not “was the loss estimate exactly right?” but “did the system hold enough resources, and intervene early enough and often enough, to remain solvent and orderly under its design standard?”

A second misunderstanding is to equate lower margin with better risk management because it feels more efficient. Lower margin may simply mean the engine is underestimating risk or over-crediting offsets. Capital efficiency is valuable only after coverage is credible.

A third misunderstanding is to assume liquidation is proof the engine failed. Often liquidation is the engine working as designed. Failure is more subtle: liquidating too late, liquidating based on distorted prices, underestimating close-out costs, mis-sequencing state updates, or relying on offsets that vanish under stress.

A final misunderstanding is to treat risk engines as interchangeable. A SPAN-style scenario engine, a SPAN 2 HVaR-plus-overlays framework, a SIMM sensitivity engine, and a crypto exchange mark-price plus liquidation engine all solve related problems, but under different institutional constraints. Clearinghouses mutualize risk across members and manage defaults with waterfalls and auctions. Bilateral OTC markets need standardized interoperability across firms. Crypto venues often need fully automated retail-facing liquidation and ADL mechanisms. The engine follows the market structure.

What can fail when a risk engine’s assumptions break (correlation, liquidity, inputs, sequencing)?

Risk engines are most vulnerable where their assumptions are least visible.

If correlations become unstable, portfolio offsets can disappear just when traders rely on them most. If historical data lacks the relevant regime, HVaR can understate forward risk even with long lookbacks. If liquidity evaporates, close-out costs can dominate modeled mark-to-market losses. If mark prices depend on stale or manipulable inputs, liquidation timing becomes unreliable. If operational sequencing is wrong, as the dYdX incident illustrates, the engine can move into a failure state despite adequate economic backing.

There is also a structural tradeoff between automation and discretion. Automation is fast, consistent, and scalable; discretion can handle novel situations the model did not foresee. Too much automation can create brittle edge cases. Too much discretion can create opacity and unpredictability. Production systems try to combine both: codified rules for ordinary stress, governed intervention for extraordinary stress.

Conclusion

A risk engine is the machinery that keeps a derivatives market from turning leverage into unbounded credit exposure. It works by estimating portfolio loss under adverse conditions, comparing that loss with available resources, and enforcing actions such as margin calls, liquidation, use of insurance or guaranty resources, and, if necessary, broader default management.

The details vary (SPAN scenarios, SPAN 2 HVaR and stress overlays, SIMM sensitivities, exchange mark-price and ADL logic), but the core idea is stable.

A market can only offer leverage safely if it has a disciplined way to translate uncertain future losses into present collateral requirements and timely interventions. That disciplined way is the risk engine.

How do you start trading crypto derivatives more carefully?

Start smaller, choose a margin mode that limits cross‑exposure, and use order types that control execution risk. On Cube Exchange you can fund your account, select isolated or cross margin, and place sized orders while monitoring the platform’s displayed mark price and liquidation price so you limit downside before opening a trade.

- Fund your Cube account with fiat or a supported crypto deposit.

- Select margin mode (use isolated to limit a single position’s downside or cross only if you accept portfolio exposure).

- Size your position by setting notional and leverage (lower leverage reduces liquidation risk); use a limit order for controlled entry.

- Set an explicit stop‑loss or take‑profit and confirm the displayed mark price and liquidation price before submitting the order.

Frequently Asked Questions

A mark price is a computed reference (often combining last trade, top‑of‑book quotes, an index and funding adjustments) used to mark positions so liquidations aren’t triggered by a thin or manipulated print; exchanges like Binance explicitly define mark price inputs to produce a more stable reference for margin and liquidation decisions.

There are three common patterns: scenario‑based portfolio repricing (e.g., CME SPAN), historical‑VaR with stress and overlays (CME SPAN 2’s HVaR + stress + liquidity + concentration structure), and sensitivity‑based standardization (ISDA SIMM for uncleared OTC initial margin); each balances realism, conservatism and operational simplicity differently.

Risk engines calculate portfolio‑level offsets but limit credit for offsets whose relationships can break under stress; SPAN-style engines explicitly recognize intra‑ and inter‑commodity spreads while SPAN 2 adds concentration charges calibrated to recent ADV to penalize positions that are large relative to market depth.

If a liquidation leaves uncovered losses, venues typically first use an insurance or guaranty fund and, if that is insufficient, can apply auto‑deleveraging (ADL) or other system‑level loss allocation; exchanges like Bybit describe ADL selection rules (e.g., ranking by leveraged return) and Binance/Bybit document staged liquidations and insurance‑fund usage.

Governance and human judgment are built into production risk engines because models can miss novel regimes; SPAN 2 explicitly allows expert judgment in stress calibration and CCPs maintain default‑management committees (e.g., LCH’s Default Management Group) to make decisions that models cannot fully specify.

How often positions are marked and settled materially changes unsecured exposure: CME discloses marking at least once daily for all products and at least twice daily for exchange‑traded derivatives (with continuous monitoring). On‑chain systems have different failure modes: the dYdX incident shows that an order‑of‑operations bug can trigger a protocol halt even when the insurance fund held enough capital.

Failures can come from more than model error: unstable correlations that remove offsets, evaporating liquidity that raises close‑out costs, manipulable or stale mark‑price inputs that mis‑time liquidations, and sequencing bugs in state updates (the dYdX case is an example). Any of these can defeat otherwise adequate economic resources.
