What is Blockchain Latency?

Learn what blockchain latency really means, from propagation and inclusion to confirmation and finality, and why it shapes speed, forks, and liveness.

Introduction

Blockchain latency is the delay between submitting or observing an event and the moment the network makes that event usable, accepted, or irreversible. People often talk about latency as if it were a single number, but that hides the real engineering problem. A user asks, “How long until my transaction goes through?” A validator asks, “How long until I hear about the latest block?” A protocol designer asks, “How quickly can the system reach agreement without becoming fragile?” These are related questions, but they are not the same question.

That distinction matters because blockchains are distributed systems before they are financial systems. No central clock tells every node what just happened. Information must move across a network, be checked, be ordered against conflicting information, and then survive long enough that everyone treats it as settled. The delay introduced by each of those steps is latency. If you compress them into one vague idea of “speed,” you miss the mechanism, and with it the reason different chains make such different design choices.

The core fact to keep in view is simple: a blockchain cannot confirm what it has not yet heard, and it cannot safely finalize what the network has not sufficiently agreed on. Everything else follows from that. Network distance, message sizes, verification work, consensus rounds, leader rotation, quorum thresholds, and fee markets all show up as different ways of paying that cost.

What stages make up blockchain latency and which stage matters to me?

| Stage | Who asks | Typical metric | Why it matters |
|---|---|---|---|
| Locally submitted | Wallet user | Time to hash | Identifiable, not settled |
| Network visible | Peers/relays | Time to mempool visibility | Needed for inclusion |
| Included | Block proposer | Time to block inclusion | Usable for many apps |
| Confirmed | Exchanges/apps | Time to N confirmations | Practical confidence |
| Finalized | Protocol validators | Time to finality | Durable irreversibility |

When someone sends a blockchain transaction, several different clocks start running at once. The transaction first has to reach at least one node, then spread to other nodes, then remain attractive enough for a block producer to include it, then be accepted by other nodes as part of a valid block, and finally become hard to reverse. Each stage has its own source of delay. If a wallet says a transaction is “pending,” that usually means the transaction has been broadcast but not yet included. If an explorer says it has “1 confirmation,” that means inclusion happened, but not necessarily finality. If a protocol advertises “fast finality,” it is making a claim about the later part of the pipeline, not necessarily the earlier parts.

This is why the most useful way to think about latency is as a sequence of state transitions. A transaction moves from locally submitted to network visible to included to confirmed enough for practical use to finalized. A block moves from produced by one node to known by peers to known by most of the network to accepted by fork choice to finalized. In both cases, latency is the elapsed time between these stages. The stages differ across architectures, but the pattern does not.
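To make the stage view concrete, here is a minimal Python sketch that records when one transaction reached each stage and reports the gaps between stages. The stage names mirror the table above; the `TxTimeline` class and every timestamp are hypothetical, chosen only to show that a single transaction yields several distinct latency numbers.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Stage order follows the pipeline described in the text. The timestamps used
# below are made-up illustrations, not measurements from any chain.
STAGES = ["submitted", "visible", "included", "confirmed", "finalized"]


@dataclass
class TxTimeline:
    """Hypothetical helper: when a transaction reached each stage (seconds)."""
    times: Dict[str, float] = field(default_factory=dict)

    def mark(self, stage: str, t: float) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.times[stage] = t

    def latency_to(self, stage: str) -> Optional[float]:
        """Elapsed time from submission to `stage`, or None if not reached."""
        if stage not in self.times or "submitted" not in self.times:
            return None
        return self.times[stage] - self.times["submitted"]

    def stage_gaps(self) -> List[Tuple[str, str, float]]:
        """Time spent between each consecutive pair of reached stages."""
        reached = [s for s in STAGES if s in self.times]
        return [(a, b, self.times[b] - self.times[a])
                for a, b in zip(reached, reached[1:])]


tx = TxTimeline()
for stage, t in [("submitted", 0.0), ("visible", 0.4), ("included", 9.0),
                 ("confirmed", 45.0), ("finalized", 780.0)]:
    tx.mark(stage, t)

for name in STAGES[1:]:
    print(f"time to {name}: {tx.latency_to(name):.1f}s")
```

Which of these numbers "is" the latency depends on the application: the sketch deliberately reports all of them instead of collapsing them into one.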

Ethereum’s developer documentation makes this explicit at the transaction level: a transaction is broadcast to the network, enters a transaction pool, is included by a validator in a block, and later that block becomes justified and finalized. Bitcoin uses different terminology and weaker native finality, but the same structure is there: broadcast, mempool diffusion, block inclusion, and then increasing confidence as more blocks build on top. Tendermint-style systems tighten this pipeline by making commit a quorum artifact of the consensus protocol itself, but even there, visibility and proof can lag by an additional block because the commit votes for block h are only embedded in block h+1.

So the first useful correction is this: latency is not just block time. A chain can have short block intervals and still deliver poor user experience if transactions propagate slowly, if proposers are selective under congestion, or if finality takes many communication rounds. A chain can also have longer block intervals but fairly predictable settlement semantics. Block time matters, but only as one component inside a larger timing model.

How does network propagation and physical delay affect blockchain latency?

At the bottom, blockchain latency begins as ordinary network delay. A message has to leave one machine, cross links and routers, arrive at another machine, and be processed there. In network measurement, the canonical one-way delay metric is defined as the time between when the first bit leaves the source and when the last bit arrives at the destination. That definition comes from the IETF's IP Performance Metrics (IPPM) standards, and it is useful here because it separates the thing being measured from the applications built on top of it.

That sounds almost too obvious to mention, until you notice how much trouble real measurement creates. One-way delay is hard to measure accurately because the source and destination clocks must be closely synchronized. The standards literature emphasizes that synchronization error, clock resolution, skew, and the difference between host timestamps and actual wire times can dominate the error budget. In other words, even before we ask about blockchains, “how much latency is there?” is not always a trivial measurement problem.
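The clock problem fits in a few lines. This is a toy Python model, assuming each host's clock differs from true time by a constant offset (real clocks also drift, which is ignored here); the function names and numbers are illustrative. It shows that an offset passes straight into a one-way measurement, while halving a round-trip time cancels the offset but silently assumes the forward and return paths are symmetric.

```python
def measured_one_way(send_true: float, delay_true: float,
                     src_offset: float, dst_offset: float) -> float:
    """One-way delay computed from two hosts' local timestamps.
    Result = delay_true + (dst_offset - src_offset)."""
    send_stamp = send_true + src_offset                  # source clock at send
    recv_stamp = send_true + delay_true + dst_offset     # destination clock at receive
    return recv_stamp - send_stamp


def rtt_half(send_true: float, delay_fwd: float, delay_back: float,
             src_offset: float) -> float:
    """Both timestamps come from the source clock, so its offset cancels,
    but the estimate is only correct if delay_fwd == delay_back."""
    send_stamp = send_true + src_offset
    back_stamp = send_true + delay_fwd + delay_back + src_offset
    return (back_stamp - send_stamp) / 2


# Hypothetical: 40 ms true delay, destination clock 25 ms ahead of the source.
print(measured_one_way(100.0, 0.040, 0.0, 0.025))   # about 0.065: offset leaks in
print(rtt_half(100.0, 0.040, 0.040, 0.7))           # about 0.040: offset cancels
print(rtt_half(100.0, 0.040, 0.060, 0.7))           # about 0.050: asymmetry biases it
```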

For blockchains, the raw network delay is only the starting point. Nodes do not simply passively receive bytes. They parse messages, check signatures, validate headers, compare against local mempool contents, sometimes request missing data, and often avoid forwarding information they think is invalid or redundant. That means the user-visible propagation time is not just propagation in the physics sense. It is propagation plus protocol overhead plus local verification work.

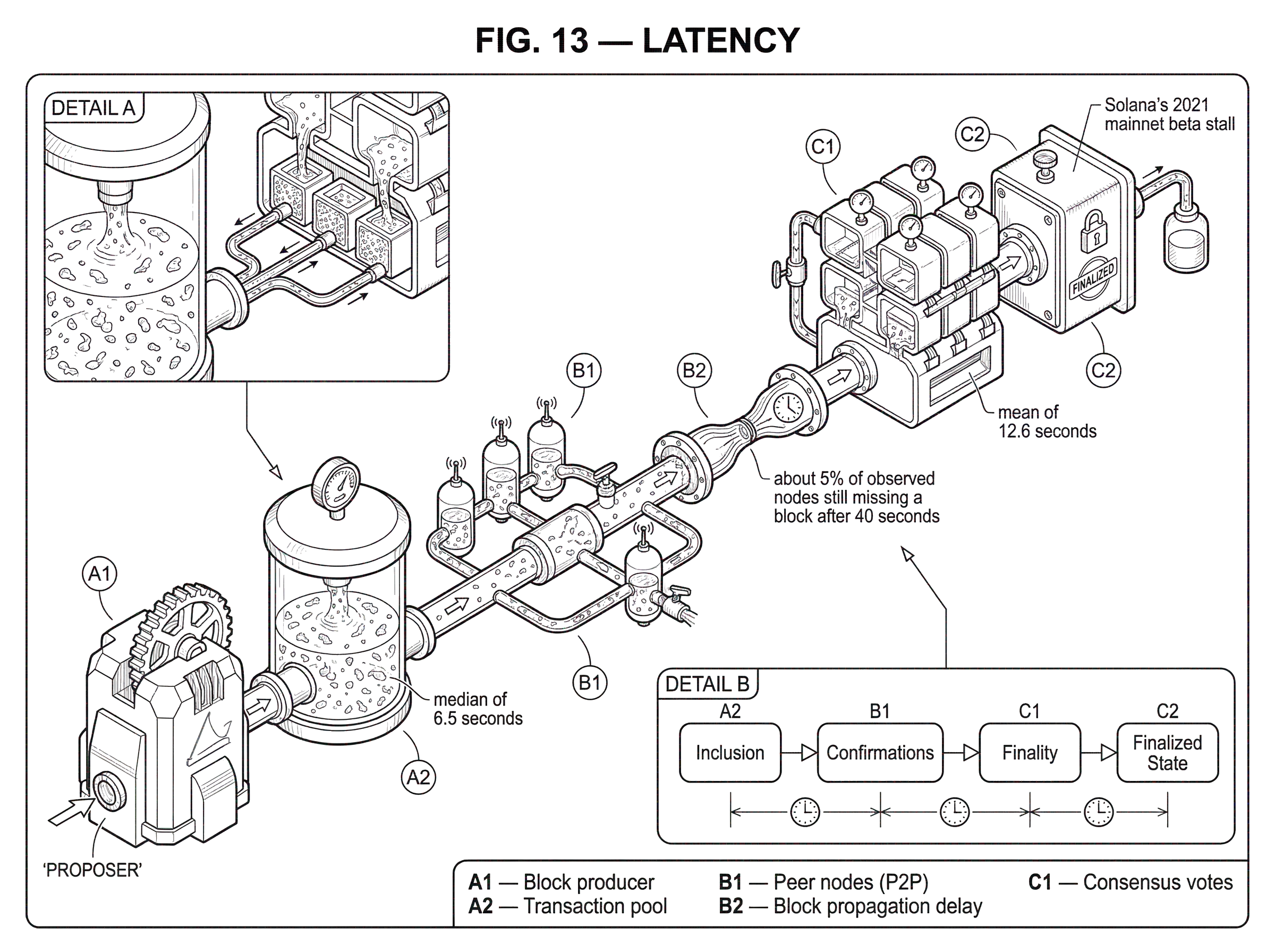

Decker and Wattenhofer’s Bitcoin measurements made this concrete. They found that block propagation to many nodes was fairly fast, but with a long tail: a median of 6.5 seconds, a mean of 12.6 seconds, and about 5% of observed nodes still missing a block after 40 seconds. That long tail matters more than the median might suggest, because consensus safety is sensitive not just to how quickly the average node learns about a block, but to how long competing information can coexist in different parts of the network.
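Why does the mean sit so far above the median in data like this? A handful of stragglers pull the average up without moving the middle. The arrival times below are invented for illustration and are not Decker and Wattenhofer's dataset; `tail_summary` is a hypothetical helper.

```python
import statistics

# Invented per-node arrival times (seconds after the block was first seen).
arrivals = [2.1, 3.0, 3.8, 4.5, 5.2, 6.0, 6.9, 7.7, 9.5, 12.0,
            14.0, 18.0, 25.0, 33.0, 61.0]


def tail_summary(samples, cutoff):
    """Median, mean, and share of nodes still waiting after `cutoff` seconds."""
    return {
        "median": statistics.median(samples),
        "mean": statistics.mean(samples),
        "late_share": sum(s > cutoff for s in samples) / len(samples),
    }


print(tail_summary(arrivals, cutoff=40.0))
```

Here the median is 7.7 s while the mean is roughly 14 s, driven almost entirely by the last few nodes. Those late nodes are exactly where competing blocks can be born.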

How does latency cause forks and give an advantage to well‑connected participants?

In a blockchain, the dangerous case is not merely that things are slow. It is that different nodes are slow in different ways at different times. If miner A finds a block and some part of the network learns about it later than another part, miner B can still be mining on the previous tip. If B finds a competing block before hearing about A’s block, the network now has a fork. That fork may resolve quickly, but it is still a real cost: wasted work, inconsistent local views, delayed certainty, and opportunities for strategic behavior.

This is why propagation delay is tightly linked to stale blocks. Decker and Wattenhofer argued, and empirically supported, that propagation delay in Bitcoin is the primary cause of blockchain forks. Their observed fork rate over 10,000 blocks was 1.69%, and their propagation-based model predicted about 1.78%, a close match. The mechanism is straightforward. The longer it takes for a new block to become common knowledge, the larger the window in which someone else can produce a conflicting block that was locally rational from their perspective.
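The mechanism can be approximated with a simple Poisson model: if blocks are found at random with a mean interval of 600 s, the chance that someone else finds a block during a propagation window of length τ is 1 − e^(−τ/600). This Python sketch is a deliberately pessimistic simplification that treats all other hash power as uninformed for the whole window. A fuller model weights the window by how much of the network is still uninformed at each moment, so this function is not expected to reproduce the 1.78% figure.

```python
import math


def fork_probability(window_s: float, block_interval_s: float = 600.0) -> float:
    """P(at least one competing block within `window_s` seconds), assuming
    Poisson block discovery and a fully uninformed network during the window."""
    return 1.0 - math.exp(-window_s / block_interval_s)


# Windows loosely echo the propagation figures quoted earlier.
for window in (2.0, 6.5, 12.6, 40.0):
    print(f"{window:>5.1f} s window -> {fork_probability(window):.2%} fork chance")
```

The point is the shape, not the exact numbers: fork risk grows roughly linearly with the window while the window is small relative to the block interval.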

This also creates asymmetry. If some miners or validators are better connected, or participate in specialized relay networks, they hear about blocks earlier and can switch work sooner. That reduces their stale-block risk relative to slower peers. FIBRE exists precisely because this matters economically in Bitcoin: it is a low-latency relay overlay built to move blocks across the network near the speed-of-light bound in fiber, using UDP, forward error correction, and compact blocks. Its stated purpose is to reduce stale block races, because large mining operations otherwise gain an asymmetric advantage.

The important principle is broader than Bitcoin. Latency is not just an inconvenience; it changes who sees the state first. In any competitive environment (block production, arbitrage, liquidation, MEV, dispute participation), earlier visibility is power. That is why low-latency access often becomes its own infrastructure layer.

How do block size and bandwidth change propagation delay and fork risk?

A message that carries more bytes generally takes longer to get across the network, especially when multiple hops and queueing are involved. In blockchains, this means block size and transaction volume interact directly with latency. The deeper point is not merely “bigger is slower.” It is that bigger blocks widen the disagreement window between “producer has block” and “network has block.”

Again Bitcoin provides a clean worked example. In the 2013 measurements, blocks larger than about 20 kB showed an additional delay of roughly 80 ms per extra kilobyte until a majority of observed nodes knew about the block. Small blocks had their own overhead because the protocol path involved announcement, request, and transfer round trips. So the latency curve had two parts: fixed coordination overhead for small objects, then transmission-dominated delay for larger ones.
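The two-part curve can be written as a piecewise-linear model: a flat coordination cost for small blocks, then about 80 ms per kilobyte beyond roughly 20 kB. In this Python sketch the 20 kB threshold and 80 ms slope come from the measurements above, while `base_ms` is a hypothetical placeholder for the fixed round-trip overhead, not a measured value. Treating small blocks as perfectly flat is also a simplification.

```python
def propagation_delay_ms(size_kb: float, base_ms: float,
                         threshold_kb: float = 20.0,
                         per_kb_ms: float = 80.0) -> float:
    """Delay until a majority of nodes know a block: fixed overhead plus a
    per-kilobyte cost for every kilobyte above the threshold."""
    extra_kb = max(0.0, size_kb - threshold_kb)
    return base_ms + per_kb_ms * extra_kb


BASE_MS = 2000.0  # hypothetical fixed overhead, chosen only for illustration
for size in (5, 20, 100, 500):
    print(f"{size:>4} kB -> {propagation_delay_ms(size, BASE_MS) / 1000:.1f} s")
```

Under this slope, every additional 100 kB adds about 8 seconds, which is why block size feeds so directly into the disagreement window.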

That mechanism generalizes. If a blockchain wants more throughput by packing more transactions into each block, it usually increases the amount of data that has to be disseminated and verified. Unless the network and relay design improve enough to compensate, higher throughput pushes up block propagation latency, and higher propagation latency tends to increase fork risk or force more conservative consensus timing. This is one reason throughput and latency are in tension: they compete for the same communication and verification budget.

The most elegant optimizations therefore try to reduce bytes sent without changing the underlying block contents. Bitcoin’s compact block relay does this by assuming peers already have most transactions in their mempools. Instead of transmitting full transactions again, a node sends short per-peer identifiers for transactions it expects the receiver already knows. The receiving node reconstructs the block from local mempool contents and only requests whatever is missing. If the two mempools are similar, both bandwidth and latency fall sharply because the critical path shrinks.

But notice the hidden assumption: similar mempools. If peers have diverged views of pending transactions, compact blocks become less effective and may require extra round trips to fetch missing data. So even an optimization that looks purely about transport is really about state alignment between nodes. Here the mechanism is subtle but important: latency can be reduced by sending less data only if receivers can accurately predict what they already know.
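Both the speedup and its dependence on mempool alignment show up in a small reconstruction sketch. BIP 152 derives 6-byte short IDs with SipHash keyed from the block header and a nonce; Python's standard library has no keyed SipHash, so this sketch substitutes keyed BLAKE2b as a stand-in. The function names and transaction data are hypothetical, and details such as prefilled transactions are not modeled.

```python
import hashlib
from typing import Dict, List, Optional, Tuple


def short_id(txid: bytes, key: bytes) -> bytes:
    """6-byte keyed identifier (keyed BLAKE2b standing in for SipHash-2-4)."""
    return hashlib.blake2b(txid, key=key, digest_size=6).digest()


def make_compact_block(block_txids: List[bytes], key: bytes) -> List[bytes]:
    """Sender: replace each full transaction with its short ID."""
    return [short_id(t, key) for t in block_txids]


def reconstruct(short_ids: List[bytes], mempool: List[bytes],
                key: bytes) -> Tuple[List[Optional[bytes]], List[int]]:
    """Receiver: fill slots from the local mempool; return the positions
    that would need an extra request/response round trip."""
    index: Dict[bytes, bytes] = {short_id(t, key): t for t in mempool}
    rebuilt: List[Optional[bytes]] = []
    missing: List[int] = []
    for i, sid in enumerate(short_ids):
        tx = index.get(sid)
        if tx is None:
            missing.append(i)
        rebuilt.append(tx)
    return rebuilt, missing


# Hypothetical data: "txids" are hashes of simple labels.
txids = [hashlib.sha256(f"tx{i}".encode()).digest() for i in range(10)]
key = hashlib.sha256(b"header||nonce").digest()[:16]
compact = make_compact_block(txids, key)

print(reconstruct(compact, txids, key)[1])      # []: rebuilt entirely locally
print(reconstruct(compact, txids[:7], key)[1])  # [7, 8, 9]: extra round trip
```

Each 32-byte transaction ID shrinks to 6 bytes on the wire, but the saving only holds when the receiver's mempool already contains the transactions.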

How much does local verification and execution add to blockchain latency?

It is tempting to imagine the network as the only bottleneck, but local computation matters. A node that receives a block still needs to validate it. Signature checks, transaction verification, state execution, and consistency checks all sit on the path between receipt and onward forwarding or acceptance. If that computation is expensive, effective propagation slows even if the raw network is fast.

The Bitcoin propagation paper explicitly defined propagation delay as the combination of transmission time and local verification. That is a useful corrective because blockchain discussions often isolate “network latency” from “execution latency” too cleanly. In practice, nodes are not dumb routers. They are participants in a shared validity game, and checking validity takes time.

This is also why some systems try to structure work so that ordering can happen before full execution or so that expensive verification can be parallelized. Solana’s Proof of History is an example of an architecture aimed at reducing messaging overhead around ordering. The whitepaper presents PoH as a way to encode verifiable passage of time and event ordering so validators do not need to negotiate timestamps through as many rounds of communication. The sequence is generated sequentially, but verification can be parallelized, which lowers the wall-clock cost to check the ordering evidence. The intended consequence is lower end-to-end time to consensus and, in the system’s framing, sub-second finality when paired with the surrounding consensus machinery.
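The asymmetry between sequential generation and parallel verification is easy to sketch with a plain SHA-256 hash chain. This Python example is a simplified illustration, not Solana's implementation: it records periodic checkpoints but does not mix event data into the chain, and the function names are hypothetical. In CPython, threads probably won't speed up hashing of 32-byte inputs because of the GIL, so the thread pool here demonstrates that segments are independent, not an actual speedup; a process pool or native code would be needed for that.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

Checkpoint = Tuple[int, bytes]  # (number of hashes so far, hash value)


def generate(seed: bytes, num_hashes: int, every: int) -> List[Checkpoint]:
    """Build the chain strictly in order: step i needs the output of step i-1."""
    h = seed
    checkpoints: List[Checkpoint] = [(0, h)]
    for i in range(1, num_hashes + 1):
        h = hashlib.sha256(h).digest()
        if i % every == 0:
            checkpoints.append((i, h))
    return checkpoints


def verify_segment(start: Checkpoint, end: Checkpoint) -> bool:
    """Recompute the hashes between two checkpoints and compare."""
    (i0, h), (i1, expected) = start, end
    for _ in range(i1 - i0):
        h = hashlib.sha256(h).digest()
    return h == expected


def verify_parallel(checkpoints: List[Checkpoint], workers: int = 4) -> bool:
    """Each segment depends only on its two endpoints, so segments can be
    checked independently of one another."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(verify_segment, checkpoints[:-1], checkpoints[1:])
        return all(results)


cps = generate(b"genesis", num_hashes=10_000, every=1_000)
print(verify_parallel(cps))       # True

tampered = list(cps)
tampered[5] = (cps[5][0], bytes(32))  # corrupt one checkpoint
print(verify_parallel(tampered))  # False
```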

The tradeoff is that replacing one bottleneck often sharpens another. Solana’s own whitepaper notes that synchronizing multiple PoH generators can improve throughput but reduces true-time accuracy because network latencies between generators matter. So even here, time-ordering shortcuts do not abolish latency; they rearrange where it enters the system.

What is the difference between propagation delay and consensus latency?

| Consensus family | Finality model | Latency profile | Trade-off |
|---|---|---|---|
| Nakamoto (PoW/PoS) | Probabilistic finality | Confidence grows over time | Simple, longer finality |
| Tendermint (BFT) | Quorum (>2/3) finality | Predictable commit latency | Requires >2/3 of voting power online |
| HotStuff (responsive BFT) | Quorum finality | Responsive when synchronous | Low latency when healthy |

A node can know about a block long before the network has agreed to treat it as final. This is where propagation latency ends and consensus latency begins. The difference is conceptual: propagation asks, “Who has heard the message?” Consensus asks, “Has enough of the right set of participants expressed compatible views that the message is now safe to build on?”

In Nakamoto-style systems, this answer is probabilistic. A block is not final because a quorum signed it; it becomes increasingly costly to reverse as more work or stake-weighted extension accumulates on top of it. Latency here is not one threshold set by protocol law, but a confidence curve chosen by users, exchanges, wallets, and applications. The familiar “wait for N confirmations” convention is not fundamental physics. It is an operational response to probabilistic settlement.
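The confidence curve can be computed directly for the proof-of-work case. The Python sketch below follows the calculation in section 11 of the Bitcoin whitepaper: the attacker's progress while the honest chain adds z blocks is modeled as Poisson, combined with the probability of catching up from any remaining deficit. It assumes an attacker with a fixed hash share q < 0.5 and does not cover stake-weighted chains. `confirmations_needed` is a hypothetical helper showing how a risk tolerance turns into an "N confirmations" rule.

```python
import math


def attacker_success(q: float, z: int) -> float:
    """Probability that an attacker with hash share q ever overtakes the
    honest chain from z blocks behind (Bitcoin whitepaper, section 11)."""
    p = 1.0 - q
    lam = z * (q / p)  # expected attacker progress while honest miners add z blocks
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total


def confirmations_needed(q: float, risk: float) -> int:
    """Smallest z whose catch-up probability falls below `risk`."""
    if not 0 <= q < 0.5 or risk <= 0:
        raise ValueError("requires 0 <= q < 0.5 and risk > 0")
    z = 0
    while attacker_success(q, z) >= risk:
        z += 1
    return z


for z in range(7):
    print(z, round(attacker_success(0.10, z), 7))

print(confirmations_needed(0.10, 0.001))  # 5
```

For q = 0.10 the printed values should line up with the table in the whitepaper; the choice of `risk` is exactly the policy decision the paragraph above assigns to exchanges, wallets, and applications.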

In BFT-style systems, the protocol often makes consensus latency more explicit. Tendermint defines a commit as a set of signed messages from more than two-thirds of validator weight. That gives a cleaner mechanical notion of final acceptance, but it also reveals the cost: enough validators must exchange messages, receive them in time, and produce signatures. If the network is slow or if too many validators are faulty or unreachable, liveness suffers and latency stretches or stalls.
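The quorum rule itself is a small piece of arithmetic. This Python sketch checks whether a set of signers holds strictly more than two-thirds of total voting power, using integer comparison to avoid rounding errors. The validator names and weights are hypothetical, and a real implementation would also verify each signature and check that every vote is for the same block, height, and round.

```python
from typing import Dict, Set


def has_commit_quorum(voting_power: Dict[str, int], signers: Set[str]) -> bool:
    """True if signers hold strictly more than 2/3 of total voting power.
    3 * signed > 2 * total is the integer form of signed / total > 2/3."""
    total = sum(voting_power.values())
    signed = sum(voting_power[v] for v in signers if v in voting_power)
    return 3 * signed > 2 * total


validators = {"a": 10, "b": 10, "c": 10, "d": 10}   # hypothetical equal weights
print(has_commit_quorum(validators, {"a", "b", "c"}))  # True: 30/40 > 2/3
print(has_commit_quorum(validators, {"a", "b"}))       # False: 20/40
```

The strict inequality matters: with three equal validators, two signatures are exactly two-thirds and do not commit. If enough weight is offline or unreachable, no set of signers can cross the threshold and the chain stalls rather than forks.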

HotStuff sharpens this design space. Its key idea is responsiveness: once the network is behaving synchronously enough, a correct leader can drive consensus at the pace of actual network delay rather than worst-case timeout assumptions. That matters because timeout-heavy BFT protocols often pay latency pessimistically even when the network is healthy. By making progress track real communication delay after synchrony is restored, HotStuff-style designs try to reduce the gap between observed and worst-case latency while keeping Byzantine fault tolerance.
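Responsiveness can be illustrated with a stylized round: with n = 3f + 1 replicas, a leader that advances as soon as it holds n − f votes finishes the round when the (n − f)-th fastest vote arrives, while a non-responsive design waits out a conservative bound Δ. This Python sketch is a simplification for intuition, not HotStuff's protocol (which chains phases through quorum certificates and still relies on timeouts for view changes); the function names and delays are hypothetical.

```python
from typing import List


def responsive_round_time(vote_delays: List[float], n: int, f: int) -> float:
    """Round ends once n - f votes are in: the (n - f)-th fastest arrival."""
    if len(vote_delays) < n - f:
        raise ValueError("not enough votes to form a quorum")
    return sorted(vote_delays)[n - f - 1]


def timeout_round_time(delta_bound: float) -> float:
    """A timeout-driven round always pays its worst-case bound."""
    return delta_bound


# Hypothetical healthy network, n = 4, f = 1, with one slow replica.
delays = [0.030, 0.045, 0.050, 0.900]
print(responsive_round_time(delays, n=4, f=1))  # 0.05
print(timeout_round_time(1.0))                  # 1.0
```

The slow replica does not affect the responsive round at all, which is the practical meaning of "progress at the speed of the actual network."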

Ethereum’s proof-of-stake design lives in this broader family of tradeoffs. Its specifications explicitly emphasize liveness under major partitions and minimizing complexity even at some cost in efficiency. That tells you something important about latency even without quoting a single timing number: the protocol is willing to avoid certain aggressive optimizations if they would complicate reasoning about safety and recovery. Simplicity is not free; it is purchased partly with foregone latency improvements.

Why can a transaction appear sent quickly but still take a long time to settle?

Imagine you submit a transaction on Ethereum with a competitive fee. Within moments, your wallet gets back a transaction hash. That can create the impression that the transaction is basically done. But what has really happened is narrower: one node accepted your signed message and broadcast it. The transaction is now identifiable, not settled.

Next, the transaction has to diffuse through the peer-to-peer network and reach enough validators and nodes that it is broadly visible in mempools. If the network is congested, some peers may learn it later than others, or not keep it at all if local policies differ. A validator then has to choose to include it in a block. Under EIP-1559, your fee settings affect that inclusion priority, so latency now depends partly on fee market competition rather than just transport speed.
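Under EIP-1559, the per-gas tip a proposer receives is min(max priority fee, max fee − base fee), and a transaction whose max fee is below the current base fee cannot be included at all. This Python sketch encodes that rule; the helper names and the 30 gwei base fee are hypothetical. How proposers or builders order transactions by tip is a matter of their own policy, not something the protocol enforces.

```python
from typing import Optional

GWEI = 10**9


def effective_priority_fee(max_fee: int, max_priority_fee: int,
                           base_fee: int) -> Optional[int]:
    """Per-gas tip paid to the proposer, or None if the transaction is not
    includable while the base fee is this high."""
    if max_fee < base_fee:
        return None  # must wait for the base fee to fall
    return min(max_priority_fee, max_fee - base_fee)


def effective_gas_price(max_fee: int, max_priority_fee: int,
                        base_fee: int) -> Optional[int]:
    """Total per-gas price the sender pays: base fee (burned) plus tip."""
    tip = effective_priority_fee(max_fee, max_priority_fee, base_fee)
    return None if tip is None else base_fee + tip


base = 30 * GWEI  # hypothetical current base fee
print(effective_priority_fee(40 * GWEI, 2 * GWEI, base) // GWEI)  # 2
print(effective_priority_fee(31 * GWEI, 2 * GWEI, base) // GWEI)  # 1: capped by max fee
print(effective_priority_fee(25 * GWEI, 2 * GWEI, base))          # None
```

The middle case is the subtle one: a sender who set a generous priority fee but a tight max fee ends up tipping less than intended when the base fee rises, which can quietly push the transaction down the queue.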

Suppose the transaction gets included in the next block. For many applications, that is already “good enough.” But the protocol still distinguishes inclusion from stronger settlement. As time passes, the block becomes justified and later finalized. Those later transitions depend not on your individual transaction but on consensus over the chain containing it. So the latency you care about depends on what you are trying to do. A game might treat inclusion as enough. A cross-chain bridge may demand finality. An exchange may pick something in between.

The same transaction therefore has multiple honest latency numbers: time to hash, time to network visibility, time to inclusion, time to practical confidence, and time to finality. None is fake. They answer different questions.

When does high latency turn into a liveness failure on blockchains?

The most revealing blockchain incidents are the ones where latency stops being “a few more seconds” and becomes “the system cannot make progress.” That is a liveness problem, but latency is often the path by which you get there.

Solana’s 2021 mainnet beta stall is a clear example. According to the postmortem, a validator ran two instances that transmitted different blocks for the same slot, creating multiple minority partitions. A deeper data-availability bug meant internal structures identified blocks and state by slot number alone, so different blocks occupying the same slot were not properly distinguishable for repair. An optimization in Turbine had also reduced propagation of duplicate shreds, which delayed fault visibility. The result was not just disagreement but an inability to repair missing blocks between partitions, and the network halted until coordinated recovery.

The lesson is not simply “bugs are bad.” It is more specific: low-latency optimizations often depend on invariants about identity, repair, and fault propagation. If those invariants are wrong, the same shortcuts that normally reduce delay can amplify a partition or make missing information unrecoverable.

Kusama’s finality stall shows the same pattern in a different layer. There, disputes imported from already-disabled validators could remain Active without meaningful participation, and GRANDPA’s chain selection treated those unresolved Active disputes as threats, omitting blocks that included them. Finality lag then stretched to around an hour. Here the system was not bottlenecked by raw packet travel time. It was bottlenecked by protocol state that could not resolve under the implemented rules. Yet from the user’s perspective, it still appeared as latency: blocks existed, but finality stopped advancing.

So blockchain latency has two regimes. In the healthy regime, it measures how long the system takes to do normal work. In the unhealthy regime, it reveals whether the system can still coordinate under stress.

The same mechanisms govern both:

- propagation
- repair
- quorum formation
- timeout handling
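Timeout handling is where the two regimes meet. Tendermint-style protocols lengthen their timeouts in each new round, so a network that is slower than the initial timeout still makes progress eventually, just later. This Python sketch is a toy model of that idea, assuming a fixed message delay and a linear increase per round; the function name, the 3 s base, and the 0.5 s step are illustrative, not any client's defaults.

```python
from typing import Tuple


def latency_with_escalating_timeouts(actual_delay: float, base_timeout: float,
                                     delta: float,
                                     max_rounds: int = 1000) -> Tuple[int, float]:
    """Round r waits base_timeout + r * delta. Rounds whose timeout is shorter
    than the real delay fail and cost their full timeout; the first long
    enough round succeeds after actual_delay. Returns (rounds, total time)."""
    elapsed = 0.0
    for r in range(max_rounds):
        timeout = base_timeout + r * delta
        if actual_delay <= timeout:
            return r + 1, elapsed + actual_delay
        elapsed += timeout
    return max_rounds, float("inf")  # treated as a stall in this toy model


print(latency_with_escalating_timeouts(0.8, base_timeout=3.0, delta=0.5))
print(latency_with_escalating_timeouts(4.2, base_timeout=3.0, delta=0.5))
print(latency_with_escalating_timeouts(10.0, base_timeout=3.0, delta=0.0, max_rounds=5))
```

In the second case a delay only 1.2 s above the base timeout costs four rounds and about 14.7 s in total; with no escalation at all (the third case) the model never makes progress, which is the liveness failure described above.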

What techniques do blockchains use to lower latency, and what are the trade‑offs?

| Lever | What it reduces | Main trade-off | Best when |
|---|---|---|---|
| Compact blocks | Block propagation latency | Needs similar mempools | Mempools largely aligned |
| Relay overlays (FIBRE) | Inter-node relay time | Creates infra asymmetry | Large miners/operators |
| Responsive BFT / PoH | Consensus latency | Complex invariants or sync needs | Low-latency finality designs |
| Parallel verification | Local verification delay | Greater implementation complexity | GPU/CPU-rich nodes |
| Fee market incentives | Queueing latency | Less egalitarian inclusion | Congested networks |

There is no universal trick for low latency, because the delay comes from multiple layers. But the levers are coherent if you think in terms of mechanism.

One lever is to reduce the amount of data that must move on the critical path. Compact blocks do this by sending short identifiers instead of full transactions when mempools align. Relay overlays such as FIBRE push the same logic further with specialized transport and error correction to shrink block relay time. These approaches mostly target block propagation latency.

Another lever is to reduce the amount of coordination needed before participants can order or accept data. PoH aims at this by giving the network a cryptographically verifiable ordering stream. Responsive BFT protocols aim at it by making the number of communication steps fixed and allowing progress at actual network speed once synchrony holds. These approaches mostly target consensus latency.

A third lever is to make local processing cheaper or more parallel. Better signature verification pipelines, execution parallelism where possible, and careful separation of header validation from full block checking all reduce the delay between receiving information and acting on it. But this must be done carefully: forwarding too early can spread bad data, while verifying too much before forwarding can make honest data arrive too late.

And a final lever is economic rather than purely technical: use fee markets and proposer incentives to let urgent transactions buy faster inclusion. This does not reduce network latency itself, but it reduces queueing latency in the path from mempool to block. Ethereum’s fee market is a clear case where latency for an individual transaction depends partly on what the sender is willing to pay during contention.

Each lever trades against something. Sending less data depends on shared state assumptions. Faster relay networks can create infrastructure asymmetries. Shorter timeouts can make the system brittle under variance. Aggressive parallelism can complicate validity rules. Faster inclusion via fees can make performance less egalitarian. There is no free low-latency design.

What's the single core idea to remember about blockchain latency?

The cleanest way to remember blockchain latency is this: latency is the cost of turning local knowledge into shared knowledge, and shared knowledge into durable agreement.

That is why it shows up everywhere. It determines how quickly a transaction becomes visible, how likely blocks are to race, how much extra throughput a network can safely absorb, how fair block production is across geography, how long users wait for confidence, and how a chain behaves under faults. Different architectures shift the bottleneck between propagation, verification, and consensus, but none escape the underlying problem.

If you remember one thing tomorrow, remember this: a blockchain is fast only to the extent that it can move information, validate it, and gather enough agreement before conflicting information gains too much time to grow.

What should I check about latency before trading or transferring crypto?

Understand latency before trading or transferring so you can pick fees, order types, and confirmation targets that match your risk. On Cube Exchange, set the correct network and fee profile, then use order and confirmation choices to reduce slippage, stalled transfers, or bridge reverts.

- Select the exact asset and blockchain network in Cube’s deposit, withdraw, or trade flow. Verify the chain’s finality model (probabilistic confirmations vs. explicit finality) before proceeding.

- If you are sending on-chain, set an appropriate gas/priority fee in Cube’s transaction settings to match current mempool conditions; increase the max priority fee when inclusion speed matters.

- For market activity, prefer limit orders to control execution price when latency or thin liquidity could cause slippage; use market orders only when immediacy is essential.

- After an on-chain transfer, wait for the chain-specific confirmation or finality threshold before relying on the funds for high-value trades or cross-chain moves.

Frequently Asked Questions

Block time is only one component; latency is the sequence of delays from local submission to network visibility, block inclusion, increasing confidence, and finalization, and different applications care about different points on that chain.

Long tails matter because a small fraction of nodes learning a block much later increases the window in which competing blocks can be produced, raising stale-block/fork rates and giving better‑connected participants an advantage; Decker and Wattenhofer measured a median propagation of 6.5s, mean 12.6s, and ~5% of nodes still missing a block after 40s, and a propagation-based model built on those measurements predicted a fork rate of about 1.78%, close to the observed 1.69%.

Compact blocks cut bandwidth and latency by sending short identifiers instead of full transactions when peers have similar mempools, but their effectiveness falls if mempools diverge because receivers need extra round trips to fetch missing data and that can reintroduce delay and bandwidth costs.

Consensus design shifts where latency appears: Nakamoto-style chains give probabilistic finality that improves with more extensions, BFT/Tendermint-style protocols make commit a quorum artifact with explicit message exchanges, and HotStuff-style protocols aim for responsiveness so progress matches actual network delay once synchrony holds, each trading off predictability, communication rounds, and worst‑case timeouts.

Low‑latency optimizations can worsen failure modes when their invariants break: Solana’s 2021 stall shows how propagation/identity optimizations plus a data‑availability bug prevented repair across partitions, and Kusama’s finality stall shows unresolved disputes can stall finality even when blocks exist, so faster paths can amplify partitions or make recovery harder if not carefully designed.

Latency is both network transmission and local work: raw one‑way delay is the physics baseline but nodes also parse, verify signatures, execute state, and possibly request missing data, so verification and processing time are part of propagation delay and can dominate overall latency if expensive.

Larger messages or higher throughput tend to increase propagation time because more bytes amplify transmission and queuing delays; empirical Bitcoin measurements showed small‑object overhead then a roughly linear extra delay per kilobyte past a threshold, which explains why increasing block data can widen disagreement windows unless relay and verification infrastructure improve.

Designers reduce latency by sending less data on the critical path (compact blocks, relay overlays), reducing coordination rounds (PoH ordering, responsive BFT), parallelizing or cheapening verification, and letting fee markets shorten queueing delay, but each lever introduces tradeoffs such as state‑alignment assumptions, infrastructure asymmetry, complexity, or reduced egalitarianism.
