What is an Aggregator?

Learn what a rollup aggregator is, how it batches and publishes L2 data to L1, and why it is central to rollup scalability, cost, and security.

Introduction

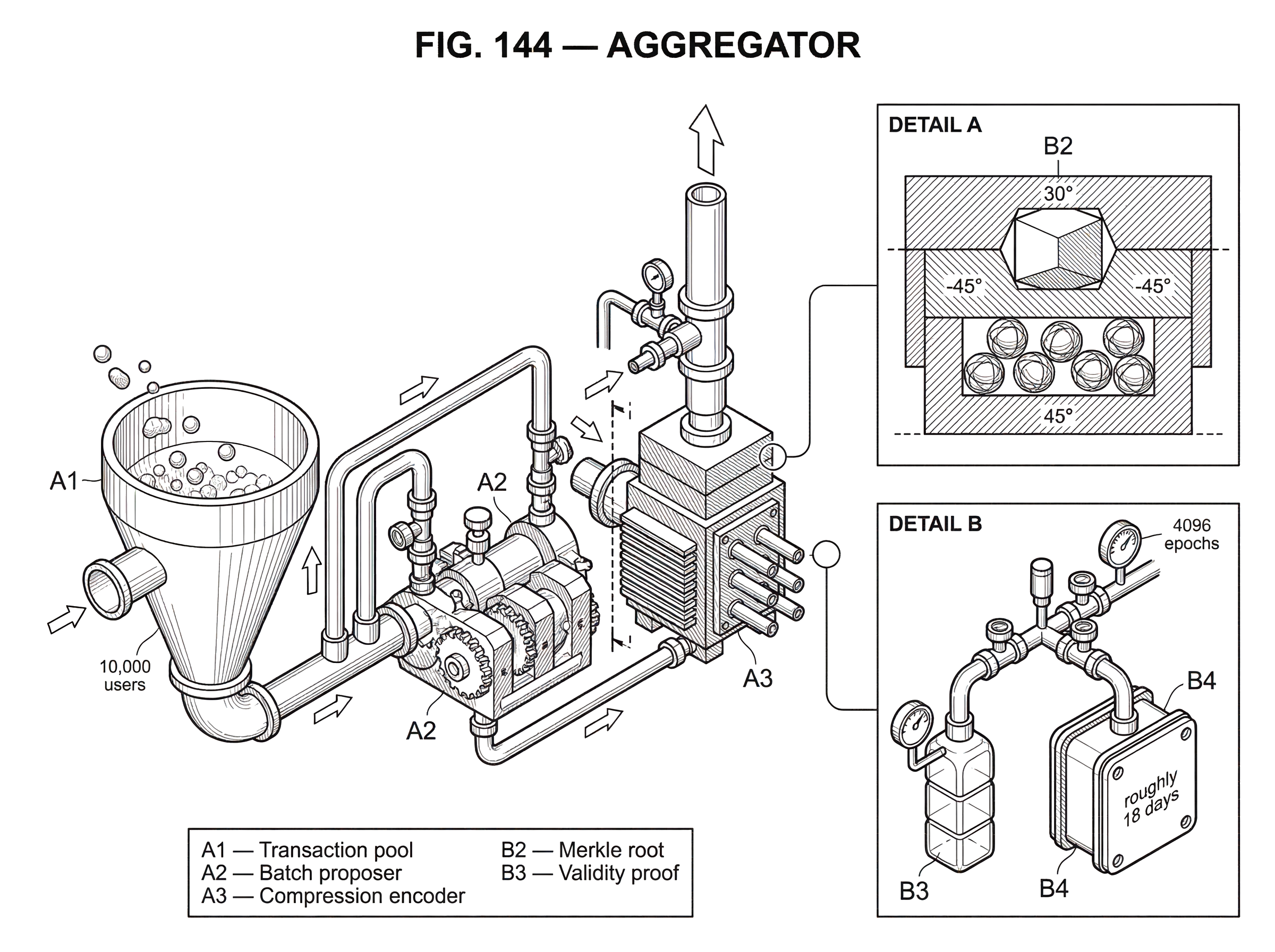

An aggregator is the rollup component that collects transactions, bundles them, compresses them, and publishes the result to a base chain such as Ethereum. If rollups are supposed to make blockchains cheaper and faster, the natural question is: where do those savings actually come from? Not from magic, and not from skipping security entirely. They come from taking many user actions that would otherwise have been posted and executed separately on Layer 1, doing most of the work offchain, and then using one party or service to turn that activity into a compact onchain commitment.

That party is often also called an operator, batch submitter, or, in some designs, a validator. The names vary because rollups vary. But the underlying job is stable: take a stream of L2 activity and represent it on L1 in a way that is cheap enough to scale and structured enough to be checked later.

The important idea is that an aggregator is not just a messenger. It is the compression point between a high-volume execution environment and a lower-throughput settlement layer. That means it sits exactly where the main benefits of rollups appear, and where many of the main risks appear too. If the aggregator fails, withholds data, censors users, or submits bad commitments, the rollup’s safety and liveness depend on what the rest of the protocol can do in response.

To understand aggregators, it helps to keep one distinction in mind from the start. In some systems, the component that orders transactions and the component that posts them to L1 are separate; in others, they are effectively the same actor. This is why aggregators are often confused with sequencers. A sequencer decides ordering and gives users fast L2 confirmations. An aggregator’s defining job is narrower and more concrete: it packages L2 activity into a publishable form that L1 can store, verify, or challenge.

Why do rollups need an aggregator?

A base chain like Ethereum is expensive because every transaction competes for limited block space, and every full node must replay and store the relevant data. If each user interaction were posted individually to L1, the system would inherit L1’s security, but also its cost and throughput limits. Rollups improve this by moving execution offchain while keeping enough information onchain for the result to remain recoverable and enforceable.

That creates a new problem. If thousands of users are transacting on the rollup, someone has to collect those transactions, transform them into a compact representation, and publish the right commitments to L1. Without that role, there is no bridge between offchain execution and onchain settlement.

Here is the mechanism. Users send transactions to the rollup. The rollup executes them offchain and updates its own state. The aggregator then takes many such transactions, groups them into a batch, compresses the batch data, and submits the batch to an L1 contract. By spreading one onchain publication cost across many L2 transactions, the average cost per user falls sharply.

That cost reduction is not incidental. It is the whole point. A rollup becomes economically useful only when fixed L1 submission costs are amortized across a large enough batch. The aggregator is the component that performs that amortization.

How does aggregator batching create real rollup scalability?

| Batching policy | Amortization | User latency | Finality anchoring | Best when |
|---|---|---|---|---|
| Frequent small batches | Low amortization | Low latency | Frequent L1 anchors | Latency-sensitive apps |
| Large infrequent batches | High amortization | High latency | Infrequent L1 anchors | Fee-sensitive high throughput |
| Medium cadence | Balanced amortization | Moderate latency | Regular L1 anchors | General-purpose workloads |

The easiest way to think about an aggregator is as a translator between two accounting systems. On the L2 side, there may be thousands of individual transfers, swaps, liquidations, bridge messages, and contract calls. On the L1 side, what matters is a much smaller object: the data needed for availability, plus a commitment to the state transition or a proof that the transition is valid.

Suppose 10,000 users submit transactions during a busy period. Posting all of them separately to Ethereum would mean paying the overhead of 10,000 distinct L1 transactions. Instead, the aggregator can compress them into a single batch submission. Even if that single submission is large, its fixed overhead is paid once, not 10,000 times. That is why batching matters more than any buzzword about “speed.” The rollup is cheaper because the aggregator turns many expensive publications into one more-efficient publication.
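The arithmetic behind the 10,000-user scenario can be sketched in a few lines. The gas figures below (`FIXED_COST`, `PER_TX_DATA_COST`) are hypothetical placeholders chosen for illustration, not measurements from any rollup; only the shape of the result matters.

```python
def total_l1_cost(fixed_cost: int, per_tx_data_cost: int,
                  n_txs: int, n_submissions: int) -> int:
    """Total L1 cost: one fixed overhead per submission plus data cost per tx."""
    if n_txs <= 0 or n_submissions <= 0:
        raise ValueError("n_txs and n_submissions must be positive")
    return n_submissions * fixed_cost + n_txs * per_tx_data_cost


# Hypothetical numbers, in arbitrary gas units.
FIXED_COST = 71_000        # assumed overhead of one L1 submission
PER_TX_DATA_COST = 200     # assumed marginal data cost of one L2 transaction
N_TXS = 10_000

# Every transaction posted as its own L1 submission.
separate = total_l1_cost(FIXED_COST, PER_TX_DATA_COST, N_TXS, n_submissions=N_TXS)
# All transactions published in a single batch.
batched = total_l1_cost(FIXED_COST, PER_TX_DATA_COST, N_TXS, n_submissions=1)

print(f"separate: {separate:,} total, {separate / N_TXS:,.1f} per tx")
print(f"batched:  {batched:,} total, {batched / N_TXS:,.1f} per tx")
```

Under these assumed numbers the fixed overhead dominates the unbatched case and nearly vanishes in the batched one, which is the amortization effect described above.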

Compression deepens that effect. Rollup transactions often contain structure that can be encoded more efficiently than raw Ethereum calldata. Repeated fields, signatures, addresses, and transaction formats can be packed or transformed to reduce bytes. The exact compression method depends on the rollup design, but the causal chain is straightforward: fewer bytes posted to L1 means lower publication cost, which means lower fees for users.
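A rough way to see why repeated structure compresses well is to encode a synthetic batch and run it through a general-purpose compressor. This is only a sketch: production rollups use their own encodings and compression schemes, and the JSON layout, field names, and `zlib` choice here are assumptions made for illustration.

```python
import json
import zlib


def encode_batch(txs: list[dict]) -> bytes:
    """Serialize a batch deterministically (illustrative encoding only)."""
    return json.dumps(txs, separators=(",", ":"), sort_keys=True).encode()


# Synthetic transactions with heavily repeated structure:
# same recipient, same field names, small varying values.
txs = [
    {"from": f"0x{i:040x}", "to": "0x" + "ab" * 20, "value": 1_000 + i, "nonce": i % 5}
    for i in range(1_000)
]

raw = encode_batch(txs)
compressed = zlib.compress(raw, level=9)

# Compression must be lossless, or the batch could not be reconstructed.
assert zlib.decompress(compressed) == raw

print(f"raw bytes:        {len(raw):,}")
print(f"compressed bytes: {len(compressed):,}")
print(f"ratio:            {len(compressed) / len(raw):.2%}")
```

The exact ratio depends on the data, but the lossless round trip is the property that matters: anyone who downloads the published bytes can recover the original batch.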

Historically, many rollups posted this compressed data through Ethereum calldata, because it was a simple way to make the data available onchain without storing it permanently in contract state. More recently, blob-carrying transactions introduced by EIP-4844 give aggregators a cheaper place to put rollup data. Blobs are designed for exactly this use: large amounts of data that must be available to the network, but do not need to be readable by the EVM forever. They are cheaper than calldata, retained only for a limited window of roughly 18 days, and referenced through commitments rather than direct EVM access.

That change matters because it shifts the aggregator’s economics. Before blobs, the aggregator’s main publication choice was usually some form of compressed calldata. With blobs, the same role still exists, but the cost curve improves. The aggregator still batches and publishes; the medium changed, and that medium affects how much throughput a rollup can buy from L1.

What data and commitments does an aggregator publish on Layer 1?

An aggregator does not merely say, “trust me, the new state is correct.” It posts commitments that let others reconstruct, verify, or challenge what happened.

In an optimistic rollup, the aggregator commonly submits the old state root, the new state root, and a commitment to the transaction batch, such as a Merkle root. The old and new roots summarize the rollup state before and after the batch. The transaction commitment lets anyone prove that a particular transaction was included. This structure is what makes dispute systems possible. If a watcher believes the transition from old root to new root is invalid, it can use the published data and commitments to challenge the batch.
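The transaction commitment and inclusion proof described here can be sketched with a simple binary Merkle tree. This is a minimal illustration, assuming SHA-256 and duplicate-last-node padding; real rollups differ in hash function, leaf encoding, and padding rules, and a production tree would also separate leaf and internal-node hashing. The `BatchCommitment` type and the state-root placeholders are hypothetical.

```python
import hashlib
from dataclasses import dataclass


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs; assumes len(level) is even."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pad odd levels by duplicating the last node
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from leaf up to root."""
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


@dataclass(frozen=True)
class BatchCommitment:
    """Hypothetical shape of what an optimistic aggregator submits."""
    old_state_root: bytes
    new_state_root: bytes
    tx_root: bytes


txs = [f"tx-{i}".encode() for i in range(5)]
commitment = BatchCommitment(
    old_state_root=h(b"state-before"),   # placeholder, not a real state root
    new_state_root=h(b"state-after"),    # placeholder, not a real state root
    tx_root=merkle_root(txs),
)
print(verify_inclusion(txs[3], merkle_proof(txs, 3), commitment.tx_root))
```

The proof for one transaction needs only a logarithmic number of sibling hashes, which is why a single root can stand in for the whole batch when proving inclusion.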

The subtle point is that the state root by itself is not enough. A root is a compact summary, but summaries are useless for verification unless the underlying data is available. That is why rollup designs require the aggregator to publish the transaction data (or enough data to reconstruct the transition) on L1. Without data availability, users and challengers could know that some state commitment was posted, but not whether it was valid or how to recover if the operator disappeared.

This is where many smart readers initially underestimate the role. The aggregator is not only reducing cost; it is also carrying a data-availability obligation. Cheap publication is useful, but recoverable publication is what keeps the rollup from becoming a private database with a bridge attached.

How do aggregators work in optimistic rollups and what are the security assumptions?

In optimistic rollups, the aggregator’s submission is accepted provisionally. The system behaves as if the posted state transition is valid unless someone proves otherwise during a challenge window. That design makes the aggregator operationally simple in one sense: it does not need to attach a validity proof for every batch. But it also creates a security assumption that cannot be ignored.

The assumption is that at least one honest participant can observe the posted batch, reconstruct the state transition, and challenge fraud if necessary. If no honest watcher exists, a malicious aggregator could submit an invalid state update and, in the worst case, steal funds. This is why optimistic rollup security is often described as “trustless, assuming at least one honest verifier.” The aggregator is central to that assumption because it is the party making the claim that others must check.

There is also a liveness and censorship issue. If the aggregator goes offline, refuses to include some transactions, or withholds state data, users may be unable to progress normally. Optimistic rollups mitigate this by forcing operators to publish state-related data on Ethereum and, in some designs, by allowing users to force transactions into an onchain queue for later inclusion by the sequencer. These mechanisms do not remove the aggregator from the system; they constrain how much damage a bad aggregator can do.

A bond-and-slash model is often used to sharpen those constraints. Operators may need to post a bond before producing blocks or submitting batches. If they submit invalid blocks or build on invalid history, that bond can be slashed. The bond does not prove honesty. What it does is change incentives: dishonesty becomes economically costly if detection works.

Notice the dependency chain. The aggregator posts a batch. The batch is accepted temporarily. Honest watchers need published data to inspect it. If the batch is invalid, they must be able to challenge it in time. Bonds make cheating expensive, but only if detection and challenge are feasible. The whole design is therefore a combination of cryptography, data publication, and social distribution of monitoring.
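That dependency chain can be made concrete with a toy state machine. This is a sketch under stated assumptions: `CHALLENGE_WINDOW`, the `PostedBatch` type, and the rule that a successful challenge slashes the whole bond are all hypothetical simplifications, and a real fraud proof is an onchain protocol rather than a string comparison.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    FINALIZED = "finalized"
    REVERTED = "reverted"


CHALLENGE_WINDOW = 100  # hypothetical window length, in L1 blocks


@dataclass
class PostedBatch:
    posted_at: int        # L1 block at which the batch was posted
    claimed_root: str     # state root claimed by the aggregator
    bond: int             # aggregator's stake at risk
    status: Status = Status.PENDING


def challenge(batch: PostedBatch, now: int, recomputed_root: str) -> int:
    """A watcher re-executes the batch from published data. Returns the slashed amount."""
    if batch.status is not Status.PENDING:
        return 0
    if now >= batch.posted_at + CHALLENGE_WINDOW:
        return 0  # too late: the window has closed
    if recomputed_root == batch.claimed_root:
        return 0  # claim matches re-execution: nothing to dispute
    batch.status = Status.REVERTED
    slashed, batch.bond = batch.bond, 0
    return slashed


def finalize(batch: PostedBatch, now: int) -> bool:
    """An unchallenged batch becomes final once the window has passed."""
    if batch.status is Status.PENDING and now >= batch.posted_at + CHALLENGE_WINDOW:
        batch.status = Status.FINALIZED
    return batch.status is Status.FINALIZED


# An invalid batch caught in time is reverted and the bond is slashed.
caught = PostedBatch(posted_at=1_000, claimed_root="0xbad", bond=50)
print(challenge(caught, now=1_050, recomputed_root="0xgood"), caught.status)

# The same invalid batch with no watcher in the window finalizes anyway.
missed = PostedBatch(posted_at=1_000, claimed_root="0xbad", bond=50)
print(finalize(missed, now=1_100), missed.status)
```

The second case is the honest-watcher assumption in miniature: the bond only deters fraud if someone actually re-executes the batch and challenges before the window closes.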

What role do aggregators play in zk‑rollups and proof aggregation?

In a zk-rollup, the aggregator role changes shape. The system still needs someone to collect transactions, form batches, and publish data commitments to L1. But instead of relying on a fraud challenge period, the rollup submits a validity proof showing that the batch transition was computed correctly.

That does not eliminate aggregation. It adds another layer of it.

A useful example is proof aggregation. StarkWare’s SHARP is explicitly a proof aggregator: it takes many proof statements, verifies and combines them recursively offchain, and ultimately submits only a final proof onchain. The reason this helps is the same reason transaction batching helps: onchain verification has a fixed cost, so combining many verifications into one object reduces the average cost per statement.
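The cost logic of recursive aggregation can be sketched without any real cryptography. In the toy below, a hash stands in for "a proof that attests to two child proofs"; it is not a proof system, and the pairwise tree layout is an assumption for illustration rather than a description of how SHARP arranges its recursion. `ONCHAIN_VERIFY_COST` is a hypothetical figure.

```python
import hashlib


def aggregate_pair(a: bytes, b: bytes) -> bytes:
    """Stand-in for a recursive proof covering a and b (NOT a real proof)."""
    return hashlib.sha256(b"agg" + a + b).digest()


def aggregate(proofs: list[bytes]) -> tuple[bytes, int]:
    """Combine proofs pairwise until one remains. Returns (final, rounds)."""
    if not proofs:
        raise ValueError("need at least one proof")
    layer = list(proofs)
    rounds = 0
    while len(layer) > 1:
        # Pair up neighbours; an odd leftover is carried up unchanged.
        nxt = [aggregate_pair(layer[i], layer[i + 1])
               for i in range(0, len(layer) - 1, 2)]
        if len(layer) % 2 == 1:
            nxt.append(layer[-1])
        layer = nxt
        rounds += 1
    return layer[0], rounds


ONCHAIN_VERIFY_COST = 500_000  # hypothetical gas per onchain verification

statements = [f"statement-{i}".encode() for i in range(8)]
final_proof, rounds = aggregate(statements)

print(f"offchain aggregation rounds: {rounds}")
print(f"onchain cost without aggregation: {len(statements) * ONCHAIN_VERIFY_COST:,}")
print(f"onchain cost with aggregation:    {ONCHAIN_VERIFY_COST:,}")
```

The extra work moves offchain into the aggregation rounds, while the onchain side pays for a single verification regardless of how many statements were combined.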

The same pattern appears in systems like Scroll, where the rollup workflow separates execution, data commitment, and proof generation/finalization. There, components such as chunk proposers, batch proposers, relayers, coordinators, provers, and aggregator provers cooperate so that block traces become chunk proofs, chunk proofs become batch proofs, and only then does the final batch get finalized on L1. The aggregator in this setting may be less about simple transaction collection and more about batch-level proof assembly and submission. But the invariant is still the same: take many lower-level events and represent them in one higher-level artifact that L1 can verify efficiently.

This is why “aggregator” can refer to slightly different things across rollup families. In optimistic designs, it often means the operator who batches and posts state commitments. In zk systems, it may also mean the service that aggregates proofs recursively. The common thread is not the exact implementation. The common thread is compression of many events into one verifiable onchain footprint.

Sequencer vs aggregator: what’s the difference and why it matters?

| Role | Orders? | Publishes to L1? | Main risk | Best for |
|---|---|---|---|---|
| Sequencer-only | Decides transaction order | No (local only) | Front-running and MEV | Low-latency UX |
| Aggregator-only | Does not order transactions | Yes; posts batches | Data withholding; backlog | Amortized L1 costs |
| Unified actor | Orders and posts | Yes; combined duty | Single choke point | Simplicity and efficiency |

These roles are often merged in practice, which is why the distinction is blurry. But conceptually they solve different problems.

The sequencer decides which transaction goes where and in what order. That gives users fast feedback and defines the execution order that applications care about. The aggregator decides how the resulting activity is packaged for L1. If the sequencer is the actor producing the rollup’s local timeline, the aggregator is the actor turning that timeline into a settlement artifact.

In some optimistic rollups, a single sequencer has priority access and exclusive rights to submit transactions to the onchain contract. Other participants may be able to validate or challenge, but the sequencer effectively controls ordering and publication timing. In that setup, the sequencer and aggregator are functionally fused. In other systems, batch posting may be permissionless or delegated to distinct modules such as a relayer or batch submitter.

This distinction matters because ordering power and publication power create different risks. Ordering power is where front-running, censorship, and MEV live most directly. Publication power is where data availability, backlog risk, and finality delays live. A single actor can possess both powers, but they should not be confused analytically.

That analytical distinction also helps when thinking about newer designs such as external builder sidecars. A system like Rollup Boost connects OP Stack-style sequencers to external builders while adding local fallback and validity checks against the local execution engine. Even there, the question remains: who is determining order, who is packaging outputs, and who is responsible if publication or liveness fails?

What centralization, censorship, and failure risks do aggregators introduce?

The core weakness of an aggregator-centric design is that efficiency naturally pulls toward centralization. It is operationally simpler to have one active batch submitter, one main sequencer, one relayer, or one proving coordinator. But that simplicity can create choke points.

The first choke point is censorship. If users must rely on a single aggregator to include their transactions, that actor can delay or refuse inclusion. Rollups try to soften this with force-inclusion paths and onchain data publication, but the user experience can still degrade sharply before those safety valves matter.

The second choke point is data withholding. If the aggregator posts only a summary and not the needed data, users may not be able to reconstruct state or withdraw safely. This is why publishing batch data on L1 is not an implementation detail but a security boundary.

The third is backlog and delayed hard finality. Research on batch-submitter failure modes in Arbitrum-style systems showed that when batches accumulate faster than they are posted to L1, transactions can linger in a soft-final state: visible on L2, but not yet hard-final on L1. Under certain conditions, this longer window can be abused by attacks that rely on rollback or delayed posting. The broader lesson is not that all rollups are broken. It is that the aggregator’s throughput, posting policy, and compression behavior can affect not just fees but what users should consider final.
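The backlog dynamic is simple queueing, and a toy simulation makes it visible. The rates below are hypothetical and the model ignores compression, fee spikes, and batch size limits; it only shows what happens when arrivals outpace posting capacity.

```python
def simulate_backlog(arrivals_per_tick: int, post_capacity_per_tick: int,
                     ticks: int) -> list[int]:
    """Track how many transactions are soft-final but not yet posted to L1."""
    backlog = 0
    history = []
    for _ in range(ticks):
        backlog += arrivals_per_tick                     # new L2 transactions
        backlog -= min(backlog, post_capacity_per_tick)  # what the submitter posts
        history.append(backlog)
    return history


# Hypothetical rates: posting keeps up in the first case, falls behind in the second.
print(simulate_backlog(arrivals_per_tick=80, post_capacity_per_tick=100, ticks=5))
print(simulate_backlog(arrivals_per_tick=120, post_capacity_per_tick=100, ticks=5))
```

When capacity exceeds arrivals the backlog stays at zero; when it does not, the unposted queue grows every tick, and so does the time a transaction spends in the soft-final state.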

There is also a more ordinary operational limit: the aggregator must continuously arbitrate between latency and efficiency. Posting batches more often gives faster L1 anchoring but worse amortization. Waiting longer improves cost efficiency but increases confirmation delay and can enlarge the backlog. There is no perfect setting independent of workload, fee market conditions, and user expectations.
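The latency side of that trade-off can be put next to the cost side in a small model. It assumes a steady arrival rate and transactions arriving uniformly within each posting interval, so the average wait for L1 anchoring is half the interval; all parameter values are hypothetical.

```python
def cadence_tradeoff(tx_per_second: float, interval_seconds: float,
                     fixed_cost: float, per_tx_data_cost: float) -> tuple[float, float]:
    """Return (average L1 cost per tx, average seconds until L1 anchoring)."""
    batch_size = tx_per_second * interval_seconds
    if batch_size <= 0:
        raise ValueError("rate and interval must be positive")
    cost_per_tx = fixed_cost / batch_size + per_tx_data_cost
    avg_wait = interval_seconds / 2  # uniform arrivals within the interval
    return cost_per_tx, avg_wait


# Hypothetical workload: 10 tx/s, fixed cost 60,000, data cost 100 per tx.
for interval in (6, 60, 600):
    cost, wait = cadence_tradeoff(10, interval, 60_000, 100)
    print(f"post every {interval:>3}s: {cost:>7.1f} per tx, {wait:>5.1f}s average wait")
```

Longer intervals push the per-transaction cost toward the pure data cost while the average wait grows linearly, which is why no single posting interval is best for every workload.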

How do EIP‑4844 blobs change aggregator responsibilities and data availability?

| Submission medium | Per-byte cost | Retention window | EVM access | Best for |
|---|---|---|---|---|
| Calldata | Higher | Permanent | Readable by EVM | Small critical data |
| Blobs (EIP-4844) | Lower | 18 days | Not readable by EVM | Large rollup batches |

Blobs sharpen an important distinction between execution permanence and availability window. Blob data is not accessible to the EVM and is pruned after about 4096 epochs, roughly 18 days. For rollups, that is acceptable because the goal is not eternal raw-byte storage inside the execution layer; the goal is a sufficient availability window for verification, fraud proof construction, and network recovery.

For the aggregator, this means the publication obligation becomes time-bounded but still real. The aggregator must ensure that the data is posted correctly, committed correctly through KZG commitments and versioned hashes, and made available during the retention window. Ethereum’s consensus and execution rules enforce limits on blob usage, including a separate blob gas market and a cap on the number of blobs per block. So an aggregator cannot simply dump arbitrary amounts of rollup data onto L1 without regard to network constraints. It is buying scarce data-availability bandwidth in a market designed specifically for this purpose.
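A few of these numbers can be checked directly. The sketch below derives the retention window from the 4096-epoch figure, estimates how many blobs a batch needs, and shows how a versioned hash is formed from a KZG commitment as specified in EIP-4844. Two things are assumptions: the 31 usable bytes per field element (a common packing choice, since each 32-byte element must stay below the field modulus) and the all-zero `placeholder_commitment`, which is not a valid KZG commitment and is used only to show the hash format.

```python
import hashlib
import math

SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32
RETENTION_EPOCHS = 4096            # pruning horizon mentioned in the text
FIELD_ELEMENTS_PER_BLOB = 4096     # EIP-4844 constant
VERSIONED_HASH_VERSION_KZG = b"\x01"

retention_days = RETENTION_EPOCHS * SLOTS_PER_EPOCH * SECONDS_PER_SLOT / 86_400


def blobs_needed(batch_bytes: int, usable_bytes_per_element: int = 31) -> int:
    """Blobs required for a batch, assuming 31 usable bytes per field element."""
    if batch_bytes <= 0:
        raise ValueError("batch_bytes must be positive")
    usable_per_blob = FIELD_ELEMENTS_PER_BLOB * usable_bytes_per_element
    return math.ceil(batch_bytes / usable_per_blob)


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Version byte followed by the last 31 bytes of SHA-256(commitment)."""
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


placeholder_commitment = bytes(48)  # 48 bytes like a KZG commitment, but NOT a valid one

print(f"retention window: {retention_days:.1f} days")
print(f"blobs for a 300,000-byte batch: {blobs_needed(300_000)}")
print(f"versioned hash: 0x{kzg_to_versioned_hash(placeholder_commitment).hex()}")
```

The versioned hash is what the execution layer sees; the blob bytes themselves live with the consensus layer for the retention window, which is why the aggregator's obligation is bounded in time but still has to be met correctly.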

This detail is easy to miss but conceptually important. Rollups do not become cheaper because L1 stopped caring about data. They become cheaper because L1 created a more suitable resource for the kind of data rollups need.

How does threshold aggregation (for example, 2‑of‑3 signing) reduce single‑point failure risk?

The aggregation idea also appears in signing and settlement flows, not only in batching transactions. When systems need a single authorization outcome without letting one party hold the entire signing key, they can use threshold signing. Cube Exchange is a concrete example: it uses a 2-of-3 threshold signature scheme for decentralized settlement, where the user, Cube Exchange, and an independent Guardian Network each hold one key share. No full private key is ever assembled in one place, and any two of the three shares are needed to authorize a settlement.

This is not the same thing as a rollup aggregator, but it illustrates the broader architectural logic. Instead of concentrating control in one actor that directly produces the final artifact, the system distributes pieces of authority and then aggregates them into one valid result. In rollups, what is aggregated is usually transactions, state commitments, or proofs. In threshold signing, what is aggregated is signing authority. The shared design instinct is to preserve a compact final output while reducing single-point-of-failure risk.
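The 2-of-3 threshold property itself can be illustrated with Shamir secret sharing. This is deliberately not a threshold signature scheme and not a description of Cube Exchange's implementation: the sketch reconstructs the secret in one place to show that any two shares suffice, which is exactly what a real threshold signing protocol avoids doing. The field prime and function names are choices made for this example.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime, used as the field modulus for this sketch


def split_2_of_3(secret: int) -> list[tuple[int, int]]:
    """Split a secret into three points on a random line through (0, secret)."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret out of range")
    slope = secrets.randbelow(PRIME)
    return [(x, (secret + slope * x) % PRIME) for x in (1, 2, 3)]


def recover(share_a: tuple[int, int], share_b: tuple[int, int]) -> int:
    """Interpolate the line through two shares and evaluate it at x = 0."""
    (x1, y1), (x2, y2) = share_a, share_b
    if x1 == x2:
        raise ValueError("need two distinct shares")
    inv = pow((x2 - x1) % PRIME, -1, PRIME)  # modular inverse (Python 3.8+)
    return (y1 * x2 - y2 * x1) * inv % PRIME


secret = secrets.randbelow(PRIME)
user_share, exchange_share, guardian_share = split_2_of_3(secret)

# Any pair of shares recovers the same value; a single share alone does not determine it.
print(recover(user_share, exchange_share) == secret)
print(recover(user_share, guardian_share) == secret)
print(recover(exchange_share, guardian_share) == secret)
```

In an actual threshold signature, the two participating parties would jointly produce a signature from their shares without ever computing the value that `recover` returns here.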

Key takeaways: what an aggregator does and why it matters

An aggregator exists because offchain execution is not enough by itself. A rollup needs a component that turns many L2 actions into a compact, checkable, and publishable L1 artifact.

That is the essential picture: the aggregator is the rollup’s packaging layer. It collects transactions, compresses them, posts data for availability, submits state commitments or proofs, and makes the economics of batching work. In optimistic rollups, its outputs must be challengeable. In zk-rollups, its outputs must be provable. In both cases, the same principle holds: scalability comes from representing many events with one onchain footprint without losing the ability to verify what happened.

If you remember one sentence tomorrow, remember this: an aggregator is where a rollup turns throughput into settlement.

How does this part of the crypto stack affect real-world usage?

Aggregators determine how quickly a rollup’s state becomes settled on L1, how cheap transactions are, and how hard it is to recover funds if an operator goes wrong. Before you fund or trade assets on networks that rely on rollups, check the aggregator’s publication, data‑availability, and finality properties; then perform your trade on Cube once you understand those trade‑offs.

- Look up the rollup’s publication medium: confirm whether it posts compressed calldata or uses EIP‑4844 blobs and note blob support (affects cost and retention window).

- Check the settlement model and timing: record the optimistic rollup dispute window or zk‑rollup proof cadence and any explicit withdrawal delays.

- Verify operator controls and safeguards: find docs on who can submit batches (sequencer vs. permissionless submitters), whether bonds/slashing exist, and whether force‑inclusion or recovery paths are available.

- Fund your Cube account and proceed: deposit fiat or supported crypto, select the network asset only after you accept its aggregator‑related delays/risks, and place your trade.

Frequently Asked Questions

How does an aggregator lower fees for rollup users?

By amortizing a single L1 publication cost across many L2 transactions: the aggregator groups transactions into a batch, compresses repetitive fields, and posts a compact commitment so the fixed overhead is paid once rather than per transaction, which lowers the average fee per user.

What is the difference between a sequencer and an aggregator?

A sequencer orders transactions and gives users fast L2 confirmations, while an aggregator’s job is to package that ordered activity into a compact, publishable L1 artifact (batches, commitments, or proofs); the two roles are often merged in practice but are conceptually distinct.

What happens if an aggregator withholds data or censors transactions?

If an aggregator withholds data or censors transactions, users can face stalled progress or loss of recoverability; optimistic designs rely on onchain publication and force‑inclusion/withdrawal paths to mitigate censorship and data‑withholding risks, but those mechanisms do not fully eliminate the problem.

How do EIP-4844 blobs change what an aggregator does?

Blobs (EIP-4844) give aggregators a cheaper, time‑bounded place to publish rollup data than calldata, improving the cost curve for submissions, but they also make the aggregator’s publication obligation explicitly time‑limited because blob bytes are pruned after a retention window (~18 days), so aggregators must ensure data is posted and committed correctly during that window.

What security assumptions apply to aggregators in optimistic rollups?

In optimistic rollups the aggregator posts state transitions provisionally and security depends on at least one honest watcher being able to access the published data and challenge invalid batches during the dispute window; bonds and slashing can change incentives but do not remove the need for effective monitoring and data availability.

What does an aggregator do in a zk‑rollup?

In zk‑rollups the aggregator still batches and publishes data but often also coordinates proof generation or proof aggregation so that many executions or proofs are combined into a single onchain verification, reducing per‑batch verification costs (for example, recursive proof aggregation patterns like SHARP combine many proofs offchain before submitting a final proof).

Do aggregators create centralization risk?

Yes - practical efficiency tends to centralize aggregation (one main batch submitter or prover coordinator is simpler to operate), but that creates choke points for censorship, withholding, and single‑point operational failure; designs try to mitigate this with permissionless submission paths, bonds, or multi‑party schemes, though decentralization remains an open operational trade‑off.

What data must an aggregator publish on L1?

Aggregators must publish not just a state root but the transaction data or enough data commitments to allow anyone to reconstruct and verify the transition; without that onchain availability, summaries are useless for recovery or dispute because third parties cannot check or reconstruct the state changes.

How does batch posting cadence affect cost and finality?

Posting cadence is a trade‑off: submitting batches more often reduces L1 anchoring latency but worsens amortization (higher average fees), while waiting to aggregate larger batches improves cost efficiency but increases confirmation delay, backlog risk, and the window during which soft‑finalized state could be problematic.
