What is a Shared Sequencer?

Learn what a shared sequencer is, why multiple rollups use one ordering layer, how it works, and the tradeoffs around composability and security.

Introduction

Shared sequencing is the idea that multiple rollups use the same transaction-ordering layer instead of each rollup running its own separate sequencer. The stakes are higher than the phrase first suggests. In a rollup-centric world, you can scale by creating many execution environments, but the moment those environments need to interact, the system starts to feel fragmented again: different clocks, different mempools, different trust assumptions, and no obvious way to make a transaction on one rollup depend safely on a transaction on another.

That is the puzzle a shared sequencer is trying to solve. A rollup does not only need execution and settlement; it also needs someone, or something, to answer a simple but crucial question: in what order did transactions happen? If every rollup answers that question independently, each rollup can be fast in isolation, but coordination across rollups becomes awkward and often unsafe. If several rollups share the same ordering layer, they can at least agree on a common sequence of events.

The core intuition is simple: execution can be separate while ordering is shared. A shared sequencer does not have to run every rollup’s state transition itself. In many designs, it only orders opaque transaction data, commits that ordering, and lets each rollup execute its own transactions later according to its own rules. That separation is what makes the idea plausible at all. Without it, a single shared system would need to understand and execute every rollup’s logic, which would be far harder to scale and much harder to keep sovereign.

Why do rollups need a shared sequencer?

| Model | Control | Cross-rollup coordination | Main dependency risk | Best for |
|---|---|---|---|---|
| Per-rollup sequencer | Max local control | Fragmented coordination | Single-operator risk | Sovereign rollups, custom policies |
| Shared sequencer | Less local control | Common ordering domain | Shared-layer dependency | Cross-rollup composability, MEV scale |

A normal sequencer already plays an outsized role in a rollup. It accepts transactions, decides their order, gives users fast confirmations, and later ensures the ordered data is posted somewhere the rollup can rely on. In many deployed systems, this sequencer is still a single operator or a tightly controlled service. That is operationally convenient, but it creates a chokepoint: outages can halt the chain, censorship becomes possible, and external systems often end up trusting offchain promises made by one actor.

This is not just a theoretical concern. Operational guidance for OP Stack chains explicitly distinguishes between sequencer downtime and failures to publish sequenced transactions to L1. In those systems, users can bypass a malfunctioning sequencer by sending transactions through an L1 contract, but the existence of that bypass also reveals the problem: the normal path depends on a sequencer being up and behaving correctly. Real incidents have shown how brittle that can be. Analyses of centralized sequencer outages on Arbitrum describe how a surge in traffic overwhelmed the posting pipeline and caused the sequencer to stall. The lesson is not merely that one implementation had a bug. It is that sequencing is a critical coordination point, and centralizing it concentrates both technical and economic risk.

Now add a second problem: fragmentation. Suppose Rollup A and Rollup B each have their own sequencer. A user wants to atomically sell an asset on A and buy another on B, or a bridge wants a burn on one chain and a mint on another to be coordinated tightly, or an arbitrageur wants a cross-rollup trade to either fully happen or not happen at all. If the two rollups have separate ordering domains, there is no single place where both actions can be ordered together. Each sequencer sees only its own local mempool and acts on its own local clock.

That creates two consequences. First, composability degrades: interacting across rollups starts to resemble sending messages between independent systems rather than composing contracts in one shared environment. Second, latency and uncertainty become part of the economic logic. Searchers, bridges, market makers, and applications now have to reason about timing differences between sequencers, publication delays, and the possibility that one leg of a strategy executes while the other does not.

A shared sequencer is meant to attack both problems at once. It can replace many isolated sequencers with a common ordering service, and if that service is decentralized, it can reduce reliance on a single operator. That is why projects in this area often emphasize censorship resistance, fast confirmations, and cross-rollup composability together. Those are not three unrelated features. They all flow from controlling one common ordering layer rather than many isolated ones.

How does ordering differ from execution in a shared sequencer?

| Layer | Primary job | Data it handles | Who verifies | Effect on rollups |
|---|---|---|---|---|
| Ordering | Decide transaction order | Opaque (rollup_id, tx_bytes) entries | Sequencer consensus | Provides a common clock, not state |
| Data availability | Publish retrievable data | Committed blobs/namespaces | DA samplers/validators | Lets rollups fetch the ordered data |
| Execution | Compute state transitions | Ordered data for its own rollup | Rollup execution nodes | Preserves rollup sovereignty |

The easiest mistake is to imagine a shared sequencer as a super-chain that executes everything for everyone. That is usually not what is meant.

The fundamental separation is between ordering, data availability, and execution. Ordering answers which transaction data came before which other transaction data. Data availability answers whether that ordered data has actually been published and can be retrieved. Execution answers what state transition each rollup computes from that data. These are different jobs, and shared sequencer designs become much easier to reason about once you keep them separate.

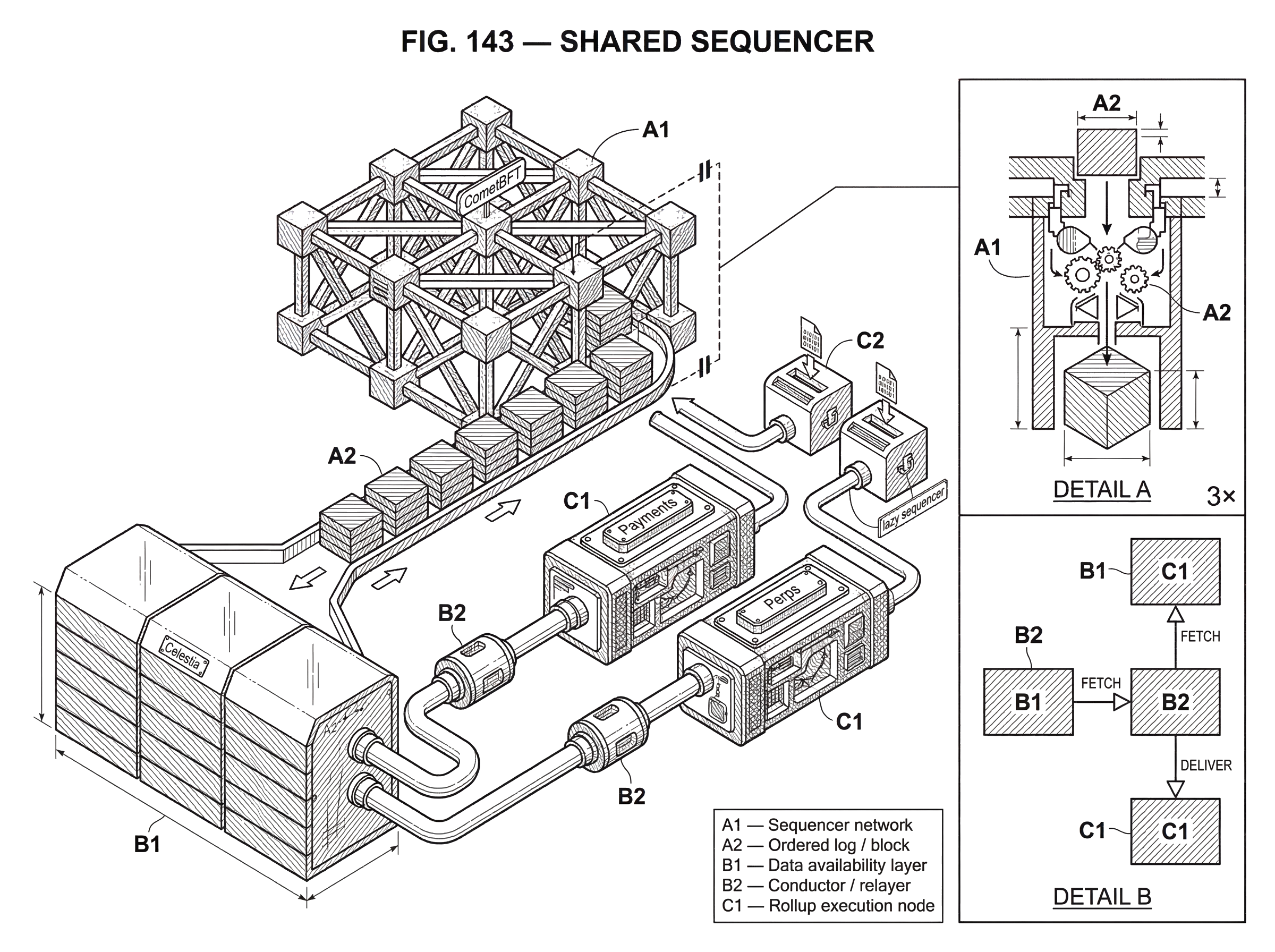

Astria’s architecture makes this especially clear. Its sequencer network comes to consensus on ordered transactions represented as (rollup_id, tx_bytes). That is an important detail. The sequencer does not need to understand the internals of each rollup transaction. It only needs to agree on inclusion and order of opaque bytes associated with a rollup identifier. Then the ordered data is published through Celestia for availability, and rollup-specific software later fetches, verifies, and derives the transactions relevant to that rollup before handing them to the execution node.

Astria calls this a lazy sequencer design: the data is sequenced and committed first, while execution is delayed and left to the rollup. The name matters because it captures the design choice precisely. The sequencer is not lazy in the sense of being slow; it is lazy in the sense of refusing to eagerly execute application logic at the consensus layer. That choice removes a major bottleneck. A shared ordering network can serve heterogeneous rollups without embedding all their transaction formats and state machines into one consensus protocol.

This is the compression point for the whole topic: a shared sequencer scales across many rollups only if it can remain mostly ignorant of their execution logic. Once you see that, many design choices make sense. Opaque bytes. Rollup-specific conductors or relayers. External data-availability layers. Rollup sovereignty over execution. They are all consequences of the same principle.
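To make the lazy-sequencing idea concrete, here is a minimal Python sketch of the sequencer side. It assumes a simple first-come, first-served ordering rule and a SHA-256 commitment over length-prefixed entries; the names `order_block` and `commit_block` are hypothetical, and a real network such as Astria agrees on each block through consensus (CometBFT) rather than in one function. The point is only that the sequencer treats every entry as an opaque `(rollup_id, tx_bytes)` pair and never decodes the payload.

```python
import hashlib
from typing import List, Tuple

# An entry is an opaque payload tagged with its destination rollup.
Entry = Tuple[str, bytes]


def order_block(mempool: List[Entry], max_entries: int) -> List[Entry]:
    """Pick and order entries for the next block.

    Assumed rule: first come, first served. Payload bytes are never parsed,
    so any rollup's transaction format can be carried unchanged.
    """
    return list(mempool[:max_entries])


def commit_block(height: int, entries: List[Entry]) -> str:
    """Return a SHA-256 commitment to the block height and the ordered entries.

    Each field is length-prefixed so the serialization parses unambiguously,
    meaning two different entry lists never hash the same byte string.
    """
    h = hashlib.sha256()
    h.update(height.to_bytes(8, "big"))
    for rollup_id, tx_bytes in entries:
        rid = rollup_id.encode("utf-8")
        h.update(len(rid).to_bytes(4, "big"))
        h.update(rid)
        h.update(len(tx_bytes).to_bytes(4, "big"))
        h.update(tx_bytes)
    return h.hexdigest()


if __name__ == "__main__":
    mempool = [
        ("payments-rollup", b"\x01transfer..."),  # format known only to payments
        ("perps-rollup", b"\xff\x00open-long"),   # format known only to perps
        ("payments-rollup", b"\x01refund..."),
    ]
    block = order_block(mempool, max_entries=10)
    print(commit_block(1, block))
```

Swapping any two entries changes the commitment, which is what lets every rollup reading the block agree on one sequence without the sequencer ever understanding what the bytes mean.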

How does a shared sequencer order and publish transactions?

At a high level, a shared sequencer is a network that accepts transaction data destined for many rollups, reaches consensus on an ordering, and produces an ordered log that each rollup can consume. The exact implementation varies, but the mechanism usually has the same shape.

Users or applications submit transaction payloads associated with a target rollup. The shared sequencer network then decides inclusion and order. In Astria’s case, the sequencer network is a decentralized set of nodes using CometBFT consensus. The output is not “the next state” of every rollup. The output is closer to a globally agreed ordering of messages.

After ordering, that data must become available. Some designs combine sequencing and availability more tightly; others separate them. Astria explicitly publishes sequenced data to Celestia. That means the trust and liveness story is split across layers: the sequencer network is responsible for ordering, while the DA layer is responsible for making the ordered data retrievable. This separation can be powerful because it lets a specialized DA layer handle publication and sampling, but it also means availability depends on the guarantees of that DA layer rather than on the sequencer alone.

Finally, each rollup consumes the shared output. Astria’s Conductor illustrates the pattern: it fetches the sequenced data, verifies it, extracts the transactions relevant to a particular rollup, and forwards them to that rollup’s execution node, all while remaining agnostic to the rollup’s transaction format and state transition function. In effect, the shared sequencer produces a common ordered tape, and each rollup reads its own track from that tape.

A concrete example makes this easier to see. Imagine two rollups sharing one sequencer: a payments rollup and a perps rollup. Users on both submit transactions during the same short interval. The shared sequencer receives payloads like “payments-rollup, bytes…” and “perps-rollup, bytes…”, mixes them into one ordered block, and reaches consensus on that order. That ordered block is then posted to a DA layer. The payments rollup later reads the block, ignores entries for the perps rollup, derives only its own transactions, and executes them according to its own VM and fee rules. The perps rollup does the same for its own entries. Both rollups remain separate execution environments, but they now share a common ordering event. That shared event is what makes tighter coordination possible.
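The payments/perps example can be sketched from the rollup side. This minimal Python sketch assumes the block arrives with a SHA-256 commitment computed the same way by the sequencer and the reader. `derive_for_rollup` is a hypothetical stand-in for a Conductor-like component, and the two toy state machines (`PaymentsRollup`, `PerpsRollup`) and their `name:amount` payload format are invented for illustration. Each rollup verifies the shared block, keeps only its own entries in the shared order, and applies its own rules.

```python
import hashlib
from typing import Dict, List, Tuple

Entry = Tuple[str, bytes]  # (rollup_id, tx_bytes)


def commitment(height: int, entries: List[Entry]) -> str:
    """Assumed commitment scheme: SHA-256 over height plus length-prefixed fields."""
    h = hashlib.sha256(height.to_bytes(8, "big"))
    for rollup_id, tx in entries:
        for part in (rollup_id.encode("utf-8"), tx):
            h.update(len(part).to_bytes(4, "big"))
            h.update(part)
    return h.hexdigest()


def derive_for_rollup(height: int, entries: List[Entry], expected: str,
                      rollup_id: str) -> List[bytes]:
    """Verify the block against its commitment, then keep only this rollup's track."""
    if commitment(height, entries) != expected:
        raise ValueError("block does not match the sequencer's commitment")
    return [tx for rid, tx in entries if rid == rollup_id]


class PaymentsRollup:
    """Toy rule: 'name:amount' credits an account; non-positive amounts are ignored."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}

    def execute(self, txs: List[bytes]) -> None:
        for tx in txs:
            name, amount = tx.decode().split(":")
            if int(amount) > 0:
                self.balances[name] = self.balances.get(name, 0) + int(amount)


class PerpsRollup:
    """Toy rule: 'name:size' adjusts a position; results beyond +/-100 are rejected."""
    LIMIT = 100

    def __init__(self) -> None:
        self.positions: Dict[str, int] = {}

    def execute(self, txs: List[bytes]) -> None:
        for tx in txs:
            name, size = tx.decode().split(":")
            new_position = self.positions.get(name, 0) + int(size)
            if abs(new_position) <= self.LIMIT:
                self.positions[name] = new_position


if __name__ == "__main__":
    block = [("payments", b"alice:5"), ("perps", b"bob:60"),
             ("payments", b"carol:-3"), ("perps", b"bob:50")]
    root = commitment(7, block)
    pay, perps = PaymentsRollup(), PerpsRollup()
    pay.execute(derive_for_rollup(7, block, root, "payments"))
    perps.execute(derive_for_rollup(7, block, root, "perps"))
    print(pay.balances, perps.positions)
```

Both rollups read the same block at the same height, yet each rejects different transactions under its own rules; the shared part is only the ordering event.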

How does a shared sequencer enable better cross‑rollup composability?

| Ordering model | Cross-rollup atomicity | Latency uncertainty | Implementation complexity |
|---|---|---|---|
| Independent sequencers | None; relies on messaging | High | Per-rollup infra and bridges |
| Shared ordering only | Makes atomicity feasible | Reduced | Sequencer plus DA integration |
| Shared ordering + bundling | Atomic bundle inclusion | Minimal | High (bundling, protocol, governance) |

The strongest claim made for shared sequencers is not merely that they save infrastructure. It is that they create a common clock for multiple rollups.

When rollups sequence independently, cross-rollup coordination must be reconstructed afterward through messages, proofs, bridges, retries, and timeouts. When they share ordering, some coordination becomes a property of the ordering layer itself. If two related transactions can be submitted into the same shared sequencing domain, the network can decide their relative order once rather than asking two sequencers to make compatible decisions separately.

That is why teams often connect shared sequencing with atomic cross-rollup composability. Astria’s repository describes atomic cross-rollup composability as one of the promised outcomes of its shared sequencer network. Rome Protocol frames the same idea even more explicitly, proposing an “Atomic Composability Layer” for related transactions across rollups, such as bridge mint actions and arbitrage. Espresso likewise emphasizes fast confirmations as a foundation for applications that span its connected chains.

Still, this is the place to be careful. The phrase “shared sequencer” does not automatically imply full atomic execution in every design. Shared ordering is a precondition for some kinds of atomic coordination, but the exact guarantee depends on additional machinery: how transactions are bundled, whether validity conditions can be checked before commitment, how rollups interpret shared ordering, and whether failure on one rollup can cancel or invalidate related actions on another. The public materials for specific systems still leave some of these details unresolved.

So the right way to think about it is narrower and more accurate: a shared sequencer creates the conditions under which cross-rollup atomicity becomes much more feasible. It does not, by itself, solve every execution and settlement edge case.
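The gap between atomic inclusion and atomic execution is easy to see in code. Below is a minimal Python sketch that assumes a byte-capacity block and a hypothetical all-or-nothing `include_bundle` rule; the per-rollup validity rules are toy stand-ins. The shared sequencer guarantees that both legs land in the same block or neither does, but each rollup still judges its own leg independently, so one leg can fail while the other succeeds unless further machinery links them.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Entry = Tuple[str, bytes]  # (rollup_id, tx_bytes)


@dataclass
class SharedBlock:
    capacity_bytes: int
    entries: List[Entry] = field(default_factory=list)

    def used_bytes(self) -> int:
        return sum(len(tx) for _, tx in self.entries)

    def include_bundle(self, bundle: List[Entry]) -> bool:
        """All-or-nothing inclusion: every leg is appended in order, or none is."""
        size = sum(len(tx) for _, tx in bundle)
        if self.used_bytes() + size > self.capacity_bytes:
            return False
        self.entries.extend(bundle)
        return True


def execute_block(block: SharedBlock,
                  rules: Dict[str, Callable[[bytes], bool]]) -> List[Tuple[str, bool]]:
    """Each rollup applies its own validity rule to its own entries, independently."""
    return [(rid, rules[rid](tx)) for rid, tx in block.entries]


if __name__ == "__main__":
    seller_balance_on_a = 10  # toy state on rollup A
    rules = {
        "rollup-a": lambda tx: int(tx.split(b":")[1]) <= seller_balance_on_a,
        "rollup-b": lambda tx: True,
    }
    block = SharedBlock(capacity_bytes=64)
    bundle = [("rollup-a", b"sell:30"), ("rollup-b", b"buy:30")]
    print(block.include_bundle(bundle))  # both legs included together
    print(execute_block(block, rules))   # yet leg A fails while leg B succeeds
```

Closing that gap would require something the sketch deliberately omits, such as checking validity before commitment or letting a failure on one rollup invalidate the related leg on the other.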

When should developers and users choose a shared sequencer?

For rollup teams, the appeal starts with outsourcing a hard problem. Running a good sequencer is not trivial. It must stay online, handle bursts of demand, price inclusion, publish data reliably, and avoid becoming a censorship or trust bottleneck. If many rollups can share a decentralized sequencing layer, each rollup can focus more on execution and application design rather than rebuilding ordering infrastructure from scratch.

That is the sense in which a shared sequencer can offer economies of scale. Rome’s materials make this argument directly: isolated sequencers fragment liquidity and duplicate infrastructure, while a shared sequencer can improve coordination and MEV search by removing uncertainty across multiple sequencers. Even if one does not accept every product claim, the basic mechanism is sound. A common ordering venue reduces the number of independent latency domains that participants must price into their strategies.

For users, the benefits are often indirect: faster confirmations, simpler UX, and less hidden dependence on a single operator. But there is also a more concrete user-experience problem: if the sequencing layer is a distinct network, do users need to hold a separate token and wallet just to get their transactions ordered?

Astria’s Composer exists precisely because this is awkward. Its sequencing layer can be used directly by sophisticated users who want more control over ordering, but ordinary users can instead send transactions through the rollup path, where an operator-run Composer sidecar bundles them and underwrites sequencing costs. The interesting point is not the product detail itself. It is what it reveals about shared sequencers as infrastructure: once sequencing becomes its own shared layer, fee payment and order-flow routing become design problems in their own right. A shared sequencer is not only a consensus design. It is also a market design.
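The fee-underwriting side can be sketched as a small market-design problem. The following Python sketch is hypothetical and is not Astria’s Composer API: `ComposerSidecar`, the flat `base_fee`, and the per-byte fee are assumptions made for illustration. It shows an operator collecting user transactions that carry no sequencing-layer fee, wrapping them into one submission, and paying that fee from its own budget, with a simple policy of holding transactions back when the budget runs out.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Entry = Tuple[str, bytes]  # (rollup_id, tx_bytes)


@dataclass
class ComposerSidecar:
    """Hypothetical operator-run sidecar that batches user txs and pays sequencing fees."""
    rollup_id: str
    operator_budget: int   # operator's balance of the sequencing fee token (assumed units)
    base_fee: int = 100    # assumed flat fee per submission
    fee_per_byte: int = 1  # assumed per-byte fee
    pending: List[bytes] = field(default_factory=list)

    def submit(self, tx: bytes) -> None:
        """Users hand over rollup transactions without touching the sequencing token."""
        self.pending.append(tx)

    def flush(self) -> Optional[Tuple[List[Entry], int]]:
        """Wrap pending txs into one submission and pay its fee from the operator budget."""
        if not self.pending:
            return None
        fee = self.base_fee + self.fee_per_byte * sum(len(tx) for tx in self.pending)
        if fee > self.operator_budget:
            return None  # assumed policy: hold txs back until the operator tops up
        payload = [(self.rollup_id, tx) for tx in self.pending]
        self.operator_budget -= fee
        self.pending = []
        return payload, fee


if __name__ == "__main__":
    composer = ComposerSidecar(rollup_id="payments-rollup", operator_budget=1_000)
    for tx in (b"alice->bob:5", b"carol->dan:2", b"erin->frank:9"):
        composer.submit(tx)
    print(composer.flush(), composer.operator_budget)
```

Because the base fee is paid once per submission rather than once per user transaction, batching amortizes cost; what happens when the budget runs out is exactly the kind of policy choice that turns sequencing into a market-design problem.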

This dual-path model also hints at the kinds of users a shared sequencer serves. Some users care deeply about exact ordering and may submit directly. Others are order-agnostic and care more about convenience. A shared sequencer can support both, but not with the same interface or guarantees.

Why does data availability matter for shared sequencers?

Many shared sequencer discussions quickly lead to data availability because ordering without retrievable data is not enough. If a network says “this is the order,” but the underlying data is unavailable, rollup nodes cannot reconstruct what they are supposed to execute.

That is why modular stacks often pair shared sequencing with an external DA layer such as Celestia. Celestia’s role is not to decide rollup execution. It orders blobs and keeps them available while execution lives above it. Its use of data availability sampling and namespaced Merkle trees matters because a shared sequencer serving many rollups needs a practical way for each rollup to retrieve and verify only its own data rather than downloading everyone else’s. The namespace mechanism is especially relevant here: multiple applications can share a DA layer while selectively fetching their own data.
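The namespace idea can be illustrated with a simplified namespaced Merkle tree. This Python sketch is in the spirit of Celestia’s NMT but is not its specification: it assumes variable-length byte-string namespaces, its own hash prefixes, and a local tree traversal instead of the namespace proofs a real light node would request. Every node records the smallest and largest namespace beneath it, so a reader looking for one namespace can skip any subtree whose range excludes it.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class NmtNode:
    min_ns: bytes
    max_ns: bytes
    digest: bytes
    left: Optional["NmtNode"] = None
    right: Optional["NmtNode"] = None
    data: Optional[bytes] = None  # set only on leaves


def _prefixed(b: bytes) -> bytes:
    return len(b).to_bytes(2, "big") + b


def make_leaf(ns: bytes, data: bytes) -> NmtNode:
    digest = hashlib.sha256(b"\x00" + _prefixed(ns) + data).digest()
    return NmtNode(ns, ns, digest, data=data)


def make_parent(l: NmtNode, r: NmtNode) -> NmtNode:
    # The parent hash commits to both children's namespace ranges and digests.
    body = b"".join(_prefixed(n.min_ns) + _prefixed(n.max_ns) + n.digest for n in (l, r))
    digest = hashlib.sha256(b"\x01" + body).digest()
    return NmtNode(min(l.min_ns, r.min_ns), max(l.max_ns, r.max_ns), digest, l, r)


def build_tree(leaves: List[Tuple[bytes, bytes]]) -> NmtNode:
    """Sort leaves by namespace (stable, so per-namespace order is kept) and hash upward."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [make_leaf(ns, d) for ns, d in sorted(leaves, key=lambda item: item[0])]
    while len(level) > 1:
        nxt = [make_parent(level[i], level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2 == 1:
            nxt.append(level[-1])  # an unpaired node is promoted unchanged
        level = nxt
    return level[0]


def fetch_namespace(root: NmtNode, ns: bytes) -> Tuple[List[bytes], int]:
    """Return this namespace's data in order, plus how many nodes were visited."""
    found: List[bytes] = []
    visited = 0
    stack = [root]
    while stack:
        node = stack.pop()
        visited += 1
        if ns < node.min_ns or ns > node.max_ns:
            continue  # the whole subtree belongs to other namespaces
        if node.data is not None:
            found.append(node.data)
        else:
            stack.append(node.right)  # push right first so the left side is read first
            stack.append(node.left)
    return found, visited


if __name__ == "__main__":
    leaves = [(b"perps", b"p1"), (b"pay", b"a1"), (b"pay", b"a2"), (b"perps", b"p2"),
              (b"dex", b"d1"), (b"dex", b"d2"), (b"dex", b"d3"), (b"dex", b"d4")]
    root = build_tree(leaves)
    print(root.digest.hex(), fetch_namespace(root, b"pay"))
```

In the sample, the reader for the `pay` namespace never descends into the subtree that holds only `dex` data, which is the property that lets many rollups share one DA layer while each retrieves only its own entries.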

This pairing creates a useful division of labor. The shared sequencer establishes a common order. The DA layer makes the ordered data retrievable. The rollup executes. Mechanically, that is elegant.

But it also creates layered assumptions. If a system sequences through one network and publishes through another, its guarantees are only as strong as the composition of both. Astria’s own documentation is explicit on this point: it sequences and commits data while relying on Celestia to publish it for availability. So when evaluating a shared sequencer, one should ask not only “who orders?” but also “where is the ordered data published, and what happens if that layer fails?”

What are the main risks and hidden assumptions of shared sequencers?

The most common misunderstanding is that a shared sequencer is obviously better for everyone. It is not that simple.

The first hard part is economic security and decentralization. Many shared sequencer pitches rely on the idea that one decentralized network can replace many centralized sequencers. That can be true in principle, but a decentralized sequencing network still needs an operator model, incentives, Sybil resistance, and some credible source of security. Astria’s materials say its network is permissionless to join, but the documentation reviewed here does not fully specify the governance and participation model. Rome’s materials make the issue explicit from another angle, arguing that shared sequencers need a very large amount of economic stake to securely deliver atomic composability. The exact number is debatable, but the structure of the problem is real: if many rollups depend on one sequencing layer, that layer becomes a high-value target and must be secured accordingly.

The second hard part is outage and recovery behavior. A shared sequencer can remove the single-operator risk of one rollup’s sequencer, but it also creates shared infrastructure. If many rollups depend on one ordering network, failures can become correlated. Centralized sequencer outages already show how disruptive a sequencing bottleneck can be. A shared sequencer changes who bears the risk and how it is distributed; it does not abolish liveness risk.
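A little arithmetic shows what “correlated” means here. This Python sketch assumes, purely for illustration, that each per-rollup sequencer fails independently with probability `p` during some window, while a single shared sequencer fails with probability `q` and takes every connected rollup down at once. The parameter values are invented, not measurements of any real network.

```python
from math import comb
from typing import List


def independent_outages(n: int, p: float) -> List[float]:
    """P(exactly k of n rollups down) when each has its own, independently failing sequencer."""
    return [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


def shared_outages(n: int, q: float) -> List[float]:
    """P(exactly k of n rollups down) when one shared sequencer fails with probability q."""
    dist = [0.0] * (n + 1)
    dist[0] = 1 - q
    dist[n] += q  # a shared failure hits all n rollups together
    return dist


def expected_down(dist: List[float]) -> float:
    return sum(k * pk for k, pk in enumerate(dist))


if __name__ == "__main__":
    n, p = 10, 0.01
    ind, shared = independent_outages(n, p), shared_outages(n, q=p)
    print("expected rollups down:", expected_down(ind), expected_down(shared))
    print("P(all rollups down):", ind[n], shared[n])
```

With `q` equal to `p`, both designs have the same expected number of rollups down, but the chance that all ten stall at once jumps from about 10^-20 to 1%. The shared design trades many small independent failures for rarer but system-wide ones; whether that is a good trade depends on how much smaller `q` can be made than `p`.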

The third hard part is what atomicity really buys you. Shared sequencing is often sold partly on MEV and arbitrage benefits, but recent research cautions against simplistic claims. A paper modeling arbitrage under atomic execution across two CPMM pools finds that switching to atomic execution does not always improve profits and can even produce losses in some scenarios. The broader lesson is subtle but important: better coordination does not automatically mean larger extractable value for every participant. Atomicity changes the game; it does not guarantee that every strategy becomes more profitable.
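The arithmetic behind an atomic two-pool arbitrage shows why atomicity alone does not settle profitability. This Python sketch uses the standard constant-product (x * y = k) swap formula with an assumed 0.3% fee and invented pool reserves; it does not reproduce the cited paper’s model. Even when both legs are guaranteed to execute together, the result depends on trade size, fees, and reserves, and an oversized trade turns a real price gap into a loss.

```python
from typing import Tuple

Pool = Tuple[float, float]  # (reserve of X, reserve of Y)


def swap_out(reserve_in: float, reserve_out: float, amount_in: float,
             fee: float = 0.003) -> float:
    """Constant-product output: solves
    (reserve_in + effective_in) * (reserve_out - out) = reserve_in * reserve_out."""
    effective_in = amount_in * (1 - fee)
    return reserve_out * effective_in / (reserve_in + effective_in)


def atomic_round_trip(pool_a: Pool, pool_b: Pool, dx: float, fee: float = 0.003) -> float:
    """Sell dx of X for Y on pool A, then sell all that Y for X on pool B; return net X."""
    y_received = swap_out(pool_a[0], pool_a[1], dx, fee)
    x_back = swap_out(pool_b[1], pool_b[0], y_received, fee)
    return x_back - dx


if __name__ == "__main__":
    pool_a = (1_000.0, 2_200.0)  # X is priced at about 2.2 Y here
    pool_b = (1_000.0, 2_000.0)  # X is priced at about 2.0 Y here
    for dx in (1, 10, 50, 100, 500):
        print(dx, round(atomic_round_trip(pool_a, pool_b, dx), 3))
```

Small trades capture part of the gap, while larger ones push both pools past equilibrium and lose money, even though execution of both legs is guaranteed in this model.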

The fourth hard part is preserving rollup sovereignty. Shared sequencing is attractive partly because rollups want coordination without surrendering their own execution rules. Lazy sequencing helps here by keeping the sequencer agnostic to transaction format and state transition logic. But sovereignty is never free. The more powerful the shared layer becomes, the more questions arise about who controls upgrades, fee policy, admission rules, ordering policy, and interoperability standards.

Shared sequencer vs per-rollup sequencer: what are the tradeoffs?

A shared sequencer is best understood as a specialized kind of sequencer, not a replacement for the sequencer concept itself. Every rollup still needs ordering. The difference is whether that ordering is local to the rollup or provided by a common service used by many rollups.

That means the comparison is not “sequencer versus shared sequencer.” It is “per-rollup sequencer versus shared sequencing domain.” A per-rollup sequencer maximizes local control and minimizes external dependency, but it fragments coordination. A shared sequencer improves coordination and can centralize security effort into one layer, but it adds shared dependency and a more complicated economic and governance surface.

This also explains why shared sequencers are appearing across different architectures rather than belonging to one chain family. Astria uses a CometBFT-based sequencer network with Celestia for DA. Espresso presents itself as a decentralized base layer for rollups, with integrations spanning different rollup stacks. Rome proposes using Solana validators and smart contracts as the sequencing substrate. The common pattern is not one consensus engine or one settlement chain. The common pattern is the architectural move: multiple execution environments, one ordering layer.

Conclusion

A shared sequencer is a transaction-ordering layer used by multiple rollups at once. Its purpose is to replace isolated sequencing domains with a common one, so rollups can get faster coordination, better foundations for cross-rollup composability, and less dependence on a single operator per chain.

The idea works because ordering does not have to imply execution. A shared sequencer can order opaque transaction data for many rollups, publish that data through a DA layer, and let each rollup execute its own state transitions independently. That separation is what makes shared sequencing both scalable and compatible with rollup sovereignty.

The promise is real, but so are the assumptions. Shared sequencing depends on the security of the sequencing network, often on an external DA layer, and on additional machinery if it wants to offer true cross-rollup atomicity. The shortest way to remember it is this: a shared sequencer gives many rollups one clock, but not automatically one state machine.

How does this part of the crypto stack affect real-world usage?

Shared sequencers change how cross‑rollup trades settle and how quickly deposits become final. Before you fund, trade, or rely on an asset tied to a shared sequencing domain, verify the sequencing and data‑availability guarantees and then use Cube Exchange to fund and execute your position.

- Identify the asset and its sequencing domain. Read the project docs to confirm which shared sequencer and which DA layer (e.g., Celestia) the rollup uses and note any named namespaces or Composer/sidecar actors.

- Verify finality and publication behavior. Find the sequencer’s ordering rule, the DA publication path, and any L1 fallback or timeout thresholds you must wait for before a cross‑rollup leg is considered final.

- Fund your Cube account with the correct token and network. Pick the canonical asset/network pair (check the rollup’s deposit path), then wait for the chain‑specific confirmation or checkpoint the project specifies.

- Place your trade on Cube using an execution type that matches timing risk. Use limit or post‑only orders when ordering certainty matters; use a market order only when you accept immediate fill and potential cross‑rollup timing exposure.

- Monitor post‑trade publication and recovery options. Watch for DA publication delays or sequencer stalls and be ready to follow the project’s recovery path (L1 submission or timeout procedures) if needed.

Frequently Asked Questions

A shared sequencer provides one common ordering layer used by many rollups instead of each rollup running its own local sequencer; execution remains rollup-specific so the sequencer orders opaque payloads (e.g., (rollup_id, tx_bytes)) and does not itself compute per-rollup state transitions.

No - most shared‑sequencer designs sequence opaque transaction bytes and defer execution to each rollup (Astria’s "lazy sequencer" explicitly sequences (rollup_id, tx_bytes) and relies on rollup conductors to fetch, verify, and execute those entries later).

Ordering without retrievability is insufficient: sequenced data must be published to a DA layer so rollup nodes can reconstruct transactions; Astria, for example, publishes ordered data to Celestia and relies on its namespace and sampling primitives for availability.

No - shared ordering makes atomic cross‑rollup coordination much more feasible but does not, by itself, guarantee atomic execution; additional protocols for bundling, validity checks, cancellation semantics, or dispute handling are required and the details are often left unspecified in current materials.

The key security challenges are economic and operational: a widely used sequencer becomes a high‑value target requiring substantial economic stake, clear participation/governance models, and Sybil‑resistance, and it also concentrates outage and recovery risk across many rollups.

A shared sequencer reduces the number of independent latency and mempool domains - helping coordination and simplifying some MEV/market assumptions - but research cautions that atomic execution doesn’t always increase arbitrage profits and can change incentives in non‑trivial ways rather than uniformly increasing extractable value.

Dependence shifts: a shared sequencer can remove single‑operator risk per rollup but creates shared dependencies - if the shared ordering network or its DA publisher stalls or fails, many rollups may face correlated outages or reduced liveness, as seen in independent sequencer outage analyses.

Not necessarily; user flows can be dual: sophisticated users may submit directly to the sequencing layer, while ordinary users can route through an operator‑run Composer/sidecar that bundles and underwrites sequencing costs - but the Composer itself provides no hard ordering guarantees and relies on operator funding policies.

When evaluating a shared sequencer, ask who performs ordering, where ordered data is published (which DA layer and namespace model), the sequencer’s participation/governance and incentive model, and what outage/recovery and atomicity guarantees are actually provided.
