What is a Validity Proof?

Learn what a validity proof is, how it secures rollups, why it enables fast finality, and the trade-offs around proving cost, data availability, and proof systems.

Introduction

Validity proofs are behind a kind of blockchain scaling that feels almost paradoxical: a base chain can remain the final judge of correctness without doing most of the computation itself. That matters because blockchains are expensive precisely when every full node must replay every transaction. If you want more throughput without giving up the base chain’s security guarantees, you need a way to separate doing the work from checking that the work was done correctly.

A validity proof is that separation mechanism. Instead of asking Ethereum, or another settlement layer, to execute an entire batch of Layer-2 transactions, a system can execute them elsewhere, produce a cryptographic proof that the resulting state transition is correct, and submit that proof onchain. The chain then verifies the proof and accepts the new state if the proof checks out. In a sentence: the chain stops re-running the computation and starts verifying evidence about the computation.

That shift is why validity proofs sit at the center of many rollup designs, often called ZK-rollups or validity rollups. It is also why they are easy to misunderstand. People often hear “zero-knowledge” and think privacy, or hear “proof” and imagine a simple signature over a batch. But the important property here is primarily neither privacy nor mere attestation. It is that the proof ties a claimed state update to a specific execution of the rollup’s rules, in a form the base chain can verify cheaply.

Why do rollups use validity proofs instead of re-executing transactions?

| Model | Acceptance style | Finality delay | Main risk | Best for |
|---|---|---|---|---|
| Validity proofs | Proof required upfront | No dispute window | Prover centralization and DA risk | Fast finality and strong correctness |
| Fraud proofs (optimistic) | Accepted unless challenged | Multi-day challenge window | Delayed fraud detection | Lower proving cost, slower exits |

The scaling problem begins with an invariant that blockchains rely on: all honest validators or full nodes should agree on the same state because they can all verify the same transition rules. In a basic monolithic chain, this means every node re-executes every transaction. That is robust, but expensive. Throughput stays limited because the whole network pays the computational cost of each user action.

Layer-2 systems try to change that cost structure. They move transaction execution off the base chain, batch many transactions together, and submit only a compact summary back to the base layer. But the moment execution moves elsewhere, a hard question appears: why should the base layer trust the reported result?

There are two broad answers. The optimistic answer is: accept the result by default, and give observers time to challenge fraud. The validity-proof answer is stricter: accept the result only if it arrives with a cryptographic proof that it is correct. That is the core contrast with fraud proofs. Fraud-proof systems are reactive; validity-proof systems are proactive. In a fraud-proof design, invalid state may be proposed and then later rejected if challenged. In a validity-proof design, the state transition is not accepted at all unless correctness is proven up front.
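The two acceptance rules can be contrasted in a toy model. This is a minimal sketch, not any rollup's contract logic: `Proposal`, `optimistic_is_final`, `validity_is_final`, and the 7-day `CHALLENGE_WINDOW_S` are hypothetical names and an illustrative parameter, and no cryptography is modeled.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative challenge window of 7 days in seconds (an assumption, not a
# specific rollup's parameter).
CHALLENGE_WINDOW_S = 7 * 24 * 3600


@dataclass
class Proposal:
    new_root: str
    submitted_at: int                       # L1 timestamp of the submission
    proof_verified: Optional[bool] = None   # validity model: verifier result, if any
    fraud_proven: bool = False              # optimistic model: a challenge succeeded


def optimistic_is_final(p: Proposal, now: int) -> bool:
    """Reactive: accepted by default, final once the window passes unchallenged."""
    if p.fraud_proven:
        return False
    return now >= p.submitted_at + CHALLENGE_WINDOW_S


def validity_is_final(p: Proposal, now: int) -> bool:
    """Proactive: final as soon as a proof has been verified; `now` plays no role."""
    return p.proof_verified is True
```

The asymmetry shows up in the signatures: the optimistic rule depends on elapsed time, while the validity rule depends only on whether a proof was accepted.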

This is why validity-proof-based rollups can offer much faster finality at the settlement layer. Once the proof is verified on L1, the new rollup state is accepted without waiting through a challenge window. Ethereum’s rollup documentation makes this explicit: exits from a ZK-rollup can be executed once the validity proof is verified, unlike optimistic rollups, which impose a delay to leave time for disputes.

How do validity proofs work in simple terms?

The simplest mental model is to imagine a teacher grading a class. Recomputing every student’s work line by line is expensive. A validity proof is like receiving a compact, checkable certificate that says: this batch of answers really does follow from the rules. The teacher still checks the certificate, but does not redo the whole exam.

That analogy explains the efficiency gain, but it also has a limit. In ordinary life, a “certificate” usually depends on trusting whoever issued it. A validity proof is different because the verifier does not need to trust the prover personally. The guarantee comes from the proof system’s soundness: if the proof verifies, then, except with some very small failure probability determined by the system’s assumptions and parameters, the claimed computation was carried out correctly.
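Stated a little more formally, using the standard textbook shape of the definition rather than the parameters of any particular system, soundness bounds the probability that any efficient cheating prover $P^*$ gets the verifier $V$ to accept a false statement $x$ (here, a claimed old root, batch commitment, and new root) by a soundness error $\varepsilon$:

```latex
\forall x \notin \mathcal{L},\ \forall \text{ efficient } P^*:\quad
\Pr\left[\, V(x, \pi) = 1 \;:\; \pi \leftarrow P^*(x) \,\right] \le \varepsilon
```

Here $\mathcal{L}$ is the set of true statements (valid transitions). The "very small failure probability" in the text is $\varepsilon$, and the "assumptions" are whatever the proof system needs for the bound to hold against efficient provers.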

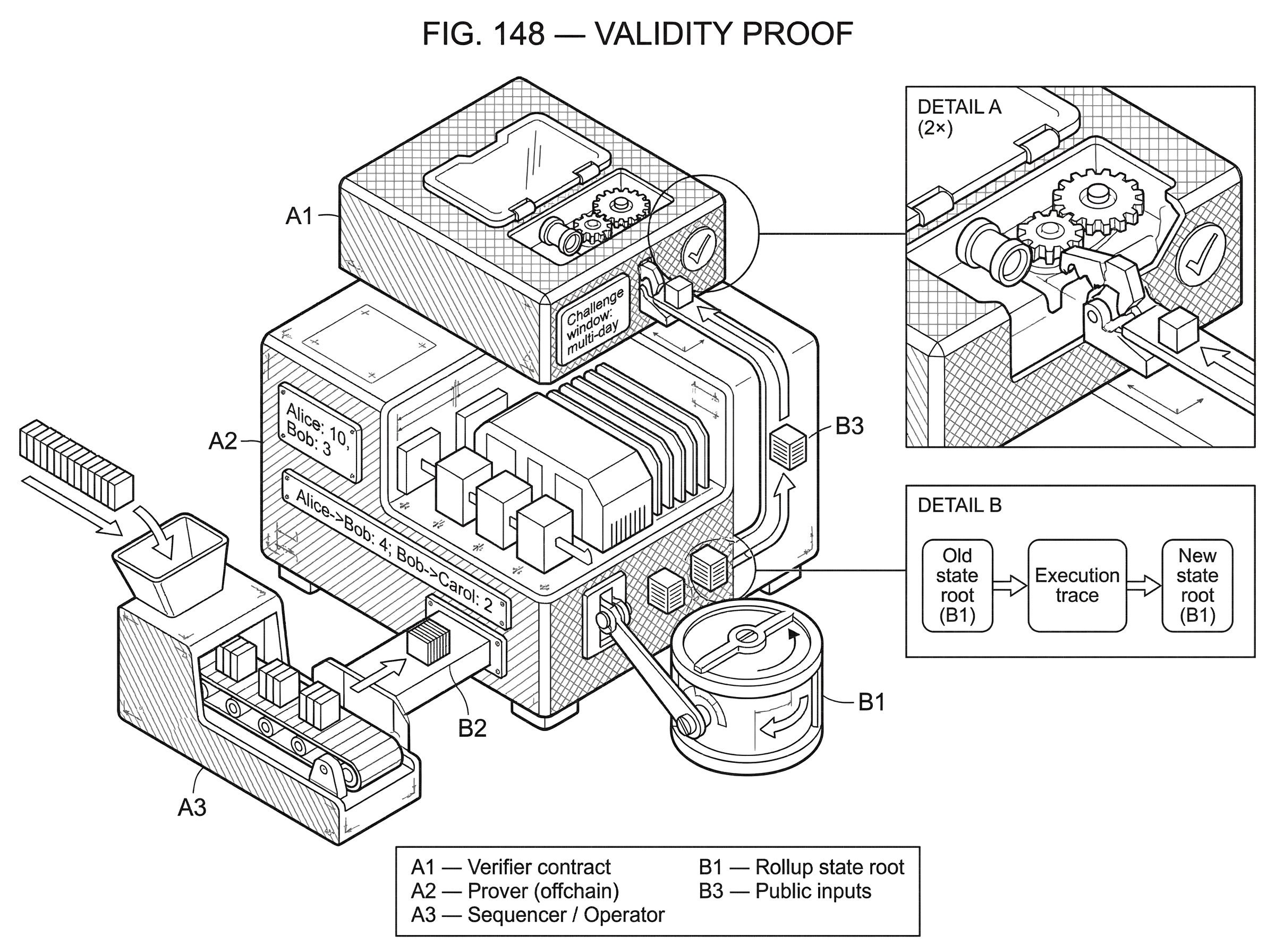

Mechanically, a validity-rollup flow usually looks like this. Users send transactions to the rollup. An operator or sequencer orders them and executes them offchain according to the rollup’s state machine. That execution starts from some previous state root and ends at a new state root. A prover then constructs a proof that this specific transition (from old root to new root, under the batch of included transactions and the rollup’s rules) is valid. The proof, together with certain public inputs, is sent to a verifier contract on the base chain. If the verifier contract accepts it, the contract updates the rollup’s canonical state root.

The important point is what the base chain is and is not checking. It is not replaying each transaction. It is checking that a proof exists for the statement: “Given this prior state commitment and this batch description, the rollup rules produce this new state commitment.” The contract can also check public inputs such as the previous root, the new root, and a commitment to the batch data. Ethereum’s documentation describes this directly: the verifier contract checks the proof and the public inputs, and only then updates the canonical rollup state.
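A toy model of the verifier contract's acceptance logic follows. It is a sketch under stated assumptions: `PublicInputs`, `RollupContract`, and `mock_verify` are hypothetical names, and `mock_verify` is a placeholder for a real SNARK or STARK verifier, not a cryptographic check.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PublicInputs:
    old_root: bytes          # state commitment the batch starts from
    new_root: bytes          # state commitment the batch claims to produce
    batch_commitment: bytes  # commitment to the batch data


# Stand-in for a real proof-system verifier: (public inputs, proof) -> accept?
Verifier = Callable[[PublicInputs, bytes], bool]


class RollupContract:
    """Hypothetical L1 verifier contract holding one canonical state root."""

    def __init__(self, genesis_root: bytes, verify: Verifier) -> None:
        self.canonical_root = genesis_root
        self.verify = verify

    def submit_batch(self, inputs: PublicInputs, proof: bytes) -> bool:
        # Public-input check: the batch must extend the currently accepted root.
        if inputs.old_root != self.canonical_root:
            return False
        # Proof check: no transaction is replayed here, only the proof is checked.
        if not self.verify(inputs, proof):
            return False
        self.canonical_root = inputs.new_root
        return True


def mock_verify(inputs: PublicInputs, proof: bytes) -> bool:
    """Toy verifier for demonstration only: accepts the literal bytes b"valid"."""
    return proof == b"valid"
```

A rejected submission leaves `canonical_root` untouched, which is the "leave the canonical rollup state unchanged" behavior described above.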

Example: how a validity proof prevents an invalid transfer

Suppose a rollup starts from a state in which Alice has 10 tokens and Bob has 3. During one batch, Alice sends Bob 4, Bob sends Carol 2, and a decentralized exchange trade updates several other balances. Offchain, the rollup operator processes the batch and computes a new state root that commits to all resulting balances.

Without a validity proof, the operator could simply tell L1: “trust me, this is the new state root.” That is not good enough. The operator might have accidentally run buggy software, or might maliciously try to debit Alice 8 instead of 4, or credit itself tokens it did not earn. L1 does not store enough of the rollup’s internal state to notice by simple inspection, and re-executing everything on L1 would defeat the point of the rollup.

With a validity proof, the operator does something stronger. It provides a proof that, starting from the previously accepted state root, there exists a valid execution trace of the rollup program over the batch that leads exactly to the proposed new state root. That trace includes checks such as signature verification, nonce handling, balance conservation, and application-specific rules. The L1 verifier does not inspect the whole trace directly. Instead, it verifies a succinct proof that those checks were all satisfied.

If the operator tries to slip in an invalid transfer, the prover should be unable to produce a valid proof for that false statement. And if the operator submits a batch without a valid proof, the verifier contract will reject it and leave the canonical rollup state unchanged. This is the central security property: an invalid state transition should not be finalizable merely because nobody noticed in time.
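The Alice, Bob, and Carol example can be made concrete with a toy state machine. This is a sketch, not a rollup implementation: `state_root` hashes canonical JSON as a stand-in for a Merkle-style tree, `apply_batch` is a hypothetical rule set that omits signatures, nonces, and the DEX trade, and the cheating state is invented to mirror the "debit Alice 8 instead of 4" scenario.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Toy state commitment: SHA-256 of canonical JSON (real rollups use Merkle-style trees)."""
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


def apply_batch(balances: dict[str, int], batch: list[tuple[str, str, int]]) -> dict[str, int]:
    """Toy rollup rules: positive amounts, no overdrafts. Signatures and nonces are omitted."""
    state = dict(balances)
    for sender, recipient, amount in batch:
        if amount <= 0 or state.get(sender, 0) < amount:
            raise ValueError(f"invalid transfer {sender}->{recipient} of {amount}")
        state[sender] -= amount
        state[recipient] = state.get(recipient, 0) + amount
    return state


pre = {"alice": 10, "bob": 3}
batch = [("alice", "bob", 4), ("bob", "carol", 2)]
honest = apply_batch(pre, batch)            # alice 6, bob 5, carol 2
cheat = {"alice": 2, "bob": 5, "carol": 2}  # operator debits Alice 8 instead of 4
```

The honest and cheating states commit to different roots. A proof for the cheating root would have to show that some valid execution of the rules from `pre` over `batch` reaches it; none does, so a sound proof system should not let the operator produce one.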

What does a validity proof actually attest: the program, the inputs, or the output?

At a high level, the statement being proven has three parts.

First, there is a claim about the program or state machine. The rollup has rules: how transactions are parsed, how signatures are checked, how balances change, how smart contracts execute, how fees are accounted for, and so on. Those rules must be encoded into something the proving system understands, often a circuit or a lower-level algebraic representation of computation.

Second, there is a claim about the inputs. The prover is not proving that some transition is valid in the abstract. It is proving that a specific batch, with specific public commitments and often some private witness data, was processed correctly from a specific pre-state.

Third, there is a claim about the output. The new state root, or app hash, is not arbitrary. It must be exactly the result of running the encoded rules on those inputs.
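The three parts can be written as a single check over public inputs and a private witness, which is roughly what a circuit encodes. This is a minimal sketch under stated assumptions: `commit`, `rules`, and `relation_holds` are hypothetical names, the commitment is a plain hash rather than a Merkle root, and the rules are toy transfers. A validity proof convinces the verifier that a witness satisfying this check exists, without the verifier running the check or receiving the full witness.

```python
import hashlib
import json


def commit(obj) -> str:
    """Toy commitment: SHA-256 of canonical JSON (a stand-in for Merkle roots)."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def rules(state: dict, batch: list) -> dict:
    """Part 1, the program: toy transfer rules that a circuit would encode."""
    state = dict(state)
    for sender, recipient, amount in batch:
        if amount <= 0 or state.get(sender, 0) < amount:
            raise ValueError("rule violated")
        state[sender] -= amount
        state[recipient] = state.get(recipient, 0) + amount
    return state


def relation_holds(public: dict, witness: dict) -> bool:
    """Does the private witness justify the public statement?"""
    # Part 2, the inputs: the witness must match the committed pre-state and batch.
    if commit(witness["pre_state"]) != public["old_root"]:
        return False
    if commit(witness["batch"]) != public["batch_commitment"]:
        return False
    # Part 3, the output: the claimed new root must be exactly what the rules produce.
    try:
        post = rules(witness["pre_state"], witness["batch"])
    except ValueError:
        return False
    return commit(post) == public["new_root"]
```

Changing any one of the three parts (a different pre-state, a different batch, or a different claimed output) makes the relation fail.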

This is why validity proofs are more than signatures, multisig approvals, or committee attestations. Those mechanisms tell you who approved something. A validity proof tells you, under the assumptions of the proof system, that the computation itself satisfies the specified constraints.

Why is proof verification cheaper than re-executing all the rollup transactions?

The most surprising feature of validity proofs is asymmetry. Producing the proof can be computationally heavy, but verifying it can be much cheaper than redoing the original computation. This is the economic engine of validity rollups.

StarkWare’s STARK overview describes this explicitly: computation is moved to an offchain prover, while onchain verification requires only compact resources. Ethereum’s rollup documentation makes the same point in practical terms: a rollup verifier contract can verify a proof instead of re-executing every transaction. ZKsync’s documentation similarly notes that verifying a ZK proof is significantly cheaper than replaying each transaction onchain.

This asymmetry does not mean proving is free or easy. Quite the opposite. Proof generation can require substantial CPU, memory, engineering effort, and sometimes specialized hardware. Some deployments have relied on highly optimized proving infrastructure, shared provers, or specialized languages designed to make programs easier to prove. These operational realities matter because they shape decentralization: if only a small number of actors can afford to generate proofs reliably, the system may inherit centralization pressure even if the base-layer verifier remains permissionless.

So the key trade is not “expensive versus cheap” in the abstract. It is this: pay the heavy cost once per batch in the proving layer, then amortize that cost across many transactions through a single L1 verification. The larger and denser the batch, the more that amortization can help.
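The amortization argument is simple arithmetic. In this sketch, `l1_gas_per_tx` is a hypothetical helper, and the figures are illustrative placeholders, not measurements of any rollup: a fixed verification cost is split across the batch, while per-transaction data cost is not amortized.

```python
def l1_gas_per_tx(fixed_verify_gas: int, per_tx_data_gas: int, batch_size: int) -> float:
    """Amortized L1 gas per transaction: one verification is shared by the batch."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return fixed_verify_gas / batch_size + per_tx_data_gas


# Hypothetical figures for illustration only.
FIXED_VERIFY_GAS = 500_000
PER_TX_DATA_GAS = 300

for n in (100, 1_000, 10_000):
    print(n, l1_gas_per_tx(FIXED_VERIFY_GAS, PER_TX_DATA_GAS, n))
```

With these numbers the per-transaction cost falls from 5300 to 800 to 350 gas as the batch grows, approaching the data-cost floor. That is why denser batches help, and why data publication costs remain important even when verification is cheap.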

SNARKs vs STARKs: trade-offs to consider for validity rollups

| System | Trusted setup | Proof size | Onchain cost | Security properties | Best for |
|---|---|---|---|---|---|
| SNARKs | Often requires trusted setup | Small proofs | Low verification cost | Generally not post‑quantum | Minimal onchain fees |
| STARKs | No trusted setup (transparent) | Larger proofs | Higher calldata cost | Plausibly post‑quantum (hash-based) | Transparent, scalable batches |

A validity proof is a role, not a specific cryptographic construction. SNARKs and STARKs are two families of proof systems that can play that role.

SNARKs are attractive because proofs are typically small and verification is efficient, which keeps onchain verification overhead low. But many SNARK constructions require a trusted setup: a ceremony that generates public parameters, often called a common reference string. If the secret randomness from that ceremony is not destroyed, whoever holds it may be able to forge proofs. This does not mean every SNARK deployment is unsafe; it means the trust assumptions include the integrity of the setup process, unless the scheme used avoids that requirement.

STARKs take a different path. They are usually described as transparent, meaning they do not require a trusted setup ceremony. StarkWare also emphasizes another often-cited advantage: plausible post-quantum security, because STARKs rely on collision-resistant hash functions rather than the elliptic-curve assumptions used by many current SNARK systems. The trade-off is that STARK proofs are generally larger, which can raise calldata or verification costs depending on the design.

This is where a common misunderstanding appears. People sometimes ask which system is “better” as if there were a universal answer. There usually is not. The right question is: which costs and assumptions are you trying to optimize? Small proofs help with L1 costs. Transparent setup reduces one category of trust assumption. Different systems also interact differently with recursion, proof aggregation, available tooling, and the kind of virtual machine the rollup wants to support.

Does a validity proof guarantee data availability and recoverability?

| Mode | Data location | Availability guarantee | Privacy | Trust assumptions | Best for |
|---|---|---|---|---|---|
| ZK‑rollup (onchain DA) | Posted onchain calldata | Permissionless reconstructability | Low privacy by default | No DA trust required | Max recoverability, minimal trust |
| Validium (offchain DA) | Stored offchain by operators | Weaker independent recovery | Higher privacy potential | Trust in operator or DAC | Lower L1 cost, more privacy |
| Volition / Hybrid | Per-transaction choice | Depends on user choice | Configurable per-tx privacy | Mixed trust assumptions | Flexible balance of cost and safety |

A validity proof can tell you that a state transition is correct. It does not, by itself, guarantee that users can reconstruct the state or generate future transactions if the operator disappears. That is the separate problem of data availability.

This distinction is crucial. A rollup can have perfectly valid state transitions and still be operationally dangerous if the underlying transaction or state-diff data is withheld. If observers cannot access the data needed to reconstruct the rollup state, they may be unable to continue the chain independently. ZKsync’s documentation states this plainly: if the rollup data is not available to observers, state cannot be restored and the system may become dependent on a trusted validator.

In classic rollup mode on Ethereum, transaction or state-update data is posted onchain (historically as calldata, and since the EIP-4844 upgrade often as blob data), so anyone can reconstruct state independently. Ethereum’s rollup docs highlight this as a core property: publishing data onchain allows individuals and businesses to reproduce the rollup state themselves. Some systems also provide anti-censorship escape hatches by letting users submit transactions directly to the rollup contract on L1 if the operator refuses to include them.

Other architectures intentionally move data offchain to reduce cost or improve confidentiality. Validium is the usual example. In that design, the validity proof still enforces correct state transitions, but users accept weaker data-availability guarantees because transaction data is not fully published onchain. That can be a rational trade in some contexts, but it changes the trust model. You get correctness of posted transitions, not the same level of permissionless recoverability.
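The gap between correctness and recoverability can be shown with a toy replay. This is a sketch under stated assumptions: `commit` and `reconstruct` are hypothetical names, each published batch is modeled as a state diff (account to new balance), and `None` models a batch whose data was withheld, as can happen when data lives offchain.

```python
import hashlib
import json
from typing import Optional


def commit(balances: dict) -> str:
    """Toy state root: SHA-256 of canonical JSON."""
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


def reconstruct(genesis: dict, published_diffs: list[Optional[dict]], proven_root: str) -> dict:
    """Rebuild rollup state from published state diffs (None = data withheld)."""
    state = dict(genesis)
    for i, diff in enumerate(published_diffs):
        if diff is None:
            # The proof says the root is correct, but not what the state is.
            raise RuntimeError(f"batch {i} data unavailable; state cannot be rebuilt")
        state.update(diff)  # each diff maps account -> new balance
    if commit(state) != proven_root:
        raise RuntimeError("replayed state does not match the proven root")
    return state
```

The proven root tells an observer what the correct state commits to; only the published data lets them rebuild the state behind it.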

How do validity proofs speed up withdrawals and finality?

The practical user-facing consequence of validity proofs is simple: once L1 has verified the proof, the corresponding state transition is accepted as correct. There is no need to wait through a long dispute window in case someone might discover fraud later.

That is why withdrawals from validity-proof rollups are typically much faster than withdrawals from optimistic rollups. Ethereum’s documentation states that exits can be executed once the proof is verified. StarkWare’s comparative material makes the same point from another angle: validity proofs allow secure withdrawals on the timescale of proof generation and posting, rather than the multi-day waiting period common in optimistic systems.

There is an important nuance here. “Fast finality” does not always mean the user experience is literally instantaneous. Real systems often have batching intervals, proof-generation latency, operational scheduling, and sometimes extra safety delays before settlement actions are finalized on L1. ZKsync, for example, describes immediate soft confirmations at the L2 level while also discussing a deliberate delay before final L1 settlement in one design. So the principle is firm (no fraud window is required) but the exact latency still depends on system design choices.
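The latency components listed above can be combined into a rough worst-case estimate. This is a sketch: `worst_case_l1_finality_s` is a hypothetical helper, and the example durations are placeholders, not figures from any specific rollup.

```python
def worst_case_l1_finality_s(batch_interval_s: float, proving_s: float,
                             posting_s: float, settlement_delay_s: float = 0.0) -> float:
    """Worst-case seconds until a transaction is covered by a verified proof on L1.

    Worst case: the transaction lands just after a batch closes, so it waits a full
    interval, then for proving, posting/verification, and any deliberate delay.
    """
    return batch_interval_s + proving_s + posting_s + settlement_delay_s


# Placeholder durations for illustration only.
no_delay = worst_case_l1_finality_s(600, 1800, 300)
with_delay = worst_case_l1_finality_s(600, 1800, 300, settlement_delay_s=3 * 3600)
```

With these placeholders the estimate is 2,700 seconds without a deliberate delay and 13,500 seconds with a three-hour one. Both are far shorter than a seven-day challenge window (604,800 seconds), but neither is instantaneous.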

How can recursive proofs aggregate multiple batches or blocks?

Once you accept that one proof can represent one batch, the next idea is almost inevitable: can one proof represent many proofs? In many systems, yes. This is the role of recursive proving and proof aggregation.

A recursive proof is a proof that includes verification of other proofs inside its own computation. That sounds circular at first, but it is really just composition. If a proving system can express “I verified these earlier proofs correctly,” then many lower-level proofs can be compressed into a higher-level proof. Ethereum’s rollup documentation notes that recursive techniques allow several blocks to be finalized with a single validity proof.

This matters because L1 verification often has a substantial fixed cost. If ten batches each required separate onchain verification, you would pay that cost ten times. If they can be aggregated into one proof-of-proofs, the cost can be amortized across all included transactions. ZKsync’s architecture materials describe this kind of aggregation explicitly in network terms: multiple proofs can be combined into a single proof settled to Ethereum.
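The composition idea can be sketched at the level of statements. In this toy model, `Claim` and `aggregate` are hypothetical names: each batch proof attests "old_root transitions to new_root", and an aggregating proof checks that consecutive claims chain together, then outputs one claim covering the whole range. Verifying the inner proofs, which a real recursive circuit would also do, is assumed rather than implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """A proven statement: state old_root transitions to new_root."""
    old_root: str
    new_root: str


def aggregate(claims: list[Claim]) -> Claim:
    """Fold a chain of batch claims into one claim, as a recursive proof would.

    Each batch must start where the previous one ended; in a real system this
    check, plus verification of every inner proof, runs inside the outer circuit.
    """
    if not claims:
        raise ValueError("nothing to aggregate")
    for prev, nxt in zip(claims, claims[1:]):
        if prev.new_root != nxt.old_root:
            raise ValueError(f"gap between {prev.new_root} and {nxt.old_root}")
    return Claim(claims[0].old_root, claims[-1].new_root)
```

L1 then verifies one proof for the combined claim instead of one per batch, so the fixed verification cost is paid once.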

The consequence is that validity proofs do not just save re-execution cost at the transaction level. They also create a path for hierarchical compression of verification itself.

What are the remaining risks for validity-proof rollups?

Validity proofs provide a strong correctness guarantee, but they do not make a system invulnerable.

The first failure mode is in the proof system’s assumptions or implementation. If a trusted setup is compromised in a setup-dependent SNARK system, forged proofs may become possible. If the proving or verification software has bugs, the implemented system may fail to reflect the intended security model. These are not theoretical footnotes; they are design-defining assumptions.

The second failure mode is liveness rather than correctness. A validity rollup may refuse to finalize invalid state, but it can still halt or degrade if sequencers, provers, or supporting infrastructure fail. Starknet’s incident reporting makes this distinction concrete: the proving layer acted as a safeguard that prevented inconsistencies from compromising chain integrity, yet the network still suffered degraded service and required reorgs. In other words, validity protects correctness, not guaranteed uptime.

The third failure mode is centralization pressure. Proof generation can be resource-intensive. Verification on L1 can also be expensive in gas terms. Ethereum documentation notes that proof verification on Mainnet is costly, and that specialized hardware for proving may encourage concentration among a few operators. Some ecosystems are responding with faster provers, shared proving infrastructure, or explicit decentralization roadmaps, but this remains an active engineering and governance challenge.

The fourth failure mode is data availability, already discussed. A valid transition is not enough if the underlying data needed to continue the system is unavailable.

How do validity proofs apply across chains beyond Ethereum?

Validity proofs are often discussed in Ethereum terms because rollups are a prominent Ethereum scaling path. But the idea is broader: it is a general way to let one system trust the result of computation performed elsewhere without re-executing it.

Cosmos IBC guidance, for example, treats ZK rollups as systems whose reported app hash can be trusted as soon as the proof and the data-availability-layer finality are established. The same conceptual split appears in Sovereign SDK materials: a chain’s business logic can be compiled into a proof-friendly form, and specialized provers can post proofs so clients can verify state claims without running a full node. In those settings, the surrounding architecture may differ (perhaps a DA layer rather than Ethereum, perhaps no traditional settlement layer in the same sense) but the core role of the validity proof stays the same.

That broader view helps isolate what is fundamental from what is conventional. Fundamental: a succinct proof attests that a committed computation followed agreed rules. Conventional: which base chain verifies it, how data is published, which proving system is used, whether there is a single operator or many, and how the economics are organized.

Conclusion

A validity proof is a cryptographic way to say, “this state transition is correct, and you can check that without redoing all the work.” That is the idea worth remembering.

Everything else follows from it. Because the base layer verifies a proof instead of re-executing a batch, rollups can scale. Because the proof is required before acceptance, invalid state should not become final. Because there is no need for a challenge window, settlement can be much faster than in optimistic designs. And because proving is expensive, choices about proof systems, data availability, recursion, and prover decentralization shape the real-world trade-offs.

Validity proofs do not remove every assumption. They do not solve data availability on their own, and they do not guarantee liveness. But they change the central bottleneck of blockchains in a profound way: from universal recomputation to succinct verification. That is why they matter.

How does this part of the crypto stack affect real-world usage?

Validity proofs shape withdrawal speed, recoverability, and the trust assumptions that matter when you fund or trade on a rollup-backed market. Before you move funds or open a position, translate those technical facts into concrete checks you can perform. Use Cube Exchange to hold and trade assets after you have checked the rollup’s proof system, data-availability model, and proof-posting cadence.

- Read the rollup’s docs and confirm its data-availability method (onchain calldata, DA layer, or Validium). Note whether transaction data is published on L1; if not, plan for weaker recoverability.

- Identify the proof family (SNARK or STARK) and whether the project used a trusted setup. If it relies on a trusted setup, find the ceremony details and who participated.

- Check proof-posting cadence and batch intervals: find the typical proof generation time and how often proofs reach L1. Use that to estimate withdrawal or finality latency for the asset you plan to use.

- Fund your Cube account (fiat on-ramp or a supported crypto transfer), open the market or deposit flow for the asset, choose an order type (limit for price control or market for speed), and review the network/withdrawal notes on Cube before you place the trade.

Frequently Asked Questions

Validity proofs are proactive: the base chain accepts a state transition only if a cryptographic proof of correctness is submitted up front. Fraud-proof (optimistic) designs are reactive: they accept results by default and rely on a challenge window during which observers can submit fraud proofs to undo invalid state.

No - a validity proof only attests that a reported state transition was computed correctly; it does not by itself ensure observers can access the underlying transaction or state data. Data availability must be solved separately (for example by publishing calldata onchain or using a DA layer); without that, users may be unable to reconstruct state if the operator disappears.

Because many proof systems are designed to be succinct, verifying a validity proof onchain can be far cheaper than re-executing every transaction, while generating the proof offchain can be much more computationally intensive. This asymmetry is the economic engine of validity rollups: pay a heavy proving cost once per batch and amortize it across many transactions via a single cheap verification.

A validity proof asserts three linked things: (1) the rollup program or rules were encoded into the proving statement, (2) a specific batch of inputs (public commitments and any private witnesses) was processed, and (3) the claimed output (the new state root/app hash) is exactly the result of running those rules on those inputs. The verifier checks the proof and public inputs rather than replaying the full execution trace.

SNARKs typically give very small proofs and efficient verification but many constructions require a trusted setup (a common reference string), which adds a trust assumption; STARKs are usually transparent (no trusted setup) and argued to have stronger post‑quantum assumptions, but their proofs are larger and can raise calldata or verification costs. The choice depends on which costs and trust assumptions a project prioritizes.

Because a validity proof removes the need for a fraud challenge window, withdrawals and L1 finality can be much faster once the proof is verified; however, practical latency still depends on batching intervals, proof‑generation time, and design decisions, so fast finality is not necessarily instantaneous. For example, some designs still impose deliberate delays (ZKsync documents a 3‑hour L1 settlement delay) despite the lack of a fraud window.

Yes - many systems use recursion or proof aggregation so a single higher‑level proof can attest to the validity of many lower‑level proofs or batches; that lets projects amortize fixed onchain verification costs across multiple blocks. Recursive proving/aggregation is a common technique mentioned in rollup literature for compressing verification.

No; validity proofs reduce but do not eliminate centralization pressure. Producing proofs can be resource‑intensive and onchain verification is gas‑costly, which can incentivize a small set of specialized provers or operators unless explicit decentralization measures are adopted. Several projects and roadmaps explicitly treat prover decentralization as an ongoing challenge.

Validity proofs strengthen correctness guarantees but do not guarantee perfect safety or liveness: risks remain from compromised trusted setups or proof‑system/implementation bugs, prover/sequencer outages that halt progress, prover centralization or censorship, and insufficient data availability. These distinct failure modes are repeatedly highlighted as residual risks even for validity‑proof systems.
