What Is Data Availability?

Learn what data availability means in blockchain scaling, why rollups depend on it, and how blobs, erasure coding, and sampling make it work.

Introduction

Data availability is the question of whether the data behind a block or batch has actually been published and can be downloaded by the network. That sounds narrower than “is the chain valid?”, but in scaling systems it is often the condition that makes validity checkable at all. If the data is missing, other nodes cannot reconstruct state, light clients cannot gain confidence in what was posted, and fraud proofs or many forms of independent verification become impossible.

This is the puzzle: a blockchain can appear to have reached consensus on a block header or a commitment, while the underlying data needed to understand that block is not broadly retrievable. A chain may agree that “something” was included without enough participants being able to inspect what that something was. Once you see that gap, much of modern blockchain scaling becomes easier to understand. Many designs are really attempts to make two things happen at once: publish much more data, and still let many participants check that the data was genuinely available.

The reason this matters most in scaling is simple. Throughput is not only about faster execution; it is also about how much transaction data can be carried, propagated, and checked. Rollups need users and watchers to reconstruct batches. Parachain systems need validators to keep candidate data available during dispute windows. Modular chains try to separate execution from the job of ordering and publishing data. In each case, availability is the bridge between a compact commitment and meaningful verification.

Why a cryptographic commitment doesn’t guarantee you can fetch the block data

A commitment is a cryptographic promise about data. It lets you later prove facts about that data or check consistency against it. But a commitment does not, by itself, guarantee that everyone can fetch the data being committed to. That distinction is the heart of data availability.

Suppose a block producer publishes only a block header or a commitment to a large batch of transactions. If full nodes have the entire data, they may be able to validate it. But if the producer withholds part of the batch from the wider network, many others cannot reconstruct the block. That breaks more than convenience. In an optimistic system, for example, someone may need the full posted data to generate a fraud proof. If the bad block’s missing pieces were never available, then the chain may have accepted something that cannot be challenged effectively.

This is why fraud proofs alone are not enough. A fraud proof can show that a published block is invalid, but it cannot help if the relevant data was never available to begin with. Withholding data is also awkward to attribute cleanly. If someone says “the producer did not publish chunk X,” the producer can sometimes release it later or claim a network issue. The deeper problem is that verification requires not just correctness of data, but access to data.

So the system needs an additional guarantee: before a block is treated as safely accepted, there must be good reason to believe its data was actually dispersed to the network widely enough that independent parties can obtain it.

How higher throughput and larger blocks worsen data availability concerns

In a small, simple chain, the straightforward answer is: every full node downloads every block in full. That works, but it scales poorly. As blocks get larger, bandwidth and storage requirements rise. Then one of two things tends to happen.

Either the system keeps blocks small enough that ordinary full nodes can still download everything, which limits throughput, or it increases block data and accepts that not everyone can inspect all of it directly. The latter path creates the need for mechanisms that give strong assurance without forcing every participant to fetch every byte.

This is especially important for light clients, which intentionally download only a small amount of chain data. A light client cannot inspect every block payload. Historically, such clients often relied on stronger trust assumptions, such as assuming the chain preferred by consensus contains only valid blocks. Research on fraud proofs and data availability sampling tries to weaken that assumption. The goal is not to make a phone into a full node; it is to let a phone get meaningful assurance that a block’s data was actually published.

This also explains the move toward modular architectures. In a monolithic chain, one system handles consensus, execution, settlement, and data publication together. In modular designs, the base layer may focus mainly on ordering data and making it available, while execution happens elsewhere. LazyLedger, the design that later informed Celestia’s approach, makes this explicit: consensus can be optimized around ordering and guaranteeing availability of transaction data, while transaction validity is pushed to the clients that care about those transactions. That only works if availability is a first-class property rather than an afterthought.

What data availability actually guarantees (short-term retrievability, not archival storage)

It helps to be precise here. In this context, data availability usually does not mean permanent archival storage. It means that the data for a block, batch, or candidate has been published and is retrievable during the period when others may need it for verification, dispute, reconstruction, or settlement.

That time horizon matters. Rollup batch data might need to remain accessible throughout a challenge window. Polkadot’s parachain validators are responsible for ensuring the data needed to validate candidates remains available for the duration of a challenge period, and they use erasure-coded chunks of that data to do it. Ethereum’s blob design in EIP-4844 also reflects this limited-horizon idea: blob data is made available through the consensus layer for a minimum retention window rather than being part of ordinary long-term EVM-accessible state.
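As a rough illustration of how short such a window can be, the arithmetic below converts a retention period expressed in epochs into days. The 4096-epoch value is the minimum sidecar retention guidance associated with EIP-4844; the 32 slots per epoch and 12-second slot time are Ethereum mainnet parameters assumed here for the calculation, and the function name is hypothetical.

```python
# Assumed Ethereum mainnet timing parameters (not stated in the text above).
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12

# Minimum blob sidecar retention guidance, in epochs.
MIN_RETENTION_EPOCHS = 4096


def retention_days(epochs, slots_per_epoch=SLOTS_PER_EPOCH,
                   seconds_per_slot=SECONDS_PER_SLOT):
    """Convert a retention window in epochs to days."""
    return epochs * slots_per_epoch * seconds_per_slot / 86_400


days = retention_days(MIN_RETENTION_EPOCHS)
print(f"{MIN_RETENTION_EPOCHS} epochs is about {days:.1f} days")
```

Under these assumptions the window comes out to a little over 18 days, which is long enough for verification and disputes but nowhere near archival storage.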

So availability sits between two other ideas that are easy to confuse with it. It is stronger than merely having a cryptographic commitment, because the bytes must actually be fetchable. But it is weaker than a promise of indefinite storage. That is why data availability layers are not the same thing as decentralized archival storage networks. The security question is short-term retrievability for verification, not long-term backup.

How erasure coding and sampling make withheld data detectable

| Method | How it works | Bandwidth cost | Verification guarantee | Best for |
|---|---|---|---|---|
| Full download | everyone downloads full blocks | high per-node | deterministic | small chains |
| Committee attest | validators attest data possession | low per-node | trust-based | permissioned systems |
| Sampling + erasure | encode then sample random chunks | low per-client | probabilistic | light clients, rollups |

From first principles, there are only a few ways to gain confidence that data is available. One is brute force: have everyone download everything. Another is social or economic attestation: ask a committee or validator set to attest that they hold the data. A third is probabilistic checking: arrange the data so that small random samples are enough to make withholding very likely to be caught.

The modern technical path centers on the third idea. The system expands the original data using an erasure code. If the original data is split into M chunks, the code transforms it into N chunks, where N is larger than M, with the property that any M of the N chunks are enough to reconstruct the original. This changes the geometry of withholding. Instead of being able to hide a few tiny crucial pieces, an attacker must withhold enough of the extended data that reconstruction becomes impossible.

That is what makes sampling useful. If light clients sample random chunks from the extended data and each requested chunk is available with a valid proof, then widespread availability becomes increasingly likely. The client does not prove with certainty that every chunk is available. Rather, it gets a probabilistic guarantee: if a meaningful fraction of the extended data were missing, random sampling would very likely hit some missing part.

This is the compression point for the whole topic: erasure coding turns small, hard-to-detect withholding into larger, statistically detectable unavailability. Without that transformation, a producer might hide exactly the few pieces they need to prevent reconstruction while still answering most spot checks.
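The "any M of the N chunks" property can be sketched with a toy Reed-Solomon-style code. This is a minimal illustration, not how any production system implements it: chunks are treated as small integers, the prime field and the function names are assumptions made for the example, and real systems use optimized field arithmetic over much larger data.

```python
import random

P = 2**31 - 1  # small prime field, chosen only for illustration


def interpolate_at(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through the given (position, value) points, modulo P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(original, n):
    """Systematic encoding: chunk i is the polynomial's value at x = i,
    so the first len(original) coded chunks equal the original data."""
    base = list(enumerate(original))
    return [interpolate_at(base, x) for x in range(n)]


def reconstruct(known, m):
    """Recover the m original chunks from any m (position, value) pairs."""
    points = known[:m]
    return [interpolate_at(points, x) for x in range(m)]


original = [11, 22, 33, 44]                              # M = 4
extended = encode(original, 8)                           # N = 8
survivors = random.sample(list(enumerate(extended)), 4)  # any 4 of the 8
recovered = reconstruct(survivors, 4)
print(recovered == original)
```

Because any four surviving chunks determine the same degree-3 polynomial, it does not matter which four the attacker fails to hide; only withholding five or more of the eight blocks recovery in this toy setting.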

Example: why simple spot checks fail and how coding changes the economics of withholding

Imagine a producer creates a batch consisting of 100 chunks of original data. If a light client simply samples 20 random original chunks, the producer might try to withhold only 2 chunks and hope the client misses them. The block could look mostly available while still being unreconstructible for anyone who needs all 100 chunks.

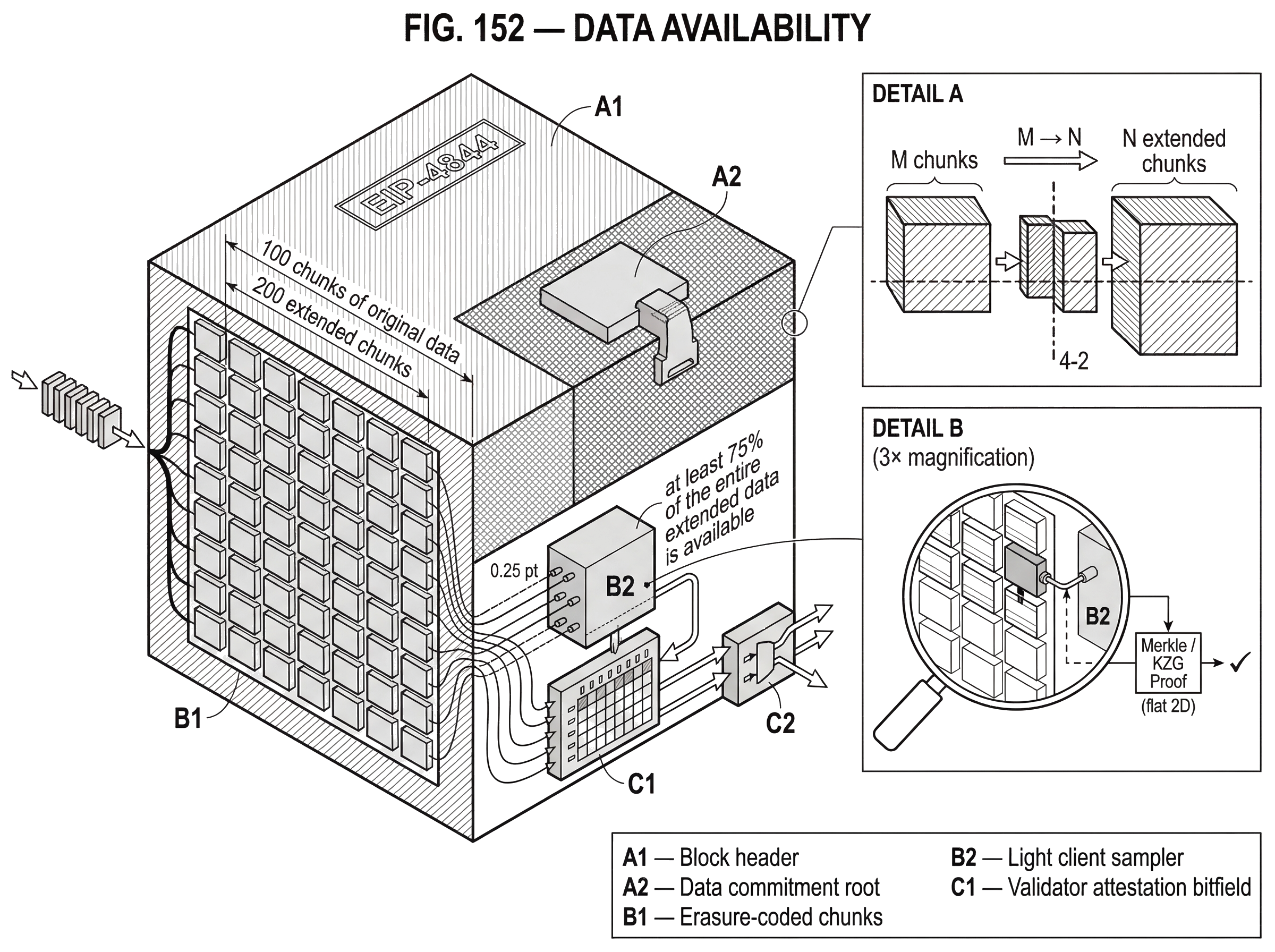

Now change the scheme. Before publication, the 100 original chunks are encoded into 200 extended chunks such that any 100 of the 200 can recover the original data. Suddenly, withholding only 2 chunks does nothing useful; there are still 198 available chunks, far above the 100 needed for recovery. To make the original data unrecoverable, the attacker must now withhold more than 100 of the 200 chunks. That is no longer a tiny hidden defect. It is a large missing region that random sampling is much more likely to notice.

The mechanism is not magic. It does not make unavailable data become available. It changes the economics of cheating by making successful withholding require a larger visible absence. A client that samples random positions and demands proofs for those positions can then reject a block if some requests fail. Because the attacker must hide a large enough fraction to break reconstructibility, even modest sample counts can provide high confidence.

This is why data availability sampling is statistical rather than absolute. A client makes k random requests, where k is chosen to push the failure probability low enough for the application. More samples mean more bandwidth used by the client, but lower probability of accepting a block with too much missing data.
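The numbers in this example can be checked directly. The sketch below assumes a simplified model: the client samples distinct positions uniformly at random, and a sample fails exactly when it lands on a withheld chunk. The function name is hypothetical.

```python
from math import comb


def miss_probability(total, withheld, samples):
    """Chance that `samples` distinct, uniformly random positions
    all avoid the withheld chunks (so the client notices nothing)."""
    if samples > total - withheld:
        return 0.0
    return comb(total - withheld, samples) / comb(total, samples)


# No coding: 100 chunks, 2 withheld, 20 samples.
uncoded = miss_probability(100, 2, 20)

# 2x coding: 200 chunks, attacker must withhold at least 101, same 20 samples.
coded = miss_probability(200, 101, 20)

print(f"uncoded miss probability: {uncoded:.3f}")
print(f"coded miss probability:   {coded:.2e}")
```

Under these assumptions, the uncoded spot check misses the two withheld chunks roughly 64% of the time, while the coded check misses a 101-chunk gap with probability below one in a million. The same 20 samples buy very different assurance once withholding has to be large to matter.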

How Merkle proofs and KZG/polynomial commitments authenticate sampled data

| Primitive | Commitment size | Proof size | Trusted setup | Best for |
|---|---|---|---|---|
| Merkle tree | constant root | O(log n) proof | no trusted setup | simple proofs, larger size |
| KZG polynomial | constant commitment | constant evaluation proof | requires trusted setup | succinct proofs, setup risk |

Sampling only works if the client can verify that the chunk it received is the right chunk from the committed block. Otherwise a malicious peer could answer with invented data. So the block must commit not just to “some data exists,” but to the exact encoded data that is being sampled.

A common way to do this is with a Merkle root. The block header commits to a Merkle root of the encoded chunks, and each sampled chunk comes with a Merkle proof. The client checks that the chunk sits at the claimed position in the committed dataset. This is conceptually simple and appears in many designs.
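A minimal sketch of that check follows. It assumes SHA-256, a power-of-two number of chunks, and one-byte prefixes to separate leaf hashes from inner-node hashes; these are illustrative choices, not the tree format of any particular protocol, and the function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(chunks):
    """Return all tree levels, leaves first. Assumes len(chunks) is a power of two."""
    level = [h(b"\x00" + c) for c in chunks]  # leaf prefix for domain separation
    levels = [level]
    while len(level) > 1:
        level = [h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels, index):
    """Collect sibling hashes from leaf to root for the chunk at `index`."""
    proof = []
    for level in levels[:-1]:
        proof.append(level[index ^ 1])  # sibling differs in the lowest bit
        index //= 2
    return proof


def verify(root, chunk, index, proof):
    """Check that `chunk` sits at position `index` under `root`."""
    node = h(b"\x00" + chunk)
    for sibling in proof:
        if index % 2 == 0:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
        index //= 2
    return node == root


chunks = [f"chunk-{i}".encode() for i in range(8)]
levels = build_levels(chunks)
root = levels[-1][0]
proof = prove(levels, 5)
print(verify(root, chunks[5], 5, proof))   # genuine chunk at its position
print(verify(root, b"forged", 5, proof))   # invented data is rejected
```

The proof here has three hashes for eight chunks, which is the logarithmic growth the comparison table refers to. Binding the position into the check matters for sampling: a peer cannot answer a request for one coordinate with a valid chunk from another.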

More advanced designs use polynomial commitments, especially KZG commitments. The attraction is succinctness: a large blob can be represented by a compact commitment, and proofs about evaluations of the committed polynomial can also be compact. The underlying cryptographic idea, formalized in polynomial commitment schemes such as the Kate–Zaverucha–Goldberg construction, is that you can commit to a polynomial once and later prove that its value at a chosen point is y without revealing the whole polynomial. These commitments are constant-size, and evaluation proofs are also constant-size.

That matters because many data-availability schemes represent data as evaluations of a polynomial over a domain. A verifier can then ask for values at random points and check them against the commitment. Ethereum’s roadmap uses KZG commitments heavily. EIP-4844, for example, introduces blob-carrying transactions whose large data payloads are not accessible to the EVM directly, but whose commitments are. It also adds a point-evaluation precompile so proofs about blob contents can be checked against those commitments.

Here the distinction between intuition and implementation is worth keeping clear. The intuition is: commit succinctly to a large dataset, then answer spot checks with short proofs. The implementation details depend on the design. Merkle-based DAS and KZG-based schemes both aim at the same problem, but make different tradeoffs in proof size, trusted setup assumptions, and engineering complexity.

Why 2D (row/column) coding improves recoverability and how sampling works there

Single-dimensional coding already helps, but many practical systems use two-dimensional constructions because they improve recovery and proof efficiency. The rough idea is to place the data in a grid, treat rows and columns as low-degree polynomial evaluations, and extend both dimensions with parity data. Celestia’s design does this with 2-dimensional Reed–Solomon encoding: data is arranged in a k × k matrix and extended to a 2k × 2k matrix. Light nodes sample random coordinates in the extended square and verify the returned shares.

Why does two-dimensionality help? Because recovery can proceed along rows and columns. If enough entries in a row are known, the rest of the row can be reconstructed. That can then unlock more columns, which unlock more rows, and so on. The 2D layout also reduces the size of proofs for incorrect encoding and makes the coding structure more workable at large sizes.

But this is also where subtlety enters. Recoverability is not the same as instant recoverability. Research on Ethereum’s planned 2D DAS notes a useful threshold: if at least 75% of the entire extended data is available, the rest is provably recoverable. But some missing-data patterns can require multiple rounds of row-by-column propagation to reconstruct. So “recoverable” does not always mean “everyone can finish recovery immediately.” Fortunately, under the 75% condition, there are stronger bounds showing that global recovery can still converge quickly.
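The row-and-column propagation can be simulated on an availability grid without doing any actual decoding. The sketch below assumes a 2k × 2k extended square in which any row or column with at least k known cells can be completed; the function name and the example patterns are illustrative assumptions, not taken from a specific client.

```python
def recover(available, k):
    """Propagate row/column reconstruction on a 2k x 2k availability grid.

    A row or column with at least k known cells is marked fully known.
    Returns (passes that made progress, whether the whole grid was recovered).
    """
    n = 2 * k
    grid = [row[:] for row in available]
    passes = 0
    while True:
        progress = False
        for r in range(n):
            if k <= sum(grid[r]) < n:
                grid[r] = [True] * n
                progress = True
        for c in range(n):
            known = sum(grid[i][c] for i in range(n))
            if k <= known < n:
                for i in range(n):
                    grid[i][c] = True
                progress = True
        if not progress:
            break
        passes += 1
    return passes, all(all(row) for row in grid)


k = 4
# Withhold a (k+1) x (k+1) corner: every affected row and column is one cell short.
blocked = [[not (r <= k and c <= k) for c in range(2 * k)] for r in range(2 * k)]
# Same pattern, but one cell of the corner is released.
almost = [row[:] for row in blocked]
almost[k][k] = True

print(recover(blocked, k))
print(recover(almost, k))
```

In the first pattern no row or column reaches the threshold, so nothing can be rebuilt. Releasing a single cell lets one row complete, which pushes the affected columns over the threshold and restores the whole square. That sensitivity to the exact pattern is why the invariant has to be stated precisely.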

This is a good example of how DA systems depend on precise invariants, not just broad intuitions. The important invariant is not “most data exists somewhere.” It is something closer to: enough of the coded structure is available that the original can be reconstructed, and the sampling process is calibrated to test for that condition with high probability.

How Ethereum (EIP-4844), Celestia, and Polkadot implement data availability

| Protocol | Layer role | DA mechanism | Commitments/proofs | Who attests | Best use-case |
|---|---|---|---|---|---|
| Ethereum | consensus layer with blobs | blob sidecars, blob gas | KZG commitments, point-eval precompile | beacon + full nodes | rollup blob availability |
| Celestia | modular data-availability layer | 2D Reed–Solomon + DAS | Namespaced Merkle Trees | light nodes sampling | shared DA for rollups |
| Polkadot | parachain availability coordinator | per-validator erasure chunks | Merkle proofs to erasure root | parachain validators' bitfields | parachain candidate security |

Ethereum’s near-term answer to rollup data availability is EIP-4844, often called proto-danksharding. It introduces blob-carrying transactions that provide cheaper space for rollup data than calldata. The crucial point is that blobs are for availability, not for general EVM access. The execution layer sees commitments; the consensus layer is responsible for persisting the actual blob data. Blobs are referenced in beacon blocks but propagated separately as sidecars, a design chosen partly for forward compatibility so more advanced sampling-based schemes can replace full blob download requirements later.

Mechanically, EIP-4844 also creates a separate fee market for blob usage through independent blob gas accounting. That matters because DA bandwidth is a distinct scarce resource. Pricing it separately prevents blob demand from being conflated with ordinary execution gas demand. The design is conservative in its initial throughput targets precisely because pushing more data through the network creates real bandwidth and mempool stress.
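The blob fee mechanism can be sketched from the pseudocode in the EIP-4844 specification. The constants below are the values from the original EIP text as best recalled here; later network upgrades have changed some blob parameters, so treat the numbers as illustrative rather than current. The helper name `base_fee_per_blob_gas` is a shortened, hypothetical name.

```python
# Parameters from the original EIP-4844 text (assumed; later upgrades differ).
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB


def fake_exponential(factor, numerator, denominator):
    """Integer approximation of factor * e^(numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def calc_excess_blob_gas(parent_excess, parent_blob_gas_used):
    """Excess accumulates when blocks use more blob gas than the target."""
    return max(parent_excess + parent_blob_gas_used - TARGET_BLOB_GAS_PER_BLOCK, 0)


def base_fee_per_blob_gas(excess_blob_gas):
    """Blob base fee grows exponentially in the accumulated excess."""
    return fake_exponential(MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas,
                            BLOB_BASE_FEE_UPDATE_FRACTION)


# Simulate a run of blocks that each carry 6 blobs, above the 3-blob target.
excess = 0
for _ in range(100):
    excess = calc_excess_blob_gas(excess, 6 * GAS_PER_BLOB)
print(excess, base_fee_per_blob_gas(excess))
```

The blob fee depends only on blob usage relative to its own target, not on execution gas. Sustained demand above the target raises the price exponentially, and usage below the target lets the excess drain back toward zero.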

Celestia starts from a more DA-centric architecture. Its chain is designed as a modular data availability layer where light nodes can verify availability efficiently without downloading whole blocks. It combines 2D Reed–Solomon coding with data availability sampling, and uses Namespaced Merkle Trees so applications can retrieve and verify only their own data. The namespace mechanism is important in a modular world: if many rollups share the same DA layer, each one wants efficient proofs about its own slice of the published data, not the entire block.

Polkadot approaches the same underlying problem in its parachain system. Validators must ensure the data needed to validate a parachain candidate remains available during the challenge period. The candidate’s available data includes its Proof-of-Validity and persisted validation data; this payload is erasure-coded into per-validator chunks, each authenticated by a Merkle proof against an erasure root. Availability bitfields then let validators attest which candidate data they hold. The details differ from Ethereum’s blobs or Celestia’s DAS, but the underlying mechanism is familiar: split the critical validation data, distribute it, and make possession attestable and reconstructible.

These examples are useful because they show data availability is not an Ethereum-specific trick. It is a recurring scaling problem that appears whenever a system wants to separate compact consensus from widespread verifiability.

What DA enables in practice: rollup security, validiums, and modular execution

The clearest practical use is rollup security. A rollup can post compressed state updates or proofs, but users and independent verifiers still need the underlying transaction or state-diff data to reconstruct the rollup’s state and verify what happened. EIP-4844 is explicitly aimed at making this cheaper by giving rollups blob space that guarantees availability more cheaply than calldata.

The same logic explains the difference between rollups and validiums. In a rollup, data is made available on a base layer or DA layer so others can reconstruct and verify. In a validium, execution data is kept off-chain, and only validity proofs or commitments may be posted. That can scale better, but if the external DA provider withholds data, users may be unable to prove balances or exit safely. The design saves bandwidth by moving the trust boundary.

Data availability also matters for systems that decouple execution from settlement or consensus. A modular exchange or settlement system may rely on one layer to make transaction or state-transition data available while another layer enforces settlement conditions. In threshold-signature systems, there is an analogous but distinct idea: no single party should hold the full signing key. For example, Cube Exchange uses a 2-of-3 threshold signature scheme for decentralized settlement, where the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize settlement. That is not itself a DA mechanism, but it illustrates the same architectural pattern common in scaling systems: separate critical powers so that safety does not depend on one actor or one hidden object existing in one place.

What can go wrong with data availability: withholding, selective answering, and DoS risks

The first failure mode is simple withholding. If enough data is missing, reconstruction fails and independent verification breaks. The second is more subtle: selective answering. An attacker may answer some sample requests while withholding others, hoping the network does not collectively query enough distinct positions. This is why DA sampling depends on honest-minority assumptions about enough independent light clients making sufficiently uncorrelated requests, and enough honest full nodes existing to generate and relay fraud proofs when needed.

The third failure mode is invalid coding. A producer might publish commitments to data that is malformed or incorrectly extended under the erasure code. That is why systems like Celestia rely on fraud proofs for incorrectly generated extended data, and why polynomial-commitment-based designs often need proofs that the low-degree extension was formed correctly.

There are also practical engineering constraints. EIP-4844 explicitly notes bandwidth and mempool denial-of-service risks from blob transactions, which is why nodes do not automatically rebroadcast full blob payloads to peers. Instead, they announce them and require explicit requests. This may sound like a networking detail, but it reflects a core truth: data availability is not only a cryptographic problem. It is also a data propagation problem under real bandwidth limits and adversarial load.

Finally, availability guarantees are usually about a time window, not forever. If applications need long-term access, they need separate archival strategies. Confusing short-term DA with permanent storage leads to bad system design.

What assumptions DA schemes rely on (honest samplers, client diversity, cryptography)

Every DA design rests on assumptions, and the differences matter. Full-download designs assume enough nodes can keep up with bandwidth. Committee-based designs assume the committee is honest enough and economically accountable enough. Sampling-based designs assume enough independent clients sample randomly, enough honest nodes can help with reconstruction or fraud proofs, and the coding/proof system is sound.

Cryptographic commitments also come with their own assumptions. KZG commitments are compact and powerful, but depend on pairing-based cryptography and setup assumptions. Merkle proofs avoid trusted setup but usually give larger proofs. Neither choice is “the real answer” in the abstract; each is a way to instantiate the same underlying need for authenticated access to encoded data.

And there is a limit to what DA can guarantee. If the network is badly partitioned, if too few honest participants sample, or if the design relies on a centralized disperser or operator set, the practical guarantee may be weaker than the clean theoretical story. Some modern DA services, for instance, use off-chain coordinators or limited operator sets to achieve high throughput, which can introduce centralization and governance risk even if the coding and commitments are sound.

Conclusion

Data availability is the property that makes blockchain verification possible in practice: the data behind a block or batch must not only be committed to, but actually retrievable. Scaling designs care about it because once execution moves off-chain or block data gets large, consensus on a header is no longer enough.

The memorable version is this: a blockchain cannot be meaningfully verified from data that was never made available. Erasure coding, sampling, commitments, blobs, sidecars, validator chunks, and modular DA layers are all different ways of enforcing that simple idea under tight bandwidth constraints.

How does data availability affect real-world usage?

Data availability determines whether others can reconstruct or challenge on‑chain state, which directly affects whether you can exit, prove balances, or trust rollup batches. Before funding or trading assets that depend on a network’s DA properties, check the protocol details and then use Cube’s funding and trading flows to act on your decision.

- Read the network’s protocol docs and whitepaper to identify the DA design (e.g., EIP-4844 blobs, Celestia-style DAS, parachain erasure coding) and note retention windows or sidecar rules.

- Verify implementation details in explorers, client repos, or RPC docs: look for blob/sidecar endpoints, sampling APIs, commitment types (Merkle vs KZG), and any published retention parameters (for example, blob retention epochs).

- Fund your Cube account using fiat on-ramp or a crypto deposit to the network asset you plan to trade or hold.

- Open the relevant market or withdrawal flow on Cube. For trading, use a limit order for price control or a market order for immediate execution; for transfers, confirm the destination network and any blob/sidecar support noted earlier.

Frequently Asked Questions

A cryptographic commitment proves a specific dataset was fixed, but it does not guarantee that the bytes are actually retrievable by others; if the publisher withholds the underlying data, parties cannot reconstruct or challenge the block even though the header or commitment looks valid.

Erasure coding expands M original chunks into N > M coded chunks so any M of N suffice to reconstruct; light clients then randomly sample a small number of coded chunks and verify them against the commitment, giving a high-probability assurance that large-scale withholding would be detected without downloading everything.

Data availability in blockchains normally means short-to-medium‑term retrievability during the period when verification or dispute can occur, not indefinite archival storage; applications that need permanent access must use separate archival strategies.

Two-dimensional (2D) schemes place data in a k×k grid and extend rows and columns so reconstruction can propagate row-to-column and vice versa, which improves recoverability and proof efficiency; however, recovery is not always immediate, because some missing-data patterns require multiple propagation rounds, and research notes a useful threshold (at least 75% of the extended data available) for guaranteed recovery.

Common failure modes are simple withholding of enough data to prevent reconstruction, selective answering (serving some samples but hiding others to evade detection), malformed or incorrect erasure-encoding of the extended data, and practical issues like bandwidth exhaustion or network partitioning that undermine sampling assumptions.

Merkle-based schemes avoid a trusted setup and use logarithmic-size proofs but produce larger proofs per checked chunk, while KZG/polynomial commitments give constant-size commitments and evaluation proofs at the cost of pairing-based cryptographic assumptions and (typically) a trusted-setup or structured public parameters.

EIP-4844 (proto-danksharding) introduces blob-carrying transactions whose large payloads are committed in the beacon/consensus layer and treated as DA payloads (not EVM-state), uses separate blob gas accounting to price DA bandwidth, and handles blob propagation via announcements/explicit requests to mitigate mempool and bandwidth DoS risks; the spec also notes a minimum sidecar retention guidance (e.g., 4096 epochs ≈ 18 days).

Sampling-based DA designs rely on probabilistic guarantees and therefore need assumptions like a sufficient number of independent honest samplers and honest nodes that rebroadcast data; if too few clients sample or the network is strongly adversarial/partitioned, the statistical guarantees can break down.

No. Data availability sampling and related checks give probabilistic (statistical) assurances rather than absolute proofs that every byte was published; designers pick sample counts and coding parameters to bound the failure probability for their threat model.

For rollups, posting batch data on an available base or DA layer lets users and watchers reconstruct state and challenge invalid batches; by contrast, validiums keep execution data off‑chain and so scale bandwidth more but depend on an external DA provider. If that provider withholds data, users may be unable to exit or prove balances.
