What is Gas?

Learn what gas is in blockchain transactions, why it exists, how Ethereum gas fees work, and how gas limits, base fees, and tips affect execution.

Introduction

Gas is the mechanism blockchains use to meter computation, bound execution, and turn scarce block space into a price. The idea sounds simple at first: pay for the work your transaction causes the network to do. But that simplicity hides the real design problem. A blockchain is a shared computer where every validating node must replay the same transaction. If computation were free, a user could submit transactions that run forever, touch huge amounts of state, or consume disproportionate network resources while paying almost nothing.

Gas exists to stop that. It gives execution a unit, so the protocol can say: this transaction may consume at most this much work, and if it does consume that work, the sender pays for it. That does two jobs at once. It protects the network from unbounded computation, and it creates a fee market for inclusion when many users want block space at the same time.

On Ethereum, gas is especially important because transactions can do far more than transfer value. They can call smart contracts, read and write storage, emit logs, create contracts, and trigger nested calls. These actions impose very different costs on the network, so the protocol meters them with different gas charges. Other systems solve the same basic problem with different names and mechanics. Solana, for example, uses compute units and a compute budget. The labels differ, but the underlying problem is the same: shared execution must be limited and priced.

The core fact to keep in mind is this: gas is not the fee itself. Gas is the quantity of computational work the protocol measures. The fee is what you pay in the chain’s native asset for each unit of that work. That distinction is the key to understanding why gas exists, how wallets estimate it, and why users sometimes pay even when a transaction fails.

Why do blockchains need gas to limit computation?

Imagine a normal web application. If a server has to do more work, the operator buys more machines, rate-limits users, or rejects abusive requests. A blockchain cannot rely on that kind of centralized discretion. The network needs a rule that every validator can apply mechanically. That rule must answer two questions before execution gets dangerous: how much work is this transaction allowed to consume, and who pays if it consumes that work?

Gas answers both. Every operation in the execution environment has a gas cost attached to it. A transaction arrives with a gasLimit, which is the maximum amount of gas the sender is willing to let execution consume. Execution then proceeds step by step, deducting gas as the virtual machine performs work. If execution finishes before the budget is exhausted, the remaining gas is not spent. If execution runs out of gas partway through, state changes are reverted, but the gas already consumed is still charged.

That last point often surprises newcomers, but it follows directly from the mechanism. The network has already done real work by the time failure occurs. Validators have executed instructions, accessed state, expanded memory, and checked conditions. If failure erased the fee obligation, users could force the network to do expensive computation for free simply by arranging for the last step to fail. So the invariant is: pay for computation performed, not only for successful outcomes.
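The metering loop described above can be sketched with a toy interpreter. This is a minimal illustration, not the EVM: the operation names, the `TOY_COSTS` prices, and the `run_metered` helper are all hypothetical. It models the out-of-gas case as exhausting the whole budget, which matches the gas counter reaching zero.

```python
from dataclasses import dataclass

# Hypothetical per-operation prices for a toy machine (NOT real EVM costs).
TOY_COSTS = {"add": 3, "load": 100, "store": 5000}


@dataclass
class Receipt:
    success: bool
    gas_used: int
    state: dict


def run_metered(ops, gas_limit, state):
    """Run (name, key, value) ops against a copy of state, charging gas per step."""
    working = dict(state)  # changes land on a copy so they can be discarded
    gas_left = gas_limit
    for name, key, value in ops:
        cost = TOY_COSTS[name]
        if cost > gas_left:
            # Out of gas: drop all changes, but the budget is still spent.
            return Receipt(False, gas_limit, dict(state))
        gas_left -= cost
        if name == "store":
            working[key] = value
    return Receipt(True, gas_limit - gas_left, working)


ops = [("load", "a", None), ("store", "a", 7), ("add", None, None)]
print(run_metered(ops, 10_000, {"a": 1}))  # succeeds, unused gas is not spent
print(run_metered(ops, 1_000, {"a": 1}))   # fails, state unchanged, gas still charged
```

The second call fails at the `store` step, so the original state is returned untouched while the receipt still reports gas as used.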

This also explains why gas is a better concept than a flat transaction fee. A plain ETH transfer has a known minimum execution footprint and therefore a standard gas requirement of 21000. A complex contract interaction may require much more. If both paid the same fixed fee, either simple transactions would overpay or complex transactions would underpay. Gas lets the protocol price work more proportionally to the burden imposed on the network.

How is gas different from the gas price and transaction fee?

| Metric | What it measures | Who determines it | Varies with | Effect on fee |
|---|---|---|---|---|
| Gas used | Amount of compute executed | Protocol + runtime | Transaction operations executed | Multiplies price per gas |
| Price per gas | Wei paid per gas unit | Protocol rules + market | Network demand and base fee | Sets per-unit cost |

To make gas intuitive, separate the system into two variables. First, there is how much work execution consumes. That is measured in gas units. Second, there is how much each unit costs in the native asset. On Ethereum that price is expressed in wei per gas, often discussed in gwei for readability.

So the basic fee relation is:

transaction fee = gas used × effective price per gas

This is the simplest formula in the topic, and most of the surrounding machinery exists to determine the two terms on the right side. The gas used part depends on what the transaction actually does when executed. The effective price per gas depends on the chain’s fee rules and the user’s bid for inclusion.

A worked example makes the split clearer. Suppose Alice sends a plain ETH transfer. That transfer uses 21000 gas. If the effective price per gas is 200 gwei, then the execution fee is 21000 × 200 gwei, which is 0.0042 ETH. If instead Alice interacts with a contract function that ends up using 120000 gas at the same effective price, the fee is 120000 × 200 gwei, which is 0.024 ETH. The fee is larger because the measured work is larger, not because the transaction is more important.
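The arithmetic in this example can be checked with integer math. The sketch below assumes prices quoted in gwei and converts to wei; the `fee_wei` helper is an illustrative name, not part of any library.

```python
WEI_PER_GWEI = 10**9
WEI_PER_ETH = 10**18


def fee_wei(gas_used, price_gwei):
    """transaction fee = gas used x effective price per gas, returned in wei."""
    return gas_used * price_gwei * WEI_PER_GWEI


transfer_fee = fee_wei(21_000, 200)    # plain ETH transfer
contract_fee = fee_wei(120_000, 200)   # heavier contract call, same price

# Integer gwei totals avoid floating-point noise when checking the numbers.
print(transfer_fee // WEI_PER_GWEI)   # 4200000 gwei, i.e. 0.0042 ETH
print(contract_fee // WEI_PER_GWEI)   # 24000000 gwei, i.e. 0.024 ETH
```

Only the gas-used term changes between the two calls, which is the point of the example: the same price per unit applied to more measured work.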

This distinction is also why people sometimes say “gas is high” when they really mean one of two different things. Sometimes they mean their transaction uses a lot of gas because the operation is computationally heavy. Other times they mean the price per gas is high because demand for inclusion is intense. Those are different causes. A simple transfer during congestion may still use only 21000 gas but be expensive because each unit costs more. A quiet network does not make a complex contract call simple; it only lowers the price of each unit of work.

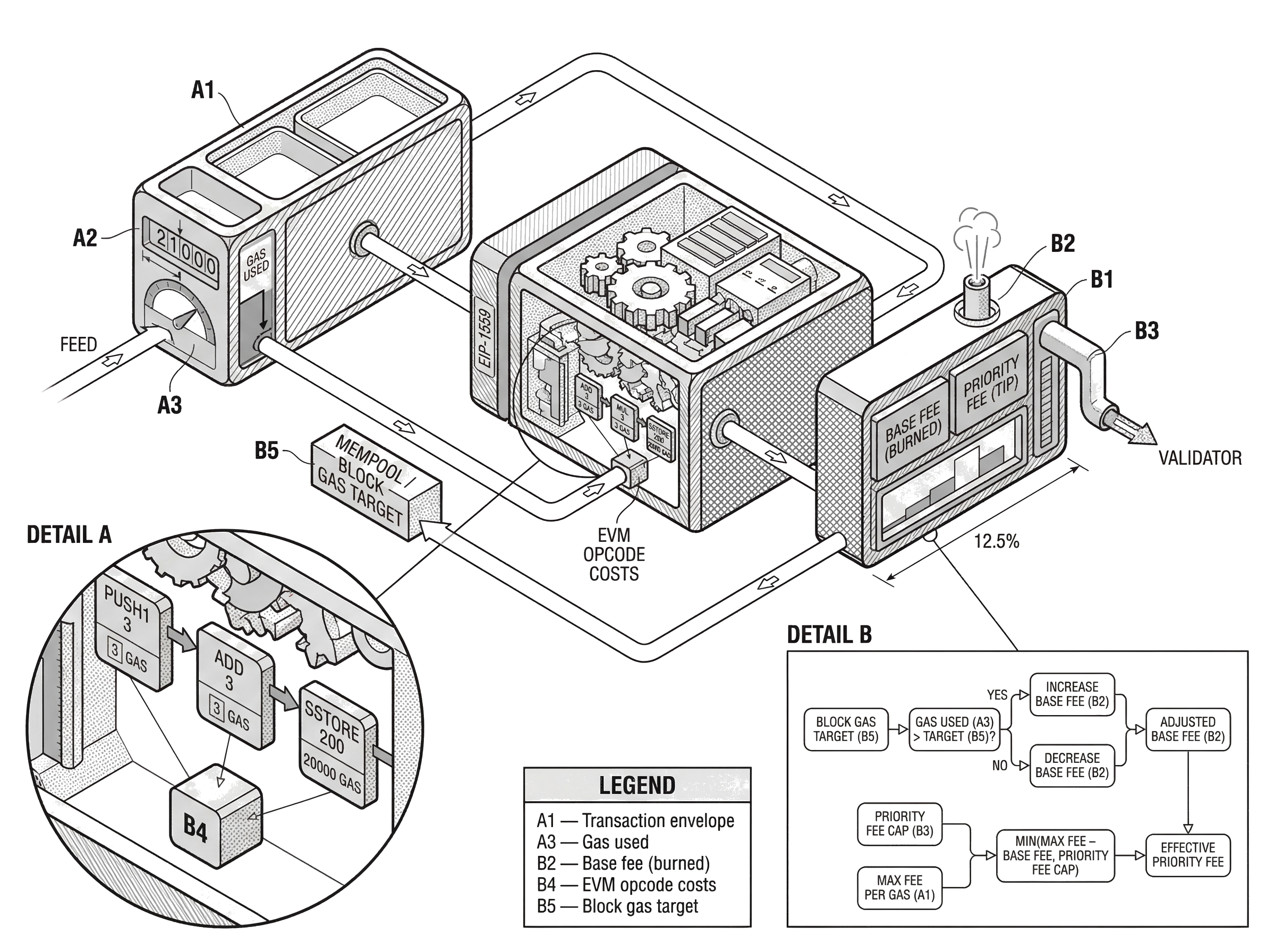

How does Ethereum measure and charge gas at the opcode level?

Ethereum’s execution engine, the EVM, charges gas at the opcode level. That is the low-level mechanism behind the user-facing concept. Each instruction the EVM can execute has a defined gas cost, and some operations have costs that depend on what they do at runtime. Reading or writing storage, copying data, hashing input, emitting logs, and making external calls can all add variable charges.

Here the purpose of gas becomes more concrete. Different operations burden the network in different ways. Arithmetic on stack values is cheap. Accessing persistent state is more expensive. Expanding memory becomes progressively costlier: the charge for newly expanded memory includes a quadratic term, so it grows faster than linearly with total memory size. That is not arbitrary decoration. Memory use and state access create real resource demands, and the protocol prices them so that they are hard to abuse.
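A short sketch makes the memory pricing concrete. It assumes the commonly cited formula of 3 gas per 32-byte word plus the word count squared divided by 512; the function names are illustrative, and the constants should be checked against the protocol version you target.

```python
def memory_cost(words):
    """Total gas attributed to a memory size of `words` 32-byte words (assumed formula)."""
    return 3 * words + (words * words) // 512


def expansion_cost(old_words, new_words):
    """Gas charged when memory grows from old_words to new_words."""
    if new_words <= old_words:
        return 0  # no charge when memory does not grow
    return memory_cost(new_words) - memory_cost(old_words)


print(expansion_cost(0, 1024))     # first 32 KiB
print(expansion_cost(1024, 2048))  # next 32 KiB costs more than the first
```

Under these assumptions, the second 1024 words cost noticeably more than the first 1024, which is the progressive pricing the paragraph describes.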

Storage writes are a good example of why gas rules get complicated. Writing to persistent storage is among the most expensive things a contract can do because it changes long-lived state that all full nodes must preserve. Ethereum has repeatedly revised storage gas accounting through protocol upgrades such as EIP-2200, EIP-2929, and EIP-3529. Those changes were not cosmetic. They adjusted gas charges and refunds to better reflect actual resource costs, reduce denial-of-service risks, and close loopholes such as gas-token strategies that shifted gas costs across time.

The cold-versus-warm access distinction introduced by EIP-2929 shows the underlying logic well. The first access to an account or storage slot in a transaction is more expensive than later accesses to the same item. Why? Because the first touch is the expensive one from the state-access point of view; subsequent touches can benefit from transaction-local tracking and caching assumptions. So Ethereum maintains transaction-scoped access sets and charges a higher cold cost on first access, then a lower warm cost afterward. The names are metaphorical, but the mechanism is precise.
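The transaction-scoped access set can be sketched as a plain set of touched slots. This is a simplified model: the `AccessTracker` class is hypothetical, the 2100 and 100 gas figures are the EIP-2929 storage-read values as I understand them, and the sketch ignores pre-declared access lists and rollback of the set on revert.

```python
COLD_SLOAD_COST = 2100        # first read of a slot in this transaction
WARM_STORAGE_READ_COST = 100  # later reads of the same slot


class AccessTracker:
    """Tracks which (address, slot) pairs one transaction has already touched."""

    def __init__(self):
        self.accessed_slots = set()

    def sload_cost(self, address, slot):
        key = (address, slot)
        if key in self.accessed_slots:
            return WARM_STORAGE_READ_COST
        self.accessed_slots.add(key)  # the first touch warms the slot
        return COLD_SLOAD_COST


tx = AccessTracker()
print(tx.sload_cost("0xabc", 0))  # cold
print(tx.sload_cost("0xabc", 0))  # warm
print(tx.sload_cost("0xabc", 1))  # a different slot is cold again
```

A new transaction would start with a fresh tracker, so warmth does not carry over between transactions.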

A useful warning follows from all this detail: there is no single eternal gas schedule independent of protocol version. The concept of gas is fundamental, but many exact gas costs are conventions chosen by the protocol and revised when experience shows they are underpriced, overpriced, or exploitable.

What is a gas limit and why does it matter for my transaction?

Every Ethereum transaction specifies a gasLimit. This is not the fee you will definitely pay. It is the maximum amount of gas your transaction is allowed to consume. You can think of it as an execution budget ceiling.

That ceiling matters because the network needs a hard bound before running user code. Without one, a validator could not know whether execution might continue indefinitely. By requiring users to buy gas up front and declare a maximum budget, Ethereum ensures that every transaction has a finite worst case from the validator’s perspective.

The mechanics are straightforward. Before the transaction can execute, the sender must have enough balance to cover the maximum possible gas expenditure implied by the gas limit and fee settings. During execution, gas is consumed as instructions run. If the transaction uses less than the limit, the unused portion is returned to the sender. If execution needs more gas than the limit allows, it halts with an out-of-gas exception, state changes are reverted, and the gas consumed up to that point is still lost.
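The up-front check and the later settlement can be sketched as two small functions. This is a simplified model under stated assumptions: all amounts are in wei, gas refunds are ignored, and the names `max_upfront_cost` and `settle` are illustrative.

```python
GWEI = 10**9


def max_upfront_cost(gas_limit, max_fee_per_gas, value=0):
    """Worst-case amount the sender's balance must cover before execution."""
    return gas_limit * max_fee_per_gas + value


def settle(gas_limit, gas_used, effective_gas_price):
    """Return (amount charged, amount handed back for unused gas)."""
    if gas_used > gas_limit:
        raise ValueError("execution can never use more gas than the limit")
    charged = gas_used * effective_gas_price
    returned = (gas_limit - gas_used) * effective_gas_price
    return charged, returned


# Generous limit: only the 90000 gas actually used is paid for.
print(settle(100_000, 90_000, 200 * GWEI))
# Undersized limit: the whole 50000 budget is consumed and nothing comes back.
print(settle(50_000, 50_000, 200 * GWEI))
```

The asymmetry shows up directly: the oversized limit returns the difference, while the undersized one spends its full budget on a transaction that fails.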

Consider a contract call that needs about 90000 gas in normal conditions, but the sender sets gasLimit = 50000. The transaction begins executing. It may validate inputs, load storage, perform several computations, and even make part of its intended state transition. Once the gas counter reaches zero, the EVM halts exceptionally. The partial effects do not stick, but the network still had to do the work that led up to the failure. That is why underestimating gas produces a failed transaction that still costs money.

This is also why wallets estimate gas before sending. They are trying to predict gas used, so they can set a gasLimit high enough for success without being absurdly oversized. On Ethereum, oversizing the gas limit does not by itself mean you pay for all of it; you pay for gas actually used. But an undersized limit can guarantee failure. So the practical problem is asymmetric: too low is dangerous, too high is usually tolerable within reason.

How does EIP‑1559 set the base fee and priority fee for transactions?

| Component | Who sets it | Recipient | Burned? | Behavior |
|---|---|---|---|---|
| Base fee | Protocol (per block) | No one | Yes | Adjusts up to 12.5% per block |
| Priority fee (tip) | User (transaction) | Validator / proposer | No | User incentive for faster inclusion |
| Legacy gasPrice | User (first-price bid) | Validator | No | Single-price first-price auction |

Ethereum used to rely more directly on a single user-specified gasPrice, which behaved like a first-price auction. EIP-1559 changed that by separating the fee into two economically different pieces: a protocol-set base fee and a user-chosen priority fee.

The base fee is the minimum per-gas amount required for inclusion in a block. It is not paid to the validator. It is burned, meaning removed from circulation. The protocol adjusts this base fee from block to block depending on how full the previous block was relative to a target gas usage. If the previous block used more gas than the target, the next block’s base fee rises. If it used less, the base fee falls. The per-block adjustment is bounded: the rule allows the base fee to move up or down by at most 12.5% relative to the previous block.
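The feedback rule can be written out in a few lines. The sketch below follows the update logic given in the EIP-1559 specification as I understand it, using integer wei; the 30 million block gas limit in the example is only an illustrative assumption.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # 1/8 = 12.5% maximum step
ELASTICITY_MULTIPLIER = 2            # target is half of the block gas limit


def next_base_fee(parent_base_fee, parent_gas_used, parent_gas_limit):
    """Base fee (in wei) for the next block, derived from the parent block."""
    target = parent_gas_limit // ELASTICITY_MULTIPLIER
    if parent_gas_used == target:
        return parent_base_fee
    if parent_gas_used > target:
        delta = (parent_base_fee * (parent_gas_used - target)
                 // target // BASE_FEE_MAX_CHANGE_DENOMINATOR)
        return parent_base_fee + max(delta, 1)  # always rises by at least 1 wei
    delta = (parent_base_fee * (target - parent_gas_used)
             // target // BASE_FEE_MAX_CHANGE_DENOMINATOR)
    return parent_base_fee - delta


GWEI = 10**9
LIMIT = 30_000_000  # assumed block gas limit for the example
print(next_base_fee(100 * GWEI, 30_000_000, LIMIT))  # full block: +12.5%
print(next_base_fee(100 * GWEI, 15_000_000, LIMIT))  # at target: unchanged
print(next_base_fee(100 * GWEI, 0, LIMIT))           # empty block: -12.5%
```

A completely full or completely empty parent block produces the maximum 12.5% step; anything in between moves the base fee proportionally less.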

This mechanism matters because it turns fee pricing into a feedback system rather than a pure spot auction for every transaction. Instead of every user guessing an exact market-clearing price from scratch, the protocol carries forward a moving reserve price that responds automatically to demand. That makes fee estimation more reliable and reduces some of the worst overpayment behavior of pure first-price bidding.

The priority fee, often called the tip, is different. This is the part paid to the validator or block proposer as an incentive to include your transaction. It answers a narrower question: given that the protocol’s reserve price has been met, how strongly are you signaling urgency relative to other pending transactions?

Type 2 Ethereum transactions therefore typically specify two fee caps: maxPriorityFeePerGas and maxFeePerGas. The first is the most you are willing to tip per unit of gas. The second is the most you are willing to pay in total per unit of gas, including both base fee and tip.

For these transactions, the effective priority fee is:

priority fee = min(maxPriorityFeePerGas, maxFeePerGas - baseFeePerGas)

And the effective price actually paid per gas is:

effective gas price = baseFeePerGas + priority fee

This gives users protection against sudden base-fee movements while still letting validators observe a clear incentive signal. If the base fee rises so high that baseFeePerGas exceeds maxFeePerGas, the transaction is no longer eligible for inclusion until conditions change or the user replaces it.

A concrete example helps. Suppose the current baseFeePerGas is 190 gwei. You submit a transaction with maxPriorityFeePerGas = 10 gwei and maxFeePerGas = 220 gwei. Since 220 - 190 = 30, your full 10 gwei tip fits under the max fee cap. So your effective gas price is 200 gwei: 190 gwei burned and 10 gwei paid to the validator. If the base fee unexpectedly rises to 215 gwei before inclusion, your tip is clipped to min(10, 220 - 215) = 5 gwei, so the effective gas price becomes 220 gwei. If the base fee rises above 220 gwei, the transaction cannot be included at all under those settings.
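The two formulas and the three cases in this example can be reproduced with a small helper. The function name `effective_fees` is illustrative, and all values are in gwei for readability.

```python
def effective_fees(base_fee, max_priority_fee, max_fee):
    """Return (tip, effective gas price), or None if the transaction is not includable."""
    if max_fee < base_fee:
        return None  # the fee cap cannot even cover the base fee
    tip = min(max_priority_fee, max_fee - base_fee)
    return tip, base_fee + tip


print(effective_fees(190, 10, 220))  # (10, 200): full tip fits under the cap
print(effective_fees(215, 10, 220))  # (5, 220): tip clipped by the cap
print(effective_fees(221, 10, 220))  # None: base fee exceeds maxFeePerGas
```

The cap on total price is what protects the sender: as the base fee climbs, the tip shrinks first, and past the cap the transaction simply waits.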

How do gas fees affect transaction inclusion and mempool priority?

Gas pricing is inseparable from transaction ordering. After a transaction is broadcast, it typically enters the mempool, the pool of pending transactions seen by nodes and validators. Validators are free to choose which transactions to include, subject to protocol validity rules and block gas constraints. In practice, higher priority fees generally improve a transaction’s chance of early inclusion because they offer more value to the block producer.

That said, a higher tip does not mathematically guarantee inclusion. Validators have discretion, private order flow exists, and block building can involve considerations beyond simple public-mempool tip ranking. This is one place where the clean protocol story meets messier market reality. The basic incentive remains true: more priority fee usually means stronger inclusion incentive. But actual ordering can also be shaped by MEV-aware block construction, private relays, and bundle-based workflows.

This is why gas estimation tools speak in probabilities rather than certainties. A service may recommend a fee that has, say, a high chance of next-block inclusion, not a guarantee. It is also why wallets often provide presets like low, market, and aggressive. They are helping users choose among cost-speed tradeoffs in a market whose state can change from block to block.
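To make the basic incentive concrete, here is a deliberately naive selection sketch: rank eligible pending transactions by effective tip and fill the block until the gas budget runs out. It is not how production block builders work. It ignores nonces, MEV, private order flow, and actual gas used, and `PendingTx` and `pick_for_block` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    name: str
    gas_limit: int
    max_priority_fee: int  # gwei
    max_fee: int           # gwei


def effective_tip(tx, base_fee):
    return min(tx.max_priority_fee, tx.max_fee - base_fee)


def pick_for_block(pending, base_fee, block_gas_limit):
    """Greedy toy selection: highest effective tip first, within the gas budget."""
    eligible = [tx for tx in pending if tx.max_fee >= base_fee]
    eligible.sort(key=lambda tx: effective_tip(tx, base_fee), reverse=True)
    chosen, gas_left = [], block_gas_limit
    for tx in eligible:
        if tx.gas_limit <= gas_left:  # reserve the full limit for this transaction
            chosen.append(tx.name)
            gas_left -= tx.gas_limit
    return chosen


pending = [
    PendingTx("a", 21_000, 1, 300),
    PendingTx("b", 200_000, 10, 220),
    PendingTx("c", 100_000, 5, 150),  # fee cap below the base fee: ineligible
    PendingTx("d", 50_000, 3, 192),   # tip clipped to 2 gwei by its fee cap
]
print(pick_for_block(pending, base_fee=190, block_gas_limit=250_000))
```

Even in this toy model, a transaction with a valid tip can miss the block simply because higher-tipping transactions used up the gas budget first.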

Why do I still pay gas when my transaction fails or reverts?

This is worth isolating because it is one of the most misunderstood parts of the concept. A failed Ethereum transaction does not mean “nothing happened” in resource terms. It means the requested state transition did not complete successfully and therefore did not persist. But before the failure, validators still executed instructions and consumed resources.

There are several ways failure can occur. A contract may revert because a condition was not met. An external call may fail. The transaction may run out of gas. The EVM may hit an exceptional condition. In all of these cases, the common principle is that consumed computation has still been consumed. So the gas charge reflects the work done up to failure, not whether the final state change was accepted.

This rule is not punitive; it is defensive. If fees were only charged for successful state transitions, adversaries could spam the network with intentionally failing but expensive transactions. Gas closes that loophole by attaching cost to execution itself.

Why did Ethereum reduce gas refunds (for SELFDESTRUCT and storage clears)?

Ethereum historically included gas refund mechanisms for some actions, especially storage-related ones. The intuition was that some operations relieve burden on the state and so may deserve partial rebate. But refunds turned out to have side effects. In particular, they enabled strategies such as GasToken, where users effectively stored state in one period and cleared it later to claim refunds when gas prices were high.

That behavior revealed an important design lesson: a refund is not just a rebate to one user. It changes block-level resource dynamics and can let users shift gas purchasing power across time in ways the protocol may not want. Ethereum therefore tightened refund rules over time. EIP-3529 removed the SELFDESTRUCT refund, reduced SSTORE refunds, and lowered the maximum refund cap from half the gas used to one fifth.
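The effect of the lower cap is easy to see numerically. The sketch below assumes the refund is limited to gas used divided by a fixed quotient, 2 before EIP-3529 and 5 after; the `capped_refund` helper and the example figures are illustrative.

```python
PRE_EIP_3529_QUOTIENT = 2   # refund capped at half of gas used
POST_EIP_3529_QUOTIENT = 5  # refund capped at one fifth of gas used


def capped_refund(gas_used, refund_counter, max_refund_quotient):
    """Refund actually granted, given the accumulated refund counter."""
    return min(refund_counter, gas_used // max_refund_quotient)


gas_used, refund_counter = 100_000, 60_000
before = capped_refund(gas_used, refund_counter, PRE_EIP_3529_QUOTIENT)
after = capped_refund(gas_used, refund_counter, POST_EIP_3529_QUOTIENT)
print(gas_used - before)  # net gas paid for under the old cap
print(gas_used - after)   # net gas paid for under the new cap
```

With the same accumulated refunds, the transaction pays for far more of its gas under the tighter cap, which removes most of the payoff from bank-now, clear-later strategies.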

The broader point is that gas accounting is not only about local fairness for one transaction. It is also about global network stability. A pricing rule that looks reasonable in isolation can create bad incentives once millions of users and automated strategies interact with it.

How do other blockchains handle execution limits and fees compared with Ethereum?

| Platform | Unit name | Budget expressed as | Charged on | Refunds |
|---|---|---|---|---|
| Ethereum | Gas units | gasLimit (ceiling) | Actual gas consumed | Refunds limited (reduced by EIP-3529) |
| Solana | Compute units | Requested compute-unit limit | Requested compute-unit limit, not actual consumption (priority fee) | No gas refunds |

Although “gas” is most associated with Ethereum, the underlying idea is broader. Any programmable blockchain has to ration execution somehow. What changes across systems is the exact unit being measured and how payment is attached to it.

Solana is a useful comparison because it makes the contrast obvious. Instead of EVM gas, Solana uses compute units and a compute budget. Transactions can include instructions to set a compute unit limit and a compute unit price. The chain also has scheduler-level resource accounting before runtime execution. So the same first-principles problem appears again: execution must be bounded, the network must know what resource budget a transaction asks for, and inclusion priority can be influenced by an explicit payment signal.

But the details differ in meaningful ways. On Solana, the priority fee is determined by the requested compute unit limit, not the actual units consumed. That means over-requesting can make you pay for unused budget. Ethereum works differently: users specify a gas limit ceiling, but unused gas is returned, so the cost is driven by actual gas consumed rather than the mere size of the budget request. Same problem, different tradeoff.
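The billing difference can be put side by side. This is a rough sketch under assumptions not stated in the text above: it takes the Solana compute-unit price to be quoted in micro-lamports per compute unit with the total rounded up to a whole lamport, and it leaves out Solana's separate per-signature base fee. Both function names are illustrative.

```python
MICRO_LAMPORTS_PER_LAMPORT = 1_000_000


def solana_priority_fee_lamports(requested_cu_limit, cu_price_micro_lamports):
    """Priority fee based on the REQUESTED limit (assumed units and rounding)."""
    total_micro = requested_cu_limit * cu_price_micro_lamports
    return -(-total_micro // MICRO_LAMPORTS_PER_LAMPORT)  # ceiling division


def ethereum_tip_wei(gas_used, priority_fee_per_gas_wei):
    """Tip based on gas ACTUALLY used; the gas limit does not enter the formula."""
    return gas_used * priority_fee_per_gas_wei


# Same program, two different requested budgets: the Solana priority fee scales with the request.
print(solana_priority_fee_lamports(200_000, 1_000))
print(solana_priority_fee_lamports(1_400_000, 1_000))
# On Ethereum only consumption matters, whatever ceiling the sender declared.
print(ethereum_tip_wei(90_000, 2 * 10**9))
```

This is why tight compute-unit requests matter on Solana, while a moderately generous gasLimit on Ethereum is usually harmless.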

This comparison helps separate what is fundamental from what is conventional. The fundamental part is that shared computation needs metering, limits, and some pricing mechanism for scarce inclusion. The conventional part is how exactly the chain expresses the budget, which sub-resources it meters, how refunds work, and whether fees are based on requested or actual usage.

How should users and developers account for gas costs in practice?

For ordinary users, gas is mostly a practical question: how much will this transaction cost, and how quickly will it be included? Wallets hide much of the machinery, but the core decisions remain. A user sending ETH, swapping on a DEX, minting an NFT, or bridging assets is paying for network computation. If the action is contract-heavy or the network is congested, the fee rises because either gas usage, gas price, or both are higher.

For developers, gas is also a design constraint. Contract code that touches storage repeatedly, copies large chunks of data, emits many logs, or makes deep call chains will cost more to run. That cost affects user experience directly. So smart contract design is partly about resource economics: minimize expensive state writes when possible, avoid unnecessary memory expansion, and understand which protocol changes may alter the gas profile of previously acceptable code.

The same concept also shapes transaction construction. Developers choose fee fields, estimate gas limits, and decide when to resubmit with higher fees. They also need to remember that view or pure functions are only free when simulated off-chain with eth_call; the same logic costs gas when it is invoked internally during an on-chain transaction.

Conclusion

Gas is best understood as the protocol’s meter for computation. It exists because blockchains need a hard bound on execution and a way to make users pay for the work their transactions impose on the network.

On Ethereum, that meter interacts with a fee market: gas used measures work, the gas limit bounds how much work can be consumed, and EIP-1559 determines the price through a burned base fee plus a validator tip. The details can be intricate, especially at the opcode and storage-accounting level, but the memorable core is simple: gas makes smart-contract execution finite, billable, and prioritizable.

What should I understand about gas before sending or trading crypto?

Understand the gas mechanics that affect cost and speed, then use Cube Exchange to fund and submit the transaction with explicit fee fields. On Cube you can fund your account, set Ethereum Type‑2 fee caps or chain‑specific execution limits, and send the transaction while watching for confirmations.

- Fund your Cube account with fiat or a supported crypto deposit for the chain you’ll use.

- Check the chain’s current base fee (or equivalent) and set Ethereum Type‑2 fields: choose maxPriorityFeePerGas and maxFeePerGas so that maxFeePerGas is at least baseFee + desired tip, with some headroom in case the base fee rises before inclusion.

- Let Cube estimate gas usage or run a local simulation; if your action is contract‑heavy, increase the gasLimit modestly above the estimate.

- Submit the transaction and monitor for inclusion; if it stalls, use Cube’s replace/accelerate flow to raise the priority fee or resubmit with adjusted caps.

Frequently Asked Questions

Why do I pay gas when a transaction fails or reverts?

Because validators have already executed the instructions and consumed resources before the failure, the protocol charges gas for work performed even if the final state change is reverted; this prevents attackers from forcing expensive, intentionally failing computation for free.

How does EIP-1559 set transaction fees?

EIP-1559 splits the per-gas price into a protocol-set base fee that is burned and a user-chosen priority fee (tip) paid to the block proposer; the base fee adjusts up or down by at most 12.5% per block based on recent demand, while the tip signals urgency to validators.

What is the difference between the gas limit and gas used?

The gas limit is the maximum execution budget a sender allows (and must be affordable up front), while gas used is the actual measured work consumed during execution; you pay for gas actually used, and any unused gas from the limit is returned.

Do I pay for unused gas if I set the limit too high?

On Ethereum unused gas from an oversized gasLimit is refunded so you only pay for gas used, but on some chains like Solana priority fees are based on the requested compute-unit limit rather than actual consumption, so over‑requesting there can make you pay for unused budget.

Why did Ethereum reduce gas refunds?

Refunds for operations that reduced state burden were historically allowed, but they enabled strategies (e.g., GasToken) that shifted costs across time and created undesirable incentives, so Ethereum tightened and removed many refunds (EIP-3529) to improve global incentives and reduce exploitation.

Why do different operations cost different amounts of gas?

The EVM charges gas per opcode and some costs depend on runtime context; accessing persistent storage, expanding memory, hashing, emitting logs, and cold first-time account/storage accesses are relatively expensive, which is why exact gas costs vary by operation and by protocol upgrades like EIP-2200 and EIP-2929.

What is the difference between cold and warm access?

EIP-2929 introduced a cold-versus-warm access distinction: the first access to an account or storage slot in a transaction is charged a higher "cold" cost and subsequent accesses to the same item are charged a lower "warm" cost, reflecting that the expensive work is the first touch.

Does a higher priority fee guarantee faster inclusion?

Higher priority fees (tips) generally increase the probability of earlier inclusion because they give validators more incentive, but they do not mathematically guarantee inclusion since validators can exercise discretion and private order‑flow or MEV practices can affect ordering; fee estimators therefore report probabilities, not certainties.

Does burning the base fee make ETH deflationary?

Burning the base fee removes that portion from supply, so under some demand regimes ETH issuance can be offset or reversed, but the long‑term net effect on ETH supply (inflationary vs deflationary) depends on usage patterns and is difficult to predict precisely.

How do other blockchains meter execution compared with Ethereum?

All chains must meter and limit execution, but implementations differ: Ethereum measures gas at the EVM opcode level with refunds, cold/warm rules, and post‑London base-fee mechanics, while other chains like Solana use compute units and a compute budget with different billing semantics and priority mechanisms.
