What Is a ZK Rollup?

Learn what a ZK rollup is, how validity proofs and data availability work, why withdrawals are fast, and what tradeoffs shape zk-rollup design.

Introduction

ZK rollups are a way to scale blockchains by moving most transaction execution off the base chain while still letting the base chain verify that the result is correct. The interesting part is not simply that they are “faster” or “cheaper.” It is that they try to preserve the base chain’s security model while avoiding the need for every node on that base chain to re-run every computation.

That solves a very specific bottleneck. In a conventional blockchain, the chain is secure partly because many nodes independently execute the same transactions and check the same rules. But that same redundancy is also why throughput is limited: the system buys trust by repeating work. A ZK rollup changes the bargain. It says: let execution happen elsewhere, but require a succinct cryptographic proof that the offchain execution followed the rules. Then the base chain only checks the proof and stores enough data for the rollup state to remain recoverable.

If that sounds almost contradictory, that is because the core idea is subtle. A ZK rollup is not merely “offchain computation.” Plenty of systems move work offchain by trusting an operator. A ZK rollup is offchain computation with onchain verifiability. The base layer does less work, but it does not simply stop checking. It checks in a different way.

Why do blockchains use ZK rollups to improve throughput and retain security?

The problem begins with a basic invariant: every fully validating node must be able to agree on the current state after processing transactions. On Ethereum, for example, that means balances, contract storage, code, and other state must end up identical for all honest nodes. The straightforward way to guarantee this is to have everyone execute every transaction. That is robust, but it scales poorly because total throughput is bounded by what the network’s validators can all afford to process.

A rollup changes where the expensive work happens. Transactions are executed in a separate system, often called a layer 2. The layer 2 keeps its own internal state, but it anchors that state to the base chain through a smart contract. The contract stores a compact commitment to the rollup’s state, usually a state root, which is a cryptographic summary of all balances, contract data, and other internal records. When the rollup processes a new batch of transactions, it proposes a new state root.
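As a rough illustration of what a state root commits to, the sketch below folds a small table of balances into a single Merkle root. The account encoding, the use of SHA-256, and the duplicate-last-node padding are assumptions chosen for brevity; production rollups generally use other tree layouts and proof-friendly hash functions.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(account: str, balance: int) -> bytes:
    # Prefix byte 0x00 separates leaf hashes from internal-node hashes.
    return h(b"\x00" + account.encode() + b":" + str(balance).encode())


def state_root(balances: dict[str, int]) -> bytes:
    """Toy Merkle root over accounts sorted by name (hypothetical layout)."""
    level = [leaf(a, b) for a, b in sorted(balances.items())]
    if not level:
        return h(b"")
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pad odd levels by repeating the last node
        level = [
            h(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level), 2)
        ]
    return level[0]
```

Changing any single balance changes the 32-byte root, which is why the contract can store only the root and still pin down the entire state.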

At that point there are two questions the base chain must care about. First: is the new state root actually the result of valid execution? Second: if the rollup operator disappears, can others reconstruct the state and continue? ZK rollups answer the first question with a validity proof and the second with onchain data publication. Those two ingredients belong together. A proof tells you the transition was valid; published data lets anyone recover what the state actually is.

This is why rollups became so important in Ethereum’s scaling roadmap, but the concept is not Ethereum-specific. The same basic logic appears whenever a system wants to separate execution from settlement: do heavy computation elsewhere, keep a cryptographic checkpoint on a more secure chain, and make sure users are not trapped if an operator goes offline. Projects built with modular stacks and sovereign rollup frameworks use the same pattern even when their data lives on a dedicated data-availability layer rather than directly on a settlement chain.

What is the ‘zero-knowledge’ (validity proof) component of a ZK rollup?

| Proof family | Proof size | Trusted setup | Verification cost | Quantum resistance | Typical fit |
|---|---|---|---|---|---|
| ZK‑SNARK | Very small | Often requires CRS | Low verification gas | Less quantum resistant | Onchain‑friendly, low gas |
| ZK‑STARK | Larger | No trusted setup | Higher verification gas | More quantum resistant | Transparent, scalable |

The defining mechanism of a ZK rollup is the validity proof. After executing a batch of transactions offchain, the rollup operator generates a compact proof showing that, starting from the old state root, applying the published transactions according to the rollup’s rules really does lead to the new state root. The base-chain contract verifies this proof. If verification succeeds, the batch is accepted.

The phrase “ZK” comes from zero-knowledge proofs, though in practice the property that matters most here is often succinct validity rather than privacy. Many ZK rollups are not private at all; users’ transaction data may still be publicly available. The crucial benefit is that the proof is much smaller and cheaper to verify than re-executing the whole batch onchain. In other words, the proof compresses computation, not necessarily information about users.

This is a common point of confusion. The word “zero-knowledge” suggests hidden inputs, secret balances, or anonymous transfers. A ZK rollup can support privacy-enhancing constructions, and some do exploit that. But the foundational idea is broader: the proof convinces the verifier that the computation was correct. Whether transaction details are public or private depends on the specific design.

There are two major proof families used in practice. ZK-SNARKs usually produce very small proofs and support fast verification, which is attractive for onchain use, but many SNARK systems require a trusted setup, often called a Common Reference String. If that setup is compromised, false proofs may become possible. ZK-STARKs avoid trusted setup and are more transparent, and they are often described as more scalable and more resistant to future quantum attacks, but their proofs are larger and verification can be more expensive. This is not merely a cryptography preference; it directly affects rollup costs, trust assumptions, and engineering complexity.

How does a ZK rollup batch get produced, proven, and accepted step‑by‑step?

A ZK rollup becomes much clearer when you follow one batch from beginning to end.

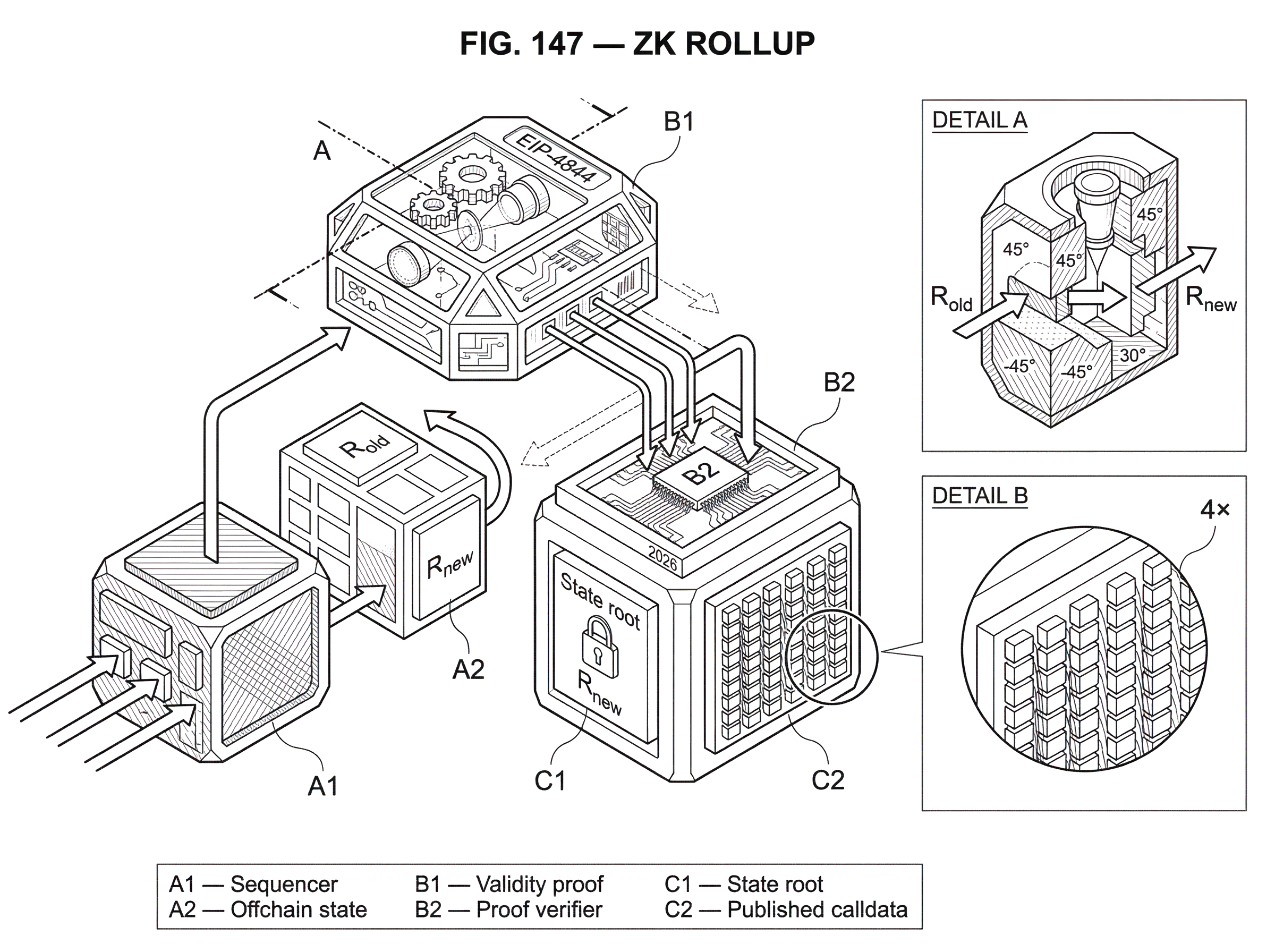

Imagine users submit transfers and contract calls to a rollup sequencer. The sequencer orders them and executes them against the current rollup state. Suppose the old state root is R_old. After executing the batch, the sequencer computes a new root, R_new. If this were only an offchain database update, users would have to trust the sequencer that R_new is honest. In a ZK rollup, that is not enough.

The operator also takes the batch, the execution trace, and the circuit or proving system that encodes the rollup’s rules, and generates a validity proof. Intuitively, the proof says: “I know a valid execution that starts from R_old, applies this batch under the protocol rules, and ends at R_new.” The base-chain rollup contract then receives at least three things: the compressed batch data, the proposed new state commitment, and the proof. The contract verifies the proof and, if verification succeeds, updates the stored state root from R_old to R_new.
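The acceptance rule in that flow can be sketched as a small state machine. Everything here is hypothetical: `RollupContract`, `submit_batch`, and the verifier signature are illustrative names, and `stub_verify` is a placeholder with no cryptographic soundness. A real deployment would call a SNARK or STARK verifier at that point.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable

# Assumed verifier shape: (old_root, new_root, batch_data, proof) -> bool
Verifier = Callable[[bytes, bytes, bytes, bytes], bool]


def stub_verify(old_root: bytes, new_root: bytes,
                batch_data: bytes, proof: bytes) -> bool:
    # PLACEHOLDER ONLY: anyone can compute this tag, so it proves nothing.
    # It exists so the control flow below can run end to end.
    return proof == hashlib.sha256(old_root + new_root + batch_data).digest()


@dataclass
class RollupContract:
    state_root: bytes
    verify: Verifier

    def submit_batch(self, new_root: bytes, batch_data: bytes,
                     proof: bytes) -> bool:
        """Advance the stored root only if the proof verifies against it."""
        if not self.verify(self.state_root, new_root, batch_data, proof):
            return False  # rejected: stored root is unchanged
        self.state_root = new_root
        return True
```

Note that the proof is checked against the root the contract already stores, not a root the operator supplies, so a batch cannot build on a state the contract never accepted.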

Why is this powerful? Because the contract does not need to execute every transfer, signature check, swap, or contract call itself. It only checks the proof. Verification still costs gas, and not a trivial amount, but it is far cheaper than naively re-running the entire batch onchain. The heavy work has been shifted to proof generation.

Now add the second ingredient: data availability. Most ZK rollups publish the transaction or state-delta data to the base chain as calldata, and increasingly to cheaper blob-style data spaces where the protocol supports them. The purpose is not to help the verifier check the proof directly in the usual way. The purpose is to ensure that anyone can reconstruct the rollup state independently. If the sequencer disappears, another party can still recover the rollup and users can still exit because the necessary data is available.

That distinction matters. The proof tells the chain that the transition is valid. The published data tells the world what the transition actually was.
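To make the recovery property concrete, here is a minimal sketch in which any party replays published batches from a known genesis state. The transfer tuple format and function names are assumptions for illustration; real rollups publish compressed encodings or state deltas rather than plain tuples.

```python
Transfer = tuple[str, str, int]  # (sender, receiver, amount), hypothetical format


def apply_batch(balances: dict[str, int],
                batch: list[Transfer]) -> dict[str, int]:
    """Apply one published batch and return the resulting balances."""
    state = dict(balances)
    for sender, receiver, amount in batch:
        if amount < 0 or state.get(sender, 0) < amount:
            raise ValueError(f"invalid transfer from {sender}")
        state[sender] = state.get(sender, 0) - amount
        state[receiver] = state.get(receiver, 0) + amount
    return state


def reconstruct(genesis: dict[str, int],
                published_batches: list[list[Transfer]]) -> dict[str, int]:
    """Rebuild the current state using only data that was published."""
    state = dict(genesis)
    for batch in published_batches:
        state = apply_batch(state, batch)
    return state
```

No sequencer appears anywhere in `reconstruct`. If the batches were withheld, the same function would have nothing to replay, even though every proof remained valid.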

Why must ZK rollups publish transaction data as well as post validity proofs?

| Design | Data location | Proofs present | Who you must trust | Typical fees | Withdrawal safety |
|---|---|---|---|---|---|
| ZK rollup | On‑chain (calldata/blobs) | Validity proofs yes | Trust L1 for DA | Higher recurring fees | Strong (trustless exits) |
| Validium | Off‑chain (DAC) | Validity proofs yes | Trust DAC operators | Lower fees | Weak if DA withheld |
| Volition | Per‑tx choice | Validity proofs yes | Choice: L1 or DAC | Variable by choice | Choice‑dependent |

It is tempting to think the proof is the whole security story. It is not. A proof can certify that some new state is valid without revealing enough information for outside parties to recover that state themselves. If transaction data were withheld, users might know that the rollup has transitioned to some valid state but still be unable to determine balances, reconstruct accounts, or produce the information needed to withdraw safely.

That is why canonical rollups publish transaction-related data on the base chain. On Ethereum, older designs commonly used calldata, which is expensive because every byte becomes part of the chain’s permanent data burden. This cost is often the dominant component of rollup fees. The proof is sophisticated, but the mundane act of publishing bytes to layer 1 is frequently what users are really paying for.

This is also why Ethereum’s blob transactions matter to rollups. With EIP-4844, rollups can post large data payloads into blobs rather than ordinary calldata. Those blobs are designed for data availability rather than EVM execution, so they can be much cheaper. Mechanically, this changes where rollup batch data sits and how contracts reference it, but conceptually it does not change the core rollup bargain: execute elsewhere, prove correctness, publish enough data for recovery.
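For a sense of scale, the sketch below estimates how many blobs a batch would occupy. The blob dimensions (4096 field elements of 32 bytes each) come from EIP-4844. The 31 usable bytes per element is an assumption standing in for a simple packing that keeps every element below the field modulus; actual encodings vary by rollup.

```python
FIELD_ELEMENTS_PER_BLOB = 4096  # fixed by EIP-4844
BYTES_PER_FIELD_ELEMENT = 32    # fixed by EIP-4844
USABLE_BYTES_PER_ELEMENT = 31   # assumption: simple packing scheme


def blob_capacity(usable_bytes_per_element: int = USABLE_BYTES_PER_ELEMENT) -> int:
    """Payload bytes that fit in one blob under the assumed packing."""
    return FIELD_ELEMENTS_PER_BLOB * usable_bytes_per_element


def blobs_needed(batch_bytes: int,
                 usable_bytes_per_element: int = USABLE_BYTES_PER_ELEMENT) -> int:
    """Number of blobs required to carry a batch of the given size."""
    if batch_bytes < 0:
        raise ValueError("batch_bytes must be non-negative")
    capacity = blob_capacity(usable_bytes_per_element)
    return -(-batch_bytes // capacity)  # ceiling division
```

Under these assumptions one blob carries 126,976 payload bytes out of its raw 131,072.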

Nearby designs reveal the importance of this choice. In Validium, the system still uses validity proofs, but transaction data is kept offchain, often with a data-availability committee. That can reduce fees dramatically, and may improve confidentiality, but it weakens the trust model. If the offchain data managers withhold data, users may be unable to reconstruct state or withdraw even though the proofs remain valid. So the difference between a ZK rollup and a Validium is not whether proofs exist; it is where the data lives, and therefore what users must trust.

Why are withdrawals from ZK rollups faster than from optimistic rollups?

| Rollup family | Finality model | How validity decided | Withdrawal delay | Per‑batch L1 cost |
|---|---|---|---|---|
| ZK rollup | Final after proof verified | Cryptographic validity proof | Fast (no long delay) | Higher verification gas |
| Optimistic rollup | Provisional until challenged | Assumed valid; fraud proofs | Slow (challenge window days) | Lower verification gas |

The cleanest comparison point is the optimistic rollup. Both optimistic and ZK rollups execute transactions offchain and publish enough data onchain for reconstruction. The difference is how the base chain decides whether a batch is valid.

An optimistic rollup assumes a batch is valid unless someone proves otherwise during a challenge window. That means withdrawals back to the base chain are delayed, often for days, because the system must leave time for fraud proofs. The operator may be honest, but the protocol cannot settle immediately because it relies on the possibility of later dispute.

A ZK rollup works in the opposite direction. The batch is not accepted until a validity proof is verified. Once that happens, the base chain has cryptographic assurance that the transition was correct. So exits do not need a long challenge period. Users generally only wait for the proof-bearing batch to be finalized on the base chain.
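The timing difference reduces to simple arithmetic. In the sketch below every duration is an illustrative parameter, not a measurement of any particular network: a seven-day challenge window is used as a typical optimistic setting, and the proving and finality delays are placeholders.

```python
def optimistic_withdrawal_ready(batch_posted_at: float,
                                challenge_window: float) -> float:
    """Earliest exit time when validity is assumed unless challenged."""
    return batch_posted_at + challenge_window


def zk_withdrawal_ready(batch_posted_at: float,
                        proving_delay: float,
                        l1_finality_delay: float) -> float:
    """Earliest exit time when a verified proof gates acceptance."""
    return batch_posted_at + proving_delay + l1_finality_delay


SECONDS_PER_DAY = 86_400

# Illustrative inputs only (all values in seconds).
optimistic = optimistic_withdrawal_ready(0, 7 * SECONDS_PER_DAY)
zk = zk_withdrawal_ready(0, proving_delay=3_600, l1_finality_delay=900)
```

With these placeholder numbers the optimistic exit becomes available after 604,800 seconds and the ZK exit after 4,500 seconds. The gap comes from the dispute window, not from either operator being slower.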

This difference has practical consequences beyond convenience. Fast withdrawals improve capital efficiency, make bridging back to the base layer less awkward, and reduce the need for liquidity providers that front users their funds before a challenge period ends. That is one reason ZK rollups are often seen as the more elegant long-run design, even if they are harder to build.

How do ZK rollups achieve scaling through amortization, compression, and recursion?

The simplest story is “proofs make things scale,” but that is incomplete. The larger savings often come from amortization and compression.

A batch lets many transactions share one fixed verification cost on layer 1. Instead of paying separately for every onchain execution, thousands of offchain executions can share a single proof verification. At the same time, rollups compress transaction representation. A token transfer that would require a full smart-contract execution on Ethereum can often be represented in a much smaller encoded form inside the rollup’s published data. This is why the per-transaction onchain footprint can fall dramatically.
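The amortization argument can be written as a one-line cost model: the fixed verification cost is divided across the batch, while the data cost scales with each transaction's encoded size. The figures in the usage note are hypothetical; the default of 16 gas per byte mirrors Ethereum's long-standing price for a nonzero calldata byte and should be treated as a parameter.

```python
def l1_gas_per_tx(batch_size: int,
                  verify_gas: float,
                  bytes_per_tx: int,
                  gas_per_byte: float = 16) -> float:
    """Approximate L1 gas attributable to one rollup transaction.

    verify_gas   : fixed cost of verifying one proof (assumed input)
    bytes_per_tx : compressed size of one transaction in published data
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    amortized_verification = verify_gas / batch_size
    data_cost = bytes_per_tx * gas_per_byte
    return amortized_verification + data_cost
```

With a hypothetical 500,000-gas verification and 12-byte transfers, a batch of 1,000 works out to 692 gas per transaction, while a batch of 10 works out to 50,192. That is more than the 21,000 gas of a plain L1 ETH transfer, which shows that the savings depend on filling batches rather than on the proof alone.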

ZK rollups also get one special compression advantage. If some piece of transaction information is only needed to verify that execution was legal, and not needed later to reconstruct state transitions, that information may be left offchain because the proof already certifies its correctness. An optimistic rollup cannot always do that, because a future fraud proof may require enough raw data for others to re-execute disputed computation. This gives ZK rollups a structural way to be more compact.

As proving systems improve, recursive proofs extend this idea further. A recursive proof is a proof that verifies other proofs. In rollup terms, that means multiple L2 blocks can be individually proved and then wrapped into a higher-level proof that the base chain verifies once. The consequence is important: more computation can be finalized per L1 verification step, pushing effective throughput higher and reducing settlement overhead.
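A small counting sketch shows why recursion reduces settlement overhead. It models only the shape of an aggregation tree; `fan_in` (how many proofs one recursive step combines) is an assumed parameter, and no actual proving happens here.

```python
def aggregation_rounds(num_proofs: int, fan_in: int) -> int:
    """Rounds of recursive aggregation needed to reach a single proof."""
    if num_proofs < 1 or fan_in < 2:
        raise ValueError("need num_proofs >= 1 and fan_in >= 2")
    rounds = 0
    while num_proofs > 1:
        num_proofs = -(-num_proofs // fan_in)  # ceiling division
        rounds += 1
    return rounds


def l1_verifications(num_blocks: int, recursive: bool) -> int:
    """L1 proof checks needed to settle num_blocks L2 blocks."""
    if num_blocks < 1:
        raise ValueError("num_blocks must be positive")
    return 1 if recursive else num_blocks
```

For example, 64 block proofs with a fan-in of 2 collapse in 6 rounds, and the base chain verifies one proof instead of 64. The extra rounds are paid for offchain in prover time.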

What technical challenges make ZK rollups and zkEVMs difficult to build?

If the idea is so strong, why did optimistic systems often ship earlier for general-purpose execution? Because proving arbitrary smart-contract computation is difficult.

To generate a validity proof, the rollup’s rules must be expressed in a form the proving system understands. For simple payments or exchange logic, that can be manageable. For a fully general virtual machine with dynamic control flow, storage access, precompiles, and all the quirks developers expect, the engineering burden becomes much higher. This is the challenge behind the zkEVM: making Ethereum-style computation provable in a way that is practical enough for production.

This is not just compiler work. It affects proving time, hardware requirements, and system architecture. Proof generation is computationally intensive and may require specialized hardware or highly optimized infrastructure. That creates pressure toward centralization because a small number of sophisticated operators may be best positioned to produce proofs quickly and cheaply.

So while the validity proof gives strong correctness guarantees, the surrounding system may still be operationally centralized. Many deployed ZK rollups rely on a single or small set of sequencers. A centralized sequencer can censor transactions, delay inclusion, or create outages even if it cannot finalize invalid state on layer 1. This is a useful distinction: ZK rollups can be very strong on integrity while still weaker on liveness and decentralization than the base chain they settle to.

A real-world incident makes this concrete. Starknet reported an outage in 2026 where a sequencer-side execution bug caused incorrect processing, and a period of activity had to be reverted. What is notable is not only that such bugs can happen, but that the proving layer prevented the bad execution from reaching layer-1 finality. That shows both sides of the design: the prover acts as a backstop against invalid state, but users can still experience downtime, reorgs, or disrupted UX when the offchain system malfunctions.

What do ZK rollup fees cover and what trust assumptions remain for users?

From a user’s perspective, a ZK rollup fee usually bundles several mechanisms together. Part of the cost comes from updating rollup state. Part comes from publishing batch data to the base layer. Part pays the operator or sequencer. And part reflects proof generation and verification. In many designs, the data-publication component is the largest recurring cost driver.

This leads to a subtle but important insight: the main economic bottleneck for a rollup is often not “cryptography is expensive” in isolation. It is that verifiable data availability is expensive. If you want users to retain the right to reconstruct state and exit without trusting an operator, someone has to pay to make the relevant data widely available.

That is why cheaper data layers matter so much. Ethereum blobs reduce this cost within Ethereum’s own architecture. Modular data-availability layers such as Celestia aim to provide a different place to publish rollup data. Sovereign-style rollups can combine zk proving with external DA layers. But the security model changes depending on where settlement happens, where data is posted, and which chain users trust as the final arbiter of state. The core design pattern survives across architectures; the trust boundary does not stay fixed.

Which applications and use cases are a good fit for ZK rollups today?

ZK rollups are attractive anywhere users want lower fees and faster confirmation than the base chain, but do not want to give up cryptographic assurance of correctness. That includes token transfers, trading, payments, gaming, and increasingly general-purpose smart-contract applications. Early ZK rollups found product-market fit in applications with simpler execution patterns, such as payments and exchanges, because those were easier to prove efficiently.

The rise of zkEVM systems broadened that scope. Projects such as Polygon zkEVM, zkSync Era, Scroll, Linea, Taiko, and Starknet represent different technical paths toward a provable smart-contract environment. They do not all make the same tradeoffs in proof system, compatibility model, operator design, or data-availability strategy, but they share the same ambition: let developers deploy richer applications without paying full layer-1 execution cost for every step.

Outside the Ethereum-specific stack, the same validity-proof architecture appears in other ecosystems and tooling. Prover networks such as Succinct focus on generating proofs efficiently. Rollup frameworks such as the Sovereign SDK make validity proofs one option within a broader modular design. The reason these examples matter is that they show ZK rollups are not just a single product category on one chain; they are a general answer to the problem of compressing trustworthy computation.

What assumptions and failure modes do ZK rollups rely on?

A ZK rollup is not “trustless” in a magical sense. Its strongest guarantee is that invalid state transitions should not finalize if the cryptography, circuits, and verifier are sound. But several assumptions sit around that guarantee.

If the proving system uses a trusted setup, the ceremony must have been done correctly. If the sequencer is centralized, users still depend on that operator for timely inclusion unless there is a robust forced-transaction path. If proof generation is concentrated among a few actors, censorship and liveness risks remain. If the implementation has bugs in the prover, circuit, or state-transition logic, finalization failures or outages can occur even if the abstract design is sound. Security research has already found zero-day finalization bugs in production zk-rollup systems, which is a reminder that elegant cryptographic structure does not eliminate ordinary software risk.

And if data is not published in a way that users can reliably access, then the system may cease to be a rollup in the strongest sense and start looking more like a Validium or other hybrid design. That is not necessarily bad; it may be the right tradeoff for some applications. But the trust model is different, and the difference is fundamental rather than cosmetic.
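One way to picture the forced-transaction path mentioned above is a queue on layer 1 that the sequencer is obliged to drain. The sketch below is a hypothetical design, not the mechanism of any specific rollup: `ForcedQueue`, `max_delay`, and the rule that a batch is rejected while overdue entries are missing are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ForcedQueue:
    max_delay: int  # L1 blocks the sequencer has to include a forced tx
    pending: dict[str, int] = field(default_factory=dict)  # tx id -> L1 block queued

    def force(self, tx_id: str, l1_block: int) -> None:
        """A user queues a transaction directly on layer 1."""
        self.pending.setdefault(tx_id, l1_block)

    def overdue(self, l1_block: int) -> set[str]:
        """Forced transactions whose inclusion deadline has passed."""
        return {
            tx for tx, queued in self.pending.items()
            if l1_block - queued >= self.max_delay
        }

    def accept_batch(self, included: set[str], l1_block: int) -> bool:
        """Reject any batch that skips an overdue forced transaction."""
        if not self.overdue(l1_block) <= included:
            return False
        for tx in included:
            self.pending.pop(tx, None)
        return True
```

Under this rule a censoring sequencer can delay a queued transaction for at most `max_delay` blocks before its own batches stop being accepted.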

Conclusion

A ZK rollup works by separating execution from verification. Transactions run offchain, the resulting state transition is proven with a succinct validity proof, and the underlying data is published so the rollup remains recoverable. That combination is what lets the base chain do less work without giving up the ability to reject invalid state.

The short version to remember is this: a ZK rollup scales a blockchain by replacing onchain re-execution with onchain proof verification, while keeping enough data available for users to recover the system themselves.

Everything else follows from how that basic bargain is implemented: faster withdrawals, lower fees, zkEVM engineering, SNARK versus STARK tradeoffs, blobs, recursion, and sequencer centralization.

How does this part of the crypto stack affect real-world usage?

ZK rollups affect real-world usage by changing withdrawal latency, fee composition, and which party you must trust for liveness and data recovery. Before funding or trading assets tied to a rollup, check the rollup’s data-availability model, proof family, and sequencer liveness protections; then use Cube Exchange to fund and trade once you understand those constraints.

- Read the rollup’s docs and note three specifics: whether it publishes data to L1 (calldata or EIP‑4844 blobs) or to an external or offchain DA layer, which proof family it uses (SNARK vs STARK), and whether a forced-exit or backup sequencer path exists.

- Check decentralization and liveness indicators: look for multiple provers/sequencers, published uptime metrics, and a clear forced-withdrawal or fallback mechanism you can trigger if the operator halts.

- Fund your Cube account with the asset and network you plan to use (use the exact token contract and network listed by the rollup docs to avoid bridging errors).

- On Cube, open the market or withdrawal flow for that asset, choose an order or withdrawal that matches your needs (use limit orders for price control; choose onchain withdrawal if you plan to exit to L1), review the estimated onchain data and proof-related fees, and confirm.

Frequently Asked Questions

Why do ZK rollups publish transaction data if they already post validity proofs?

ZK rollups publish the transaction or state-delta data on the base chain (historically as calldata, and increasingly into blob-style data spaces) so anyone can reconstruct the rollup’s state if the sequencer disappears; the proof only certifies correctness while the published data enables independent recovery.

Why are withdrawals from ZK rollups faster than from optimistic rollups?

Because a ZK rollup requires a validity proof before the base chain accepts a batch, the chain has cryptographic assurance the transition is correct and users do not need to wait for a long fraud-challenge window as in optimistic rollups; exits are generally limited only by block finality and proof posting latency.

What is the difference between a ZK rollup and a Validium?

They both use validity proofs, but a ZK rollup posts transaction data onchain (or to an agreed DA layer) so anyone can reconstruct state, whereas a Validium keeps data offchain (often with a data-availability committee), which lowers fees but requires trusting data holders for withdrawals and recovery.

What are the tradeoffs between SNARKs and STARKs?

SNARKs typically make very small, fast-to-verify proofs but often rely on a trusted setup (Common Reference String), while STARKs avoid trusted setup and offer transparency and stronger post-quantum claims at the cost of larger proofs and generally higher verification or publication overhead; this tradeoff affects fees, trust assumptions, and engineering complexity.

Do validity proofs remove the need to trust the rollup operator?

No. Validity proofs protect integrity (preventing invalid state from finalizing) but do not remove operational trust: a centralized sequencer can still censor transactions, delay inclusion, or cause outages, and proof-generation concentration or implementation bugs create liveness and operational risks.

What is the main recurring cost of running a rollup?

Publishing enough data for users to reconstruct state (verifiable data availability) is often the dominant recurring cost for rollups because L1 calldata is expensive; EIP-4844’s blob model and external DA layers aim to lower that cost, but they change how and where data is stored and introduce new operational tradeoffs.

Does “ZK” mean a ZK rollup is private?

“ZK” refers to a validity proof that the computation was correct, not automatically to privacy: many ZK rollups publish full transaction data and are not private, though zero-knowledge techniques can be designed to hide inputs if explicitly implemented.

Why is it hard to build a general-purpose zkEVM?

Proving general-purpose (EVM-style) computation is hard because the VM semantics must be encoded for the prover, which raises proving time, hardware needs, and engineering complexity; that difficulty has delayed wide deployment of general-purpose zkEVMs and tends to push proof generation toward well-resourced operators, risking centralization.

How do recursive proofs improve throughput?

Recursive proofs let a prover verify multiple earlier proofs and produce a single, smaller proof to post on L1, so many L2 blocks can be amortized into one L1 verification, increasing finalizable computation per L1 step and improving effective throughput as provers and recursion scale.
