What is a Sequencer?

Learn what a sequencer is in rollups and scaling systems, how it orders transactions, why it improves latency, and what risks it creates.

Introduction

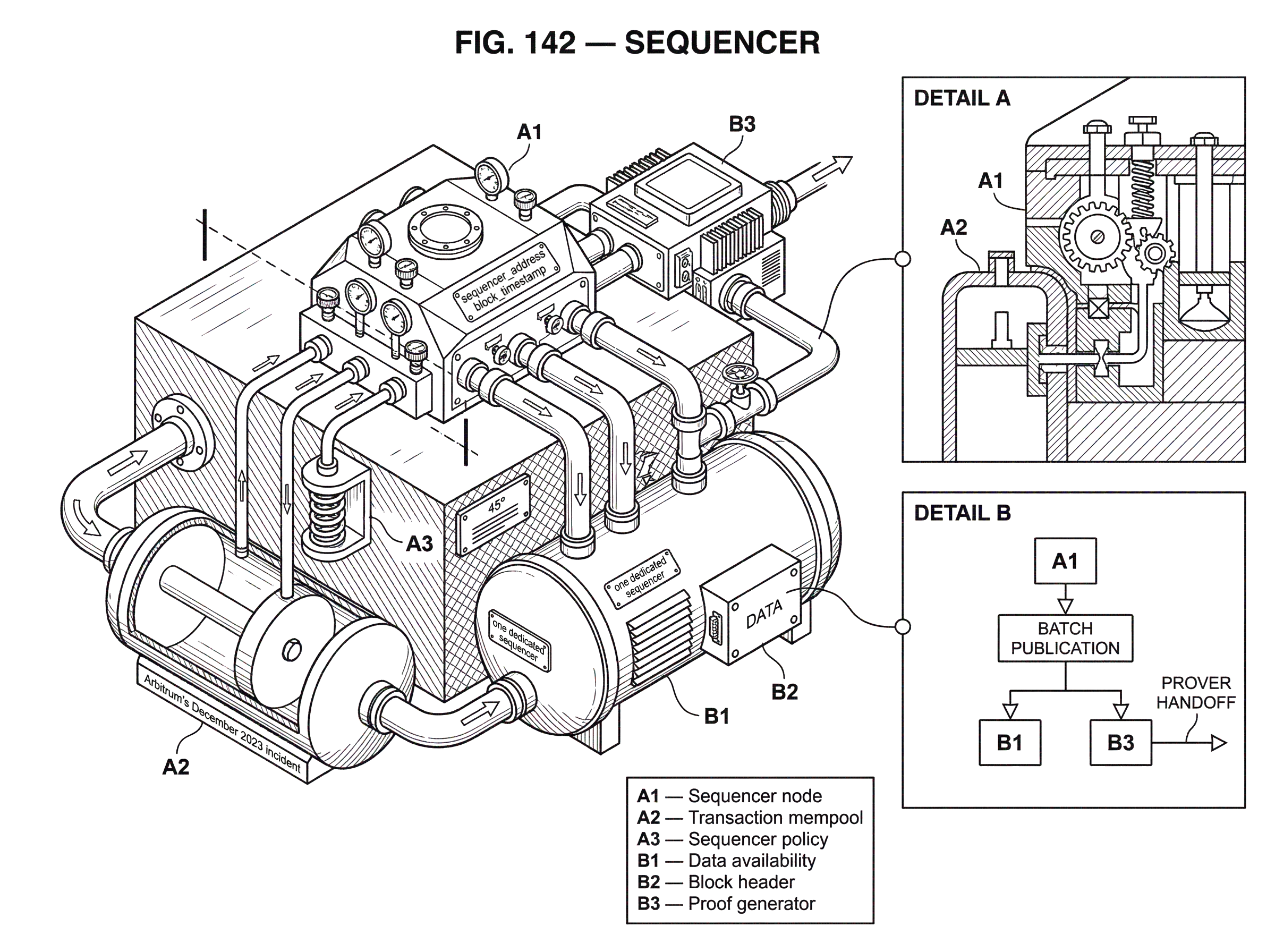

A sequencer is the component that decides the order of transactions before they are turned into a block or batch and published for the rest of the system to process. In many scaling systems, especially rollups, this is the place where the user’s experience is won or lost: fast confirmations, predictable inclusion, and low latency usually come from having a sequencer. The same design choice also concentrates power. Whoever controls ordering can delay transactions, choose which transactions go first, and often capture a large share of the system’s MEV.

That tension explains why sequencers matter so much. At first glance, they can look like just another node role, or a relabeled block producer. But a rollup is not simply replaying the base chain’s full consensus process at the same speed and scope. It is trying to move execution off the base layer while still inheriting some of the base layer’s security. A sequencer exists because someone has to make fast, local decisions about ordering and batching before slower settlement, proof generation, or dispute resolution catches up.

The key idea is simple: a sequencer gives you fast ordering now, while the rest of the system gives you stronger settlement later. Once that clicks, many design choices follow naturally. Why are sequencers often centralized at first? Because a single writer is the easiest way to minimize coordination delay. Why is decentralizing sequencers hard? Because you are trying to keep the speed benefits of a single coordinator while reintroducing the safety and neutrality properties of distributed consensus.

Why do rollups and scaling layers use sequencers?

The problem a sequencer solves is not merely “put transactions in order.” Blockchains already do that. The harder problem is how to do it quickly and cheaply when the underlying settlement layer is slower, more expensive, or both. If every user action on a rollup had to wait for the base layer’s full inclusion and finality path before the rollup could present an updated state, the rollup would lose much of its practical advantage.

So scaling systems separate two jobs that are tightly coupled on many base chains. First, there is the near-term job of accepting transactions, ordering them, and executing them into a provisional next state. Second, there is the slower job of making that state durable and accountable by publishing data, posting commitments, and in some systems generating proofs. The sequencer handles the first job.

In the OP Stack documentation, this appears as the Sequencing Layer, which “determines how user transactions ... are collected and published to the Data Availability Layer.” That phrasing is useful because it puts sequencing in the middle of a pipeline. The sequencer does not merely listen to users. It also has to produce something the rest of the protocol can consume: transaction batches, blocks, or ordered inputs that later derivation and execution logic can replay.

This is why sequencers are common in both optimistic and zero-knowledge rollups, even though those systems differ in how they prove correctness. In ZKsync Era, for example, the sequencer orders and executes transactions off-chain to produce state updates, while a prover later generates the validity proof and an L1 verifier finalizes it. Starknet describes a similar division of labor: sequencers order transactions and produce blocks; then, after block creation and consensus approval, provers generate a proof for L1. The mechanism differs across systems, but the structural need is the same. Someone has to be the fast path.

What does a sequencer do in a rollup?

The cleanest mental model is to think of a sequencer as the system’s traffic controller for execution. Users send transactions toward it. The sequencer chooses an order, executes or simulates them against the current state, constructs a block or batch, and then hands that result to the next layer of the architecture: data availability, derivation, proof generation, settlement, or some combination.

Here is the mechanism in ordinary terms. A user submits a transaction. The sequencer decides whether to accept it into its working set, where to place it relative to other transactions, and when to seal a block or batch. It then executes those transactions in order, because order changes outcomes whenever transactions compete for the same state: balances, AMM reserves, liquidation opportunities, NFT listings, bridge queues, and so on. The output is not just a list of transactions. It is also the resulting state change, receipts, events, and block metadata.
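The accept, order, seal, and execute steps above can be sketched as a toy single-writer loop. This is a minimal illustration, not any rollup's actual implementation: the `Tx` and `Sequencer` names, the transfer-only transaction model, and the arrival-order policy are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Tx:
    sender: str
    recipient: str
    amount: int


@dataclass
class Sequencer:
    balances: dict[str, int]
    pending: list[Tx] = field(default_factory=list)

    def submit(self, tx: Tx) -> bool:
        # Admission policy (hypothetical): reject non-positive transfers.
        if tx.amount <= 0:
            return False
        self.pending.append(tx)
        return True

    def seal_block(self) -> dict:
        # Execute in the chosen order (here: arrival order) on a copy of state.
        state = dict(self.balances)
        receipts = []
        for tx in self.pending:
            ok = state.get(tx.sender, 0) >= tx.amount
            if ok:
                state[tx.sender] -= tx.amount
                state[tx.recipient] = state.get(tx.recipient, 0) + tx.amount
            receipts.append((tx, ok))
        # The block carries both the ordered inputs and the resulting state.
        self.balances, self.pending = state, []
        return {"receipts": receipts, "state": state}
```

If Alice holds 10 units and submits two transfers of 7, only the first succeeds, and swapping their order would change which recipient is paid. That is the sense in which order changes outcomes.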

Different systems expose different parts of that output. Starknet’s protocol documentation is unusually explicit: the block header includes a sequencer_address, meaning the block records which sequencer created it, and block_timestamp, defined as the time at which the sequencer began building the block. The block hash itself commits to the sequencer_address and other header fields. That tells you something important: the sequencer is not only an operational actor; it becomes part of the protocol-visible provenance of the block.
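To see what it means for a block hash to commit to the sequencer's identity, here is a small sketch. It uses SHA-256 over a JSON encoding purely as a stand-in; Starknet's real hash function and field encoding are different, and the `parent_hash` and `block_number` fields are included only for illustration.

```python
import hashlib
import json


def header_hash(header: dict) -> str:
    # Canonical encoding: sorted keys so the same fields always hash identically.
    encoded = json.dumps(header, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).hexdigest()


header = {
    "parent_hash": "0x00",               # illustrative value
    "block_number": 1,                   # illustrative value
    "sequencer_address": "0xabc",        # who built the block
    "block_timestamp": 1_700_000_000,    # when the sequencer began building
}

# Changing only the sequencer address yields a different block hash,
# so the block's provenance cannot be rewritten without changing its identity.
forged = {**header, "sequencer_address": "0xdef"}
print(header_hash(header) != header_hash(forged))
```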

In rollups, the sequencer usually also interacts closely with data availability. Ordering alone is not enough. Other nodes must be able to reconstruct what happened. A DA layer exists to prove that the relevant transaction data was published to the network and can be downloaded for some period. This is why sequencing and DA are linked so tightly in modular designs. The sequencer decides what happened next; the DA layer makes the evidence of that ordering and execution available so others can verify or challenge it.

How is sequencer confirmation different from finality?

A common misunderstanding is to treat sequencer confirmation as final settlement. In practice, these are different promises.

When a centralized rollup sequencer quickly tells a user that their transaction has been accepted, what the user usually has is a preconfirmation or near-term inclusion signal, not the strongest form of finality the system can offer. Stronger assurance arrives later, after the ordered data has been published, derived, challenged if necessary, or proven and verified on the base layer.
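One way an application might encode that difference is to treat assurance as an ordered scale and choose how far up the scale to wait. The level names, the threshold, and the policy below are hypothetical; real systems define their own stages and labels.

```python
from enum import IntEnum


class Confirmation(IntEnum):
    PRECONFIRMED = 1  # the sequencer says it accepted the transaction
    PUBLISHED = 2     # ordered data has been posted for others to derive
    FINALIZED = 3     # proven, or past the challenge period, on the base layer


def required_level(amount: int, large_threshold: int = 10_000) -> Confirmation:
    # Hypothetical policy: small amounts may act on a preconfirmation,
    # large ones wait for the strongest assurance the system offers.
    if amount >= large_threshold:
        return Confirmation.FINALIZED
    return Confirmation.PRECONFIRMED


def can_act(amount: int, status: Confirmation) -> bool:
    return status >= required_level(amount)
```

Under this policy a 50-unit payment can be treated as done on a preconfirmation, while a 50,000-unit withdrawal is held until the transaction reaches `FINALIZED`.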

This distinction matters most when things go wrong. If a sequencer goes offline, censors transactions, publishes invalid data, or builds on assumptions that later turn out to be false, the fast local picture can diverge from the durable global one. The OP Stack interop specification makes this concrete. It says new validity rules apply to sequencer-built blocks, and if the rules are not followed, the sequencer risks producing empty blocks and chain reorgs. In other words, a sequencer can move first, but it cannot move arbitrarily. The rest of the protocol still has veto power.

That is the broader invariant: a sequencer may choose ordering within the protocol’s allowed space, but it does not get to redefine validity. The OP Stack spec states this directly through the idea of sequencer policy. Policy covers actions not constrained by consensus, and transaction ordering is policy. Consensus, by contrast, dictates that transactions included in a block must be valid. This is a useful boundary. It tells you where a sequencer’s discretion ends.
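The policy/consensus boundary can be made concrete with a block builder that accepts any ordering function but applies one fixed validity rule. This is a sketch under assumed names: the transaction shape and the nonce rule are illustrative and are not taken from the OP Stack spec.

```python
from typing import Callable

Tx = dict  # hypothetical shape: {"sender": str, "nonce": int, "fee": int}


def is_valid(tx: Tx, next_nonce: dict[str, int]) -> bool:
    # Consensus rule (illustrative): a transaction must use the sender's next nonce.
    return tx["nonce"] == next_nonce.get(tx["sender"], 0)


def build_block(pending: list[Tx], policy: Callable[[Tx], object]) -> list[Tx]:
    next_nonce: dict[str, int] = {}
    block = []
    for tx in sorted(pending, key=policy):  # policy: the sequencer's choice
        if is_valid(tx, next_nonce):        # consensus: not negotiable
            block.append(tx)
            next_nonce[tx["sender"]] = tx["nonce"] + 1
    return block
```

Passing `lambda tx: -tx["fee"]` gives fee-priority ordering and `lambda tx: 0` keeps arrival order. Either way, a transaction with a skipped nonce never makes it into the block.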

Worked example: how a sequencer orders transactions and affects outcomes

Imagine a rollup with a single sequencer. Alice sends a swap transaction to buy a token on a DEX. Bob sends a liquidation transaction to seize collateral from an undercollateralized loan. Carol sends a bridge-related transaction that depends on a message from another chain.

The sequencer receives all three around the same time. It cannot treat them as interchangeable. If Alice’s swap runs before Bob’s liquidation, the price impact might change whether Bob’s liquidation is profitable or even valid. If Carol’s transaction consumes a message that has only weak confirmation on the remote chain, then including it may make the local block vulnerable if that remote message disappears in a reorg.

So the sequencer does real decision-making. It chooses an order, executes the transactions, checks whether Carol’s cross-chain dependency is sufficiently safe under its policy, and then builds a block. That block may give users fast feedback immediately. But later, the batch still has to be published to the DA layer and processed by the rest of the rollup pipeline. If Carol’s remote dependency was trusted too early, the block can become invalid under the stricter rules the protocol ultimately enforces.

This example shows why sequencing is not just queue management. The sequencer is constantly balancing latency, validity risk, and economic consequences of ordering.
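The Alice and Bob interaction can be reproduced with a few lines of arithmetic. The sketch below assumes a fee-free constant-product pool, invented reserve sizes, and a simple rule that the loan is liquidatable only while the pool price is below a threshold; none of these numbers come from a real protocol.

```python
def swap_usd_for_token(pool: dict, usd_in: float) -> float:
    # Constant-product swap (no fees): token reserve * usd reserve stays constant.
    k = pool["token"] * pool["usd"]
    pool["usd"] += usd_in
    token_out = pool["token"] - k / pool["usd"]
    pool["token"] -= token_out
    return token_out


def price(pool: dict) -> float:
    return pool["usd"] / pool["token"]


def run(order: list[str]) -> dict:
    pool = {"token": 1_000.0, "usd": 95_000.0}  # assumed reserves, spot price 95
    liquidation_price = 100.0                    # assumed: liquidatable below this
    results = {}
    for who in order:
        if who == "alice":
            results["alice_tokens"] = swap_usd_for_token(pool, 5_000.0)
        elif who == "bob":
            results["bob_liquidated"] = price(pool) < liquidation_price
    return results
```

With `run(["bob", "alice"])` the liquidation goes through at a price of 95. With `run(["alice", "bob"])` Alice's purchase lifts the price above 100 first, so Bob's liquidation no longer qualifies. The transactions are the same; only the order differs.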

Why do many rollups start with a centralized sequencer?

| Model | Speed | Coordination cost | Censorship risk | Best for |
|---|---|---|---|---|
| Single sequencer | Lowest latency | Minimal | High | Early-stage UX and iteration |
| Multiple sequencers (rotating) | Low–moderate latency | Rotation & scheduling | Reduced | Permissioned decentralization |
| Permissionless arranger / SBC | Higher latency | High (consensus) | Low | Strong neutrality and auditability |

The default design in many rollups is still a single sequencer. OP Stack documentation says that in the default rollup configuration, sequencing is typically handled by one dedicated sequencer, with derivation rules limiting how long it may withhold transactions. That setup is common for a simple reason: a single operator is the easiest way to achieve low-latency ordering.

If only one actor decides the next block, there is no need for a distributed committee to exchange votes before each user-visible inclusion decision. That reduces coordination overhead, simplifies operations, and usually improves user experience. It also makes product iteration easier. Teams can upgrade software, tune mempool policy, and respond to incidents without waiting for a decentralized validator set to adopt each change.

But the cost is concentration of power. A centralized sequencer can censor transactions, delay them, favor its own order flow, or extract MEV by reordering around user trades and liquidations. This is not a special rollup-only pathology. It is a general consequence of ordering power. Paradigm’s MEV explainer defines MEV as profit available to a miner, validator, sequencer, and so on through the ability to include, exclude, or reorder transactions. A sequencer often has even cleaner ordering control than an L1 proposer because the rollup’s near-term transaction flow is concentrated through it.

There is also a liveness cost. A single sequencer is a single operational choke point. Arbitrum’s December 2023 incident is a practical reminder. During a surge of inscriptions, the Arbitrum One sequencer temporarily stopped relaying transactions properly, and the resulting backlog pushed gas prices up before operations normalized. The exact outage details are incident-specific, but the general lesson is broader: if one service sits on the fast path for all user transactions, its outages become ecosystem events.

How does sequencing create MEV and economic incentives?

Once you see that a sequencer controls ordering, the connection to MEV is immediate. Many on-chain outcomes are path-dependent. The first liquidation gets the collateral. The first arbitrage captures the spread. The first trade sets the next pool price. So the right to decide order is also the right to decide who captures value created by that order.
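A sandwich around a user's trade is a compact way to see ordering power turn into profit. The sketch assumes a fee-free constant-product pool with invented reserves and an attacker who can place one transaction before and one after the victim's; it is an illustration of the mechanism, not a model of any live market.

```python
def buy(pool: dict, usd_in: float) -> float:
    k = pool["token"] * pool["usd"]
    pool["usd"] += usd_in
    out = pool["token"] - k / pool["usd"]
    pool["token"] -= out
    return out


def sell(pool: dict, token_in: float) -> float:
    k = pool["token"] * pool["usd"]
    pool["token"] += token_in
    out = pool["usd"] - k / pool["token"]
    pool["usd"] -= out
    return out


def victim_alone(trade_usd: float) -> float:
    pool = {"token": 1_000.0, "usd": 100_000.0}  # assumed reserves
    return buy(pool, trade_usd)


def sandwich(trade_usd: float, attacker_usd: float) -> tuple[float, float]:
    pool = {"token": 1_000.0, "usd": 100_000.0}      # same assumed reserves
    attacker_tokens = buy(pool, attacker_usd)        # ordered before the victim
    victim_tokens = buy(pool, trade_usd)             # victim fills at a worse price
    attacker_proceeds = sell(pool, attacker_tokens)  # ordered after the victim
    return victim_tokens, attacker_proceeds - attacker_usd
```

Comparing `victim_alone(10_000.0)` with `sandwich(10_000.0, 10_000.0)` shows the victim receiving fewer tokens while the attacker ends with a positive profit. Whoever controls ordering decides whether that sequence is possible.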

This is why discussions about sequencers quickly spill into market design. Some systems and proposals separate ordering from execution more explicitly, or auction parts of the ordering right. Others explore proof-enforced ordering constraints. Still others try to push the sequencing role toward a builder market. But the underlying issue stays the same: if sequencing is scarce and valuable, someone will try to monetize it.

That does not automatically make sequencers bad design. It means the protocol has to choose where that power sits and what constraints surround it. A single sequencer can provide very good latency. A decentralized set can reduce unilateral control. Auctions can make extraction more transparent but do not necessarily eliminate user harm. Proof systems can constrain certain behaviors but may introduce complexity or performance costs. These are design trades, not free upgrades.

How do cross-chain messages increase sequencer risk?

| Approach | Latency | Safety vs L1 | Reorg risk | When to use |
|---|---|---|---|---|
| Accept preconfirmation | Very low | Weak until settlement | High (remote reorgs) | Latency‑sensitive UX |
| Require L1 confirmations | High | Strong | Low | Security‑critical flows |
| Shared sequencer / coordinator | Low–medium | Depends on shared trust | Lower cross‑chain reorgs | Atomic cross‑chain actions |

Sequencing becomes more subtle when a chain wants to consume messages initiated on another chain. Now the sequencer is no longer evaluating only local transactions against local state. It is also making judgments about the safety of remote information.

The OP Stack interop specification is especially useful here because it states the tradeoff directly. A sequencer may include executing messages with any level of confirmation safety. Using lower-confirmation, preconfirmed messages reduces latency, but it raises the risk that the local chain produces an invalid block. The spec adds an important recursive point: the safety of a block depends on the safety of the blocks that included the initiating messages it consumes, and that dependency can recurse across chains.

This is easy to underestimate. Suppose Chain A sends a message to Chain B, and Chain B wants to act on it immediately for a better user experience. If Chain B’s sequencer trusts only a preconfirmation from Chain A’s sequencer, then Chain B has effectively imported Chain A’s sequencing risk into its own state transition. The OP Stack spec warns that if a local sequencer accepts inbound cross-chain transactions whose initiating message has only preconfirmation-level security, the remote sequencer can potentially trigger a reorg on the local chain.
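The recursive dependency can be expressed as a small graph computation: a block is only as safe as the weakest block it transitively consumes messages from. The safety level names, the block identifiers, and the function below are hypothetical and only loosely mirror the idea described in the spec.

```python
from enum import IntEnum


class Safety(IntEnum):
    PRECONFIRMED = 1
    PUBLISHED = 2
    FINALIZED = 3


def effective_safety(block_id: str, own_safety: dict, depends_on: dict) -> Safety:
    # Walk every block reachable through consumed messages, across chains.
    seen, stack = set(), [block_id]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # also guards against cyclic dependencies
        seen.add(current)
        stack.extend(depends_on.get(current, []))
    # The weakest link determines how safe the original block really is.
    return min(own_safety[b] for b in seen)


own = {"B:10": Safety.FINALIZED, "C:3": Safety.FINALIZED, "A:7": Safety.PRECONFIRMED}
deps = {"B:10": ["C:3"], "C:3": ["A:7"]}
print(effective_safety("B:10", own, deps).name)
```

Here block `B:10` looks finalized in isolation, but it consumes a message from `C:3`, which in turn consumes one from the merely preconfirmed `A:7`, so its effective safety is no better than a preconfirmation.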

That is a deep point. In a multi-chain world, sequencing trust can propagate across domains. Faster interoperability often means borrowing safety assumptions from somewhere else.

What are shared sequencers and how do they affect cross‑chain composability?

This cross-chain problem is one reason shared sequencers attract so much attention. If the same block builder can build the next canonical block for multiple chains, then some cross-chain interactions become much easier to coordinate. The OP Stack specification says this can enable synchronous composability, where transactions execute across multiple chains at the same timestamp.

Why does that help? Because many cross-chain failures come from asynchronous uncertainty. Chain X moves first, Chain Y waits, and each side must decide how much remote risk to trust before its own state changes. A shared sequencer can collapse part of that uncertainty by deciding the next state transition for multiple domains together.
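As a rough sketch of what deciding multiple domains together buys, consider a builder that applies every leg of a cross-chain bundle or none of them. The function names and the balance-only state model are assumptions for illustration; a real shared sequencer involves far more than this.

```python
import copy


def apply_transfer(state: dict, tx: dict) -> None:
    if state.get(tx["sender"], 0) < tx["amount"]:
        raise ValueError("insufficient balance")
    state[tx["sender"]] -= tx["amount"]
    state[tx["recipient"]] = state.get(tx["recipient"], 0) + tx["amount"]


def build_atomic(chains: dict, bundle: list) -> bool:
    """Apply every (chain_name, tx) leg of the bundle, or none of them."""
    draft = copy.deepcopy(chains)  # work on a copy of all chains' state
    try:
        for chain_name, tx in bundle:
            apply_transfer(draft[chain_name], tx)
    except ValueError:
        return False               # no chain's state changes
    chains.update(draft)           # commit all legs together
    return True
```

Because one builder holds the draft state of both chains, a failing leg on one chain prevents the leg on the other chain from ever being committed. Independent sequencers would each have to decide how much to trust the other's pending result.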

But the idea has limits. A shared sequencer does not magically remove all trust or coordination problems. It can reduce timing mismatch and help with atomicity, but it also creates a larger concentration point. If one sequencing layer now coordinates multiple rollups, its censorship, outage, and MEV surface may expand along with its composability benefits. This is why some research explores alternatives that approximate cross-rollup atomicity without fully merging sequencing, such as builder-coordinator schemes or state-lock auctions. Those approaches try to coordinate ordering across domains while avoiding immediate protocol-wide shared sequencing.

How can sequencers be decentralized and what are the trade‑offs?

| Approach | Ordering method | Latency impact | Data-availability fit | Complexity |
|---|---|---|---|---|
| Multiple Sequencer module | Scheduled rotation among actors | Small additional delay | Uses standard DA | Moderate |
| Consensus proposer (BFT/Tendermint) | Proposer + consensus rounds | Higher latency | DA integrated in blocks | High |
| Decentralized arranger (SBC) | Set consensus on batches | Variable, generally higher | Can replace DACs / built-in | High, research‑stage |

Decentralizing a sequencer sounds conceptually straightforward: replace one ordering actor with many. Mechanically, though, you are reintroducing the old blockchain problem the centralized sequencer was partly created to avoid. Multiple parties must agree on a next order without giving up too much speed.

The OP Stack component overview reflects this in a modest way through a Multiple Sequencer module, where the sequencer at any given time is selected from a predefined set of actors. Chains can choose the set and the selection mechanism. This moves the system away from one permanently trusted operator, but it does not by itself solve every issue. Someone still needs a schedule, a rotation rule, and a fault-handling process.
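A schedule, a rotation rule, and a fault-handling step can be as small as the following sketch. Round-robin by slot with a skip-if-offline fallback is one assumed selection mechanism among many; the function and its parameters are hypothetical and are not part of the OP Stack.

```python
def select_sequencer(sequencers: list[str], slot: int,
                     offline: frozenset = frozenset()) -> str:
    """Round-robin over a predefined set, skipping actors marked offline."""
    n = len(sequencers)
    for offset in range(n):
        candidate = sequencers[(slot + offset) % n]
        if candidate not in offline:
            return candidate
    raise RuntimeError("no sequencer available")
```

Even this toy version raises the questions the paragraph above points to: who maintains the `offline` set, and what happens when every actor in the set is unavailable.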

Outside rollups, other blockchain architectures show analogous roles. Tendermint has a proposer that creates a block proposal for consensus. Solana’s whitepaper describes a single elected Leader at any given time, which sequences user messages and orders them within a Proof-of-History stream, while verifiers replay and confirm the result. These are not rollup sequencers in the narrow sense, but they illuminate the design space. The common theme is that systems often want a temporarily privileged actor to put transactions into a concrete order, while a wider protocol constrains or confirms that actor’s behavior.

Research proposals push further. One recent paper proposes a decentralized “arranger” that combines sequencing and data-availability-committee functions using set consensus. The details vary, and such proposals come with serious implementation and trust-model questions, but they point to the real challenge: decentralizing sequencing is not only about leader rotation. It is also about preserving data availability, auditability, and predictable recovery when no single operator is in charge.

Sequencer vs. prover, verifier, aggregator, and proposer: what’s the difference?

This topic is often confusing because several neighboring roles touch the same transaction flow.

A sequencer decides order and usually executes transactions into a tentative next state. A prover generates a proof that the state transition was valid in a ZK system. A verifier is typically an on-chain contract or protocol component that checks that proof. An aggregator usually bundles transactions, state transitions, proofs, or data for submission, but does not necessarily control ordering itself. A proposer in an L1 consensus system is the actor currently entitled to put forward a block for the validator set to accept or reject.

In practice these roles can be collapsed into one organization or software stack, or separated across components. The OP Stack interop spec even notes that “block builder” and “sequencer” are used interchangeably for the document’s purposes, while acknowledging they need not be the same entity in practice. That caveat matters. As systems mature, what looks like one role at a high level often splits into specialized actors with different incentives.

What trust and infrastructure assumptions do sequencers rely on?

The most important assumption is not “the sequencer is honest.” It is more precise: the protocol can tolerate whatever the sequencer is allowed to do before stronger checks catch up.

If the sequencer can only delay but not indefinitely suppress transactions, then users face a liveness risk bounded by protocol rules. If the sequencer can preconfirm transactions that later disappear, then users face a settlement-risk window. If the sequencer can reorder transactions freely, then MEV and fairness concerns become central. If the sequencer relies on remote RPCs or weakly confirmed messages for cross-chain execution, then validity risk now depends on the freshness and honesty of those inputs.
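The first of those cases, delay without indefinite suppression, is usually enforced by a rule of the form "anything the user submitted directly to the base layer must be included within a fixed window." A minimal sketch of that check follows; the queue shape, the parameter names, and the block-count window are assumptions, not any specific protocol's derivation rule.

```python
def overdue_transactions(l1_queue: dict[str, int], sequenced: set[str],
                         current_l1_block: int, max_delay_blocks: int) -> list[str]:
    # l1_queue maps tx id -> L1 block at which the user submitted it directly.
    # Anything the sequencer has ignored for max_delay_blocks must now be included.
    return sorted(
        tx_id
        for tx_id, submitted_at in l1_queue.items()
        if tx_id not in sequenced
        and current_l1_block - submitted_at >= max_delay_blocks
    )
```

The size of `max_delay_blocks` is the user's worst-case censorship delay: the sequencer can stall a transaction for that long, but not longer.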

The OP Stack interop spec surfaces one particularly concrete assumption: a block builder may need to fully execute a transaction and validate any executing messages it produces before even knowing the transaction is valid. That creates a denial-of-service possibility for the builder. The reason is structural. When validity depends on effects discovered only during execution, the builder must spend work on transactions that may ultimately fail protocol checks. Fast ordering in complex environments therefore creates not only trust tradeoffs, but also resource-exhaustion risks.
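One common style of mitigation is to cap how much work a builder will waste on any single sender. The sketch below is an assumed policy, not something the OP Stack spec prescribes: the `execute` callback, the transaction shape, and the per-sender budget are all hypothetical.

```python
from collections import defaultdict


def build_with_budget(txs: list, execute, waste_budget: int):
    """execute(tx) -> (is_valid, gas_used).

    Senders that burn too much gas on invalid transactions are skipped
    for the rest of this build.
    """
    wasted = defaultdict(int)
    included, skipped = [], []
    for tx in txs:
        if wasted[tx["sender"]] >= waste_budget:
            skipped.append(tx)  # not executed: no further work is spent
            continue
        is_valid, gas_used = execute(tx)  # validity is only known after execution
        if is_valid:
            included.append(tx)
        else:
            wasted[tx["sender"]] += gas_used
    return included, skipped
```

The trade-off is visible in the code: a budget bounds the wasted work, but it can also cause a later valid transaction from the same sender to be skipped.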

Data availability is another hidden dependency. A sequencer can produce an excellent local ordering service, but if transaction data is not made available, the rest of the ecosystem cannot reliably reconstruct or verify the state transition. This is why DA layers matter so much in modular rollup designs. They are not a storage convenience. They are part of the trust story that makes sequencing accountable.

Conclusion

A sequencer is the fast-ordering engine of a rollup or similar scaling system. It exists because users want immediate, low-latency inclusion, while the protocol still needs slower mechanisms for publication, verification, proof, or dispute resolution.

The essential trade is easy to remember: sequencers buy speed by concentrating the right to order. Everything interesting follows from that. It improves user experience, but creates censorship risk, MEV power, operational choke points, and more complicated cross-chain trust assumptions. As scaling systems evolve, much of their architecture is really an attempt to answer one question: how much of sequencing’s speed can we keep while taking back some of the neutrality and resilience that concentration gives up?

How does the sequencer affect real‑world usage?

Sequencers determine how quickly a rollup reports a transaction as "accepted" and how much settlement risk that acceptance carries. That affects when you should fund, trade, or move large balances on a rollup; use Cube Exchange to fund and trade while applying these operational checks.

- Read the chain and rollup docs to identify the sequencer model (single operator, rotating set, or shared sequencer) and the data-availability and finality guarantees the project cites.

- Note the stated settlement window and confirmation types (preconfirmation vs. L1 proof). For large trades or withdrawals, wait for the stronger DA/proof finality the project lists.

- Fund your Cube account via fiat or supported crypto, open the market for the asset on the target rollup, and use limit orders and slippage controls to reduce exposure to reordering or MEV.

- For cross-chain moves, check the bridge and destination chain's required confirmations and whether they accept preconfirmed messages; only move or rely on funds after the chain-specific confirmations you verified are met.

Frequently Asked Questions

How is sequencer confirmation different from finality?

A sequencer’s acceptance is a fast, local inclusion signal (a preconfirmation). Final settlement arrives later, when the ordered data is published, challenged or proven, and verified on the base layer, so sequencer confirmation is not the same as protocol finality.

Why do many rollups start with a centralized sequencer?

Because a single operator eliminates inter-party coordination, a lone sequencer minimizes latency and simplifies operations and product iteration; the tradeoff is concentration of censorship, outage, and MEV risk that comes from having one actor control ordering.

How does sequencing create MEV and economic incentives?

Ordering controls who goes first on path-dependent outcomes (liquidations, arbitrage, AMM price changes), so whoever sequences can capture value by including, excluding, or reordering transactions. This ordering power is the source of MEV and hence of economic power for sequencers.

How do cross-chain messages increase sequencer risk?

When a sequencer consumes messages from other chains it must judge the remote messages’ confirmation safety; trusting low‑confirmation (preconfirmed) remote messages reduces latency but imports reorg and validity risk recursively across chains, per the OP Stack interop guidance.

How can sequencers be decentralized and what are the trade‑offs?

Decentralizing sequencing requires reintroducing distributed agreement while keeping low latency; practical approaches include rotating a predefined set of sequencers or research designs (arrangers, DACs, set consensus), but each adds coordination, DA and trust-model complexity and may widen the attack surface or reduce throughput.

What trust and infrastructure assumptions do sequencers rely on?

A sequencer depends on data availability (so others can reconstruct and verify batches), the protocol’s allowance for preconfirmation behavior (i.e., what it can do before stronger checks catch up), and reliable inputs for cross-chain data; these dependencies determine the system’s liveness, settlement-risk window, and denial-of-service exposure.

What are shared sequencers and how do they affect cross‑chain composability?

A shared sequencer can reduce cross-chain timing uncertainty and enable more synchronous composability by building coordinated blocks for multiple chains, but it concentrates censorship, MEV, and outage risk across those chains and does not eliminate all coordination or trust trade-offs.
