What is Bridge Risk?

Learn what bridge risk is, how cross-chain bridges fail, and why verification, custody design, and finality assumptions determine bridge security.

Introduction

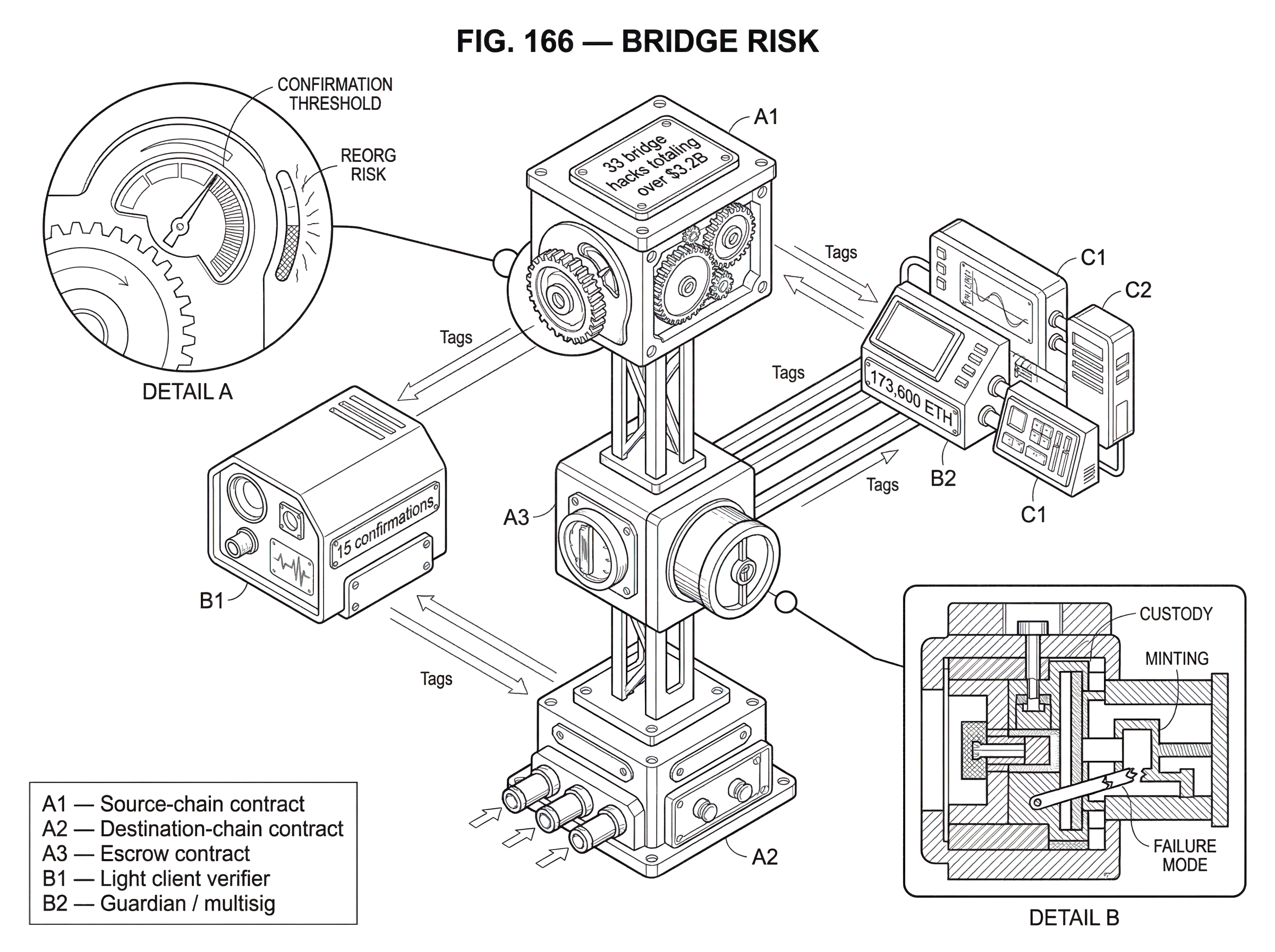

Bridge risk is the risk that a system connecting two blockchains fails in a way that causes loss, insolvency, censorship, or incorrect cross-chain state. That sounds abstract until you notice what a bridge is trying to do: make one chain act on facts that originated somewhere else, even though blockchains do not natively share state. The difficulty is not merely moving data from chain A to chain B. The difficulty is convincing chain B that the data from chain A is real, final, and authorized, without introducing a new weak point that is easier to attack than either chain on its own.

That is why bridge risk is so persistent. A bridge is not just an app sitting on top of a blockchain. It is a machine for translating trust between systems with different consensus rules, finality models, execution environments, and operational assumptions. If that translation is wrong, the bridge can mint unbacked assets, release locked collateral, accept messages from transactions that later disappear in a reorganization, or simply stop functioning when its relayers or signers fail. The bridge becomes a place where value accumulates and assumptions concentrate.

This matters because bridges are useful precisely where the stakes are high. They let users move assets across ecosystems, let applications trigger actions on other chains, and let liquidity and state escape the boundaries of a single network. Ethereum’s own documentation frames bridges as necessary because blockchains are isolated and cannot natively communicate or move tokens between each other. But the moment you ask one chain to trust evidence from another, you inherit a new security problem: how is that evidence verified, and under what assumptions?

The shortest way to understand bridge risk is this: a bridge is only as safe as its verification method, its custody design, and the finality assumptions it makes about the chains it connects. Everything else follows from that.

Why do cross-chain bridges introduce unique security risks?

A normal token transfer inside one chain is protected by that chain’s own consensus. If a payment is valid, the chain’s validators or miners agree on it, and the same system that updates balances is the system that verifies the payment. A bridge breaks that neat unity.

The source chain records some event (perhaps tokens were locked or burned, or a message was emitted), but the destination chain cannot see that event directly. It needs a proof, an attestation, or a signed statement brought in from outside.

Here is the mechanism behind the risk. Suppose a user locks 10 ETH on one chain and wants 10 units of a corresponding asset on another chain. The destination chain cannot independently inspect Ethereum’s state unless the bridge gives it a way to do so. So the bridge must choose some verification path. It might trust a custodian to say the lock happened. It might trust a committee of signers. It might verify a cryptographic proof against a light client. It might combine an oracle with a relayer and rely on their independence. But in every case, the bridge is creating a rule that says, in effect, “if this evidence appears, then release or mint value here.”

That rule is the real asset. If an attacker can forge the evidence, compromise the signers, exploit the proof verifier, or exploit a mistaken assumption about finality, they do not need to break the source chain itself. They only need to make the bridge believe something false. Once the bridge accepts the false claim, it may mint wrapped tokens with no backing, unlock escrow it should not unlock, or execute a privileged message on the destination chain.

This is why bridge risk is not just another version of ordinary application risk. It combines at least three layers at once. There is the verification layer, where the bridge decides whether a source-chain event is real. There is the asset backing layer, where the bridge decides how value is represented across chains. And there is the operational layer, where relayers, validators, committees, APIs, key management, monitoring, and upgrade controls keep the whole system running. Many of the worst failures happen not because every layer is weak, but because a bridge is only as strong as the weakest of the three.

What is the core security invariant for bridges (one claim, one backing, one final event)?

The central security invariant for a bridge is simple to state: the destination chain should only create or release value if a corresponding source-chain event really happened and is final. A security survey of blockchain interoperability makes this precise by defining a valid cross-chain event as one whose metadata is correct and whose local transactions are final. That captures the heart of the problem. It is not enough that a transfer appeared to happen. It must be the right transfer, with the right parameters, and it must not later disappear.

This invariant sounds obvious, but almost every bridge exploit is some way of violating it. Sometimes the violation is direct: the bridge accepts a forged message and mints assets out of thin air. Sometimes it is indirect: the bridge’s escrow is drained even though no matching burn or release should have occurred. Sometimes the event really did happen, but the bridge treated it as final too early, and a source-chain reorganization later erased it. Sometimes the event was valid, but the bridge’s custody or accounting logic no longer guarantees that each wrapped asset is actually backed one-for-one.

A worked example helps. Imagine a bridge that uses the classic lock-and-mint pattern. Alice locks 100 tokens on chain A. The bridge contract on chain A now holds those 100 tokens in escrow. On chain B, the bridge mints 100 wrapped tokens for Alice. If Alice later wants to go back, she burns the wrapped tokens on chain B, and the bridge releases the original 100 tokens from escrow on chain A. In the happy path, the mechanism works because the wrapped asset on chain B is a claim on the escrow on chain A.

Now notice where the risk lives. If the escrow on chain A is stolen, the wrapped tokens on chain B are no longer fully backed. If the mint on chain B can be triggered by fake evidence, new wrapped tokens can appear without new escrow, making the system insolvent. If a burn on chain B is reported incorrectly, chain A might release funds that should still remain locked. And if chain B accepts the lock event before chain A has truly finalized it, Alice could receive wrapped tokens on B and then see the original lock on A reversed by a reorg. The bridge would have created value against an event that no longer exists.
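The lock-and-mint example can be sketched as a toy ledger. This is a minimal illustration, not any real bridge's accounting: the class and method names are hypothetical, both chains are collapsed into one Python object, and the only thing it models is the backing invariant that outstanding wrapped tokens must never exceed escrow.

```python
from dataclasses import dataclass


@dataclass
class LockAndMintBridge:
    """Toy model of a lock-and-mint bridge. All names are hypothetical."""

    escrow_on_a: int = 0   # native tokens held in escrow on chain A
    wrapped_on_b: int = 0  # wrapped tokens outstanding on chain B

    def lock_and_mint(self, amount: int) -> None:
        # Happy path: every mint on B is matched by a lock on A.
        self.escrow_on_a += amount
        self.wrapped_on_b += amount

    def burn_and_release(self, amount: int) -> None:
        # Happy path: every release on A is matched by a burn on B.
        if amount > self.wrapped_on_b:
            raise ValueError("cannot burn more than the outstanding supply")
        self.wrapped_on_b -= amount
        self.escrow_on_a -= amount

    def mint_without_lock(self, amount: int) -> None:
        # Failure mode: forged evidence triggers a mint with no new escrow.
        self.wrapped_on_b += amount

    def drain_escrow(self, amount: int) -> None:
        # Failure mode: escrow on chain A is stolen.
        self.escrow_on_a -= min(amount, self.escrow_on_a)

    def is_solvent(self) -> bool:
        # Invariant: outstanding claims never exceed their backing.
        return self.wrapped_on_b <= self.escrow_on_a


bridge = LockAndMintBridge()
bridge.lock_and_mint(100)       # Alice locks 100 on A and receives 100 on B
solvent_after_lock = bridge.is_solvent()

bridge.mint_without_lock(50)    # attacker mints 50 unbacked wrapped tokens
solvent_after_forged_mint = bridge.is_solvent()
```

In this sketch the happy path keeps the invariant, while either failure method breaks it without touching chain A's consensus at all.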

That is why bridge risk is inseparable from bridged asset risk. A bridged token is not just “the same asset on another chain.” It is a contingent claim whose solvency depends on the bridge’s invariant continuing to hold.

How do bridge verification models determine who is trusted?

| Model | Who decides | Main failure mode | Typical mitigation | Example |
|---|---|---|---|---|
| Custodial | Operator / custodian | Counterparty theft or censorship | Audits, insurance, legal recourse | wBTC custodian model |
| Multisig / Guardian | Signer committee | Key compromise or collusion | More signers, HSMs, rotation | Wormhole / Ronin style |
| Oracle + Relayer | Oracle and Relayer | Collusion between parties | Operator independence, vendor diversity | LayerZero pattern |
| Attestation service | Single attester service | Attester mis‑signing or wrong finality | Timelocks, rate limits, audits | Circle CCTP Iris |
| Light client | On‑chain light client | Client bugs or proof incompatibility | Formal verification, conservative checks | Cosmos IBC |

When people describe bridges as “trusted” or “trustless,” they are usually pointing at the verification model. Ethereum’s bridge documentation uses a helpful first cut: trusted bridges rely on a central entity or system, while trust-minimized bridges rely more heavily on smart contracts and algorithms. In practice, most real systems live somewhere between those poles. The useful question is not whether a bridge uses the word decentralized. The useful question is: who can cause the destination chain to accept a false cross-chain claim?

In a custodial or highly trusted bridge, the answer may simply be the operator. A classic example is a system where a custodian holds native assets on one chain and issues representations on another. This can be operationally simple, but it introduces direct counterparty risk. The operator can censor withdrawals, lose keys, be compromised, or misappropriate collateral. Here the mechanism is straightforward: users are not mostly relying on cryptographic verification of the source chain; they are relying on an institution’s custody and honesty.

Committee- or multisig-based bridges move a step away from single-operator custody, but not all the way to chain-native verification. Wormhole, for example, describes a model where an independent network of Guardians observes source-chain messages and signs attestations called VAAs, which are then verified on the destination chain. Mechanically, this means the destination chain is not verifying source consensus directly. It is verifying that a sufficient guardian authority approved the message. The security question therefore becomes: how hard is it to compromise, collude with, or bypass the guardian set and its signing flow?

The Ronin exploit is an unusually clear illustration of this model’s failure mode. According to Sky Mavis’s postmortem, the attacker obtained control of five out of nine validator private keys and used that control to forge withdrawals, draining 173,600 ETH and 25.5 million USDC from the bridge. The root cause was not a break in Ethereum’s consensus or some impossibility of cross-chain transfer in principle. It was that bridge authority had been concentrated in a signer set small enough, and operationally exposed enough, that compromising keys let the attacker manufacture valid-looking bridge actions. The lesson is mechanical: if a bridge’s truth comes from signatures, key compromise becomes equivalent to reality manipulation.
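The mechanics of a signer-threshold check can be sketched in a few lines. This is an illustrative model only: the validator names are made up, real signature verification is replaced by set membership, and the five-of-nine threshold is taken from the key counts described above rather than from any bridge's actual code.

```python
def quorum_reached(approvals: set[str], signer_set: frozenset[str], threshold: int) -> bool:
    """Return True if enough distinct, recognized signers approved.

    Assumption: each entry in `approvals` stands for a signature that has
    already been cryptographically verified for that signer.
    """
    recognized = approvals & signer_set  # ignore unknown signers
    return len(recognized) >= threshold


# Nine hypothetical validators with a five-signature threshold.
SIGNERS = frozenset(f"validator-{i}" for i in range(1, 10))
THRESHOLD = 5

# An attacker holding five keys produces approvals the bridge cannot
# distinguish from an honest quorum.
stolen_keys = {f"validator-{i}" for i in range(1, 6)}
forged_withdrawal_accepted = quorum_reached(stolen_keys, SIGNERS, THRESHOLD)

# Four keys are not enough, and outsiders do not count toward the quorum.
four_keys = {f"validator-{i}" for i in range(1, 5)}
four_keys_accepted = quorum_reached(four_keys | {"outsider"}, SIGNERS, THRESHOLD)
```

The check itself is correct in both cases. What it cannot tell is who is holding the keys, which is why key custody carries the security of the whole design.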

Oracle-and-relayer designs split authority differently. LayerZero’s whitepaper describes a model where an Oracle provides a block header from the source chain and a Relayer provides the transaction proof; delivery is valid only if the two match, and the key assumption is that Oracle and Relayer do not collude. This is an important design move because it tries to avoid trusting any single off-chain actor. But the security guarantee is conditional. If the Oracle and Relayer are truly independent, forging a message is much harder. If they can collude, the model collapses. So the bridge risk is concentrated not in a single signer key, but in the credibility of operational independence between the two providers.
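The matching rule can be illustrated with a simplified Merkle check. This is a sketch under loud assumptions, not LayerZero's implementation: the header is reduced to a single SHA-256 Merkle root, the block holds two made-up transactions, and the function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def root_from_proof(leaf: bytes, proof: list[tuple[bytes, str]]) -> bytes:
    """Fold a leaf up to a root.

    Each proof step is (sibling_hash, side), where side says whether the
    sibling sits to the left ("L") or right ("R") of the current node.
    """
    node = h(leaf)
    for sibling, side in proof:
        node = h(sibling + node) if side == "L" else h(node + sibling)
    return node


def validate_delivery(oracle_root: bytes, tx: bytes, relayer_proof: list[tuple[bytes, str]]) -> bool:
    # Delivery is valid only if the relayer's proof reproduces the oracle's root.
    return root_from_proof(tx, relayer_proof) == oracle_root


# A source block with two transactions.
tx_lock, tx_other = b"lock 10 ETH for alice", b"unrelated transfer"
honest_root = h(h(tx_lock) + h(tx_other))
proof_for_first_leaf = [(h(tx_other), "R")]

honest_ok = validate_delivery(honest_root, tx_lock, proof_for_first_leaf)

# A dishonest relayer alone cannot pass a forged transaction.
tx_forged = b"lock 1000 ETH for mallory"
relayer_alone_ok = validate_delivery(honest_root, tx_forged, proof_for_first_leaf)

# If the oracle colludes and supplies a matching fake root, the check passes.
colluding_root = h(h(tx_forged) + h(tx_other))
collusion_ok = validate_delivery(colluding_root, tx_forged, proof_for_first_leaf)
```

The last case is the conditional guarantee in miniature: the check only compares the two inputs with each other, so it is exactly as strong as the independence of the parties supplying them.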

Attestation systems make another tradeoff. Circle’s CCTP uses on-chain message emission, off-chain attestation by Circle’s Iris service after sufficient confirmations, and on-chain receipt on the destination chain. This avoids the wrapped-asset escrow model for USDC by burning on the source domain and minting on the destination domain, which removes a giant honeypot of locked collateral. But it also makes the attestation service a central trust point. If Iris signs an invalid message, or signs based on insufficient source-chain finality, the destination chain can mint USDC incorrectly. The benefit is that the backing model is cleaner than lock-and-mint. The cost is explicit reliance on Circle’s attestation authority.

At the other end are light-client bridges, which try to verify the source chain with cryptographic proofs rather than trusted signers. The Cosmos IBC specification is organized around client, core, app, and relayer modules, reflecting exactly this idea: the receiving chain maintains a client of the sending chain and verifies packets against it. The major security advantage is conceptual: the bridge is trying to inherit the security of the connected chains rather than replacing it with a committee. But this approach is demanding. It depends on the feasibility of light-client verification, on correct client implementation, and often on fast-finality assumptions that are easier in some ecosystems than others.

So the trust spectrum is not a marketing distinction. It is a map of failure modes.

How do finality assumptions affect bridge safety and speed?

| Finality type | Rollback risk | Typical wait choice | Best for |
|---|---|---|---|
| Deterministic finality | Minimal | Short, bounded wait | High‑value transfers |
| Probabilistic finality | Decreases over time | Many confirmations | Consumer transfers, faster UX |
| Configurable confirmations | Depends on threshold | User or app configurable | Mixed risk applications |
| Low‑latency commitment | Higher | Seconds to minutes | Low‑value, latency‑sensitive flows |

A bridge does not just need evidence that an event happened. It needs evidence that the event will stay happened. That is a finality problem.

Some chains offer stronger or faster notions of finality than others. Some provide deterministic or near-deterministic finality after validator agreement. Others, like Ethereum-style probabilistic systems, reduce reversal risk as more confirmations accumulate but do not make it vanish instantly. A bridge must choose when it treats a source-chain event as stable enough to act on. That choice is always a speed-versus-safety tradeoff.

LayerZero’s whitepaper gives a concrete example: for Ethereum, the Oracle waits for 15 confirmations before sending the block header to the destination chain. The purpose is plain: reduce reorg risk before cross-chain delivery. Circle’s CCTP similarly says Iris signs messages only after sufficient confirmations and defines chain-agnostic finality thresholds it calls Confirmed and Finalized. Solana’s RPC documentation exposes the same issue through commitment levels: processed, confirmed, and finalized, with lower-latency levels carrying more rollback risk.
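A confirmation-depth gate is simple to state in code. The sketch below is illustrative only: the function names are hypothetical, the default of 15 is borrowed from the whitepaper example above, and it assumes the convention that the block containing the event counts as the first confirmation.

```python
def confirmations(event_block: int, head_block: int) -> int:
    """Blocks confirming the event, counting the event's own block as the first."""
    return max(0, head_block - event_block + 1)


def safe_to_relay(event_block: int, head_block: int, required: int = 15) -> bool:
    # Only relay once the event is buried under enough blocks.
    return confirmations(event_block, head_block) >= required


# Event included in block 100.
too_early = safe_to_relay(event_block=100, head_block=113)    # 14 confirmations
deep_enough = safe_to_relay(event_block=100, head_block=114)  # 15 confirmations
```

A depth check like this lowers reorg risk on a probabilistic chain but never removes it, so the threshold is a policy choice rather than a proof of finality.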

The underlying mechanism is the same across ecosystems. If a bridge credits a user on the destination chain before the source-chain event is truly settled, then a later rollback can produce double value. The user may keep the minted or released asset on the destination chain while the original debit, lock, or burn on the source chain disappears. This is why bridge risk is tightly related to both finality and chain reorganization risk.

A smart reader may think, “then just wait longer.” Often that helps, but it is not free. Longer waits degrade user experience and can make bridges commercially unattractive. Some protocols therefore expose configurable thresholds or different handling paths. CCTP lets recipients implement separate handlers for messages received at stronger versus weaker finality thresholds. That is a useful pattern because it acknowledges that finality is not binary at the application level. An app can decide that a low-value transfer may tolerate faster but weaker confirmation, while a treasury movement should wait for stronger assurance.
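The idea of handling weaker and stronger finality differently can be sketched as a small routing policy. Everything here is hypothetical: the enum, the cap, and the handler name are invented for illustration and are not CCTP's interface.

```python
from enum import IntEnum


class Finality(IntEnum):
    """Hypothetical finality levels attached to an incoming message."""

    CONFIRMED = 1  # faster, weaker assurance
    FINALIZED = 2  # slower, stronger assurance


# Hypothetical application policy: the largest amount the app will credit
# before the source event is fully finalized.
MAX_UNFINALIZED_AMOUNT = 1_000


def handle_message(amount: int, finality: Finality) -> str:
    """Return "credit" to act now or "hold" to wait for stronger finality."""
    if finality >= Finality.FINALIZED:
        return "credit"
    if amount <= MAX_UNFINALIZED_AMOUNT:
        return "credit"  # small transfer: accept the residual rollback risk
    return "hold"        # large transfer: wait for the stronger threshold


small_fast = handle_message(200, Finality.CONFIRMED)
treasury_fast = handle_message(5_000_000, Finality.CONFIRMED)
treasury_final = handle_message(5_000_000, Finality.FINALIZED)
```

The cap bounds how much value the application can lose to a rollback of a weakly confirmed event, which is the point of treating finality as a graded input rather than a yes-or-no flag.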

Still, no confirmation policy can fully rescue a design that misunderstands the source chain’s consensus. Finality assumptions are not a tuning knob bolted on afterward. They are part of the bridge’s core security argument.

What common failure patterns make bridges frequent hack targets?

Bridge exploits are not random. They reflect the fact that bridges gather value and complexity in one place.

The ecosystem evidence is stark. Ethereum’s documentation warns that all bridges carry risks including smart contract bugs, software failure, human error, spam, malicious attacks, and problems with the underlying chain. The interoperability security survey reports around $3.1B in losses from cross-chain bridge hacks and a dataset of 33 bridge hacks totaling over $3.2B. It also finds that a substantial share of stolen funds came from projects secured by intermediary permissioned networks with weak cryptographic key operations. That should not be surprising. If the bridge’s security reduces to “protect this set of keys and this verification service,” attackers will target the keys and the service.

The Wormhole exploit shows the code path version of the problem. CertiK’s analysis describes how an attacker exploited a Solana verification flaw involving a spoofed sysvar account and a deprecated helper function, bypassing signature verification and minting about 120,000 wETH. The critical lesson is not “Solana is unsafe” or “Wormhole is uniquely flawed.” The deeper lesson is that bridge verifiers are unusually dangerous code. A bug in an ordinary application might misprice a trade or lock a feature. A bug in a bridge verifier can authorize the creation of assets with market value across ecosystems.

The Ronin exploit shows the operational version. The bridge was drained not by a subtle proof forgery but by validator key compromise, aided by phishing, infrastructure access, and a stale allowlist. Here the bridge failed as an organization as much as it failed as a protocol. Monitoring was weak enough that the theft was discovered days later. That matters because bridge security is not only cryptographic. It is also key management, access control, anomaly detection, upgrade discipline, and incident response.

There is also an economic reason bridges are attractive targets. Many designs, especially lock-and-mint bridges, accumulate large escrow balances on the source chain. The security survey found this model especially risky in practice, noting that several hacks drained source-chain escrow and that these accounted for a very large fraction of losses. This is not mysterious. Locked collateral is visible, concentrated, and often controlled by contracts or authorities whose attack surface is smaller than the chain securing the collateral itself. Bridges can become giant vaults guarded by smaller walls.

How do lock-and-mint, burn-and-mint, message-passing, and light-client bridges differ in failure modes?

It helps to think about bridge risk by asking what the bridge is trying to preserve.

If the bridge preserves value by escrowing assets and issuing claims elsewhere, then the main danger is insolvency. That insolvency may come from stolen escrow, unauthorized minting, accounting bugs, or inability to redeem under stress. Wrapped assets are especially exposed here, because their value depends on a redeemability promise.

If the bridge preserves value by burning on one side and minting on the other, as CCTP does for USDC, then escrow theft is reduced or eliminated, but attestation authority becomes more central. The system no longer needs a giant pool of locked collateral for that asset, but it still needs a trusted process to confirm that a burn really occurred and was final.

If the bridge is mainly message passing, then the risk is not only financial theft in the narrow sense. A forged message can trigger arbitrary downstream actions: releasing funds from another protocol, updating governance state, executing a cross-chain trade, or changing permissions. Chainlink CCIP and Wormhole both emphasize token transfer plus arbitrary messaging because that is what developers actually want. But arbitrary messaging means the blast radius depends on what destination contracts do when they receive a “valid” message. The bridge may be secure enough to move bytes correctly while the receiving application remains dangerously permissive.
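One way a receiving application can narrow that blast radius is to check where a message came from and what it asks for, even after the bridge has marked it valid. The sketch below is an assumption-laden illustration: the message fields, peer addresses, and action names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    """A bridge-delivered message, reduced to hypothetical fields."""

    source_chain: str
    sender: str
    action: str


# Hypothetical application policy: which remote contracts may talk to this
# receiver, and which actions they may trigger.
TRUSTED_PEERS = {("chain-a", "0xVaultOnA")}
ALLOWED_ACTIONS = {"credit_deposit"}


def should_execute(msg: Message) -> bool:
    # The bridge saying "valid" is necessary but not sufficient.
    from_trusted_peer = (msg.source_chain, msg.sender) in TRUSTED_PEERS
    return from_trusted_peer and msg.action in ALLOWED_ACTIONS


expected = should_execute(Message("chain-a", "0xVaultOnA", "credit_deposit"))
unknown_sender = should_execute(Message("chain-a", "0xSomeoneElse", "credit_deposit"))
privileged_action = should_execute(Message("chain-a", "0xVaultOnA", "set_owner"))
```

A permissive receiver would execute all three messages. This one executes only the first, so a correctly delivered message from an unexpected sender cannot reach privileged code paths.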

If the bridge relies on operator sets, guardians, or decentralized oracle networks, then governance and operations become part of the risk model. Chainlink CCIP highlights defense-in-depth features such as multiple oracle networks, rate limiting, and timelocked upgrades. Those are meaningful mitigations because they reduce blast radius and surprise changes. But they do not remove the need to trust the system’s chosen operators, upgrade controls, and configuration management. They make the trust surface more layered, not nonexistent.

And if the bridge aims for light-client verification, it shifts risk toward correctness of proof systems, client maintenance, and compatibility with each chain’s consensus model. This often produces the strongest cryptographic story, but also a narrower implementation envelope and more engineering burden.

So “bridge risk” is not one thing. It is the shape taken by cross-chain failure under a particular design.

How can you reduce bridge risk and limit an exploit's blast radius?

| Mitigation | Addresses | Primary trade-off | When to use |
|---|---|---|---|
| Light‑client verification | Trusted signer concentration | Engineering and gas cost | Chains with compatible proofs |
| Split authority (Oracle/Relayer) | Single operator compromise | Residual collusion risk | When off‑chain relays required |
| Rate limits & timelocks | Large sudden drain or upgrades | Slower emergency operations | High‑value token flows |
| Stronger operational crypto | Key compromise risk | Higher operational cost | Custodial or multisig setups |
| Monitoring & IR playbooks | Undetected breaches | Ongoing ops expense | Any production bridge |

Because bridge risk comes from concentrated assumptions, the best mitigations either remove those assumptions or make mistakes less catastrophic.

The most fundamental mitigation is stronger verification. If a bridge can verify source-chain state with a well-designed light client instead of trusting a signer committee, it removes one of the most common catastrophic failure modes. That is why light-client bridges are often described as mitigating bridge risk relative to multisig or committee designs. But this is not always practical across every chain pair, especially where finality is probabilistic or proof verification is expensive.

When off-chain authorities remain necessary, the next best move is to separate power. LayerZero’s Oracle/Relayer split is an example: the point is not to assert that off-chain actors are perfect, but to avoid giving any one actor enough authority to forge a transfer. That same principle applies more broadly through threshold signatures, independent operators, hardware security modules, and strong key management. The survey evidence that so much stolen value came from permissioned intermediary networks with weak key operations suggests that operational cryptography is not a side issue. It is often the issue.

Another mitigation is to constrain damage when verification fails. CCIP’s rate limiting is a good example of a control that does not prevent every compromise but can cap how much value moves before humans respond. Timelocked upgrades serve a similar purpose for governance risk: they create a review window before security-critical changes take effect. Monitoring large outflows, which Ronin’s postmortem admits was missing, is another example. Good monitoring does not make a bad bridge good, but it can turn a total catastrophe into a contained incident.
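A rate limit on outflows can be sketched as a token bucket. This is a generic illustration of the technique, not a description of CCIP's implementation: the class name, capacity, and refill rate are hypothetical, and time is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass


@dataclass
class OutflowLimiter:
    """Token-bucket cap on value leaving the bridge. Names are hypothetical."""

    capacity: float        # most value that can leave in one burst
    refill_per_sec: float  # value allowance restored per second
    available: float       # allowance currently left
    last_ts: float = 0.0   # time of the last update, in seconds

    def try_withdraw(self, amount: float, now: float) -> bool:
        # Refill for the time elapsed, never above capacity.
        elapsed = max(0.0, now - self.last_ts)
        self.available = min(self.capacity, self.available + elapsed * self.refill_per_sec)
        self.last_ts = now
        if amount > self.available:
            return False  # over the limit: reject (or queue for review)
        self.available -= amount
        return True


limiter = OutflowLimiter(capacity=1000.0, refill_per_sec=1.0, available=1000.0)
first = limiter.try_withdraw(800.0, now=0.0)        # within the burst allowance
second = limiter.try_withdraw(800.0, now=10.0)      # only about 210 available
later = limiter.try_withdraw(800.0, now=2000.0)     # allowance has refilled
```

Even with fully compromised verification, an attacker facing this limiter can move at most the capacity plus the refill rate times the response time, which is what turns monitoring into a usable defense.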

Design choices about asset representation matter too. Burn-and-mint models can remove escrow honeypots for assets whose issuer can support native cross-chain minting. Expiration windows, replay protection through nonces, and idempotent message handling all reduce the chance that stale or duplicate attestations create value twice. CCTP’s unique nonces and expiration block are examples of this class of control.
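Replay and expiry checks can be sketched together. The example below is a minimal illustration under stated assumptions: the class, its fields, and the block-height expiry are hypothetical and do not reproduce CCTP's message format.

```python
class AttestationInbox:
    """Accept each (source_domain, nonce) at most once, and only before expiry."""

    def __init__(self) -> None:
        self._used: set[tuple[str, int]] = set()

    def accept(self, source_domain: str, nonce: int, expires_at_block: int, current_block: int) -> bool:
        if current_block > expires_at_block:
            return False  # stale attestation
        key = (source_domain, nonce)
        if key in self._used:
            return False  # replay of an attestation already processed
        self._used.add(key)
        return True


inbox = AttestationInbox()
first_delivery = inbox.accept("chain-a", nonce=7, expires_at_block=500, current_block=420)
replayed = inbox.accept("chain-a", nonce=7, expires_at_block=500, current_block=421)
expired = inbox.accept("chain-a", nonce=8, expires_at_block=500, current_block=501)
```

Marking the nonce as used before any value is credited is what makes the handler idempotent: delivering the same attestation twice has the same effect as delivering it once.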

For users and integrators, the practical mitigation is to inspect the trust model before looking at the interface. Ask: who signs? who upgrades? what finality threshold is used? is there locked collateral? can the protocol be paused? are there rate limits? what happens if relayers stop? what happens if the source chain reorgs? Those questions reveal more than slogans like “secure” or “trustless.”

Conclusion

Bridge risk is the risk of asking one blockchain to act on another blockchain’s truth. The core challenge is not transport but verification: how does the destination chain know that a source-chain event is real, final, and uniquely authorized?

Once you see that, the rest becomes easier to reason about. Multisig bridges fail like key-management systems. Oracle-and-relayer bridges fail when independence assumptions break. Attestation bridges fail when the attester is wrong or overtrusted. Lock-and-mint bridges fail as escrow systems and wrapped-asset balance sheets. Light-client bridges fail, if they fail, at the level of proof verification and consensus assumptions.

The durable point to remember is this: bridge risk is concentrated trust risk disguised as interoperability. The safer the bridge, the more directly it inherits security from the connected chains, and the less extra trust, custody, and operational fragility it asks users to accept.

How do you move crypto safely between accounts or networks?

Moving crypto safely between accounts or networks starts with verifying the exact token, network, and destination address, then using a controlled transfer path that respects fees and finality. On Cube Exchange, the practical workflow is to fund your account, prepare the destination and network details, and use Cube’s Withdraw/Transfer flow while checking provider-specified confirmation thresholds and fees.

- Fund your Cube account with the token you plan to move via fiat on‑ramp or a direct crypto deposit.

- Open Cube’s Withdraw/Transfer flow, select the token, pick the exact destination network (for example: Ethereum Mainnet vs Arbitrum), and paste the recipient address; verify the token contract/address if available.

- Review the estimated fees, any bridge or transfer-provider notes, and the recommended finality threshold; for Ethereum-style transfers, consider waiting for the provider’s recommended confirmations (often in the low double digits) before treating the transfer as final.

- Submit the transfer, monitor the on-chain transaction or provider status, and only continue related activity after the stated confirmation threshold is reached; if you detect an unexpected address, fee, or delay, pause and contact Cube support.

Frequently Asked Questions

Bridge risk combines three interacting layers: the verification layer (how the destination chain is convinced a source-chain event occurred), the asset-backing layer (how value is represented and reserved across chains), and the operational layer (relayers, signers, key management, monitoring, and upgrades). Failures often happen when the weakest of these three is exploited or misconfigured.

Finality determines when a bridge treats a source-chain event as irreversible, so shorter waits reduce user latency but increase reorg risk; longer waits improve safety but hurt UX. The article and protocols like LayerZero and Circle’s CCTP illustrate this tradeoff (e.g., LayerZero waiting for ~15 confirmations; CCTP exposing discrete confirmed/finalized thresholds).

Light-client bridges try to cryptographically verify source-chain state on the destination chain, which means they inherit the connected chains’ security rather than trusting an off-chain committee; this materially reduces concentrated trust. However, they are engineering- and consensus-model-intensive and can be impractical across probabilistic-finality chains or where proof verification is costly, which is why many real bridges use hybrid designs.

Bridges that base truth on signer sets or multisigs concentrate risk in key material and operator integrity; the Ronin bridge (validator key compromise) and Wormhole (signature/sysvar verification flaws) show attackers either stole keys or bypassed verifier logic to mint or withdraw large sums. These incidents demonstrate that signer compromise or verifier bugs can let attackers manufacture apparently valid cross-chain actions without breaking the source chain itself.

Lock-and-mint designs concentrate risk in escrow (stolen escrows make wrapped tokens undercollateralized), while burn-and-mint designs reduce escrow honeypots but shift trust to the attestation authority that proves burns; message-passing bridges carry additional risk because forged messages can trigger arbitrary actions on destination contracts. Each design changes the primary failure mode from insolvency to attestation or arbitrary-execution risk.

Effective mitigations either remove concentrated trust (e.g., sound light clients) or limit blast radius when trust fails: split authorities (Oracle/Relayer), threshold signatures and HSM-backed key ops, rate limits, timelocked upgrades, expiration/nonces for attestations, and robust monitoring for large outflows. These are explicitly recommended in the article and echoed by protocols (e.g., LayerZero's Oracle/Relayer split, CCIP rate-limiting, and CCTP nonces/expiration).

Before using a bridge, inspect its trust model rather than marketing labels: ask who signs attestations, who can upgrade contracts, what finality thresholds are enforced, whether collateral is escrowed, whether rate limits or timelocks exist, and how monitoring and recovery are organized. The article lists these exact diagnostic questions as the practical mitigation for users and integrators.
