What is Layer 1?

Learn what Layer 1 means in blockchain scaling: the base chain that provides consensus, settlement, and data availability for secure verification.

Introduction

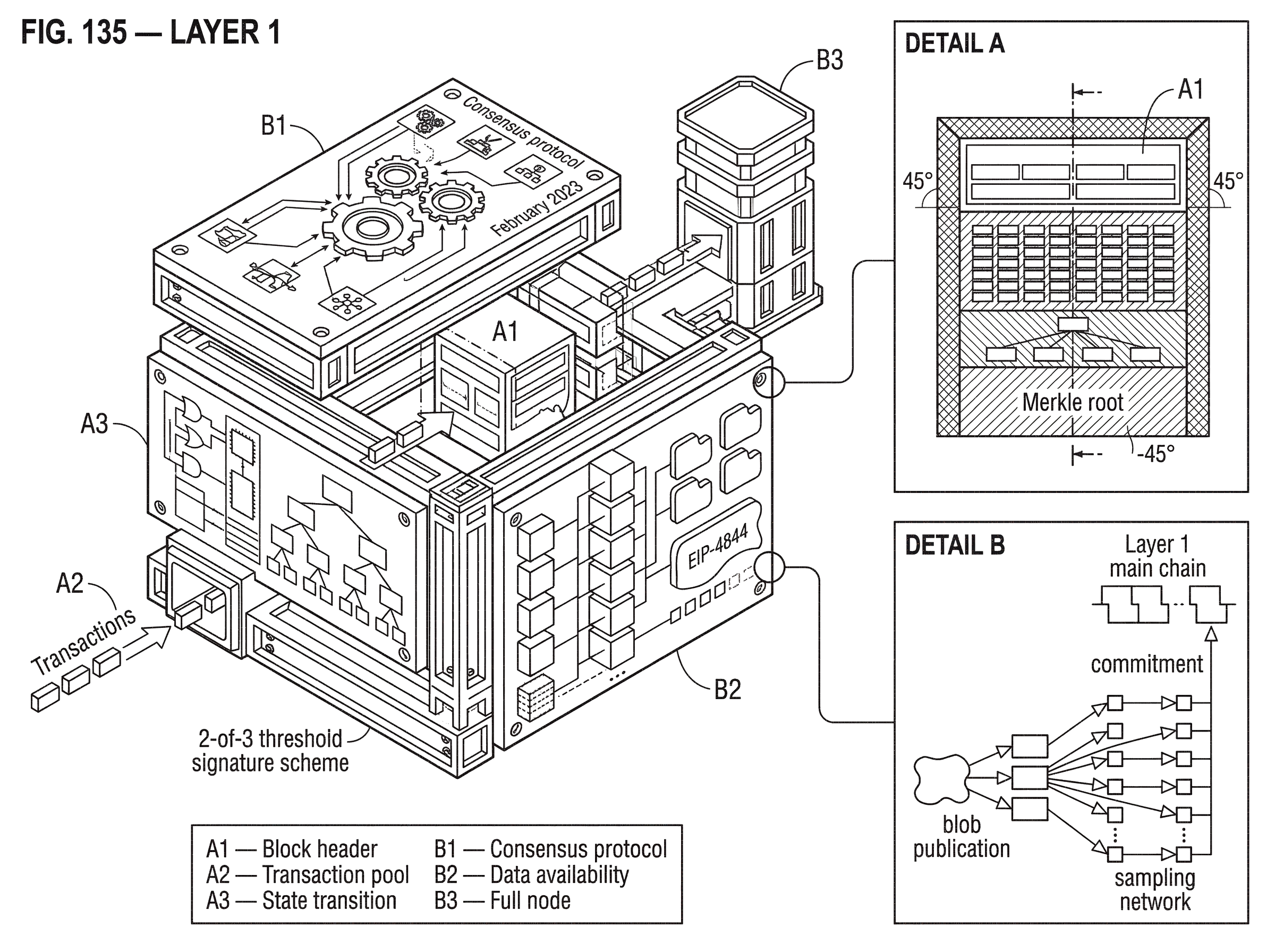

Layer 1 is the base blockchain itself: the network, consensus rules, and state machine that determine which transactions are valid and in what order they become part of shared history. When people talk about scaling crypto systems, Layer 1 matters because it is the place where security, final settlement, and data publication ultimately have to become real rather than merely promised.

That framing helps resolve a common confusion. People often use “blockchain” and “Layer 1” as if they were exact synonyms. They overlap, but they are not identical ideas. Blockchain names a general kind of system. Layer 1 names the role a blockchain plays in a layered architecture. Bitcoin can be described as a blockchain; it can also be described as a Layer 1 because other systems can anchor to it. Ethereum is a blockchain, but in scaling discussions it is specifically the Layer 1 that rollups post data to and settle on. The term becomes useful precisely when there is something above the base layer.

The puzzle is this: if Layer 1 is the foundation, why not just make it arbitrarily fast and cheap so no other layers are needed? The answer is that a blockchain is not only a computer. It is a computer that many independent parties must be able to verify. Every increase in throughput changes the burden on validators, full nodes, network links, storage, and block propagation. If those burdens rise too far, fewer people can participate directly, and the system becomes easier to centralize. So the problem Layer 1 is solving is not merely “process transactions.” It is to maintain a shared ledger that stays secure and broadly verifiable under adversarial conditions.

What are the core responsibilities of a Layer 1 blockchain?

From first principles, a Layer 1 does three things at once.

It defines a state transition function: given the current state and a set of valid transactions, what is the next state? On Bitcoin this is largely about UTXOs and signatures. On Ethereum it is broader: balances, contract storage, and arbitrary smart-contract execution all change state. On Polkadot parachains, the runtime itself is the chain’s business logic, compiled into deterministic code. Different chains package the state machine differently, but the underlying question is the same: if everyone replays the same inputs under the same rules, do they get the same result?
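
The replay question can be made concrete with a minimal sketch of an account-based state transition function. The `Tx` fields, the nonce rule, and the dictionary layout are illustrative assumptions rather than any real chain's format, and signature checks are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    recipient: str
    amount: int
    nonce: int  # hypothetical replay-protection counter


def apply_tx(state: dict, tx: Tx) -> dict:
    """Return the next state, or raise ValueError if the transaction is invalid."""
    balances = dict(state["balances"])
    nonces = dict(state["nonces"])
    if tx.amount <= 0:
        raise ValueError("amount must be positive")
    if nonces.get(tx.sender, 0) != tx.nonce:
        raise ValueError("unexpected nonce")
    if balances.get(tx.sender, 0) < tx.amount:
        raise ValueError("insufficient balance")
    balances[tx.sender] = balances[tx.sender] - tx.amount
    balances[tx.recipient] = balances.get(tx.recipient, 0) + tx.amount
    nonces[tx.sender] = tx.nonce + 1
    return {"balances": balances, "nonces": nonces}


def apply_block(state: dict, txs: list) -> dict:
    """Apply transactions in order; the input state is never mutated."""
    for tx in txs:
        state = apply_tx(state, tx)
    return state


genesis = {"balances": {"alice": 10}, "nonces": {}}
block = [Tx("alice", "bob", 4, 0), Tx("bob", "carol", 1, 0)]

# Two independent nodes replaying the same inputs reach the same state.
node_a = apply_block(genesis, block)
node_b = apply_block(genesis, block)
```

Because `apply_tx` depends only on its arguments, any node that replays the same ordered transactions from the same starting state arrives at the same result, which is the property the paragraph above describes.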

It also defines consensus: who gets to propose blocks, how the network agrees on one history, and what “final” means. Some Layer 1s use Nakamoto-style longest-chain logic with probabilistic finality. Others use validator voting systems that aim for faster and more explicit finality. This distinction is not cosmetic. A system with probabilistic finality asks users and applications to wait for confidence to accumulate. A system with near-instant finality pushes more work into the validator protocol itself. The design choice affects latency, reorg risk, bridge design, and the cost of independently verifying the chain.
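
To see what "waiting for confidence to accumulate" means numerically, the following sketch evaluates the attacker catch-up probability from the Bitcoin whitepaper's calculations section. It assumes that simplified model (an attacker with a fixed share `q` of block production racing an honest chain that is `z` blocks ahead); the function name is ours.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with share q ever catches up from z blocks behind."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker always catches up in this model
    lam = z * (q / p)  # expected attacker progress while z honest blocks are mined
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


# Confidence grows with each additional confirmation.
for confirmations in (0, 1, 3, 6):
    print(confirmations, attacker_success_probability(0.10, confirmations))
```

Under this model the reversal probability falls roughly exponentially with the number of confirmations, which is why probabilistic-finality chains express safety as a confirmation count rather than a single final event.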

Finally, a Layer 1 provides data availability. This is less intuitive than execution or consensus, but it is foundational. A block is only meaningful if the data needed to verify it has actually been published and can be downloaded. If a producer could convince the network to accept a block without making its contents available, other participants could not check the state transition for themselves. In that world, the chain would stop being verifiable by ordinary participants and become something closer to “trust the producer.”

That last point is why Layer 1 is more than a settlement stamp. It is the place where the network commits not just to results but, in many designs, to enough underlying data that others can audit those results. This is especially important once Layer 2 systems enter the picture, because many L2s depend on L1 not only for final settlement but for publishing the data that lets anyone reconstruct or challenge off-chain execution.

Why is scaling a Layer 1 blockchain difficult?

| Scaling option | Node burden | Decentralization effect | Failure mode | When acceptable |
|---|---|---|---|---|
| Larger blocks | Higher storage and bandwidth | Raises barrier to entry | Propagation delays and forks | Permissioned or small networks |
| Faster block rate | Higher latency sensitivity | More frequent temporary forks | Consensus stalls and reorgs | Low‑latency trusted setups |
| Fewer validators | Lower per‑node cost | Reduces decentralization | Collusion or governance capture | Trusted operator models |
| Specialized hardware | Higher hardware requirements | Centralizes block production | Single‑operator failures | Performance‑critical private chains |

The central constraint on Layer 1 is simple to state: every node that fully verifies the chain must be able to keep up. Everything else follows from that.

Suppose a chain doubles its block size or block frequency. In the abstract, that sounds like more throughput. Mechanically, it means more bytes must move across the network, more data must be stored, and more computation must be performed quickly enough that honest nodes do not fall behind. Larger or faster blocks also have to propagate through the network before the next round of consensus, or else nodes begin to disagree about the latest tip more often. What looks like a pure throughput increase is really a coordinated increase in bandwidth, latency sensitivity, storage growth, and hardware requirements.
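
The arithmetic behind that paragraph is simple enough to sketch. The block size, interval, and link speed below are made-up numbers chosen only to show the scaling, and the model is a lower bound that ignores gossip overhead and execution cost.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def node_load(block_bytes: int, block_interval_s: float) -> dict:
    """Lower-bound load: every full node receives and stores each block once."""
    bytes_per_second = block_bytes / block_interval_s
    return {
        "bandwidth_bytes_per_s": bytes_per_second,
        "storage_bytes_per_year": bytes_per_second * SECONDS_PER_YEAR,
    }


def propagation_share(block_bytes: int, link_bytes_per_s: float,
                      block_interval_s: float) -> float:
    """Fraction of the block interval spent sending one block over one link."""
    return (block_bytes / link_bytes_per_s) / block_interval_s


# Hypothetical baseline: 1 MB blocks every 10 seconds.
baseline = node_load(block_bytes=1_000_000, block_interval_s=10.0)
bigger = node_load(block_bytes=2_000_000, block_interval_s=10.0)
faster = node_load(block_bytes=1_000_000, block_interval_s=5.0)
```

Doubling the block size and halving the block interval produce the same sustained bandwidth and storage growth. Both also double the share of each interval consumed by a single network hop, which is where the higher fork rate comes from.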

This is why scaling discussions are always tied to tradeoffs around decentralization and security. Ethereum’s developer documentation states the goal directly: increase transaction speed and throughput without sacrificing decentralization or security. That is the hard part. If you are willing to reduce the number of validators, require expensive hardware, or let a small operator set dominate block production, scaling gets easier. If you want many independent participants to remain able to validate the chain, the design space becomes much tighter.

A concrete failure mode makes this vivid. In Solana’s February 2023 outage, the problem was not that the cryptography stopped working. The network suffered severe degradation in block finalization because the block-propagation layer became congested. Solana propagates blocks as smaller units called shreds, including recovery shreds generated with Reed-Solomon coding. In this incident, an abnormally large block and failures in deduplication behavior among forwarding services created propagation loops that overwhelmed the network. The lesson is not “high-throughput chains are impossible.” It is more precise: Layer 1 throughput is inseparable from networking and propagation mechanics. A blockchain can fail to scale not only in consensus theory, but in the messy details of getting bytes reliably from producer to verifier fast enough.

How does Layer 1 create trust among untrusted participants?

A useful way to think about Layer 1 is as a machine for converting local checks into global agreement.

Each full node does not need to trust a block producer personally. It checks signatures, transaction rules, state transitions, and consensus evidence against the protocol’s rules. If the block passes, the node accepts it as part of the chain. If enough independent nodes do this under the same rules, the system produces a shared history without requiring a central operator. The invariant is that validation is public and rule-based. Anyone with the software and sufficient resources can, in principle, verify the chain from genesis or from a trusted checkpoint.

Here is a simple worked example in prose. Imagine Alice sends tokens to Bob on a smart-contract chain. She signs a transaction and broadcasts it. Validators or miners see the transaction, check that Alice is authorized to spend those funds, and include it in a candidate block. Other nodes receive the block and do not ask whether they trust the proposer’s intentions; they ask whether the transaction was valid under the chain’s rules and whether the block itself satisfies consensus conditions. If the answer is yes, the chain’s state updates so that Bob now controls the tokens. What made the update credible was not the existence of a database entry. It was the combination of published data, deterministic validation, and consensus over ordering.
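
The same example can be sketched from the receiving node's point of view. The block layout and hashing scheme here are simplified assumptions for illustration; signature verification and consensus evidence are omitted.

```python
import hashlib
import json


def digest(obj) -> str:
    """Deterministic SHA-256 over a JSON encoding (illustrative, not a real wire format)."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def make_block(parent_hash: str, txs: list) -> dict:
    header = {"parent": parent_hash, "tx_root": digest(txs)}
    return {"header": header, "txs": txs, "hash": digest(header)}


def validate_block(block: dict, tip_hash: str, balances: dict) -> dict:
    """Check the block against local rules and return the updated balances."""
    header = block["header"]
    if header["parent"] != tip_hash:
        raise ValueError("block does not extend the local tip")
    if header["tx_root"] != digest(block["txs"]):
        raise ValueError("published transactions do not match the header")
    if block["hash"] != digest(header):
        raise ValueError("block hash mismatch")
    new_balances = dict(balances)
    for tx in block["txs"]:
        if tx["amount"] <= 0 or new_balances.get(tx["from"], 0) < tx["amount"]:
            raise ValueError("invalid transfer")
        new_balances[tx["from"]] -= tx["amount"]
        new_balances[tx["to"]] = new_balances.get(tx["to"], 0) + tx["amount"]
    return new_balances


GENESIS_HASH = "00" * 32  # placeholder tip for the example
block = make_block(GENESIS_HASH, [{"from": "alice", "to": "bob", "amount": 5}])
state = validate_block(block, GENESIS_HASH, {"alice": 8})
```

Nothing in `validate_block` asks who produced the block. The node accepts it only because the published transactions match the header, the header extends the node's own tip, and every transfer passes the rules.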

That same mechanism supports much more complex activity. A decentralized exchange on Ethereum, a Cosmos chain sending IBC packets, or a Polkadot parachain relying on relay-chain security all still bottom out in a Layer 1 process that says: these bytes were published, these rules were applied, and this resulting state is the canonical one.

How do Layer 1 and Layer 2 interact, and what security do Layer 2s inherit?

| L2 type | Data on L1 | Security model | Throughput | Best for |
|---|---|---|---|---|
| Optimistic rollup | Data posted on L1 | Fraud proofs | Medium throughput | Broad dApp scaling |
| ZK rollup | Data posted on L1 | Validity proofs | High throughput | Fast finality apps |
| Validium | Data kept off L1 | Validity proofs | Very high throughput | Throughput‑first apps |
| Sidechain | Independent of L1 | Independent consensus | Very high throughput | Custom chain needs |

The term Layer 1 becomes most informative when contrasted with Layer 2. A Layer 2 handles some work away from the base chain, then uses Layer 1 as an anchor for security, settlement, or data publication.

Rollups are the clearest example. On Ethereum, rollups execute transactions outside mainnet, then post data back to Layer 1 where consensus is reached. That design changes the cost structure. Instead of every Layer 1 node executing every user transaction directly, the L2 does most execution work elsewhere and uses L1 as the source of truth for the compressed record. This is why rollups can often be cheaper and higher-throughput than doing all execution directly on L1.

But the phrase “inherits L1 security” is often stated too casually. It is not magic. It depends on the mechanism. If a rollup posts enough transaction data to L1, then anyone can reconstruct the rollup state and, depending on the design, challenge an invalid state transition or verify a validity proof. If the data is kept off-chain instead, as in validium-style systems, throughput can be very high, but the trust assumptions change because data availability no longer rests fully on L1. So the real dividing line is not only “on-chain versus off-chain execution.” It is also what exactly Layer 1 is asked to guarantee.

This is where Layer 1’s role as a data-availability layer becomes crucial. For an optimistic rollup, users need published data so invalid execution can be challenged. For a zero-knowledge rollup, proof verification may be compact, but users and applications still care about data publication for reconstruction, exits, and broader trust minimization. The base layer is doing less application execution directly, but it is still doing the more fundamental job of making verification possible.
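
A toy sketch shows why published data matters: with the batch in hand, any observer can replay it and compare the result with the operator's claimed state commitment. The transaction format, the hash-of-JSON "state root", and the rule that invalid transfers are skipped are all assumptions made for the example; real rollups use Merkle-style commitments and a dispute or proof system on L1.

```python
import hashlib
import json


def state_root(balances: dict) -> str:
    """Toy commitment: SHA-256 of the sorted balance map."""
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


def replay(pre_state: dict, batch: list) -> dict:
    """Re-execute a posted batch of (sender, recipient, amount) transfers."""
    balances = dict(pre_state)
    for sender, recipient, amount in batch:
        if amount <= 0 or balances.get(sender, 0) < amount:
            continue  # assumption: invalid transfers are skipped, not fatal
        balances[sender] -= amount
        balances[recipient] = balances.get(recipient, 0) + amount
    return balances


def claim_matches_data(pre_state: dict, posted_batch: list, claimed_root: str) -> bool:
    """True if the operator's claimed root follows from the data posted on L1."""
    return state_root(replay(pre_state, posted_batch)) == claimed_root


pre_state = {"alice": 10}
posted_batch = [("alice", "bob", 3)]
honest_claim = state_root({"alice": 7, "bob": 3})
dishonest_claim = state_root({"alice": 7, "bob": 300})
```

In an optimistic design a mismatch like `dishonest_claim` would be the starting point for a fraud proof. If the batch had never been published, `replay` could not run at all, and the observer would have nothing to check the claim against.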

How do Layer 1s scale in practice (blobs, DA, parachains, sampling)?

| Approach | Bottleneck targeted | Node cost change | Primary tradeoff | Best when |
|---|---|---|---|---|
| Blob transactions (EIP‑4844) | Rollup data publication | Higher bandwidth needs | Increases bandwidth usage | When rollups dominate demand |
| Data‑availability networks (Celestia/Avail) | Data availability | Sampling lowers light‑node cost | New DA infrastructure required | Many L2s need shared DA |
| Parachains (Polkadot) | Serial execution limits | Specialized validators required | Depends on relay security | Parallel sovereign chains |
| Sharding / parallel execution | Single‑chain throughput | Complex node software | Cross‑shard complexity | Monolithic throughput growth |

Because Layer 1 has to preserve verifiability, its scaling techniques usually target a specific bottleneck rather than attempting to make every part of the system “bigger” at once.

One lever is to improve how the chain uses block space. Ethereum’s blob transactions from EIP-4844 are a good example. They introduce a new transaction type that can carry large amounts of data for rollups, but that data is not directly accessible to the EVM. Instead, the execution layer sees commitments to the blob data, while the consensus layer is responsible for persisting the blobs. The blobs are propagated separately as sidecars rather than being embedded in the normal execution payload. Mechanically, this matters because it creates a cheaper and more purpose-built way for rollups to publish data on Layer 1 without forcing every byte through the ordinary execution path.

The important idea here is not “Ethereum added blobs” as a standalone fact. The idea is that Layer 1 can scale by specializing resources according to what needs to be verified. Rollup data publication has different requirements from smart-contract execution. By giving blob data its own fee market, with separate blob gas and a separate base fee, Ethereum can price demand for data availability independently from demand for computation. That does not remove the tradeoff entirely; EIP-4844 explicitly increases bandwidth requirements, though the initial caps are intentionally conservative. But it shows the pattern of L1 scaling: isolate the bottleneck, add a mechanism that serves that bottleneck more directly, and contain the extra resource burden so nodes can still keep up.
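
The separate fee market can be sketched directly from the pricing rule in EIP-4844. The constants below are the values the EIP specified at launch and should be read as assumptions, since later upgrades can change them; the helper names follow the EIP's pseudocode but this is an illustration, not client code.

```python
# Launch-era EIP-4844 parameters (assumed here; later forks may differ).
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17  # one blob is 4096 field elements of 32 bytes
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e^(numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def calc_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Excess accumulates when blocks use more blob gas than the target."""
    if parent_excess + parent_blob_gas_used < TARGET_BLOB_GAS_PER_BLOCK:
        return 0
    return parent_excess + parent_blob_gas_used - TARGET_BLOB_GAS_PER_BLOCK


def blob_base_fee(excess_blob_gas: int) -> int:
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )


# Simulate a run of completely full blocks and watch the blob fee respond.
excess = 0
fees = []
for _ in range(100):
    excess = calc_excess_blob_gas(excess, MAX_BLOB_GAS_PER_BLOCK)
    fees.append(blob_base_fee(excess))
```

The blob fee depends only on accumulated blob usage relative to the target, not on execution gas, so demand for data and demand for computation are priced independently. With these constants a full block raises the fee by roughly 12.5%, and six blobs of 128 KiB each give the bounded per-block bandwidth increase the EIP describes.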

Another lever is to change the architecture of the base layer itself. Celestia is a clear modular example: it positions Layer 1 primarily as a data availability network that orders blobs and keeps them available, while execution and settlement live above it. Its key mechanism is data availability sampling. Rather than requiring every light node to download every block, Celestia encodes block data into a two-dimensional Reed-Solomon matrix and lets light nodes sample random shares with proofs. If enough samples are available across the network, nodes gain high confidence that the full data is available. This approach changes what it means to participate in Layer 1 verification. The chain is still the base layer, but the main service it provides is not universal execution of every application; it is making published data verifiable at scale.
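
The confidence that sampling provides can be estimated with a short calculation. It assumes the standard bound for a k x k data square extended to 2k x 2k with Reed-Solomon coding, where at least (k+1)^2 shares must be withheld to make the block unrecoverable, and it simplifies sampling to independent uniform draws. The value of `k` and the sample counts are illustrative, not Celestia's actual parameters.

```python
import math


def min_withheld_fraction(k: int) -> float:
    """Smallest fraction of the 2k x 2k square that must be missing to block recovery."""
    return (k + 1) ** 2 / (2 * k) ** 2


def detection_confidence(k: int, samples: int) -> float:
    """Lower bound on the chance that at least one sample hits a withheld share."""
    return 1.0 - (1.0 - min_withheld_fraction(k)) ** samples


def samples_for_confidence(k: int, target: float) -> int:
    """Smallest number of samples that reaches the target confidence."""
    miss = 1.0 - min_withheld_fraction(k)
    return math.ceil(math.log(1.0 - target) / math.log(miss))


# Hypothetical square size; one light node working alone.
needed = samples_for_confidence(k=64, target=0.99)
```

Since an unrecoverable block must be missing more than a quarter of its shares, each sample has better than a one-in-four chance of exposing the problem. A light node therefore needs only a few dozen small downloads, rather than the whole block, to reach high confidence.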

Avail makes a similar broad bet on dedicated, cryptographically verifiable data availability as a Layer 1 service for other chains and applications. The exact technical details require project-specific documentation, but the conceptual point is the same: some newer Layer 1 designs treat data availability as the scarcest base-layer resource and optimize around that fact.

A different architectural path appears in Polkadot. There, scalability comes from parallelization across parachains that connect to a relay chain. A parachain is a specialized blockchain that benefits from the relay chain’s shared security instead of maintaining its own independent validator set. The key point is that Polkadot does not simply make one chain process more transactions serially. It spreads work across multiple specialized chains while centralizing enough security and coordination in the relay chain to preserve a common trust base. That is still Layer 1 scaling, but it looks very different from “make blocks larger.”

Cosmos shows yet another angle. Rather than a single global Layer 1 for all execution, many Cosmos-based chains are sovereign Layer 1s that interoperate through IBC. IBC relies on on-chain light clients, proofs, connections, channels, and relayers. Here the base layer’s job includes maintaining consensus states that other chains can verify. The architecture accepts a world of many L1s rather than one L1 with many dependent L2s. That diversity is a useful reminder: Layer 1 is a role, not a single universal design.
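
The "proofs" in that description are inclusion proofs checked against a state commitment the light client already trusts. The sketch below uses a plain binary SHA-256 Merkle tree to show the idea. It is a simplified stand-in, not IBC's actual proof format, and the packet strings are invented.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _parents(level: list) -> list:
    # Interior nodes use a different prefix from leaves.
    return [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root_and_proof(leaves: list, index: int):
    """Return (root, proof) for leaves[index]; leaves must be non-empty."""
    level = [h(b"\x00" + leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # simplification: duplicate the last node
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _parents(level)
        index //= 2
    return level[0], proof


def verify_inclusion(leaf: bytes, proof: list, trusted_root: bytes) -> bool:
    """Recompute the path from leaf to root using only the sibling hashes."""
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        node = h(b"\x01" + sibling + node) if sibling_is_left else h(b"\x01" + node + sibling)
    return node == trusted_root


packets = [b"packet-0", b"packet-1", b"packet-2", b"packet-3", b"packet-4"]
root, proof = merkle_root_and_proof(packets, 2)
ok = verify_inclusion(b"packet-2", proof, root)
```

The verifying chain stores only the root, which its light client obtained from the counterparty's consensus, and a relayer supplies the packet together with a proof whose size grows with the logarithm of the number of leaves. The relayer does not need to be trusted, because a forged packet fails the check.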

When should users and developers use Layer 1 directly?

Despite all the scaling discussion, people still use Layer 1 directly whenever the base layer’s guarantees matter more than convenience.

Users settle high-value transfers on L1 because finality and censorship resistance tend to be strongest there. Developers deploy smart contracts on L1 when they want the deepest liquidity, the most neutral settlement environment, or the broadest composability. Rollups and appchains use L1 as the court of final appeal: the place where proofs are checked, state commitments are posted, or disputes are resolved. Bridges depend on L1 finality assumptions to decide when an event on one chain is real enough to act on elsewhere.

That last case exposes why Layer 1 security assumptions propagate outward. Cross-chain systems are only as sound as the chains and verification mechanisms beneath them. Research on interoperability emphasizes that the underlying chains form the bedrock of security. If a source chain can be reorganized, or if its finality assumptions are misunderstood, a bridge or messaging protocol can accept an event that later turns out not to be final. This is one reason bridge failures have been so damaging: they often sit at the boundary where one system is making strong claims based on another system’s Layer 1 guarantees.
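
A bridge's decision about when an event is "real enough" can be written as an explicit policy. This is a hypothetical sketch: the function name, the parameters, and the convention that an event in the head block has one confirmation are assumptions, and real bridges verify far more than block heights.

```python
from typing import Optional


def safe_to_act(
    event_height: int,
    head_height: int,
    finalized_height: Optional[int] = None,
    min_confirmations: Optional[int] = None,
) -> bool:
    """Decide whether a source-chain event may be relayed to another chain.

    Chains with explicit finality report a finalized height; chains with
    probabilistic finality need a confirmation threshold instead.
    """
    if event_height > head_height:
        return False  # the event is not in the chain we can see
    if finalized_height is not None:
        return event_height <= finalized_height
    if min_confirmations is None:
        raise ValueError("a finality rule is required for this chain")
    confirmations = head_height - event_height + 1
    return confirmations >= min_confirmations
```

Refusing to run without a stated finality rule is a deliberate choice. The failure mode described above comes from acting on an implicit or mistaken assumption about when the source chain's history stops being reversible.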

There is also a practical key-management angle. When institutions or exchanges settle to a Layer 1, they do not usually want a single hot private key controlling all movement. A real-world example is Cube Exchange’s 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. This is not a Layer 1 protocol rule by itself, but it shows how Layer 1 settlement interacts with cryptographic control systems above it. The stronger and more final the base layer settlement, the more valuable it is to control access to that settlement with equally careful cryptography.

What are the limits and common failure modes of Layer 1 designs?

It is easy to talk about Layer 1 as if it were a perfect foundation. In practice, the guarantees depend on assumptions, and different assumptions break in different ways.

If the node requirements become too high, the system may remain technically decentralized in name while operationally depending on a small number of powerful operators. If the consensus protocol has fast finality but a narrow validator set, the user may get speed at the cost of resilience to collusion or governance capture. If the chain optimizes heavily for throughput, networking failures and propagation edge cases can become the limiting factor, as Solana’s outage illustrated. If the base layer is used as a trust anchor for bridges or higher layers, misunderstandings about finality or data availability can amplify into losses far away from the original chain.

Even the phrase “Layer 1 scaling” can mislead if taken to mean there is a single scoreboard. A Bitcoin-style settlement layer, an Ethereum-style smart-contract settlement and data layer, a Polkadot-style shared-security relay architecture, and a Celestia-style modular DA layer are all solving different versions of the base-layer problem. Comparing them only by raw transactions per second obscures what each is trying to preserve.

The deepest invariant is not TPS. It is this: can independent participants still verify the system’s claims without trusting a privileged intermediary? Layer 1 designs differ mainly in how they distribute the costs of preserving that property.

Conclusion

Layer 1 is the base blockchain layer that provides consensus, canonical state, and the publication of data needed for independent verification. It exists because blockchains are not just databases or execution engines; they are shared systems that must remain secure and auditable even when participants do not trust one another.

That is why Layer 1 is both powerful and constrained. It is the ultimate source of settlement, but every improvement in throughput has to be paid for in bandwidth, computation, storage, and coordination. The lasting idea to remember is simple: Layer 1 is the place where crypto systems make their strongest promises, and where those promises are most expensive to keep.

How does this part of the crypto stack affect real-world usage?

Layer 1 rules (consensus, finality, and data availability) determine how long you should wait for deposits, how safe cross‑chain transfers are, and what assumptions dApps make about settlement. Before you fund, trade, or rely on an asset, translate those protocol properties into practical checks and then complete the Cube workflow to custody and trade the asset securely.

- Look up the chain’s finality model and required confirmations. Note recommended confirmation counts or explicit challenge periods for withdrawals and cross‑chain messages.

- Check data‑availability and rollup relationships. If the asset lives on an L2 or rollup, confirm whether the rollup posts calldata/blobs to L1 or uses an off‑chain DA design and record any challenge or exit latency.

- Verify the exact token and network you will use on Cube. Select the correct chain variant (mainnet vs. rollup) and token denomination before depositing to avoid cross‑network mistakes.

- Deposit funds into your Cube account via the supported fiat on‑ramp or a crypto transfer to the chosen network. Use the specified memo/address and gas token for that chain.

- Wait for the chain‑specific confirmation or challenge threshold you recorded, then place your trade on Cube. Use a limit order to control execution price or a market order for immediate fill, and only initiate withdrawals after the on‑chain finality condition is met.

Frequently Asked Questions

Because every throughput increase raises the work every fully verifying node must do - more bytes to transfer, store, and validate and tighter propagation timing - which pushes up hardware, bandwidth, and latency requirements and thereby risks concentrating validation in fewer operators rather than preserving broad verifiability.

Data availability means the block producer actually publishes the bytes needed to check a block; without it, other participants cannot reconstruct or audit state transitions and the system effectively requires trusting the producer rather than independent verification.

Rollups only "inherit" L1 security when they post enough data (or a compact proof) to L1 so third parties can reconstruct or challenge execution; designs that keep execution data off‑chain (validiums) change the trust model because L1 no longer guarantees data availability.

EIP‑4844 (blobs) adds a new transaction type that carries large rollup data in blobs, which the consensus layer propagates as sidecars while the execution payload holds only commitments to them. It creates a separate fee market for blob data and intentionally caps the initial parameters. This gives rollups a cheaper alternative to publishing data as EVM calldata, but it does increase node bandwidth requirements by a bounded amount (a maximum of roughly 0.75 MB per beacon block, according to the EIP).

Modular DA networks like Celestia concentrate on ordering and making data verifiable by using erasure coding (a 2‑D Reed‑Solomon encoding) plus random sampling so light parties need only sample shares to gain high confidence the full data exists, rather than downloading everything or executing application logic on‑chain.

A common real‑world failure mode is network and propagation breakdowns: for example, Solana's Feb 2023 outage involved an abnormally large block, shreds with Reed‑Solomon recovery data, and forwarding‑service deduplication failures that caused propagation loops and severely degraded finalization - showing high throughput can fail due to networking edge cases, not cryptography alone.

Finality describes how confident you can be that a block won't be reverted: Nakamoto‑style consensus gives probabilistic finality (confidence grows over time), while many validator‑voting protocols aim for explicit/near‑instant finality, and the choice affects latency, reorg risk, and how long bridges or applications must wait before acting.

Bridges and cross‑chain systems inherit the security assumptions of the L1s and verification mechanisms they rely on; if a source chain’s finality or data‑availability guarantees are misunderstood or weak, a bridge can accept events that later revert or were unverifiable. Flaws in the verification logic at that boundary are just as dangerous, which is a major reason bridge failures (e.g., Wormhole) produced large losses.

If node resource requirements (storage, bandwidth, CPU) rise too far, running a full verifier becomes costly and the network may be operationally dependent on a small set of powerful validators, sequencers, or indexers - a deliberate design tradeoff that improves throughput at the cost of decentralization and resilience.
