What is Validium?

Learn what Validium is, how it uses validity proofs with off-chain data, why it scales well, and the key trade-offs versus ZK rollups.

Introduction

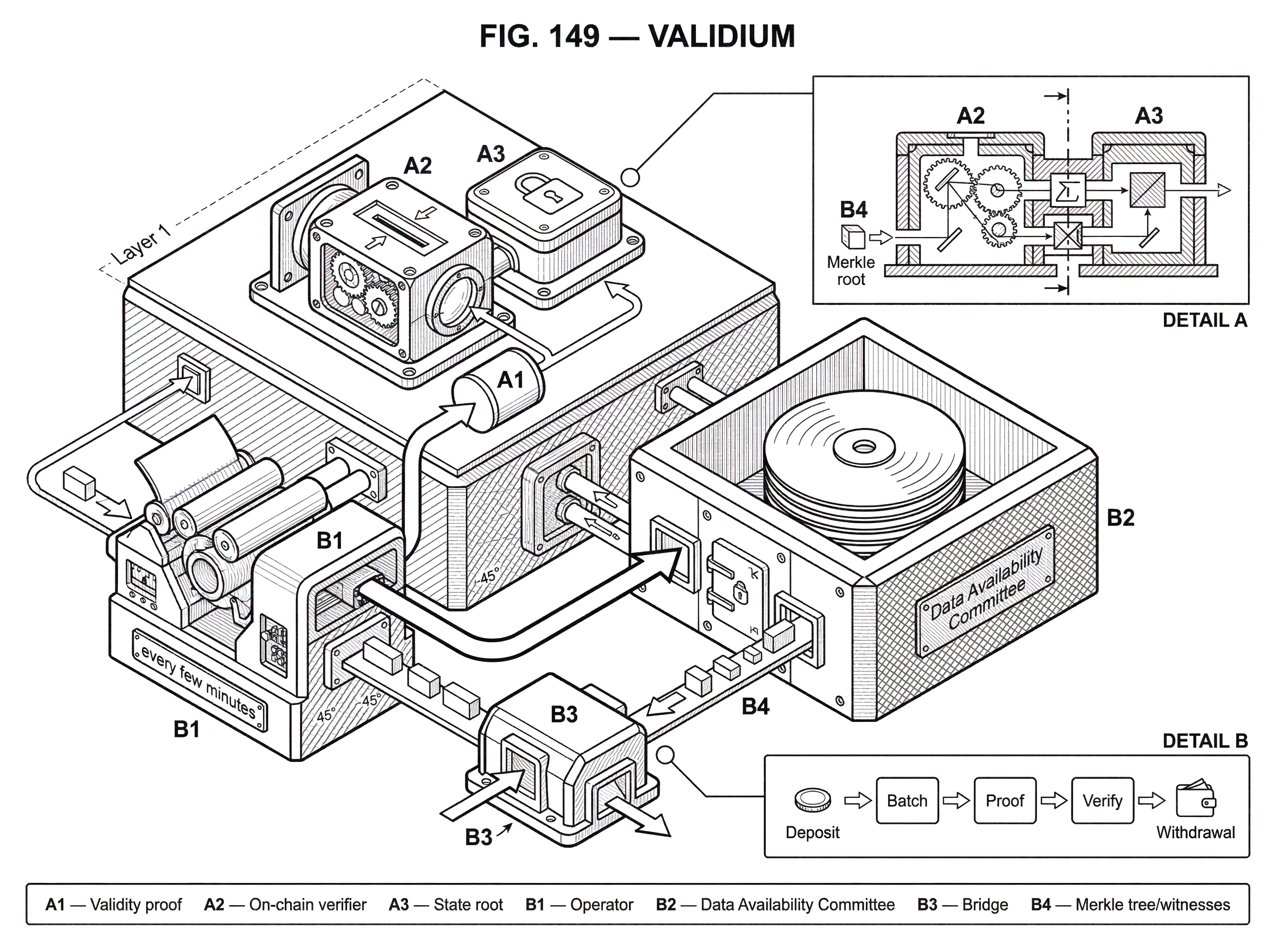

Validium is a layer-2 scaling design that uses validity proofs to guarantee correct state transitions while keeping the underlying transaction data off-chain. That combination is interesting because it splits what many people casually bundle together as “rollup security” into two separate questions: “Is the new state valid?” and “Can everyone reconstruct the data behind it?” In a validium, the first question gets a strong cryptographic answer, while the second depends on an off-chain data-availability mechanism.

That difference sounds subtle, but it changes the whole system. If you only look at proof verification, a validium can feel very close to a ZK rollup. If you look at data availability, it is not close at all. The reason validium exists is that publishing all transaction data on a base chain like Ethereum is expensive, and that data cost often becomes the bottleneck. By moving data off-chain, validiums can push throughput higher, reduce fees, and sometimes keep balances or order flow less exposed to the public chain. The cost is that users no longer inherit fully trustless data availability from the base layer.

So the core puzzle is this: how can a system be cryptographically valid, yet still leave users exposed? The answer is that correctness and availability are different properties. A proof can show that a transition from one committed state root to another was computed correctly. But the proof does not, by itself, give users the ledger data they need to understand the state, reconstruct balances, or generate the Merkle proofs needed for withdrawals. Validium is best understood as the architecture you get when you keep validity on-chain and move availability off-chain.

How does a Validium prove state changes without posting transaction data on-chain?

A useful way to think about a validium is as a compressed ledger. The layer 2 executes many transactions off-chain. Instead of posting all those transactions to Ethereum or another settlement chain, the operator posts a commitment to the new state and a validity proof showing that the transition obeyed the system’s rules. The settlement contract accepts the new state root only if the proof verifies.

Here is the mechanism that matters. The system has some state, often represented as a Merkle tree. A root of that tree is stored on-chain. Off-chain, the operator collects transactions, applies them to the current state, and computes a new root. Then a prover generates a proof (commonly a ZK-SNARK or ZK-STARK) attesting that the new root really does follow from valid execution of those transactions. The on-chain verifier contract checks that proof. If the proof is valid, the contract updates its stored commitment from the old root to the new one.
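
The flow above can be sketched in a few lines of Python. This is a minimal illustration, not any deployment's contract: `SettlementContract`, `toy_prove`, and `toy_verify` are hypothetical names, SHA-256 stands in for whatever hash a real state tree uses, and the "proof" is a placeholder tag with no cryptographic soundness. The only point is the control flow: the stored root changes if and only if the verifier accepts.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a binary Merkle tree; an odd node is paired with itself."""
    if not leaves:
        return h(b"")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def leaves_of(balances: dict[str, int]) -> list[bytes]:
    # Assumed leaf encoding for this sketch: "account:balance", sorted by account.
    return [f"{acct}:{bal}".encode() for acct, bal in sorted(balances.items())]


def toy_prove(old_root: bytes, new_root: bytes) -> bytes:
    # Placeholder for a SNARK/STARK prover. Anyone could forge this tag.
    return h(b"toy-proof" + old_root + new_root)


def toy_verify(old_root: bytes, new_root: bytes, proof: bytes) -> bool:
    # Placeholder for the on-chain verifier.
    return proof == toy_prove(old_root, new_root)


@dataclass
class SettlementContract:
    state_root: bytes
    verify: Callable[[bytes, bytes, bytes], bool]

    def update_state(self, new_root: bytes, proof: bytes) -> bool:
        """Replace the stored root only if the proof verifies."""
        if not self.verify(self.state_root, new_root, proof):
            return False
        self.state_root = new_root
        return True


# Off-chain: the operator holds the ledger; the contract only ever sees roots.
balances = {"alice": 10, "bob": 5}
contract = SettlementContract(merkle_root(leaves_of(balances)), toy_verify)

old_root = contract.state_root
balances["alice"] -= 3
balances["bob"] += 3
new_root = merkle_root(leaves_of(balances))

rejected = contract.update_state(new_root, b"not a proof")
accepted = contract.update_state(new_root, toy_prove(old_root, new_root))
```

Note that `balances` never reaches `SettlementContract`. The contract's view of the ledger is a single 32-byte commitment.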

At this point, it is tempting to conclude that everything important is secured by the base chain. But that would overlook one piece: the transaction data itself is not fully published on-chain. In a ZK rollup, the chain carries not just the proof and state commitment but also the data needed for anyone to reconstruct the ledger. In a validium, that data lives elsewhere. Ethereum, in the common case, sees the proof and a commitment, not the whole ledger update.

This means a validium preserves state correctness but weakens state recoverability from L1 alone. No operator can finalize an invalid state update if the verifier contract is sound and the proof system is secure. But users may still be unable to recover their exact balances or exit independently if the off-chain data is unavailable. That is the central trade.

Why do projects choose Validium instead of posting calldata on L1?

The pressure that creates validium is straightforward: data is expensive, proofs are comparatively cheap to verify, and many applications care more about low fees and high throughput than about maximal trustlessness in data availability.

On systems like Ethereum, posting transaction data to layer 1 consumes scarce block space. Even if computation moves off-chain, the rollup still pays for data publication. For applications with many simple state updates (trades, transfers, game actions, NFT operations, order book activity) that cost can dominate. If the application can tolerate a stronger trust assumption around data storage, moving data off-chain can substantially improve economics.

There is also a second motivation that gets less attention: confidentiality of ledger details from the public chain. A validity proof does not need to reveal the full transaction history it certifies. If balances, order flow, or account updates are stored off-chain rather than posted as calldata, fewer details are exposed to anyone reading L1. This is not the same as full privacy (off-chain storage operators may still see the data) but it can reduce public transparency in ways some applications want.

That is why validium has often appeared in high-throughput, application-specific systems. NFT trading, payments, gaming, and exchange-style applications can benefit from lower cost and higher throughput, especially when the application is already willing to operate with a designated operator and a structured set of data custodians. StarkEx deployments are the clearest production example: the same validity-proof machinery can support rollup mode, validium mode, or a hybrid called volition, where users or assets choose between on-chain and off-chain data availability.

Step‑by‑step: how a Validium processes deposits, transactions, proofs, and withdrawals

The cleanest way to see a validium is to follow a batch from deposit to settlement.

Suppose a user deposits assets into an on-chain contract that serves as the system’s bridge and custodian. The assets remain controlled by that contract, not by the operator directly. Off-chain, the validium tracks who owns what in its internal state tree. The user then submits a transfer or trade. The operator sequences that action together with many others and applies them to the current off-chain state. As balances change, the operator computes a new Merkle root representing the updated ledger.

Now comes the proving step. A prover takes the executed batch and produces a validity proof showing that every included transaction followed the rules: signatures were valid, balances were sufficient, no asset was created from nowhere, and the new root is exactly the result of applying those valid transitions to the previous root. That proof is sent to the on-chain verifier contract. If verification succeeds, the contract accepts the new root.
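
To make the proven statement concrete, here is a sketch of the rules written as an ordinary Python function. It is an assumption-laden simplification: `Transfer`, `InvalidBatch`, and `apply_batch` are hypothetical names, signature checks are omitted, and a real prover would express these constraints in a circuit or provable VM rather than re-run them in the open. The proof attests that a function like this ran without error and produced the state behind the new root.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    sender: str
    recipient: str
    amount: int


class InvalidBatch(Exception):
    """Raised when any transaction in the batch breaks a rule."""


def apply_batch(balances: dict[str, int], batch: list[Transfer]) -> dict[str, int]:
    """Apply transfers in order; reject the whole batch on the first violation."""
    state = dict(balances)  # work on a copy so a failed batch changes nothing
    for tx in batch:
        if tx.amount <= 0:
            raise InvalidBatch(f"non-positive amount from {tx.sender}")
        if state.get(tx.sender, 0) < tx.amount:
            raise InvalidBatch(f"insufficient balance for {tx.sender}")
        state[tx.sender] -= tx.amount
        state[tx.recipient] = state.get(tx.recipient, 0) + tx.amount
    # Conservation check: transfers move value, they never create it.
    if sum(state.values()) != sum(balances.values()):
        raise InvalidBatch("total supply changed")
    return state


start = {"alice": 10, "bob": 5}
end = apply_batch(start, [Transfer("alice", "bob", 4), Transfer("bob", "carol", 6)])
```

Here `end` is `{"alice": 6, "bob": 3, "carol": 6}`, and a batch containing an overdraft raises `InvalidBatch` instead of producing a new state.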

Notice what the chain has established at this point. It knows that the transition from old root to new root is valid. It knows which assets it custodies. It can enforce withdrawals that are consistent with the accepted state. But it may not know the full contents of the batch. If a user later wants to withdraw directly, they often need a Merkle proof showing that their balance appears in the committed state tree. To construct that proof, they need access to the underlying ledger data.
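
The dependence on ledger data shows up directly in code. The sketch below builds and checks a Merkle inclusion proof for a toy ledger. It assumes SHA-256, a binary tree that duplicates the last node on odd levels, and an `account:balance` leaf encoding; real systems use their own hash functions and tree layouts, and the function names here are illustrative.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaf hashes first, root last. Expects a non-empty list."""
    levels = [[h(leaf) for leaf in leaves]]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node to complete the pair
        levels.append([h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def merkle_witness(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the left."""
    path = []
    for level in build_levels(leaves)[:-1]:
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        index //= 2
    return path


def verify_witness(root: bytes, leaf: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """What a withdrawal check would do: recompute the root from the leaf and path."""
    node = h(leaf)
    for sibling, sibling_is_left in path:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


ledger = [b"alice:7", b"bob:8", b"carol:2"]   # off-chain data
root = build_levels(ledger)[-1][0]            # the only thing stored on-chain

witness = merkle_witness(ledger, 1)           # needs the full ledger
honest = verify_witness(root, b"bob:8", witness)
forged = verify_witness(root, b"bob:800", witness)
```

`verify_witness` needs only the root, the leaf, and the path, so a contract can run it cheaply. `merkle_witness` needs `ledger`. A user holding nothing but `root` has no way to call it.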

This is why the off-chain data store is not an accessory. It is part of the security model. In some deployments, a Data Availability Committee, or DAC, holds copies of each batch’s data and signs attestations that the data is available. StarkWare’s documentation describes validium state updates being accepted on-chain only once a quorum of committee members signs. In that design, the proof says the state transition is valid, and the DAC signatures say the data needed to recover that state has been retained off-chain.

The two guarantees do different jobs. The proof stops invalid transitions. The DAC is supposed to stop data disappearance.

What are the security trade‑offs in Validium: correctness versus data availability?

The most common misunderstanding about validium is to hear “zero-knowledge proofs” and infer “trustless security.” That is only half right. The proof gives strong integrity guarantees. It does not automatically give strong availability guarantees.

This distinction is easiest to see by comparing failure modes. If an operator tries to update the state root to something inconsistent with the rules, the verifier contract rejects the proof. That attack fails cryptographically. But if the operator and data holders simply stop serving ledger data, the proof system does not help users reconstruct the missing state. The contract may still be holding their assets, yet users may be unable to produce the Merkle witnesses needed to claim them.

In other words, a validium can be safe against invalid state transitions while still being fragile against data withholding. This is why ethereum.org explicitly treats withheld state data as the main risk: without the off-chain transaction data, users cannot compute the Merkle proof required to withdraw. The funds are not necessarily stolen in the narrow sense, but they can become frozen.

That is the crux of the topic. A rollup is not only about proving that state transitions are correct. It is also about ensuring that the data needed to use those transitions remains available. Validium keeps the first property on-chain and outsources the second.

What is a Data Availability Committee (DAC) and how does it protect a Validium?

| Model | How availability is proven | Trust model | Liveness risk | Best for |
|---|---|---|---|---|
| Data Availability Committee (DAC) | Committee signatures | Permissioned trusted parties | High if committee colludes | Low‑cost enterprise apps |
| Bonded / staked providers | Stake with slashing | Economically‑incentivized | Exits can be blocked before penalties apply | Incentivized availability |
| Cryptoeconomic DA (EigenDA / Celestia) | Decentralized economic guarantees | Permissionless cryptoeconomics | Lower systemic withholding risk | High‑assurance public apps |

Because off-chain data availability is the weak point, validium systems need some mechanism to make withholding less likely or less final. The most established approach is a Data Availability Committee. This is a set of known parties that store the batch data and attest, usually by signature, that they have it.

The idea is simple enough: if multiple independent entities retain the data, the system should not fail just because one operator disappears. In practice, though, the quality of this guarantee depends on several details that are easy to gloss over. Who chooses the committee? What quorum is required? Are some signers mandatory? What storage and replication standards do they run? What happens if some members are offline, colluding, or coerced? Those are not side questions. They are the effective security model.

StarkEx documentation and committee software make this concrete. Committee members keep data associated with each state root and sign availability claims. Batches accepted on-chain must satisfy configured signature thresholds, including any mandatory signers. If the operator becomes non-responsive or malicious, committee members are expected to publish the accounts data for the latest on-chain root so users can withdraw. That escape path matters because it shows what a validium ultimately leans on in a crisis: not the proof alone, but some honest party preserving and releasing the withheld data.
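
A threshold-plus-mandatory-signers rule of this kind can be sketched as a small predicate. This is a hedged illustration, not StarkEx's actual contract logic: `CommitteePolicy` and `availability_attested` are hypothetical names, and the sketch assumes each signature has already been cryptographically verified, so signers are represented by their identifiers only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommitteePolicy:
    members: frozenset[str]                   # registered committee members
    threshold: int                            # minimum number of valid signers
    mandatory: frozenset[str] = frozenset()   # members who must always sign


def availability_attested(policy: CommitteePolicy, signers: set[str]) -> bool:
    """True when the signer set satisfies both the quorum and the mandatory list."""
    valid = set(signers) & policy.members     # signatures from outsiders do not count
    return policy.mandatory <= valid and len(valid) >= policy.threshold


policy = CommitteePolicy(
    members=frozenset({"a", "b", "c", "d", "e"}),
    threshold=3,
    mandatory=frozenset({"a"}),
)

ok = availability_attested(policy, {"a", "b", "c"})
missing_mandatory = availability_attested(policy, {"b", "c", "d"})
padded_with_outsider = availability_attested(policy, {"a", "b", "mallory"})
```

Only the first call returns `True`. The check says nothing about whether signers will actually serve the data later; it records a promise, which is why committee composition matters as much as the threshold.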

Other designs have explored bonded or staked availability providers rather than a fixed trusted committee. The idea there is to add cryptoeconomic penalties if data is not served. In principle, that can improve incentives. In practice, it does not eliminate the basic problem that unavailable data can halt user exits before any penalty is collected, and it remains an open question which model gives the best real-world balance of cost, decentralization, and liveness.

The result is that validium security is usually a layered claim, not a single one. The system may be trustless with respect to valid state transitions while relying on a semi-trusted or economically trusted mechanism for state availability. If those layers are described carelessly, users can think they have rollup-like guarantees when they do not.

What happens if a Validium operator withholds data? A concrete failure example

Imagine an NFT marketplace running in validium mode. Users deposit ETH and NFTs into an on-chain contract. Trades happen off-chain all day. Every few minutes, the operator submits a new state commitment plus a STARK proof. Ethereum verifies the proof, so everyone knows the marketplace state evolved according to the rules.

Now suppose the operator goes offline after accepting a new state root, and the marketplace API no longer serves account data. A user knows, in the ordinary sense, that they sold one NFT and should now have a certain balance. But the contract does not accept “ordinary sense” as evidence. It needs a Merkle proof showing that the user’s balance is included in the accepted state tree. To build that proof, the user needs the off-chain tree data or enough batch data to reconstruct it.

If a DAC member still has the ledger and publishes it, the user can compute the required witness and withdraw. If no one serves the data, the proof that settled on Ethereum is still valid; but that does not help the user identify their leaf and path in the tree. The state root is a commitment, not a readable ledger. So the user’s funds are not lost because the state transition was false. They are stuck because the supporting data is unavailable.

That is exactly why the phrase data availability matters so much in scaling design. A commitment without accessible underlying data is like a sealed checksum of a book you are not allowed to read. You can verify the checksum once you have the book, but the checksum does not recreate the book for you.

The analogy helps explain the mechanism, but it also has a limit: unlike a book checksum, a state commitment can still be used by a smart contract to enforce some transitions if the right Merkle witnesses are later supplied. So the system is not merely informationally incomplete; it is operationally dependent on who can produce those witnesses.

Validium vs ZK rollup: how do data publication and user recovery guarantees differ?

| Design | Data placement | Withdrawals without operator | Fees | Privacy | Best for |
|---|---|---|---|---|---|
| Validium | Off‑chain (DAC‑backed) | Not without the off‑chain data | Lower fees | More ledger privacy | High‑throughput apps |
| ZK rollup | On‑chain calldata | Yes, from L1 alone | Higher fees | Less ledger privacy | Trust‑minimized withdrawals |

The neighboring concept that matters most is the ZK rollup. Both architectures use validity proofs. Both post state commitments to an L1 verifier contract. Both can settle quickly once proofs are accepted. The difference is not in how they prove correctness. The difference is where they put the transaction data.

A ZK rollup publishes enough data on-chain for anyone to reconstruct the state from L1 alone. That makes trustless exits possible even if the operator disappears. It also makes the system more expensive, because calldata or blob space is not free. A validium avoids most of that cost by keeping the data off-chain. The payoff is higher throughput and lower fees. The price is weaker data-availability guarantees.
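
A back-of-the-envelope model shows why the data term dominates. Every number below is an illustrative placeholder rather than a measurement: the batch size, per-transaction byte count, and proof verification gas are hypothetical, and the default of 16 gas per byte is the nonzero-byte calldata price from EIP-2028, which blob pricing and later repricing change. `l1_gas_per_tx` is a name invented for this sketch.

```python
def l1_gas_per_tx(
    batch_size: int,
    verify_gas: int,
    bytes_per_tx: int,
    gas_per_byte: int = 16,
    publish_data: bool = True,
) -> float:
    """Amortized L1 gas per transaction for one batch.

    The proof verification cost is shared by the whole batch. The data cost
    scales with every transaction, and is zero when data stays off-chain.
    """
    data_gas = batch_size * bytes_per_tx * gas_per_byte if publish_data else 0
    return (verify_gas + data_gas) / batch_size


# Hypothetical batch: 1,000 transactions, 100 bytes each, 500,000 gas to verify.
rollup_cost = l1_gas_per_tx(1_000, 500_000, 100, publish_data=True)
validium_cost = l1_gas_per_tx(1_000, 500_000, 100, publish_data=False)
```

With these placeholder inputs the rollup path costs 2,100 gas per transaction and the validium path 500. Growing the batch shrinks the verification share in both cases, but only the validium's total keeps falling, because the rollup's per-transaction data term stays fixed.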

This means validium is not “better than” or “worse than” a ZK rollup in the abstract. It is a point in the design space. If the application needs maximum trust-minimized recoverability, rollup mode is the stronger choice. If the application cares more about cost, privacy of ledger details, or application-specific control over infrastructure, validium may be attractive.

The important discipline is not to collapse these into a single security label. Saying both are “ZK-based” is true but incomplete. For a user deciding what risk they bear, the more important question may be: if the operator and APIs disappear tomorrow, can I still reconstruct the state from the base chain alone? For a rollup, the answer is designed to be yes. For a validium, the answer is generally no unless the off-chain availability mechanism works as intended.

What is volition and when should projects choose on‑chain versus off‑chain data?

Once the trade-off is clear, the appeal of volition becomes obvious. Some transactions want rollup-grade data availability; others mainly want lower cost. Volition keeps both paths open by letting assets, vaults, or transactions choose whether data is posted on-chain or held off-chain.

StarkWare describes this as maintaining both an on-chain ledger and a DAC-backed ledger. That hybrid structure is not just a product feature. It is an admission that different actions within the same application can reasonably want different guarantees. A large-value withdrawal path may justify on-chain data costs. A high-frequency in-game action may not. Volition is what you build when you accept that “best” data availability is use-case dependent.
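
As a rough sketch of the routing idea, the code below splits one batch into data destined for L1 and data handed to the off-chain store. It assumes a per-transaction choice; the names `DataMode`, `Tx`, `Batch`, and `route` are hypothetical, and real volition systems may make the choice per asset or per vault and handle proofs over both ledgers in ways not shown here.

```python
from dataclasses import dataclass, field
from enum import Enum


class DataMode(Enum):
    ON_CHAIN = "on_chain"     # rollup-style: data published to L1
    OFF_CHAIN = "off_chain"   # validium-style: data held by the DAC


@dataclass(frozen=True)
class Tx:
    sender: str
    payload: bytes
    mode: DataMode


@dataclass
class Batch:
    l1_data: list[bytes] = field(default_factory=list)
    dac_data: list[bytes] = field(default_factory=list)


def route(txs: list[Tx]) -> Batch:
    """Split a batch's data by the availability mode each transaction chose."""
    batch = Batch()
    for tx in txs:
        target = batch.l1_data if tx.mode is DataMode.ON_CHAIN else batch.dac_data
        target.append(tx.payload)
    return batch


batch = route([
    Tx("alice", b"large-withdrawal", DataMode.ON_CHAIN),
    Tx("bob", b"game-move-1", DataMode.OFF_CHAIN),
    Tx("bob", b"game-move-2", DataMode.OFF_CHAIN),
])
```

Only `batch.l1_data` incurs L1 publication cost, and only those entries remain recoverable from L1 alone.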

That also helps place validium in the broader scaling landscape. It is not a historical dead end or a lesser imitation of rollups. It is one answer to a genuine engineering constraint: the user may want proof-backed correctness without paying L1 publication costs for every state update.

Which applications are best suited for Validium and which workloads struggle?

Validium tends to fit best where transactions are numerous, simple, and relatively homogeneous. Payments, token transfers, exchange operations, and NFT workflows are natural examples because their state transitions are easier to prove and their economics are sensitive to per-transaction L1 data cost. Production systems like StarkEx and Immutable X show this pattern clearly.

General-purpose smart-contract execution is harder. The issue is not that validity proofs cannot, in theory, support arbitrary computation. The issue is proving overhead, VM design, and developer compatibility. Ethereum’s execution model is expensive to prove directly, so many systems use specialized virtual machines, custom circuits, or nonstandard compilation flows. The consequence is that validium has often offered excellent performance for tailored applications while lagging in broad EVM compatibility and tooling simplicity.

This is partly a technology maturity question, not a permanent law. As zkEVM systems improve, the line may move. But the current practical takeaway remains: validium has usually been easiest to deploy where the application can shape its own execution environment rather than demanding seamless compatibility with the full Ethereum smart-contract stack.

There is also an operational strain point in proving itself. Generating validity proofs requires substantial compute and specialized infrastructure. Verification on-chain is cheap compared with recomputing the batch, but proof production is not. That can centralize the proving pipeline around specialized operators or shared proving services. So even apart from data availability, a real validium is usually not a magically decentralized machine. It is a system that uses cryptography to constrain what centralized infrastructure is allowed to do.

What operational and governance assumptions should I check before trusting a Validium?

| Assumption | Why it matters | What to ask |
|---|---|---|
| Who stores data | Determines state recoverability | Who hosts copies, and under what SLAs? |
| Committee quorum & policy | Sets availability thresholds | What is the quorum size, and are any signers mandatory? |
| Prover centralization | Affects censorship and centralization | Are there multiple provers or one shared service? |
| Contract upgrade keys | Can change exit protections | Are upgrades gated by time‑locks and multisig controls? |

If you are evaluating a validium, the key questions are less about the elegance of the proof system and more about the exact assumptions around off-chain data and recovery.

You want to know who stores the data, what threshold of signatures is required for state updates, whether any signers are mandatory, and what the emergency publication path looks like. You want to know whether users can force withdrawals or freeze the system if operators ignore them, and whether those escape hatches are actually usable in practice. You also want to know whether upgrade keys or operator permissions could disable or weaken those protections.

Those questions matter because validium’s failure mode is not usually “the math breaks.” It is “the organizational and operational layer around the math stops cooperating.” A strong proof system prevents counterfeit state transitions. It does not guarantee that a user can recover the data needed to act on a valid state.

Conclusion

Validium is a proof-based scaling design that keeps execution correctness on-chain but moves data availability off-chain. That single architectural move explains nearly everything else: lower fees, higher throughput, some confidentiality benefits, and a sharper dependence on committees or other external data custodians.

If you remember one thing, make it this: a validium can prove that the ledger changed correctly without guaranteeing that the ledger data remains available to everyone from L1 alone. That is why validium exists, why it can scale well, and why its trust model is meaningfully different from a ZK rollup.

How does this part of the crypto stack affect real-world usage?

Validium's off‑chain data availability directly affects whether you can recover funds or prove balances if an operator or API goes offline. Before you fund or trade assets tied to a Validium, confirm the project's data‑availability model and emergency withdrawal mechanics, then use Cube Exchange to fund and trade once those checks satisfy your risk tolerance.

- Find the project's docs and confirm whether it runs as a Validium, a ZK rollup, or a volition hybrid; note the stated data‑availability model (DAC, bonded providers, or other) and any required quorum or mandatory signers.

- Locate the emergency withdrawal or data‑publication procedure and record concrete timelines and trigger actions (who can publish data, required signatures, and how long a forced‑exit takes).

- Check operator and prover centralization: identify the proving/operator entities, whether committee members are independent, and whether incentives or bonds exist to deter data withholding.

- Fund a small test amount into your Cube Exchange account, trade or deposit the asset you plan to use, and then run the project’s documented withdrawal or proof‑request steps to confirm you can obtain the Merkle witness or a committee attestation.

Frequently Asked Questions

The key difference is where transaction data lives: both use on‑chain validity proofs to ensure state transitions are correct, but a ZK rollup publishes enough calldata on L1 so anyone can reconstruct the ledger, whereas a validium keeps transaction data off‑chain and thus does not guarantee recoverability from L1 alone.

No - a validity proof prevents the operator from finalizing an invalid state, but if the off‑chain data needed to build Merkle proofs is withheld, users can be unable to demonstrate their balances and withdraw even though the committed state is cryptographically valid.

A Data Availability Committee (DAC) is a group of off‑chain parties that store batch data and sign attestations of availability; a validium typically relies on a DAC to make withheld data recoverable, so the system’s real‑world security depends on who the committee members are, quorum rules, and their operational behavior.

If a DAC withholds data, users may need an honest committee member to publish the ledger so they can build Merkle witnesses and withdraw; systems often provide such emergency publication paths, but the practicality, quorum thresholds, and timelines vary by deployment and can be fragile in practice.

Validium is best for high‑throughput, application‑specific workloads where per‑transaction L1 calldata cost matters - examples include exchange order books, NFT marketplaces, payments, and gaming - because these use cases value lower fees and throughput over fully trustless data availability.

Yes - moving data off‑chain replaces a universal L1 recovery guarantee with assumptions about operators, DAC members, or bonded availability providers, so using a validium introduces centralized or semi‑trusted dependencies that are part of its security model.

Generating validity proofs requires substantial specialized compute and infrastructure, which tends to concentrate prover roles around dedicated operators or shared proving services; verification on L1 is cheap, but proof production itself can be a centralization pressure.

Today, full EVM‑compatible general‑purpose workloads are harder to support in Validium mode because proving arbitrary Ethereum semantics is costly; many deployments favor specialized VMs or tailored circuits, and it remains an open question how quickly zkEVM tooling will close that gap.
