What Are KZG Commitments?

Learn what KZG commitments are, how they use pairings and a trusted setup to commit to polynomials, and why EIP-4844 uses them for blobs.

Introduction

KZG commitments are a cryptographic way to commit to a polynomial so that later you can prove what that polynomial evaluates to at chosen points, while keeping both the commitment and the proof very small. That sounds narrow until you notice what it solves: many systems need to bind themselves to a large body of structured data, then reveal or verify only tiny parts of it without retransmitting everything.

This is why KZG shows up in places that at first seem unrelated. In zero-knowledge proof systems, a prover often needs to convince a verifier about values of committed polynomials. In blockchain data-availability designs, a protocol may want to bind a large data blob to a compact on-chain reference and later check consistency with small proofs. The appeal is the same in both settings: constant-size commitments and short evaluation proofs.

The catch is equally important. KZG gets this efficiency by leaning on pairings over elliptic-curve groups and on a trusted setup that publishes structured public parameters derived from a secret value. If that setup is compromised in the wrong way, binding can fail. So the right way to understand KZG is not as “magic compression,” but as a precise trade: it buys unusually compact proofs by introducing stronger structure and stronger assumptions than simpler commitment schemes.

Why use KZG commitments instead of publishing coefficients or Merkle trees?

| Method | Commitment size | Proof size per evaluation | Trusted setup | Verifier cost | Best for |
|---|---|---|---|---|---|
| Publish coefficients | O(t) coefficients | None (reveal data) | None | Linear evaluation | Small polynomials |
| Merkle tree | O(1) root (hash) | O(log n) sibling path | None | Log-time hash ops | Transparent setup; sparse checks |
| KZG commitment | 1 group element | 1 group element | Required (SRS) | Pairings | Bandwidth-limited, many checks |

Suppose you have a polynomial φ(x) over a finite field. You want to publish a commitment to the whole polynomial now, and later answer questions like “what is φ(7)?” with a proof that convinces anyone the answer is consistent with the original commitment.

A naive approach is to publish all coefficients. That works, but it destroys compactness: the commitment is as large as the polynomial. Another approach is to evaluate the polynomial on many points and put those values in a Merkle tree. Then the root is small, and a proof for one position is only logarithmic in the number of leaves. That is already useful, and Merkle trees remain attractive because they avoid trusted setup and rely mostly on hash functions. But the proof size is still tied to the amount of data. If the object being committed is very large and many checks must be verified, logarithmic proofs can become expensive.
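To make the size gap concrete, here is a rough back-of-the-envelope sketch. It assumes a binary Merkle tree with 32-byte hashes and a 48-byte compressed group element for KZG (the size EIP-4844 uses); both constants and the function names are illustrative choices, not part of any specification.

```python
HASH_BYTES = 32   # assumed hash output size, e.g. SHA-256
G1_BYTES = 48     # assumed size of one compressed group element

def merkle_proof_bytes(num_leaves):
    """Bytes in the sibling path for one leaf of a binary Merkle tree."""
    depth = (num_leaves - 1).bit_length()   # ceil(log2(num_leaves))
    return HASH_BYTES * depth

def kzg_proof_bytes(num_leaves):
    """One group element, regardless of how much data was committed."""
    return G1_BYTES

for n in (256, 4096, 1_048_576):
    print(n, merkle_proof_bytes(n), kzg_proof_bytes(n))
```

The Merkle path grows by one hash each time the data doubles, while the KZG proof stays at one element. The gap matters most when many openings must be transmitted or verified.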

KZG aims at a more extreme target. It tries to make the commitment to a degree-bounded polynomial just one group element, and an evaluation proof also one group element, independent of the polynomial degree. That is the compression point: instead of committing to the polynomial by listing data or hashing a tree of data, KZG commits to the polynomial’s value at a secret point hidden inside public parameters. Everything else follows from that idea.

How do KZG commitments bind a polynomial by evaluating at a hidden (trapdoor) point?

Here is the central mechanism in plain language.

Imagine there were a secret field element α, and somehow public parameters let you work with expressions involving α without learning α itself. If you could compute a commitment that effectively encodes φ(α), then two different polynomials would almost never agree unless they were actually the same polynomial, at least as long as the polynomial degree stays within the supported bound.

Why? Because a nonzero polynomial of degree at most t has at most t roots. If two polynomials φ and ψ of degree at most t satisfy φ(α) = ψ(α), then their difference δ(x) = φ(x) - ψ(x) has δ(α) = 0. If δ were nonzero, α would have to be one of its at most t roots, which is vanishingly unlikely for a random hidden point in a very large field. So the only reliable way for this to happen is that δ is the zero polynomial, meaning φ = ψ. This is the invariant KZG exploits: low-degree polynomials are rigid. They cannot match at more than t places unless they are identical.
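The rigidity argument can be checked by brute force in a small field. The sketch below assumes a toy prime field of size 1019, chosen only so that every point can be enumerated; real deployments use fields of roughly 2^255 elements. The two polynomials are hypothetical examples that differ by (x - 1)(x - 2).

```python
R = 1019  # toy prime field size, assumed only for illustration

def evaluate(coeffs, x):
    """Horner evaluation of a0 + a1*x + a2*x^2 + ... modulo R."""
    acc = 0
    for a in reversed(coeffs):
        acc = (acc * x + a) % R
    return acc

phi = [3, 2, 1]   # 3 + 2x + x^2
psi = [1, 5, 0]   # 1 + 5x, which equals phi(x) - (x - 1)(x - 2)

# Every point of the field where the two distinct polynomials agree.
agreements = [x for x in range(R) if evaluate(phi, x) == evaluate(psi, x)]
print(agreements)                 # the roots of phi - psi
print(len(agreements) / R)        # chance that a random hidden point lands on one
```

Two distinct polynomials of degree at most 2 agree on at most 2 of the 1019 points. In a cryptographically sized field the same bound makes an accidental match at the hidden point negligible.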

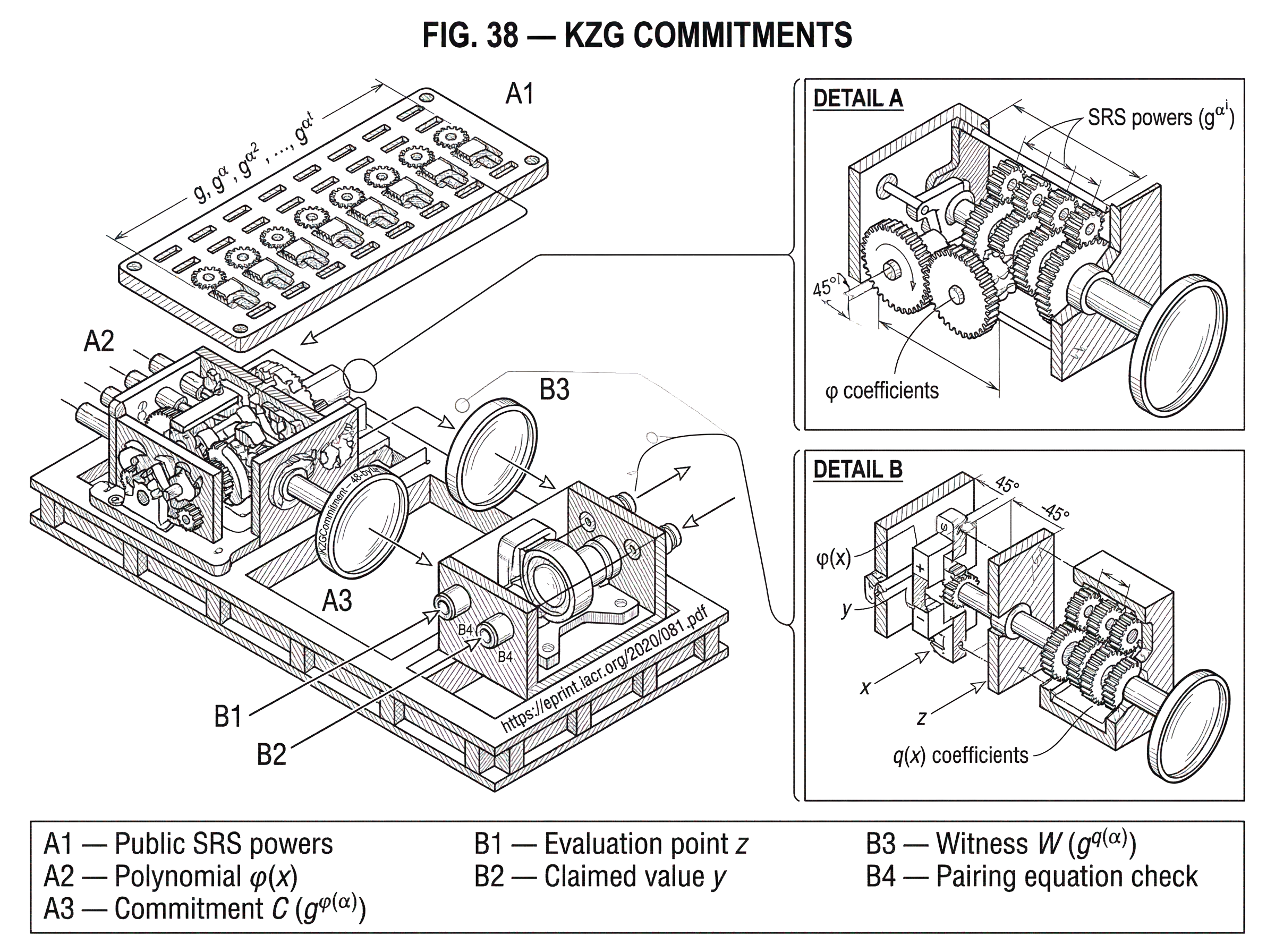

Of course, in a real protocol the committer must not know α, because anyone who knows α could produce openings that are inconsistent with the committed polynomial. So the setup phase generates structured public parameters derived from α without revealing α itself. In the original construction, setup publishes powers like g, g^α, g^{α^2}, and so on up to the supported maximum degree, where g is a generator of a pairing-friendly group. Given the coefficients of φ(x), the committer can combine these public elements to produce a commitment equal to g^{φ(α)} without ever learning α directly.

That is the heart of KZG: a commitment is a compressed group encoding of the polynomial evaluated at a hidden trapdoor point.

How is a KZG commitment computed from polynomial coefficients?

Now the notation has somewhere to land.

In the original KZG-style construction, setup chooses a secret α and publishes g, g^α, g^{α^2}, ..., g^{α^t} for a degree bound t. If the polynomial is

φ(x) = a0 + a1 x + a2 x^2 + ... + at x^t,

then the committer computes

C = g^{φ(α)} = g^{a0 + a1 α + a2 α^2 + ... + at α^t}.

Because a sum in the exponent becomes a product in the group (g^{a + b} = g^a · g^b), the committer can build C from the public powers:

C = (g)^{a0} (g^α)^{a1} (g^{α^2})^{a2} ... (g^{α^t})^{at}.

So the commitment is just a single group element, even though it binds an entire polynomial of degree up to t. This compactness is not a coincidence or an implementation trick; it is the direct consequence of committing to φ(α) rather than to every coefficient individually.

This also explains why the setup is structured rather than generic. The powers of α are exactly what let the committer evaluate an arbitrary degree-t polynomial “in the exponent.” Without those powers, the scheme would lose its main property.

How does a KZG evaluation proof (opening) demonstrate φ(z)=y?

The natural next question is the hard one: if the commitment only encodes φ(α), how can anyone verify a claim about φ(z) at some point z without learning the whole polynomial?

The answer uses a simple algebraic identity. If y = φ(z), then the polynomial φ(x) - y has a root at x = z. That means it is divisible by x - z. So there exists a quotient polynomial

q(x) = (φ(x) - y) / (x - z).

This quotient is the witness to the claim. Intuitively, if I say the committed polynomial evaluates to y at z, I am also claiming that the residual polynomial φ(x) - y factors cleanly as (x - z) q(x). If that factorization is true, then y really is the correct value.
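The quotient can be computed with ordinary synthetic division. The sketch below works over a toy prime field of size 1019 (an assumption for readability) and uses a hypothetical polynomial 1 + 2x^2 with the claim φ(3) = 19. A zero remainder means the claim is true and the quotient exists.

```python
R = 1019  # toy prime field size, assumed for illustration

def divide_by_linear(coeffs, z, y):
    """Divide phi(x) - y by (x - z) over F_R.

    coeffs lists a0, a1, ... (lowest degree first).
    Returns (quotient coefficients, remainder)."""
    shifted = [(coeffs[0] - y) % R] + [a % R for a in coeffs[1:]]
    out, carry = [], 0
    for a in reversed(shifted):          # synthetic division, highest degree first
        carry = (carry * z + a) % R
        out.append(carry)
    remainder = out.pop()                # last value is (phi(z) - y) mod R
    return out[::-1], remainder

phi = [1, 0, 2]                          # 1 + 2x^2, so phi(3) = 19
q, rem = divide_by_linear(phi, z=3, y=19)
print(q, rem)                            # quotient 6 + 2x, remainder 0

q_bad, rem_bad = divide_by_linear(phi, z=3, y=20)
print(rem_bad)                           # nonzero: the false claim leaves a remainder
```

The remainder is exactly φ(z) - y, which is why divisibility by x - z and correctness of the claimed value are the same statement.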

KZG turns that factorization into a short proof by committing to q(α) in the same style. The witness is

W = g^{q(α)}.

Now look at what must hold if the claim is honest:

φ(α) - y = (α - z) q(α).

That identity is exactly the evaluation claim, but checked at the secret point α. Since the commitment encodes g^{φ(α)} and the witness encodes g^{q(α)}, a verifier can use a pairing equation to test this relationship without learning α.

In the original paper, verification uses a bilinear pairing e and checks an equation equivalent to

e(C / g^y, g) = e(W, g^α / g^z).

The pairing is what makes exponent relations visible. It lets the verifier check that the exponent on the left, φ(α) - y, matches the exponent on the right, (α - z) q(α), even though those exponents remain hidden.

This is the mechanism, stripped to essentials. The proof is short because the witness is just one commitment to the quotient polynomial at the hidden point.

Example: create a KZG commitment and prove φ(5) = 38

Take a small polynomial φ(x) = 3 + 2x + x^2. Suppose someone has already published a KZG commitment C to it. Later they want to prove that φ(5) = 38.

They do not prove this by revealing the polynomial and asking the verifier to plug in 5. That would defeat the point. Instead, they subtract the claimed value from the polynomial and observe that

φ(x) - 38 = x^2 + 2x - 35.

Because the claim is supposed to be true at x = 5, this residual polynomial must vanish at 5. And it does: 25 + 10 - 35 = 0. So it factors as (x - 5) q(x). The quotient here is q(x) = x + 7.

The prover then creates a witness commitment to this quotient at the hidden setup point, producing something like W = g^{q(α)}. The verifier never sees α, never sees q(α) as a number, and does not need the original coefficients. Instead, the verifier checks through the pairing equation that the commitment C, the claimed point 5, the claimed value 38, and the witness W are all algebraically consistent with the identity

φ(x) - 38 = (x - 5) q(x).

If the claim were false (say the prover claimed φ(5) = 39), then φ(x) - 39 would not be divisible by x - 5, so there would be no valid quotient polynomial, and the pairing check would fail.

The important thing here is not the arithmetic itself. It is the pattern: an evaluation proof is really a divisibility proof. KZG packages that divisibility proof into one group element.
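The whole example can be traced in a toy model. The sketch below assumes the same kind of insecure stand-in group as before (the order-1019 subgroup modulo 2039) and, because that group has no pairing, checks the exponent relation C / g^y = W^(α - z) directly using the trapdoor. A real verifier does not know α and tests the same relation with the pairing equation. All function names and the trapdoor value are hypothetical.

```python
P, R, G = 2039, 1019, 4    # toy group: order-R subgroup of Z_P^*, generator G

def commit(srs, coeffs):
    """prod_i srs[i]^(coeffs[i]): encodes the polynomial at the hidden point."""
    c = 1
    for power, a in zip(srs, coeffs):
        c = c * pow(power, a % R, P) % P
    return c

def quotient(coeffs, z, y):
    """Quotient and remainder of (phi(x) - y) / (x - z) over F_R."""
    shifted = [(coeffs[0] - y) % R] + [a % R for a in coeffs[1:]]
    out, carry = [], 0
    for a in reversed(shifted):
        carry = (carry * z + a) % R
        out.append(carry)
    rem = out.pop()
    return out[::-1], rem

def trapdoor_check(C, z, y, W, alpha):
    """Stand-in for the pairing check: tests C / g^y == W^(alpha - z)."""
    lhs = C * pow(G, (-y) % R, P) % P
    rhs = pow(W, (alpha - z) % R, P)
    return lhs == rhs

alpha = 777                                        # hypothetical trapdoor
srs = [pow(G, pow(alpha, i, R), P) for i in range(3)]
phi = [3, 2, 1]                                    # 3 + 2x + x^2
C = commit(srs, phi)

q, rem = quotient(phi, z=5, y=38)                  # q is 7 + x, rem is 0
W = commit(srs, q)
print(trapdoor_check(C, 5, 38, W, alpha))          # honest claim: True

q_bad, rem_bad = quotient(phi, z=5, y=39)          # rem_bad is nonzero
W_bad = commit(srs, q_bad)
print(trapdoor_check(C, 5, 39, W_bad, alpha))      # false claim: False
```

For the false claim, the best the prover can do is commit to the truncated quotient, and the leftover remainder shows up as a mismatch between the two sides.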

When should a system choose KZG commitments?

The strongest practical property of KZG is not just that commitments are small. It is that selective verification stays small too.

If a system commits to a large structured object that can be represented as a low-degree polynomial, then later it can answer narrowly targeted consistency questions with constant-size proofs. This matters in zero-knowledge systems because many proof protocols reduce statements about computation to statements about evaluations of committed polynomials. It also matters in data-availability designs because a large blob can be encoded as field elements, interpreted as a polynomial, and then referenced by a short commitment.

Ethereum’s EIP-4844 is a concrete example. The protocol defines KZGCommitment and KZGProof as fixed 48-byte objects and uses them to bind blob data to compact references in blob transactions. The execution layer refers to blobs through versioned hashes derived from commitments rather than embedding the blob contents directly, and verification can use a point-evaluation precompile. On the consensus side, blobs are propagated separately as sidecars. The cryptographic point is straightforward: the chain needs a compact handle for a much larger data object, together with a way to check consistency.
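As a sketch of how a versioned hash is derived from a commitment: to the best of my reading of EIP-4844, the hash is a one-byte version prefix followed by the SHA-256 digest of the 48-byte commitment with its first byte dropped. The constant and function names below follow the EIP's naming, but treat the details as something to confirm against the specification. The input is placeholder bytes, not a commitment to real blob data.

```python
import hashlib

VERSIONED_HASH_VERSION_KZG = b"\x01"   # version byte, as named in EIP-4844

def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Version byte followed by the last 31 bytes of sha256(commitment)."""
    if len(commitment) != 48:
        raise ValueError("a KZG commitment is a 48-byte value")
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]

placeholder_commitment = b"\xc0" + bytes(47)   # 48 placeholder bytes
versioned_hash = kzg_to_versioned_hash(placeholder_commitment)
print(len(versioned_hash), versioned_hash[:1].hex())
```

The execution layer can then carry a 32-byte handle while the commitment and blob travel elsewhere.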

The same underlying idea also appears in data-availability systems beyond Ethereum. Avail’s documentation likewise describes KZG commitments as part of its data-availability design. The details of how a protocol encodes data and samples it vary, but the reason KZG keeps appearing is stable: it gives a compact cryptographic binding to polynomial-encoded data.

What does “constant-size” mean for KZG commitments, and what are the hidden costs?

It is easy to hear “constant-size commitment” and imagine that KZG somehow makes all costs disappear. It does not.

What stays constant is the communication size of the commitment and of a single evaluation proof, measured as group elements. What does not shrink is everything around them: the setup size and the prover’s polynomial arithmetic both grow with the degree, and verification, although it needs only a fixed number of pairings per opening, pays for operations that are far more expensive than hash evaluations. The original paper is explicit that the public parameters scale with the maximum supported degree t: setup publishes roughly t + 1 powers. So KZG compresses the committed object into a constant-size handle, but only after paying for a structured reference string of size linear in the degree bound.
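A quick calculation shows the asymmetry. It assumes 48-byte compressed group elements (the EIP-4844 encoding size) and counts only the t + 1 powers in the commitment group, ignoring any additional setup elements a verifier needs. The function name is hypothetical.

```python
G1_BYTES = 48   # assumed size of one compressed group element

def srs_g1_bytes(t):
    """Bytes needed to store the t + 1 published powers g^(alpha^i)."""
    return (t + 1) * G1_BYTES

for t in (15, 255, 4095):
    # degree bound, setup size in bytes, commitment size in bytes
    print(t, srs_g1_bytes(t), G1_BYTES)
```

The commitment stays at 48 bytes in every row while the setup grows linearly with the degree bound.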

This is an important design trade. If your application cares most about bandwidth, proof size, or on-chain verification footprint, KZG can be very attractive. If your application prioritizes transparent setup and simpler assumptions, a hash-based construction may be preferable even with larger proofs.

How do KZG batch openings reduce proof size and what are the trade-offs?

A further reason KZG became so influential is that it extends naturally to batch openings.

The original work showed that opening multiple evaluations can still be done with only a constant amount of communication overhead: essentially a single witness element in the batch setting described there. Later work developed more advanced batching techniques for multiple points and multiple polynomials, with different prover-verifier tradeoffs. The recurring idea is that the quotient-based witness can be generalized so that many consistency checks are folded together into a small proof.
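The generalization replaces x - z with the polynomial Z(x) that vanishes on every opened point, and the claimed value y with the remainder r(x) = φ(x) mod Z(x). The sketch below shows only the polynomial arithmetic, over a toy field of size 1019 with a hypothetical cubic. Committing to the quotient would then give the single batch witness.

```python
R = 1019  # toy prime field size, assumed for illustration

def evaluate(coeffs, x):
    acc = 0
    for a in reversed(coeffs):
        acc = (acc * x + a) % R
    return acc

def vanishing(points):
    """Z(x) = prod (x - z_i), coefficients lowest degree first."""
    z_poly = [1]
    for z in points:
        nxt = [0] * (len(z_poly) + 1)
        for i, c in enumerate(z_poly):
            nxt[i] = (nxt[i] - z * c) % R      # contribution of -z * c * x^i
            nxt[i + 1] = (nxt[i + 1] + c) % R  # contribution of c * x^(i+1)
        z_poly = nxt
    return z_poly

def poly_divmod(num, den):
    """Long division over F_R; returns (quotient, remainder)."""
    num = [c % R for c in num]
    inv_lead = pow(den[-1], -1, R)
    quot = [0] * max(len(num) - len(den) + 1, 0)
    for i in range(len(num) - len(den), -1, -1):
        coef = num[i + len(den) - 1] * inv_lead % R
        quot[i] = coef
        for j, d in enumerate(den):
            num[i + j] = (num[i + j] - coef * d) % R
    return quot, num[:len(den) - 1]

phi = [3, 2, 1, 4]            # 3 + 2x + x^2 + 4x^3
points = [2, 5]
Z = vanishing(points)         # (x - 2)(x - 5)
q, r = poly_divmod(phi, Z)    # phi = q * Z + r

# r has degree below the number of points and carries every claimed value.
print([evaluate(r, z) == evaluate(phi, z) for z in points])
```

One quotient now certifies both evaluations at once, at the cost of the verifier handling Z and r instead of a single point and value.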

This matters because modern proof systems rarely ask just one evaluation question. They ask many, often across several related polynomials. KZG’s batching story is one reason it became embedded in systems such as PLONK-family proving schemes and in protocols that need compact verification at scale.

But batching also exposes a broader lesson about KZG: the scheme’s elegance comes from algebraic structure, and exploiting more of that structure often yields smaller proofs at the price of more sophisticated assumptions, more verifier work, or larger structured reference strings. KZG is not one monolithic object so much as a base construction with a family of practical refinements.

What cryptographic assumptions does KZG rely on and what can go wrong?

| Layer | What can break | Consequence | Mitigation |
|---|---|---|---|
| Algebraic layer | Degree bound violated | Binding guarantees weaken | Enforce deg ≤ t; input checks |
| Cryptographic layer | Pairing or SDH assumption fails | Forgeable openings possible | Use vetted curves; conservative params |
| Setup / operational | Trapdoor (α) revealed | Complete collapse of binding | Multi‑party ceremony; verify transcript |

KZG’s security story has two distinct layers: the mathematics of low-degree polynomials, and the cryptographic assumptions that let group elements stand in for hidden exponent relations.

At the algebraic layer, binding depends on the polynomial degree bound. The scheme is designed for polynomials of degree at most t, where t matches the setup. If that assumption is violated, the rigidity argument no longer says what you want it to say. Degree bounds are not bookkeeping; they are part of the security boundary.

At the cryptographic layer, the original construction’s proofs rely on pairing-based assumptions such as t-SDH and related variants for stronger or batched properties. These are not the weakest or most familiar assumptions in cryptography. They are accepted in many practical systems, but they are still a meaningful cost of choosing KZG. The original paper also gives a Pedersen-style randomized variant, PolyCommitPed, which improves hiding properties while keeping computational binding under pairing assumptions.

The setup is the most visible operational risk. The public parameters are derived from a hidden secret often written as α or τ. If an attacker learns that secret trapdoor, they may be able to create fake openings to commitments, undermining binding. This is why KZG deployments rely on ceremonies that combine randomness from many participants. The safety claim is usually that one honest participant is enough: if at least one contributor destroys their secret contribution, the final trapdoor remains unknown.

That is the logic behind Ethereum’s KZG ceremony. The ecosystem ran a large public ceremony, coordinated participants and a sequencer, published transcripts, and emphasized auditability. This ceremony was not a side detail. It was part of making the trusted-setup assumption operationally credible for a production blockchain feature.
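The "one honest participant" property can be illustrated with a multiplicative update, the approach used by powers-of-tau style ceremonies: each contributor raises the i-th element to the i-th power of a private value, so the final trapdoor is the product of all private values. The sketch assumes the insecure toy group used for illustration (order-1019 subgroup modulo 2039) and omits the proofs of correct contribution that real ceremonies require. The secrets and function name are hypothetical.

```python
P, R, G = 2039, 1019, 4    # toy group: order-R subgroup of Z_P^*, generator G

def contribute(srs, secret):
    """Re-randomize the SRS: element i is raised to secret^i."""
    return [pow(elem, pow(secret, i, R), P) for i, elem in enumerate(srs)]

t = 3
srs = [G] * (t + 1)                     # starting point: trapdoor 1
participant_secrets = [12, 345, 678]    # each value is private to one contributor

for s in participant_secrets:
    srs = contribute(srs, s)

# The resulting trapdoor is the product of every secret. Recovering it
# requires all of them, so one destroyed secret keeps it unknown.
combined = 12 * 345 * 678 % R
print(srs == [pow(G, pow(combined, i, R), P) for i in range(t + 1)])
```

Each participant only ever sees group elements, never the running trapdoor, which is why the ceremony can be run sequentially in public.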

Why does KZG need a trusted setup, and how does that affect security and design?

Trusted setup is often presented as a simple downside. That is true, but incomplete.

It is a weakness because it creates a one-time ceremony with real social and technical risk. Someone must generate structured parameters, participants must contribute entropy correctly, implementations must verify transcripts correctly, and users must trust that the resulting SRS is sound. Transparent schemes avoid this entire class of concern.

But trusted setup is also the reason KZG is so compact. The structured powers of the hidden trapdoor are exactly what let the scheme evaluate arbitrary low-degree polynomials “in the exponent” and prove divisibility relations so efficiently. If you remove that structure, you usually lose the constant-size property or move to a different set of tradeoffs.

So the right comparison is not “KZG would be perfect without setup.” The right comparison is “KZG occupies one point in the design space: small proofs and pairings in exchange for a structured reference string.” Whether that is a good trade depends on the application.

How are KZG commitments and proofs serialized and verified in practice (e.g., EIP-4844)?

In deployed blockchain systems, KZG is not just an abstract algebraic object. It has concrete byte formats, validation rules, and API boundaries.

In Ethereum’s Deneb and EIP-4844 specifications, commitments and proofs are serialized as 48-byte encodings of BLS12-381 G1 points. Public APIs are expected to accept raw bytes and normalize them before internal processing. A blob is represented as a fixed number of field elements (4096 in the EIP-4844 setting) and the specification defines procedures to convert between blob data, polynomial form, commitments, proof generation, and proof verification. The readable consensus-spec reference makes explicit that verification reduces to a pairing check over terms derived from the commitment, the claimed value, the proof, and setup elements in G2.

This implementation detail matters for two reasons. First, it shows that KZG’s compactness is not just asymptotic theory; it turns into fixed wire sizes that protocol designers can reason about. Second, it highlights a frequent source of real-world risk: many failures happen not in the algebraic idea, but in serialization, point validation, field-element canonicality, or misuse of trusted setup material. Production libraries such as c-kzg-4844 and lower-level pairing libraries like blst exist largely because these edge conditions are too important to leave informal.
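A minimal sketch of the canonicality check, assuming the EIP-4844 layout of 4096 field elements of 32 bytes each, big-endian, each strictly below the BLS12-381 scalar field modulus. The constant names mirror those in the specification, and the function name follows the specs' style, but confirm every detail against the specification before relying on it. Production code should use a vetted library such as c-kzg-4844.

```python
BLS_MODULUS = 52435875175126190479447740508185965837690552500527637822603658699938581184513
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32

def blob_to_field_elements(blob: bytes) -> list[int]:
    """Split a blob into field elements, rejecting non-canonical encodings."""
    expected = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT
    if len(blob) != expected:
        raise ValueError(f"blob must be exactly {expected} bytes")
    elements = []
    for offset in range(0, len(blob), BYTES_PER_FIELD_ELEMENT):
        chunk = blob[offset:offset + BYTES_PER_FIELD_ELEMENT]
        value = int.from_bytes(chunk, "big")   # big-endian is assumed here
        if value >= BLS_MODULUS:
            raise ValueError("field element is not canonical (>= modulus)")
        elements.append(value)
    return elements

good_blob = bytes(FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT)
print(len(blob_to_field_elements(good_blob)))

bad_blob = b"\xff" * 32 + good_blob[32:]       # first element exceeds the modulus
try:
    blob_to_field_elements(bad_blob)
except ValueError as err:
    print("rejected:", err)
```

Rejecting out-of-range values up front matters because two different byte strings that reduce to the same field element would otherwise share a commitment.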

Common misconceptions about KZG commitments

| Misunderstanding | Reality | Practical implication |
|---|---|---|
| KZG is general compression | Commits polynomials only | Encode data as field elements first |
| Proof shows data availability | Only proves algebraic consistency | Combine with DA protocols/sampling |
| Trusted setup is ceremonial | Setup is core to security | Validate ceremony; prefer many contributors |

The most common misunderstanding is to think KZG is a general-purpose compression scheme for arbitrary data. It is not. KZG commits to polynomials over a field, and the reason it can do so compactly is that low-degree polynomials have strong algebraic structure. When applications use KZG for “data,” they first encode that data into field elements and then into a polynomial representation.
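One way to picture the encoding step is to treat each data value as the polynomial's evaluation at a fixed point and interpolate. The sketch below assumes a toy field of size 1019 and evaluation points 0, 1, 2, ... chosen for simplicity. Deployed systems choose their own field and evaluation domain, so this illustrates the idea rather than any protocol's encoding.

```python
R = 1019  # toy prime field size, assumed for illustration

def evaluate(coeffs, x):
    acc = 0
    for a in reversed(coeffs):
        acc = (acc * x + a) % R
    return acc

def interpolate(values):
    """Coefficients of the unique polynomial of degree < n with p(i) = values[i]."""
    n = len(values)
    coeffs = [0] * n
    for i, y in enumerate(values):
        basis, denom = [1], 1
        for j in range(n):
            if j == i:
                continue
            # multiply the basis polynomial by (x - j)
            nxt = [0] * (len(basis) + 1)
            for k, c in enumerate(basis):
                nxt[k] = (nxt[k] - j * c) % R
                nxt[k + 1] = (nxt[k + 1] + c) % R
            basis = nxt
            denom = denom * (i - j) % R
        scale = y * pow(denom, -1, R) % R      # y / prod_{j != i} (i - j)
        for k, c in enumerate(basis):
            coeffs[k] = (coeffs[k] + scale * c) % R
    return coeffs

data = list(b"KZG")                 # three bytes, each a small field element
poly = interpolate(data)
print([evaluate(poly, i) for i in range(len(data))] == data)
```

Once the data is a polynomial, committing to it and opening individual positions are the same commit and evaluation-proof operations described earlier.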

A second misunderstanding is to treat the proof as evidence of data possession or data availability by itself. A KZG proof only shows an algebraic consistency statement about a committed polynomial. Whether that is enough for data availability depends on the surrounding protocol: for example, how blobs are distributed, sampled, retained, or reconstructed.

A third misunderstanding is to treat trusted setup as merely ceremonial overhead. In KZG it is part of the primitive itself. You cannot cleanly separate the scheme from the quality and trust model of its setup.

Conclusion

KZG commitments are a polynomial commitment scheme built around a simple but powerful idea: commit to a polynomial by encoding its value at a hidden setup point. Because low-degree polynomials are rigid, and because pairings let verifiers check exponent relations, this yields a one-element commitment and short evaluation proofs.

That efficiency is why KZG became foundational in modern proof systems and in blockchain designs like EIP-4844. But the efficiency is not free. It depends on pairings, degree bounds, and a trusted setup whose trapdoor must remain unknown. The shortest way to remember KZG is this: it turns divisibility of polynomials into tiny cryptographic proofs by paying for structure up front.

What should you understand before using KZG commitments?

Understand the trust model and data-availability limits before depending on KZG-backed features, and then use Cube’s normal funding and trading flows to limit exposure. Check the protocol’s SRS/ceremony, verify on-chain pairing support and blob encoding details, and only trade or deposit once you’ve validated those facts.

- Look up the protocol specification that the asset uses (e.g., EIP-4844 / Deneb) and confirm it relies on KZG commitments and the exact blob encoding (field-element count, curve BLS12-381, 48-byte G1 encodings).

- Open the published SRS/ceremony transcript and confirm the SRS participants and hash match the repository or canonical source the project cites.

- Verify the chain supports on-chain pairing checks (precompile) and estimate gas cost for verification so you can factor fees into trading or transfer decisions.

- Fund your Cube account (fiat on-ramp or crypto transfer), open the relevant market or deposit flow for the asset, and read the asset’s listing notes or links to the SRS/spec before placing orders.

- For initial exposure, use a limit order and small position size until you’ve confirmed both the SRS integrity and that blob availability practices (propagation, sampling, retention) meet your risk tolerance.

Frequently Asked Questions

The trusted setup is a one-time generation of a structured reference string (SRS) that publishes powers of a secret trapdoor α (g, g^α, g^{α^2}, … up to degree t), and it is required because those powers let committers form g^{φ(α)} without learning α; without this structured SRS the scheme loses its constant-size evaluation and witness properties. The SRS scales with the degree bound t and must keep α unknown because if the trapdoor is revealed binding can fail.

If the trapdoor α is learned by an attacker they can forge fake openings (create commitments and corresponding evaluation proofs that should not exist), which undermines the binding property; this is why KZG deployments run multi‑contributor ceremonies and rely on “one honest participant” assumptions.

You cannot get the same constant-size KZG properties without structured public parameters; in practice people mitigate trust by running a distributed ceremony so the SRS is updatable and requires at least one honest contributor, but removing the trusted setup entirely requires changing to different primitives with different trade‑offs.

KZG gives single‑element (constant-size) commitments and single‑element evaluation proofs, whereas Merkle trees give logarithmic-size proofs; the tradeoff is that KZG relies on pairing-based cryptographic assumptions and a structured setup while Merkle constructions avoid trusted setup and rely only on hash function assumptions.

The scheme’s security crucially depends on the degree bound t in the setup: if a committed polynomial exceeds the bound the rigidity argument no longer applies and binding guarantees break down, so the degree limit is an explicit security boundary.

KZG supports batch openings where many evaluation checks can be folded into a single small witness; this often keeps communication nearly constant but typically increases algebraic/verification complexity, may require different batching randomness or SRS structure, and trades off verifier work and setup size against proof compactness.

In Ethereum’s EIP‑4844 and Deneb specs, KZG commitments and proofs are represented as fixed 48‑byte encodings of BLS12‑381 G1 points, blobs are encoded as a fixed number of field elements (e.g., 4096), and verification reduces to pairing checks using SRS elements in G2; implementations must also enforce field-element canonicality and serialization rules.

A KZG opening proves an algebraic consistency statement (that φ(x)−y is divisible by x−z at the hidden point) and does not by itself guarantee that large blob data are available or stored; data‑availability claims depend on the surrounding protocol (how blobs are distributed, sampled, and retained).

Security proofs and practical deployments of KZG rely on pairing‑based assumptions such as t‑SDH and related variants (and sometimes the Algebraic Group Model in proofs), so choosing KZG is choosing a somewhat stronger, pairing‑based assumption set compared with hash‑based alternatives.

Rotating or revoking an SRS requires generating a fresh SRS (effectively rerunning a ceremony or producing a new trusted setup) because the SRS is part of the primitive; the operational details (how to provision, rotate, publish, and validate new parameters) are implementation questions that real deployments must address via ceremonies and audit transcripts.
