What is a Token?

Learn what tokens are in blockchain systems, how they represent value, and why standards like ERC-20, ERC-721, and ERC-1155 matter.

Introduction

Tokens are the basic way blockchains represent assets, rights, balances, and claims that are not necessarily the chain’s native currency. That sounds simple until you notice a puzzle: on one network, a token is just a smart contract keeping score; on another, tokens are built into the ledger itself; and yet wallets and applications still talk about “sending tokens” as if the idea were uniform. The reason this works is that a token is less a particular piece of code than a shared agreement about how some unit of value is represented, updated, and recognized across a system.

That idea matters because most blockchain applications are really about tokens, not chains in the abstract. Stablecoins, governance assets, in-game items, vault shares, loyalty points, deeds, and bridged assets all depend on the same underlying move: take something that people care about and represent it in a machine-readable form that wallets, applications, and other contracts can work with. Once that representation is standardized, the token becomes composable. Exchanges can list it, wallets can display it, lending protocols can accept it, and other contracts can react to it.

The useful question, then, is not merely “what is a token?” but what problem does a token solve? A token solves the problem of representing shared state about ownership or entitlement in a way that software can verify and update without trusting a central database operator. From there, the rest follows: fungibility, uniqueness, minting, burning, metadata, approvals, transfer rules, and standards.

What does it mean that a token is a ledger representation?

The easiest mistake is to picture a token as a little digital object moving around the network. That is often good enough for casual conversation, but mechanically it is misleading. In most systems, what actually changes is a ledger entry: one account’s balance goes down, another’s goes up; one identifier now maps to a new owner; one vault share count increases while underlying assets are deposited.

So the core of a token is not the “thing” itself. The core is a state transition rule for a ledger. That rule answers questions like: who owns how much, what makes units interchangeable or distinct, who is allowed to create or destroy supply, and what interface outside applications can rely on when they interact with it.

This is why a broad, implementation-neutral definition is useful. The Token Taxonomy Framework describes a token as a digital representation of some shared value. That value may be purely digital, or it may be a receipt, title, or claim tied to something outside the chain. The same definition covers a stablecoin, a concert ticket, an NFT deed, a vault share, or a tokenized claim on real-world collateral. What changes is not the basic idea, but the rules attached to the representation.

That framing also explains why “token” is broader than “cryptocurrency.” A chain’s native asset, such as ETH or SOL, is part of the base protocol. A token is usually an asset represented within that system using its asset machinery. Sometimes that machinery is a smart contract, as with many Ethereum tokens. Sometimes it is a ledger-native feature, as with Cardano native assets. Either way, the token is a formally tracked representation of value.

Why do shared interpretation and token standards make tokens useful?

A token only becomes valuable in practice when many independent pieces of software interpret it the same way. If a wallet thinks a transfer succeeded, but an exchange parses the contract differently, or a lending market cannot tell whether approval works, the token is technically live but economically isolated.

This is why token standards matter so much. Ethereum’s documentation puts the point plainly: token standards define how tokens behave and interact across the ecosystem, making them easier to build and ensuring they work with wallets, exchanges, and DeFi platforms. The deeper reason is composability. A standard turns a custom asset into a predictable component.

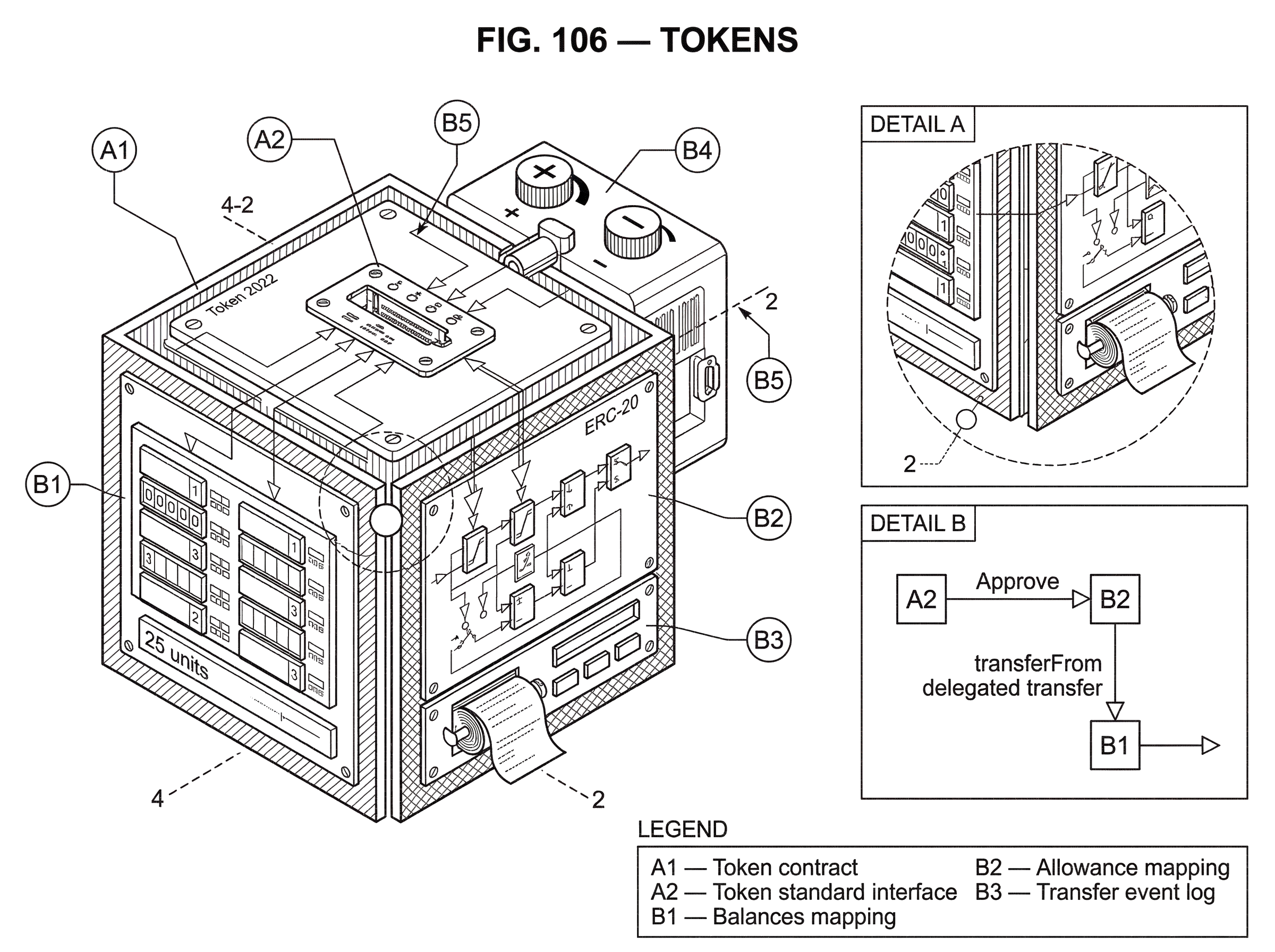

Here is the mechanism. Suppose a new fungible token follows ERC-20. A wallet already knows how to ask for a balance. An exchange already knows how to request delegated spending through approve and transferFrom. Indexers already know to watch Transfer events. None of these integrations need bespoke code for that one project. The token plugs into an existing software environment because its behavior is legible in advance.

Without standards, every token would be a custom integration problem. With standards, the ecosystem can scale. That is why ERC-20, ERC-721, and ERC-1155 are so important on Ethereum, why cw20 matters in CosmWasm, and why Solana’s SPL Token program gives applications a common base implementation. The specifics differ by platform, but the underlying purpose is the same: make asset behavior predictable enough that other software can safely compose with it.

How do fungible and non‑fungible tokens differ in accounting and integration?

The most basic divide in token design is whether units are interchangeable.

A fungible token is about quantity. If you have 10 units and I have 10 units of the same fungible token, there is no meaningful difference between your units and mine. The ledger tracks amounts, not individual identity. This is the right model for currencies, voting balances, staking balances, reward points, and vault shares.

A non-fungible token is about identity. The ledger tracks ownership of specifically identified units, and the distinction between them matters. Token #17 is not interchangeable with token #18, even if both belong to the same collection. This is the right model for deeds, collectibles, tickets with unique attributes, or any asset where provenance or distinct properties matter.

That distinction sounds obvious, but it is not merely a naming convention. It changes the storage model, the transfer model, and the integration model. In ERC-20, the main state is balances and allowances. In ERC-721, the main state is ownership of a unique tokenId, plus approval rules around that identifier. In other words, fungibility is not just “all tokens look alike.” It is a commitment to a particular kind of accounting.
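The difference in accounting can be made concrete with a minimal sketch. The class and field names below (`FungibleLedger`, `NonFungibleLedger`, `owner_of`, and so on) are illustrative assumptions, not the storage layout of any particular contract; they only mirror the state the two standards are described as tracking.

```python
from dataclasses import dataclass, field


@dataclass
class FungibleLedger:
    """ERC-20-style state: an amount per account, plus delegated allowances."""
    balances: dict = field(default_factory=dict)    # account -> amount
    allowances: dict = field(default_factory=dict)  # (owner, spender) -> amount


@dataclass
class NonFungibleLedger:
    """ERC-721-style state: one owner per token id, plus per-token approval."""
    owner_of: dict = field(default_factory=dict)  # token_id -> owner
    approved: dict = field(default_factory=dict)  # token_id -> approved account

    def balance_of(self, account):
        # Here a "balance" is a count of distinct ids, not an amount.
        return sum(1 for owner in self.owner_of.values() if owner == account)


ft = FungibleLedger(balances={"alice": 10, "bob": 10})
nft = NonFungibleLedger(owner_of={17: "alice", 18: "bob"})
```

In the fungible ledger, Alice's 10 units and Bob's 10 units are indistinguishable numbers. In the non-fungible ledger, the question is always which id belongs to whom.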

Some systems also support a middle ground often described as multi-token or semi-fungible design. ERC-1155 is the clearest example. Instead of deploying a separate contract for every token type, one contract can manage many token IDs, where each ID may correspond to a fungible, non-fungible, or other configured asset type. The gain is efficiency: batch transfers and shared logic reduce cost and make mixed-asset applications, especially games and marketplaces, easier to build.
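As a rough illustration of the multi-token idea, the sketch below keeps balances per token ID inside one ledger and moves several IDs in a single all-or-nothing batch. `MultiTokenLedger` and its method names are hypothetical; real ERC-1155 contracts also handle approvals, events, and receiver hooks, which are left out here.

```python
from collections import defaultdict


class MultiTokenLedger:
    def __init__(self):
        # balances[token_id][account] -> amount
        self.balances = defaultdict(lambda: defaultdict(int))

    def mint(self, to, token_id, amount):
        self.balances[token_id][to] += amount

    def batch_transfer(self, sender, recipient, token_ids, amounts):
        if len(token_ids) != len(amounts):
            raise ValueError("ids and amounts must have the same length")
        if any(amount < 0 for amount in amounts):
            raise ValueError("amounts must be non-negative")
        # Validate the whole batch first so it either fully applies or not at all.
        needed = defaultdict(int)
        for token_id, amount in zip(token_ids, amounts):
            needed[token_id] += amount
        for token_id, amount in needed.items():
            if self.balances[token_id][sender] < amount:
                raise ValueError(f"insufficient balance for id {token_id}")
        for token_id, amount in zip(token_ids, amounts):
            self.balances[token_id][sender] -= amount
            self.balances[token_id][recipient] += amount


ledger = MultiTokenLedger()
ledger.mint("alice", 1, 100)  # id 1 behaves like a fungible currency
ledger.mint("alice", 2, 1)    # id 2 has supply 1, so it behaves like an NFT
ledger.batch_transfer("alice", "bob", [1, 2], [40, 1])
```

One call moved both a fungible amount and a unique item, which is the efficiency gain the paragraph describes.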

Mechanically, what happens when you send a token?

Consider a simple fungible token payment on Ethereum. Alice wants to send 25 units of a token to Bob. At the user level, she clicks “send.” Under the hood, the token contract checks whether Alice’s balance is at least 25. If so, it subtracts 25 from Alice’s recorded balance, adds 25 to Bob’s, and emits a Transfer event. Off-chain wallets and indexers see that event and update their display of who owns what.

Notice what did not happen. No digital coin flew through space. No file changed hands. The system updated a shared state machine according to agreed rules. The event matters because other software uses it to reconstruct token activity. ERC-20 explicitly requires transfer and transferFrom to emit Transfer, and even zero-value transfers must be treated as normal transfers and emit the event. That may sound pedantic, but it is exactly the kind of predictability other systems depend on.
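The transfer just described can be sketched as a small state machine. This is a simplified model in Python rather than contract code, and `FungibleToken`, `events`, and the account names are assumptions made for illustration.

```python
class FungibleToken:
    def __init__(self, initial_balances):
        self.balances = dict(initial_balances)
        self.events = []  # append-only log that wallets and indexers would read

    def balance_of(self, account):
        return self.balances.get(account, 0)

    def transfer(self, sender, recipient, amount):
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if self.balance_of(sender) < amount:
            raise ValueError("insufficient balance")
        # The "send" is two ledger updates and one log entry.
        self.balances[sender] = self.balance_of(sender) - amount
        self.balances[recipient] = self.balance_of(recipient) + amount
        self.events.append(("Transfer", sender, recipient, amount))
        return True


token = FungibleToken({"alice": 100})
token.transfer("alice", "bob", 25)
token.transfer("alice", "bob", 0)  # zero-value transfers still emit an event
```

After these two calls the ledger shows 75 for Alice and 25 for Bob, and the log contains two `Transfer` entries, including the zero-value one.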

Now change the example slightly. Alice wants a decentralized exchange to trade on her behalf. She does not transfer tokens directly to the exchange contract first. Instead, she calls approve, setting an allowance for the exchange. Later, the exchange contract calls transferFrom to move an amount, up to that allowance, from Alice’s balance to the destination the trade requires. The allowance caps how much can be moved; it does not restrict where the tokens go. This delegated transfer pattern is what makes many DeFi interactions work. It is also why allowances are a common source of risk and UX complexity.
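A minimal sketch of the delegated pattern follows. `AllowanceToken`, `transfer_from`, and the account names `dex` and `pool` are hypothetical, and the model omits events and return values to stay short.

```python
class AllowanceToken:
    def __init__(self, balances):
        self.balances = dict(balances)
        self.allowances = {}  # (owner, spender) -> remaining amount

    def approve(self, owner, spender, amount):
        # Overwrites any previous allowance rather than adding to it.
        self.allowances[(owner, spender)] = amount

    def transfer_from(self, spender, owner, recipient, amount):
        if amount < 0:
            raise ValueError("amount must be non-negative")
        allowed = self.allowances.get((owner, spender), 0)
        if amount > allowed:
            raise PermissionError("amount exceeds allowance")
        if self.balances.get(owner, 0) < amount:
            raise ValueError("insufficient balance")
        self.allowances[(owner, spender)] = allowed - amount
        self.balances[owner] = self.balances.get(owner, 0) - amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount


token = AllowanceToken({"alice": 100})
token.approve("alice", "dex", 40)
token.transfer_from("dex", "alice", "pool", 30)  # within the allowance

try:
    token.transfer_from("dex", "alice", "pool", 20)  # only 10 remains
    over_limit_rejected = False
except PermissionError:
    over_limit_rejected = True
```

Note that the spender chooses the recipient. The allowance limits only the amount, which is one reason large or unlimited approvals carry risk.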

The same idea appears in other ecosystems with different mechanics. On Solana, common SPL Token operations include creating a mint, creating token accounts, minting, transferring, approving delegates, burning, freezing, and thawing. On Cardano, native assets are tracked directly by the ledger and can be carried together in token bundles within UTXO outputs. The user-facing action still feels like “send token,” but the underlying representation depends on the chain’s architecture.

How do token standards encode behavior beyond function names?

A standard is often described as an interface, but that phrase can sound thinner than it really is. A good token standard is not just a list of function names. It encodes a behavioral contract between implementers and integrators.

Take ERC-20. It defines core functions such as totalSupply, balanceOf, transfer, approve, allowance, and transferFrom, along with Transfer and Approval events. But the key is not the inventory of methods. The key is that each method comes with expectations. Callers must handle a false return value instead of assuming every call succeeds. approve overwrites the existing allowance rather than incrementing it. transferFrom implements the delegated withdrawal workflow. Metadata fields like name, symbol, and decimals are optional, so other contracts must not assume they exist.

Those details explain both the power and the limitations of the standard. ERC-20 became foundational because the interface was small enough to adopt widely, yet expressive enough for exchanges, wallets, and DeFi protocols to build around. At the same time, its allowance model introduced known UX and safety issues. The standard itself notes the race-condition risk in changing allowances and recommends that clients set allowance to 0 before updating it to a new value. In other words, standards create interoperability, but they also freeze design tradeoffs into the ecosystem.
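The allowance race can be seen in a few lines. The sketch below models only one owner and one spender; the `Allowance` class and the decision rule in the second scenario are assumptions chosen to illustrate the ordering problem, not part of the standard.

```python
class Allowance:
    def __init__(self, amount):
        self.remaining = amount
        self.total_spent = 0

    def approve(self, amount):
        self.remaining = amount  # overwrite, as ERC-20 approve does

    def spend(self, amount):
        if amount > self.remaining:
            raise PermissionError("amount exceeds allowance")
        self.remaining -= amount
        self.total_spent += amount


# Scenario 1: Alice changes 100 -> 50 directly, but the spender's
# transaction happens to be ordered before her change.
direct = Allowance(100)
direct.spend(100)   # spender uses the old allowance first
direct.approve(50)  # Alice's change lands afterwards
direct.spend(50)    # spender uses the new allowance too

# Scenario 2: Alice sets the allowance to 0, inspects what was spent,
# and only then decides on a new value (hypothetical policy: grant
# nothing more if the old allowance was already used).
careful = Allowance(100)
careful.spend(100)
careful.approve(0)
new_amount = 50 if careful.total_spent == 0 else 0
careful.approve(new_amount)
```

In the first scenario the spender moves 150 in total even though Alice never intended more than 100. Resetting to zero first gives her a point at which to observe what was already spent.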

ERC-721 does something similar for non-fungible tokens. Its central promise is that each NFT is identified by a unique tokenId within a contract, and the pair of contract address plus tokenId forms a globally unique identifier. Ownership, approvals, and transfer behavior are standardized around that identity model. The safeTransferFrom path adds an important protection: if the recipient is a contract, it must implement the proper receiver callback or the transfer reverts. That exists because otherwise NFTs can be sent to contracts that cannot handle them and become stuck.
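The receiver check can be sketched as follows. This is a simplified model: `Contract`, `Vault`, `LegacyContract`, `on_token_received`, and the `ACCEPT` constant are hypothetical stand-ins for a recipient contract, its callback, and the magic value the real callback is expected to return.

```python
ACCEPT = "accepted"  # stand-in for the standard's magic return value


class Contract:
    """Marker base class: recipients of this type are 'contracts'."""


class Vault(Contract):
    def on_token_received(self, operator, sender, token_id):
        return ACCEPT  # signals that this contract can handle NFTs


class LegacyContract(Contract):
    pass  # no receiver hook, so tokens sent here would be stuck


class Collection:
    def __init__(self, owners):
        self.owner_of = dict(owners)  # token_id -> owner

    def safe_transfer(self, sender, recipient, token_id):
        if self.owner_of.get(token_id) != sender:
            raise PermissionError("sender does not own this token")
        if isinstance(recipient, Contract):
            hook = getattr(recipient, "on_token_received", None)
            if hook is None or hook(sender, sender, token_id) != ACCEPT:
                raise RuntimeError("recipient cannot receive tokens")
        self.owner_of[token_id] = recipient


nft = Collection({17: "alice", 18: "alice"})
vault = Vault()
nft.safe_transfer("alice", vault, 17)

try:
    nft.safe_transfer("alice", LegacyContract(), 18)
    rejected = False
except RuntimeError:
    rejected = True
```

Token 17 ends up owned by the vault, while the transfer of token 18 fails and ownership stays with Alice, which is the protective behavior the safe path is meant to provide.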

ERC-1155 extends the idea further. Its contract can manage many token IDs, and it standardizes single and batch transfers, receiver hooks, metadata URI handling, and event-based accounting for balances and supply. The important insight is that the token unit is no longer “this contract” but often “this id within this contract.” That lets developers represent many assets under a common logic layer.

How do minting, transfer rules, and burning define a token’s lifecycle?

If standards make tokens legible to the ecosystem, lifecycle rules make them economically meaningful.

Every token design has to answer a few basic questions. How does supply come into existence? Who can move it? Can it be destroyed? Can some transfers be blocked or delegated? These are not secondary implementation details. They determine what the token is in practice.

Minting is the clearest example. A token with no mint function after deployment behaves very differently from a token whose supply can expand under governance, algorithmic rules, vault deposits, or bridge messages. Burning matters for the same reason: it defines whether supply can contract and under what authority. On Cardano, minting and burning are controlled by a minting policy, and the association between an asset and its policy is permanent. On Ethereum, many token contracts expose mint or burn logic through custom extensions around a standard interface. In vault standards like ERC-4626, new share tokens are minted when users deposit the underlying asset and burned when they redeem.

That last case is especially revealing. ERC-4626 vaults represent shares of a single underlying ERC-20 asset. The shares are themselves tokens, but their meaning is relational: each share is a claim on a portion of the vault’s managed assets. Here the token is not primarily a currency or collectible. It is an accounting wrapper around a position. The vault standard exists because integrations need a common way to understand deposits, withdrawals, share conversion, and total managed assets.
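Share accounting is easiest to see with numbers. The sketch below uses the proportional conversion commonly used by vaults, with integer division rounding down; the `Vault` class, the 1:1 rule for the first deposit, and the way yield is simulated are assumptions for illustration, and edge cases such as an empty vault with outstanding shares are ignored.

```python
class Vault:
    def __init__(self):
        self.total_assets = 0  # underlying tokens managed by the vault
        self.total_shares = 0  # share tokens in circulation
        self.shares = {}       # account -> share balance

    def convert_to_shares(self, assets):
        if self.total_shares == 0:
            return assets  # assumption: the first deposit mints 1:1
        return assets * self.total_shares // self.total_assets

    def convert_to_assets(self, shares):
        if self.total_shares == 0:
            return shares
        return shares * self.total_assets // self.total_shares

    def deposit(self, account, assets):
        minted = self.convert_to_shares(assets)
        self.total_assets += assets
        self.total_shares += minted
        self.shares[account] = self.shares.get(account, 0) + minted
        return minted

    def redeem(self, account, shares):
        if self.shares.get(account, 0) < shares:
            raise ValueError("insufficient shares")
        assets = self.convert_to_assets(shares)
        self.shares[account] -= shares
        self.total_shares -= shares
        self.total_assets -= assets
        return assets


vault = Vault()
alice_shares = vault.deposit("alice", 1000)  # mints 1000 shares
vault.total_assets += 100                    # simulate 10% yield on the pool
bob_shares = vault.deposit("bob", 550)       # 550 * 1000 // 1100 = 500 shares
alice_assets = vault.redeem("alice", 1000)   # 1000 * 1650 // 1500 = 1100
```

Alice's share count never changed, but each share became a claim on more of the underlying asset. That is the sense in which the share token is an accounting wrapper around a position.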

Maker’s Dai shows another lifecycle pattern. Dai is minted against collateral placed in protocol vaults, and its stability depends on collateralization, liquidation, and governance-managed parameters. MKR, by contrast, is both a governance token and a recapitalization mechanism: the protocol can mint MKR in debt auctions when system losses need to be absorbed. These are both tokens, but the rules governing creation and destruction reveal very different roles.

Restrictions matter too. Some tokens support freezing, delegated control, or non-transferability. Solana’s common token instructions explicitly include Freeze Account and Thaw Account. ERC-4626 vault shares may implement ERC-20 while still reverting on transfer or transferFrom if the vault is intentionally non-transferable. A token is therefore not defined by transferability alone. It is defined by the set of operations the system recognizes as valid.

How do tokenized claims depend on off‑chain assumptions and governance?

| Token type | Claim dependency | On-chain verifiability | Main trust assumption | Typical risk | Mitigation |
|---|---|---|---|---|---|
| Native ledger asset | Native protocol only | Full ledger proofs | Protocol security | Market volatility | Protocol design, decentralization |
| Vault share | Underlying pooled assets | Share accounting on-chain | Vault operator honesty | Custodian or strategy failure | Audits and transparent reserves |
| Bridged token | Lock on source chain | Bridge state only | Bridge custody and relayers | Unbacked minting exploit | Multisig, proofs, audits |
| Representative stablecoin | Off-chain reserves or IOU | Depends on issuer disclosures | Issuer solvency and law | Reserve mismanagement | Regular audits, legal recourse |

At this point it helps to separate two kinds of token value.

Some tokens are intrinsic to the ledger system itself. Their value comes from protocol use, governance rights, or market demand for the token as a digital asset. Others are representational claims. A stablecoin may represent a claim on collateral or on a redemption process. A vault share represents a fraction of pooled assets. A bridged token represents an asset supposedly locked somewhere else. An NFT may represent a deed, license, or external entitlement.

This is where many smart readers get tripped up. The chain can prove the existence and ownership of the token representation. It cannot automatically prove every external fact the token is supposed to stand for. If a bridged asset is minted on one chain, its reliability depends on whether the bridge really locked or controls the corresponding asset on the source chain. If a token represents off-chain property, legal and operational systems must make that representation meaningful in the world outside the ledger.

Wormhole’s exploit is a clean illustration of the point. The attacker was able to mint wrapped ETH on Solana without providing the corresponding Ethereum collateral. The token interface could still look normal locally. Wallets could display balances. Protocols could see a standard token. But the backing assumption had failed. The token representation remained on-chain while the economic claim it was supposed to embody became false.
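The gap between a normal-looking token and a broken backing assumption can be expressed as a simple invariant. The sketch below is a generic model, not a reconstruction of the Wormhole exploit; `BridgeState`, `mint_without_lock`, and the amounts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BridgeState:
    locked_on_source: int = 0  # collateral held on the source chain
    wrapped_supply: int = 0    # wrapped tokens minted on the destination

    def lock_and_mint(self, amount):
        # Intended path: every wrapped unit corresponds to a locked unit.
        self.locked_on_source += amount
        self.wrapped_supply += amount

    def mint_without_lock(self, amount):
        # Models a failure of mint authorization: supply grows, backing does not.
        self.wrapped_supply += amount

    def backing_ratio(self):
        if self.wrapped_supply == 0:
            return 1.0
        return self.locked_on_source / self.wrapped_supply


bridge = BridgeState()
bridge.lock_and_mint(1000)
ratio_before = bridge.backing_ratio()
bridge.mint_without_lock(1000)
ratio_after = bridge.backing_ratio()
```

Nothing about the wrapped token's interface changes between the two measurements. Only the relationship between supply on one chain and collateral on the other does, and that relationship cannot be read from the destination-chain token alone.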

That is not a problem unique to bridges. It is the general rule for tokenized claims: the stronger the claim on something external, the more the token depends on governance, collateral systems, legal enforcement, or security assumptions outside the bare token interface. The interface tells you how to interact with the token. It does not, by itself, guarantee what the token is worth.

How and why do token architectures differ between blockchains?

It is tempting to talk about tokens as if Ethereum’s model were the universal one. It is not.

Ethereum popularized the contract-based token standard model. ERC-20, ERC-721, ERC-1155, and ERC-4626 all describe interfaces implemented in smart contracts. This made token innovation extremely fast because developers could design new asset behavior at the application layer. The tradeoff is that basic token safety depends heavily on contract correctness and integrator discipline.

Cardano takes a different route for many token operations. It is a multi-asset ledger, so native assets are tracked and transferred at the ledger level without requiring smart contracts for ordinary token movement. Assets are identified by PolicyID.AssetName, and minting or burning is controlled by a minting policy script. This reduces dependence on custom transfer logic for basic operations, though it introduces its own design constraints, such as minimum ada requirements per token-carrying output.

Solana’s SPL Token system offers another pattern: a common token program handles mint, account, transfer, delegate, burn, and authority operations. Applications interact with that shared program rather than each project deploying entirely bespoke token logic from scratch. Token-2022, the newer extension-enabled version of the program, stays compatible with the original program’s base instructions, so common operations work the same way.

CosmWasm’s cw20 shows the same interoperability instinct in a different environment: define an interface specification so that contracts can implement fungible tokens in a way consumers recognize. The family resemblance across ecosystems is more important than the surface differences. Everywhere, the problem is the same: represent asset state consistently enough that tools and applications can interoperate.

What integration boundary issues remain even when a token follows a standard?

People sometimes treat token standards as if they solved the whole problem. In reality, they solve the first interoperability problem: how software should talk to the token. The more subtle problems begin at the boundaries.

Metadata is one example. ERC-20 metadata fields are optional. ERC-721 metadata commonly points to a tokenURI, but the standard does not guarantee permanence or authenticity of off-chain content. ERC-1155 uses URI templates with client-side {id} substitution, but observers still need reliable hosting or indexing. Solana’s introductory token docs push metadata to Metaplex rather than baking it into the basic token explanation. The recurring lesson is that a token standard often standardizes where metadata is referenced, not whether the metadata is trustworthy.
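The client-side substitution for ERC-1155 is mechanical: the token ID is written as lowercase hexadecimal, without a `0x` prefix, zero-padded to 64 characters, and inserted in place of `{id}`. A minimal sketch, where `resolve_uri` and the example URL are assumptions:

```python
def resolve_uri(template, token_id):
    """Replace {id} in an ERC-1155 style URI template with the padded hex id."""
    if not 0 <= token_id < 2**256:
        raise ValueError("token id must fit in 256 bits")
    hex_id = format(token_id, "064x")  # lowercase hex, 64 chars, no 0x prefix
    return template.replace("{id}", hex_id)


uri = resolve_uri("https://example.com/items/{id}.json", 314592)
```

The result ends in `...04cce0.json`. The function only builds the address; whether the content hosted there is available or authentic is outside what the standard can guarantee.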

Enumeration is another boundary problem. ERC-1155 relies heavily on events for off-chain enumeration of balances and token existence. That is efficient on-chain, but it means explorers, marketplaces, and analytics systems must index logs correctly. Cardano wallet and application developers need to reason about token bundles and minimum ada constraints in UTXO outputs. Even when the token logic is standardized, state discovery and display are often partly off-chain problems.
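An indexer's job can be sketched as replaying transfer events into a balance table. The event tuple layout, the `ZERO` placeholder for the zero address (used here to mark mints and burns), and the function name are assumptions for illustration.

```python
from collections import defaultdict

ZERO = "0x0"  # stand-in for the zero address that marks mints and burns


def balances_from_events(events):
    """Rebuild (token_id, account) -> amount from a list of transfer events.

    Each event is a tuple: (token_id, sender, recipient, amount).
    """
    balances = defaultdict(int)
    for token_id, sender, recipient, amount in events:
        if sender != ZERO:
            balances[(token_id, sender)] -= amount
        if recipient != ZERO:
            balances[(token_id, recipient)] += amount
    # Drop empty entries so the result lists only current holdings.
    return {key: value for key, value in balances.items() if value != 0}


events = [
    (1, ZERO, "alice", 100),   # mint 100 of id 1 to alice
    (1, "alice", "bob", 40),   # alice sends 40 to bob
    (2, ZERO, "alice", 1),     # mint a unique item, id 2
    (1, "bob", ZERO, 10),      # bob burns 10
]
holdings = balances_from_events(events)
```

If the indexer misses or misorders a single log entry, the reconstructed view diverges from the real ledger, which is why this kind of state discovery is an integration risk in its own right.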

Finally, permissions and approvals are integration boundaries where many failures occur. ERC-20 allowances are famously subtle. Safe receiver hooks in ERC-721 and ERC-1155 exist because sending tokens to contracts that do not understand them can cause assets to be trapped. Tokenized vault previews in ERC-4626 are useful for planning transactions, but the standard warns that they can be manipulated and are not always safe to use as price oracles. Standards help, but they do not remove the need to understand what an integration is assuming.

Conclusion

A token is best understood as a standardized ledger representation of value, rights, or claims. Sometimes that representation tracks interchangeable quantities; sometimes it tracks unique identities; sometimes it tracks shares in an underlying pool or a claim on assets elsewhere. What makes tokens powerful is not just that they exist on-chain, but that standards and lifecycle rules make them legible to wallets, exchanges, protocols, and users.

If you want the shortest durable intuition, remember this: a token is not mainly a digital object; it is a shared rule for accounting. Once many systems agree on that rule, assets become programmable and composable. And once you see that, the differences between ERC-20, NFTs, vault shares, native assets, and bridged representations become variations on the same underlying idea rather than separate worlds.

How do you evaluate a token before using or buying it?

Evaluate a token by checking its on‑chain rules, supply mechanics, and any off‑chain assumptions before you buy or grant approvals. On Cube Exchange you can act on that assessment: fund your account, open the token market or approval flow, and execute a controlled trade or approval once you’re satisfied with the checks.

- Check the token contract on a block explorer and verify the source code; inspect totalSupply, decimals, and whether mint/burn, pausable, or blacklist/owner roles exist.

- Review tokenomics and distribution: look for team/vesting schedules, locked allocations, and large holder concentration by scanning recent Transfer events.

- If the token is a bridged or collateralized asset, verify the bridge/custodian contract, multisig attestations, and recent mint/burn attestations or audits.

- On Cube Exchange, fund your account, open the token market or token approval flow, and place a limit order or set a restricted allowance (avoid unlimited approvals).

Frequently Asked Questions

A token is a representation of value tracked by the chain’s asset machinery (often a smart contract) rather than the chain’s native currency, which is part of the base protocol; tokens can be implemented as contract-based assets (Ethereum) or ledger‑native assets (Cardano).

A token standard encodes behavioral expectations, not just function names: it specifies which calls and events exist and how they should behave (e.g., failure handling, what events must be emitted, and which metadata are optional), so integrators can rely on predictable semantics.

Because ERC-20’s approve/allowance model separates authorization from transfer, concurrent transactions can create a race condition when changing allowances; the EIP and ecosystem guidance therefore recommend setting allowance to zero before updating it to a new value.

“Sending a token” is a ledger state transition: the contract or ledger verifies the sender’s balance/rights, updates balances or ownership records, and emits standard events (like Transfer) so wallets and indexers can update their views - no digital file or coin physically moves.

Bridged or wrapped tokens depend on off-chain or cross-chain assumptions about backing; if a bridge/minting authority is compromised, wrapped tokens can be minted without real collateral - as in the Wormhole incident - so the on‑chain token interface can look normal while the economic claim is false.

Fungible tokens track quantities (balances and allowances), non-fungible tokens track unique ownership of tokenIds, and multi-token standards (like ERC-1155) let one contract manage many IDs - these choices change storage layout, transfer primitives (single vs batch), and how integrators enumerate or index assets.

Different chains make different tradeoffs: Ethereum popularized contract-based, application-layer token logic; Cardano tracks native assets at the ledger level with permanent minting policies; Solana uses a shared SPL Token program that applications call instead of deploying bespoke token contracts.

Standards often leave metadata hosting, token enumeration, and some discovery tasks to off‑chain systems: ERC‑20 metadata is optional, ERC‑1155 enumeration relies on emitted events, and tokenURI fields are off‑chain pointers - so explorers, wallets, and marketplaces must handle hosting, indexing, and integrity checks themselves.

Lifecycle rules (who can mint or burn, whether transfers can be restricted) determine a token’s economic role: for example, ERC‑4626 shares are minted/burned to represent vault deposits/redemptions, while Maker’s Dai and MKR have mint/burn and governance roles that shape stability and recapitalization behavior.

ERC‑721 and ERC‑1155 include “safe” transfer paths that require recipient contracts to implement receiver hooks; if the recipient contract lacks the hook, the transfer must revert to avoid permanently trapping tokens.
