What is a Bridge?

Learn what a blockchain bridge is, how bridges move assets and messages across chains, the main trust models, and why bridge security is hard.

Introduction

A bridge is a system that lets one blockchain recognize and act on something that happened on another blockchain. That sounds simple, but it solves a surprisingly hard problem: blockchains are designed to reach agreement within their own world, not across many worlds at once.

If you send ETH on Ethereum, Solana does not automatically know it happened. If a token is locked on one chain, another chain has no native way to verify that lock and issue a corresponding asset. A bridge exists to carry that fact across the boundary. In the most general sense, a bridge transfers messages between chains; moving assets is just the most popular consequence of that message transfer.

That is why bridges matter. They let users move value to another ecosystem, access applications on another chain, and onboard to environments such as Ethereum layer 2 networks. They also let developers treat multiple chains less like isolated islands and more like components of a larger system. But every bridge has to make a tradeoff about how the destination chain decides to trust information from the source chain, and that tradeoff is the center of bridge design.

What problem does a blockchain bridge solve?

A blockchain is good at answering questions about its own history. Ethereum can verify Ethereum blocks. A Cosmos chain can verify its own state transitions. Solana can verify Solana transactions. What a chain generally cannot do cheaply and natively is verify the full consensus process of some other chain.

That creates a basic interoperability gap. Suppose Alice locks 10 ETH in a contract on Ethereum and wants to use equivalent value on another chain. The destination chain must decide whether the lock event really happened, whether it is final, whether it has already been used before, and what action should follow. Without a bridge, that destination chain has no built-in source of truth for those facts.

So a bridge is really a cross-chain verification system. Asset transfer is the user-facing story, but underneath it, the bridge is answering a narrower question: what evidence is good enough for chain B to accept a claim about chain A?

Once that clicks, many confusing details become easier to organize. Wrapped assets, relayers, guardians, oracles, light clients, proofs, and threshold signers are all different ways of answering the same trust question.

How does a bridge prove a remote event and trigger actions on another chain?

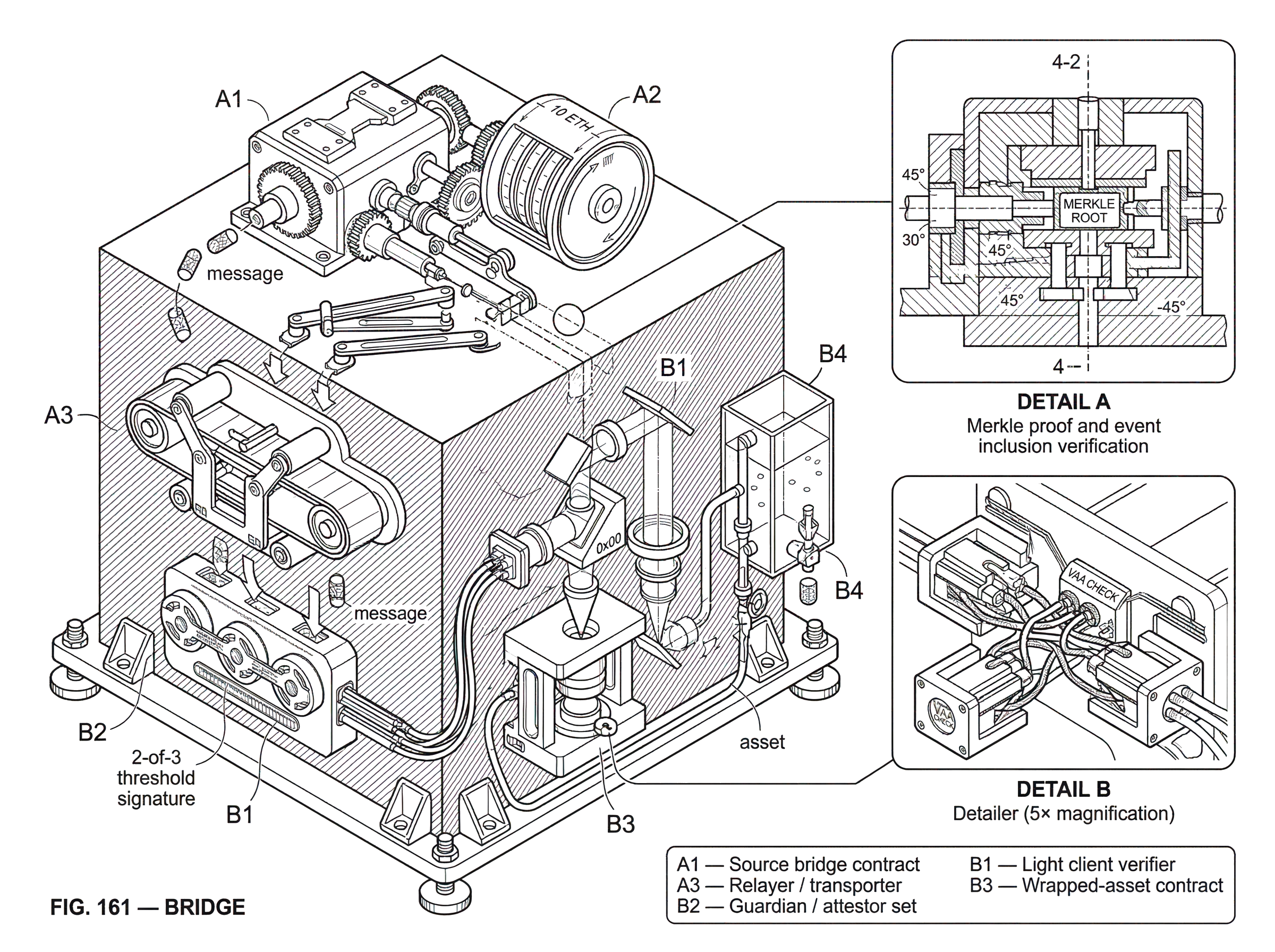

Most bridges follow the same abstract pattern. Something happens on the source chain. The bridge turns that event into evidence. That evidence is delivered to the destination chain. Then the destination chain executes the corresponding effect.

In an asset bridge, the source-chain event is usually that tokens were locked, escrowed, or burned. The destination-chain effect is usually that tokens are minted, released from liquidity, or otherwise credited to the recipient. In a messaging bridge, the source-chain event may be an application sending a packet or command, and the destination-chain effect is a contract call, state update, or token transfer.

The important invariant is this: the destination chain should act only if the source-chain event is valid and has not already been consumed in a conflicting way. If that invariant fails, a bridge can create unbacked assets, release collateral twice, or execute messages that were never legitimately authorized.

A simple narrative example makes this concrete. Imagine a user wants to move a stablecoin from Ethereum to another chain. They send the token into a bridge contract on Ethereum. That contract now holds the original asset in escrow. Somewhere, the bridge system records an event saying that this deposit happened, for this amount, for this recipient, and at this time or block. A relayer or other off-chain process notices the event and carries evidence of it to the destination chain. The destination-side bridge verifies that evidence using whatever trust model it was built around. If the evidence passes, the destination chain either mints a wrapped representation of the stablecoin or releases liquidity from a pool. The user sees a token arrive, but mechanically what really happened is that the destination chain accepted a claim about Ethereum and executed a consequence.
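The deposit flow above can be sketched in a few lines of Python. This is a minimal illustration, not any particular bridge's implementation: `DepositEvent`, `DestinationBridge`, and the `verify` callback are hypothetical names, and `verify` stands in for whatever trust model the bridge actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set, Tuple


@dataclass(frozen=True)
class DepositEvent:
    """A claim about the source chain: who deposited what, and for whom."""
    source_chain: str
    nonce: int        # assumed unique per deposit on the source chain
    recipient: str
    amount: int


@dataclass
class DestinationBridge:
    # verify() is a placeholder for the trust model (signatures, light client, proof).
    verify: Callable[[DepositEvent, bytes], bool]
    consumed: Set[Tuple[str, int]] = field(default_factory=set)
    balances: Dict[str, int] = field(default_factory=dict)

    def process(self, event: DepositEvent, evidence: bytes) -> None:
        key = (event.source_chain, event.nonce)
        if key in self.consumed:
            raise ValueError("event already consumed")
        if not self.verify(event, evidence):
            raise ValueError("evidence rejected")
        # Record consumption before crediting so a replay can never credit twice.
        self.consumed.add(key)
        self.balances[event.recipient] = (
            self.balances.get(event.recipient, 0) + event.amount
        )
```

The two checks map directly onto the invariant: `verify` guards against invalid events, and the `consumed` set guards against an event being used twice.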

That same logic applies beyond tokens. A cross-chain governance action, an interchain account command, or a message to a lending protocol is still just: observe event, verify event, execute result.

Trusted vs. trustless bridges: what's the difference?

The most common dividing line in bridge design is whether the bridge introduces new trust assumptions beyond the chains it connects.

A trusted bridge depends on some external operator, custodian, committee, or service to attest that an event happened. The destination chain accepts the operator’s word, directly or indirectly. This can be operationally simple and fast, but it means users now depend on that external party not to censor, collude, lose keys, or behave incorrectly. Ethereum’s general bridge guidance treats this as a meaningful extra risk: once external verifiers are inserted, the bridge moves away from the security of the underlying domains.

A trustless bridge, in the stricter sense used in interoperability discussions, tries to make the destination chain verify the source-chain fact in a way that is equivalent or close to equivalent to the underlying chains’ own security. In practice, this often means on-chain verification through light clients, validity proofs, or similarly strong cryptographic mechanisms rather than simple operator signatures.

The distinction is useful, but it is not perfectly binary. Real bridges live on a spectrum. Some rely on a multisig or MPC signer set. Some use decentralized oracle networks. Some use light clients plus off-chain relayers, where integrity is trust-minimized but liveness still depends on relayer participation. Some use optimistic schemes that assume honesty unless challenged. Some use zero-knowledge proofs to compress verification costs. The central question is always the same: what additional assumptions must the user accept for the bridge to work?

How do lock‑and‑mint, burn‑and‑mint, and liquidity‑network bridges differ?

| Pattern | Backing model | Return path | Liquidity risk | Speed | Best for |
|---|---|---|---|---|---|
| Lock-and-mint | Escrowed original assets | Redeem by unlocking escrow | Low if escrow secure | Moderate | Preserve supply backing |
| Burn-and-mint | Source tokens destroyed | Return via market liquidity | Higher, market dependent | Moderate | Reduce custody concentration |
| Liquidity-network | Pools and market makers | Instant via pool liquidity | Inventory fragmentation risk | Fast | Best UX, low latency |

When people think of a bridge, they often imagine the familiar lock-and-mint flow. You lock an asset on the source chain, and the bridge issues a corresponding wrapped asset on the destination chain. This design is common because it preserves a supply relationship: the wrapped token is supposed to be backed by the original token held elsewhere.

That backing relationship is exactly why bridge security matters so much. If the bridge can be tricked into minting wrapped assets without the original collateral being locked, the wrapped token becomes undercollateralized or entirely unbacked. The Wormhole exploit is a famous example of this class of failure: an attacker was able to mint wrapped ETH on Solana without depositing the corresponding ETH collateral on Ethereum, creating a severe supply integrity problem until outside capital stepped in.
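The backing relationship can be written down as a simple accounting invariant: wrapped supply must never exceed escrowed collateral. The sketch below is a hypothetical global view, such as an off-chain monitor might keep; a real destination contract cannot read the source-chain escrow directly, which is exactly why it depends on verified messages. The class and method names are illustrative.

```python
class LockAndMintLedger:
    """Global view of a lock-and-mint bridge, e.g. for an off-chain monitor."""

    def __init__(self) -> None:
        self.escrowed = 0        # original tokens held on the source chain
        self.wrapped_supply = 0  # wrapped tokens outstanding on the destination

    def lock(self, amount: int) -> None:
        self.escrowed += amount

    def mint(self, amount: int) -> None:
        # Refuse any mint that would leave wrapped tokens unbacked.
        if self.wrapped_supply + amount > self.escrowed:
            raise ValueError("mint would exceed escrowed collateral")
        self.wrapped_supply += amount

    def redeem(self, amount: int) -> None:
        # Burn wrapped tokens and release the same amount from escrow.
        if amount > self.wrapped_supply:
            raise ValueError("cannot burn more than the outstanding supply")
        self.wrapped_supply -= amount
        self.escrowed -= amount

    def is_fully_backed(self) -> bool:
        return self.wrapped_supply <= self.escrowed
```

An exploit of the kind described above corresponds to a mint that bypasses the collateral check, after which `is_fully_backed()` would return `False`.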

A second pattern is burn-and-mint. Instead of holding the original asset in escrow, the source-side representation is destroyed and a new representation is created elsewhere. This can reduce some custody concentration, but it changes the liquidity picture. Industry guidance notes that burn-based designs can increase liquidity risk because moving back may depend on markets and available liquidity rather than simply redeeming escrowed collateral.

A third pattern is liquidity-network bridging. Instead of proving that a token should be newly minted, the system uses pools or market makers on multiple chains. The user deposits on one side, and liquidity is released on the other. This can improve speed and user experience, but it introduces inventory management, pricing, and liquidity-fragmentation concerns. The core trust question does not disappear; it just shifts toward settlement guarantees and pool solvency.

Why are bridges fundamentally about message passing rather than just tokens?

It is tempting to think bridges are about tokens. More fundamentally, bridges are about messages.

A token bridge only works because one chain sends a fact like “10 units were deposited for recipient X” and another chain interprets that fact as “mint or release 10 units to X.” If you can send arbitrary authenticated messages, then token movement becomes just one application among many. The same channel can carry instructions for governance, lending, account control, settlement, or any other contract behavior that can be encoded and interpreted on the destination side.

This is explicit in protocols such as IBC and CCIP. IBC is designed as a general protocol for chains to exchange arbitrary byte-encoded data. Its architecture separates transport and authentication from the application logic that interprets packets. CCIP likewise exposes distinct primitives for arbitrary messaging, token transfer, and programmable token transfer, where tokens and instructions move together so the destination chain can immediately use the received asset according to the attached data.

That separation matters because it clarifies what part is fundamental and what part is convention. The fundamental part is secure cross-chain agreement on whether a message is valid. The convention is what the receiving application chooses to do with that message.
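One way to picture the split between authentication and application logic is a small router: it decides whether a packet is valid, then hands the payload to whichever application registered for it. This is a hedged sketch with hypothetical names (`MessageRouter`, `authenticate`, the JSON packet layout); it does not reproduce the IBC or CCIP interfaces.

```python
import json
from typing import Callable, Dict


class MessageRouter:
    """Authenticates packets, then dispatches them to registered applications."""

    def __init__(self, authenticate: Callable[[bytes, bytes], bool]) -> None:
        # authenticate(packet, evidence) is a placeholder for the trust model.
        self.authenticate = authenticate
        self.handlers: Dict[str, Callable[[dict], None]] = {}

    def register(self, app: str, handler: Callable[[dict], None]) -> None:
        self.handlers[app] = handler

    def deliver(self, packet: bytes, evidence: bytes) -> None:
        if not self.authenticate(packet, evidence):
            raise PermissionError("packet failed authentication")
        message = json.loads(packet)
        # The router does not interpret the payload; the application does.
        self.handlers[message["app"]](message["payload"])


# Two applications sharing one authenticated channel.
balances: Dict[str, int] = {}
votes: Dict[str, int] = {}


def handle_transfer(payload: dict) -> None:
    balances[payload["to"]] = balances.get(payload["to"], 0) + payload["amount"]


def handle_vote(payload: dict) -> None:
    votes[payload["proposal"]] = votes.get(payload["proposal"], 0) + 1
```

Token transfer is just `handle_transfer`; a governance vote travels over the same channel and differs only in how the receiving application interprets the payload.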

How does a destination chain verify that an event really happened on the source chain?

| Mechanism | Who attests | Integrity | Liveness | Cost | Best for |
|---|---|---|---|---|---|
| External attestors | Validator set or guardians | Requires trusted supermajority | Operators control delivery | Low on-chain cost | Fast, simple bridges |
| Light clients | On-chain light client verification | Security matches source chain | Relayers needed for delivery | Moderate verification cost | IBC-style trust-minimized links |
| Proof systems | zk / validity proofs | Cryptographic proof guarantees | Prover nodes produce proofs | High prover cost, low verify | High-assurance trustless bridges |
| Oracle networks | Decentralized oracle / DONs | Assume honest oracle nodes | Relayers/nodes affect liveness | Moderate cost and complexity | Programmable messaging (CCIP) |

Bridge designs differ most sharply in their verification mechanism.

One family uses external attestors. A set of validators, guardians, or oracle networks observes the source chain and signs statements about events. The destination chain checks that enough authorized parties signed. Wormhole is a well-known example of this style: its signed VAAs are valid when a supermajority of guardians signs the same message. This creates a clear threshold trust model. If the required threshold behaves honestly, the message is accepted correctly. If the threshold is compromised or if the verification logic around those signatures is flawed, the bridge can fail catastrophically.
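A threshold check of this kind can be sketched as follows. Two assumptions are worth flagging: HMAC with per-guardian secret keys is used only as a stand-in for real public-key signatures so the example runs on the standard library, and the quorum rule (strictly more than two thirds of the set) is an illustrative choice rather than a statement about any specific protocol's parameters.

```python
import hashlib
import hmac
from typing import Dict


def sign(key: bytes, message: bytes) -> bytes:
    """Stand-in for a guardian signature (real systems use public-key signatures)."""
    return hmac.new(key, message, hashlib.sha256).digest()


def quorum(guardian_count: int) -> int:
    """Smallest number of signers strictly greater than two thirds of the set."""
    return (2 * guardian_count) // 3 + 1


def verify_attestation(
    message: bytes,
    signatures: Dict[str, bytes],      # guardian name -> signature
    guardian_keys: Dict[str, bytes],   # authorized guardian set
) -> bool:
    # Keying signatures by guardian name means each guardian counts at most once.
    valid_signers = {
        name
        for name, signature in signatures.items()
        if name in guardian_keys
        and hmac.compare_digest(signature, sign(guardian_keys[name], message))
    }
    return len(valid_signers) >= quorum(len(guardian_keys))
```

Everything the destination chain believes rests on `guardian_keys` and on this function being correct, which is why both key compromise and verification bugs are catastrophic in this model.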

Another family uses light clients. Here, each chain verifies compact evidence about the other chain’s headers and state, allowing it to check whether a claimed event really appears in a finalized block. IBC is the canonical example. Each side of an IBC connection uses a light client of the other chain, while off-chain relayers carry packets between them. The relayer is the transport mechanism, not the source of truth. That distinction is important: relayers affect liveness and speed, but the validity of the message comes from on-chain verification against the light client.
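The core check a light client enables is an inclusion proof against a root it already trusts. Below is a minimal sketch using a plain binary SHA-256 Merkle tree; real chains use their own tree structures and hashing rules, so treat the layout (duplicating the last node on odd levels, no domain separation) as an assumption made for brevity.

```python
import hashlib
from typing import List, Tuple

Proof = List[Tuple[bytes, bool]]  # (sibling hash, sibling is on the left)


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: List[bytes]) -> List[bytes]:
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    """Root of a non-empty list of leaves."""
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: List[bytes], index: int) -> Proof:
    """What a relayer would assemble off-chain for the leaf at `index`."""
    level = [h(leaf) for leaf in leaves]
    proof: Proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: Proof, trusted_root: bytes) -> bool:
    """What the destination chain checks against a root from its light client."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == trusted_root
```

The relayer supplies `leaf` and `proof`, but only the light client supplies `trusted_root`, so a relayer that fabricates a packet cannot make `verify_inclusion` return `True`.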

A third family uses proof systems, including zero-knowledge designs. zkBridge is an example of the general idea: instead of trusting an external committee, the destination chain verifies a succinct cryptographic proof that a certain event happened in the source chain according to the source chain’s own rules and light-client logic. The attraction is clear: fewer external trust assumptions. The challenge is equally clear: proof generation, integration complexity, and the need to rely on the correctness of the proof system and supporting assumptions.

There are also oracle-backed and modular interoperability systems such as CCIP or LayerZero-style messaging architectures, where multiple networks or roles collaborate to attest to or deliver cross-chain messages. The implementation details vary by protocol, but the design space generally tries to balance decentralization, cost, latency, and operational reliability.

Why do even trust‑minimized bridges rely on off‑chain relayers?

A common misunderstanding is that a “trustless” bridge has no off-chain components. In practice, many such bridges still depend on off-chain actors for delivery, even if not for validity.

IBC makes this explicit. Relayers scan one chain, construct the appropriate datagrams, and submit them to the counterparty chain. They are the physical connection layer. If no relayer delivers packets, the system stalls. But a relayer cannot simply invent a valid packet if the on-chain verification is working correctly. So the bridge may be trust-minimized for integrity while still depending on relayers for liveness.

That split between integrity and liveness is useful across bridge design. Integrity asks: can an invalid cross-chain event be accepted? Liveness asks: will a valid cross-chain event eventually be delivered and executed? A bridge can be strong on one and weak on the other.

The same pattern appears in signing and settlement systems more broadly. In threshold-signing architectures, multiple parties collectively authorize an action without ever reconstructing a full private key in one place. A concrete real-world example is Cube Exchange’s 2-of-3 threshold signature design for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize settlement. That is not itself a universal bridge design, but it illustrates a broader interoperability lesson: distributed authorization can reduce single points of compromise, while still depending on operational participants to remain available.

What are the primary sources of risk in bridge designs?

Bridges are risky because they sit at a concentration point of value and trust. They often custody assets, issue representations of those assets elsewhere, and encode assumptions about finality, message validity, upgrades, and key control. If any of those assumptions is wrong, the bridge may create or release value incorrectly.

Security research has emphasized that bridges are still immature and have suffered repeated, high-impact failures. Surveys of the ecosystem describe many attack vectors and very large aggregate losses. A particularly important finding from recent security work is that a large share of stolen funds came from systems secured by intermediary permissioned networks with weak cryptographic key operations. In plain language: if a bridge depends on a small or semi-closed group to say what is true, key compromise and governance failure become central risks.

That is why “trusted” does not just mean philosophical discomfort. It means concrete failure modes: censorship, custodial theft, key loss, collusion, and governance abuse. At the same time, “trustless” does not mean safe by default. Smart contracts can have bugs. Proof verification logic can be implemented incorrectly. Upgrade processes can silently break invariants. Finality assumptions can be mishandled.

The Nomad exploit is an unusually clear example of the last point. After an upgrade, the system initialized a trusted root to 0x00, and because unproven messages also mapped to the zero value by default, the contract’s proof check effectively accepted arbitrary messages. The deep lesson is not merely “there was a bug.” It is that bridge security often depends on small invariants inside verification code, and if a dangerous default value collides with a trusted sentinel, the whole trust model can collapse.
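The collision between a default value and a trusted sentinel is easy to reproduce in miniature. The sketch below is a simplified model based only on the description above, not Nomad's actual contract code; the class and method names are hypothetical.

```python
from typing import Dict, Iterable

ZERO_ROOT = b"\x00" * 32


class MessageVerifier:
    def __init__(self, trusted_roots: Iterable[bytes]) -> None:
        self.trusted_roots = set(trusted_roots)
        # message hash -> root the message was proven against
        self.proven_root_of: Dict[bytes, bytes] = {}

    def prove(self, message_hash: bytes, root: bytes) -> None:
        self.proven_root_of[message_hash] = root

    def can_process_vulnerable(self, message_hash: bytes) -> bool:
        # Unproven messages fall back to the zero value, like an unset
        # mapping entry. If ZERO_ROOT is trusted, every message passes.
        root = self.proven_root_of.get(message_hash, ZERO_ROOT)
        return root in self.trusted_roots

    def can_process_fixed(self, message_hash: bytes) -> bool:
        # Treat the default value as "never proven" before consulting trust.
        root = self.proven_root_of.get(message_hash, ZERO_ROOT)
        return root != ZERO_ROOT and root in self.trusted_roots
```

With `ZERO_ROOT` in the trusted set, `can_process_vulnerable` accepts a message that was never proven, while `can_process_fixed` rejects it. The difference is one comparison.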

How do bridges decide when a source‑chain event is "final enough"?

| Strategy | When to act | Main risk | Latency | Best when |
|---|---|---|---|---|
| Wait confirmations | After N blocks | Reorgs within confirmation window | High | Probabilistic-finality chains |
| Light-client validation | After on-chain header verify | Light-client bug or upgrade | Low-to-moderate | When destination runs light clients |
| Optimistic challenge | After challenge window expires | Fraud during challenge window | High (challenge delays) | Low verification-cost priority |
| Finality certificate | After finality proof or vote | Delayed finality events | Low once finalized | Chains with deterministic finality |

For a bridge, it is not enough that an event appeared on the source chain. The bridge must decide when that event is final enough to act on.

This sounds trivial on a chain with fast deterministic finality, but it becomes more delicate across different consensus models. Some chains have probabilistic finality, where reorganization risk decreases over time but is not literally zero after one block. Others have explicit finalization checkpoints. A bridge that acts too early risks honoring a source-chain event that is later reverted. A bridge that waits too long becomes slow and frustrating to use.
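Both waiting strategies reduce to a small predicate. The function below is an illustrative sketch with hypothetical parameter names; it assumes the block containing the event counts as the first confirmation, a convention that varies between tools.

```python
from typing import Optional


def is_final_enough(
    event_block: int,
    head_block: int,
    required_confirmations: int,
    finalized_block: Optional[int] = None,
) -> bool:
    """Decide whether a source-chain event is safe to act on."""
    if finalized_block is not None:
        # Explicit finality: act only once the event's block is finalized.
        return event_block <= finalized_block
    # Probabilistic finality: wait until the event is buried deep enough.
    confirmations = head_block - event_block + 1
    return confirmations >= required_confirmations
```

Raising `required_confirmations` lowers reorganization risk at the cost of latency, which is the tradeoff described above.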

This is one reason bridge architectures differ so much across ecosystems. The right verification and waiting strategy depends on what the source chain can prove succinctly, what the destination chain can verify affordably, and what latency users can tolerate.

Why use bridges at all if they add security risk?

If bridges are difficult and risky, why use them at all? Because a multichain ecosystem without bridges is fragmented by design.

Users bridge into Ethereum layer 2s because the applications or fees are different there. Developers use cross-chain messaging because the state or liquidity they need is not confined to one chain. Ecosystems such as Cosmos were built around the idea that sovereign chains should still be able to communicate through a common protocol. General interoperability systems exist because applications increasingly want assets, instructions, and accounts to move across boundaries rather than remain trapped behind them.

So bridges exist because specialization creates value. Different chains optimize for different things: execution model, fee structure, finality characteristics, application environment, or governance. A bridge is the mechanism that lets a user or protocol borrow those strengths without abandoning the rest of the ecosystem.

What should users and builders check before using or integrating a bridge?

The practical question is never just “does this bridge work?” It is “what assumptions make it work, and what happens if they fail?”

For a user, that means asking whether the bridge is operator-trusted or cryptographically verified, whether assets are wrapped or released from liquidity, whether the bridge is canonical for the ecosystem in question, and what kind of incident history or audit posture it has. It also means recognizing that bridging value is not purely a software interaction; there may be liquidity, custody, censorship, or upgrade risks embedded in the design.

For a builder, the key discipline is to protect invariants. The destination chain must never accept an invalid event. A valid event must not be processed twice. Supply accounting across chains must remain consistent. Upgrades must not silently change sentinel values, trust roots, signer sets, or verification assumptions. Monitoring must cover not just code execution but also operational health: key management, relayer activity, unusual flows, and threshold-governance changes.

No currently dominant design eliminates all tradeoffs. Even the better approaches choose among cost, latency, implementation complexity, and trust assumptions. That is consistent with the broad guidance from ecosystem documentation: bridges are important, but the design space is still early, and the best architecture may not yet have emerged.

Conclusion

A bridge is best understood not as a token mover but as a system for making one blockchain act on a fact from another blockchain.

Everything else is a way of implementing that idea: wrapped assets, relayers, guardians, light clients, proofs, and liquidity pools.

The question that matters most is simple: why should the destination chain believe the source-chain claim? If you understand how a bridge answers that question, you understand both why bridges are useful and why they are dangerous. That is the part worth remembering tomorrow.

How do you move crypto safely between accounts or networks?

Confirm the asset, network, and destination details before you move funds, and use Cube Exchange to fund or trade the asset if you need to prepare liquidity. Cube can hold funds you deposit (fiat on‑ramp or crypto transfer) so you can convert or withdraw the exact token and amount you plan to send across chains.

- Deposit the asset into your Cube account via the fiat on‑ramp or a direct crypto transfer. Fund the exact token (or a stable alternative) you will use for the cross‑chain move.

- Verify the receiving network and the exact destination address or memo/tag. Copy the destination address from the recipient and confirm the chain ID and token standard (e.g., ERC‑20 vs native token).

- Check the bridge or withdrawal instructions for the required confirmation threshold and wait for that many confirmations on the source chain before initiating the destination claim. If the bridge publishes a recommended finality wait, follow that value.

- Review fees and gas-token requirements on both chains, confirm whether the destination will receive a wrapped token or a released original, and choose the transfer path accordingly (on‑chain withdraw vs third‑party bridge).

- Initiate the transfer from the sending wallet or Cube withdrawal flow, then monitor the destination for the expected deposit and reconcile balances once the bridge or relayer reports completion.

Frequently Asked Questions

Finality depends on the source chain’s consensus and the bridge’s verification strategy: some bridges wait for explicit finality checkpoints, others use a probabilistic wait (e.g., many blocks), and acting too early risks honoring a reverted event while waiting too long hurts usability. Choosing the wait strategy is a tradeoff between safety and latency and must match what the source chain can prove and the destination chain can verify.

A “trusted” bridge accepts attestations from external operators, custodians, or a signer set and is operationally simple but adds censorship, collusion, key‑loss, and custodial risks; a “trustless” bridge aims to verify source events on‑chain via light clients or cryptographic proofs so the destination chain’s security approaches that of the source chain, but it is usually costlier, more complex, and still subject to software or proof‑verification bugs. Real designs fall on a spectrum, so users should ask what extra assumptions they accept.

Even trust-minimized bridges often rely on off-chain relayers for delivery: relayers scan the source chain and submit packets to the destination, so they affect liveness and speed, but if on-chain verification (e.g., a light client) is used, they cannot forge a valid message. The integrity/liveness split means relayers are a transport dependency even when they are not the source of truth.

Common verification approaches are external attestors (guardian/oracle sets whose signatures the destination checks), light clients (on‑chain verification of compact headers and state proofs), and cryptographic proof systems (e.g., zk proofs that succinctly attest to remote state); each reduces some trust assumptions at different operational and cost tradeoffs. Which one fits depends on desired decentralization, cost, latency, and implementation complexity.

Lock‑and‑mint keeps original tokens escrowed and mints a wrapped representation elsewhere, preserving a direct backing relation; burn‑and‑mint destroys the source token representation before minting on the destination, changing liquidity dynamics; liquidity‑network bridging uses pools or market makers to satisfy cross‑chain movement and trades off settlement guarantees for speed and UX. Each model shifts the core trust and liquidity risks rather than eliminating them.

Bridges concentrate value and introduce new trust assumptions. Risk vectors include custodial theft, key compromise or mis-rotation, governance abuse, smart-contract bugs, incorrect verification logic, and bad upgrade defaults, and many large losses have come from bridges relying on small or permissioned validator sets or weak key operations. No model is risk-free, and historical incidents show both operator compromise and subtle code/upgrade errors are primary causes.

Yes. Bridges can create unbacked assets if the destination accepts minting without valid escrow proofs; the Wormhole incident is a prominent example where wrapped tokens were minted without corresponding collateral, illustrating how broken verification or signer compromise leads to supply integrity failures. This is why the backing relationship and its verification are critical.

Not all ledgers can run every bridge protocol: protocols like IBC require a ledger to meet certain requirements (e.g., support for light‑client verification and the necessary finality model), so interoperability protocols are not universally applicable without those capabilities. Implementations also rely on off‑chain relayers and protocol version compatibility (e.g., IBC Classic vs IBC v2) which complicate seamless cross‑deployment.

As a user, check what assumptions a bridge makes: whether integrity is operator‑attested or on‑chain verified, whether assets are wrapped or released from liquidity, the bridge’s incident history and audits, and what happens if its signer set or verification root changes; as a builder, protect invariants (no double‑consumption, correct supply accounting) and monitor operational health, key management, relayers, and upgrade effects. Neither audits nor decentralization eliminate all risk, so understanding failure modes is essential.
