What is Block Propagation?

Learn what block propagation is, how blocks spread across peer-to-peer networks, why relay speed matters, and how protocols optimize latency and bandwidth.

Introduction

Block propagation is the process by which a newly produced block spreads from the node that first learned of it to the rest of the network. That sounds like a plumbing detail, but it is actually one of the main mechanisms that makes a blockchain behave like a single shared system instead of many loosely connected machines with slightly different views of reality.

The puzzle is simple: a blockchain is supposed to have one latest block, but blocks are created at one place and heard about elsewhere only later. During that gap, different nodes can honestly disagree about the chain tip. The shorter and more predictable that gap is, the more smoothly the system works. The longer it is, the more often miners or validators build on stale information, the more bandwidth the network wastes, and the easier it becomes for attacks or accidents to push nodes into inconsistent views.

So block propagation is not merely “sending blocks around.” It is the part of the infrastructure that determines how quickly a local update becomes common knowledge. In proof-of-work systems, that directly affects stale-block and fork risk. In BFT-style systems, it affects whether participants receive proposals and supporting data in time to maintain liveness. In high-throughput designs, it can become the dominant bottleneck because even if block production is fast, the network still has to carry the data.

A useful way to think about it is this: consensus decides which block should count, but propagation determines when everyone can even evaluate that question. If consensus is the rulebook, propagation is the messenger system. A perfect rulebook with a slow or fragile messenger system still produces confusion.

Why block propagation creates temporary chain disagreement and fork risk

A blockchain node only knows what it has already received and verified. When a new block is produced, the rest of the network does not instantly acquire it. Instead, the block must travel across a peer-to-peer graph: node to node, hop by hop. Each hop adds some combination of network transmission time, queueing delay, and local verification work. The result is that the network is temporarily out of sync by design.

That temporary inconsistency is not a bug in one implementation. It is a consequence of distributed systems living on real networks. No blockchain can avoid it entirely. What protocols can do is reduce the size and duration of the inconsistency window.

Why does that window matter so much? Because blockchains keep moving while the block is still spreading. In Bitcoin-like systems, if Miner A finds a block and Miner B has not yet heard about it, Miner B may keep mining on the previous tip. If B then finds a competing block, the network has two valid candidates at the same height. Research measuring Bitcoin propagation found that the median time for a node to learn of a block was 6.5 seconds, the mean was 12.6 seconds, and even after 40 seconds about 5% of nodes had still not received the block. That long tail matters more than the median, because forks are created by the nodes that are still behind.

The same measurements found that propagation delay is a strong driver of forks, and that larger blocks make the problem worse. For Bitcoin blocks larger than 20 kB in that study, each additional kilobyte added about 80 ms until a majority knew about the block. The exact numbers depend on protocol version, topology, hardware, and relay optimizations, but the mechanism is general: more bytes and more validation work usually mean a longer inconsistency window.
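The size effect can be made concrete with a rough back-of-the-envelope model. The sketch below uses the 80 ms per kilobyte figure quoted above. As an assumption that does not come from the measurements cited here, it also treats block discovery as a Poisson process with a 600-second average interval, so the chance that a competing block appears during a delay of τ seconds is approximately 1 − e^(−τ/600). The function names are illustrative.

```python
import math

MS_PER_EXTRA_KB = 80   # figure quoted above, for blocks larger than 20 kB
SIZE_FLOOR_KB = 20     # below this size the study's per-kB figure does not apply


def extra_delay_s(block_kb):
    """Extra seconds until a majority knows the block, due to size above 20 kB."""
    return max(0.0, block_kb - SIZE_FLOOR_KB) * MS_PER_EXTRA_KB / 1000.0


def competing_block_probability(delay_s, block_interval_s=600.0):
    """Assumed Poisson model: chance another block is found during delay_s."""
    return 1.0 - math.exp(-delay_s / block_interval_s)


if __name__ == "__main__":
    for size_kb in (20, 120, 520):
        delay = extra_delay_s(size_kb)
        print(size_kb, "kB ->", delay, "s extra,",
              round(competing_block_probability(delay), 4), "fork chance from size alone")
```

Under this simplified model the relationship is nearly linear for small delays, which is why every second shaved off propagation translates fairly directly into fewer stale blocks.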

So the central job of block propagation is to make two things happen at once: move block information quickly, and avoid sending much more data than necessary. Those goals are often in tension. The fastest method may waste bandwidth by sending whole blocks aggressively. The leanest method may require extra round trips, which adds latency.

How does block propagation spread a newly produced block across peers?

At the most basic level, block propagation is a gossip protocol. Each node maintains connections to peers. When it learns about a new block, it tells some or all of them. Those peers decide whether they already know the block; if not, they request it or process it; then they repeat the process with their own peers.

The important idea is that most peer-to-peer networks do not blindly flood full block data to everyone immediately. They usually separate announcement from transfer. First a node says, in effect, “I know about block X.” Then peers that do not yet have block X ask for the missing data. This avoids repeatedly shipping large objects to peers that already have them.

Bitcoin provides a clean example of this structure. Peers use inv messages to announce objects they know about, including blocks. A receiving peer compares those inventories to what it has already seen. If the block is new, it requests it with getdata. The sender then returns the block using a block message. Bitcoin documentation also notes that some miners may send unsolicited block messages for newly found blocks, but the announce-then-request pattern is the standard relay primitive.

That means block propagation is often a multi-step conversation, not a single send. And that has a direct consequence: every extra message exchange costs time. If a protocol needs announcement, then request, then response, each hop may incur at least part of a round-trip delay before the next hop can begin relaying useful data. That is why relay protocol design focuses so much on shaving off round trips near the chain tip, where seconds matter most.

Here is the mechanism in narrative form. Imagine a miner finds a block and its local node validates the header and basic structure well enough to relay it. That node tells its peers that a new block hash exists. A peer that has not seen that hash asks for the block. Once the block arrives, the peer checks the header, links it to the parent it knows, validates enough of the payload to treat it as real, and then announces the same block to its own peers. The process repeats outward across the graph. The block is not moving through a central hub; it is percolating through overlapping local neighborhoods.
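The narrative above can be sketched as a small simulation. This is a simplified model rather than a real node implementation: it assumes every link has the same delay, processes the graph breadth-first, and lets nodes announce back to the peer they received the block from. The function name `relay` and the graph layout are illustrative.

```python
from collections import deque


def relay(graph, origin):
    """Simulate announce -> request -> deliver over a peer graph.

    graph maps each node name to a list of its peers.
    Returns (hops, msgs): the hop count at which each node received the
    block, and a tally of inv, getdata, and block messages sent.
    """
    hops = {origin: 0}
    msgs = {"inv": 0, "getdata": 0, "block": 0}
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for peer in graph[node]:
            msgs["inv"] += 1          # announce the block hash only
            if peer in hops:
                continue              # peer already has it: no transfer needed
            msgs["getdata"] += 1      # peer asks for the block
            msgs["block"] += 1        # sender delivers the full block
            hops[peer] = hops[node] + 1
            queue.append(peer)
    return hops, msgs


if __name__ == "__main__":
    peers = {
        "A": ["B", "C"],
        "B": ["A", "C", "D"],
        "C": ["A", "B", "D"],
        "D": ["B", "C", "E"],
        "E": ["D"],
    }
    print(relay(peers, "A"))
```

In this toy graph the full block is transferred only four times, once per node that lacked it, while the cheap announcements are sent twelve times. That asymmetry is the point of separating announcement from transfer.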

The invariant throughout this process is simple: nodes try to avoid downloading or relaying the same block unnecessarily while still converging quickly on the newest chain tip. Most of the protocol engineering is about serving that invariant under real constraints.

Why do nodes relay headers before sending full blocks?

A full block can be large. A block header is small. That difference creates an obvious optimization: tell peers enough to identify and preliminarily verify the block before sending all transaction data.

Again Bitcoin illustrates the pattern. Peers can request chains of headers with getheaders, and they can ask peers to announce new blocks using headers instead of inv by sending sendheaders. A header lets a receiving node quickly check proof of work, the previous-block hash, and basic structural fields. That does not complete full validation, but it gives the node enough information to place the block in the chain and decide whether the full contents are worth fetching.
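As a minimal sketch of that preliminary check, the code below parses an 80-byte Bitcoin-style header, verifies that its double-SHA256 hash meets the target encoded in the header, and confirms that the previous-block hash is one the node already knows. It deliberately omits things a real node would also check, such as whether the encoded difficulty is the one expected at that height and whether the timestamp is acceptable. The function names are illustrative.

```python
import hashlib
import struct


def double_sha256(data):
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def bits_to_target(bits):
    """Expand the compact 'bits' field into the full integer target."""
    exponent, mantissa = bits >> 24, bits & 0x007FFFFF
    if exponent >= 3:
        return mantissa << (8 * (exponent - 3))
    return mantissa >> (8 * (3 - exponent))


def check_header(raw, known_block_hashes):
    """Cheap header-only checks: length, proof of work, and parent linkage.

    known_block_hashes holds raw 32-byte hashes in the same byte order as
    the header's previous-block field.
    """
    if len(raw) != 80:
        return False
    # version, previous hash, merkle root, time, bits, nonce (little-endian)
    _version, prev, _merkle, _time, bits, _nonce = struct.unpack("<I32s32sIII", raw)
    meets_pow = int.from_bytes(double_sha256(raw), "little") <= bits_to_target(bits)
    return meets_pow and prev in known_block_hashes
```

Because these checks touch only 80 bytes, a node can run them before deciding whether to fetch the much larger block body.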

The key insight is that the network often does not need to move certainty all at once. It first needs to move identity and position: what block is this, and where does it fit? Once nodes share that skeleton view, they can pipeline the expensive part.

Headers-first relay also helps initial synchronization, because a node can learn long stretches of chain structure without downloading all bodies immediately. But near the tip, its value is mostly latency reduction and better scheduling. A node that hears the header quickly can stop building on the old tip even before it has every transaction in the new block.

The limitation is equally important: a header is not the full block. If a system relays headers much faster than bodies, it may reduce tip ambiguity but still leave nodes waiting on the actual state transition data. So headers-first helps, but it does not eliminate the underlying bandwidth problem.

How do compact blocks and mempool overlap reduce block-relay bandwidth?

| Method | Bandwidth | Latency | Failure mode | Complexity | Best when |
|---|---|---|---|---|---|
| Full block | High bandwidth | Single transfer | Sends redundant data | Low | Bootstrapping or divergent mempools |
| Compact blocks (BIP152) | Low in common case | Often saves a RTT | Reconstruction fails if mempools differ | Moderate | High mempool overlap |
| Graphene (set reconciliation) | Very low for large blocks | May need extra RTT on failure | Probabilistic decode failure | High | Large blocks with similar mempools |

The main reason full blocks are expensive to relay is that most of their contents are not actually new to the network. In many chains, especially those with mempools, the transactions in a newly mined block have already been gossiped beforehand. If peers already have most transactions locally, sending the full block again is redundant.

That observation is the starting point for modern block relay optimizations: do not retransmit data the receiver is likely to already have. Instead, send a compact description of the block and let the receiver reconstruct it from local state.

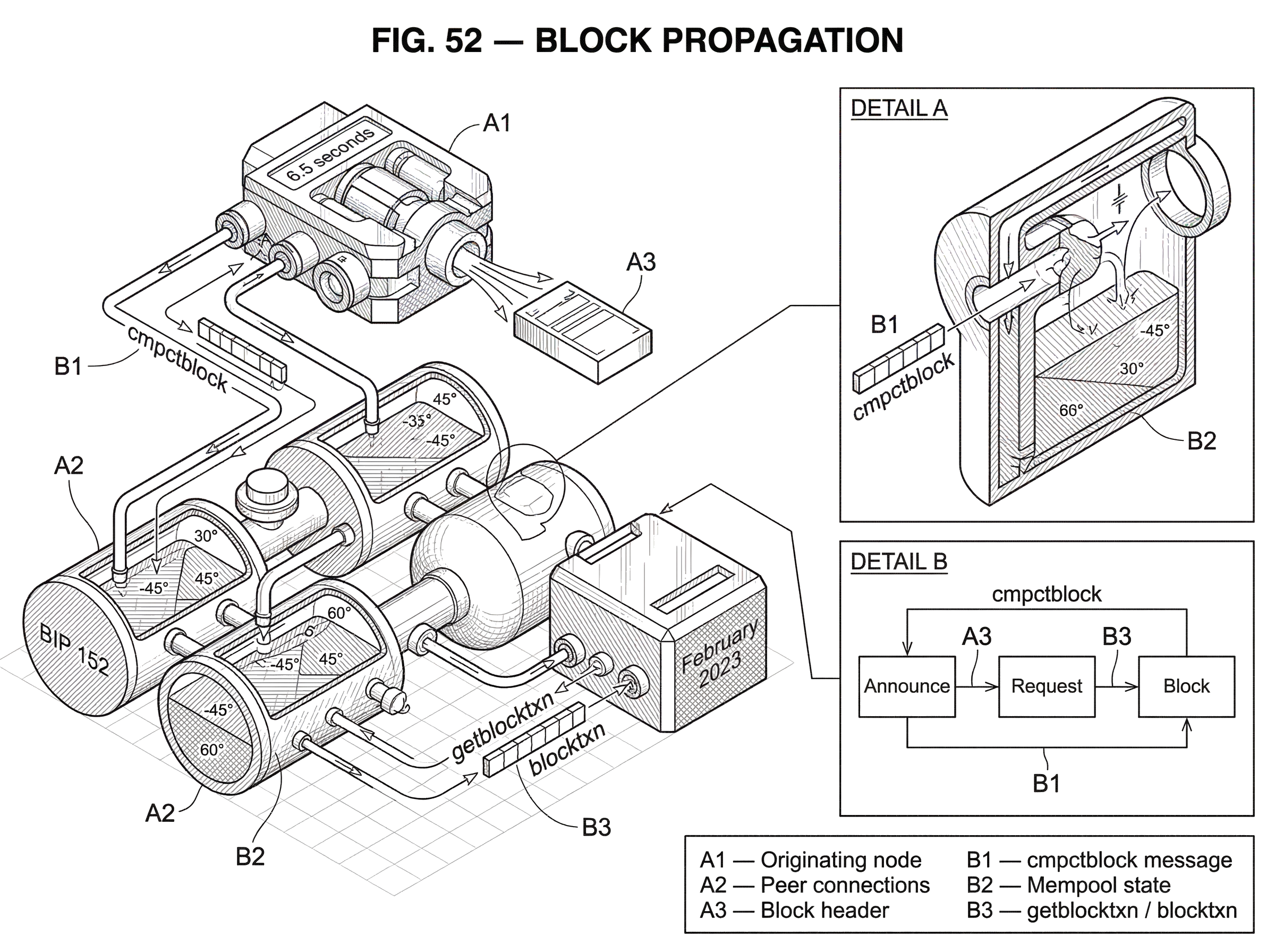

Bitcoin’s compact block relay, specified in BIP 152, is a direct implementation of this idea. Rather than always sending every transaction, a peer can send a cmpctblock message containing the block header, a nonce, short transaction identifiers, and a few prefilled transactions. The receiver computes the same short IDs for transactions in its mempool and tries to match them. If it can reconstruct the whole block from those matches plus the prefilled entries, propagation completes with much less bandwidth. If some transactions are missing, the receiver asks for just those via getblocktxn, and the sender replies with blocktxn.

Mechanically, this works because the sender and receiver are not trying to agree on arbitrary data from scratch. They are trying to reconcile two sets that are already very similar: the sender’s block transactions and the receiver’s mempool transactions. Compact blocks exploit that overlap.

BIP 152 defines short transaction IDs as 6-byte values. A SipHash key is derived from the block header and a nonce, and each transaction identifier is hashed with SipHash under that key and truncated to 6 bytes. The details matter for interoperability, but the intuition matters more first: the sender gives the receiver a small per-block fingerprint for each transaction instead of the full transaction. The nonce changes the mapping per block, which helps avoid reuse patterns. The IDs are short enough to save bandwidth, but because they are only 48 bits, collisions can happen occasionally. The protocol therefore treats reconstruction failure as normal and falls back to requesting missing transactions. Nodes must not be penalized for these collisions.
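The reconstruction flow can be sketched in a few lines. This is an illustration under stated assumptions, not a BIP 152 implementation: keyed BLAKE2b from Python's standard library stands in for SipHash, transaction IDs stand in for full transactions, and the message is a plain dictionary instead of the wire encoding. All function names are hypothetical.

```python
import hashlib

SHORT_ID_BYTES = 6  # 48-bit short IDs, as described above


def short_id_key(header, nonce):
    """Per-block key from header plus nonce, so the mapping changes per block."""
    return hashlib.sha256(header + nonce.to_bytes(8, "little")).digest()[:16]


def short_id(key, txid):
    # Stand-in keyed hash: BIP 152 uses SipHash here. BLAKE2b is used only
    # because it is available in hashlib.
    return hashlib.blake2b(txid, key=key, digest_size=SHORT_ID_BYTES).digest()


def make_compact(header, nonce, block_txids, prefilled_indexes):
    """Sender side: header, nonce, a few prefilled entries, short IDs for the rest."""
    key = short_id_key(header, nonce)
    return {
        "header": header,
        "nonce": nonce,
        "prefilled": {i: block_txids[i] for i in prefilled_indexes},
        "short_ids": [short_id(key, t) for i, t in enumerate(block_txids)
                      if i not in prefilled_indexes],
    }


def reconstruct(compact, mempool_txids):
    """Receiver side: return (block, missing_indexes); missing slots hold None."""
    key = short_id_key(compact["header"], compact["nonce"])
    by_short = {}
    for txid in mempool_txids:
        by_short.setdefault(short_id(key, txid), []).append(txid)
    total = len(compact["short_ids"]) + len(compact["prefilled"])
    block, missing, ids = [], [], iter(compact["short_ids"])
    for i in range(total):
        if i in compact["prefilled"]:
            block.append(compact["prefilled"][i])
            continue
        matches = by_short.get(next(ids), [])
        if len(matches) == 1:
            block.append(matches[0])
        else:                      # absent from mempool, or ambiguous collision
            block.append(None)
            missing.append(i)
    return block, missing
```

The indexes returned in `missing` are what the receiver would request in a follow-up message. Treating both an absent transaction and an ambiguous match as "missing" mirrors the idea that reconstruction failure is a normal outcome rather than an error.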

This design creates an explicit tradeoff. You save bandwidth in the common case because peers already share most block transactions in their mempools. But you are now betting on mempool similarity. If mempools differ substantially, reconstruction fails more often and the savings shrink.

That is where transaction propagation and block propagation intersect. A network that spreads transactions efficiently tends to have more converged mempools, which makes compact block reconstruction more successful. Research on Erlay focuses on transaction announcement efficiency, not block relay directly, but it highlights the broader point: relay protocols are coupled. Better transaction dissemination can improve block propagation indirectly because more peers already possess the transactions needed to reconstruct a block.

High-bandwidth vs low-bandwidth relay: the latency–bandwidth trade-off

| Mode | Latency | Outbound bandwidth | Round-trips | Peer selection | Best for |
|---|---|---|---|---|---|
| High-bandwidth | Lower (no extra RTT) | Higher (unsolicited sends) | Removes a round trip | Limited to few top peers | Well-connected peers for tip speed |
| Low-bandwidth | Higher (request required) | Lower (on-demand) | Requires request RTT | Any peer | Bandwidth-constrained or many peers |

BIP 152 also makes a useful distinction between two operating modes. In high-bandwidth mode, peers announce new blocks by sending cmpctblock unsolicited, which can happen even before full validation is complete. In low-bandwidth mode, they announce via inv or headers, and the receiver explicitly requests a compact block.

This is a classic latency-versus-bandwidth tradeoff. High-bandwidth mode removes a round trip and so can reduce time-to-learn for the freshest blocks. But it spends more outbound bandwidth because a peer may send compact blocks to connections that were not going to ask for them. For that reason, the spec limits how widely the mode is used: it recommends that a node ask no more than three of its peers to announce blocks to it in high-bandwidth mode.
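A rough way to see the latency side of this tradeoff is to count one-way message trips per hop. The sketch below is a simplified model that assumes a fixed one-way delay per message and ignores validation and transmission time. The mode names and functions are illustrative.

```python
# One-way messages needed before the receiver holds the compact block.
ONE_WAY_MESSAGES = {
    "high_bandwidth": 1,  # unsolicited cmpctblock
    "low_bandwidth": 3,   # inv or headers -> getdata -> cmpctblock
}


def per_hop_delay_ms(mode, one_way_ms, needs_missing_txs=False):
    """Delay for one hop under the assumed fixed one-way latency."""
    messages = ONE_WAY_MESSAGES[mode]
    if needs_missing_txs:
        messages += 2  # getblocktxn -> blocktxn when reconstruction is incomplete
    return messages * one_way_ms


def path_delay_ms(mode, one_way_ms, hops):
    """Total delay along a relay path, assuming reconstruction always succeeds."""
    return hops * per_hop_delay_ms(mode, one_way_ms)


if __name__ == "__main__":
    for mode in ONE_WAY_MESSAGES:
        print(mode, path_delay_ms(mode, one_way_ms=50, hops=4), "ms over 4 hops")
```

With a 50 ms one-way delay and four hops, the model gives 200 ms for high-bandwidth relay and 600 ms for low-bandwidth relay, which shows why the extra round trip matters most near the chain tip.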

That limit reveals an important truth about propagation engineering: optimization is not just “make it faster.” It is “make the right thing faster for the right neighbors.” The best-connected, best-performing peers are worth more aggressive relay because they help the block reach the rest of the network quickly. Sending everything aggressively to everyone just turns optimization into spam.

The spec also says a node must not send a compact block unless it can answer any corresponding getblocktxn request for all transactions in that block. That obligation matters because compact relay is only useful if the sender can finish the job when reconstruction is incomplete.

What is set-reconciliation (Graphene) and how does it improve block relay?

Compact blocks are not the end of the idea. They are one point in a broader design space: if sender and receiver mostly share the same transactions, how can they reconcile the difference using the fewest bytes?

Graphene pushes this further using probabilistic data structures. It combines a Bloom filter with an Invertible Bloom Lookup Table, or IBLT, to let peers recover the difference between the sender’s block transaction set and the receiver’s candidate set using far less bandwidth in many cases. The paper reports that for larger blocks Graphene can use about 12% of the bandwidth of existing deployed systems.

The mechanism is more subtle than compact blocks. A Bloom filter cheaply narrows the receiver’s mempool to likely candidates for the block, but Bloom filters allow false positives. An IBLT then helps recover and correct the set difference. In effect, the protocol uses one probabilistic structure to reduce the search space and another to fix the ambiguity introduced by that reduction.

The analogy is searching a library with a blurry index and then a repair tool. The Bloom filter says, “These books might be in the set,” knowing that some are false alarms. The IBLT then helps peel away the mismatch until the remaining difference is small enough to identify. What the analogy explains is why two imperfect summaries together can outperform sending explicit lists. Where it fails is that IBLTs have a very specific algebraic decoding behavior; they are not just generic cleanup tools.
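To make the "algebraic decoding" concrete, here is a minimal IBLT sketch that recovers the difference between two sets of 64-bit integer IDs. It covers only the IBLT half of the idea: the Bloom filter stage is omitted, the cell layout and hash choices are assumptions made for illustration, and nothing here reflects Graphene's actual parameters or wire format.

```python
import hashlib


def _hash64(data, salt):
    return int.from_bytes(hashlib.sha256(bytes([salt]) + data).digest()[:8], "big")


class IBLT:
    def __init__(self, cells, k=3):
        # Split the table into k sub-tables so each key lands in k distinct cells.
        self.k, self.sub = k, cells // k
        n = self.k * self.sub
        self.count, self.key_sum, self.chk_sum = [0] * n, [0] * n, [0] * n

    def _cells(self, key):
        kb = key.to_bytes(8, "big")
        return [i * self.sub + _hash64(kb, i) % self.sub for i in range(self.k)]

    def _apply(self, key, delta):
        chk = _hash64(key.to_bytes(8, "big"), 255)
        for c in self._cells(key):
            self.count[c] += delta
            self.key_sum[c] ^= key
            self.chk_sum[c] ^= chk

    def insert(self, key):
        self._apply(key, +1)

    def subtract(self, other):
        """Cell-wise difference: shared keys cancel, leaving only the mismatch."""
        diff = IBLT(self.k * self.sub, self.k)
        for i in range(len(self.count)):
            diff.count[i] = self.count[i] - other.count[i]
            diff.key_sum[i] = self.key_sum[i] ^ other.key_sum[i]
            diff.chk_sum[i] = self.chk_sum[i] ^ other.chk_sum[i]
        return diff

    def decode(self):
        """Peel cells holding exactly one key. Returns (ok, only_mine, only_theirs)."""
        mine, theirs = set(), set()
        progress = True
        while progress:
            progress = False
            for i in range(len(self.count)):
                sign = self.count[i]
                if sign not in (1, -1):
                    continue
                key = self.key_sum[i]
                if _hash64(key.to_bytes(8, "big"), 255) != self.chk_sum[i]:
                    continue  # cell mixes several keys; not safe to peel yet
                (mine if sign == 1 else theirs).add(key)
                self._apply(key, -sign)
                progress = True
        return not any(self.count) and not any(self.key_sum), mine, theirs
```

The table size depends on the size of the difference, not on the size of the sets, which is where the bandwidth saving comes from. When the table is too small for the actual difference, peeling stalls and `decode` reports failure, which corresponds to the probabilistic failure case that needs another round trip.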

Graphene shows the deeper principle behind many block relay optimizations: block propagation increasingly looks like set reconciliation rather than raw file transfer. If both sides already have almost the same content, the problem is not moving a block wholesale. It is efficiently describing the difference.

The price is probabilistic failure and added complexity. Graphene can fail to decode and may need an extra round trip. Deterministic protocols are simpler to reason about operationally. So the choice of relay protocol depends on whether bandwidth, latency, implementation complexity, or predictability matters most in that environment.

How does P2P topology affect block propagation speed and security?

It is tempting to think propagation is mostly about serialization and compression. But the peer graph itself matters just as much. A perfectly compact message still travels slowly if it has to pass through a sparse or poorly connected topology.

This is why node connectivity is a security issue, not just a performance knob. Research on Bitcoin transaction relay argues that current connectivity is too low for optimal security, and that increasing connectivity under the old announcement design becomes too bandwidth-expensive. The general lesson carries over to block propagation: more and better-connected peers can reduce path length and improve resilience, but only if the relay protocol keeps the bandwidth cost manageable.
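The tension between path length and bandwidth can be illustrated with a rough estimate. The sketch below assumes a random peer graph, where the number of hops needed to reach most nodes grows roughly as log(N) / log(degree), and assumes every node announces each block to every peer. Both are simplifying assumptions, and the function names are illustrative.

```python
import math


def approx_hops(num_nodes, peers_per_node):
    """Rough hop count for a random graph: log(N) / log(degree), rounded up."""
    return math.ceil(math.log(num_nodes) / math.log(peers_per_node))


def announcements_per_block(num_nodes, peers_per_node):
    """Announcements sent if every node announces to every one of its peers."""
    return num_nodes * peers_per_node


if __name__ == "__main__":
    for degree in (8, 32):
        print(degree, "peers:",
              approx_hops(10_000, degree), "hops,",
              announcements_per_block(10_000, degree), "announcements")
```

For 10,000 nodes, going from 8 to 32 peers cuts the estimate from about 5 hops to about 3, but quadruples the number of announcements. This is the pattern described above: connectivity helps only if the per-connection relay cost stays low.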

Different ecosystems implement that graph differently. Ethereum’s devp2p stack defines the discovery and secure communication protocols over which block and transaction messages travel. Tendermint specifications explicitly treat block propagation as part of protocol semantics because proposals and supporting votes must arrive in time for consensus rounds to progress. In Polkadot, RPC nodes, collators, and validators sit in different roles, so “block propagation” may include both ordinary P2P dissemination and the structured movement of parachain block candidates and proofs toward validators.

These are not cosmetic architectural differences. They change what exactly is being propagated, who needs it first, and what counts as success. A Bitcoin node wants the full block quickly enough to avoid mining stale work. A Tendermint validator may need a proposal and commit-related data in time to preserve liveness. A parachain collator must get a candidate and proof-of-validity to relay-chain validators. The common idea is still propagation, but the operational bottleneck sits in a different place.

How do high-throughput chains change block-propagation design?

On some chains, propagation is not an invisible background process. It becomes one of the main architectural constraints.

Solana is a useful example. Its primary block-propagation protocol, Turbine, treats block data as many small “shreds” distributed across a tree-like structure of neighborhoods. The point is to bound the amount of work each node does while spreading data quickly across the network. Instead of every node sending the full block to every peer, nodes forward pieces through a structured topology.
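The general shape of that approach, chunking plus a bounded fan-out tree, can be sketched as follows. This is a generic illustration, not Solana's shred format or Turbine's actual topology: it has no erasure coding, and the shred size and fan-out values are hypothetical parameters.

```python
def split_into_shreds(block, shred_size):
    """Cut block bytes into fixed-size pieces; the last piece may be shorter."""
    return [block[i:i + shred_size] for i in range(0, len(block), shred_size)]


def tree_layers(num_nodes, fanout):
    """Layers below the root needed for a fanout-ary tree to cover num_nodes."""
    layers, reached, width = 0, 1, 1  # the root already has the data
    while reached < num_nodes:
        width *= fanout
        reached += width
        layers += 1
    return layers


def max_bytes_sent_per_node(block_len, fanout):
    """Upper bound per node: each byte is forwarded to at most fanout children."""
    return block_len * fanout


if __name__ == "__main__":
    shreds = split_into_shreds(bytes(2500), shred_size=1000)
    print([len(s) for s in shreds])
    print(tree_layers(num_nodes=40_000, fanout=200), "layers")
```

The property worth noticing is that the per-node sending bound depends on the fan-out and the block size, not on the total number of nodes, while the number of layers grows only logarithmically with network size.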

That design shows what changes when throughput targets are high: block propagation stops looking like occasional gossip of moderate-sized objects and starts looking like sustained high-rate data distribution. Efficiency then depends not only on deduplication and compression, but also on topology control, forward error recovery, and backpressure.

The February 2023 Solana outage is instructive precisely because it exposed block propagation as a systems problem. According to the incident report, external shred- and block-forwarding services re-broadcast recovery shreds for an abnormally large block, overwhelming deduplication and saturating Turbine. Propagation fell back to the slower Block Repair protocol, and finalization degraded significantly. The important lesson is not merely that “there was an outage.” It is that relay paths, filtering assumptions, and external forwarding layers can interact in ways that amplify a local anomaly into a network-wide propagation failure.

In other words, once propagation becomes complex enough, the system can fail not because consensus chose the wrong block, but because the network could not distribute the right block fast enough and cleanly enough.

What propagation attacks and failures should operators watch for?

| Failure | Mechanism | Attacker resources | Impact | Mitigation |
|---|---|---|---|---|
| Slow propagation | Large blocks or multi-hop RTTs | None (natural conditions) | Higher fork and stale-block rates | Headers-first, compact relay, more peers |
| Eclipse attack | Monopolize victim's peers | Hundreds of bots or many IP blocks | Isolated stale view; double-spends | Randomized peers, feelers, eviction fixes |
| BGP hijack | Route diversion via prefix hijack | AS-level control or prefix hijacks | Partitioning or widespread delays | Routing-aware peers, dedicated relays (SABRE), monitoring |

The most obvious failure mode is simple slowness. Slow propagation increases the time during which different nodes hold different tips. In proof-of-work systems that raises stale-block risk. In fast-finality systems it can threaten liveness if messages miss round deadlines.

But the more interesting failures are adversarial. If an attacker can control who a node hears from, they can distort that node’s view of the chain. Eclipse attacks do exactly this by monopolizing a victim’s incoming and outgoing connections, isolating it from honest peers. Once isolated, the victim receives a filtered or stale version of block propagation and can be exploited for double-spends, selfish-mining variants, or adversarial forks.

A different layer of attack targets Internet routing rather than peer selection. Work on BGP hijacking showed that routing attacks can partition or delay Bitcoin by diverting traffic through malicious autonomous systems. The key mechanism is external to the blockchain protocol itself: BGP does not verify route advertisements robustly, and routers prefer more-specific prefixes, so an attacker can attract traffic and interfere with block dissemination. The attack can happen quickly, while mitigation is often slow and operationally messy.

These attacks teach the same lesson from different directions: block propagation depends on assumptions outside the block format. It assumes the peer graph is not monopolized, the routing layer is not being manipulated too aggressively, and nodes can discover and retain healthy peers.

This is also why specialized relay networks have been proposed. SABRE, for example, is a relay-network design intended to protect Bitcoin block dissemination against routing attacks by placing relay nodes in locations that are inherently more resilient under BGP policy and by offloading relay work into programmable network hardware. Whether or not such designs become standard, they reflect a real shift in understanding: for large public blockchains, propagation robustness may require dedicated infrastructure, not just nicer message encodings.

Which propagation limits are unavoidable and which are engineering choices?

Some parts of block propagation are fundamental. A distributed network cannot make a new block globally known instantly. There will always be delay, and during that delay some nodes will disagree. There will always be tradeoffs among latency, bandwidth, verification cost, and topology.

Other parts are conventions or design choices. Using inv and getdata is one protocol pattern, not a law of nature. Using headers-first announcements is an optimization choice. Compact blocks, Graphene, relay trees, or dedicated relay networks are all engineering responses to the same underlying problem, but none is the uniquely correct answer for every chain.

The right design depends on what assumption you can safely lean on. If peers’ mempools are usually similar, set-reconciliation methods are attractive. If throughput is very high, topology-aware chunking becomes more important. If adversarial routing is a major concern, relay-network placement and secure transport matter more. If the consensus protocol has tight timing requirements, predictable worst-case delivery may be more valuable than average-case bandwidth savings.

This is also where real-world custody and settlement systems intersect with propagation. For example, Cube Exchange uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. Threshold signing solves a different problem than block propagation, but the two meet in practice at settlement time: once a threshold-authorized transaction or state update exists, the network still depends on propagation infrastructure to distribute the resulting block or settlement data quickly and reliably. Cryptographic authorization does not remove network-distribution risk; it simply changes who is allowed to originate the update.

Operational objective: how to reduce the 'interesting' window for new blocks

The best way to summarize block propagation is operational rather than formal. A newly produced block is interesting only for a short moment. During that moment, the network is deciding whether this block is known, available, reconstructible, and worth building on. Good propagation makes that moment short. Bad propagation stretches it into a period where honest nodes disagree, attackers have room to interfere, and throughput gains on paper evaporate in the network.

So when engineers optimize block propagation, they are really trying to make a fresh block become boring as fast as possible. Boring means every relevant node has it or can reconstruct it, has checked enough to trust its place in the chain, and has moved on to the next step of consensus, execution, or block production.

That is the durable idea to remember: block propagation is the machinery that turns a block from someone’s local discovery into the network’s shared present. When that machinery is fast and resilient, the blockchain feels coherent. When it is slow or fragile, every other layer starts to wobble.

How does block propagation affect real-world usage?

Block propagation determines how quickly a new block becomes visible and usable across the network, which affects deposit credit times, how many confirmations you should wait for, and when trades or withdrawals are safe to execute. Use Cube’s normal deposit and trading flows, but first verify the network’s propagation and finality characteristics so you choose an appropriate confirmation threshold before funding or trading.

- Check the chain’s finality model and common confirmation guidance (probabilistic PoW vs deterministic BFT) using protocol docs or explorers.

- Look up recent block-propagation metrics (median and long-tail delays) or test by sending a small deposit and measuring how long it takes to be seen and confirmed.

- Fund your Cube account by depositing fiat or sending the supported crypto after selecting the correct network and token.

- Place your trade or withdrawal on Cube. Use a limit order for price control when confirmation delays may cause reorg risk, or a market order for immediate execution if you accept on-chain confirmation timing.

Frequently Asked Questions

Slow or unreliable propagation lengthens the window during which different nodes have different chain tips, which raises stale-block and fork rates in proof-of-work systems and can break liveness or cause missed proposals in fast-finality/BFT systems. Empirical Bitcoin measurements show median learn-time ~6.5s and a long tail (≈5% of nodes still missing a block after 40s), and larger blocks increase fork risk by adding per-kB delay.

Compact block relay (BIP 152) sends a block header plus short per-transaction IDs and a few prefilled transactions so a receiver can reconstruct the block from its mempool; if reconstruction fails the receiver requests just the missing transactions via getblocktxn/blocktxn. The tradeoff is substantial bandwidth savings when mempools overlap, at the cost of occasional reconstruction failures, complexity, and a reliance on mempool similarity.

Reconstruction fails mainly when the receiver's mempool lacks transactions present in the block or when short transaction IDs collide (BIP152 uses 48-bit short IDs), so compact-relay protocols treat such failures as normal and fall back to requesting missing data; nodes must not be penalized for collision-caused failures. The practical failure rate depends on mempool divergence in the wild, which the spec and measurements note but do not fully quantify.

Set-reconciliation schemes like Graphene use probabilistic summaries (Bloom filters + IBLTs) to describe the sender’s transaction set so receivers can recover the set difference with much less bandwidth in many cases, but they are probabilistic and can require extra round trips or large IBLT sizes in edge cases. The payoff is lower bandwidth for large blocks when sender and receiver sets are similar; the cost is additional complexity and a tunable (nonzero) decode-failure probability.

Network topology and peer connectivity strongly affect how fast a block percolates: more and better-connected peers shorten path lengths and improve resilience, whereas sparse or adversarially controlled peer graphs (eclipse attacks) or routing-layer manipulations (BGP hijacks) can delay or partition block dissemination and enable double-spends or selfish-mining variants. Practical studies show eclipse attacks exploit stale/limited peer tables and BGP hijacks can divert traffic at the AS level, so propagation robustness depends on both P2P behavior and Internet routing.

High-throughput chains treat propagation as a core design constraint rather than an occasional background task: Solana’s Turbine splits blocks into many small ‘shreds’ and forwards them through a structured neighborhood tree to bound per-node work and increase throughput, but the Feb 2023 outage illustrated that misbehaving forwarders or excessive recovery-shred rebroadcast can overwhelm deduplication and force a fallback to slower repair protocols, degrading finalization. That shows topology control, chunking, and forward-error recovery become first-order concerns at high throughput.

Some aspects are fundamental - there will always be a nonzero propagation delay and trade-offs among latency, bandwidth, verification cost, and topology - while other aspects (message formats, announce-vs-transfer patterns, headers-first, compact-relay, Graphene, relay networks) are engineering choices. Which design is best depends on assumptions you can rely on (mempool similarity, timing requirements, adversarial routing risk, throughput targets), so propagation is about picking the right set of compromises for your protocol.

Transaction-relay improvements indirectly improve block propagation because compact- and set-reconciliation relays rely on mempool overlap; protocols like Erlay aim to reduce duplicate transaction bandwidth and can cut transaction-relay overhead (the paper reports ~40% savings under certain connectivity assumptions), which should increase compact-block reconstruction success, though the end-to-end effects on block-relay performance in diverse topologies remain an open empirical question.
