What is Polkadot?

Learn what Polkadot is, how its relay chain and parachains work, why shared security matters, and how XCM enables cross-chain communication.

Introduction

For the token explainer, see DOT.

Polkadot is a blockchain network built around a specific idea: many different chains should be able to run in parallel, share security, and communicate without relying on a trusted middleman. That sounds straightforward, but it pushes against a basic tension in blockchain design. A single chain is easier to secure because everyone agrees on one history and one state machine. A network of many chains is easier to specialize and scale, but it usually fragments security and makes interoperability messy.

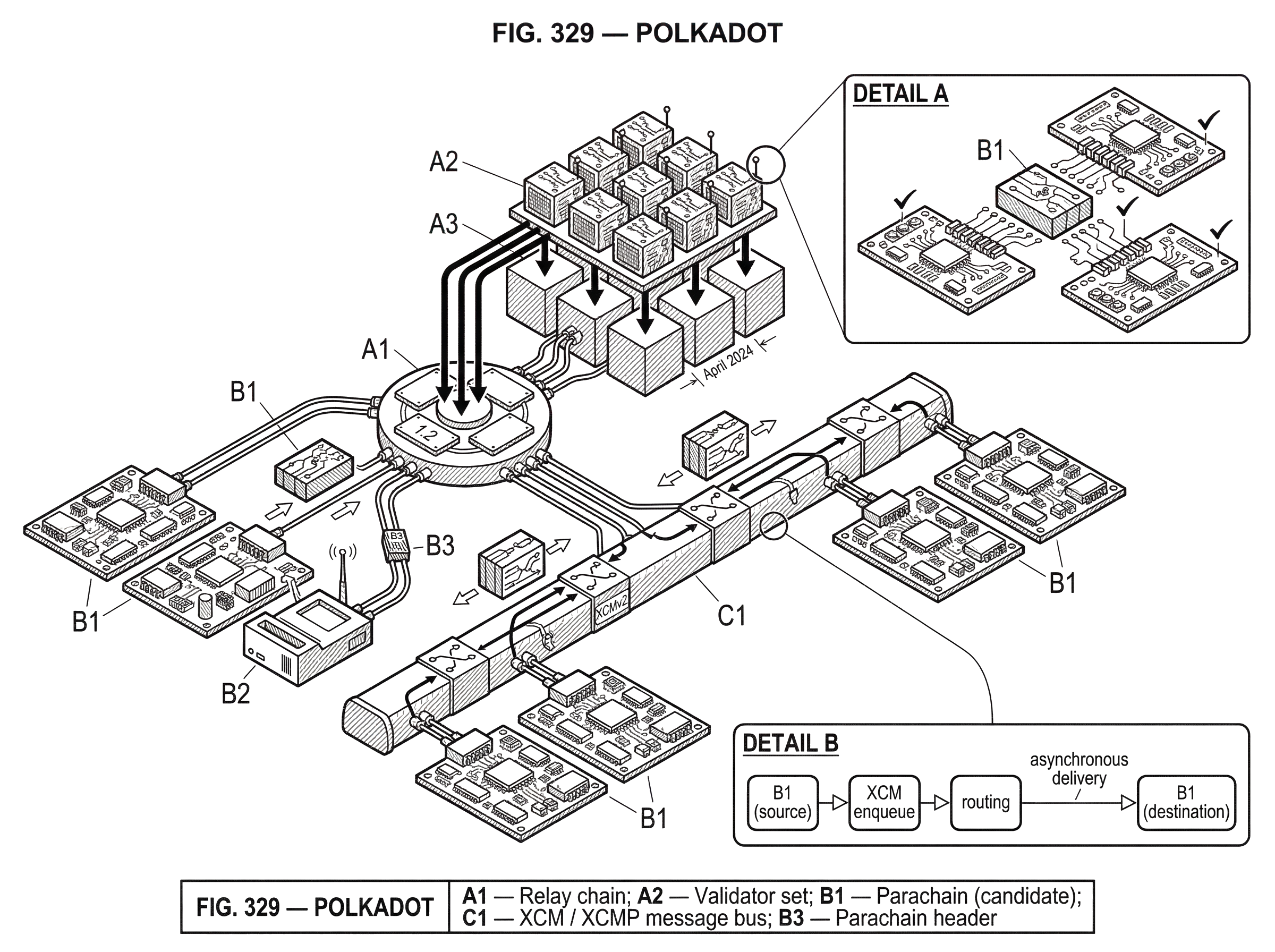

Polkadot exists to change that trade-off. Instead of asking every application or community to launch its own fully sovereign chain with its own validator set, Polkadot offers a common security layer called the relay chain. Independent execution environments called parachains connect to it. Those parachains can have different logic, different state models, and different purposes, but they inherit security from the same validator set and can exchange messages through a common framework.

The deepest idea in Polkadot is that agreement on history and execution of application logic do not have to be the same thing. In the Polkadot whitepaper, this is framed as separating canonicality from validity. Canonicality means the network agrees which chain history counts. Validity means state transitions are correct. Traditional blockchains bundle these together tightly. Polkadot loosens that bundle so one base chain can coordinate many execution environments at once.

That design has real consequences. It helps explain why Polkadot is described as a heterogeneous multi-chain, why the relay chain is intentionally minimal, why parachains do most of the computation, and why cross-chain activity in Polkadot is built around asynchronous messaging rather than pretending the whole network is one synchronous computer. It also explains the system’s constraints: data availability becomes a major bottleneck, runtime upgrades become powerful but operationally sensitive, and shared security means parts of the system are coupled in ways users may not notice at first.

What problem does Polkadot solve with shared security and parachains?

| Model | Security model | Interoperability | Independence | Best for |
|---|---|---|---|---|
| Single-chain | One validator set | Native, synchronous | High independence | Simple apps, single runtime |
| Sovereign chains (Cosmos) | Separate validator markets | Hub-to-zone bridges | High sovereignty | Independent ecosystems |
| Polkadot (shared security) | Pooled relay-chain validators | XCM asynchronous messaging | Coupled to relay chain | Specialized chains with shared security |

A normal blockchain asks one validator set to do everything at once. It must agree on block order, execute transactions, verify state transitions, keep data available, and secure the whole system economically. This works, but it creates pressure in two directions. If you keep the chain general-purpose, every application competes for the same blockspace and execution rules. If you split activity across multiple chains, each chain usually has to recruit and pay for its own security, and moving assets or messages between them becomes difficult.

Polkadot starts from the observation that these functions do not need to be packaged in exactly one way. If a base layer can provide shared security, finality, and coordination, then application-specific chains can specialize without each one having to bootstrap its own validator market. That is the appeal for developers. A chain focused on DeFi, identity, gaming, governance, or asset issuance can tailor its execution logic while still plugging into a common security layer.

This is why Polkadot is often compared with other multi-chain systems, especially Cosmos. The important difference is not merely that both have many chains. The real distinction is where security lives. In Cosmos-style designs, chains are generally more sovereign and interoperability is built between separately secured networks. In Polkadot, parachains are more tightly integrated because they rely on the relay chain’s pooled validator set. That gives them stronger shared guarantees, but it also means they are less independent than a fully sovereign chain.

So the core problem Polkadot is solving is not just “how to connect chains.” It is how to connect specialized chains without forcing each chain to recreate consensus and security from scratch. Everything else in the architecture follows from that.

What does the Polkadot relay chain do?

The relay chain is the center of Polkadot. Its job is deliberately narrow: it handles block production, validator staking, core scheduling, data availability, and the shared security that parachains depend on. The relay chain is intentionally not where most user-facing application logic lives. Polkadot’s own documentation emphasizes this minimalism, and notes that user-facing functions such as accounts, balance transfers, and staking have been moved to Asset Hub, which is a system parachain rather than a feature set of the relay chain itself.

This minimalism is not aesthetic. It is a scaling choice. If the relay chain tried to be a full application platform in its own right while also coordinating many parachains, it would become the bottleneck it is supposed to relieve. By keeping the relay chain focused on security and coordination, Polkadot pushes most execution outward.

A useful way to think about the relay chain is as the place where the network says, “these parachain outputs are the ones the system recognizes.” Parachains do the work of executing their own transactions and producing candidate blocks. The relay chain does not re-execute all parachain logic in the same way a monolithic chain would execute everything itself. Instead, it organizes validators to check parachain state transitions and then seals parachain headers into relay-chain blocks. That is how local execution becomes globally recognized history.

This is also where shared security becomes concrete rather than rhetorical. All validators are staked on the relay chain in DOT. They are not separate security markets for each parachain. A parachain connected to the relay chain benefits from that pooled security model rather than needing to recruit its own validator set from scratch.

There is, however, an important trade-off. Shared security also means shared state coupling. Polkadot’s architecture documentation notes that parachains connected to the relay chain share in the relay chain’s security, and if the relay chain had to revert, parachains would also revert. That is the price of integration. The same mechanism that gives parachains stronger security guarantees also ties their fate to the relay chain’s consensus history.
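The coupling can be illustrated with a toy model. The sketch below is not how any Polkadot node stores data; `RelayChain`, `seal`, `revert_to`, and `recognized_head` are hypothetical names, and the parachain IDs are arbitrary. It only shows that when relay-chain blocks are dropped, the parachain headers sealed inside them stop being recognized too.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class RelayChain:
    """Toy relay chain: each block records the parachain headers it sealed."""
    # blocks[i] maps para_id -> parachain block number sealed at relay height i
    blocks: List[Dict[int, int]] = field(default_factory=list)

    def seal(self, para_heads: Dict[int, int]) -> int:
        """Append a relay block that includes the given parachain headers."""
        self.blocks.append(dict(para_heads))
        return len(self.blocks) - 1  # relay height of the new block

    def revert_to(self, height: int) -> None:
        """Drop every relay block above `height` (a hypothetical revert)."""
        self.blocks = self.blocks[: height + 1]

    def recognized_head(self, para_id: int) -> Optional[int]:
        """Latest parachain block number the relay chain still recognizes."""
        for sealed in reversed(self.blocks):
            if para_id in sealed:
                return sealed[para_id]
        return None


relay = RelayChain()
relay.seal({1000: 1})            # relay height 0
relay.seal({1000: 2, 2000: 1})   # relay height 1
relay.seal({1000: 3, 2000: 2})   # relay height 2

relay.revert_to(0)               # relay history rolls back to height 0
print(relay.recognized_head(1000), relay.recognized_head(2000))
```

After the revert, parachain 1000 falls back to its first block and parachain 2000 has no recognized block at all, even though neither parachain did anything wrong locally.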

What are parachains and how do they use Polkadot’s shared security?

A parachain is a specialized blockchain, or more generally a dynamic data structure, connected to the relay chain. The whitepaper is explicit that it does not need to be “a blockchain” in the narrow conventional sense. What matters is that it can produce state transitions in a form validators can check. In practice, parachains are where most computation in Polkadot happens.

The key design freedom here is heterogeneity. The relay chain does not impose a single application model on parachains beyond the requirement that they be able to generate proofs of valid state transition for the validators assigned to them. This means different parachains can optimize for different goals. One can focus on asset issuance, another on smart contracts, another on identity or privacy, another on governance-heavy logic. They share security and messaging standards, but not necessarily execution style.

This is where Polkadot differs from simply being “many copies of the same chain.” The value is not only parallelism. It is parallelism with specialization. If all chains had to behave identically, a multi-chain system would mostly be a throughput trick. Polkadot instead treats chain-level customization as part of the architecture.

There is also an economic scheduling layer to this. Polkadot documentation describes parachains as securing execution time, or coretime, with DOT. Some parachains can be regular and some on-demand. The exact economic details are beyond a high-level definition, but the basic point is that relay-chain validation capacity is scarce and must be scheduled. This matters because “parallel execution” is never free. The system still has to decide which parachain candidates get checked, when, and by whom.
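To make the scheduling point concrete, here is a deliberately simplified sketch. It assumes a fixed number of cores per relay-chain block, serves regular parachains first, and fills leftover cores from an on-demand queue. The function name `schedule_cores` and the priority rule are assumptions for illustration; the real coretime mechanics are more involved and are not specified in this article.

```python
from collections import deque
from typing import Deque, Dict, List


def schedule_cores(num_cores: int, regular: List[int], on_demand: Deque[int]) -> Dict[int, int]:
    """Map core index -> para_id for one relay-chain block.

    Regular parachains are served first; leftover cores drain the
    on-demand queue in arrival order.
    """
    assignment: Dict[int, int] = {}
    core = 0
    for para_id in regular:
        if core == num_cores:
            break
        assignment[core] = para_id
        core += 1
    while core < num_cores and on_demand:
        assignment[core] = on_demand.popleft()
        core += 1
    return assignment


queue = deque([3000, 3001, 3002])             # hypothetical on-demand orders
first_block = schedule_cores(3, [1000, 2000], queue)
second_block = schedule_cores(3, [1000, 2000], queue)
```

With three cores and two regular parachains, only one on-demand order is served per block, so the third order is still waiting after two blocks. That is the scarcity the paragraph above describes.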

A concrete example helps. Imagine a parachain devoted to stable asset transfers and another devoted to on-chain gaming. The asset chain wants predictable messaging, strict accounting, and efficient transfers. The gaming chain may want fast state updates and application-specific rules. In a monolithic chain, both must fit into the same runtime constraints and compete for the same execution lane. In Polkadot, each chain can define its own runtime while relying on the same shared validator pool for final recognition. The mechanism is not that the relay chain executes both applications directly. The mechanism is that each parachain executes itself and proves enough for relay-chain validators to accept its candidate block.

How does Polkadot validate parachain blocks?

Polkadot’s security model depends on who checks parachain blocks and how those checks are distributed. The whitepaper describes parachain validation as being performed by cryptographically randomly segmented subsets of validators, with one subset assigned per parachain. This matters because having every validator fully execute every parachain would destroy the scaling benefit. Random assignment lets the network spread work across validators while still making collusion harder.
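A minimal sketch of the idea, assuming a shared random seed that every node can see. The function `assign_validators` and the seed value are hypothetical, and Python's `random` module stands in for on-chain randomness that a real network would derive cryptographically.

```python
import hashlib
import random


def assign_validators(validators, para_ids, seed: bytes):
    """Split validators into one pseudo-randomly chosen group per parachain.

    `seed` stands in for shared on-chain randomness; this is an
    illustration, not a secure randomness source.
    """
    # Derive a deterministic integer seed so every node computes the same split.
    rng = random.Random(int.from_bytes(hashlib.sha256(seed).digest(), "big"))
    shuffled = list(validators)
    rng.shuffle(shuffled)
    groups = {para_id: [] for para_id in para_ids}
    # Deal validators out round-robin so group sizes differ by at most one.
    for i, validator in enumerate(shuffled):
        groups[para_ids[i % len(para_ids)]].append(validator)
    return groups


validators = [f"v{i}" for i in range(12)]
groups = assign_validators(validators, [1000, 2000, 3000], seed=b"epoch-42")
```

Each validator lands in exactly one group, so no validator checks every parachain, and an attacker cannot choose in advance which validators will be assigned to a given chain without predicting the seed.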

The high-level flow is this. A parachain candidate block is assembled from transactions and the chain’s current state. That candidate is presented together with proof material to validators assigned to that parachain. Those validators verify that the state transition is valid. Once accepted, the parachain header is sealed into the relay chain. That sealing step is important: it prevents the parachain block from floating around as a loosely attached side structure vulnerable to independent reorganization. Its recognized place in history is anchored by the relay chain.
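The flow above can be sketched in a few lines. Everything here is a simplification under stated assumptions: the parent state itself stands in for proof material, a SHA-256 hash of JSON stands in for a state root, and the candidate is sealed only if every assigned validator accepts it. The names `Candidate`, `apply_transfers`, `validate`, and `seal_if_valid` are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List


def state_root(state: Dict[str, int]) -> str:
    """Hash of a canonical JSON encoding, standing in for a real state root."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


@dataclass
class Candidate:
    para_id: int
    parent_state: Dict[str, int]   # stands in for the proof material
    transactions: List[tuple]      # (sender, receiver, amount)
    claimed_root: str              # post-state root claimed by the collator


def apply_transfers(state, transactions):
    """Example parachain runtime: plain balance transfers."""
    new_state = dict(state)
    for sender, receiver, amount in transactions:
        if amount <= 0 or new_state.get(sender, 0) < amount:
            raise ValueError("invalid transfer")
        new_state[sender] -= amount
        new_state[receiver] = new_state.get(receiver, 0) + amount
    return new_state


def validate(candidate, runtime) -> bool:
    """One assigned validator re-runs the transition and compares roots."""
    try:
        new_state = runtime(candidate.parent_state, candidate.transactions)
    except ValueError:
        return False
    return state_root(new_state) == candidate.claimed_root


def seal_if_valid(relay_block: dict, candidate, runtime, group_size: int) -> bool:
    """Seal the header only if every validator in the group accepts (assumed rule)."""
    votes = [validate(candidate, runtime) for _ in range(group_size)]
    if all(votes):
        relay_block[candidate.para_id] = candidate.claimed_root
        return True
    return False


parent = {"alice": 10, "bob": 0}
txs = [("alice", "bob", 4)]
good = Candidate(1000, parent, txs, state_root(apply_transfers(parent, txs)))
bad = Candidate(2000, parent, txs, state_root({"alice": 10, "bob": 4}))  # bogus root

relay_block = {}
seal_if_valid(relay_block, good, apply_transfers, group_size=5)
seal_if_valid(relay_block, bad, apply_transfers, group_size=5)
```

Only the honest candidate ends up in `relay_block`. The relay chain never ran the transfer logic as its own; the assigned validators checked the parachain's transition function and then recorded the result.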

The participant roles clarify the mechanism. Collators maintain full nodes for a particular parachain, collect transactions, and build candidate parachain blocks. They provide these candidates and the associated proofs to validators. Validators are elected through Nominated Proof-of-Stake, or NPoS, and they produce relay-chain blocks while also checking parachain validity and ensuring parachain blocks remain available. Nominators back validators with stake, helping choose the active validator set. The original whitepaper also describes fishermen, who act more like bounty hunters watching for provable misbehavior.

This division of labor answers a question readers often have: if collators build parachain blocks, are collators the ones securing parachains? Not in the same sense as validators. Collators are essential for block production on a parachain, but the economic security comes from relay-chain validators and the staking system around them. Collators propose; validators validate.

That distinction matters especially when things go wrong. Polkadot has explicit offense and slashing rules for validator misconduct. Its documentation groups major validator offenses into invalid votes and equivocations, with penalties including slashing, disabling, and reputation damage. This is not just punitive bookkeeping. Shared security only works if the validator set has stronger incentives to reject bad state transitions than to collude in accepting them.

How does relay-chain consensus and finality work on Polkadot?

At the relay-chain level, Polkadot combines a Byzantine fault-tolerant consensus approach with NPoS validator selection. The whitepaper characterizes the relay-chain consensus as a modern asynchronous BFT design inspired by systems such as Tendermint and HoneyBadgerBFT, and pairs that with stake-based validator election.

From first principles, this combination is trying to achieve two things at once. The BFT side gives the network a way to finalize a shared history even under faulty or malicious behavior by some participants. The PoS side gives the network an economically structured way to decide who participates in validation and how honesty is incentivized. You need both pieces because finality without an economic selection mechanism is incomplete in an open network, and stake-weighted participation without a robust agreement process does not solve cross-chain coordination.
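The BFT side rests on a standard bound: with n equally weighted validators, agreement can tolerate at most f faulty ones where n ≥ 3f + 1, and a decision needs strictly more than two thirds of the votes. The sketch below encodes just that arithmetic. It assumes one vote per validator and is not a description of Polkadot's actual finality protocol.

```python
def max_faulty(n: int) -> int:
    """Largest f satisfying n >= 3f + 1, the classic BFT bound."""
    return (n - 1) // 3


def is_finalized(votes_for: int, n: int) -> bool:
    """True once strictly more than two thirds of n validators voted for a block."""
    return 3 * votes_for > 2 * n


n = 100                      # hypothetical validator count
tolerated = max_faulty(n)    # faulty validators the bound allows
```

With 100 validators, 67 votes are enough to finalize and 66 are not, which is why the quality and size of the honest supermajority matters so much to every parachain that depends on it.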

For Polkadot, finality matters especially because parachains rely on relay-chain recognition. A parachain is not secure merely because its local participants think a block happened. The block matters system-wide when the relay chain includes and finalizes the relevant state. That is why a shared-security architecture leans so heavily on the quality of relay-chain consensus.

This also explains why bridges to other networks are a meaningful part of the design. The whitepaper discusses interoperability with Ethereum and potentially Bitcoin through bridge mechanisms. Those bridges matter because a strongly finalized relay-chain history is easier for external systems to reason about than a looser or more probabilistic one. But bridge design is highly implementation-dependent, and not every interoperability claim should be treated as equally trustless or equally mature.

How do XCM and XCMP enable cross-chain messages on Polkadot?

| Mechanism | Timing | Trust model | Typical use |
|---|---|---|---|
| Local synchronous call | Immediate, in-tx | Single-chain safety | Internal contract calls |
| Polkadot XCM (asynchronous) | Queued, later execution | Relay-chain routing rules | Parachain message flows |
| Bridge transfers / teleports | Variable latency | Configuration-dependent trust | Cross-network asset moves |

If Polkadot were only about shared security, it would still be useful. What makes it an ecosystem rather than just a hosting layer is the ability of chains to communicate. In current Polkadot terminology, XCM is the message format and XCMP is the delivery mechanism. That distinction matters because many people casually talk about XCM as if it were “the protocol,” but Polkadot’s documentation is careful here: XCM is a format for cross-consensus messages, not a single protocol implementation.

This separation is conceptually elegant. A format defines what a message means. A transport defines how it gets delivered. XCM says how cross-consensus intent is represented. XCMP and related routing machinery are how such messages move across the network.

The deeper point is that Polkadot’s cross-chain model is asynchronous. The whitepaper describes interchain messages as queued posts with ingress and egress handling, and explicitly notes there is no intrinsic synchronous return path. This is not an incidental detail. It is a consequence of dealing with multiple chains that may advance at different times under a shared coordination layer.

That means developers cannot safely assume cross-chain calls behave like ordinary local function calls. If parachain A sends a message to parachain B, the system does not guarantee an immediate in-transaction response the way a contract call within one execution environment might. Instead, A emits a message that gets routed and later processed by B. If B needs to respond, that response is itself another message. This increases latency and complexity, but it is more honest about the mechanics of multi-chain execution.

A simple narrative example makes this clearer. Suppose a user on an asset-focused parachain wants to move value into a smart-contract parachain and then trigger an action there. The first chain creates an outbound message using XCM. Validators and routing machinery ensure that message is recognized and delivered. The destination chain later executes the message, credits or interprets the asset according to its own rules, and may emit a further message if some acknowledgment or follow-up effect is needed. At no point is the whole system pretending these two chains are a single synchronous state machine. The consequence is less magical composability than “everything on one chain,” but more explicit and usually safer coordination.
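The same narrative can be written as a toy simulation. This is a sketch of queue-based messaging in general, not of XCM or XCMP themselves: `Chain`, `Message`, `route`, and the string payloads are hypothetical, and real messages carry structured instructions rather than plain strings.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass
class Message:
    origin: int
    dest: int
    payload: str


@dataclass
class Chain:
    para_id: int
    egress: Deque[Message] = field(default_factory=deque)   # outbound queue
    ingress: Deque[Message] = field(default_factory=deque)  # inbound queue
    log: List[str] = field(default_factory=list)

    def send(self, dest: int, payload: str) -> None:
        """Queue a message; nothing comes back from the destination here."""
        self.egress.append(Message(self.para_id, dest, payload))

    def process_block(self) -> None:
        """Handle everything that arrived before this block."""
        while self.ingress:
            msg = self.ingress.popleft()
            self.log.append(msg.payload)
            if msg.payload == "deposit":
                # A reply is just another queued message.
                self.send(msg.origin, "ack")


def route(chains: Dict[int, Chain]) -> None:
    """Move queued egress messages into the destination ingress queues."""
    for chain in chains.values():
        while chain.egress:
            msg = chain.egress.popleft()
            chains[msg.dest].ingress.append(msg)


a, b = Chain(1000), Chain(2000)
chains = {1000: a, 2000: b}

a.send(2000, "deposit")   # step 1: A only enqueues
route(chains)             # step 2: delivery happens separately
b.process_block()         # step 3: B executes later and queues "ack"
route(chains)
a.process_block()         # step 4: A sees the reply in a later block
```

Note that `send` returns nothing. Chain A learns the outcome only when a separate message arrives in one of its later blocks, which is the practical meaning of having no synchronous return path.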

There is an important limit here. A security audit of XCMv2 noted that some asset transfer modes, such as teleports and reserve transfers, are not trustless in the absolute sense: receiving chains must trust relevant configuration and counterpart assumptions, and there is not always an automatic remote proof that assets were burned or locked on the sender. That is a useful corrective. “Cross-chain” should never be treated as automatically equivalent to “trustless under every configuration.” In Polkadot, a large part of XCM security lives in runtime configuration choices.
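A small sketch shows where the trust sits. It models a receiving chain whose runtime configuration lists which origins it accepts teleports from. The class `DestinationChain`, the field `trusted_teleporters`, and the asset names are hypothetical and are not the XCM configuration API.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class DestinationChain:
    # Hypothetical runtime configuration: (origin chain, asset) pairs this
    # chain is willing to accept teleports from.
    trusted_teleporters: Set[Tuple[int, str]]
    balances: Dict[str, int] = field(default_factory=dict)

    def receive_teleport(self, origin: int, asset: str, beneficiary: str, amount: int) -> bool:
        """Mint on arrival only if the origin is trusted for this asset.

        No proof that `origin` burned the asset is checked here; the
        configuration entry is the entire trust decision.
        """
        if (origin, asset) not in self.trusted_teleporters:
            return False
        self.balances[beneficiary] = self.balances.get(beneficiary, 0) + amount
        return True


dest = DestinationChain(trusted_teleporters={(1000, "DOT")})
accepted = dest.receive_teleport(1000, "DOT", "alice", 5)
rejected = dest.receive_teleport(2000, "DOT", "mallory", 5)
```

The receiving chain mints for the trusted origin without verifying a burn on the sender, so a misconfigured trust list, or a trusted origin that misbehaves, translates directly into unbacked assets on the destination.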

Why does Polkadot use on-chain runtimes and forkless upgrades?

| Upgrade method | Downtime risk | Rollback ability | Best for |
|---|---|---|---|
| On-chain Wasm runtime upgrade | Low if tested | Limited rollback | Frequent behavioral changes |
| Traditional hard fork | Higher coordination risk | Explicit chain split rollback | Incompatible protocol changes |

Polkadot’s developer stack is built around the idea that a chain’s business logic lives in its runtime, which acts as the chain’s state transition function. In Polkadot SDK-based chains, the runtime is compiled to WebAssembly, stored on-chain, and can be upgraded through governance. That is one of the system’s most distinctive operational features.

The attraction is obvious. If the runtime itself is on-chain code, then changing core protocol logic does not necessarily require the kind of disruptive social coordination associated with traditional hard forks. A runtime upgrade can be enacted by replacing the on-chain Wasm blob through a governance-controlled mechanism such as system.setCode. The documentation stresses that the process is transparent and trustless in the protocol sense, provided the chain’s governance authorizes it.

This is not only for parachains. It reflects a broader design philosophy in the Polkadot ecosystem: make protocol rules legible and updateable as part of the chain’s own machinery. From a first-principles perspective, that reduces the gap between “application code” and “protocol code.” The chain can evolve without requiring every participant to coordinate around an external fork event in the same way older networks often did.

But the mechanism comes with sharp edges. The docs note that forgetting to bump spec_version means the runtime change will not be recognized. More importantly, real incidents show that runtime upgrades can be operationally delicate because the network has to transition between old and new behavior while staying live. In April 2024, Polkadot parachains stalled after runtime 1.2 was enacted because a subsystem used for async backing did not distribute backed statements correctly after a mid-session transition. The network self-healed at the next session, but the episode is revealing.
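The spec_version pitfall is easy to guard against with a pre-flight check before submitting an upgrade. The sketch below is a hypothetical helper, not part of the Polkadot SDK: `RuntimeVersion`, `upgrade_is_recognized`, the spec name, and the version numbers are all illustrative. It assumes an upgrade should keep the same spec name and carry a strictly higher spec_version.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuntimeVersion:
    spec_name: str
    spec_version: int


def upgrade_is_recognized(current: RuntimeVersion, proposed: RuntimeVersion) -> bool:
    """Pre-flight check: same chain name and a strictly higher spec_version."""
    return (
        proposed.spec_name == current.spec_name
        and proposed.spec_version > current.spec_version
    )


on_chain = RuntimeVersion("example-chain", 100)        # hypothetical values
forgot_bump = RuntimeVersion("example-chain", 100)     # new code, old version
bumped = RuntimeVersion("example-chain", 101)
```

A check like this catches the forgotten version bump, but it says nothing about the harder failure mode described above, where the upgrade is recognized and the transition between old and new behavior is what breaks.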

The lesson is not “runtime upgrades are bad.” The lesson is that forkless does not mean riskless. When runtime APIs activate new node behavior, testing the transition path becomes as important as testing the end state. The postmortem explicitly notes that tests running only against the latest runtime version can miss subtle node-runtime interactions at upgrade boundaries. That is exactly the sort of failure mode a reader might overlook if they hear only the phrase “seamless upgrades.”

What are Polkadot’s scalability strengths and its main limits?

Polkadot’s scaling story is not “infinite throughput because many chains exist.” The architecture helps because parachains can process transactions in parallel and specialize their execution environments. But the hard part moves rather than disappears.

The whitepaper is unusually candid about the main bottleneck: data availability and validator bandwidth. If the network is to remain safe, validators or the system around them must be able to ensure parachain data is available enough for verification, dispute handling, and recovery. As the number of chains increases, bandwidth and availability responsibilities become more demanding. In other words, scaling execution is easier than scaling the assurance that execution data can be checked when needed.

This is why Polkadot’s design discussions include proposals such as availability guarantors, relaxed availability latency, collator pools, and hypercube routing. These are attempts to manage the fact that a shared-security multi-chain needs not only fast execution lanes but also a robust way to ensure that the data behind those lanes is actually retrievable. Some of these ideas were presented in the whitepaper as exploratory rather than settled.

That uncertainty matters. It means Polkadot should be understood as a serious architectural direction, not a completed theorem. The core ideas are clear: shared security, heterogeneous chains, asynchronous cross-chain messaging, and a minimal relay chain. But some of the engineering around availability and large-scale coordination remains a domain of evolving implementation rather than final doctrine.

How does Polkadot governance manage protocol and runtime changes?

Because Polkadot expects frequent runtime-level evolution, governance is not an add-on; it is part of how the network changes itself. Polkadot’s current governance model, OpenGov, replaces earlier centralized structures with a system of origins, tracks, referendums, conviction voting, and delegation.

The important conceptual point is that governance in Polkadot is wired directly into protocol change. A proposal is not just a public opinion poll. Depending on its origin and track, it can enact runtime changes, treasury actions, or other protocol-relevant decisions. Tracks define different procedural parameters such as approval thresholds and timelines, and multiple referendums can proceed simultaneously.
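As a rough illustration of how conviction voting feeds an approval threshold, the sketch below weights each vote by a conviction multiplier and computes the aye share. The multipliers (0.1x with no lock, then 1x through 6x) reflect the commonly documented scheme but are an assumption here, since this article does not state them. The function `approval` is hypothetical, and real tracks also apply a separate support criterion and time-varying thresholds that are omitted.

```python
from fractions import Fraction

# Assumed vote-weight multiplier per conviction level (0 means no lock).
CONVICTION_MULTIPLIER = {0: Fraction(1, 10), 1: 1, 2: 2, 3: 3, 4: 4, 5: 5, 6: 6}


def approval(votes) -> Fraction:
    """Conviction-weighted aye share; `votes` holds (is_aye, balance, conviction)."""
    ayes = sum(bal * CONVICTION_MULTIPLIER[c] for is_aye, bal, c in votes if is_aye)
    nays = sum(bal * CONVICTION_MULTIPLIER[c] for is_aye, bal, c in votes if not is_aye)
    total = ayes + nays
    return Fraction(ayes, total) if total else Fraction(0)


votes = [
    (True, 100, 1),    # 100 tokens, 1x conviction  -> weight 100
    (False, 1000, 0),  # 1000 tokens, no lock       -> weight 100
    (True, 10, 6),     # 10 tokens, 6x conviction   -> weight 60
]
passes = approval(votes) > Fraction(1, 2)   # hypothetical 50% approval threshold
```

Here 110 committed tokens outvote 1000 uncommitted ones, which shows how a track's approval threshold interacts with how long voters are willing to lock their stake.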

This makes governance more expressive, but it also means operational decisions can move quickly in ways that depend on the track and origin involved. The Technical Fellowship can, for example, use whitelisting mechanisms to shorten enactment timelines for certain technical proposals. That can be valuable during urgent upgrades, but it also reminds us that governance throughput and governance safety are always being balanced.

In Polkadot, then, governance is not merely social consensus floating above the code. It is one of the code paths by which the network updates itself. That fits naturally with the runtime model, but it also raises the stakes of governance quality.

Conclusion

Polkadot is best understood not as “a faster blockchain,” but as a shared-security architecture for many specialized chains. Its central move is to separate agreement on history from execution of application logic, letting a minimal relay chain coordinate and secure heterogeneous parachains that run in parallel.

That design gives developers something powerful: customization without fully independent security bootstrapping, and interoperability through a common message framework. But it also imposes real discipline. Cross-chain communication is asynchronous, shared security creates shared coupling, runtime upgrades are powerful but operationally sensitive, and scaling depends heavily on solving data-availability problems well.

The short version worth remembering tomorrow is this: Polkadot tries to make a network of different blockchains behave like one coordinated system without pretending they are all the same chain. That is the promise, and also the engineering challenge at its core.

How do you buy Polkadot?

You can buy DOT (Polkadot’s native token) on Cube Exchange by funding your Cube account and trading on the DOT spot market. Use a market order for immediate execution or a limit order if you want precise price control.

- Fund your Cube account with fiat or transfer a supported stablecoin (for example USDC) into Cube.

- Open the DOT/USDC (or DOT/your-quote-asset) spot market on Cube Exchange.

- Choose Market for instant execution or Limit to set the price you want; enter the DOT amount or the USDC you want to spend.

- Review estimated fees, expected fill, and the order preview, then confirm to submit the trade.

Frequently Asked Questions

Unlike Cosmos-style designs where each chain is largely sovereign and must secure itself, Polkadot pools security on a single relay-chain validator set so parachains inherit that shared economic security rather than bootstrapping their own validator markets; this gives stronger shared guarantees but also makes parachains less independent than fully sovereign chains.

If the relay chain reverts, connected parachains would also revert because their recognized history is anchored by relay-chain finality - shared security therefore couples parachain fate to relay-chain consensus.

Collators build parachain candidate blocks and collect proofs, but relay-chain validators (selected via NPoS) perform the economic security work by checking validity, producing relay blocks, and ensuring availability; collators propose, validators validate and secure.

Polkadot spreads validation work by assigning cryptographically random subsets of validators to check individual parachain candidates instead of having every validator re-execute every parachain. The exact method for partitioning validators and committing to assignments is treated as out of scope and remains an implementation detail.

Cross-chain messages use XCM as a message format and XCMP-like routing/transport for delivery, and the overall model is asynchronous so messages are queued and later processed rather than providing immediate synchronous call/return semantics across parachains.

Cross-chain transfers are not always trustless. An XCM audit and the documentation note that certain transfer modes (for example teleports and some reserve transfers) require trusting runtime configuration and counterpart assumptions rather than providing an absolute trustless remote proof, so the trust assumptions depend on the transfer mode.

Runtimes are stored on-chain as Wasm and can be upgraded without a hard fork, but upgrades are operationally sensitive: forgetting to bump spec_version prevents activation and real incidents (e.g., an April 2024 parachain stall) show mid-session runtime transitions can break node–runtime interactions, so forkless upgrades reduce social disruption but are not risk-free.

Polkadot scales by parallel, specialized parachains, but the main bottleneck is data availability and validator bandwidth - ensuring parachain data is retrievable and verifiable at large scale remains a core engineering challenge and a subject of proposed mitigations rather than a settled solution.

There is an economic scheduling layer - parachains secure scarce relay-chain validation "coretime" (regular and on-demand slots) - but the article and documentation treat exact pricing, lease mechanics, and on-demand scheduling details as implementation-specific and not fully specified at the high-level overview.

Governance (OpenGov) is integrated with protocol change and can enact runtime and treasury actions via origins, tracks, and referenda, and special mechanisms (for example Technical Fellowship whitelists) can shorten enactment timelines - this makes governance a direct upgrade path but also concentrates operational importance on governance quality and process parameters.
