What is Layer 2?

Learn what Layer 2 is, why it exists, how rollups and channels work, and how Layer 2 scales blockchains while keeping Layer 1 settlement.

Introduction

Layer 2 is a way to scale a blockchain by moving some activity away from the base chain while still relying on that base chain for the part that matters most: final settlement and security. The puzzle it solves is simple to state but hard to escape. If every full node on a blockchain must execute every transaction, store the relevant data, and agree on the result, then the system is highly verifiable but also constrained in throughput and often expensive to use.

That tradeoff is not an accident. It comes from the structure of a decentralized system. A blockchain that is easy for many independent parties to verify cannot also let transaction volume grow without bound on the same layer. So when demand rises, fees rise, confirmation times become more contested, and many applications become uneconomic.

Layer 2 changes that by asking a more precise question: *which parts of transaction processing really need to happen on Layer 1, and which parts can happen elsewhere without giving up too much trust?* The answer depends on the design, but the core idea is consistent across systems. Do more work away from the base chain, then use the base chain to anchor the result.

On Ethereum, this idea is now central to scaling. Official documentation describes Layer 2 as solutions that handle transactions off Ethereum Mainnet while taking advantage of Mainnet’s decentralized security model. In practice, the most important family of Layer 2 systems there is the rollup. On Bitcoin, the Lightning Network is a different Layer 2 design built around payment channels rather than general-purpose offchain execution, but it follows the same broad principle: shift activity off the base layer and return to it for settlement and security-critical guarantees.

The important thing to understand is that Layer 2 is not just “another chain.” That is where much confusion begins. A true Layer 2 is defined less by where computation happens than by what it inherits from Layer 1. If a system processes transactions elsewhere but depends on its own separate validator set or consensus for safety, it may help with scaling, but it is not inheriting security in the same way.

How does Layer 2 move execution offchain while keeping Layer 1 settlement?

To see why Layer 2 exists, start from first principles. A base blockchain does three jobs at once. It orders transactions, it makes the resulting state authoritative, and it provides the data needed for others to verify what happened. If every node does all three jobs for every transaction, the network remains robust, but capacity is scarce.

Layer 2 works by separating those jobs. The base chain still acts as the ultimate court of record, but the day-to-day transaction work happens elsewhere. Users send transactions to the Layer 2 system, an operator or group of operators orders and processes them, and then the Layer 2 periodically sends compressed information back to Layer 1. That information is what lets Layer 1 act as the settlement layer.

This is the central point: Layer 2 scaling is not mainly about doing fewer transactions. It is about making Layer 1 verify or arbitrate many transactions in bulk instead of processing each one in full. Once that clicks, much of the design space becomes easier to understand. Batching reduces cost because a single Layer 1 interaction can represent many Layer 2 actions. Proofs or dispute mechanisms preserve correctness because Layer 1 remains able, directly or indirectly, to reject invalid state transitions.

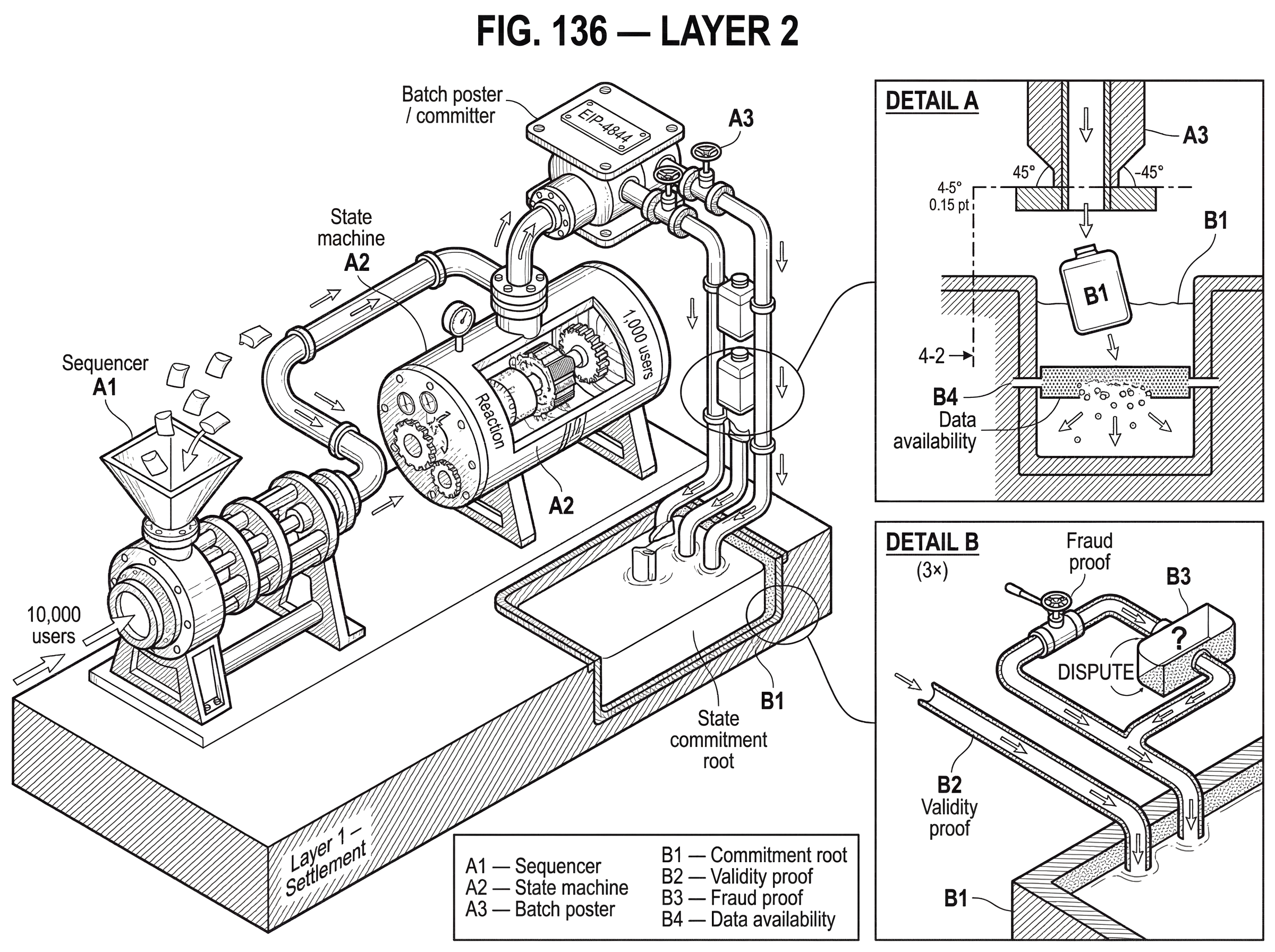

A simple narrative example helps. Imagine 10,000 users want to trade, transfer, or interact with applications. On Layer 1 alone, each action competes for scarce blockspace. On a Layer 2 rollup, those actions are first executed offchain by the rollup’s system. Instead of each user paying Layer 1 costs separately, the system groups many transactions into a batch and posts the batch’s data, or a commitment to it, back to Layer 1.

The users still care about the same end result (balances changed, trades settled, contracts updated), but Layer 1 sees a summarized form rather than 10,000 independent computations.

The details of that summary are where Layer 2 designs differ. Some systems post enough data on Layer 1 for anyone to reconstruct the Layer 2 state independently. Some rely on fraud challenges if an invalid result is posted. Others rely on validity proofs that mathematically attest to correct execution. Some systems keep more data offchain, which lowers costs further but changes the trust assumptions. Those are not superficial implementation choices. They determine what users must trust, how quickly withdrawals finalize, and what failure modes are possible.

How can users tell if a network is a true Layer 2 or a sidechain?

| Type | If operator disappears | Who you must trust | Data on L1 | Security reduces to L1 |
|---|---|---|---|---|
| Genuine Layer 2 (rollup) | Users can recover via L1 | Minimal extra trust | Posts commitments and data | Yes; inherits L1 security |
| Sidechain or federation | Recovery depends on validators | Trust validator committee | Often posts limited data | No; not purely L1 security |
| Exchange internal ledger | Users need operator cooperation | Trust operator custody | Data kept offchain | No separate L1 guarantees |

A useful test is to ask what happens if the Layer 2 operator misbehaves or disappears. If users can still recover funds or verify the true state using Layer 1, the system is behaving like a genuine Layer 2. If they must trust a separate committee, federation, or independent consensus to get their assets back, then the system is closer to a sidechain or another offchain network with its own security model.

This is why Ethereum’s documentation is careful. It describes Layer 2 as off-mainnet transaction handling that still leverages Mainnet security, while also noting that not every offchain scaling system inherits security equally. Sidechains, for example, run parallel to Ethereum and may be EVM-compatible, but they have their own consensus and validator assumptions. They can be useful and fast, but their safety does not reduce cleanly to Ethereum’s safety.

Rollups are often treated as the archetypal Layer 2 because they push this inheritance furthest. L2BEAT summarizes them as systems that periodically post state commitments to Ethereum. Those commitments are then either backed by validity proofs or accepted optimistically and open to challenge within a fraud-proof window. In both cases, the goal is the same: the Layer 2 may execute elsewhere, but the right to declare a state final comes from a process anchored on Layer 1.

The distinction matters because “offchain” by itself says almost nothing. An exchange’s internal ledger is offchain. A sidechain is offchain relative to Ethereum. A payment channel is offchain between updates. What makes Layer 2 interesting is not mere relocation of activity but retention of Layer 1 as the trust anchor.

How do rollups (optimistic and zk) batch transactions and anchor state to Layer 1?

| Type | Proof model | Data on L1 | Withdrawal latency | Cost | Main risk |
|---|---|---|---|---|---|
| Optimistic rollup | Fraud proofs on challenge | Typically posts calldata | Days due to challenge window | Lower proving overhead | Challenge delays and rollback risk |
| ZK-rollup | Validity proofs upfront | Posts proof and data | Fast finality | Higher proving cost | Prover resource requirements |
| Validium | Validity proofs but offchain data | Keeps transaction data offchain | Fast finality | Lowest L1 costs | External data availability trust |

Because rollups dominate current Layer 2 design on smart-contract platforms, they are the clearest place to see the mechanism. A rollup usually has a sequencer or operator that accepts user transactions. That operator orders them, executes them in the Layer 2 environment, and produces updated state. Periodically, it sends a batch to Layer 1.

That batch does two logically separate things. First, it tells Layer 1 what the new claimed state is by posting a state commitment or equivalent root. Second, it makes available enough information for Layer 1 or external verifiers to judge whether that claim is legitimate. The exact form of the second part determines the security model.
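The two parts of a batch can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any rollup's actual format: the state is a plain balance dictionary, `state_root` is a SHA-256 hash of a canonical JSON encoding standing in for a real Merkle root, and the names `build_batch` and `verify_batch` are hypothetical.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Commit to the whole state (a hash stands in for a Merkle root)."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_transfer(balances: dict[str, int], tx: tuple[str, str, int]) -> dict[str, int]:
    """Return a new state with one transfer applied, or raise if it is invalid."""
    sender, recipient, amount = tx
    if amount <= 0 or balances.get(sender, 0) < amount:
        raise ValueError("invalid transfer")
    updated = dict(balances)
    updated[sender] -= amount
    updated[recipient] = updated.get(recipient, 0) + amount
    return updated


def build_batch(balances: dict[str, int], txs: list[tuple[str, str, int]]):
    """Operator side: execute offchain, then publish a claim plus its data."""
    state = balances
    for tx in txs:
        state = apply_transfer(state, tx)
    batch = {
        "prev_root": state_root(balances),  # part 1: the claimed transition
        "new_root": state_root(state),
        "tx_data": txs,                     # part 2: data needed to check it
    }
    return state, batch


def verify_batch(prev_balances: dict[str, int], batch: dict) -> bool:
    """Verifier side: replay the published data and compare roots."""
    if state_root(prev_balances) != batch["prev_root"]:
        return False
    state = prev_balances
    try:
        for tx in batch["tx_data"]:
            state = apply_transfer(state, tx)
    except ValueError:
        return False
    return state_root(state) == batch["new_root"]
```

Anyone holding the previous state and the published `tx_data` can run `verify_batch` on their own. If the data were withheld, the same check would be impossible, which is why the second part of the batch matters as much as the first.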

In an optimistic rollup, the batch is treated as valid unless challenged. The operator posts the claim, and there is a dispute window during which someone can submit a fraud proof if the claim is wrong. This means correctness is enforced by the possibility of challenge rather than by an upfront proof for every batch. The advantage is lower proving overhead. The cost is that finality, especially for withdrawals back to Layer 1, may be delayed until the challenge period passes.
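The timing logic of a challenge window can be modeled roughly as follows. This is a simplified sketch: the seven-day default is an assumed value, the class and method names are hypothetical, and `recomputed_root` stands in for the outcome of a real fraud-proof game that Layer 1 would adjudicate itself rather than taking the challenger's word.

```python
from dataclasses import dataclass

# Assumed window length for illustration; real systems set their own value.
CHALLENGE_WINDOW_SECONDS = 7 * 24 * 60 * 60


@dataclass
class Claim:
    state_root: str
    posted_at: int
    rejected: bool = False


class OptimisticSettlement:
    """Toy Layer 1 contract: claims are accepted unless challenged in time."""

    def __init__(self, window: int = CHALLENGE_WINDOW_SECONDS):
        self.window = window
        self.claims: list[Claim] = []

    def propose(self, state_root: str, now: int) -> int:
        """Record a new state claim and return its id."""
        self.claims.append(Claim(state_root, now))
        return len(self.claims) - 1

    def challenge(self, claim_id: int, recomputed_root: str, now: int) -> bool:
        """Reject the claim if the challenge arrives in time and shows a mismatch."""
        claim = self.claims[claim_id]
        in_window = now < claim.posted_at + self.window
        if in_window and not claim.rejected and recomputed_root != claim.state_root:
            claim.rejected = True
            return True
        return False

    def is_final(self, claim_id: int, now: int) -> bool:
        """A claim is final only once the window has passed without rejection."""
        claim = self.claims[claim_id]
        return not claim.rejected and now >= claim.posted_at + self.window
```

The withdrawal delay falls directly out of `is_final`: nothing that depends on a claim can safely settle on Layer 1 until the window has elapsed.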

In a zk-rollup, the operator posts a validity proof attesting that the new state follows correctly from the old state and the included transactions. Layer 1 verifies the proof before accepting the update. This shifts effort from dispute resolution to proof generation. Proving can be computationally heavy, but once the proof is available, finality can be much faster because correctness has already been established.

The mechanism is easier to grasp through a transaction batch story. Suppose a thousand users swap tokens on a decentralized exchange that lives on a rollup. The sequencer receives those swaps and executes them in the rollup’s state machine. It computes the new balances and the new exchange reserves. It then compresses the relevant transaction data and forms a batch. On Layer 1, it posts a new state commitment representing the result. If this is an optimistic rollup, observers can challenge if the sequencer cheated. If it is a zk-rollup, the sequencer or proving system also posts a proof showing that those thousand swaps were processed according to the rules. Either way, the key economy is the same: Layer 1 is no longer individually executing a thousand swaps.

Real systems add many engineering layers around this core. Arbitrum Nitro describes itself as a complete optimistic rollup system with fraud proofs, a sequencer, token bridges, and advanced calldata compression. It uses a prover for interactive fraud proofs over WASM, while normal execution runs in native code and switches to WASM only if a fraud proof is needed. Optimism’s OP Stack similarly provides a reusable software stack for rollup-like chains such as OP Mainnet and Base. The underlying lesson is that a Layer 2 is not just a proof scheme; it is an operational system with sequencing, posting, bridging, state management, and upgrade machinery.

What is data availability for rollups and why does it matter?

| Option | Data location | Availability guarantee | Cost | Trust assumption | Best for |
|---|---|---|---|---|---|
| Onchain calldata | Stored on Layer 1 | Strong public availability | Higher per-byte cost | L1 security only | Maximal trustless recovery |
| Blob transactions (EIP-4844) | Blobs referenced as L1 sidecars | Commitments onchain; sampling planned | Much lower per-byte cost | Depends on node retention | Rollups needing cheaper DA |
| Validium | Offchain data availability | Depends on provider guarantees | Lowest L1 cost | Trust external DA providers | Very high throughput use cases |

A rollup is only as strong as the information users can access to verify it. This is the role of data availability. If a rollup posts a state commitment but withholds the transaction data needed to reconstruct the state, then even an honest commitment mechanism is not enough for users to independently verify or exit safely.

That is why Ethereum’s rollup-centric roadmap has paid so much attention to cheap data publication. The key insight is that rollups do not mainly need Layer 1 to redo all computation. They need Layer 1 to provide a trustworthy place to publish the data from which computation can be checked. Historically, this made calldata costs a major bottleneck for rollups.

EIP-4844, often described as proto-danksharding, addresses this directly. It introduces blob-carrying transactions: transactions that include large data payloads not accessible to EVM execution, while exposing commitments to that data. The blob data is meant for data availability, not normal smart-contract execution. It is priced through a separate blob-gas market with its own self-adjusting base fee, rather than competing directly with ordinary execution gas.
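The self-adjusting blob base fee can be sketched after the pseudocode in the EIP-4844 specification. The constants below are the values from the original EIP as best recalled here (a target of three blobs and a maximum of six per block); later network upgrades have changed these parameters, so treat the numbers as assumptions and check the current specification before relying on them.

```python
# Constants as in the original EIP-4844 text (assumed; later upgrades differ).
GAS_PER_BLOB = 2**17
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def next_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Excess grows when blocks use more than the target and shrinks otherwise."""
    total = parent_excess + parent_blob_gas_used
    return max(0, total - TARGET_BLOB_GAS_PER_BLOCK)


def blob_base_fee(excess_blob_gas: int) -> int:
    """Base fee per unit of blob gas, exponential in the accumulated excess."""
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )
```

Because the fee depends only on accumulated excess blob gas, sustained demand above the target pushes the price up exponentially, while blocks at or below the target let it decay back toward the minimum. Ordinary execution gas is priced separately and is not an input to this calculation.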

That design reveals an important architectural idea. The base chain is learning to specialize. Execution data that smart contracts need immediately remains in normal transaction space; bulk rollup data that mainly needs to be available and verifiable can live in blobs. Consensus nodes propagate these blobs separately as sidecars, and the system is designed to be forward-compatible with future data-availability sampling. In plain terms, Ethereum is making room for Layer 2s by recognizing that publishable data and EVM-executable data are not the same resource.

This is also where some neighboring designs diverge. A validium uses validity proofs like a zk-rollup, but keeps transaction data off Layer 1. That can raise throughput and reduce costs significantly, but it changes the guarantee. Users are now trusting some external data-availability arrangement. If that arrangement fails or withholds data, the proof may still certify that some state transition was correct relative to hidden data, yet users may be unable to reconstruct state or exit in the same trust-minimized way.

So when people say one Layer 2 is “more secure” than another, they often mean one of two different things. They may mean stronger correctness guarantees about state transitions, or they may mean stronger guarantees that transaction data will remain available to users. Those are related but distinct properties.

How does Bitcoin’s Lightning Network differ from Ethereum rollups as a Layer 2?

It is easy to equate Layer 2 with rollups because that is the center of Ethereum’s current scaling approach. But the broader idea applies across architectures.

Bitcoin’s Lightning Network is also a Layer 2, though its mechanism is very different. Its canonical specifications, the BOLTs, define an interoperability standard for payment channels and routing. Instead of batching arbitrary smart-contract execution into state commitments, Lightning moves repeated payments into channels that parties update offchain. Only channel openings, closures, and certain dispute-related actions need Layer 1. The scaling gain comes from avoiding onchain settlement for every small payment.

The analogy between rollups and Lightning is useful but limited. Both reduce Layer 1 load by moving frequent activity offchain and using Layer 1 as the enforcement layer. Where the analogy fails is that rollups usually maintain a shared offchain execution environment for many users and applications, while Lightning is built around channel relationships and routed payments. The invariant in Lightning is the enforceability of the latest valid channel state onchain. The invariant in rollups is the correctness and recoverability of the shared Layer 2 state as anchored to Layer 1.
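The channel invariant can be illustrated with a deliberately simplified model. The assumption to keep in mind is that real Lightning enforces "latest state wins" through revocation secrets and penalty transactions, not through the plain sequence-number comparison used here; `PaymentChannel` and its methods are hypothetical names for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelState:
    sequence: int
    balance_a: int
    balance_b: int


class PaymentChannel:
    """Toy two-party channel: many offchain updates, two onchain transactions."""

    def __init__(self, deposit_a: int, deposit_b: int):
        self.capacity = deposit_a + deposit_b
        self.latest = ChannelState(0, deposit_a, deposit_b)
        self.onchain_txs = 1  # the funding transaction that opens the channel

    def pay(self, from_a: bool, amount: int) -> None:
        """Offchain update: both parties agree on a new, higher-numbered state."""
        current = self.latest
        new_a = current.balance_a - amount if from_a else current.balance_a + amount
        new_b = self.capacity - new_a
        if amount <= 0 or new_a < 0 or new_b < 0:
            raise ValueError("payment exceeds channel balance")
        self.latest = ChannelState(current.sequence + 1, new_a, new_b)

    def close(self, submitted: ChannelState) -> ChannelState:
        """Onchain settlement: a stale submission loses to the newest state."""
        self.onchain_txs += 1
        return max(submitted, self.latest, key=lambda state: state.sequence)
```

Ten payments followed by a close touch the base chain twice in this model, once to open and once to settle, regardless of how many updates happened in between.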

This matters because “Layer 2” is not a single mechanism. It is a design pattern: move high-frequency activity away from the base layer while keeping the base layer as the source of truth when something important must be settled or disputed.

Why do Layer 2s reduce fees and multiply throughput?

The immediate economic effect of Layer 2 comes from amortization. Many user actions share the cost of a smaller number of Layer 1 interactions. If 1,000 transfers fit into one batch posted to Layer 1, the fixed cost of touching Layer 1 is spread across those 1,000 users instead of paid separately by each one.
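The amortization arithmetic is simple enough to write down directly. The gas figures in the usage example are made-up illustrative numbers, not measurements from any network, and `per_user_l1_cost` is a hypothetical helper.

```python
def per_user_l1_cost(fixed_batch_cost: float, per_tx_data_cost: float, batch_size: int) -> float:
    """Layer 1 cost attributable to one user in a batch.

    fixed_batch_cost: cost of touching Layer 1 once (paid per batch).
    per_tx_data_cost: cost of publishing one transaction's data.
    batch_size: number of transactions sharing the fixed cost.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return fixed_batch_cost / batch_size + per_tx_data_cost


# Illustrative numbers only: a 200,000-gas fixed cost and 300 gas of data per transfer.
alone = per_user_l1_cost(200_000, 300, 1)        # 200300.0
batched = per_user_l1_cost(200_000, 300, 1_000)  # 500.0
```

As the batch grows, the fixed share shrinks toward zero and the per-transaction data cost becomes the floor.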

Compression helps further. Rollup operators do not usually repost full high-level transaction objects in the most expensive possible form. They reorder, encode, and compress data to reduce publication cost. Arbitrum Nitro explicitly highlights improved batching and compression to minimize Layer 1 costs. Ethereum’s move toward blob data through EIP-4844 then cuts the price of the specific resource rollups consume most heavily: publishable data availability.
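A rough sense of how encoding and compression shrink the published payload can be had with the standard library. This sketch assumes a hypothetical transfer format (two 16-bit account indices and a 64-bit amount) and uses general-purpose zlib; production rollups use their own encodings and compressors, so only the direction of the effect carries over.

```python
import json
import struct
import zlib


def sample_transfers(count: int) -> list[tuple[int, int, int]]:
    """Deterministic toy workload: (sender_id, recipient_id, amount)."""
    return [(i % 50, (i * 7) % 50, 1_000 + i % 10) for i in range(count)]


def encode_verbose(transfers) -> bytes:
    """High-level representation, similar to naive JSON objects."""
    objects = [{"from": s, "to": r, "amount": a} for s, r, a in transfers]
    return json.dumps(objects).encode()


def encode_packed(transfers) -> bytes:
    """Fixed-width binary: 2 + 2 + 8 = 12 bytes per transfer, big-endian."""
    return b"".join(struct.pack(">HHQ", s, r, a) for s, r, a in transfers)


def publication_sizes(transfers) -> dict[str, int]:
    """Byte counts for each stage of the encoding pipeline."""
    packed = encode_packed(transfers)
    return {
        "verbose": len(encode_verbose(transfers)),
        "packed": len(packed),
        "packed_compressed": len(zlib.compress(packed, 9)),
    }
```

Calling `publication_sizes(sample_transfers(1000))` shows each stage smaller than the last for this workload, and every byte saved is a byte of Layer 1 data the batch does not have to pay for.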

The throughput gain follows from the same mechanism. Layer 1 blockspace remains scarce, but each unit of that blockspace now represents more end-user activity. What changes is not the raw speed of the base chain’s consensus so much as the amount of economic activity each base-layer byte can support.

This also explains why Layer 2 is often better thought of as throughput multiplication rather than simple speed. Users may experience faster confirmations on the Layer 2 itself because sequencers provide quick local acknowledgment, but the deeper reason the system scales is that Layer 1 is being used more efficiently.

What tradeoffs and risks should I watch for with Layer 2s?

Layer 2 does not remove tradeoffs; it rearranges them. The biggest misunderstanding is to think that moving work offchain makes the hard parts disappear. It does not. It changes which assumptions are cryptographic, which are economic, and which are operational.

Sequencers, for example, often introduce a practical centralization point. Even when the safety of funds is anchored to Layer 1, transaction ordering may be controlled by a single operator or small set of operators. That affects censorship resistance, latency, and fairness. Ethereum’s documentation notes that many Layer 2 systems rely on operators or sequencers, and that this can create centralization risks if operator diversity is weak.

Withdrawal behavior is another point where mechanism shows up in user experience. In optimistic rollups, withdrawals back to Layer 1 can be delayed by the fraud-proof window because the system must allow time for invalid claims to be challenged. In validity-proof systems, this delay can be shorter because correctness is proven before acceptance. But that only tells part of the story; bridges, proving cadence, and application design also matter.

There are also subtle failure modes around batching and timing. Security research has shown rollback-based double-spend attacks against optimistic-rollup designs under certain conditions, exploiting delays in posting batches and the gap between soft finality on Layer 2 and hard finality on Layer 1. The lesson is not that optimistic rollups are fundamentally broken. It is that the gap between local Layer 2 confirmation and Layer 1 inclusion is a real security boundary, and applications that bridge across chains must treat it carefully.

Data availability assumptions can also quietly dominate the whole trust model. A system with strong validity proofs but offchain data can still leave users exposed if the data disappears. Conversely, a system with onchain data but weaker liveness assumptions may offer stronger recoverability. There is no single axis called “security.” There are at least separate questions of correctness, data availability, censorship resistance, upgrade trust, and recovery under operator failure.

Governance matters too. Many production Layer 2s are still evolving, and upgrades are often controlled by councils, multisigs, or core teams to some degree. Optimism’s upgrade process, for instance, includes review and veto structures, while external frameworks such as L2BEAT stage systems partly by decentralization properties. For users, this means the real trust surface includes not only proofs and contracts but also who can change them.

How are Layer 1 and Layer 2 evolving together?

The broader scaling trend is increasingly clear. Rather than forcing the base layer to do everything, ecosystems are specializing layers by function. Layer 1 provides scarce, high-value settlement and data availability. Layer 2 provides higher-throughput execution and better user-facing cost. Improvements at Layer 1, such as blob transactions in EIP-4844, are being designed specifically to make this layered model work better.

That is why Ethereum’s ecosystem has become explicitly rollup-centric. The goal is not to abandon Layer 1 security, but to use it where it is most valuable and stop spending it on work that can be compressed, proven, or disputed more efficiently elsewhere. Other ecosystems follow the same logic with different mechanisms: channels, app-specific networks, validity systems, or modular data layers.

The enduring idea is simple. A blockchain becomes more useful when not every action must be handled at the most expensive and most globally replicated layer.

Conclusion

Layer 2 is a scaling design that moves transaction activity away from the base chain while keeping the base chain as the final source of security and settlement. Its real innovation is not merely being offchain.

It is letting Layer 1 judge many transactions in bulk, through commitments, proofs, disputes, and published data, instead of processing each one individually.

That is why Layer 2 matters. It preserves much of what makes blockchains trustworthy while making them cheaper and more usable. The exact guarantees depend on the design, especially around proofs, data availability, sequencing, and governance. But if you remember one thing tomorrow, remember this: Layer 2 works by changing where work happens without fully changing where trust lives.

How does this part of the crypto stack affect real-world usage?

Layer 2 design choices (proofs, data availability, sequencer model, and withdrawal rules) change fees, finality, and how quickly you can move funds back to Layer 1. Before you fund or trade assets that live on a Layer 2, check those properties and then use Cube to execute trades or move funds while accounting for the L2’s constraints.

- Read the Layer 2 project’s security model and note whether it uses zk‑proofs, optimistic fraud windows, or an external DA layer. Record the stated fraud‑challenge window or proof cadence.

- Verify onchain publication and data‑availability claims: open the rollup or bridge contract on a block explorer and confirm it posts calldata/blobs or links to a DA provider.

- Fund your Cube account with fiat or a supported crypto transfer for the asset you plan to use on that Layer 2.

- When placing trades or deposits, pick execution types that match the L2: use limit orders or scheduled transfers if the L2 reports long withdrawal or challenge delays.

- Before initiating withdrawals or bridge transfers, copy and verify the official bridge/contract address from the project site and check the estimated withdrawal time on the project’s docs.

Frequently Asked Questions

Optimistic rollups post state claims to Layer 1 and rely on a fraud‑challenge window during which anyone can submit a fraud proof if a claim is incorrect, which reduces upfront proving cost but delays finality (notably for withdrawals). ZK‑rollups post cryptographic validity proofs that Layer 1 verifies before accepting an update, which requires heavier proof generation but can give faster finality once a proof is accepted.

Data availability means users and external verifiers can access the transaction data needed to reconstruct a Layer 2’s state; without it, posted commitments or proofs can’t be independently checked and users may be unable to exit safely. Rollups therefore rely on Layer 1 (or a reliable DA layer) to publish or make that data available, which is why Ethereum proposals like EIP‑4844 add specialized “blob” data to lower the cost of publishing rollup data.

A validium uses validity proofs like a zk‑rollup for correctness but keeps most transaction data offchain, which lowers costs and raises throughput but changes the guarantee: users must trust an external data‑availability arrangement and could be unable to reconstruct state or exit if that arrangement fails or withholds data.

It depends on the Layer 2 design: if the system posts enough data or posts proofs to Layer 1, users can recover funds or verify state via Layer 1 even if the sequencer disappears; if the system requires an external committee, federation, or its own consensus to recover funds or access data, then users must trust that separate party and cannot fully rely on Layer 1 for recovery.

EIP‑4844 introduces blob‑carrying transactions (large data payloads not accessible to EVM execution but with onchain commitments) and a separate blob‑gas market to make publishing rollup data cheaper. Blobs also increase mempool data and DoS risk, so clients use announcement/sidecar propagation and retention parameters (e.g., a minimum sidecar availability epoch window) to mitigate operational risks.

No. Sidechains run their own consensus and validator assumptions and therefore do not inherit the base chain’s security in the same way; a true Layer 2 is characterized by retaining the base chain as the ultimate trust anchor so users can recover or verify using Layer 1 rather than trusting a separate validator set.

They share the same high‑level pattern (move frequent activity offchain and use the base layer for settlement), but Lightning is built from bilateral payment channels and routing where the invariant is enforceability of the latest channel state onchain, while rollups create a shared offchain execution environment for many users and anchor shared state to Layer 1 via commitments, proofs, or challenges.

Security research has shown rollback/timing attacks against optimistic‑rollup designs that exploit delays between Layer 2 local confirmation and Layer 1 inclusion; mitigations required protocol and operational changes (e.g., new data‑posting components and software updates), but the existence of such attacks underscores that the gap between Layer 2 soft finality and Layer 1 hard finality is a real security boundary.
