What are Blobs and EIP-4844?

Learn what Ethereum blobs and EIP-4844 are, how blob transactions work, why rollups use them, and how they lower L2 data costs.

Introduction

Blobs and EIP-4844 are Ethereum’s answer to a very specific scaling problem: rollups need to publish a lot of data to Ethereum, but they do not need that data to live forever inside the EVM’s ordinary execution path. Before EIP-4844, rollups mostly used calldata for this purpose. Calldata is permanent, visible to the EVM, and priced inside Ethereum’s main gas market. That design was simple, but it forced rollups to buy a kind of storage and execution integration they did not really need.

EIP-4844 changes that trade. It adds a new transaction type that can carry large chunks of data called blobs. The crucial idea is that blob data must be available to the network for long enough to preserve rollup security, but it does not need to be permanently stored by ordinary nodes or directly readable by smart contracts. Once you see that distinction (available now versus stored forever and executable), the whole design clicks.

This is why EIP-4844 is important for Ethereum’s scaling roadmap. It gives rollups a dedicated data-availability channel that is much closer to what they actually need, while staying compatible with the longer-term path toward fuller forms of danksharding and data-availability sampling. In practice, it was introduced in the Dencun upgrade, where Ethereum added ephemeral blob data to help lower L2 costs.

How does EIP-4844 reduce rollup data publication costs?

| Data channel | Permanence | EVM access | Fee market | Best for | Security window |
|---|---|---|---|---|---|
| Calldata | Permanent | Readable by contracts | Main execution gas | Contract inputs & logs | Indefinite |
| Blobs | Temporary (about 18 days) | Not EVM-readable | Separate blob gas | Rollup batch data | Bounded (days) |

A rollup works by doing most computation off-chain and then posting enough information to Ethereum so anyone can reconstruct or verify what happened. That phrase “enough information” matters. For many rollups, the expensive part is not execution on Ethereum; it is data publication. Ethereum must be able to say, in effect, the rollup published the batch data, so challengers or verifiers had a fair chance to inspect it.

If that publication happens through calldata, Ethereum treats the data as ordinary transaction input. That has two consequences. First, the data becomes part of the execution-layer block contents in a way that is permanently retained. Second, it competes in the same broad fee environment as other execution-layer demand. But rollup batch data is unusual. Contracts usually do not need to read it back inside the EVM, and the network does not need to preserve all of it forever just to maintain short-horizon security guarantees.

So the mismatch is structural, not cosmetic. Calldata is too integrated and too permanent for the job. EIP-4844 fixes that by creating a data lane optimized for availability rather than execution. The protocol keeps enough cryptographic structure to prove what data was posted, but avoids turning that data into normal EVM-accessible payload.

The result is not “free storage.” In fact, it is almost the opposite. Blob space is intentionally temporary. The network guarantees recent availability, not indefinite archival. That is why blobs are especially attractive for rollups, whose security models usually care about a bounded challenge or verification window rather than eternal in-protocol retention.

What is a blob in EIP-4844?

At the protocol level, a blob is a fixed-size byte vector. In the consensus specifications, it is defined as BYTES_PER_FIELD_ELEMENT * FIELD_ELEMENTS_PER_BLOB, with BYTES_PER_FIELD_ELEMENT = 32 and FIELD_ELEMENTS_PER_BLOB = 4096. So a blob holds 4096 field elements of 32 bytes each.
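As a quick check of that arithmetic, the two constants multiply out to 131,072 bytes, or 128 KiB, per blob. The constant names are the ones quoted above; `BYTES_PER_BLOB` is used here simply as a name for the product.

```python
# Constants as quoted above from the consensus specifications.
BYTES_PER_FIELD_ELEMENT = 32
FIELD_ELEMENTS_PER_BLOB = 4096

# Total size of one blob in bytes.
BYTES_PER_BLOB = BYTES_PER_FIELD_ELEMENT * FIELD_ELEMENTS_PER_BLOB

print(BYTES_PER_BLOB)          # 131072
print(BYTES_PER_BLOB // 1024)  # 128 (KiB)
```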

That fixed shape is not arbitrary bookkeeping. It exists because the blob is not just “some bytes.” It is treated as data that can be interpreted inside the finite-field structure used by the KZG commitment scheme. That cryptographic representation is what lets Ethereum commit to a blob compactly and later verify claims about it without exposing the whole blob to the EVM.
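One practical consequence of the finite-field interpretation is that each 32-byte element must decode to an integer below the BLS12-381 scalar field modulus, so arbitrary bytes cannot simply be copied in 32 at a time. The sketch below shows one simple packing approach, which is an assumption for illustration and not something EIP-4844 mandates: use 31 payload bytes per element and keep the leading byte zero. The helper name `pack_into_blob` is hypothetical, and real rollup encodings also carry length or framing information that this sketch omits.

```python
BYTES_PER_FIELD_ELEMENT = 32
FIELD_ELEMENTS_PER_BLOB = 4096
# Scalar field modulus of BLS12-381 (BLS_MODULUS in the consensus specs).
BLS_MODULUS = 52435875175126190479447740508185965837690552500527637822603658699938581184513

# Assumption: 31 usable bytes per element, so every element is below 2**248.
USABLE_BYTES_PER_ELEMENT = BYTES_PER_FIELD_ELEMENT - 1
USABLE_BYTES_PER_BLOB = USABLE_BYTES_PER_ELEMENT * FIELD_ELEMENTS_PER_BLOB


def pack_into_blob(payload: bytes) -> bytes:
    """Hypothetical helper: zero-pad the payload and spread it over one blob."""
    if len(payload) > USABLE_BYTES_PER_BLOB:
        raise ValueError("payload does not fit in a single blob")
    padded = payload.ljust(USABLE_BYTES_PER_BLOB, b"\x00")
    blob = bytearray()
    for i in range(0, USABLE_BYTES_PER_BLOB, USABLE_BYTES_PER_ELEMENT):
        # A leading zero byte keeps the big-endian integer below the modulus.
        blob += b"\x00" + padded[i:i + USABLE_BYTES_PER_ELEMENT]
    return bytes(blob)


blob = pack_into_blob(b"rollup batch bytes")
elements = [blob[i:i + 32] for i in range(0, len(blob), 32)]
assert all(int.from_bytes(e, "big") < BLS_MODULUS for e in elements)
print(len(blob), USABLE_BYTES_PER_BLOB)  # 131072 126976
```

Under this packing, the usable payload per blob is slightly smaller than the raw blob size, which is worth remembering when estimating how many blobs a batch needs.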

Still, the right first intuition is simpler than the algebra: a blob is a large data payload attached to a transaction, but attached in a special way. The transaction includes references to the blob through cryptographic commitments and versioned hashes. The full data itself travels through the consensus/networking path, not through ordinary execution as calldata.

This leads to the most important misunderstanding to avoid: a blob is not calldata with a discount. The EVM cannot simply inspect blob contents. Contracts can access the commitment-derived identifier, and Ethereum adds a precompile for KZG point evaluation verification, but the raw blob bytes are outside normal EVM execution access.

That constraint is not a bug. It is the mechanism that allows blobs to be cheaper.

How do blob-carrying transactions (EIP-2718) work?

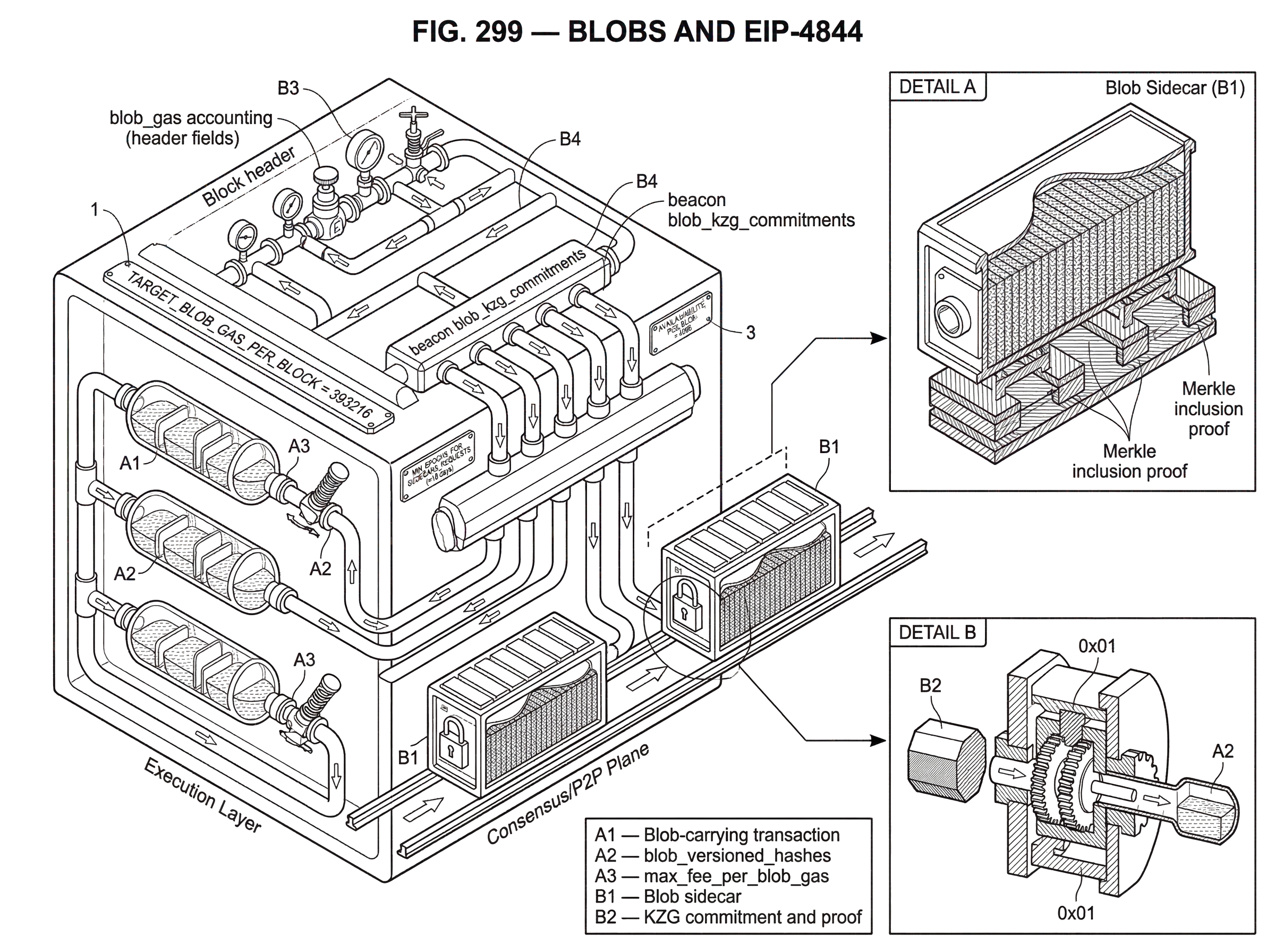

EIP-4844 introduces a new EIP-2718 transaction type, often called a blob transaction or blob-carrying transaction. Conceptually, start with an EIP-1559-style transaction, then add the pieces needed to pay for blob space and bind the transaction to specific blobs.

Two additions matter most. The first is max_fee_per_blob_gas, which is the sender’s fee cap for blob space. The second is blob_versioned_hashes, which are commitment-derived identifiers for the blobs associated with the transaction. These hashes are how the execution layer knows which blobs the transaction claims, without needing the blob contents themselves during EVM execution.
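To make the shape concrete, here is a minimal sketch of the transaction fields as a Python dataclass. The field names follow the payload described in EIP-4844 as best understood here; the class itself is illustrative only and does no RLP encoding, signing, or validation. The sample values are placeholders, not real transaction data.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

BLOB_TX_TYPE = 0x03  # EIP-2718 type byte used for blob transactions


@dataclass
class BlobTransaction:
    # EIP-1559-style fields
    chain_id: int
    nonce: int
    max_priority_fee_per_gas: int
    max_fee_per_gas: int
    gas_limit: int
    to: bytes  # 20-byte address; blob transactions cannot create contracts
    value: int
    data: bytes
    access_list: List[Tuple[bytes, List[bytes]]] = field(default_factory=list)
    # Blob-specific additions
    max_fee_per_blob_gas: int = 0
    blob_versioned_hashes: List[bytes] = field(default_factory=list)


# Placeholder values for illustration only.
tx = BlobTransaction(
    chain_id=1, nonce=0, max_priority_fee_per_gas=1, max_fee_per_gas=30,
    gas_limit=21_000, to=bytes(20), value=0, data=b"",
    max_fee_per_blob_gas=10,
    blob_versioned_hashes=[b"\x01" + bytes(31)],  # not a real versioned hash
)
print(len(tx.blob_versioned_hashes), "blob reference(s)")
```

Note what is absent: the blob bytes themselves. The transaction that the execution layer processes carries only the references and the fee cap.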

There is a nice separation of roles here. The execution layer validates the transaction format, fee rules, and commitment references. The consensus layer and p2p layer handle the actual blob payloads and their availability. The transaction, in other words, carries the right to consume blob capacity plus the references that bind it to actual data.

A useful worked example is a rollup batch submission. Imagine a rollup sequencer compresses a batch of L2 transactions into a data payload. Under the pre-4844 world, it would place that payload in calldata, pay calldata-related costs, and permanently inject those bytes into Ethereum’s execution history. Under EIP-4844, the sequencer instead packages the batch into one or more blobs, computes KZG commitments for them, derives versioned hashes from those commitments, and creates a blob transaction that names those hashes and offers a fee for blob gas. Ethereum includes the transaction in a block, and the corresponding blobs are propagated as sidecars alongside the block. Contracts can later reason about the commitment identifiers and proofs, but they never receive the full blob bytes as normal transaction input. The rollup got what it needed (network-available batch data for a bounded time window) without buying permanent EVM-visible calldata.

Why does EIP-4844 use a separate blob‑gas market?

| Gas type | Priced for | Price dynamics | Charged per | Best avoids | Block target |
|---|---|---|---|---|---|
| Execution gas | Execution & state pressure | EIP-1559 base-fee | Per-opcode / calldata | Mispricing execution demand | EL gas target |
| Blob gas | Short-term data availability | Independent EIP-1559-like | Per-blob capacity | Cross-resource distortion | ≈3 blobs per block |

If blobs are solving a different problem than ordinary execution, they should not be priced exactly the same way. That is the reason for blob gas.

EIP-4844 introduces a new gas dimension, independent from normal execution gas. Blob gas follows its own EIP-1559-like pricing rule. The block header is extended with blob_gas_used and excess_blob_gas, which let the protocol adjust blob pricing based on recent usage relative to a target.

This separate market matters because Ethereum is serving two scarce resources at once. Ordinary gas prices execution and state-access pressure. Blob gas prices short-term data-availability bandwidth. Those pressures are related only loosely, so forcing them into one fee market would distort both.

The specification chooses conservative initial limits. The target corresponds to about 3 blobs per block, roughly 0.375 MB, and the maximum corresponds to 6 blobs per block, roughly 0.75 MB. In parameter terms, TARGET_BLOB_GAS_PER_BLOCK = 393216 and MAX_BLOB_GAS_PER_BLOCK = 786432. The consensus layer also sets MAX_BLOBS_PER_BLOCK = 6.
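The pricing rule can be sketched in a few lines. The target and maximum come from the text above; `GAS_PER_BLOB`, `MIN_BASE_FEE_PER_BLOB_GAS`, and `BLOB_BASE_FEE_UPDATE_FRACTION` are the values recalled from EIP-4844's initial deployment and should be checked against the EIP before being relied on. The functions mirror the EIP's pseudocode in spirit, taking plain integers rather than header objects.

```python
GAS_PER_BLOB = 2**17                      # 131072 blob gas per blob
TARGET_BLOB_GAS_PER_BLOCK = 393216
MAX_BLOB_GAS_PER_BLOCK = 786432
MIN_BASE_FEE_PER_BLOB_GAS = 1             # wei (assumed initial value)
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477   # assumed initial value


def calc_excess_blob_gas(parent_excess_blob_gas: int, parent_blob_gas_used: int) -> int:
    """Carry forward usage above the target; never go below zero."""
    total = parent_excess_blob_gas + parent_blob_gas_used
    return max(total - TARGET_BLOB_GAS_PER_BLOCK, 0)


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator)."""
    i = 1
    output = 0
    term = factor * denominator
    while term > 0:
        output += term
        term = (term * numerator) // (denominator * i)
        i += 1
    return output // denominator


def base_fee_per_blob_gas(excess_blob_gas: int) -> int:
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )


# The limits correspond to 3 and 6 blobs per block.
print(TARGET_BLOB_GAS_PER_BLOCK // GAS_PER_BLOB, MAX_BLOB_GAS_PER_BLOCK // GAS_PER_BLOB)

# 100 consecutive full blocks accumulate excess and push the blob base fee up.
excess = 0
for _ in range(100):
    excess = calc_excess_blob_gas(excess, MAX_BLOB_GAS_PER_BLOCK)
print(excess, base_fee_per_blob_gas(excess))
```

Blocks that use exactly the target leave `excess_blob_gas` unchanged, blocks above it raise the fee exponentially, and blocks below it let the fee decay back toward the minimum.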

Those numbers are not the final intended endpoint for Ethereum’s scaling roadmap. They are deliberately a stop-gap design: enough new capacity to help rollups materially, but not so much that the network suddenly absorbs an untested jump in bandwidth and validation burden. The spec is explicit that this is a forward-compatible step toward fuller sharded or sampling-based data-availability designs.

The pricing mechanism also changes rollup behavior. Blob fees are charged per blob capacity consumed, not as fine-grained permanent calldata storage. That makes batching strategy important. If a rollup has enough demand to fill blobs efficiently, blob posting tends to make strong economic sense. If it has sparse demand, the tradeoff becomes more subtle because waiting to fill a blob adds latency. That is one reason blob adoption is especially natural for busy rollups.
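A small calculation shows why fill level matters. The sketch assumes a blob is paid for in full regardless of how much of it carries useful payload, and for simplicity it ignores encoding overhead by treating all 131,072 bytes as usable. The function name `cost_per_payload_byte` is hypothetical.

```python
GAS_PER_BLOB = 2**17      # blob gas charged per blob
BYTES_PER_BLOB = 131072   # raw blob size in bytes


def cost_per_payload_byte(payload_bytes: int, base_fee_per_blob_gas: int) -> float:
    """Blob fee in wei divided by the number of useful bytes posted."""
    if payload_bytes <= 0:
        raise ValueError("payload must be non-empty")
    blobs_needed = -(-payload_bytes // BYTES_PER_BLOB)  # ceiling division
    total_fee = blobs_needed * GAS_PER_BLOB * base_fee_per_blob_gas
    return total_fee / payload_bytes


# Same blob base fee, different fill levels.
for fill in (1.0, 0.5, 0.1):
    payload = int(BYTES_PER_BLOB * fill)
    print(f"{fill:.0%} full: {cost_per_payload_byte(payload, 1):.2f} wei per byte")
```

A half-full blob costs twice as much per useful byte as a full one, which is the latency-versus-cost tradeoff a low-volume rollup has to weigh.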

How do KZG commitments bind blobs without exposing their bytes?

| Commitment scheme | Proof size | EVM verification | Trusted setup | Best for | Proof property |
|---|---|---|---|---|---|
| KZG commitment | Constant, small | Point-eval precompile | Requires trusted setup | Compact evaluation proofs | Polynomial binding |
| Merkle (hash-based) commitment | Logarithmic, larger | Hash checks in EVM | No trusted setup | General inclusion proofs | Position-binding only |

The reason Ethereum can keep blob data out of the EVM while still binding transactions to it is KZG commitments. A KZG commitment is a compact cryptographic commitment to structured data; here, data interpreted as a polynomial over a finite field.

The key property is this: from the commitment, you can later verify a claim like “this committed blob evaluates to value y at point z,” given an appropriate proof, without seeing or reprocessing the entire blob. That is what lets Ethereum carry a short commitment inside normal protocol objects while keeping the large blob itself on a separate path.

This is where the fixed blob structure becomes meaningful. The blob’s 4096 field elements correspond to a polynomial in evaluation form. The Deneb polynomial-commitments spec says these polynomials are handled in Lagrange form and interpreted with bit-reversal conventions. Those details matter to implementers and library authors, but the conceptual point is simpler: Ethereum needs a commitment scheme whose proofs are small and efficient enough to be practical at block-production scale.
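For readers curious what the bit-reversal convention means in practice, the sketch below reorders a sequence so that the element at index i moves to the index whose binary digits are those of i reversed. It is modeled on the helper of the same name in the polynomial-commitments spec as recalled here, shown on an 8-element list rather than a 4096-element blob.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def reverse_bits(n: int, order: int) -> int:
    """Reverse the low log2(order) bits of n; order must be a power of two."""
    assert order > 1 and order & (order - 1) == 0
    width = order.bit_length() - 1
    return int(format(n, f"0{width}b")[::-1], 2)


def bit_reversal_permutation(sequence: Sequence[T]) -> List[T]:
    """Return a copy of sequence in bit-reversed index order."""
    size = len(sequence)
    return [sequence[reverse_bits(i, size)] for i in range(size)]


print(bit_reversal_permutation(list(range(8))))  # [0, 4, 2, 6, 1, 5, 3, 7]
```

Applying the permutation twice returns the original order, which is a handy sanity check when testing an implementation.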

EIP-4844 adds a point-evaluation precompile at a fixed address so contracts can verify KZG evaluation claims against commitments. This does not expose blob bytes to the EVM. It exposes a narrower capability: verify a mathematically precise statement about committed data.
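The sketch below illustrates the shape of a call to that precompile, based on the input layout recalled from EIP-4844: a 192-byte input made of the versioned hash, the point z, the claimed value y, the commitment, and the proof. Treat the address and layout as assumptions to verify against the EIP. The helper names are hypothetical, the byte strings are placeholders rather than valid curve points, and the actual KZG pairing check is deliberately not implemented; only the structural checks are shown.

```python
import hashlib

POINT_EVALUATION_PRECOMPILE_ADDRESS = 0x0A  # assumed fixed address from the EIP
VERSIONED_HASH_VERSION_KZG = b"\x01"


def build_point_evaluation_input(commitment: bytes, z: int, y: int, proof: bytes) -> bytes:
    """Concatenate versioned_hash | z | y | commitment | proof (192 bytes)."""
    assert len(commitment) == 48 and len(proof) == 48
    versioned_hash = VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]
    return versioned_hash + z.to_bytes(32, "big") + y.to_bytes(32, "big") + commitment + proof


def structural_precheck(call_input: bytes) -> bool:
    """Length and hash-consistency checks only; no pairing check is performed."""
    if len(call_input) != 192:
        return False
    versioned_hash = call_input[:32]
    commitment = call_input[96:144]
    expected = VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]
    return versioned_hash == expected


# Placeholder bytes, not real KZG points.
call_input = build_point_evaluation_input(bytes(48), z=5, y=7, proof=bytes(48))
print(len(call_input), structural_precheck(call_input))  # 192 True
```

The point of the layout is that a contract supplies a commitment and a claim about one evaluation, never the blob itself.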

That distinction is worth holding onto. The commitment gives Ethereum a compact handle on the data. The proof system gives Ethereum a way to check claims about that data. Neither turns blobs into normal contract-readable storage.

What are versioned hashes and how do they reference blob commitments?

Execution-layer logic does not directly manipulate KZG commitments as the primary identifier. Instead, commitments are converted into versioned hashes.

In Deneb, the helper kzg_commitment_to_versioned_hash takes a KZG commitment, hashes it with SHA-256, and replaces the first byte of the digest with a version byte indicating the commitment scheme, so the result is still 32 bytes. For KZG-derived blob references, the version byte is 0x01. This versioning matters because it keeps the representation forward-compatible. A versioned hash is not merely “some digest”; it is a digest tagged with the scheme used to produce it.
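In Python, the helper is essentially a one-liner. This sketch follows the spec's definition as recalled here: SHA-256 of the 48-byte commitment, with the first byte of the digest replaced by the version byte, giving a 32-byte result. The sample commitment is placeholder bytes, not a valid curve point.

```python
import hashlib

VERSIONED_HASH_VERSION_KZG = b"\x01"


def kzg_commitment_to_versioned_hash(kzg_commitment: bytes) -> bytes:
    """Version byte followed by the last 31 bytes of SHA-256(commitment)."""
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(kzg_commitment).digest()[1:]


placeholder_commitment = bytes(48)  # illustrative only
versioned_hash = kzg_commitment_to_versioned_hash(placeholder_commitment)
print(len(versioned_hash), hex(versioned_hash[0]))  # 32 0x1
```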

This helps separate fundamental design from convention. The fundamental requirement is that Ethereum must bind a transaction to specific committed blobs in a way the execution layer can validate. The choice to do that via versioned hashes, rather than exposing raw commitment objects everywhere, is a convention that improves upgrade flexibility and scheme identification.

So when a blob transaction carries blob_versioned_hashes, it is carrying execution-layer references to blob commitments. The execution engine validates those references, while the consensus layer ensures the actual blobs and commitments line up.

Why are blobs propagated as sidecars instead of inside the block body?

A natural question is: if blobs are part of block production, why not just put them directly inside the block body?

The answer is future compatibility and bandwidth discipline. In Deneb, beacon blocks reference blob commitments, but the full blobs are propagated separately as blob sidecars. A BlobSidecar includes the blob, its KZG commitment, its KZG proof, a signed block header, and a Merkle inclusion proof showing that the commitment belongs to the block body.
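The Merkle inclusion proof is the piece that ties a standalone sidecar back to a specific block. The sketch below shows the general branch-verification technique on a toy four-leaf SHA-256 tree. The real proof runs over the SSZ Merkleization of the beacon block body at a fixed depth, which this example does not reproduce; the leaf values are placeholders.

```python
import hashlib
from typing import Sequence


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def is_valid_merkle_branch(leaf: bytes, branch: Sequence[bytes], depth: int,
                           index: int, root: bytes) -> bool:
    """Recompute the root from a leaf and its sibling hashes, bottom to top."""
    value = leaf
    for i in range(depth):
        if (index >> i) & 1:
            value = sha256(branch[i] + value)   # current node is a right child
        else:
            value = sha256(value + branch[i])   # current node is a left child
    return value == root


# Toy tree with four placeholder leaves.
leaves = [sha256(bytes([i])) for i in range(4)]
n01 = sha256(leaves[0] + leaves[1])
n23 = sha256(leaves[2] + leaves[3])
root = sha256(n01 + n23)

# Proof that leaves[2] sits at index 2: its sibling, then the opposite subtree.
proof = [leaves[3], n01]
print(is_valid_merkle_branch(leaves[2], proof, depth=2, index=2, root=root))  # True
```

A node that receives a sidecar can run a check of this kind against the signed block header it already trusts, without having the full block body in hand.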

That design can seem awkward at first, but it solves a real architectural problem. If the block body had to permanently carry the full blob payload in the same way as ordinary data, Ethereum would be baking in a “download everything” model. Sidecars instead make blob availability a more modular subsystem. Today, nodes can download full blobs. Later, the protocol can evolve toward data-availability sampling without redesigning the core beacon-block format.

The p2p rules reflect this separation. Blob sidecars are gossiped on dedicated subnet topics, and nodes validate them before forwarding: the blob index must be in range, the sidecar must map to the correct subnet, the proposer signature and commitment inclusion proof must be valid, and the KZG proof must check out. There are also request-response protocols to retrieve sidecars by range or by block root and index.
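Those forwarding rules can be summarized as a short checklist in code. This is a simplified sketch: `SidecarSummary` is a hypothetical stand-in for a received sidecar, and the three boolean fields represent the results of the signature, inclusion-proof, and KZG checks, which a real client would compute. `BLOB_SIDECAR_SUBNET_COUNT = 6` is the Deneb value as recalled here.

```python
from dataclasses import dataclass

MAX_BLOBS_PER_BLOCK = 6
BLOB_SIDECAR_SUBNET_COUNT = 6  # assumed Deneb value


def compute_subnet_for_blob_sidecar(blob_index: int) -> int:
    return blob_index % BLOB_SIDECAR_SUBNET_COUNT


@dataclass
class SidecarSummary:
    """Hypothetical trimmed view of a sidecar plus precomputed check results."""
    index: int
    received_on_subnet: int
    proposer_signature_ok: bool
    inclusion_proof_ok: bool
    kzg_proof_ok: bool


def should_forward(sidecar: SidecarSummary) -> bool:
    """Apply the checks in roughly cheapest-first order."""
    if not 0 <= sidecar.index < MAX_BLOBS_PER_BLOCK:
        return False
    if compute_subnet_for_blob_sidecar(sidecar.index) != sidecar.received_on_subnet:
        return False
    return (sidecar.proposer_signature_ok
            and sidecar.inclusion_proof_ok
            and sidecar.kzg_proof_ok)


good = SidecarSummary(index=2, received_on_subnet=2, proposer_signature_ok=True,
                      inclusion_proof_ok=True, kzg_proof_ok=True)
print(should_forward(good))  # True
```

Running the cheap range and subnet checks first means malformed sidecars are dropped before any expensive cryptographic verification.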

This is the heart of the “ephemeral but available” promise. The network does real work to distribute and validate blobs while they matter, but it does not pretend that every ordinary node must store them indefinitely.

How long are blob sidecars available and what does 'ephemeral' mean?

People often hear that blob data is temporary and infer that it is unreliable. That is too crude.

Blob data is temporary in the sense that Ethereum does not commit to preserving it forever in ordinary node storage. The consensus specifications define a minimum serve window for blob sidecars, MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 4096, which is commonly described as about 18 days. During that window, blobs are expected to be available across the network. Afterward, they may be pruned.
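The "about 18 days" figure follows directly from the epoch count, assuming mainnet timing of 32 slots per epoch and 12 seconds per slot:

```python
MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 4096
SLOTS_PER_EPOCH = 32     # mainnet value
SECONDS_PER_SLOT = 12    # mainnet value

window_seconds = MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS * SLOTS_PER_EPOCH * SECONDS_PER_SLOT
window_days = window_seconds / 86_400

print(window_seconds)          # 1572864
print(round(window_days, 1))   # 18.2
```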

The key question is not “is it permanent?” but “is it available long enough for the security model that depends on it?” For rollups, the answer is often yes. Ethereum.org’s Dencun FAQ notes that rollup withdrawal periods are typically around 7 days, so an availability window around 18 days is intended to exceed the period during which challengers or verifiers need the data.

That does not mean historical blob access is impossible. It means it is no longer guaranteed by default from ordinary nodes. If a rollup, archive provider, explorer, or research tool wants longer retention, it can store blobs externally. In practice, that opens a market and tooling layer around blob archiving, rather than forcing every Ethereum node to act as a permanent warehouse for rollup batch data.

How does EIP-4844 change execution vs. consensus layer responsibilities?

EIP-4844 is a good example of how Ethereum’s execution layer and consensus layer divide responsibilities.

On the execution layer, new transaction rules, header fields, and fee accounting are added. Clients must validate blob transactions, enforce fee caps such as the minimum blob fee requirement, check versioned-hash structure, and track blob_gas_used and excess_blob_gas in payloads and headers.
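A minimal sketch of the blob-related checks an execution client performs might look like the following. It is modeled loosely on the validity conditions in EIP-4844 as recalled here, not taken from any client's code; the function name `validate_blob_fields` is hypothetical, and all the ordinary transaction checks (signature, nonce, balance, execution gas) are left out.

```python
from typing import List, Tuple

VERSIONED_HASH_VERSION_KZG = 0x01
GAS_PER_BLOB = 2**17
MAX_BLOB_GAS_PER_BLOCK = 786432


def validate_blob_fields(max_fee_per_blob_gas: int,
                         blob_versioned_hashes: List[bytes],
                         base_fee_per_blob_gas: int) -> Tuple[int, int]:
    """Return (blob_gas_used, blob_fee_in_wei) or raise ValueError."""
    if not blob_versioned_hashes:
        raise ValueError("a blob transaction must reference at least one blob")
    for h in blob_versioned_hashes:
        if len(h) != 32 or h[0] != VERSIONED_HASH_VERSION_KZG:
            raise ValueError("malformed versioned hash")
    blob_gas = GAS_PER_BLOB * len(blob_versioned_hashes)
    if blob_gas > MAX_BLOB_GAS_PER_BLOCK:
        raise ValueError("transaction exceeds the per-block blob gas limit")
    if max_fee_per_blob_gas < base_fee_per_blob_gas:
        raise ValueError("blob fee cap is below the current blob base fee")
    return blob_gas, blob_gas * base_fee_per_blob_gas


# Two placeholder hashes, blob base fee of 3 wei, fee cap of 10 wei.
hashes = [b"\x01" + bytes(31)] * 2
print(validate_blob_fields(10, hashes, 3))  # (262144, 786432)
```

Everything here operates on 32-byte references and integers. At no point does the execution-side logic touch blob contents.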

On the consensus layer, beacon blocks gain blob_kzg_commitments, and the p2p system gains the sidecar machinery that actually transports blob data. The Engine API between consensus and execution also changes so the execution engine receives versioned_hashes and the parent_beacon_block_root in payload processing.

This division explains an easy-to-miss fact: the execution layer does not store blob contents long-term. It mainly reasons about references and accounting. The consensus/networking side is what ensures recent blob availability. If you blur those roles together, the protocol can seem more confusing than it is.

How do rollups use blobs in production?

The primary users of blobs are rollups. They need to publish compressed batch data to Ethereum so that their state transitions can be checked, reconstructed, challenged, or proven. Blob transactions give them a cheaper channel for doing exactly that.

This is not merely theoretical. Ethereum’s own upgrade messaging around Dencun framed EIP-4844 as a way to reduce L2 transaction fees, and rollup systems adopted support accordingly. Arbitrum, for example, upgraded ArbOS to support posting L2 batches as blobs on L1 Ethereum. The notable point is not the brand name but the pattern: rollups can adopt blobs at the batch-posting layer without requiring every application on the rollup to change its own contract logic.

That is another sign that EIP-4844 is infrastructure, not an application feature. Users often experience it indirectly, as lower L2 costs or different rollup economics, rather than as a new wallet interaction on L1.

What assumptions and risks underlie EIP-4844's design?

The most obvious dependency is the trusted setup behind KZG commitments. KZG is efficient, but it is not transparent in the cryptographic sense; it requires structured setup parameters. Ethereum ran a large public KZG ceremony to generate the foundation used by EIP-4844, and the resulting transcript is published and verifiable. The security assumption is not “everyone in the ceremony was honest.” It is much weaker: the setup is secure if at least one contribution was honestly generated and its secret discarded.

That is a real assumption, not a fiction to be hand-waved away. The protocol chose KZG because the efficiency tradeoff was attractive enough to justify the setup ceremony. If Ethereum had insisted on avoiding trusted setup entirely, the design space would have looked different.

There are also implementation assumptions. Cryptographic libraries must deserialize points correctly, validate proofs consistently, and handle malformed inputs safely. The KZG implementations used for EIP-4844 were audited, and those audits did find issues, including upstream library and binding-level problems. That does not mean the design is broken; it means cryptographic plumbing is consensus-critical and easy to get subtly wrong.

Finally, there is a roadmap assumption. EIP-4844 is often called proto-danksharding because it is not the end state. Today, nodes still download full blobs to ensure availability. The longer-term plan involves data-availability sampling, where nodes can verify availability by sampling small pieces rather than downloading everything. Sidecars and commitment-based design are intentionally structured so that this future transition is possible.

So EIP-4844 should be understood as a carefully chosen intermediate point: immediately useful, mechanically real, but not the final scaling architecture.

What EIP-4844 does not solve or guarantee

It does not make arbitrary onchain storage cheap. Blob data is not a general-purpose database for contracts.

It does not directly reduce ordinary L1 execution fees in a guaranteed way. Blob space has its own fee market, and the main intended benefit is lower rollup posting cost, which can flow through to lower L2 fees.

It does not yet implement full data-availability sampling. All beacon nodes still need to handle blob availability more directly than they would in a mature DAS design.

And it does not remove the need for off-chain archival if someone wants old blob data beyond the protocol’s retention window. That is a feature of the design, not an accidental omission.

Conclusion

Blobs and EIP-4844 make sense once you separate data availability from permanent execution-layer storage. Rollups need Ethereum to guarantee that batch data is available when it matters; they do not need every byte to be forever embedded in calldata and exposed to the EVM.

EIP-4844 turns that insight into protocol mechanics: blob-carrying transactions, a separate blob-gas market, KZG commitments and proofs, and consensus-layer sidecars that carry temporary data. It lowers the cost of publishing rollup data today while preparing Ethereum for more scalable data-availability designs tomorrow.

If you remember one sentence, make it this: EIP-4844 gives Ethereum a cheaper lane for temporary, verifiable data, because availability, not permanence, is what rollups mostly need.


Frequently Asked Questions

Why are blobs cheaper than calldata for rollups?

Blobs are cheaper because they provide temporary, consensus-layer-available data rather than permanent EVM calldata; Ethereum therefore charges for short-term blob bandwidth in a separate blob-gas market and avoids baking those bytes into permanent execution-layer storage. This separation reduces the cost rollups pay to publish batch data when they only need availability for a bounded challenge window.

Can smart contracts read blob data directly?

No. The EVM cannot read raw blob bytes; contracts only see commitment-derived identifiers (versioned hashes) and can use a KZG point-evaluation precompile to verify specific claims about committed data without obtaining the full blob.

How long is blob data kept by the network?

Blob sidecars are stored and served for at least MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 4096 epochs (commonly described as about 18 days), after which ordinary nodes are not required to retain them; longer-term archival is not guaranteed by default.

Does EIP-4844 provide permanent storage for blob data?

No. EIP-4844 does not provide permanent archival; blobs are intentionally ephemeral and may be pruned after the serve window, so rollups or third parties that need historical access must run their own archival storage or pay archive providers.

Does EIP-4844 depend on a trusted setup?

KZG commitments require a trusted setup produced via a public ceremony; the protocol’s security assumes at least one honest contributor discarded their secret, so the setup is a real trust assumption and not purely transparent. The ceremony transcript exists and was published, but the trusted-setup nature and its distribution remain a noted caveat.

Why do blobs travel as sidecars instead of inside the block body?

Blobs travel as separate blob sidecars rather than being embedded in the block body so the core block format remains compact and upgradeable; sidecars let nodes download full blobs today and let the protocol later move to sampling-based availability without changing the beacon-block format. This modular sidecar design also scopes bandwidth and validation work to the networking/consensus layer.

How does the blob-gas fee market work, and what are its limits?

Blob gas is a distinct EIP-1559-style fee market with a target sized for roughly 3 blobs per block (~0.375 MB) and a maximum of 6 blobs per block (~0.75 MB); these conservative limits (TARGET_BLOB_GAS_PER_BLOCK = 393216, MAX_BLOB_GAS_PER_BLOCK = 786432, MAX_BLOBS_PER_BLOCK = 6) let the protocol tune blob pricing separately from execution gas.

Is EIP-4844 the final data-availability design for Ethereum?

No. EIP-4844 is explicitly a proto-danksharding step, not the final data-availability architecture; the protocol is designed to be forward-compatible with full data-availability sampling (DAS) but currently still requires nodes to download blobs and leaves DAS integration as future work.

Why do implementation audits matter for EIP-4844?

Implementation and audit work matters because subtle bugs in KZG libraries or bindings have been found; auditors discovered issues (including upstream deserialization bugs), fixes were proposed, and the audit reports and repositories note remaining questions about propagation of fixes and exact client adoption.
