What is a Sidechain?

Learn what a sidechain is, how two-way pegs and bridges work, why sidechains help scaling, and how their security differs from rollups.

Introduction

A sidechain is a blockchain that runs separately from a main chain but can interact with it. The idea is attractive because it seems to offer the best of both worlds: keep the base chain stable and conservative, while moving activity to another chain that can be faster, cheaper, or more flexible. But the central fact to understand is this: a sidechain is not just “the same chain, somewhere else.” It is a different chain with different rules, different operators, and often a different security model.

That distinction is why sidechains matter in scaling, and also why they are often misunderstood. If you move an asset from a main chain to a sidechain, what actually happened? Was the original asset locked somewhere? Who is allowed to unlock it later? What proves that a deposit on one chain really occurred before assets are released on the other? Those questions are not implementation details. They are the mechanism.

At a high level, sidechains exist because base chains are intentionally hard to change. Bitcoin and Ethereum secure large amounts of value, so every protocol change is costly, slow, and contentious. A separate chain gives builders room to try different block times, privacy systems, scripting rules, fee models, or application environments without forcing those choices onto the base chain. The sidechain can specialize, while the base chain remains relatively simple.

The price of that freedom is that the sidechain must secure itself, or must rely on some bridge or federation or proof system to convince the main chain about what happened elsewhere. That is the governing tradeoff behind nearly every sidechain design. Sidechains expand the design space, but they do not inherit security by default.

How do sidechains move value without creating duplicate assets?

The problem a sidechain solves is easy to state. Suppose a main chain is expensive, congested, or intentionally limited in functionality. You want cheaper transfers, confidential transactions, different virtual machine rules, or faster finality. Starting a totally new chain is possible, but then you must bootstrap a new asset, new liquidity, new users, and new trust. A sidechain tries to avoid that bootstrapping problem by letting existing assets move onto another chain and come back later.

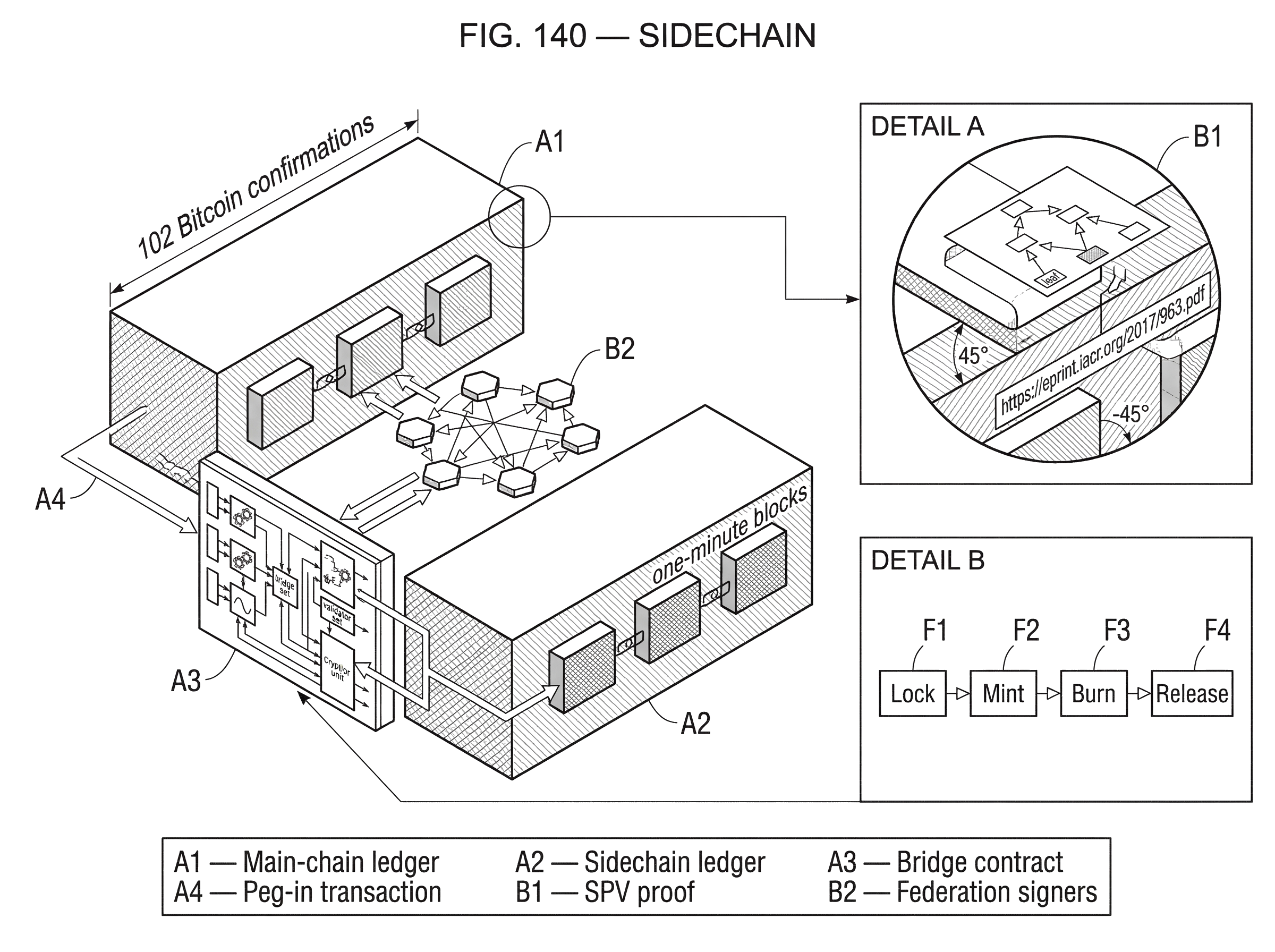

The key invariant is that the same economic value should not exist twice. If 1 BTC moves from Bitcoin to a Bitcoin sidechain, the goal is not to create extra bitcoin-like value out of nowhere. Instead, the bitcoin on Bitcoin is locked or otherwise encumbered, and a corresponding representation becomes usable on the sidechain. When the user wants to return, the sidechain representation is destroyed or redeemed, and the locked coins on the main chain are released.

That is why many sidechain explanations revolve around the phrase two-way peg. In the Blockstream sidechains paper, a two-way peg is the mechanism for transferring coins between chains at a deterministic exchange rate. Mechanically, this works by creating a transaction on the sending chain that locks assets, then creating a transaction on the receiving chain whose inputs include proof that the lock happened correctly. The sidechain asset is therefore not meant to be a free-floating IOU with arbitrary supply. It is meant to track an asset that has been immobilized somewhere else.
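
The lock-and-mint, burn-and-release bookkeeping described above can be sketched as a toy ledger. This is a minimal illustration, not any real peg implementation: the `TwoWayPeg` class, its field names, and the boolean `proof_ok` flags (standing in for whatever proof or attestation a real design checks) are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class TwoWayPeg:
    """Toy model of a 1:1 peg between a main chain and a sidechain."""
    locked_on_main: int = 0   # units immobilized on the main chain
    minted_on_side: int = 0   # units circulating on the sidechain
    claimed_deposits: set = field(default_factory=set)  # deposit IDs already used

    def peg_in(self, deposit_id: str, amount: int, proof_ok: bool) -> None:
        """Mint on the sidechain only against a new, proven main-chain lock."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if not proof_ok:
            raise ValueError("lock proof rejected")
        if deposit_id in self.claimed_deposits:
            raise ValueError("deposit already claimed")  # blocks double minting
        self.claimed_deposits.add(deposit_id)
        self.locked_on_main += amount
        self.minted_on_side += amount

    def peg_out(self, amount: int, burn_proof_ok: bool) -> None:
        """Release main-chain funds only against a proven sidechain burn."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if not burn_proof_ok:
            raise ValueError("burn proof rejected")
        if amount > self.minted_on_side:
            raise ValueError("cannot burn more than was minted")
        self.minted_on_side -= amount
        self.locked_on_main -= amount

    def invariant_holds(self) -> bool:
        """The same economic value must never exist twice."""
        return self.locked_on_main == self.minted_on_side
```

Everything interesting in a real system hides behind the `proof_ok` flags: the ledger arithmetic is trivial, while deciding whether a lock or burn really happened is the hard part.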

This is the first point many readers miss: a sidechain is not defined by speed or lower fees. It is defined by independent execution plus some method of validating facts from another chain. The exact validation method is what separates trust-minimized designs from more trusted ones.

How does a sidechain transfer (peg-in and peg-out) actually work?

| Method | Verifier on target | Trust model | Finality speed | Main risk |
|---|---|---|---|---|
| SPV proof | Header + Merkle proof | Relies on source PoW majority | Moderate (confirmations) | Deep reorg / majority attack |
| Succinct proofs (NIPoPoW/zk) | Compact proof verifier | Inherits source consensus assumptions | Faster (short proofs) | Parameter / incomplete proofs |
| Federation (multisig) | Functionary signatures | Trust in named operators | Fast (near instant) | Custodian compromise |
| Miner/validator voting | Mainchain miner votes | Depends on miner incentives | Slow (long withdrawal delay) | Miner collusion risk |

Consider the simplest useful example: moving bitcoin from Bitcoin onto a sidechain. A user sends BTC to a special output on Bitcoin that the sidechain recognizes as a peg-in. That Bitcoin output is not spendable in the ordinary way by the user anymore; it is locked according to the peg design. The sidechain then needs evidence that this deposit really occurred and is sufficiently final. Once it accepts that evidence, it creates or unlocks the corresponding sidechain asset, often called something like sBTC or, in Liquid’s case, LBTC.

Now imagine the return journey. The user destroys or withdraws the sidechain asset according to sidechain rules. Then some mechanism on Bitcoin must become convinced that this burn or withdrawal request on the sidechain is legitimate, final, and not already spent. If Bitcoin accepts that proof, the locked BTC can be released back on the main chain.

Notice what makes this difficult. A blockchain normally verifies only its own history. Bitcoin nodes know how to validate Bitcoin blocks, not arbitrary sidechain blocks. Ethereum contracts know Ethereum state, not every other chain’s consensus. So a sidechain system needs some way to import external truth. There are only a few broad ways to do this, and each one shifts the trust boundary differently.

In some designs, the receiving chain verifies a proof derived from the source chain’s consensus. In proof-of-work systems this may use an SPV proof, meaning a proof consisting of block headers showing sufficient proof-of-work plus a Merkle proof showing that a particular transaction was included in one of those blocks. In more advanced proposals, succinct proofs like NIPoPoWs aim to compress this further so the verifier does not need all headers. In other designs, a federation of signers attests that the transfer is valid. In still others, miners or validators vote over time on whether withdrawals should be honored.
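
The Merkle half of an SPV proof can be sketched as follows. This is a simplified illustration using a Bitcoin-style tree (double SHA-256, last node duplicated on odd levels); the function names are made up for the example, and it omits the header and proof-of-work checks that a real SPV verifier also needs.

```python
import hashlib


def dsha256(data: bytes) -> bytes:
    """Double SHA-256, the hash Bitcoin uses for its Merkle trees."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs, duplicating the last node if the level is odd."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [dsha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one leaf")
    level = list(leaves)
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_branch(leaves: list[bytes], index: int) -> list[bytes]:
    """Collect the sibling hashes needed to prove leaves[index] (prover side)."""
    branch = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        branch.append(level[index ^ 1])  # sibling sits at the paired position
        level = _next_level(level)
        index //= 2
    return branch


def verify_inclusion(leaf: bytes, index: int, branch: list[bytes], root: bytes) -> bool:
    """Recompute the root from a leaf and its branch (verifier side)."""
    node = leaf
    for sibling in branch:
        # The low bit of the index says whether we are the right or left child.
        node = dsha256(sibling + node) if index & 1 else dsha256(node + sibling)
        index >>= 1
    return node == root
```

The verifier only needs the leaf, its position, and one sibling hash per tree level, which is why inclusion can be checked without downloading the whole block. It still has to trust that `root` comes from a header with enough proof-of-work behind it.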

The mechanism changes, but the question stays the same: who or what convinces chain B that an event on chain A really happened?

Why use a sidechain instead of launching a new chain or staying on mainnet?

If sidechains introduce extra trust assumptions, why use them? Because they let developers change things that base chains are unwilling or unable to change.

A sidechain can run different consensus, different block intervals, different fee rules, different scripting or smart-contract environments, different privacy features, or different asset models. The Blockstream paper explicitly presents sidechains as a way to experiment with both technical changes, like new transaction formats and privacy primitives, and economic changes, like alternative reward schemes. The reason this matters is not novelty for its own sake. It is that the base chain is constrained by social coordination and by the need to protect existing value.

A concrete example is Liquid. It is a Bitcoin sidechain built on Elements, designed for faster and more confidential transfers of bitcoin and issued assets. Liquid uses one-minute blocks and treats transactions as final after two confirmations. It can do so because blocks are signed by a federation of functionaries, under a model called Strong Federations, rather than mined with Bitcoin’s proof-of-work. That design gives different performance and features than Bitcoin, but it does so by changing the trust and consensus assumptions.

Ethereum-side examples make the same point from another angle. The Ethereum documentation defines sidechains as independent blockchains that run in parallel to Mainnet, connect through two-way bridges, and use their own consensus and block parameters. This is useful when applications want lower fees or custom execution environments without needing changes to Ethereum itself. But Ethereum’s own docs stress the key difference: sidechains derive security separately from Mainnet, whereas rollups derive security from Ethereum.

That contrast is the easiest way to see why sidechains belong in the scaling conversation but are not equivalent to L2s.

What security assumptions and risks do sidechains introduce?

The most important fact about sidechains is also the easiest to state: a sidechain is secured separately from the main chain unless a specific design proves otherwise. If the sidechain has its own validator set, then users depend on that validator set for correctness and liveness. If the bridge is run by guardians, users depend on those guardians and on the correctness of the bridge contracts. If withdrawals are approved by miners over time, users depend on miner incentives and the voting mechanism.

This is why sidechains are often confused with rollups but should not be treated the same. A rollup executes elsewhere but posts enough data or proofs to the base layer so that the base layer can enforce correctness or permit fraud resolution. A sidechain usually does not do that. It communicates with the base chain, but the base chain generally does not re-execute the sidechain or guarantee its state transitions. The sidechain is, in effect, another sovereign chain that happens to be bridged to the first.

That independence creates two kinds of risk.

The first is consensus risk on the sidechain itself. If the sidechain’s validators collude, are bribed, get hacked, or go offline, the sidechain can censor users, freeze, or produce invalid state. Liquid’s own documentation is explicit here: if one-third or more of the functionaries are offline, blocks stop being signed and the chain freezes until quorum returns. That is a very different failure mode from Bitcoin’s proof-of-work liveness profile.
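
The freeze condition can be illustrated with a small quorum calculation. This sketch assumes a generic "strictly more than two-thirds must sign" rule, which matches the one-third-offline behaviour described above; the function names and the 15-member example are illustrative assumptions, not Liquid's actual configuration or code.

```python
def signing_threshold(n: int) -> int:
    """Smallest signer count strictly greater than two-thirds of n."""
    if n <= 0:
        raise ValueError("federation must have at least one member")
    return (2 * n) // 3 + 1


def chain_is_live(n: int, offline: int) -> bool:
    """Blocks keep being signed only while enough members are online."""
    if not 0 <= offline <= n:
        raise ValueError("offline count out of range")
    return n - offline >= signing_threshold(n)


# Hypothetical 15-member federation: find the first outage size that halts it.
first_halting_outage = next(k for k in range(16) if not chain_is_live(15, k))
```

Under this rule a federation halts rather than forks when too many members disappear, which trades liveness for safety.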

The second is bridge or peg risk. Even if the sidechain runs correctly, the transfer mechanism can fail. In a federated peg, the custodians holding the locked assets may misbehave or be compromised. In proof-based pegs, the verification logic may accept fraudulent proofs or may be vulnerable to reorg timing attacks. In wrapped-asset bridge designs, a bug can allow unauthorized minting on the destination chain.

Incidents in the broader interoperability ecosystem make this concrete. Wormhole’s exploit involved bypassing signature verification and minting unbacked wrapped assets. Ronin’s compromise involved control of enough validator keys to forge withdrawals. These are not weird edge cases unrelated to sidechains. They reveal the basic rule: whenever assets move by lock-and-mint or burn-and-release, the security of the verifier is the security of the asset representation.

What trust assumptions underlie SPV-based sidechain pegs?

The appealing version of a sidechain peg is a cryptographic one: no trusted federation, just proofs. In proof-of-work systems, the classic primitive is the SPV proof. An SPV proof shows that a transaction appears in a block, and that the block sits under enough accumulated proof-of-work to be treated as part of the chain.

This sounds trustless, but the trust model is narrower than many people assume. The Blockstream paper is careful on this point: an SPV-based two-way peg has only SPV security. In practice that means the receiving chain trusts that the source chain’s proof-of-work majority is honest enough that a fraudulent history cannot be sustained through the relevant confirmation and contest periods. If an attacker controls a majority of hashpower, or can exploit reorganization windows, they may be able to present misleading proofs.
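
To see why confirmation depth matters, it can help to compute how likely an attacker is to overtake the honest chain from a given number of blocks behind. The sketch below follows the catch-up calculation in the Bitcoin whitepaper (Poisson-distributed attacker progress combined with a gambler's-ruin catch-up term); the function name is an assumption, and the model ignores network latency and other real-world effects.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hashpower share q ever catches up
    from z blocks behind, per the Bitcoin whitepaper's model."""
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must be a fraction between 0 and 1")
    if z < 0:
        raise ValueError("z must be non-negative")
    if q >= 0.5:
        return 1.0  # a majority attacker eventually wins in this model
    p = 1.0 - q
    lam = z * (q / p)  # expected attacker progress while z honest blocks arrive
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


if __name__ == "__main__":
    for depth in (1, 6, 30, 102):
        print(depth, attacker_success_probability(0.10, depth))
```

For a minority attacker the probability falls off exponentially with depth, which is why pegs wait for many confirmations. For a majority attacker no depth is enough, which is the sense in which SPV security inherits the source chain's honest-majority assumption.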

So the mechanism is not “cryptography replaces trust” in the absolute sense. The mechanism is “cryptography reduces the verifier’s workload, while still inheriting the consensus assumptions of the source chain.” That is an important difference.

Researchers have tried to improve the practicality of proof-based verification with succinct systems such as NIPoPoWs, which allow a prover to send only logarithmic-size data rather than an ever-growing header chain. Work on proof-of-work sidechains shows how such succinct proofs can support cross-chain event verification with a submit-proof, collateral, and contestation design. The receiving chain tentatively accepts a proof, anyone can challenge it during a dispute window, and honest participants are economically rewarded for monitoring. This is elegant, but it still depends on honest-majority assumptions and careful parameter choices for confirmation depth, contest period, and collateral.
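
The submit-proof, collateral, and contestation flow can be sketched as a small state machine. This is a simplified illustration under stated assumptions: the `ContestableVerifier` class, its parameters, and the boolean `counter_proof_valid` flag (standing in for real proof comparison) are hypothetical and do not reproduce any specific published protocol.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    FINALIZED = "finalized"
    REJECTED = "rejected"


@dataclass
class Claim:
    submitter: str
    collateral: int
    submitted_at: int  # block height on the receiving chain
    status: Status = Status.PENDING


class ContestableVerifier:
    """Tentatively accept cross-chain claims; let anyone contest them."""

    def __init__(self, contest_period: int, min_collateral: int):
        self.contest_period = contest_period
        self.min_collateral = min_collateral
        self.claims: dict[str, Claim] = {}
        self.payouts: dict[str, int] = {}

    def submit(self, event_id: str, submitter: str, collateral: int, height: int) -> None:
        if event_id in self.claims:
            raise ValueError("event already claimed")
        if collateral < self.min_collateral:
            raise ValueError("insufficient collateral")
        self.claims[event_id] = Claim(submitter, collateral, height)

    def contest(self, event_id: str, challenger: str,
                counter_proof_valid: bool, height: int) -> bool:
        claim = self.claims[event_id]
        if claim.status is not Status.PENDING:
            raise ValueError("claim is no longer pending")
        if height >= claim.submitted_at + self.contest_period:
            raise ValueError("contest window closed")
        if not counter_proof_valid:
            return False  # a failed challenge leaves the claim pending
        claim.status = Status.REJECTED
        # The challenger is paid from the dishonest submitter's collateral.
        self.payouts[challenger] = self.payouts.get(challenger, 0) + claim.collateral
        return True

    def finalize(self, event_id: str, height: int) -> Status:
        claim = self.claims[event_id]
        if (claim.status is Status.PENDING
                and height >= claim.submitted_at + self.contest_period):
            claim.status = Status.FINALIZED
            # An uncontested submitter gets the collateral back.
            self.payouts[claim.submitter] = (
                self.payouts.get(claim.submitter, 0) + claim.collateral)
        return claim.status
```

The design only works if at least one honest watcher is online during every contest window, which is why the period length and the collateral size are security parameters rather than convenience settings.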

In other words, a proof-based sidechain can reduce trust in named intermediaries, but it does not remove assumptions. It shifts them toward source-chain consensus security, timing assumptions, and proof-system correctness.

How do federated sidechains (e.g., Liquid) trade trust for deployability?

Because fully trust-minimized pegs are hard to deploy, many real systems use a federation. Here the peg is not unlocked by the main chain verifying foreign consensus directly. Instead, a designated group signs transactions that custody the main-chain assets or authorize releases.

This is the route Liquid takes. BTC pegged into Liquid becomes LBTC, and each unit is backed by BTC secured by network functionaries. Those functionaries have two roles: they sign Liquid blocks and they act as watchmen over the bitcoin backing the sidechain asset. Bitcoin itself does not natively verify Liquid consensus. The peg works because the federation manages the custody and release process.

The advantage is practical deployment. You do not need to change Bitcoin’s scripting system to verify sidechain proofs. You can get fast finality, confidential transactions, issued assets, and production operations now. The disadvantage is equally clear: users are trusting a set of operators, even if those operators are mutually distrusting and organized to be auditable.

Liquid’s operational details show how these tradeoffs surface in real life. A peg-in requires 102 Bitcoin confirmations before funds can be claimed on Liquid, which is a conservative response to Bitcoin reorg risk. Peg-outs are batched and processed by watchmen, with expected delays rather than instant release. The general public cannot independently peg out without relying on network participants. There are also recovery mechanisms, including emergency keys after prolonged failure, which improve recoverability but add another explicit governance layer.
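
As a rough worked example of what a 102-confirmation requirement means for a user, the sketch below counts confirmations from block heights and converts the depth into an expected wait using Bitcoin's ten-minute target block interval. The function names are illustrative, and real block times vary around that target.

```python
PEG_IN_DEPTH = 102         # confirmations required before a peg-in can be claimed
TARGET_BLOCK_MINUTES = 10  # Bitcoin's target block interval


def confirmations(tip_height: int, tx_height: int) -> int:
    """A transaction in the tip block has one confirmation."""
    if tx_height > tip_height:
        return 0  # not yet included in the chain we know about
    return tip_height - tx_height + 1


def is_claimable(tip_height: int, tx_height: int, depth: int = PEG_IN_DEPTH) -> bool:
    return confirmations(tip_height, tx_height) >= depth


def expected_wait_hours(depth: int = PEG_IN_DEPTH) -> float:
    """Expected time for `depth` blocks at the target interval."""
    return depth * TARGET_BLOCK_MINUTES / 60
```

At the target interval, 102 blocks take about 17 hours, so the reorg protection is paid for directly in peg-in latency.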

None of this makes federated sidechains “bad.” It makes them legible. They are best understood as systems that trade some trustlessness for functionality, speed, and deployability.

How do miner-voted sidechains (Drivechain) differ from validator-based sidechains?

Not every sidechain peg is proof-based or federated in the same way. Some designs try to use the main chain’s existing block producers as the authorizers of withdrawals.

Drivechain is the clearest example in the Bitcoin world. It proposes a decentralized two-way peg in which funds enter sidechain-specific buckets on Bitcoin, and Bitcoin miners vote over a long period to authorize withdrawals. Combined with blind merged mining, the idea is to let sidechains benefit from Bitcoin miner participation without requiring miners to fully validate the sidechain. The attraction is that security is tied to Bitcoin’s mining ecosystem rather than a separate named federation.

But here again, the mechanism reveals the tradeoff. If miners are the withdrawal authority, then users must rely on miner incentives and on a long voting window. The Drivechain material presents the major criticism directly: a majority of miners could collude to steal sidechain funds. Proponents argue this would be economically irrational. Critics reply that “economically irrational” is weaker than a hard cryptographic guarantee. The long withdrawal delay, often described in months, is itself part of the security design but also part of the user cost.
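
The voting mechanism can be sketched as a tally over a fixed window of blocks. The window and threshold values used here are hypothetical, chosen to keep the example small; they are not the parameters of any actual Drivechain proposal, and `tally_withdrawal` is an illustrative name.

```python
from typing import Iterable, Optional


def tally_withdrawal(votes: Iterable[bool], window: int, threshold: int) -> Optional[int]:
    """Count one vote per block (True = acknowledge the withdrawal).

    Returns the number of blocks elapsed when the threshold is reached,
    or None if the window closes first and the withdrawal fails.
    """
    if threshold > window:
        raise ValueError("threshold can never be reached within the window")
    acks = 0
    for blocks_elapsed, vote in enumerate(votes, start=1):
        if blocks_elapsed > window:
            break
        if vote:
            acks += 1
        if acks >= threshold:
            return blocks_elapsed
    return None


# Hypothetical parameters: a 100-block window needing 51 acknowledgements.
supportive = [i % 10 < 6 for i in range(100)]   # 60% of blocks acknowledge
minority = [i % 10 < 4 for i in range(100)]     # only 40% acknowledge
```

With 60% support the withdrawal clears only late in the window, and with 40% it never clears. The same arithmetic cuts the other way: a colluding majority can approve any withdrawal it likes, and the long window exists to give everyone else time to notice.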

This pattern shows a broader principle. A sidechain’s safety often comes from asking some external actor set to notice, attest, contest, or co-sign. Changing who that actor set is changes the risk, but it rarely makes the problem disappear.

How are sidechains different from bridges, parachains, and IBC-style cross-chain systems?

| Model | Security root | Verification method | Deployment speed | Typical trade-off |
|---|---|---|---|---|
| Classic sidechain | Sidechain validators | Peg proofs or federations | Medium | Flexibility for feature experiments |
| Wrapped-asset bridge | Bridge guardians or contracts | Signed VAAs / custodial mint | Fast | Operational custody risk |
| Parachain / IBC | Light client + relay | Light-client verification | Depends on ecosystem | Interoperable, explicit light-client trust |

The word sidechain is sometimes used too broadly, so it helps to separate neighboring ideas.

A classic sidechain is a separate chain connected to a main chain by a two-way peg or equivalent bridge. In practice, that already makes it part of the broader interoperability landscape. Systems like Cosmos IBC and Polkadot XCM approach the same general problem (chains reacting to events on other chains) but with different assumptions. IBC relies on each chain running a light client of the other plus off-chain relayers to transmit packets. Polkadot’s XCM defines a cross-consensus message format and supports multiple transfer models, including reserve-backed transfers and teleportation, where trust assumptions differ depending on how the asset is represented.

These systems help clarify that a sidechain is not just about moving coins. It is about verifying foreign state well enough to act on it. Sometimes the action is “mint a representation of this asset.” Sometimes it is “execute this message.” Sometimes it is “release custody.”

They also clarify where terminology becomes slippery. Some ecosystems casually call sidechains “Layer 2,” but that is imprecise. Ethereum’s documentation explicitly distinguishes sidechains from rollups because rollups derive security from Ethereum while sidechains do not. In the same way, a bridge that locks tokens and mints wrapped assets elsewhere may look similar to a sidechain peg from the user’s point of view, but the actual trust model depends on who verifies the lock, who can mint, and who can release.

The user experience may be “move token from A to B.” The security reality may range from light-client verification to multisig custody to guardian signatures to validator voting. That difference matters more than the interface suggests.

What can go wrong if a sidechain’s trust or verification assumptions fail?

A good way to understand sidechains is to ask what fails when one assumption stops holding.

If the sidechain’s own validator set is compromised, the chain may continue operating while producing invalid history, or may approve fraudulent withdrawals. If enough validators go offline in a BFT-style system, the chain may halt instead of finalizing. If the peg depends on a federation, compromise of the signing threshold can directly endanger the locked reserve. If the peg depends on source-chain SPV proofs, deep reorganizations or majority attacks during the challenge window can create false confidence. If the design depends on relayers, liveness may fail even when safety remains intact.

Implementation quality matters as much as protocol design. Wormhole’s failure was not because “cross-chain is impossible,” but because incorrect verification code let fake evidence through. Ronin’s failure was not because “validators are always bad,” but because operational security, key management, and privileged access paths collapsed the intended trust model. These examples are useful because they show that the architecture and the operations are inseparable.

There is also a scaling-specific tradeoff that is easy to overlook. Running many sidechains can pressure decentralization. The original sidechains paper notes that miners serving more chains need more resources to track and validate them all. If participation in sidechains increases rewards but also increases operational complexity, that can favor larger operators. Even merged-mining and blind-validation proposals are partly attempts to soften this pressure.

So sidechains do not simply “add throughput.” They redistribute complexity across consensus, verification, custody, and operations.

When should you choose a sidechain for scaling and experimentation?

| Option | Security proximity | Flexibility | Throughput | Best when |
|---|---|---|---|---|
| Sidechain | Independent from L1 | High (custom rules) | High (tunable) | Need custom VM or features |
| Rollup | Derives L1 security | Medium (constrained) | High (data availability dependent) | Maximize L1 security |
| Validium | Separate execution, DA off-chain | Medium | Very high | When DA cost is main blocker |
| L1 protocol change | Native L1 security | Low (slow upgrades) | Varies | When consensus must change globally |

Sidechains are best seen as a scaling and experimentation tool, not as a universal answer. They help when you want a separate execution environment with different rules, and when you can tolerate or intentionally choose a different security model. They are especially useful when applications value low fees, fast settlement, specialized functionality, or governance flexibility more than they value inheriting the full security of the base chain.

That is why sidechains have persisted even as rollups became more prominent. A sidechain can do things that would be awkward, expensive, or politically difficult to push into a base layer. It can serve a community with distinct performance needs. It can host confidential assets, custom VMs, or domain-specific applications. And because it is independent, it can evolve faster.

But that same independence is why sidechains are not the default best answer for every scaling problem. If your primary requirement is to keep users as close as possible to main-chain security, a rollup or other light-client-verified system may be the better fit. If your primary requirement is flexibility and speed under a separate trust model, a sidechain may be exactly right.

Conclusion

A sidechain is a separate blockchain that can validate or react to events on another chain, often so assets can move between them. The important thing to remember is not the bridge interface or the lower fees. It is the underlying rule: a sidechain usually gains flexibility by accepting independent security.

Once that clicks, the rest follows. Two-way pegs lock value on one chain and recreate or release it on another. The hard part is proving cross-chain events, and every solution answers that problem with a different trust model: SPV proofs, succinct proofs, federations, miner voting, relayers, or guardian signatures.

Sidechains are powerful because they let blockchains specialize. They are risky because specialization always comes with assumptions about who verifies what, and why you believe them.

How does the sidechain layer affect real-world usage?

Sidechains change the practical risk and delay profile of moving or trading an asset because they rely on different peg and validator models (federation, SPV proofs, miner voting, etc.). Before you fund or trade a sidechain-backed asset, use Cube Exchange to hold and execute the trade while you verify the chain’s peg rules and withdrawal behaviour.

- Research the sidechain’s peg and withdrawal rules: note whether it is federated, SPV-based, or miner/validator-approved, and record the required confirmation depths and peg-out delays (for example, Liquid requires 102 Bitcoin confirmations for peg-ins and processes peg-outs in batches).

- Fund your Cube Exchange account with the asset you plan to move or trade (deposit on-chain BTC or use Cube’s fiat on-ramp).

- Open the relevant market on Cube and choose an order type that matches the asset’s liquidity; use a limit order for thinly traded sidechain representations or a market order for immediate fills.

- If you plan to withdraw to the sidechain or back to the main chain, review the exact withdrawal path and expected timing: set the correct destination network, confirm any contestation windows, and verify custodian/federation signers if applicable.

- After executing or initiating a withdrawal, monitor on-chain confirmations and any published contestation or status pages; if delays or unexpected behaviour occur, give Cube support the transaction and withdrawal IDs so we can help investigate.

Frequently Asked Questions

A two-way peg works by locking or encumbering the original asset on the main chain and creating (minting) a corresponding representation on the sidechain; returning the asset requires destroying or redeeming the sidechain representation and presenting proof that the burn/withdrawal occurred so the locked main-chain asset can be released. This lock-and-mint / burn-and-release invariant is the mechanism intended to prevent the same economic value from existing twice.

Common approaches include SPV-style proofs (submitting headers plus Merkle proofs), succinct proofs like NIPoPoWs, multisignature or federated signers that attest to deposits/withdrawals, miners or validators voting on withdrawals, and off-chain relayers that pass messages; each method transfers the problem of “who convinces chain B that event A happened” into a different trust or economic model.

SPV-based pegs are not fully trustless. They offer only SPV-level security: they reduce verifier workload but still assume the source chain’s consensus holds (e.g., an honest majority of hashpower), and they are vulnerable to deep reorganizations or majority attacks during confirmation and contest windows.

Federated designs (like Liquid) remove the need for on-chain verification changes and enable fast finality and extra features, but they do so by placing custody and peg authority in a set of functionaries/multisigners whom users must trust for correct peg operation, watchman duties, and recovery procedures.

Sidechains do not generally inherit main-chain security; rollups post enough data or proofs to the base layer so the base layer can enforce correctness, whereas sidechains typically rely on their own validator set, federations, or bridge mechanisms and therefore have independent security models.

Operational failures and implementation bugs are frequent root causes: compromised validator or guardian keys, incorrect verification code, insecure RPC/allowlist paths, and insufficient monitoring or relayer liveness can enable theft, fraudulent minting, or long outages, as illustrated by the Wormhole and Ronin incidents.

Succinct proof systems (NIPoPoWs, SNARKs) can reduce data and reliance on named custodians, but they still depend on assumptions like honest-majority security of the source chain, correct parameterisation, and in some SNARK constructions a trusted setup; they therefore shift, rather than eliminate, trust and deployment complexity.

Choose a sidechain when you need a separate execution environment, custom VM, privacy primitives, faster block times, or governance flexibility and can accept an independent security model; choose a rollup or other L2 when your priority is keeping users as close as possible to main-chain security and minimizing extra trust assumptions.
