What is Polygon?

Learn what Polygon is, how Polygon PoS and zkEVM work, why they exist, and the key security tradeoffs behind Polygon’s Ethereum scaling approach.

Introduction

Polygon is an Ethereum-focused family of blockchain protocols and tools built to make onchain activity cheaper, faster, and easier to deploy than using Ethereum mainnet alone. The difficulty is that the word Polygon no longer points to just one system. In practice, it can mean the long-running Polygon PoS chain, the Polygon zkEVM network, the CDK tooling for building chains, interoperability efforts such as Agglayer, or the broader product stack Polygon presents to developers and enterprises.

That ambiguity is not a branding footnote; it is the main thing a careful reader has to get right. If you hear “Polygon scales Ethereum,” the next question is: by what mechanism? A sidechain, a rollup, and a validium can all lower costs, but they do so with different trust assumptions, different data-availability choices, and different failure modes. Polygon has operated across all of those design spaces. So the right way to understand Polygon is not as a single chain, but as a stack of scaling approaches orbiting Ethereum.

At the center of today’s public usage is still Polygon Chain, which Polygon’s own documentation describes as a Proof-of-Stake EVM chain focused on low cost transactions, high throughput, and wide ecosystem support. Historically and mechanically, that refers to the Polygon PoS network: an EVM-compatible chain with its own validator-driven consensus and periodic checkpointing to Ethereum. Around that core, Polygon has also built zero-knowledge systems and chain-development tooling that push toward a different future: one where many chains share proving, interoperability, and settlement infrastructure.

The cleanest way to hold all this in your head is simple: Polygon began by making Ethereum-compatible blockspace cheaper through a sidechain-like design, and then expanded into a broader architecture for ZK-based chains and interconnection. Once that clicks, the pieces stop looking contradictory.

Why does Ethereum need scaling, and how does Polygon address scarce blockspace?

Ethereum mainnet is valuable because it is hard to rewrite and broadly trusted. But that security is expensive because every node has to re-execute and store the same activity. When demand rises, fees rise with it. That creates a basic scaling problem: people want Ethereum’s assets, users, and developer ecosystem, but they do not want every simple transfer, game action, or app interaction to compete directly for mainnet blockspace.

Polygon’s answer has been to move most execution away from Ethereum while keeping meaningful ties back to Ethereum. The important design choice is which parts stay on Ethereum and which parts move elsewhere. If you move execution off-chain but keep verification on Ethereum, you get something rollup-like. If you move both execution and consensus to a separate validator network but periodically anchor results to Ethereum, you get something closer to a sidechain. If you keep proofs on Ethereum but store transaction data elsewhere, you get validium-style tradeoffs.
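The three cases in the paragraph above can be written down as a small decision rule. This is a minimal sketch for illustration only: the `ScalingDesign` fields and the `classify` function are hypothetical names, and real systems have more dimensions than three booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingDesign:
    """Hypothetical summary of where a chain's key jobs happen."""
    own_consensus: bool         # a separate validator set decides canonical blocks
    validity_proof_on_l1: bool  # Ethereum verifies a proof of correct execution
    data_on_l1: bool            # transaction data is published to Ethereum


def classify(design: ScalingDesign) -> str:
    """Map the design choices to the rough categories used in the text."""
    if design.validity_proof_on_l1:
        # Proofs are checked on Ethereum; the data location decides the rest.
        return "rollup-like" if design.data_on_l1 else "validium-like"
    if design.own_consensus:
        # No validity proof on L1, but its own validators and periodic anchoring.
        return "sidechain-like"
    return "unclassified"


if __name__ == "__main__":
    print(classify(ScalingDesign(own_consensus=True, validity_proof_on_l1=False, data_on_l1=False)))
    print(classify(ScalingDesign(own_consensus=False, validity_proof_on_l1=True, data_on_l1=True)))
    print(classify(ScalingDesign(own_consensus=False, validity_proof_on_l1=True, data_on_l1=False)))
```

The ordering of the checks mirrors the argument: whether Ethereum verifies execution comes first, and data location only refines the answer after that.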

That is why Polygon cannot be understood by fee numbers alone. Low fees are an outcome, not the mechanism. The mechanism is a set of choices about execution, consensus, proof generation, and data availability. Different Polygon products make different choices.

For many users, the reason Polygon became important is straightforward: it offered a place where Ethereum-style applications could run with much lower transaction costs. Because it is EVM-compatible, developers can deploy Solidity contracts and use familiar tooling. Because costs are lower, applications that would be awkward on mainnet become viable. Payments, gaming, consumer apps, and high-frequency interactions all benefit from that basic shift.

How does Polygon PoS (Polygon Chain) work under the hood?

| Component | Primary job | Tech base | Ethereum link | User effect |
|---|---|---|---|---|
| Heimdall | Consensus & validator coordination | CometBFT based | Submits checkpoints to L1 | Anchors chain state to Ethereum |
| Bor | Execution & block production | Geth / Erigon clients | Runs Polygon execution off‑chain | Fast transactions, PoS trust model |

The current Polygon PoS architecture is the most important place to start, because it is the system most people mean when they casually say “Polygon.” Polygon’s documentation describes it as an EVM-compatible sidechain for Ethereum designed to improve throughput and significantly reduce gas costs. That wording matters. It tells you that Polygon PoS is not simply “Ethereum, but cheaper.” It is a separate chain that is compatible with Ethereum and connected to Ethereum, but not secured in exactly the same way Ethereum L2 rollups are.

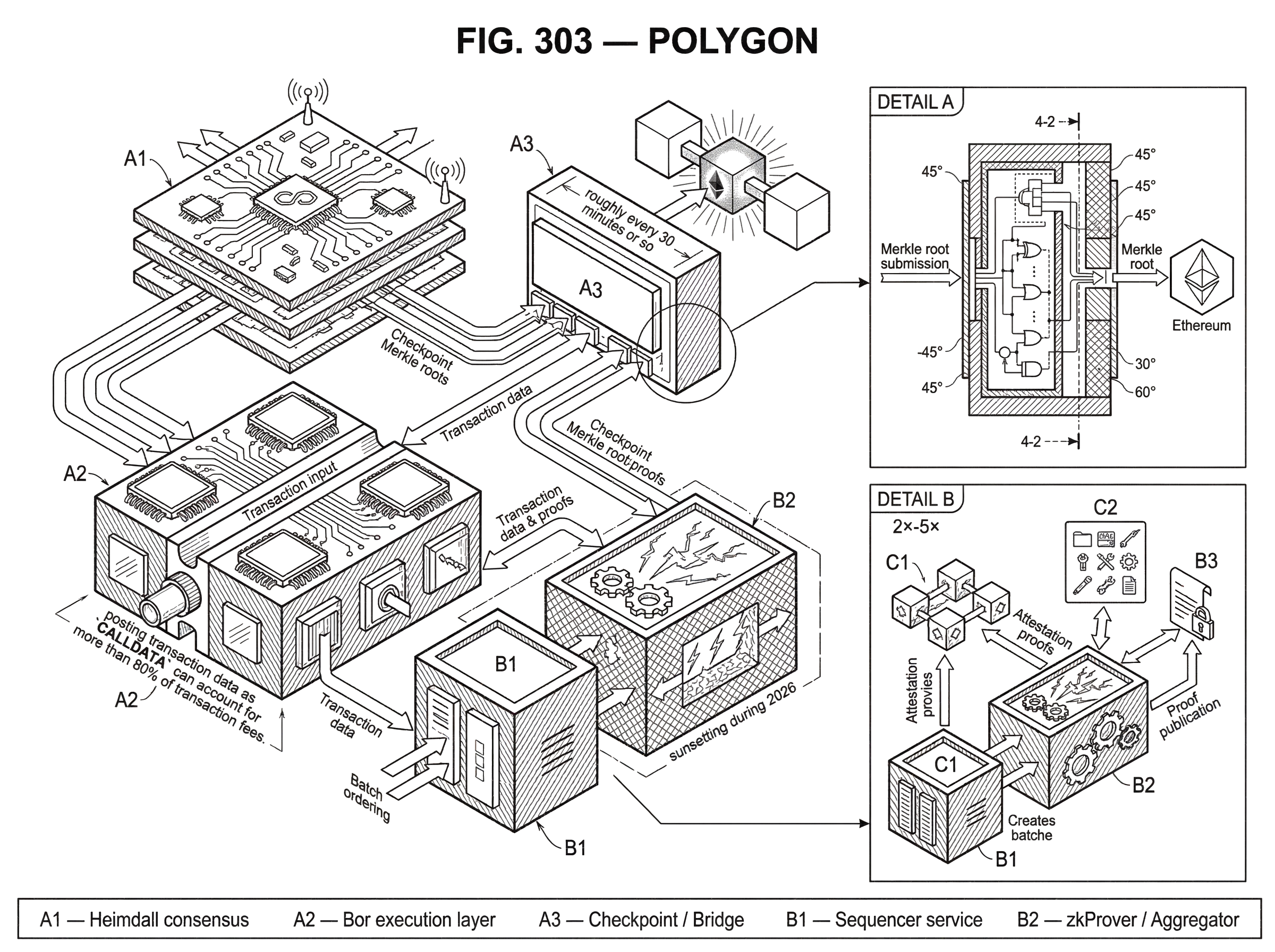

Its architecture has two main layers: Heimdall and Bor. Heimdall is the consensus-oriented layer. It is responsible for validator coordination, monitoring staking contracts deployed on Ethereum mainnet, handling some bridge-related events, and committing Polygon checkpoints to Ethereum. Polygon documents the newer Heimdall as being based on CometBFT, which gives a clue about its role: it is the part of the system concerned with agreement and validator behavior.

Bor is the execution layer. It is made of block-producing nodes that actually run transactions and advance the chain’s state. The primary Bor client is based on Go Ethereum, and Erigon is also supported. That is why developers experience Polygon PoS as familiar EVM infrastructure: at the execution layer, it behaves much like an Ethereum-style chain.

The split between Heimdall and Bor is useful because it separates two jobs that are conceptually different. Execution means taking transactions, running EVM code, and updating balances and contract storage. Consensus means deciding which transactions and blocks count as canonical and which validators are responsible for the chain’s progression. Bor handles the first kind of work; Heimdall coordinates the second and connects the system back to Ethereum.

Here is the mechanism in concrete terms. A user sends a transaction to Polygon PoS. Bor nodes execute it quickly on the Polygon chain, and for ordinary onchain activity the result can feel nearly immediate. Later, public checkpoint nodes take validated transaction history, build a Merkle root summarizing those transaction hashes, and submit that checkpoint to core contracts on Ethereum. Polygon’s docs describe this happening roughly every 30 minutes.

That checkpointing step is where many misunderstandings begin. A checkpoint on Ethereum does not mean Ethereum has re-executed every Polygon transaction and guaranteed every state transition the way a full validity rollup aims to do. It means Polygon has periodically anchored compact summaries of its own chain state or transaction history into Ethereum contracts. This improves auditability and is important for bridging and exits, but it does not erase the fact that Polygon PoS has its own validator set and its own consensus process.

So the right mental model is this: Polygon PoS borrows some settlement and anchoring properties from Ethereum, but day-to-day chain safety depends substantially on Polygon’s own validator and checkpoint system. That is why it is better described as a sidechain or sidechain-like PoS chain than as a classic rollup.

What does Polygon's checkpointing to Ethereum actually secure, and what risks remain?

Checkpointing exists to compress a lot of Polygon activity into a small commitment that Ethereum can store. A Merkle root works because it lets you commit to a large set of data with one small hash. If later someone needs to prove that a particular transaction or state element was part of that committed set, they can present a Merkle proof.
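To make the Merkle idea concrete, here is a minimal sketch of building a root over a list of items and checking an inclusion proof. It is not Polygon's checkpoint code: it uses SHA-256 from the Python standard library as a stand-in hash, pads odd levels by duplicating the last node (a common simplification with known caveats), and the function names are illustrative.

```python
import hashlib


def h(data: bytes) -> bytes:
    """Stand-in hash function (assumption: SHA-256, chosen because it is in the stdlib)."""
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs to form the parent level."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    """Commit to all leaves with a single 32-byte hash."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pad odd levels by duplicating the last node
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return the sibling hashes from leaf to root as (hash, sibling_is_left) pairs."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # the other node of the pair
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path to the root using only the leaf and its siblings."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


if __name__ == "__main__":
    txs = [b"tx0", b"tx1", b"tx2", b"tx3", b"tx4"]
    root = merkle_root(txs)
    proof = merkle_proof(txs, 2)
    print(verify(b"tx2", proof, root))     # inclusion proof for a committed item
    print(verify(b"forged", proof, root))  # a different item does not match the root
```

The verifier only needs the leaf, the short proof, and the root, which is why a contract that stores nothing but the root can still check claims about individual items.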

This matters most for bridging and exits. Polygon’s documentation says the core contracts on Ethereum anchor the Polygon chain and manage an exit queue to transfer assets back to Ethereum mainnet safely. That queue is not just an implementation detail. It exists because moving assets from a separate execution environment back to Ethereum requires a clear, verified process for deciding what claims are legitimate and in what order they are processed.

For simple activity entirely inside Polygon PoS, users often experience near-instant finality. The docs explicitly note that for simple transactions such as token transfers within the network, state finality is near instantaneous. But bridging is where the deeper settlement assumptions matter. An internal transfer only needs Polygon’s own chain to agree. A withdrawal to Ethereum needs Ethereum-side contracts to accept that Polygon-side events genuinely happened according to the bridge rules.

This distinction explains why “fast finality” can mean two different things. There is the fast practical finality users feel when the Polygon network accepts a transaction, and there is the slower, more conservative finality involved in anchoring and exiting through Ethereum-linked contracts. Confusing those two leads to wrong expectations about risk.

The analogy here is a receipt book and a bank ledger. Polygon PoS can issue receipts quickly within its own system, and every so often it writes a condensed summary into the bank’s ledger. That analogy helps explain why local transactions can be fast while cross-system settlement takes longer. Where it fails is that blockchains are not paper systems: the exact cryptographic commitments and bridge rules matter, and not every anchored summary gives the same level of safety as a validity proof.

What are the practical benefits and tradeoffs of building on Polygon PoS?

From a builder’s point of view, Polygon PoS succeeded because it gave a practical answer to a practical problem. The chain is EVM-compatible, so Solidity contracts, common wallets, and Ethereum developer habits carry over with relatively little friction. Fees are much lower than Ethereum mainnet fees in normal conditions, which changes application design. An app no longer has to treat every user action as precious blockspace.

That is why Polygon’s own materials emphasize payments, fintech, stablecoins, and broad ecosystem support. Cheap execution makes high-frequency transfers and consumer-scale interactions more realistic. If you are building a wallet, a marketplace, a game, or a payment flow, cost predictability often matters more than inheriting the strongest possible trust-minimized model.

But there is a tradeoff here, and it is better to state it plainly than to hide it behind marketing language. Polygon PoS is cheaper partly because it is not asking Ethereum to do as much work per transaction. The consequences are lower fees and higher throughput, but also a security model that depends more heavily on Polygon’s own validator and bridge design. For many applications that is an acceptable trade. For others, especially where Ethereum-level settlement guarantees are the whole point, it may not be.

This is the first place a smart reader might slip: seeing “uses Ethereum for settlement” and concluding “inherits Ethereum security in the same way as a rollup.” The docs do not support that stronger claim. They support a more precise one: Polygon PoS is connected to Ethereum through staking contracts, checkpoints, and core bridge contracts, but it remains a distinct chain with distinct assumptions.

How does Polygon zkEVM differ from Polygon PoS and other L2 designs?

Polygon did not stop at the PoS chain. It also built Polygon zkEVM, which its documentation describes as a Layer 2 network using zero-knowledge technology to provide validation and fast finality of off-chain transactions. This is a fundamentally different design direction from Polygon PoS.

In a zkEVM-style system, the key idea is not merely “run transactions elsewhere and checkpoint occasionally.” The idea is: run transactions off Ethereum, then produce a validity proof showing that the resulting state transition was computed correctly. Ethereum verifies that proof onchain. If the proof system works as intended and the needed data is available, this creates a stronger inheritance of Ethereum security than a sidechain-style architecture.

Polygon’s zkEVM architecture documentation breaks the system into several major components: a consensus contract on Ethereum, zkNode software, a zkProver, and a bridge. Operationally, three roles matter most: the sequencer, which orders and batches transactions; the aggregator, which computes proofs over those batches; and the L1 contract, which stores commitments and verifies proofs. The docs also state plainly that the live system has used a trusted sequencer and trusted aggregator even though the protocol is designed with more permissionless ambitions in mind.

That wording is important because it reveals a real-world tradeoff. The architecture aims for cryptographic verification, but the operational path there has included centralization. That is not unique to Polygon; many early L2s begin with more centralized operators for safety and iteration speed. Still, it means the clean theoretical story and the current practical trust model are not identical.

Mechanically, the flow is this. Users send L2 transactions to the sequencer. The sequencer orders them into blocks and batches, and L2 nodes can reflect those results quickly for user-facing finality. The aggregator then computes the resulting L2 state and generates zero-knowledge proofs attesting that the batch execution was correct. Finally, an Ethereum contract verifies the proof before accepting the new L2 state root.
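The division of labor among sequencer, aggregator, and L1 contract can be sketched as a toy model. One assumption is loud here: there is no real zero-knowledge proof in this code. The "proof" is simply the full witness (pre-state and batch), which the mock verifier re-executes. A real validity proof is succinct and exists precisely so that Ethereum does not have to do that work. All names (`state_root`, `execute`, `mock_verify`, `L1Contract`) are hypothetical.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Toy state commitment: hash of the sorted balance map."""
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


def execute(balances: dict[str, int], batch: list) -> dict[str, int]:
    """Apply a batch of (sender, recipient, amount) transfers, rejecting invalid ones."""
    new = dict(balances)
    for sender, recipient, amount in batch:
        if amount <= 0 or new.get(sender, 0) < amount:
            raise ValueError("invalid transfer")
        new[sender] -= amount
        new[recipient] = new.get(recipient, 0) + amount
    return new


def mock_verify(old_root: str, new_root: str, proof: tuple) -> bool:
    """Stand-in verifier: checks the transition by re-executing the witness.

    A real zk verifier checks a short proof instead of replaying transactions.
    """
    pre_state, batch = proof
    if state_root(pre_state) != old_root:
        return False
    try:
        post_state = execute(pre_state, batch)
    except ValueError:
        return False
    return state_root(post_state) == new_root


class L1Contract:
    """Stores the latest accepted L2 state root and only advances on a valid proof."""

    def __init__(self, genesis_root: str) -> None:
        self.state_root = genesis_root

    def submit(self, old_root: str, new_root: str, proof: tuple) -> bool:
        if old_root != self.state_root:
            return False
        if not mock_verify(old_root, new_root, proof):
            return False
        self.state_root = new_root
        return True


if __name__ == "__main__":
    genesis = {"alice": 10}
    l1 = L1Contract(state_root(genesis))
    batch = [("alice", "bob", 3)]      # ordered by the sequencer
    post = execute(genesis, batch)     # computed by the aggregator
    print(l1.submit(state_root(genesis), state_root(post), (genesis, batch)))
    forged = {"alice": 7, "bob": 1000}
    print(l1.submit(state_root(post), state_root(forged), (post, [])))
```

The point of the sketch is the acceptance rule: the contract never takes the operator's word for a new state root, it advances only when verification succeeds.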

That is the crucial difference from Polygon PoS. In Polygon PoS, Ethereum receives checkpoints that anchor Polygon activity. In zkEVM, Ethereum is meant to verify proofs of correct execution. Checkpointing says, in effect, “here is a compact commitment to what happened.” Validity proving says, “here is a cryptographic argument that what happened was computed according to the rules.” Those are not the same security statement.

Why does Polygon use zero‑knowledge proofs and how does its prover work?

| Prover type | Trusted setup | Proof size | On‑chain cost | Best for |
|---|---|---|---|---|
| zk‑STARK | No trusted setup | Large proofs | Higher verification cost | Fast, trustless proving |
| zk‑SNARK | Usually requires setup | Small proofs | Cheap verification | Succinct on‑chain checks |
| Hybrid (STARK+SNARK) | No STARK setup; SNARK compresses | Moderate (compressed) | Reduced on‑chain cost | Balance speed and gas |

Zero-knowledge proving sounds abstract until you ask what problem it solves. Ethereum cannot cheaply re-run every off-chain transaction from a high-throughput chain. So if an L2 wants Ethereum to accept its state transitions without trusting an operator blindly, it needs a compressed proof that Ethereum can verify cheaply.

Polygon’s zkProver is the machinery built for that purpose. The docs describe it as handling proving and verification logic for transaction validity, and they explain that it is modular: a main executor, secondary state machines, STARK and SNARK proof builders, and custom languages such as zkASM and PIL for expressing execution and polynomial constraints.

The high-level reason for this complexity is straightforward. EVM execution is messy. Smart contracts can branch, access storage, manipulate memory, call other contracts, and invoke cryptographic precompiles. To prove all that faithfully, the system has to turn ordinary program execution into mathematical constraints that a proving system can check.

Polygon’s design uses zk-STARKs for fast, trustless proof generation and then wraps or compresses those outputs with a zk-SNARK so onchain verification becomes cheaper. The docs explicitly describe this as a tradeoff: STARK proofs are attractive because they avoid a trusted setup and can be generated efficiently, but they are larger; SNARKs are more succinct for onchain verification. So the system combines them.

You do not need all the prover internals to understand the main point. The point is that Polygon’s ZK work is trying to replace “trust us that this off-chain computation was right” with “verify this compact cryptographic witness that it was right.” That is the heart of why ZK systems matter.

There are also caveats. Polygon’s zkEVM documentation says the network will be sunsetting during 2026, and independent assessments have raised concerns about practical data availability and the gap between intended proving guarantees and current deployment realities. So while zkEVM is central to understanding Polygon’s technological direction, it should not be treated as a settled, final endpoint.

What is data availability and why does it determine security for Polygon rollups vs validiums?

| Mode | Data location | Security | Fees | Recovery |
|---|---|---|---|---|
| Rollup | On‑chain (L1 calldata) | Reconstructable from L1 | Higher (data posting) | Exit directly to L1 |
| Validium | Off‑chain (DAC or servers) | Depends on DA custodian | Lowest fees | Depends on DAC availability |
| Volition | Hybrid (per batch choice) | Varies by chosen mode | Variable (tradeoff) | Can post data to L1 later |

If there is one concept that makes the broader Polygon stack click, it is data availability. A proof can show that a state transition is valid, but users and verifiers also need access to the underlying transaction data if they are to reconstruct state, check history, or safely exit under failure conditions. Where that data lives is one of the most important choices in blockchain scaling.

Polygon’s chain-building tooling, historically called Polygon CDK and now tied closely to the Agglayer ecosystem, makes this explicit. Builders choose between rollup mode and validium mode. In rollup mode, transaction data is posted to Ethereum, which gives the highest security because anyone can reconstruct the chain from L1 data. In validium mode, transaction data stays off-chain, which lowers fees but introduces trust in whatever mechanism preserves and serves that data.

Polygon’s own explanation is crisp: both modes use ZK proofs to validate L2 state, but validium stores transaction data off-chain rather than posting it to Ethereum. The reason is cost. Polygon notes that on its zkEVM, posting transaction data as CALLDATA can account for more than 80% of transaction fees. That means the expensive part is often not proving execution but publishing enough data for everyone else to verify and recover it.
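The arithmetic behind that claim is short. As a rough sketch, if data posting makes up a share of the fee and validium mode removes it, what remains is the other share plus whatever the off-chain availability layer costs. The function name, the `dac_overhead` parameter, and the example fee of 1.0 are hypothetical; only the 80% share comes from the text.

```python
def validium_fee(rollup_fee: float, data_share: float, dac_overhead: float = 0.0) -> float:
    """Estimate the fee left after removing L1 data posting.

    rollup_fee:   fee paid in rollup mode (any unit)
    data_share:   fraction of that fee attributable to posting data on L1
    dac_overhead: hypothetical extra cost of the off-chain availability layer
    """
    if not 0.0 <= data_share <= 1.0:
        raise ValueError("data_share must be between 0 and 1")
    return rollup_fee * (1.0 - data_share) + dac_overhead


if __name__ == "__main__":
    # With an 80% data share, roughly one fifth of the fee remains before overhead.
    print(validium_fee(1.0, 0.8))
```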

To manage that tradeoff in validium mode, Polygon CDK uses a Data Availability Committee, or DAC. This is a permissioned group of nodes that attest that batch data is available. The sequencer sends batch data and its hash to the DAC; DAC members validate and store the data, sign the hash, and the sequencer submits enough signatures to an Ethereum contract that checks whether the configured threshold has been met. After that, the aggregator can generate and post the ZK proof.
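The threshold check at the end of that flow can be sketched as follows. This is an illustration under stated assumptions, not the CDK contract: HMAC with per-member secret keys stands in for the public-key signatures a real contract would verify, and `DacContract`, `sign`, and `accept_batch` are hypothetical names.

```python
import hashlib
import hmac


def sign(member_key: bytes, batch_hash: bytes) -> bytes:
    """Stand-in for a DAC member's signature over the batch hash (assumption: HMAC-SHA256)."""
    return hmac.new(member_key, batch_hash, hashlib.sha256).digest()


class DacContract:
    """Toy model of the L1 check: enough distinct, valid member signatures?"""

    def __init__(self, member_keys: dict[str, bytes], threshold: int) -> None:
        if not 1 <= threshold <= len(member_keys):
            raise ValueError("threshold must be between 1 and the committee size")
        self.member_keys = member_keys
        self.threshold = threshold

    def accept_batch(self, batch_hash: bytes, signatures: dict[str, bytes]) -> bool:
        # Count each known member at most once, and only if the signature matches.
        valid = {
            name
            for name, sig in signatures.items()
            if name in self.member_keys
            and hmac.compare_digest(sig, sign(self.member_keys[name], batch_hash))
        }
        return len(valid) >= self.threshold


if __name__ == "__main__":
    keys = {"m1": b"key-1", "m2": b"key-2", "m3": b"key-3"}
    contract = DacContract(keys, threshold=2)
    batch_hash = hashlib.sha256(b"batch data").digest()

    good = {"m1": sign(keys["m1"], batch_hash), "m3": sign(keys["m3"], batch_hash)}
    print(contract.accept_batch(batch_hash, good))

    bad = {"m1": sign(keys["m1"], batch_hash), "m2": b"\x00" * 32}
    print(contract.accept_batch(batch_hash, bad))
```

Note what the check does and does not establish: it shows that enough committee members attested to the hash, not that the data will still be served later.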

This mechanism is elegant in one sense and blunt in another. It is elegant because it separates correct execution from data custody. A ZK proof can tell you the state transition follows from the batch; the DAC attestation tells you the batch data is supposedly available somewhere. But it is blunt because the trust model changes sharply. If data is not on Ethereum, users depend on the DAC or some equivalent off-chain availability layer.

That is why “uses ZK proofs” is not enough to characterize a chain’s safety. A validium can have excellent proving and still make a different security promise from a rollup, because users may not be able to reconstruct the chain from Ethereum alone. Polygon’s product line spans these choices, which is powerful for builders but confusing for casual observers.
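The difference in recovery guarantees can be shown with a toy replay. In this sketch Ethereum holds a list of batch hashes in both modes; what changes is where the batch data is fetched from. The state model, the `fetch` callback, and all function names are hypothetical simplifications.

```python
import hashlib
import json


def batch_hash(batch: list) -> str:
    """Commitment to a batch of [sender, recipient, amount] transfers."""
    return hashlib.sha256(json.dumps(batch).encode()).hexdigest()


def apply_batch(balances: dict[str, int], batch: list) -> dict[str, int]:
    """Replay transfers (validity is assumed to be covered by the ZK proof)."""
    new = dict(balances)
    for sender, recipient, amount in batch:
        new[sender] = new.get(sender, 0) - amount
        new[recipient] = new.get(recipient, 0) + amount
    return new


def reconstruct(genesis: dict[str, int], committed_hashes: list[str], fetch) -> dict[str, int]:
    """Rebuild the latest state from committed hashes plus whatever data `fetch` can supply."""
    state = dict(genesis)
    for expected in committed_hashes:
        batch = fetch(expected)
        if batch is None:
            raise LookupError(f"batch data unavailable for {expected[:8]}")
        if batch_hash(batch) != expected:
            raise ValueError("fetched data does not match the commitment")
        state = apply_batch(state, batch)
    return state


if __name__ == "__main__":
    genesis = {"alice": 10}
    batches = [[["alice", "bob", 3]], [["bob", "carol", 1]]]
    hashes = [batch_hash(b) for b in batches]

    # Rollup mode: the data itself sits on L1, so anyone can fetch it.
    l1_data = dict(zip(hashes, batches))
    print(reconstruct(genesis, hashes, l1_data.get))

    # Validium mode: data lives with an off-chain custodian that may not respond.
    withheld = {}
    try:
        reconstruct(genesis, hashes, withheld.get)
    except LookupError as err:
        print("cannot reconstruct:", err)
```

In both runs the commitments are identical and the proofs could be equally sound; only the availability of the underlying data decides whether a user can rebuild the state independently.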

What is Polygon's roadmap and how will it evolve into an ecosystem of chains?

Polygon’s recent direction is not just “run the PoS chain and also run a zkEVM.” It is to provide infrastructure for many chains. The documentation highlights Chain Development resources, the CDK, Vault Bridge, interoperability tooling, and Agglayer. The product homepage also presents an Open Money Stack for payments, wallets, on-ramps, and settlement.

The underlying idea is that a single blockchain need not be the final unit of scale. Instead, many application-specific or ecosystem-specific chains can exist, each choosing its own balance among cost, data availability, and security, while still interoperating through shared proof and settlement infrastructure. In that sense, Polygon is increasingly a network architecture project rather than only a single-network project.

This also helps explain governance and token changes. Polygon’s ecosystem is moving from MATIC toward POL as the native gas and staking token. Polygon’s documentation presents POL as the upgraded native token used for gas, staking, and network participation, with a 1:1 migration path from MATIC and an emissions schedule managed through ecosystem contracts and governance. The broader ambition, reflected in Polygon 2.0 proposals, is that stake can secure multiple services across the ecosystem, including validation, sequencing, proving, and data availability.

That is a significant architectural shift. In the older picture, one token primarily secures one chain. In the newer picture, one token and staking layer may coordinate security services across many chains and functions. Whether that vision is fully realized is still a matter of implementation and governance, but it shows where Polygon is trying to go.

What is Polygon? A concise summary of its networks, tools, and security tradeoffs

Polygon is best understood as an Ethereum scaling ecosystem with multiple architectures under one name. Its most established public chain, Polygon PoS, is an EVM-compatible Proof-of-Stake chain that lowers costs by running its own execution and consensus while anchoring checkpoints and bridge logic to Ethereum. Its ZK systems, such as zkEVM and CDK-based chains, pursue a different path: prove off-chain execution cryptographically and choose, chain by chain, how much data to keep on Ethereum versus elsewhere.

That is why discussions about Polygon often become confused. People use one word for several mechanisms. But the mechanisms are what matter. If you remember one thing, remember this: Polygon is not one scaling trick; it is a family of ways to move Ethereum-style activity off the main chain, each with its own balance of cost, speed, and trust.

How do you get Polygon exposure?

Buy Polygon exposure by purchasing the token that represents the network (commonly MATIC or POL) on Cube Exchange. Fund your Cube account, open the token's spot market, and place an order to convert fiat or a stablecoin into the Polygon asset you prefer. Follow the steps below to complete a spot purchase on Cube.

- Fund your Cube account with fiat via the on‑ramp or transfer USDC from an external wallet.

- Open the MATIC/USDC or POL/USDC spot market on Cube.

- Choose a limit order to control price or a market order for immediate execution, then enter the token amount.

- Review estimated fees and the order preview, then submit your trade.

Frequently Asked Questions

Polygon PoS is an EVM‑compatible sidechain with its own validator set and consensus. It periodically anchors compact Merkle‑root checkpoints to Ethereum (roughly every 30 minutes), but Ethereum does not re‑execute Polygon transactions, so Polygon PoS does not inherit the re‑execution or validity guarantees of a rollup.

Heimdall is the consensus/validator coordination layer (recent Heimdall is based on CometBFT) responsible for staking monitoring and submitting checkpoints to Ethereum, while Bor is the execution layer (Geth/Erigon‑based) that produces blocks and runs EVM transactions.

Checkpointing commits a Merkle root summary of Polygon history to Ethereum to improve auditability and support bridge exits, but it does not mean Ethereum has verified every transaction; internal Polygon finality is fast, while cross‑chain withdrawals rely on the bridge and exit queue and therefore follow slower, different settlement assumptions.

In theory zkEVM aims to remove operator trust by producing cryptographic validity proofs that Ethereum verifies, but in practice the live zkEVM deployment has used a trusted sequencer and trusted aggregator so operational trust remains until the protocol’s permissionless/operator decentralization goals are implemented.

A Data Availability Committee (DAC) is a permissioned group that attests to off‑chain batch data availability in validium mode. It lowers on‑chain costs but shifts availability trust off‑chain, making users dependent on the DAC and creating risk if members are unavailable or act maliciously; detailed recovery mechanisms are not fully specified.

Polygon’s proving pipeline uses zk‑STARKs (no trusted setup, larger outputs) combined with a zk‑SNARK layer to compress those outputs for cheaper on‑chain verification, trading proof generation characteristics against on‑chain verification cost.

Documentation and public repositories do not clearly list operator identities for sequencers, provers, or DAC members. Several documents describe the current deployments as using trusted or permissioned operators and treat the decentralization roadmap as unresolved.

If you need the strongest Ethereum‑level settlement, prefer rollup mode, which posts transaction data on‑chain. Validium mode minimizes fees by keeping data off‑chain but provides weaker recovery guarantees, and Polygon CDK/Agglayer explicitly presents rollup and validium as different security/cost tradeoffs.

The POL migration is presented as a 1:1 upgrade path from MATIC and POL is described as the planned native gas/staking token, but docs and PIP threads also note phased rollouts and operational caveats (e.g., POL contract non‑upgradeability and staking support timing), so exact timelines and some governance mechanics remain under-specified.
