What Are Zero-Knowledge Proofs?

Learn what zero-knowledge proofs are, how they work, why they matter in cryptography and blockchains, and the tradeoffs behind SNARKs and STARKs.

Introduction

Zero-knowledge proofs are a cryptographic way to prove that a statement is true without revealing why it is true. That sounds impossible at first. In ordinary life, if you prove you know a password, a route through a maze, or the sender and amount behind a private transaction, you usually reveal at least some of that hidden information in the process. Zero-knowledge changes that tradeoff.

This idea matters because modern digital systems constantly face a tension between verification and privacy. A blockchain wants everyone to verify that coins are not created from nothing, that a note was authorized, or that a rollup executed transactions correctly. But full verification often seems to require full disclosure. Zero-knowledge proofs exist because, in many settings, that intuition is too pessimistic.

The key shift is this: a proof does not have to be a static object containing the secret or a certificate derived too directly from it. It can be a carefully designed interaction (or, in later constructions, a transcript that simulates such an interaction) where the verifier becomes convinced that the prover could only have answered correctly by knowing a valid witness, yet learns nothing useful about that witness itself.

That combination of properties is why zero-knowledge proofs sit at the center of private payments, validity rollups, identity systems, verifiable computation, and a growing part of applied cryptography.

What problem do zero-knowledge proofs solve and why are they needed?

To see why the concept exists, start with a simpler notion: proving membership in a language. A statement might be “this graph has a Hamiltonian cycle,” “this number is a quadratic non-residue modulo m,” or “this transaction is valid under the rules of the protocol.” In each case there is a fact to be checked and often also a hidden object (a route, factorization-related structure, secret key, Merkle path, or execution trace) that explains why the fact is true.

Classical proofs often reveal too much. If you hand over the Hamiltonian cycle itself, you have certainly proved the graph has one, but you have also disclosed the exact cycle. If you reveal every intermediate step of a private transaction, you may prove balance and authorization, but you also expose sender, receiver, amount, and linkages. In cryptographic systems that is often unacceptable because the secret is not just supporting evidence; it is valuable information in its own right.

What zero-knowledge tries to preserve is an invariant: the verifier should learn the truth of the statement, but not the witness behind it. More carefully, the verifier should not learn anything it could not have generated for itself given only the fact that the statement is true. That is the crux of the subject. The proof is useful only because it transfers confidence, not because it transfers knowledge of the secret.

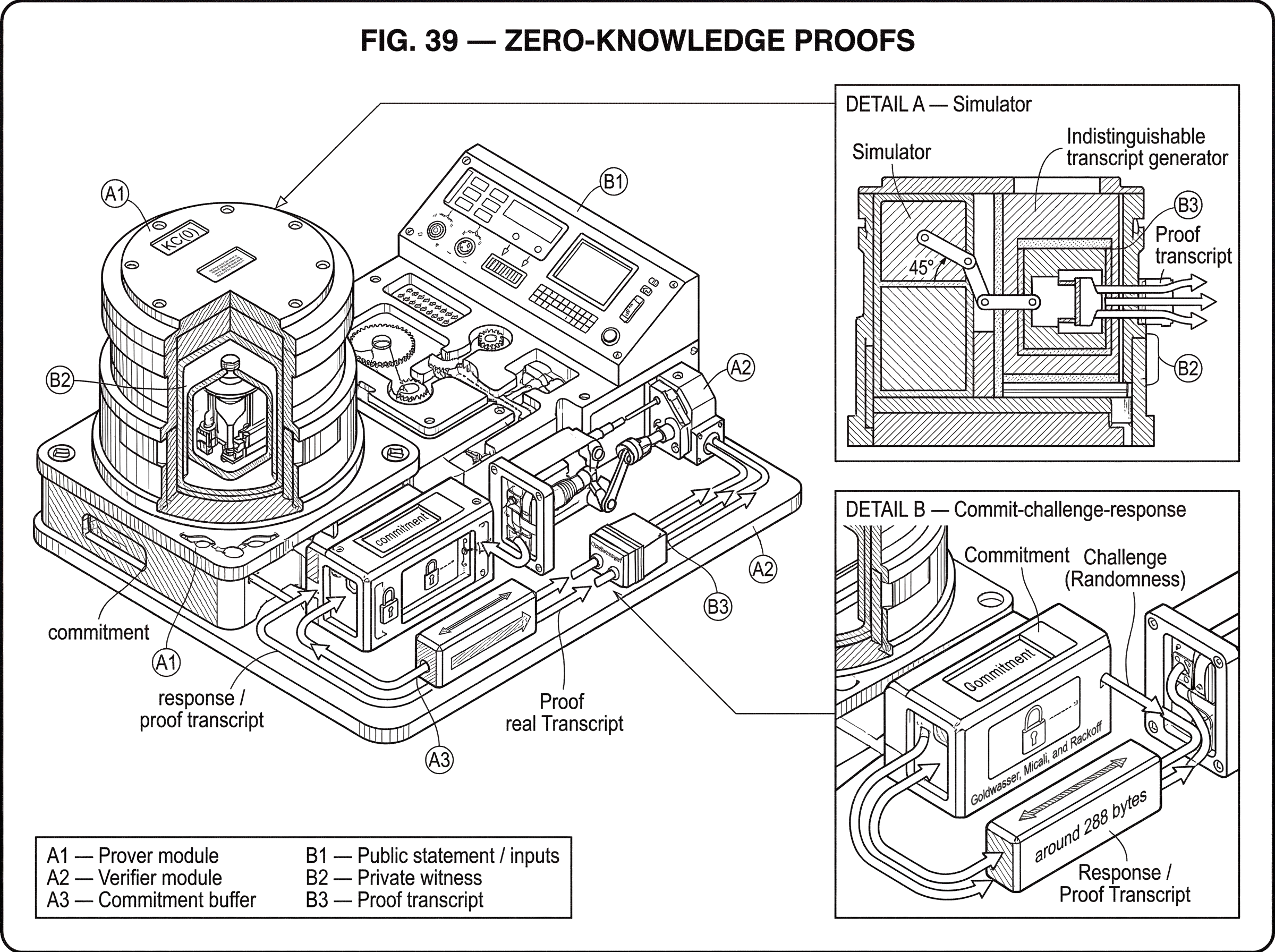

This is why the original theory was framed in terms of interactive proof systems and knowledge complexity. Goldwasser, Micali, and Rackoff introduced interactive proofs as a model where a powerful prover and a polynomial-time verifier exchange messages. They then asked a more subtle question than “is the verifier convinced?” They asked: how much extra knowledge does the verifier gain from the interaction? Their answer formalized the possibility that some proofs can have essentially zero additional knowledge (KC(0) in their terminology).

That was the breakthrough. It showed that proof and disclosure are not the same thing.

How do interactive proofs reduce information leakage?

If a traditional proof is like handing someone a finished document, an interactive proof is more like surviving an unpredictable oral exam. The verifier chooses challenges; the prover must answer them consistently. If the prover is bluffing, there is a good chance it will be caught. If the prover really knows the witness, it can keep answering correctly.

This interaction matters because it lets the verifier test structure without asking for the entire secret. The verifier does not need the witness itself; it needs evidence that the prover can respond correctly under many possible checks. The prover’s power lies in being able to coordinate answers across those checks in a way that would be infeasible without actual knowledge.

The analogy to an oral exam helps explain why interaction reduces leakage, but it has limits. In an exam, repeated questioning may still reveal a lot about what the student knows. In a zero-knowledge proof, the protocol is designed so that even a transcript of all questions and answers reveals nothing beyond validity. So the mechanism is not merely “ask random questions.” It is “ask questions in a way that extracts confidence while allowing the transcript to be simulated without the secret.”

That last clause is the heart of zero-knowledge. A protocol is zero-knowledge if whatever the verifier sees could have been produced by a simulator that does not know the witness, only the fact that the statement is true. If such a simulator exists, then the transcript cannot be carrying useful hidden knowledge about the witness, because the simulator made an indistinguishable transcript without it.

This simulator viewpoint is the cleanest way to understand the concept. Instead of trying to define directly what the verifier “learns,” which is slippery, cryptography asks a sharper question: could the verifier have generated an equivalent view on its own? If yes, the proof did not transfer additional knowledge.

What properties must a zero-knowledge proof satisfy (completeness, soundness, zero-knowledge)?

A zero-knowledge proof is not just about privacy. It has to solve three problems at once.

First, completeness means an honest prover with a valid witness can convince the verifier. A proof system that hides everything by never convincing anyone is useless. Completeness is the guarantee that truth remains provable.

Second, soundness means a false statement should not be accepted except with tiny probability. This is what prevents cheating. In modern systems you will often see this weakened from proof to argument: soundness then relies on computational assumptions, so it holds against computationally bounded adversaries rather than against provers with unlimited computing power.

Third, zero-knowledge means the verifier learns nothing beyond the fact that the statement is true. In rigorous treatments this is expressed through simulation. In practical language: the proof should certify correctness without turning hidden data into public data.

These properties pull in different directions. Stronger privacy often makes protocol design harder. Stronger succinctness may require stronger assumptions. Removing interaction may require extra machinery, such as a common reference string or Fiat–Shamir-style hashing. So every deployed proof system is balancing these goals under a particular model.

How can a prover convince a verifier without revealing the secret (intuitive protocol sketch)?

Consider the common shape behind many protocols. A prover knows some hidden witness w for a public statement x. The verifier wants confidence that the relation “w proves x” is true, but does not want w itself.

Imagine a protocol where the prover first commits to something derived from w in a way that hides it. The verifier then sends a random challenge. Depending on that challenge, the prover reveals just enough to show consistency with the commitment and with x. If the challenge space is large, or the protocol can be repeated many times, a dishonest prover who does not know w is unlikely to answer correctly every time. But a real prover can.
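The effect of repetition can be made concrete with a little arithmetic. The sketch below assumes independent rounds in which a prover without the witness passes each round with a fixed probability; the function names are illustrative and not taken from any library.

```python
def cheat_probability(per_round: float, rounds: int) -> float:
    """Chance that a prover without the witness passes every independent round."""
    return per_round ** rounds


def rounds_needed(per_round: float, target: float) -> int:
    """Smallest number of rounds that pushes the cheating chance to <= target."""
    rounds = 0
    chance = 1.0
    while chance > target:
        chance *= per_round
        rounds += 1
    return rounds


# A one-bit challenge lets a bluffing prover guess right half of the time.
print(cheat_probability(0.5, 3))        # 0.125
print(rounds_needed(0.5, 2.0 ** -80))   # 80
```

A larger challenge space has the same effect as more rounds: if a bluffing prover can prepare for only one challenge out of q, a single round already leaves a cheating chance of about 1/q.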

Now focus on why this may be zero-knowledge. The verifier only ever sees a commitment and an answer to one random challenge, not enough information to reconstruct w. More than that, if for each possible challenge there is a way to fabricate a transcript that looks real without knowing w, then the transcript itself is not evidence of leaked secret data. Its evidentiary value comes from the fact that a cheating prover would have trouble matching the verifier’s unpredictable challenge in a live execution.
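One standard protocol with exactly this commit, challenge, respond shape is Schnorr identification, used here purely as an illustration. In the sketch below the statement is x = g^w mod p and the witness is w. The group parameters are toy values chosen for readability and are far too small for real use, and every function name is hypothetical. The `simulate` function shows the fabrication idea for an honestly chosen challenge: it picks the response first and solves for a matching commitment, so it never needs w.

```python
import secrets

# Toy parameters (insecure): G generates a subgroup of prime order Q modulo P.
P, Q, G = 23, 11, 2


def commit():
    """Prover step 1: pick a random nonce r and send t = G^r."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def respond(w, r, c):
    """Prover step 2: answer challenge c using the witness w."""
    return (r + c * w) % Q


def verify(x, t, c, s):
    """Verifier check: G^s must equal t * x^c modulo P."""
    return pow(G, s, P) == (t * pow(x, c, P)) % P


def simulate(x, c):
    """Build an accepting transcript for challenge c without knowing w."""
    s = secrets.randbelow(Q)
    t = (pow(G, s, P) * pow(x, -c, P)) % P  # solve the check for t
    return t, c, s


w = 7                      # witness, known only to the prover
x = pow(G, w, P)           # public statement

r, t = commit()
c = secrets.randbelow(Q)   # verifier's random challenge
s = respond(w, r, c)
print(verify(x, t, c, s))              # True

print(verify(x, *simulate(x, c)))      # True, produced without w
```

The simulated transcript passes the same check as the real one, which is why a transcript alone proves nothing to a third party. What convinces the live verifier is that the prover had to fix t before seeing c.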

The earliest landmark example showed that quadratic non-residuosity could be proven with zero additional knowledge through interaction. That mattered because it gave a concrete, nontrivial language where the witness did not have to be exposed. It was not just philosophy; it was a protocol.

How are interactive zero-knowledge proofs converted into non-interactive proofs?

| Mode | Rounds | Proof form | Setup needed | Security model | Common use |
|---|---|---|---|---|---|
| Interactive | Multiple messages | Live transcript | Usually none | Can be info‑theoretic | Theory, protocol dialogues |
| Non‑interactive | Single message | Standalone string | CRS or Fiat–Shamir | Usually computational | Blockchains, attached proofs |

Interactive proofs are conceptually clean, but many real systems cannot afford back-and-forth. A blockchain validator cannot stop the chain and conduct a fresh dialogue with every prover. A payment proof may need to be attached to a transaction as a self-contained object. A rollup wants to post one proof to a base chain, not run an interactive protocol with every verifier.

So modern systems often use non-interactive zero-knowledge proofs, where the proof is a string that anyone can verify later. The usual way to get there is to replace the verifier’s randomness with some public source of randomness or with a hash function applied to the transcript so far. The hashing approach is the Fiat–Shamir transformation, whose security is usually analyzed in the random-oracle model. Conceptually, the prover behaves as if it is talking to a verifier whose challenges are determined by hashing the conversation.
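A minimal sketch of the hashing idea, assuming a Schnorr-style proof of knowledge of w for the statement x = g^w mod p. The toy group parameters are insecure, SHA-256 stands in for the random oracle, and the function names are hypothetical. The challenge is computed by hashing the public parameters, the statement, and the prover's first message, so no live verifier is needed.

```python
import hashlib
import secrets

# Toy parameters (insecure): G generates a subgroup of prime order Q modulo P.
P, Q, G = 23, 11, 2


def challenge(x, t):
    """Derive the challenge by hashing the transcript so far."""
    data = f"{P}|{Q}|{G}|{x}|{t}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(w):
    """Return the statement x and a standalone proof (t, s)."""
    x = pow(G, w, P)
    r = secrets.randbelow(Q)
    t = pow(G, r, P)
    c = challenge(x, t)          # replaces the verifier's random message
    s = (r + c * w) % Q
    return x, (t, s)


def verify(x, proof):
    """Anyone can recompute the challenge and run the usual check."""
    t, s = proof
    c = challenge(x, t)
    return pow(G, s, P) == (t * pow(x, c, P)) % P


x, proof = prove(7)
print(verify(x, proof))   # True
```

Hashing the statement and parameters along with the commitment matters: if the challenge depended on the commitment alone, the same proof could be replayed against a different statement.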

This is where assumptions enter more visibly. Interactive zero-knowledge can have strong information-theoretic features. Non-interactive systems usually rely on algebraic or hash-based assumptions, setup assumptions, or idealized models. In other words, zero-knowledge as a concept is broad, but concrete proof systems live inside engineering tradeoffs.

That is why the word proof is often replaced in practice by argument. A SNARK is usually a succinct non-interactive argument of knowledge: soundness holds against efficient adversaries, not literally all adversaries. A STARK is also an argument system, typically built from interactive oracle proofs plus cryptographic hashing. The distinction is not pedantic. It tells you which guarantees are unconditional and which depend on hardness assumptions.

Why do blockchains use zero-knowledge proofs for privacy and scalability?

Blockchains turned zero-knowledge from an elegant theory into infrastructure.

The reason is simple. Public chains want global verifiability, but users and applications often want local privacy and lower verification costs. Zero-knowledge addresses both at once. It can hide private inputs and compress heavy computation into a short proof.

In shielded payment systems, the statement being proved is not “I want to spend this exact output.” It is closer to “there exists some committed note in the ledger that I am authorized to spend; I am consuming it exactly once; the values balance; and the newly created commitments are well formed.” The public chain sees a proof, commitments, and nullifiers. It does not see which note was spent. Zcash’s protocol specification makes this structure explicit: shielded transactions include zk-SNARK proofs that enforce existence of commitments, correct nullifier computation, authorization, and value consistency without identifying the underlying notes.

That mechanism is worth slowing down for. A commitment is public but hides the note’s contents. When the note is spent, the spender reveals a nullifier, which is unique and prevents double-spending. The zero-knowledge proof ties these together: it proves that the revealed nullifier corresponds to some valid committed note and that the spender knows the required authorizing data. The chain learns enough to reject inflation and double-spends, but not enough to link sender and receiver in the fully shielded case.
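The bookkeeping around commitments and nullifiers can be sketched with plain hashes. This is a toy model under loud assumptions: it is not Zcash's construction, the hash-based commitment and nullifier formulas are invented for illustration, and the zero-knowledge proof that ties a nullifier to some committed note is omitted entirely. It only shows what the ledger stores and why a repeated nullifier is rejected.

```python
import hashlib
import secrets


def tagged_hash(tag: bytes, *parts: bytes) -> bytes:
    """SHA-256 over a domain tag and length-prefixed parts."""
    digest = hashlib.sha256(tag)
    for part in parts:
        digest.update(len(part).to_bytes(4, "big") + part)
    return digest.digest()


def commitment(value: int, spend_key: bytes, rho: bytes) -> bytes:
    """Public value that hides the note's contents (toy formula)."""
    return tagged_hash(b"commit", value.to_bytes(8, "big"), spend_key, rho)


def nullifier(spend_key: bytes, rho: bytes) -> bytes:
    """Value revealed at spend time, unique per note (toy formula)."""
    return tagged_hash(b"nullify", spend_key, rho)


class Ledger:
    def __init__(self):
        self.commitments = set()
        self.nullifiers = set()

    def add_note(self, cm: bytes) -> None:
        self.commitments.add(cm)

    def spend(self, nf: bytes) -> bool:
        """Accept a spend only if its nullifier has not been seen before.

        A real system would also verify a proof that nf belongs to some
        commitment in the ledger; that step is left out of this sketch.
        """
        if nf in self.nullifiers:
            return False
        self.nullifiers.add(nf)
        return True


spend_key = secrets.token_bytes(32)
rho = secrets.token_bytes(32)          # per-note randomness
ledger = Ledger()
ledger.add_note(commitment(5, spend_key, rho))

first = ledger.spend(nullifier(spend_key, rho))
second = ledger.spend(nullifier(spend_key, rho))
print(first, second)   # True False
```

Nothing in the ledger's view connects the nullifier to the commitment it came from. In a real shielded system that link exists only inside the proof.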

Rollups use the same core idea differently. Here the hidden object is not necessarily a private secret; often it is a huge computation trace. The rollup wants to prove: “the new state root was obtained by correctly applying the protocol’s transition rules to the previous state and this batch of transactions.” Without succinct proofs, an L1 verifier would need to re-execute everything. With a zero-knowledge validity proof, it checks a compact argument instead.

The zero-knowledge property can be optional here. Some rollup proofs are mainly about succinct validity, not hiding the trace. But the same proof-system families support both succinctness and privacy, and the engineering stacks often overlap.

SNARKs vs STARKs: what are the practical trade-offs?

| Variant | Proof size | Setup | Assumptions | Post‑quantum | Best for |
|---|---|---|---|---|---|
| SNARKs | Very small | Often trusted setup | Pairings / algebraic | No | Compact proofs, fast verify |
| STARKs | Larger | Transparent (no CRS) | Hash / ROM style | Yes | Transparency and scalability |

The broad family name is zero-knowledge proof, but most deployed systems use specialized subfamilies.

SNARKs are valued for very short proofs and fast verification. Groth16, for example, gives extremely compact proofs (in one construction, just three group elements) and low verification cost. Pinocchio was an earlier milestone showing that practical verifiable computation could produce constant-sized proofs, around 288 bytes in its implementation, with verification on the order of milliseconds. These systems helped make “prove a large computation, verify quickly” feel practical rather than merely asymptotic.

The price is usually some combination of algebraic assumptions and setup complexity. Many preprocessing SNARKs require a circuit-specific or universal trusted setup to produce proving and verification keys. If that setup is compromised in the wrong way, soundness may fail. This is why ceremonies and setup models matter so much in SNARK-based systems.

STARKs push toward a different point in the design space. They aim for transparency (avoiding trusted setup) and rely more on hash-based machinery that is intended to be more comfortable in a post-quantum world. The STARK literature emphasizes scalable prover time and polylogarithmic verifier time, with tools like FRI making low-degree testing efficient. The cost is proof size: the original STARK paper notes that STARK proofs were substantially larger than contemporary SNARK proofs, even while offering transparency and post-quantum-oriented assumptions.

So the organizing principle is not that one family is “better.” It is that they optimize different bottlenecks. If proof size is everything, a pairing-based SNARK may be attractive. If transparent setup and post-quantum concerns dominate, a STARK may be preferable. Many modern systems then add recursion, aggregation, or custom arithmetization to move the tradeoff frontier.

How are statements encoded as circuits and constraints for zero-knowledge proofs?

A common misunderstanding is that zero-knowledge proofs directly prove arbitrary English statements. They do not. They prove that a formal relation holds.

In practice, that relation is often encoded as an arithmetic circuit or a constraint system over a finite field. The public inputs are the visible statement. The private inputs are the witness. The circuit computes checks such as signature validity, Merkle-path membership, balance conservation, or correct state transition. The prover then shows that there exists an assignment to the private wires satisfying all constraints.
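As a small illustration of what such a constraint system looks like, the sketch below encodes the relation x^3 + x + 5 = out in rank-1 constraint form, where each constraint requires (A·w)(B·w) = C·w over a finite field. The relation, the tiny field modulus, and the wire layout are assumptions chosen for readability and are not drawn from any particular library. Here out is the public input and x is the private witness.

```python
FIELD = 97  # toy prime modulus; real systems use fields of roughly 255 bits

# Wire layout of the assignment vector: [one, x, out, sym1, y, sym2]
A = [
    [0, 1, 0, 0, 0, 0],   # x
    [0, 0, 0, 1, 0, 0],   # sym1
    [0, 1, 0, 0, 1, 0],   # x + y
    [5, 0, 0, 0, 0, 1],   # 5 + sym2
]
B = [
    [0, 1, 0, 0, 0, 0],   # x
    [0, 1, 0, 0, 0, 0],   # x
    [1, 0, 0, 0, 0, 0],   # one
    [1, 0, 0, 0, 0, 0],   # one
]
C = [
    [0, 0, 0, 1, 0, 0],   # sym1 = x * x
    [0, 0, 0, 0, 1, 0],   # y    = sym1 * x
    [0, 0, 0, 0, 0, 1],   # sym2 = (x + y) * 1
    [0, 0, 1, 0, 0, 0],   # out  = (5 + sym2) * 1
]


def dot(row, w):
    return sum(a * b for a, b in zip(row, w)) % FIELD


def satisfies(w):
    """Check every constraint (A_i . w) * (B_i . w) == C_i . w in the field."""
    return all(
        dot(a, w) * dot(b, w) % FIELD == dot(c, w)
        for a, b, c in zip(A, B, C)
    )


def witness(x):
    """Compute the full assignment from the private input x."""
    sym1 = x * x % FIELD
    y = sym1 * x % FIELD
    sym2 = (y + x) % FIELD
    out = (sym2 + 5) % FIELD
    return [1, x, out, sym1, y, sym2]


w = witness(3)
print(w[2], satisfies(w))   # 35 True
```

A proving system would convince the verifier that some assignment satisfies these four constraints for out = 35, without revealing x or the intermediate wires.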

This is why tools like circom, libsnark, arkworks, bellman, and gnark matter. They are not just software wrappers around magic proofs. They are compilers and libraries for turning ordinary program logic into algebraic constraints that a proving system can handle. Circom, for example, compiles circuits into constraints and the artifacts needed for proving. Libraries such as libsnark and gnark implement concrete zk-SNARK proving systems and gadget frameworks. Arkworks provides a Rust ecosystem from finite fields up through R1CS constraints and proving systems.

The consequence is important: the security of a zero-knowledge application is not only about the proof system. It is also about whether the circuit correctly captures the intended claim. If the relation is wrong, the proof can be perfectly valid and still certify the wrong thing.

What practical failure modes affect zero-knowledge deployments?

| Failure mode | Root cause | Effect | Mitigation |
|---|---|---|---|
| Unsound circuit logic | Incorrect or missing constraints | False statements provable | Circuit audits and tests |
| Proof‑system bugs | Implementation errors | Broken soundness or proofs | Use audited libs; fuzzing |
| Side‑channel leakage | Timing/cache/randomness leaks | Secret material exposed | Constant‑time code; entropy |
| Weak assumptions | Inappropriate crypto/params | Future or attackable breakage | Choose robust assumptions; update |

This is the part many introductions understate. Zero-knowledge proofs are mathematically deep, but deployed systems fail in ordinary engineering ways too.

One failure mode is unsound circuit logic. If a circuit forgets to constrain a variable, mishandles field overflow, or accidentally permits multiple encodings of the same value, a malicious prover may satisfy the formal constraints while violating the intended application rule. The gnark advisory on non-unique binary decomposition is a good example: comparison methods built on faulty bit decomposition allowed incorrect inequalities to be proved. The bug was not “zero-knowledge is broken.” The bug was that the implemented relation was weaker than intended.
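The general shape of a non-unique decomposition bug can be shown in a toy field. This is not the gnark code or its exact flaw; it is a minimal sketch assuming a 4-bit decomposition over the integers modulo 13, where the constraints only require that each bit is 0 or 1 and that the bits recompose to the value modulo the field size. Because 2^4 is larger than 13, some values have two valid bit patterns.

```python
from itertools import product

P = 13       # toy field modulus
NBITS = 4    # 2**4 = 16 > 13, so bit patterns can wrap around the modulus


def satisfies(v, bits):
    """Constraints: every bit is boolean and the bits recompose to v mod P."""
    booleans = all(b * (b - 1) % P == 0 for b in bits)
    recomposed = sum(b << i for i, b in enumerate(bits)) % P
    return booleans and recomposed == v % P


def decompositions(v):
    """All little-endian bit patterns the weak constraints accept for v."""
    return [bits for bits in product((0, 1), repeat=NBITS) if satisfies(v, bits)]


def canonical_decompositions(v):
    """Same constraints plus a check that the integer value is below P."""
    return [
        bits for bits in decompositions(v)
        if sum(b << i for i, b in enumerate(bits)) < P
    ]


print(decompositions(2))            # [(0, 1, 0, 0), (1, 1, 1, 1)]
print(canonical_decompositions(2))  # [(0, 1, 0, 0)]
```

The second pattern encodes 15, which equals 2 modulo 13. A comparison gadget that reads the top bit would accept the claim that 2 is at least 8. In a real circuit the canonical check has to be expressed as constraints too; the plain integer comparison here only stands in for it.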

Another failure mode is proof-system implementation bugs. Plonky2 disclosed a soundness issue in lookup-table handling where zero-padding introduced an unintended 0 -> 0 pair. That let malicious provers certify false lookup claims in affected circuits. Again, the lesson is structural: the theorem proved by the implementation was not the theorem the developer thought they were proving.
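The padding problem can be illustrated with a toy lookup table. This is not Plonky2's code; it is a sketch that assumes a table of (input, output) rows padded to a fixed length, with hypothetical helper names. Padding with zeros silently adds the row (0, 0), which is a false claim whenever the real function does not map 0 to 0.

```python
def build_table(rows, size, pad_row):
    """Pad a list of (input, output) rows up to `size` entries."""
    return rows + [pad_row] * (size - len(rows))


def lookup_ok(table, claim):
    """A lookup argument accepts any claim that appears as a table row."""
    return claim in table


valid_rows = [(0, 1), (1, 2), (2, 3)]              # rows of f(x) = x + 1

zero_padded = build_table(valid_rows, 4, (0, 0))   # unintended extra row
safe_padded = build_table(valid_rows, 4, valid_rows[0])

print(lookup_ok(zero_padded, (0, 0)))   # True: f(0) = 0 is wrongly accepted
print(lookup_ok(safe_padded, (0, 0)))   # False
```

Padding with a copy of an existing valid row keeps the table length without widening the set of claims it accepts.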

A third failure mode is side-channel leakage. Even if the protocol is zero-knowledge in the formal model, software may leak through timing, memory access patterns, cache behavior, or poor randomness sources. The libsnark repository explicitly warns that its code is research-quality and notes data-dependent runtimes and randomness concerns. Formal zero-knowledge says what the transcript reveals, not what your CPU cache reveals.

There are also assumption-level limits. Some SNARK constructions rely on pairing-friendly curves and nonstandard or model-dependent assumptions. Some STARK constructions rely on random-oracle-style hashing and pay with larger proofs. Recursive systems such as Halo remove trusted setup but are not fully succinct in the strongest sense; they shift where verification work is paid. None of this invalidates zero-knowledge. It means every concrete system comes with a specific threat model and cost profile.

What is recursive proof composition and why does it matter?

Once proofs become short objects, a natural idea appears: can one proof verify another proof, and then prove that verification happened correctly? This is recursion.

Recursion matters because it lets systems compress long chains of verification into a single final proof. In blockchains, that means many state transitions or batches can be folded together. In verifiable computation, it means intermediate proofs can be aggregated. In identity systems, many attestations may be combined while preserving privacy.

Halo is a useful milestone here because it showed practical recursive proof composition without a trusted setup. Its design uses polynomial commitments and amortization tricks to keep recursive verification feasible, while accepting that some verification cost is deferred to the ultimate verifier. The broader point is that recursion changes the economics of zero-knowledge: it turns “proofs of computations” into “proofs of proofs of computations,” which is why modern rollups and proof aggregation systems have moved so quickly.

What does zero-knowledge actually guarantee, and what remains exposed?

It is worth separating the fundamental idea from conventions that happen to be popular today.

The fundamental idea is simulation-based privacy during proof of validity. A verifier should become convinced of truth without gaining usable information beyond that truth.

Interaction versus non-interaction is not fundamental. The concept began with interaction, but many modern systems are non-interactive arguments.

Trusted setup is not fundamental either. Some systems require it; others are transparent.

Succinctness is also not fundamental. A proof can be zero-knowledge without being tiny. SNARKs and STARKs combine zero-knowledge with succinctness, but the privacy property itself is older and broader.

And zero-knowledge does not mean “nothing at all is revealed.” The statement itself is revealed. Public inputs are revealed. Metadata outside the proof may be revealed. In payment systems, partially shielded designs may still leak linkable information even if the internal proof is zero-knowledge. Privacy comes from the whole system design, not just the acronym.

Conclusion

Zero-knowledge proofs solve a very specific problem: how to transfer confidence without transferring the secret that creates that confidence. The central trick is not mystical. It is to design a proof transcript whose convincing power comes from structure, challenge-response consistency, and simulation-based privacy, not from disclosure of the witness.

That idea now supports private transactions, validity rollups, outsourced computation, and recursive proof systems. But the concept only delivers its promise when the surrounding machinery is correct: the relation must match the intended claim, the implementation must avoid soundness bugs and side channels, and the assumptions must fit the use case.

If you remember one thing tomorrow, make it this: a zero-knowledge proof is not about hiding evidence; it is about proving that evidence exists and is valid, without handing the evidence over.

What should I check about zero-knowledge proofs before using a protocol?

Before using a protocol that relies on zero-knowledge proofs, verify the proof family, setup model, and recent implementation audits so you know what guarantees (and which risks) apply.

- Check whether the protocol uses SNARKs, STARKs, or another argument type and whether it requires a trusted setup (look for explicit mention of a CRS/ceremony or “transparent” design).

- Search for public audits, advisories, or CVEs for the proving libraries and circuit implementations (look for terms like “circuit bug,” “lookup-table issue,” or the project names used in the protocol docs).

- Inspect example on-chain proofs or transaction formats on a block explorer to confirm the protocol publishes commitments, nullifiers, or proof-verify events that match its privacy claims.

Frequently Asked Questions

Zero-knowledge means the verifier learns the truth of the public statement but not the private witness; formally this is captured by the existence of a simulator that can produce an indistinguishable verifier view without knowing the witness, so whatever the verifier sees could have been generated without the secret.

SNARKs prioritize very short proofs and fast verification but typically rely on algebraic hardness assumptions and often a trusted setup; STARKs prioritize transparency and hash-based (post‑quantum‑friendly) assumptions at the cost of substantially larger proofs and different prover/verifier tradeoffs.

Non‑interactive proofs are usually obtained by removing the verifier’s live randomness (e.g., via Fiat–Shamir or a public randomness source), but that transformation brings in model or cryptographic assumptions (random‑oracle/hash assumptions or setup), so non‑interactivity typically weakens some ideal information‑theoretic guarantees.

A trusted setup creates public parameters that some SNARKs need and if that setup is compromised it can break soundness, which is why ceremonies and setup models are a critical operational concern for SNARK-based deployments.

Common practical failure modes are: incorrect circuit/constraint encodings that leave holes a prover can exploit, implementation bugs in proving libraries (e.g., lookup or padding mistakes), and side‑channel leaks like timing or poor randomness; these are distinct from a failure of the mathematical zero‑knowledge notion itself.

Recursion lets a proof attest that another proof verified correctly, enabling compression of many verifications into a single short object; this is powerful for rollups and aggregation but can shift verification costs and brings its own extractor/assumption subtleties (for example, Halo supports recursion without trusted setup but is not fully succinct).

Zero‑knowledge does not hide the public statement or outside metadata; proofs reveal the public inputs and any protocol metadata, and privacy is only achieved when the whole system (commitments, nullifiers, transaction format) is designed to avoid linking leaks.

Calling something an “argument” rather than a “proof” signals that soundness depends on computational assumptions (i.e., only efficient adversaries are prevented from forging proofs), whereas an information‑theoretic proof would resist unbounded adversaries.

Practical zero‑knowledge systems are built over encoded relations (arithmetic circuits or constraint systems), so the security and correctness depend critically on whether the circuit faithfully captures the intended policy; a correct proof only proves the encoded relation, not the informal English requirement.

There are unresolved foundational questions about the precise power of zero‑knowledge complexity classes (for example, whether KC(0) is contained in NP or in constant‑round IP) that remain open in the theoretical literature.
