What Is Staking?

Learn what staking is, why proof-of-stake blockchains use it, how rewards and slashing work, and the key trade-offs across major networks.

Introduction

Staking is the act of committing cryptoassets to help secure a proof-of-stake blockchain. That sounds like an investment feature, and many people first encounter it that way, but the deeper point is not yield. The deeper point is security: a proof-of-stake system needs some way to decide who gets to help make blocks, who checks everyone else’s work, and what those participants stand to lose if they cheat or fail badly.

That is the puzzle staking solves. In proof-of-work, miners prove commitment by burning external resources, mainly electricity and hardware. In proof-of-stake, validators prove commitment by putting assets already inside the system at risk. The mechanism is different, but the goal is the same: make attacks expensive and honest participation economically attractive.

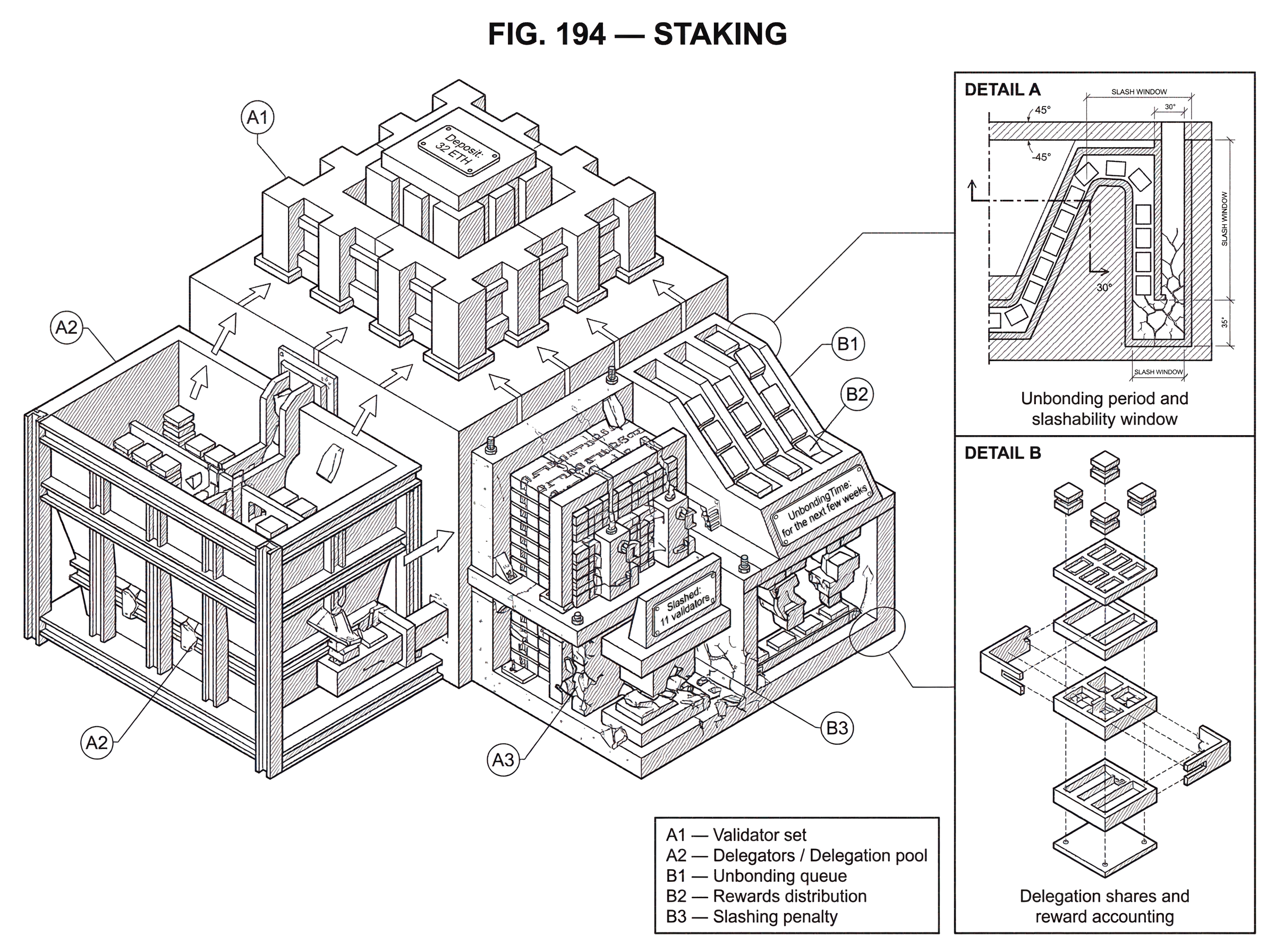

Once you start from that premise, many details that seem arbitrary stop seeming arbitrary. Why are funds sometimes locked? Why do some chains let you delegate instead of running a validator yourself? Why is there an unbonding period before stake becomes freely movable again? Why can being offline be mildly penalized while double-signing can be punished much more severely? These are not random product decisions. They are ways of protecting a core invariant: the chain should be easier to secure honestly than to manipulate dishonestly.

Staking is not one single protocol feature implemented the same way everywhere. Ethereum, Cosmos chains, Polkadot, Cardano, and Solana-adjacent ecosystems all use the general idea differently. Some require a fixed amount to run a validator directly. Some let token holders delegate to validators. Some support liquid staking tokens through third-party protocols. Some emphasize slashing heavily; others rely more on reward structure and validator-set design. But across these designs, the same structure keeps reappearing: lock capital, grant consensus influence, reward useful behavior, and penalize behavior that threatens safety or liveness.

How does staking turn capital into accountability on proof‑of‑stake chains?

At the center of staking is a simple bargain. A participant says, in effect, *I want influence over the chain’s consensus, and I am willing to post economic collateral to get it.* The protocol accepts that bargain by letting stake affect validator selection, voting power, or both.

Why is collateral necessary? Because consensus is not just about choosing blocks; it is about choosing them in a way other participants can trust. If a validator could vote carelessly, sign conflicting histories, or disappear at critical moments with no cost, then the protocol would be asking the network to trust actors who have little to lose. Staking changes that. It ties a validator’s future economic position to its present behavior.

Here is the mechanism in ordinary language. A blockchain maintains some set of participants who can help finalize or produce blocks. Those participants are weighted, directly or indirectly, by stake. If they do their job correctly (producing blocks when selected, attesting correctly, staying online, following protocol rules) they earn rewards. If they violate important rules, the protocol can reduce their stake through penalties or slashing. This creates a feedback loop: stake buys influence, but influence comes with liability.
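The feedback loop above can be sketched in a few lines. This is a toy model, not any chain's actual selection algorithm: the `Validator` class, the `pick_proposer` and `settle` helpers, the flat reward of 10 tokens, and the 5% slash are all assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # tokens this validator has at risk


def pick_proposer(validators, rng):
    """Pick one validator with probability proportional to its stake."""
    weights = [v.stake for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


def settle(validator, behaved_correctly, reward=10, slash_percent=5):
    """Pay a reward for correct work, or cut stake for a provable violation."""
    if behaved_correctly:
        validator.stake += reward
    else:
        validator.stake -= validator.stake * slash_percent // 100
    return validator.stake


validators = [Validator("a", 700), Validator("b", 200), Validator("c", 100)]
rng = random.Random(0)  # seeded so the run is repeatable
wins = {v.name: 0 for v in validators}
for _ in range(10_000):
    wins[pick_proposer(validators, rng).name] += 1

print(wins)  # "a" holds 70% of the stake, so it should win most slots
print(settle(Validator("honest", 1000), True))   # 1010
print(settle(Validator("faulty", 1000), False))  # 950
```

The point of the sketch is the pairing: the same number that raises a validator's chance of being selected is the number the protocol reduces when a rule is broken.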

That liability is the crucial idea. Rewards by themselves do not secure a chain; they attract participation. Penalties and lockups are what make participation credible. A chain is secure not because people are paid, but because they can be punished for violating the consensus rules in ways that endanger everyone else.

Validators vs. delegators: why do some chains separate economic backing from operation?

| Role | Responsibility | Barrier | Slash exposure | Reward claim |
|---|---|---|---|---|
| Solo validator | Run consensus software | High, 32+ ETH & ops | Direct slashing risk | Full protocol rewards |
| Delegator | Back validators with stake | Low, no infra needed | Shared via validator | Pro-rata rewards share |
| Staking pool | Aggregate stake and operate | Medium, trust operator | Pool-level exposure | Pool token or commission |

A smart reader often asks: if staking is about security, why doesn’t every token holder need to run a validator? The answer is practical. Running consensus infrastructure continuously is operational work. It requires software, networking, monitoring, upgrades, and careful key management. Many holders want to contribute capital without taking on that operational burden.

That is why many proof-of-stake systems separate economic backing from operational execution. Validators run the infrastructure and participate directly in consensus. Delegators, nominators, or pool participants back those validators with stake. The protocol then treats the validator as the active operator and the delegated stake as part of the economic weight behind that operator.

This separation solves two problems at once. It lowers the barrier to participation, because ordinary token holders do not need to maintain always-on infrastructure. And it creates a market for validator quality, because stake can flow toward operators who are reliable, well-run, and trusted.

Cosmos makes this especially explicit. In the Cosmos SDK staking module, holders of the native staking token can become validators or delegate to them, and the effective validator set is determined by stake. Validators move among statuses such as Bonded, Unbonded, and Unbonding. Only bonded validators are actively signing blocks and earning rewards. Delegation is tracked through shares, which are an accounting tool: instead of updating every delegator separately whenever rewards or slashes occur, the protocol adjusts the validator’s token base, and the value of each share rises or falls with it.
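Share-based accounting is easier to see with numbers. The sketch below is a simplified model of the idea, not the Cosmos SDK's actual code: the `ValidatorPool` class and its method names are hypothetical, and it only models a slash, since that is the simplest case in which the token base changes while share counts stay fixed.

```python
from fractions import Fraction


class ValidatorPool:
    """Toy model: delegators hold shares, the validator holds the tokens."""

    def __init__(self):
        self.tokens = Fraction(0)        # total tokens backing the validator
        self.total_shares = Fraction(0)  # total shares issued to delegators
        self.shares = {}                 # delegator name -> shares held

    def delegate(self, who, amount):
        """Issue shares at the current tokens-per-share exchange rate."""
        if self.total_shares == 0:
            issued = Fraction(amount)  # first delegation: one share per token
        else:
            issued = Fraction(amount) * self.total_shares / self.tokens
        self.tokens += amount
        self.total_shares += issued
        self.shares[who] = self.shares.get(who, Fraction(0)) + issued
        return issued

    def slash(self, fraction):
        """Shrink the token base; no per-delegator record is touched."""
        self.tokens -= self.tokens * fraction

    def value_of(self, who):
        """Tokens that a delegator's shares are currently worth."""
        return self.shares[who] * self.tokens / self.total_shares


pool = ValidatorPool()
pool.delegate("alice", 100)
pool.delegate("bob", 300)
pool.slash(Fraction(1, 10))         # a 10% slash on the validator
late = pool.delegate("carol", 90)   # carol joins after the slash

print(pool.value_of("alice"), pool.value_of("bob"), pool.value_of("carol"))
```

One write to `tokens` reprices every existing position, and a delegator who joins after the slash buys shares at the new rate, so she does not absorb a loss that happened before she arrived.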

Polkadot uses a related but distinct design called Nominated Proof-of-Stake. Token holders act as nominators who back validator candidates, and the network elects an active validator set using stake-distribution algorithms. The important idea is not the specific algorithm name; it is that staking can be used not just to weight validators directly, but to choose which validators get into the active set in the first place.

Cardano pushes the separation in yet another direction. Delegation lets ADA holders back stake pools while retaining normal spending power. That design reflects a different trade-off: participation is made easier because stake assignment and token spendability are less tightly coupled than in many other systems.

Ethereum is somewhat stricter at the base layer. To run a validator directly, you deposit 32 ETH and activate validator software. That validator then helps store data, process transactions, propose blocks when selected, and attest to others’ blocks. If you have less than 32 ETH, or do not want to run infrastructure, you usually participate through pooled or liquid staking solutions built on top of the protocol rather than staking with it directly.

Why do staking systems enforce lockups and unbonding periods?

The most common beginner intuition is that staking should work like a savings account: deposit, earn, withdraw whenever you want. But that would defeat much of the security model.

Suppose a validator could help secure the network today, misbehave in a way that is only discovered tomorrow, and withdraw all stake instantly tonight. Then the chain would have given that validator consensus influence without maintaining enough time to enforce accountability. This is why many staking systems use a delay between the decision to exit and the moment funds become fully liquid again.

That delay is usually called an unbonding period, though different chains use different terms and exact mechanics. The purpose is not to annoy users. The purpose is to preserve a window in which the protocol can process evidence of misbehavior and apply penalties to stake that was still economically backing the validator at the relevant time.

Cosmos documents this logic clearly. Validators can be Unbonding for a chain-specific UnbondingTime, and delegations remain slashable for qualifying past offenses during that period. Redelegation can take effect immediately from the user’s point of view, but it is still tracked in a special state so those funds remain exposed to slashing for earlier faults. The protocol is preserving causality: if your stake helped support a validator during an infraction, you do not get to escape all responsibility just because you moved away before the evidence landed on-chain.
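The causality rule can be written as a small predicate. This is a sketch under stated assumptions, not the logic of any specific chain: the `Delegation` record, the `is_slashable` helper, the day-based timestamps, and the 21-day `UNBONDING_TIME` are all hypothetical, and real protocols add further conditions such as a maximum age for evidence.

```python
from dataclasses import dataclass
from typing import Optional

UNBONDING_TIME = 21  # hypothetical window length, in days


@dataclass
class Delegation:
    bonded_at: int                             # day the stake began backing the validator
    unbond_requested_at: Optional[int] = None  # day the holder asked to leave, if ever


def is_slashable(d, infraction_day, evidence_day, unbonding_time=UNBONDING_TIME):
    """Return True if this delegation can still be penalized for the infraction."""
    # 1. The stake must have been backing the validator when the fault happened.
    if infraction_day < d.bonded_at:
        return False
    if d.unbond_requested_at is not None and infraction_day >= d.unbond_requested_at:
        return False
    # 2. The stake must still be within reach when the evidence arrives.
    if d.unbond_requested_at is None:
        return True  # still bonded
    return evidence_day < d.unbond_requested_at + unbonding_time


leaving = Delegation(bonded_at=0, unbond_requested_at=10)
print(is_slashable(leaving, infraction_day=5, evidence_day=20))   # True: still unbonding
print(is_slashable(leaving, infraction_day=5, evidence_day=40))   # False: window closed
print(is_slashable(leaving, infraction_day=12, evidence_day=20))  # False: fault came after exit request
```

The first check ties liability to the period in which the stake was actually lending weight to the validator; the second is what the unbonding delay buys the protocol.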

Polkadot uses the same basic idea. Misbehavior can slash both validators and nominators, and the unbonding period acts as a safety window for offenses discovered later. This is a recurring pattern in staking systems because delayed evidence is not an edge case; it is a normal consequence of distributed systems.

Where a chain relaxes lockups, it usually does so by introducing some other mechanism or trust layer rather than by making the security problem disappear. Cardano’s delegation model is more liquid from the user perspective, but the protocol design around stake rights and pool operation differs from a stricter bonded-and-unbonding model. And liquid staking protocols on Ethereum make funds feel liquid by issuing tradable receipts, but the underlying stake is still subject to the base protocol’s validator and withdrawal rules.

What are staking rewards actually paying you to do?

People often say staking earns yield, but that phrasing can hide the mechanism. In a proof-of-stake chain, rewards are payments for useful consensus behavior. They are not magic interest appearing from nowhere.

Ethereum states this plainly: rewards are given for actions that help the network reach consensus. Running validator software that correctly batches transactions into blocks and checks other validators’ work earns rewards because that work is what keeps the chain functioning securely. In other words, rewards are compensation for maintaining the system’s safety and liveness.

Different chains package this differently. On Ethereum, a validator that is online and behaving correctly earns protocol rewards tied to its duties. On Polkadot, rewards are often calculated by era, validators earn points for useful participation, and rewards are distributed after validator commission. On Cosmos-style chains, rewards are reflected in validator economics and ultimately in the value represented by delegator shares. These details differ, but the economic grammar stays the same: the protocol mints or distributes value to those whose behavior keeps the consensus process running.
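As a worked example of "rewards distributed after validator commission," the sketch below splits one reward payment pro rata. The `split_rewards` function, the 10% commission, and the stake figures are assumptions for illustration; actual formulas, rounding rules, and payout timing vary by chain.

```python
from fractions import Fraction


def split_rewards(total_reward, commission_rate, stakes):
    """Take the operator's commission first, then split the rest by stake."""
    commission = total_reward * commission_rate
    distributable = total_reward - commission
    total_stake = sum(stakes.values())
    payouts = {
        who: distributable * Fraction(stake, total_stake)
        for who, stake in stakes.items()
    }
    return commission, payouts


# Hypothetical validator: the operator self-stakes 100 and charges 10% commission.
stakes = {"operator": 100, "alice": 300, "bob": 600}
commission, payouts = split_rewards(1000, Fraction(1, 10), stakes)

print(commission)          # 100 goes to the operator for running the service
print(payouts["alice"])    # 270
print(payouts["bob"])      # 540
print(payouts["operator"] + commission)  # 190: commission plus the self-stake share
```

Using exact fractions keeps the example free of rounding questions, which real implementations have to settle explicitly.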

A useful way to think about this is as payment for two scarce things. The first is credible availability: validators must be there when the protocol needs them. The second is credible correctness: they must not sign invalid or conflicting states. Rewards encourage both, but they do not enforce both equally well. Availability failures are often accidental and usually punished more mildly. Safety violations like double-signing are more dangerous because they can directly undermine consensus, so they tend to face harsher penalties.

That asymmetry is not arbitrary. Being offline hurts liveness: the network may slow down or lose some efficiency. Signing conflicting messages hurts safety: it can help create or justify incompatible histories. Safety faults are usually treated as the more serious category because a blockchain can sometimes recover from temporary inactivity, but contradictory finalized history is much harder to unwind.

What is slashing and when can your stake be reduced?

| Fault | How proven | Typical penalty | Who is affected |
|---|---|---|---|
| Double-signing | Conflicting signatures on-chain | Large slashing | Validator and delegators |
| Prolonged downtime | Missed attestations/votes | Staged penalties | Validator mainly |
| Misconfiguration | Operational evidence or logs | Variable penalty | Validator and possibly delegators |

If rewards are the carrot, slashing is the part that makes staking credible. Slashing means reducing staked funds when a validator commits a serious protocol violation. Not all proof-of-stake systems use slashing in the same way or to the same degree, but where it exists, it is the sharpest expression of staking’s logic: consensus power is conditional, and violating that condition costs real money.

The classic example is double-signing. If the same validator key signs conflicting votes from two different active instances, the protocol can interpret that as evidence that the validator helped support incompatible histories. This is not a theoretical concern. In a Lido incident on Ethereum, validator keys were duplicated across two active clusters, leading to a double vote and the slashing of 11 validators. The postmortem tied the event to duplicated keys and a Prysm client bug that gave misleading confirmation around key deletion and re-import. The important lesson is not merely that software had a bug. It is that staking systems assume operational mistakes can happen, so they make some mistakes expensive by design.
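What makes double-signing slashable is that the evidence is mechanical to check. The following sketch shows the core comparison only: the `SignedVote` record and `find_equivocations` helper are hypothetical, signatures are assumed to have been verified already, and real protocols define additional slashable patterns that this does not cover.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignedVote:
    validator: str
    height: int
    round_number: int
    block_hash: str


def find_equivocations(votes):
    """Return pairs of votes where one validator backed two different blocks
    at the same height and round."""
    first_seen = {}
    evidence = []
    for vote in votes:
        slot = (vote.validator, vote.height, vote.round_number)
        prior = first_seen.get(slot)
        if prior is None:
            first_seen[slot] = vote
        elif prior.block_hash != vote.block_hash:
            evidence.append((prior, vote))
    return evidence


votes = [
    SignedVote("northstar", 10, 0, "0xaaa"),
    SignedVote("southwind", 10, 0, "0xaaa"),
    SignedVote("northstar", 10, 0, "0xbbb"),  # second instance, same key, different block
    SignedVote("southwind", 10, 0, "0xaaa"),  # harmless re-broadcast of the same vote
]
for a, b in find_equivocations(votes):
    print(a.validator, "signed", a.block_hash, "and", b.block_hash, "at height", a.height)
```

Nothing in the check asks why the second signature exists, which is why a duplicated key in a failover setup is treated the same as a deliberate attack.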

That cost is intentional. Without it, validators could run redundant active signers with loose discipline, try to maximize uptime carelessly, or recover from failover procedures in unsafe ways. Slashing forces operators to take key management, restart procedures, doppelgänger protection, and client behavior seriously.

Tendermint-based systems reflect the same concern operationally. Validators broadcast signed votes, and the tooling warns operators about recent-signature checks on restart. The exact on-chain penalty handling may live elsewhere in the stack, but the operator guidance makes the underlying risk obvious: using the same consensus key in two live places is dangerous.

Slashing is not the only penalty. Some protocols also impose inactivity leaks, missed-reward penalties, or reduced returns for downtime. Ethereum’s design goals for its proof-of-stake specifications explicitly include staying live through major partitions and large-scale node outages while minimizing complexity and hardware requirements. That helps explain why staking systems typically distinguish between faults that threaten liveness and faults that threaten safety. The protocol wants to keep operating through messy real-world conditions without treating every outage as malice.

Staking walkthrough: what happens from deposit to potential penalty?

Imagine a small proof-of-stake chain where Alice wants to help secure the network but does not want to run infrastructure herself. She delegates tokens to a validator named Northstar. What has happened mechanically is not just that Alice clicked “stake.” She has attached her capital to Northstar’s future behavior. Northstar’s voting weight rises, so Northstar has more influence in block production or finalization. Because Alice’s delegated capital helped give Northstar that influence, Alice also shares in the economic consequences.

For the next few weeks, Northstar stays online, signs valid messages, and performs well. The chain distributes rewards. Alice sees her staking position grow. It is tempting to describe this as passive income, but that misses what the network is really paying for. The network is paying Northstar for being present and correct at the moments consensus required it, and Alice receives a share because her stake backed that service.

Now suppose Northstar’s operator performs a botched failover. An old validator instance was never fully shut down, and a new one comes online with the same signing key. Both instances sign. Evidence appears on-chain showing conflicting votes from the same validator. At that moment, the protocol has something objective to act on. It does not need to read intentions. It does not need to know whether the operator was malicious or merely careless. It only needs to know that a safety rule was broken in a way that can be cryptographically proven.

The chain slashes Northstar. If Alice’s stake was still bonded or in an unbonding window covering the fault period, the value of her position falls too. That may feel harsh, but it is the point of staking rather than a defect in it. If delegators got only upside while validators absorbed all downside, delegators would have little reason to choose operators carefully, and unsafe validators could attract stake too easily. Shared risk is how the protocol turns stake allocation into a form of decentralized supervision.

Finally, Alice decides to leave. She cannot always exit instantly. The protocol places her stake into an unbonding state for some period. During that time, she may stop earning full active rewards, but her funds can still remain exposed to certain earlier faults. Again, this is not an arbitrary waiting room. It is the mechanism that keeps accountability attached to the period when her capital was actually securing the chain.

What is liquid staking and what additional risks does it introduce?

| Option | Liquidity | Counterparty risk | Slashing exposure | Best for |
|---|---|---|---|---|
| Solo staking | Low, funds locked | Minimal (self-custody) | Direct with validator | Max protocol security |
| Liquid staking | High, tradable receipt | Smart contract + governance | Indirect, shared risk | Liquidity plus staking yield |
| Exchange staking | High, exchange liquidity | Custodial counterparty risk | Concentrates stake risk | Ease of use |

Staking creates a tension. The network wants capital to be sticky enough to enforce accountability. Users want capital to stay useful and mobile. Liquid staking exists to reduce that tension, but it does so by adding another layer of machinery and risk.

The basic idea is straightforward. A protocol takes in users’ tokens, stakes them through validators, and issues a transferable receipt token representing the user’s claim on the underlying staked position. Lido’s early Ethereum design expresses this clearly: users deposit ETH into Lido smart contracts and receive stETH, a tokenized version of staked ether. That token tracks the corresponding staked ETH plus rewards and minus slashing. In effect, the staking position is locked at the protocol level, but the user regains market liquidity by holding a tradable claim on it.

This solves a real problem. A user can contribute to network security and still use the receipt token in trading, payments, or DeFi. But it does not make staking risk disappear. It changes the shape of the risk.

With liquid staking, you now depend not only on the base chain’s validator rules, but also on smart contracts, operator selection, accounting, governance, and sometimes oracle systems. Lido’s design, for example, required DAO-governed operators and oracles because the execution-layer contracts could not directly read beacon-chain state. That means the system’s correctness depends on more than raw proof-of-stake mechanics. It depends on a surrounding protocol that can reconcile balances, select operators, and respond to failures.

This is why official Ethereum guidance stresses that pooled staking is not native to Ethereum and carries third-party risks, and why centralized exchange staking carries even heavier trust assumptions. If you stake through an exchange, you are no longer just using protocol rules; you are relying on a custodian that may pool large amounts of stake, control withdrawal flows, and create concentration risk for the network.

Rocket Pool represents another approach in the same design space: decentralized Ethereum liquid staking with guides for both stakers and node operators. The exact architecture differs, but the common point is that liquid staking is not “staking but better” in some absolute sense. It is staking plus an additional coordination layer that trades simplicity for accessibility and liquidity.

What does staking actually secure, and what are its limits?

It is tempting to summarize staking as “more stake means more security.” That is directionally true but incomplete.

More honest stake usually means attacking the chain becomes more expensive. Ethereum’s public documentation makes this intuition explicit: as more ETH is staked, controlling a majority of validators requires controlling more ETH, which raises the cost of attack materially. But the phrase honest stake matters. A chain is not secured by raw token count alone. It is secured by the distribution of stake across operators, software clients, governance structures, and failure domains.
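The arithmetic behind "more stake raises the cost of attack" is short. If honest participants hold stake S and an attacker adds new stake x, the attacker's share is x / (S + x), so reaching a share f requires x = S · f / (1 − f). The sketch below computes that; the `stake_needed` helper and the 30 million token figure are assumptions, and the model ignores price impact, entry queues, and the option of corrupting existing stakers instead of buying in.

```python
from fractions import Fraction


def stake_needed(honest_stake, target_share):
    """New stake x such that x / (honest_stake + x) equals target_share."""
    if not 0 <= target_share < 1:
        raise ValueError("target_share must be in [0, 1)")
    return honest_stake * target_share / (1 - target_share)


honest = 30_000_000  # hypothetical tokens staked by honest participants

print(stake_needed(honest, Fraction(1, 3)))      # 15000000
print(stake_needed(honest, Fraction(1, 2)))      # 30000000
print(stake_needed(honest, Fraction(2, 3)))      # 60000000
print(stake_needed(2 * honest, Fraction(1, 2)))  # 60000000: doubling honest stake doubles the bill
```

The requirement scales linearly with honest stake, but only with stake that stays honest and independent, which is the qualification the rest of this section develops.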

The Solana outage report illustrates why. A validator runtime bug caused an infinite recompilation loop, and because more than 95% of cluster stake was running the affected client version, consensus halted and could not recover until a coordinated restart. That was not a failure of staking as collateral. It was a reminder that economic stake only secures a system if it is paired with operational and software diversity.

The same lesson appears in slashing incidents. If large amounts of stake concentrate behind a small number of operators, clients, or custodians, then failures become correlated. A proof-of-stake chain may still have a large nominal amount staked and yet be more fragile than it appears.
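One rough way to quantify correlated failure is to count how few independent parties would have to fail or collude before a critical share of stake is affected. The sketch below does that for any grouping of stake; the `min_entities_to_exceed` helper, the one-third and two-thirds thresholds, and all of the figures are illustrative assumptions rather than data from any network.

```python
from fractions import Fraction


def min_entities_to_exceed(stake_by_entity, threshold):
    """Smallest number of entities, largest first, whose combined stake
    exceeds the given share of the total. Returns None if never exceeded."""
    total = sum(stake_by_entity.values())
    running = 0
    ranked = sorted(stake_by_entity.values(), reverse=True)
    for count, stake in enumerate(ranked, start=1):
        running += stake
        if Fraction(running, total) > threshold:
            return count
    return None


# Hypothetical network: the same stake grouped by operator and by client software.
by_operator = {"op1": 30, "op2": 25, "op3": 20, "op4": 15, "op5": 10}
by_client = {"client_a": 95, "client_b": 5}

print(min_entities_to_exceed(by_operator, Fraction(1, 3)))  # 2
print(min_entities_to_exceed(by_operator, Fraction(2, 3)))  # 3
print(min_entities_to_exceed(by_client, Fraction(1, 3)))    # 1
```

The same total stake looks reasonably spread when grouped by operator and dangerously concentrated when grouped by client, which is why the grouping matters as much as the headline amount.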

So the right question about staking security is not just *how much capital is locked?* It is also *who controls it, how independently do they operate, how heterogeneous are their setups, and how quickly can the protocol detect and punish faults?*

Key trade-offs of staking: liquidity, decentralization, and complexity

Staking is powerful because it internalizes security: the asset used by the chain is also the collateral securing the chain. But that design creates persistent trade-offs.

The first trade-off is between security and liquidity. Strong lockups and unbonding windows improve accountability, but they make capital less flexible. Liquid staking softens that cost for users, but only by introducing additional smart-contract, governance, and operator risks.

The second trade-off is between accessibility and decentralization. Lowering participation barriers through delegation, pools, and exchanges makes staking easier, which can increase overall participation. But convenience often concentrates stake in large intermediaries. Ethereum’s documentation warns explicitly that centralized providers can become dangerous concentration points and single points of failure.

The third trade-off is between simplicity and optimization. Ethereum’s consensus-spec design goals explicitly prioritize minimizing complexity, even at some efficiency cost, while also supporting large validator participation and low hardware requirements. That is a real protocol philosophy: sometimes a simpler rule that many operators can run safely is better than a more elegant but fragile optimization.

The fourth trade-off is between economic design and operational reality. On paper, staking aligns incentives cleanly. In practice, validators are software systems run by humans under imperfect conditions. Bugs happen. Networks partition. Monitoring fails. Keys are mishandled. This is why a mature understanding of staking always includes operations, not just tokenomics.

Conclusion

Staking is best understood as a way to convert capital into credible accountability for consensus. You lock value, the protocol grants influence, honest participation earns rewards, and serious misbehavior can destroy part of what you locked.

Everything else (delegation, unbonding, reward schedules, slashing rules, liquid staking wrappers) is built around that core.

The details differ across chains, but the idea worth remembering tomorrow is simple: staking exists so that the people helping decide the chain’s history have something meaningful to lose if they abuse that role.

How do you build a crypto position over time or earn on it?

Build a position by buying the crypto you want on Cube Exchange and dollar-cost averaging over time. Once you hold the asset, evaluate staking or yield options separately (native staking, liquid staking tokens, or third-party providers) before moving funds. Use Cube to fund your account and automate buys, then research lockups, slashing rules, and fees for any staking path you consider.

- Fund your Cube account with fiat via the on-ramp or by transferring a supported crypto to your Cube wallet.

- Open the market for your target asset (for example ETH/USD or ETH/USDC) and set a recurring buy. Use limit orders for price control or market orders for immediate fills.

- After accumulating a base position, research staking paths for that asset: native protocol staking, liquid-staking tokens, or custodial/exchange staking. Compare unbonding periods, slashing exposure, and fees.

- If you choose to stake, follow the chosen provider’s instructions to move or lock the funds off-chain or on-chain, and confirm withdrawal and penalty rules before committing.

Frequently Asked Questions

Why do staking systems use unbonding periods?

Unbonding periods create a window during which the protocol can accept on-chain evidence of past misbehavior and apply penalties to stake that was economically backing a validator at the relevant time; the delay prevents actors from escaping accountability by withdrawing immediately. Exact lengths and mechanics vary by chain.

Can delegators lose funds if their validator is slashed?

Yes. If your delegation was backing a validator when a provable violation occurred (or during the chain’s slashable window), your delegated stake can be reduced; the specifics (who is liable and how much) differ across protocols.

What is liquid staking, and what extra risks does it add?

Liquid staking issues a tradable receipt token that represents a claim on underlying staked assets, restoring market liquidity, but it adds extra smart-contract, governance, operator, and oracle risks because you now rely on the liquid-staking protocol and its operators in addition to the base chain.

Why is double-signing punished more harshly than downtime?

Protocols usually treat safety faults (e.g., double-signing or signing conflicting histories) much more severely because they threaten finality, while availability faults (being offline) are typically penalized more mildly since they mainly affect liveness; exact punishments and thresholds depend on the chain.

Does more total stake always make a chain more secure?

Not necessarily. More total stake raises the nominal cost of an attack, but real security depends on who controls that stake, how distributed and operationally diverse validators and clients are, and how quickly faults can be detected and penalized. Concentrated stake or homogeneous client deployments can make a network fragile despite high total stake.

Why do many token holders delegate instead of running a validator?

Because running a validator requires continuous infrastructure, monitoring, key management, and software maintenance, many token holders delegate to operators to avoid that operational burden; delegation lowers the barrier to participation and creates market incentives to choose reliable operators.

How do delegation shares work in Cosmos-style chains?

In Cosmos-style systems a validator issues 'shares' as an accounting unit: the validator’s token pool is adjusted for rewards and slashes, and each delegator’s share value rises or falls accordingly so the protocol need not update every delegator individually when rewards or penalties occur.

Do staking pools and exchanges create centralization risk?

Yes. Pooled providers and exchanges centralize custody and voting power, creating concentration and counterparty risks (they can become single points of failure), which is why Ethereum guidance and liquid-staking analyses warn that pooled or exchange staking carries additional trust and centralization risks.
