What Are SNARKs?

Learn what SNARKs are, why they exist, how they work, and the tradeoffs behind succinct zero-knowledge proofs in blockchains and cryptography.

Introduction

SNARKs are proof systems that let one party convince another that a statement is true (often that a computation was performed correctly) while sending only a very short proof that can be checked very quickly. That sounds almost contradictory. If checking a computation normally takes as much work as doing it, how can verification be much cheaper than recomputation?

That puzzle is the reason SNARKs matter. They are one of the few cryptographic ideas that genuinely change the shape of a system. A blockchain can verify the correctness of thousands of off-chain transactions with a small on-chain check. A privacy system can prove that money was conserved without revealing who paid whom or how much. A remote server can prove it followed a program without the client rerunning the whole program.

The catch is that SNARKs are not magic. Their efficiency comes from representing computation in a very particular algebraic form, and their security depends on concrete assumptions and engineering choices. Some families require a setup ceremony. Some optimize for tiny proofs at the cost of stronger assumptions. Others reduce trust in setup but change the prover’s cost profile. To understand SNARKs, it helps to start not with the acronym, but with the problem they are trying to solve.

Why ordinary verification (recomputing work) is impractical and how SNARKs shift costs

Suppose Alice wants Bob to trust the output of a large computation. Maybe Alice is a rollup prover claiming that a batch of transactions was executed correctly. Maybe she is proving that a private transfer obeyed all the rules of a shielded asset system. Maybe she is just outsourcing a heavy computation to a cloud service.

Without special cryptography, Bob has only a few basic options. He can rerun the computation himself, which defeats the purpose of delegation. He can trust Alice, which is often unacceptable. Or he can ask for a conventional proof, but conventional proofs of computation tend to grow with the computation itself: the more work Alice did, the more Bob must inspect.

A SNARK changes this asymmetry. The prover may still do substantial work, often more than the original computation. But the verifier’s work is succinct: it depends mostly on the security parameter and the size of the public statement, not on the full size of the hidden witness or the raw execution trace. Early work on succinct arguments explicitly framed this goal as making verifier time independent of the underlying NP machine’s running time t, while preserving reasonable prover complexity.

This is the central trade: SNARKs move work from the verifier to the prover, and then compress the verifier’s task into a small algebraic check. Once that clicks, the rest of the machinery has a place to land.

What does SNARK mean; Succinct, Non‑interactive, Argument, of Knowledge explained

The acronym usually expands to Succinct Non-interactive Argument of Knowledge. Sometimes people say zk-SNARK when they want to emphasize zero-knowledge, because not every SNARK is automatically privacy-preserving unless that property is built in.

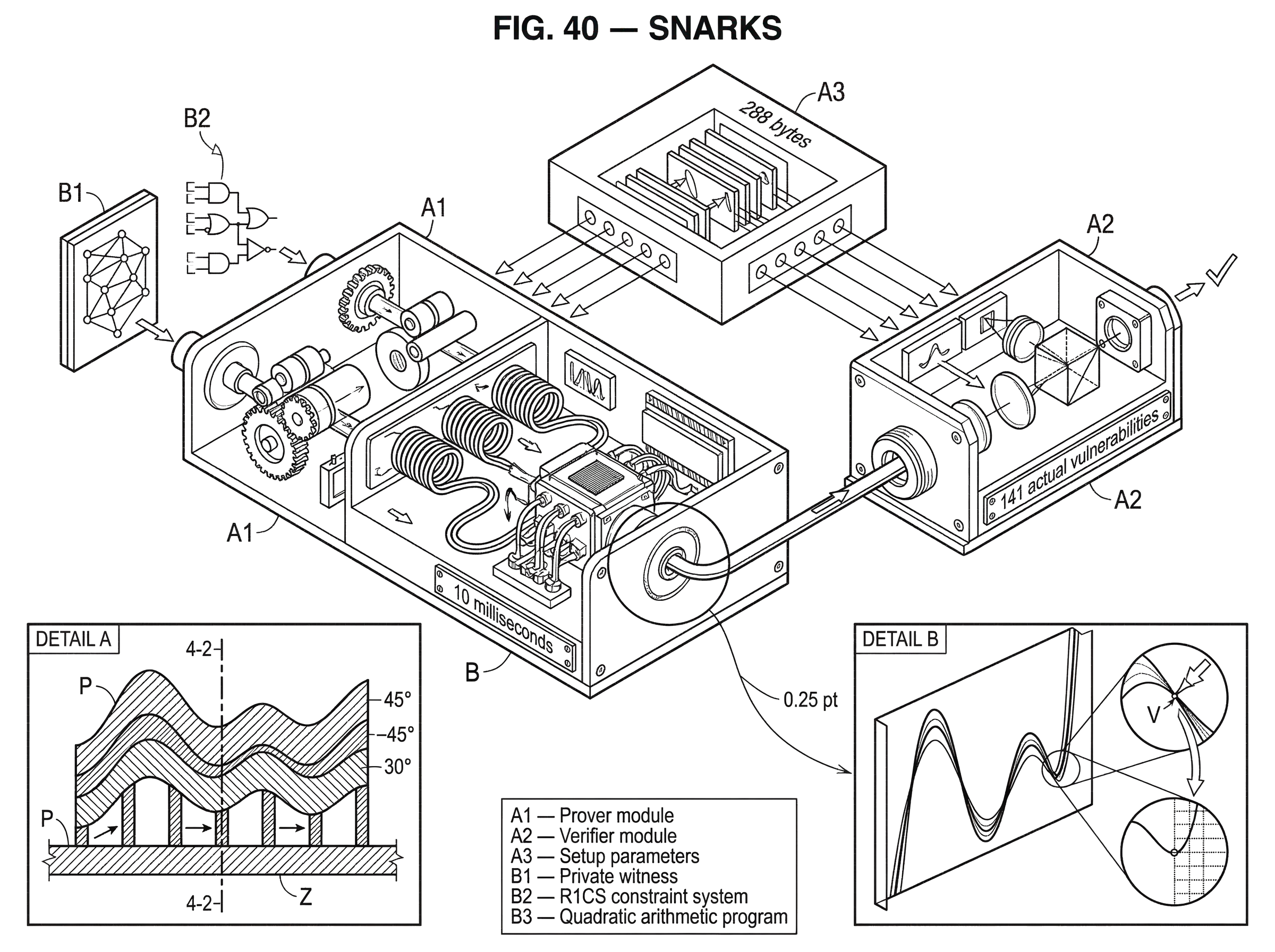

Succinct means the proof is short and verification is fast relative to the size of the computation being proved. In practical pairing-based systems, this can be extreme. Pinocchio reported constant-size public proofs of 288 bytes with verification typically around 10 milliseconds, and Groth16 later reduced proofs to just 3 group elements with verification using 3 pairings. Those are not universal numbers across all SNARK families, but they show what “succinct” can mean concretely.

Non-interactive means the prover sends a single proof, rather than engaging in a back-and-forth protocol with the verifier. This matters for blockchains because a smart contract or consensus system wants a proof object it can verify without participating in an interactive dialogue.

Argument is a technical word. It means soundness holds against computationally bounded provers, not arbitrary unbounded ones. That is weaker than an information-theoretic proof, but it is the normal trade in modern efficient cryptography.

Of knowledge means the prover is not just asserting a true statement by luck; security is framed so that a successful prover must, in a precise technical sense, “know” a witness. If the statement is “this circuit has a satisfying assignment,” the argument-of-knowledge property says a prover who convinces the verifier can be associated with an extractor that could recover such an assignment under the scheme’s assumptions.

If zero-knowledge is included, there is another guarantee: the verifier learns nothing beyond the truth of the statement, except whatever is intentionally exposed as public input.

How do SNARKs compress a large computation into a short algebraic check?

A useful way to think about a SNARK is this: the prover does not directly prove “I ran the whole program.” Instead, the prover proves that there exists a structured object (usually called a witness) that makes a large collection of local constraints all hold at once.

Why local constraints? Because computation can be broken into tiny pieces: each gate in a circuit, each arithmetic relation among wires, each step in a trace. If every local rule is satisfied and all the pieces fit together consistently, then the whole computation was valid.

But checking every constraint individually would still be too expensive for the verifier. So SNARKs use algebra to batch many checks together. The prover encodes the witness and the constraints into polynomials or related algebraic objects. Then, instead of checking thousands or millions of conditions directly, the verifier checks a small number of statements about these encodings. The algebra is arranged so that if even one important constraint fails, the combined check fails except with negligible probability.

This is the compression point: SNARKs do not compress arbitrary evidence directly; they compress the verification of structured algebraic consistency.

That is why so much of SNARK engineering revolves around “arithmetization”; converting a program into algebraic constraints that are easy to batch.

How are programs converted to circuits and R1CS for SNARK proving?

Real programs are messy. They have branches, memory access, loops, bit operations, and complex data structures. SNARKs prefer something cleaner: equations over a finite field. So the first mechanical step in many SNARK systems is to compile a computation into a constraint system.

A common form is R1CS, or rank-1 constraint systems. In R1CS, computation is represented as many equations of the form “a linear combination times another linear combination equals a third linear combination.” Libsnark describes R1CS as an NP-complete language, and many toolchains target it because arithmetic and Boolean circuits can be reduced to it.

Here is the mechanism in ordinary language. A program is broken into intermediate values called wires. Every operation in the program becomes a small algebraic rule relating some input wires and output wires. Addition is easy. Multiplication fits naturally. More awkward operations, like bit decomposition or inequality tests, can still be expressed, but often with many extra constraints. That is why SNARK-friendly cryptographic primitives such as Poseidon matter in practice: they reduce how many constraints the circuit needs.

A simple example helps. Suppose the public statement is “I know private numbers a and b such that a * b = 35 and a + b = 12.” The witness is the hidden pair, say a = 5 and b = 7. A constraint system would create wires for a, b, the product, and the sum, then enforce local equations saying the product wire equals a * b, the sum wire equals a + b, the product equals 35, and the sum equals 12. A SNARK does not reveal a or b; it proves there exists an assignment to those hidden wires satisfying all constraints.

That toy example is trivial. A real zk-rollup circuit might include thousands or millions of constraints saying that digital signatures verified, balances updated correctly, state transitions matched the previous root, and the final commitment was computed properly. The structure is the same. Only the scale changes.

In practice, developers usually do not write raw R1CS by hand. Toolchains compile higher-level descriptions into constraints. Circom, for example, compiles circuits into constraints and emits artifacts used by proving tools like snarkjs. Arkworks provides libraries ranging from finite fields and elliptic curves up to R1CS-building utilities and packaged SNARK implementations such as Groth16 and Marlin.

Why do SNARK constructions use polynomials and QAP divisibility checks?

Once a computation has been reduced to constraints, many influential SNARK systems go one step further: they encode those constraints as polynomial identities.

The idea sounds abstract, but the reason is practical. Polynomials are an algebraic format in which many consistency conditions can be combined, committed to, evaluated at hidden points, and checked with very little communication.

A major example is the Quadratic Arithmetic Program, or QAP, used in systems like Pinocchio and Groth16. A QAP associates the circuit with families of polynomials and a target polynomial t(x). A witness satisfies the circuit if and only if a certain polynomial expression p(x) is divisible by t(x). That means all gate constraints vanish at the required points.

Here is the mechanism behind that statement. Each gate contributes local conditions. The construction chooses evaluation points so that satisfying the gate at its point corresponds to making the combined polynomial vanish there. If the witness is correct at every gate, then p(x) has all the roots encoded by t(x), and therefore t(x) divides p(x). If some gate is wrong, this divisibility breaks.

This is powerful because a divisibility relation can be checked succinctly once the relevant polynomials are committed to in the right way. Pinocchio showed how this could support nearly practical public verifiable computation, with key generation and proof generation linear in computation size, verification linear in I/O size, and constant-size proofs. It also showed that adding zero-knowledge could be done with negligible overhead relative to the base protocol.

The analogy here is a checksum, but only partially. A checksum summarizes a large object into something short that helps detect errors. A polynomial identity similarly summarizes whether many constraints fit together. The analogy helps explain compression. It fails because a SNARK is not just a heuristic error detector; it is a cryptographic proof system with a carefully designed adversarial soundness argument.

How do polynomial commitments let verifiers check hidden proofs without seeing full polynomials?

| Scheme | Proof size | Setup needed | Hiding | Best for |

|---|---|---|---|---|

| KZG | constant, very small | SRS required | computational hiding | polynomial commitments |

| Pedersen‑style | constant to small | SRS required | unconditional hiding possible | privacy‑sensitive proofs |

| Merkle commitments | logarithmic openings | no algebraic setup | no hiding (public) | authenticated data / large sets |

At this point, the verifier wants confidence about polynomials or related encodings without seeing them in full. That is where commitments enter.

A commitment is like locking a value in a box: you bind yourself to it now, and later you can open or prove facts about it. For SNARKs, the important version is often a polynomial commitment. KZG-style commitments, introduced in early work on constant-size commitments to polynomials, let a prover commit to a polynomial with a short group element and later prove claimed evaluations with short witnesses.

This primitive matters because many SNARK verifiers do not inspect an entire polynomial. They ask for evidence that the committed polynomial evaluates to certain values, or that several committed polynomials satisfy a relation at a challenge point. If the commitment scheme is binding, the prover cannot adapt the polynomial after seeing the challenge. If openings are succinct, the verifier’s workload stays small.

There is a cost. KZG commitments require structured reference string parameters of the form (g, g^α, g^(α^2), ...), generated by a trusted or distributed authority. That setup model is one reason some SNARK systems need ceremonies. The reward is strong succinctness: constant-size commitments and very short openings.

This trade shows up repeatedly in SNARK design. Smaller proofs and faster verification often come from stronger algebraic setup assumptions.

What is a SNARK trusted setup and why do parameter ceremonies matter?

| Setup type | Reusability | Trust model | Operational burden | Typical examples |

|---|---|---|---|---|

| Circuit‑specific CRS | single‑circuit only | high trust in generator | high; per‑circuit ceremonies | Groth16 |

| Universal/updatable SRS | many circuits reuse | distributed if updated | moderate; one‑time then updates | PLONK, Marlin |

| Transparent / no setup | reusable without CRS | minimal trust required | low operational burden | STARKs, transparent schemes |

Many widely deployed SNARKs are preprocessing SNARKs. Before anyone can prove statements about a fixed circuit, a generator algorithm must produce a proving key and a verification key. Libsnark describes this explicitly for ppzkSNARKs: you first choose the circuit or system, then run a setup algorithm, then generate and verify proofs.

Why is setup needed? In these constructions, the public parameters contain structured algebraic material that makes succinct verification possible. But whoever knows the secret randomness used to create those parameters (often called toxic waste) may be able to forge proofs.

That sounds alarming, and it is a real tradeoff. The practical response has been multi-party computation ceremonies. Instead of trusting one party, many participants jointly generate parameters so that security holds as long as at least one participant behaves honestly and destroys their secret randomness. The Zcash Foundation’s Powers of Tau ceremony published final parameters, the transcript, verification tools, and attestations, and emphasized this key assumption: correctness of proofs using the parameters requires at least one participant to have destroyed their sampled randomness. It also noted an important nuance: the protocol preserved zero-knowledge even if all participants were compromised.

There are two different axes here that people often conflate. One is circuit specificity. A scheme like Groth16 traditionally uses circuit-specific parameters. Another is setup trust. A universal or updatable setup can reduce operational friction and distribute trust, but it does not automatically mean “no setup at all.” PLONK is a useful example: it uses a universal structured reference string that can support many circuits, and the setup can be updatable, which reduces the burden compared with per-circuit ceremonies.

So when someone says a SNARK has a trusted setup, the precise question is: what exactly is trusted, how reusable are the parameters, and what breaks if the setup trapdoor survives?

Why is Groth16 popular; what you gain and what you trade off with tiny proofs

If you want to understand why pairing-based SNARKs became so popular in blockchains, Groth16 is the clearest example. It gives a preprocessing zero-knowledge SNARK for arithmetic-circuit satisfiability with a proof of just 3 group elements, and verification is a single pairing-product equation using 3 pairings.

That matters because on-chain verification is expensive. A scheme with tiny proofs and a tiny verifier fits much better into smart contracts and recursive proof systems than a scheme with larger proof objects. Groth’s paper also proved a lower bound in the generic asymmetric bilinear-group model ruling out single-group-element pairing-based SNARGs of the relevant form, which is a way of saying the design is close to the limit in that model.

The price is that the security story is not as simple as “standard assumptions, end of story.” Groth16’s most efficient instantiation takes what the paper itself calls an aggressive stance, relying on a proof in the generic bilinear group model, with additional hedging in the symmetric setting. More broadly, pairing-based SNARKs often rely on specialized algebraic assumptions. That does not make them unusable (they are widely used) but it is part of the cost of the efficiency.

This is why Zcash, many Ethereum applications, and numerous rollup systems have accepted setup and pairing assumptions: the efficiency is good enough to dominate the operational tradeoff.

How does PLONK's universal setup reduce ceremony friction compared with circuit‑specific SNARKs?

As SNARK deployment broadened, one friction point became obvious: circuit-specific setup is awkward. If every new circuit needs its own ceremony, development slows and trust management becomes a recurring burden.

PLONK addresses this by using a universal SNARK setup and a different arithmetization style based on evaluations over a subgroup in Lagrange basis, together with a permutation argument. The technical details are deeper than we need here, but the essential shift is easy to state: instead of tailoring setup parameters to one specific computation, you perform a setup that can support a large class of circuits up to some bound, and then specialize without a fresh toxic-waste event for each program.

That changes system design significantly. A proving ecosystem can standardize around one reusable setup. Developers can iterate on circuits more easily. The verifier remains succinct. According to the PLONK preprint, prover running time is significantly reduced relative to prior universal-SRS work like Sonic, though exact gains depend on circuit structure.

This does not eliminate all tradeoffs. Universal setup is still setup. The proof-size and verifier-cost profile differ from Groth16. And implementation details matter a great deal. But it shows how SNARK design evolved: not just toward smaller proofs, but toward better operational ergonomics.

What are common real‑world SNARK use cases in privacy, rollups, and verifiable computation?

The most important uses of SNARKs all come from the same mechanism: a lot of private or off-chain work can be compressed into a proof that is cheap for others to check.

In privacy-preserving payments, a user proves a transaction is valid without revealing critical details. Zcash’s shielded pool is a canonical example: the system uses Groth16 proofs to show that spends are authorized and balances are conserved while hiding sender, receiver, and amount.

In zk-rollups, an off-chain executor processes many transactions, computes a new state root, and posts a proof to the base chain. The chain does not need to replay every step. It verifies one succinct proof and accepts the batch if the proof passes. This is why SNARK-friendly hashing, circuit design, and proof verification costs matter so much in blockchain scaling.

In verifiable computation more broadly, SNARKs let a client outsource computation and check the result quickly. Pinocchio was framed in exactly this way, with public verifiable computation and a C-to-circuit toolchain. That same idea continues in modern proving stacks, even if the tooling and proving systems have changed.

The pattern is the same across architectures. Whether the proof is checked by a privacy protocol, a smart contract, a bridge, or a remote verifier, the system is buying a new asymmetry: expensive proving, cheap checking.

When do SNARKs become impractical, expensive, or risky?

The first thing that surprises newcomers is that SNARKs often make the prover much slower, not faster. Verifying becomes cheap because the prover has done substantial algebraic work: compiling the program, generating a witness, committing to polynomial objects, running multi-scalar multiplications, FFT-like routines, and more. In some systems the proving cost is only linear in circuit size asymptotically, but the constants are still large.

The second surprise is that not every computation maps cleanly into cheap constraints. Arithmetic over finite fields is natural. Bitwise logic, comparisons, and general-purpose program structure can be awkward. This is why specialized circuit languages, gadgets, and SNARK-friendly primitives emerged. It is also why a hash function designed for ordinary CPUs may be a poor choice inside a SNARK circuit.

The third limitation is setup and assumptions. Some of the most efficient SNARKs need structured reference strings whose generation must be trusted in a qualified sense. Even when ceremonies are carefully designed, they create operational burden and residual trust assumptions. Universal and updatable setups reduce the burden but do not make it vanish.

The fourth limitation is security in implementation. Formal protocol proofs are not the whole story. A recent systematization-of-knowledge paper cataloged 141 actual vulnerabilities in SNARK implementations and argued that treating SNARKs as “just math” is dangerous. Side channels, unsafe randomness, serialization bugs, witness-handling errors, and verifier integration mistakes all matter. Libsnark itself explicitly warns that its code is research-quality, not production-ready, and notes timing and cache side channels in some components.

So the right mental model is not “SNARKs prove everything cheaply.” It is “SNARKs make a narrow but very powerful bargain, and the bargain only works if the algebra, assumptions, setup, and implementation all line up.”

SNARKs vs STARKs and other proofs; how do they differ and when to choose each?

| System | Trusted setup | Proof size | Post‑quantum | Verifier cost | Best for |

|---|---|---|---|---|---|

| Pairing SNARKs | circuit‑specific CRS | very small (3 elems) | not post‑quantum | very cheap | on‑chain and recursive proofs |

| Universal SNARKs | reusable updatable SRS | small to moderate | not post‑quantum | cheap | many circuits, iterative dev |

| STARKs | no trusted setup | larger proofs | post‑quantum | moderate | transparent, setup‑free systems |

It is hard to understand SNARKs in isolation because many design choices become visible only by contrast.

The most important comparison is with STARKs. STARKs are also succinct proof systems for computation, but their design aims for transparency: no trusted setup and no trapdoor parameters. The original ZK-STARK work emphasized this explicitly and also positioned STARKs as post-quantum secure. In exchange, STARK proofs are usually larger than pairing-based SNARK proofs, and verifier and prover tradeoffs differ.

That is why the SNARK-versus-STARK choice is not a matter of one being strictly better. If your system cares intensely about tiny proofs and very cheap on-chain verification, pairing-based SNARKs remain attractive. If you care more about avoiding trusted setup and about post-quantum positioning, STARK-style constructions become appealing.

Even within SNARKs, there is no single template. Groth16 optimizes hard for proof size and verification cost, but uses a circuit-specific setup. PLONK moves toward universal reusable setup. Marlin, implemented in ecosystems like arkworks, offers another universal-setup option for R1CS. The internal mechanisms differ, but they are all trying to solve the same underlying problem: preserving succinct verification while changing which costs and assumptions are acceptable.

Conclusion

A SNARK is a way to prove that a hidden witness satisfies a large structured computation, using a proof that stays small and fast to verify. Its core trick is not mysterious once you see it clearly: turn computation into algebraic constraints, combine those constraints into a compact consistency claim, and use cryptography to let a verifier check that claim without seeing all the underlying data.

That is why SNARKs exist. They create an asymmetry that ordinary verification cannot: heavy proving, light checking.

Everything else is in service of that one idea.

- circuits

- QAPs

- commitments

- setup ceremonies

- universal SRS designs

- implementation tradeoffs

What should I understand about SNARKs before using SNARK‑based services?

Understand the core SNARK tradeoffs before you deposit, trade, or evaluate projects on Cube Exchange. Know whether a project relies on trusted setup, which proof family it uses, and what that implies for on‑chain verification cost and operational risk. Below are concrete checks and a short Cube workflow to reduce surprises when interacting with SNARK‑backed systems.

- Check the proof family and setup model used by the project (Groth16, PLONK, STARK, etc.) and whether parameters are circuit‑specific or universal/updatable.

- Find the published proof size (bytes) or the verifier gas cost on the target chain; compare that to expected on‑chain verification budgets for transactions you care about.

- Verify ceremony transcripts, SRS/Powers‑of‑Tau artifacts, and audit reports; search the project repo or docs for "transcript", "SRS", "ceremony", or the auditor's report and confirm dates.

- Fund your Cube account with a small deposit, then trade or deposit a small test amount for the asset; watch the contract or explorer events to confirm that proofs verify on‑chain and that state roots or receipts match published claims.

Frequently Asked Questions

Some very efficient SNARK constructions require a structured reference string (SRS) whose creator knows secret randomness (the “toxic waste”); if that secret survives, an attacker can forge proofs, so parameters are generated by multi‑party ceremonies to distribute trust and require at least one honest participant to destroy its secret. Ceremonies reduce but do not eliminate setup trust, and universal/updatable SRS designs try to make the operational burden smaller.

STARKs aim for transparency (no trusted setup) and post‑quantum security at the cost of larger proofs and different prover/verifier tradeoffs, whereas many SNARKs (especially pairing‑based ones) achieve much smaller proofs and extremely cheap verification but usually rely on a trusted setup or stronger algebraic assumptions; the choice depends on whether you prioritize tiny on‑chain costs or avoiding setup and quantum‑vulnerable assumptions.

Verification is succinct because the verifier checks a small algebraic consistency claim rather than replaying the computation, but creating that short proof requires substantial algebraic work by the prover (polynomial encoding, multiexponentiations, FFTs, witness generation), so provers are often much slower and use more resources even when verification is fast.

No - “SNARK” by itself does not imply zero‑knowledge; zero‑knowledge is an additional property that must be built into the construction (many deployed systems are zk‑SNARKs, but some SNARKs only provide succinctness and soundness without hiding the witness).

R1CS (rank‑1 constraint systems) expresses computation as many small algebraic equations of the form (linear)*(linear) = (linear), and toolchains commonly compile high‑level programs into R1CS because arithmetic and Boolean circuits can be reduced to it, making it a practical target for SNARK builders and optimizers.

Polynomial commitments (e.g., KZG) let a prover commit to a polynomial with a short group element and later open evaluations succinctly; KZG‑style schemes give very short commitments and openings but require SRS elements like g, g^α,... produced in a trusted or multi‑party setup, so the succinctness comes with stronger setup assumptions.

Most pairing‑based SNARKs rely on bilinear‑group assumptions (and security proofs sometimes in the generic bilinear‑group model), so they are not considered post‑quantum secure; if post‑quantum assurance is required, transparent families such as STARKs are the recommended alternative.

Real‑world SNARK implementations have a nontrivial attack surface: papers and audits have cataloged many implementation issues (timing and cache side channels, unsafe randomness, serialization bugs), and prominent libraries explicitly warn their code is research‑quality, so production use requires careful engineering, audits, and attention to side channels and randomness sources.

Yes - some modern SNARKs use a universal or updatable SRS so the same setup can support many circuits up to a size bound, which reduces the need for a fresh per‑circuit ceremony; the tradeoff is that universal setups still require trust in the SRS generation and may change prover cost or proof‑size profiles compared with circuit‑specific schemes like Groth16.

Not every computation maps efficiently to SNARK constraints: arithmetic over finite fields is natural, but bitwise logic, comparisons, and other non‑arithmetic operations can require many extra constraints, so SNARK performance depends heavily on circuit design and SNARK‑friendly primitives (special hash functions, gadgets) rather than being uniformly cheap for all programs.

Related reading