What is BFT Consensus?

Learn what BFT consensus is, why Byzantine faults require 3f+1 validators, how quorums create finality, and how PBFT, Tendermint, HotStuff, and more work.

Introduction

BFT consensus is a way for a distributed system to reach agreement even when some participants behave arbitrarily: not just by crashing, but by lying, sending conflicting messages, or trying to break the protocol on purpose. That is the real puzzle it solves. In an ordinary distributed system, failure often means silence. In a Byzantine system, failure can mean deception.

This matters because blockchains and replicated databases do not just need progress; they need a shared history that honest participants can rely on. If one part of the network thinks a payment happened and another part thinks it did not, the system is not merely slow; it is inconsistent. BFT consensus exists to prevent that kind of split-brain outcome under a strong adversarial model.

The core idea is simple to state and difficult to achieve: honest nodes must converge on the same ordered sequence of decisions, even if some minority of nodes tries to make them disagree. Everything else in BFT protocol design (quorum thresholds, rounds, leaders, view changes, signatures, timeouts, checkpoints, locks, and certificates) is machinery built to preserve that outcome.

In blockchain systems, BFT consensus is often what gives users fast finality. Once a block or transaction has enough votes from the right quorum, it is not merely “unlikely” to be reversed in the probabilistic way associated with longest-chain proof-of-work systems. Under the protocol’s assumptions, it is finalized. Cosmos chains built on CometBFT use this model. HotStuff-style systems do too. Hedera’s hashgraph pursues a stronger asynchronous variant. The details differ, but the underlying problem is the same.

What problem does BFT consensus solve?

To see why BFT consensus is special, start with the classic statement of the Byzantine Generals Problem. A commander sends an order to lieutenants. Two conditions must hold. First, all loyal lieutenants must follow the same order. Second, if the commander is loyal, loyal lieutenants must follow the order the commander actually sent. Lamport, Shostak, and Pease formalized these as interactive consistency conditions, usually called IC1 and IC2.

Those conditions sound modest, but they encode the whole challenge. A faulty participant may tell different peers different stories. It may pretend a message never arrived. It may relay altered content. It may try to create two honest majorities that each believe they are acting consistently. So the problem is not “how do nodes vote?” The deeper problem is how do honest nodes know that enough other honest nodes saw the same thing they saw?

That is the compression point for understanding BFT consensus: agreement is not created by a node deciding locally. It is created by overlapping knowledge. A node can finalize a value only when the set of nodes supporting that value is large enough that any competing finalized value would have had to overlap with it in at least one honest participant. That overlap makes contradiction impossible, because an honest node will not honestly certify two conflicting outcomes.

This is why quorum intersection matters so much. If two quorums could be completely disjoint, the system could finalize two different histories at once. BFT protocols are designed so that the quorums needed to finalize decisions necessarily overlap in honest participants, as long as the number of Byzantine participants stays below the fault threshold.

Why do classic BFT protocols require n ≥ 3f + 1 (the one‑third rule)?

| Model | Required n | Quorum size | Overlap guarantee | Consequence |
|---|---|---|---|---|
| Oral messages | n ≥ 3f + 1 | 2f + 1 | ≥ f + 1 nodes, so ≥ 1 honest | one‑third fault bound |
| Signed messages | no strict 3f+1 requirement | policy‑dependent signed quorum | signed votes are attributable | can tolerate more faulty nodes |
| Stake‑weighted | voting‑power model | > 2/3 voting power | voting‑power intersection | <1/3 Byzantine preserves safety |

The best-known fact about classic BFT consensus is the 3f + 1 bound. Here f means the maximum number of Byzantine nodes the system wants to tolerate, and n means the total number of nodes. In the classic oral-message model (ordinary messages without unforgeable signatures), Lamport, Shostak, and Pease proved that consensus is solvable if and only if n ≥ 3f + 1; equivalently, fewer than one-third of participants may be Byzantine.

Why does one-third appear? Because the protocol needs enough honest overlap to defeat conflicting stories. Suppose the system has only 3f nodes. Then after excluding f faulty nodes, only 2f honest nodes remain. A faulty coalition can try to split those honest nodes into two groups of size f, feeding each group a different account of reality. There is no longer enough honest overlap to force convergence. With 3f + 1 nodes, by contrast, there are at least 2f + 1 honest nodes, and quorums of size 2f + 1 must intersect in at least f + 1 nodes. Since at most f are faulty, that intersection contains at least one honest node. That single honest overlap is enough to block two conflicting certificates from both being valid.

This arithmetic is the heart of many practical protocols. A common pattern is:

- total validators: n = 3f + 1

- Byzantine tolerance: up to f

- quorum to justify progress or finality: at least 2f + 1

The point is not the formula by itself. The point is the invariant it enforces: any two decision-making quorums overlap in honest voting power.
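The arithmetic above can be checked directly. The following is a minimal sketch, not taken from any particular protocol implementation: the function names are hypothetical, and the brute-force check is only practical for small f. It computes n, the quorum size, and the pigeonhole overlap, then confirms the overlap by enumerating every pair of quorums.

```python
from itertools import combinations

def classic_bft_sizes(f: int) -> tuple[int, int, int]:
    """Return (n, quorum, min_overlap) for the classic n = 3f + 1 setting."""
    n = 3 * f + 1
    quorum = 2 * f + 1
    # Pigeonhole: two quorums drawn from n nodes share at least 2*quorum - n nodes.
    min_overlap = 2 * quorum - n  # equals f + 1
    return n, quorum, min_overlap

def smallest_overlap_by_enumeration(n: int, quorum: int) -> int:
    """Brute force: the smallest intersection over all pairs of quorums."""
    quorums = [set(q) for q in combinations(range(n), quorum)]
    return min(len(a & b) for a in quorums for b in quorums)

for f in (1, 2):
    n, quorum, overlap = classic_bft_sizes(f)
    assert smallest_overlap_by_enumeration(n, quorum) == overlap
    # overlap = f + 1 > f, so at least one node in the overlap is honest.
    print(f"f={f}: n={n}, quorum={quorum}, overlap>={overlap}")
```

Because the overlap is f + 1 and at most f nodes are Byzantine, every pair of quorums shares at least one honest node, which is the invariant the formula exists to enforce.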

In stake-weighted systems, the same logic is expressed in voting power rather than node count. Tendermint, for example, frames safety in terms of Byzantine voting power: if less than one-third of the voting power is Byzantine, two conflicting commits cannot both gather the required two-thirds majority unless validators holding at least one-third of the voting power equivocate by signing both. That is the weighted version of the same intersection argument.
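A weighted quorum check might look like the sketch below. The validator names and voting powers are made up for illustration, and the function is a hypothetical helper rather than any engine's API. Integer arithmetic avoids rounding surprises when comparing against two-thirds.

```python
def has_two_thirds(voting_power: dict[str, int], voters: set[str]) -> bool:
    """True if `voters` hold strictly more than 2/3 of total voting power.

    Assumes every name in `voters` is a key of `voting_power`.
    """
    total = sum(voting_power.values())
    voted = sum(voting_power[v] for v in voters)
    # Compare 3 * voted > 2 * total instead of voted / total > 2/3.
    return 3 * voted > 2 * total

# Hypothetical validator set with unequal stake.
power = {"A": 40, "B": 30, "C": 20, "D": 10}

print(has_two_thirds(power, {"A", "B"}))       # 70 of 100
print(has_two_thirds(power, {"B", "C", "D"}))  # 60 of 100
```

Note that two validators out of four can form a quorum here while three others cannot: what counts is power, not headcount.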

How does BFT consensus provide finality in a blockchain?

Mechanically, most blockchain BFT protocols are instances of state machine replication. That phrase sounds abstract, but the idea is concrete. If every honest replica starts from the same state and executes the same ordered inputs deterministically, then they all end in the same next state. So consensus does not need to solve every computation problem. It only needs to solve one thing reliably: what is the next input, and in what order?

CometBFT describes itself exactly this way: it performs Byzantine Fault Tolerant State Machine Replication for arbitrary deterministic, finite state machines. The application logic lives above the consensus engine. The consensus engine’s job is to make every honest replica feed the same ordered requests into that logic.

A blockchain is just a state machine with a particular data structure wrapped around it. Transactions are the inputs. Blocks are batches of ordered inputs. Finality means the network has enough evidence that honest replicas will not later execute a different block at that same position.
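To make the state-machine view concrete, here is a toy sketch. The ledger, the transfer rule, and all names are invented for illustration and do not correspond to any real chain's application logic. Replicas that replay the same ordered inputs end in the same state, while a different order can produce a different state.

```python
def apply_tx(state: dict, tx: tuple) -> dict:
    """Deterministic transition: move `amount` if the sender can afford it."""
    sender, receiver, amount = tx
    new_state = dict(state)
    if new_state.get(sender, 0) >= amount:
        new_state[sender] = new_state.get(sender, 0) - amount
        new_state[receiver] = new_state.get(receiver, 0) + amount
    return new_state  # unaffordable transfers leave the state unchanged

def replay(genesis: dict, ordered_txs: list) -> dict:
    state = genesis
    for tx in ordered_txs:
        state = apply_tx(state, tx)
    return state

genesis = {"alice": 10, "bob": 0}
agreed_order = [("alice", "bob", 7), ("bob", "carol", 5), ("alice", "carol", 5)]
other_order = [("alice", "carol", 5), ("alice", "bob", 7), ("bob", "carol", 5)]

replica_1 = replay(genesis, agreed_order)
replica_2 = replay(genesis, agreed_order)
print(replica_1 == replica_2)                     # same order, same state
print(replica_1 == replay(genesis, other_order))  # different order diverges
```

This is why consensus only has to fix the order of inputs: determinism does the rest.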

This is why BFT consensus fits naturally in systems that want quick confirmation and deterministic execution. The protocol is not trying to discover a longest chain by accumulated work. It is trying to explicitly certify a single history with authenticated votes.

How does a leader‑based BFT round (PBFT/Tendermint style) reach agreement?

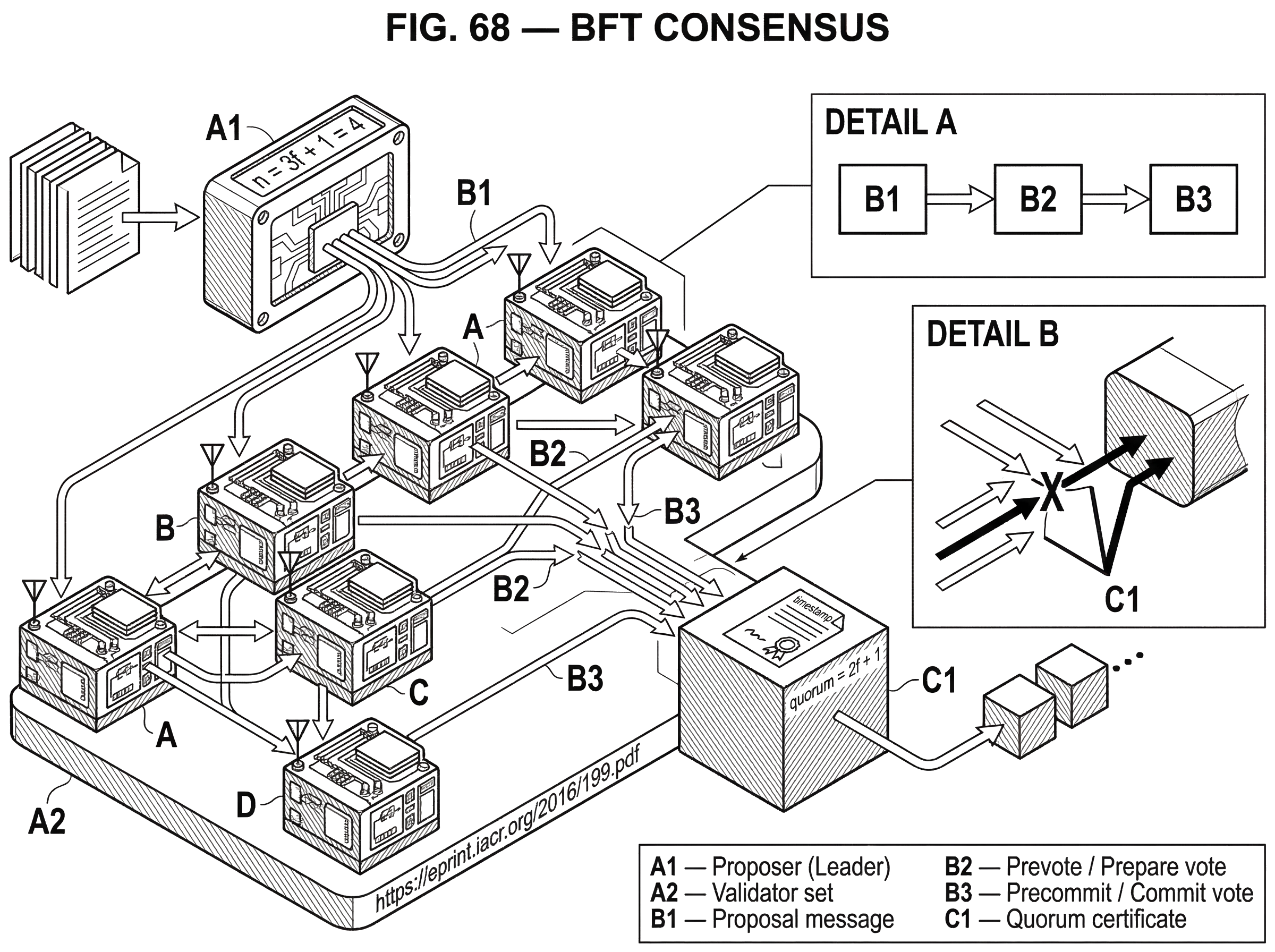

Consider a network with four validators: A, B, C, and D. The system is designed to tolerate f = 1 Byzantine validator, so it needs n = 3f + 1 = 4 validators total. A quorum is 2f + 1 = 3 votes.

Suppose A is the current leader. A client sends a transaction batch to the network, and A proposes a block containing that batch. In a PBFT-style or Tendermint-style protocol, the other validators do not immediately treat the proposal as final. They first ask a more careful question: do enough of us see the same proposal in the same round or view?

So B, C, and D verify the proposal and broadcast votes for it. If B sees the leader’s proposal and receives matching support from enough peers, it reaches a state analogous to being prepared in PBFT: it now knows the proposal is not just its own local impression, but something a quorum has acknowledged. That matters because a quorum-sized acknowledgment means any later conflicting certified value would need to overlap this one.

Now the protocol moves to a second confirmation stage, in which validators broadcast another round of votes. PBFT calls the phases pre-prepare, prepare, and commit; Tendermint calls the relevant votes prevote and precommit around a proposal. The names differ, but the mechanism is similar: the second phase converts “a quorum saw this proposal” into “a quorum knows that a quorum saw this proposal.” That extra layer is what makes final commitment safe even if leadership changes immediately after.

If A is honest, the process is straightforward. Three validators sign the same candidate block, a commit certificate forms, and the block is finalized. If A is Byzantine and sends one block to B and C but a different block to D, the quorum rules stop the deception from becoming final. No conflicting block can collect three valid votes from honest participants without exposing equivocation or failing to gather enough overlap.
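The four-validator scenario can be simulated with a small vote tally. This is a simplified single-phase model under stated assumptions: honest validators vote once for the block they received, and the Byzantine leader A is given the worst-case power of voting for every block in play. All names are hypothetical.

```python
from collections import defaultdict
from itertools import product

QUORUM = 3  # 2f + 1 with f = 1 and n = 4

def certified_blocks(proposals_seen: dict[str, str], byzantine: set[str]) -> set[str]:
    """Blocks that reach QUORUM votes in one round."""
    votes = defaultdict(set)
    for validator, block in proposals_seen.items():
        if validator not in byzantine:
            votes[block].add(validator)  # honest: exactly one vote per round
    for block in list(votes):
        votes[block] |= byzantine        # worst case: Byzantine nodes sign everything
    return {block for block, voters in votes.items() if len(voters) >= QUORUM}

# A sends block X to B and C, but block Y to D.
print(certified_blocks({"B": "X", "C": "X", "D": "Y"}, {"A"}))

# Try every way A can split X and Y among B, C, D.
for blocks in product("XY", repeat=3):
    seen = dict(zip("BCD", blocks))
    assert len(certified_blocks(seen, {"A"})) <= 1
```

One block may still be certified with A's help, but under this model two conflicting blocks never both reach three votes, which is the property the quorum rule protects.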

This explains why BFT protocols often look message-heavy compared with simpler crash-fault protocols. The extra communication is not ceremony. It is how nodes turn local observations into shared knowledge strong enough to survive deception.

What is PBFT and why did it make BFT practical?

The classic breakthrough from theory to systems was PBFT, or Practical Byzantine Fault Tolerance. Castro and Liskov showed that BFT replication could be practical in asynchronous environments like the Internet, with safety preserved despite arbitrary faults and liveness achieved under a weak synchrony assumption.

PBFT centers on a primary, or leader, in each numbered view. The primary proposes an order for client requests. Replicas then move through three phases: pre-prepare, prepare, and commit. The first phase ties a request to a sequence number in a specific view. The second phase makes replicas confirm they saw the same assignment. The third phase ensures enough replicas know that enough replicas confirmed it, so execution is safe even across failures and leader replacement.
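The two conditions behind the prepare and commit phases can be sketched as predicates over a replica's message log. This follows the thresholds in the PBFT paper (a matching pre-prepare plus 2f matching prepares, then 2f + 1 matching commits), but the class and field names are hypothetical and the sketch omits views, sequence-number windows, authentication, and checkpoints.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class SlotLog:
    """Messages one replica has logged for a single (view, sequence) slot."""
    f: int
    pre_prepare_digest: Optional[str] = None
    prepares: dict = field(default_factory=dict)  # sender id -> request digest
    commits: dict = field(default_factory=dict)   # sender id -> request digest

    def _matching(self, msgs: dict) -> int:
        # Keyed by sender, so each replica is counted at most once.
        return sum(1 for d in msgs.values() if d == self.pre_prepare_digest)

    def prepared(self) -> bool:
        """Pre-prepare logged, plus 2f prepares from other replicas that match it."""
        return (self.pre_prepare_digest is not None
                and self._matching(self.prepares) >= 2 * self.f)

    def committed_local(self) -> bool:
        """Prepared, plus 2f + 1 matching commits (possibly including our own)."""
        return self.prepared() and self._matching(self.commits) >= 2 * self.f + 1

log = SlotLog(f=1, pre_prepare_digest="d1")
log.prepares.update({"r1": "d1", "r2": "d1"})
print(log.prepared(), log.committed_local())
log.commits.update({"r0": "d1", "r1": "d1", "r2": "d1"})
print(log.prepared(), log.committed_local())
```

Messages whose digest does not match the pre-prepare are simply not counted, so a replica that saw a different assignment cannot contribute to either threshold.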

The subtlety is that PBFT is not just a voting gadget. It is a protocol for preserving an ordering decision across time. If the leader stalls or misbehaves, replicas perform a view change and move to a new primary. Safety depends on carrying forward enough evidence from the old view that the new leader cannot safely overwrite a decision that was already sufficiently justified. That is why PBFT logs messages, uses checkpoints, and treats view change as a first-class part of consensus rather than an operational afterthought.

PBFT also showed an important engineering lesson: cryptography choices shape performance. In the normal case, PBFT used message authentication codes for routine messages and reserved digital signatures for view-change-related messages that occur less often. That separation was part of what made the protocol practical instead of merely correct on paper.

How do modern BFT protocols (Tendermint, HotStuff, etc.) differ from PBFT?

Many later protocols keep PBFT’s core invariants while changing the machinery around them. Tendermint, used in the Cosmos ecosystem and implemented in CometBFT, is leader-based and round-based. Each round has a proposal, then validators broadcast prevote and precommit messages. A block is committed when more than two-thirds of validator voting power signs commit votes for it. If fewer than one-third of voting power is Byzantine, no two honest validators can commit different blocks.

Tendermint adds a useful intuition through its locking mechanism. When a validator observes more than two-thirds prevotes for a block, it locks on that block and will not casually move to another. That lock is how the protocol preserves safety across rounds. Unlocking requires evidence strong enough to show that progress can continue without violating the prior safety condition. Here the important invariant is not “who voted most recently,” but “what evidence exists that a quorum has already converged around.”
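A validator's prevote decision under locking can be sketched as follows. This is a simplified illustration loosely modeled on the locking rule in the Tendermint algorithm description, not CometBFT code: the function and parameter names are hypothetical, proposal validity checks are omitted, and `polka_round` stands for a round in which the validator has verified more than two-thirds prevotes for the proposed block.

```python
from typing import Optional

def choose_prevote(proposal: str,
                   locked_block: Optional[str],
                   locked_round: int,
                   polka_round: int = -1) -> Optional[str]:
    """Return the block to prevote for, or None for a nil prevote.

    locked_round == -1 means the validator holds no lock.
    polka_round == -1 means no quorum of prevotes for `proposal` is known.
    """
    if locked_block is None or locked_block == proposal:
        return proposal  # no lock, or the proposal is the locked block
    if polka_round >= locked_round:
        return proposal  # quorum evidence at least as recent as the lock
    return None          # stay loyal to the lock: prevote nil

print(choose_prevote("B2", locked_block="B1", locked_round=0))                 # None
print(choose_prevote("B2", locked_block="B1", locked_round=0, polka_round=1))  # B2
```

The second call shows the unlock path: the validator moves off its lock only when shown quorum evidence from a round no older than the one in which it locked.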

HotStuff takes another step. It is still leader-based and still lives in the partially synchronous world, but it streamlines the protocol so communication complexity is linear in the number of replicas rather than quadratic in common cases. It also emphasizes responsiveness: once the network becomes synchronous enough, a correct leader can drive progress at the pace of actual network delay rather than waiting for conservative worst-case timers. That design matters in larger validator sets, where communication costs during leader changes become painful.
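A rough counting model shows why the linear pattern matters. The sketch below is an assumption-laden simplification: it counts point-to-point messages for a single voting phase only, comparing all-to-all broadcast with a pattern where replicas send votes to the leader and the leader sends back one aggregated certificate. The function names are hypothetical.

```python
def all_to_all_messages(n: int) -> int:
    """Each of n replicas sends its vote to the other n - 1 replicas."""
    return n * (n - 1)

def leader_relayed_messages(n: int) -> int:
    """n - 1 votes go to the leader, then the leader sends n - 1 certificates."""
    return 2 * (n - 1)

for n in (4, 31, 100):
    print(n, all_to_all_messages(n), leader_relayed_messages(n))
```

At four replicas the difference is 12 versus 6 messages; at one hundred it is 9900 versus 198, which is why the gap becomes decisive as validator sets grow.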

The family resemblance remains strong. A leader proposes. Validators vote. Quorum certificates summarize support. New leaders build on certified history rather than starting from scratch. The surface protocol changes, but the structure underneath is still about quorum intersection and preserving safe choices across adversarial conditions.

How do timing assumptions affect safety and liveness in BFT systems?

| Network model | Safety | Liveness | Typical protocols | Practical cost |
|---|---|---|---|---|
| Asynchronous | safety can hold (aBFT) | liveness guaranteed without timing | HoneyBadgerBFT, hashgraph | complex primitives, batching |
| Partially synchronous | safety guaranteed | eventual progress with timeouts | PBFT, Tendermint, HotStuff | timeouts and leader changes |
| Synchronous | safety guaranteed | progress with known bounds | theoretical constructions | requires strict timing bounds |

A common misunderstanding is that BFT consensus either “works” or “does not work.” The more accurate view is that BFT protocols usually separate safety from liveness.

Safety means honest nodes do not finalize conflicting values. Liveness means the system eventually makes progress. Many BFT protocols guarantee safety under quite weak conditions, but need stronger timing assumptions for liveness. PBFT’s safety does not rely on synchrony, but liveness requires a weak synchrony assumption: the adversary cannot delay correct nodes and messages forever. Tendermint similarly assumes partial synchrony and reasonably accurate local clocks for liveness. Round durations increase over time so the protocol eventually outruns actual network delay and can progress.
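The idea of round durations growing until they exceed real network delay can be sketched with a simple timer model. The linear growth rule, the parameter names, and the numeric values below are illustrative assumptions, not the configuration keys or defaults of any specific engine.

```python
def round_timeout(round_number: int, base: float = 3.0, delta: float = 0.5) -> float:
    """Timeout in seconds for a given round, growing linearly with the round."""
    return base + round_number * delta

def first_viable_round(actual_delay: float, base: float = 3.0, delta: float = 0.5) -> int:
    """First round whose timeout is at least the actual network delay."""
    if delta <= 0:
        raise ValueError("delta must be positive or timeouts never catch up")
    r = 0
    while round_timeout(r, base, delta) < actual_delay:
        r += 1
    return r

print(first_viable_round(1.0))  # already covered by the base timeout
print(first_viable_round(4.2))  # needs a few failed rounds first
```

As long as the real delay is eventually bounded, some round's timeout exceeds it, which is the liveness argument in miniature. Safety never depended on the timer being right.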

This split is not an accidental inconvenience. It reflects a deep impossibility result in distributed computing, the FLP result: in a fully asynchronous system, no deterministic consensus protocol can guarantee termination if even one participant may fail, even by simply crashing. So practical BFT systems usually pick one of two paths. They assume eventual synchrony and engineer around timeouts, or they use asynchronous techniques with more elaborate mechanisms.

HoneyBadgerBFT is a clear example of the second path. It is designed as a practical asynchronous BFT protocol, guaranteeing liveness without timing assumptions. Instead of relying on a leader to keep the network moving, it composes asynchronous primitives for atomic broadcast. The cost is complexity and, in practice, a dependence on batching and threshold cryptographic setup to achieve efficiency. The benefit is robustness in highly unpredictable or adversarial wide-area networks where partially synchronous protocols can be slowed or halted by carefully chosen message delays.

So when someone says “this chain uses BFT,” the next question should be: under what network model, and what exactly is guaranteed under that model?

How do digital signatures and authentication change BFT protocol design?

The original Byzantine Generals paper distinguished between oral messages and signed messages. In the oral-message model, the 3f + 1 lower bound applies. With unforgeable signatures, the signed-message algorithm changes the landscape dramatically. Authentication limits what Byzantine nodes can fake, because a loyal participant’s signed statement cannot be forged or altered undetectably.

This does not magically remove all engineering costs, but it changes protocol design in a fundamental way. Modern blockchain BFT protocols rely heavily on authenticated voting. A quorum certificate is meaningful only because each vote is attributable and verifiable. Without that, “three votes for this block” would be much weaker evidence.
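What "attributable and verifiable" means for a quorum certificate can be sketched as below. Important assumption: the example uses HMAC from the Python standard library purely as a stand-in so that it runs without third-party packages. HMAC is symmetric, so the verifier here holds every validator's key, whereas real quorum certificates rely on public-key signatures. The keys, names, and function signatures are all hypothetical.

```python
import hashlib
import hmac

def sign(key: bytes, block_hash: bytes) -> bytes:
    """Stand-in for a digital signature (HMAC is NOT a real signature scheme)."""
    return hmac.new(key, block_hash, hashlib.sha256).digest()

def verify_certificate(block_hash: bytes, votes: list, keys: dict, quorum: int) -> bool:
    """votes is a list of (validator_id, tag) pairs claimed to support block_hash."""
    valid_signers = set()  # a set, so duplicate votes count once
    for validator, tag in votes:
        key = keys.get(validator)
        if key is not None and hmac.compare_digest(tag, sign(key, block_hash)):
            valid_signers.add(validator)
    return len(valid_signers) >= quorum

keys = {v: f"secret-{v}".encode() for v in "ABCD"}  # hypothetical validator keys
block = hashlib.sha256(b"block 42").digest()

good = [(v, sign(keys[v], block)) for v in "ABC"]
padded = [("A", sign(keys["A"], block))] * 2 + [("B", sign(keys["B"], block))]
print(verify_certificate(block, good, keys, quorum=3))    # three distinct signers
print(verify_certificate(block, padded, keys, quorum=3))  # duplicates do not help
```

Counting distinct, verified signers is the whole point: a certificate is evidence only because nobody can pad it with forged or repeated votes.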

The same underlying idea appears outside block production. In threshold signing and multi-party computation, cryptography can replace fragile trust in single machines with structured trust across participants. A concrete example is Cube Exchange’s decentralized settlement design, which uses a 2-of-3 threshold signature scheme: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. That is not a consensus protocol for ordering blocks, but it expresses the same BFT instinct: distribute authority so no single compromised actor can unilaterally create an unsafe outcome.

What different trust models and protocol families exist for BFT consensus?

It is tempting to treat BFT consensus as synonymous with “PBFT-like validator voting.” That is too narrow. What unifies BFT systems is the goal of agreement under Byzantine faults, not a single network architecture.

Some systems assume a globally known validator set with weighted voting, as in Tendermint and many HotStuff-derived proof-of-stake designs. Some pursue asynchronous, leaderless designs, as in HoneyBadgerBFT or Hedera’s hashgraph, which uses gossip-about-gossip and virtual voting rather than explicit leader-driven proposal rounds. Some alter the trust model more radically. The Stellar Consensus Protocol uses federated Byzantine agreement, where quorums arise from per-node trust choices called quorum slices instead of a single globally fixed validator set.

That changes the central invariant. In Stellar’s model, safety depends on quorum intersection induced by those slice choices. If the chosen slices do not imply intersection, no protocol trick can restore agreement. This is a good example of what is fundamental and what is conventional. The exact vote names, message patterns, and leader rules are conventions of particular protocols. The need for overlapping decision power is fundamental.
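Whether a set of slice choices implies intersection can be checked by brute force on small examples. The sketch below uses the federated definition of a quorum as a non-empty set in which every member has at least one slice contained in the set. It checks plain pairwise intersection only, ignores Byzantine nodes, and enumerates every subset, so it is an illustration for tiny networks rather than a practical tool. All names and slice configurations are hypothetical.

```python
from itertools import combinations

def is_quorum(candidate: frozenset, slices: dict) -> bool:
    """Every member must have at least one of its slices inside the candidate."""
    return bool(candidate) and all(
        any(s <= candidate for s in slices[node]) for node in candidate
    )

def all_quorums(slices: dict) -> list:
    nodes = sorted(slices)
    return [frozenset(c)
            for size in range(1, len(nodes) + 1)
            for c in combinations(nodes, size)
            if is_quorum(frozenset(c), slices)]

def has_quorum_intersection(slices: dict) -> bool:
    return all(a & b for a, b in combinations(all_quorums(slices), 2))

nodes = ["A", "B", "C", "D"]
# Each node trusts any 3-of-4 group that includes itself.
healthy = {n: [frozenset(c) for c in combinations(nodes, 3) if n in c] for n in nodes}
# Two cliques that only trust themselves.
split = {"A": [frozenset("AB")], "B": [frozenset("AB")],
         "C": [frozenset("CD")], "D": [frozenset("CD")]}

print(has_quorum_intersection(healthy))
print(has_quorum_intersection(split))
```

In the split configuration, {A, B} and {C, D} are both quorums and share no node, so each side could finalize its own history regardless of how the voting protocol is designed.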

What are the practical costs and trade‑offs of using BFT consensus?

| Cost | Why it arises | Impact | Mitigation |
|---|---|---|---|
| Replication overhead | need 3f+1 replicas | higher hardware or stake cost | committee or sharding designs |
| Communication overhead | quorum voting, many messages | bandwidth and latency growth | linear protocols and batching |
| Implementation fragility | paper ≠ production code | bugs, security incidents, halts | audits, fuzzing, tests |
| Operational discipline | fast finality demands correctness | operator errors can halt chain | runbooks, monitoring, automation |

BFT consensus buys stronger fault tolerance and faster finality by spending resources elsewhere.

The first cost is replication overhead. If you want to tolerate f Byzantine faults in the classic model, you need at least 3f + 1 replicas. That is more expensive than crash-fault-tolerant systems, which can often do more with fewer replicas because they assume faulty nodes stop rather than deceive.

The second cost is communication. The original Byzantine algorithms were famously expensive, and even modern practical designs pay significant coordination overhead. PBFT-style protocols often have quadratic communication patterns in the number of replicas, especially around all-to-all voting and view changes. HotStuff’s linear communication is important precisely because this overhead becomes a bottleneck as replica counts grow.

The third cost is implementation fragility. A correct whitepaper is not the same thing as a secure production system. Recent security analysis of BFT implementations emphasizes that real deployments suffer from subtle logic errors, concurrency bugs, cryptographic mistakes, and mismatches between the theoretical model and the code. The Cosmos Hub v17.1 chain halt is a vivid reminder from production. The halt occurred not because CometBFT’s core safety proof failed, but because software around validator-set updates hit an inconsistent state and panicked during EndBlock, halting the chain for hours. Consensus guarantees live inside an implementation stack, not in isolation.

The fourth cost is operational discipline. Validators must upgrade correctly, monitor timeouts and network health, manage signing safely, and keep deterministic application behavior. Tools like Cosmovisor exist because even coordinating a binary switch at upgrade height is part of keeping a BFT network live. Fast finality is powerful, but it leaves less room for sloppy operations.

When should systems use BFT consensus?

The natural use case for BFT consensus is a system that values finality, consistency, and fault tolerance under active adversaries more than open-ended validator participation at internet scale. That is why it appears so often in proof-of-stake blockchains, consortium chains, replicated services, and settlement systems.

In a Cosmos-style chain, BFT consensus gives applications confidence that once a block is committed by the validator quorum, it is final enough for interchain proofs and higher-layer protocols to build on. In a HotStuff-derived chain, the goal is similar: low-latency finality with cleaner scaling properties. In asynchronous designs such as HoneyBadgerBFT, the attraction is surviving ugly network conditions without relying on timing assumptions for liveness.

The mechanism is general because the need is general. Whether the replicated state machine is a payments ledger, a decentralized exchange, a naming service, or a file service like the PBFT paper’s Byzantine NFS example, the core requirement is the same: all honest replicas must process the same ordered operations.

What can cause BFT consensus to fail in practice?

BFT consensus is powerful, but it is not magic.

If more than the tolerated fault threshold becomes Byzantine, the safety argument can fail. If the application state machine is not deterministic, replicas can diverge even with perfect consensus. If quorum configuration is wrong, as in poorly chosen federated quorum slices, agreement can become impossible. If the network never becomes sufficiently synchronous in a partially synchronous protocol, liveness can fail even though safety holds. If cryptographic assumptions fail, authenticated voting stops being trustworthy. And if implementations contain bugs in the places where theory assumes ideal behavior, the real system can halt or misbehave despite a sound protocol design.

It is also important not to confuse finality with privacy or fairness. PBFT explicitly does not solve confidentiality against faulty replicas. Some protocols make stronger fairness claims than others, but ordinary BFT agreement by itself does not guarantee all desirable transaction-ordering properties. You have to ask what the protocol actually proves, not what one hopes a consensus layer might imply.

Conclusion

BFT consensus is the machinery that lets a distributed system agree on one history even when some participants are actively malicious. Its central insight is not “take a vote,” but use overlapping quorums so conflicting decisions cannot both gather enough honest support. That is why the one-third fault threshold, the two-thirds quorum, and the language of locks, certificates, and view changes keep reappearing across very different protocols.

If you remember one thing tomorrow, remember this: BFT consensus turns agreement into an overlap problem. Once you see that, the rest of the protocol details start to make sense.

What should you understand before using BFT‑based infrastructure?

Before trading, depositing, or withdrawing on a chain that uses BFT consensus, understand how that chain achieves finality, what fault threshold it assumes, and which confirmation signal actually means “final.” On Cube Exchange, use these checks to map protocol guarantees to concrete deposit and execution actions so you don’t treat a tentative block as final.

- Confirm the asset and its network: identify the chain (e.g., a Tendermint chain, HotStuff-derived chain, or an asynchronous design) and note the chain’s stated finality signal.

- Verify the confirmation threshold: look for a commit certificate or the chain’s recommended confirmation count (for Tendermint-style chains, require a block with >2/3 precommits / a commit certificate before treating funds as final).

- Deposit to your Cube account using the verified network and wait until the chain-specific finality condition is met and Cube shows the deposit as fully credited.

- Trade or withdraw on Cube: for large trades use limit orders or split into smaller orders to control slippage, and only initiate external withdrawals after the chain’s finality condition is satisfied.

Frequently Asked Questions

The one‑third bound arises because protocols need quorums that necessarily overlap in at least one honest node. With n = 3f + 1 there are at least 2f + 1 honest nodes and any quorum of size 2f + 1 intersects another quorum in at least f + 1 nodes, so at least one honest node prevents two conflicting certificates from both being valid; with only 3f nodes a faulty coalition can split honest nodes and create disjoint majorities.

Quorum certificates summarize verifiable votes from a sufficiently large set of validators so that any two certificates must overlap in at least one honest participant; because an honest node will not honestly sign two conflicting values, that honest overlap makes it impossible for two conflicting decisions both to be certified.

Safety (no two honest nodes finalize different values) is typically guaranteed without timing assumptions, while liveness (the system eventually makes progress) usually requires a weak synchrony assumption; practical BFT protocols therefore separate the two and rely on timeouts or eventual synchrony to ensure liveness.

Authentication changes what Byzantine nodes can fake: in the oral‑message model the 3f+1 lower bound applies, but with unforgeable signatures votes become attributable and quorum certificates become meaningful, allowing different protocol designs that rely on authenticated voting.

Because honest nodes must convert a local observation into “enough others saw it too,” BFT protocols add phases and rounds (prepare/commit, prevote/precommit, certificates) that require extra messages; this, plus replication (3f+1 nodes) and view‑change bookkeeping, produces higher communication and implementation overhead compared with crash‑fault protocols.

Leader‑based, partially synchronous designs (Tendermint, PBFT, HotStuff) use rounds, locking, and view changes and need eventual synchrony for liveness but can be simpler and lower‑latency; asynchronous designs (HoneyBadgerBFT) guarantee liveness without timing assumptions but add complexity and rely on batching and threshold crypto/trusted setup to be practical.

Real‑world bugs, race conditions, or mismatches between the protocol model and implementation can break liveness or cause halts even when the protocol is correct on paper - for example, a Cosmos Hub validator‑set update bug led to a chain halt despite CometBFT’s core safety proofs.

For state‑machine replication to work safely under BFT consensus, the replicated application must be deterministic and finite‑state so replicas executing the same ordered inputs end in the same state; CometBFT and similar engines explicitly require deterministic application logic.

No - BFT consensus ensures agreement and finality, not confidentiality or ordering fairness; PBFT and similar protocols do not prevent a faulty replica from leaking state, and additional cryptographic mechanisms are required to provide privacy or stronger fairness guarantees.

If more than the tolerated f nodes become Byzantine, the safety argument can fail and conflicting decisions become possible; likewise, if a partially synchronous protocol never experiences sufficient synchrony, liveness can stall even though safety still holds.
