What is a STARK?

Learn what STARKs are, how they prove computation correctly, why they avoid trusted setup, and the tradeoffs versus SNARKs.

Introduction

STARKs are cryptographic proofs that let one party convince others that a computation was executed correctly, without forcing every verifier to rerun that computation. That sounds like a small improvement until you notice the asymmetry it creates: a prover may spend substantial effort once, while many verifiers check the result much more cheaply. In blockchains, rollups, private computation, and verifiable virtual machines, that asymmetry is the whole game.

What makes STARKs distinctive is not just that they are succinct proofs. It is the particular way they achieve succinctness. They avoid the trusted setup that many SNARK systems rely on, and they are built mainly from hash-based assumptions that are generally viewed as more plausible in a post-quantum world than number-theoretic assumptions such as discrete logarithms. The tradeoff is real: STARK proofs are typically much larger than the smallest SNARK proofs, and proving can still be expensive.

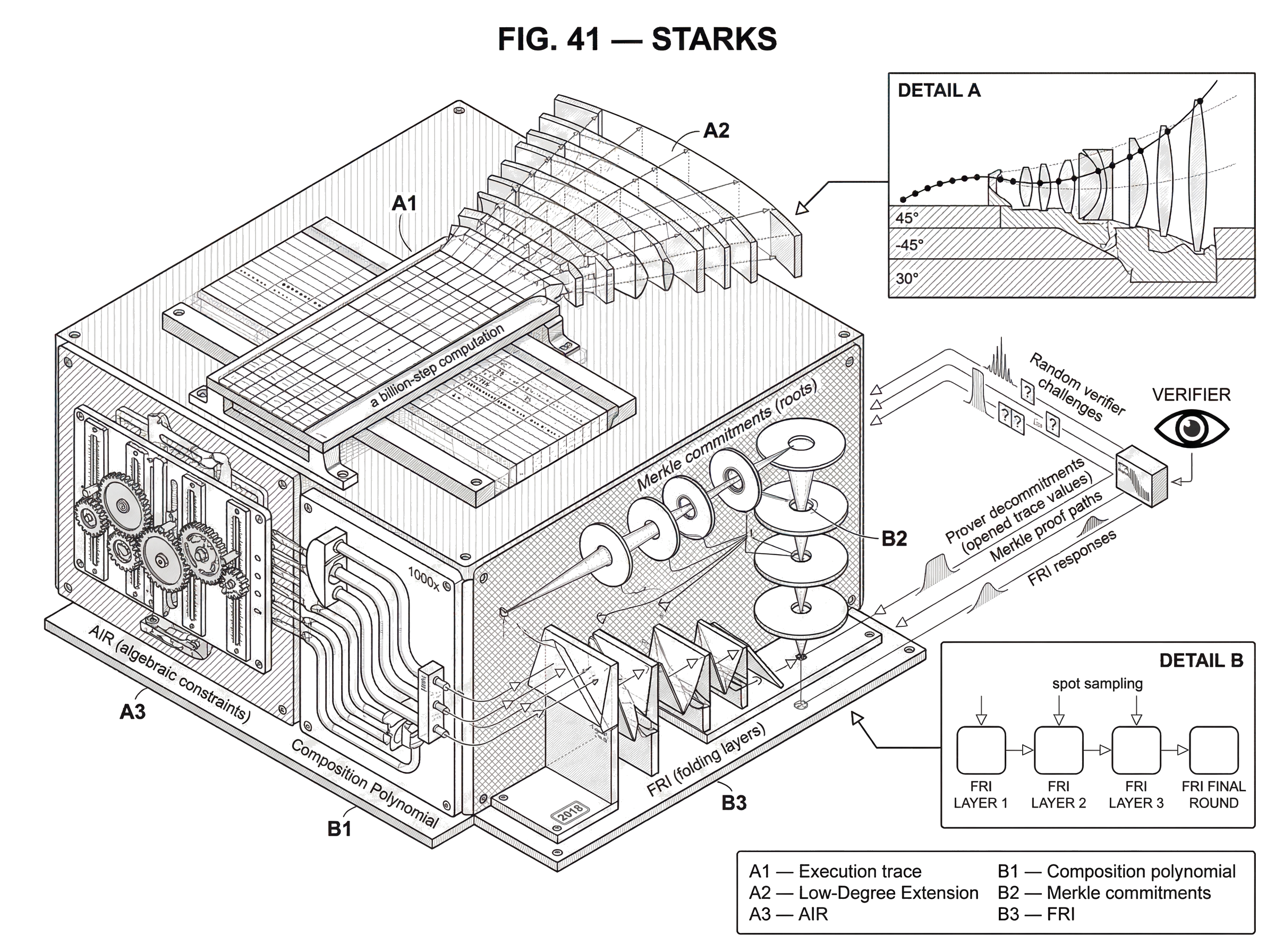

The idea becomes easier once you stop thinking of a computation as a program and start thinking of it as a very large table whose rows must obey local consistency rules. A STARK prover commits to that table, extends it into a polynomial-friendly form, and then answers a small number of random spot checks that would be extremely hard to fake if the table were globally inconsistent. Most of the machinery exists to make that last sentence mathematically sound.

What problem do STARKs solve for verifiable computation?

| Option | Verifier cost | Setup trust | Proof size | Post‑quantum | Best for |

|---|---|---|---|---|---|

| Recompute | As expensive as computation | No setup | No proof | N/A | Small computations |

| SNARK | Very low | Often trusted setup | Very small | Not post‑quantum | On‑chain byte‑sensitive apps |

| STARK | Low (polylogarithmic) | No trusted setup | Larger than SNARKs | Hash‑based, post‑quantum‑leaning | Large computations, transparency |

Suppose a server claims it ran a billion-step computation and got output y. If you do not trust the server, the obvious way to check is to rerun the computation yourself. But then delegation bought you nothing. What you want instead is a proof that is much cheaper to verify than the original work was to perform.

This is the core problem of computational integrity: how can a weak verifier check a strong prover’s work without repeating it? In the zero-knowledge setting, there is a second requirement: the prover should be able to convince the verifier without revealing the private inputs used during the computation.

A useful way to see the design space is to separate three layers. First there is an information-theoretic proof skeleton, often built from PCP or IOP ideas, that says what would be checkable if the verifier could query huge objects as if by magic. Then there is an arithmetization layer that rewrites the original computation into algebraic constraints over a finite field. Finally there is a cryptographic layer that replaces those magical oracle queries with concrete commitments, usually using hashes and Merkle trees. STARKs fit naturally into this pattern.

The reason this matters in practice is not confined to one chain or one product. A rollup can use a STARK to prove a batch of transactions was executed correctly. A virtual machine can attach a STARK proof of execution to a program run. A Bitcoin-oriented project can use recursive STARKs to compress chain-state verification. The same underlying idea appears in very different systems because the problem is the same: verification is the bottleneck, not raw computation.

How do STARKs represent program execution as a constrained execution trace?

The compression point in STARKs is this: instead of proving a whole computation step by step to the verifier, the prover represents the computation as an execution trace and proves that this trace satisfies a small set of algebraic rules.

An execution trace is a table recording the machine state over time. If a program computes Fibonacci numbers, a row might contain the current pair (a, b), and the next row should contain (b, a + b). If a virtual machine executes instructions, a row might include registers, memory values, flags, and the program counter. The trace can be enormous, but the rules that relate neighboring rows are local. That locality is the reason succinct proof becomes possible.

STARK systems usually express those local rules in an Algebraic Intermediate Representation, or AIR. AIR says, in effect, “for every row, these polynomial relations must hold between this row and nearby rows.” Some constraints pin the first or last row to public inputs and outputs. Others enforce the transition rule. The exact encoding is a design choice, but the invariant is stable: if the trace is valid, all constraints evaluate to zero everywhere they are supposed to.

This is where readers often misinterpret the protocol. The prover is not sending the whole trace to the verifier and hoping spot checks catch mistakes by luck. The trace is transformed into algebraic objects with strong global structure. The random spot checks work because a function that is close to a low-degree polynomial has rigid behavior, and a function that deviates significantly gets caught with high probability.

Why do STARK constructions convert traces into low-degree polynomials (LDE)?

Why turn computation into polynomials at all? Because low-degree polynomials are easy to test probabilistically. A gigantic table of values might look arbitrary, but if those values come from evaluating a polynomial of low degree over a field, then a small amount of carefully chosen checking can say a lot about the whole table.

The first important object is the low-degree extension, or LDE. Start with trace values defined on a basic domain S. Interpolate those values by a polynomial, then evaluate that same polynomial on a larger domain S'. The result is a redundancy-expanded version of the trace. That expansion is not wasted overhead. It creates room to test whether the committed data behaves like evaluations of a low-degree polynomial rather than an arbitrary function.

There is an analogy here to adding structured redundancy to data so corruption becomes detectable. What it explains is why the prover intentionally enlarges the object it is committing to. Where it fails is that the redundancy is algebraic, not merely duplicated data. The verifier is testing deep consistency with a polynomial model, not just checking repeated copies match.

Once the trace columns and constraints are expressed in this form, the prover combines them into what engineers often call a composition polynomial. The point of this object is to aggregate many constraint checks into a single algebraic claim. If the execution trace violates even one required transition often enough, then the composition polynomial will fail to behave like the low-degree object it is supposed to be. That converts “the program ran incorrectly” into “this function is not close to any low-degree polynomial,” which is exactly the sort of claim STARK machinery knows how to test.

How do IOPs and hash-based commitments make STARKs transparent?

| Commitment | Setup | Binding property | Reveal cost | Quantum resistance | Best for |

|---|---|---|---|---|---|

| Merkle commitments | No trusted setup | Collision‑resistant hashes | Logarithmic auth paths | Hash‑based, plausible | IOP / large table commits |

| Polynomial commitments | Often transparent | Algebraic binding | Constant‑size openings | Depends on pairing assumptions | Short openings, SNARKs |

| CRS / trusted setup | Requires trusted ceremony | Structured reference string | Very small proofs | Number‑theoretic (fragile) | On‑chain byte‑critical apps |

At the abstract level, a STARK is built from an Interactive Oracle Proof, or IOP. In an IOP, the prover writes down large strings or tables, and the verifier checks only a few queried positions chosen at random. If the verifier’s randomness is public and no secret setup is needed, the system is called transparent.

But in a real protocol, the prover cannot just say “trust me, I had a giant table and here are the few values you asked for.” The verifier needs assurance that every revealed value comes from one fixed committed object, not from answers invented on the fly. That is where hash-based commitments enter.

A common implementation pattern is to arrange evaluations into a Merkle tree and send the root as the commitment. Later, when the verifier asks for a value at a particular position, the prover reveals the value plus the authentication path proving that this value really belongs to the committed tree. This is why STARK proofs often have a clear two-part structure: a commitment phase, where roots to various evaluation tables are published, and a decommitment phase, where the prover reveals selected values and their authentication paths.

This hash-based structure explains two major properties of STARKs at once. First, it explains transparency: there is no trusted setup ceremony producing structured public parameters that must remain uncontaminated. Second, it explains the post-quantum story: the assumptions are primarily collision resistance and random-oracle-style hashing, rather than pairings or discrete-log-style algebra that is more directly threatened by large quantum computers. That is not a proof of eternal safety; it is a statement about which assumptions the design leans on.

What is FRI and how does it enable practical low-degree testing in STARKs?

The most technical and most important subroutine in a STARK is usually FRI, short for Fast Reed-Solomon IOP of Proximity. If you want one named component to remember, remember this one.

FRI solves the following problem efficiently: given oracle access to a function over a large domain, how can the verifier check that this function is close to the evaluation of a low-degree polynomial? Without such a test, the arithmetization story would remain elegant but impractical.

The mechanism is a repeated folding process. At each round, the prover transforms a long list of evaluations into a shorter one that should correspond to a related polynomial of roughly half the degree. Intuitively, FRI keeps compressing the claim “this big table comes from a low-degree polynomial” into smaller and smaller claims of the same kind. The verifier does not inspect every entry in every layer. Instead, it samples random locations and checks that the answers across layers are mutually consistent.

A useful concrete detail from engineering explanations is that to derive the next FRI layer at a point related to x^2, the prover only needs paired values from the previous layer at x and -x. That pairing is what allows the domain to collapse round by round. After roughly logarithmically many rounds, the verifier is left with a tiny residual object that can be checked directly.

This is the source of STARK scalability. The original STARK paper reports a FRI-based low-degree test with prover arithmetic cost linear in the domain size and verifier arithmetic cost logarithmic in the domain size. Combined with the rest of the construction, that yields the broad headline: proving grows roughly quasi-linearly with computation size, while verification grows only polylogarithmically.

Worked example: how to prove a Fibonacci recurrence with a STARK

Take a toy computation: start with public inputs a0 and a1, iterate t steps of the Fibonacci rule, and claim the final value is z. A naive proof would show every step. A STARK proof does something subtler.

The prover first writes the execution trace: row 0 contains (a0, a1), row 1 contains (a1, a0 + a1), row 2 contains (a0 + a1, a1 + (a0 + a1)), and so on. Already the important structure is visible: each row depends only on the previous one, and the transition rule is local. The AIR captures exactly this locality by requiring that if a row contains (u, v), then the next row must contain (v, u + v). Separate boundary constraints state that the first row matches the claimed starting values and the last row’s second coordinate equals z.

The prover then treats each trace column as values of a function over a finite domain and computes low-degree extensions over a larger domain. Next it combines the transition and boundary constraints into a composition polynomial designed so that a valid trace makes this object low-degree. Instead of handing over all these tables, the prover commits to them with Merkle roots.

Now the verifier sends random challenges. These challenges determine which positions in the extended tables must be opened and how the combined constraint expression is formed. For each queried position, the prover reveals the needed trace values, the corresponding composition values, and the Merkle authentication paths. The verifier checks two things at once: first, that the opened values are consistent with the committed roots; second, that they satisfy the local algebraic relations implied by the AIR and the folding relations imposed by FRI.

Why does this work? Because a fake trace has a dilemma. If it violates the Fibonacci transition on many rows, the composition polynomial stops looking low-degree and FRI is likely to catch it. If it tries to answer each random query separately with locally consistent lies, the Merkle commitments force those answers to come from one fixed global object, so the lies must all fit together. That combination of local algebra plus global commitment is the mechanism.

Are STARKs zero-knowledge by default, and how is ZK achieved?

| Mode | What verifier learns | Extra steps | Cost impact | Use case |

|---|---|---|---|---|

| Integrity‑only | Only statement correctness | No blinding | Slightly smaller proofs | Public audits, rollups |

| Zero‑knowledge | Statement only, witness hidden | Masking / randomization | Higher prover work | Private computation |

A STARK can be only a proof of integrity, or it can also be zero-knowledge. The distinction matters. Integrity alone says the claimed computation was carried out correctly. Zero-knowledge adds that the verifier learns nothing beyond the truth of the claim, up to the formal leakage allowed by the protocol.

In practice, zero knowledge in STARKs is typically achieved by randomizing or masking the committed algebraic objects so the revealed queried positions do not leak meaningful information about the witness. The high-level picture is enough for most readers: the same machinery that supports succinct verification can be augmented so the verifier sees only carefully blinded slices of the execution trace.

It is worth being precise here. Zero-knowledge is not automatic merely because a system uses a STARK-like proof. Some implementations emphasize transparency and proof of computation first, and stronger privacy guarantees may require additional protocol choices. For example, a reference implementation can be fully functional yet explicitly note that it does not provide perfect zero-knowledge. So when someone says “this uses STARKs,” that tells you a lot about the proof family, but not every privacy detail.

When and why do blockchains use STARKs (rollups, VMs, recursion)?

The reason STARKs matter operationally is simple: they turn expensive computation into cheap verification under a trust model many systems prefer.

In a rollup, a sequencer or prover executes many transactions off-chain and posts a proof that the resulting state transition is valid. The base chain need not replay the full batch. In StarkNet’s ecosystem, this logic extends further into SHARP, a shared proving and aggregation system that can combine proofs for multiple Cairo programs and eventually submit a unified proof for on-chain verification. That is an answer to a practical problem created by STARKs themselves: proving and verification are cheaper than full re-execution, but still expensive enough that batching and recursion matter.

The same pattern appears outside Ethereum-style systems. Miden VM is designed so program execution automatically produces a STARK-based proof of execution. ZeroSync uses recursive STARKs and Cairo-based tooling to compress Bitcoin chain-state validity into a compact proof, aiming to make verification easier on constrained devices. There is even work exploring full on-chain verification of STARK proofs on Solana. These examples matter because they show STARKs are not tied to one chain architecture. They are a general answer to verifiable computation.

What tradeoffs do STARKs make (proof size, prover cost, assumptions)?

STARKs are attractive because they combine several properties that are hard to get together: transparency, broad applicability to general computation, strong verifier scalability, and hash-based assumptions with a plausible post-quantum story. But there is no free lunch.

The most visible cost is proof size. The original STARK paper explicitly noted that contemporary ZK-SNARKs could be around 1000x shorter than STARK proofs. Later implementations have improved constants a great deal, and some practical systems produce proofs in the tens to hundreds of kilobytes rather than something enormous. Still, the structural fact remains: STARK proofs are usually larger than the most succinct pairing-based SNARK proofs.

The second cost is prover work and hardware pressure. Even if asymptotically near-linear, the prover is doing a lot: generating traces, computing low-degree extensions, evaluating composition polynomials, hashing large tables, and producing decommitments across many FRI layers. Real systems often lean heavily on parallelism, specialized engineering, and sometimes recursion or aggregation to make this affordable.

A third tradeoff is that some security arguments depend on the random oracle model and on careful parameter choices. Transparency does not mean “assumption-free.” It means the setup is public rather than trusted. Soundness still rests on concrete choices about field sizes, query counts, hash functions, expansion factors, and FRI parameters.

And implementation details matter. Repository documentation for production-oriented provers can include caveats like “the verifier checks consistency with the public input embedded in the proof, but external logic must validate that the public input itself is the intended one.” Audits of STARK-based systems have also exposed higher-layer issues that are not failures of STARK mathematics but do affect safety. A proof system can be sound while the surrounding application logic is buggy.

STARKs vs SNARKs: what practical differences should you care about?

It is common to hear “STARK vs SNARK” as if these were two opposite camps. The reality is subtler. A STARK is best understood as a particular style of succinct argument system emphasizing transparency and scalability through IOP-based and hash-based techniques. Many SNARKs emphasize very short proofs and efficient on-chain verification, often at the cost of a trusted setup or assumptions that are not thought to be post-quantum secure.

So the practical question is not which acronym is better. It is which bottleneck matters more in your setting. If on-chain bytes are painfully expensive and a trusted setup is acceptable, a SNARK may be a better fit. If avoiding trusted setup is central, if long-term cryptographic conservatism matters, or if the system naturally benefits from AIR/trace-based proving, STARKs become compelling.

The relation is also conceptual, not just competitive. Both are implementations of the broader idea of zero-knowledge proofs or Validity Proof. They differ in the algebraic encodings, commitment mechanisms, proof sizes, setup assumptions, and performance envelope. Knowing this prevents a common misunderstanding: choosing STARKs is usually not choosing “more advanced ZK,” but choosing a different point in the design space.

Which STARK security assumptions matter, and what fails if they break?

STARKs depend on several structural assumptions. If the hash function used for commitments stops behaving like a collision-resistant commitment primitive, the commitment layer weakens. If the random-oracle heuristic is an unacceptable modeling choice for your application, then the non-interactive form of the protocol becomes harder to justify in the same way. If parameter choices are too aggressive, soundness error may become larger than intended.

There are also system-level assumptions. A prover may be able to prove successful execution but not failed execution in a way that supports every application-level need. That distinction has shown up in real StarkNet-related design discussions, where proving failure versus censorship can matter operationally. Likewise, proofs establish correctness of the modeled computation, not data availability or chain-selection rules outside that model. ZeroSync, for example, emphasizes that a proof can attest to one chain’s validity but cannot by itself solve data availability or enforce the longest-chain rule.

These are not defects unique to STARKs. They are reminders that every proof system lives inside a larger protocol. The proof only says what it was designed to say.

Conclusion

A STARK is a transparent, hash-based proof system for showing that a computation was executed correctly, often with zero-knowledge, while keeping verification far cheaper than redoing the work. Its core trick is to turn computation into algebraic constraints on a large execution trace, commit to that structure, and use FRI-based low-degree testing plus random spot checks to make cheating unlikely.

The reason STARKs matter is the combination they offer: no trusted setup, strong verifier scalability, and assumptions that are more comfortable in a post-quantum setting. The reason they are not automatically the answer to everything is equally important: proofs are bigger than the smallest SNARKs, provers are still heavy, and the surrounding system design matters. If you remember one thing tomorrow, remember this: STARKs make very large computations checkable by reducing global correctness to a few checks on rigid algebraic structure.

What should I check before using STARK-based infrastructure?

Understand the operational and security checks you should do before relying on STARK-backed infrastructure, and how that affects trading or moving funds. On Cube Exchange, the practical path is: verify the STARK proof publication and verification model, confirm data-availability and privacy choices, then use normal deposit-and-trade flows while accounting for proof-related delays and on-chain costs.

- Verify the project publishes STARK proofs and where they are verified (on-chain root, aggregator contract, or off-chain verifier).

- Check whether the implementation provides zero-knowledge or only integrity, and whether public inputs (state root, batch commitments) match the on-chain records you expect.

- Confirm data availability and finality rules (how long proofs or challenge windows take to settle) before moving funds or interpreting balance changes.

- Fund your Cube account with fiat or a supported crypto transfer, then open the relevant market or withdrawal flow and place a limit order or withdrawal request while accounting for any proof-confirmation time and on-chain gas implications.

Frequently Asked Questions

STARKs are transparent: they avoid a trusted-setup ceremony by using hash-based commitments (typically Merkle roots) and public randomness instead of structured, secret parameters; soundness therefore rests on hash collision resistance and random-oracle–style instantiations rather than a multi-party trusted CRS.

Because low-degree polynomials have rigid, testable global structure, STARKs interpolate the execution trace into polynomials and compute a low-degree extension (LDE) so a few random checks can probabilistically certify the whole trace instead of inspecting every row.

FRI (Fast Reed–Solomon IOP of Proximity) is the low-degree testing engine in most STARKs: it repeatedly ‘‘folds’’ evaluation tables to compress the claim that a function is close to a low-degree polynomial, allowing the verifier to spot-check only logarithmically many positions while the prover does near-linear work.

STARK proofs are generally larger because they commit to expanded algebraic objects (LDEs, composition polynomials, multiple FRI layers) and use hash-based decommitments rather than pairings and succinct algebraic encodings; the original STARK paper even noted contemporary SNARKs could be on the order of 1000× shorter, though implementations have since reduced constants.

Zero-knowledge is not automatic: STARK systems can be made zero-knowledge by masking or blinding algebraic objects so opened positions leak nothing useful, but some implementations emphasize transparency and integrity first and explicitly do not provide perfect zero-knowledge.

If the hash function or random-oracle heuristic used for commitments fails to meet its intended properties, the commitment layer and non-interactive soundness guarantees weaken - transparency removes trusted setup but does not make STARKs assumption-free, and the random-oracle model should be treated as a practical heuristic rather than a formal oracle.

A STARK proof only attests to the correctness of the modeled computation; it does not by itself guarantee data availability or enforce consensus rules such as the longest-chain rule, so protocol-level components and p2p logic remain necessary.

The main practical bottlenecks are prover cost and resource pressure - building traces, computing LDEs, hashing large tables, and running FRI are CPU-, memory-, and I/O-heavy - so real systems rely on parallelism, parameter tuning, batching/aggregation, and sometimes recursion to make proving affordable.

Related reading