What is the Internet Computer?

Learn what the Internet Computer is, how canisters, subnets, chain-key cryptography, cycles, and NNS governance work, and why it exists.

Introduction

For the token explainer, see ICP.

The Internet Computer is a blockchain network designed to run complete internet applications on-chain, not just tokens or narrowly scoped smart contracts. That claim sounds ordinary until you compare it with how most blockchains are actually used. On many networks, the chain is the place where critical state changes are settled, while the user interface, web hosting, indexing, and much of the application logic live elsewhere. The Internet Computer tries to collapse more of that stack into the protocol itself.

The central question is not merely whether a blockchain can execute code. Many already do. The harder question is whether a decentralized network can host stateful software that behaves like an online service: storing data, responding to users, coordinating multiple components, and in some cases even serving web assets directly. The Internet Computer’s architecture is built around answering that question with “yes,” but only by making a series of strong design choices about execution, consensus, cryptography, and governance.

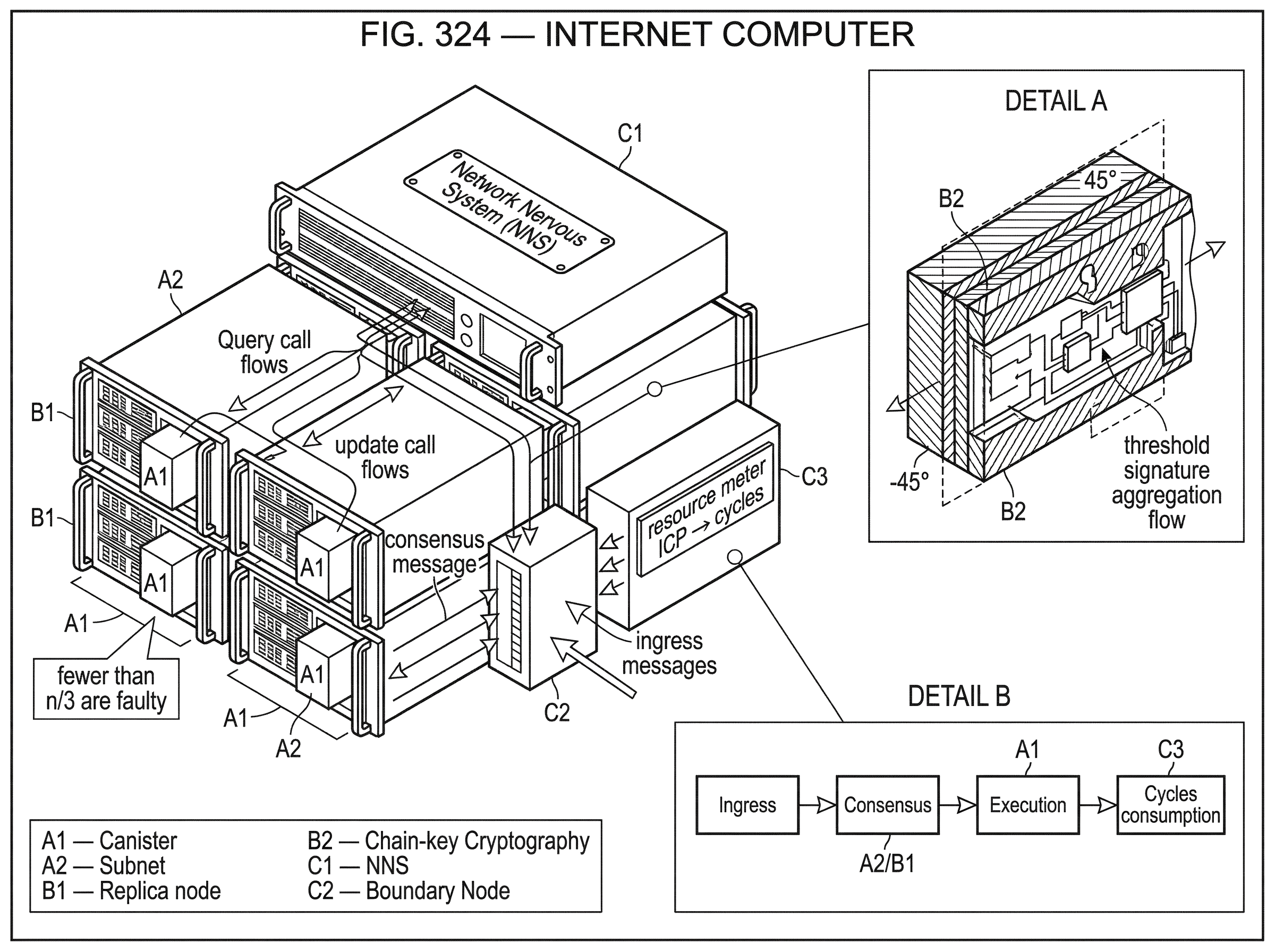

If you keep one idea in view while reading, let it be this: the Internet Computer is trying to turn a blockchain from a single global machine into a network of coordinated replicated machines. Those machines are called subnets. The programs they run are called canisters. And much of the protocol’s unusual machinery exists to make those many subnets feel like one coherent system to developers and users.

What is a canister and how does it differ from an account?

| Abstraction | State persistence | Execution target | Isolation | Typical app fit | Billing model |
|---|---|---|---|---|---|
| Canister | Durable in-canister memory | WebAssembly (Wasm) | Replica-sandboxed process | Full backends & web hosting | Prepaid cycles by operator |
| Ethereum-style contract | Ledger-backed storage entries | EVM (chain VM) | Contract-scoped state | Settlement, token and DeFi primitives | User-paid gas per tx |

To see why the Internet Computer feels different from Ethereum-style systems, start with its basic abstraction. The fundamental computational unit is the canister: a software container that bundles code and persistent state. The whitepaper describes a canister as roughly analogous to a process. That is a useful starting point because a process is not just code sitting on a ledger; it is something that receives input, changes internal state, and can produce output over time.

This matters because the Internet Computer is built for applications that keep running as long-lived services. A canister can store data, perform general computation on that data, and respond to messages. Its code is compiled to WebAssembly, or Wasm, which gives the platform a portable execution target rather than a custom application-level virtual machine designed only for financial transactions. That choice is practical: Wasm is expressive enough for general-purpose software while still giving the platform a constrained runtime model.

The easiest way to picture a canister is as a small on-chain service with memory. Imagine a chat application. On many chains, the contract might only record high-value events: user registrations, token transfers, perhaps hashes of messages. The actual chat history, media, and API behavior would likely live in centralized infrastructure because on-chain execution is too constrained or expensive. On the Internet Computer, the intended model is different. A canister can hold the application state itself, update that state as users interact, and expose functionality that behaves more like an application backend than a minimal settlement script.

That does not mean canisters are unconstrained or magical. They are still replicated across nodes in a subnet, so every state-changing operation must be executed in a fault-tolerant setting. But the design target is plainly broader than “smart contracts as tiny deterministic accounting programs.” It is closer to smart contracts as durable software services.
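To make the “small on-chain service with memory” picture concrete, here is a minimal Python sketch of the chat example. It is not Internet Computer code: real canisters are Wasm modules, usually written in Motoko or Rust, and the `ChatCanister` class, its `apply` method, and the method names `post` and `clear_mine` are hypothetical. What it illustrates is the property replication depends on: replicas that start from the same state and apply the same ordered messages deterministically end up with identical state.

```python
from dataclasses import dataclass, field


@dataclass
class ChatCanister:
    """Toy model of a canister: code (methods) bundled with persistent state."""
    messages: list[tuple[str, str]] = field(default_factory=list)

    def apply(self, method: str, caller: str, arg: str) -> None:
        """Apply one ordered, state-changing message deterministically."""
        if method == "post":
            self.messages.append((caller, arg))
        elif method == "clear_mine":
            self.messages = [m for m in self.messages if m[0] != caller]
        else:
            raise ValueError(f"unknown method {method!r}")


# The ordered message log, as a subnet would agree on it (hypothetical data).
log = [
    ("post", "alice", "hello"),
    ("post", "bob", "hi alice"),
    ("clear_mine", "alice", ""),
    ("post", "alice", "back again"),
]

# Every replica starts from the same state and applies the same log.
replicas = [ChatCanister() for _ in range(4)]
for replica in replicas:
    for msg in log:
        replica.apply(*msg)

# Deterministic execution means all replicas end in identical state.
assert all(r.messages == replicas[0].messages for r in replicas)
print(replicas[0].messages)  # [('bob', 'hi alice'), ('alice', 'back again')]
```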

Why does the Internet Computer use subnets instead of a single global chain?

A single blockchain that asks every node to execute every application hits an obvious limit: throughput and storage scale badly because the whole network carries the whole workload. The Internet Computer answers this by organizing nodes into subnets, each of which is its own replicated state machine.

A subnet is a group of replicas (node machines running the replica software) that collectively host a set of canisters. Instead of one global set of validators processing everything, the network spreads applications across many subnets. This is the architectural move that makes the system more like a distributed computer than a monolithic chain.

Here is the mechanism. Within a subnet, replicas run consensus, agree on message ordering, execute canister code, and maintain the subnet’s state. Different subnets can host different canisters and scale capacity by adding more subnets. The network as a whole is then not one chain in the simplest sense, but a collection of chains or replicated state machines tied together by common cryptographic and governance infrastructure.

This also clarifies a common misunderstanding. “More subnets” is not merely a marketing way of saying “more shards.” The meaningful point is that the protocol must make outputs from one subnet trustworthy to another subnet and to external users without requiring everyone to validate everything. If that step fails, the system fragments into isolated clusters. So the Internet Computer’s scaling story depends not only on dividing work, but on proving across divisions that the work was done correctly.
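Dividing work across subnets also means the network must know which subnet hosts a given canister. The Python sketch below models that lookup as a table of canister-ID ranges. The integer IDs, subnet names, and `RoutingTable` class are hypothetical simplifications (real canister IDs are principals), so treat it as an illustration of the idea rather than the protocol's actual data structure.

```python
import bisect


class RoutingTable:
    """Toy map from inclusive canister-ID ranges to the subnet hosting them."""

    def __init__(self) -> None:
        self._starts: list[int] = []
        self._ranges: list[tuple[int, int, str]] = []

    def add_subnet(self, start: int, end: int, subnet: str) -> None:
        """Scale out by assigning a new, non-overlapping ID range to a subnet."""
        if any(not (end < s or start > e) for s, e, _ in self._ranges):
            raise ValueError("range overlaps an existing assignment")
        idx = bisect.bisect_left(self._starts, start)
        self._starts.insert(idx, start)
        self._ranges.insert(idx, (start, end, subnet))

    def route(self, canister_id: int) -> str:
        """Return the subnet whose range contains canister_id."""
        idx = bisect.bisect_right(self._starts, canister_id) - 1
        if idx >= 0:
            start, end, subnet = self._ranges[idx]
            if start <= canister_id <= end:
                return subnet
        raise KeyError(f"no subnet hosts canister {canister_id}")


table = RoutingTable()
table.add_subnet(0, 999, "subnet-a")
table.add_subnet(1_000, 1_999, "subnet-b")
print(table.route(1_234))  # subnet-b

# More capacity means another subnet with its own range.
table.add_subnet(2_000, 2_999, "subnet-c")
print(table.route(2_500))  # subnet-c
```

In this picture, adding capacity means assigning a fresh range to a new subnet rather than making every existing replica do more work.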

The fault model is standard for Byzantine fault-tolerant systems, but it is worth stating plainly because many later claims depend on it. If a subnet has n replicas, the whitepaper assumes fewer than n/3 are faulty, and those faults may be Byzantine. Safety is designed to hold even under asynchronous conditions, while liveness depends on partial synchrony: the protocol makes progress only during periods when message delay is bounded well enough. That is a real assumption, not a detail to skip past. The Internet Computer is not claiming to escape the classic tradeoffs of distributed systems; it is choosing where to sit within them.
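The arithmetic behind the fewer-than-n/3 assumption is short enough to write out. This Python sketch computes the largest tolerable f for a subnet of n replicas and the n - f support threshold that the whitepaper uses for notarization and finalization; the subnet sizes in the loop are arbitrary examples. The assertion checks why the threshold works: any two sets of n - f replicas overlap in at least n - 2f replicas, which is more than f, so the overlap always includes at least one honest replica.

```python
def max_faulty(n: int) -> int:
    """Largest f with f < n/3, i.e. n >= 3f + 1."""
    if n < 1:
        raise ValueError("a subnet needs at least one replica")
    return (n - 1) // 3


def quorum(n: int) -> int:
    """Support threshold n - f used for notarization and finalization."""
    return n - max_faulty(n)


for n in (4, 7, 13, 28, 34):
    f = max_faulty(n)
    q = quorum(n)
    # Two quorums of size q share at least 2q - n replicas; because that
    # overlap exceeds f, it must contain at least one honest replica.
    overlap = 2 * q - n
    assert overlap > f
    print(f"n={n:>2}  f={f:>2}  quorum={q:>2}  min overlap={overlap:>2}")
```

For n = 13, for example, f = 4 and the support threshold is 9.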

How does chain-key cryptography let subnets present unified, verifiable outputs?

If subnets are the scaling mechanism, chain-key cryptography is the unifying mechanism. This is the idea that makes much of the platform click.

Ordinarily, when many machines jointly maintain state, verification can become cumbersome. An outside user may need to know a large validator set, verify many signatures, or rely on a bridge-like trust layer to understand whether some output is real. The Internet Computer’s answer is to use threshold cryptography so that a subnet can present a single public verification key even though many replicas jointly control the corresponding signing power.

The consequence is powerful. A subnet can produce certified outputs (for example, statements about state or responses) that others can verify against that subnet’s public key. Other subnets and external users do not need to inspect the whole subnet’s internal process every time. They can verify the signature and trust that it represents the threshold-backed result of the subnet.

This is why the term “chain-key” matters. It does not just mean “the chain has keys.” It means the network is designed so that cryptographic verification is attached to the chain’s evolving state in a compact way. The whitepaper describes threshold signatures, distributed key generation, and related tools as components of this system. The practical effect is that the network can authenticate outputs efficiently while scaling across multiple subnets.

An analogy helps here, with limits. You can think of a subnet as a committee whose members jointly control a single stamp. No individual member can legitimately stamp a document alone, but once enough members cooperate, the document gets a stamp that outsiders recognize immediately. What this analogy explains is why verification can stay simple even when control is distributed. Where it fails is that threshold signatures are not a social convention like a committee stamp; they are cryptographic objects with strict security assumptions and precise failure thresholds.

This same family of ideas also underpins the Internet Computer’s external integration story. Official docs describe direct Bitcoin integration using a Bitcoin adapter and threshold signatures such as t-ECDSA and t-Schnorr, and they describe canisters signing and submitting transactions to Ethereum and EVM chains through an EVM RPC canister. The details differ by integration, but the common theme is consistent: the network wants canisters to act outwardly in cryptographically authenticated ways rather than depending entirely on centralized relayers.
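The committee-stamp analogy rests on threshold secret sharing, and a toy version shows the algebra. The Python sketch below is deliberately insecure and is not the Internet Computer's scheme: it Shamir-shares a key over a prime field, has each replica produce a signature share that is linear in its key share, and combines any t shares with Lagrange coefficients. Real threshold BLS performs the same interpolation on signature shares in the exponent of a pairing-friendly elliptic-curve group, and distributed key generation ensures no dealer (like `secret_key` here) ever holds the whole key. The field modulus, hash, function names, and 3-of-4 parameters are assumptions for illustration; in this toy, anyone who knows the message hash can recover the key from a signature.

```python
import hashlib
import random

P = 2**127 - 1  # Mersenne prime used as a toy field modulus


def hash_to_field(message: bytes) -> int:
    """Toy stand-in for hashing a message onto an elliptic curve."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % P


def share_secret(secret: int, t: int, n: int, rng: random.Random) -> dict[int, int]:
    """Shamir-share `secret`: any t of the n shares determine it."""
    coeffs = [secret] + [rng.randrange(P) for _ in range(t - 1)]

    def poly(x: int) -> int:
        return sum(c * pow(x, k, P) for k, c in enumerate(coeffs)) % P

    return {i: poly(i) for i in range(1, n + 1)}


def lagrange_at_zero(i: int, ids: list[int]) -> int:
    """Lagrange coefficient of participant i for interpolating at x = 0."""
    num, den = 1, 1
    for j in ids:
        if j != i:
            num = num * (-j) % P
            den = den * (i - j) % P
    return num * pow(den, -1, P) % P


def sign_share(key_share: int, message: bytes) -> int:
    """Toy signature share: linear in the key share (insecure by design)."""
    return key_share * hash_to_field(message) % P


def combine(sig_shares: dict[int, int]) -> int:
    """Combine signature shares from any t replicas into one signature."""
    ids = list(sig_shares)
    return sum(lagrange_at_zero(i, ids) * s for i, s in sig_shares.items()) % P


rng = random.Random(7)
secret_key = rng.randrange(P)  # a trusted dealer; real systems use DKG instead
key_shares = share_secret(secret_key, t=3, n=4, rng=rng)
msg = b"certified state root"
expected = sign_share(secret_key, msg)  # what the single subnet key would sign

partial = {i: sign_share(key_shares[i], msg) for i in (1, 3, 4)}
print(combine(partial) == expected)  # True: any 3 of the 4 shares suffice
```

Verifiers only ever compare against one signature for one public key, which is the compactness the chain-key design is after.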

How do replicas inside a subnet reach consensus and finalize blocks?

Once you know that a subnet is the execution unit, the next question is how its replicas agree on order. The Internet Computer uses a round-based blockchain protocol inside each subnet. In each round, a random beacon assigns every replica a rank, and the replica with the lowest rank number (rank 0) is that round's leader.

This leader proposes a block, but proposal alone is not enough. The block must gather broad support from the subnet. The whitepaper describes notarization and finalization steps that require support from n - f replicas, where f is the maximum number of faulty replicas tolerated. This is the protocol’s way of turning “someone proposed this block” into “the subnet collectively recognizes this block as sufficiently confirmed.”

The random beacon matters because leader selection should not be predictable in a way that gives attackers an easy target schedule. The whitepaper ties this to threshold-signature-based randomness. The execution layer also has access to a Random Tape, a distributed source of pseudorandomness derived from threshold mechanisms. That randomness is useful not only for consensus but for applications that need unpredictability supplied by the network rather than by a single untrusted party.
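The role of the beacon in leader selection can be sketched in a few lines of Python. This is a simplified stand-in, not the protocol's procedure: it ranks replicas by hashing the beacon value, the round number, and each replica's hypothetical ID, and treats rank 0 as the leader. The property it shows is that every replica holding the same beacon derives the same ranking. In the real protocol the beacon comes from threshold signatures, so it cannot be known ahead of time; here it is just a fixed hash.

```python
import hashlib


def ranking(beacon: bytes, round_no: int, replicas: list[str]) -> list[str]:
    """Order replicas by a hash of (beacon, round, id); index 0 is the leader."""

    def score(replica_id: str) -> bytes:
        data = beacon + round_no.to_bytes(8, "big") + replica_id.encode()
        return hashlib.sha256(data).digest()

    return sorted(replicas, key=score)


replicas = [f"replica-{i}" for i in range(7)]
beacon = hashlib.sha256(b"toy beacon for round 42").digest()  # hypothetical value
order = ranking(beacon, 42, replicas)

print("leader:", order[0])
print("ranks:", {r: order.index(r) for r in replicas})
```

Because the ranking depends only on shared inputs, no coordination message is needed to agree on who leads; unpredictability comes entirely from the beacon.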

A worked example makes the flow clearer. Imagine a user submits an update that changes a canister's state, such as posting a message in a decentralized forum. That request enters the target subnet. In the current round, the replica with the best rank proposes a block containing the message. Other replicas check whether the proposal is valid and, if so, support notarizing it. Once enough support exists, the block is notarized, which means the subnet has cryptographic evidence that a sufficient set of replicas accepted it for that round. As the protocol advances, the block becomes finalized, and finalized blocks are then handed to the execution layer, which applies the message to the canister's state. Only at that point is the state transition part of the durable replicated history.

The important point is not the vocabulary of rounds, leaders, notarization, and finalization by itself. The point is the invariant they protect: all honest replicas in the subnet should converge on the same ordered sequence of state transitions despite Byzantine behavior by a minority.
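The notarize-then-finalize flow from the worked example can be modeled as a toy state machine. In this Python sketch, the subnet size (7 replicas, up to 2 faulty), replica names, and payload strings are hypothetical, and a replica that contributes a share is assumed to have judged the block valid. Among other omissions, it ignores the conditions under which an honest replica may contribute a finalization share. What it does show is the invariant the text emphasizes: a block needs n - f supporting replicas to advance, and only finalized payloads reach the executed history.

```python
from dataclasses import dataclass, field

N, F = 7, 2          # hypothetical subnet: 7 replicas, up to 2 faulty
THRESHOLD = N - F    # support needed to notarize or finalize


@dataclass
class Block:
    round_no: int
    payload: list[str]
    notarization_shares: set[str] = field(default_factory=set)
    finalization_shares: set[str] = field(default_factory=set)

    @property
    def notarized(self) -> bool:
        return len(self.notarization_shares) >= THRESHOLD

    @property
    def finalized(self) -> bool:
        return len(self.finalization_shares) >= THRESHOLD


def run_round(block: Block, cooperating: list[str], executed: list[str]) -> str:
    """Collect shares from cooperating replicas; execute only finalized blocks."""
    for replica in cooperating:  # replicas that judged the block valid
        block.notarization_shares.add(replica)
    if not block.notarized:
        return "proposed"
    for replica in cooperating:  # replicas supporting finalization
        block.finalization_shares.add(replica)
    if not block.finalized:
        return "notarized"
    executed.extend(block.payload)  # the state transition becomes durable
    return "finalized"


executed: list[str] = []
replicas = [f"r{i}" for i in range(N)]

# Two silent replicas (faulty or slow): 5 = n - f shares still suffice.
print(run_round(Block(1, ["post: gm"]), replicas[:5], executed))     # finalized
# Three silent replicas leave only 4 shares, so the block cannot advance yet.
print(run_round(Block(2, ["post: hello"]), replicas[:4], executed))  # proposed
print(executed)                                                     # ['post: gm']
```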

When should I use query calls versus update calls on the Internet Computer?

| Call type | Consensus path | Typical latency | Finality/trust | Best for |
|---|---|---|---|---|
| Query call | Local replica execution | Milliseconds | No consensus finality | Interactive reads, low-latency UIs |
| Update call | Consensus-ordered execution | Seconds (slower) | Consensus-backed finality | State changes, payments |

One of the most practically important parts of the Internet Computer is the distinction between query calls and update calls.

An update call changes state, so it must go through consensus. That makes it slower, but the result becomes part of the subnet’s replicated history. A query call, by contrast, can often be served more quickly without full consensus on a new state transition. This gives developers a way to build applications that feel more responsive for read-heavy interactions.

The tradeoff is straightforward once stated clearly. A query is fast because it avoids the full cost of replicated state agreement for each read. But because it does not itself create a consensus-backed state change, it does not carry the same finality properties by default. The system can still authenticate outputs using certified state and chain-key techniques in some contexts, especially when responses need to be validated by clients, but the key point is that the fast path and the fully replicated path are intentionally different.

This is not unique in spirit to the Internet Computer. Many systems separate reads from writes because the performance requirements are different. What is distinctive here is that the distinction is built into a blockchain-style platform that still wants users to verify what they are seeing.
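The split between the fast path and the replicated path can be illustrated with a small Python model. It is hypothetical throughout (`CounterCanister`, `ToySubnet`, and the method names are inventions), and it skips consensus entirely by simply running update calls on every replica in the same order. It captures two behaviors described in this section: update calls change state on all replicas, while a query is answered by a single replica and does not persist any state change.

```python
import copy


class CounterCanister:
    """Toy canister state with one update method and one query method."""

    def __init__(self) -> None:
        self.count = 0

    def increment(self) -> int:
        self.count += 1
        return self.count

    def read(self) -> int:
        return self.count


class ToySubnet:
    """Hypothetical model of how the two call types touch replica state."""

    def __init__(self, n_replicas: int) -> None:
        self.replicas = [CounterCanister() for _ in range(n_replicas)]

    def update_call(self, method: str) -> int:
        # Stands in for: consensus orders the call, then every replica runs it.
        results = {getattr(replica, method)() for replica in self.replicas}
        if len(results) != 1:
            raise RuntimeError("replicas diverged; execution must be deterministic")
        return results.pop()

    def query_call(self, method: str, replica_index: int = 0) -> int:
        # A single replica answers on a scratch copy; changes are discarded.
        scratch = copy.deepcopy(self.replicas[replica_index])
        return getattr(scratch, method)()


subnet = ToySubnet(4)
subnet.update_call("increment")
print(subnet.query_call("read"))       # 1
print(subnet.query_call("increment"))  # 2, computed on a copy that is thrown away
print(subnet.query_call("read"))       # 1
```

Because a query answer comes from one replica, a client that needs stronger assurance would rely on the certified-state techniques mentioned above rather than trusting that replica blindly.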

Boundary nodes play a role at the edge of this experience. Official materials describe them as services that translate HTTPS requests from conventional clients into ingress messages, provide denial-of-service protection and caching, and authenticate responses using chain-key cryptography. In effect, they help ordinary web users interact with canister-based applications through familiar internet protocols.

How do cycles work and who pays for computation on the Internet Computer?

| Model | Payer | Unit | How obtained | User experience | Primary token role |
|---|---|---|---|---|---|
| Reverse-gas (Internet Computer) | App / canister operator | Cycles | Convert ICP into cycles | Users often don't pay per call | ICP used for conversion & governance |
| Traditional gas (Ethereum-like) | End user | Gas (native token) | User funds wallet per tx | Users pay per interaction | Native token used as payment |

Most readers approaching a smart-contract platform expect a gas model where the end user submits a transaction and pays fees directly in the native token. The Internet Computer instead uses what its whitepaper calls a reverse-gas model.

Here is the mechanism. The native token is ICP. Developers or canister operators convert ICP into cycles, and those cycles pay for computation, storage, and bandwidth. In other words, the application is typically pre-funded, and the service consumes those prepaid resources as it runs.

This changes the feel of the platform. Instead of every user interaction being framed as “bring your own token to pay execution fees,” an application can pay on the user’s behalf, more like a conventional internet service that absorbs infrastructure costs. The whitepaper and related materials describe cycles as the resource unit for operating canisters, while ICP also serves as the token used in governance.

The logic is easy to appreciate from first principles. If the goal is to host applications that resemble mainstream software products, requiring every end user to hold and spend the native token for each interaction adds friction. A reverse-gas model lets developers decide when users should face blockchain-native costs and when the application should internalize them.

That said, the underlying economics do not disappear. Converting ICP to cycles burns ICP, and the broader token system also includes minting through governance rewards and node-provider remuneration. Some official materials discuss proposals to reduce inflation by adjusting those reward flows and increasing burn through greater compute demand. Those proposals are important for tokenomics, but they should not be confused with the basic runtime fact: cycles are what canisters spend to live and work.
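A toy Python model of the reverse-gas flow: ICP is converted into cycles once, and the canister, not the caller, pays for each request out of that balance. Two constants follow the Internet Computer's documented conventions (1 ICP = 10^8 e8s, and cycles are pegged at one trillion per XDR). The ICP/XDR rate, the per-request cost, and the `Canister` class are hypothetical, since real cycle costs depend on the operation and are set by the protocol.

```python
from fractions import Fraction

E8S_PER_ICP = 100_000_000           # 1 ICP = 10^8 e8s
CYCLES_PER_XDR = 1_000_000_000_000  # one trillion cycles per XDR


def icp_to_cycles(icp_e8s: int, xdr_per_icp: Fraction) -> int:
    """Cycles minted when `icp_e8s` of ICP is converted (the ICP is burned)."""
    return int(Fraction(icp_e8s, E8S_PER_ICP) * xdr_per_icp * CYCLES_PER_XDR)


class Canister:
    """Toy reverse-gas account: the canister, not the caller, pays."""

    def __init__(self, cycles: int) -> None:
        self.cycles = cycles

    def handle(self, cost: int) -> bool:
        """Charge `cost` cycles for one request; refuse when underfunded."""
        if cost > self.cycles:
            return False
        self.cycles -= cost
        return True


# Hypothetical rate and cost, chosen only to keep the arithmetic readable.
minted = icp_to_cycles(2 * E8S_PER_ICP, Fraction(5))  # 2 ICP at 5 XDR per ICP
app = Canister(minted)
served = 0
while app.handle(cost=4_000_000_000):  # hypothetical cycles per request
    served += 1
print(minted, served, app.cycles)  # 10000000000000 2500 0
```

With these made-up numbers the operator funds 10 trillion cycles once and the canister serves 2,500 requests before running dry; the end users never touch ICP.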

What is the Network Nervous System (NNS) and how does governance control the network?

Many blockchains have governance processes, but on the Internet Computer governance is unusually operational. The Network Nervous System, or NNS, is an on-chain DAO integrated into the network’s administration.

Its role is not merely advisory. Official governance materials describe the NNS as able to execute many adopted proposals automatically, including creating new subnets and updating replica software. That is a significant design choice because it shifts network evolution away from purely social coordination plus occasional hard forks and toward explicit on-chain control of the system’s configuration.

Users participate by staking ICP into neurons, which are governance objects with voting power. The NNS implements a form of liquid democracy: neuron owners can vote directly or configure their neurons to follow other neurons automatically. Voting can earn maturity, which can later be used to generate new ICP under the system’s rules.
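Liquid-democracy following can be sketched as vote propagation over a follow graph. The Python below uses a simplified, hypothetical rule (a neuron without a direct vote adopts a decision once more than half of its followees voted that way) and made-up neuron names and voting power; the real NNS following rules, topics, and adoption thresholds are more detailed than this.

```python
from collections import Counter


def resolve_votes(direct: dict[str, bool],
                  followees: dict[str, list[str]],
                  max_rounds: int = 10) -> dict[str, bool]:
    """Propagate votes to following neurons under a simple majority rule.

    Chains of following (A follows B, who follows C) resolve by repeating
    the pass until no neuron's vote changes.
    """
    votes = dict(direct)
    for _ in range(max_rounds):
        changed = False
        for neuron, fs in followees.items():
            if neuron in votes or not fs:
                continue
            counts = Counter(votes[f] for f in fs if f in votes)
            for choice in (True, False):
                if counts[choice] * 2 > len(fs):
                    votes[neuron] = choice
                    changed = True
                    break
        if not changed:
            break
    return votes


def tally(votes: dict[str, bool], power: dict[str, int]) -> tuple[int, int]:
    """Sum voting power behind yes and no."""
    yes = sum(power[n] for n, v in votes.items() if v)
    no = sum(power[n] for n, v in votes.items() if not v)
    return yes, no


direct = {"expert_a": True, "expert_b": True, "expert_c": False}
followees = {
    "alice": ["expert_a", "expert_b", "expert_c"],
    "bob": ["alice"],  # follows a neuron that itself follows others
    "carol": ["expert_c"],
}
power = {"expert_a": 50, "expert_b": 30, "expert_c": 40,
         "alice": 20, "bob": 10, "carol": 15}

votes = resolve_votes(direct, followees)
print(votes)
print(tally(votes, power))  # (110, 55)
```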

This governance architecture has a technical consequence beyond politics. Because the network can change topology, admit capacity, and roll out upgrades through NNS proposals, the Internet Computer behaves less like a static protocol and more like an administered distributed system whose administration is itself placed on-chain. Supporters see that as a way to avoid risky hard forks and coordinate complex operations. Critics may see it as introducing governance dependence into core network behavior. Both observations follow from the same mechanism.

Related to this, the Service Nervous System, or SNS, extends similar governance ideas to applications. Official materials describe SNS as a way for an app to become an “open internet service” with its own tokenomics and community control. That fits the platform’s larger ambition: not just decentralized infrastructure, but decentralized administration of the services running on it.

What types of applications are best suited to run on the Internet Computer?

The Internet Computer’s application profile is best understood as a consequence of the design just described. If you give developers persistent Wasm-based canisters, direct stateful execution, web-facing delivery paths, and a reverse-gas model, you are encouraging them to build things that look like full applications, not just DeFi primitives.

That includes social applications, enterprise-style services, developer tools, wallets, games, identity-linked systems, and cross-chain services. The docs emphasize that canisters can directly interact with Bitcoin and Ethereum-related infrastructure through ICP-native mechanisms. They also describe chain-key tokens as digital twins of Bitcoin, Ethereum, and ERC-20 assets secured on ICP with chain-key cryptography. Whether a particular implementation is elegant or risky depends on the exact integration, but the conceptual move is clear: external assets and external transaction signing are meant to become inputs to canister-based applications.

At the same time, the architecture also explains where the model is less natural. If an application only needs minimal settlement and is happy keeping most of its logic and data off-chain, the Internet Computer’s more expansive execution environment may be unnecessary. Its main value appears when a developer actually wants the backend itself to be part of the decentralized trust boundary.

What security, liveness, and governance assumptions does the Internet Computer rely on?

The strongest claims about the Internet Computer are also the ones most worth qualifying.

First, security and correctness are subnet-local in an important sense. The protocol assumes fewer than one-third Byzantine faults per subnet. If that assumption fails, the guarantees deteriorate. This is not a special weakness of the Internet Computer so much as the normal boundary of Byzantine fault-tolerant replication, but because the system scales by dividing into subnets, readers should keep in mind that the relevant trust domain is often the subnet hosting a given canister.

Second, liveness depends on partial synchrony. Safety is not the same as liveness. A network can remain safe under harsh conditions (meaning it does not finalize conflicting histories) yet fail to make timely progress until communication conditions improve. When people hear phrases like “web speed” or “unstoppable,” it is worth translating them back into distributed-systems language. Performance and availability are properties achieved under assumptions, not unconditional facts.

Third, chain-key cryptography compresses verification, but that compression rests on nontrivial cryptographic assumptions and implementation correctness. Threshold signatures and distributed key generation are powerful because they hide complexity from users. They also concentrate a lot of trust in getting those mechanisms right.

Fourth, governance is both a strength and a dependency. The NNS can automate upgrades and scaling actions, which is operationally valuable. But it also means the network’s evolution is inseparable from the behavior of its governance process and the distribution of voting power among neuron holders.

Finally, some official materials use broad marketing language about total decentralization, immunity to cyber attack, or absolute censorship resistance. Those statements express an aspiration and a product position more than a complete threat model. The more grounded version is simpler and more useful: the Internet Computer is an attempt to push more of the application stack into a Byzantine fault-tolerant, cryptographically certified, governable network environment. Whether that is the right architecture depends on what part of your trust boundary you want on-chain.

Conclusion

The Internet Computer is best understood as a blockchain that tries to be a general computing platform, not just a ledger for transactions. Its core abstraction is the canister, its scaling unit is the subnet, and its unifying trick is chain-key cryptography, which lets a network of many replicated state machines present compactly verifiable outputs.

Everything else follows from those choices. Consensus orders updates inside subnets. Queries provide a faster path for reads. Cycles let applications prepay for computation and storage. The NNS governs the network as an on-chain administrative system. The result is a platform aimed at hosting software services themselves, not merely settling around them.

The short version to remember tomorrow is this: the Internet Computer exists to make “run the app on-chain” mean something much closer to running the whole service, not just posting its final balances to a blockchain.

How do you buy Internet Computer?

You can get exposure to Internet Computer on Cube Exchange by funding your account and using a straightforward spot-trading workflow. Keep the process simple: choose the market, pick the order type, and review the fill before you submit.

- Fund your Cube account with fiat or a supported crypto transfer.

- Open the spot market for the asset and check the current spread and displayed depth.

- Choose a limit order for price control or a market order for immediate execution, then enter the size you want.

- Review the estimated fill and fees, submit the order, and confirm the position after execution.

Frequently Asked Questions

By using chain-key cryptography and threshold signatures a subnet can present a single public verification key even though many replicas jointly produce signatures, so other subnets and external clients can efficiently verify certified outputs without checking every replica’s work.

You generally trust the subnet that hosts a canister: the protocol assumes fewer than n/3 Byzantine faulty replicas per subnet, so guarantees (safety/liveness) are scoped to that subnet’s fault model rather than the whole network.

Use an update call when you need a state change recorded in replicated history - updates go through consensus and finalize slowly but durably; use a query call when you want fast, local reads that skip full consensus but do not by themselves produce the same finality guarantees.

Applications typically convert native ICP into ‘cycles’ ahead of time and canisters spend those cycles for computation, storage, and bandwidth, so developers or service operators normally prepay execution rather than having every end user pay per request.

Yes - canisters can interact with external chains: the protocol describes a Bitcoin adapter and uses threshold signature schemes (e.g., t‑ECDSA/t‑Schnorr) and an EVM RPC canister for Ethereum, but the high-level overview defers many implementation details to deeper docs and different integrations use different mechanisms.

Inside each subnet a round-based protocol elects a leader via a random beacon, the leader proposes blocks, and notarization/finalization steps requiring broad replica support turn proposals into a single ordered sequence of state transitions that honest replicas converge on.

The design depends on standard distributed‑systems and cryptographic assumptions: safety holds if fewer than one‑third of replicas per subnet are Byzantine, liveness requires partial synchrony (periods of bounded message delay), and chain‑key/threshold schemes depend on nontrivial cryptographic assumptions and correct implementation.

The Network Nervous System (NNS) is an on‑chain governance DAO where users lock ICP into neurons to vote or follow other neurons, and the NNS can automatically execute adopted proposals including creating subnets and rolling out replica upgrades - so governance directly controls many operational aspects of the network.

Running a node has operational cautions: the official installer can wipe disks, IPv4 deployments require unique addresses per node, images/releases are versioned and the docs recommend recent releases, and onboarding processes and identity proofs for node providers are still in draft and community review in some places.

Public pages on the project include marketing claims like “100% decentralized” or “immune to cyber attack,” but the overview materials do not provide measurable definitions or technical proofs for those phrases and raise unresolved questions about how decentralization is quantified and how some cryptographic/trust claims map to original chains.
