What is Formal Verification?

Learn what formal verification is, how it works, why it matters for security, and where mathematical proofs help - and fail - in software and blockchains.

Introduction

Formal verification is the practice of using mathematics to show that a system satisfies a precise specification. In security, that matters because many failures are expensive not merely because software crashed, but because software violated an invariant that people were relying on: funds should not be spendable twice, privileges should not appear without authorization, a compiler should not silently change a program’s meaning, and a consensus protocol should not let honest participants finalize conflicting histories.

The puzzle formal verification tries to solve is simple to state. We already have testing, code review, fuzzing, audits, and production monitoring. Why add proofs? The answer is that those methods examine samples of behavior or depend on a reviewer noticing a problem, while formal verification aims at all behaviors allowed by the model. If your concern is a bug that appears only in a rare state transition, under a strange input, or after an unusual sequence of events, sampling can miss it. A proof, when it is sound and about the right property, rules it out for every execution the model allows.

That is the central idea to keep in mind: formal verification does not mean “very careful checking.” It means expressing behavior in a mathematical form and then proving that certain bad states are impossible, or that certain good properties always hold, relative to an explicit specification. NIST describes formal verification as a systematic process using mathematical reasoning and proofs to verify that a system satisfies its desired properties, behavior, or specification, meaning the implementation is a faithful representation of the design.

This is powerful, but it is not magic. Formal verification only proves what you actually specified, under the assumptions you actually modeled, using tools you trust to be sound enough for the job. That limitation is not a footnote. It is the main thing a careful reader should remember while still appreciating why the method is so valuable.

Why do formal proofs target absence of bugs while tests show presence?

Most software assurance methods work by observation. You run the program, feed it inputs, and inspect what happens. If the output is wrong, you found a bug. If the output is right on a million cases, you gained useful confidence. But you still did not prove that the million-and-first case, or the case after six prior transactions, or the case involving a reentrant callback and an integer edge condition, is safe.

Formal verification changes the question. Instead of asking, “What happened on these test cases?” it asks, “Given the rules of this system, can any execution reach a state that violates property P?” Here P might be something like “balances never become negative,” “only an authorized signer can approve settlement,” or “once a block is finalized, honest nodes do not finalize a conflicting block at the same height.”

This difference is easier to feel with a small example. Imagine a vault contract that lets users deposit and withdraw tokens. Traditional testing might try deposits of different sizes, failed withdrawals, and repeated calls. Formal verification instead encodes claims such as: if the total liabilities before a withdrawal equal the sum of user balances, and the withdrawal succeeds, then the liabilities afterward still equal the new sum of user balances; or, no sequence of valid calls lets an attacker withdraw more than their recorded balance. The point is not that tests are useless. The point is that a proof tries to cover the whole state space described by the model, not a sample from it.
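A minimal sketch of the vault claim, assuming a hypothetical two-user model with a bounded token supply (the names `USERS`, `MAX_TOKENS`, and the state layout are illustrative and not taken from any real contract). Instead of sampling a few call sequences, it enumerates every state reachable through valid deposits and withdrawals and checks the accounting invariant in each one.

```python
from collections import deque

USERS = ("alice", "bob")   # hypothetical users
MAX_TOKENS = 3             # assumed bound that keeps the state space finite


def successors(state):
    """Yield every state reachable from `state` by one valid call.

    A state is (balances, vault_total): per-user recorded balances and
    the number of tokens the vault actually holds.
    """
    balances, total = state
    for i in range(len(USERS)):
        # deposit(amount): allowed while the modeled token supply is not exhausted
        for amount in range(1, MAX_TOKENS - total + 1):
            new = list(balances)
            new[i] += amount
            yield (tuple(new), total + amount)
        # withdraw(amount): succeeds only up to the caller's recorded balance
        for amount in range(1, balances[i] + 1):
            new = list(balances)
            new[i] -= amount
            yield (tuple(new), total - amount)


def invariant(state):
    """Vault holdings equal the sum of recorded balances; no balance is negative."""
    balances, total = state
    return total == sum(balances) and all(b >= 0 for b in balances)


def check_all_reachable(initial):
    """Breadth-first search over all reachable states, checking the invariant in each."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            return False, state, len(seen)
        for nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return True, None, len(seen)


ok, bad_state, explored = check_all_reachable(((0, 0), 0))
print(ok, bad_state, explored)
```

With these bounds the search covers all 10 reachable states and finds no violation. Exhaustive enumeration only stands in for a prover here: a real tool reasons symbolically and does not need the bound, but the goal is the same, which is to cover the modeled state space rather than a sample of it.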

That is why formal verification is especially attractive for systems with high consequence and low tolerance for ambiguity: kernels, compilers, cryptographic code, consensus protocols, bridges, custody systems, and smart contracts that cannot be patched easily after deployment. In those environments, the cost of a latent invariant violation can be much larger than the cost of proving the invariant ahead of time.

What does formal verification actually prove about a system?

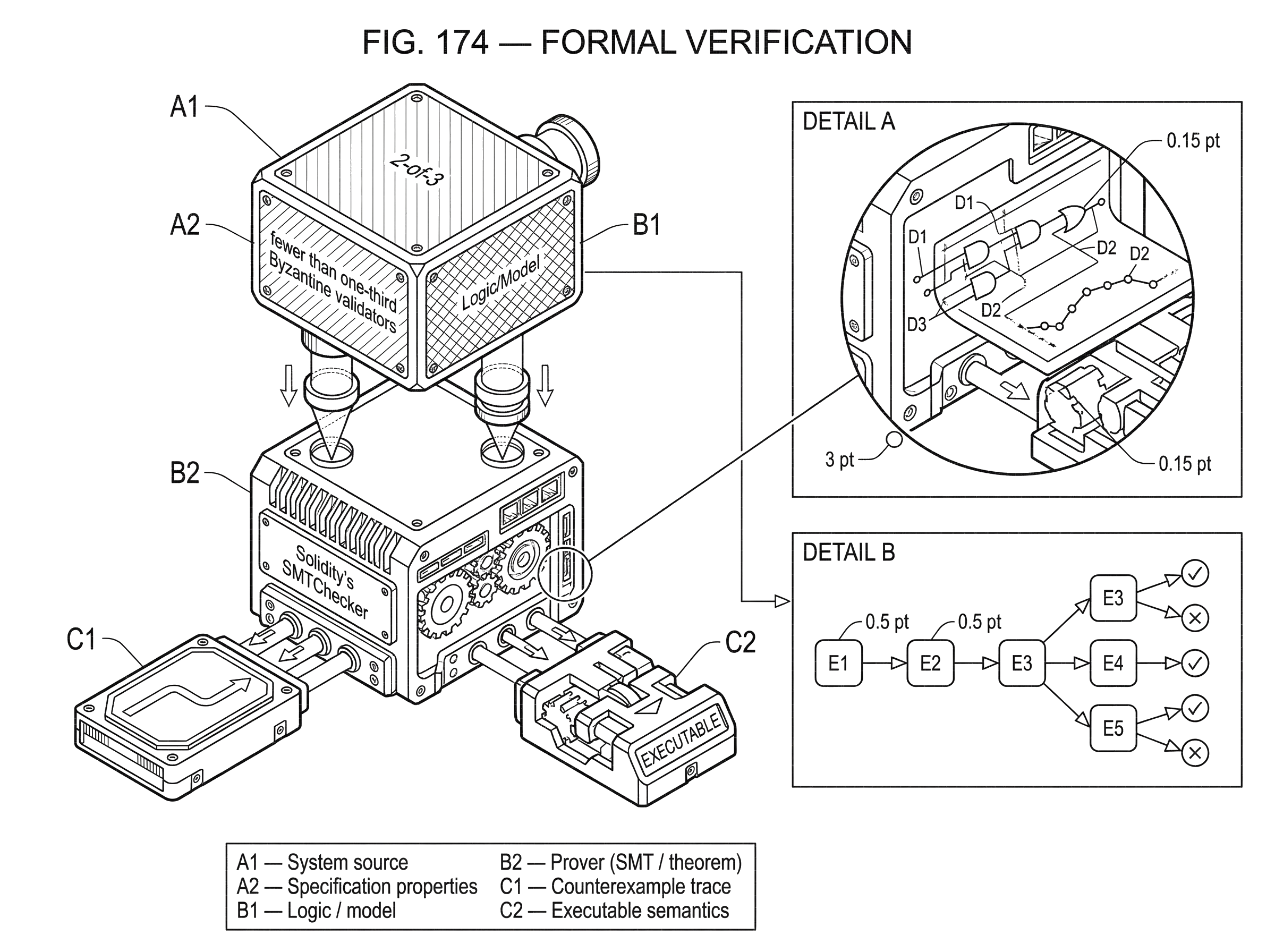

A proof always has three moving parts: a system, a specification, and a logic or model that connects them. If any of the three is vague, the result is weaker than it sounds.

The system is the artifact you care about: source code, bytecode, a protocol state machine, a hardware design, or a compiler pass. The specification is the statement of intended behavior. Sometimes that specification is high-level, such as “consensus safety holds under fewer than one-third Byzantine validators.” Sometimes it is low-level, such as “this assertion is true after every execution path through the function.” The logic or model is the mathematical representation used to reason about the system: transition systems, Hoare logic, temporal logic, type systems, SMT formulas, theorem-prover definitions, or executable semantics.

The most important misunderstanding to avoid is thinking that formal verification proves a system is “correct” in some absolute sense. It proves correctness with respect to a specification. If the specification forgets an important property, the proof may be impeccable and still fail to protect you from a real exploit. Ethereum’s developer documentation is explicit about this for smart contracts: formal verification can only check whether execution matches the formal specification. If the specification is incomplete, verification can miss vulnerabilities.

This is why experienced teams often spend as much effort clarifying the property as they do running the tool. The hard part is often not proving the statement. The hard part is deciding what statement is worth proving.

How does formal verification model allowed executions and invariants?

A useful way to picture formal verification is this: every program or protocol induces a huge space of possible executions. Inputs vary, timing varies, other actors behave differently, and internal state changes over time. Verification tries to describe that execution space precisely enough that a prover can reason about it, then establish that every path stays inside a fence defined by the specification.

Some properties are safety properties. They say that something bad never happens: no out-of-bounds array access, no arithmetic underflow, no unauthorized state transition, no two finalized blocks for the same height, no transfer without sufficient balance. Safety fits verification well because it often reduces to proving that no reachable state violates an invariant.

Other properties are liveness properties. They say that something good eventually happens: a valid transaction can eventually settle, a protocol does not deadlock, a committed operation becomes visible, a contract can eventually escape from an intermediate state. Liveness is usually harder because it depends not only on internal logic but on timing, fairness, network assumptions, and the behavior of external components.

That distinction matters in blockchains. A contract may be safe in the sense that funds cannot be stolen, yet still fail liveness if funds can become permanently stuck. A consensus protocol may guarantee that honest validators do not finalize conflicting blocks, yet only under synchrony assumptions can it guarantee eventual progress. Formal verification makes those assumptions visible instead of hiding them inside prose.
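The "funds can become permanently stuck" case can be illustrated with a small reachability check. This is a sketch over a hypothetical escrow state machine (the state names and transitions are assumptions made up for the example): no transition sends funds to a third party, so the model is safe in that sense, yet one reachable state has no way out.

```python
# Hypothetical escrow state machine: state -> states reachable in one step.
TRANSITIONS = {
    "Funded": ["Released", "Disputed"],
    "Disputed": [],   # bug: nothing ever resolves a dispute
    "Released": [],
    "Refunded": [],
}
FINAL = {"Released", "Refunded"}  # states in which funds have left the escrow


def reachable(start, transitions):
    """All states reachable from `start`, including `start` itself."""
    seen, stack = {start}, [start]
    while stack:
        for nxt in transitions[stack.pop()]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def stuck_states(start, transitions, final):
    """Reachable states from which no final state can ever be reached."""
    return sorted(
        s for s in reachable(start, transitions)
        if not (reachable(s, transitions) & final)
    )


stuck = stuck_states("Funded", TRANSITIONS, FINAL)

# Adding dispute resolution removes the stuck state.
fixed = dict(TRANSITIONS, Disputed=["Released", "Refunded"])
stuck_after_fix = stuck_states("Funded", fixed, FINAL)
print(stuck, stuck_after_fix)
```

This check only asks whether an exit exists. A full liveness argument would also need the fairness and timing assumptions mentioned above, for example that some party actually calls the resolving function.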

How is formal verification performed (model checking, theorem proving, symbolic execution)?

| Method | Automation | State support | Human effort | Best for |
|---|---|---|---|---|
| Model checking | Automated | Finite or bounded states | Low to medium | Temporal/protocol properties |
| Theorem proving | Interactive | Infinite or rich state | High | Deep correctness proofs |
| Symbolic execution | Semi-automated | Path-sensitive per function | Medium | Edge-case and path bugs |
| SMT/Horn solvers | Automated backend | Encodes constraints | Low | Automated property checks |

In practice, formal verification is not a single technique. It is a family of methods that differ mainly in what they automate, what they model, and what tradeoffs they accept.

One broad approach is model checking. Here the system is represented as a state machine, and an algorithm explores whether the specification holds across reachable states. This is good for checking temporal properties and protocol behaviors, especially when the state space is finite or can be bounded. Its main enemy is state explosion: as the number of variables, actors, or steps grows, the number of states can grow exponentially.
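As a rough illustration of explicit-state model checking, the sketch below explores a hypothetical two-process lock protocol in which checking the lock and setting it are separate steps. The protocol, state encoding, and function names are assumptions for the example. The search returns a shortest trace to a state that violates mutual exclusion.

```python
from collections import deque


def step(state):
    """Yield successor states. A state is (pc0, pc1, lock).

    Each process loops: "idle" -> (sees lock free) "ready" -> (sets lock)
    "critical" -> (releases lock) "idle". The check and the set are not atomic.
    """
    pcs, lock = state[:2], state[2]
    for i in (0, 1):
        pc = pcs[i]
        if pc == "idle" and lock == 0:
            new_pc, new_lock = "ready", lock
        elif pc == "ready":
            new_pc, new_lock = "critical", 1
        elif pc == "critical":
            new_pc, new_lock = "idle", 0
        else:
            continue  # idle while the lock is held: this process cannot move
        new_pcs = list(pcs)
        new_pcs[i] = new_pc
        yield (new_pcs[0], new_pcs[1], new_lock)


def mutual_exclusion(state):
    """Safety property: both processes are never critical at once."""
    return not (state[0] == "critical" and state[1] == "critical")


def find_violation(initial, step, safe):
    """Breadth-first search; returns a shortest trace to an unsafe state, or None."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not safe(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in step(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


trace = find_violation(("idle", "idle", 0), step, mutual_exclusion)
for s in trace:
    print(s)
```

The `parent` dictionary is also the set of visited states. Its size is what grows exponentially as processes or variables are added, which is the state explosion problem described above.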

Another approach is theorem proving. Instead of exhaustively exploring states, a theorem prover helps construct a mathematical proof from definitions and lemmas. This can handle richer systems and even infinite-state reasoning, but often needs significant human guidance. Cardano’s formal-methods materials describe this tradeoff directly: expressive theorem proving tools such as Isabelle/HOL can represent complex mathematical objects and encode significant proofs, but the effort is high enough that teams need a deliberate policy about which proofs to machine-check.

A third common approach is symbolic execution. Rather than running a program on concrete inputs, it runs on symbolic inputs and accumulates path conditions. An SMT solver then asks whether those conditions allow a bug or violate a property. This is often effective for finding edge-case bugs in smart contracts and other bounded programs, though path explosion can become severe when branching behavior is complex.
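The idea of path conditions can be shown with a toy example. In this sketch the paths through a hypothetical `withdraw(balance, amount)` routine are written out by hand, each as a list of branch conditions plus the property that should hold on that path. An exhaustive search over 8-bit values stands in for the SMT solver. A real symbolic executor derives the paths from the code and hands the conditions to a solver, so this shows the shape of the query, not an implementation of one.

```python
from itertools import product

FEE = 5  # hypothetical flat fee charged on each successful withdrawal

# Each entry: (path name, branch conditions along the path, property on that path).
PATHS = [
    ("amount too large -> revert",
     [lambda b, a: a > 100],
     lambda b, a: True),
    ("insufficient balance -> revert",
     [lambda b, a: a <= 100, lambda b, a: b < a],
     lambda b, a: True),
    ("withdrawal succeeds",
     [lambda b, a: a <= 100, lambda b, a: b >= a],
     # new balance = balance - amount - fee must not go below zero
     lambda b, a: b - a - FEE >= 0),
]


def solve(conditions, prop, domain=range(256)):
    """Find inputs that follow this path yet violate its property, else None.

    Brute force over the domain; a stand-in for a call to an SMT solver.
    """
    for balance, amount in product(domain, repeat=2):
        if all(c(balance, amount) for c in conditions) and not prop(balance, amount):
            return (balance, amount)
    return None


results = {name: solve(conds, prop) for name, conds, prop in PATHS}
for name, counterexample in results.items():
    print(name, "->", counterexample)
```

The successful path yields the counterexample `(0, 0)`: a zero balance and zero amount satisfy every branch condition, but subtracting the fee would underflow. The number of such paths grows with every branch, which is where path explosion comes from.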

Underneath many practical tools are SAT and SMT solvers. SAT asks whether a Boolean formula is satisfiable. SMT extends that idea with theories such as arithmetic, bit-vectors, arrays, and uninterpreted functions. Many automated verification tools work by translating code and properties into formulas a solver can prove or refute. If the solver finds a satisfying assignment for the negation of your property, that assignment becomes a counterexample.
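The "negate the property and look for a satisfying assignment" step can be sketched with a brute-force Boolean search. The access-control flags and both policies below are hypothetical. A real SAT solver uses far better algorithms than enumeration, but it answers the same question.

```python
from itertools import product


def find_counterexample(prop, names):
    """Search for an assignment that satisfies NOT prop. None means prop is valid."""
    for values in product([False, True], repeat=len(names)):
        env = dict(zip(names, values))
        if not prop(env):
            return env
    return None


NAMES = ["is_owner", "is_paused", "can_withdraw"]


def strict_policy(e):
    # modeled rule: withdrawal is granted exactly when the caller owns the
    # account and the contract is not paused
    return e["can_withdraw"] == (e["is_owner"] and not e["is_paused"])


def loose_policy(e):
    # faulty rule: "and" was written as "or"
    return e["can_withdraw"] == (e["is_owner"] or not e["is_paused"])


def only_owner_withdraws(policy):
    # property: whenever the policy holds, can_withdraw implies is_owner
    return lambda e: (not policy(e)) or (not e["can_withdraw"]) or e["is_owner"]


strict_result = find_counterexample(only_owner_withdraws(strict_policy), NAMES)
loose_result = find_counterexample(only_owner_withdraws(loose_policy), NAMES)
print(strict_result)
print(loose_result)
```

For the strict policy no assignment satisfies the negated property, so the property holds for every input. For the loose policy the search returns a concrete assignment in which a non-owner can withdraw, which is exactly the kind of counterexample a solver-backed tool reports.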

NIST’s work on reducing software vulnerabilities places formal methods, static analysis, assertions, contracts, and correct-by-construction techniques in the same broad direction: pushing assurance earlier into the design and implementation process rather than waiting for bugs to surface in testing or production. The common mechanism is not “more scanning.” It is making intended behavior explicit enough that tools can reason about it.

How does formal verification apply to smart contracts and DeFi invariants?

Smart contracts make the value proposition unusually clear because they are often small, public, financially consequential, and difficult to patch after deployment. That combination makes the gap between testing and proof easy to see.

Suppose a lending contract keeps a mapping of collateral balances and lets users withdraw collateral only if their position remains above a required threshold. A developer might test common flows and edge cases. But the security question is not whether the contract works on the tested flows. It is whether any reachable execution lets an account become undercollateralized while still withdrawing.

A formal specification might say that after every successful withdrawal, the user’s collateral value remains at least their debt times a safety ratio. To verify this, the tool models storage updates, arithmetic, preconditions, and call behavior. If it can prove the condition for all reachable states under the assumptions, the result is stronger than a test suite. If it cannot, it may produce a counterexample showing a sequence of values and calls that breaks the invariant.

This is close to how Solidity’s SMTChecker works. The tool treats require(...) conditions as assumptions and assert(...) conditions as properties to prove. It translates the relevant behavior into SMT or Horn-clause problems and asks solvers to prove that the assertions always hold. If a proof fails, it can emit a counterexample. It also targets concrete bug classes such as arithmetic errors, division by zero, out-of-bounds access, and insufficient funds conditions.

The design choice behind this is important. By writing assumptions as require and invariants as assert, the developer is not just documenting intent for humans. They are exposing intent in a form a prover can consume. That is a recurring theme in formal verification: the proof becomes possible when the intended behavior is made precise enough to be machine-checked.
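The split between assumptions and proof goals can be mimicked in a few lines. This is a sketch of the lending example, not Solidity and not the SMTChecker's algorithm: `require` is modeled as an exception that discards the call, the assert-style goal is checked after every call that succeeds, and a bounded search stands in for the solver. The 150% ratio, the function names, and the bound are assumptions.

```python
class Revert(Exception):
    """Raised when a require-style precondition fails; the call has no effect."""


def require(cond):
    if not cond:
        raise Revert()


def healthy(collateral, debt):
    # assumed 150% collateral ratio, in integers: collateral / debt >= 3/2
    return 2 * collateral >= 3 * debt


def withdraw_buggy(collateral, debt, amount):
    require(amount <= collateral)
    require(healthy(collateral, debt))            # health checked BEFORE withdrawing
    return collateral - amount


def withdraw_fixed(collateral, debt, amount):
    require(amount <= collateral)
    require(healthy(collateral - amount, debt))   # health checked AFTER withdrawing
    return collateral - amount


def check(withdraw, bound=20):
    """Return the first (collateral, debt, amount) that breaks the goal, else None."""
    for collateral in range(bound):
        for debt in range(bound):
            for amount in range(bound):
                try:
                    remaining = withdraw(collateral, debt, amount)
                except Revert:
                    continue  # reverted calls fall outside the property
                if not healthy(remaining, debt):  # the assert-style proof goal
                    return (collateral, debt, amount)
    return None


buggy_result = check(withdraw_buggy)
fixed_result = check(withdraw_fixed)
print(buggy_result, fixed_result)
```

The buggy version yields the counterexample `(2, 1, 1)`: the position is healthy before the withdrawal and undercollateralized after it. The fixed version has no counterexample within the bound, which is weaker than a proof. A solver-backed tool would aim to establish the same goal for all values.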

The same idea scales beyond Ethereum. A UTxO-based contract can be modeled as a constrained state transition system over inputs, outputs, and validator conditions. Cardano’s tooling work reflects this by formalizing ledger assumptions and execution semantics in theorem-proving environments, so that contract properties can be stated against the actual transaction model rather than vague prose.

Why do executable semantics and compiler semantics matter for verification?

| Level | Trust boundary | Verified translation? | Practical assurance | Main risk |
|---|---|---|---|---|
| Source | Models source meaning | No | Moderate assurance | Compiler changes behavior |
| Intermediate | Closer to generated code | Sometimes | Higher assurance | Translation bugs possible |
| Bytecode | Matches deployed artifact | No | Strong assurance | VM quirks matter |
| VM semantics | Executable VM model | Yes if validated | Strong end-to-end | Model incompleteness |
| Verified compiler | Proves preservation | Yes | High end-to-end assurance | Verifier scope limits |

There is a subtle problem lurking behind every proof about code: what, exactly, does the code mean? If your proof talks about a source-language model but the deployed system runs bytecode on a virtual machine, then your assurance depends on the gap between those layers.

That is why executable formal semantics matter. Projects such as KEVM model the Ethereum Virtual Machine itself in the K framework, providing a mathematical account of EVM execution that can be used for symbolic reasoning, proofs, and conformance testing. The point of such a semantics is not academic elegance for its own sake. It is to reduce ambiguity about the machine the contract actually runs on.

The same issue appears in compiler verification. Suppose you verify a C program against its source semantics, then compile it with an ordinary compiler. If the compiler has a bug that changes the program’s meaning, your source-level proof no longer gives the guarantee you thought it did. CompCert addresses exactly this trust gap by providing a machine-checked proof that generated code preserves the semantics of the source program. That does not verify the application itself, but it removes a major source of uncertainty from the toolchain.

This is a general lesson: the closer the proof is to the real execution semantics, the stronger the end-to-end assurance. The farther away it is, the more trusted translation steps sit in the middle.
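Semantic preservation can be made concrete with a toy compiler. The sketch below defines a source semantics for a tiny expression language, a stack-machine target semantics, and two hypothetical compilers, one of which emits operands in the wrong order. It then compares source and target results for every expression up to a small depth. This is bounded checking for illustration only. A verified compiler such as CompCert instead carries a machine-checked proof that covers all programs.

```python
from itertools import product


def evaluate(expr):
    """Source semantics. Expressions are ("num", n) or (op, left, right)."""
    if expr[0] == "num":
        return expr[1]
    left, right = evaluate(expr[1]), evaluate(expr[2])
    return left + right if expr[0] == "add" else left - right


def compile_expr(expr):
    """Translate to stack code: left operand, right operand, then the operator."""
    if expr[0] == "num":
        return [("push", expr[1])]
    return compile_expr(expr[1]) + compile_expr(expr[2]) + [(expr[0],)]


def compile_buggy(expr):
    """Like compile_expr, but emits the operands in the wrong order."""
    if expr[0] == "num":
        return [("push", expr[1])]
    return compile_buggy(expr[2]) + compile_buggy(expr[1]) + [(expr[0],)]


def run(code):
    """Target semantics: a stack machine with push, add, and sub."""
    stack = []
    for instr in code:
        if instr[0] == "push":
            stack.append(instr[1])
        else:
            right, left = stack.pop(), stack.pop()
            stack.append(left + right if instr[0] == "add" else left - right)
    return stack.pop()


def expressions(depth):
    """All expressions over the leaves 0, 1, 2 up to the given nesting depth."""
    if depth == 0:
        return [("num", n) for n in (0, 1, 2)]
    smaller = expressions(depth - 1)
    return smaller + [
        (op, a, b) for op in ("add", "sub") for a, b in product(smaller, repeat=2)
    ]


def first_mismatch(compiler, depth=2):
    """First expression whose compiled result differs from its source meaning."""
    for expr in expressions(depth):
        if run(compiler(expr)) != evaluate(expr):
            return expr
    return None


good = first_mismatch(compile_expr)
bad = first_mismatch(compile_buggy)
print(good, bad)
```

The buggy compiler passes every addition case because addition is commutative, and only fails once subtraction is involved. A source-level proof about an expression such as `0 - 1` would say nothing reliable about what that compiler's output computes.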

How is formal verification used for consensus protocols and distributed systems?

People often first encounter formal verification through code, but many of its most important uses are about protocols. Consensus is the clearest case. A consensus protocol is really a distributed state machine running under adversarial conditions, timing assumptions, and fault thresholds. The key properties are rarely about line-by-line implementation behavior alone. They are about global invariants such as agreement, validity, and liveness.

Tendermint’s specification, for example, states safety and liveness claims under assumptions including partial synchrony and fewer than one-third Byzantine voting power. Even when those proofs are initially informal, they identify the real structure a formal model must capture: rounds, proposals, prevotes, precommits, locking rules, and the threshold arithmetic behind finality. The point of formalization is to make sure those claims survive exact reasoning instead of intuition.
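The threshold arithmetic can be written out as a short derivation. As a simplifying assumption, take $n$ equally weighted validators, of which $f < n/3$ are Byzantine, and call any set of more than $2n/3$ validators a quorum. The symbols $n$, $f$, $Q_1$, and $Q_2$ are introduced here for illustration. Then any two quorums $Q_1$ and $Q_2$ overlap in more than $f$ validators:

```latex
\begin{aligned}
|Q_1 \cap Q_2| &\ge |Q_1| + |Q_2| - n \\
               &> \tfrac{2n}{3} + \tfrac{2n}{3} - n \\
               &= \tfrac{n}{3} > f
\end{aligned}
```

Because the overlap is larger than the number of Byzantine validators, at least one honest validator belongs to both quorums. That is the counting fact the agreement argument rests on. The locking rules then have to ensure that this honest validator never supports two conflicting blocks, and that part is what a formal model must capture precisely.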

This is where formal verification helps security at the architecture level. It forces a protocol to say what counts as an honest participant, what network conditions are assumed, what threshold is tolerated, and which guarantees are safety versus liveness. Many failures in distributed systems come not from a bad local function, but from a gap between the informal story and the actual state machine.

How should teams combine formal verification with testing, audits, and runtime controls?

| Control | Primary goal | Strength | Typical cost | Best used with |
|---|---|---|---|---|
| Formal verification | Mathematical guarantees | Eliminates bug classes | High | Testing, audits, monitoring |
| Testing | Find concrete failures | Practical bug finding | Low | Formal specs, CI |
| Audits | Human logic review | Business-logic checks | Medium | Formal methods, testing |
| Runtime monitoring | Detect failures live | Limit damage | Low | Incident response |
| Threshold crypto | Reduce key compromise | Architectural mitigation | Medium | Formal verification |

Formal verification is strongest when used as part of a layered assurance strategy. It is not a replacement for testing, audits, runtime monitoring, code review, or operational safeguards. It changes the shape of the residual risk.

Testing is still valuable because it catches integration mistakes, environment mismatches, and specification misunderstandings quickly. Audits remain useful because humans notice business-logic surprises, threat-model gaps, and assumptions that were never formalized. Runtime controls matter because even a verified component may be embedded in a larger unverified system. Off-chain services, upgrade keys, governance processes, front ends, and event relayers can still fail.

Cross-chain bridge security makes this limit obvious. Many bridge losses come from permission errors, logic flaws, event handling mistakes, and off-chain components interpreting on-chain events incorrectly. A formally verified on-chain contract can reduce some of those risks, especially around permissions and invariants, but it does not automatically secure the relayer, signer set, frontend, or trust model.

This is also where threshold cryptography becomes a practical complement to formal methods. In decentralized settlement systems, the goal is often to make a dangerous power impossible to exercise unilaterally. Cube Exchange, for example, uses a 2-of-3 threshold signature scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share; no full private key is ever assembled in one place; and two of the three shares are required to authorize a settlement. Formal verification can help prove properties about the settlement logic or protocol state machine, while threshold signing reduces key-compromise risk at the architectural level. These are different controls solving different failure modes.

How can formal verification give a false sense of security?

The most dangerous misconception around formal verification is that a successful proof means a system is safe in the ordinary sense. Often it means something narrower and more precise: within this model, under these assumptions, the implementation satisfies these properties.

If the specification omits a needed property, the proof cannot save you. If the model treats an external call too abstractly, you may miss behavior that matters in production. Solidity’s SMTChecker documentation is candid here: external calls are treated as calls to unknown code by default, and certain trusted-call modes can become computationally costly or unsound if misused. That is not a defect of the idea. It is what honest tooling looks like when it tells you where the abstraction boundary is.

Likewise, some properties are fundamentally hard or undecidable in full generality. Tools respond by bounding the problem, using abstractions, or focusing on a restricted property class. This can introduce false positives, where the tool warns about a bug that is impossible in the concrete program, or leave some features unsupported. Ethereum’s documentation notes performance and decidability limits explicitly; NIST’s software-vulnerability report notes that model checkers and related decision procedures can be powerful, but face exponential growth as problem size increases.
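A false positive caused by abstraction is easy to reproduce. The sketch below uses a naive interval abstraction (an assumption chosen for illustration, not the method of any tool named above) to analyze the denominator `x*x + 1` for `x` between -5 and 5. The abstraction forgets that both factors are the same variable, so it cannot exclude zero, even though the concrete expression is always at least 1.

```python
def interval_mul(a, b):
    """Product of two intervals (lo, hi), treating the operands as independent."""
    products = [a[0] * b[0], a[0] * b[1], a[1] * b[0], a[1] * b[1]]
    return (min(products), max(products))


def interval_add(a, b):
    """Sum of two intervals (lo, hi)."""
    return (a[0] + b[0], a[1] + b[1])


x = (-5, 5)

# Abstract value of x*x + 1: the analysis does not track that both factors are x.
denominator = interval_add(interval_mul(x, x), (1, 1))
abstract_warning = denominator[0] <= 0 <= denominator[1]

# Concrete check over the same range: x*x + 1 is never zero.
concrete_zero_possible = any(v * v + 1 == 0 for v in range(-5, 6))

print(denominator, abstract_warning, concrete_zero_possible)
```

The analysis reports a possible division by zero because its interval for the denominator is `(-24, 26)`. The warning is sound but spurious. A more precise abstraction would remove it, at the cost of more computation.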

There is also the human cost. Semi-automated theorem proving can require large amounts of expert effort. Cardano’s discussion cites the seL4 microkernel as an illustration of how expensive deep verification can be for critical infrastructure: the verification artifact can be far larger than the code being verified. That does not make the effort irrational. It means verification intensity should be matched to system criticality.

Which problems and properties is formal verification best suited for?

Formal verification shines where the property is crisp, the consequence of failure is large, and the state machine can be modeled with reasonable fidelity. Permission invariants are a classic example: only specific principals may trigger specific transitions. Arithmetic and accounting invariants are another: balances conserve value, liabilities do not exceed collateral, settlement can only move assets according to defined rules. Compiler correctness, instruction semantics, and protocol safety also fit well because they can be stated in precise mathematical terms.

It is often particularly effective at eliminating classes of bugs rather than isolated defects. If you can prove that every array access is in bounds, or that every compiled program preserves source semantics, or that no reachable contract state violates a conservation invariant, you have not just fixed one instance. You have ruled out an entire family of failures.

This is why high-assurance systems often invest in verified kernels, verified compilers, or verified cryptographic components even when the rest of the stack remains more conventional. Reducing uncertainty in foundational layers can improve the assurance of everything built above them.

Which parts of the verification workflow are engineering choices vs. fundamentals?

Not every part of a verification workflow is fundamental. Some parts are conventions chosen for practicality.

Whether you specify properties as assertions in source code, as temporal formulas over a state machine, or as lemmas in a theorem prover is partly a tooling decision. Whether you verify source code, an intermediate representation, or bytecode depends on where you want the trust boundary. Whether you use model checking, SMT-based automation, interactive proving, or a combination depends on the shape of the system and the budget for human effort.

What is fundamental is simpler: there must be a mathematically precise statement of intended behavior, a model of the system’s behavior, and a sound enough reasoning process connecting the two. Everything else is a way of making that triangle usable in real engineering.

Conclusion

Formal verification is mathematical assurance about specified behavior, not just careful testing and not a blanket guarantee of safety. Its value comes from targeting all modeled executions rather than sampled cases, which is why it can eliminate whole classes of bugs in high-stakes systems.

Its limit is equally important: a proof only covers the specification, assumptions, and semantics you actually gave it. Remember the durable version this way: formal verification is strongest when the property is precise, the model is faithful, and the remaining trust gaps are made explicit rather than ignored.

How do you secure your crypto setup before trading?

Secure your crypto setup by combining clear key hygiene, confirmation checks, and cautious transfer habits before you trade. Cube Exchange uses a non‑custodial MPC model and a 2‑of‑3 threshold signature pattern in settlement; use the checklist below to align on key recovery, funding, and execution steps before moving assets.

- Fund your Cube account with fiat or a supported crypto transfer on the correct network (select the chain explicitly, e.g., ETH vs. Polygon).

- Verify your key and recovery posture: confirm the MPC/2‑of‑3 arrangement (your share, Cube’s share, Guardian Network share) and record an offline backup or hardware‑wallet backup of your user share.

- Check chain finality and confirmations for the asset before trading or withdrawing (for example, wait ~12 confirmations on many L1s or a chain’s documented checkpoint threshold) and only act after that threshold is met.

- For transfers or withdrawals, send a small test amount first and double‑check the destination address by comparing the first and last characters and re‑pasting; for trading, pick a limit order when you need price control or a market order for immediate execution and review estimated fees.

Frequently Asked Questions

Testing observes examples of behavior; formal verification expresses behavior mathematically and attempts to prove that no execution allowed by the model violates the desired properties, so proofs aim at absence of bugs across the whole modeled state space rather than presence in sampled runs.

A proof needs three pieces: the system (what is being analyzed, e.g., source, bytecode, or protocol), the specification (the precise property you want to hold), and a logic or model that connects them (transition systems, Hoare/temporal logic, SMT encodings, etc.); if any piece is vague the assurance is correspondingly weaker.

No - formal verification proves correctness only relative to the formal specification, model, and assumptions provided; a flawless proof of an incomplete or wrong specification can still miss real vulnerabilities.

Because proofs reason about program meaning, the closer the formal model is to the real execution semantics (for example, verifying bytecode or the VM semantics with tools like KEVM, or using verified compilers like CompCert), the fewer trusted translation steps remain and the stronger the end‑to‑end guarantee.

Safety properties (something bad never happens) are usually amenable to invariant proofs and suit many verification techniques, while liveness properties (something good eventually happens) are harder because they depend on timing, fairness, and environment assumptions and therefore require richer models and assumptions.

Common practical techniques include model checking (good for finite/bounded state and temporal claims but faces state explosion), interactive theorem proving (expressive and can handle infinite-state reasoning but needs substantial human effort), and symbolic execution/SMT-based analysis (effective for bounded programs and edge cases but subject to path explosion); SAT/SMT solvers are at the heart of many automated approaches.

Formal verification is best seen as one layer in defense‑in‑depth: it reduces residual risk for the verified properties but does not replace testing, audits, runtime monitoring, or architectural controls (for example, bridges still require secure relayers and threshold signatures complement verification by reducing key‑compromise risk).

The most common failure mode is proving the wrong thing: a proof can be mathematically correct yet irrelevant if the specification omits important behaviors, models external calls too abstractly, or assumes environmental conditions that do not hold in production.

Scalability and decidability limits mean tools must bound or abstract problems; that leads to state/path explosion, undecidable properties, conservative abstractions, and sometimes false positives or unsupported features - for example, Solidity’s SMTChecker uses abstractions for some EVM features and warns about performance and decidability limits.

Formal verification gives the most value when the property is crisp, the consequence of failure is large, and the system can be modeled faithfully - classic cases include permission invariants, arithmetic/accounting conservation, compiler correctness, and protocol safety where ruling out entire classes of bugs is worth the verification cost.
