What is a Data Feed?

Learn what a data feed is in blockchain systems, how oracle feeds work, why push vs. pull matters, and where price and data feeds can fail.

Introduction

A data feed is the mechanism by which a blockchain receives an external value in a form smart contracts can actually use. That may sound narrow, but a surprising amount of onchain finance depends on it. A lending market cannot decide whether collateral is sufficient unless it has a price. A synthetic asset cannot track an external benchmark unless it can observe that benchmark. A derivatives protocol cannot settle fairly unless it knows something about the world beyond the chain.

This is the basic puzzle: blockchains are valuable precisely because they are self-contained and deterministic, but useful applications often depend on facts that are not on the blockchain. A smart contract can verify its own state, transaction inputs, and prior history. It cannot natively know the BTC/USD price, whether a reserve account still holds backing assets, or what an interest rate is on some external venue. If that information matters, it has to be brought in somehow.

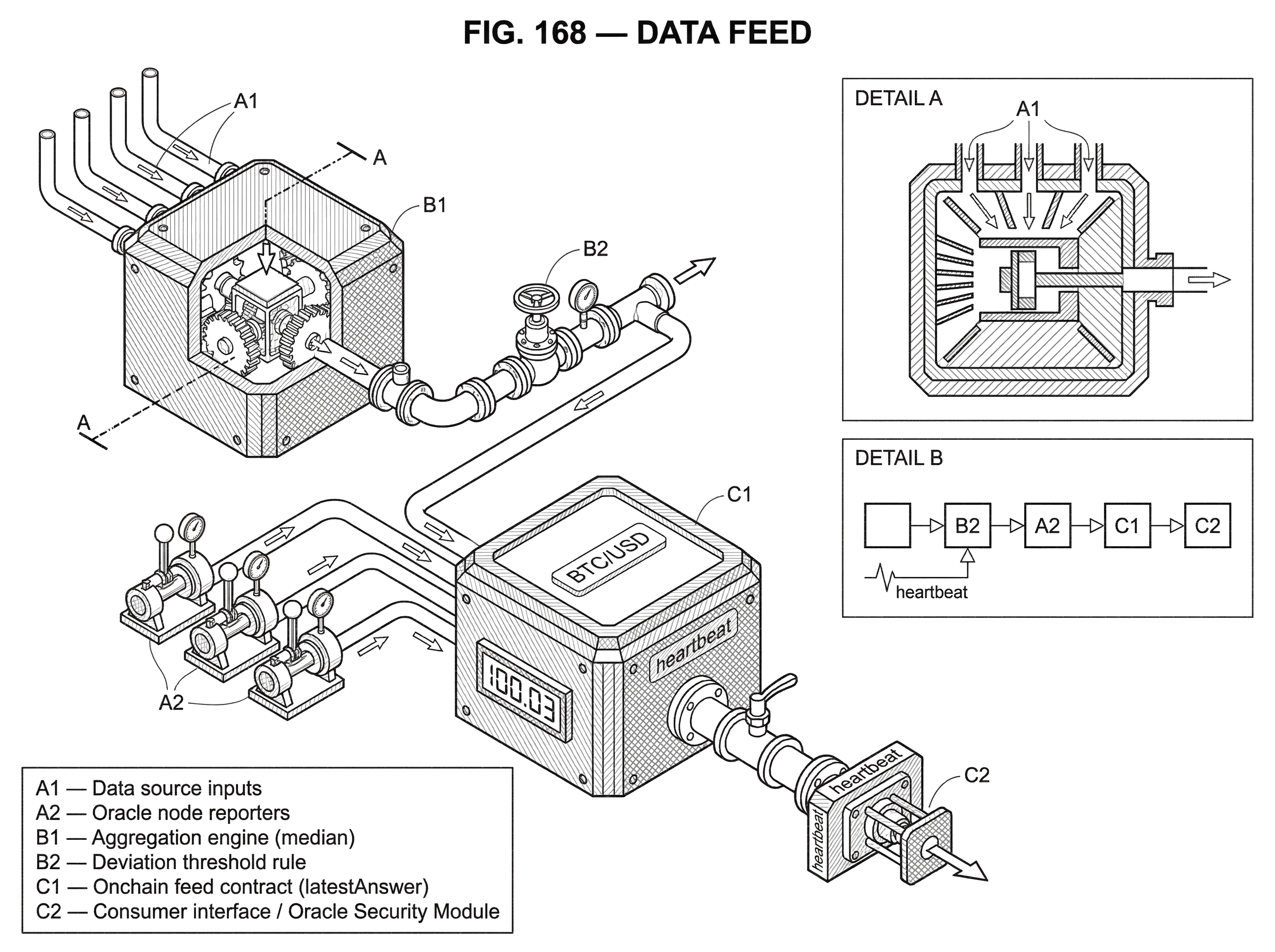

That “somehow” is the role of an oracle, and a data feed is the concrete output of that oracle system: a stream of values made available to smart contracts. In practice, people often use “oracle” and “data feed” loosely, but the distinction is useful. The oracle is the broader bridge between onchain and offchain worlds. The data feed is the published result that contracts read.

The important thing to notice is that a data feed is not just a number. It is a process. It includes where the data comes from, how multiple inputs are combined, when updates are published, what happens when the source data disagrees, and what assumptions consumers are making when they trust it. Most of the real engineering lives there.

Why do blockchains need external data feeds?

Smart contracts run in a closed environment. They only have direct access to data already onchain or included in the transaction that calls them. That restriction is not an accident. It is what allows every node in the network to replay the same computation and arrive at the same result. If a contract could freely query the internet during execution, different nodes might see different answers, and consensus would break.

So the design problem is constrained from the start. External data cannot simply be fetched ad hoc by the contract itself. Instead, some external system must observe the outside world, package the result in a blockchain-compatible form, and publish it so contracts can consume it deterministically.

That is what a data feed solves. It turns “what is the current ETH/USD price?” from an offchain question into an onchain state variable that contracts can read. The contract is still deterministic because it is not browsing the web; it is reading chain state. The non-deterministic work happened elsewhere, before the value reached the chain.

This is why data feeds are foundational to onchain finance rather than a peripheral add-on. Once a protocol needs any real-world reference point, the feed becomes part of the protocol’s security boundary. If the feed is wrong, the protocol may behave correctly according to its code and still produce a disastrous outcome. A liquidation engine that uses a bad price can liquidate healthy positions. A borrowing market can accept insufficient collateral. Because blockchain execution is automated and usually irreversible, feed failures are often not mere data-quality issues; they are loss events.

What components make up a blockchain data feed?

The cleanest way to think about a data feed is as a pipeline that preserves a single invariant: many messy observations from outside the chain must become one sufficiently trustworthy value onchain. Everything in feed design follows from that compression step.

Outside the chain, the world is noisy. Different exchanges quote slightly different prices. APIs can lag or fail. Some sources are thinly traded and easy to manipulate. Some assets trade around the clock, others in sessions. Even apparently simple quantities like “the price of ETH” are not primitive facts. They are measurements constructed from venues, timestamps, and methodology choices.

A feed system therefore has to do more than relay. It usually selects sources, gathers observations, filters bad or stale inputs, aggregates the remaining values, and then decides whether the result is important enough to publish onchain. In decentralized designs, that work is often performed by multiple independent node operators rather than a single publisher, so that no one party is the sole point of failure.
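The filter-then-aggregate step described above can be sketched in a few lines. This is a minimal illustration, not any oracle network's implementation: the `Observation` type, the `aggregate` function, the venue names, and the `max_age_s` and `min_sources` parameters are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional


@dataclass(frozen=True)
class Observation:
    source: str        # hypothetical venue or API name
    price: float       # quoted price in USD
    observed_at: int   # unix timestamp of the quote, in seconds


def aggregate(observations: List[Observation], now: int,
              max_age_s: int = 60, min_sources: int = 3) -> Optional[float]:
    """Drop stale or non-positive quotes, then take the median of the rest.

    Returns None when too few usable sources remain, so the caller can
    decline to publish rather than publish a weakly supported value.
    """
    fresh = [
        o.price for o in observations
        if o.price > 0 and now - o.observed_at <= max_age_s
    ]
    if len(fresh) < min_sources:
        return None
    return median(fresh)


now = 1_700_000_000
quotes = [
    Observation("venue_a", 2001.0, now - 5),
    Observation("venue_b", 1999.0, now - 12),
    Observation("venue_c", 2000.0, now - 30),
    Observation("venue_d", 1500.0, now - 900),  # stale quote, filtered out
]
value = aggregate(quotes, now)
too_few = aggregate(quotes[:2], now)
```

Returning `None` instead of a number when too few sources survive is a deliberate choice in this sketch: it separates "no trustworthy value right now" from "the value is X", which is the decision the publishing step has to make.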

Once onchain, the feed also needs a consumer interface. Smart contracts need to know not just the latest answer, but often whether it is fresh enough, whether it is considered valid, and what assumptions attach to it. MakerDAO’s oracle module makes this visible in a different style than Chainlink’s feed contracts: there is a data production layer, an aggregation layer, and then a consumption layer with its own timing and permission rules. The pattern is general even when implementations differ.

So when someone says “use a data feed,” what they really mean is: trust this end-to-end mechanism to transform offchain observations into an onchain reference value without introducing unacceptable error, latency, cost, or manipulation risk.

How is a market price turned into an onchain reference?

Suppose a lending protocol wants to accept wrapped bitcoin as collateral on a blockchain where BTC itself does not live. The protocol needs a BTC/USD value so it can compute whether a borrower remains safely collateralized.

The obvious but flawed design would be to let the protocol read the price from a single exchange API. That seems simple, but it makes one venue, one API, and one publisher the entire trust anchor. If the API fails, the feed stops. If the exchange experiences a dislocation, the protocol inherits it. If the publisher is compromised, the protocol can be fed arbitrary numbers.

A more robust feed starts by observing multiple market venues. Independent operators collect recent BTC/USD observations from several reliable sources. Those observations are compared and aggregated so that an outlier from one venue does not dominate the result. In some systems the aggregate is a median, because the median has a useful property: as long as more than half of the observations are honest, faulty values cannot drag the result outside the range spanned by the honest ones. Chainlink’s Off-Chain Reporting design uses this logic when forming reports from multiple oracle observations. Maker’s Median contract follows the same broad intuition in a different architecture by computing a median across authorized price submissions.
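A small numeric sketch makes the median property concrete. The prices below are invented for illustration; five honest reports are mixed with two arbitrary faulty ones, and the median is compared with the mean.

```python
from statistics import median

# Hypothetical BTC/USD reports: five honest values and two faulty ones.
honest = [64_980.0, 65_000.0, 65_010.0, 65_020.0, 65_050.0]
faulty = [1.0, 1_000_000.0]

reports = honest + faulty
agg_median = median(reports)
agg_mean = sum(reports) / len(reports)

# The median stays inside the honest range; the mean does not.
median_in_honest_range = min(honest) <= agg_median <= max(honest)
mean_in_honest_range = min(honest) <= agg_mean <= max(honest)
```

With these inputs the median lands on one of the honest reports, while the mean is pulled far above every honest value by a single extreme report.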

But producing an aggregate offchain is still not enough. The system then has to decide when to write a new answer onchain. Publishing every tiny movement would be expensive and unnecessary. Publishing too rarely would leave consumers with stale values. So feed operators typically use trigger rules. A common rule is a deviation threshold: post a new onchain value only when the current aggregate differs from the last onchain answer by more than some configured percentage. A second common rule is a heartbeat: even if the price has not moved much, force an update after some maximum time interval so that consumers are not left relying on an old value indefinitely.
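The two trigger rules can be written as a single decision function. This is a sketch under stated assumptions: the function name `should_publish`, the 50 basis point threshold, and the one-hour heartbeat are illustrative defaults, not parameters of any particular feed.

```python
def should_publish(last_value: float, last_published_at: int,
                   new_value: float, now: int,
                   deviation_bps: float = 50, heartbeat_s: int = 3600) -> bool:
    """Return True if either the heartbeat or the deviation rule fires."""
    # Heartbeat: the maximum allowed interval since the last onchain write has elapsed.
    if now - last_published_at >= heartbeat_s:
        return True
    # Deviation: relative move versus the last onchain answer, in basis points.
    moved_bps = abs(new_value - last_value) / last_value * 10_000
    return moved_bps > deviation_bps


small_move = should_publish(100.0, 0, 100.03, now=60)    # about 3 bps, no write
large_move = should_publish(100.0, 0, 103.0, now=60)     # about 300 bps, write
quiet_hour = should_publish(100.0, 0, 100.0, now=3600)   # heartbeat, write
```

Checking the heartbeat first means a long-silent feed refreshes even when the price has not moved at all.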

Now the lending protocol reads the onchain feed. If BTC falls sharply and the deviation threshold is crossed, a fresh answer is published and liquidation logic can react. If BTC trades sideways for a long period, the heartbeat still refreshes the answer so consumers know the feed is alive and not simply stuck. The value the protocol sees is not “the true price” in any metaphysical sense. It is a carefully constructed reference price with explicit update rules and implicit trust assumptions.

That distinction matters. A good feed gives protocols a price that is fit for decision-making, not a magical ground truth.

Push vs pull data feeds: when to publish onchain or fetch on demand

| Model | Onchain availability | Typical cost | Latency | Best for |
|---|---|---|---|---|
| Push | Always available | Continuous gas cost | Low (synchronous) | Shared reference prices |
| Pull | Fetched on demand | Pay‑per‑use cost | Potentially higher | Low‑cost high‑frequency |

The biggest architectural choice in feed design is not usually about math. It is about timing. There are two broad ways to deliver data: push-based and pull-based.

In a push-based feed, the oracle network publishes updates to the blockchain proactively. The value is already onchain before any consuming contract asks for it. This is the model many DeFi users implicitly expect because it feels like shared public infrastructure: a lending protocol, DEX, and derivatives platform can all read the same onchain reference value. Chainlink Data Feeds are the clearest mainstream example. They publish on a schedule such as a heartbeat, on significant change such as a deviation threshold breach, or both.

The mechanism is straightforward. Offchain observers keep tracking the external value. When the update condition is met, they produce and transmit a new report, and an onchain contract stores the latest answer. Consumers then read that answer cheaply and synchronously as part of their own transactions.
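As a rough model of that flow, the sketch below uses a Python class as a stand-in for the onchain contract. The `PushFeed` class, its method names, and the transmitter identifiers are hypothetical; real feed contracts verify signed reports rather than a simple sender check.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Answer:
    value: int        # price as a scaled integer, e.g. 8 decimal places
    updated_at: int   # unix timestamp of the write


class PushFeed:
    """Toy stand-in for an onchain feed contract (all names hypothetical)."""

    def __init__(self, transmitters: Set[str]):
        self._transmitters = transmitters
        self._latest: Optional[Answer] = None

    def transmit(self, sender: str, value: int, now: int) -> None:
        # Only an authorized transmitter may overwrite the stored answer.
        if sender not in self._transmitters:
            raise PermissionError("sender is not an authorized transmitter")
        self._latest = Answer(value, now)

    def latest(self) -> Answer:
        # Consumers read stored state; they never trigger an update.
        if self._latest is None:
            raise LookupError("no answer has been published yet")
        return self._latest


feed = PushFeed({"transmitter_1"})
feed.transmit("transmitter_1", 6_500_000_000_000, now=1_700_000_000)

# Two unrelated consumers read the same stored answer.
lending_view = feed.latest()
dex_view = feed.latest()
```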

The advantage is availability. If a contract needs the latest published value during execution, it can just read it. The cost is that someone has to keep writing updates onchain even when no specific user is asking at that moment. On a busy or expensive chain, that can become costly.

In a pull-based feed, the high-frequency observation and aggregation may still happen offchain, but the data is brought onchain only when needed. The smart contract or its caller effectively says: fetch the current data now and make it available for this use. Chainlink Data Streams and Pyth’s support for pull-style updates point in this direction, though the exact product mechanics differ.

The tradeoff is almost the mirror image of push. Pull models can support very fast offchain refresh rates without paying continuous onchain posting costs, because only the values that matter to actual users get settled onchain. That is attractive for applications like low-latency trading or perpetuals where market data changes quickly and only some updates need to be materialized onchain. But the burden shifts to the consumer path: the data is no longer guaranteed to be onchain ahead of time. The user flow or application architecture has to incorporate fetching and proving the relevant update when needed.
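The "fetch and prove" step can be sketched as a function that accepts an update only if it is authentic and recent. Everything here is an assumption for illustration: the message layout, the function names, and especially the use of an HMAC with a shared key, which stands in for the public-key signature or proof verification a real pull oracle would perform.

```python
import hashlib
import hmac

# Stand-in secret for the demo. Real systems verify public-key signatures
# from the oracle network instead of sharing a key with the consumer.
ORACLE_KEY = b"demo-key"


def sign_update(feed_id: str, value: int, timestamp: int) -> bytes:
    """Produce the attestation that travels with an offchain update."""
    message = f"{feed_id}|{value}|{timestamp}".encode()
    return hmac.new(ORACLE_KEY, message, hashlib.sha256).digest()


def consume_update(feed_id: str, value: int, timestamp: int,
                   signature: bytes, now: int, max_age_s: int = 30) -> int:
    """Accept the value only if it is authentic and fresh enough."""
    expected = sign_update(feed_id, value, timestamp)
    if not hmac.compare_digest(expected, signature):
        raise ValueError("update was not attested by the oracle network")
    if now - timestamp > max_age_s:
        raise ValueError("update is too old for this transaction")
    return value


# The caller fetches a signed update offchain and submits it with the transaction.
sig = sign_update("BTC/USD", 6_500_000_000_000, 1_700_000_000)
used = consume_update("BTC/USD", 6_500_000_000_000, 1_700_000_000,
                      sig, now=1_700_000_010)
```

The freshness check matters as much as the authenticity check: without it, a caller could submit an old but validly attested price that happens to favor them.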

There is no universally correct choice. The right model depends on what the consuming protocol needs most: always-available shared state, or lower cost with more selective publication.

How do update policies (heartbeats, thresholds) affect feed reliability?

| Policy | Trigger | Effect on writes | Benefit | Mitigates |
|---|---|---|---|---|
| Deviation | Percent change threshold | Reduces writes | Avoids trivial updates | Noise‑induced churn |
| Heartbeat | Maximum time interval | Regular writes | Bounds staleness | Silent outages |
| Hysteresis | Persistence or margin | Fewer oscillations | Prevents flapping | Trigger oscillation |

People new to data feeds often focus on source quality and stop there. Source quality matters, but update policy is equally important because a feed is a time-dependent object. A perfectly sourced price that updates too slowly can still be dangerous. A very responsive price that updates on every tiny fluctuation can become prohibitively expensive or noisy.

Deviation thresholds exist because not all movement deserves an onchain write. If the last onchain price is 100 and the new aggregate is 100.03, writing an update may add little decision value while consuming gas and creating extra state churn. But if the price moves from 100 to 103, that may materially affect collateralization and risk checks. The threshold formalizes that distinction.

Heartbeats exist because low volatility and failure can look similar from the outside. If a feed has not updated in hours, is that because the market is calm or because publishers are stuck? A heartbeat breaks that ambiguity by forcing periodic refreshes even when the value has barely moved. This gives consumers a bounded staleness assumption.

Real systems also have to handle edge behavior near the threshold. If the value hovers around the trigger point, the feed may oscillate between update and no-update states, causing repeated unnecessary posts. A common mitigation is hysteresis, which requires the value to move past the threshold by some margin or remain there for some duration before the trigger resets. The idea is simple: do not let the system flap because the underlying signal is jittery.
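One way to express the "remain there for some duration" variant is a trigger that fires only after the deviation has stayed above the threshold for several consecutive observations. The class name, the 50 basis point threshold, and the three-tick requirement below are assumptions chosen for the example.

```python
class PersistentDeviationTrigger:
    """Fire only after the deviation exceeds the threshold for N ticks in a row."""

    def __init__(self, threshold_bps: float, required_ticks: int):
        self.threshold_bps = threshold_bps
        self.required_ticks = required_ticks
        self._streak = 0

    def observe(self, last_published: float, candidate: float) -> bool:
        moved_bps = abs(candidate - last_published) / last_published * 10_000
        if moved_bps > self.threshold_bps:
            self._streak += 1
        else:
            self._streak = 0   # dipping back under the threshold resets the count
        if self._streak >= self.required_ticks:
            self._streak = 0
            return True
        return False


trigger = PersistentDeviationTrigger(threshold_bps=50, required_ticks=3)
last_published = 100.0
# The candidate jitters around the 0.5% line before moving decisively.
candidates = [100.6, 100.4, 100.6, 100.7, 100.8]
fired = [trigger.observe(last_published, c) for c in candidates]
```

A plain threshold would have fired on the first observation and again on the third. The persistence requirement delays the write until the move has held for three consecutive ticks, at the cost of reacting slightly later to a genuine move.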

What matters here is the mechanism, not the terminology. A feed is not just trying to be accurate; it is trying to be accurate enough, fresh enough, and cheap enough for a specific class of onchain decisions.

How does decentralization reduce single points of failure in oracle feeds?

| Design | Single point of failure | Manipulation cost | Operational complexity | Best use |
|---|---|---|---|---|
| Centralized feed | High | Low for attacker | Low | Simple integrations |
| Decentralized Oracle Network | Reduced | Higher | Medium to high | DeFi risk‑sensitive apps |
| Multi‑layer decentralization | Minimal | Highest | High | Large‑value protocols |

A centralized feed can be technically elegant and still be a poor fit for trust-minimized finance. If one operator controls data sourcing, aggregation, and publication, then one outage, compromise, or malicious act can directly corrupt downstream contracts. That is why many production feed systems use a decentralized oracle network, or DON: multiple independent nodes, often drawing from multiple data sources, jointly contribute to the final onchain result.

The point of this design is not decentralization as branding. It is fault containment. If one node goes down, others can continue. If one source reports a bad value, aggregation can dampen it. If one operator attempts manipulation, the system should require enough independent agreement that the attempt does not control the answer.

Chainlink’s OCR protocol shows the engineering logic clearly. Rather than having every node post a separate onchain transaction each round, nodes coordinate offchain, produce a jointly attested report, and then only one transmitter needs to submit it onchain. This reduces gas while preserving multiparty contribution. The underlying security model tolerates up to f Byzantine nodes out of n total, provided n is at least 3f + 1, and uses aggregation over many observations to keep faulty nodes from arbitrarily moving the published median.
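The fault-tolerance arithmetic can be checked with a short sketch. It assumes the standard Byzantine bound of n ≥ 3f + 1 nodes and a report built from 2f + 1 observations; the helper names and prices are hypothetical, and none of this reproduces the OCR protocol itself.

```python
from statistics import median
from typing import List


def max_faulty(n: int) -> int:
    """Largest f that satisfies n >= 3f + 1."""
    return (n - 1) // 3


def median_with_adversary(honest: List[float], faulty_value: float, f: int) -> float:
    """Median of a report in which f observations are set by faulty nodes."""
    return median(honest + [faulty_value] * f)


n = 10
f = max_faulty(n)                        # 3 faulty nodes tolerated out of 10
honest = [99.8, 99.9, 100.0, 100.1]      # f + 1 honest values in a 2f + 1 report

# Worst cases: every faulty node reports absurdly low, or absurdly high.
pushed_low = median_with_adversary(honest, 0.0, f)
pushed_high = median_with_adversary(honest, 1e9, f)
```

In both worst cases the median lands on the lowest or highest honest observation, so faulty nodes can bias the result toward an edge of the honest range but cannot move it outside that range.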

This is an important subtlety: decentralization alone does not guarantee correctness. If all nodes rely on the same bad upstream API, the system can still fail in a correlated way. If all governance over parameters is concentrated, decentralization at the reporting layer may not eliminate control risk. “Garbage in, garbage out” still applies. The best feed designs decentralize not just who signs the report, but also where the raw data comes from and how consumers can monitor the result.

How should smart contracts read a data feed safely?

A feed is only half the system. The other half is how protocols consume it.

Some protocols read the latest published value directly and act immediately. That is the simplest integration pattern, but it assumes the feed’s freshness and manipulation resistance are sufficient for the protocol’s risk tolerance. Other protocols add an intermediate safety layer. MakerDAO’s architecture is a useful example because it makes this explicit: authorized reporters feed values into a Median contract, and then the Oracle Security Module, or OSM, introduces a delay before those values are used by the core system.

The reason for the delay is not to improve accuracy. It is to create reaction time. If a malicious or erroneous value enters the upstream feed, governance and operators have a window to detect and respond before the system fully acts on it. That comes at a cost: the value consumed by the protocol is intentionally stale relative to the latest median. For some applications, that is an acceptable trade. For others, especially latency-sensitive trading systems, it would be unusable.
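The delay mechanism can be modelled as a two-slot buffer: one value the protocol acts on now, and one value waiting out the delay. The sketch below is loosely inspired by that idea and is not Maker's contract code; the `DelayedFeed` class, its method names, and the one-hour delay are assumptions for illustration.

```python
class DelayedFeed:
    """Toy delay layer: a queued value becomes current only after `delay_s`."""

    def __init__(self, initial: float, now: int, delay_s: int = 3600):
        self.delay_s = delay_s
        self.current = initial    # value the protocol acts on
        self.queued = initial     # value waiting out the delay
        self.last_poke = now
        self.stopped = False

    def poke(self, upstream_value: float, now: int) -> bool:
        """Promote the queued value, then queue the latest upstream one."""
        if self.stopped or now - self.last_poke < self.delay_s:
            return False
        self.current = self.queued
        self.queued = upstream_value
        self.last_poke = now
        return True

    def stop(self) -> None:
        """Emergency hook: freeze promotion while operators investigate."""
        self.stopped = True


feed = DelayedFeed(initial=100.0, now=0)
feed.poke(5.0, now=3600)              # an erroneous upstream value is queued
acted_on_after_bad_input = feed.current

feed.stop()                           # operators react inside the delay window
promoted = feed.poke(101.0, now=7200)
acted_on_after_stop = feed.current
```

The erroneous value sits in the queue for a full delay period, and because operators intervene within that window it never becomes the value the protocol acts on. The price of that safety is visible too: `current` always lags the upstream feed by at least one delay period.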

This shows a deeper point. There is no such thing as “the best data feed” in the abstract. There is only a feed-consumer pairing that makes sense for a particular failure model. A stablecoin collateral module may prefer slower, safer pricing with delays and governance hooks. A perpetuals venue may prefer faster, selectively pulled data with different safeguards.

What are common failure modes and attacks on data feeds?

The failure cases are where the concept becomes real. A feed can fail because the data source is wrong, because aggregation is flawed, because publication is delayed, because onchain consumers misread the feed, or because the surrounding market can manipulate what the feed is measuring.

One major class of failure comes from using immediately queryable onchain market prices as if they were robust fair-value references. Research and real exploits have shown that if a protocol reads a manipulable DEX price at exactly the moment an attacker has distorted it, the protocol can make economically disastrous decisions within the same transaction or block. The issue is not that the DEX trade price was “fake” in some narrow sense. It was real for that trade. The issue is that a lending protocol wanted a stable reference value for collateral, not a transient execution price that could be moved atomically.

This is why feed designers often prefer aggregated multi-source prices, sanity bounds, delay layers, medians, or validation against known-good references. The core defense is to make the value costly to manipulate at the exact instant the protocol depends on it.
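A sanity bound against a reference value is one of the simpler defenses to sketch. The function name, the 200 basis point tolerance, and the prices are assumptions made for the example; in practice the reference itself must be hard to manipulate for the check to mean anything.

```python
def within_bounds(candidate: float, reference: float, max_diff_bps: float) -> bool:
    """Accept the candidate only if it is close enough to the reference."""
    if candidate <= 0 or reference <= 0:
        return False
    diff_bps = abs(candidate - reference) / reference * 10_000
    return diff_bps <= max_diff_bps


reference_price = 2005.0   # hypothetical aggregated multi-source price

# A normal DEX quote sits within tolerance of the reference.
normal_ok = within_bounds(2010.0, reference_price, max_diff_bps=200)

# A quote distorted inside a single transaction falls far outside it.
manipulated_ok = within_bounds(2600.0, reference_price, max_diff_bps=200)
```

A protocol that rejects or pauses on the second case forces an attacker to move both the spot venue and the reference at the same instant, which is the cost increase the paragraph above describes.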

Another failure mode is stale-but-valid-looking data. If no update arrives, consumers may continue to read the last good answer and assume all is well. Heartbeats help, but only if consumers actually check freshness and enforce their own limits.
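A consumer-side freshness check is short enough to show in full. This sketch assumes the feed exposes an update timestamp alongside the value, as Chainlink's `latestRoundData` does with `updatedAt`; the `FeedAnswer` type, the `read_checked` function, and the `max_age_s` limit are hypothetical names chosen for the example.

```python
from dataclasses import dataclass


class StalePriceError(Exception):
    """Raised when the latest answer is older than the consumer allows."""


@dataclass(frozen=True)
class FeedAnswer:
    value: int        # price as a scaled integer
    updated_at: int   # unix timestamp of the last onchain write


def read_checked(answer: FeedAnswer, now: int, max_age_s: int) -> int:
    """Return the value only if it is positive and recent enough."""
    if answer.value <= 0:
        raise ValueError("feed returned a non-positive price")
    age = now - answer.updated_at
    if age > max_age_s:
        raise StalePriceError(f"answer is {age}s old, limit is {max_age_s}s")
    return answer.value


answer = FeedAnswer(value=6_500_000_000_000, updated_at=1_700_000_000)
price = read_checked(answer, now=1_700_000_600, max_age_s=3600)
```

A reasonable choice for `max_age_s` is the feed's heartbeat plus some margin for delayed transactions, so that a healthy feed never trips the check while a stuck one does.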

A third failure mode is governance or authorization error. Maker’s documentation is candid about this: misconfigured permissions or missed update calls can create dangerous states. Any feed with administrator powers, whitelists, source selection, or emergency controls inherits operational risk from those controls.

And some designs depend on specialized trust anchors. Town Crier, for example, explored authenticated data feeds built around trusted execution hardware that could scrape HTTPS websites and attest to the result. That solves a different problem (source authentication from web data) but introduces another assumption: the integrity of the trusted hardware itself. This is not necessarily bad, but it reminds us that every oracle architecture chooses its trust anchor somewhere.

How do data feed designs differ across blockchain architectures?

Although Ethereum-based DeFi made the term familiar, the concept is not specific to one chain or one oracle network. The same underlying problem appears anywhere smart contracts need offchain facts.

On EVM chains, push-based price feeds are common because many protocols want a shared onchain price that multiple contracts can reference. On Solana and other high-throughput environments, designs that support pull and push modes can make different latency-cost tradeoffs, as Pyth’s product structure suggests. In Cosmos-style ecosystems, a chain like BandChain can package oracle logic into its own validator-driven data platform and then serve data across chains. The surface area changes, but the internal logic stays the same: acquire external observations, aggregate them under some trust model, and deliver them in a form smart contracts can consume deterministically.

That continuity is useful because it separates the concept from any particular vendor. Chainlink, Pyth, Maker, Band, and older authenticated-feed systems like Town Crier are not all doing the same thing internally. But they are all solving the same structural problem.

Conclusion

A data feed is not merely a value posted onchain. It is the mechanism that turns uncertain, external observations into a usable onchain reference for smart contracts.

The key idea is simple enough to remember: a data feed is where the outside world gets compressed into one decision-grade number or signal. Everything important follows from that: source selection, aggregation, decentralization, update triggers, latency, cost, and failure handling. If you understand that, you understand why data feeds exist, why they are hard to design well, and why so much of onchain finance depends on getting them right.

What should you understand before relying on a data feed?

Before you rely on a data feed, know whether it is push or pull, how often it updates, and what aggregation and governance assumptions back it. On Cube, fold those checks into your trade or deposit workflow so you act on decision‑grade prices rather than transient or stale values.

- Read the feed docs or onchain contract and note the feed type (push vs pull), heartbeat interval, and deviation threshold.

- Verify upstream sourcing and aggregation by checking how many independent sources contribute and whether the feed uses a median, mean, or other rule.

- Cross‑check the feed price against an alternate onchain reference (another feed or a DEX TWAP) before large trades or deposits and flag any material discrepancy.

- Choose execution controls on Cube: use limit orders or split large orders, set slippage tolerances, and place stop‑loss/take‑profit orders to limit exposure to sudden feed moves.

- Review the feed’s governance and admin permissions in the docs so you understand who can change sources or parameters and monitor for updates before opening big positions.

Frequently Asked Questions

Push-based feeds proactively publish onchain so any contract can read a shared, always-available reference (good when consumers need synchronous access), while pull-based feeds bring data onchain only when a caller requests it, lowering continuous posting cost and enabling higher offchain refresh rates at the cost of guaranteed onchain availability; the right choice depends on whether the consumer values always-available shared state (push) or lower cost and selective publication (pull).

A deviation threshold avoids writing tiny, low‑value changes by posting only when the new aggregate differs from the last onchain answer by more than a configured percentage, while a heartbeat forces an update after a maximum time so consumers can bound staleness; both reduce failure modes but must be balanced (too tight causes excess updates, too loose causes stale references) and are often combined with hysteresis to prevent oscillation.

Decentralization reduces single points of failure by having multiple independent nodes and sources contribute to an aggregate, but it does not guarantee correctness: if nodes share the same bad upstream data or governance is centralized, the feed can still fail, so decentralization must be applied to sourcing, aggregation, and governance to meaningfully reduce correlated risk.

Consumers can harden themselves by checking freshness and validity, adding delay or quarantine layers (e.g., MakerDAO’s Oracle Security Module adds a deliberate delay so operators can respond), or using sanity bounds and multi-feed cross-checks; these patterns trade immediacy for reaction time and are chosen based on the consumer’s failure model.

Common failure modes include using manipulable onchain DEX prices as if they were robust references (allowing atomic manipulation), relying on stale-but-valid-looking values when updates stop, and operational/governance errors that misconfigure authority or update flows; feed designs mitigate these with aggregation, sanity checks, delays, and diverse sources.

There is no universal numeric rule in the article; how many independent sources and node operators are required is a configuration decision tied to a protocol’s integrity, availability, and economic-risk targets, so teams must choose counts and SLAs that match their threat model rather than rely on a single canonical number.

Different architectures make different trust assumptions: some designs rely on multiple independent node operators and multi‑source aggregation (e.g., Chainlink’s DON/OCR), others add economic incentives and staking (Pyth’s staking/Publisher Quality concepts), and some rely on trusted execution hardware to authenticate web data (Town Crier used Intel SGX); each choice replaces one trust anchor with another and brings its own tradeoffs.
