What is Bug Bounty?

Learn what a bug bounty is, why organizations use it, how bug bounty programs work, and where they fit among disclosure, audits, and security controls.

Introduction

A bug bounty is a security practice in which an organization offers rewards to external researchers who find and responsibly report vulnerabilities. The interesting part is not the reward itself. The deeper idea is that security defects are often easiest to discover from the outside, by people with different tools, incentives, and ways of thinking than the internal team. A bug bounty turns that reality into a system.

That system exists because organizations face a recurring problem: attackers can test their systems continuously, but defenders usually test in bounded ways. Internal reviews, audits, and penetration tests matter, but they happen with limited time, limited personnel, and limited imagination. A bug bounty tries to widen the search by inviting independent researchers to look for flaws before criminals exploit them.

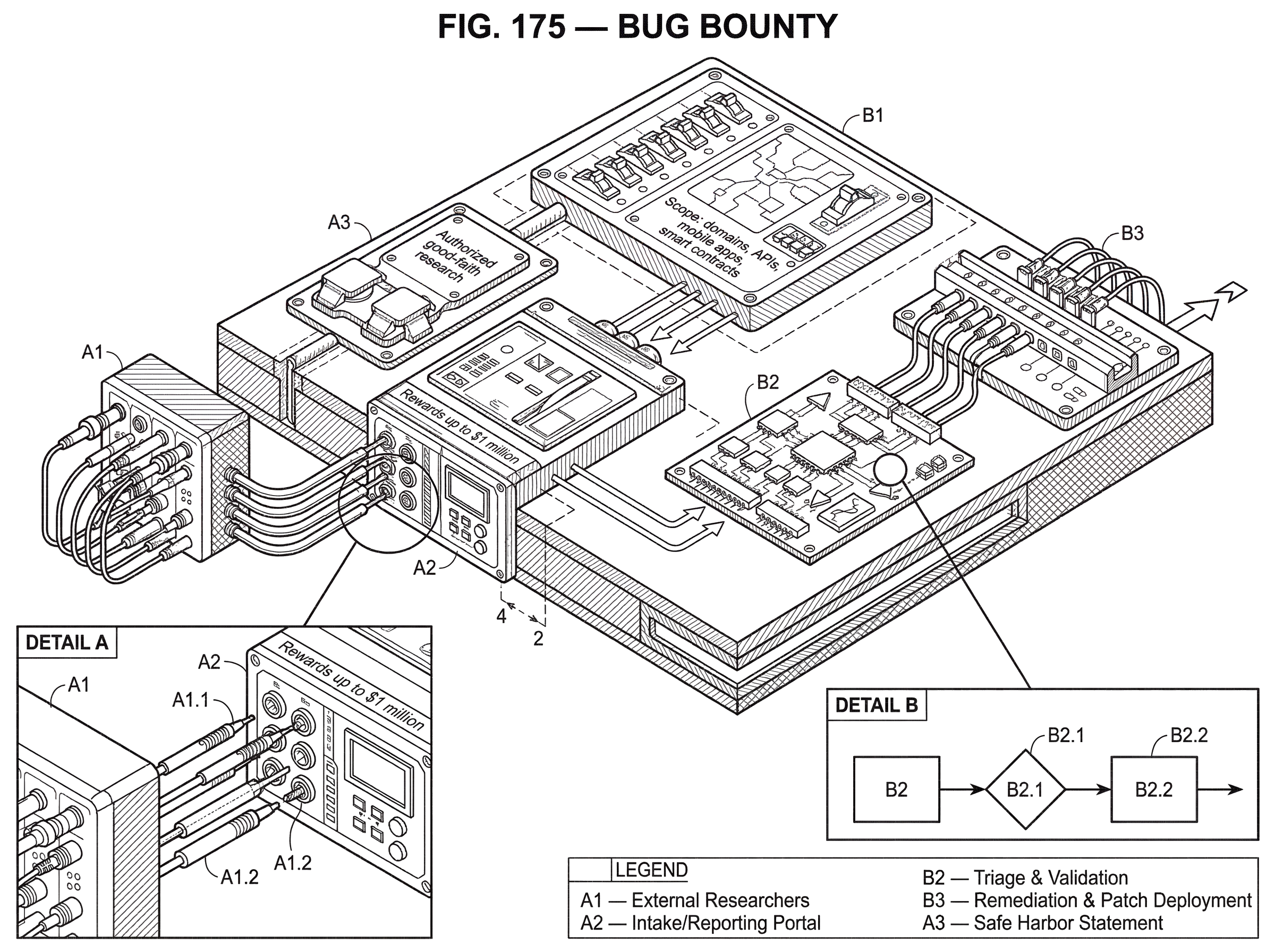

The key distinction is that a bug bounty is not merely “paying hackers.” It is a structured arrangement for authorized testing, responsible reporting, validation, remediation, and reward. Without that structure, the same activity can become noisy, legally ambiguous, operationally disruptive, or even unsafe. With it, bug bounties become part of a broader vulnerability disclosure and handling process rather than a standalone stunt.

This matters especially in modern software and blockchain systems, where assets are exposed continuously to the internet and where mistakes can be expensive, fast-moving, and public. In a web application, a vulnerability might expose customer data. In a smart contract or bridge, it might directly endanger funds. In both cases, the central question is the same: how do you make it easier for someone who finds a flaw to report it safely and worth their effort, instead of ignoring it or exploiting it?

What incentive problem do bug bounties solve for security reporting?

At first glance, bug bounties look like a market for vulnerabilities. That description is partly right, but it misses the reason the market exists. The real problem is an incentive mismatch.

Suppose an independent researcher discovers a serious flaw in your system. Reporting it takes work. They need to verify it, document it, explain impact, often build a proof of concept, and then wait while your team investigates. If there is no clear contact path, no authorization, no assurance they will be treated fairly, and no compensation or recognition, the rational outcome may be silence. In worse cases, the researcher may fear legal retaliation simply for having looked.

A bug bounty changes that calculus. It gives the researcher a legitimate channel, some promise of organizational response, and usually a reward tied to the severity of the validated issue. The goal is not just to buy information. It is to make responsible disclosure the easiest and most attractive option.

This is why bug bounties sit next to, rather than replace, vulnerability disclosure programs. A vulnerability disclosure program, or VDP, tells outsiders how to report security issues and what conduct is authorized. A bug bounty adds an explicit incentive layer on top. In practice, mature programs treat the bounty as an extension of disclosure, not a substitute for it. Standards and guidance around vulnerability disclosure and vulnerability handling emphasize the receiving, triaging, remediating, and communicating parts of the process because those are the parts that convert a report into actual risk reduction.

That framing also explains a common misunderstanding. People sometimes imagine a bug bounty as outsourced penetration testing at scale. But a penetration test is a commissioned assessment with a defined team, timeline, and methodology. A bug bounty is an open-ended invitation to a broader population of researchers, each bringing their own perspective. The value comes from diversity and persistence, not just from formal test coverage.

How does a bug bounty program work step by step?

Mechanically, a bug bounty program has a simple core loop. An organization defines what can be tested, how it can be tested, how reports should be submitted, what happens after submission, and how validated findings are rewarded. Researchers then look for vulnerabilities within those rules, submit reports, and the organization triages and remediates them.

That sounds straightforward, but each step exists to preserve an important invariant: the organization wants more vulnerability discovery without losing operational control. The program has to increase signal while limiting harm.

Scope is where that starts. A program must say which assets are in scope (domains, APIs, mobile apps, smart contracts, bridge contracts, validator infrastructure, or other systems) and which are out of scope. It usually also restricts techniques that create too much risk, such as denial-of-service testing, phishing, social engineering, or testing against third-party infrastructure. In blockchain settings, some programs prohibit testing on mainnet and require testing on local forks or staging environments, because “proving” a bug on live contracts can itself cause loss.
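One way to keep scope unambiguous is to express it as data that can be checked mechanically. The following is a minimal sketch, not any real program's policy: the asset names, the technique labels, and the `Scope` and `is_authorized` names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    """Hypothetical scope definition for a bounty program."""
    in_scope_assets: frozenset
    prohibited_techniques: frozenset
    mainnet_testing_allowed: bool = False


def is_authorized(scope, asset, technique, on_mainnet=False):
    """Return (allowed, reason) for a proposed test under the given scope."""
    if asset not in scope.in_scope_assets:
        return False, "asset is out of scope"
    if technique in scope.prohibited_techniques:
        return False, "technique is prohibited"
    if on_mainnet and not scope.mainnet_testing_allowed:
        return False, "mainnet testing is not allowed; use a local fork"
    return True, "authorized"


# Illustrative values only; a real program publishes its own lists.
EXAMPLE_SCOPE = Scope(
    in_scope_assets=frozenset({"api.example.com", "LendingPool contract"}),
    prohibited_techniques=frozenset(
        {"denial-of-service", "phishing", "social-engineering"}
    ),
)
```

Returning a reason alongside the decision mirrors what a written policy has to do: tell the researcher not only that something is disallowed, but why.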

Then comes reporting. Good programs provide a clear intake channel, often a dedicated portal or security contact, and specify what a useful report must contain. The reason is practical: a report that merely says “your app is vulnerable” does not reduce risk. A report that explains the vulnerable component, reproduction steps, expected and actual behavior, impact, and supporting evidence gives the defender something actionable.
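The report contents listed above can be sketched as a simple structure with a completeness check. This is an illustration under assumed names (`Report`, `missing_fields`), not the intake schema of any particular platform.

```python
from dataclasses import dataclass, fields


@dataclass
class Report:
    """Hypothetical report shape mirroring the fields named in the text."""
    component: str = ""
    reproduction_steps: str = ""
    expected_behavior: str = ""
    actual_behavior: str = ""
    impact: str = ""
    evidence: str = ""


def missing_fields(report):
    """List the names of fields that are empty or whitespace-only."""
    return [f.name for f in fields(report) if not getattr(report, f.name).strip()]


# A report that only asserts a problem leaves most fields empty.
vague = Report(impact="your app is vulnerable")
print(missing_fields(vague))
```

A check like this does not judge whether a report is correct. It only flags submissions that cannot yet be acted on, which is the distinction the paragraph above draws.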

After intake, the organization validates and triages the issue. This is where many weak programs fail. The report must be checked for accuracy, duplicates must be identified, severity must be estimated, and the finding must be routed to whoever can fix it. Guidance from ISO and CERT/CC places heavy emphasis on vulnerability handling because discovery is only the front door. If reports pile up without validation or remediation, the program stops being a security control and becomes a backlog generator.
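The validate, deduplicate, and route steps can be sketched as a small function. The severity labels, the queue names in `ROUTES`, and the duplicate rule (same component and weakness class) are assumptions made for illustration; real programs use richer criteria.

```python
import hashlib

# Hypothetical routing table: severity label -> team queue.
ROUTES = {
    "critical": "oncall-security",
    "high": "security-team",
    "medium": "owning-team-backlog",
    "low": "owning-team-backlog",
}


def fingerprint(component, weakness):
    """Stable key for spotting duplicates: same component and weakness class."""
    raw = f"{component.strip().lower()}|{weakness.strip().lower()}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


def triage(component, weakness, reproduced, severity, seen):
    """Return a triage decision and record newly accepted findings in `seen`."""
    if not reproduced:
        # Accuracy check failed: ask for more detail instead of routing.
        return {"status": "needs-info", "route": None}
    key = fingerprint(component, weakness)
    if key in seen:
        return {"status": "duplicate", "route": None}
    seen.add(key)
    return {"status": "accepted", "route": ROUTES[severity]}
```

Called twice with the same component and weakness and a shared `seen` set, the second call returns a `duplicate` status rather than creating a second work item.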

Only after validation does the bounty logic fully apply. Most programs tie payouts to impact or severity. Critical flaws receive more than low-severity issues because the purpose of the reward is to align researcher effort with organizational risk. Some Web3 platforms make this especially explicit: they may define critical rewards as a percentage of funds directly at risk, sometimes with a minimum and maximum payout. That reflects a setting where exploit impact can be measured more directly in economic terms than in many traditional applications.
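The "percentage of funds at risk, with a minimum and maximum" rule amounts to a clamped product. The sketch below assumes a hypothetical 10% rate, a 50,000 floor, and a 1,000,000 cap; these figures are invented for illustration and are not taken from any program.

```python
from decimal import Decimal


def critical_payout(funds_at_risk, rate, minimum, maximum):
    """Reward = rate * funds at risk, clamped to [minimum, maximum]."""
    funds, pct = Decimal(funds_at_risk), Decimal(rate)
    low, high = Decimal(minimum), Decimal(maximum)
    if low > high:
        raise ValueError("minimum must not exceed maximum")
    return max(low, min(funds * pct, high))


# Hypothetical parameters: 10% of funds at risk, floor 50,000, cap 1,000,000.
for at_risk in ("100000", "5000000", "50000000"):
    print(at_risk, critical_payout(at_risk, "0.10", "50000", "1000000"))
```

With these assumed parameters, small exposures are lifted to the floor and very large ones are held at the cap, so the reward tracks risk only in the middle of the range. That cap is one concrete form of the incentive ceiling discussed later in the article.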

Finally, the vulnerability is remediated and, at some point, disclosed more broadly if appropriate. Coordinated disclosure guidance treats this not as a single announcement but as a process involving the finder, the vendor, and sometimes coordinators or downstream deployers. The vulnerability is not truly “handled” when the report is filed. It is handled when mitigation exists and affected parties can act on it.

Do cash payouts alone attract top security researchers, or what else matters?

The bounty itself is the visible part of the program, so it is easy to overestimate or underestimate its role. Money matters because vulnerability research is skilled work, and rewards recognize both the effort and the value of the finding. But bug bounty programs work only partly because of cash.

Researchers also care about predictability, recognition, and fairness. If a program is known for slow responses, arbitrary scope decisions, lowballing severity, or retroactive disputes, strong researchers will avoid it. Conversely, leaderboards, public thanks, reputation, and a history of respectful handling can make a program attractive even when payouts are not the highest in the market.

This points to a first-principles truth: the organization is not only buying reports. It is competing for the attention of capable researchers. A program with poor incentives does not just receive fewer reports; it receives fewer reports from the people most likely to find subtle, high-impact flaws.

Still, incentives have limits. In some environments the upside from exploitation can dwarf any legitimate reward. Research on blockchain interoperability incidents notes that bug-bounty payouts, while meaningful in aggregate, are still small relative to total losses from major hacks. That means a bounty can encourage honest researchers and avert some losses, but it cannot by itself neutralize a determined criminal incentive. The program is best understood as a way to attract the cooperative edge of the researcher population, not to convert every potential attacker into a defender.

How can a smart contract bug move from discovery to patch and payout?

Consider a DeFi protocol preparing to launch a lending market. The team has already run internal reviews and a formal audit. They now open a private bug bounty to a vetted set of researchers because the contracts are complex and the downside of a missed flaw is high.

A researcher studies the liquidation logic and notices that under an unusual sequence of price updates and collateral changes, the protocol can treat an undercollateralized position as healthy for one transaction longer than intended. On a local fork, the researcher shows that an attacker could borrow against temporarily mis-evaluated collateral and withdraw value before the system catches up.

The researcher submits a report with the vulnerable function, the state assumptions required, a proof of concept, and an explanation of maximum impact. The protocol’s triage team reproduces the bug, confirms that the issue is not already known, and escalates it to the smart contract engineers. They determine that the flaw could place user funds at risk if triggered under realistic market conditions, so it is classified as high or critical depending on the exact exploitability and loss scenario.

The engineers patch the logic, update tests, and delay launch until the fix is deployed. The researcher receives a payout based on the severity table, perhaps plus recognition if the program offers it. What made the process work was not simply that money changed hands. It worked because the protocol had already defined scope, prohibited unsafe testing on live systems, staffed triage, and had the engineering capacity to fix the issue quickly.

The same mechanism applies outside Web3. A company may run a public bug bounty for its web properties and receive a report about an authentication bypass in a mobile API. The technical details differ, but the structure is the same: authorized discovery, actionable reporting, validation, remediation, reward.

How do bug bounties fit with audits, penetration tests, and incident response?

Bug bounties are useful precisely because they are incomplete. They do something other controls do not do as well: they expose systems to a broad and ongoing search by outsiders. But they do not replace secure development, code review, audits, penetration testing, red teaming, monitoring, or incident response.

Here is the mechanism behind that complementarity. A secure development lifecycle tries to reduce vulnerabilities before software ships. Audits and penetration tests provide concentrated expert review at specific moments. Monitoring and detection try to catch malicious activity quickly if prevention fails. A bug bounty sits in between these layers by creating a continuous path for external discovery after deployment or near launch.

That placement matters in blockchain systems because many components are difficult to patch under pressure. A smart contract may be immutable or only partially upgradeable. A bridge may depend on validator operations, key management, relayers, or off-chain services as much as on contract code. In those systems, discovering vulnerabilities earlier has outsized value because the remediation window may be narrow and the exploit path financially direct.

But a bug bounty cannot find everything, and it cannot undo architectural weakness. The Ronin bridge postmortem is a useful illustration. After the breach, Sky Mavis announced a bug bounty with rewards up to $1 million as part of a broader security response. That makes sense: external review can help surface vulnerabilities before the next exploit. But the root causes described in the postmortem involved employee compromise, stale allowlist permissions, and validator key control failures. Those are not problems a bounty alone can reliably solve. They require stronger operational security, access control, key management, network design, and approval workflows.

This is the right way to think about bug bounties in relation to neighboring security topics. They can mitigate some forms of smart contract risk by increasing the chance that logic flaws are found before exploitation. They can also help surface misconfigurations and unsafe assumptions. But they depend on the rest of the security system to absorb, prioritize, and fix what they reveal.

Public vs private vs time‑bounded bug bounties: which model should you use?

| Program type | Researchers | Submission volume | Control level | Best when |
|---|---|---|---|---|
| Public | Open to all | High | Low to medium | Mature triage teams |
| Private | Vetted invite-only | Low to medium | High | Pre-launch or fragile systems |
| Time-bounded | Variable pool | Bursty | Medium | Focused launch or sprint |

Organizations do not all expose themselves to researchers in the same way because the tradeoff is not just “more people means better security.” The real tradeoff is between coverage, control, and operational readiness.

A private program limits participation to invited or vetted researchers. This is useful when the target is sensitive, early-stage, or operationally fragile. A protocol about to deploy a new bridge, for example, may prefer a private group that understands smart contract exploitation and can work within stricter rules.

A public program opens participation more broadly. That increases diversity of thought and can uncover edge cases that a smaller set misses. It also raises the volume of submissions, duplicates, and lower-quality reports. Public programs are most effective when the organization already has mature triage and remediation workflows.

Some programs are also time-bounded, such as launch events or focused bounty sprints. These compress attention around a release or a newly exposed surface. They work best when the goal is concentrated scrutiny of a particular target rather than indefinite coverage.

Government practice reflects these same choices. CISA’s bug bounty support for agencies allows both public and private models, with agencies customizing scope, researcher pools, severity-based payouts, and bounty pools. The important point is not that one model is universally better. It is that program shape must match the organization’s ability to handle the resulting flow of vulnerability information.

What does safe harbor mean for researchers and why does authorization matter?

| Scenario | Safe-harbor effect | Limitations |
|---|---|---|
| Good-faith testing | Reduces legal risk | Not absolute across jurisdictions |
| Following scope | Authorization implied | Doesn't cover prohibited techniques |
| Disclosure of data | Encourages responsible reporting | Privacy/regulatory duties still apply |
| Retroactive removal | Should not be withdrawn | May be rescinded for bad faith |

A bug bounty program only works if researchers believe they can participate without unreasonable legal risk. This is where safe harbor becomes central.

The underlying problem is simple. Security research often involves intentionally probing systems for weakness. Without explicit authorization, the line between permitted testing and prohibited access can be uncertain. That uncertainty discourages responsible disclosure, especially when researchers fear civil claims or criminal referral for activity that was meant to improve security.

Safe harbor is the organization’s statement that good-faith research conducted within the program’s rules is authorized and will not trigger legal action by that organization. U.S. DOJ guidance on vulnerability disclosure emphasizes that clear policies describing authorized conduct can materially reduce the likelihood that good-faith discovery and disclosure will be treated as unlawful under the CFAA. Product guidance from industry platforms similarly stresses that protection should be clear, not retroactively withdrawn without evidence of bad faith, and not made so conditional that authorization becomes meaningless.

This does not make safe harbor magic. It is still bounded by jurisdiction, by third-party rights, and by whether the conduct actually stayed within policy. But from a first-principles perspective, safe harbor solves a necessary precondition: if the organization wants outsiders to tell it hard truths about its security, it must reduce the risk of punishing them for doing so.

The operational side of safe harbor is equally important. A program should define prohibited techniques, treatment of sensitive data, acceptable proof forms, and communication expectations. That protects both the researcher and the organization. Vague permission is not enough; researchers need to know where the guardrails are.

What causes bug bounty programs to fail or produce little value?

The most common failure mode is not “researchers find nothing.” It is that the organization is not ready to receive truth at scale.

If scope is unclear, researchers test the wrong things or avoid the program entirely. If the reward structure is weak or inconsistent, high-quality researchers spend time elsewhere. If triage is slow, duplicates pile up and trust erodes. If engineering teams cannot remediate findings, the program may reveal risk faster than the organization can reduce it. In that case, a public bug bounty can increase visibility into weakness without producing enough mitigation.

There are also structural limits. Some systems are hard to test safely because proof of exploit can itself cause damage. Smart contracts, bridges, and wallet infrastructure can have this property. Programs respond with rules like “local fork only,” “no mainnet interaction,” or “no testing against third-party oracles,” but those rules also constrain what researchers can prove. The safer the testing boundary, the more the organization must rely on inference instead of live demonstration.

Another limit is that bug bounties are best at finding vulnerabilities that are discoverable by technical probing. They are less reliable against failures rooted in governance, insider abuse, weak key ceremonies, poor change control, or supply-chain complexity. Those can sometimes be reported, but they do not fit the canonical “find a reproducible bug, submit a proof of concept, receive a reward” path nearly as well.

And there is the incentive ceiling mentioned earlier. In ecosystems with enormous on-chain rewards for exploitation, a bug bounty may not outbid theft. Programs can reduce harm, but they do not erase adversarial economics.

What operational capabilities do you need to run a successful bug bounty?

| Capability | Minimum standard | Why it matters |
|---|---|---|
| Scope & rules | Published, asset-by-asset scope | Guides safe, focused testing |
| Intake channel | Dedicated portal or email | Reduces reporting friction |
| Triage capacity | Fast validation and routing | Prevents backlog and trust loss |
| Engineering ownership | Assigned fix owners | Enables timely remediation |
| Budget & payouts | Severity-based payout table | Aligns incentives with risk |

A mature bug bounty is best understood as an operating capability, not a webpage with a payout table.

The organization needs clear scope, documented rules of engagement, an intake channel, triage capacity, engineering ownership, remediation workflows, and a budget. It also needs a disclosure posture: how fixes will be communicated, when public awareness is appropriate, and how multi-party issues will be coordinated when vendors, deployers, or infrastructure providers are also affected. This is why standards and guidance separate vulnerability disclosure from vulnerability handling. Receiving reports and fixing vulnerabilities are distinct disciplines, and both must work.

In practical terms, many organizations should launch a VDP before or alongside a bug bounty. The VDP establishes the communications and authorization baseline. The bounty then adds incentive and prioritization. This sequencing is especially sensible for teams new to external reporting. If you cannot yet process unsolicited reports predictably, adding financial rewards usually magnifies the operational strain.

In blockchain environments, maturity also means understanding what “impact” means for each asset class. A reentrancy bug in a vault contract, an oracle manipulation path, a validator misconfiguration, and an API authentication flaw do not have the same exploit mechanics or remediation timelines. A good program reflects those differences in scope and reward logic rather than pretending all vulnerabilities fit a single template.

Conclusion

A bug bounty is a structured way to turn outside security research into earlier vulnerability discovery, safer disclosure, and faster remediation. Its value comes from a simple idea: the people most able to find your weaknesses are not always on your payroll, so your security process must give them a lawful, intelligible, and worthwhile way to help.

When bug bounties work, they do not work because a reward page exists. They work because authorization, scope, triage, remediation, and disclosure all fit together. The reward attracts attention, but the process converts that attention into security.

The short version to remember is this: a bug bounty is not a substitute for security engineering. It is a way to widen the search for truth, provided you are prepared to act on what that truth reveals.


Frequently Asked Questions

A private program restricts participation to invited or vetted researchers and is useful for sensitive, early-stage, or operationally fragile targets; a public program opens participation to a broader pool, increasing diversity and coverage but also volume and triage burden. The right choice depends on your ability to triage, validate, and remediate at the scale a public program produces.

Safe harbor is the program’s explicit authorization that good‑faith research within the rules will not trigger legal action by the organization. It reduces legal uncertainty and encourages disclosure, but it is not absolute: it remains bounded by jurisdiction, third‑party rights, and whether the researcher stayed within policy.

Bug bounties complement secure development, audits, penetration testing, monitoring, and incident response by providing ongoing external discovery after deployment, but they cannot substitute for those controls and are weak against governance failures, insider abuse, or operational key‑management flaws.

A mature program needs clear scope and rules of engagement, an intake channel, triage capacity, engineering ownership and remediation workflows, a budget for payouts, and a disclosure posture for coordinated reporting and fixes; without those elements, a bounty often creates noise rather than value.

Many blockchain programs prohibit testing on mainnet and require testing on local forks or staging environments because proof‑of‑exploit on live contracts can itself cause loss; those safe‑testing boundaries reduce risk but also limit what researchers can demonstrate.

Payouts are usually tied to validated impact or severity, with some Web3 programs defining rewards as percentages of funds at risk. Money matters, but predictable handling, fair triage, timely response, and recognition also weigh heavily in attracting top researchers.

When a proof‑of‑concept would itself cause damage (for example by draining funds on a live contract), programs respond with constraints such as “no mainnet interaction,” “local fork only,” or requiring non‑destructive PoCs, which makes proving some issues harder and forces reliance on inference rather than live demonstration.

This article does not provide concrete payout numbers or universal tables. Organizations must set ranges based on asset class, impact, their risk profile, market benchmarking, and operational budget.
