What is a Blockchain Node?

Learn what a blockchain node is, how it validates and relays data, why full and light nodes differ, and why nodes make blockchains decentralized.

Introduction

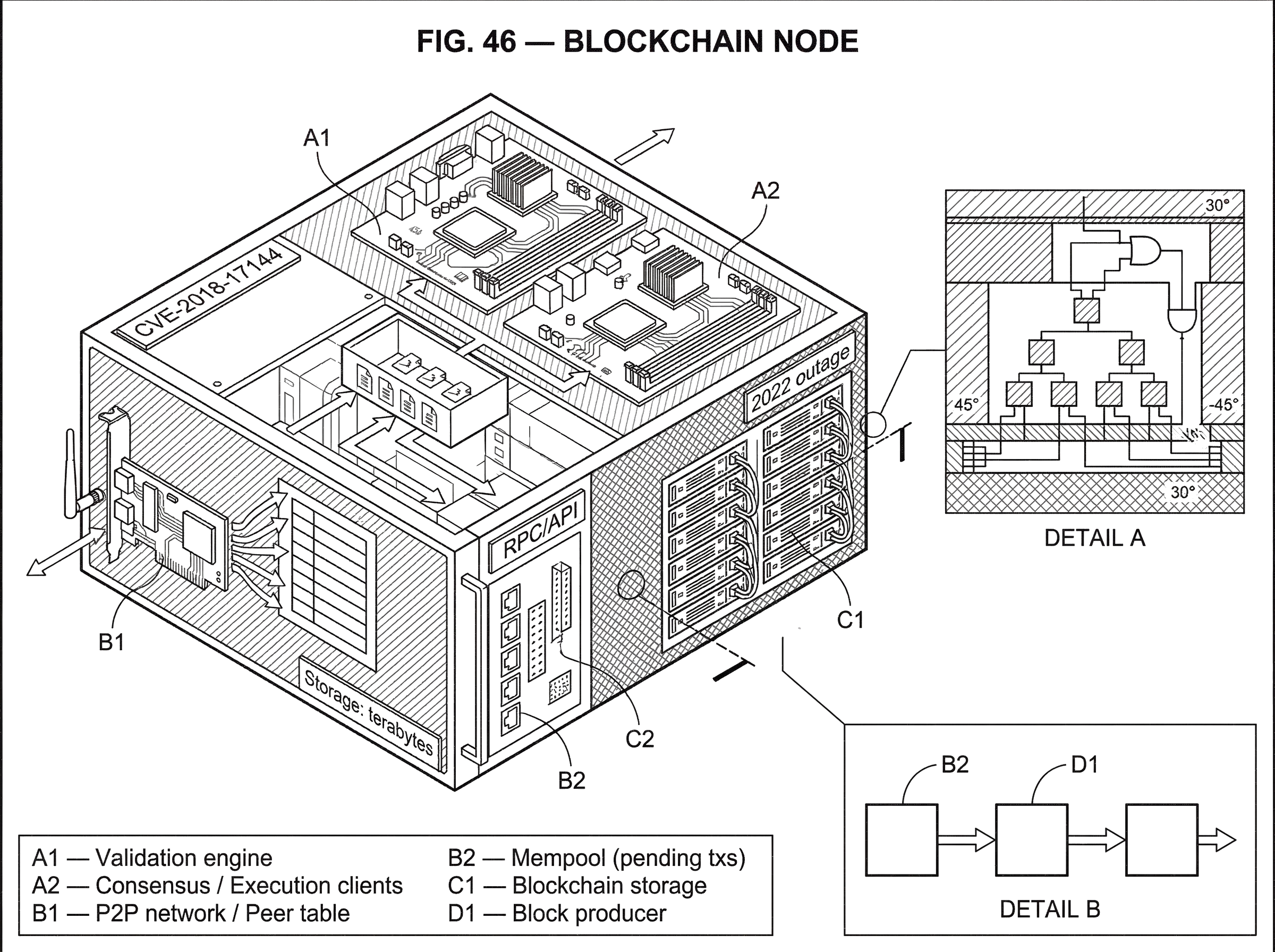

A blockchain node is a computer running blockchain software that participates in a network by checking, sharing, and often storing the chain’s data. That sounds modest, but it is the difference between a system you must trust and a system you can verify for yourself. If a blockchain had only wallets and explorers, but no independently run nodes, it would not really be a decentralized ledger at all; it would be a database with branding.

The puzzle is that blockchains are often described as if “the blockchain” were a single thing, almost like a website or a server. Mechanically, it is the opposite. A blockchain exists as many machines, run by many parties, each maintaining its own view of the ledger and each deciding whether new transactions and blocks obey the protocol’s rules. Agreement does not come from one authoritative copy. It comes from many nodes independently arriving at the same answer.

That is why nodes sit at the foundation of blockchain infrastructure. They are where validation happens, where blocks and transactions are propagated, where applications get data through RPC interfaces, and where decentralization becomes something more than a slogan. To understand a blockchain node is to understand where the chain actually lives.

Why do nodes verify blocks and transactions locally instead of trusting others?

The most useful starting point is this: a node’s core job is not “hosting the blockchain.” Its core job is enforcing the protocol rules locally. Storage, networking, and APIs matter, but they matter because they support that central function.

Imagine you receive a new block from the network. A node does not ask, “Did an important company send me this?” It asks, “Does this block satisfy the rules I already know?” Those rules depend on the chain. In Bitcoin, a full node validates transactions and blocks against Bitcoin’s consensus rules. In Ethereum, client software verifies block and transaction data according to the protocol, with the execution layer checking transaction execution and state transitions and the consensus layer checking whether the block belongs on the chain under proof-of-stake rules. In both cases, the key invariant is the same: a node does not outsource validity.

That local verification is what removes the need for a central ledger operator. If every participant had to trust someone else’s statement that a block was valid, the system would collapse back into ordinary client-server architecture. A blockchain node changes the trust model because it can reject invalid data even if that data is widely broadcast.

This also explains why not all machines that “connect to a blockchain” are equal. Some systems mainly query other infrastructure. Others verify for themselves. The closer a machine is to independently checking the chain’s rules, the more it behaves like a real node in the trust-minimizing sense that matters.

How do peer-to-peer nodes work together to create a single blockchain?

A blockchain network is a peer-to-peer system. In a peer-to-peer network, nodes send data to one another directly rather than relying on a central relay. Bitcoin’s original design describes nodes broadcasting transactions and blocks over a best-effort peer-to-peer network, where participants can join, leave, and later rejoin. Ethereum clients also connect to peer networks rather than to a central server. Polkadot nodes likewise join the network directly and can expose RPC services from software they run themselves.

The phrase best effort matters. It means the network does not guarantee that every node sees every message instantly, or in the same order, or even at all on the first try. Delays happen. Peers disconnect. Different nodes can temporarily have different views of the tip of the chain. That sounds fragile, but the system is designed around exactly this reality.

Here is the mechanism. A wallet creates a transaction and sends it to a node. That node checks whether the transaction is well-formed and valid under the current state it knows. If it is, the node relays it to peers. Those peers perform their own checks and relay it onward. The same pattern applies to blocks: a block is received, validated, and then propagated. Consensus is therefore layered on top of ordinary unreliable networking. Nodes do not assume the network is perfect; they assume that enough honest nodes eventually hear about valid data and converge.
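The validate-then-relay pattern can be sketched in a few lines. This is a toy model, not any client's real networking code: `ToyNode`, `connect`, and the `is_valid` rule (a `signed` flag and a positive `amount`) are hypothetical stand-ins for real peers and real protocol rules.

```python
class ToyNode:
    """A toy peer that validates a transaction before relaying it."""

    def __init__(self, name):
        self.name = name
        self.peers = []     # directly connected ToyNode objects
        self.accepted = {}  # tx id -> transaction this node has validated

    def is_valid(self, tx):
        # Hypothetical stand-in for real protocol rules.
        return tx.get("signed", False) and tx.get("amount", 0) > 0

    def receive(self, tx):
        if tx["id"] in self.accepted:
            return  # already processed; this also stops relay loops
        if not self.is_valid(tx):
            return  # rejected locally, so never relayed
        self.accepted[tx["id"]] = tx
        for peer in self.peers:
            peer.receive(tx)  # each peer runs its own checks


def connect(a, b):
    a.peers.append(b)
    b.peers.append(a)


alice, bob, carol = ToyNode("alice"), ToyNode("bob"), ToyNode("carol")
connect(alice, bob)
connect(bob, carol)

alice.receive({"id": "tx1", "amount": 5, "signed": True})
alice.receive({"id": "tx2", "amount": 5, "signed": False})
print(sorted(carol.accepted))  # ['tx1']
```

Carol never talks to Alice directly, yet she ends up with the valid transaction and never hears about the invalid one, because every hop checks before it forwards.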

This is why a blockchain is not just a data structure. A chain of blocks on disk is not yet a live blockchain. What makes it a blockchain system is a community of nodes repeatedly doing three things: validating new data, relaying it, and selecting which history to extend when there is temporary disagreement.

What happens at the node level when I receive a cryptocurrency payment?

Suppose someone sends you a payment on a proof-of-work chain like Bitcoin. Your wallet may show an incoming transaction quickly, but what makes that information meaningful is the work done by nodes behind the scenes.

The transaction first enters the network through some node. Full nodes that receive it inspect it against their current view of spendable outputs and consensus rules. If it tries to spend coins that do not exist, spends the same output twice, or violates formatting or script rules, a validating node rejects it. If it passes, the node may keep it in its memory pool of pending transactions and relay it outward. Other nodes do the same, so the transaction spreads through the network.
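A minimal sketch of those checks, assuming a simplified model in which outputs are plain amounts and scripts are ignored entirely. The `ToyMempoolNode` class and its dictionary layout are illustrative inventions; real node policy (fees, scripts, replacement rules) is far richer.

```python
class ToyMempoolNode:
    def __init__(self, utxos):
        # utxos maps an outpoint (txid, output_index) to its amount.
        self.utxos = dict(utxos)
        self.mempool = {}      # txid -> pending transaction
        self.reserved = set()  # outpoints already spent by pending transactions

    def check(self, tx):
        """Return None if the transaction passes, else a rejection reason."""
        inputs, outputs = tx["inputs"], tx["outputs"]
        if not inputs or not outputs:
            return "malformed: needs at least one input and one output"
        if len(set(inputs)) != len(inputs):
            return "spends the same output twice"
        for outpoint in inputs:
            if outpoint not in self.utxos:
                return "spends an output that does not exist"
            if outpoint in self.reserved:
                return "conflicts with a pending transaction"
        if any(amount <= 0 for amount in outputs):
            return "malformed: non-positive output"
        if sum(outputs) > sum(self.utxos[o] for o in inputs):
            return "outputs exceed inputs"
        return None

    def accept(self, tx):
        reason = self.check(tx)
        if reason is None:
            self.mempool[tx["txid"]] = tx
            self.reserved.update(tx["inputs"])
        return reason


node = ToyMempoolNode({("aa", 0): 50})
payment = {"txid": "t1", "inputs": [("aa", 0)], "outputs": [30, 19]}
double_spend = {"txid": "t2", "inputs": [("aa", 0)], "outputs": [49]}
print(node.accept(payment))       # None
print(node.accept(double_spend))  # conflicts with a pending transaction
```

The second transaction is well-formed on its own; it fails only because this node has already seen another transaction spending the same output.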

A miner is just a node with an added job: it assembles valid pending transactions into a candidate block and performs proof-of-work. If it finds a valid block first, it broadcasts that block. Then each receiving node does not merely accept the miner’s claim. It checks the block for itself: whether the proof-of-work is sufficient, whether the transactions are valid, whether no coins were created beyond what the rules allow, and whether the block builds on what the node considers the best chain.
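The asymmetry between producing and checking proof-of-work is easy to see in a toy version. The sketch below assumes a simplified header (arbitrary bytes plus an 8-byte nonce) and a deliberately easy target; real Bitcoin hashes an 80-byte header and derives the target from a compact difficulty field, which is not modeled here.

```python
import hashlib

MAX_HASH = 2**256 - 1


def header_hash(header: bytes, nonce: int) -> int:
    """Double SHA-256 of the toy header, read as a 256-bit integer."""
    data = header + nonce.to_bytes(8, "big")
    digest = hashlib.sha256(hashlib.sha256(data).digest()).digest()
    return int.from_bytes(digest, "big")


def meets_target(header: bytes, nonce: int, target: int) -> bool:
    # Verification is a single hash and one comparison.
    return header_hash(header, nonce) <= target


def mine(header: bytes, target: int) -> int:
    # Production is a brute-force search over nonces.
    nonce = 0
    while not meets_target(header, nonce, target):
        nonce += 1
    return nonce


EASY_TARGET = 2**244  # roughly 1 in 4096 hashes qualifies
header = b"toy block header"
nonce = mine(header, EASY_TARGET)
print(meets_target(header, nonce, EASY_TARGET))  # True
```

The miner may try thousands of nonces; every receiving node needs only one call to `meets_target` to confirm the claim.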

If the block passes validation, the node appends it to its local chain state and updates which outputs are spendable. Your wallet, if connected to a node or service watching the chain, can now see that the transaction is included in a block. As more blocks are added on top, reversing that payment becomes progressively harder, because an attacker would need to produce a competing chain with greater cumulative proof-of-work. The familiar language of “confirmations” is really just a summary of that node-level process: each confirmation is one more block built on top of the block containing your payment, so the payment sits deeper under chain work that validating nodes have independently accepted.
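Two small calculations sit behind that description: counting confirmations and comparing competing chains by cumulative work. The sketch below assumes each chain is given simply as a list of per-block targets, and uses the common estimate that a block's work is about 2^256 divided by (target + 1). The function names and the example chains are illustrative.

```python
def block_work(target: int) -> int:
    """Expected number of hashes needed to find a block at this target."""
    return 2**256 // (target + 1)


def chain_work(targets) -> int:
    return sum(block_work(t) for t in targets)


def best_chain(chains: dict) -> str:
    """Pick the chain with the most cumulative work, not the most blocks."""
    return max(chains, key=lambda name: chain_work(chains[name]))


def confirmations(tip_height: int, block_height: int) -> int:
    """Blocks at or above the one containing the payment."""
    if block_height > tip_height:
        return 0
    return tip_height - block_height + 1


chains = {
    "A": [2**240] * 3,  # three easier blocks
    "B": [2**236] * 2,  # two harder blocks
}
print(best_chain(chains))              # B
print(confirmations(800005, 800000))  # 6
```

Chain B wins despite having fewer blocks, which is why "longest chain" is better read as "chain with the most accumulated work."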

The same broad story carries across architectures even though the mechanics differ. On Ethereum today, an execution client processes transactions in the EVM and tracks state, while a consensus client follows the proof-of-stake chain and fork-choice rules. But again the central fact is unchanged: your node decides locally whether new data is valid and where the head of the chain is.

What's the difference between validating nodes and block producers (miners or validators)?

A common confusion is to treat “node” and “miner” or “validator” as synonyms. They are related, but they are not the same thing.

A node is the broad category: software connected to the network that verifies and shares chain data. A block producer is a node, or a system attached to nodes, that also participates in the mechanism that creates new blocks. In Bitcoin’s original design, miners are nodes performing proof-of-work, motivated by block rewards and transaction fees. In Ethereum’s current architecture, a functioning node requires both an execution client and a consensus client, while proposing or attesting to blocks requires an additional validator process tied to staked ETH.

The distinction matters because validation and production solve different problems. Validation answers, “Should this block count?” Production answers, “Who gets to propose the next block?” A network can have many more validating nodes than block producers, and that is usually healthy. Broad validation disperses power to check the rules. Block production, by contrast, is often concentrated by hardware economics, stake distribution, or operational complexity.

This separation is one reason nodes matter even to people who never mine or stake. Running a validating node means you still choose what rules you accept. You are not merely observing consensus; you are independently checking it.

Full node vs light client vs pruned vs archive: which node type should I run?

| Node type | Verification | Storage | Can serve RPC | Best for |
|---|---|---|---|---|
| Full node | Full validation | Current state + recent blocks | Yes (typical) | Personal verification and relaying |
| Light client | Headers + inclusion proofs | Minimal (headers only) | Limited | Low-resource devices and wallets |
| Pruned node | Full validation (no history) | Reduced (7 GB possible) | No historical serving | Low-storage operators |
| Archive node | Full validation | Full historical state (terabytes) | Yes (historical queries) | Researchers and analytics |

Once the core purpose is clear, the main node varieties make more sense. They are not arbitrary labels. They are different compromises between verification, storage, and serviceability.

A full node fully validates transactions and blocks. That is the canonical form on many chains. In Bitcoin guidance, a full node validates blocks and transactions and usually also relays them to peers, helping both the network and lightweight clients. In Ethereum, “full node” usually means a node that verifies the chain and maintains current enough state to serve ordinary use, though exact storage details depend on client and sync mode.

A light client reduces local resource use by verifying less data directly and relying on compact proofs. Bitcoin’s whitepaper describes simplified payment verification, or SPV, where a client keeps only block headers and verifies inclusion via proofs instead of storing full transaction data. Ethereum documentation also distinguishes light nodes from full and archive nodes, though current light-sync support has practical limits in proof-of-stake Ethereum. The analogy is a receipt checker instead of a warehouse auditor: useful, cheaper, but dependent on assumptions about what full nodes are making available. What the analogy explains is reduced local cost. Where it fails is that a well-designed light client still does real cryptographic verification; it is not just “trusting a summary” in the ordinary human sense.
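To make "verifies inclusion via proofs" concrete, here is a sketch of a Merkle inclusion check in the style Bitcoin uses (double SHA-256, with the last hash duplicated on odd-sized levels). The helper names are illustrative, and the leaves are made-up stand-ins for transaction IDs; a real light client would obtain the root from a validated block header and the proof from a peer.

```python
import hashlib


def dsha256(data: bytes) -> bytes:
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def merkle_levels(leaves):
    """Build every level of the tree, from the leaves up to the root."""
    if not leaves:
        raise ValueError("need at least one leaf")
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last hash on odd levels
        levels.append([dsha256(level[i] + level[i + 1])
                       for i in range(0, len(level), 2)])
    return levels


def merkle_proof(leaves, index):
    """What a full node would hand over: one sibling hash per level."""
    proof = []
    for level in merkle_levels(leaves)[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_inclusion(leaf, proof, root):
    """What the light client runs: it needs only the leaf, proof, and root."""
    current = leaf
    for sibling, sibling_is_left in proof:
        pair = sibling + current if sibling_is_left else current + sibling
        current = dsha256(pair)
    return current == root


txids = [dsha256(f"tx{i}".encode()) for i in range(5)]
root = merkle_levels(txids)[-1][0]
proof = merkle_proof(txids, 3)
print(verify_inclusion(txids[3], proof, root))  # True
```

The proof for one transaction among five is just three hashes, which is why a header-only client can check inclusion without downloading the block.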

A pruned node fully validates the chain but discards old raw block data it no longer needs for day-to-day operation. Bitcoin Core’s pruning mode can reduce storage dramatically, but the trade-off is that the node cannot serve the full historical block set and is incompatible with features such as full transaction indexing and some rescanning workflows. The important point is conceptual: pruning changes what data you retain, not whether you validated it when you first received it.

An archive node keeps much more historical data, often the full historical state needed for deep queries. Ethereum documentation notes that archive nodes can require storage measured in terabytes, which is why they are less attractive for average users. These nodes matter when applications or researchers need historical state lookups that ordinary full nodes may no longer serve efficiently.

Different chains expose these trade-offs differently, but the pattern repeats. Bitcoin emphasizes full validation and optional pruning. Ethereum emphasizes client pairings plus full, light, and archive modes. Polkadot similarly documents pruned, archive, and light nodes. The categories are best seen as answers to the same engineering question: how much should this machine verify, remember, and serve?

How do nodes provide RPC and infrastructure for wallets, exchanges, and dapps?

| Option | Trust model | Privacy | Cost | Operational burden | Best for |
|---|---|---|---|---|---|
| Run your own node | Trustless local verification | Queries kept local | Hardware + bandwidth | Maintenance and monitoring | Privacy-conscious users and dapps |
| Third-party RPC | Rely on provider's view | Provider sees your queries | Subscription or free | Minimal operational work | Fast development and small apps |

Once a node has a validated local view of the chain, it becomes useful not only to its operator but to applications around it. This is where nodes stop looking like abstract consensus participants and start looking like infrastructure.

Wallets use nodes to learn balances, broadcast transactions, and watch confirmations. Block explorers are built on nodes plus indexing layers. Exchanges and payment processors use nodes to decide when deposits are real enough to credit. Smart-contract applications read chain state through node APIs. Many of these interactions happen through RPC, a programmatic interface exposed by node software.

You can see this clearly in client documentation. Bitcoin Core publishes versioned RPC documentation for its node software. Ethereum execution clients expose JSON-RPC methods that let applications read state and simulate behavior. In Geth, for example, eth_call executes a read-only message call without creating an on-chain transaction, and eth_simulateV1 allows more elaborate local simulations without changing chain state. Polkadot documentation similarly treats RPC control as something node operators configure directly, including which methods are exposed and how many connections are allowed.
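As a rough illustration of what an application sends, the sketch below builds a JSON-RPC 2.0 request body for `eth_call` and decodes a hex result. It deliberately performs no network I/O. The contract address is a placeholder, the sample response is invented for the example, and the helper names are hypothetical. `0x18160ddd` is the standard selector for an ERC-20 `totalSupply()` call.

```python
import json


def rpc_request(method, params, request_id=1):
    """Serialize a JSON-RPC 2.0 request body."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}
    )


def eth_call_request(to, data, block="latest"):
    # eth_call takes a call object and a block tag; nothing is written on-chain.
    return rpc_request("eth_call", [{"to": to, "data": data}, block])


def parse_result(response_text):
    response = json.loads(response_text)
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "RPC error"))
    return response["result"]


def hex_to_int(quantity: str) -> int:
    return int(quantity, 16)


PLACEHOLDER_TOKEN = "0x" + "00" * 20  # not a real contract
body = eth_call_request(PLACEHOLDER_TOKEN, "0x18160ddd")
print(body)

# An invented response, shaped like what a node would return.
sample_response = '{"jsonrpc": "2.0", "id": 1, "result": "0x' + "0" * 61 + '3e8"}'
print(hex_to_int(parse_result(sample_response)))  # 1000
```

In practice the body would be sent as an HTTP POST to the node's RPC endpoint. With your own node that endpoint is a machine you control; with a provider it is theirs.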

This is why public RPC providers exist: running nodes well is operational work, and many applications outsource it. But outsourcing has consequences. If you rely on someone else’s node, you inherit their uptime, filtering choices, privacy posture, and view of the chain. Running your own node restores direct access to the protocol at the cost of hardware, bandwidth, maintenance, and monitoring.

Why does node syncing take time and what are the trade-offs between sync modes?

| Sync mode | Typical time | Bandwidth | Historical data | Validation guarantee | Best for |
|---|---|---|---|---|---|
| Initial Block Download (IBD) | Long (hours–days) | High | Full chain | Full block-by-block validation | Trust-minimizing, archival operators |
| Snap / fast sync | Shorter (minutes–hours) | Medium | Partial or recent state | Verified state with shortcuts | Everyday users who need speed |
| Pruned sync | Medium | Medium | Recent blocks only | Full validation on received blocks | Low-storage operators |

A node is easy to describe in one sentence and much harder to run well. The first operational hurdle is synchronization: how a new node catches up to the current chain.

On Bitcoin, a node starting from scratch goes through Initial Block Download, or IBD, downloading and validating the chain from earlier blocks up to the present. This can take substantial time and consume CPU, bandwidth, and storage. During IBD, the node is busy constructing a trustworthy local view; it is not instantly useful just because the software is installed.
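One small piece of that process, checking that every block links back to a known genesis block, can be sketched as follows. This toy uses JSON-encoded dictionaries as blocks and checks only linkage and height; a real IBD also validates proof-of-work and every transaction. All names here are illustrative.

```python
import hashlib
import json


def block_id(block) -> str:
    encoded = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def make_chain(length):
    """Build a toy chain in which each block commits to its predecessor."""
    chain, prev = [], "00" * 32
    for height in range(length):
        block = {"height": height, "prev": prev, "txs": [f"tx-{height}"]}
        chain.append(block)
        prev = block_id(block)
    return chain


def sync(blocks, trusted_genesis_id) -> int:
    """Return how many blocks were accepted, stopping at the first bad link."""
    accepted, prev = 0, None
    for block in blocks:
        if accepted == 0:
            if block_id(block) != trusted_genesis_id:
                break  # peer is on a different chain entirely
        elif block["prev"] != prev or block["height"] != accepted:
            break      # block does not extend what we validated so far
        prev = block_id(block)
        accepted += 1
    return accepted


chain = make_chain(5)
genesis_id = block_id(chain[0])
print(sync(chain, genesis_id))  # 5

chain[2]["txs"] = ["forged"]    # rewrite history in the middle
print(sync(chain, genesis_id))  # 3
```

Editing block 2 changes its identifier, so block 3 no longer points at it and the sync stops there. That chaining is why a node can rebuild a trustworthy view from untrusted peers, and also why it must walk the history rather than skip to the end.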

Ethereum has its own sync strategies. Current documentation describes snap sync as the default fast strategy on mainnet for the execution layer, while the consensus client synchronizes its own chain data. The existence of multiple sync modes tells you something important: there is no single canonical way to get a node into a usable state. There are only trade-offs between time, bandwidth, disk, and how much historical material is retained locally.

Polkadot operators face a similar reality. Sync time varies with hardware, and the node’s usefulness depends on whether it has fully caught up. Across chains, the pattern is stable: installing node software is the easy part; bringing it into verified sync with the live network is the real beginning.

This is also where many newcomers misunderstand decentralization. A decentralized protocol does not mean every machine gets a free, effortless copy of reality. It means anyone willing to bear the cost can independently reconstruct and verify that reality from network data and protocol rules.

What are the security limits of nodes and how can node setups fail?

People sometimes talk as if nodes make a blockchain secure by their mere existence. The truth is more conditional. Nodes enforce rules, but their security depends on the surrounding consensus assumptions and on the quality of the software they run.

In Bitcoin’s original proof-of-work model, safety against double-spending depends on honest chain work outpacing an attacker’s competing chain. If an attacker controls a majority of hashing power, the attacker’s chance of building a longer chain rises enough to threaten consensus. Nodes still validate exactly as instructed, but the economic assumption behind chain selection has broken down. So the fundamental protection is not “many nodes” by itself. It is many validating nodes plus a consensus mechanism whose assumptions still hold.
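The Bitcoin whitepaper quantifies this with a short calculation: the probability that an attacker controlling a fraction q of the hash power ever catches up from z blocks behind. The function below is a Python transcription of that calculation; the function and variable names are my own.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Chance an attacker with hash-power share q overtakes z confirmations."""
    if q >= 0.5:
        return 1.0  # with a majority, catching up is eventually certain
    p = 1.0 - q
    lam = z * (q / p)  # expected attacker progress while z honest blocks arrive
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total


for z in (0, 1, 6):
    print(z, attacker_success_probability(0.10, z))
```

For q = 0.10 the whitepaper tabulates roughly 0.2046 at one confirmation and about 0.00024 at six, which is where the common six-confirmation rule of thumb comes from. The drop-off is exponential only while the attacker holds a minority; at q ≥ 0.5 the function returns 1.0, matching the point above that validation alone cannot rescue a broken hash-power assumption.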

Software correctness matters too. Node bugs can threaten availability or even validity. Bitcoin Core’s disclosure of CVE-2018-17144 described both a denial-of-service issue and an inflation vulnerability in affected releases. More recent advisories and release notices also show that node software can contain severe operational bugs, including platform-specific crash cases and wallet migration issues. The lesson is simple: decentralization reduces some kinds of institutional trust, but it does not eliminate engineering risk.

Different chains reveal different failure modes. Solana’s 2022 outage report described validators overwhelmed by extreme transaction floods, leading to memory exhaustion, crashes, and stalled consensus until a coordinated restart. That does not mean “nodes failed” in some vague sense. It means particular networking, memory, and consensus dynamics under extreme load exceeded what the software and infrastructure could handle. Nodes are where protocol assumptions meet real computers, real operating systems, and real traffic.

Does running your own node guarantee better privacy, and what leaks remain?

Running your own node can improve privacy because you no longer reveal every query to a third-party RPC provider. But node networking can also leak information.

Bitcoin research on Dandelion++ starts from a blunt fact: ordinary transaction broadcast can allow observers to link transactions to originating IP addresses. That means the way nodes relay data is not just a performance detail; it can become a privacy channel. Proposed anonymity schemes try to reduce this leakage, but later research shows the protection depends heavily on adversary models and network assumptions. In other words, node-level privacy is not solved by saying “the network is decentralized.”

Reachability also affects what we can even observe about node populations. Bitnodes’ Bitcoin network snapshots estimate reachable nodes by active crawling, but that method naturally undercounts nodes that do not accept inbound connections or hide behind privacy networks. The same network can look very different depending on whether you count only publicly reachable peers, all validating machines, or all machines indirectly relying on nodes.

So when people ask, “How many nodes does this chain have?” the right response is often, “What kind of node, and visible to whom?” The count matters less than the structure: who validates, who is reachable, who serves others, and where operational concentration sits.

Why should I run a node? Benefits, costs, and typical use cases

The reasons cluster around one underlying motive: control over verification and access.

Some people run a node because they want to verify their own funds and transactions instead of trusting a wallet provider. Some run one because they need reliable RPC infrastructure for an exchange, explorer, staking setup, or dapp backend. Some run nodes to improve privacy by keeping their reads and broadcasts local. Some do it to support the network by relaying data and serving lighter clients. And some run nodes because they are developing protocol software or applications and need direct, version-specific access to client behavior.

Across ecosystems, the official guidance says much the same thing in different words. Bitcoin full-node documentation emphasizes validation and relay. Ethereum documentation says running your own node allows direct, trustless, private use of the network while helping decentralize it. Polkadot documentation highlights direct network interaction, privacy, and control over RPC requests and queries. These are not three separate stories. They are three expressions of the same mechanism: if you operate the verifying machine, you decide what to trust and how to access the chain.

Conclusion

A blockchain node is a computer running protocol software that independently checks, stores, and shares blockchain data. That is the basic fact, but the memorable part is why it matters: a blockchain is not a ledger people believe in; it is a ledger nodes keep proving to themselves.

Everything else follows from that. Full nodes maximize independent verification. Light clients reduce cost by relying on headers and proofs. Pruned and archive modes trade storage for capability. RPC turns nodes into application infrastructure. Consensus assumptions, software bugs, bandwidth limits, and privacy leaks all show up at the node layer because that is where protocol rules meet the real world.

If you remember one thing tomorrow, let it be this: the node is the place where decentralization becomes concrete. It is where “don’t trust, verify” stops being a slogan and becomes a running process on an actual machine.

How does this part of the crypto stack affect real-world usage?

Nodes determine how quickly and reliably on-chain events finalize, so they directly affect when deposits are spendable and trades are safe. On Cube Exchange, use simple node- and network-level checks before you fund or trade to reduce settlement, privacy, and congestion risks while following Cube’s trading workflow.

- Fund your Cube account with fiat or a supported crypto deposit on the correct network and confirm the deposit is credited.

- Check the chain’s finality and confirmation guidance before trading: for Bitcoin, target ~6 confirmations for large transfers; for PoS chains, wait for a finality checkpoint per the chain docs.

- If the asset crosses rollups or bridges, verify the rollup exit or challenge window has passed before relying on cross-chain settlement.

- Open the relevant market on Cube, pick an order type (use limit orders to control price during congestion; use market orders for small, immediate fills), review estimated fees and slippage, and submit.

Frequently Asked Questions

A node enforces the protocol rules locally: when it receives a transaction or block it independently checks formatting, spends, scripts/execution, and consensus conditions (e.g., sufficient proof-of-work or correct proof-of-stake/consensus headers) rather than trusting the sender; only after passing those checks will it accept and relay the data. This local verification is what removes the need for a central operator.

These labels reflect trade-offs: full nodes fully validate and typically relay data; light clients verify less (e.g., headers and inclusion proofs) to save resources but rely on assumptions about full nodes; pruned nodes fully validate but discard old block data so they cannot serve historical blocks or indexing; archive nodes retain complete historical state for deep queries but require terabytes of storage. Which to run depends on how much you need to verify, how much storage/bandwidth you can afford, and whether you must serve others.

Using a public RPC provider outsources uptime, filtering choices, privacy posture, and the node’s view of the chain to that operator, while running your own node restores direct, trust-minimizing access but incurs hardware, bandwidth, maintenance, and monitoring costs. Outsourcing is operationally convenient but inherits the provider’s availability and privacy trade-offs.

Syncing means rebuilding a trustworthy local view of the chain and can be slow because nodes must download and validate historical data (Bitcoin’s Initial Block Download) or choose among faster modes that trade off how much history is retained (e.g., Ethereum’s snap sync); the options trade time, bandwidth, disk usage, and how much historical material you keep locally. Installing software is easy, but reaching a verified synced state is the operational hurdle.

Nodes enforcing rules are necessary but not sufficient to stop all attacks: for example, in proof-of-work systems safety against double-spends relies on honest chain work outpacing an attacker’s hashing power, so if an attacker controls a majority of hashpower they can build a longer chain and subvert consensus despite nodes validating normally. In short, many validating nodes help, but the security also depends on the underlying consensus assumptions holding.

Running your own node reduces reliance on third parties and therefore improves privacy compared with querying public RPCs, but node-level networking (how transactions are relayed) can leak origins and deanonymization countermeasures like Dandelion++ have limits tied to network and adversary models, so running a node does not by itself provide perfect privacy. Practical privacy depends on relay protocols, attacker capabilities, and additional hardening.

Node software bugs can threaten availability or even ledger validity and have required coordinated fixes in the past. Notable examples include an inflation/DoS vulnerability disclosed for Bitcoin Core (CVE-2018-17144) and production outages where validator software crashed under extreme load (Solana’s 2022 incident). Operators must track releases and apply patches because decentralization does not eliminate engineering risk.

A node is any validating, relaying participant; a block producer (miner or validator) is a node that additionally participates in creating new blocks. Validation and production are distinct roles: verifying blocks answers "should this block count?" while producing answers "who proposes the next block?" Networks commonly have far more validating nodes than block producers. This separation lets many parties check rules while a smaller set competes or is selected to build blocks.
