What is Leader Election?

Learn what leader election is in consensus, why protocols choose temporary leaders, how failover works, and why proposer selection affects safety and MEV.

Introduction

Leader election is the mechanism a distributed system uses to decide which participant is allowed to drive progress for a period of time, for example by proposing the next block, coordinating replication, or breaking ties when many nodes could otherwise act at once. In consensus systems, this is not a cosmetic role assignment. It is a way of turning a noisy many-party problem into something the network can actually execute under delay, disagreement, and faults.

The puzzle is simple: if every node is allowed to propose independently, the system gets parallelism, but it also gets collisions, conflicting histories, and a much harder agreement problem. If only one node is allowed to lead, coordination becomes simpler, but the system now depends on replacing that leader when it is slow, faulty, or malicious. Leader election exists because both sides of that tradeoff are real. Consensus protocols use it to get the simplicity of coordination through a single proposer without giving that proposer permanent authority.

This is why leader election appears in systems that otherwise look very different. Raft uses elections to choose a leader that replicates a log. PBFT and HotStuff use view-based leaders to propose ordered commands while preserving safety under Byzantine faults. Tendermint rotates proposers by voting power. Ethereum consensus assigns one proposer per slot. Algorand and Ouroboros-family designs use cryptographic randomness so leaders are selected in ways that are hard to predict or target. The surface details vary, but the underlying problem is the same: who gets to act first, for how long, and how does everyone else know when to stop following them?

Why do consensus protocols use a leader instead of symmetric proposals?

Consensus is fundamentally about making many machines behave like one state machine. The difficulty is not just that machines may fail. It is that they may see messages in different orders, wait for different lengths of time, and temporarily disagree about what is happening. If every participant can introduce new ordering decisions at the same time, the space of possible histories explodes.

A leader narrows that space. Instead of asking all nodes to negotiate every ordering decision symmetrically, the protocol says: for this moment, proposals come from this node, and the others respond according to fixed rules. That does not by itself solve consensus; followers still need rules for when to accept, reject, or override the leader. But it removes a major source of nondeterminism. In Raft, this is explicit: the protocol is built around a strong leader, and log entries flow only from the leader to followers. That design makes the system easier to reason about because replication has a single direction.

The deeper invariant is not “the leader is always right.” It is the opposite: the leader is allowed to coordinate, but not to decide unilaterally. A healthy consensus design treats leadership as a proposal privilege constrained by quorum rules. The leader suggests an ordering or block; the quorum structure decides whether that suggestion becomes part of the shared history. This is why leader election belongs inside consensus, not above it. The protocol must define both the temporary authority of the leader and the exact conditions under which that authority ends.

What responsibilities does a leader have in consensus protocols?

In ordinary language, a leader is the node that gets to turn many pending possibilities into one concrete candidate history. In a replicated log, that means appending the next entries. In a blockchain, it usually means proposing the next block. In a BFT state machine, it may mean assigning sequence numbers to client requests.

That role bundles several powers that matter mechanically and economically. The first is coordination power: a leader can keep replicas working on the same candidate instead of scattering effort across many candidates. The second is timing power: a leader can determine which proposal is seen first, which often matters under network delay. The third is ordering power: in blockchains, the proposer usually has temporary control over transaction inclusion and order within its block or slot. That last power is why leader election is tied so closely to MEV. If a protocol picks one proposer for a slot, it is also choosing who gets monopoly power over ordering during that slot.

This is the part readers often underestimate. Leader election is not just about fault recovery after a crash. It is also about allocating scarce ordering rights. In permissioned systems the concern is mostly correctness and availability. In open blockchains, incentive design matters too, because whoever becomes leader may be able to extract value from ordering. The protocol therefore has to care not only about whether a leader can be replaced, but also about how often leaders rotate, whether selection is predictable, and whether temporary proposer power can be abused.

How does leader election, following, and replacement work in practice?

Most leader-based protocols share the same skeleton even when the details differ.

First, the system divides time into leadership epochs. Raft calls them terms. PBFT uses views. Ethereum consensus uses slots, with one proposer per slot. These epochs matter because they separate “who is leader now” from “who was leader before.” Without that separation, old messages from a dead or superseded leader could keep confusing the system.

Second, the protocol defines how a leader is chosen for a given epoch. That choice may come from a vote, a deterministic schedule, stake-weighted rotation, or cryptographic randomness. The important property is that honest participants can converge on the same answer.

Third, followers act under rules tied to the current epoch. They accept proposals only if they come from the valid leader for that term, view, round, or slot, and only if the proposal is compatible with whatever safety conditions the protocol maintains.

Fourth, if progress stalls, the system moves to a new epoch and tries a new leader. This is the heart of leader election in practice. The hard problem is usually not naming a leader once. It is replacing a bad one without losing safety or forgetting work that was already nearly agreed.
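The four steps above can be sketched as a tiny follower state machine. This is a minimal illustration, not any specific protocol: the `Follower` class, the rotate-by-epoch rule in `leader_for`, and the member names are all hypothetical, and the safety checks a real protocol attaches to proposals are omitted.

```python
from dataclasses import dataclass


@dataclass
class Follower:
    """Tracks the highest leadership epoch this node has seen."""
    current_epoch: int = 0

    def leader_for(self, epoch, members):
        # Hypothetical deterministic rule: rotate through members by epoch.
        # Real protocols may use votes, stake weighting, or a lottery instead.
        return members[epoch % len(members)]

    def accept(self, epoch, sender, members):
        # Reject messages from a superseded epoch (a dead or replaced leader).
        if epoch < self.current_epoch:
            return False
        # Reject proposals from anyone who is not the leader of that epoch.
        if sender != self.leader_for(epoch, members):
            return False
        # Simplification: seeing a valid leader for a newer epoch advances ours.
        self.current_epoch = epoch
        return True

    def on_timeout(self):
        # Progress stalled: move to the next epoch and expect its leader.
        self.current_epoch += 1
```

Once a follower times out and advances its epoch, a late message from the old leader is refused purely on its epoch number, which is the separation between "leader now" and "leader before" described above.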

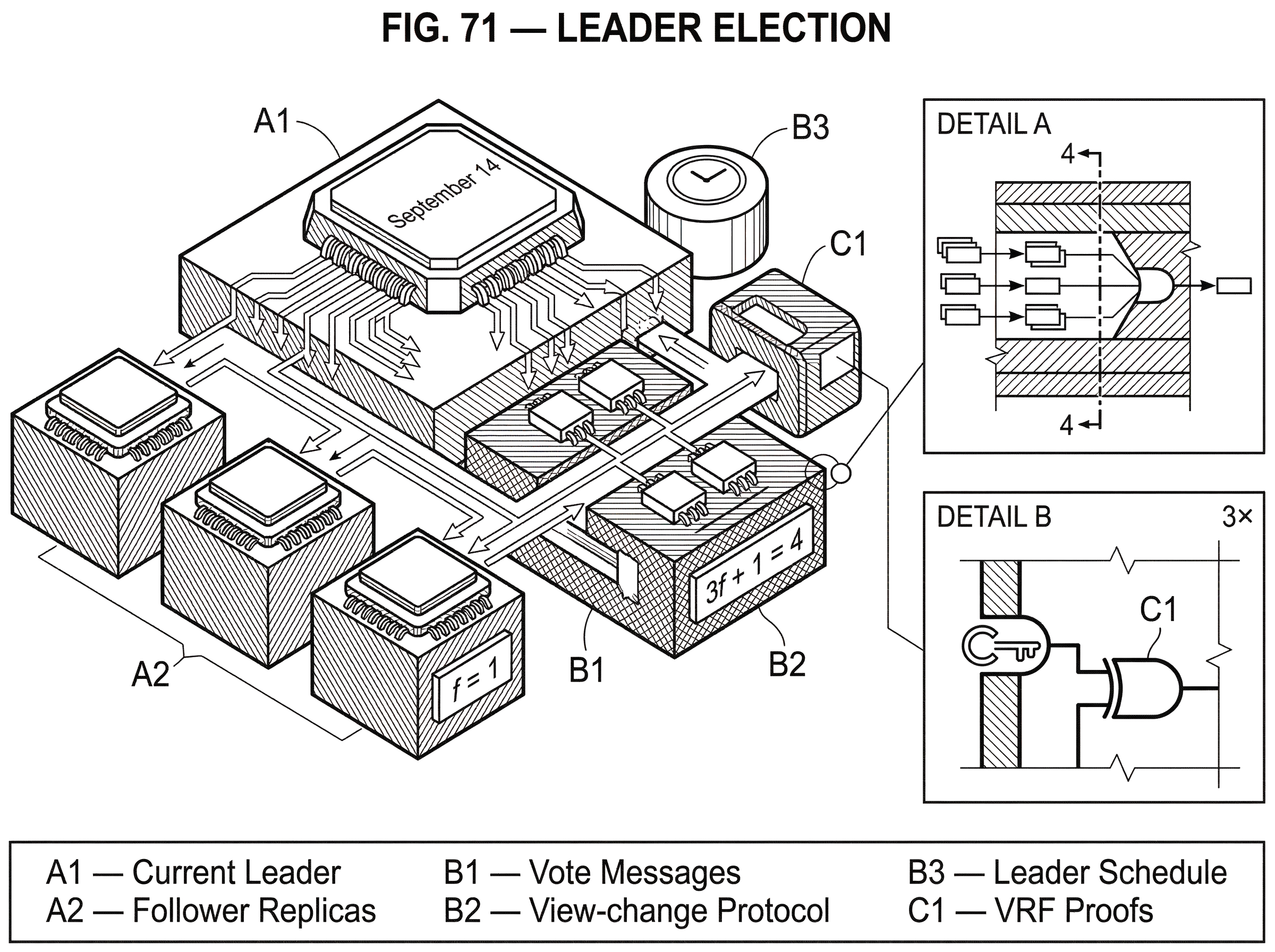

A short example makes this concrete. Imagine five replicas running a BFT protocol that tolerates f = 1 Byzantine fault. Tolerating f faults requires at least 3f + 1 replicas, so the minimum here is 4, and five is above it. In the current view, replica A is leader. A proposes an operation, but then stops responding. Some replicas saw the proposal; others did not. The system cannot just say “pick B and continue,” because B needs to know whether A’s proposal was already far enough along that it must be preserved. So replicas send evidence about what they have seen. The next leader collects enough of that evidence to reconstruct the safest continuation, then proposes under the new view. That is leader replacement as consensus work, not just a timeout and a name change.

What are the main methods to select a leader and their trade-offs?

| Method | Predictability | Attack risk | Liveness | Complexity |
|---|---|---|---|---|
| Voting after failure | Unpredictable | Low targetability | May be slow under contention | Moderate |
| Deterministic schedule | Highly predictable | High targetability | Fast when honest leader | Low |
| Cryptographic lottery (VRF) | Privately unpredictable | Reduced targeting window | High responsiveness | Higher crypto cost |

The organizing distinction is whether the system discovers the leader by voting after apparent failure or computes the leader from a schedule or lottery known in advance or derivable from shared state.

In Raft, leader election is explicit voting. Servers live in one of three states: follower, candidate, or leader. Time is divided into numbered terms. If a follower stops hearing from a leader, it waits for a randomized election timeout, becomes a candidate, increments its term, and asks peers for votes. Randomized timeouts are the key trick: if everyone timed out together, split votes would be common. By choosing timeouts randomly from an interval, Raft reduces the chance that multiple candidates launch simultaneously. Once a candidate receives a majority, it becomes leader for that term. Raft also guarantees Election Safety: at most one leader can be elected in a given term.
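A minimal sketch of the two mechanical pieces in that description: drawing a randomized election timeout and checking for a majority. The 150 to 300 ms interval is only an example range, and the function names are illustrative rather than taken from any Raft implementation.

```python
import random


def election_timeout(rng, low_ms=150.0, high_ms=300.0):
    """Draw a randomized election timeout from a fixed interval (example range)."""
    return rng.uniform(low_ms, high_ms)


def wins_election(votes_received, cluster_size):
    """A candidate becomes leader only with a strict majority of the cluster."""
    return votes_received > cluster_size // 2


def first_candidate(timeouts):
    """The server whose timeout fires first is the first to start campaigning."""
    return min(timeouts, key=timeouts.get)
```

Because each server draws its own timeout, one server usually times out noticeably earlier than the rest and can collect a majority before anyone else starts a competing campaign.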

In PBFT, the choice of leader within a view is deterministic, but replacement is driven by a view-change protocol. Each view has one primary and the rest are backups. If the primary appears faulty, replicas multicast VIEW-CHANGE messages for the next numbered view. The new primary for that view collects enough such messages and sends a NEW-VIEW message that tells replicas how to resume safely. The election mechanism here is not “vote among arbitrary candidates.” It is “advance to the next view, whose leader is predetermined, once enough replicas agree the current one is not making progress.”

Tendermint also uses a schedule rather than an ad hoc campaign. Its whitepaper describes proposers as chosen in round-robin fashion weighted by voting power, and the proposer-selection specification gives a more precise weighted round-robin mechanism. Each validator has an accumulated priority A(i) and voting power VP(i). On each run, the protocol increases every validator’s priority by its voting power, chooses the validator with the highest accumulated priority as proposer, then reduces that proposer’s priority by the total voting power P. The purpose is not mere bookkeeping. It makes proposer frequency track stake over time while remaining deterministic, so honest validators given the same state compute the same proposer.
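The three steps in that description (add voting power, pick the highest priority, subtract the total) can be sketched directly. This is a simplified illustration, not the full specification: the alphabetical tie-break and the validator names are assumptions, and handling of validator-set changes is left out.

```python
def next_proposer(priority, power):
    """Run one step of weighted round-robin and return the chosen proposer.

    priority: dict validator -> accumulated priority A(i), mutated in place
    power:    dict validator -> voting power VP(i)
    """
    total_power = sum(power.values())  # P in the text
    # Every validator's priority grows by its voting power.
    for validator in priority:
        priority[validator] += power[validator]
    # Highest accumulated priority proposes. Ties go to the alphabetically
    # first name here, which is an assumption made for this sketch.
    proposer = max(sorted(priority), key=lambda v: priority[v])
    # The proposer pays back the total voting power.
    priority[proposer] -= total_power
    return proposer


power = {"a": 1, "b": 2, "c": 3}
priority = {v: 0 for v in power}
sequence = [next_proposer(priority, power) for _ in range(6)]
# sequence == ["c", "b", "a", "c", "b", "c"]
```

Over six rounds, equal to the total voting power, each validator proposes exactly as many times as its voting power, and all priorities return to zero. That is the "frequency tracks stake" property in miniature.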

Ethereum consensus and Solana both expose another common pattern: an externally visible leader schedule. Ethereum has one proposer per slot; with N active validators, a validator is expected on average to propose once every N slots. Solana exposes an RPC that returns the leader schedule for an epoch, mapping validator identities to arrays of leader slot indices relative to that epoch. In both cases, leadership is not discovered by emergency vote at the moment of failure. It is assigned by the protocol’s scheduling machinery in advance for a given time window.
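A schedule shaped as described, with identities mapped to arrays of slot indices, is often easier to use once inverted into a slot-to-leader lookup. The sketch below assumes that shape and uses made-up identities; it makes no network call and does not model the RPC itself.

```python
def leader_by_slot(schedule):
    """Invert {identity: [slot indices]} into {slot index: identity}."""
    by_slot = {}
    for identity, slots in schedule.items():
        for slot_index in slots:
            by_slot[slot_index] = identity
    return by_slot


# Hypothetical sample data; real identities are validator public keys.
sample_schedule = {
    "validatorA": [0, 1, 2, 3],
    "validatorB": [4, 5, 6, 7],
}
lookup = leader_by_slot(sample_schedule)
```

With the lookup built, answering "who leads slot 5 of this epoch" is a dictionary read, which also shows how easily anyone can learn upcoming leaders under a public schedule.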

Then there is the cryptographic-lottery style used in systems like Algorand and Praos-family protocols. Algorand uses Verifiable Random Functions, or VRFs, so users can privately check whether they are selected to participate and then attach a proof of selection to their messages. The key shift is that leadership can be privately known before it is publicly revealed. That matters because a publicly known future leader can be targeted in advance. Ouroboros Praos pushes on the same pressure point by emphasizing unpredictability and resilience against adaptive corruption in a semi-synchronous setting.
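The private-check idea can be illustrated with a keyed hash standing in for the VRF. This is explicitly not a VRF: HMAC output cannot be verified by others without the secret key, whereas a real VRF produces a proof checkable against a public key. The function names, the seed, and the stake-proportional threshold are assumptions made for this sketch.

```python
import hashlib
import hmac


def lottery_draw(secret_key, seed, slot):
    """Map (key, shared seed, slot) to a pseudo-random number in [0, 1].

    HMAC-SHA256 is only a stand-in for a VRF evaluation.
    """
    message = seed + slot.to_bytes(8, "big")
    digest = hmac.new(secret_key, message, hashlib.sha256).digest()
    return int.from_bytes(digest, "big") / 2**256


def is_selected(secret_key, seed, slot, stake_fraction):
    """Selected when the private draw falls below a stake-weighted threshold."""
    return lottery_draw(secret_key, seed, slot) < stake_fraction
```

Only the key holder can compute the draw, so nobody else learns who won until the winner speaks. In a real design the winner would attach the VRF proof so others can check the claim.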

How can a protocol replace a stalled or malicious leader without violating safety?

| Approach | Fault model | Safety invariant | Overhead |
|---|---|---|---|
| PBFT view-change | Byzantine (≤ f) | Carry forward prepared requests | High message complexity |
| Raft up-to-date check | Crash faults | New leader has latest log | Low messaging overhead |
| Quorum-evidence merge | Crash or Byzantine | New leader inherits quorum state | Moderate evidence handling |

This is where leader election stops looking like administration and starts looking like the core of the protocol.

Suppose a leader proposes something, and some honest nodes accept it while others do not. If the system changes leader too casually, the next leader may propose a conflicting history and cause different honest nodes to commit different outcomes. So leader change needs to preserve a crucial invariant: anything that is already sufficiently endorsed must constrain future leaders.

PBFT shows this clearly. Within a view, the primary proposes an ordering using the pre-prepare, prepare, and commit phases. A replica is prepared for a request (view, sequence number, digest) once it has the request, the matching PRE-PREPARE, and 2f matching PREPARE messages from different backups. Those thresholds are not arbitrary. With 3f + 1 replicas, quorums large enough to move the protocol forward must intersect in enough honest replicas that conflicting histories cannot both look valid. During a view change, replicas send information about prepared requests so the new primary can carry forward the safe ordering rather than overwrite it.
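The threshold arithmetic can be checked in a few lines. The sketch assumes exactly n = 3f + 1 replicas and quorums of 2f + 1; the function names are illustrative, and `is_prepared` models only the counting condition stated above, not message validation.

```python
def quorum_size(f):
    """Quorum used to move the protocol forward when n = 3f + 1."""
    return 2 * f + 1


def min_honest_overlap(f):
    """Fewest honest replicas guaranteed to sit in any two quorums."""
    n = 3 * f + 1
    overlap = 2 * quorum_size(f) - n  # any two quorums share at least this many
    return overlap - f                # up to f of the shared replicas may be faulty


def is_prepared(has_request, has_pre_prepare, prepare_senders, f):
    """Prepared: the request, its PRE-PREPARE, and 2f PREPAREs from distinct backups."""
    return has_request and has_pre_prepare and len(set(prepare_senders)) >= 2 * f
```

For every f, two quorums share at least f + 1 replicas, so at least one is honest. An honest replica will not endorse two conflicting requests for the same view and sequence number, which is why two conflicting histories cannot both gather a quorum.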

Raft solves the same basic problem in a crash-fault model with different machinery. A candidate does not just ask for votes; it must have a log that is sufficiently up to date. This prevents an outdated server from becoming leader and overwriting entries that are already safely replicated. Raft’s commitment rule is intentionally conservative: leaders count replicas to commit only entries from their current term. Older-term entries may be present on a majority and still not be considered committed by simple counting. That rule exists because naive counting across terms can make safety arguments fail.
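Both rules reduce to short predicates. The sketch below follows the comparison Raft describes (last log term first, then last log index) and the current-term restriction on counting; the function and parameter names are illustrative.

```python
def candidate_is_up_to_date(cand_last_term, cand_last_index,
                            voter_last_term, voter_last_index):
    """A voter grants its vote only if the candidate's log is at least as
    up to date as its own: a later last term wins, and equal terms fall
    back to the longer log."""
    if cand_last_term != voter_last_term:
        return cand_last_term > voter_last_term
    return cand_last_index >= voter_last_index


def can_commit_by_counting(entry_term, current_term,
                           replicas_with_entry, cluster_size):
    """A leader commits by counting replicas only for entries from its own
    current term; older entries are not committed by counting alone."""
    has_majority = replicas_with_entry > cluster_size // 2
    return entry_term == current_term and has_majority
```

An entry from term 2 stored on three of five servers is therefore not committed by counting when the leader is in term 4. It becomes committed indirectly once a term-4 entry that follows it reaches a majority.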

The unifying idea is simple enough to remember: a new leader is not free to start from scratch. It inherits obligations from what quorums have already seen.

How does timing affect leader election, liveness, and failover?

Many consensus protocols can preserve safety without assuming precise clocks or fixed message delays. But leader election is usually where liveness runs into time.

Raft states this very cleanly. Safety does not depend on timing, but availability does. In practice, the system works well when broadcastTime ≪ electionTimeout ≪ MTBF, where MTBF is mean time between failures. If election timeouts are too short, nodes trigger unnecessary elections during ordinary network hiccups. If they are too long, failover becomes sluggish. Randomized timeouts help resolve split votes, but tuning still matters.
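A deployment can sanity-check its settings against that inequality. The sketch below reads "much less than" as "at least ten times smaller", which is an assumption chosen for illustration; the function name and the sample numbers are hypothetical.

```python
def timing_is_healthy(broadcast_ms, election_timeout_ms, mtbf_ms, margin=10):
    """Check broadcastTime << electionTimeout << MTBF with a chosen margin.

    margin=10 interprets '<<' as one order of magnitude (an assumption).
    """
    heartbeats_fit = broadcast_ms * margin <= election_timeout_ms
    failures_are_rare = election_timeout_ms * margin <= mtbf_ms
    return heartbeats_fit and failures_are_rare
```

With a 5 ms broadcast time and a 200 ms election timeout, a cluster whose servers fail every few weeks passes comfortably. The same timeout over a link with 100 ms broadcast time does not, and would be expected to trigger spurious elections.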

PBFT makes a different but related point. It preserves safety in full asynchrony, but liveness requires a weak synchrony assumption: the adversary cannot delay messages forever. Its liveness strategy uses timers, waiting rules, and increasing timeouts so that eventually enough honest replicas spend enough time in the same view to make progress under a correct primary. HotStuff, which is also leader-based under partial synchrony, emphasizes responsiveness: once network communication becomes synchronous, a correct leader can drive consensus at the pace of actual network delay rather than the worst-case timeout bound.
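One common way to realize "increasing timeouts" is to grow the timer with each consecutive failed view. The doubling factor, the cap, and the function name below are assumptions for illustration, not values taken from any protocol specification.

```python
def view_timeout(base_ms, failed_views, cap_ms=60_000):
    """Timeout for the next view after `failed_views` consecutive failures.

    Doubling per failure and the 60 s cap are illustrative choices.
    """
    return min(cap_ms, base_ms * 2 ** failed_views)
```

Growing the timer means that, once message delays stop increasing, some view eventually lasts long enough for a correct leader to finish a round before replicas give up on it.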

Tendermint likewise assumes partial synchrony and bounded clock drift. That assumption is not an implementation footnote; it is part of why proposer rotation and voting rounds eventually terminate. When readers hear “leader election,” they often imagine only a logical process. In practice, it is always entangled with the network model: crash faults or Byzantine faults, synchrony assumptions, and what the protocol is allowed to infer from silence.

Deterministic schedules vs. secret lotteries: what are the security and operational trade-offs?

There is a genuine design tension here.

A deterministic schedule is simple to compute and easy to coordinate around. Tendermint’s proposer selection requires determinism: honest validators with the same validator set, height, and round must compute the same proposer. Ethereum’s one-proposer-per-slot model gives the system a clear focal point every slot. Solana’s leader schedule makes future assignment queryable. These designs are operationally convenient.

But predictability has a cost. If everyone knows who the next leaders are, an adversary may target them with denial-of-service attacks or exploit periods of expected proposer control. That is one reason cryptographic private selection became attractive in some proof-of-stake designs. Algorand’s VRF-based private checking lets users learn locally whether they are selected and reveal that fact only when they send a message with proof. This shortens the window in which an identified leader can be attacked before acting. The Algorand paper also notes that participants keep no private state except their private keys, allowing immediate replacement after they send a message and helping mitigate targeted attacks once identity is revealed.

Praos-family work pushes further on unpredictability, especially under adaptive corruption. The point is not only fairness. It is that if an attacker can predict future leaders far enough ahead, it may corrupt or disable them strategically. VRFs and forward-secure techniques are ways to defend the election process itself against that kind of foresight.

There is no universal winner. Deterministic schedules are easier to debug and reason about operationally. Secret or less predictable elections can improve attack resistance, but they bring more cryptographic machinery and different complexity around verification and randomness generation.

How does leader election affect MEV and economic incentives in blockchains?

| Mechanism | Revenue effect | Fairness | MEV exposure | Mitigation |
|---|---|---|---|---|
| Weighted round-robin | Stable proposer income | Proportional over time | Moderate | Frequent rotation |
| Per-slot assignment | Predictable per-slot rewards | Proportional in expectation | High if predictable | Obfuscate schedule |
| Private lottery (VRF) | Uneven short-term income | Long-term fairness possible | Low targeted MEV | Private selection proofs |

In a blockchain, a leader is not just a coordinator. It is often a short-lived monopolist over block construction.

That matters because transaction ordering has value. The Flash Boys 2.0 study documents arbitrage bots competing in priority gas auctions, bidding up fees to obtain earlier execution and block position. The paper frames this as one form of MEV, miner extractable value, and argues that high fees paid for priority ordering can pose consensus-layer security risks. The connection to leader election is structural: if the protocol elects a leader, it is also deciding who gets first control over transaction ordering in that period.

This changes how we should think about fairness and rotation. In Tendermint, weighted round-robin selection aims to make proposer frequency roughly proportional to voting power over time. In proof-of-stake systems more broadly, leader election is part of how the protocol allocates revenue opportunities as well as responsibility. The fairness question is not only “does the system progress?” but also “who repeatedly gets the right to extract ordering rents?”

That is why proposer schedules, randomness sources, and stake weighting are not secondary details. They shape both security and incentives.

What can go wrong with leader election if network or fault assumptions fail?

Leader election depends on assumptions that are easy to gloss over until they fail.

If network delays are unbounded for too long, timeout-driven replacement can thrash: honest nodes keep deciding the leader is faulty because they cannot distinguish slowness from failure. If too many participants are Byzantine, quorum intersection arguments fail, and leadership changes can no longer safely preserve history. PBFT and Tendermint both rely on the familiar BFT threshold of tolerating fewer than 1/3 Byzantine replicas or voting power; at or beyond that point, the logic that keeps quorums from supporting conflicting outcomes breaks down.
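The threshold is easy to compute from the replica count. This sketch uses the standard n ≥ 3f + 1 bound for equally weighted replicas; the function name is illustrative.

```python
def max_byzantine_faults(n):
    """Largest f such that n >= 3f + 1, for equally weighted replicas."""
    return (n - 1) // 3
```

Four replicas tolerate one Byzantine fault, and so do five and six; it takes seven to tolerate two. With three replicas the bound is zero, which is why three-node clusters are a crash-fault configuration rather than a Byzantine one.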

If leader choice is too predictable, the elected node may be attacked before it can act. If it is too opaque or too random without common verifiability, honest nodes may disagree on who should lead. If election timing is tuned poorly, the system may oscillate between leaders and waste throughput on failover. If membership changes are handled badly, the protocol can accidentally create disjoint majorities. Raft addresses this with joint consensus, where decisions during the transition require separate majorities from both the old and the new configuration, so neither can act alone.
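The joint-consensus rule for membership changes can be sketched as a double majority check. Server names and function names here are hypothetical; the point is only that, during the transition, agreement needs a majority of the old configuration and a majority of the new one.

```python
def has_majority(votes, config):
    """True if strictly more than half of `config` appears in `votes`."""
    return len(votes & config) > len(config) // 2


def joint_decision(votes, old_config, new_config):
    """During a joint configuration, both majorities are required."""
    return has_majority(votes, old_config) and has_majority(votes, new_config)


old_config = {"a", "b", "c"}
new_config = {"c", "d", "e"}
```

Votes from `a` and `b` alone form a majority of the old configuration but not of the new one, so they cannot elect a leader. Likewise `d` and `e` alone cannot. That is how two disjoint majorities are prevented from acting at once.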

Operational failures can expose these pressures in unpleasant ways. Solana’s September 14, 2021 outage illustrates that liveness can fail when load, resource exhaustion, forks, and validator disagreement interact badly. The initial overview describes a transaction flood that caused unbounded memory growth in a forwarder queue, validator crashes, multiple forks, and eventually a stall because validators could not agree on the current state. The immediate root cause was not a “leader election bug” in isolation, but the incident is a reminder that who leads, how forks are resolved, and how the network re-converges are inseparable from real-world availability.

Key takeaway: what is the essential idea about leader election?

Leader election is the protocol’s answer to a basic question: who gets temporary authority to propose the next shared step, and how do we revoke that authority safely when reality changes?

Everything else follows from that. A leader simplifies coordination by giving the system a focal proposer. Quorum rules keep that proposer from becoming a dictator. Timeouts, terms, views, rounds, and slots delimit when leadership begins and ends. View changes, elections, and schedules decide how leadership rotates. Randomness and secrecy matter when predictability becomes an attack surface. And in blockchains, leader election also decides who briefly controls transaction ordering, which is why it reaches into incentives and MEV as well as correctness.

If you remember one sentence tomorrow, make it this: leader election is not about picking a boss; it is about concentrating coordination just enough to make agreement possible, while constraining and replacing that coordinator before it can break the system.

What should you understand about leader election before trading or transferring?

Understand how leader election affects finality, availability, and ordering incentives before you trade or move funds. On Cube Exchange, fund your account and follow chain-specific checks so you avoid transfers or trades during leader instability or periods of predictable proposer power.

1. Fund your Cube account with fiat on‑ramp or a supported crypto deposit so you have funds ready to trade or transfer.
2. Check the chain’s leader-selection and finality rules in the protocol docs or node RPC (e.g., whether it uses slots, weighted proposers, or VRFs) and note the recommended confirmations or finality threshold for safe settlement.
3. Choose the correct network and set the confirmation/finality wait you determined in step 2 before moving large amounts (for trading, prefer smaller, staggered fills if proposer predictability or MEV looks elevated).
4. Open the market or transfer flow on Cube, pick an order type that matches your risk (use Limit Order to reduce front‑running risk or Market Order for immediacy), review fees and the destination network, and submit.

Frequently Asked Questions

A leader narrows the space of possible histories by giving the system a single focal proposer for a period of time, which simplifies coordination and ordering while leaving final decisions to quorum rules rather than the leader alone.

Protocols require the new leader to inherit evidence of what quorums already agreed on so it cannot safely propose a conflicting history; PBFT does this by collecting prepared messages during view-change and Raft prevents outdated servers from winning elections by requiring sufficiently up-to-date logs before granting leadership.

Common approaches are (1) run-time voting after a suspected failure (Raft’s randomized election timeouts and majority votes) and (2) precomputed schedules or lotteries (round‑robin weighted by stake, one-proposer-per-slot, or cryptographic lotteries/VRFs); the trade-off is operational simplicity and transparency versus attack-resistance and unpredictability.

Because proposers temporarily decide which transactions enter and in what order, leader election determines who captures ordering rents like MEV; predictable, concentrated proposer power can therefore create economic incentives for abuse, so proposer schedules and randomness sources are part of both security and incentive design.

Timing rarely affects safety but it is critical for liveness: systems use timeouts, randomized delays, or partial‑synchrony assumptions to ensure progress, and poor timeout tuning can cause unnecessary elections or sluggish failover (Raft emphasizes electionTimeout ≫ broadcastTime but ≪ MTBF; PBFT requires eventual synchrony for liveness).

If network delays are unbounded or Byzantine participants exceed protocol thresholds (e.g., more than about 1/3 for PBFT-style quorums), quorum intersection guarantees fail and leader replacement or election machinery can no longer preserve a single shared history, causing safety or liveness breakdowns.

Deterministic schedules are easy to compute and reason about (helpful operationally and for debugging), but they make future leaders predictable and thus susceptible to targeted DoS or bribery; unpredictable or private selection reduces that attack surface but increases cryptographic and coordination complexity.

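Raft leaders count replicas to commit only entries from their current term; an older-term entry can sit on a majority of servers and still not count as committed until a current-term entry is committed on top of it. Separately, voters refuse candidates whose logs are less up to date than their own, which keeps an outdated server from becoming leader and overwriting committed entries. Both rules are deliberately conservative so that safety reasoning stays simple.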

There is no single ideal rotation rate or timeout; practical settings balance fast failover (short timeouts) against false positives during transient network hiccups (long timeouts). Protocols provide randomized timeouts, backoff, or increasing timeouts to reduce harmful oscillation, but tuning remains deployment‑specific.
