What is Sybil Resistance?

Learn what Sybil resistance is, why open blockchains need it, and how proof-of-work, proof-of-stake, and other designs make influence costly.

Introduction

Sybil resistance is the part of a consensus system that stops one real actor from pretending to be many participants and gaining outsized influence. That sounds narrow, but it is actually one of the central problems in open distributed systems. If a network lets anyone join, and if votes are counted by identities alone, then an attacker can simply create more identities than everyone else combined. In that world, “decentralized” becomes mostly cosmetic.

This is why the question is not merely “who is allowed to participate?” but “what gives participation weight?” In a small permissioned system, the answer can be known institutional identity. In an open blockchain, that usually does not work. The system instead needs some way to make influence costly or otherwise hard to duplicate. That design choice is what people mean by Sybil resistance.

The basic attack is called a Sybil attack: a single entity forges many identities and uses them to defeat any defense that assumes many identities mean many independent actors. John Douceur’s classic result makes the problem sharper: without a trusted identity authority, Sybil attacks remain possible unless you assume unusually strong and generally impractical conditions about resources and coordination. That is the compression point for the whole topic. **Sybil resistance is not mainly about naming participants correctly. It is about finding a credible scarce basis for influence.**

Why are fake identities a serious threat to open consensus?

An identity in a distributed system is just a persistent handle: some representation that lets the system treat repeated messages as coming from “the same participant.” That is useful for networking, reputation, replication, and voting. But the moment the system relies on counts of identities, it silently assumes that each identity corresponds to a distinct underlying actor. If that assumption fails, the protocol can be manipulated at very low cost.

Here is the mechanism. Suppose a protocol says a decision is accepted if most nodes agree. If creating a node is cheap, then the protocol is not really majority rule among independent participants. It is majority rule among labels. An attacker can mint labels much faster than honest users can recruit actual independent people, machines, or capital. The attacker has not broken cryptography or invalidated signatures. They have broken the mapping between identity and independence.
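The failure can be shown in a few lines. This is a minimal sketch, assuming a hypothetical protocol that accepts whatever a strict majority of identities votes for; the function name and the participant labels are illustrative and not taken from any real system.

```python
from collections import Counter

def majority_by_identity(votes):
    """Return the option backed by a strict majority of identities, else None."""
    tally = Counter(votes.values())
    option, count = tally.most_common(1)[0]
    return option if count > len(votes) / 2 else None

# Ten independent honest participants vote to accept.
votes = {f"honest-{i}": "accept" for i in range(10)}

# One attacker mints eleven labels at negligible cost and votes to reject.
votes.update({f"sybil-{i}": "reject" for i in range(11)})

winner = majority_by_identity(votes)
print(winner)  # the single attacker decides the outcome
```

No signature was forged here. The count is arithmetically correct; it is just counting labels rather than independent actors.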

That is why Sybil attacks defeat redundancy-based thinking. Many distributed systems become robust by replicating work across supposedly separate participants. But replication only helps if those participants fail independently. If ten validators, peers, or witnesses are all controlled by one actor, then “ten” no longer means ten independent checks. It means one controller speaking through ten mouths.

Douceur’s result is important because it rules out a tempting intuition: perhaps there is some purely protocol-level trick that can infer unique human or organizational identity without any anchor outside the protocol. The paper argues no. Without a logically centralized authority, or very strong assumptions about scarce resources and simultaneous verification, Sybil attacks are always possible. Even under friendly network assumptions, the problem remains. That tells us something fundamental: open consensus cannot get security from identity count alone.

How do systems prevent one-identity-one-vote to resist Sybil attacks?

| Approach | Scarce basis | Openness | Main assumption | Best for |
|---|---|---|---|---|
| Trusted membership | Vetted institutional identity | Low openness | Honest gatekeeper exists | Consortium or private chains |
| Resource-based (PoW/PoS) | Computation or bonded stake | High openness | Scarce resource controls influence | Public blockchains |
| Social-graph | Trust links / attack edges | Moderate openness | Attack edges remain limited | Communities with strong trust |

Once you see the failure mode, the design principle becomes clear. A system must avoid giving power on a simple one-identity-one-vote basis unless identities themselves are tightly controlled. Instead, it must tie influence to something that is harder to forge than account names.

There are only a few broad ways to do that. One is to rely on a trusted membership authority, explicit or implicit, so that each recognized identity corresponds to a vetted entity. Another is to tie power to a scarce resource, such as computation, stake, storage, bandwidth, or some other costly input. A third family tries to use social structure, where fake identities may be easy to create but trust links from honest users are harder to fabricate at scale. These are very different implementations, but they all solve the same problem: they try to restore a meaningful relationship between apparent participation and underlying cost or trust.

The key tradeoff is that every Sybil-resistance mechanism imports an assumption from somewhere. Proof-of-work imports scarcity of computation and energy. Proof-of-stake imports scarcity of stake and the ability to punish it. Permissioned systems import trusted identity governance. Social-graph systems import assumptions about human trust networks having limited “attack edges.” There is no assumption-free version waiting to be discovered; the question is which assumption is explicit, auditable, and appropriate for the system.

How does proof-of-work provide Sybil resistance and what are its limits?

Bitcoin’s whitepaper is unusually clear on this point. It does not try to identify unique humans or trusted institutions. Instead, it changes the meaning of voting power. Satoshi describes proof-of-work as solving representation in majority decision-making by making it effectively one-CPU-one-vote rather than one-IP-address-one-vote or one-account-one-vote.

The mechanism is straightforward. To propose a block with authority, a miner must find a nonce that makes the block hash satisfy a difficulty target. Producing that proof is expensive on average, while verifying it is cheap. This asymmetry matters. It means a participant cannot cheaply create influence by minting identities. A thousand miner identities with no hash power are still nearly powerless; a single identity controlling enormous hash power is influential. The protocol deliberately stops caring about how many names exist and starts caring about how much scarce work backs them.
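The cost asymmetry between producing and checking a proof can be illustrated with a toy nonce search. This is a sketch only: it uses a single SHA-256 over an arbitrary byte string and a made-up `difficulty_bits` parameter, whereas Bitcoin hashes an 80-byte block header with double SHA-256 against a compactly encoded target.

```python
import hashlib

def block_hash(header: bytes, nonce: int) -> int:
    """Hash the header together with a nonce and read the digest as an integer."""
    digest = hashlib.sha256(header + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")

def mine(header: bytes, difficulty_bits: int) -> int:
    """Search nonces in order until the hash falls below the target."""
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while block_hash(header, nonce) >= target:
        nonce += 1
    return nonce

def verify(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Checking a claimed proof takes a single hash."""
    return block_hash(header, nonce) < (1 << (256 - difficulty_bits))

header = b"toy block header"
nonce = mine(header, difficulty_bits=12)   # about 2**12 attempts on average
print(nonce, verify(header, nonce, 12))
```

Each additional difficulty bit doubles the expected search effort, while verification stays at one hash. Registering more miner names changes neither number.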

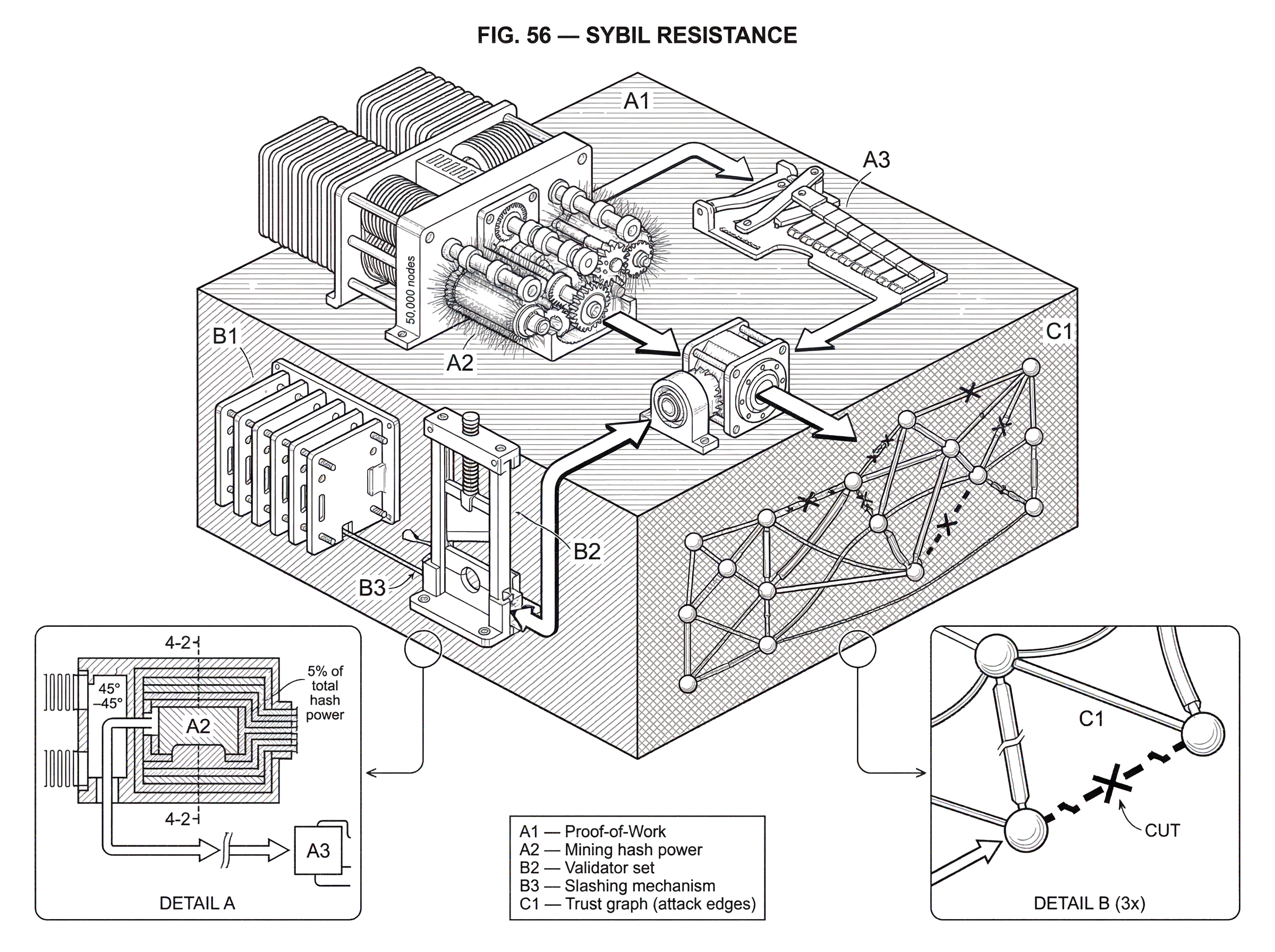

A worked example makes the shift concrete. Imagine an attacker connects 50,000 nodes to the network and claims to be 50,000 independent miners. If consensus were based on node count, this would be devastating. But in Bitcoin, each of those nodes still needs real computational work to extend the chain. If together they control only 5% of total hash power, then all 50,000 identities together still produce blocks at roughly the rate expected from 5% of the work. The labels multiply, but the influence does not. The system has made identity cheap but authority expensive.
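The same example can be simulated. The sketch below assumes a simplified model in which each block is won by an identity with probability proportional to its hash power; the function name, the fixed seed, and the single "honest-pool" entry are illustrative choices, not part of any real client.

```python
import random

def simulate_blocks(hash_by_identity, n_blocks, seed=0):
    """Pick a winner for each block, weighted by each identity's hash power."""
    rng = random.Random(seed)
    ids = list(hash_by_identity)
    weights = [hash_by_identity[i] for i in ids]
    return rng.choices(ids, weights=weights, k=n_blocks)

# 50,000 attacker identities share 5% of total hash power between them.
attacker_ids = 50_000
miners = {f"sybil-{i}": 5.0 / attacker_ids for i in range(attacker_ids)}
miners["honest-pool"] = 95.0   # everyone else, lumped together for brevity

winners = simulate_blocks(miners, n_blocks=20_000)
attacker_share = sum(w.startswith("sybil-") for w in winners) / len(winners)
print(f"attacker block share: {attacker_share:.3f}")  # close to 0.05
```

Changing `attacker_ids` to 1 or to 5,000,000 leaves the expected share at about 5%, because only the summed hash power enters the weights.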

This is why the longest-chain rule matters. Nodes follow the chain with the greatest cumulative proof-of-work, because that chain represents the largest committed pool of computation. The rule is not “believe the loudest crowd.” It is “believe the history that would have been most expensive to fabricate.” Under the whitepaper’s security assumption, the system remains secure so long as honest nodes collectively control more CPU power than any cooperating attacker group.

That last sentence also shows where proof-of-work breaks down. It does not make attacks impossible; it makes them costly and threshold-dependent. If attackers control equal or greater effective mining power, they can outcompete honest miners. If mining centralizes into a few pools or specialized operators, the nominal openness of the system may hide concentrated control over the scarce resource that matters. And economic research on bribery attacks suggests that under some conditions, buying temporary cooperation from dispersed miners may be cheaper than simplistic models imply. So proof-of-work solves the identity problem by moving security to the resource layer, but then the resource layer becomes the critical assumption.
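The threshold behaviour can be made quantitative with the catch-up calculation from the Bitcoin whitepaper, which estimates the probability that an attacker with hash share q ever overtakes the honest chain from z blocks behind. The Python port below is a sketch of that published formula; the early return for q >= p is an added guard, since the formula only applies when the attacker is in the minority.

```python
import math

def attacker_success_probability(q: float, z: int) -> float:
    """Probability an attacker with hash share q catches up from z blocks behind."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # with half or more of the hash power, catching up is certain
    lam = z * (q / p)  # expected attacker progress while honest miners find z blocks
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total

for q in (0.10, 0.30, 0.45):
    row = ", ".join(f"z={z}: {attacker_success_probability(q, z):.4f}" for z in (1, 6, 12))
    print(f"q={q:.2f} -> {row}")
```

The probability falls off quickly in z when q is small and slowly as q approaches one half, which is the sense in which proof-of-work security is costly and threshold-dependent rather than absolute.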

How does proof-of-stake defend against Sybil attacks and what assumptions does it require?

| Variant | Scarce basis | How it bounds influence | Punishment mechanism | Practical limit |
|---|---|---|---|---|
| Basic PoS validator | Locked on-chain stake | Voting weighted by bond | Slashing for misbehavior | Depends on stake distribution |
| Nominated PoS (Polkadot) | Nominated locked capital | Election spreads stake | Slashing nominators and validators | Minimum active bond complexity |
| Ethereum-style PoS | Deposit contract stakes | Validators tied to deposits | Slashing and exit penalties | Weak subjectivity considerations |

Proof-of-stake makes the same conceptual move as proof-of-work, but with a different scarce resource. Instead of burning electricity to earn block production rights, participants lock stake. Influence is then weighted by economically committed capital rather than by identity count.

This is the deep continuity between PoW and PoS. People often compare them as if they were entirely different categories. At the level of Sybil resistance, they are variants of the same idea: replace cheap identities with costly weight. In PoW, the cost is externalized into ongoing resource expenditure. In PoS, the cost is internalized as collateral at risk.

Ethereum’s proof-of-stake specs include a deposit contract and validator machinery precisely because joining consensus is not just a matter of opening another network connection. A validator identity becomes meaningful only when backed by stake under protocol rules. The same is true across staking-based systems, even when the details differ. In Polkadot’s Nominated Proof-of-Stake, capital locked on-chain is the basis of security, and an attack requires acquiring and risking enough stake to matter. In Cardano’s Ouroboros Praos, security is framed around an honest majority of stake and strengthened by cryptographic tools such as VRFs and forward-secure signatures. In Solana, validators post bonds, and slashing is used to punish provable conflicting votes.

Here again, a concrete example helps. Suppose an attacker creates 10,000 validator keypairs in a PoS network. On paper, that looks like massive participation. But if each validator requires bonded stake, the attacker’s influence is bounded by the stake actually locked behind those validators. Splitting S units of stake across 10,000 identities usually does not create more total voting weight than holding S under one identity. The protocol is designed so that fragmentation of identity does not create new authority. If anything, spreading stake may introduce overhead and operational risk without adding power.
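The arithmetic behind that example is short. The sketch below assumes a generic stake-weighted scheme in which voting share is simply controlled stake divided by total stake; the validator names and amounts are made up, and real protocols add per-validator minimums, caps, or election rules that this ignores.

```python
from fractions import Fraction

def voting_share(stakes, controlled):
    """Fraction of total bonded stake held by the given validator identities."""
    total = sum(stakes.values())
    return Fraction(sum(stakes[v] for v in controlled), total)

honest = {f"honest-{i}": 900 for i in range(10)}   # 9,000 units in total
S = 1_000                                          # attacker's stake

# Case 1: the attacker bonds S under a single validator identity.
single = dict(honest, attacker=S)
share_single = voting_share(single, ["attacker"])

# Case 2: the attacker splits the same S across 10,000 validator identities.
sybil_ids = [f"sybil-{i}" for i in range(10_000)]
split = dict(honest, **{v: Fraction(S, len(sybil_ids)) for v in sybil_ids})
share_split = voting_share(split, sybil_ids)

print(share_single, share_split)  # both are 1/10
```

Exact fractions are used so the two cases compare equal without floating-point noise.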

Slashing is what makes PoS more than a simple registry of balances. If a validator can sign conflicting histories at no cost, identities may still be cheap to weaponize. Slashing raises the price of misbehavior by threatening bonded stake. Polkadot explicitly frames this as partial or full confiscation for misbehavior; Solana similarly describes slashing for double-voting. The system is saying: not only must you post scarce capital to speak with weight, you may lose that capital if you abuse the role.
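A minimal sketch of the double-vote case follows. It assumes votes are already authenticated and treats two different block IDs from the same validator at the same height as slashable evidence; the `Vote` type, the helper names, and the 50% penalty are hypothetical and do not reflect the actual parameters of Polkadot, Solana, or any other network.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Vote:
    validator: str
    height: int
    block_id: str

def find_equivocators(votes):
    """Return validators that voted for two different blocks at the same height."""
    seen = {}
    offenders = set()
    for v in votes:
        key = (v.validator, v.height)
        if key in seen and seen[key] != v.block_id:
            offenders.add(v.validator)
        seen.setdefault(key, v.block_id)
    return offenders

def apply_slashing(bonds, offenders, slash_percent):
    """Deduct slash_percent of the bond from each offender (integer units)."""
    return {
        v: bond - (bond * slash_percent // 100 if v in offenders else 0)
        for v, bond in bonds.items()
    }

votes = [
    Vote("val-a", 10, "0xaaa"),
    Vote("val-b", 10, "0xaaa"),
    Vote("val-b", 10, "0xbbb"),   # conflicting vote at the same height
    Vote("val-a", 11, "0xccc"),
]
offenders = find_equivocators(votes)
bonds = apply_slashing({"val-a": 1000, "val-b": 1000}, offenders, slash_percent=50)
print(offenders, bonds)
```

A production implementation would verify both signatures before penalizing anyone, since unauthenticated evidence would let an attacker frame honest validators.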

Still, PoS has its own assumptions and edges. Security depends on stake distribution, not just stake existence. If an attacker gains enough stake, colludes with enough stake, rents influence indirectly, or exploits users who delegate without monitoring, the system can weaken. Some PoS designs also need weak subjectivity: a node coming online cannot infer the correct chain from genesis purely from objective resource traces in the same way PoW tries to do; it needs a reasonably recent trusted checkpoint or social knowledge to avoid certain long-range history problems. So PoS resists Sybil attacks, but not by magic. It does so by making consensus membership and authority economically gated and punishable.

How do permissioned validators eliminate Sybil risk, and what openness trade-offs follow?

There is a simpler way to get Sybil resistance: do not allow arbitrary identities to count. If a consortium, company, or public authority decides who the validators are, then fake self-created identities can simply be excluded. This is the model behind permissioned networks and proof-of-authority systems.

Mechanically, this works because the protocol no longer has to discover distinctness on its own. It imports distinctness from an external governance process. A validator key is meaningful because some trusted authority says it corresponds to an approved entity. Reputation, contractual accountability, and removal procedures take the place of open economic competition.

This is often the most direct answer to the Sybil problem, but it changes what the system is. The network becomes less open and more dependent on institutional trust. That may be exactly the right choice for some applications. The important point is conceptual honesty: the system is Sybil-resistant because it has a gatekeeper, not because it has solved decentralized identity uniqueness in the abstract.

When can social-graph defenses (SybilGuard, SybilLimit) stop Sybil attacks?

Some systems try to avoid both central identity authorities and pure resource pricing by using social relationships. The insight behind SybilGuard and SybilLimit is subtle. A malicious actor can create many fake identities internally, but it may be much harder for those identities to obtain many genuine trust links from honest users. If the number of such honest-to-malicious links (often called attack edges) stays limited, then the Sybil region is connected to the honest region through a relatively small cut in the trust graph.
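The notion of a small cut can be made concrete with a toy trust graph. This sketch only counts attack edges given a known honest set, which a real defender does not have; it is not an implementation of SybilGuard or SybilLimit, and all node names are invented.

```python
def attack_edges(edges, honest):
    """Edges with exactly one endpoint in the honest set."""
    return [(a, b) for a, b in edges if (a in honest) != (b in honest)]

honest = {"alice", "bob", "carol", "dave"}
edges = [
    ("alice", "bob"), ("bob", "carol"), ("carol", "dave"), ("dave", "alice"),
    ("dave", "mallory"),            # the one trust link the attacker obtained
]
cut_before = attack_edges(edges, honest)

# The attacker now fabricates 1,000 identities, all linked to each other via mallory.
sybils = [f"sybil-{i}" for i in range(1_000)]
edges += [("mallory", s) for s in sybils]
edges += list(zip(sybils, sybils[1:]))
cut_after = attack_edges(edges, honest)

print(len(cut_before), len(cut_after))  # the cut does not grow with the Sybil region
```

The Sybil region can grow without limit at no cost, but the number of links into the honest region changes only when an honest user extends trust.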

SybilGuard exploits this structure using verifiable random routes through the graph. Very roughly, honest nodes’ routes tend to remain in the honest region and intersect in ways that Sybil routes cannot easily imitate unless there are enough attack edges. This lets the protocol bound how many Sybil groups can be accepted and how large those groups can be. SybilLimit builds on the same idea and improves the bounds significantly, with near-optimal guarantees within that model family.

This is an elegant approach because it attacks the real bottleneck: not fake-account creation, which is easy, but integration into the honest trust fabric, which may be hard. It is also a reminder that Sybil resistance need not always be economic. Sometimes the scarce resource is social capital.

But the assumptions are demanding. These schemes rely on structural properties of social networks, such as fast mixing, and on the attack edge count staying limited. If adversaries can buy, coerce, or socially engineer many trust relationships, the cut ceases to be small and the guarantee weakens. If the social graph does not have the right structure, the mathematics no longer gives the same comfort. So these systems are best understood not as universal Sybil solutions, but as defenses that work when social trust really is costly to counterfeit.

Why is Sybil resistance foundational to consensus safety and liveness?

Consensus protocols are often described in terms of safety and liveness: not finalizing conflicting histories, and continuing to make progress. But in open systems, both properties quietly depend on Sybil resistance first. If anyone can cheaply become “most of the network,” then threshold rules stop meaning what they appear to mean.

This is easiest to see in Byzantine fault tolerant systems. A rule like “decide when two-thirds of validators agree” only says something if validator weight cannot be inflated for free. Tendermint, for example, is a BFT state machine replication engine, but the practical question is who gets to be in the validator set and with what weight. That membership layer (usually stake, delegation, and slashing in Cosmos-style systems) is what gives the BFT threshold real meaning. Without that layer, the threshold is just arithmetic over names.
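The difference between a threshold over names and a threshold over weight is easy to demonstrate. The sketch below assumes a strict more-than-two-thirds rule over voting power; the validator names and stake figures are invented, and it is not code from Tendermint or any Cosmos chain.

```python
def has_supermajority(weights, approving):
    """True if the approving validators hold strictly more than 2/3 of total weight."""
    total = sum(weights.values())
    approving_weight = sum(weights[v] for v in approving)
    return 3 * approving_weight > 2 * total   # integer form of weight / total > 2/3

# Four honest validators with 25 units of stake each, nine attacker keys with 1 each.
stake = {f"honest-{i}": 25 for i in range(4)}
attacker_keys = [f"sybil-{i}" for i in range(9)]
stake.update({k: 1 for k in attacker_keys})

# Counting names: every validator weighs 1, so 9 of 13 names clears the bar.
by_name = has_supermajority({v: 1 for v in stake}, attacker_keys)

# Counting stake: 9 of 109 units is nowhere near two-thirds.
by_stake = has_supermajority(stake, attacker_keys)

print(by_name, by_stake)
```

The consensus rule is identical in both calls. Only the weighting, which is the Sybil-resistance layer, decides whether the threshold means anything.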

Leaderless protocols such as the Snow family used in Avalanche change the communication pattern, using repeated network subsampling and metastable convergence rather than a classical leader. But even there, the same underlying issue remains: the protocol must sample from a population whose influence is not cheaply dominated by fabricated identities. The sampling mechanism may not require accurate knowledge of every participant, yet some membership and weighting assumptions still carry the Sybil-resistance burden. Consensus logic and Sybil resistance are separable on paper, but in deployed systems they are tightly coupled.

Common misconceptions about Sybil resistance

A common misunderstanding is to think Sybil resistance means “preventing fake accounts.” That is too narrow. Most open blockchains do not try to stop fake accounts from existing. You can create many addresses and many network peers. What they try to stop is those accounts acquiring governance or consensus influence for free.

Another misunderstanding is to think decentralization automatically strengthens Sybil resistance. Sometimes it does, because the scarce resource becomes more widely held. But decentralization can also complicate security economics. Research on bribery attacks shows that when power is spread among many small operators, each operator may bear only a small personal share of the damage from a successful attack, which can make corruptive coordination cheaper than naive models suggest. The right lesson is not that decentralization is bad, but that the cost of corrupting the relevant resource is a strategic question, not a slogan.

A third misunderstanding is to treat Sybil resistance as binary. In reality it is almost always threshold-based and assumption-based. A network is not “Sybil-proof.” It is Sybil-resistant under stated conditions: honest majority of hash power, honest majority of stake, bounded attack edges, trusted validator admission, or similar. The important engineering question is whether those assumptions are legible and whether the failure mode is acceptable.

How do Sybil problems affect airdrops, token allocation, and other non-consensus actions?

| Use case | Sybil risk | Defense type | Timing | Typical tools |
|---|---|---|---|---|
| Airdrops | Wallet splitting captures rewards | Post-hoc detection | After distribution | Graph clustering and heuristics |
| Access control | Fake accounts gain privileges | Admission controls | Before access | Permissioning and caps |
| Reputation systems | Inflated reputations via fakes | Social verification | Ongoing | Trust links and manual review |

The same problem appears wherever a protocol allocates value or influence by apparent participation. Airdrops are a good example. If rewards are meant for many distinct users, but one operator can split activity across many wallets, then the distribution can be captured by identity multiplication. That is why projects sometimes run Sybil-detection pipelines over on-chain graphs, funding patterns, and behavioral clusters.

Arbitrum’s published methodology for airdrop filtering is a useful example of what this looks like in practice. It builds graphs from transfers, funding transactions, and sweep behavior, then looks for clusters that suggest one entity controlled many addresses. This is not consensus security in the narrow sense, but it is the same underlying problem: the system wants “many addresses” to correspond, at least roughly, to many independent recipients. When that assumption matters, Sybil resistance becomes an allocation problem as well as a consensus problem.
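One simple way to look for such clusters is to group addresses connected by funding or sweep transfers and flag unusually large groups. The sketch below is a generic union-find illustration of that idea, not Arbitrum's actual pipeline; the addresses, the edge list, and the `min_size` threshold are all assumptions.

```python
from collections import defaultdict

def cluster_addresses(edges):
    """Group addresses into connected components using union-find."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]   # path halving keeps lookups short
            x = parent[x]
        return x

    for a, b in edges:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    clusters = defaultdict(set)
    for node in list(parent):
        clusters[find(node)].add(node)
    return list(clusters.values())

# (from, to) transfers: one funder seeds three wallets; two unrelated users trade once.
transfers = [
    ("0xfunder", "0xw1"), ("0xfunder", "0xw2"), ("0xfunder", "0xw3"),
    ("0xuser-a", "0xuser-b"),
]
clusters = cluster_addresses(transfers)
min_size = 4   # hypothetical cutoff for "suspiciously large"
suspicious = [c for c in clusters if len(c) >= min_size]
print(suspicious)
```

Connectivity alone produces false positives. An exchange hot wallet, for example, funds many unrelated users, so practical pipelines have to exclude known shared services and combine several signals before excluding anyone.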

This also shows why detection and resistance are different. Consensus mechanisms usually try to make fake multiplicity unhelpful by design. Airdrop filtering often tries to infer after the fact which identities were probably linked. The first approach changes incentives ex ante; the second attempts cleanup ex post. Both matter, but they operate at different layers.

Why can't protocols alone fully prevent Sybil attacks (Douceur's impossibility)?

The deepest fact about Sybil resistance is still Douceur’s impossibility result. If a system has no trusted identity authority and no reliable scarce resource that can be tested under realistic conditions, then it cannot stop one actor from presenting many identities. That is not a bug in a particular blockchain. It is a structural fact about open distributed systems.

Resource tests help only under specific assumptions. Douceur discusses communication, storage, and computation challenges, and shows they can theoretically bound the number of identities a faulty entity presents only if validation is sufficiently coordinated and attackers’ resources are limited in the right way. In large-scale systems, those requirements are often impractical. Web-of-trust ideas help only if the trust graph itself cannot be cheaply infiltrated. Permissioned identity works only because someone remains trusted to administer membership. Every path out of the problem goes through an assumption that lies deeper than mere pseudonymous naming.

Conclusion

Sybil resistance is the mechanism that stops a decentralized system from confusing many identities with many independent participants. The core trick is always the same: do not let raw names determine power. Tie influence to something harder to fake: trusted membership, computation, stake, or social trust.

That is why Sybil resistance is not an optional add-on to consensus. It is the condition that makes consensus thresholds mean anything at all. Without it, an attacker can win by cloning themselves. With it, the real question becomes which scarce basis for influence your system can justify, and what assumptions you are willing to live with.

What should I understand about Sybil resistance before trading, depositing, or bridging?

Understand Sybil resistance so you can judge how a chain’s consensus and validator economics affect the safety of deposits, withdrawals, and trade execution. On Cube Exchange, that means doing a few concrete checks before you fund, trade, or move assets and then using Cube’s standard deposit and order workflows to act.

- Identify the chain and its consensus model (PoW, PoS, permissioned, or social-graph) and note whether finality is probabilistic or requires checkpoints.

- Check validator/stake concentration and recent slashing or governance incidents on a public chain explorer before depositing or delegating.

- Fund your Cube account with fiat or a supported crypto transfer using the standard deposit flow.

- For assets with delayed or probabilistic finality, wait for the chain-specific confirmations/finality threshold, then place a limit order on Cube for controlled execution and review fees and network details before submitting.

Frequently Asked Questions

Proof-of-work ties voting weight to costly computation: miners must expend real CPU/electricity to produce valid block proofs, so creating many cheap identities doesn't multiply influence; only hash power matters. Its limits are that security requires an honest majority of CPU power and can be undermined by mining centralization or bribery schemes that rent or buy enough hash power to outcompete honest miners.

Proof-of-stake replaces expensive computation with bonded capital: validators must lock stake to obtain voting weight and can be penalized (slashed) for equivocation, so spinning up many keypairs without economic backing does not increase authority. Its assumptions include honest-majority-of-stake, correct slashing and stake-distribution mechanics, and additional concerns such as weak subjectivity and risks if stake becomes concentrated or rentably controlled.

Douceur’s impossibility result shows that without a trusted identity authority or strong, coordinated resource tests, a purely protocol-level method cannot reliably prevent one actor from presenting many identities; practically this means protocols must import an external assumption (scarce resource, governance, or social cost) to be Sybil-resistant.

Social-graph defenses like SybilGuard and SybilLimit work by assuming the honest region and Sybil region are connected by relatively few "attack edges," exploiting fast-mixing properties so honest random routes intersect more than Sybil routes. They fail if the adversary can create many honest-to-malicious trust links, if the social graph does not mix as assumed, or if honest users are widely compromised or socially engineered.

Not necessarily; decentralization can spread the scarce resource and make Sybil attacks harder, but it can also make bribery or temporary collusion cheaper in practice because many small operators may be easier or cheaper to co-opt than a single large one. The net effect depends on the economic and governance details of how the critical resource (hash power, stake, reputation) is distributed.

Yes. Permissioned systems avoid Sybil attacks by design because a governance process explicitly vets and admits validators, so fake self-created identities can be excluded; the tradeoff is reduced openness and dependence on the gatekeeper's trustworthiness and governance processes.

Sybil problems matter wherever rewards or influence are allocated by apparent participation: airdrops, for example, can be captured by address-splitting, so projects often run post-hoc Sybil-detection pipelines (graph clustering, behavioral heuristics) to filter claims. Protocol-level Sybil resistance tries to make multiplicity unhelpful ex ante, while airdrop filtering is an ex post mitigation with its own accuracy and subjectivity limits.

Leaderless protocols such as Avalanche change communication and decision mechanics (subsampling and metastability) but do not remove the need for Sybil resistance: they still rely on sampling from a population whose influence cannot be cheaply dominated by fabricated identities, and the exact Sybil-resistance mechanism or parameter choices remain part of the system assumptions.

Sybil resistance is not binary; it is assumption- and threshold-based. Systems are Sybil-resistant only under stated conditions (honest majority of hash power, honest majority of stake, bounded attack edges, or trusted admission). Designers therefore must make those assumptions explicit, auditable, and acceptable, and plan for the consequences when they fail.

Related reading