What Is PBFT (Practical Byzantine Fault Tolerance)?

Learn what PBFT is, why it needs 3f+1 replicas, how pre-prepare/prepare/commit work, and where Practical Byzantine Fault Tolerance fits in blockchain.

Introduction

PBFT, or Practical Byzantine Fault Tolerance, is a consensus protocol for making a group of replicas behave like one reliable service even when some of those replicas act arbitrarily or maliciously. That problem is harder than ordinary fault tolerance because the bad replicas are not merely slow or crashed: they can send different stories to different participants, pretend to agree and later deny it, or try to stall progress at carefully chosen moments.

The puzzle PBFT solves is this: if there is no central machine everyone trusts, and some participants may lie, how can the rest still agree on the exact order of operations? Order is the essential thing. If replicas execute the same requests in the same order, a deterministic service produces the same state and the same output everywhere. If the order can fork, the service stops being one service and becomes several conflicting ones.

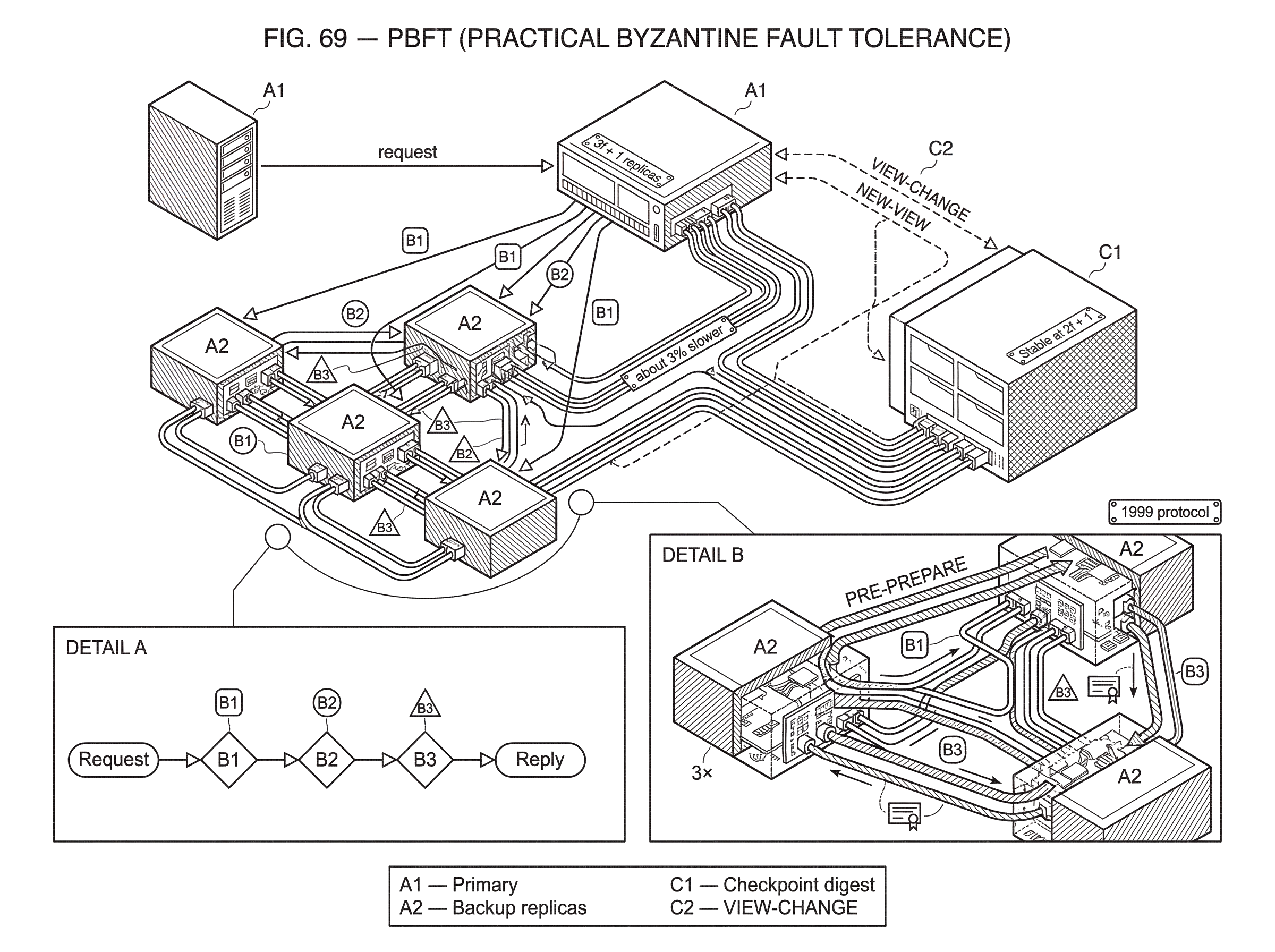

PBFT became influential because it showed that Byzantine fault tolerance did not have to remain a mostly theoretical idea. Castro and Liskov presented a protocol that tolerates Byzantine faults with 3f + 1 replicas to survive up to f faulty replicas, works safely in asynchronous network conditions, and can still make progress under a weak synchrony assumption. In the normal case, it executes read-only operations in one message round trip and read-write operations in two. Their implementation of a Byzantine-fault-tolerant NFS, called BFS, was reported to be only about 3% slower than standard unreplicated NFS in the Andrew benchmark. That was the important shift: Byzantine fault tolerance could be engineered, not just admired.

In blockchains and distributed ledgers, PBFT matters because many later protocols are either direct descendants of it or explicit reactions to its costs. Tendermint uses a PBFT-derived round structure. Hyperledger Fabric’s SmartBFT ordering service uses the familiar three-phase pattern. Systems such as Zilliqa adapted PBFT inside shards and then optimized its communication with multisignatures. And newer protocols such as HotStuff are easiest to understand once you see exactly what PBFT is trying to preserve and where its original design becomes expensive.

Why PBFT treats consensus as ordered state-machine replication

The cleanest way to understand PBFT is to ignore Byzantine behavior for a moment and start with the service abstraction it is protecting. Imagine a replicated key-value store. A client sends set(x, 5), then set(y, 9), then get(x). If every replica executes exactly that sequence in exactly that order, all honest replicas end in the same state and return the same result. The service looks singular even though it is physically replicated.

That is the invariant PBFT cares about most: honest replicas must not diverge on the order of committed requests. Everything else in the protocol exists to preserve that invariant when the leader lies, the network drops messages, or some replicas equivocate.

This is why PBFT is best seen as an ordering protocol for state-machine replication rather than as a vague “agreement system.” It is not enough that replicas agree that some request exists. They must agree on where that request sits in a sequence. If one honest replica executes request A before B while another executes B before A, safety is already gone for any non-commutative application.

The leader in PBFT is called the primary. The other replicas are backups. In the normal case, the primary proposes an order by assigning each client request a sequence number. But the primary is not trusted to do this unilaterally. PBFT’s structure forces that proposal to pass through enough cross-checking that a bad primary cannot cause two honest replicas to commit conflicting histories.

Why does PBFT require 3f + 1 replicas?

| Setup | Quorum size | Honest overlap | Safety result |

|---|---|---|---|

| PBFT (n = 3f + 1) | 2f + 1 | ≥ f + 1 honest | Conflicting commits impossible |

| Fewer replicas (n ≤ 3f) | ≤ 2f + 1 | ≤ f honest | Conflicting commits possible |

The 3f + 1 bound can look like a magical formula until you ask what property quorums must have. PBFT relies on sets of replicas large enough that any two such sets overlap in at least one honest replica. That overlap is the memory of the system: it prevents one view of history from silently being replaced by another.

Suppose there are up to f Byzantine replicas. Then a quorum of size 2f + 1 guarantees at least f + 1 honest replicas inside it, because at most f members can be faulty. Now consider two quorums of size 2f + 1 drawn from a total of 3f + 1 replicas. Those quorums must overlap in at least f + 1 replicas. Since only f can be faulty, at least one overlap member is honest. That honest overlap replica cannot honestly support two conflicting committed histories.

This is the structural reason PBFT uses 3f + 1, not an arbitrary engineering choice. If you try to do the same job with fewer replicas, the quorum intersections no longer force an honest witness to sit inside every pair of conflicting certificates. Once that overlap guarantee weakens, a Byzantine adversary can try to make different parts of the system believe different pasts.

There is a second point that readers often miss. **3f + 1 is about replicas, not clients. ** Clients are outside the replicated group. They submit requests and wait for matching replies. In classic PBFT, a client accepts a result after receiving f + 1 matching replies, because at least one of those replies must come from an honest replica.

How do PBFT’s pre-prepare, prepare, and commit phases work?

| Phase | Primary role | Goal | Certificate threshold | Effect |

|---|---|---|---|---|

| Pre-prepare | Propose sequence | Propose request slot | No quorum | Backups verify proposal |

| Prepare | Backups multicast | Show many saw proposal | 2f + 1 support | Replica becomes prepared |

| Commit | Replicas multicast | Anchor decision across views | 2f + 1 commits | Execute request and reply |

PBFT’s normal path has three named phases: pre-prepare, prepare, and commit. The names matter less than the job each phase performs.

The primary begins by receiving a client request and assigning it a sequence number n in the current view v. A view is simply the current leadership epoch: each view has one designated primary. The primary then multicasts a PRE-PREPARE message to all backups saying, in effect, “in view v, request r is proposed for slot n.”

That message alone is not enough to trust. A Byzantine primary could send different PRE-PREPARE messages to different backups for the same slot. So each backup checks that the message is well formed, comes from the correct primary for that view, and does not conflict with what it has already accepted. If it passes those checks, the backup multicasts a PREPARE message to the whole replica set.

The purpose of the prepare phase is not yet commitment. It is to establish that enough replicas have seen the same proposal for the same slot in the same view. The paper defines a replica as prepared for a request when it has logged the request, a matching PRE-PREPARE, and 2f matching PREPARE messages from different backups. With its own state included, that means the replica has evidence that 2f + 1 replicas support that exact proposal at that slot.

Once prepared, the replica multicasts a COMMIT message. The commit phase serves a subtler purpose than “more votes.” It ensures the decision remains anchored even across a later view change. A replica executes the request only after it has sufficient commit evidence, and then sends a REPLY to the client. The client accepts the result after receiving f + 1 matching replies.

The compression point is this: prepare says “enough replicas saw this proposal in this view,” while commit says “enough replicas know that enough replicas saw it,” which is what lets the order survive a leadership change. Without that second layer, a request might seem decided locally but fail to carry safely into the next view.

How PBFT’s phases prevent replicas from diverging (concrete example)

Consider four replicas, because 4 = 3f + 1 is the smallest nontrivial PBFT deployment and tolerates f = 1 Byzantine fault. Call them A, B, C, and D. Suppose A is the primary for the current view, and the client submits a request to transfer funds.

If A is honest, it assigns sequence number 27 and multicasts a PRE-PREPARE for that request. B, C, and D verify that the proposal is valid and not conflicting, then each multicasts a PREPARE. Once B sees the original PRE-PREPARE plus two matching PREPARE messages from the other backups, B is prepared. The same is true for C and D. Each then multicasts a COMMIT. When a replica collects a sufficient commit set, it executes the transfer and replies to the client. Because the same request occupied the same slot at every honest replica, the resulting state remains aligned.

Now make A Byzantine. It sends B and C a PRE-PREPARE saying slot 27 contains transfer X, but sends D a different PRE-PREPARE saying slot 27 contains transfer Y. B and C will prepare X only if they see matching PREPARE support. D will try to prepare Y, but it cannot gather enough matching honest support because B and C are preparing X, not Y. Since there is only one Byzantine replica in this example, the conflicting proposal cannot acquire a large enough quorum to become prepared and then committed among honest replicas.

The mechanism is not that Byzantine behavior becomes impossible. The mechanism is that Byzantine behavior cannot gather enough intersecting support to make two conflicting values both look committed to honest nodes.

What makes PBFT view changes difficult and how are they solved?

The happy path is only half the protocol. PBFT would be far less important if it only worked under a correct leader. The real challenge is switching leaders without losing safety or getting stuck forever.

A faulty primary can stall progress in several ways. It can stop proposing. It can propose but selectively omit some replicas. It can equivocate. PBFT handles this with a view-change protocol. When replicas suspect the primary is faulty or too slow, they move toward a higher-numbered view with a new primary.

This is where the distinction between prepared and committed matters. A new primary cannot simply start proposing fresh slots from scratch, because some requests may already be safely far along in the old view. If the protocol forgot that evidence, a request that was effectively decided in one view could be replaced by a conflicting request in the next.

So each replica sends a VIEW-CHANGE message containing proofs about what it knows, including checkpoint information and prepared requests. When the new primary receives 2f + 1 valid VIEW-CHANGE messages for view v + 1, it constructs a NEW-VIEW message. Using the collected proofs, it determines which sequence numbers are already constrained by prior prepared state and must be preserved. For gaps, it may insert null requests so that sequence numbers remain continuous without accidentally introducing conflicting operations.

That “fill gaps with nulls” detail is not cosmetic. It keeps the log structure coherent across views. The new primary is not inventing history freely; it is rebuilding the safest continuation consistent with the intersection of prior evidence.

This part of PBFT is both essential and implementation-sensitive. A later verification effort, Shipwright, found subtle PBFT implementation bugs around view-change message encoding and stapled signatures that caused liveness failures without violating safety. That is a useful lesson: the protocol idea is elegant, but the view-change boundary is where many practical mistakes live.

How do checkpoints enable garbage collection and recovery in PBFT?

A replicated log cannot grow forever without some way to compress old agreement history. PBFT handles this with checkpoints.

Periodically, replicas take a snapshot digest of the application state at a sequence number N and multicast a CHECKPOINT message. A checkpoint becomes stable when a replica has collected 2f + 1 matching checkpoint messages for that same state digest at N. At that point, the replica has enough proof that honest replicas agree on the state up to N, so it can discard old PRE-PREPARE, PREPARE, and COMMIT records at lower sequence numbers.

This is not just an optimization for storage. Stable checkpoints are part of recovery and view change. They give replicas a compact, trustworthy anchor from which to transfer state or rebuild progress after disruption. Without them, the evidence needed to justify new views would become unmanageably large.

You can think of a stable checkpoint as the protocol saying, “everything before here is settled enough that we no longer need the full trial transcript.” The analogy helps explain compaction, though it fails in one respect: unlike a legal archive, the checkpoint is not merely recordkeeping; it is active protocol evidence used for future safety.

What engineering choices made PBFT practical in real systems?

The word practical in PBFT was earned mainly through engineering choices that reduced cost in the common case. The original system avoided using public-key signatures on every protocol message. Instead, it authenticated normal traffic with message authentication codes, or MACs, and reserved digital signatures mainly for VIEW-CHANGE and NEW-VIEW messages, which occur less often. Castro and Liskov describe this as eliminating the main performance bottleneck of earlier Byzantine systems.

That choice reveals an important theme in BFT design. The protocol’s logical safety comes from quorum intersection and state-machine replication. The cryptography’s role is narrower but still essential: it prevents faulty replicas from forging support they do not have. Once you see that division, many later optimizations make more sense. Zilliqa, for example, moved in a different direction and used digital signatures with EC-Schnorr multisignatures to compress PBFT-style prepare and commit evidence, reducing normal-case communication from O(n^2) to O(n) inside its consensus groups.

PBFT also optimized latency. In the no-fault case, the protocol can complete read-write operations in two message round trips and read-only operations in one. That is one reason it became attractive for permissioned systems, where validator sets are small enough and stable enough that these low-latency paths matter.

The BFS implementation result matters for the same reason. A fault-tolerant NFS service running only about 3% slower than unreplicated NFS in the Andrew benchmark made the claim concrete: Byzantine replication was not automatically doomed to be too slow for real services.

When does PBFT’s communication cost limit scalability?

PBFT’s main scaling cost is its communication pattern. In the classic design, replicas multicast to all replicas during prepare and commit, which gives quadratic message complexity in the number of replicas. That is manageable for small committees, but it becomes a bottleneck as committees grow.

There are really two related costs here. The first is network cost: each extra replica increases not just the number of participants but the number of pairwise messages. The second is verification and bookkeeping cost: each replica must track more certificates, more checkpoints, and more client interactions. Some analyses also note that clients in classic PBFT typically wait for f + 1 matching replies, which creates linear client-side overhead as the system size increases.

This is why PBFT fits naturally in permissioned settings, where the validator set is small and membership is known. Hyperledger Fabric’s SmartBFT ordering design is a good example: it uses the PBFT-style three phases to totally order requests and preserve safety across views in an environment where a limited set of orderers can afford close coordination. BFT-SMaRt, a widely used Java library for Byzantine state-machine replication, occupies a similar design space and exposes the practical realities of deployment such as state transfer, reconfiguration, and the dangers of non-deterministic application behavior.

In open, large-validator environments, PBFT’s quadratic pattern quickly becomes painful. That pressure is what drove later protocols toward collectors, aggregated signatures, and linear-communication variants. HotStuff is one prominent response: it keeps the leader-based BFT structure but aims for linear communication and responsiveness in the partially synchronous model. SBFT pushes in a similar direction with collectors and threshold signatures, explicitly treating PBFT as the baseline being improved.

What assumptions does PBFT make and what breaks if they fail?

PBFT’s safety does not depend on timely delivery. Even in asynchronous conditions, honest replicas should not commit conflicting orders as long as the cryptographic assumptions hold and the number of Byzantine replicas stays within the bound. That is a strong property.

Its liveness, however, is conditional. The original paper assumes a weak synchrony condition: message delays cannot grow faster than retransmission time forever. In plainer language, the network may be unpredictable for a while, but eventually enough messages between honest participants must get through in a timely way for timeouts and leader replacement to work.

This distinction matters because people often hear “works in asynchronous systems like the Internet” and infer unconditional progress. PBFT does not promise that. It promises that safety is maintained despite asynchrony, while progress requires eventual periods where the network behaves well enough.

There are other assumptions that are easy to overlook. The replicated service should be deterministic. If honest replicas execute the same ordered requests but make different local choices because of clocks, random numbers, or non-deterministic serialization, replication breaks above the consensus layer. This is one reason practical middleware work found that non-determinism handling remained a real deployment obstacle.

PBFT also does not provide privacy by itself. A Byzantine replica may leak application state or client inputs. The original paper notes that secret sharing would be needed to address privacy for opaque inputs or state. So PBFT solves agreement and replication, not confidential computation.

How is PBFT adapted in blockchain systems like Tendermint, Fabric, and Zilliqa?

| System | Voting stages | Block handling | Scalability tweak | Best suited for |

|---|---|---|---|---|

| Tendermint | Prevote, Precommit | Agree on BlockID | Gossip full block parts | Fast-finality chains |

| Hyperledger SmartBFT | Pre-prepare, Prepare, Commit | Ordering service total order | Small known orderers | Enterprise permissioned networks |

| Zilliqa | PBFT inside shards | Shard microblocks aggregated | EC‑Schnorr multisignatures | Sharded throughput scaling |

In blockchain settings, PBFT is usually not dropped in unchanged. What carries over is the logic of quorum-certified ordering under a rotating leader.

Tendermint, for instance, uses rounds with a proposer and two voting stages, prevote and precommit, to agree on the next block. The names differ from classic PBFT, and full blocks are disseminated separately from the compact identifier being voted on, but the family resemblance is clear: a designated proposer suggests an order, validators exchange authenticated votes, and commitment depends on quorum structure designed to preserve safety across rounds.

Hyperledger Fabric’s SmartBFT shows a more direct inheritance. Its ordering service uses the recognizable pre-prepare, prepare, and commit pattern to totally order requests and keep committed requests stable across views. This is the classic PBFT idea applied to enterprise-style ordering rather than an open Nakamoto-style chain.

Zilliqa shows a different adaptation. It runs PBFT inside shards and in a directory-service committee, then uses EC-Schnorr multisignatures to compress the communication footprint that would otherwise become too large. The motivation is straightforward: classic PBFT’s O(n^2) messaging is acceptable only up to modest committee sizes, so if you want parallel shard-level agreement you must aggressively reduce the cost of proving quorum support.

These examples all point to the same underlying truth. PBFT is not important because everyone runs the 1999 protocol unchanged. It is important because it established the durable pattern for Byzantine state-machine replication in systems where finality is immediate once a quorum certificate exists, rather than probabilistic as in longest-chain designs.

What practical deployment challenges arise when implementing PBFT?

PBFT is conceptually clean, but building real systems on it is not frictionless. Experience reports on the Castro-Liskov middleware found obstacles such as weak support for dynamic client management, application-managed state, unresolved non-determinism issues, limited web integration, and the need for significant engineering effort before an application developer could use the protocol comfortably.

That gap matters because consensus protocols live at an awkward layer. A paper can prove that a replicated state machine is safe and live under certain assumptions, yet operators still need configuration distribution, state transfer, reconfiguration, secure key management, and application determinism. BFT-SMaRt’s documentation, for example, warns about deterministic serialization in state transfer and notes that some read-only optimizations can threaten liveness unless carefully handled.

So when people ask whether PBFT is “used in practice,” the honest answer is yes, but usually through substantial engineering wrappers, variants, and specialized libraries rather than through direct textbook deployment. The core mechanism is practical; the full surrounding system still demands disciplined implementation.

Conclusion

PBFT is a protocol for turning a small set of partially trusted machines into one logically consistent service, even when some of them lie. Its key insight is that if enough replicas witness the same ordered request, and those witness sets intersect in honest nodes, then conflicting histories cannot both become committed. The three phases, the 3f + 1 replica bound, the view-change machinery, and stable checkpoints all serve that one goal.

What is easy to remember tomorrow is this: PBFT makes Byzantine agreement practical by treating consensus as ordered state-machine replication and by using quorum intersection to preserve one history across faulty leaders. Its descendants may change the message pattern, the cryptography, or the scalability profile, but they are still mostly arguing with PBFT’s original design rather than replacing its core idea.

What should you understand before relying on PBFT-based networks?

Understand whether a network uses PBFT-style finality and how that affects deposit finality and trade settlement. On Cube Exchange, you can verify the network model, fund your account using the exact network shown for the asset, and then follow the steps below to reduce risk when trading or moving funds tied to BFT-based infrastructure.

- Check the asset’s finality model: open the token or network documentation linked from Cube and confirm whether it uses immediate (PBFT-style) finality or probabilistic finality.

- Fund your Cube account using the exact network and contract address shown on Cube (use the listed network tag to avoid cross-chain mistakes).

- Wait for the chain’s finality confirmation before treating the deposit as settled (for PBFT-derived chains, wait for block finalization or the project’s recommended checkpoint depth; for probabilistic chains, wait additional confirmations).

- Place your trade on Cube: open the market, choose a limit order to control price on low-liquidity PBFT-backed tokens or a market order for immediate execution, review estimated fill and fees, and submit.

Frequently Asked Questions

Because PBFT requires quorum intersection that guarantees at least one honest replica in any two quorums: with up to f faulty replicas, quorums of size 2f+1 drawn from n replicas must overlap in at least f+1 replicas, which forces n = 3f+1 so every two quorums share at least one honest witness and conflicting histories cannot both appear committed to honest nodes.

Pre-prepare is the primary proposing a request and slot, prepare proves that 2f+1 replicas have seen the same proposal in the same view (establishing it is widely witnessed), and commit proves that enough replicas know that enough replicas saw it so the decision will survive a view change; only after sufficient commit evidence does a replica execute the request and reply to the client.

During a view change replicas send VIEW-CHANGE messages containing checkpoints and prepared-request proofs; the new primary collects 2f+1 such messages and uses the intersection of that evidence to preserve any sequence numbers constrained by prior prepared state, filling gaps with null requests so previously prepared (or effectively decided) requests are not lost or overwritten by the new view.

Castro and Liskov made PBFT practical by avoiding expensive public-key ops in the normal case: routine messages use MACs while digital signatures are reserved for rarer view-change/NEW-VIEW messages, and their BFS implementation showed that a BFT service could run with only modest overhead (reported ~3% slower than unreplicated NFS in the Andrew benchmark).

The classic protocol’s all-to-all prepare/commit multicasts give O(n^2) message complexity, which limits PBFT to small, permissioned committees; later systems address this by aggregating or collecting votes and using multisignatures or threshold signatures (examples include Zilliqa’s multisignature approach, HotStuff’s leader-based linearization, and SBFT’s use of threshold/BLS signatures) to reduce communication costs.

PBFT preserves safety under arbitrary message delays (asynchrony) but does not guarantee progress in perpetual asynchrony; liveness requires a weak synchrony assumption (eventually timely delivery so timeouts and leader replacement can make forward progress).

No - PBFT does not provide confidentiality by itself; a Byzantine replica can leak application state or client inputs, and protecting privacy for opaque inputs or state requires additional techniques such as secret sharing beyond the PBFT agreement layer.

Replicas periodically exchange CHECKPOINT messages and a checkpoint becomes stable when a replica collects 2f+1 matching checkpoint digests for the same sequence number; a stable checkpoint anchors agreed state, lets replicas garbage-collect earlier PRE-PREPARE/PREPARE/COMMIT records, and provides a compact recovery anchor for state transfer.

Practical deployments face many pitfalls: non-deterministic application behavior can break replication, view-change encoding or signature handling bugs have caused liveness failures (as found in Shipwright analyses), and real systems require engineering work for client management, state transfer, deterministic serialization, and key management rather than a straight textbook drop-in.

Classic PBFT is best suited to permissioned settings with small, known validator sets because of its quadratic messaging and client-reply costs; blockchains reuse PBFT’s core idea but adapt it (for example Tendermint’s proposer/prevote/precommit rounds, Hyperledger Fabric’s SmartBFT ordering, and Zilliqa’s sharded PBFT with multisignatures) rather than running the 1999 protocol unchanged.

Related reading