What Is Federated Consensus?

Learn what federated consensus is, how quorum slices and quorum intersection work, and why trust configuration determines safety and liveness.

Introduction

Federated consensus is a way for distributed systems to reach agreement without requiring every participant to accept a single, globally fixed validator set. Instead, each node chooses for itself which other nodes it trusts, and global agreement emerges (or fails to emerge) from the overlap among those local trust choices.

That design matters because it changes the usual starting point of consensus. In classical Byzantine fault tolerant systems, the set of validators is typically known in advance. In proof-of-work and proof-of-stake systems, influence is usually tied to work or stake under a network-wide rule. Federated consensus begins somewhere else: with subjective trust at the node level. The hope is to get open participation, fast finality, and less resource waste than proof-of-work, while avoiding the rigid membership assumptions of classical BFT.

The key question is simple to ask and hard to answer: if everyone can choose their own trusted peers, when does the whole system still behave like one system instead of breaking into incompatible islands? That question leads to the core concepts of federated Byzantine agreement, quorum slices, quorums, quorum intersection, and the practical tradeoff between safety and liveness.

What coordination problem does federated consensus solve?

Consensus exists to solve a specific coordination problem: many machines need to maintain the same state even when some machines are faulty, offline, malicious, or simply delayed. If two honest nodes permanently disagree about what happened, the ledger is no longer a shared ledger. If nodes refuse to decide until perfection arrives, the system stops being useful. A consensus protocol therefore has to balance at least two demands that pull against each other: safety, meaning honest participants do not confirm contradictory outcomes, and liveness, meaning the system keeps making progress.

Classical BFT systems solve this in networks with a known validator set. That gives strong structure. Everyone knows who counts, what threshold is required, and which failures are tolerable. But this also creates a membership problem: who chooses the validators, and how do new participants enter? Public blockchains often answer with proof-of-work or proof-of-stake, which replace explicit trust in named validators with network-wide resource rules. That improves openness in one dimension, but introduces other costs and assumptions.

Federated consensus tries a different move. Instead of requiring the network to agree first on a global validator set, it lets each node specify its own agreement requirements. A bank, exchange, wallet provider, or independent validator can say, in effect, “I consider a decision credible if these particular parties, or enough of them, agree.” The system then asks whether those local policies overlap enough to support global agreement.

That is the key point: federated consensus is not consensus without trust; it is consensus where trust is expressed locally and the global quorum structure is the consequence of those local choices.

How do local trust choices produce global agreement in federated consensus?

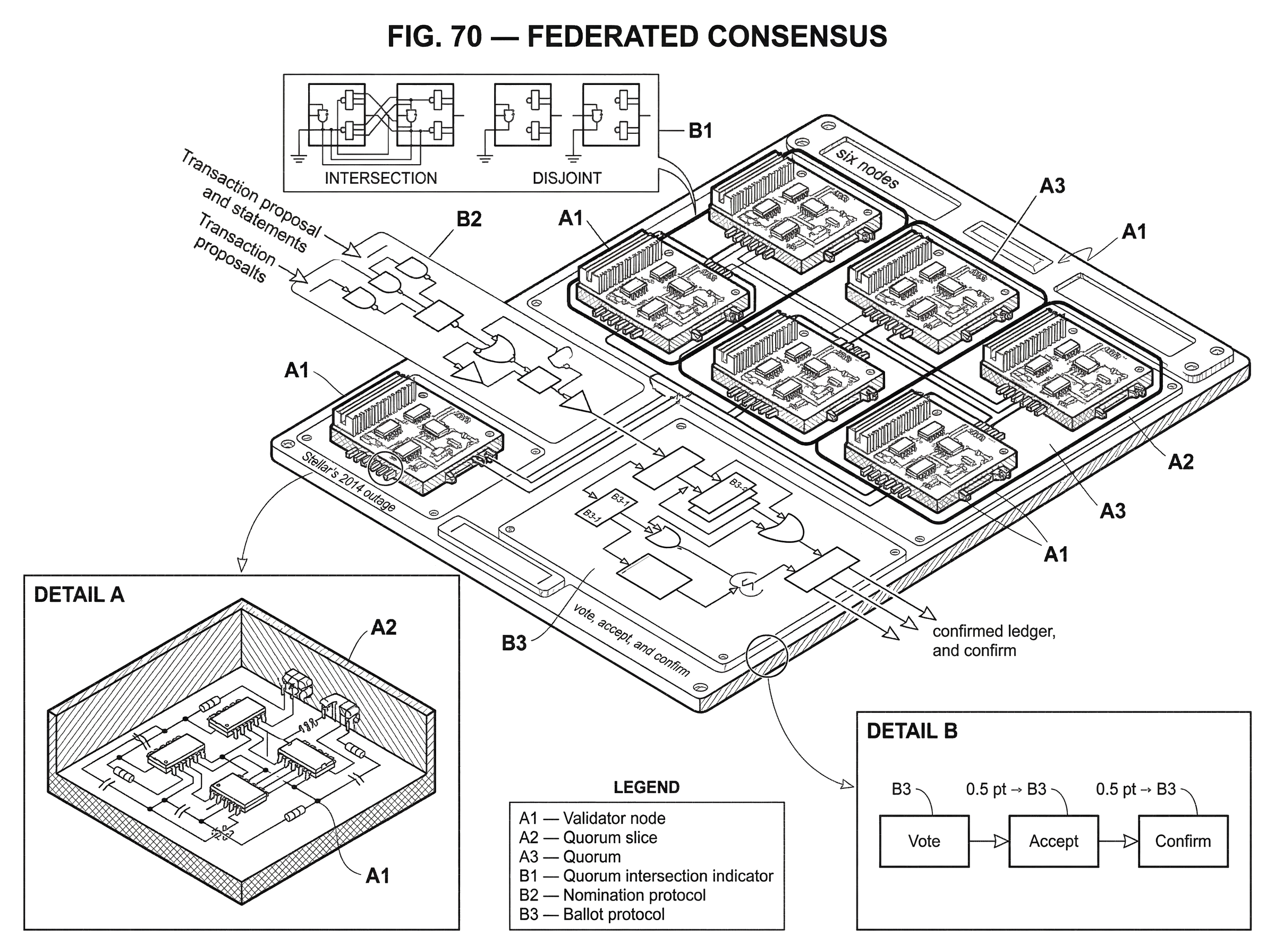

The formal model introduced for this idea is the federated Byzantine agreement system, usually abbreviated FBAS. An FBAS consists of a set of nodes and, for each node, one or more sets of peers that the node treats as sufficient for agreement. These sufficient sets are called quorum slices.

A quorum slice is easiest to understand as a node’s personal rule for when it feels safe moving forward. Suppose node A says it trusts any two of {B, C, D} together with itself. Then {A, B, C}, {A, B, D}, and {A, C, D} are examples of quorum slices that satisfy A’s requirement. Another node may choose a different rule entirely. There is no requirement that all nodes have the same trust list or the same threshold.
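A's rule can be written out mechanically. The following is a minimal Python sketch, not code from any FBAS implementation; the helper name `threshold_slices` is hypothetical. It enumerates the slices formed by A plus exactly two of its three peers.

```python
from itertools import combinations

def threshold_slices(node, peers, k):
    """Return every slice made of `node` plus exactly k of `peers`.

    Hypothetical helper for illustration only.
    """
    return [frozenset({node, *combo}) for combo in combinations(sorted(peers), k)]

# Node A trusts any two of {B, C, D} together with itself.
slices_of_a = threshold_slices("A", {"B", "C", "D"}, 2)
for s in slices_of_a:
    print(sorted(s))
# ['A', 'B', 'C']
# ['A', 'B', 'D']
# ['A', 'C', 'D']
```

Any larger set that contains one of these slices, such as {A, B, C, D}, also satisfies A's requirement.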

A quorum is the next level up. A set of nodes is a quorum if, for every node in that set, the set contains at least one quorum slice that satisfies that node. In plain language, a quorum is a self-supporting coalition: everyone in it sees enough trusted support inside the group to accept the group’s decision.
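The definition translates almost directly into a membership check. The sketch below assumes slices are stored as a dictionary from node name to a list of sets; that layout and the sample trust choices for B, C, and D are assumptions made for illustration.

```python
def is_quorum(candidate, slices):
    """True if every member of `candidate` has at least one slice inside it."""
    members = frozenset(candidate)
    if not members:
        return False  # the empty set is not treated as a quorum
    return all(
        any(s <= members for s in slices[node])
        for node in members
    )

fs = frozenset
# Hypothetical configuration: A needs any two of B, C, D; the others are stricter.
slices = {
    "A": [fs("ABC"), fs("ABD"), fs("ACD")],
    "B": [fs("ABC")],
    "C": [fs("ABC")],
    "D": [fs("AD")],
}

print(is_quorum("ABC", slices))   # True
print(is_quorum("ABD", slices))   # False: B's only slice needs C
print(is_quorum("ABCD", slices))  # True
```

Note that {A, B, D} contains one of A's slices yet is not a quorum, because B is not satisfied inside it. A quorum has to work for every one of its members.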

This is the central mechanism. In threshold BFT, quorums are imposed from above by a common rule like “2/3 of validators.” In federated consensus, quorums are discovered from below by composing many local trust rules. That gives flexibility, but it also makes the system’s safety depend on the shape of the trust graph rather than on a simple global threshold.

An analogy helps here. Think of a professional community where each person has a list of peers whose agreement they consider authoritative. A community-wide conclusion becomes stable only if enough of those circles overlap. The analogy explains why local trust can yield global agreement. It fails, however, in one important way: a consensus protocol needs precise guarantees about conflicting decisions, not just social plausibility.

Why is quorum intersection required for safety in federated consensus?

| Quorum type | Safety | Liveness | Example |
|---|---|---|---|
| Intersecting | Prevents conflicting decisions | May stall if slices unavailable | Overlapping camps share nodes |
| Disjoint | Permits contradictory confirmations | Leads to persistent forks | {A,B,C} vs {D,E,F} |

If there is one condition a reader should remember, it is quorum intersection. An FBAS has quorum intersection when every two quorums share at least one node. This matters because if two disjoint quorums can each internally satisfy their members, then two different parts of the network can each finalize incompatible outcomes without any common participant to stop the contradiction.

That is not a minor technical detail. It is the basic reason safety can or cannot hold. The Stellar Consensus Protocol white paper states this sharply: no protocol can guarantee agreement without quorum intersection among the well-behaved nodes. If the trust structure allows separate, non-overlapping quorums, then there is no purely protocol-level trick that can force global agreement in the face of Byzantine behavior or adverse timing.

A small worked example makes this concrete. Imagine six nodes split into two camps: {A, B, C} and {D, E, F}. Every node in the first camp requires agreement from the other two in its own camp; every node in the second camp does the same. Then {A, B, C} is a quorum and {D, E, F} is another quorum. They do not intersect. If the first quorum confirms transaction set X and the second confirms conflicting transaction set Y, both groups have satisfied their own rules. From inside each camp, nothing looks wrong. But globally the system has forked in the strongest possible sense: there is no shared authority tying the two outcomes together.

Now change the configuration so that each camp also requires at least one node from the other camp, or both camps depend on a common trusted organization. The quorums start to overlap. That overlap is what turns a collection of local trust policies into a single safety domain.
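Both configurations can be checked by brute force. The sketch below enumerates every quorum of the six-node example and tests whether each pair overlaps. It is exponential in the number of nodes, so it only suits toy examples; the function names are hypothetical, and the "linked" variant is one possible reading of "each camp also requires at least one node from the other camp."

```python
from itertools import combinations

def is_quorum(candidate, slices):
    members = frozenset(candidate)
    return bool(members) and all(
        any(s <= members for s in slices[n]) for n in members
    )

def all_quorums(slices):
    """Enumerate every quorum by testing all non-empty subsets of nodes."""
    nodes = sorted(slices)
    return [
        frozenset(c)
        for size in range(1, len(nodes) + 1)
        for c in combinations(nodes, size)
        if is_quorum(c, slices)
    ]

def has_quorum_intersection(slices):
    """True if every pair of quorums shares at least one node."""
    return all(q1 & q2 for q1, q2 in combinations(all_quorums(slices), 2))

camp1, camp2 = frozenset("ABC"), frozenset("DEF")

# Each node requires only the other two nodes of its own camp.
isolated = {**{n: [camp1] for n in camp1}, **{n: [camp2] for n in camp2}}

# Each node additionally requires at least one node from the other camp.
linked = {
    **{n: [camp1 | {m} for m in camp2] for n in camp1},
    **{n: [camp2 | {m} for m in camp1] for n in camp2},
}

print(has_quorum_intersection(isolated))  # False
print(has_quorum_intersection(linked))    # True
```

In the linked configuration the only quorum is the full six-node set, so intersection holds trivially. That also hints at the cost: every node now has to be available for anything to be decided.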

This is why federated consensus cannot be evaluated by counting nodes alone. Ten highly interconnected nodes may be safer than a hundred nodes arranged into weakly overlapping clusters. In an FBAS, topology matters more than raw population.

Why do federated consensus systems trade liveness for safety?

Consensus always lives under a constraint: under adverse conditions, you generally do not get maximal safety and maximal liveness at once. Federated systems make this tradeoff especially visible because nodes choose their own trust dependencies.

Safety means honest nodes do not ratify conflicting decisions. In a payment network, that is the difference between a delayed transfer and a double-spend. Liveness means honest nodes eventually reach a decision and continue advancing the ledger. In practice, many federated designs, including Stellar’s deployment of SCP, prioritize safety over liveness. If trust dependencies are not sufficiently available (because trusted validators are offline, partitioned, or misconfigured), the system may stall rather than confirm something unsafe.

This can feel like a weakness until you compare failure modes. A liveness failure is a halt or delay. A safety failure is a fork with contradictory final outcomes. The second is much harder to unwind. David Mazières, who introduced FBA and SCP, argues that federated systems often deliberately choose larger quorum slices so nodes are more likely to remain in agreement than remain live. That is a design preference, not a theorem about all systems, but it matches how operators usually think about high-value settlement.

There is also a practical asymmetry here. In federated systems, nodes can often recover from liveness failures by changing their trust choices without first needing the stalled system itself to agree on a reconfiguration. That is operationally useful. But it also means configuration management is part of the security model, not just an implementation detail.

How does federated voting (SCP) reach agreement in practice?

The most important concrete implementation of federated consensus is the Stellar Consensus Protocol, or SCP, which is a construction of federated Byzantine agreement. SCP uses a voting mechanism that moves statements through stages so nodes can converge on the same value while preventing previously stuck or conflicting statements from causing permanent inconsistency.

At a high level, SCP separates consensus for each ledger slot into two linked tasks. The nomination protocol tries to produce candidate values. These values might represent transaction sets proposed for the next ledger. But nomination alone is not enough, because several candidate values may circulate at once. The ballot protocol then takes over to drive the system toward a single committed value.

The intuition is straightforward. First, nodes surface plausible candidates from the network. Then they engage in structured voting to ensure that only one compatible outcome becomes committed. The official Stellar documentation describes federated voting in terms of three stages: vote, accept, and confirm. A node may vote for a statement when it is consistent with what it has seen so far. It may accept once enough of its trust conditions support the statement. It confirms only when support is strong enough that the statement is stable relative to its quorum structure.
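The accept and confirm stages can be sketched as set checks. The code below follows the conditions given in the SCP white paper in simplified form. A node accepts a statement if it belongs to a quorum whose members all voted for or accepted it, or if a set of accepting nodes intersects every one of its slices (a "v-blocking" set). It confirms once it belongs to a quorum whose members all accepted. The function names are hypothetical, and the sketch deliberately omits the requirement that the node has not accepted a contradictory statement, as well as all message handling.

```python
from itertools import combinations

def largest_quorum_within(members, slices):
    """Prune nodes lacking a slice inside the set until nothing changes."""
    current = set(members)
    while True:
        kept = {n for n in current if any(s <= current for s in slices[n])}
        if kept == current:
            return frozenset(current)
        current = kept

def is_v_blocking(candidate, node, slices):
    """True if `candidate` intersects every slice of `node`."""
    return all(s & candidate for s in slices[node])

def can_accept(node, voted, accepted, slices):
    supporters = set(voted) | set(accepted)
    in_quorum = node in largest_quorum_within(supporters, slices)
    return in_quorum or is_v_blocking(set(accepted), node, slices)

def can_confirm(node, accepted, slices):
    return node in largest_quorum_within(accepted, slices)

# Toy network: each node requires itself plus any two of the other three.
nodes = "ABCD"
slices = {
    n: [frozenset({n, *pair}) for pair in combinations(sorted(set(nodes) - {n}), 2)]
    for n in nodes
}

print(can_accept("A", {"A", "B", "C"}, set(), slices))  # True: quorum of voters
print(can_accept("D", set(), {"A", "B"}, slices))       # True: {A, B} blocks D
print(can_confirm("A", {"A", "B"}, slices))             # False: no quorum yet
print(can_confirm("A", {"A", "B", "C"}, slices))        # True
```

The v-blocking path is what lets a node that never voted for a statement still come to accept it once enough of the nodes it depends on have done so.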

The ballot phase adds more machinery because real networks do not present a single clean proposal at the same time to everyone. Nodes may race, messages may be delayed, and some nodes may push incompatible candidates. SCP therefore uses ballots that can be prepared, committed, or aborted. That sounds more elaborate than a simple yes/no vote because it is solving a more elaborate problem: not just choosing a value, but doing so without getting trapped forever by partially adopted earlier statements.

The deeper point is that federated consensus is not merely “everyone trusts someone.” It requires a protocol that can transform overlapping, asynchronous local views into stable confirmation rules. SCP’s formal contribution was to show that this can be done with provable safety under the right quorum assumptions.

How does the fault model of federated consensus differ from threshold BFT?

A common misunderstanding is to ask, “How many faulty nodes can the system tolerate?” That is a natural question from threshold BFT, but it is incomplete for an FBAS. In federated systems, the importance of a node depends on who trusts it, not just on how many nodes exist.

The SCP paper formalizes this with concepts such as dispensable sets, befouled nodes, and intact nodes. The intuition is more important than the terminology. A dispensable set is a set of nodes you can mentally remove while still preserving the essential conditions needed for the remaining nodes to be both safe and live. Intact nodes are those that remain in the well-behaved core after accounting for bad behavior and trust dependencies.

Why does this matter? Because failures can cascade through trust. A node may be honest and online, but if too many of the nodes it depends on are gone or Byzantine, it may no longer be able to participate safely in consensus. So “honest” is not enough; the node must also remain structurally supported by the surviving quorum topology.
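The cascade can be illustrated with a simple pruning loop. This is a rough sketch of the intuition only, not the SCP paper's formal definition of intact nodes; the five-node configuration and the name `supported_nodes` are hypothetical.

```python
def supported_nodes(online, slices):
    """Repeatedly drop nodes that have no slice fully inside the surviving set."""
    current = set(online)
    while True:
        kept = {n for n in current if any(s <= current for s in slices[n])}
        if kept == current:
            return frozenset(current)
        current = kept

fs = frozenset
slices = {
    "A": [fs("AB"), fs("AC")],   # A needs one of B, C
    "B": [fs("AB"), fs("BC")],
    "C": [fs("AC"), fs("BC")],
    "D": [fs("BCD")],            # D needs both B and C
    "E": [fs("DE")],             # E needs only D
}

print(sorted(supported_nodes("ABCDE", slices)))  # ['A', 'B', 'C', 'D', 'E']
print(sorted(supported_nodes("ABDE", slices)))   # ['A', 'B']  (C has failed)
```

When C fails, D loses its only slice. E never depended on C directly, and D is still honest and online, yet E drops out in the next pass because D is no longer supported.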

Research on FBAS analysis has turned this idea into practical metrics such as safety and liveness buffers, intact-node computation, and minimal splitting or blocking sets. A blocking set is, roughly, a set of nodes whose absence can stop progress. A splitting set is, roughly, a set whose failure can endanger safety by allowing incompatible quorum behavior. These are not just academic labels. They tell operators which validators are truly critical and where the network is more centralized than it may appear from a simple node count.
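For small networks, both notions can be checked by enumeration. The sketch below assumes one common formalization: a blocking set intersects every quorum, and a splitting set contains the intersection of some pair of distinct quorums. Treat these definitions, the function names, and the hub-and-camps example as assumptions for illustration. Real analysis tools use far more efficient algorithms than this exponential enumeration.

```python
from itertools import combinations

def is_quorum(candidate, slices):
    members = frozenset(candidate)
    return bool(members) and all(
        any(s <= members for s in slices[n]) for n in members
    )

def all_quorums(slices):
    nodes = sorted(slices)
    return [
        frozenset(c)
        for size in range(1, len(nodes) + 1)
        for c in combinations(nodes, size)
        if is_quorum(c, slices)
    ]

def is_blocking_set(candidate, slices):
    """Assumed definition: the set intersects every quorum."""
    candidate = frozenset(candidate)
    return all(q & candidate for q in all_quorums(slices))

def is_splitting_set(candidate, slices):
    """Assumed definition: the set contains the intersection of two quorums."""
    candidate = frozenset(candidate)
    return any(
        (q1 & q2) <= candidate
        for q1, q2 in combinations(all_quorums(slices), 2)
    )

fs = frozenset
# Two camps, {A, B} and {C, D}, that both depend on a shared hub H.
slices = {
    "A": [fs("ABH")], "B": [fs("ABH")],
    "C": [fs("CDH")], "D": [fs("CDH")],
    "H": [fs("ABH"), fs("CDH")],
}

print(is_blocking_set("H", slices))   # True: every quorum contains H
print(is_blocking_set("A", slices))   # False: {C, D, H} survives without A
print(is_splitting_set("H", slices))  # True: the two camps overlap only in H
print(is_splitting_set("A", slices))  # False
```

The hub H is a single point of failure in both senses: if it is offline nothing progresses, and if it misbehaves the two camps can be led to incompatible outcomes.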

Why is validator configuration critical in federated consensus deployments?

Federated consensus is often introduced as flexible trust, and that is true. But the freedom to choose trust relationships creates the hardest problem in the system: choosing them well.

A protocol like SCP can be formally safe relative to the quorum slices nodes declare, yet a deployment can still be fragile if those slices are poorly chosen. The protocol cannot manufacture quorum intersection from a bad trust graph. Nor can it guarantee liveness if nodes depend on unavailable or tightly concentrated validators.

This is where theory meets operations. Research and tooling now exist to enumerate quorums, test quorum intersection, compute intact nodes, and identify minimal blocking and splitting sets. Tools such as fbas_analyzer and related observability work have been used to analyze networks like Stellar and MobileCoin. Their existence tells you something important: in federated systems, consensus analysis is partly graph analysis.

It also explains why open membership is more subtle than it first appears. In principle, anyone can run a validator. In practice, becoming relevant to consensus requires other nodes to include you in their trust choices. Some empirical analyses of FBAS networks describe a small “top tier” of nodes that effectively determines liveness. That does not negate open membership, but it does mean that influence can become concentrated even without a formally closed validator set.

So the core governance question is not only “Can anyone join?” It is also “Whom do participants actually trust, and how diversified are those trust dependencies?” Federated consensus pushes governance into the trust graph.

How do Stellar and the XRP Ledger implement federated consensus?

| System | Trust config | Voting model | Quorum unit | Fault note | Typical deployment |
|---|---|---|---|---|---|
| Stellar (SCP) | Quorum sets / slices | Nomination + ballot | Quorums discovered from slices | Depends on quorum intersection | Low-latency settlement |
| XRP Ledger | Unique Node List (UNL) | Iterative proposal rounds | Server-specific trusted sets | Depends on UNL overlap | High-throughput payments |

Stellar is the clearest example because SCP was designed specifically as an FBA protocol. In Stellar, validator nodes choose quorum sets and thresholds that determine their quorum slices. Consensus rounds nominate transaction sets for a ledger and then use the ballot protocol to confirm one safely. The network aims for low-latency finality while preserving decentralized control in the sense that nodes choose their own trust configurations.
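A threshold-based quorum set can be modeled as a small recursive structure. The sketch below is illustrative only and does not reproduce stellar-core's actual data structures or configuration format. The dictionary keys `threshold`, `validators`, and `inner_sets`, and the organization names, are hypothetical.

```python
def is_satisfied(qset, available):
    """True if at least `threshold` entries of the quorum set are met.

    An entry is either a validator present in `available` or a nested
    quorum set that is itself satisfied.
    """
    hits = sum(1 for v in qset.get("validators", []) if v in available)
    hits += sum(
        1 for inner in qset.get("inner_sets", []) if is_satisfied(inner, available)
    )
    return hits >= qset["threshold"]

def org(name):
    # Hypothetical organization running three validators, any two of which suffice.
    return {"threshold": 2, "validators": [f"{name}-{i}" for i in (1, 2, 3)]}

# Require agreement from any two of three organizations.
quorum_set = {"threshold": 2, "inner_sets": [org("org1"), org("org2"), org("org3")]}

print(is_satisfied(quorum_set, {"org1-1", "org1-2", "org2-1", "org2-3"}))
# True: org1 and org2 each have two validators present
print(is_satisfied(quorum_set, {"org1-1", "org1-2", "org1-3", "org2-1", "org3-1"}))
# False: only org1 reaches its own threshold
```

Grouping validators by organization in this way means five available validators can still fail the rule if they are concentrated in one operator, which is the kind of diversification the trust configuration is meant to express.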

The XRP Ledger uses a related trust-based model, though with different terminology and protocol details. There, participants choose a Unique Node List, or UNL, of validators they trust. Servers iteratively adjust proposals based on messages from trusted validators until they converge on the next validated ledger. The vocabulary differs from SCP’s quorum-slice formalism, but the family resemblance is clear: consensus depends on overlapping trust selections rather than on mining or a globally fixed staking committee.
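One simple way to inspect how much trust selections overlap is to compare lists pairwise. The sketch below uses made-up server and validator names and an overlap ratio chosen purely for illustration. This article does not state how much overlap the XRP Ledger requires, so the numbers say nothing about what is safe.

```python
from itertools import combinations

def unl_overlap(unl_a, unl_b):
    """Shared validators as a fraction of the larger list (illustrative metric)."""
    a, b = set(unl_a), set(unl_b)
    if not a or not b:
        return 0.0
    return len(a & b) / max(len(a), len(b))

# Hypothetical UNLs for three servers.
unls = {
    "server1": {"v1", "v2", "v3", "v4", "v5"},
    "server2": {"v1", "v2", "v3", "v4", "v6"},
    "server3": {"v1", "v2", "v7", "v8", "v9"},
}

for name_a, name_b in combinations(sorted(unls), 2):
    print(name_a, name_b, unl_overlap(unls[name_a], unls[name_b]))
# server1 server2 0.8
# server1 server3 0.4
# server2 server3 0.4
```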

These systems are useful examples because they show that federated consensus is not just a theoretical midpoint between classical BFT and public blockchains. It is a live design pattern for systems that want faster settlement and less resource waste than proof-of-work, while retaining more openness than closed validator committees.

What practical failures and outages have federated consensus networks experienced?

The most important practical failure mode is not a clever cryptographic attack. It is bad or brittle trust structure combined with ordinary operational failures.

If too many important validators go offline, enough quorum slices may become unsatisfied that the network stalls. Stellar’s 2014 outage is an early, concrete example of a consensus halt caused by validator failures associated with resource exhaustion. Several validating nodes failed, consensus on ledgers stopped, and transactions halted. That incident was not a proof that federated consensus is uniquely fragile; any consensus system depends on validator availability. But it does illustrate how quickly liveness can disappear when trust dependencies concentrate on a small operational core.

Another class of problem is centralization by configuration. A network may advertise open participation, yet if most nodes rely on the same small set of trusted validators, those validators become critical infrastructure. This can preserve safety if configured carefully, but it narrows the real fault domain and creates organizational choke points. Some analyses of deployed FBAS structures have emphasized exactly this issue.

There are also deeper open questions. The SCP white paper notes unresolved problems around deterministic termination under adversarial timing and around safe reconfiguration or upgrade voting. This is a reminder that federated consensus is not a finished or universally settled subject. The safety story is comparatively crisp: it depends on quorum intersection and protocol correctness. The liveness and reconfiguration story is more contingent on timing assumptions, operator behavior, and recovery processes.

Federated consensus vs classical BFT and Nakamoto-style systems

| System | Membership | Sybil resistance | Finality | Resource cost | Best for |
|---|---|---|---|---|---|
| Federated | Per-node trust choices | Named trust, not stake | Fast deterministic finality | Low energy, operator costs | Low-latency payments |
| Classical BFT | Fixed validator set | Identity + threshold | Deterministic finality | Moderate compute | Permissioned ledgers |
| PoW/PoS | Open, stake/work based | Work or stake economics | Probabilistic or stake-weighted | High energy (PoW) or staking | Public permissionless networks |

It helps to place federated consensus between two neighboring ideas.

Compared with classical BFT systems such as Tendermint or CometBFT, federated consensus relaxes the assumption of a single globally agreed validator set. Tendermint-style systems begin with known validators and use fixed supermajority thresholds, often under weak synchrony assumptions. Their structure is cleaner, and their guarantees are usually easier to state. Federated systems trade that simplicity for local trust autonomy.

Compared with proof-of-work and many proof-of-stake systems, federated consensus does not use resource expenditure or stake weight as the primary source of Sybil resistance and decision authority. Instead, it uses named trust relationships. That can dramatically reduce waste and latency. But it means legitimacy comes from social and institutional trust expressed in configuration, not from a globally uniform economic rule.

This is the right way to see the design: federated consensus is not “better consensus” in the abstract. It is a different answer to the question of who gets counted and why.

Conclusion

Federated consensus is a consensus model in which each node chooses whom it trusts, and system-wide agreement depends on how those trust choices overlap. The essential mechanism is the move from quorum slices (local trust requirements) to quorums (self-supporting groups that can validate decisions).

The property that makes the whole idea work is quorum intersection. If quorums overlap among well-behaved nodes, safety can be maintained. If they do not, no clever protocol can prevent contradictory outcomes. In practice, this means federated consensus is as much about trust topology and operator configuration as it is about message-passing rules.

That is the memorable version: federated consensus replaces a single global validator set with overlapping local trust sets, and the network is only as safe as the structure those overlaps create.

What should you understand before using federated consensus?

Understand the trust topology, finality model, and typical failure modes of federated consensus before relying on a federated network for trading or transfers. On Cube Exchange, you can still complete deposits, trades, and withdrawals as usual, but pair those actions with a short checklist that verifies the network’s quorum properties and confirmation semantics so you avoid stalls or unexpected forks.

- Fund your Cube account with fiat or a supported crypto transfer.

- Identify the asset's network and open its validator/quorum documentation (project docs, published quorum JSON, or stellarbeat).

- Check quorum intersection and centralization indicators: run or consult an FBAS tool (for example fbas_analyzer or published analyses) on the network’s quorum set or UNL to spot small blocking/splitting sets.

- For deposits/withdrawals, wait for the network’s committed/validated ledger confirmation and then add one extra confirmation interval for networks that prioritize safety; for sensitive trades, prefer a limit order to control execution price.

Frequently Asked Questions

Quorum intersection means every two quorums share at least one common node, and it is required for safety because if two disjoint quorums can each satisfy their members they can finalize conflicting outcomes with no overlap to detect or prevent the contradiction.

There is no fixed “how many” faulty-nodes number for an FBAS. Fault tolerance depends on who trusts whom (some nodes are critical because many others include them in quorum slices), so resilience must be measured by analyzing the trust topology (e.g., intact nodes, blocking and splitting sets) rather than a simple fraction of faulty nodes.

Federated systems often prefer safety over liveness because safety failures (irreversible forks or double-spends) are far harder to recover from than temporary stalls, so designers and operators deliberately choose larger or more conservative quorum slices that reduce the chance of conflicting confirmations even if that increases the risk of halting when trusted nodes are unavailable.

Anyone can run a validator, but being influential requires other nodes to include you in their quorum slices or UNLs; in practice a small “top tier” of validators that many participants trust often emerges and effectively determines liveness and fault impact despite nominal open membership.

A quorum slice is a single node’s local rule for which peers are sufficient to convince it (e.g., “any two of B, C, D plus A”), while a quorum is a set of nodes that contains, for every member, at least one of that member’s quorum slices so the set is self-supporting for decision-making.

The most common real-world failures are brittle or poorly chosen trust configurations combined with ordinary operational faults: if many critical validators go offline or if many nodes point to the same few validators, the network can stall, and misconfiguration or resource failures have caused real Stellar outages.

Federated consensus differs from classical BFT by removing the need for a single, agreed validator set (nodes pick local trust rules) and from PoW/PoS by basing decision authority on named trust relationships rather than resource or stake weight, trading lower resource cost and latency for trust-based legitimacy.

Operators and researchers use graph-analysis tooling (for example, the fbas_analyzer package and related observability tooling that consume stellarbeat JSON) to enumerate quorums, test quorum intersection, and compute intact nodes or minimal blocking/splitting sets, though operational guidance for specific configurations remains limited.

There is no universally safe, fully automatic method guaranteed to deterministically reconfigure quorum slices under adversarial timing; the SCP literature highlights open problems around deterministic termination and safe automated reconfiguration, so recovery often requires operator action or randomized mechanisms.

Blocking sets are node sets whose absence can stop progress (affecting liveness), splitting sets are those whose failure can permit incompatible quorums (affecting safety), and intact nodes are those that remain in the well-behaved core after accounting for bad behavior and trust dependencies. Together, these concepts help identify which validators are truly critical.
