What is RPC?

Learn what RPC is, why blockchain nodes expose it, how JSON-RPC works, and where RPC helps, fails, and shapes real node infrastructure.

Introduction

RPC (short for remote procedure call) is the mechanism that lets one program ask another program to do work across a network as if it were calling a local function. In blockchain infrastructure, RPC is the main interface between applications and nodes: wallets use it to read balances, explorers use it to fetch blocks and transactions, exchanges use it to submit transactions and monitor confirmations, and operators use it to inspect node health. If you have ever pointed an app at an “RPC endpoint,” you were really giving it a way to send structured requests to a node and get structured answers back.

That sounds simple, but there is an important puzzle underneath it. A local function call is immediate, in-process, and usually reliable in ways we take for granted. A remote call is none of those things. It crosses a network, can be delayed, can fail halfway through, can reach a machine running different software, and may return data from a changing view of the blockchain. RPC exists to hide some of that complexity (enough to make systems usable) without pretending the network has become local.

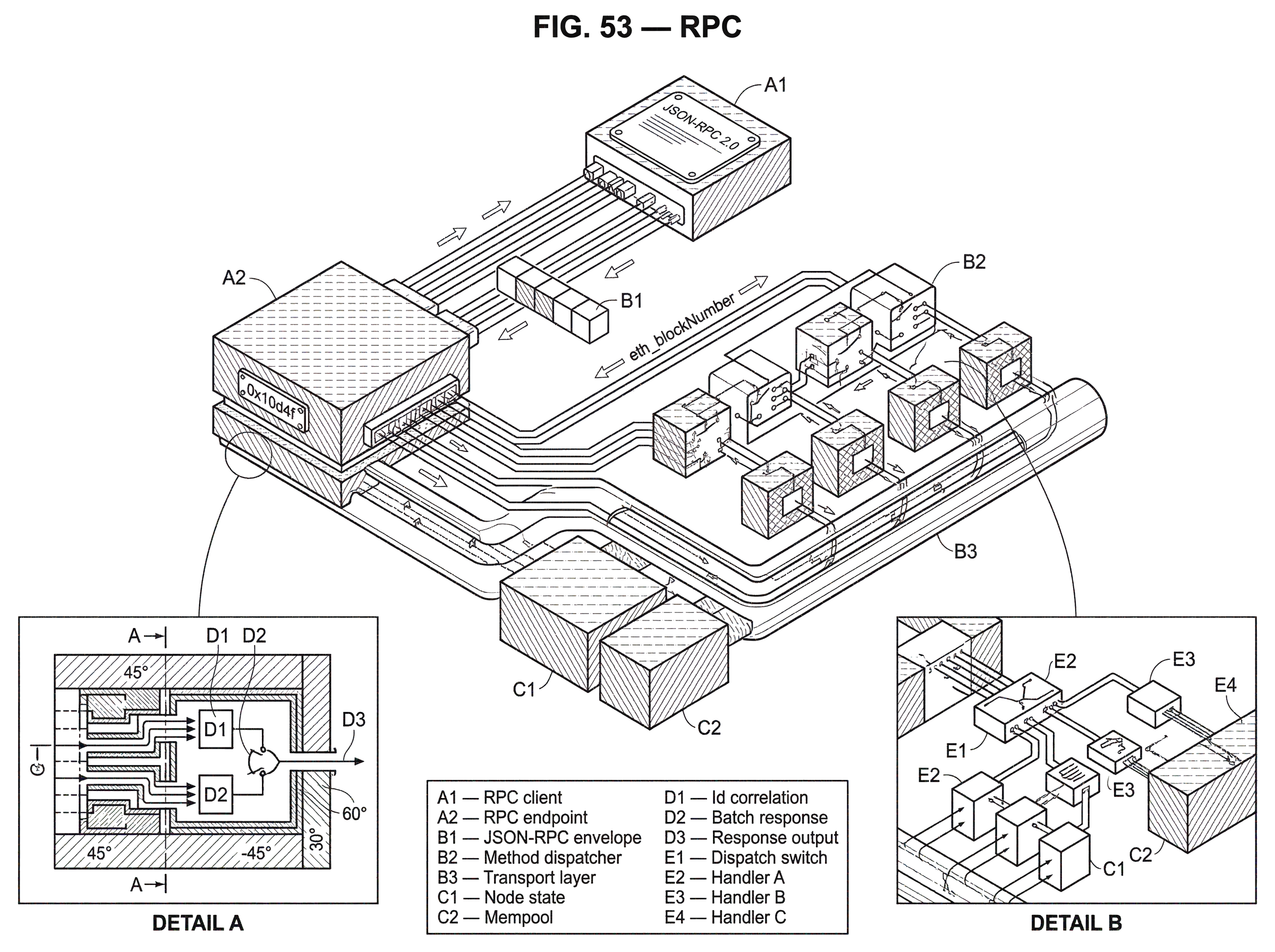

That tension is the key to understanding RPC. The idea is not “make networking disappear.” The idea is to give networking a procedure-shaped interface, so developers can ask for eth_blockNumber, getblock, or system_health instead of manually designing every wire message from scratch. Once that clicks, the rest follows: request formats, method namespaces, batching, streaming, authentication layers, and the operational reality that an RPC endpoint is often the public face of a much more complex node system behind it.

How does RPC present a remote operation like a local function call?

| Aspect | Local call | Remote RPC |
|---|---|---|
| Latency | Very low, in-process | Higher, network-dependent |
| Failure model | Deterministic; local exceptions | Partial failure; outcomes ambiguous |
| State visibility | Shared memory view | Stale or diverging views |
| Serialization | None or shallow | Requires byte/JSON encoding |
| Developer ergonomics | Direct language semantics | Procedure-shaped API |

From first principles, RPC solves a coordination problem. Suppose one machine has the data or capability you need, and another machine wants to use it. You need some way to name an operation, send inputs, run that operation on the remote side, and send the result back. You could design an ad hoc protocol every time. RPC says: instead, present remote operations in the shape of procedure calls.

This is why classic RPC papers describe the mechanism in terms that resemble ordinary programming. The caller invokes a procedure. The caller’s environment pauses. Parameters are packaged and transmitted. The remote machine executes the target procedure. Results are sent back. The caller resumes. That is the conceptual model, and it remains useful even when the actual implementation is JSON over HTTP, binary frames over HTTP/2, or WebSocket messages.

But this analogy has limits, and it is important to name them. A local call usually shares memory conventions, timing assumptions, and failure domains with the caller. A remote call does not. There is no shared address space. Objects must be serialized into bytes or JSON. Latency is much higher. Partial failure is possible: the client can time out without knowing whether the server ran the operation. So RPC is best understood as borrowing the ergonomics of local calls, not the guarantees of local execution.

That distinction matters a lot in blockchain systems. If you ask a node for the latest block number, the node may answer from the latest head it knows. Another node may answer differently a moment later. If you send a transaction through RPC and the connection drops, you may not know whether the node accepted it before the failure. RPC makes these operations callable, but it does not erase the underlying distributed-system uncertainty.

What happens step-by-step during an RPC call to a blockchain node?

Mechanically, an RPC call has a few indispensable parts. The client needs a way to identify the operation it wants. It needs to encode the inputs in a format both sides understand. The server needs to decode those inputs, dispatch the request to the right handler, run the logic, and encode a success result or an error. Then the response has to be correlated with the original request.

Older RPC systems often used generated stubs for this. A client-side stub looked like a local function but actually packed arguments into a network message. A server-side stub unpacked the message and called the real server implementation. That architecture is still alive in systems like gRPC, where service definitions generate client and server code automatically. Even when you use a more loosely specified interface such as JSON-RPC, the same conceptual pieces are there. They are just often implemented more dynamically.
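To make the stub idea concrete, here is a minimal Python sketch of a client-side stub. The class name RpcStub and the fake_transport function are hypothetical, invented for illustration; the transport is a plain function standing in for a real network hop, so this shows only the shape of the idea, not how any particular library does it.

```python
import itertools
import json
from typing import Any, Callable


class RpcStub:
    """Hypothetical client stub: attribute access becomes a remote method name."""

    def __init__(self, transport: Callable[[str], str]) -> None:
        # transport takes a JSON request string and returns a JSON reply string.
        self._transport = transport
        self._ids = itertools.count(1)

    def __getattr__(self, method: str) -> Callable[..., Any]:
        def call(*params: Any) -> Any:
            # Pack the arguments into a JSON-RPC 2.0 request object.
            request = {"jsonrpc": "2.0", "method": method,
                       "params": list(params), "id": next(self._ids)}
            reply = json.loads(self._transport(json.dumps(request)))
            if "error" in reply:
                raise RuntimeError(reply["error"])
            return reply["result"]
        return call


def fake_transport(raw: str) -> str:
    """Stand-in for the network: always answers with a fixed block number."""
    request = json.loads(raw)
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": "0x10d4f"})


node = RpcStub(fake_transport)
block_number = node.eth_blockNumber()  # looks local, but goes through the transport
```

The call site reads like an ordinary method call, which is exactly the ergonomic benefit stubs provide. Everything that can go wrong on a real network is hidden inside the transport function, which is why the local-call analogy should not be pushed too far.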

A simple blockchain example makes this concrete. Imagine a wallet wants to know the current Ethereum block height. It sends a JSON object to a node’s RPC endpoint naming the method eth_blockNumber. The node’s RPC server parses the request, matches eth_blockNumber to the handler that reads the execution client’s current head, converts the result into the expected return format, and sends back a response. To the wallet developer, this looks like “call a method, get a value.” Underneath, there was parsing, routing, state access, serialization, network transport, and error handling.
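The server side of that exchange can be sketched in a few lines of Python. The HANDLERS table and the hard-coded block number are assumptions made for illustration; a real node would read its current head from its own state. The sketch handles single requests with positional params only, not batches.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical handler table; a real node would query its execution state here.
HANDLERS: Dict[str, Callable[..., Any]] = {
    "eth_blockNumber": lambda: hex(68943),
}


def _error(request_id: Any, code: int, message: str) -> str:
    """Encode a JSON-RPC error response."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Parse, dispatch, and encode one JSON-RPC request."""
    try:
        request = json.loads(raw)
    except json.JSONDecodeError:
        return _error(None, -32700, "Parse error")
    if not isinstance(request, dict):
        return _error(None, -32600, "Invalid Request")
    request_id = request.get("id")
    handler = HANDLERS.get(request.get("method"))
    if handler is None:
        return _error(request_id, -32601, "Method not found")
    # Run the handler and echo the request id so the client can correlate.
    result = handler(*request.get("params", []))
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})
```

Each step in the paragraph above maps to a line or two here: parsing, routing by method name, running the handler, and serializing the result or an error.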

The same pattern appears on Bitcoin nodes, Tendermint-based systems, and Substrate-based chains. The method names differ and the returned data differs, but the structural invariant is the same: RPC is a contract for turning remote capabilities into named calls with inputs and outputs.

Why do blockchain nodes expose RPC endpoints instead of using P2P directly?

A blockchain node already participates in the peer-to-peer network. So why is a separate RPC interface needed?

Because the peer-to-peer protocol and the application interface solve different problems. A node’s P2P layer speaks to other nodes to exchange blocks, transactions, votes, or consensus messages. An application does not usually want to join that conversation directly. It wants higher-level answers: the balance of an address, the receipt for a transaction, the current sync status, the logs matching a filter, or a way to broadcast a signed transaction. RPC is the translation layer between node internals and application needs.

This is why block explorers depend on RPC. They are not reimplementing the node’s full storage and execution logic from scratch. They query node data through RPC, index it, and present it in a form humans can search. Wallets do the same for balances and transaction history. Exchanges and custodians use RPC to submit withdrawals, observe confirmations, and monitor mempool or chain conditions. Infrastructure providers package this into hosted endpoints so customers do not need to run every node themselves.

Seen this way, RPC is not a side feature. It is the operational boundary of the node. For many users, the RPC endpoint is the node, because it is the only part they ever touch.

What is JSON-RPC and why is it common in blockchain infrastructure?

| Characteristic | JSON-RPC | gRPC |
|---|---|---|
| Encoding | JSON text | Protocol Buffers binary |
| Transport | Transport-agnostic (HTTP, WS) | HTTP/2 (built-in) |
| Typing & stubs | Dynamic; manual client code | Strongly typed; codegen stubs |
| Streaming | Limited (WS subscriptions) | Bi-directional streaming |
| Best fit | Simple, interoperable tooling | High throughput, typed APIs |

In blockchain systems, the most common RPC style is JSON-RPC 2.0. The reason is not mysterious. It is lightweight, stateless, transport-agnostic, and uses JSON, which is easy for many languages and tools to handle. The JSON-RPC 2.0 specification defines the message format, how requests and responses are matched, how errors are reported, and how batch calls work. It does not define transport security, authentication, or delivery guarantees. Those have to be provided elsewhere.

A JSON-RPC request has a small core structure. It includes "jsonrpc": "2.0", a string method, optional structured params, and optionally an id. The id is what lets the client match a response to a request. A successful response contains "result"; an unsuccessful one contains "error"; and the response echoes the request’s id. The protocol is intentionally minimal.

Here is a representative request to an Ethereum node:

    {
      "jsonrpc": "2.0",
      "method": "eth_blockNumber",
      "params": [],
      "id": 1
    }

And a representative successful response:

    {
      "jsonrpc": "2.0",
      "id": 1,
      "result": "0x10d4f"
    }

That result is returned as a hex quantity because Ethereum’s JSON-RPC conventions require integers to be hex strings prefixed with 0x, using a compact representation. This detail is easy to miss, but it illustrates an important point: JSON-RPC defines the envelope, while each blockchain ecosystem defines many of the domain-specific conventions inside that envelope.
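A small Python sketch shows what the hex quantity convention means for a client. The function names decode_quantity and encode_quantity are hypothetical helpers, and the validation reflects the compact-form rule described above (no leading zeros, with zero written as 0x0).

```python
import re

# Compact hex quantity: "0x0", or "0x" followed by digits with no leading zero.
_QUANTITY = re.compile(r"0x(0|[1-9a-fA-F][0-9a-fA-F]*)")


def decode_quantity(value: str) -> int:
    """Convert an Ethereum-style hex quantity string to an integer."""
    if not _QUANTITY.fullmatch(value):
        raise ValueError(f"not a compact hex quantity: {value!r}")
    return int(value, 16)


def encode_quantity(number: int) -> str:
    """Convert a non-negative integer to a compact hex quantity string."""
    if number < 0:
        raise ValueError("quantities must be non-negative")
    return hex(number)


height = decode_quantity("0x10d4f")  # 68943
```

Forgetting this conversion is a common source of bugs, for example comparing "0x10d4f" with a decimal block height as strings.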

Bitcoin Core also exposes an RPC interface, though its method set and conventions are different from Ethereum’s. Substrate-based nodes expose namespaces such as system, state, and chain, and some compatible nodes also expose Ethereum-style eth_* methods. Tendermint-based systems provide their own RPC surface for status, blocks, transactions, and consensus-related information. The broad pattern repeats even when the payload semantics change.

What is the JSON-RPC request–response contract and when does it break down?

The useful fiction of RPC is that you ask for something and then get the answer. The real mechanism is stricter: you send a request object, and the server either returns a corresponding response or does not.

This is where the id field becomes more than bookkeeping. It is what preserves correlation when there are many in-flight requests. JSON-RPC batch requests make this especially clear. A client may send an array of requests together, and the server may process them concurrently and return the responses in any order. So clients must match by id, not by position. This makes batching efficient, but it also makes clear that the system is not a simple stack of synchronous local calls.
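The following Python sketch illustrates matching by id rather than by position. The correlate function is a hypothetical helper, and the sample replies are invented and deliberately returned in reverse order.

```python
from typing import Any, Dict, List, Optional, Tuple


def correlate(requests: List[dict],
              responses: List[dict]) -> List[Tuple[dict, Optional[dict]]]:
    """Pair each request with the response that carries the same id."""
    by_id: Dict[Any, dict] = {resp["id"]: resp for resp in responses if "id" in resp}
    # Requests without an id are notifications and have no response to match.
    return [(req, by_id.get(req["id"])) for req in requests if "id" in req]


batch = [
    {"jsonrpc": "2.0", "method": "eth_blockNumber", "params": [], "id": 1},
    {"jsonrpc": "2.0", "method": "eth_chainId", "params": [], "id": 2},
]
# Invented replies, arriving in the opposite order from the requests.
replies = [
    {"jsonrpc": "2.0", "id": 2, "result": "0x1"},
    {"jsonrpc": "2.0", "id": 1, "result": "0x10d4f"},
]
pairs = correlate(batch, replies)
```

A request whose response is missing from the batch is paired with None, which lets the caller decide whether to retry or report the gap.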

Error handling reveals the same truth. JSON-RPC responses report errors with an object containing a numeric code, a short message, and optional data. Some codes are standardized, such as parse error or invalid request, while others are server-defined. The important insight is that in RPC, failure is part of the normal protocol surface. A malformed request, an unavailable method, invalid parameters, and an internal execution error all need explicit representation.
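On the client side, this usually means turning the error object into something the calling code cannot ignore. Below is a minimal Python sketch; the JsonRpcError class and unwrap function are hypothetical names, while the numeric codes listed are the ones predefined by the JSON-RPC 2.0 specification.

```python
from typing import Any, Optional

# Error codes predefined by the JSON-RPC 2.0 specification.
STANDARD_CODES = {
    -32700: "Parse error",
    -32600: "Invalid Request",
    -32601: "Method not found",
    -32602: "Invalid params",
    -32603: "Internal error",
}


class JsonRpcError(Exception):
    """Raised when a response carries an error object instead of a result."""

    def __init__(self, code: int, message: str, data: Optional[Any] = None) -> None:
        super().__init__(f"{code}: {message}")
        self.code = code
        self.message = message
        self.data = data

    @property
    def is_standard(self) -> bool:
        return self.code in STANDARD_CODES


def unwrap(response: dict) -> Any:
    """Return the result, or raise if the server reported an error."""
    if "error" in response:
        err = response["error"]
        raise JsonRpcError(err["code"], err["message"], err.get("data"))
    return response["result"]
```

Codes outside this table are server-defined, so their meaning has to come from the documentation of the specific node or provider.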

There is also a special case: the notification. In JSON-RPC, a notification is a request without an id. That means the client is explicitly saying it does not want a response. The server must not reply. This can be useful for fire-and-forget style signaling, but there is a cost: notifications are not confirmable. If something goes wrong, the client receives no RPC-level indication. That is a good example of RPC exposing a tradeoff directly rather than hiding it.

How do discovery and machine-readable schemas (OpenRPC) help integrate with nodes?

As RPC surfaces grow, a problem appears: how do clients know what methods exist and what parameters they expect? Human-written documentation helps, but it does not scale well by itself. That is where machine-readable descriptions become valuable.

For JSON-RPC, OpenRPC plays this role. It defines a standard way to describe a JSON-RPC API so that humans and tools can discover and understand the service. An OpenRPC-enabled API can expose a method named rpc.discover that returns the schema describing the service. That matters because tooling can then generate documentation, clients, validation layers, and interactive explorers without reverse-engineering the endpoint.
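As a rough illustration of what tooling can do with such a schema, the Python sketch below summarizes the methods in an OpenRPC-style document. The sample document is invented and heavily trimmed; it assumes only the top-level methods array with name and params entries, and a real rpc.discover response would carry much more detail.

```python
from typing import Dict, List

# Invented, trimmed example of an OpenRPC-style document.
SAMPLE_DOCUMENT = {
    "openrpc": "1.2.6",
    "info": {"title": "Example node API", "version": "0.0.0"},
    "methods": [
        {"name": "eth_blockNumber", "params": []},
        {"name": "eth_getBalance",
         "params": [{"name": "address", "schema": {"type": "string"}},
                    {"name": "block", "schema": {"type": "string"}}]},
    ],
}


def summarize(document: dict) -> Dict[str, List[str]]:
    """Map each method name to the names of its parameters."""
    return {
        method["name"]: [param["name"] for param in method.get("params", [])]
        for method in document.get("methods", [])
    }


summary = summarize(SAMPLE_DOCUMENT)
```

The same traversal is the starting point for generated documentation, client stubs, or request validation.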

Ethereum’s execution API documentation is an important example of this approach. The specification presents a canonical method surface for execution clients and is powered by OpenRPC. This matters operationally because it supports interoperability: applications can rely on a common interface across different execution clients, even if implementation details differ. The same general idea appears elsewhere too. Substrate nodes can expose rpc_methods to retrieve methods available on a particular node. Discovery reduces guesswork.

This is one of the quiet reasons RPC becomes infrastructure rather than just messaging. Once method names, input schemas, output schemas, and discovery conventions are standardized, a whole tooling ecosystem can form around them.

How do transport choices (HTTP, WebSocket, gRPC) change RPC behavior?

A common misunderstanding is to treat RPC and HTTP as the same thing. They are not.

JSON-RPC is transport-agnostic. It defines the message structure and processing rules, but not the transport itself. In practice, blockchain nodes often expose JSON-RPC over HTTP, and sometimes over WebSocket for subscriptions or long-lived event flows. Geth, for example, exposes a JSON-RPC server and supports batch requests as well as real-time events. The RPC layer says what the messages mean. The transport layer says how they move.
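The separation is easy to see in code. In this Python sketch using only the standard library, the payload is the JSON-RPC message and the HTTP request is just one way to carry it. The function names are hypothetical, and the URL in the usage note is an assumed local endpoint.

```python
import json
import urllib.request


def build_http_request(url: str, payload: dict) -> urllib.request.Request:
    """Wrap a JSON-RPC payload in an HTTP POST request."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(url: str, payload: dict, timeout: float = 10.0) -> dict:
    """Send the payload over HTTP and decode the JSON reply."""
    request = build_http_request(url, payload)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        return json.loads(reply.read().decode("utf-8"))


payload = {"jsonrpc": "2.0", "method": "eth_blockNumber", "params": [], "id": 1}
```

With a node listening locally, a call such as send("http://localhost:8545", payload) would return the decoded response object. Swapping HTTP for WebSocket would change build_http_request and send, but not the payload.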

This distinction matters because different transports change the system’s behavior. HTTP request-response is simple and ubiquitous, which makes it a natural fit for stateless queries like block lookups or transaction submission. WebSocket allows longer-lived connections and subscription-style patterns such as new-head or log streaming. gRPC, by contrast, commonly uses Protocol Buffers as its interface definition and binary encoding format, runs over HTTP/2, and supports bi-directional streaming with pluggable authentication and observability components.

So when people compare JSON-RPC and gRPC, they are usually comparing two different RPC ecosystems, not merely two encodings. JSON-RPC favors minimalism and easy interoperability. gRPC favors strongly typed service definitions, generated stubs, efficient binary serialization, and richer streaming semantics. Neither is “RPC” in the abstract; each is a concrete design point inside the broader RPC idea.

What are RPC failure semantics and how do they affect transaction submission?

| Case | What RPC shows | Why ambiguous | Recommended action |
|---|---|---|---|
| Successful response | Result or tx hash returned | Acceptance is clear; on-chain inclusion is not | Proceed but verify on-chain |
| Timeout after submit | No definitive reply | Server may have executed | Query by transaction hash or check the mempool |
| Notification (no id) | No response expected | No confirmation or error | Use only for fire-and-forget |
| Error response | Error object with code | Server may still have side effects | Inspect logs and recheck state |

The place where smart readers most often underestimate RPC is failure. If the response never comes back, what happened?

The classic RPC literature puts this sharply. If a call returns successfully, the implementation may be able to guarantee the server procedure was invoked exactly once. But if the call ends in an exception or communication failure, the truth may be ambiguous: the remote procedure might have run once, or not at all, and the caller may not know which. That ambiguity is not a bug in one implementation. It is a consequence of distributed systems.

In blockchain RPC, this matters whenever a method has side effects. If you submit a transaction and your client times out, you cannot infer from the timeout alone that submission failed. The node may have accepted and propagated the transaction before the connection broke. Good client design therefore separates transport acknowledgment from domain outcome. Instead of asking only “did the RPC call succeed?”, robust systems also ask “did the chain later show the intended effect?”
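One way to express that separation is sketched below in Python. The Outcome enum and the submit_and_check function are hypothetical, and the two callables are injected stand-ins for real RPC calls. The sketch also assumes the caller already knows the transaction hash, for example because it computed it from the signed transaction.

```python
from enum import Enum
from typing import Callable, Optional


class Outcome(Enum):
    INCLUDED = "included"  # a receipt exists, so the chain shows the effect
    ACCEPTED = "accepted"  # the node acknowledged, but no receipt yet
    UNKNOWN = "unknown"    # no acknowledgment and no receipt yet


def submit_and_check(send_raw: Callable[[str], str],
                     get_receipt: Callable[[str], Optional[dict]],
                     raw_tx: str, tx_hash: str) -> Outcome:
    """Submit a transaction, then judge the outcome from chain state."""
    try:
        send_raw(raw_tx)
        acknowledged = True
    except TimeoutError:
        # A timeout is not a failure: the node may still have accepted it.
        acknowledged = False
    if get_receipt(tx_hash) is not None:
        return Outcome.INCLUDED
    return Outcome.ACCEPTED if acknowledged else Outcome.UNKNOWN
```

The important design choice is that a timeout maps to UNKNOWN rather than to failure, so the caller keeps checking chain state instead of assuming nothing happened.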

Even reads can be trickier than they look. Ethereum’s JSON-RPC lets many state queries specify a block parameter such as latest, safe, finalized, or pending, or a concrete block number. That is not cosmetic. It is how the caller states which version of chain state it wants. Without this, applications can accidentally mix data from unstable and stable views of the chain and make incorrect decisions.
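As an illustration, this Python sketch builds an eth_getBalance request with an explicit block parameter. The balance_request helper is hypothetical and the zero address is a placeholder; the tag names are the ones listed above plus earliest.

```python
from typing import Union

BLOCK_TAGS = frozenset({"earliest", "latest", "safe", "finalized", "pending"})


def balance_request(address: str, block: Union[str, int],
                    request_id: int = 1) -> dict:
    """Build an eth_getBalance request pinned to a specific view of the chain."""
    if isinstance(block, int):
        if block < 0:
            raise ValueError("block number must be non-negative")
        block_param = hex(block)  # concrete block numbers are hex quantities
    elif block in BLOCK_TAGS:
        block_param = block
    else:
        raise ValueError(f"unknown block tag: {block!r}")
    return {"jsonrpc": "2.0", "method": "eth_getBalance",
            "params": [address, block_param], "id": request_id}


ZERO_ADDRESS = "0x" + "00" * 20  # placeholder address for illustration
stable_view = balance_request(ZERO_ADDRESS, "finalized")
pinned_view = balance_request(ZERO_ADDRESS, 68943)
```

Making the block argument mandatory in application code is one way to keep every read explicit about which view of the chain it uses.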

This is a good example of the broader rule: RPC does not remove distributed-system semantics. It gives you a clean place to express them.

How are RPC methods organized and why do namespaces define capability boundaries?

Blockchain node RPC APIs are usually organized into method namespaces because nodes expose many different classes of capability. Some methods are harmless status queries. Some are computationally expensive. Some reveal debugging internals. Some are dangerous if exposed publicly.

Geth’s documentation reflects this by grouping methods into namespaces such as eth, net, debug, admin, and txpool, while also marking some namespaces deprecated. Substrate documentation goes further by labeling some methods unsafe, active only when the node is started with appropriate flags. That label matters because RPC is not just a convenience interface; it is also a capability boundary. Exposing the wrong methods to the wrong audience can turn a node into an attack surface.

This is why production RPC deployments usually do not treat all methods equally. Read-heavy public methods may sit behind rate limits and caches. Sensitive administrative methods may be disabled entirely or bound to private networks. Debug methods may be reserved for operators. The structure of the RPC method space often mirrors the trust model of the node operator.
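A gateway in front of a node can enforce such a policy with a simple allowlist. The Python sketch below assumes the namespace_method naming convention used by Ethereum-style methods; the allowlist contents and function names are illustrative, not a recommended policy.

```python
from typing import FrozenSet

# Illustrative policy: only these namespaces are reachable from the public side.
PUBLIC_NAMESPACES: FrozenSet[str] = frozenset({"eth", "net"})


def namespace_of(method: str) -> str:
    """Return the part of the method name before the first underscore."""
    return method.split("_", 1)[0]


def is_allowed(method: str,
               allowed: FrozenSet[str] = PUBLIC_NAMESPACES) -> bool:
    """Decide whether a method may pass through the public gateway."""
    return namespace_of(method) in allowed
```

For example, is_allowed("eth_blockNumber") is true, while is_allowed("admin_addPeer") and is_allowed("debug_traceTransaction") are false. Denying by default means a newly added namespace stays private until someone decides otherwise.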

What security and operational controls must you add to JSON-RPC deployments?

This leads to the next important point: an RPC specification is not a security architecture.

JSON-RPC deliberately omits authentication, authorization, transport security, and replay protection. Those are outside the protocol. That design keeps the message format simple, but it means a real deployment must add its own controls. This is not optional in blockchain infrastructure, where APIs expose valuable data and privileged operations and are attractive targets for abuse.

The practical risks are the familiar API risks. Authentication can be implemented incorrectly. Object- or method-level authorization can be too broad. Expensive methods can be abused without rate limiting, causing denial of service or large infrastructure cost. Poor inventory and version management can leave deprecated endpoints exposed longer than intended. These are not special to blockchain, but blockchain RPC endpoints often face them at scale because public demand is high and some queries are resource-intensive.
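Rate limiting is one of the simpler controls to sketch. The Python example below is a generic token bucket with a per-call cost, so that expensive methods drain the budget faster. The class, the capacity, and the cost values are all assumptions chosen for illustration; time is passed in explicitly to keep the behavior easy to follow.

```python
class TokenBucket:
    """Generic token bucket: refills steadily, spends per request."""

    def __init__(self, capacity: float, refill_per_second: float,
                 now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = capacity
        self.updated = now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        """Refill for the elapsed time, then try to spend `cost` tokens."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Illustrative weights: a log scan costs more than a head lookup.
METHOD_COST = {"eth_blockNumber": 1.0, "eth_getLogs": 5.0}
bucket = TokenBucket(capacity=5.0, refill_per_second=1.0)
```

In practice a gateway would keep one bucket per API key or client address and call allow with the current clock time and the cost of the requested method.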

Operationally, this is why hosted RPC providers invest in monitoring, rate control, redundancy, and incident communication. Public status pages such as Infura’s make visible a fact that is easy to miss from the client side: an RPC endpoint is a live service with uptime variation, latency changes, degraded performance states, and external dependencies. If your application depends on RPC, then RPC reliability becomes part of your application’s reliability.

This is also why many teams use multiple providers or combine provider endpoints with their own nodes. RPC looks like a simple URL, but behind that URL there may be load balancers, caches, replicated node fleets, failover logic, and request shaping. The endpoint is simple because the infrastructure behind it is not.
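A minimal version of provider fallback can be sketched in Python as follows. The call_with_failover function and the endpoint names are hypothetical, and the call argument is an injected function standing in for a real transport.

```python
from typing import Any, Callable, List, Tuple


def call_with_failover(endpoints: List[str],
                       call: Callable[[str, dict], Any],
                       payload: dict) -> Tuple[str, Any]:
    """Try each endpoint in order and return the first successful answer."""
    failures = []
    for endpoint in endpoints:
        try:
            return endpoint, call(endpoint, payload)
        except (TimeoutError, ConnectionError) as exc:
            # Remember the failure and move on to the next provider.
            failures.append((endpoint, repr(exc)))
    raise RuntimeError(f"all endpoints failed: {failures}")
```

This pattern is safest for reads. Retrying a side-effecting call on a second provider after a timeout on the first runs straight into the ambiguity discussed earlier in this article, so writes need an explicit check of chain state before any retry.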

How does an exchange submit and track an Ethereum withdrawal via RPC?

Imagine an exchange needs to process an Ethereum withdrawal. The user has already authorized the withdrawal internally. The exchange signs a transaction and submits it through an execution node’s RPC endpoint using eth_sendRawTransaction. At that moment, the RPC server’s job is narrow: parse the request, validate the parameters are structurally acceptable, pass the raw transaction into the node’s transaction handling path, and return either a transaction hash or an error.

If the exchange receives a successful result, that tells it the node accepted the submission request and returned the hash. It does not yet mean the withdrawal is final on-chain.

The exchange then uses other RPC calls, perhaps eth_getTransactionByHash, eth_getTransactionReceipt, and block or head queries, to track whether the transaction was propagated, included, and eventually finalized strongly enough for its policy.
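The tracking step can be sketched as a small Python function that combines a receipt with the current head. The function names and the confirmation threshold are hypothetical, and counting confirmations is a simplification: a policy could instead rely on the finalized block parameter, and a reorganization can still change the receipt.

```python
from typing import Optional


def confirmations(receipt: Optional[dict], head_hex: str) -> int:
    """Count blocks from the inclusion block up to the current head."""
    if receipt is None or receipt.get("blockNumber") is None:
        return 0
    included_at = int(receipt["blockNumber"], 16)
    head = int(head_hex, 16)
    return max(0, head - included_at + 1)


def withdrawal_status(receipt: Optional[dict], head_hex: str,
                      required: int) -> str:
    """Classify a withdrawal as pending, included, or confirmed."""
    count = confirmations(receipt, head_hex)
    if count == 0:
        return "pending"
    return "confirmed" if count >= required else "included"
```

Here receipt stands for the result of eth_getTransactionReceipt (or None if no receipt exists yet) and head_hex for the result of eth_blockNumber.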

Now suppose instead the exchange’s connection times out right after submission. RPC alone cannot resolve the ambiguity. The transaction may have been accepted before the timeout, or not. So the exchange checks for the transaction hash if it can reconstruct it locally, queries recent mempool or chain state through available methods, and avoids blindly resubmitting in a way that could create duplicates or replacement behavior it did not intend.

The point of the example is not that RPC is unreliable. It is that RPC is a boundary between application logic and networked node behavior, and good applications are written with that boundary in mind.

Where signing architecture matters, RPC also sits next to custody design. In decentralized settlement systems, transaction authorization may happen without any full private key existing in one place. Cube Exchange, for example, uses a 2-of-3 Threshold Signature Scheme for decentralized settlement: the user, Cube Exchange, and an independent Guardian Network each hold one key share, no full private key is ever assembled in one place, and any two shares are required to authorize a settlement. RPC then becomes the interface for broadcasting and tracking the resulting transaction, while threshold signing handles authorization separately. This is a useful reminder that RPC is the communication layer, not the whole trust model.

Why does RPC remain central to blockchain infrastructure?

RPC keeps reappearing because it matches how developers think. Most software is built from named operations with inputs and outputs. Exposing remote services that way is often more natural than thinking directly in raw messages. The persistence of the idea from early RPC systems to JSON-RPC and gRPC is not an accident. The abstraction is genuinely useful.

What changes across implementations is the tradeoff surface. Some systems emphasize strict schemas, generated code, and binary efficiency. Some emphasize easy manual use with curl, readable payloads, and transport flexibility. Some add discovery layers and code generation on top. Some support subscriptions or streaming. The core remains: turn remote work into callable operations.

For blockchain infrastructure, that core is especially valuable because the node is both complex and indispensable. Applications need a standard way to ask the node questions and hand it transactions. RPC is that standard interface.

Conclusion

RPC is the mechanism that makes a remote node feel callable. It works by naming operations, packaging inputs, dispatching them on another machine, and returning results in a standard format. In blockchain systems, that is how wallets, explorers, exchanges, and operator tools interact with nodes.

The most important thing to remember is simple: RPC gives you the shape of a function call across a network, not the certainty of a local call. Once you see that, the design of JSON-RPC, method namespaces, discovery, batching, security controls, and failure handling all make sense.

How does this part of the crypto stack affect real-world usage?

RPC determines how reliably you can submit transactions, read balances, and observe confirmations, so it directly affects when it's safe to fund, trade, or withdraw. Before you move funds or place a trade, check the network's finality model, RPC provider redundancy, and the transport and methods you will rely on. Then use Cube's funding and execution flows to complete the action.

- Fund your Cube account with fiat or a supported crypto transfer. Choose the asset and network that match the on‑chain destination you plan to use.

- Check the network finality option you need (e.g., prefer a "finalized" block parameter or wait an appropriate number of confirmations) and confirm your chosen RPC provider or fallback list supports that view.

- Open the market or withdrawal flow on Cube. For trading, choose a limit order for price control or a market order for immediate execution; for withdrawals, select the correct network and gas/fee option.

- Review fees, selected block/finality settings, and destination details, then submit the trade or transfer.

Frequently Asked Questions

RPC gives no implicit atomic guarantee that a side-effecting call both reached and executed on the node. A successful RPC response only confirms that the node accepted the request at the RPC/transport level, and a timed-out or failed call is ambiguous: the transaction may or may not have been submitted. Robust clients therefore treat transport acknowledgment as distinct from on-chain outcome and verify the effect later with chain queries (e.g., by checking the transaction hash, receipt, or block inclusion).

A JSON-RPC notification is a request without an "id" where the client asks the server not to reply; because the server must not send a response, notifications are fire-and-forget and provide no RPC-level confirmation if something goes wrong. That makes notifications unsuitable for any operation where the client needs to know success or failure.

Use HTTP for simple, stateless request/response patterns (block lookups, transaction submission) and WebSocket for long-lived connections and subscription-style events (new heads, logs). Consider gRPC when you want strongly typed schemas, efficient binary encoding, and richer streaming semantics. JSON-RPC itself is transport-agnostic, so it is the choice of transport that changes latency, batching and streaming support, and tooling trade-offs.

When batching requests the client must correlate responses by the request id, not by array position, because servers may process and return batched responses in any order; the id field is the mechanism that preserves response correlation for concurrent in‑flight requests.

You should not use the node's P2P protocol as an application API because P2P is designed for node-to-node consensus and gossip, whereas RPC translates node internals into high-level, application‑oriented operations (balances, receipts, broadcast) that apps need without joining consensus or gossip subsystems.

The JSON-RPC spec intentionally omits authentication, authorization, transport security, and replay protection, so operators must add those controls externally (TLS, API keys/ACLs, per-method authorization, rate limits, and monitoring); failing to do so risks abuse of expensive or privileged methods.

Machine-readable descriptions (OpenRPC) let clients discover methods along with their parameter and result schemas, and let tooling generate documentation or clients automatically. An OpenRPC-enabled service can expose its description through the rpc.discover method, which reduces guesswork and supports interoperability across different execution clients.

Exposing administrative, debug, or "unsafe" namespaces publicly enlarges your attack surface, because those methods can be computationally expensive or reveal internals. Clients like Geth and Substrate intentionally disable some of them or gate them behind flags. Production deployments therefore isolate or disable sensitive namespaces, apply authorization, and restrict them to operators.

Relying on a single hosted RPC provider means your app inherits that provider's operational characteristics (latency variation, outages, rate limits), so many teams either run their own nodes, use multiple providers in fallback, or combine hosted endpoints with local nodes to improve resilience and control.

When making state queries you must specify the block context (e.g., latest, finalized, pending, or an exact block) because different block parameters express different consistency/stability expectations and mixing views can lead to incorrect decisions.
