What Is Data Sharding?

Learn what data sharding is, how it scales blockchains through blobs and data availability, and why it differs from execution sharding.

Introduction

Data sharding is a way to scale a blockchain by splitting the burden of data storage and data availability across the network instead of making every node handle every byte. The stakes are high because modern blockchain demand is often limited less by computation than by the cost of publishing data that others can verify. If every validator must download, store, and serve all block data, throughput stays tightly capped by what ordinary nodes can safely process.

The puzzle is that blockchains need two things that pull in opposite directions. They need more data throughput so rollups and applications can publish more transactions cheaply. But they also need independent verifiability, which means users must not be forced to trust a block producer who merely claims the data exists. Data sharding exists to reconcile those goals: raise data capacity without simply centralizing the network around a few machines with extreme bandwidth and storage.

The key idea is easy to miss. Data sharding is not primarily about splitting computation. It is about splitting the responsibility for making block data available. In older “multi-shard” visions, each shard might run its own transactions and state transitions. In modern rollup-centric designs, especially Ethereum’s roadmap, the base layer increasingly acts as a place to order data and guarantee its availability, while execution happens elsewhere. That is why Ethereum’s roadmap explicitly describes danksharding and proto-danksharding as a simpler form of data sharding rather than traditional execution sharding.

Why do blockchains need data sharding?

A blockchain block is not useful just because it has a valid header or a correct state root. Verifiers need access to the underlying data that explains how the new state was reached. This is the core of data availability: confidence that the data required to verify a block was actually published to the network.

Here is the mechanism behind the bottleneck. Suppose a rollup posts batches of transactions to a base chain so anyone can reconstruct the rollup state, challenge fraud, or verify proofs. If that data lives directly in normal block space, then every full node of the base chain pays the cost: receiving it, checking it, storing it for some period, and relaying it onward. As more rollups compete for space, fees rise and throughput hits a ceiling. The limiting resource is often not EVM execution or signature checking, but bandwidth and data propagation.

This is why simply increasing block size is not a clean solution. Larger blocks do create more room, but they also make it harder for ordinary nodes to stay in sync and easier for well-provisioned actors to dominate. Research on fraud proofs and data availability sampling framed this clearly: if clients do not download all data, they need another way to detect when a producer has withheld part of it. Otherwise a malicious producer can create a block that appears valid from the outside while hiding the data needed to prove it invalid.

So the invariant that matters is not “everyone stores everything forever.” The invariant is narrower and more precise: when the chain accepts a block, the data needed to verify that block must have been genuinely available to the network. Data sharding tries to preserve that invariant while reducing how much any one node must handle.

How does data sharding separate ordering, availability, and execution?

A good way to think about data sharding is to separate three jobs that a monolithic blockchain often bundles together.

The first job is ordering: deciding which data becomes part of the canonical chain and in what order. The second is availability: ensuring that data is actually published so verifiers can obtain it. The third is execution: running transactions against state and computing results. Traditional blockchains ask the same network to do all three for all users. Data sharding relaxes that design by saying: perhaps the base layer mainly needs to order data and make it available, while execution can happen in rollups, app-chains, or application clients.

This is the design direction behind systems like Celestia and behind Ethereum’s danksharding roadmap. Celestia explicitly describes itself as a modular data-availability network that orders blobs and keeps them available while execution and settlement live above it. Ethereum’s blob roadmap has a similar spirit, though within Ethereum’s own consensus and fee system: provide much cheaper data publication for rollups without making that data directly executable in the EVM.

The word sharding here means partitioning the workload. But the relevant workload is not “who runs which smart contracts.” It is “who must download, store, sample, serve, or attest to which parts of the block data.” That distinction is the conceptual hinge for the whole topic.

What parts of a blockchain are sharded: data versus state/execution?

| Type | EVM access | Consensus download | Persistence | Fee market |
|---|---|---|---|---|
| Calldata | Readable by EVM | Downloaded by full nodes | Permanent history | Shared with execution |
| EIP‑4844 blobs (proto) | Not readable by EVM | Downloaded by consensus nodes | Temporary (18 days) | Separate blob gas |
| Full data sharding (DAS) | Not directly readable | Probabilistic sampling | Temporary, ecosystem stores | Specialized blob market |

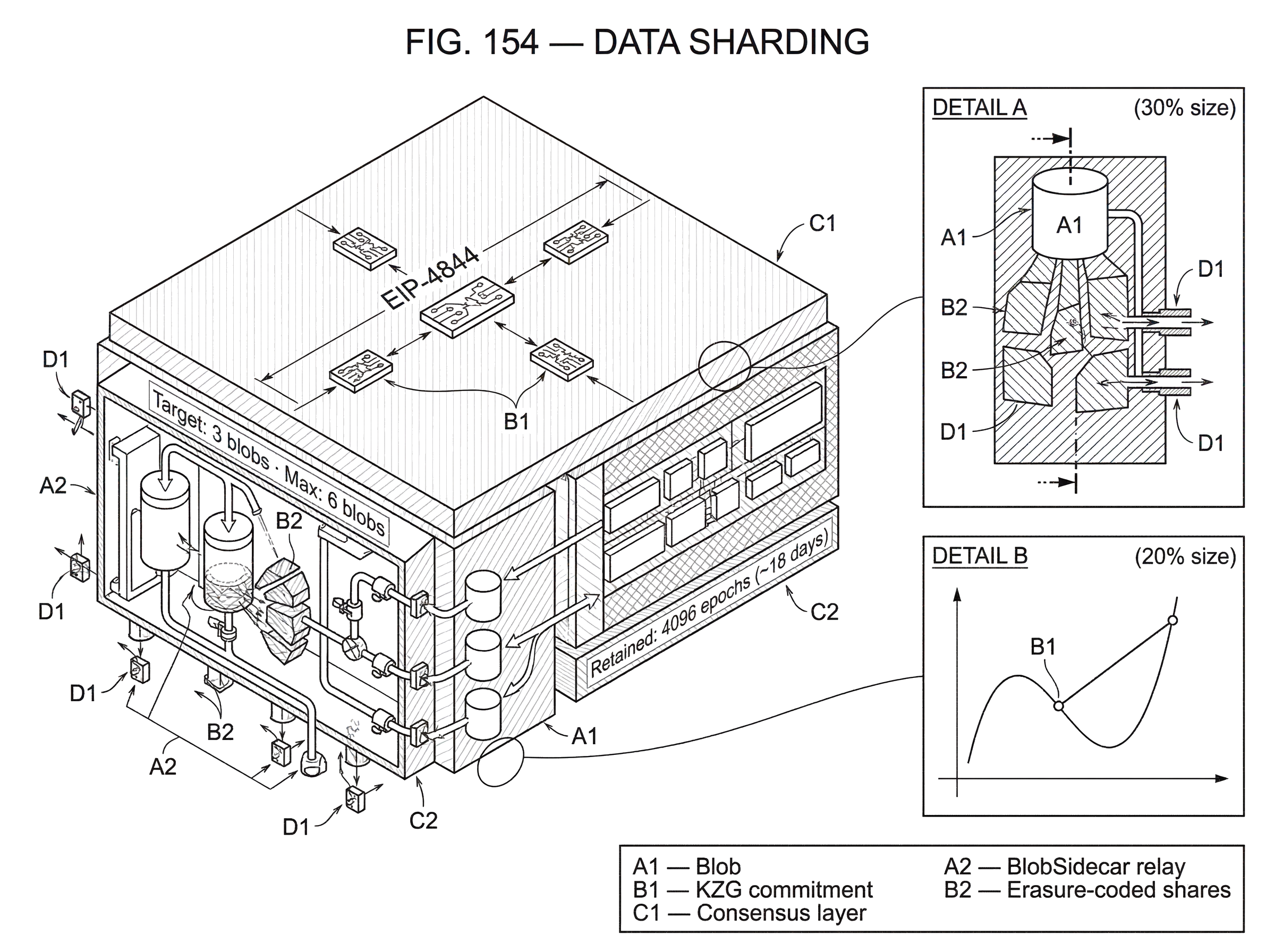

In data sharding, the chain introduces a data container (often called a blob) that can hold a large amount of transaction data or other payloads. The base layer does not need to interpret the blob contents as smart-contract input in the normal execution environment. Instead, it needs to make sure the blob is committed to, propagated, and available.

Ethereum’s EIP-4844 is the clearest deployed example of this direction. It introduced blob-carrying transactions, where a transaction can include large data payloads that are not accessible to EVM execution, while their cryptographic commitments are accessible. This is a crucial design choice. If blob data were treated exactly like ordinary calldata, then it would compete directly with execution resources and long-term state access. By separating blob data into its own resource class, Ethereum created cheaper blockspace for rollups without pretending that all data must be executed or retained forever.

This also explains why EIP-4844 is called a stop-gap. It implements the transaction format for future sharded data, but not full sharding itself. Today, blobs are still fully downloaded by consensus nodes and propagated as separate sidecars. That already helps because the fee market and storage model are different from calldata, but it does not yet achieve the full benefit of data sharding, where nodes can check availability probabilistically rather than downloading every blob in full.

So a smart reader should keep two layers separate in their head. Proto-danksharding gives a data-oriented transaction format and cheaper temporary blob space now. Full data sharding goes further by changing how availability itself is verified at scale.

Why do data sharding designs use cryptographic commitments like KZG?

| Scheme | Commitment size | Proof size | Trusted setup | On‑chain verify |
|---|---|---|---|---|
| Merkle tree | Single root | Merkle branch O(log n) | No | Cheap hash checks |
| KZG polynomial | Fixed small element | Constant‑size openings | Requires trusted setup | Pairing precompile |
| Validity proofs (SNARKs) | Tiny commitment | Succinct proofs | Varies by scheme | Verifier cost varies |

Once data stops being directly embedded in ordinary execution payloads, the chain still needs a compact way to refer to it. This is where cryptographic commitments enter.

A commitment is a short value that binds a large piece of data. The chain can store or reference the commitment, and later participants can verify claims about the underlying data against it. For blob-based designs, this lets the protocol say, in effect, “this exact blob was the one associated with this block,” without putting every byte directly into the core authenticated structure.
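To make the idea of a commitment concrete, here is a minimal sketch using a Merkle root, the simplest scheme in the table above. It is not the KZG scheme Ethereum uses for blobs. The function names, the SHA-256 hash, the `0x00`/`0x01` domain prefixes, and the power-of-two chunk count are all assumptions chosen for illustration.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaf_level(chunks: list) -> list:
    # Prefix leaves with 0x00 and parents with 0x01 so the two cannot be confused.
    return [h(b"\x00" + c) for c in chunks]


def _next_level(level: list) -> list:
    return [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(chunks: list) -> bytes:
    """Commit to a power-of-two number of chunks with one 32-byte root."""
    assert len(chunks) > 0 and len(chunks) & (len(chunks) - 1) == 0
    level = _leaf_level(chunks)
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_branch(chunks: list, index: int) -> list:
    """Sibling hashes on the path from leaf `index` up to the root."""
    level = _leaf_level(chunks)
    branch = []
    while len(level) > 1:
        branch.append(level[index ^ 1])  # sibling of the current node
        level = _next_level(level)
        index //= 2
    return branch


def verify_chunk(root: bytes, chunk: bytes, index: int, branch: list) -> bool:
    """Check that `chunk` sits at position `index` under `root`."""
    node = h(b"\x00" + chunk)
    for sibling in branch:
        if index % 2 == 0:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
        index //= 2
    return node == root


chunks = [bytes([i]) * 32 for i in range(8)]
root = merkle_root(chunks)
proof = merkle_branch(chunks, 5)
print(verify_chunk(root, chunks[5], 5, proof))    # genuine chunk is accepted
print(verify_chunk(root, b"tampered", 5, proof))  # altered chunk is rejected
```

The root binds all eight chunks, and the proof for one chunk is three hashes long, which is the O(log n) branch size listed in the table.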

Ethereum’s blob design uses KZG polynomial commitments. At a high level, the blob is interpreted as data defining a polynomial, and the protocol stores a small commitment to that polynomial. A verifier can later check claims about the blob using a proof that the polynomial evaluates to a given value at a given point. EIP-4844 even adds a point-evaluation precompile so the EVM can verify such proofs, even though it cannot read the raw blob contents directly.

The important mechanism is not the polynomial language by itself. It is the scaling property. Research on polynomial commitments showed that a verifier can check evaluations using short commitments and constant-size openings. That is exactly the kind of compression a data-heavy blockchain needs: bind a large object to a small on-chain reference, then verify specific facts about it later.
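The algebra behind an evaluation proof can be sketched without any elliptic-curve machinery. The claim p(z) = y holds exactly when (x − z) divides p(x) − y, so the prover can hand over the quotient polynomial as evidence. The toy below works in a small prime field and checks that identity at an ordinary point `r`. Real KZG checks the same identity at a secret point using a pairing over group elements, and the verifier holds only a commitment rather than the coefficients. The modulus and all function names here are assumptions for illustration.

```python
P = 2**31 - 1  # small prime modulus, for illustration only


def evaluate(coeffs, x):
    """Horner evaluation of sum(coeffs[i] * x**i) mod P."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % P
    return acc


def open_at(coeffs, z):
    """Return (y, quotient) such that p(x) - y == (x - z) * quotient(x)."""
    n = len(coeffs)
    quotient = [0] * (n - 1)
    carry = 0
    # Synthetic division by (x - z), highest coefficient first.
    for i in range(n - 1, 0, -1):
        carry = (coeffs[i] + carry * z) % P
        quotient[i - 1] = carry
    y = (coeffs[0] + carry * z) % P  # the remainder equals p(z)
    return y, quotient


def check_opening(coeffs, z, y, quotient, r):
    """Check p(r) - y == (r - z) * q(r) mod P at a test point r != z."""
    lhs = (evaluate(coeffs, r) - y) % P
    rhs = ((r - z) * evaluate(quotient, r)) % P
    return lhs == rhs


p = [5, 0, 3, 7]            # p(x) = 5 + 3x^2 + 7x^3
y, q = open_at(p, 10)
print(y)                                       # 7305
print(check_opening(p, 10, y, q, 12345))       # True
print(check_opening(p, 10, y + 1, q, 12345))   # False: wrong claimed value
```

The quotient here is a full polynomial. In KZG it is compressed to a single group element, which is where the constant-size opening comes from.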

There is, however, a real assumption here. KZG relies on a trusted setup. Ethereum addressed this through a large public ceremony with more than 140,000 contributions, with the standard security assumption that as long as at least one contribution was honestly generated and secret, the toxic waste remains unknown. That is a practical engineering choice, not a law of nature. Other commitment schemes are possible, and Ethereum’s versioned hash mechanism exists partly to preserve future upgrade paths.

Why is data availability the hard part of sharding?

A commitment proves which data was promised. It does not, by itself, prove the data was actually published.

That distinction is the heart of data sharding. A malicious block producer could commit to a large blob, include the commitment in the block, and reveal only a tiny subset of the actual data. If most users do not have the full blob, the producer may be able to hide invalid state transitions or make the block unverifiable in practice. So a complete data-sharding design must ensure not only commitment integrity but data availability.

This is why modern designs focus on data availability sampling, or DAS. The idea is that data is encoded with redundancy (usually through erasure coding such as Reed-Solomon) and then nodes sample small random pieces. If enough randomly chosen pieces are available and valid, the probability that the full dataset is unavailable becomes very small. Instead of every node downloading the whole block, many nodes each check different tiny parts.
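The redundancy step can be illustrated with a toy Reed-Solomon-style code: treat k data symbols as evaluations of a polynomial of degree below k, extend to 2k evaluations, and recover the data from any k of them. This is a sketch under simplifying assumptions (a small prime field, integer evaluation points 0 to 2k−1, quadratic-time Lagrange interpolation). Production systems use much larger fields and faster algorithms, and the names are illustrative.

```python
P = 2**31 - 1  # small prime field, for illustration only


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through the given (xi, yi) points, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P  # needs Python 3.8+
    return total


def encode(data):
    """Extend k data symbols to 2k shares; shares 0..k-1 are the data itself."""
    k = len(data)
    base = list(enumerate(data))
    return [lagrange_eval(base, x) for x in range(2 * k)]


def recover(known, k):
    """Rebuild the k original symbols from any k (index, value) shares."""
    assert len(known) >= k
    pts = known[:k]
    return [lagrange_eval(pts, x) for x in range(k)]


data = [10, 20, 30, 40]
shares = encode(data)
# Pretend half of the shares were withheld; any 4 of the 8 still suffice.
survivors = [(i, shares[i]) for i in (1, 4, 6, 7)]
print(recover(survivors, len(data)) == data)  # True
```

Because any k of the 2k shares rebuild the data, a producer who wants the data to be unrecoverable has to withhold more than half of the shares, which is what makes random sampling effective.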

The compression point here is simple: availability becomes a statistical property verified collectively. No single light node needs the entire block. But if the system is designed correctly, the producer cannot withhold a meaningful fraction of the data without being caught with high probability by samplers.
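A back-of-the-envelope calculation shows why a handful of samples goes a long way. Assume each sample is an independent, uniformly random share and that the producer must withhold at least a fraction f of shares to block reconstruction (more than one half for a simple rate-1/2 code; roughly one quarter is the figure commonly cited for 2D schemes). Then the chance that s samples all succeed anyway is at most (1 − f)^s. The independence assumption and the function names are simplifications for illustration; real analyses also account for the network model.

```python
import math


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Upper bound on the chance that every sample lands on an available share."""
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target: float) -> int:
    """Smallest sample count that pushes the miss probability below `target`."""
    return math.ceil(math.log(target) / math.log(1.0 - withheld_fraction))


print(samples_needed(0.5, 1e-9))    # 30
print(samples_needed(0.25, 1e-9))   # 73
print(miss_probability(0.5, 30))    # about 9.3e-10
```

This bound is for a single client. With many independent samplers, the chance that all of them are fooled at once shrinks further, which is the sense in which availability is verified collectively.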

This is why Ethereum’s current sidecar model is explicitly described as forward-compatible with DAS. In Deneb, blobs are relayed separately as BlobSidecar objects. Beacon blocks carry KZG commitments, while the full blob contents move through dedicated networking paths and are served for a limited recent window. The protocol can later replace the current “download all blobs” behavior with a black-box is_data_available() mechanism based on sampling, without redesigning the whole block structure.

How does a rollup publish batches using blobs and proto‑danksharding?

Imagine a rollup that has processed thousands of user transactions off-chain and now wants to publish enough information on the base layer so anyone can verify or challenge what it did.

In a traditional calldata model, the rollup posts those transactions directly as ordinary block data. That works, but it is expensive because calldata is part of the permanent execution-layer history and competes for scarce blockspace with everything else. Every full node must treat it like ordinary chain data.

In a blob-based data-sharding model, the rollup instead places its batch data into one or more blobs. It creates commitments to those blobs and includes references to those commitments in a transaction. On Ethereum today, that transaction is a blob transaction under EIP-4844, carrying blob_versioned_hashes and paying a separate blob gas fee rather than only ordinary gas. The execution layer can see the references and commitments, but not directly read blob contents inside the EVM.
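The `blob_versioned_hashes` entries are derived from the KZG commitments in a simple way. EIP-4844 defines the versioned hash as a one-byte version prefix followed by the last 31 bytes of the SHA-256 hash of the commitment. The sketch below follows that definition; the 48-byte input is a placeholder, not a real commitment.

```python
import hashlib

VERSIONED_HASH_VERSION_KZG = b"\x01"


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Version byte followed by the last 31 bytes of sha256(commitment)."""
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


# Placeholder bytes standing in for a 48-byte KZG commitment (assumption).
placeholder_commitment = bytes(range(48))
vh = kzg_to_versioned_hash(placeholder_commitment)
print(len(vh), vh[:1].hex())  # 32 01
```

The version byte is what leaves room to swap in a different commitment scheme later without changing the transaction format.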

The consensus layer then handles the blob data itself. In today’s proto-danksharding design, the blobs are propagated as sidecars and kept for a limited time. The fee model is separate as well: blob space has its own EIP-1559-style pricing rule, with a target and maximum number of blobs per block. EIP-4844 chose a target of 3 blobs and a maximum of 6 blobs per block in its initial design, which bounded near-term bandwidth growth while making rollup data substantially cheaper.
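The target and maximum translate into concrete bandwidth figures. The calculation below assumes the EIP-4844 blob size of 4096 field elements of 32 bytes each and a 12-second slot; the usable payload per blob is slightly smaller because each field element must stay below the field modulus.

```python
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
SECONDS_PER_SLOT = 12

TARGET_BLOBS_PER_BLOCK = 3   # initial EIP-4844 target
MAX_BLOBS_PER_BLOCK = 6      # initial EIP-4844 maximum

blob_bytes = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT


def blob_throughput(blobs_per_block: int) -> float:
    """Raw blob bytes per second at a given blob count per block."""
    return blobs_per_block * blob_bytes / SECONDS_PER_SLOT


print(blob_bytes // 1024)                                # 128 KiB per blob
print(blob_throughput(TARGET_BLOBS_PER_BLOCK) / 1024)    # 32.0 KiB/s at target
print(blob_throughput(MAX_BLOBS_PER_BLOCK) / 1024)       # 64.0 KiB/s at maximum
```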

Now consider what an independent verifier needs. If it is a full participant, it can fetch the blob sidecars, reconstruct the rollup input data, and check the batch. If the future system supports full DAS, a lighter participant may only need to sample random shares of erasure-coded blob data and verify them against commitments. The verifier does not need every byte personally to get high confidence that the data was truly published. That is the step where data sharding stops being just “cheap temporary storage” and becomes a genuine scaling architecture.

How do separate fee markets (blob gas) help scaling?

Data sharding is not only a networking and cryptography problem. It is also an economics problem.

If data publication uses the same fee market as computation, then spikes in one resource distort pricing for the other. Rollups may crowd out smart-contract execution, or vice versa. A clean scaling design needs prices that track the thing actually becoming scarce.

Ethereum’s blob gas is an example of solving this at the mechanism level. Blob gas is a separate gas type with its own base-fee adjustment rule, based on persistent excess_blob_gas accounting. That means the protocol can tune the price of blob data according to blob demand rather than ordinary execution demand. The immediate consequence is better resource isolation. The deeper consequence is that the chain can add a specialized data lane without pretending it is identical to CPU-like execution.
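The pricing rule can be sketched directly from EIP-4844. The running `excess_blob_gas` grows when a block uses more than the target and shrinks when it uses less, and the base fee is an integer approximation of an exponential in that excess. The constants below are the initial EIP-4844 parameters; later upgrades may use different values, so treat them as assumptions tied to that specification.

```python
GAS_PER_BLOB = 2**17                          # 131072 blob gas per blob
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB  # 393216
MIN_BASE_FEE_PER_BLOB_GAS = 1                 # in wei
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477


def calc_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Carry forward how far cumulative usage has run above the target."""
    total = parent_excess + parent_blob_gas_used
    if total < TARGET_BLOB_GAS_PER_BLOCK:
        return 0
    return total - TARGET_BLOB_GAS_PER_BLOCK


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer Taylor-series approximation of factor * e**(numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def base_fee_per_blob_gas(excess_blob_gas: int) -> int:
    return fake_exponential(
        MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, BLOB_BASE_FEE_UPDATE_FRACTION
    )


# Simulate 100 consecutive full blocks (6 blobs each) starting from zero excess.
excess = 0
for _ in range(100):
    excess = calc_excess_blob_gas(excess, 6 * GAS_PER_BLOB)
print(base_fee_per_blob_gas(0))       # 1 wei: the floor when demand is at or below target
print(base_fee_per_blob_gas(excess))  # far above the floor after sustained full blocks
```

With these parameters, a full block raises the blob base fee by roughly 12.5%, and sustained demand above target compounds exponentially, independent of what ordinary execution gas is doing.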

This separation is one reason data sharding fits a rollup-centric world so well. Rollups mainly need affordable publication of compressed transaction data. They do not need the base layer to re-execute every user transaction in the main smart-contract environment. Pricing those needs separately matches the actual system architecture.

What assumptions does data sharding rely on, and where can they fail?

Data sharding sounds elegant, but it is not free magic. It shifts the burden from universal full download to a more delicate set of assumptions about coding, commitments, networking, and participation.

The first assumption is that the network can authenticate sampled pieces. A node sampling random chunks must know that the chunk it received really belongs to the committed data. That is why binding commitments are essential.

The second assumption is that the data was encoded correctly. If erasure-coded data is malformed, naïve sampling can be fooled. Practical DAS designs therefore need some way to rule out invalid encoding. Researchers and implementers discuss three broad families of approaches: fraud proofs, polynomial-commitment-based checks, and validity proofs. Each moves cost and complexity around differently.

The third assumption is about network reality. In idealized explanations, nodes randomly sample from a bulletin board that honestly serves data if it exists. Real peer-to-peer networks are messier. Routing can fail, peers can eclipse users, Sybil attacks can bias what some clients see, and storage incentives may be weak. Secondary literature on DAS emphasizes that many security claims depend on stronger networking assumptions than casual explanations reveal. So when people say DAS gives high probability guarantees, the hidden clause is always: under a particular network and adversary model.

The fourth assumption concerns retrievability versus availability. Availability means the data could be obtained when the block was accepted, so the block was verifiable. It does not mean the protocol stores the data forever. Ethereum’s blob design is explicit about this: blob data is temporary, retained for a limited window of roughly 4096 epochs, around 18 days at the time of the referenced documentation. That is enough for many rollup challenge and verification workflows, but not the same as archival permanence. Long-term storage becomes an ecosystem responsibility.
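The 18-day figure and the resulting storage bound follow from a few constants. The sketch assumes 32 slots per epoch, 12-second slots, 128 KiB blobs, and the initial EIP-4844 limits of 3 (target) and 6 (maximum) blobs per block; the variable names are illustrative.

```python
RETENTION_EPOCHS = 4096
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12
BLOB_BYTES = 4096 * 32  # 128 KiB per blob

retention_slots = RETENTION_EPOCHS * SLOTS_PER_EPOCH
retention_days = retention_slots * SECONDS_PER_SLOT / 86400


def retained_bytes(blobs_per_block: int) -> int:
    """Blob bytes a node holds if every slot in the window carries this many blobs."""
    return blobs_per_block * BLOB_BYTES * retention_slots


print(round(retention_days, 1))       # 18.2 days
print(retained_bytes(3) // 2**30)     # 48 GiB if every block hits the target
print(retained_bytes(6) // 2**30)     # 96 GiB if every block hits the maximum
```

The bound is what makes temporary retention practical: the storage a node dedicates to blobs stays capped no matter how long the chain runs.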

This distinction is easy to misunderstand. Data sharding is primarily about making new blocks verifiable at scale, not about giving every application free eternal storage.

How do Ethereum, Celestia, and modular designs differ in implementing data availability?

| System | Core job | Encoding | Sampling method | Execution location |
|---|---|---|---|---|
| Ethereum (proto‑dank) | Order + DA anchor | KZG blobs | Full download today → DAS later | Rollups off‑chain |
| Celestia | Modular DA network | 2D Reed‑Solomon + NMTs | Random sampling by light nodes | Above‑layer execution |
| LazyLedger | DA‑focused consensus | 2D erasure coding | Probabilistic sampling | Client‑side execution |

The cleanest way to see data sharding is to compare how different systems instantiate the same principle.

Ethereum’s roadmap uses blobs, commitments, separate blob fees, and a path from full download today to sampling later. It keeps the base layer as a settlement and data-availability anchor for rollups. Its current deployed form, proto-danksharding, is intentionally incomplete but already useful.

Celestia pushes the modular version further. It treats ordering and data availability as the core job of the chain, while application execution happens above it. Its design uses 2D Reed-Solomon encoding, random sampling by light nodes, and Namespaced Merkle Trees (NMTs) so applications can retrieve only their own data with completeness proofs. The details differ from Ethereum’s current design, but the principle is the same: consensus does not need to execute every application to provide a strong shared data-availability layer.
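The idea behind a namespaced Merkle tree can be sketched in a few lines: every node carries the minimum and maximum namespace found beneath it, so a reader looking for one namespace can skip whole subtrees. This is a simplified illustration, not Celestia's actual NMT format. The integer namespaces, 8-byte encoding, hash layout, and power-of-two leaf count are all assumptions, and proof generation is omitted.

```python
import hashlib


def ns_bytes(ns: int) -> bytes:
    return ns.to_bytes(8, "big")


def leaf(ns: int, data: bytes):
    """A node is (min_namespace, max_namespace, digest)."""
    return (ns, ns, hashlib.sha256(b"\x00" + ns_bytes(ns) + data).digest())


def parent(left, right):
    # The parent's range covers both children, and the children's ranges
    # are hashed in, so a range label cannot be changed without changing the root.
    payload = (b"\x01" + ns_bytes(left[0]) + ns_bytes(left[1]) + left[2]
               + ns_bytes(right[0]) + ns_bytes(right[1]) + right[2])
    return (min(left[0], right[0]), max(left[1], right[1]),
            hashlib.sha256(payload).digest())


def build(leaves):
    """Return all levels: levels[0] is the leaves, levels[-1] is [root]."""
    assert len(leaves) > 0 and len(leaves) & (len(leaves) - 1) == 0
    assert all(a[0] <= b[0] for a, b in zip(leaves, leaves[1:])), "sort by namespace"
    levels = [leaves]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([parent(prev[i], prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def leaves_for_namespace(levels, ns: int):
    """Leaf indices in namespace `ns`, visiting only subtrees whose range overlaps."""
    found = []

    def walk(depth: int, index: int):
        lo, hi, _ = levels[depth][index]
        if ns < lo or ns > hi:
            return  # the range label rules out this entire subtree
        if depth == 0:
            found.append(index)
            return
        walk(depth - 1, 2 * index)
        walk(depth - 1, 2 * index + 1)

    walk(len(levels) - 1, 0)
    return found


levels = build([leaf(1, b"a"), leaf(1, b"b"), leaf(2, b"c"), leaf(3, b"d")])
print(levels[-1][0][:2])                 # (1, 3): the root spans namespaces 1..3
print(leaves_for_namespace(levels, 1))   # [0, 1]
print(leaves_for_namespace(levels, 5))   # []: nothing to fetch for this namespace
```

Because leaves are sorted by namespace and every range label is committed in the root, the same structure can support proofs that nothing for a namespace was left out, which is the completeness property mentioned above.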

The earlier LazyLedger research made this architectural shift explicit. Consensus was optimized for ordering and availability, while application-specific clients handled validity. That work is useful because it shows the first-principles reason data sharding matters: once you stop demanding that consensus nodes execute everything for everyone, throughput can be limited by a narrower and more manageable question: is the data available?

What data sharding is not: common misconceptions (execution sharding, big blocks)

It helps to end by clearing away two common confusions.

First, data sharding is not the same as execution sharding. Execution sharding partitions computation and often state across multiple shards, which creates difficult cross-shard communication and composability problems. Data sharding instead scales the publication and verification of data, often leaving execution to rollups or app-specific layers.

Second, data sharding is not just bigger blocks under a new name. Bigger blocks alone ask every node to do more of the same work. Data sharding changes the verification model so the network can support more data without each participant bearing the full cost.

That is why Ethereum’s current design, even before full danksharding, already points toward the future shape of scaling. The chain is becoming a system that offers scarce execution, yes, but also a specialized market for data availability.

Conclusion

Data sharding is the idea that a blockchain can scale by distributing the burden of publishing, checking, and serving data rather than forcing every node to process every byte in the same way. Its core promise is simple: more data throughput without giving up independent verification.

The important thing to remember tomorrow is this: the breakthrough is not that data is split up; it is that availability can be verified without universal full download. Blobs, commitments, erasure coding, sidecars, sampling, and separate fee markets are all pieces of that one mechanism. When they work together, the base layer becomes a scalable foundation for rollups and modular execution rather than a bottleneck that every application must squeeze through byte by byte.

How does data sharding affect real‑world usage?

Data sharding changes how networks publish and verify transaction data, which affects whether you can independently verify activity or rely on network upgrades when valuing or using rollup‑dependent assets. On Cube Exchange, you can check an asset’s dependence on sharding features and then fund and trade while accounting for data‑availability and fee‑market risks.

- Look up the asset’s chain and project notes on Cube to confirm whether it depends on blob/DAS features (EIP‑4844, proto‑danksharding, or Celestia‑style DA).

- Review the project or chain docs linked from Cube for short‑term data retention (for example, EIP‑4844 blobs are retained for roughly 4096 epochs) and for whether separate blob fees or gas types apply.

- Fund your Cube account with fiat or a supported crypto transfer so you can trade without on‑chain funding delays.

- Open the asset market on Cube, pick an order type (use a limit order for upgrade/event risk or a market order for immediate execution), set slippage and size, and submit.

Frequently Asked Questions

How does data availability sampling show that data was published?

Data availability sampling (DAS) encodes the blob with redundancy (e.g., Reed–Solomon) and lets many nodes each fetch a few random shares; if sufficiently many random samples check out, the probability that the producer withheld a meaningful fraction of the data becomes very small. This is a statistical guarantee that depends on sampling parameters and the network/adversary model rather than an absolute proof.

Does a cryptographic commitment prove that blob data is available?

A cryptographic commitment (like a KZG commitment) compactly binds the blob to the block so everyone can refer to the exact object that was promised, but the commitment alone does not prove the blob bytes were actually published. Availability still needs independent checking (e.g., sampling or serving).

Why do KZG commitments need a trusted setup?

KZG polynomial commitments require a trusted setup; Ethereum mitigated this by running a large public ceremony with many contributions, and the security assumption is that at least one contribution stayed secret. That trusted‑setup dependence is a practical engineering trade‑off rather than a cryptographic law.

How long is blob data kept?

Blob data is temporary on the core protocol: EIP‑4844 blobs are retained only for a limited window (roughly 4096 epochs, about 18 days in the referenced docs), so the protocol does not guarantee indefinite archival storage. Long‑term retrievability is an ecosystem responsibility.

Why does blob data have its own fee market?

Separating blob gas from ordinary execution gas creates an independent fee market so blob prices reflect data demand rather than CPU/execution demand; that isolates resource pricing and prevents rollup data from crowding out execution (and vice versa).

When will full danksharding arrive?

Full danksharding depends on other upgrades (for example proposer‑builder separation, data‑availability sampling, and proof‑of‑custody mechanisms) and is described as still several years away; proto‑danksharding (EIP‑4844 / Deneb sidecars) has been deployed earlier as an intermediate step.

How is data sharding different from execution sharding?

Data sharding scales the network by partitioning who must download, store, sample, and attest to block data (availability), whereas execution sharding partitions computation and state across shards and introduces cross‑shard execution and composability challenges; the two are distinct goals.

What network risks can weaken DAS guarantees?

Real P2P networks introduce risks that weaken DAS guarantees: routing failures, eclipse or Sybil attacks, biased peer selection, and weak storage incentives can all reduce the effectiveness of random sampling. DAS security claims are therefore conditional on a particular network and adversary model and on sufficient independent samplers.
