What Is a Polynomial Commitment?

Learn what polynomial commitments are, how they prove polynomial evaluations succinctly, and why they matter for SNARKs, secret sharing, and data availability.

Introduction

Polynomial commitments are a cryptographic way to commit to an entire polynomial and later prove specific facts about it (usually that the polynomial evaluates to a claimed value at a chosen point) without revealing the whole polynomial. That may sound narrow, but it turns out to be exactly the interface many modern protocols need. A zk-SNARK prover wants to commit to large algebraic objects and answer a few evaluation queries. A secret-sharing protocol wants to bind a dealer to one polynomial so participants can check shares consistently. A data-availability system may want to commit to encoded data and verify small checks against it. In all of these settings, the same pressure appears: bind a large structured object with a short commitment, then open only tiny pieces of it efficiently.

The puzzle is why a polynomial, of all things, deserves its own commitment primitive. Why not just commit to the coefficient list with an ordinary hash or Merkle tree? The answer is that many cryptographic protocols do not merely store a list; they compute with low-degree polynomials. The important invariant is not just “these bytes are fixed,” but “these values come from one polynomial of bounded degree.” A polynomial commitment preserves that algebraic structure. That is the feature that makes the primitive more than a compressed container.

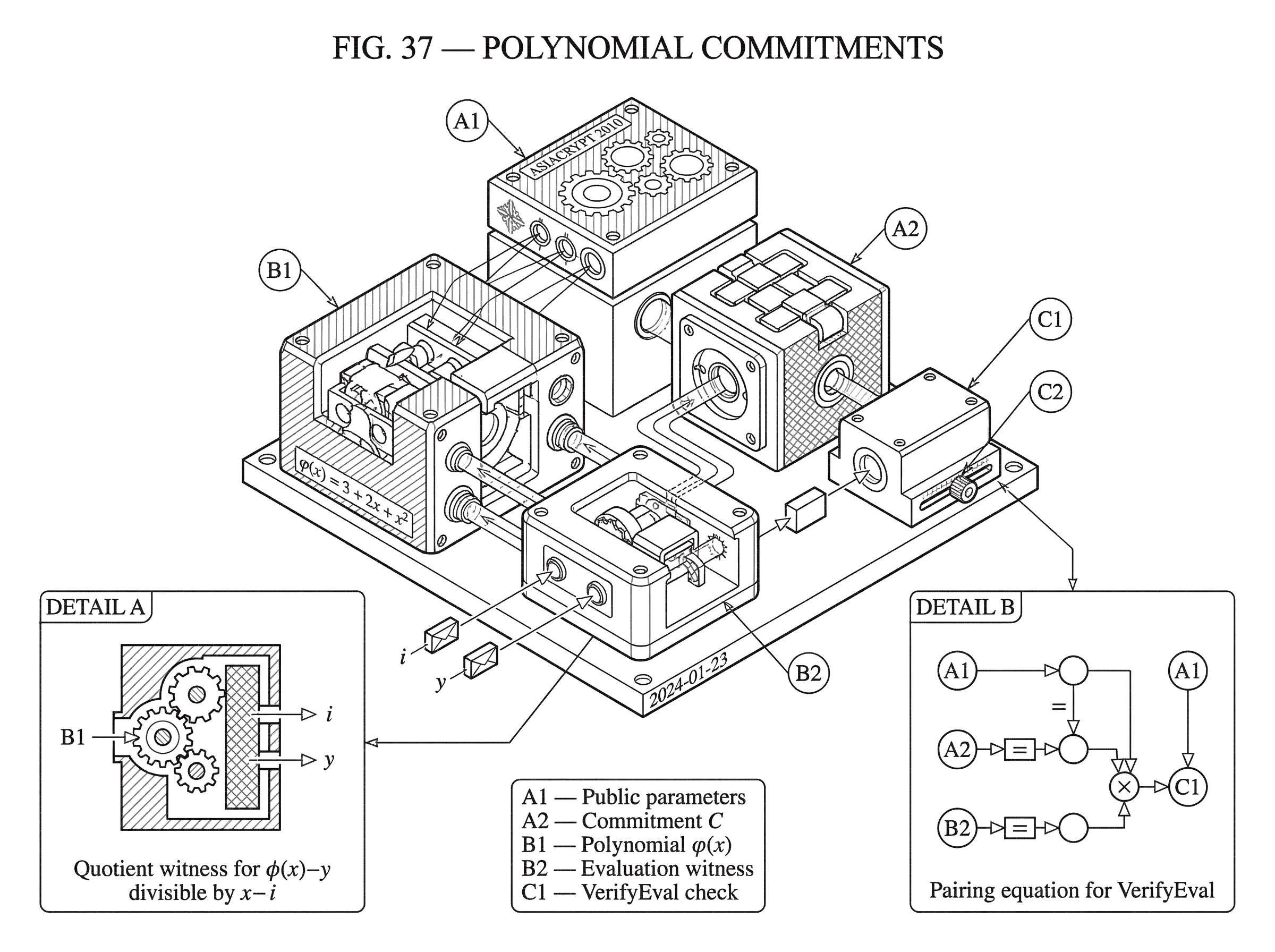

At a high level, a polynomial commitment scheme lets a committer choose a polynomial φ(x), produce a short commitment C, and later generate a witness showing that φ(i) = y at some point i. A verifier checks the witness against C and accepts if the claim is consistent with the committed polynomial. In the formal definition given by Kate, Zaverucha, and Goldberg, the primitive is organized into algorithms such as setup, commit, witness creation, and evaluation verification. That abstract interface is the foundation. Different concrete schemes then fill in the mechanics with different tradeoffs in setup, proof size, hiding, and assumptions.

Why are Merkle trees insufficient for proving polynomial evaluations?

| Scheme | Proof size | Opening size | Algebraic binding | Homomorphism | Setup |

|---|---|---|---|---|---|

| Coefficient hash | Full-size | Whole coefficients | No | No | None |

| Merkle tree | Logarithmic per opening | Merkle path (log n) | No | Limited | None |

| Polynomial commitment | Constant-size proofs | Constant-size witness | Yes (degree bound) | Yes | Trusted SRS |

To see why this primitive exists, imagine you commit to the coefficients of a degree-t polynomial using a hash or a Merkle tree. That certainly binds you to the coefficients. But if someone later asks, “What is the value at point i?” the verifier still has a problem. They either need all coefficients, or they need a proof that lets them recompute the evaluation from authenticated coefficient data. With a Merkle tree, that proof is about the coefficients as stored leaves, not about the polynomial’s algebraic behavior. You can prove that a coefficient is present, but not directly that a claimed evaluation is correct without doing more work.

This is the key contrast with polynomial commitments. The commitment is to a function with structure, not merely to a bag of values. Because the verifier cares about evaluations, the most useful opening is not “here is leaf 37,” but “here is proof that the committed polynomial takes value y at input i.” In the original polynomial commitment work, this leads to constant-size commitments and constant-size evaluation witnesses for bounded-degree polynomials, whereas Merkle-style approaches give logarithmic-size openings in the number of committed items. The asymptotic difference matters because these openings sit inside larger protocols that repeat them many times.

There is another reason ordinary commitments fall short: homomorphism. Many polynomial commitment schemes let you combine commitments in algebraically meaningful ways. If a protocol asks about a(x) + b(x) or a random linear combination of several polynomials, it is useful if commitments and witnesses can be combined correspondingly. The original constructions explicitly highlight homomorphism and randomizability. That is one reason these schemes fit naturally into proof systems and secret-sharing protocols where linear combinations are everywhere.

How does a polynomial commitment let you commit now and prove evaluations later?

A polynomial commitment scheme is easiest to understand as a two-stage promise. First, the committer says, “I have fixed a polynomial.” Later, they may be challenged on specific points. If they answer correctly, the verifier gains confidence that all those answers come from one underlying polynomial, not from ad hoc values invented per query.

Formally, the classic definition includes six algorithms: Setup, Commit, Open, VerifyPoly, CreateWitness, and VerifyEval. Not every modern paper keeps exactly this six-algorithm presentation, but the underlying roles remain the same. Setup produces public parameters, often called a structured reference string or SRS. Commit takes a polynomial φ(x) and returns a commitment C. CreateWitness produces a proof that the committed polynomial evaluates to some claimed value φ(i) at point i. VerifyEval checks that proof. Some schemes also support opening the whole polynomial or opening many evaluations together.

The most important correctness property is simple: honest openings verify. The most important security property is binding: once a commitment C is fixed, the committer should not be able to produce valid proofs for inconsistent evaluation claims. In many applications, there is also a degree bound. The verifier is not asking only for “some polynomial,” but for one of degree at most t. That bound is often essential, because a low-degree polynomial is determined by relatively few points. If the degree condition fails, several protocol guarantees can fail with it.

Some schemes also provide hiding, meaning the commitment conceals the polynomial beyond what later openings reveal. The original paper gives two pairing-based constructions with different hiding tradeoffs. PolyCommitDL offers hiding based on the discrete logarithm assumption, while PolyCommitPed adds a random polynomial in a Pedersen-like way to achieve unconditional hiding and computational binding under a strong Diffie–Hellman-type assumption. This is a good example of what is fundamental versus what is a design choice. Binding to one polynomial is fundamental to the primitive. Hiding is optional and depends on what the surrounding protocol needs.

How do KZG (pairing-based) polynomial commitments work?

| Scheme | Setup | Hiding | Binding assumption | Witness size | Best for |

|---|---|---|---|---|---|

| KZG (pairing) | Powers-of-tau SRS | Computational / optional | Pairing / t-SDH | Single group element | Short proofs, SNARKs |

| PolyCommitDL | SRS-based | Computational (DL) | t-SDH family | Constant-size | Compact openings |

| PolyCommitPed | SRS + randomness | Unconditional hiding | SDH (computational) | Constant-size | Protocols requiring hiding |

The best-known family of polynomial commitments is the pairing-based line introduced in the ASIACRYPT 2010 paper, now commonly called KZG commitments after Kate, Zaverucha, and Goldberg. The compression point of the scheme is this: instead of committing to coefficients individually, the setup creates public parameters tied to a secret value α, and the commitment is effectively the polynomial evaluated at that hidden point. Because the setup publishes powers related to α, the committer can compute a commitment to φ(x) as a single group element representing φ(α) in the exponent, without learning α itself.

That sounds abstract, so here is the mechanism in ordinary language. Suppose the setup somehow fixes a secret trapdoor α and publishes group elements corresponding to 1, α, α^2, ..., α^t in exponent form. If the polynomial is φ(x) = a0 + a1 x + ... + at x^t, the committer can combine those published powers to form a single group element encoding φ(α). The verifier does not know α, and neither does the committer after setup, but both can still use the published powers. The commitment is short because it is not a list of coefficients. It is one algebraically compressed object.

Now suppose the committer wants to prove that φ(i) = y for some point i. The core identity is that if the claim is true, then the polynomial φ(x) - y is divisible by x - i. So there exists a quotient polynomial q(x) such that φ(x) - y = q(x)(x - i). The witness is then a commitment-like encoding of q(α). Verification checks, via a bilinear pairing equation, that the commitment, the claimed value y, the witness, and the public setup are all consistent with this divisibility relation at the hidden point α.

This is the idea that makes KZG click: an evaluation proof is really a proof of divisibility. You are not separately proving every coefficient. You are proving that the committed polynomial differs from the constant y by a factor x - i. Pairings let the verifier test that relation succinctly in the exponent. That is why the witness can remain constant size.

Example: what does a KZG evaluation proof look like in practice?

Take a small polynomial φ(x) = 3 + 2x + x^2, and suppose the committer wants to prove that at i = 2, the value is 11. The arithmetic check is straightforward: φ(2) = 3 + 4 + 4 = 11. But the verifier does not see the polynomial. They see only the commitment.

What makes the proof possible is the factorization φ(x) - 11 = (x - 2)(x + 4). The quotient polynomial here is q(x) = x + 4. In a KZG-style scheme, the witness is a group element corresponding to q(α), while the commitment corresponds to φ(α). The verifier then checks a pairing relation expressing that φ(α) - 11 = q(α)(α - 2). Since neither side explicitly knows α, the pairing machinery and the published powers from setup let the verifier test the relation indirectly.

This example also clarifies what the witness is not. It is not a transcript of the polynomial, not a Merkle path, and not a numerical quotient sent in the clear. It is a cryptographic encoding of the quotient evaluated at the hidden setup point. The witness is short because the scheme compresses an algebraic statement, not because it avoids checking anything. The checking still happens; it has just been moved into a pairing equation.

How do polynomial commitments reduce communication in SNARKs, VSS, and DA systems?

Once you see evaluation proofs as divisibility proofs, their role in other protocols becomes much clearer. Many cryptographic systems reduce correctness to statements of the form “this polynomial vanishes on these points” or “these two expressions agree on a domain.” A polynomial commitment lets a prover commit to the relevant polynomials once, then answer a small number of carefully chosen evaluation queries. This turns large algebraic objects into short messages.

That is why polynomial commitments became central in modern zk-SNARK design. PlonK, for example, relies on polynomial commitments to bind the prover to several low-degree polynomials and then open them at a few points. The protocol’s efficiency depends heavily on being able to batch these openings and keep proofs compact. In that setting, the commitment is not an add-on. It is the bridge between abstract polynomial identities and a succinct proof a verifier can actually check.

The same primitive appears in verifiable secret sharing. In a Shamir-style scheme, a dealer chooses a low-degree polynomial whose constant term or evaluations encode the secret. Participants receive shares that are evaluations of that polynomial. The hard part is making sure the dealer has not sent inconsistent shares. Polynomial commitments solve that by letting the dealer commit once to the sharing polynomial and later prove that each share matches the committed polynomial. The original paper shows that this can reduce communication in some VSS settings substantially, even to constant-size broadcast in the sharing phase where older coefficient-based approaches needed communication growing with the threshold.

The primitive also appears in data-availability designs. Ethereum’s EIP-4844 uses KZG commitments to commit to blob data represented as a polynomial, then provides proof mechanisms to verify evaluations against the commitment. On the execution layer, contracts see only a versioned hash derived from the KZG commitment, while consensus handles the blob sidecars and proof verification logic. This is a concrete example of polynomial commitments escaping the world of pure proof systems and becoming protocol infrastructure.

What are batch openings and when should a protocol use them?

| Opening type | Proof size | Verifier work | Assumption strength | Best when |

|---|---|---|---|---|

| Single opening | Constant per evaluation | Low per evaluation | Standard pairing assumptions | Few independent queries |

| Batched opening | Single witness for many points | Moderate to high | t-BSDH (stronger) | Many related queries |

| Packed multipoint | One or two group elements | Higher pairing / G2 operations | AGM or stronger models | Minimize prover bandwidth |

A single evaluation proof is useful, but many protocols need several openings at once. If each opening required a separate witness, proof size and verifier work would grow quickly. The original polynomial commitment paper already includes batch opening, where multiple evaluations can be opened together with a single witness element, with security argued under a stronger bilinear assumption than the one used for single openings.

Mechanically, batching works because several evaluation constraints can be folded into one algebraic relation. Instead of separately proving divisibility by each x - i, the prover constructs a witness for a larger relation that captures the whole set. The exact shape of that relation varies by scheme, but the principle is consistent: use linear combination and divisibility to compress many checks into one. This is where homomorphism stops being a nice extra and becomes operationally important.

Modern work pushes this further. Later KZG-based schemes show how a single group element, or at worst a couple of group elements, can serve as an opening proof for multiple polynomials at different subsets of points. The tradeoff is that proof size can shrink while verifier work increases, especially when many distinct evaluation points are involved. This is a recurring pattern in polynomial commitments: you can often move cost among setup size, proof size, prover computation, and verifier computation, but you rarely get all four to be minimal at once.

What trust model and cryptographic assumptions do KZG commitments require?

The elegance of constant-size commitments and proofs can obscure what you pay for them. The classic pairing-based constructions require a structured setup. Public parameters contain a sequence of powers tied to a trapdoor such as α, and their size grows with the supported degree bound. In the original paper, setup outputs an O(t)-sized tuple when the committed polynomial degree is bounded by t. This means the scheme is not transparent in the way a plain hash-based commitment is.

That setup may be generated by a trusted authority or by a distributed ceremony. The original paper notes that the trapdoor need not be retained after setup if the degree bound is fixed. In practice, systems often use multi-party computation ceremonies, sometimes called powers-of-tau ceremonies, so that security holds as long as at least one participant behaves honestly and destroys their secret contribution. Ethereum’s KZG ceremony for EIP-4844 is a prominent recent example, with a public transcript and tooling for independent verification.

This setup requirement is not a minor engineering footnote. It is part of the primitive’s trust model. If the trapdoor is known, soundness can collapse. Audit literature around real deployments makes this concrete: a compromised or improperly generated CRS can let an attacker forge proofs. So when people compare polynomial commitments with Merkle trees, the real comparison is not only proof size versus opening size. It is also algebraic succinctness versus setup complexity and stronger assumptions.

The assumptions themselves are also stronger than standard hash collision resistance or ordinary discrete logarithm hardness. The original KZG-style constructions rely on pairing-group assumptions such as t-SDH, and stronger variants like t-BSDH for batched openings. Some analyses and later protocols also use idealized models like the Algebraic Group Model with assumptions such as Q-DLOG. These are standard in the literature around pairing-based SNARKs, but they are still modeling choices, not free facts of nature.

When do degree bounds matter and how can higher-degree cheating be prevented?

A smart reader might think the commitment already binds to a polynomial, so what more is there to say about degree? Quite a lot. Many protocols need not just binding to some polynomial, but to one whose degree is at most a specified threshold. That condition is essential in secret sharing, coding arguments, and many SNARK soundness proofs.

Recent work on using KZG in verifiable secret sharing points out an important subtlety: the original KZG commitment is not, by itself, enough to guarantee degree binding against standard adversaries in the strongest sense one may want for VSS. If a protocol assumes that f + 1 shares determine a unique degree-f polynomial, then cheating with a higher-degree polynomial can break reconstruction consistency. This is not a small edge case; it goes to the heart of what the commitment is being used to enforce.

One proposed fix is to augment KZG with an additional degree proof, for example by publishing an aggregated linear-sized commitment that makes higher-degree cheating detectable. The point is conceptually important even if the construction details vary: the algebraic statement a protocol needs may be richer than “these evaluations are mutually consistent.” It may also require “and they come from a polynomial inside this degree class.” When reading claims about polynomial commitments, it is worth asking whether degree binding is built in, assumed, or added separately.

What problems do polynomial commitments not solve compared with Merkle trees?

Polynomial commitments are powerful, but they are not a universal replacement for other commitment tools. If your data has no useful algebraic structure, a polynomial commitment may be unnecessary complexity. If you need a transparent setup and are happy with logarithmic openings, Merkle trees may be the cleaner tool. If you need post-quantum conservatism today, pairing-based KZG-style commitments may not fit that requirement.

They also do not eliminate implementation risk. Real verifier code must correctly handle pairings, curve checks, field arithmetic, edge cases around roots of unity, and CRS handling. Audits of production proof verifiers have found failures as basic as not checking the actual result returned by a pairing precompile, mishandling Lagrange evaluations in edge cases, or relying on weak setup artifacts during testing. Those are implementation failures rather than conceptual flaws, but in practice the distinction matters only if the code is correct.

And while polynomial commitments can support data-availability systems, they do not by themselves provide full data availability. In Ethereum’s proto-danksharding design, KZG commitments and proofs are only one layer. Blob propagation, persistence, and later data-availability sampling mechanisms are separate protocol components. A commitment can prove that an evaluation is consistent with some polynomial; it cannot force the network to retain all underlying data indefinitely.

When are polynomial commitments the right primitive for a protocol?

The reason polynomial commitments became so important is that they match the language many advanced protocols already speak. Once a protocol is phrased in low-degree polynomials, a commitment scheme that preserves low-degree structure becomes the natural cryptographic wrapper. It lets the protocol reveal only the algebraically necessary slices while keeping communication short.

That is why the primitive connects such different-seeming systems. In PlonK and related SNARKs, polynomial commitments turn polynomial identities into succinct proofs. In secret sharing, they bind a dealer to one sharing polynomial and make disputes checkable. In EIP-4844, they give the chain a compact way to refer to large blobs and verify small algebraic checks against them. The surface applications differ, but the underlying need is the same: commit once to a structured function, open later with tiny evidence.

Conclusion

A polynomial commitment is a commitment to a low-degree function rather than to a raw list of data. Its core trick is to preserve algebraic structure, so a verifier can check evaluation claims with short proofs instead of seeing the whole polynomial. That is why the primitive sits underneath modern SNARKs, secret-sharing systems, and some blockchain data-availability designs.

The tradeoff is equally important to remember tomorrow: polynomial commitments buy succinct algebraic proofs by leaning on stronger assumptions, specialized setup, and careful implementation. In other words, they are valuable not because they are a fancier hash, but because they let cryptographic protocols speak the language of polynomials efficiently.

What should you understand before using polynomial commitments?

Before acting on assets or protocols that use polynomial commitments, understand the trust model (setup transcript and pairing assumptions), audit coverage, and whether the chain or contract supports the specific commitment scheme (e.g., KZG / EIP‑4844). On Cube Exchange you can act on that knowledge directly: fund your account, trade the asset, or withdraw to the correct network while having verified the protocol’s setup and audits.

- Check the protocol docs and smart-contract references to confirm it uses a polynomial commitment (look for KZG, powers-of-tau, or EIP-4844 mentions) and open any published SRS/ceremony transcript.

- Verify the setup and audits: locate the ceremony transcript or multi-party-ceremony page and read the protocol’s audit reports for the verifier contract and CRS handling.

- Fund your Cube account with fiat or a supported crypto on the exact network the protocol requires (match token and chain to the commitment’s expected network).

- Trade or transfer on Cube using conservative execution: place a limit order to control price when trading assets tied to commitment-backed systems, and when withdrawing, choose the correct destination network and confirm its data-availability support (e.g., EIP‑4844) before submitting.

Frequently Asked Questions

KZG-style schemes use a structured setup that publishes group elements for 1, α, α^2, … and the commitment is essentially the polynomial evaluated at the hidden α; an evaluation proof is a short witness for the quotient q(x) satisfying φ(x)−y = q(x)(x−i), and the verifier checks this divisibility relation indirectly via a bilinear-pairing equation. This divisibility viewpoint explains why proofs are constant-size and verification reduces to a pairing check.

Because standard polynomial commitments (like KZG) rely on a trapdoor α and publish O(t)-sized parameters, they require a trusted or multi-party setup whose security depends on at least one honest participant destroying their secret; if the trapdoor were known, soundness can collapse. The setup size grows with the degree bound t, so the CRS is non‑transparent and nontrivial to generate safely.

The basic KZG commitment binds to evaluations at an SRS-related point but does not by itself guarantee the committed polynomial has degree ≤t in every threat model, and protocols that need degree-binding often add explicit degree proofs (e.g., linear-sized aggregated proofs) or other augmentations to detect higher-degree cheating. This limitation and the proposed fixes are discussed because some VSS and reconstruction arguments require strong degree bounds.

You can open many evaluations together by folding their constraints algebraically so a single (or a small number of) witness elements prove multiple points; batching reduces proof size but typically increases verifier work or requires stronger assumptions for security, so the tradeoff depends on the scheme and parameters.

If your data has no useful low-degree algebraic structure, a Merkle tree (transparent setup, logarithmic openings) is usually simpler and safer; polynomial commitments are worth it when the protocol computes with low-degree polynomials and needs constant-size, homomorphic openings that match algebraic checks.

KZG and similar pairing-based schemes rely on stronger, non-post-quantum assumptions (pairing-group assumptions such as t-SDH / t-BSDH and analyses often in the Algebraic Group Model), so they are not considered post‑quantum secure today. If post‑quantum security is a hard requirement, these pairing-based PCS are not an appropriate choice.

Polynomial commitments do not by themselves provide data availability guarantees; for example EIP‑4844 uses KZG to commit to blobs and enable compact checks, but blob persistence, sampling and other DA mechanisms remain separate protocol layers. A commitment only makes small verifications possible, it does not force the network to retain the full underlying data.

Real-world risks include incorrect pairing or curve checks, mishandled edge cases (e.g., Lagrange evaluations), and improper CRS handling; production audits and ceremony tooling exist but implementation and operational mistakes have produced failures and require careful vetting. The literature and deployment reports stress that these are implementation and ceremony risks rather than conceptual flaws.

The main cryptographic assumptions named for KZG-style schemes in the literature include t‑SDH and t‑BSDH for pairing-based soundness and stronger variants or AGM/Q‑DLOG-style assumptions when proving packed or one-element batch proofs, and some papers explicitly ask whether comparable schemes can be built from weaker assumptions as an open problem.

Related reading