What is Sharding?

Learn what sharding is, why blockchains use it to scale, how shards coordinate, and the security and data-availability tradeoffs it creates.

Introduction

Sharding is a way to scale a blockchain by splitting work across smaller groups, instead of making every node process every transaction, every piece of state, and every block. The appeal is obvious: if a network can divide validation and storage across many parallel parts, total capacity can grow without turning node operation into a datacenter-only activity. The difficulty is that blockchains are not ordinary databases. They are adversarial systems. The moment different nodes see and verify different subsets of activity, you gain throughput, but you also create new places for invalid data, unavailable data, delayed messages, and ordering mistakes to hide.

That tension is the reason sharding matters. In an ordinary replicated blockchain, the core invariant is simple: everyone can independently verify the same thing. Sharding changes that invariant. Not everyone verifies everything anymore. So the real question is not just “how do we split the workload?” It is “how do we split the workload without losing the security properties that made replication valuable in the first place?”

A good way to understand sharding is to start with the bottleneck it is trying to remove. Traditional blockchains often scale by demanding stronger hardware, allowing larger blocks, or producing blocks faster. But those moves raise the cost of running a node, which pushes the system toward fewer operators. Sharding attacks the problem at a different layer. Instead of asking each participant to handle the full global workload, it asks each participant to handle only a fraction, while the protocol coordinates those fractions into one coherent system.

That sounds straightforward until you ask what exactly is being split. A blockchain can be burdened by at least three different kinds of work: storing data, executing transactions, and participating in consensus about ordering and validity. Different sharded systems divide these burdens differently. Some aim to shard execution across parallel chains or partitions. Some primarily shard data availability so that rollups can publish more data cheaply. Some combine a coordinating chain with many subordinate shards. Others keep a single logical chain but physically distribute per-shard chunks of block data. The common idea is partitioning; the details matter because each design inherits different tradeoffs.

What is the basic idea behind sharding and how does it increase blockchain throughput?

The simplest mental model is to compare two worlds. In a fully replicated blockchain, every validator or full node checks the same stream of transactions against the same global state. If there are N validating nodes, the network gets redundancy and strong independent verification, but it does not get N times the throughput. Most of that work is duplicated.

Sharding tries to convert some of that duplication into parallelism. The system is divided into shards: subsets of state, transactions, or data, each processed by only a subset of participants. If shard A and shard B can make progress at the same time, aggregate throughput can rise. The attraction follows from first principles: if ten groups can each process a different tenth of the workload, the system may handle much more total work than a single group processing everything serially.

But there is a catch hidden inside that sentence. A blockchain is not useful because it processes work quickly in isolation. It is useful because the work composes into a shared, trustworthy ledger. If accounts, contracts, or messages need to move between shards, those shard-local decisions must still fit together into one global history. So sharding always has two jobs at once: it must create local parallelism, and it must preserve enough global coordination to keep the result coherent.

This is why sharding is often described as a response to the blockchain trilemma: the tension among scalability, decentralization, and security. The promise is that by reducing the per-node resource burden, more people can still participate, while total system capacity rises. Whether a particular design actually delivers that depends on the mechanisms underneath: how validators are assigned, how randomness is generated, how shards communicate, how data is proven available, and how the system reshuffles membership over time.

Which blockchain components are typically sharded: execution, data availability, or consensus?

| Shard type | Partitioned element | Cross-shard complexity | Primary benefit | Best for |
|---|---|---|---|---|
| Execution shards | State + execution | High | Parallel smart contracts | Native parallel execution |
| Data shards | Data availability only | Low | Cheap large data publication | Rollups and L2s |
| Chunked single-chain | Per-block shard chunks | Medium | Single logical chain semantics | NEAR-style designs |
| Parachain / relay | Independent chains | Medium | Shared security | App-specific chains |

The word sharding can hide important differences, because systems shard different things.

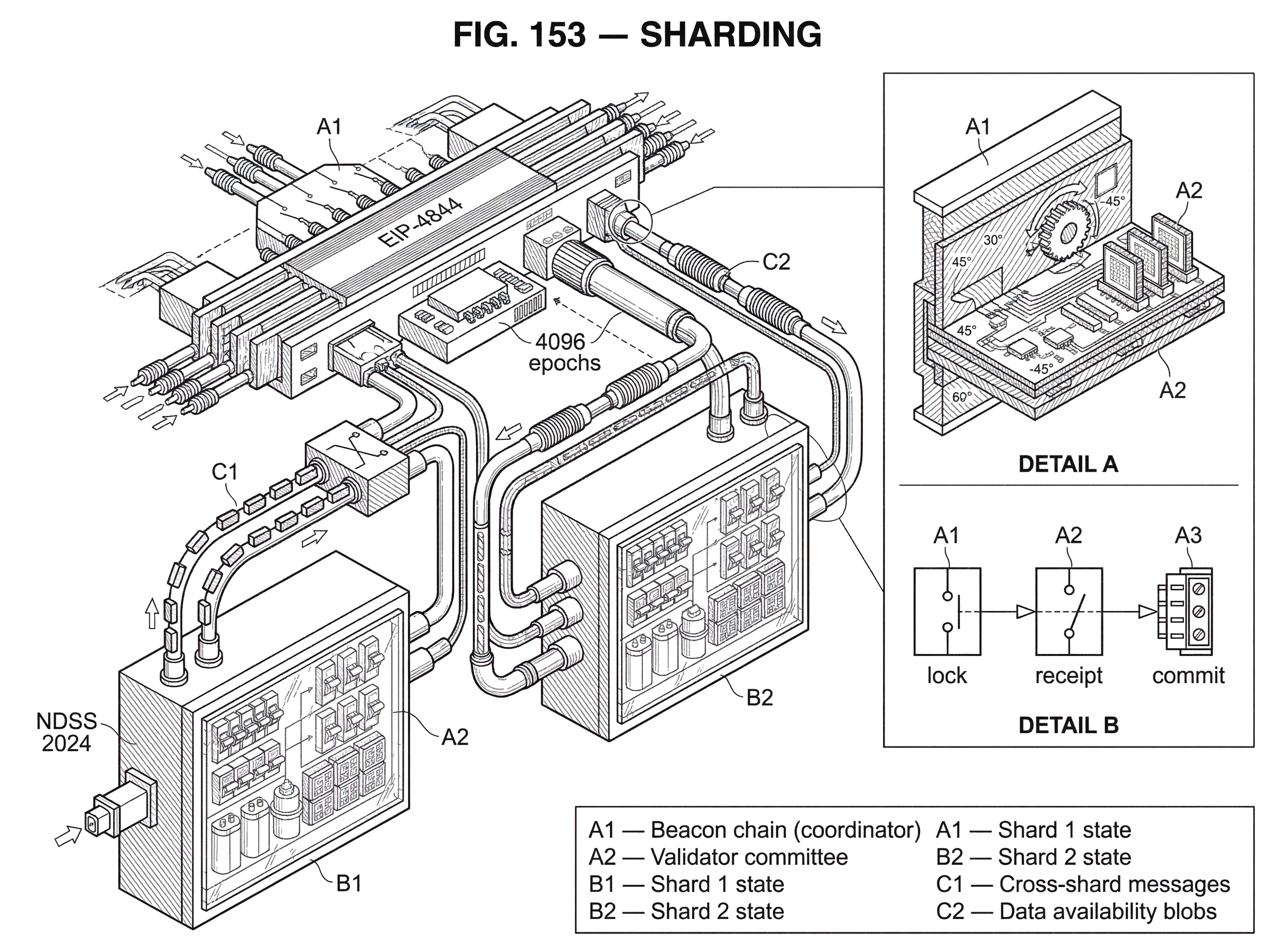

In execution sharding, different shards execute different transactions against different pieces of state. This is the intuitive version: many shard chains or partitions run in parallel, and together they form the full system. Early Ethereum roadmap documents described shard chains under a beacon chain, with the beacon chain coordinating validators and consensus while shards would increase aggregate capacity. Zilliqa also pursued this style in practice by dividing its network into shards that process transactions in parallel, with a directory service committee coordinating shard formation and block aggregation.

In data sharding, the protocol focuses less on having every shard execute its own contracts and more on increasing the amount of data the network can make available. This matters especially for rollup-centric architectures. Rollups do execution elsewhere, but they still need to publish enough data on the base layer for users and verifiers to reconstruct state and challenge invalid behavior. Ethereum’s proto-danksharding, introduced by EIP-4844, is a concrete example. It adds blob-carrying transactions whose data is stored temporarily in the beacon layer rather than permanently in the execution layer, making data publication cheaper for rollups. That is not “full sharding” in the original many-execution-shards sense, but it is absolutely a shard-like scaling move because it partitions and prices data availability differently.

Some systems blur the line. NEAR’s Nightshade is presented as a single blockchain logically containing all shard activity, while physically splitting each block into per-shard chunks that different participants validate and download. Polkadot’s parachains are application-specific parallel chains whose state transitions are verified by relay-chain validators. TON uses a hierarchical model with a masterchain, workchains, and many shardchains, with dynamic split and merge behavior. These are all sharded designs, but they are not the same architecture wearing different branding. They make different decisions about what remains global and what becomes local.

The useful unifying definition is broad: sharding partitions nodes or work into subsets that handle storage, communication, or computation without fine-grained synchronization on every step. That broad definition matters because it explains both the benefit and the danger. Less synchronization means more parallelism. Less synchronization also means more coordination problems.

How does a sharded blockchain assign validators and process shard blocks?

To make the idea concrete, imagine a network that wants to split itself into four shards. The protocol first needs a way to decide who validates what. If the same participants could choose their own shard permanently, attackers would pile into a target shard and capture it. So serious sharded systems generally rely on randomness and rotation. Validators are sampled or assigned to shards using protocol-generated randomness, then reassigned periodically so no shard stays vulnerable to a stable coalition.

This is why proof of stake has often been treated as a natural fit for sharding. Ethereum’s Phase 0 design explicitly tied sharding feasibility to easy access to an active validator set for random committee sampling. In a proof-of-stake system, the protocol has a direct view of the validator set and can reshuffle it. OmniLedger similarly used bias-resistant public randomness and cryptographic sortition to assign validators into statistically representative shards. The principle is simple: if the global validator set is mostly honest, random sampling should make each shard mostly honest too, provided committees are large enough.
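The sampling step can be sketched in a few lines. This is a minimal illustration, not any protocol's actual algorithm: the function name `assign_committees`, the `epoch_seed` byte string, and the round-robin split are all assumptions made for the example, and Python's `random.Random` stands in for the bias-resistant randomness and purpose-built shuffles that real systems use.

```python
import hashlib
import random


def assign_committees(validators, num_shards, epoch_seed: bytes):
    """Shuffle validators with a seed-derived RNG, then deal them out across shards.

    Hypothetical sketch: `epoch_seed` stands in for protocol-generated randomness.
    random.Random is NOT cryptographically secure and is used only for illustration.
    """
    # Derive a deterministic integer seed so every node computes the same shuffle.
    seed = int.from_bytes(hashlib.sha256(epoch_seed).digest(), "big")
    rng = random.Random(seed)

    shuffled = list(validators)
    rng.shuffle(shuffled)

    # Deal the shuffled validators into shards so committee sizes differ by at most one.
    return [shuffled[i::num_shards] for i in range(num_shards)]


if __name__ == "__main__":
    validators = [f"validator-{i}" for i in range(12)]
    # A new seed each epoch reshuffles membership, so no coalition stays together.
    for epoch in (1, 2):
        committees = assign_committees(validators, 4, f"epoch-{epoch}".encode())
        print(epoch, committees)
```

Because the shuffle depends only on the shared seed, every honest node derives the same committees without extra communication, and changing the seed each epoch gives the rotation described above.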

Once validators are assigned, each shard needs intra-shard consensus. That can be BFT-style consensus inside each shard, a heaviest-chain rule with a finality gadget, or some other local mechanism. Zilliqa uses PBFT-like consensus inside shards and in its coordinating committee, with multisignatures to reduce communication overhead. NEAR uses a heaviest-chain design with a separate finality mechanism. OmniLedger used shard-level BFT via ByzCoinX. The architecture varies, but the invariant is the same: each shard must be able to agree locally on its own next state.

Then comes the hard part: global coordination. If shards are isolated forever, the system is not very useful. Users need to move assets, invoke contracts, or pass messages across shard boundaries. This is where most of sharding’s complexity lives, because a cross-shard action touches more than one local state machine.

A concrete example helps. Suppose Alice’s account lives in shard 1 and she wants to send tokens to Bob in shard 3. In a monolithic chain, this is a single state transition: subtract from Alice, add to Bob, and everyone verifies the same result. In a sharded system, shard 1 controls Alice’s balance, while shard 3 controls Bob’s. If shard 1 debits Alice before shard 3 credits Bob, the protocol must ensure the credit is eventually delivered and cannot be replayed or dropped. If shard 3 credits Bob before shard 1 has really locked or burned Alice’s funds, the system can create money from nothing.

So cross-shard processing usually becomes a message-passing or atomic-commit problem. OmniLedger’s Atomix treated this as a client-driven lock/unlock protocol: source shards lock inputs, destination shards later unlock to commit or abort based on proofs. NEAR routes cross-shard effects as receipts from one shard to another. Polkadot uses message passing among parachains, with relay-chain-validated state transitions and XCM as the communication format. These approaches differ in who coordinates and how quickly finality arrives, but they are all trying to preserve the same invariant: a transaction spanning shards must either happen coherently or fail coherently.
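The Alice-to-Bob transfer can be modeled as a debit on the source shard that emits a receipt, followed by a credit on the destination shard that applies each receipt at most once. The sketch below is a toy model under stated assumptions: the `Shard` and `Receipt` classes are hypothetical, and it omits the proof that the source shard actually finalized the debit, which a real protocol would have to verify before crediting. It is not the mechanism of Atomix, NEAR, or Polkadot specifically.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Receipt:
    """Message emitted by the source shard after it debits the sender (hypothetical format)."""
    receipt_id: int
    source: int
    dest: int
    recipient: str
    amount: int


@dataclass
class Shard:
    shard_id: int
    balances: dict = field(default_factory=dict)
    applied: set = field(default_factory=set)  # (source, receipt_id) pairs already credited
    next_id: int = 0

    def debit(self, sender: str, dest_shard: int, recipient: str, amount: int) -> Receipt:
        """Step 1, on the source shard: remove the funds and emit a receipt."""
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient funds")
        self.balances[sender] -= amount
        receipt = Receipt(self.next_id, self.shard_id, dest_shard, recipient, amount)
        self.next_id += 1
        return receipt

    def credit(self, receipt: Receipt) -> bool:
        """Step 2, on the destination shard: apply the receipt exactly once."""
        key = (receipt.source, receipt.receipt_id)
        if receipt.dest != self.shard_id or key in self.applied:
            return False  # wrong shard or replayed receipt: ignore it
        self.applied.add(key)
        self.balances[receipt.recipient] = self.balances.get(receipt.recipient, 0) + receipt.amount
        return True


if __name__ == "__main__":
    shard1 = Shard(1, {"alice": 100})
    shard3 = Shard(3, {"bob": 5})
    r = shard1.debit("alice", 3, "bob", 40)
    print(shard3.credit(r))  # first delivery is applied
    print(shard3.credit(r))  # replay is rejected
    print(shard1.balances, shard3.balances)
```

Debiting first means the worst failure is a delayed credit rather than newly created money, and the `applied` set is what prevents a replayed receipt from crediting Bob twice.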

Why is cross‑shard coordination the main bottleneck in sharded systems?

| Workload locality | Throughput gain | Latency impact | Composability | Recommended design |
|---|---|---|---|---|
| Mostly intra-shard | High | Low | Near-monolithic | Standard sharding |
| Mixed interaction | Moderate | Medium | Asynchronous patterns | Locality-aware design |
| Highly cross-shard | Low or negative | High | Weak async composability | Avoid sharding or use a hub |

This is the point many smart readers underestimate. The main challenge in sharding is not splitting the state. It is preserving useful composability after the split.

If most activity stays within a shard, sharding works beautifully in principle. Each shard can progress mostly independently, and cross-shard traffic is rare. But if applications naturally touch many shards, the coordination overhead can eat the gains. The system starts paying for parallelism with extra latency, more proofs, more messages, and more failure modes.

This is why transaction placement matters. Zilliqa assigned transactions to shards based on sender address, so all transactions from a sender go to the same shard and local double-spend detection stays simple. That reduces some complexity, but it is also a design choice about what locality the protocol wants to preserve. TON uses address-prefix-based shard assignment. Other systems explore reshaping state or workloads to reduce cross-shard frequency. These are not cosmetic engineering details. They determine whether the system mostly enjoys local execution or constantly pays inter-shard coordination costs.
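Two common placement rules can be sketched as follows. Both functions are illustrative assumptions rather than Zilliqa's or TON's actual rules: `shard_for_sender` hashes the sender address and reduces it modulo the shard count, and `shard_for_prefix` reads the leading bits of an address written as a bit string.

```python
import hashlib


def shard_for_sender(address: str, num_shards: int) -> int:
    """Map a sender to a shard by hashing the address (hypothetical rule).

    Every transaction from the same sender lands on the same shard,
    so that shard alone can detect the sender's double-spends.
    """
    digest = hashlib.sha256(address.encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def shard_for_prefix(address_bits: str, prefix_len: int) -> int:
    """Map an address to a shard by its leading bits (hypothetical rule).

    `address_bits` is the address as a string of '0' and '1' characters.
    A prefix length of 0 means a single shard holds everything.
    """
    return int(address_bits[:prefix_len], 2) if prefix_len else 0


if __name__ == "__main__":
    print(shard_for_sender("0xabc", 4))
    addr = "10110010"
    # Lengthening the prefix by one bit splits shard "10" into "100" and "101".
    print(shard_for_prefix(addr, 2), shard_for_prefix(addr, 3))
```

The prefix rule makes splitting and merging cheap to describe, since a shard's children are its prefix extended by one bit, while the hash rule spreads senders evenly but offers no natural locality between related accounts.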

Cross-shard communication also weakens a property users often take for granted on single-chain systems: tight composability. On a monolithic smart contract platform, one transaction can call many contracts in one atomic execution path. Across shards, those calls may become asynchronous messages or receipts delivered over multiple blocks. Polkadot’s own documentation is direct on this point: cross-chain latency is inherent, and optimistic delivery takes at least two blocks. That is not a minor UX wrinkle. It changes what application patterns are easy to build.

There is also a fairness angle. Recent research has shown that sharded systems can introduce new ordering vulnerabilities because “processed first” and “executed first” are no longer the same thing when different shards handle different phases. The NDSS 2024 paper on finalization fairness describes how cross-shard front-running can arise from this processing-execution gap. The significance is broader than one attack. It shows that once order is distributed across shards, preserving a globally meaningful notion of sequence becomes difficult, especially for order-sensitive applications like DeFi.

How does data availability affect sharding security and the ability to recover state?

| Method | Proof type | Storage burden | Detection guarantee | Best for |
|---|---|---|---|---|
| Full replication | Full block download | High | Deterministic | Light trust assumptions |
| Erasure coding | Merkle + coded pieces | Moderate | Reconstructable with enough parts | Chunked shard designs (NEAR) |
| Data availability sampling | Random sample checks | Low | Probabilistic | Many blobs, high scale |
| Blob + KZG | KZG commitments | Low ephemeral storage | Compact cryptographic proofs | Rollups and proto-dank |

Sharding only helps if the data needed to verify or reconstruct shard state is actually available. Otherwise an attacker can make a shard appear to progress while hiding the underlying data, preventing honest participants from checking validity or recovering state.

This is why modern sharding discussions put so much weight on data availability. In a monolithic chain, a full node downloads the whole block and can directly verify it. In a sharded system, many participants only see slices. The protocol must still give the network confidence that shard data can be retrieved if needed.

Different architectures handle this differently. NEAR’s Nightshade uses erasure coding for chunks, distributing coded parts and publishing a Merkle root in the chunk header so validators can verify pieces and reconstruct data if enough parts are available. Ethereum’s sharding roadmap has long emphasized data availability sampling, where clients would check small random portions of blob data and infer with high probability that the full data is available if enough samples succeed. Proto-danksharding does not yet implement full data availability sampling, but it is explicitly designed to be forward-compatible with that future direction.
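A rough calculation shows why a small number of samples can give high confidence. The sketch below rests on simplifying assumptions that are not from any specification: samples are independent and uniform, and the data is erasure-coded at rate 1/2, so an attacker who wants to block reconstruction must withhold at least half of the coded pieces. The function names are hypothetical.

```python
import math


def undetected_probability(withheld_fraction: float, samples: int) -> float:
    """Probability that `samples` independent uniform samples all hit available pieces."""
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target: float) -> int:
    """Smallest sample count whose miss probability is at or below `target`."""
    return math.ceil(math.log(target) / math.log(1.0 - withheld_fraction))


if __name__ == "__main__":
    # Assumed rate-1/2 code: hiding the data means withholding at least 50% of pieces.
    for k in (10, 20, 30):
        print(k, undetected_probability(0.5, k))
    print(samples_needed(0.5, 1e-9))
```

Under these assumptions each extra sample halves the chance that withheld data goes unnoticed, which is why a client can gain strong assurance while downloading only a small part of the blob.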

EIP-4844 is a useful case because it shows sharding’s evolution in practice. Instead of jumping directly to many execution shards, Ethereum first introduced blob transactions for cheaper, temporary data publication. These blobs are committed to using KZG commitments, an efficient vector commitment with fixed-size proofs. The blob data lives in beacon nodes, not the execution layer, and is pruned after a limited period: the project materials describe it as around two weeks, while ethereum.org’s explanation gives about 4096 epochs, roughly 18 days. The mechanism matters: by making blob data ephemeral and keeping it outside permanent execution-layer history, Ethereum can price this data far more cheaply than calldata while still making it available long enough for rollups to use.
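The "4096 epochs, roughly 18 days" figure can be checked with a short calculation. The slot and epoch lengths used here (12-second slots, 32 slots per epoch) are Ethereum consensus-layer parameters that the text above does not state, so treat them as assumptions of this sketch.

```python
from datetime import timedelta

SECONDS_PER_SLOT = 12    # assumed Ethereum slot time
SLOTS_PER_EPOCH = 32     # assumed slots per epoch
RETENTION_EPOCHS = 4096  # retention period quoted in the text

retention = timedelta(seconds=RETENTION_EPOCHS * SLOTS_PER_EPOCH * SECONDS_PER_SLOT)
retention_days = retention.total_seconds() / 86400

if __name__ == "__main__":
    print(f"{retention_days:.1f} days")  # prints "18.2 days"
```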

This is a reminder that sharding is not a single switch. It is often a sequence of designs aimed at the same bottleneck: how to let the system expose much more verifiable data or execution capacity without requiring every node to bear the full burden forever.

Why do sharded systems use random validator assignment and frequent reshuffling?

A sharded system is only as strong as its weakest shard. If an attacker can capture one shard, they may be able to double-spend within it, censor it, or inject invalid state transitions that spill outward.

That is why node assignment is not just bookkeeping. It is security-critical. A sound design wants shard committees to be statistically representative of the whole validator set, and it wants them to change over time. The survey literature on sharded blockchains treats node selection, epoch randomness, node assignment, and shard reconfiguration as core architectural components for exactly this reason.

The underlying logic is straightforward. Suppose 20% of the global validator stake is malicious. If shard memberships are random and committee sizes are large enough, most shards will also land near 20% malicious participation, safely below a one-third Byzantine threshold in BFT-style settings. But if committees are too small, or assignments are predictable, the variance becomes dangerous. One unlucky shard can end up overrun.
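The effect of committee size can be estimated with a binomial tail. This is a sketch under simplifying assumptions: each committee member is treated as malicious independently with probability equal to the global malicious fraction (sampling with replacement), and a committee of size n is taken to tolerate at most floor((n - 1) / 3) Byzantine members. The function names are hypothetical.

```python
from math import exp, lgamma, log


def binom_pmf(n: int, k: int, p: float) -> float:
    """Probability of exactly k malicious members out of n, computed in log space."""
    log_coeff = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return exp(log_coeff + k * log(p) + (n - k) * log(1.0 - p))


def prob_committee_compromised(n: int, p: float) -> float:
    """Probability that malicious members exceed what a BFT committee of size n tolerates."""
    tolerated = (n - 1) // 3  # largest f with n >= 3f + 1
    return sum(binom_pmf(n, k, p) for k in range(tolerated + 1, n + 1))


if __name__ == "__main__":
    # 20% malicious stake globally, as in the example above.
    for size in (30, 100, 600):
        print(size, prob_committee_compromised(size, 0.20))
```

Running this shows the failure probability falling steeply as committees grow, which is the quantitative version of the claim that small committees make the variance dangerous. A network with many shards and many epochs multiplies the number of chances for an unlucky draw, so the per-committee probability has to be very small.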

This is why whitepapers often spend surprising space on randomness generation and committee sizes. OmniLedger used public randomness precisely to resist bias in shard assignment. Zilliqa’s security analysis tied safe operation to sufficiently large committee sizes. NEAR concealed and rotated validator assignments to make adaptive corruption harder. These mechanisms are not peripheral. They are the answer to the deepest objection to sharding: if only some nodes validate a shard, why can’t an attacker just target that shard?

What role do beacon, relay, or master chains play in sharded architectures?

Many sharded systems end up with some kind of coordinating layer: a beacon chain, relay chain, masterchain, or directory committee. This is not an accident. Once work is partitioned, something usually has to track validator assignments, shard headers, finality information, or cross-shard message inclusion.

Ethereum’s beacon chain was explicitly designed as the core consensus layer that could later coordinate shard chains. Polkadot’s relay chain validates parachain state transitions and provides shared security. TON’s masterchain stores network configuration, validator lists, and the latest state references for workchains and shardchains. Zilliqa’s DS committee manages sharding and aggregates shard outputs. These systems differ in how centralized or distributed that coordinator function is, but they converge on the same structural need: local shard execution must be anchored into some global source of truth.

This coordinating layer is where many tradeoffs surface. It can simplify security and interoperability, because shards inherit shared consensus and a common validator pool. But it can also become a throughput governor, communication hotspot, or fairness bottleneck if too much work is funneled back through it. Research proposals like Haechi even use a beacon-like chain to impose global order on cross-shard transactions specifically to prevent fairness failures. That helps security, but it also illustrates the cost of distributed execution: once you split the system, you often have to rebuild global guarantees somewhere else.

When does sharding fail to improve performance or security?

Sharding is powerful, but it is not free scalability.

It breaks down when the workload is too cross-shard-heavy, because then the system spends more effort coordinating than it saves by parallelizing. It breaks down when data availability is weak, because hidden shard data can make invalid progress look legitimate. It breaks down when validator assignment is biased or committees are too small, because then an attacker can dominate a shard. And it breaks down, more subtly, when developers assume a sharded system behaves like a monolithic chain even though cross-shard calls are asynchronous, delayed, or differently ordered.

There are also architectural limits that depend on what exactly is being optimized. If the goal is cheaper data for rollups, as in proto-danksharding, then ephemeral blob storage can be enough to unlock large fee reductions without solving general cross-shard execution. If the goal is native parallel smart contract execution, the design space is harder, because cross-shard state access and composability become first-order concerns. This distinction matters because people sometimes speak of sharding as if every design is trying to do the same thing. It is more accurate to say that sharding is a family of partitioning strategies, and each member of that family solves a different bottleneck while exposing different edge cases.

Even the strongest throughput claims should be read with care. Some architectures describe very high or even effectively unbounded scaling potential, especially where shards can split dynamically. Those claims may reflect real design intent, but the practical bottlenecks are usually cross-shard messaging, validator bandwidth, data distribution, and coordination overhead. In other words, the theoretical ability to create more shards does not by itself guarantee that the whole system scales cleanly under realistic application behavior.

Conclusion

Sharding is the idea of scaling a blockchain by replacing full replication with structured partitioning. The basic mechanism is simple: smaller groups process different parts of the workload in parallel. The hard part is preserving the properties replication gave you for free: validity, data availability, coherent ordering, and secure coordination across the whole system.

That is why the deepest way to understand sharding is not as “many chains in parallel,” but as a change in the blockchain’s security model. Once not everyone verifies everything, the protocol must create new machinery for assignment, communication, sampling, and finality. When that machinery is well designed, sharding can raise throughput without pricing ordinary participants out of the network. When it is not, the system gains speed by quietly giving up the very guarantees users thought they still had.

How does sharding affect how I use a blockchain?

Sharding changes settlement finality, composability, and how verifiable data is published, all of which affect how quickly transfers settle and how risky cross-shard interactions are. Before funding or trading a sharded-network asset on Cube Exchange, check the network's sharding and data-availability model, then fund your account and execute an order type that matches the network's constraints.

- Read the protocol whitepaper or official docs and note whether the network uses execution sharding, data sharding (proto‑danksharding/blobs), or no sharding; record blob lifetime (epochs/days) and whether KZG commitments or DA sampling are used.

- Check cross‑shard semantics: find the stated committee rotation period, cross‑shard message delivery latency or optimistic delivery bounds (e.g., ≥2 blocks), and any required receipt proofs.

- Verify data‑availability and trust assumptions: confirm use of erasure coding, DA sampling plans, or a KZG trusted‑setup and note any operational caveats.

- Fund your Cube Exchange account via fiat or a supported crypto transfer, then place a limit order for price control or a market order for immediate execution, sizing the trade to reflect sharding and DA risks.

Frequently Asked Questions

Random assignment and periodic reshuffling make it statistically unlikely that an attacker can concentrate enough stake or nodes in a single shard to control it; by sampling validators from the global set each epoch, honest-majority assumptions for the whole network map to honest-majority with high probability at the shard level, provided committees are large enough.

Cross-shard operations are handled as message-passing or atomic‑commit protocols rather than one atomic state transition; concrete approaches include client-driven lock/unlock (OmniLedger’s Atomix), receipts routed between shards (NEAR), or relay‑chain–validated message passing (Polkadot), all of which aim to ensure a multi-shard transaction either commits coherently or aborts.

Data availability sampling lets clients check small random pieces of a large shard blob and, with high probability, conclude the whole blob is available. It’s critical because without it an attacker can make a shard appear to have produced valid blocks while hiding the underlying data needed for independent verification or recovery.

Proto‑danksharding (EIP‑4844) is a partial, forward‑compatible step that introduces ephemeral blob transactions with KZG commitments to make publishing rollup data cheaper, whereas full sharding would split execution and long‑term storage across many shards; proto‑danksharding stores blobs only temporarily (about 4096 epochs, roughly 18 days) and is not a full execution sharding design.

Sharding does not guarantee unlimited scaling in practice because cross‑shard coordination, validator bandwidth, data distribution, and coordination layers usually become new bottlenecks; the theoretical ability to add shards must be weighed against these practical limits and application workload patterns.

Sharding typically weakens tight composability: cross‑shard contract calls often turn into asynchronous receipts with extra latency, and research shows new ordering and front‑running vulnerabilities can arise when ordering is distributed across shards. For example, Polkadot expects optimistic delivery to take at least two blocks, and NDSS research highlights cross‑shard finalization fairness issues.

A coordinating layer (beacon chain, relay chain, masterchain, or directory committee) anchors shard headers, validator assignments, and finality information so shards can inherit shared security and interoperate, but that same layer can become a throughput governor, communication hotspot, or a fairness bottleneck if too many cross‑shard responsibilities are placed on it.

Data availability is a security problem because if the protocol cannot ensure, at least probabilistically, that a shard’s underlying data can be retrieved, an attacker can make a shard appear to make valid progress while withholding data needed for independent verification or reconstruction. Solutions include erasure coding (NEAR) and the data‑availability sampling planned for Ethereum’s roadmap.

Committees must be large enough that the probability of an attacker reaching the one‑third Byzantine threshold in any committee is negligible; for example, Zilliqa’s analysis suggested committee sizes on the order of hundreds (n0 ≈ 800) to keep per‑committee Byzantine fractions acceptably below one‑third with high probability.

EIP‑4844’s use of KZG vector commitments requires a distributed (trusted) setup ceremony to generate parameters, which introduces operational and trust considerations that the spec and community materials flag as needing careful handling and auditing.
