What is a Consensus Mechanism?

Learn what consensus mechanisms are, how blockchain networks agree on one history, and the tradeoffs between PoW, PoS, BFT, and probabilistic designs.

Introduction

Consensus mechanisms are the rules a distributed system uses to decide which history is valid when many independent participants can propose, verify, or reject updates. In blockchains, this is the difference between a shared ledger and a pile of conflicting claims. The hard part is not storing data; it is getting strangers on the internet to converge on the same sequence of state changes without handing control to a central operator.

That problem has a sharp edge. If two honest nodes see different valid-looking histories, money can be double-spent, applications can execute against incompatible states, and the network can split into separate realities. So a consensus mechanism is not just a voting system in the casual sense. It is a machine for preserving a few critical guarantees under adversarial conditions: that honest participants do not permanently disagree about finalized history, that the system can keep making progress, and that influence cannot be cheaply faked by spinning up endless identities.

The first idea that makes the topic click is this: consensus is really about choosing a rule for resolving disagreement under uncertainty. Different mechanisms do that in different ways. Some make participants spend computational work, some require them to lock economic stake, some use explicit voting rounds among a known validator set, and some rely on repeated random sampling. But beneath the variety, all of them are trying to answer the same question: when the network is noisy, delayed, or attacked, why should everyone still accept the same outcome?

What problem do consensus mechanisms solve in blockchains?

Imagine a ledger replicated across thousands of computers. Anyone can broadcast a transaction. Messages arrive at different times. Some participants may be offline, buggy, or malicious. There is no global clock, no perfect knowledge of who is online, and no trusted referee. Under those conditions, two nodes can easily receive transactions in different orders or hear about competing blocks at different times. If the protocol gives no disciplined way to resolve that disagreement, the network does not have one ledger; it has many local opinions.

This is why blockchains need more than cryptography. Digital signatures can prove who authorized a transaction. Hashes can make tampering visible. Merkle trees can compactly prove inclusion. But none of those tools, by themselves, tell a network which of two incompatible histories to accept. Consensus is the missing layer that turns individually verifiable data into a collectively accepted history.

There is also a deeper obstacle: in an open network, identities are cheap. If influence were assigned on a one-account-one-vote basis, an attacker could create millions of accounts and dominate the system. So any practical open consensus mechanism needs Sybil resistance: some costly or scarce basis for influence that cannot be forged at near-zero cost. In Bitcoin, the whitepaper frames this through proof-of-work as effectively a one-CPU-one-vote system, with the valid chain being the one with the greatest cumulative work. In proof-of-stake systems, influence is tied instead to bonded stake. The details differ, but the structural requirement is the same: the protocol must make participation open while making fake majority power expensive.
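To make the Sybil problem concrete, here is a minimal sketch that tallies the same set of votes two ways: once per account and once weighted by a scarce resource. The account names, the unit amounts, and the helper functions `tally_by_account` and `tally_by_resource` are all hypothetical and exist only for illustration.

```python
from collections import defaultdict


def tally_by_account(votes):
    """One account, one vote: every identity counts equally."""
    totals = defaultdict(int)
    for _account, choice in votes:
        totals[choice] += 1
    return dict(totals)


def tally_by_resource(votes, resource):
    """Weight each vote by a scarce resource such as stake or hashpower."""
    totals = defaultdict(int)
    for account, choice in votes:
        totals[choice] += resource[account]
    return dict(totals)


# Hypothetical scenario: three honest accounts hold 300 units each.
honest_votes = [(f"honest-{i}", "A") for i in range(3)]
# The attacker holds 100 units in total, spread over 100 fake identities.
sybil_votes = [(f"sybil-{i}", "B") for i in range(100)]
votes = honest_votes + sybil_votes

resource = {account: 300 for account, _ in honest_votes}
resource.update({account: 1 for account, _ in sybil_votes})

by_account = tally_by_account(votes)
by_resource = tally_by_resource(votes, resource)
print(by_account)   # {'A': 3, 'B': 100}
print(by_resource)  # {'A': 900, 'B': 100}
```

Splitting 100 units across 100 identities wins the per-account tally but changes nothing in the weighted one, which is the property Sybil resistance is meant to secure.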

What guarantees (safety, liveness, accountable influence) must a consensus mechanism provide?

| Guarantee | What it prevents | How it can hold | User impact | Typical assumption |
|---|---|---|---|---|
| Safety | Conflicting finalized histories | Deterministic threshold or vanishing probability of reversal | No permanent double-spends | Honest majority (stake or work) |
| Liveness | Stalled progress | Eventual or partial synchrony assumptions | Transactions eventually confirm | Reasonable network delivery |
| Accountable influence | Cheaply faked or unattributable decision power | Signed evidence tied to validators | Misbehavior can be punished | Identifiable validator keys |

The exact terminology varies, but most consensus designs are balancing three concerns.

The first is safety: honest participants should not end up permanently accepting conflicting outcomes. If one part of the network believes transaction A happened and another believes the incompatible transaction B happened, the ledger has failed at its most basic job. In some systems safety is deterministic once a threshold is crossed; in others it is probabilistic, meaning the chance of reversal becomes vanishingly small rather than mathematically impossible.

The second is liveness: the network should keep making progress. A perfectly safe protocol that never commits anything is useless. But liveness is always conditional on assumptions about the network. Research on finality gadgets makes this explicit: deterministic finality cannot be achieved in a fully asynchronous network with even a single fault, so real systems weaken the model and assume some form of eventual or partial synchrony. That is not a flaw in one particular blockchain; it is a fact about the problem itself.

The third is accountable influence: the protocol needs a clear rule for who gets to help decide the next state and why. In proof-of-work, this influence comes from expended computation. In proof-of-stake, it comes from stake that can often be penalized. In BFT-style protocols, it comes from an identified validator set that exchanges votes. Once you see consensus as a way of allocating decision power under adversarial conditions, many design choices become easier to interpret.

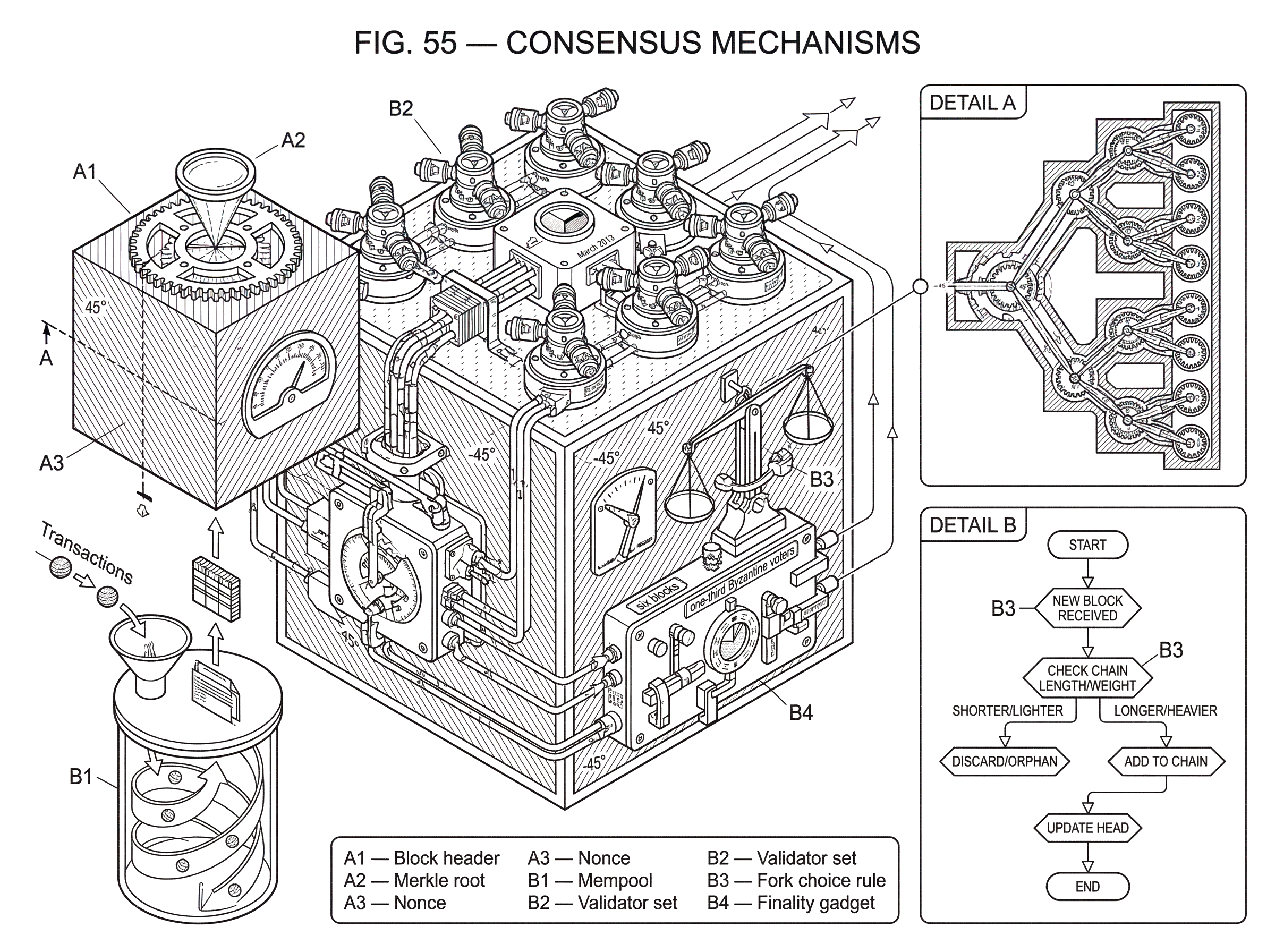

How do consensus protocols propose, observe, choose, and extend blocks?

Nearly every blockchain consensus mechanism, however different its details, follows the same broad shape. Some participant or group of participants proposes a candidate next block or state transition. Other participants check whether it is valid under the execution rules. The network may temporarily have more than one valid candidate because messages travel at different speeds. The consensus mechanism then supplies the tie-breaker: a rule that tells honest nodes which candidate to treat as canonical and build on.

That last step is the heart of the design. A consensus mechanism does not eliminate disagreement instantly. It gives disagreement somewhere to go. Competing views may appear for a moment, but the protocol tries to make one of them increasingly costly, unlikely, or impossible to dislodge as more work, stake-weighted votes, or confirmations accumulate behind it.

A simple worked example makes this concrete. Suppose two miners find blocks at nearly the same time, each extending the same parent block but containing different transactions. For a short period, some nodes hear about block X first and others hear about block Y first. The network is split, but not broken. In a Nakamoto-style system, miners simply keep mining on the branch they saw first. Eventually one branch gets another block before the other does. At that point it has more cumulative proof-of-work, so honest nodes adopt it as the best chain. The transactions in the losing block return to the mempool if they are still valid. What resolved the disagreement was not a central decision-maker. It was the combination of a branch-selection rule and the costliness of extending history.
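The two-miner example above can be sketched in a few lines. This is a simplified model, not Bitcoin's implementation: the `Block` type, the integer `work` units, and the `first_seen` tie-breaker map are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Block:
    name: str
    parent: Optional["Block"]
    work: int  # hypothetical units of proof-of-work in this block


def cumulative_work(tip):
    """Sum the work of a block and all of its ancestors."""
    total = 0
    block = tip
    while block is not None:
        total += block.work
        block = block.parent
    return total


def best_tip(tips, first_seen):
    """Prefer the tip with the most cumulative work.

    Ties go to the tip this node heard about first (lower arrival index).
    """
    return max(tips, key=lambda t: (cumulative_work(t), -first_seen[t.name]))


parent = Block("P", None, work=1)
x = Block("X", parent, work=1)  # found by the first miner
y = Block("Y", parent, work=1)  # found by the second miner at about the same time

# This node happened to hear about X before Y, and later about Y2.
first_seen = {"X": 0, "Y": 1, "Y2": 2}

print(best_tip([x, y], first_seen).name)   # X

# The next block lands on top of Y, so that branch now has more total work.
y2 = Block("Y2", y, work=1)
print(best_tip([x, y2], first_seen).name)  # Y2
```

A node that saw X first still switches to the Y branch once it carries more cumulative work, with no coordinator involved.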

That same pattern appears elsewhere with different machinery. In a BFT voting system, validators may briefly disagree or be delayed, but repeated voting rounds create a threshold certificate or finalization event that tells everyone which branch has crossed the line. In Avalanche-style protocols, repeated subsampling pushes the network toward a metastable preference that becomes harder and harder to reverse. The implementations differ, but the underlying job is the same: turn transient disagreement into eventual convergence.

How does proof-of-work reach agreement through accumulated cost?

Bitcoin’s design is the canonical example of Nakamoto consensus. Blocks are chained together, and each block requires proof-of-work: miners must find a nonce such that the block hash satisfies the difficulty target. Producing that proof is expensive on average, but verifying it is cheap. This asymmetry is doing real work for the protocol. It makes proposing history costly while making auditing history easy.
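The asymmetry between producing and checking a proof can be illustrated with a toy nonce search. This sketch is deliberately simplified: it uses a single SHA-256 over an arbitrary byte string and a hypothetical `difficulty_bits` parameter, whereas Bitcoin hashes a structured block header with double SHA-256 and encodes its target differently.

```python
import hashlib


def block_hash(header: bytes, nonce: int) -> int:
    """Hash the header together with a nonce and return it as an integer."""
    digest = hashlib.sha256(header + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def target_for(difficulty_bits: int) -> int:
    """A hash is valid if it is below this value (more bits means harder)."""
    return 1 << (256 - difficulty_bits)


def mine(header: bytes, difficulty_bits: int) -> int:
    """Costly on average: try nonces one by one until the hash is low enough."""
    target = target_for(difficulty_bits)
    nonce = 0
    while block_hash(header, nonce) >= target:
        nonce += 1
    return nonce


def verify(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Cheap: a single hash evaluation checks the claimed proof."""
    return block_hash(header, nonce) < target_for(difficulty_bits)


header = b"example block header"
nonce = mine(header, difficulty_bits=12)  # about 2**12 attempts on average
print(nonce, verify(header, nonce, 12))
```

Each extra difficulty bit doubles the expected number of attempts for the miner, while verification stays at one hash.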

The key rule is often informally called the longest-chain rule, though more precisely it is the chain with the greatest cumulative proof-of-work. Nodes accept and extend the branch that represents the most total computational effort. The Bitcoin whitepaper’s security argument rests on a simple race: if honest nodes collectively control more CPU power than any cooperating attacker group, the honest chain tends to grow faster and outpace competing histories.

Why does this produce consensus? Because rewriting the past means not only inventing an alternative block but redoing the proof-of-work for that block and then catching up to the honest chain. As more blocks are added on top of a transaction, the attacker falls further behind in cumulative work unless it has enough hashpower to close the gap. This is why confirmations matter. In the whitepaper’s model, the probability of a successful catch-up attack drops exponentially with the number of blocks the attacker must overtake, assuming the honest majority condition holds.
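The exponential drop can be computed directly. The function below is a Python port of the attacker-success calculation given in the Bitcoin whitepaper; `q` is the attacker's share of hashpower and `z` is the number of blocks to overtake. It inherits the whitepaper's simplifying assumptions, including `q` below 0.5.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hashpower share q ever catches up
    from z blocks behind, following the whitepaper's Poisson model."""
    p = 1.0 - q                    # honest share of hashpower
    lam = z * (q / p)              # expected attacker progress
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total


for z in (0, 1, 2, 4, 6, 10):
    print(z, f"{attacker_success_probability(0.10, z):.7f}")
```

For `q = 0.10` the whitepaper's own table lists roughly 0.2045873 at one block and 0.0002428 at six, which is where the common six-confirmation rule of thumb comes from.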

The elegance of proof-of-work is that it solves two problems at once. It gives the network a public, objective tie-breaker, and it makes Sybil attacks expensive because identities do not matter unless they bring real computational power. But the mechanism is not magic. It depends on incentive alignment and distributional assumptions. The whitepaper notes that block rewards and fees encourage honest behavior, yet later work on selfish mining shows that following the nominal protocol is not always perfectly incentive-compatible. A colluding group can sometimes gain more than its fair share by strategically withholding blocks, which means consensus security is partly game-theoretic, not only cryptographic.

Proof-of-work also offers only probabilistic finality. A transaction buried under six blocks is very unlikely to be reversed under ordinary conditions, but not impossible in the formal sense. That is a meaningful difference from systems where finality is explicitly voted and, once reached, cannot be revoked without violating the protocol’s fault assumptions.

How does proof-of-stake use bonded stake to secure consensus?

Proof-of-stake replaces ongoing computational expenditure with locked economic stake as the basis of influence. The core intuition is straightforward: instead of proving commitment by burning electricity, validators prove commitment by putting capital at risk. If they follow the protocol, they earn rewards. If they equivocate or violate key rules, that stake can be reduced or slashed, depending on the design.

This changes the mechanism in two important ways. First, leader selection and voting power can be assigned in proportion to stake rather than hashpower. Second, once the protocol can identify misbehavior, it can often make safety violations accountable in a way proof-of-work cannot. In many PoS systems, if a validator signs conflicting messages, there is evidence of misconduct tied to a specific key and stake position.
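A rough sketch of how equivocation becomes attributable evidence follows. It does not reproduce any particular chain's slashing rules: the `SignedVote` fields, the 50 percent penalty, and the function names are assumptions, and signature checking is omitted entirely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignedVote:
    validator: str  # stands in for a validator public key
    height: int
    block_id: str
    # A real vote would also carry a signature; omitted in this sketch.


def find_equivocations(votes):
    """Return pairs of conflicting votes by the same validator at one height."""
    seen = {}
    evidence = []
    for vote in votes:
        key = (vote.validator, vote.height)
        earlier = seen.get(key)
        if earlier is None:
            seen[key] = vote
        elif earlier.block_id != vote.block_id:
            evidence.append((earlier, vote))
    return evidence


def apply_slashing(stakes, evidence, penalty_percent=50):
    """Reduce the stake of every validator named in the evidence (once each)."""
    updated = dict(stakes)
    for offender in {first.validator for first, _ in evidence}:
        updated[offender] -= updated[offender] * penalty_percent // 100
    return updated


stakes = {"val-a": 1000, "val-b": 1000}
votes = [
    SignedVote("val-a", 10, "block-X"),
    SignedVote("val-b", 10, "block-X"),
    SignedVote("val-a", 10, "block-Y"),  # conflicts with val-a's earlier vote
]

evidence = find_equivocations(votes)
new_stakes = apply_slashing(stakes, evidence)
print(new_stakes)  # {'val-a': 500, 'val-b': 1000}
```

The key point is that the evidence names a specific key, so the penalty lands on a specific stake position rather than on the network as a whole.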

Ethereum’s proof-of-stake specifications are a good example of how modern PoS is engineered around explicit validator duties, fork choice, and finality rather than a simple longest-chain race. The repository’s design goals are revealing: remain live through major network partitions and large offline sets, keep hardware requirements low, and minimize complexity even at some efficiency cost. That is a different optimization target from Bitcoin’s open mining race. The system is trying to preserve broad participation while obtaining stronger coordination and faster, more explicit finality.

Cardano’s Ouroboros Praos highlights another important PoS dimension: who gets chosen to act, and how predictable that choice is. Its security model emphasizes adaptive adversaries and semi-synchronous networks, and it uses verifiable random functions and forward-secure signatures to make leader selection harder to exploit. This matters because if an attacker can predict exactly who will produce future blocks and corrupt them just in time, stake weighting alone is not enough. Here the mechanism is not just “stake decides.” It is “stake decides through a randomized process designed to stay unpredictable under attack.”
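The idea of a private, stake-weighted lottery can be sketched as follows. This is not Ouroboros Praos itself: an HMAC stands in for the verifiable random function (a real VRF also produces a proof anyone can check against the public key), and the threshold shape `1 - (1 - f) ** stake` with a hypothetical `active_slot_coeff` of 0.05 is used here as an assumption based on the published Praos design.

```python
import hashlib
import hmac


def lottery_value(secret_key: bytes, slot: int, epoch_nonce: bytes) -> float:
    """Map (key, slot) to a pseudo-random number in [0, 1].

    HMAC is only a stand-in for a VRF: it is unpredictable without the key,
    but unlike a VRF it offers no publicly verifiable proof.
    """
    message = epoch_nonce + slot.to_bytes(8, "big")
    mac = hmac.new(secret_key, message, hashlib.sha256).digest()
    return int.from_bytes(mac, "big") / 2 ** 256


def leader_threshold(relative_stake: float, active_slot_coeff: float = 0.05) -> float:
    """Chance of winning a slot; grows with stake, zero for zero stake."""
    return 1 - (1 - active_slot_coeff) ** relative_stake


def is_slot_leader(secret_key, slot, epoch_nonce, relative_stake,
                   active_slot_coeff=0.05) -> bool:
    value = lottery_value(secret_key, slot, epoch_nonce)
    return value < leader_threshold(relative_stake, active_slot_coeff)


# Hypothetical validator holding 20% of total stake.
key, nonce = b"validator-secret", b"epoch-42"
wins = [s for s in range(1000) if is_slot_leader(key, s, nonce, 0.20)]
print(len(wins), "slots won out of 1000")
```

Only the key holder can compute which slots it wins ahead of time, which is what makes just-in-time corruption of upcoming leaders harder.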

PoS inherits its own assumptions. The most basic is honest-majority stake rather than honest-majority hashpower. That sounds like a simple substitution, but it changes the attack surface. Wealth concentration, validator coordination, and long-range or adaptive attacks matter differently than in proof-of-work. The mechanism can be more energy-efficient and often offers faster finality, but its security depends on social and economic structure as much as on protocol rules.

How do BFT-style protocols reach agreement through voting?

A different family of consensus mechanisms comes from Byzantine fault tolerant state machine replication. Here the network does not wait for a probabilistic race to settle. Instead, a known validator set exchanges messages in rounds and commits a value once a threshold of votes is reached. The foundational PBFT work made this practical for asynchronous internet-like environments, showing that replication could tolerate Byzantine behavior without prohibitive slowdown.

The right way to see BFT-style consensus is as disciplined coordination among a bounded set of voters. If up to f validators can behave arbitrarily, the protocol typically requires at least 3f + 1 total validators to preserve safety and liveness under its network assumptions. The exact thresholds and phases vary by protocol, but the structure is recognizable: propose a value, vote on it, then vote again in a way that makes conflicting commitment impossible unless too many validators misbehave.
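The threshold arithmetic behind that claim is short enough to check by hand or in code. The sketch below assumes equal-weight validators and a quorum defined as strictly more than two-thirds of the set; the function names are illustrative, and individual protocols may state their thresholds differently.

```python
def max_faults(n: int) -> int:
    """Largest f such that n >= 3f + 1."""
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest vote count that is strictly more than two-thirds of n."""
    return 2 * n // 3 + 1


def min_quorum_overlap(n: int) -> int:
    """Any two quorums drawn from n validators share at least this many."""
    return 2 * quorum_size(n) - n


for n in (4, 7, 10, 100):
    f = max_faults(n)
    q = quorum_size(n)
    honest_in_overlap = min_quorum_overlap(n) - f
    print(f"n={n} f={f} quorum={q} honest validators in any overlap >= {honest_in_overlap}")
```

Because any two quorums overlap in more validators than can be faulty, at least one honest validator sits in both, and an honest validator does not vote for two conflicting values. That overlap is what rules out conflicting commits.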

Tendermint is a widely used blockchain expression of this model. Its documentation describes it as performing Byzantine Fault Tolerant state machine replication for deterministic, finite state machines. That last phrase matters. Consensus only works if all honest validators, given the same ordered inputs, reach the same post-state. Deterministic execution is therefore not a convenience around consensus; it is one of its preconditions.

BFT-style systems usually offer faster and clearer finality than Nakamoto-style systems. Once a block gets the required votes, users can often treat it as final rather than merely unlikely to be reversed. But that strength comes with a tradeoff. These protocols generally assume a more structured validator set and often incur more communication overhead as that set grows. They are not usually trying to solve openness in exactly the same way Bitcoin does.

What is a finality gadget and why separate block production from finality?

| Layer | Primary job | Finality type | Typical latency | Model assumptions | Key benefit |
|---|---|---|---|---|---|
| Block production | Keep chain moving and accept transactions | Tentative until finalized | Low initial latency | Optimistic progress and proposer selection | Quick transaction appearance |
| Finality gadget | Decide when blocks are provably committed | Deterministic under assumptions | Higher finality latency | Partial synchrony and 2/3 votes | Provable, irreversible finality |

Some systems split consensus into two layers: one mechanism produces blocks quickly, and another mechanism later finalizes them. This is the idea behind a finality gadget. Rather than forcing a single protocol to optimize equally for rapid production, safety, and finality, the design lets the chain move forward optimistically and then adds a separate process that says which blocks are now provably committed.

GRANDPA, used in the Polkadot ecosystem, makes this separation explicit. The research paper defines a finality gadget as a protocol that works alongside block production and proves important limits: deterministic finality in a fully asynchronous model is impossible even with one fault. So GRANDPA instead assumes partial synchrony and tolerates fewer than one-third Byzantine voters. It uses prevote and precommit rounds, together with a GHOST-style rule, to finalize a chain.

The design is conceptually useful because it decouples two jobs that are often conflated. Block production is about keeping the chain moving. Finality is about deciding when rollback risk has dropped from “possible” to “ruled out under the protocol assumptions.” In practice, this can improve user experience and recovery behavior. Transactions can appear quickly, while stronger guarantees arrive slightly later.
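The separation can be sketched with a toy rule in the GHOST spirit: a vote for a block also counts for all of its ancestors, and the highest block backed by more than two-thirds of voters is treated as finalizable. This is a single-round simplification with equal-weight voters and hypothetical block names, not GRANDPA's actual prevote and precommit logic.

```python
def ancestors(block, parent):
    """Return the block followed by all of its ancestors back to genesis."""
    chain = []
    while block is not None:
        chain.append(block)
        block = parent.get(block)
    return chain


def highest_supermajority_block(votes, parent, total_voters):
    """Highest block supported by more than 2/3 of voters, or None.

    A vote for a block counts as support for every ancestor of that block.
    """
    support = {}
    for _voter, block in votes.items():
        for b in ancestors(block, parent):
            support[b] = support.get(b, 0) + 1
    candidates = [b for b, s in support.items() if 3 * s > 2 * total_voters]
    if not candidates:
        return None
    return max(candidates, key=lambda b: len(ancestors(b, parent)))


# Block production has already built a small tree with a fork after B.
parent = {"G": None, "A": "G", "B": "A", "C1": "B", "C2": "B"}

# Four voters disagree about the tip but agree on the prefix up to B.
votes = {"v1": "C1", "v2": "C1", "v3": "C2", "v4": "B"}

finalized = highest_supermajority_block(votes, parent, total_voters=4)
print(finalized)  # B
```

Block production has moved on to C1 and C2, yet the finality layer can still commit B, the common prefix that a supermajority already agrees on.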

How do leaderless, subsampling protocols (like Avalanche) reach consensus?

Avalanche and the Snow family take yet another route. Instead of relying on one leader per round or a global longest chain, they use repeated random subsampling of peers. A node asks a small random subset what they prefer, updates its own preference, and repeats. Under the right conditions this creates metastability: once the network leans sufficiently toward one outcome, feedback reinforces that lean until the alternative becomes overwhelmingly unlikely.

This is a different intuition from both chain racing and explicit all-to-all voting. The system does not need every node to hear from everyone else each round. The paper emphasizes that no node processes more than logarithmic bits per decision, unlike traditional protocols where some participants may process linear communication in the number of nodes. That is why this family is attractive for scaling to large participation.

But the guarantees are probabilistic. Avalanche does not usually claim the same kind of deterministic finality as classical BFT voting. Instead, it aims for extremely strong probabilities of convergence and safety, with very fast confirmation latency under assumed conditions. The gain is throughput and scalability; the cost is that the security story is expressed in bounds and parameters rather than an absolute commitment certificate.
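The sampling loop described in this section can be simulated in a few lines. The sketch below is loosely modeled on the simplest member of the Snow family; the parameter names `k` (sample size), `alpha` (agreement threshold), and `beta` (consecutive successes needed to decide), along with their values, are assumptions chosen for illustration rather than production settings.

```python
import random
from collections import Counter


def run_sampling(initial_prefs, k=10, alpha=7, beta=5, max_rounds=200, seed=0):
    """Repeatedly sample k peers; adopt a colour that reaches alpha votes,
    and decide once the same colour wins beta samples in a row."""
    rng = random.Random(seed)
    prefs = list(initial_prefs)
    n = len(prefs)
    streak = [0] * n
    decided = [False] * n

    for _ in range(max_rounds):
        if all(decided):
            break
        for i in range(n):
            if decided[i]:
                continue
            # Sampling may include the node itself; acceptable for a sketch.
            peers = rng.sample(range(n), k)
            colour, count = Counter(prefs[j] for j in peers).most_common(1)[0]
            if count < alpha:
                streak[i] = 0           # inconclusive sample resets confidence
            elif colour == prefs[i]:
                streak[i] += 1          # sample confirms current preference
            else:
                prefs[i] = colour       # switch to the colour the sample favours
                streak[i] = 1
            if streak[i] >= beta:
                decided[i] = True
    return prefs, decided


# 100 nodes start with an 80/20 split between two conflicting options.
prefs, decided = run_sampling(["A"] * 80 + ["B"] * 20)
print(Counter(prefs), sum(decided), "nodes decided")
```

No node ever contacts more than `k` peers per round, yet an initial lean tends to be amplified until the minority preference disappears. The outcome is a matter of probability, governed by `k`, `alpha`, and `beta`, not a certificate.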

Why is ordering (e.g., Proof of History) not the same as consensus?

A common confusion is to call any mechanism that helps sequence events a consensus mechanism. Solana’s Proof of History is a good example of why that can be misleading. Its whitepaper describes PoH as a cryptographic way to verify passage of time and ordering between events. That is valuable because shared ordering reduces coordination overhead in a Byzantine replicated system. But PoH by itself does not settle disputes about validity or finality. It is better understood as a clock or ordering tool that complements the actual consensus process.

This distinction matters because many blockchain architectures are modular. One component may provide a source of time or sequencing. Another chooses leaders. Another gathers votes. Another finalizes blocks. If you treat all of that as a single black box called “consensus,” important tradeoffs disappear from view. You cannot evaluate the system well unless you ask which piece is solving which part of the problem.
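The ordering role attributed to Proof of History earlier in this section can be illustrated with a sequential hash chain. This is a conceptual sketch, not Solana's implementation: the `record` and `verify` helpers, the seed, and the event payloads are hypothetical.

```python
import hashlib


def record(seed: bytes, events, ticks: int):
    """Run a sequential hash chain, mixing in event data at chosen ticks.

    `events` maps a tick index to the bytes observed at that tick.
    Each output depends on the previous one, so the chain cannot be
    produced out of order.
    """
    state = seed
    log = []
    for i in range(ticks):
        data = events.get(i, b"")
        state = hashlib.sha256(state + data).digest()
        log.append((i, data, state))
    return log


def verify(seed: bytes, log) -> bool:
    """Recompute the chain and confirm every recorded state matches."""
    state = seed
    for _index, data, claimed in log:
        state = hashlib.sha256(state + data).digest()
        if state != claimed:
            return False
    return True


log = record(b"genesis-seed", {3: b"tx-1", 7: b"tx-2"}, ticks=10)
print(verify(b"genesis-seed", log))  # True

# Claiming a different event at tick 3 breaks the chain.
tampered = list(log)
tampered[3] = (3, b"tx-other", log[3][2])
print(verify(b"genesis-seed", tampered))  # False
```

The chain shows that `tx-1` was mixed in before `tx-2`, but nothing in it says whether either transaction is valid or which of two competing chains a validator should accept.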

When and how can consensus mechanisms fail or be undermined?

Consensus mechanisms are defined as much by their assumptions as by their rules. When those assumptions fail, strange things happen.

The March 2013 Bitcoin chain fork is a good illustration. Pre-0.8 nodes used Berkeley DB with lock limits that caused some large but otherwise valid blocks to be rejected, while 0.8 nodes using LevelDB accepted them. The result was a chain split. What is striking is the lesson: an implementation detail had effectively become part of consensus. The network was not disagreeing about signatures or proof-of-work. It was disagreeing because software behavior around database locks changed what “valid block” meant in practice.

Network-layer assumptions can fail too. Eclipse attacks show that if an attacker monopolizes a node’s peer connections, it can distort that node’s view of the chain and enable downstream attacks on mining, confirmations, and fork choice. Consensus protocols are often analyzed as if honest nodes have reasonably representative views of network state. Isolation attacks undermine exactly that premise.

Operational failures expose another boundary. During a 2020 Solana Mainnet Beta outage, block production halted and the network required coordinated validator restart with an active-stake threshold before resuming. Even without diving beyond the incident report, the point is clear: consensus is not only a paper protocol. It is also a live operational system whose recovery procedures reveal where coordination really exists when normal assumptions break.

How should you compare consensus mechanisms and their trade-offs?

| Mechanism | Finality | Openness | Energy | Scalability | Best for |
|---|---|---|---|---|---|
| Proof-of-work | Probabilistic finality | Permissionless open | High energy cost | Moderate throughput | Open participation, censorship resistance |
| Proof-of-stake | Faster explicit finality | Often permissionless | Low energy cost | Good throughput | Energy-efficient finality |
| BFT voting | Deterministic finality | Permissioned or limited set | Low energy cost | Degrades with large set | Clear finality, fast confirmations |
| Subsampling (Avalanche) | Probabilistic finality | Flexible openness | Low energy cost | High throughput | Large-scale participation |

The useful question is not which mechanism is “best” in the abstract. It is which tradeoff surface a system has chosen.

If the priority is open participation with minimal dependence on identified validators, Nakamoto-style proof-of-work has a compelling simplicity: cumulative work decides. If the priority is stronger explicit finality and lower energy use, proof-of-stake and BFT-derived designs become attractive. If the priority is scaling decision-making across many participants without heavy all-to-all communication, subsampling-based approaches offer a different path. If the architecture wants fast production with delayed certainty, a finality gadget may be the right decomposition.

Each choice changes what the system is betting on. Proof-of-work bets on honest majority hashpower and the cost of rewriting work. Proof-of-stake bets on honest majority stake and the ability to penalize misconduct. BFT-style protocols bet on bounded validator faults and threshold voting under partial synchrony. Subsampling protocols bet on probabilistic convergence under carefully chosen parameters. None of these are free lunches. They are different answers to the same underlying coordination problem.

Conclusion

A consensus mechanism is the rule a decentralized system uses to turn many local views into one accepted history. The form can be proof-of-work, proof-of-stake, explicit Byzantine voting, probabilistic sampling, or a layered combination of these. What matters is not the label but the mechanism: who gets influence, how disagreement is resolved, what assumptions make safety and liveness possible, and what happens when those assumptions fail.

The short version to remember tomorrow is this: blockchains do not work because data is shared; they work because there is a credible rule for settling conflicts over shared data. Consensus mechanisms are those rules made operational.

What should you understand before using consensus-dependent assets?

Before trading, depositing, or withdrawing an asset, understand how its chain reaches finality and what reversal risks remain. On Cube Exchange, use that understanding to time deposits, choose order types, and avoid moving funds before the protocol’s recommended confirmation threshold. Follow the checklist below to reduce the chance your trade or transfer is affected by chain reorganizations or slow finality.

- Check the chain’s finality model and recommended confirmation count for the asset you plan to use (e.g., probabilistic PoW: 6+ confirmations; PoS/BFT: explicit finality events).

- Verify the asset’s exact network and token identifier before depositing (select the correct chain and contract address or network token on Cube).

- Deposit funds to your Cube account and wait for the chain-specific confirmation threshold or finality event before trading or withdrawing.

- When placing a trade, choose the order type to match settlement risk: use limit orders for price control when finality is slow, or market orders only if you accept the instant fill and possible reorg risk; plan withdrawals only after the recommended confirmations.

Frequently Asked Questions

Why do consensus mechanisms need Sybil resistance?

Open systems make identities cheap, so consensus protocols tie decision power to costly or scarce resources: proof-of-work assigns influence by expended computation, proof-of-stake by bonded stake that can be penalized, and other designs use identified validator sets or sampling; this makes creating a fake majority expensive rather than trivial.

Why can a blockchain not guarantee deterministic finality in a fully asynchronous network?

Impossibility results and practical designs both show deterministic finality cannot be achieved in a fully asynchronous network with even one fault, so real systems replace that ideal with weaker assumptions (e.g., partial synchrony) or probabilistic guarantees.

What is the difference between probabilistic and deterministic finality?

Probabilistic finality (typical of Nakamoto proof-of-work) means the probability of reversal falls toward zero as more confirmations are added, while deterministic finality (typical of BFT voting) produces an explicit commitment that cannot be revoked except by violating the protocol's fault assumptions.

Can implementation details affect consensus?

Implementation or deployment details can change what nodes accept as valid and thus split the network: for example, differing Berkeley DB locking behavior caused a Bitcoin chain fork in 2013, showing that software-level differences can become de facto consensus rules.

What does a finality gadget do?

A finality gadget separates fast block production from a later voting-based finalization step, improving user-perceived confirmation speed while introducing assumptions (typically partial synchrony and bounded Byzantine voters) required for the gadget’s deterministic guarantees.

How do subsampling protocols like Avalanche reach consensus?

Protocols like Avalanche use repeated random subsampling and local preference updates to drive the network toward a metastable consensus; this yields high scalability and low latency but replaces absolute guarantees with extremely strong probabilistic guarantees that depend on parameter choices and adversary models.

Is Proof of History a consensus mechanism?

Proof of History is a cryptographic ordering or timestamping tool that reduces coordination costs but does not by itself resolve validity or finality disputes, so it must be combined with a consensus protocol to settle conflicts.

How can consensus mechanisms be undermined?

Network-layer attacks and incentive exploits can undermine consensus assumptions: eclipse attacks can give a node a distorted view of history, and selfish-mining or similar strategic behaviors can let coalitions gain outsized influence, so consensus security depends on both network health and incentive alignment.
