What Is a Fork Choice Rule?

Learn what a fork choice rule is, why blockchains need it, and how rules like longest chain, GHOST, and LMD-GHOST select the canonical head.

Introduction

A fork choice rule is the part of a consensus protocol that tells a node which branch of a block tree to treat as the canonical chain when more than one branch is available. That may sound like a narrow implementation detail, but it is actually where a distributed ledger stops being a pile of candidate histories and becomes a single history that applications can use.

The basic puzzle is simple. If two honest participants produce blocks at nearly the same time, both blocks may be valid. Different nodes may see them in different orders because networks have delay. For some period, the system does not contain one chain; it contains a tree. Consensus needs a rule for turning that tree back into a single preferred path.

That is what fork choice does. It does not create blocks, and in many systems it does not by itself provide finality. Instead, it answers a more immediate question: given everything I know right now, which tip should I build on? In proof-of-work systems this question is often answered with a longest-chain or most-work rule. In newer proof-of-stake systems it is often answered with some weighted rule based on validator votes. The details differ, but the job is the same.

The important idea is that validity and preference are different. A block can be valid yet not be the preferred head. Fork choice is the preference rule. Once that distinction clicks, many other pieces of blockchain consensus become easier to understand: reorgs, confirmations, attestations, checkpoints, finality gadgets, and even why client implementations spend so much effort on what looks like “just choosing a chain tip.”

Why do blockchains need a fork choice rule?

If a blockchain lived on a perfectly synchronous network, every honest node would receive every block instantly and in the same order. In that imaginary world, two conflicting tips would almost never appear. Real networks are not like that. Messages take time to propagate, clocks are not perfectly aligned, and participants can behave adversarially.

Here is the mechanism. Suppose Alice sees block A first and Bob sees block B first, where A and B both extend the same parent. Alice may start building on A, while Bob starts building on B. For a while, both branches grow. Neither node is necessarily doing anything wrong; they are acting on incomplete information. Without a common rule for preferring one branch over the other, the network can fragment permanently.

So consensus protocols need an invariant that honest nodes can independently compute from their local view and that tends to make those local views converge. The fork choice rule is that invariant in operational form. It says: from the set of blocks and votes you currently know, compute a preferred chain. If honest nodes eventually see mostly the same information, they should eventually compute the same answer.

This is also why fork choice is not the same thing as “conflict resolution after something goes wrong.” Forks are normal. They are a routine byproduct of concurrency in a distributed system. The rule must therefore work continuously, under ordinary network conditions, not just during attacks.

How does fork choice convert a block tree into a single chain?

The easiest way to picture fork choice is to imagine all known blocks as a tree rooted at genesis. Every block points to a parent, so the structure fans out whenever two blocks share the same parent or when later blocks extend competing tips. The protocol needs to select one path from the root to a leaf as the chain to extend.

The fork choice rule is the function that maps “tree plus evidence” to “preferred path.” The evidence may be different in different families of consensus. In proof-of-work, the evidence is accumulated work. In a heaviest-subtree design, the evidence may include blocks that are not on the final selected path but still contribute weight to one side of a fork. In proof-of-stake, the evidence is usually validator voting weight, often with rules about which vote from a validator counts and which votes are ignored because of equivocation or slashing.

This way of thinking clarifies an easy misunderstanding. People often speak as if a blockchain is already a chain and fork choice merely picks the longer of two chains. But before fork choice acts, the data structure visible to a node is usually a tree or DAG-like ancestry graph. The chain is the output, not the input.

The consequences are practical. Wallets, exchanges, rollups, bridges, and applications all care about which transactions sit on the preferred path. A transaction in a valid block that is not on the selected head path may disappear from the canonical view after a reorganization. Fork choice determines when that happens and how likely it is.
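To make the reorganization effect concrete, here is a minimal sketch, assuming a hypothetical block tree stored as a child-to-parent map. The block names and the helpers `chain_to` and `reorged_out` are illustrative only and do not come from any client or specification.

```python
from typing import Dict, List, Optional

# Hypothetical block tree: block id -> parent id (None for genesis).
PARENTS: Dict[str, Optional[str]] = {
    "G": None, "A": "G", "B1": "A", "B2": "A", "C2": "B2",
}

def chain_to(head: str, parents: Dict[str, Optional[str]]) -> List[str]:
    """Return the path from genesis to `head`, following parent pointers."""
    path: List[str] = []
    node: Optional[str] = head
    while node is not None:
        path.append(node)
        node = parents[node]
    return path[::-1]

def reorged_out(old_head: str, new_head: str,
                parents: Dict[str, Optional[str]]) -> List[str]:
    """Blocks on the old preferred path that are absent from the new one."""
    new_path = set(chain_to(new_head, parents))
    return [b for b in chain_to(old_head, parents) if b not in new_path]

# The node preferred B1, then fork choice switched its head to C2.
dropped = reorged_out("B1", "C2", PARENTS)
print(dropped)
```

Any transaction that appeared only in a block listed in `dropped` is no longer part of the canonical view, even though the block itself remains valid.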

What are longest-chain and most-work fork choice rules?

The simplest widely known fork choice rule is the Nakamoto-style rule: prefer the chain with the greatest accumulated proof-of-work. In casual language this is often called the “longest chain,” but the more precise idea is most work, not merely most blocks.

Why does this help? Because producing blocks requires scarce external cost. If two branches compete, the branch backed by more total work represents more expended resources. Honest miners, by extending the branch with the most work they know, tend to reinforce one branch rather than split across many. The protocol converts decentralized production into convergent selection.
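The gap between "longest" and "most work" can be shown with a small sketch. The block tree and per-block work values below are made up for illustration: one branch has more blocks, the other has more accumulated work.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical block tree: id -> (parent id, work contributed by that block).
# Branch A has three low-difficulty blocks; branch B has two harder ones.
BLOCKS: Dict[str, Tuple[Optional[str], int]] = {
    "G": (None, 1),
    "A1": ("G", 10), "A2": ("A1", 10), "A3": ("A2", 10),
    "B1": ("G", 25), "B2": ("B1", 25),
}

def path_to_genesis(tip: str) -> List[str]:
    """Walk parent pointers from `tip` back to genesis."""
    path: List[str] = []
    node: Optional[str] = tip
    while node is not None:
        path.append(node)
        node = BLOCKS[node][0]
    return path

def chain_length(tip: str) -> int:
    return len(path_to_genesis(tip))

def total_work(tip: str) -> int:
    return sum(BLOCKS[b][1] for b in path_to_genesis(tip))

def tips() -> List[str]:
    """Blocks that no other block names as its parent."""
    parents = {parent for parent, _ in BLOCKS.values()}
    return [b for b in BLOCKS if b not in parents]

longest = max(tips(), key=chain_length)   # counts blocks only
heaviest = max(tips(), key=total_work)    # counts accumulated work
print(longest, heaviest)
```

Here the block-count rule and the most-work rule disagree, which is why the precise statement of the Nakamoto rule refers to work rather than length.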

This rule works well when forks are relatively rare. But the mechanism has a scaling tension. If blocks are created more frequently, or are larger and thus propagate more slowly, honest forks become more common. Under those conditions, the longest-chain view throws away information from stale blocks that were honestly mined but did not land on the final main chain. Research on GHOST was motivated by exactly this issue: if honest effort is scattered across near-simultaneous branches, perhaps the protocol should count more of that effort instead of ignoring it.

That leads to a deeper distinction between the selected branch and the evidence used to select it. Longest-chain rules mostly count evidence that ended up on one chain. Heaviest-subtree rules try to count evidence in subtrees around forks, not just along the final winning trunk.

How does GHOST pick the heaviest subtree instead of the longest chain?

GHOST stands for Greedy Heaviest-Observed Sub-Tree. The key idea is almost embarrassingly simple once stated: when a fork occurs, do not ask which descendant chain is longest; ask which child leads into the heaviest subtree.

Here is the mechanism. Start at the root. If there are multiple children, measure the total weight under each child. Choose the child with the most weight. Then repeat the same decision at the next level. The rule is “greedy” because it makes the locally heaviest choice at each fork, walking step by step until it reaches a leaf.

What problem does this solve? In a high-throughput network, many honest blocks may become stale because propagation delays create frequent races. A longest-chain rule can undercount that honest activity because stale blocks do not contribute directly to the final chain length. GHOST tries to recover that information. Even if some honest blocks are not on the eventual selected path, they still add weight to the subtree they support. That can make the protocol more robust in environments where forks are common.
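The greedy walk can be sketched in a few lines. This is a simplified illustration, assuming every block carries a weight of 1 and ties are broken by block id; the tree and function names are hypothetical. One branch is a long thin chain, the other is shorter but has more blocks in its subtree.

```python
from collections import defaultdict
from typing import Dict, List, Optional

# Hypothetical tree: A forks into a thin chain (B..E) and a bushy subtree (X...).
PARENTS: Dict[str, Optional[str]] = {
    "G": None, "A": "G",
    "B": "A", "C": "B", "D": "C", "E": "D",
    "X": "A", "X1": "X", "X1a": "X1", "X2": "X", "X3": "X",
}

def children_of(parents: Dict[str, Optional[str]]) -> Dict[str, List[str]]:
    kids: Dict[str, List[str]] = defaultdict(list)
    for block, parent in parents.items():
        if parent is not None:
            kids[parent].append(block)
    return kids

def subtree_weight(block: str, kids: Dict[str, List[str]]) -> int:
    """Number of blocks in the subtree rooted at `block` (each block weighs 1)."""
    return 1 + sum(subtree_weight(k, kids) for k in kids.get(block, []))

def ghost_head(root: str, parents: Dict[str, Optional[str]]) -> str:
    """At each fork, step into the child with the heaviest subtree."""
    kids = children_of(parents)
    head = root
    while kids.get(head):
        head = max(kids[head], key=lambda c: (subtree_weight(c, kids), c))
    return head

def longest_chain_head(parents: Dict[str, Optional[str]]) -> str:
    """For comparison: the leaf farthest from genesis."""
    kids = children_of(parents)
    def depth(block: str) -> int:
        d = 0
        while parents[block] is not None:
            block, d = parents[block], d + 1
        return d
    leaves = [b for b in parents if not kids.get(b)]
    return max(leaves, key=lambda b: (depth(b), b))

print(ghost_head("G", PARENTS), longest_chain_head(PARENTS))
```

The subtree under X holds five blocks against four under B, so the greedy walk descends into X even though the B branch reaches deeper. Recomputing subtree weights on every step is deliberately naive here; it keeps the sketch close to the prose description.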

The analogy is a road system. Longest-chain looks only at the single road that currently stretches farthest. GHOST looks at traffic in the regions beyond each fork and asks which branch leads into the busier network. That explains why it can make better use of dispersed honest activity. But the analogy fails in one important way: real fork choice does not measure “traffic” directly; it measures protocol-specific evidence such as blocks or votes, and those measurements can be manipulated or delayed by an adversary.

This caveat matters. Modern analyses show that GHOST’s behavior depends heavily on assumptions about network delay, adversarial strategy, and tie-breaking. A recent tight analysis of GHOST consistency shows that its formal consistency region can be worse than Nakamoto’s longest-chain rule under some adversarial models, and that deterministic tie-breaking materially improves security relative to adversarial tie-breaking. So GHOST is not “strictly better” in every sense. It changes the tradeoff surface.

How does fork choice use validator votes in Proof-of-Stake?

| Model | Evidence | Weight unit | Equivocation handling | Typical rule |
|---|---|---|---|---|
| Proof-of-Work | Accumulated mining work | Total work / hashpower | Not applicable | Longest-chain (most-work) |
| Proof-of-Stake | Validator attestations/votes | Stake-weighted votes | Ignore/penalize equivocation | LMD‑GHOST (latest-message) |

Once a system moves from proof of work to proof of stake, the natural source of branch weight changes. Instead of counting computational work, the protocol counts validator support. The fork choice rule still has the same job (pick the preferred path through a block tree) but the evidence is now stake-weighted voting messages.

Ethereum’s beacon chain provides the clearest canonical example. Its fork choice uses LMD-GHOST, where “LMD” means Latest Message Driven. The GHOST part gives the tree-walking structure: at each fork, choose the child with the highest weight. The LMD part explains where that weight comes from: for each validator, count only its latest relevant message, rather than every historical vote it ever sent.

That “latest message” idea solves an important problem. If every past vote continued to count, old preferences would accumulate even after validators had moved on, and the weight on branches would become distorted. By using only the latest message from each validator, the protocol tries to reflect current support rather than historical noise.
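The "latest message" bookkeeping can be sketched as a per-validator table that only moves forward in time. This is a simplified illustration rather than the specification's code: the `LatestMessage` record and `record_vote` helper are hypothetical names, and the rule assumed here is that a vote replaces the stored one only when it carries a strictly newer epoch.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class LatestMessage:
    epoch: int
    root: str  # block the validator voted for

def record_vote(latest: Dict[str, LatestMessage],
                validator: str, epoch: int, root: str) -> None:
    """Keep one vote per validator: the one with the newest epoch seen so far."""
    current = latest.get(validator)
    if current is None or epoch > current.epoch:
        latest[validator] = LatestMessage(epoch, root)

latest: Dict[str, LatestMessage] = {}
record_vote(latest, "v1", epoch=1, root="A")
record_vote(latest, "v1", epoch=2, root="B")   # newer vote replaces the old one
record_vote(latest, "v1", epoch=1, root="C")   # stale vote arriving late is ignored
print(latest["v1"])
```

However many messages a validator has sent, it contributes weight in at most one place in the tree.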

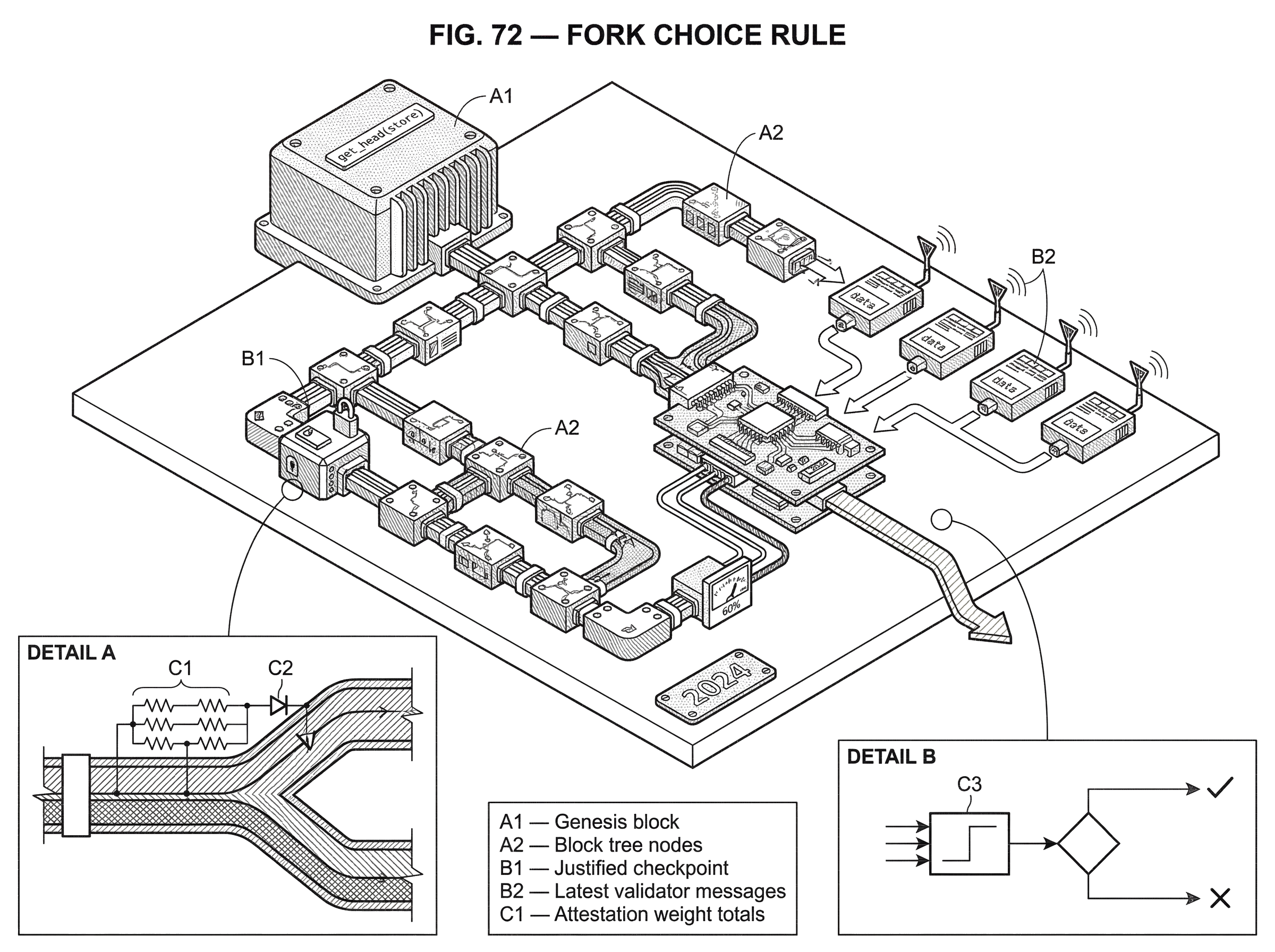

In the Phase 0 beacon-chain specification, the canonical head is defined by get_head(store). The algorithm first filters the block tree so that only viable branches remain: specifically, branches whose justified and finalized information is compatible with the node’s fork-choice store. Then it starts from the current justified checkpoint and repeatedly chooses the child with maximal weight, breaking ties lexicographically by block root. That is the rule in its simplest form: filter, then walk to the heaviest child, then repeat.

How does LMD‑GHOST choose a head? A step‑by‑step example

Imagine a justified checkpoint J. From J, two children appear: branch X and branch Y. Under X, there are several descendant blocks; under Y, there are also several descendant blocks. Now suppose validators have sent attestations indicating which block they currently support.

The node does not simply count how many attestations mention X or Y directly. Instead, for each active, unslashed validator, it looks at that validator’s latest message. If that latest vote points to a descendant of X, then the validator’s effective balance contributes weight to X at the fork. If it points to a descendant of Y, its weight contributes to Y. A vote for a deeper descendant implicitly supports the ancestors on that path, because if a validator prefers a leaf under X, it also prefers the branch choice that leads into X.

Suppose the latest messages of validators representing 60% of effective balance point somewhere under X, while 40% point under Y. The fork choice walk chooses X. Now the node moves one level down and repeats the same process among X’s children. At this next fork, perhaps the support splits differently: say 35% under one child and 25% under another. The rule chooses the heavier of those children and continues until it reaches a block with no children.

That is the heart of the mechanism. The head is not chosen by comparing every leaf directly against every other leaf. It is chosen by a sequence of local branch decisions, each informed by current validator support projected onto the tree.

Two details from the Ethereum specification matter here. First, branches are filtered before selection so that nodes do not choose histories incompatible with known justified or finalized checkpoints. Second, validator equivocations are treated specially: an equivocating validator’s vote should not simply keep contributing weight as if nothing happened. The spec’s attestation scoring counts active, unslashed validators whose latest messages support the block through ancestry and excludes those marked equivocating.
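The walk-through above can be reproduced with a small sketch. It uses the same numbers (60% under X, 40% under Y, then 35% and 25% among X's children) and adds an optional set of equivocating validators whose votes are ignored. All names, balances, and the tree are hypothetical, checkpoint filtering is left out, and the weight function is a simplified stand-in for the specification's scoring.

```python
from typing import Dict, FrozenSet, Optional

PARENTS: Dict[str, Optional[str]] = {
    "J": None, "X": "J", "Y": "J",
    "X1": "X", "X2": "X", "X1a": "X1", "Y1": "Y",
}
BALANCES = {"v1": 35, "v2": 25, "v3": 40}          # effective balances
LATEST = {"v1": "X1a", "v2": "X2", "v3": "Y1"}     # latest vote per validator

def supports(vote_root: str, block: str) -> bool:
    """A vote supports `block` if it names that block or one of its descendants."""
    node: Optional[str] = vote_root
    while node is not None:
        if node == block:
            return True
        node = PARENTS[node]
    return False

def weight(block: str, latest: Dict[str, str], balances: Dict[str, int],
           equivocating: FrozenSet[str] = frozenset()) -> int:
    return sum(balances[v] for v, root in latest.items()
               if v not in equivocating and supports(root, block))

def lmd_ghost_head(start: str, latest: Dict[str, str], balances: Dict[str, int],
                   equivocating: FrozenSet[str] = frozenset()) -> str:
    head = start
    while True:
        children = [b for b, p in PARENTS.items() if p == head]
        if not children:
            return head  # reached a leaf
        head = max(children,
                   key=lambda c: (weight(c, latest, balances, equivocating), c))

print(lmd_ghost_head("J", LATEST, BALANCES))

# A fourth validator backing Y would flip the head, unless it is marked equivocating.
balances2 = {**BALANCES, "v4": 30}
latest2 = {**LATEST, "v4": "Y1"}
print(lmd_ghost_head("J", latest2, balances2))
print(lmd_ghost_head("J", latest2, balances2, frozenset({"v4"})))
```

Note that v1's vote for the deeper block X1a counts toward both X and X1, which is the "support through ancestry" described above.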

How is fork choice different from finality?

| Concept | Purpose | Timescale | Guarantee | Effect on reorgs |
|---|---|---|---|---|
| Fork choice | Select preferred head | Continuous / immediate | Best current guess | Can cause short reorgs |
| Finality gadget | Lock history permanently | Checkpointed / slower | Strong irreversibility | Prevents deep reorgs |

A common confusion is to treat fork choice as if it directly tells you what is final. Usually it does not. Fork choice tells you the current preferred head. Finality tells you something stronger: which part of history should no longer be reverted except under extreme fault or protocol failure conditions.

Ethereum makes this separation explicit. The research paper on Gasper describes the protocol as the combination of LMD-GHOST for fork choice and Casper FFG as a finality gadget. The fork choice rule decides which head to build on right now. Casper FFG justifies and finalizes checkpoints based on validator votes. The two mechanisms interact, but they solve different problems.

This separation is useful because “best current guess” and “economically or cryptographically locked-in history” are not the same notion. If a node asked only for finality before deciding what to build on, it would often have nothing recent to extend. If it asked only for a head and had no finality mechanism, users would have weaker guarantees about deep history.

The same conceptual separation appears in other systems, though with different machinery. Tendermint, for example, uses rounds of proposals, prevotes, and precommits, and a block is committed when more than two-thirds of voting power precommits it. There the canonical choice is tightly bound to explicit BFT voting and locking rules rather than a continuously evaluated heaviest-tree walk. Snowman in Avalanche is different again: preference emerges through repeated subsampled queries and transitive voting, with probabilistic finality rather than a separate FFG-style finality gadget. Different architecture, same core need: a network must convert local observations into a shared preference over competing histories.
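The "more than two-thirds" threshold mentioned for Tendermint is easy to get subtly wrong with floating-point division, so a small sketch may help. The function name is hypothetical and this is only the arithmetic of the threshold, not Tendermint's actual commit logic.

```python
def has_commit_quorum(precommit_power: int, total_power: int) -> bool:
    """True when strictly more than two-thirds of voting power has precommitted."""
    # Integer cross-multiplication avoids rounding issues with 2/3.
    return 3 * precommit_power > 2 * total_power

print(has_commit_quorum(67, 100), has_commit_quorum(66, 100))
```

Exactly two-thirds is not enough: with three equally weighted validators, two precommits fall short and all three are required.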

Which implementation details (tie-breaking, filtering) affect fork choice security?

At first glance, tie-breaking sounds like a boring edge case. It is not. If two children have equal weight and different nodes break ties differently, the network can remain split longer than necessary. This is why deterministic tie-breaking is important. Ethereum’s specification breaks equal-weight ties lexicographically by root. Recent theoretical work also shows that deterministic tie-breaking can enlarge the safe operating region relative to adversarial tie-breaking in GHOST-like protocols.
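A short sketch shows why the tie-break has to be deterministic. Two nodes see the same two equal-weight children in different arrival orders. The roots and weights are made up, and `pick_first_seen` is a deliberately naive strawman rather than any client's behavior.

```python
from typing import Dict, List

# Two sibling blocks with identical weight, identified by (shortened) roots.
ROOT_1 = bytes.fromhex("1f")
ROOT_2 = bytes.fromhex("a3")
WEIGHTS: Dict[bytes, int] = {ROOT_1: 50, ROOT_2: 50}

def pick_first_seen(children_in_arrival_order: List[bytes]) -> bytes:
    # max() returns the first maximal element, so arrival order leaks into the result.
    return max(children_in_arrival_order, key=lambda c: WEIGHTS[c])

def pick_deterministic(children: List[bytes]) -> bytes:
    # Compare (weight, root): equal weights fall back to the lexicographically larger root.
    return max(children, key=lambda c: (WEIGHTS[c], c))

alice_view = [ROOT_1, ROOT_2]   # Alice heard about ROOT_1 first
bob_view = [ROOT_2, ROOT_1]     # Bob heard about ROOT_2 first

print(pick_first_seen(alice_view) == pick_first_seen(bob_view))
print(pick_deterministic(alice_view) == pick_deterministic(bob_view))
```

With the naive rule the two nodes disagree on identical information; with the root-based rule they agree regardless of network timing.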

Filtering is just as important. In Ethereum, get_filtered_block_tree(store) excludes branches whose justified or finalized data do not agree with the store’s view. This means the fork choice rule does not roam freely over every known branch; it roams over branches that are still considered viable under the protocol’s checkpoint logic. That constraint is what prevents ordinary head selection from undoing finalized history.
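As a rough illustration of filtering, the sketch below keeps only blocks that descend from a finalized block before any head selection runs. This is a simplified stand-in: the specification's check compares justified and finalized checkpoint data per branch, whereas this version assumes a single hypothetical `finalized_root` and a plain ancestry test.

```python
from typing import Dict, Optional

# Hypothetical tree: F is finalized; the Z branch conflicts with it.
PARENTS: Dict[str, Optional[str]] = {
    "G": None, "F": "G", "A": "F", "A1": "A",
    "Z": "G", "Z1": "Z", "Z2": "Z1",
}

def descends_from(block: str, ancestor: str,
                  parents: Dict[str, Optional[str]]) -> bool:
    node: Optional[str] = block
    while node is not None:
        if node == ancestor:
            return True
        node = parents[node]
    return False

def viable_blocks(parents: Dict[str, Optional[str]],
                  finalized_root: str) -> Dict[str, Optional[str]]:
    """Drop every block that does not build on the finalized block."""
    return {b: p for b, p in parents.items()
            if descends_from(b, finalized_root, parents)}

viable = viable_blocks(PARENTS, "F")
print(sorted(viable))
```

Because the Z branch never enters the filtered tree, no amount of weight on it can pull the head away from finalized history.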

There are also timing-related adjustments. The Ethereum spec includes a proposer boost mechanism: under certain conditions, the first block that arrives on time for a slot may receive a temporary score boost, provided it comes from the slot’s canonical proposer. The purpose is not to rewrite the protocol’s core logic, but to reduce unnecessary short-range reorgs and give timely proposal behavior an edge. Mechanically, the boost adds proposer score when the candidate block is an ancestor of store.proposer_boost_root.
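The boost mechanics can be sketched as an extra term added to a block's score. The attestation weights and the boost size below are invented for illustration (the real boost amount is defined by the specification), and for simplicity a block counts as an ancestor of itself.

```python
from typing import Dict, Optional

PARENTS: Dict[str, Optional[str]] = {"P": None, "A": "P", "B": "P"}
# Hypothetical attestation weight already attributed to each block's subtree.
ATTESTATION_WEIGHT = {"P": 10, "A": 10, "B": 0}
BOOST = 40  # hypothetical boost size, not a spec constant

def is_ancestor_or_self(block: str, descendant: str) -> bool:
    node: Optional[str] = descendant
    while node is not None:
        if node == block:
            return True
        node = PARENTS[node]
    return False

def score(block: str, proposer_boost_root: Optional[str]) -> int:
    total = ATTESTATION_WEIGHT[block]
    # The boost applies to the boosted block and to every block it builds on.
    if proposer_boost_root is not None and is_ancestor_or_self(block, proposer_boost_root):
        total += BOOST
    return total

def best_child(parent: str, proposer_boost_root: Optional[str]) -> str:
    children = [b for b, p in PARENTS.items() if p == parent]
    return max(children, key=lambda c: (score(c, proposer_boost_root), c))

print(best_child("P", None))   # no boost: the block with existing votes wins
print(best_child("P", "B"))    # B arrived on time this slot and is boosted
```

Without the boost, a fresh block starts at zero weight and can lose to a competing sibling that already has votes; the temporary boost gives a timely proposal enough weight to be built on.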

These details illustrate a broader lesson: fork choice is not just a headline algorithm like “longest chain” or “LMD-GHOST.” Real deployments need event handling, timing assumptions, checkpoint compatibility checks, equivocation handling, and deterministic fallback behavior. The protocol’s safety and liveness often depend on these so-called details.

Why is fork choice stateful and how do clients implement it efficiently?

| Approach | Read cost | Write cost | Memory | Best for |
|---|---|---|---|---|
| Spec (naive) | Heavy head recompute | Low | Low | Clarity and correctness |
| Cached spec | Faster ancestor lookups | Moderate (cache upkeep) | Moderate | Small optimizations |
| Protoarray (array) | O(1) head lookup | Higher (propagate updates) | Higher | Production clients |
| Optimized (heuristics) | Fast with fast-paths | Batched / amortized | Moderate | High-throughput nodes |

From a client’s perspective, fork choice is not a single formula evaluated in a vacuum. It is a state machine updated over time as new information arrives. Ethereum’s specification is explicit about this: a fork-choice Store is updated by handlers such as on_tick, on_block, on_attestation, and on_attester_slashing. Invalid handler calls must not mutate the store.
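The "invalid calls must not mutate the store" property usually comes from a validate-then-write structure. The sketch below borrows the handler names on_block and on_attestation, but the `Store` fields and the checks are heavily simplified assumptions, not the specification's definitions.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

@dataclass
class Store:
    # block root -> parent root (None for the anchor block)
    blocks: Dict[str, Optional[str]] = field(default_factory=lambda: {"G": None})
    # validator id -> (epoch, block root) of its latest accepted vote
    latest_messages: Dict[str, Tuple[int, str]] = field(default_factory=dict)

def on_block(store: Store, root: str, parent_root: str) -> None:
    # Validate first: every check runs before any field is written.
    assert parent_root in store.blocks, "unknown parent"
    store.blocks[root] = parent_root

def on_attestation(store: Store, validator: str, epoch: int, root: str) -> None:
    assert root in store.blocks, "vote for unknown block"
    current = store.latest_messages.get(validator)
    if current is None or epoch > current[0]:
        store.latest_messages[validator] = (epoch, root)

store = Store()
on_block(store, "A", "G")
on_attestation(store, "v1", 1, "A")

try:
    on_block(store, "C", "missing-parent")   # rejected: parent was never seen
except AssertionError:
    pass

print(store.blocks, store.latest_messages)
```

Because the rejected call fails before any assignment, the store afterwards is exactly what the two valid calls produced.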

That design reflects the real problem nodes face. A node learns about blocks, attestations, slashing evidence, and time progression incrementally. Fork choice therefore depends on maintained state: known blocks and their ancestors, block states, latest validator messages, justified and finalized checkpoints, equivocating indices, and various caches or bookkeeping structures.

The straightforward specification is intentionally readable, not optimized. In a naive implementation of LMD-GHOST, a head lookup may require repeatedly checking ancestry relations and summing vote weights across many validators. That becomes costly at scale. Production clients therefore use optimized data structures.

A useful example is the proto_array approach described in implementation documents and used in production clients such as Prysm. The broad idea is to maintain an array-based stateful DAG of block nodes, along with validators’ latest votes and balances, so that weight changes can be propagated efficiently and head computation can be made much cheaper than recomputing everything from scratch. This is a classic systems tradeoff: do more bookkeeping when updates arrive so that queries for the head are fast.
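The bookkeeping tradeoff can be illustrated with a much-reduced sketch in the spirit of that approach. It is not Prysm's or any client's actual data structure: the class and method names are hypothetical, and pruning, checkpoint viability, and proposer boost are all omitted. Blocks live in append-only lists, vote changes arrive as weight deltas, and a single backward pass pushes deltas toward the root while refreshing each node's best descendant.

```python
from typing import Dict, List, Optional

class ProtoArraySketch:
    """Append-only block list; a parent always has a lower index than its children."""

    def __init__(self) -> None:
        self.roots: List[str] = []
        self.parent: List[Optional[int]] = []
        self.weight: List[int] = []
        self.best_descendant: List[int] = []   # refreshed by apply_deltas
        self.index: Dict[str, int] = {}

    def add_block(self, root: str, parent_root: Optional[str]) -> None:
        self.index[root] = len(self.roots)
        self.roots.append(root)
        self.parent.append(None if parent_root is None else self.index[parent_root])
        self.weight.append(0)
        self.best_descendant.append(len(self.roots) - 1)

    def apply_deltas(self, deltas: Dict[str, int]) -> None:
        n = len(self.roots)
        pending = [0] * n
        for root, change in deltas.items():
            pending[self.index[root]] += change
        best_child: List[Optional[int]] = [None] * n
        # Backward pass: children are visited before their parents, so a node's
        # weight and best child are final by the time the node itself is visited.
        for i in range(n - 1, -1, -1):
            self.weight[i] += pending[i]
            child = best_child[i]
            self.best_descendant[i] = i if child is None else self.best_descendant[child]
            p = self.parent[i]
            if p is None:
                continue
            pending[p] += pending[i]
            rival = best_child[p]
            if rival is None or (self.weight[i], self.roots[i]) > (self.weight[rival], self.roots[rival]):
                best_child[p] = i

    def head(self, start_root: str) -> str:
        # Constant-time lookup: the expensive work already happened on update.
        return self.roots[self.best_descendant[self.index[start_root]]]

pa = ProtoArraySketch()
for root, parent in [("G", None), ("A", "G"), ("B", "G"), ("A1", "A")]:
    pa.add_block(root, parent)

pa.apply_deltas({"A1": 10, "B": 20})    # v1 (10) votes A1, v2 (20) votes B
first_head = pa.head("G")
pa.apply_deltas({"B": -20, "A1": 20})   # v2 moves its vote from B to A1
second_head = pa.head("G")
print(first_head, second_head)
```

A validator changing its vote becomes a negative delta on the old block and a positive delta on the new one, so the cost of an update depends on the number of blocks tracked rather than on re-summing every validator's balance.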

Those engineering choices are not separate from the theory. If a client cannot process fork-choice data quickly enough during non-finality or heavy forking, it may fail operationally even if the protocol is sound on paper. Incident reports from chain splits underline this point: resource usage, checkpoint-state storage, peer quality, and fork-choice recovery tools can become decisive during stress.

What risks and failure modes affect fork choice?

Fork choice rules depend on assumptions. If the assumptions shift, the behavior can degrade.

The first assumption is that honest participants eventually see similar information. If the network is partitioned long enough, different groups of honest nodes may maintain different preferred heads. A fork choice rule cannot conjure synchrony out of an asynchronous network; it can only specify what to prefer given local knowledge.

The second assumption is that the weight source is meaningful and constrained. In proof of work, this means the adversary does not control too much hashpower and work is expensive. In proof of stake, it means votes are authenticated, equivocation is penalized or filtered, and the protocol is designed so that validators cannot cheaply support incompatible branches without consequence. The 2024 GHOST analysis makes this point sharply: unconstrained equivocation breaks security for GHOST-style rules unless mitigated at the protocol level.

The third assumption is that implementation choices preserve the intended rule. If tie-breaking is inconsistent, pruning is unsafe, or a client optimistically imports and persists problematic blocks into fork-choice state, recovery can become difficult. The Holesky rescue retrospective from the Lodestar team is instructive here. It describes how importing a problematic block into fork choice interfered with pivoting to the correct chain, and how checkpoint states, trusted peers, and emergency blacklisting features were used as recovery tools. None of that means the idea of fork choice is flawed; it means operational consensus is the combination of protocol and implementation.

Even success has tradeoffs. A rule that is more responsive to fresh votes may improve liveness and reduce some stale-history effects, but it may also increase sensitivity to timing, message delays, and strategic behavior. A rule that heavily constrains reorgs through finality checkpoints improves safety for older history, but may require extra machinery and more complex state management.

What is the core purpose of a fork choice rule?

At a deeper level, a fork choice rule is a way of translating distributed evidence into a single action recommendation: which block to treat as head and extend next. The evidence may be work, validator votes, quorum certificates, or repeated sampled preferences. The action recommendation must be locally computable, deterministic enough to support convergence, and robust enough that honest nodes do not drift apart under ordinary conditions.

That framing also explains why fork choice sits at the center of so many neighboring concepts. Attestations matter because they are evidence consumed by the rule. Finality matters because it constrains how far fork choice is allowed to revise history. Reorganizations are what users observe when the preferred path changes. Client performance matters because the rule must be executed continuously on live data, not admired as a theorem.

So when someone asks, “What chain is the real one?” the technically correct answer is often: the one selected by the protocol’s fork choice rule, subject to whatever stronger finality conditions the protocol also defines. There is no chain independent of that rule. The rule is part of what makes the chain canonical in the first place.

Conclusion

A fork choice rule is the procedure a blockchain node uses to select the canonical branch from competing valid histories. Its purpose is simple but fundamental: turn a tree of possible blocks into one path the network can build on.

The memorable version is this: valid blocks can conflict, so consensus needs a preference rule. Longest-chain rules, GHOST, LMD-GHOST, BFT commit rules, and subsampled preference protocols are different ways of expressing that rule under different assumptions. If you remember that fork choice answers “what should I extend now?” while finality answers “what should no longer change?”, you will have the core idea that makes the rest of consensus design much easier to understand.

What should you understand before using this part of crypto infrastructure?

Before you trade, deposit, or move funds on a chain, understand how that chain picks its canonical history and how many confirmations or checkpoints the protocol uses to avoid risky reorgs. Cube Exchange keeps your trades and transfers on-chain; follow the checks below and then use Cube’s deposit and trading flows to execute once the network conditions you require are met.

- Look up the network’s finality model and a safe confirmation threshold: check the chain’s docs or a block explorer for whether it uses probabilistic confirmations, checkpoint finality, or a finalized-epoch mechanism.

- Confirm the asset and network in Cube: select the exact token contract and network (e.g., Ethereum mainnet vs. a layer-2) before initiating a deposit or trade.

- Fund your Cube account via the recommended path (fiat on-ramp or a direct on-chain transfer) and wait for the chain-specific confirmation/checkpoint threshold to be reached.

- Place your trade or transfer on Cube. Use a limit order for large fills or when you need price control; if you must execute immediately, use a market order and consider splitting large trades to reduce slippage.

Frequently Asked Questions

Fork choice selects the node’s current preferred head to build on given its local view, while finality is a stronger guarantee that parts of history should not be reverted except under extreme failure; Ethereum deliberately separates these roles (LMD‑GHOST for fork choice, Casper FFG as the finality gadget).

Deterministic tie‑breaking prevents prolonged splits when children have equal weight because different nodes won’t break ties inconsistently; the Ethereum spec uses lexicographic tie‑breaking by block root and recent analyses show deterministic tie‑breaking materially improves GHOST‑style security versus adversarial tie‑breaking.

No. Fork choice cannot force convergence during a long network partition because it only acts on each node’s local information; if honest nodes do not eventually see similar evidence (blocks or votes), they can compute different preferred heads and remain split until connectivity is restored or other mechanisms intervene.

LMD‑GHOST computes weight from validators’ latest relevant messages: for each active, unslashed validator, only its most recent attestation is counted and that balance is attributed to the child branch containing the attested descendant, then the algorithm greedily walks to the heaviest child until it reaches a leaf.

GHOST (including LMD‑GHOST variants) can better use honest work or votes that fall in stale branches by counting subtree weight rather than only trunk length, but it is not strictly superior in all models: recent tight analyses show GHOST’s consistency region can be worse than Nakamoto longest‑chain under some adversarial assumptions and that tie‑breaking and equivocation constraints matter a great deal.

Proposer boost gives a temporary score increment to the timely block produced by the canonical proposer, reducing short‑range reorgs and rewarding timely proposal behavior; mechanically, the boost applies when the candidate block is an ancestor of store.proposer_boost_root, and the operational aim is fewer unnecessary flips of the head.

Clients keep state and do bookkeeping so head lookup is fast: instead of recomputing weights from scratch, production implementations maintain data structures (for example proto_array) that track block nodes, validators’ latest votes, and propagated weight deltas, so updates are done incrementally and head queries are cheap.

Unconstrained equivocation breaks security for GHOST‑style rules because validators can cheaply support incompatible branches, so practical PoS designs must mitigate or penalize equivocation (e.g., slashing, filtering equivocators) or GHOST’s guarantees do not hold; the formal analyses explicitly call this out as a requirement.

Operational recovery can be hard: importing a problematic block into fork‑choice state has in practice hindered pivoting to the correct chain, and teams have relied on checkpoint states, trusted peers, emergency blacklisting, and manual intervention in past incidents (e.g., the Holesky retrospective); these are emergency tools and raise tradeoffs about centralization and abuse risk.

The Phase‑0 spec and related documents do not mandate concrete pruning/retention policies for store.blocks and block_states; pruning and storage bounds are left to implementations and are listed as open questions in the spec notes.
