What is Avalanche?

Learn what Avalanche is, how its Snow and Snowman consensus protocols work, why it achieves fast probabilistic finality, and its key tradeoffs.

Introduction

For the token explainer, see AVAX.

Avalanche is a blockchain network built around an unusual idea about consensus: instead of having every validator talk to everyone, or electing a leader to drive agreement, each validator repeatedly asks a small random sample of other validators what they currently prefer. Out of that very local behavior, the whole network can converge quickly on the same result.

That is the core reason Avalanche exists. Traditional blockchain designs tend to force an uncomfortable choice. You can have open participation and simple mechanics, as in proof-of-work systems, but then finality is slow and always somewhat tentative. Or you can have much stronger and cleaner finality, as in classical Byzantine fault-tolerant (BFT) systems, but often with coordination patterns that become expensive or bottlenecked as the validator set grows. Avalanche was designed to sit in the space between those poles: fast finality, low communication overhead, and no single leader that everyone depends on.

That promise is only interesting if the mechanism actually works. So the right way to understand Avalanche is not to start with token tickers, or even with its chain architecture. The right place to start is the consensus idea underneath it: a family of protocols called Snow, then Snowball, and finally Avalanche and Snowman as concrete ways to order real transactions and blocks.

Once that clicks, the rest of the network makes more sense: why Avalanche talks about probabilistic finality rather than deterministic finality, why its validators sample a small subset of peers rather than broadcasting every vote globally, why some parts of the network use a DAG while others use a linear chain, and why Avalanche can feel fast in normal operation while still depending heavily on correct implementation and parameter choices.

What consensus trade-offs does Avalanche try to resolve?

Consensus in a blockchain is the problem of making many machines, spread across the internet and some of them faulty or malicious, agree on which transactions happened and in what order. That sounds simple until you add the constraints that actually matter. The network is asynchronous enough that messages arrive late or out of order. Some participants may lie. And the system must keep working without handing too much power to a small coordinating committee.

There are two old patterns here.

In Nakamoto-style systems, such as Bitcoin, the network accepts that multiple versions of history may temporarily compete, and it uses economic work and chain growth to make one branch increasingly likely to remain canonical. The advantage is openness and robustness. The cost is latency: users wait for more confirmations because finality is not immediate. What you get is not a clean moment of decision but a decreasing probability of reversal.

In classical BFT systems, validators exchange structured votes to finalize decisions directly. That can give much faster and clearer finality. But the communication pattern often becomes heavy as the validator set grows, and many such systems rely on leaders or rounds coordinated around specific proposers. That creates practical bottlenecks and points of sensitivity.

Avalanche asks a different question: what if agreement does not need everyone to hear from everyone else on each decision? What if each validator only needs a small, random glimpse of the network's current preference, repeated enough times that a weak majority becomes a self-reinforcing one?

That is the central move. Avalanche replaces global coordination with repeated random subsampling. The design goal is to keep the per-node work roughly constant as the validator set grows, while still pushing the system toward a common result quickly.
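
As a rough back-of-the-envelope illustration of that scaling goal, the sketch below counts outbound queries per node per round under two simplified models. The function names are hypothetical, the sample size of 20 is chosen only for illustration, and the count ignores responses, retries, and the number of rounds a decision needs.

```python
def all_to_all_messages_per_node(n: int) -> int:
    """Each node contacts every other node once per round."""
    return n - 1


def sampled_messages_per_node(n: int, k: int = 20) -> int:
    """Each node queries at most k peers per round, regardless of n."""
    return min(k, n - 1)


# Per-node cost grows with n in the first model and stays flat in the second.
for n in (100, 1_000, 10_000):
    print(n, all_to_all_messages_per_node(n), sampled_messages_per_node(n))
```

The point of the comparison is only the shape of the growth: one column scales with the validator count, the other is capped by the sample size.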

How can repeated small samples produce network-wide agreement?

Imagine a very large crowd trying to choose between red and blue, but nobody can poll the entire crowd. Instead, each person repeatedly asks 20 randomly chosen people what they currently prefer. If the sample comes back strongly blue, the person leans blue. If that happens again and again, the person's confidence in blue rises. Meanwhile everyone else is doing the same thing.

At first this may seem too weak to matter. A sample of 20 feels tiny compared with a network of thousands. But the point is not that a single sample proves the truth. The point is that once one side gets even a modest edge, repeated sampling tends to amplify that edge. A validator that sees a strong blue sample becomes more likely to answer blue in future queries from others. That makes future blue samples slightly more likely across the network. Those samples then push still more validators toward blue.

This feedback loop is why the Snow family is described as metastable. The system can hover in uncertainty when preferences are mixed, but once it drifts far enough toward one side, the drift reinforces itself and becomes increasingly hard to reverse. The formal analysis in the original Snow paper models this behavior and shows an irreversibility effect: once the network has moved toward a majority, the probability of snapping back to the minority falls exponentially under the stated assumptions.
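
The crowd analogy can be simulated directly. The sketch below is a toy model, not the Snow protocol itself: every name and default (500 participants, a 60/40 starting split, samples of 10 with a threshold of 7, synchronous rounds, no faulty participants) is an assumption chosen for illustration.

```python
import random


def simulate_sampling(n=500, blue_share=0.6, k=10, alpha=7, rounds=30, seed=1):
    """Return the blue share after each round of repeated random sampling."""
    rng = random.Random(seed)
    n_blue = round(n * blue_share)
    prefs = ["blue"] * n_blue + ["red"] * (n - n_blue)
    history = [prefs.count("blue") / n]

    for _ in range(rounds):
        new_prefs = []
        for current in prefs:
            # Sample k participants from the whole crowd (may include self).
            sample = rng.sample(prefs, k)
            blue_votes = sample.count("blue")
            if blue_votes >= alpha:
                new_prefs.append("blue")
            elif k - blue_votes >= alpha:
                new_prefs.append("red")
            else:
                # No color reached the threshold: keep the current preference.
                new_prefs.append(current)
        prefs = new_prefs
        history.append(prefs.count("blue") / n)
    return history


history = simulate_sampling()
print([round(share, 2) for share in history[:8]])
```

With these defaults the blue share typically climbs from 0.6 toward 1.0 within a handful of rounds, which is the amplification effect described above. An honest-only toy like this says nothing about behavior under adversarial participants.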

That is the compression point for Avalanche. It is not “small committees are cheaper,” though they are. It is that small random samples, repeated under a reinforcement rule, can create network-wide convergence without a leader and without all-to-all voting.

The price of this approach is equally important: the guarantee is probabilistic. Avalanche does not claim absolute safety in the same way a deterministic protocol does. Instead, it offers ε-safety: by choosing parameters appropriately, the probability of conflicting decisions can be made negligibly small, but not literally zero.

How do Snow and Snowball make a node decide in Avalanche?

| Parameter | Reference value | What it controls | Effect of larger value |
|---|---|---|---|
| k | 20 | Sample size per query | More accuracy, more bandwidth |
| α | 14 | Quorum inside sample | Stricter quorum, fewer false positives |
| β | 20 | Consecutive successes needed | Longer streaks, stronger finality |

The Snow family has a few layers, and the names can be confusing if introduced too quickly. The useful distinction is this.

Snow gives the basic repeated-sampling idea. A node queries a small random subset of validators and observes which option has enough support.

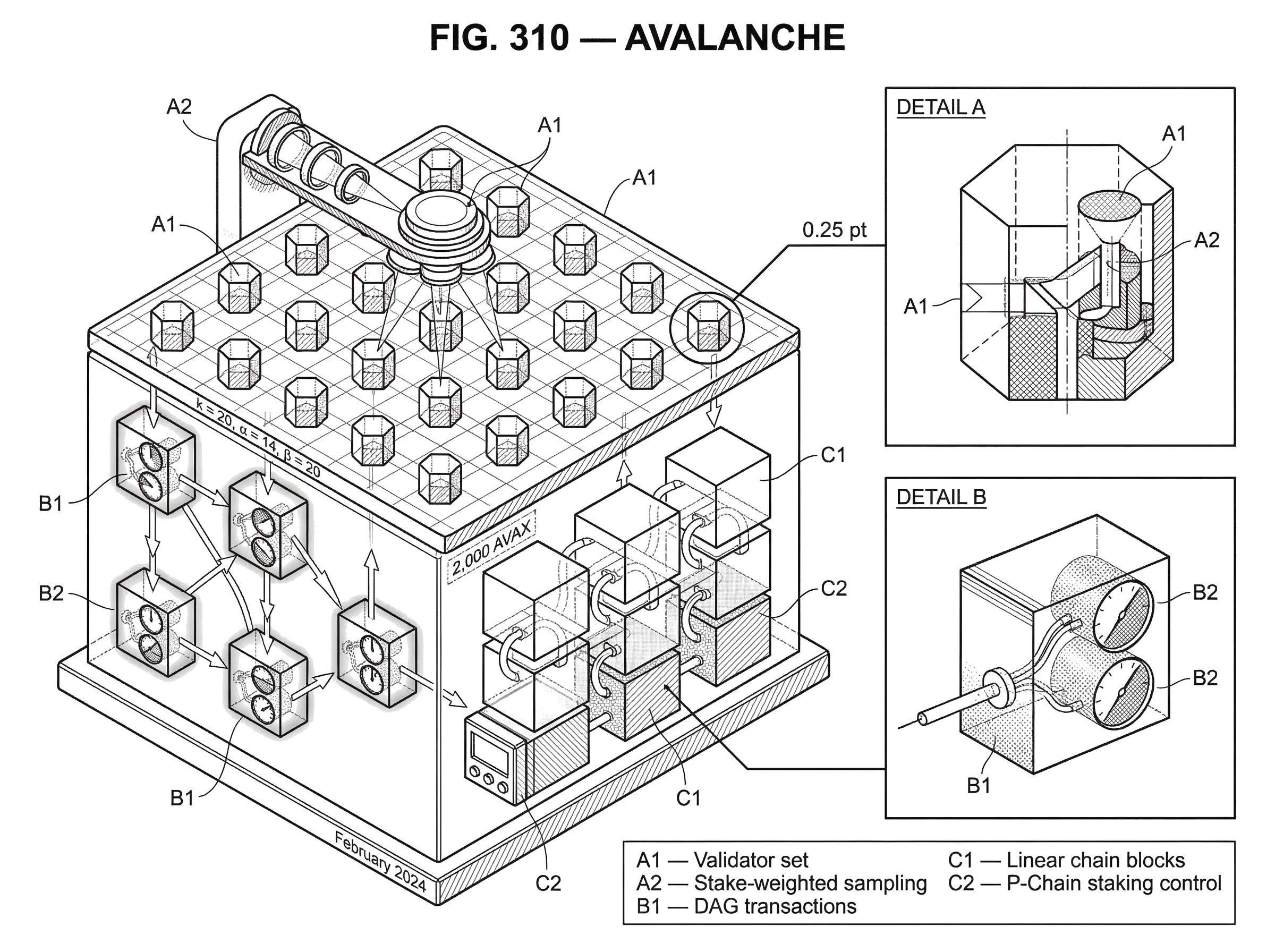

Snowball adds memory. A node does not just react to the latest sample in isolation; it accumulates confidence in a choice over time. In Avalanche's developer documentation, the key parameters are usually written as k, α, and β. Here k is the sample size, α is the quorum threshold inside that sample, and β is the number of consecutive successful observations needed before a node treats a choice as decided. Avalanche documentation gives reference values of k = 20, α = 14, and β = 20.

The mechanism works like this in ordinary language. Suppose a validator is trying to choose between two conflicting transactions. It asks k sampled validators which one they currently prefer. If at least α of them prefer transaction A, the validator records a successful vote for A. If it keeps seeing the same outcome enough times in a row, reaching the threshold β, it accepts A and rejects the conflicting alternative.
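
The decision rule in the paragraph above can be sketched as a small loop. This is a simplified illustration under stated assumptions, not the production client's logic: `decide_by_streak` and the `query` callback are hypothetical names, the function tracks only the consecutive-success streak, and `max_rounds` is an artificial cap so the loop always terminates.

```python
from collections import Counter


def decide_by_streak(initial, query, k=20, alpha=14, beta=20, max_rounds=10_000):
    """Accept a choice once it wins beta consecutive alpha-of-k samples.

    `query(k)` is assumed to return a list of k preferences from sampled peers.
    Returns the accepted choice, or None if no decision is reached.
    """
    preference = initial
    streak = 0
    for _ in range(max_rounds):
        votes = Counter(query(k))
        winner, count = votes.most_common(1)[0]
        if count >= alpha:
            if winner == preference:
                streak += 1
            else:
                # A quorum for a different choice switches preference
                # and starts a new streak.
                preference, streak = winner, 1
            if streak >= beta:
                return preference
        else:
            # An inconclusive sample breaks the streak.
            streak = 0
    return None


# Usage: a stubbed network where 15 of every 20 sampled peers prefer "A".
print(decide_by_streak("B", lambda k: ["A"] * 15 + ["B"] * (k - 15)))
```

Because α is more than half of k, at most one choice can reach the quorum in any single sample, so the winner is unambiguous whenever a sample succeeds.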

What matters here is not just majority rule but streaks. A single favorable sample could be noise. Twenty consecutive favorable samples are much less likely to be noise, especially once the network has already begun to coordinate on the same preference. The consecutive-count rule turns repeated local observations into strong confidence.
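
To put rough numbers on why streaks matter, the sketch below computes how likely one sample is to reach the quorum, and how likely β such samples in a row are, if each sampled peer independently prefers a given choice with probability p. The independence assumption and the function names are illustrative only; the real process is stake-weighted and the network's preferences shift between rounds.

```python
from math import comb


def quorum_probability(p, k=20, alpha=14):
    """P(at least alpha of k independent peers prefer the choice)."""
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(alpha, k + 1))


def streak_probability(p, k=20, alpha=14, beta=20):
    """P(beta consecutive successful samples), treating samples as independent."""
    return quorum_probability(p, k, alpha) ** beta


for p in (0.5, 0.7, 0.9):
    print(p, quorum_probability(p), streak_probability(p))
```

At an even split (p = 0.5) a single sample reaches 14 of 20 only about 5.8% of the time, and twenty such samples in a row are far less likely than 1e-20 under this model. As support grows, both probabilities rise sharply, which matches the intuition that streaks separate noise from real convergence.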

In production Avalanche, sampling is not uniform across all nodes. The builder documentation says it is stake-weighted: validators with more stake are sampled more often. That means consensus influence comes from bonded stake, not simply from the number of machine identities. This is how Avalanche addresses Sybil resistance at the network level. The original Snow paper explicitly leaves Sybil control outside the core consensus mechanism and assumes some external mechanism provides it; Avalanche's staking system fills that role in the deployed network.
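
A minimal sketch of stake-weighted sampling follows, using Python's standard `random.choices`. The validator names and stake figures are made up, and the sketch samples with replacement for simplicity; the production sampler may well differ in that and other details.

```python
import random
from collections import Counter


def sample_validators(stakes, k, rng):
    """Draw k validators with probability proportional to stake.

    Assumption: sampling is with replacement, which random.choices provides.
    """
    names = list(stakes)
    weights = [stakes[name] for name in names]
    return rng.choices(names, weights=weights, k=k)


# Hypothetical stake distribution: one large, one small, one unstaked node.
stakes = {"big": 9_000, "small": 1_000, "unstaked": 0}
rng = random.Random(7)
counts = Counter()
for _ in range(500):
    counts.update(sample_validators(stakes, 20, rng))
print(counts)
```

Running many identities with no stake behind them gains nothing here: a node with zero weight is never drawn, and influence scales with stake rather than with the number of nodes.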

When does Avalanche use a DAG and when does it use Snowman (linear ordering)?

| Ordering | Best for | Throughput | Finality | Primary Network chain |
|---|---|---|---|---|
| Partial order | Many non-conflicting transactions | High, amortized per-tx | Fast probabilistic | X-Chain (DAG style) |
| Total order | Smart contracts / EVM | High, linearized | Very fast probabilistic | C-Chain (Snowman) |

Once you have the sampling rule, the next question is what exactly is being voted on.

Here Avalanche splits into two closely related protocol shapes.

The original Avalanche protocol organizes transactions in a directed acyclic graph, or DAG. This is useful when many transactions do not conflict with each other and can be processed with only a partial order. The DAG structure lets the system amortize work across many transactions at once.

Snowman is the linear-chain variant. It takes the same Snowball-style repeated subsampled voting and applies it to a blockchain where blocks form a single parent-linked chain. This is the variant used for chains that need a total order, especially smart contract execution. Avalanche's own documentation describes Snowman as the implementation for linear chains.

This difference matters because not every application needs the same ordering discipline. A simple payment model can often tolerate partial ordering among unrelated transactions, because only conflicting spends need direct competition. Smart contracts are different. Once contracts read and write shared state, a total order becomes the safer and more natural model. So Avalanche the network uses different consensus shapes for different jobs rather than forcing every workload into the same structure.

The Primary Network reflects that choice. According to Avalanche's official documentation, it runs three built-in chains. The X-Chain handles Avalanche Native Tokens and historically aligns with the DAG-oriented Avalanche style. The P-Chain handles validator and network-level operations such as staking and creation of new chains. The C-Chain is the EVM-compatible smart contract chain and therefore uses linear ordering, which is why Snowman is the relevant mental model there.

How does Avalanche's DAG design improve consensus efficiency?

The DAG design is easiest to understand by focusing on conflicts and ancestry.

In a UTXO-style payment system, not every transaction competes with every other transaction. Two transactions conflict if they try to spend the same input. If they do not conflict, there is no reason the whole network needs to force them into a single global sequence immediately. Avalanche takes advantage of that fact.
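
The conflict rule can be made concrete with a small data model. The `Tx` type, its fields, and the helper names below are hypothetical and exist only to illustrate "two transactions conflict if they spend the same input".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_id: str
    inputs: frozenset  # identifiers of the UTXOs this transaction spends


def conflicts(a: Tx, b: Tx) -> bool:
    """Two distinct transactions conflict if they share any input."""
    return a.tx_id != b.tx_id and not a.inputs.isdisjoint(b.inputs)


def conflict_sets(txs):
    """Group transaction ids by contested input; uncontested inputs are omitted."""
    by_input = {}
    for tx in txs:
        for utxo in tx.inputs:
            by_input.setdefault(utxo, []).append(tx.tx_id)
    return {utxo: ids for utxo, ids in by_input.items() if len(ids) > 1}


pay_alice = Tx("t1", frozenset({"utxo-1"}))
pay_bob = Tx("t2", frozenset({"utxo-1"}))    # double-spends utxo-1
pay_carol = Tx("t3", frozenset({"utxo-2"}))  # unrelated

print(conflicts(pay_alice, pay_bob), conflicts(pay_alice, pay_carol))
print(conflict_sets([pay_alice, pay_bob, pay_carol]))
```

Only `t1` and `t2` need to compete; `t3` touches a different input and can be accepted without being ordered against either of them.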

When a node issues or learns about a transaction, it places it in a DAG whose edges point to parent transactions. A query about one transaction implicitly carries information about its ancestors as well. The original paper describes this as piggybacking many Snowball instances together. A vote for a transaction effectively supports the chain of dependencies behind it.

The paper introduces two useful terms here: chits and confidence. A chit is a one-time indicator that a transaction received enough support when queried. If a transaction gets the required threshold of yes-votes, its chit is set to 1 and stays there. The confidence of a transaction is then the sum of chits in its progeny: roughly, how much later support has accumulated on top of it through descendants.

The important invariant is monotonicity. Chits do not flip back and forth, and confidence only grows. This gives a node a way to compare conflicting transactions based on the amount of confirmed support that has accreted behind each one. Because a query about a descendant also reinforces its ancestors, the network can process many related decisions with amortized communication cost. The paper argues that this reduces per-node cost from logarithmic to effectively constant for the multi-transaction system.
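
A small sketch of how chits roll up into confidence follows. The DAG encoding (a dict from transaction to its parents) and the function name are assumptions of this example, and it counts a transaction's own chit together with those of all its descendants.

```python
def confidence(tx, parents, chits):
    """Sum the chits of `tx` and every transaction that descends from it.

    `parents` maps each transaction id to the list of its parent ids.
    `chits` maps each transaction id to 0 or 1.
    """
    # Invert the parent links so descendants can be walked.
    children = {}
    for child, ps in parents.items():
        for p in ps:
            children.setdefault(p, []).append(child)

    seen, stack = set(), [tx]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # a descendant reachable by two paths is counted once
        seen.add(node)
        stack.extend(children.get(node, []))
    return sum(chits.get(node, 0) for node in seen)


# g is the root; a and b both build on g; c builds on both a and b.
parents = {"g": [], "a": ["g"], "b": ["g"], "c": ["a", "b"]}
chits = {"g": 1, "a": 1, "b": 0, "c": 1}

for tx in ("g", "a", "b", "c"):
    print(tx, confidence(tx, parents, chits))  # g: 3, a: 2, b: 1, c: 1
```

Note how `b` has confidence 1 despite having no chit of its own: the chit earned by its descendant `c` counts toward it, which is the piggybacking effect described above.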

That is the upside. The downside is that dependencies between transactions become part of the protocol's liveness story. Later security analysis pointed out that the interaction between descendants and ancestor acceptance could create liveness problems in the original formulation under certain conditions. In plain language, if votes on descendants can repeatedly interfere with progress on their ancestors, an attacker may be able to delay some honest transactions far longer than intended. The same analysis also noted that the deployed implementation included an alternative mitigation, so the attack as formalized there does not necessarily map directly onto the live network. But the broader lesson is important: the DAG buys efficiency by coupling decisions together, and that coupling must be handled carefully.

Example: How Snowman finalizes blocks on the C-Chain

Suppose a user submits a transaction to an application on the C-Chain. The transaction enters a candidate block, and validators need to decide whether that block should be preferred over competing alternatives at that height or along that branch.

A validator does not ask the whole network. It samples a small, stake-weighted set of peers, say 20 of them. If at least 14 respond that this block is currently their preference, the validator treats that round as successful for the block. Because a vote for a block also counts for all its ancestors, the sampled preference is transitive: support for the current tip also reinforces the chain beneath it.

Now imagine this happens repeatedly across the network. Each validator is both querying others and answering queries based on its current preference. Once a block begins to gather enough successful rounds in a row, more validators treat it as the preferred continuation. That increases the chance that future samples also favor it. After enough consecutive successful observations (the reference threshold is 20), validators accept the block as final for practical purposes.
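
The transitive counting of votes in a single poll can be sketched as follows. The block names, the parent map, and the function are hypothetical; the sketch tallies one sample only and leaves out the streak tracking across rounds.

```python
def transitive_support(votes, parent):
    """Count each vote for a block toward that block and all of its ancestors.

    `votes` is a list of preferred tip ids returned by sampled peers.
    `parent` maps each block id to its parent id (None for the root).
    """
    support = {}
    for tip in votes:
        block = tip
        while block is not None:
            support[block] = support.get(block, 0) + 1
            block = parent.get(block)
    return support


# Two competing children, B1 and B2, built on the same parent A.
parent = {"genesis": None, "A": "genesis", "B1": "A", "B2": "A"}
votes = ["B1"] * 15 + ["B2"] * 5  # one sample of 20 peers

support = transitive_support(votes, parent)
alpha = 14
print({block: count >= alpha for block, count in support.items()})
```

In this sample `B1` reaches the quorum of 14 while `B2` does not, and the shared ancestor `A` collects all 20 votes because both competing tips build on it.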

Notice what did not happen. There was no universal broadcast of every vote to every validator. There was no single leader whose failure would halt the round. And there was no need to wait for several more blocks to bury the decision under additional chain work. The mechanism is lighter than classical all-to-all voting and more direct than longest-chain accumulation.

This is why Avalanche often feels fast to users. The official documentation describes Snowman as delivering sub-second, immutable finality, though it is more precise to say very fast probabilistic finality whose security depends on the protocol parameters and the network assumptions. The original paper's experiments reported median confirmation latency around 1.35 seconds in a 2,000-node geo-replicated test and throughput around 3,400 transactions per second in that setup, with smaller clusters reaching higher throughput. Those numbers are implementation- and environment-dependent, but they show the design target clearly: many validators, low latency, and no communication explosion per decision.

Why does Avalanche run multiple built-in chains (X-, P-, C-Chain)?

| Chain | Main role | Ordering model | Typical operations |
|---|---|---|---|
| C-Chain | EVM smart contracts | Linear chain (Snowman) | Contract execution, dApps |
| P-Chain | Network control plane | Linear chain | Validator staking, create chains |
| X-Chain | Asset exchange and issuance | DAG-style partial order | Native tokens, transfers |

The multi-chain architecture is not an afterthought. It follows directly from the observation that different jobs want different ordering and execution models.

The C-Chain is where most developers encounter Avalanche because it is EVM-compatible. It runs an implementation of the Ethereum Virtual Machine and supports the Geth API, which means existing Ethereum tooling and Solidity contracts can often be reused with limited changes. That compatibility matters because a consensus improvement is only useful if developers can actually build on it.

The P-Chain is more like the control plane of the network. It manages validators, staking operations, and the creation of additional Avalanche L1s. These are network-governance and platform-level actions, so keeping them on a specialized chain helps separate them from ordinary contract execution.

The X-Chain handles native asset creation and transfer. This division reflects Avalanche's view that a blockchain platform does not need to force asset issuance, validator management, and general-purpose computation into one identical data structure.

More recently, Avalanche has leaned further into this architecture with the move toward sovereign L1s. Official upgrade material for the Etna release describes a model in which new Avalanche L1 networks can have their own validator sets and customized rules, with lighter-weight validation economics than the Primary Network's 2,000 AVAX validator stake requirement. This pushes Avalanche from being just a single smart contract chain toward being a platform for launching related chains with shared interoperability tools.

How do staking and incentives secure the Avalanche network?

Consensus tells you how validators form agreement. It does not by itself tell you who gets to be a validator or what happens if they misbehave.

On Avalanche's Primary Network, validators must stake AVAX. Official documentation states that a node can become a Primary Network validator by staking at least 2,000 AVAX. Sampling is stake-weighted, so stake determines how much influence a validator has on the sampled consensus process. Delegators can also assign stake to validators, with official docs listing a minimum delegation amount of 25 AVAX.

Avalanche's incentive model differs from proof-of-stake systems that use slashing aggressively. The developer docs explicitly note that Avalanche does not use slashing. A misbehaving validator does not automatically lose bonded AVAX, but it may lose reward eligibility. The same docs and governance materials note an uptime requirement for reward eligibility, with recent ACP material indicating an increase from 80% to 90% uptime for Primary Network validators. That means the network relies more on reward withholding than stake confiscation as its direct economic penalty.

This is a meaningful design choice. The benefit is a less punitive validator experience and lower risk of catastrophic operator loss from accidents. The cost is that economic deterrence is weaker than in systems where provable misbehavior can directly burn or seize stake. So Avalanche's security rests on a combination of stake-weighted sampling, protocol-level convergence properties, and relatively softer economic discipline.

What are Avalanche's strengths and its key assumptions or risks?

The strongest thing about Avalanche is not merely that it is fast. Many systems are fast under easy conditions. The interesting part is why it can be fast: each validator does only small-sample work per round, there is no single leader to bottleneck progress, and the network's preference can self-reinforce once a choice starts to dominate.

That gives Avalanche a distinctive position among consensus designs. It offers probabilistic finality like Nakamoto systems, but usually much faster. It avoids the full communication burden of many classical BFT protocols. And through Snowman, it can support the total ordering needed for an EVM chain without abandoning the underlying subsampling idea.

But the assumptions matter.

First, finality is probabilistic. Avalanche can make disagreement arbitrarily unlikely by tuning parameters, but “arbitrarily unlikely” is not the same thing as impossible. Applications that need to reason about edge-case failure should understand that distinction.

Second, the security analysis depends on network conditions, adversary fraction, and parameterization. The original paper provides formal liveness and safety claims under stated assumptions, including stronger liveness results when the adversary is bounded relative to network size. Those are meaningful guarantees, but they are model-based guarantees, not magic.

Third, real networks fail for software reasons as much as for abstract consensus reasons. Avalanche's February 2024 block finalization stall is a good example. According to the official status report, a buggy logic change introduced in AvalancheGo v1.10.18 caused validators to send excessive transaction gossip. That saturated stake-weighted per-peer bandwidth allocation, prevented pull queries from being processed in time, and stalled consensus because polls were no longer being handled. The fix was to upgrade to AvalancheGo v1.11.1, which disabled the problematic logic. This incident is revealing because the consensus idea itself was not the only issue; the surrounding networking and client behavior were enough to halt finalization on the Mainnet Primary Network until enough stake upgraded.

That is a healthy reminder about blockchain systems generally. Performance claims belong to the whole stack, not just to the abstract protocol paper. Consensus, peer-to-peer networking, bandwidth management, implementation quality, and upgrade coordination all shape the user-visible result.

What use cases and applications is Avalanche suited for?

If you strip away ecosystem branding, Avalanche is useful anywhere people care about three things at once: fast transaction confirmation, broad validator participation without all-to-all messaging, and a platform architecture flexible enough to host different execution environments.

That is why Avalanche has attracted smart contract activity through the C-Chain, validator and subnet management through the P-Chain, and asset issuance through the X-Chain. It is also why application builders have been drawn to its EVM compatibility: they can target a familiar execution environment while benefiting from a different consensus engine underneath.

At the same time, Avalanche's architecture makes it a platform for multiple chains, not just a single base layer. The move toward Avalanche L1s and standardized interchain messaging extends the original logic of specialization: if different applications want different validator sets, fee rules, or governance, the network should let them separate those concerns rather than forcing them all onto one shared chain.

Conclusion

Avalanche is a blockchain network built on a simple but powerful idea: global agreement can emerge from repeated local sampling. Validators do not need to hear every vote from every other validator. They sample a few peers, repeat that process, and let reinforcement push the network toward a stable shared choice.

From that idea comes most of Avalanche's character: fast probabilistic finality, low per-node communication, no leader bottleneck, and a design that supports both DAG-style and linear-chain ordering. It also explains Avalanche's tradeoffs. The guarantees are probabilistic, the implementation details matter enormously, and some of the efficiency comes from assumptions and coupling that must be engineered carefully.

If you remember one thing tomorrow, let it be this: Avalanche is not just “another fast chain.” It is a different answer to the consensus problem, one where many small random glimpses of the network are enough, under the right rules, to make the whole network settle on one history.

How do you buy Avalanche?

Buy AVAX on Cube by trading the AVAX/USDC market. Fund your Cube account with fiat or a supported crypto, then open the AVAX/USDC market at /trade/AVAXUSDC to place an order.

- Deposit fiat via the on-ramp or transfer USDC into your Cube account.

- Open the AVAX/USDC market at /trade/AVAXUSDC.

- Choose an order type: use a limit order to control your entry price or a market order to buy immediately, then enter the AVAX amount or USDC spend.

- Review the estimated fill, network and Cube fees, then submit the order.

Frequently Asked Questions

Avalanche provides probabilistic (ε-) finality: decisions are not mathematically impossible to reverse but the protocol can make the probability of a conflicting decision negligibly small by choosing parameters appropriately. The article describes this as tunable ‘ε-safety’ rather than the absolute finality offered by some classical BFT protocols.

The Snow-family parameters control how quickly local sampling turns into strong global confidence: k is the number of peers sampled per query, α is the quorum threshold inside that sample, and β is the number of consecutive successful observations needed to accept a choice; Avalanche’s docs give reference values k=20, α=14, β=20. These parameters trade off failure probability, latency, and communication cost, but the precise tolerated adversary fraction for the default parameters (especially under stake-weighted sampling) is not fully specified in the cited materials.

Avalanche resists Sybil attacks by making sampling stake-weighted: validators with more bonded AVAX are sampled more often and therefore have more consensus influence. On the Primary Network a validator historically needed to stake 2,000 AVAX (with delegations allowed), so influence comes from bonded stake rather than raw node count.

Avalanche (the DAG variant) lets many non-conflicting transactions be only partially ordered and amortizes consensus across dependencies, while Snowman is the linear-chain variant that applies the same Snowball sampling rules to blocks to achieve a total order (used by the C-Chain for EVM-compatible smart contracts). The choice reflects whether an application needs partial ordering (DAG) or strict total ordering (chain).

Coupling acceptance across ancestors and descendants gives efficiency in the DAG but also introduces subtleties: subsequent analysis showed that under some conditions descendants' votes could interfere with ancestor progress and enable liveness delays. That analysis also notes that the deployed implementation includes an alternative mitigation, but understanding the exact deployed variant and its limits remains an open area.

Avalanche does not rely on slashing to punish misbehavior; instead, misbehaving validators can lose reward eligibility (the docs explicitly state there is no automatic stake confiscation). The network relies on reward withholding and uptime requirements as economic deterrents rather than immediate stake-burning penalties.

A February 2024 finalization stall was traced to a buggy logic change in AvalancheGo v1.10.18 that caused excessive transaction gossip, which saturated per-peer bandwidth and prevented polls from being handled; upgrading to v1.11.1 (which disabled the problematic logic) restored progress once sufficient stake upgraded. The incident highlights that networking and client behavior can stop finalization even when the abstract consensus idea is sound.

In published experiments the original Avalanche paper reported median confirmation latency around 1.35 seconds in a 2,000-node geo-replicated test and throughput near 3,400 transactions per second for that setup; the developer docs also describe sub-second, practical finality as a design target. These numbers are implementation- and environment-dependent and come with the usual caveats about parameter choice and network conditions.

Avalanche’s multi-chain Primary Network separates concerns: the X-Chain for native assets (DAG-friendly), the P-Chain for validator and subnet management, and the C-Chain for EVM smart contracts (Snowman/linear ordering). Recent platform work (Etna) pushes this further by enabling sovereign L1s with their own validator sets and lighter-weight validation economics than the Primary Network’s historical 2,000 AVAX minimum.

To become a Primary Network validator the documented minimum stake has been 2,000 AVAX, and the project has raised validator reward uptime requirements (ACP notices reference an increase toward 90% uptime). Documentation is not fully consistent about the effective uptime threshold and enforcement timing, so operators should consult the latest ACPs and client releases for authoritative rules.
