What is Allowlist/Blocklist?

Learn what allowlists and blocklists are, how deny-by-default differs from deny-by-exception, where each works best, and where they fail.

Introduction

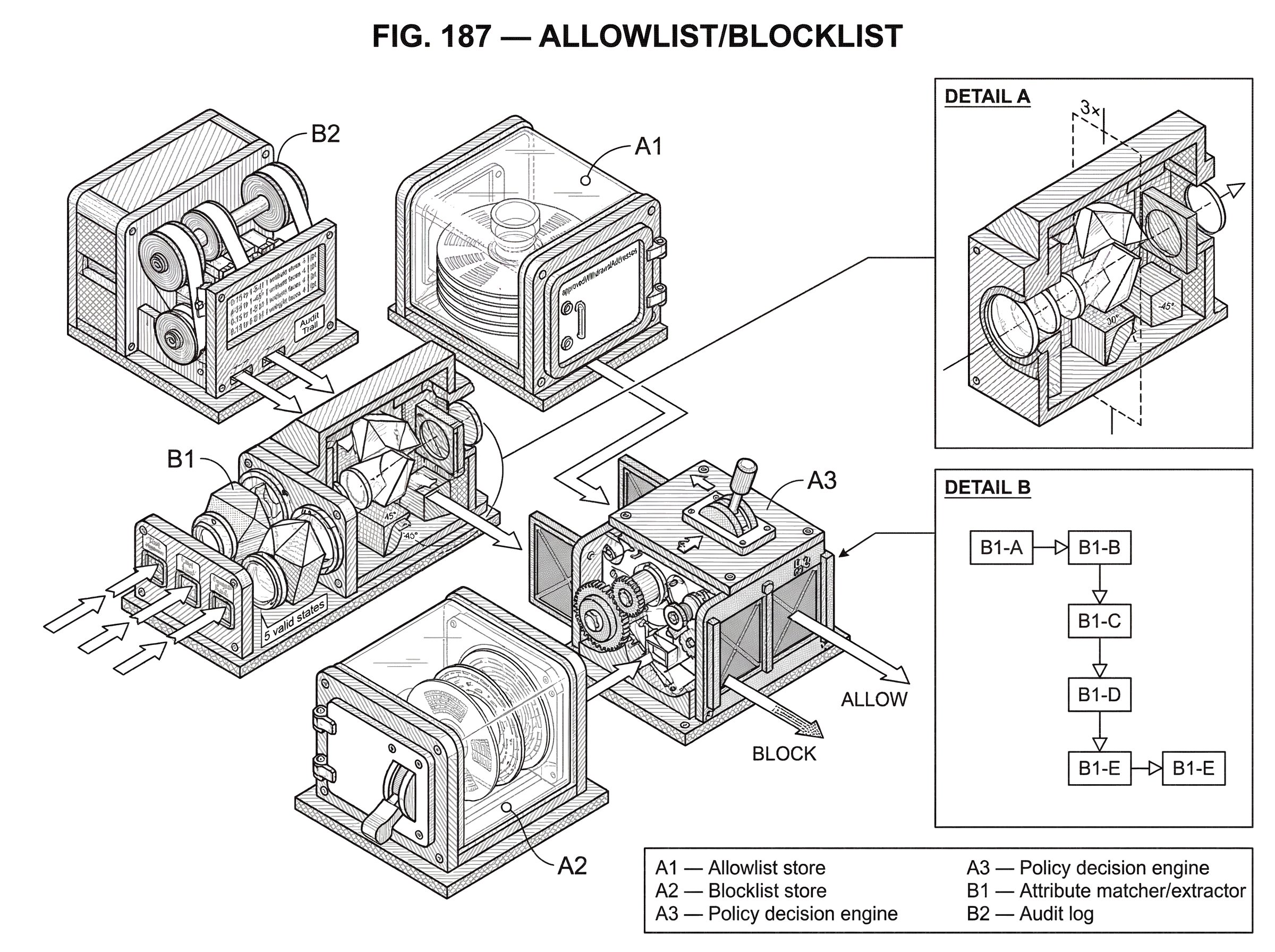

Allowlist/blocklist is a security pattern for deciding what a system should permit and what it should refuse. The idea sounds almost trivial: write down a list of things that are allowed, or a list of things that are denied, and enforce it. But the interesting part is not the list itself. The interesting part is the assumption the list makes about the world.

An allowlist says: only explicitly approved things may proceed. A blocklist says: everything may proceed except what has been explicitly prohibited. Those are not mirror-image choices. They produce different failure modes, different operational costs, and different levels of protection against the unknown. That is why security teams are usually much more careful about where they trust a blocklist to stand alone.

NIST defines an allowlist as a documented list of specific elements that are allowed, per policy decision. That wording matters. An allowlist is not just a technical filter; it is an expression of policy. Someone has decided what belongs on the list, under what conditions, and who gets to change it. Older documentation often used the term whitelist. Current guidance generally prefers allowlist and denylist or other direct descriptive names.

The core question behind the concept is simple: when the system sees something it does not recognize, should the default answer be yes or no? Once that clicks, most of the design tradeoffs become easier to understand.

How do allowlists and blocklists differ in their default action?

| Control | Default action | Novelty behavior | Best protection against | Typical use case | Main operational cost |
|---|---|---|---|---|---|
| Allowlist | Deny by default | Unknown treated as suspicious | Unknown / zero-day threats | Approved withdrawal addresses and trusted apps | High maintenance and governance |
| Blocklist | Allow by default | Unknown treated as harmless | Known-bad indicators | Malicious domains and file hashes | Lower maintenance |

The deepest distinction between allowlists and blocklists is the default action.

With an allowlist, the default is deny. If an item is not on the approved set, the system rejects it. With a blocklist, the default is allow. If an item is not on the prohibited set, the system lets it through. In security terms, this is the difference between permit by exception and deny by exception.

That default matters because security is rarely about perfectly classifying everything. It is usually about making decisions under uncertainty. Attackers exploit exactly that uncertainty: new malware, new domains, slightly modified payloads, fresh wallet addresses, unfamiliar applications, novel phishing infrastructure, manipulated inputs that do not exactly match yesterday’s bad pattern. When the system encounters something it has never seen before, an allowlist and a blocklist behave in opposite ways. The allowlist treats novelty as suspicious until approved. The blocklist treats novelty as harmless until proven otherwise.
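The contrast in default action can be shown in a few lines. This is a minimal sketch, not a production control; the function names and the sample entries are hypothetical and chosen only for illustration.

```python
from typing import Iterable


def allowlist_permits(item: str, approved: Iterable[str]) -> bool:
    """Deny by default: only items in the approved set pass."""
    return item in set(approved)


def blocklist_permits(item: str, prohibited: Iterable[str]) -> bool:
    """Allow by default: everything passes unless explicitly prohibited."""
    return item not in set(prohibited)


# Hypothetical sample lists.
approved = {"backup-agent", "web-server"}
prohibited = {"known-malware"}

# An item that neither list has ever seen.
unknown = "never-seen-before"

print(allowlist_permits(unknown, approved))    # False: novelty is rejected
print(blocklist_permits(unknown, prohibited))  # True: novelty is let through
```

The two functions are nearly identical. The only difference is which answer an unrecognized item receives, and that is the whole design decision.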

This is why allowlists are often stronger against unknown threats. NIST’s guidance on application whitelisting makes the contrast explicit: antivirus and similar controls often block known bad activity and permit everything else, while application allowlisting permits known good activity and blocks everything else. The same logic appears in software authorization guidance: deny-all, permit-by-exception can stop previously unknown software, while allow-all, deny-by-exception has little effect on zero-day variants because small changes can evade the blocked pattern.

There is no magic here. The mechanism is simple. If the universe of legitimate things is small enough and stable enough to define precisely, an allowlist gives you a strong boundary. If the universe of bad things changes too quickly or is too large to enumerate completely, a blocklist is inherently incomplete.

Why think of allowlists as gates and blocklists as watchlists?

A useful way to think about the two controls is this: an allowlist behaves like a gate, while a blocklist behaves like a watchlist.

A gate checks whether you belong in a limited, pre-approved set. If not, you do not pass. This is effective when access is supposed to be narrow: approved withdrawal addresses, trusted applications on a server, specific partner systems allowed to connect to an identity provider, a fixed menu of accepted values in an API field, a set of IP addresses allowed to reach an admin endpoint.

A watchlist works differently. It scans for things already identified as dangerous and tries to stop those. This is useful when the space of legitimate activity is broad and open-ended, but some known patterns are common enough that blocking them still helps: malicious domains, known phishing URLs, a list of disallowed file hashes, previously identified IP addresses tied to abuse.

The analogy explains the basic mechanism, but it has limits. Real systems often combine both. A firewall might use an allowlist-first policy for which applications are permitted, then add block rules for specific high-risk destinations. A web application might allowlist acceptable input structure while also blocklisting a few especially dangerous filenames. The point is not that one list type completely replaces the other in every setting. The point is that they play different roles, and confusing those roles leads to weak designs.

What identity attributes should I use for an allowlist or blocklist?

| Object type | Identity attribute | Evasion risk | Maintenance burden | Best when |
|---|---|---|---|---|
| IP address | IP address | High; IP rotation common | Medium; frequent updates | Static admin panels |
| Wallet address | Crypto address | Very low; addresses are immutable | Low; small approved set | Exchange withdrawal destinations |
| Software binary | Hash or digital signature | Medium to high; living-off-the-land bypasses | High; updates and releases | Centrally managed hosts |
| Domain / URL | DNS name or URL | High; rotations and variants | High; continuous feeds | Phishing and URL filtering |
| Input field | Exact value or pattern | Medium; encoding/obfuscation | Medium; rule tuning | Fixed-select or structured inputs |

An allowlist or blocklist is only as good as the thing it is matching against. That sounds obvious, but it is where many implementations become fragile.

The system must answer two questions. First, what kind of object is being controlled? An IP address? A wallet address? A software binary? A script? A domain? A user input value? A relying party in a federation? Second, what identity attribute defines that object? Name, path, publisher signature, cryptographic hash, version, account ID, DNS name, URI path, or something else?

This choice determines how easy the control is to evade and how expensive it is to maintain. For application control, NIST warns that weak attributes such as file name, path, or size are poor identifiers on their own. A malicious file can adopt a benign-looking name or land in a trusted-looking directory. Stronger approaches use attributes that tie more closely to the software’s identity, especially digital signatures and cryptographic hashes. Microsoft’s AppLocker reflects the same design space: rules can be based on publisher, product, file name, version, path, or hash. Each option is a tradeoff between durability across updates and resistance to spoofing.
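To make the difference between a weak and a strong identifier concrete, the sketch below compares a name-based check with a hash-based check. It is illustrative only: the file name, the byte strings standing in for file contents, and the helper names are all assumptions, and a real application-control product would read files from disk and usually combine hashes with publisher signatures.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical stand-ins for real file contents.
trusted = b"trusted build of the backup tool"
malicious = b"malicious payload renamed to look benign"

APPROVED_NAMES = {"backup.exe"}           # weak identifier: easy to imitate
APPROVED_HASHES = {sha256_hex(trusted)}   # strong identifier: tied to content


def allowed_by_name(file_name: str) -> bool:
    """Name-only check: says nothing about what the file contains."""
    return file_name in APPROVED_NAMES


def allowed_by_hash(content: bytes) -> bool:
    """Content check: any change to the bytes changes the digest."""
    return sha256_hex(content) in APPROVED_HASHES


print(allowed_by_name("backup.exe"))  # True, even if the content is malicious
print(allowed_by_hash(malicious))     # False
print(allowed_by_hash(trusted))       # True
```

The hash rule resists spoofing, but it also stops matching after every legitimate update, which is the durability cost described above.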

The same principle carries into other domains. If an exchange lets users withdraw only to a pre-approved list of wallet addresses, the address itself is the identity key. That is useful because crypto transfers are effectively irreversible, so a narrow gate is worth the inconvenience. If a WAF allows traffic only from a maintained IP list, the source IP is the identity key. That can work for a private admin panel, but it is brittle if users roam across networks or if attackers can operate from allowed infrastructure. If an input field must contain one of five valid states, the safest approach is to compare exactly against those five values on the server. In each case, the list works because the thing being matched is concrete and the acceptable set is meaningfully bounded.
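The input-field case is the easiest one to sketch. The example below assumes a hypothetical order-status field with five valid states; the state names and the function name are invented for illustration. The comparison is exact, with no trimming or case folding, so anything that is not literally one of the five values is rejected.

```python
# Hypothetical set of five valid states for an order-status field.
ALLOWED_ORDER_STATES = frozenset(
    {"pending", "paid", "shipped", "delivered", "cancelled"}
)


def validate_order_state(value: object) -> str:
    """Server-side check: accept only an exact match against the fixed set."""
    if not isinstance(value, str) or value not in ALLOWED_ORDER_STATES:
        raise ValueError("state is not one of the allowed values")
    return value


print(validate_order_state("paid"))  # paid

for candidate in ("PAID", "paid ", "refunded", None):
    try:
        validate_order_state(candidate)
    except ValueError:
        print(f"rejected: {candidate!r}")
```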

When should you use an allowlist versus a blocklist, and what are the trade-offs and operational costs?

The main strength of an allowlist is not sophistication. It is smallness. The control becomes powerful when the set of legitimate things is naturally limited.

Consider a production server that should run only a defined set of services and scripts. Here, application allowlisting fits the shape of the environment. The workload is stable, centrally managed, and changes are planned. If an unknown executable appears and tries to run, denying it by default is reasonable. NIST explicitly notes that application allowlisting is most practical on centrally managed hosts with consistent workloads, and especially valuable in high-risk environments where security matters more than unrestricted flexibility.

Now contrast that with a general-purpose laptop used by a developer, analyst, or student. The set of legitimate software changes often. New tools are installed, versions shift, scripts are tested, libraries update, and one-off tasks appear constantly. An allowlist can still be used, but the operational burden rises sharply. Every legitimate change risks becoming a support ticket unless policy, tooling, and automation are good enough to absorb the churn.

This is why allowlists are as much an operations problem as a security mechanism. The list has to be created, updated, reviewed, logged, and tested. Someone has to decide how software updates get approved, what to do with exceptions, how emergency changes work, and how to avoid locking out legitimate business activity. CIS guidance captures this governance dimension by recommending reassessment of authorized software regularly and requiring unauthorized software either to be removed or to receive a documented exception.

So the right question is not “is allowlisting more secure?” in the abstract. Usually it is. The right question is whether the environment is stable enough, visible enough, and governed well enough for the list to remain accurate.

How does a withdrawal address allowlist prevent crypto theft?

A withdrawal address allowlist in a crypto exchange makes the logic unusually clear because the stakes are concrete.

Imagine an account holder enables withdrawals only to a short list of approved addresses. The policy is simple: if a withdrawal destination is not on that list, the exchange refuses the transfer. Mechanically, the exchange compares the requested destination address against the stored authorized set before signing or broadcasting anything. If the address matches an approved entry, the request can continue through whatever other checks exist. If it does not match, the request fails, even if the user’s password was correct or the session looked normal.
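The comparison step described above might look roughly like the following. This is a sketch under stated assumptions, not any exchange's actual implementation: the `Destination` type, the `check_withdrawal` function, and the placeholder addresses are hypothetical, and real systems would need chain-specific address validation and normalization, which is omitted here.

```python
from typing import FrozenSet, NamedTuple


class Destination(NamedTuple):
    """A withdrawal target, keyed by network and address (hypothetical type)."""
    network: str
    address: str


def check_withdrawal(
    destination: Destination, approved: FrozenSet[Destination]
) -> str:
    """Run the allowlist check before any signing or broadcasting happens."""
    if destination not in approved:
        # Deny by default, even if the session and credentials looked valid.
        return "rejected: destination not on allowlist"
    return "accepted: continue to remaining checks"


# Placeholder values, not real addresses.
approved = frozenset({Destination("ethereum", "addr-cold-storage-1")})

print(check_withdrawal(Destination("ethereum", "addr-cold-storage-1"), approved))
print(check_withdrawal(Destination("ethereum", "addr-attacker-fresh"), approved))
```

Keying the entry on both network and address is a design choice in this sketch: the same address string on a different network is treated as a different, unapproved destination.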

Why does this help? Because many account-takeover attacks do not begin by changing every credential. An attacker may steal a session, phish a one-time code, compromise an API key, or trick a user into approving a bad action. A withdrawal allowlist creates a second boundary around the highest-consequence action: moving assets out. Even if the attacker gains some control, they cannot redirect funds to a fresh address unless that address has already been approved through the exchange’s policy process.

This also shows the cost. The user loses spontaneity. Sending funds to a brand-new address now requires a separate approval step, and often a delay. But in this context the friction is the point. Crypto transfers are easy to authorize and hard to undo, so a deny-by-default destination policy is often a good trade.

The same pattern appears outside exchanges. In federated identity, NIST notes that an allowlist may be the list of relying parties allowed to connect to an identity provider without subscriber intervention. Again, the mechanism is the same: a narrow, pre-approved set reduces the chance that a new or unexpected party receives trust automatically.

Why use blocklists at all? Use cases and limitations

If allowlists are stronger against the unknown, why use blocklists at all?

Because many systems must operate in a world where the set of acceptable activity is too broad to enumerate cleanly. The open web is the obvious example. A browser or email system cannot run on an allowlist of every benign domain a user might ever visit. A large enterprise network cannot always enumerate every external service any employee might need. User-generated text cannot be reduced to a neat finite set of approved strings. In these settings, blocklists are attractive because they are cheap to express: stop the things you already know are bad and let the rest continue.

That does provide value. DNS filtering against known malicious domains can reduce malware callbacks and phishing exposure. URL filters can prevent access to clearly risky destinations. A WAF can block requests from source IPs known to be abusive. A file upload control can reject especially dangerous filenames or executable types. These are all sensible uses of blocklists.

But they are best understood as risk reduction, not complete prevention. OWASP’s input validation guidance is especially clear here: denylisting dangerous characters or patterns is easy to bypass and often breaks legitimate input. Attackers can encode, split, transform, or slightly mutate payloads. Meanwhile honest users get rejected for normal data that happens to resemble a blocked pattern. That is the general shape of blocklist weakness everywhere: badness is easier to vary than goodness is to define.
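A small demonstration of that weakness, assuming a hypothetical username field. The blocked substrings and the accepted pattern below are illustrative choices, not a recommended rule set.

```python
import re

# Denylist approach: reject input containing known-bad substrings.
BLOCKED_SUBSTRINGS = ("<script", "javascript:")

# Allowlist approach: accept only input with the expected shape
# (here, 3 to 20 ASCII letters, digits, or underscores).
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")


def denylist_accepts(value: str) -> bool:
    """Allow by default: pass unless a blocked substring appears verbatim."""
    return not any(bad in value for bad in BLOCKED_SUBSTRINGS)


def allowlist_accepts(value: str) -> bool:
    """Deny by default: pass only if the whole value matches the pattern."""
    return USERNAME_PATTERN.fullmatch(value) is not None


# A trivially mutated payload: mixed case defeats the exact substring match.
mutated = "<ScRiPt>alert(1)</sCrIpT>"

print(denylist_accepts(mutated))     # True: the denylist misses the variant
print(allowlist_accepts(mutated))    # False: it does not look like a username
print(allowlist_accepts("alice_01"))  # True
```

Adding case folding to the denylist would close this particular gap, but the attacker can then move to encodings or split payloads. The allowlist pattern does not need to anticipate any of them.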

So blocklists remain useful, but usually as a supplement, a broad screening layer, or a way to quickly respond to known threats. They are rarely the strongest possible primary boundary.

What common mistakes break allowlist and blocklist implementations?

| Failure mode | Cause | Consequence | Mitigation |
|---|---|---|---|
| Identifier instability | Weak identifier (path, name) | Easy evasion or false matches | Use hashes or signatures |
| Trusted ≠ safe | Trust by signature only | Signed/native binaries abused | Monitor behavior; restrict hosts |
| Scope confusion | Gate only, no runtime checks | Post-launch abuse permitted | Combine allowlist with runtime controls |
| Static lists | No lifecycle or governance | Outages or stale defenses | Inventory, policy, audit logs |

Most failures of allowlist/blocklist controls come from one of four broken assumptions.

The first is believing that the identifier is stable and meaningful when it is not. If you allow software by file path alone, an attacker may plant a malicious binary in a trusted path. If you block by domain only, an attacker can rotate to a new domain. If you allow by source IP, a NAT, proxy, VPN, or cloud service may blur who is actually behind that address.

The second is assuming that “trusted” software is automatically safe. MITRE documents a common bypass technique in which adversaries use signed or native system binaries to proxy malicious activity. This is the world of living-off-the-land tools: binaries that are already present, already signed, and often already permitted. If a control says “Microsoft-signed binaries may run,” that does not imply every use of those binaries is benign. The binary may be trusted; the behavior may not be. This is why application control that relies only on digital signature or native presence can be bypassed.

The third is forgetting scope. AppLocker’s own guidance notes that it controls whether an app launches, not what it does after launch. That limitation is broader than AppLocker. Any allowlist that gates entry but does not observe behavior can be bypassed by abuse inside the gate. Allowing a script host, browser, plugin framework, wallet extension, or automation tool may implicitly allow a great many downstream actions. The control is still useful, but only for the boundary it actually enforces.

The fourth is treating the list as static. In reality, secure lists are living policy objects. Software updates change hashes. Publishers rotate certificates. Infrastructure moves IP addresses. Organizations adopt new tools. Wallets change operational flows. If updates are not handled carefully, a good allowlist turns into an outage generator, and a stale blocklist turns into theater.

How should security teams implement and govern allowlists and blocklists?

Strong allowlist/blocklist programs are built around inventory, policy, testing, and auditability.

Inventory comes first because you cannot authorize what you cannot identify. NIST’s software asset management guidance emphasizes comparing actual software state against desired state. That same logic applies beyond software: know the apps, addresses, identities, domains, scripts, or counterparties you are trying to govern. Without inventory, the list is guesswork.
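Comparing actual state against desired state reduces to a pair of set differences. The sketch below assumes inventory items can be represented as plain strings; the function name and sample package names are hypothetical.

```python
from typing import Dict, List, Set


def compare_inventory(observed: Set[str], authorized: Set[str]) -> Dict[str, List[str]]:
    """Report where the actual state and the desired state disagree."""
    return {
        # Present on the system but never approved.
        "unauthorized": sorted(observed - authorized),
        # Approved but not found, which may point to a stale list entry.
        "missing": sorted(authorized - observed),
    }


# Hypothetical sample data.
authorized = {"nginx", "postgres", "backup-agent"}
observed = {"nginx", "postgres", "crypto-miner"}

print(compare_inventory(observed, authorized))
# {'unauthorized': ['crypto-miner'], 'missing': ['backup-agent']}
```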

Policy gives the list meaning. NIST’s glossary definition calls an allowlist a documented list allowed per policy decision. That means there must be owners, approval criteria, exception handling, and change control. Who can add a new withdrawal address? Who can approve a new publisher certificate? Under what conditions can a risky domain be temporarily blocklisted? How are emergency overrides handled? A list without governance is just configuration drift with better branding.

Testing matters because deny-by-default controls fail loudly when they are wrong. NIST recommends evaluating application allowlisting technologies in monitoring or audit mode before enforcement. Microsoft gives the same advice for AppLocker: deploy in audit-only mode first to observe what would have been blocked and to refine rules safely. This pattern is widely applicable. Before enforcing a strict list, learn what normal activity actually looks like.
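The audit-first pattern can be sketched as a mode switch on the same decision function. This is a generic illustration rather than AppLocker's mechanism or any vendor's API; the `Mode` enum, the `decide` function, and the logger name are assumptions.

```python
import logging
from enum import Enum
from typing import Set


class Mode(Enum):
    AUDIT = "audit"      # log what would be blocked, but let it through
    ENFORCE = "enforce"  # actually block


logger = logging.getLogger("allowlist")


def decide(item: str, approved: Set[str], mode: Mode) -> bool:
    """Return True if the item may proceed under the given mode."""
    if item in approved:
        return True
    if mode is Mode.AUDIT:
        # Record the would-be denial so the rules can be refined safely.
        logger.warning("audit: %s would have been blocked", item)
        return True
    logger.warning("blocked: %s", item)
    return False


approved = {"backup-agent"}
print(decide("new-tool", approved, Mode.AUDIT))    # True, with a log entry
print(decide("new-tool", approved, Mode.ENFORCE))  # False
```

Running in audit mode for a while produces the list of items that enforcement would have broken, which is exactly the input needed to tune the allowlist before switching modes.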

Auditability closes the loop. Changes to the list should be logged with who changed what and when. That is important for accountability, troubleshooting, and incident response. If a malicious address was added to a withdrawal allowlist, or a dangerous script was accidentally approved, you want a durable record of the change path. This is where allowlists and audit trails naturally connect: the list is the rule, and the audit trail explains the rule’s lifecycle.
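One way to keep the list and its history together is to route every change through methods that also append a record. The sketch below is an in-memory illustration with hypothetical class and field names; a real system would persist the log somewhere tamper-resistant and enforce approvals before accepting a change.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Set, Tuple


@dataclass(frozen=True)
class ChangeRecord:
    """Who changed what, when, and why."""
    timestamp: datetime
    actor: str
    action: str  # "add" or "remove"
    entry: str
    reason: str


class AuditedAllowlist:
    """An allowlist whose every modification leaves a change record."""

    def __init__(self) -> None:
        self._entries: Set[str] = set()
        self._log: List[ChangeRecord] = []

    def _record(self, actor: str, action: str, entry: str, reason: str) -> None:
        now = datetime.now(timezone.utc)
        self._log.append(ChangeRecord(now, actor, action, entry, reason))

    def add(self, entry: str, actor: str, reason: str) -> None:
        self._entries.add(entry)
        self._record(actor, "add", entry, reason)

    def remove(self, entry: str, actor: str, reason: str) -> None:
        self._entries.discard(entry)
        self._record(actor, "remove", entry, reason)

    def permits(self, entry: str) -> bool:
        return entry in self._entries

    def history(self) -> Tuple[ChangeRecord, ...]:
        """Return the change log as a read-only snapshot."""
        return tuple(self._log)
```

For example, after `allowlist.add("addr-1", actor="alice", reason="new vendor")`, the call `allowlist.history()` returns a record naming alice, the entry, the reason, and a UTC timestamp.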

How do you combine allowlists and blocklists effectively?

The most practical designs usually combine the two, but in a disciplined order.

Start with an allowlist when the legitimate set is small enough to define and the protected action is high consequence. This gives the system a strong default of implicit deny. Then add blocklists to catch especially risky items within the broader space that remains. Palo Alto’s guidance expresses this well at the network-policy level: move toward an explicit-allow policy and use targeted block rules for risky IPs, websites, and applications. The block rules are useful, but they are not carrying the whole security model.

A simple example is an admin interface. You might first allow access only from a maintained set of corporate IPs. That narrows the reachable population dramatically. Then, inside that boundary, you might still block requests tied to known malicious indicators or suspicious paths. Another example is software execution: permit only approved applications and scripts, then separately block known dangerous native tools that are unnecessary in the environment and commonly abused for bypass. The order matters because it determines the default behavior when the system sees something new.
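The admin-interface example can be sketched with Python's standard `ipaddress` module. The network range and the blocked address below come from a documentation-reserved range and are placeholders, and the function name is hypothetical.

```python
import ipaddress

# Placeholder corporate range (203.0.113.0/24 is reserved for documentation).
ALLOWED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]

# Placeholder address inside that range, flagged by a known-bad indicator.
BLOCKED_ADDRESSES = {ipaddress.ip_address("203.0.113.66")}


def admin_access_permitted(source_ip: str) -> bool:
    """Allowlist first (default deny), then a targeted block inside it."""
    try:
        addr = ipaddress.ip_address(source_ip)
    except ValueError:
        return False  # unparseable input is treated as unknown, so denied

    # Step 1: the allowlist sets the boundary.
    if not any(addr in network for network in ALLOWED_NETWORKS):
        return False

    # Step 2: the blocklist removes specific risky entries within it.
    return addr not in BLOCKED_ADDRESSES


print(admin_access_permitted("203.0.113.10"))  # True
print(admin_access_permitted("203.0.113.66"))  # False: inside range but blocked
print(admin_access_permitted("198.51.100.7"))  # False: outside the allowlist
```

Because the allowlist check runs first, an address that appears on neither list is denied. Reversing the order of the two checks would not change this result, but dropping the allowlist step would flip the default to allow.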

This same pattern can appear in blockchain-adjacent systems. A custodian might allow withdrawals only to approved addresses, while also blocklisting addresses known to be sanctioned internally, compromised, or associated with scams. The allowlist creates the hard boundary. The blocklist adds specific caution inside or around that boundary.

Should I use allowlist/denylist or whitelist/blacklist in my systems?

Older security literature often uses whitelist and blacklist. Modern guidance increasingly prefers allowlist and denylist or even more explicit names such as allowedRecipients or blockedDomains. This is partly about inclusive language, but it also improves technical clarity.

The better names describe the action directly. Allow and deny tell you what the mechanism does. In code and documentation, more specific forms are often better still because they reveal the object being controlled: allowedIPs, authorizedPublishers, blockedExtensions, approvedWithdrawalAddresses. Clear names reduce the chance that a reader mistakes a broad policy object for a narrow technical list, or assumes the wrong matching semantics.

That may sound secondary, but security failures often grow in the gaps between intent and implementation. Precise naming helps keep the policy legible.

Conclusion

Allowlists and blocklists are both ways of turning policy into enforcement, but they answer uncertainty in opposite ways. An allowlist says unknown means no until approved. A blocklist says unknown means yes until prohibited.

That single difference explains most of the rest. Allowlists are usually stronger when the legitimate set is small, stable, and important enough to govern carefully. Blocklists are useful for catching known bad patterns in broad, open-ended environments, but they are incomplete by design. In practice, good security programs use both, with the allowlist setting the boundary where possible and the blocklist adding targeted friction where helpful.

If you remember one thing tomorrow, remember this: the list matters less than the default. In security, the default answer to the unknown is often the whole game.

How do you secure your crypto setup before trading?

Secure your crypto setup before trading by hardening account access, verifying destination hygiene, and running simple pre-transfer checks. Cube Exchange uses a non-custodial MPC model for key security; follow these concrete steps to reduce phishing, mistaken-network, and wrong-address risk when you trade or transfer on Cube.

- Enable strong 2FA: set up an authenticator app or register a hardware security key for your Cube account, and store the recovery codes offline.

- Harden your device and browser: install OS/browser updates, remove unknown extensions, and confirm you’re on the correct Cube domain before logging in.

- Verify asset, network, and address: confirm the exact token and chain (e.g., ERC‑20 vs native token), paste the destination into a checksum verifier, and compare the full address or first/last 6 characters.

- Test and confirm new destinations: for any new recipient, send a small test transfer first and confirm the address with the recipient over a second channel (call or secure message) before sending larger amounts.

- Maintain an approved-address list offline: keep a secure record of trusted destination addresses and re-check it before initiating each transfer on Cube.

Frequently Asked Questions

What is the core difference between an allowlist and a blocklist?

An allowlist’s default is deny: anything not explicitly approved is blocked. A blocklist’s default is allow: anything not explicitly prohibited is permitted. That difference matters because unknown or novel items are treated in opposite ways: allowlists distrust novelty while blocklists trust it. As a result, allowlists are stronger against previously unseen threats, while blocklists are inherently incomplete against variants and zero-days.

Which identifiers should an allowlist be based on?

Weak identifiers such as file name, file path, or size are poor bases for an allowlist because they are easy to spoof; stronger identifiers tie more directly to identity, for example digital signatures or cryptographic hashes. NIST and Microsoft guidance both point toward durable, hard-to-forge attributes (publisher signatures or hashes) when you need deterministic authorization.

When are allowlists too costly to operate?

Allowlists are operationally costly when legitimate sets change frequently because every new legitimate item requires approval, updates, and testing; they work best where the universe of allowed items is small, stable, and centrally managed. Environments like production servers or custodial withdrawal addresses are good fits, whereas general-purpose developer laptops are not without strong automation and governance.

Can application allowlisting that trusts signed binaries be bypassed?

Yes. Allowlisting based solely on digital signatures or native presence can be bypassed because adversaries can abuse signed or native binaries to proxy malicious actions (the "living off the land" problem). MITRE’s technique descriptions and community catalogs (LOLBAS) show that trusted binaries can be repurposed to evade simple app-allow rules.

When does a blocklist make sense?

Use blocklists where the acceptable activity space is too broad to enumerate, such as web browsing, email, and large user-generated inputs, because blocklists are cheap to express and can reduce known risks. However, blocklists are best treated as risk-reduction or supplemental controls, since attackers can evade denylists by mutating indicators and blocklists frequently miss novel threats, as OWASP guidance notes.

How should allowlists and blocklists be combined?

Start with an allowlist where the allowed set is small and the protected action is high consequence, then add blocklists to catch specific risky items inside or near that boundary. The recommended pattern is an explicit-allow posture supplemented by targeted deny rules, so that the allowlist sets the default-deny boundary while blocklists add focused protection.

How should lists be governed over time?

Effective programs treat lists as living policy objects built on four pillars: accurate inventory, clear policy and owners, testing (audit-only mode before enforcement), and logged change control so every modification is auditable. You cannot authorize what you cannot inventory, rules need documented approval and exception processes, and audit trails are required for accountability.

What are the most common implementation mistakes?

Common implementation mistakes include relying on unstable or weak identifiers (like file name or IP alone), assuming that a trusted app is harmless in all uses, confusing the gate (what you block at entry) with runtime behavior, and treating the list as static rather than a maintained policy. These are the four broken assumptions behind most failures.

Should projects rename whitelist/blacklist to allowlist/denylist?

Moving from terms like “whitelist/blacklist” to “allowlist/denylist” is recommended for clarity and inclusivity, but it raises translation and backward-compatibility concerns. Projects should plan renames carefully because documentation, APIs, and saved data may still use legacy terms, so the migration requires operational coordination.
